Oracle Solaris Cluster Overview


You can set up from one to six cluster interconnects in a cluster. A single cluster interconnect reduces the number of adapter ports that are used for the private interconnect, but it provides no redundancy and therefore less availability. Moreover, if that single interconnect fails, the cluster spends more time performing automatic recovery. Two or more cluster interconnects provide redundancy and scalability, and therefore higher availability, by avoiding a single point of failure.
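The trade-off above can be expressed as a small check. The following Python sketch is illustrative only and is not part of the Oracle Solaris Cluster software: the function name `assess_interconnects` and its return strings are hypothetical, and only the limits of one to six interconnects come from the text.

```python
# Limits taken from the text: a cluster supports one to six interconnects.
MIN_INTERCONNECTS = 1
MAX_INTERCONNECTS = 6


def assess_interconnects(count: int) -> str:
    """Classify a planned number of cluster interconnects.

    Hypothetical helper for illustration; it performs no cluster operations.
    """
    if not MIN_INTERCONNECTS <= count <= MAX_INTERCONNECTS:
        raise ValueError(
            f"expected {MIN_INTERCONNECTS} to {MAX_INTERCONNECTS} "
            f"interconnects, got {count}"
        )
    if count == 1:
        # Fewer adapter ports are used, but the interconnect is a
        # single point of failure.
        return "no redundancy"
    # Two or more interconnects avoid a single point of failure.
    return "redundant"


if __name__ == "__main__":
    for planned in (1, 2, 6):
        print(planned, assess_interconnects(planned))
```

A planning script could call such a helper before any cabling is ordered, rejecting counts outside the supported range and flagging single-interconnect designs for review.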

The Oracle Solaris Cluster interconnect uses Fast Ethernet, Gigabit Ethernet, InfiniBand, or the Scalable Coherent Interface (SCI, IEEE 1596-1992) to enable high-performance cluster-private communications.

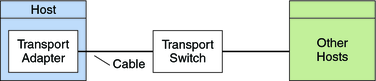

The cluster interconnect consists of the following hardware components:

Adapters – The network interface cards that reside in each cluster host. A network adapter with multiple interfaces could become a single point of failure if the entire adapter fails.

Switches – The switches, also called junctions, that reside outside of the cluster hosts. Switches perform pass-through and switching functions that enable you to connect more than two hosts. In a two-host cluster, you do not need switches unless the adapter hardware requires them, because the hosts can be directly connected to each other through redundant physical cables. Those redundant cables are connected to redundant adapters on each host. Configurations with three or more hosts require switches.

Cables – The physical connections that are placed between either two network adapters or an adapter and a switch.

Figure 3-4 shows how the three components are connected.

Figure 3-4 Cluster Interconnect
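The relationships among adapters, switches, and cables described above can be modeled as a small data structure. The following Python sketch is a hypothetical illustration, not an Oracle Solaris Cluster API: the class names, the `check_interconnect` function, and the host and interface names are all assumptions. It encodes only two rules from the text: a cable joins either two adapters or an adapter and a switch, and configurations with three or more hosts require switches.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Adapter:
    host: str  # cluster host in which the network interface card resides
    name: str  # hypothetical interface name, for example "net1"


@dataclass(frozen=True)
class Switch:
    name: str  # switch (junction) that resides outside the cluster hosts


Endpoint = Union[Adapter, Switch]


@dataclass(frozen=True)
class Cable:
    end_a: Endpoint
    end_b: Endpoint


def check_interconnect(hosts: list[str], cables: list[Cable]) -> list[str]:
    """Return a list of problems found in a planned interconnect layout."""
    problems = []
    for cable in cables:
        adapters = [e for e in (cable.end_a, cable.end_b)
                    if isinstance(e, Adapter)]
        if not adapters:
            # The text defines cables as adapter-adapter or adapter-switch.
            problems.append("cable joins two switches")
        elif len(adapters) == 2:
            if adapters[0].host == adapters[1].host:
                problems.append("cable joins two adapters on the same host")
            elif len(hosts) >= 3:
                # Direct cabling is described only for two-host clusters.
                problems.append("direct cable used with three or more hosts")
    return problems


if __name__ == "__main__":
    # Two hosts connected directly through redundant cables and adapters.
    two_host = [
        Cable(Adapter("host1", "net1"), Adapter("host2", "net1")),
        Cable(Adapter("host1", "net2"), Adapter("host2", "net2")),
    ]
    print(check_interconnect(["host1", "host2"], two_host))
```

The sketch does not model the case in which the adapter hardware itself requires switches in a two-host cluster, because that depends on the specific adapter.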