Oracle Directory Server Enterprise Edition Troubleshooting Guide 11g Release 1 (11.1.1.5.0)



This section guides you through the general process of analyzing replication problems. It provides information about how replication works and tools you can use to collect replication data.

You need to collect a minimum of data from your replication topology when a replication error occurs.

You need to collect information from the access, errors, and, if available, audit logs. Before you collect the errors log, adjust the log level so that replication information is recorded. To set the error log level to include replication, use the following command:

# dsconf set-log-prop ERROR level:err-replication

The insync command provides information about the state of synchronization between a supplier replica and one or more consumer replicas. This command compares the RUVs of replicas and displays the time difference or delay, in seconds, between the servers.

For example, the following command shows the state every 30 seconds:

$ insync -D cn=admin,cn=Administrators,cn=config -w mypword \
-s portugal:1389 30

ReplicaDn Consumer Supplier Delay
dc=example,dc=com france.example.com:2389 portugal:1389 0
dc=example,dc=com france.example.com:2389 portugal:1389 10
dc=example,dc=com france.example.com:2389 portugal:1389 0

Analyze the output for the points at which the replication delay stops being zero. In the example above, there may be a replication problem between the consumer france.example.com and the supplier portugal, because the replication delay changes to 10, indicating that the consumer is 10 seconds behind the supplier. We should continue watching how this delay evolves. If it stays more or less stable or decreases, we can conclude that there is no problem. However, if the delay increases over time, a replication halt is probable.
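The reasoning about delay trends can be sketched in Python. This is a minimal illustration, not part of the product: the function names, the three-sample window, and the three verdict strings are assumptions made here, and the parser only assumes the four-column layout shown in the insync example.

```python
def parse_insync_delays(output):
    """Return the integer values of the Delay column from insync output."""
    delays = []
    for line in output.splitlines():
        fields = line.split()
        # Data rows have four columns and end in an integer delay;
        # the header row and the command line itself are skipped.
        if len(fields) == 4 and fields[3].isdigit():
            delays.append(int(fields[3]))
    return delays


def classify_delay_trend(delays, window=3):
    """Classify a series of delays (hypothetical rule of thumb).

    "in sync"       : every sample is zero
    "possible halt" : the last `window` samples are strictly increasing
    "keep watching" : anything else (stable, decreasing, or too few samples)
    """
    if not delays or all(d == 0 for d in delays):
        return "in sync"
    recent = delays[-window:]
    if len(recent) == window and all(b > a for a, b in zip(recent, recent[1:])):
        return "possible halt"
    return "keep watching"


sample = """ReplicaDn Consumer Supplier Delay
dc=example,dc=com france.example.com:2389 portugal:1389 0
dc=example,dc=com france.example.com:2389 portugal:1389 10
dc=example,dc=com france.example.com:2389 portugal:1389 0"""

print(classify_delay_trend(parse_insync_delays(sample)))
```

For the sample output the delay returns to zero, so the sketch reports that the delay should be watched further rather than flagging a halt.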

For more information about the insync command, see insync(1).

The repldisc command displays the replication topology, building a graph of all known replicas using the RUVs. It then prints an adjacency matrix that describes the topology. Because the output of this command shows the machine names and their connections, you can use it to help you read the output of the insync tool. You run this command on 6.0 and later versions of Directory Server as follows:

# repldisc -D cn=Directory Manager -w password -b replica-root -s host:port

The following example shows the output of the repldisc command:

$ repldisc -D cn=admin,cn=Administrators,cn=config -w pwd \
-b o=rtest -s portugal:1389

Topology for suffix: o=rtest

Legend:
^ : Host on row sends to host on column.
v : Host on row receives from host on column.
x : Host on row and host on column are in MM mode.

H1 : france.example.com:1389
H2 : spain:1389
H3 : portugal:389

   | H1 | H2 | H3 |
===+===============
H1 |    | ^  |    |
---+---------------
H2 | v  |    | ^  |
---+---------------
H3 |    | v  |    |
---+---------------
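To make the adjacency matrix easier to read alongside insync output, it can be turned into a list of supplier-to-consumer edges. The following Python sketch applies the legend printed by repldisc; the function name and the edge representation are assumptions made for illustration, and the parser relies only on the row layout shown in the example.

```python
def parse_adjacency(matrix_lines, hosts):
    """Convert repldisc matrix rows into (supplier, consumer) edges.

    hosts is the list of column labels in header order, e.g. ["H1", "H2", "H3"].
    """
    edges = set()
    for line in matrix_lines:
        cells = [c.strip() for c in line.split("|")]
        row = cells[0]
        if row not in hosts:
            continue  # header row or separator line
        for col, mark in zip(hosts, cells[1:]):
            if mark == "^":      # host on row sends to host on column
                edges.add((row, col))
            elif mark == "v":    # host on row receives from host on column
                edges.add((col, row))
            elif mark == "x":    # both hosts are in MM mode
                edges.add((row, col))
                edges.add((col, row))
    return sorted(edges)


matrix = [
    "   | H1 | H2 | H3 |",
    "===+===============",
    "H1 |    | ^  |    |",
    "---+---------------",
    "H2 | v  |    | ^  |",
    "---+---------------",
    "H3 |    | v  |    |",
    "---+---------------",
]

for supplier, consumer in parse_adjacency(matrix, ["H1", "H2", "H3"]):
    print(supplier, "->", consumer)
```

Read this way, the example matrix describes a chain in which H1 supplies H2 and H2 supplies H3.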

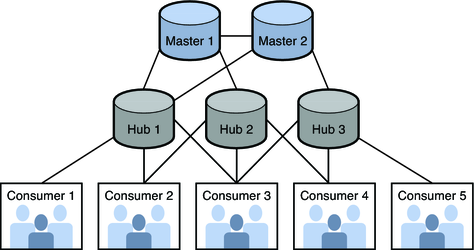

In this example, two masters replicate to three hubs, which in turn replicate to five consumers.

Replication is not working, and fatal errors appear in the log on consumer 4.

However, because replication is a topology-wide feature, we check whether other consumers in the topology are also experiencing a problem, and we see that consumers 3 and 5 also have fatal errors in their error logs. Using this information, we see that the potential participants in the problem are consumers 3, 4, and 5, hubs 2 and 3, and masters 1 and 2. We can safely assume that consumers 1 and 2 and hub 1 are not involved.
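Narrowing down the potential participants amounts to walking upstream from each failing consumer through its suppliers. The sketch below illustrates that walk in Python. The wiring in SUPPLIERS is hypothetical: it is one arrangement consistent with the narrative (hub 2 feeding consumers 3 and 4, hub 3 feeding consumer 5), not data taken from a real deployment, and the server names are placeholders.

```python
from collections import deque

# Hypothetical wiring: each server maps to the servers that supply it.
SUPPLIERS = {
    "master1": ["master2"],
    "master2": ["master1"],
    "hub1": ["master1", "master2"],
    "hub2": ["master1", "master2"],
    "hub3": ["master1", "master2"],
    "consumer1": ["hub1"],
    "consumer2": ["hub1"],
    "consumer3": ["hub2"],
    "consumer4": ["hub2"],
    "consumer5": ["hub3"],
}


def potential_participants(suppliers, failing):
    """Return the failing servers plus every server upstream of them."""
    seen = set(failing)
    queue = deque(failing)
    while queue:
        node = queue.popleft()
        for supplier in suppliers.get(node, []):
            if supplier not in seen:
                seen.add(supplier)
                queue.append(supplier)
    return seen


involved = potential_participants(SUPPLIERS, ["consumer3", "consumer4", "consumer5"])
print(sorted(involved))
print(sorted(set(SUPPLIERS) - involved))  # servers that can be ruled out
```

With this assumed wiring, the walk reaches hubs 2 and 3 and both masters, and leaves hub 1 and consumers 1 and 2 outside the set.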

To debug this problem, we need to collect at least the following information from the replication participants:

Topology-wide data, using the output of the insync and repldisc commands.

Information about the CSN or CSNs that are blocking, using the RUV of masters 1 and 2 and consumer 4.

Information for each potential participant in the problem, including dse.ldif, the output of nsslapd -V, and the access and errors logs (with replication logging enabled) from around the date when the blocking CSN was created.

Information about the replication participants that are functioning correctly and are most likely not involved in the problem, including dse.ldif, the output of nsslapd -V, and the access and errors logs (with replication logging enabled).

With this data we can now identify where the delays start. Looking at the output of the insync command, we see delays from hub 2 of 3500 seconds, so this is likely where the problem originates. Now, using the RUV in the nsds50ruv attribute, we can find the operation that is at the origin of the delay. We look at the RUVs across the topology to see the last CSN to appear on a consumer. In our example, master 1 has the following RUV:

replica 1: CSN05-1 CSN91-1
replica 2: CSN05-2 CSN50-2

Master 2 contains the following RUV:

replica 2: CSN05-2 CSN50-2
replica 1: CSN05-1 CSN91-1

They appear to be perfectly synchronized. Now we look at the RUV on consumer 4:

replica 1: CSN05-1 CSN35-1
replica 2: CSN05-2 CSN50-2

The problem appears to be related to the change that immediately follows the change associated with CSN 35 on master 1. The change associated with CSN 35 is the most recent change from master 1 that was replicated to consumer 4. By using the grep command to search the access logs of the replicas for CSN35-1, we can find the time around which the problem started. Troubleshooting should begin from this particular point in time.
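The RUV comparison above can be expressed as a small Python sketch. It uses the simplified CSN notation of this example (CSN followed by a sequence number and the replica ID), not the format of CSNs on a real server, and the function names are assumptions made for illustration.

```python
import re

CSN_RE = re.compile(r"CSN(\d+)-(\d+)")
RUV_LINE_RE = re.compile(r"\s*replica (\d+):\s*(\S+)\s+(\S+)")


def parse_ruv(text):
    """Map each replica ID to (first sequence number, last sequence number)."""
    ruv = {}
    for line in text.splitlines():
        match = RUV_LINE_RE.match(line)
        if not match:
            continue
        first = int(CSN_RE.fullmatch(match.group(2)).group(1))
        last = int(CSN_RE.fullmatch(match.group(3)).group(1))
        ruv[int(match.group(1))] = (first, last)
    return ruv


def lagging(reference, candidate):
    """Return {replica ID: (candidate last, reference last)} where candidate is behind."""
    behind = {}
    for rid, (_, ref_last) in reference.items():
        cand_last = candidate.get(rid, (None, -1))[1]
        if cand_last < ref_last:
            behind[rid] = (cand_last, ref_last)
    return behind


master1 = parse_ruv("replica 1: CSN05-1 CSN91-1\nreplica 2: CSN05-2 CSN50-2")
master2 = parse_ruv("replica 2: CSN05-2 CSN50-2\nreplica 1: CSN05-1 CSN91-1")
consumer4 = parse_ruv("replica 1: CSN05-1 CSN35-1\nreplica 2: CSN05-2 CSN50-2")

print(lagging(master1, master2))    # the two masters agree
print(lagging(master1, consumer4))  # consumer 4 is behind for replica 1
```

The second comparison singles out replica 1 and shows that consumer 4 stopped at sequence number 35 while master 1 is at 91, which is the CSN to search for in the access logs.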

As discussed in Defining the Scope of Your Problem, it can be helpful to have information from a system that is working to help identify where the trouble occurs. So we collect data from hub 1 and consumer 1, which are functioning as expected. Comparing the data from the servers that are functioning, focusing on the time when the trouble started, we can identify differences. For example, maybe the hub is being replicated from a different master or a different subnet, or maybe it contains a different change just before the change at which the replication problem occurred.

Depending on the symptoms of your problem, your troubleshooting actions will be different.

For example, if you see nothing in the access logs of the consumers, a network problem may be the cause of the replication failure. Reinitialization is not required.

If the errors log shows that a particular entry cannot be found in the change log, the master's change log is not up to date. You may or may not need to reinitialize your topology, depending on whether you can locate an up-to-date change log somewhere in your replication topology (for example, on a hub or another master).

If the consumer has problems, for example if it goes into processing loops or aborts locks, look in the access log for a large number of retries for a particular CSN. Run the replcheck tool to locate the CSN at the root of the replication halt and to repair this entry in the change log.