11 Configuring High Availability for Enterprise Content Management

Oracle Enterprise Content Management (ECM) Suite, an Oracle Fusion Middleware component, is an integrated suite of products designed for managing content. It is the industry's most unified enterprise content management platform, which enables you to leverage industry-leading document management, Web content management, digital asset management, and records management functionality to build your business applications. Building a strategic enterprise content management infrastructure for content and applications helps you to reduce costs, easily share content across the enterprise, minimize risk, automate expensive, time-intensive and manual processes, and consolidate multiple Web sites onto a single platform.

Oracle ECM offers the following benefits:

-

Superior usability: Built-in support for end users, workgroups, content experts, process owners, administrators, and webmasters

-

Optimized management: Unified architecture for securely managing documents, files, web content and digital assets

-

Hot-pluggable: Out-of-the-box support for Oracle and third-party repositories, security systems, and enterprise applications

This chapter describes some of the Oracle Enterprise Content Management components from a high availability perspective, and outlines the single-instance concepts that are important for designing a high availability deployment.

The chapter includes the following topics:

-

Section 11.1, "Oracle Imaging and Process Management High Availability"

-

Section 11.2, "Oracle Universal Content Management High Availability"

-

Section 11.3, "Oracle ECM High Availability Configuration Steps"

11.1 Oracle Imaging and Process Management High Availability

This section provides an introduction to Oracle Imaging and Process Management (Oracle I/PM) and describes how to design and deploy a high availability environment for Oracle I/PM.

11.1.1 Oracle I/PM Component Architecture

Oracle I/PM provides organizations with a scalable solution focused on process-oriented imaging applications and image-enabling business applications, such as Oracle E-Business Suite and PeopleSoft Enterprise. It enables annotation and markup of images, automates routing and approvals, and supports high-volume applications for trillions of items.

Oracle I/PM is a transactional content management tool; it manages each document as part of a business transaction or process. A transactional document is important to you mostly during the time when the transaction is occurring, typically a relatively short period of time.

Figure 11-1 illustrates the Oracle I/PM internal components (components inside the Oracle I/PM package).

Figure 11-1 Oracle I/PM Components for a Single Instance


Note the following before you begin setting up an Oracle I/PM configuration:

-

Oracle I/PM is a relatively thin layer of functionality on top of the Oracle Universal Content Management (Oracle UCM) repository; it provides an imaging facade on Oracle UCM. Because the repository accounts for most of the resource utilization, Oracle recommends co-locating an Oracle UCM instance with every Oracle I/PM instance. This configuration makes good use of the hardware.

-

Oracle I/PM's largest performance factor is the movement of document content in and out of the system. Oracle I/PM is usually used in high-volume scenarios in which hundreds of thousands of documents are ingested per day and are then searched for, retrieved, and viewed frequently during the day. The TIF documents that Oracle I/PM stores average about 25 KB in size, although multi-page TIF documents are larger. Oracle I/PM also supports 400 other file formats of varying sizes, including larger ones.

11.1.1.1 Oracle I/PM Component Characteristics

Oracle I/PM is a JEE application that runs in the WebLogic Server Managed Server environment. It includes the following components and subcomponents:

-

Servlets: Oracle I/PM has a servlet for internal use that provides a mechanism for the REST API and I/PM Applet Viewer to access the public API functions of the system.

-

EJBs: Oracle I/PM uses some EJB concepts internally; however, it has no externally facing EJBs.

-

Oracle I/PM leverages JMS to drive both its Input Agent and its BPEL Agent message-driven beans (MDBs). In Figure 11-1, the BPEL Agent is the collection of BPEL MDBs. These queues should be persistent, provide guaranteed delivery, and distribute work across the cluster.

-

Oracle I/PM leverages JAX-WS and JAX-B to implement its Web services and serialization mechanisms.

-

Oracle I/PM leverages TopLink for its definition persistence as well as configuration. The objects are generally the definitions for applications and searches. In addition, TopLink is used to get user and system preferences.

-

Oracle I/PM leverages Java Object Cache (JOC) for an internal cache of documents retrieved from the repository and being processed for viewing. These caches exist only locally, and are not shared across the servers.

-

The interaction between AXF and BPEL is done through the BPEL remote API, which uses RMI via EJB.

Note:

Oracle I/PM does not use Java Transaction API (JTA) because Oracle I/PM does not support multi-component transactions.

11.1.1.1.1 Oracle I/PM State Information

Because Oracle I/PM has a stateful UI but does not support session replication, it requires a sticky load balancing router.

Invocations to the Oracle I/PM services using the Oracle I/PM API are stateless.
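The routing requirement above can be illustrated with a small model. This is a minimal sketch that assumes a hypothetical router: it pins each UI session to one node by session ID and spreads stateless API calls round-robin across live nodes. It does not describe how Oracle HTTP Server or any particular load balancer implements stickiness. It also shows why a node failure costs the user their UI session: Oracle I/PM does not replicate sessions.

```python
from itertools import cycle


class StickyRouter:
    """Hypothetical router: UI sessions are pinned, stateless API calls are spread."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.alive = set(self.nodes)
        self.sessions = {}             # session ID -> pinned node
        self._rr = cycle(self.nodes)   # round-robin order over all nodes

    def _next_alive(self):
        # Walk the round-robin order until a live node is found.
        for _ in range(len(self.nodes)):
            node = next(self._rr)
            if node in self.alive:
                return node
        raise RuntimeError("no live nodes")

    def route_ui(self, session_id):
        """Return (node, session_lost) for a UI request."""
        node = self.sessions.get(session_id)
        if node in self.alive:
            return node, False         # same node, session state intact
        # New session, or the pinned node failed. Session state is not
        # replicated, so a re-pinned user must log in again.
        lost = node is not None
        node = self._next_alive()
        self.sessions[session_id] = node
        return node, lost

    def route_api(self):
        # Stateless API invocations can go to any live node.
        return self._next_alive()

    def fail(self, node):
        self.alive.discard(node)
```

Production load balancers usually track stickiness with a cookie rather than a lookup table. The table here keeps the sketch self-contained.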

11.1.1.1.2 Oracle I/PM Runtime Processes

Oracle I/PM's core uses a service-based architecture with a central API, a UI, and a Web services interface. The ADF-based UI uses Oracle I/PM's public API to access the core services. End users can also access this API through Web services. Oracle I/PM also provides a thin Java client library that packages those Web services more conveniently for Java developers.

Oracle I/PM has these agents to perform background processing:

-

The Input Agent watches an input directory for incoming lists of documents to ingest.

-

The BPEL Agent responds to document create events and creates new BPEL process instances with metadata from the document and a URL to the document attached. In Figure 11-1, the BPEL Agent is the collection of BPEL MDBs.

-

The Ticket Agent is a stateless EJB that is invoked by a timer; it is not shown in Figure 11-1. The Ticket Agent identifies and removes expired URL tickets. These tickets are used to create clean URLs without parameters.
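The Ticket Agent's job of removing expired tickets on a timer can be pictured with the following sketch. It assumes tickets live in an in-memory map from a hypothetical ticket ID to an expiry timestamp. The real agent is a stateless EJB whose storage is not described here, so all names are illustrative only.

```python
import time


def purge_expired_tickets(tickets, now=None):
    """Remove expired URL tickets in place and return the removed IDs.

    tickets: dict mapping a (hypothetical) ticket ID to its expiry time,
    in seconds since the epoch.
    """
    now = time.time() if now is None else now
    expired = [tid for tid, expires_at in tickets.items() if expires_at <= now]
    for tid in expired:
        del tickets[tid]
    return expired


def run_ticket_agent(tickets, interval_s, cycles, sleep=time.sleep):
    """Simulate the timer: each tick performs one independent sweep."""
    removed = []
    for _ in range(cycles):
        removed.extend(purge_expired_tickets(tickets))
        sleep(interval_s)
    return removed
```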

The Oracle I/PM service uses the Oracle UCM document repository to store its scanned documents. The Oracle UCM repository is a standalone product. Oracle I/PM communicates with it through RIDC (Remote Intradoc Client), a TCP/IP socket-based connection mechanism provided by Oracle UCM.

11.1.1.1.3 Oracle I/PM Process Lifecycle

Oracle I/PM is a JEE application that runs in a WebLogic Server Managed Server. It does not provide its own failure (death) detection, but you can use WebLogic Server Node Manager to keep the server running.

The steps in the Oracle I/PM process lifecycle are:

-

Oracle I/PM is mostly initialized after the domain configuration is performed. The remaining initialization happens when Oracle I/PM starts and the first user logs on.

-

When the first user logs on, that user is granted full security, on the assumption that the user can subsequently grant permissions to others. Oracle I/PM provides a self-seeding database security mechanism: if Oracle I/PM starts and no security is defined, the first user to log in to the system is granted access to everything.

-

After its first startup, Oracle I/PM is "empty." The administrative user is responsible for creating connections to the Oracle UCM document repository and to the BPEL servers.

-

Once the first UCM connection is created, the Oracle UCM repository initializes for Oracle I/PM's use.

-

Next, the appropriate business objects must be created, including Applications, Searches, and Inputs. The administrative user may choose to import these business objects rather than create them from scratch.

-

If the Agent User was specified in the first step, the agents wait for JMS queue events to begin their work after the servers have been restarted.

-

After the server is running and the JAX-WS mechanics are ready, Oracle I/PM can receive client requests.
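The self-seeding security rule from the lifecycle above can be illustrated with a short sketch. It assumes a hypothetical in-memory permission store and hypothetical permission names. Oracle I/PM actually keeps its security definitions in its database, and none of these names are part of an Oracle API.

```python
# Hypothetical permission names, for illustration only.
FULL_ACCESS = frozenset({"view", "create", "modify", "delete", "grant"})


class SecurityStore:
    """Hypothetical stand-in for the I/PM security definitions."""

    def __init__(self):
        self.grants = {}  # user name -> set of permissions

    def on_login(self, user):
        # Self-seeding: if no security is defined yet, the first user to
        # log in receives full access so that they can grant access to others.
        if not self.grants:
            self.grants[user] = set(FULL_ACCESS)
        return self.grants.get(user, set())

    def grant(self, grantor, user, permissions):
        if "grant" not in self.grants.get(grantor, set()):
            raise PermissionError(f"{grantor} cannot grant permissions")
        self.grants.setdefault(user, set()).update(permissions)
```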

11.1.1.1.4 Oracle I/PM Configuration Artifacts

Oracle I/PM has the following configuration artifacts:

-

All Oracle I/PM configuration data is stored within the Oracle I/PM database. Configuration does not reside in local files. The configuration parameters are exposed either through the appropriate MBeans (available from Enterprise Manager or WLST) or through Oracle I/PM's UI.

-

The other Oracle I/PM configuration artifacts are the definition objects, which are described in Section 11.1.1.1.5, "Oracle I/PM External Dependencies."

-

Oracle I/PM uses Oracle UCM mechanisms for customizing Oracle UCM for use with Oracle I/PM, including management of UCM Profiles and Information Fields.

11.1.1.1.5 Oracle I/PM External Dependencies

Oracle I/PM has the following external dependencies:

-

Oracle I/PM requires a database to store its configuration. The database is initially created with the Repository Creation Utility (RCU) and is managed through WebLogic Server JDBC data sources. The database is accessed through TopLink.

-

Oracle I/PM leverages a variety of Oracle and Java technologies, including JAX-WS, JAX-B, ADF, TopLink, and JMS. These are included with the installation and do not require external configuration.

-

Oracle I/PM provides MBeans for configuration. These are available through WLST and the Enterprise Manager MBean browser. Oracle I/PM also provides a few custom WLST commands.

-

Oracle I/PM depends on the existence of an Oracle UCM repository.

The following clients are dependent upon Oracle I/PM:

-

The Oracle I/PM UI is built on its public toolkit (the Oracle I/PM API). The UI and core API are distributed in the same EAR file.

-

Oracle I/PM provides Web services for integrations with other products, and provides a set of Java classes that wrap those Web services as a Java convenience for integrating with other products.

-

Oracle I/PM provides a URL toolkit to facilitate other applications interacting with Oracle I/PM searches and content.

-

Oracle I/PM provides a REST toolkit for referencing individual pages of documents for web presentation.

Clients connect to Oracle I/PM as follows:

-

Through JAX-WS, for the Web services and the Java client. These connections are stateless and perform a single function.

-

Through HTTP for access to URL and REST tools:

-

REST requests are stateless and perform a single function.

-

URL tools log into the Oracle I/PM UI and provide a more stateful experience with the relevant UI components.


11.1.1.1.6 Oracle I/PM Log File Location

Oracle I/PM is a JEE application deployed on WebLogic Server. All log messages are logged in the server log files of the WebLogic Server on which the application is deployed.

The default location of the diagnostics log file is:

WL_HOME/user_projects/domains/domainName/servers/serverName/logs/serverName-diagnostic.log

You can use Oracle Enterprise Manager to easily search and parse these log files.

11.1.2 Oracle I/PM High Availability Concepts

This section provides conceptual information about using Oracle I/PM in a high availability two-node cluster.

11.1.2.1 Oracle I/PM High Availability Architecture

Figure 11-2 shows an Oracle I/PM high availability architecture:

Figure 11-2 Oracle I/PM High Availability Architecture Diagram


Oracle I/PM can be configured in a standard two-node active-active high availability configuration. Although Oracle I/PM's UI layer does not fail over between active servers, its background processes do.

In the Oracle I/PM high availability configuration shown in Figure 11-2:

-

The Oracle I/PM nodes are exact replicas of each other. All the nodes in a high availability configuration perform the exact same services and are configured through the centralized configuration database, from which all servers pull their configuration.

-

A load balancing router with sticky session routing must front-end the Oracle I/PM nodes. Oracle HTTP Server can be used for this purpose.

-

Oracle I/PM can run either within a WebLogic Server cluster or outside of one.

-

All the Oracle I/PM instances must share the same database connectivity. This can be Oracle RAC for increased high availability potential. Oracle I/PM uses TopLink for database actions.

-

Oracle I/PM requires one common shared directory from which its Input Agent draws inbound data files staged for ingestion. After an Input Agent pulls an input definition file, processing of that file and its associated images stays isolated to that WebLogic Server instance.

11.1.2.1.1 Starting and Stopping the Cluster

Oracle I/PM's agents (Input Agent, BPEL Agent) are started as an integral part of the Oracle I/PM application residing in each WebLogic Server in the cluster. They begin processing immediately, based on outstanding work sitting in the JMS queues, which are persisted and preserved across failures. The Input Agent also looks for work in its inbound file directory. If there are files to be processed, they are pushed to the corresponding JMS queues, and a server in the cluster consumes them and processes the associated images. The numbers of threads dedicated to the BPEL Agent and Input Agent are controllable through the corresponding WebLogic Server Work Managers.

When the servers in a cluster are stopped, the agents finish their activity. The BPEL Agent has a short run cycle, and all its work is likely to be completed before shutdown. In-flight BPEL invocations are retried three times, and JMS logs preserve pending operations. The Input Agent can process files of considerable size; a large input file can take hours to process. Any work pending after a stop is preserved in the associated JMS persistence stores. When the server hosting the Input Agent is restarted, the agent resumes processing where it left off. After a restart or server migration, processing of image files continues in the server that initially consumed the corresponding input file.

11.1.2.1.2 Cluster-Wide Configuration Changes

Oracle I/PM configuration is stored in the Oracle I/PM database and shared by all the Oracle I/PM instances in the cluster.

The number of threads for the BPEL Agent and Input Agent for a particular Oracle I/PM instance is a WebLogic Server property (stored as configuration of the Oracle I/PM application) and is controlled through the Administration Console or with WLST commands.

11.1.2.2 Protection from Failures and Expected Behaviors

Follow these recommendations to protect the Oracle I/PM high availability configuration from failure:

-

Use a load balancing router with sticky session routing to front-end the Oracle I/PM nodes. Oracle HTTP Server can be used for this purpose.

-

Configure timeouts for the load balancing router based on the size of the documents being uploaded or retrieved by Oracle I/PM for viewing.
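One way to reason about these timeouts is to derive them from the largest document you expect to move and the slowest client bandwidth you need to support. The sketch below is a hypothetical heuristic, not an Oracle-documented formula. The base overhead and safety factor are assumptions to tune for your environment.

```python
import math


def lb_timeout_seconds(max_doc_bytes, min_bandwidth_bytes_per_s,
                       base_overhead_s=30, safety_factor=2.0):
    """Estimate a load balancer timeout for document uploads and retrievals.

    Transfer time is size / bandwidth. A fixed allowance for server-side
    processing (base_overhead_s) is added, and the total is multiplied by a
    safety factor. Both values are assumptions, not Oracle defaults.
    """
    transfer_s = max_doc_bytes / min_bandwidth_bytes_per_s
    return math.ceil((transfer_s + base_overhead_s) * safety_factor)
```

For example, supporting 50 MB documents over a 1 MB/s link gives (50 + 30) × 2 = 160 seconds.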

Also, the following features help protect the Oracle I/PM high availability configuration from failure:

-

JMS failover is provided by WebLogic Server's JMS implementation.

-

TopLink provides database retry logic.

-

For connections to Oracle UCM, retry logic performs immediate retry attempts to resolve network glitches. Oracle I/PM also provides a failover mechanism to find other Oracle UCM servers in a cluster. You can additionally place a load balancer between Oracle I/PM and Oracle UCM to achieve load balancing and failover goals.

-

For connections to BPEL, Oracle I/PM performs an immediate retry attempt and then pushes failed items back into the associated JMS queue for retry after a longer timeout (5 minutes). This JMS retry mechanism is performed three times. Any objects that continue to fail go into a holding queue for user intervention.

-

API-level integrations (such as JPS) are not retried; they are assumed to have their own internal retry logic.
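The BPEL retry sequence described above can be simulated as follows. This is a sketch under stated assumptions: the JMS queue is modeled as an in-memory deque of (due time, item) pairs, and the helper names are hypothetical. "Performed three times" is read here as three requeues before the item is parked in the holding queue. The actual Oracle I/PM implementation is not shown in this guide.

```python
from collections import deque

IMMEDIATE_ATTEMPTS = 2      # first attempt plus one immediate retry
REQUEUE_DELAY_S = 5 * 60    # wait before the JMS message is redelivered
MAX_JMS_RETRIES = 3         # requeues before the item is parked (assumed reading)


def deliver_to_bpel(item, invoke, jms_queue, holding_queue, now):
    """Process one item from the simulated JMS queue.

    item: dict with a 'retries' counter; invoke: callable that raises
    ConnectionError when BPEL is unreachable. Returns True on success.
    """
    for _ in range(IMMEDIATE_ATTEMPTS):
        try:
            invoke(item)
            return True
        except ConnectionError:
            pass
    if item["retries"] < MAX_JMS_RETRIES:
        item["retries"] += 1
        # Push back onto the queue; not redelivered until the delay elapses.
        jms_queue.append((now + REQUEUE_DELAY_S, item))
    else:
        holding_queue.append(item)   # left for user intervention
    return False


def drain(jms_queue, invoke, holding_queue):
    """Redeliver queued items; the simulation jumps straight to each due time."""
    while jms_queue:
        due, item = jms_queue.popleft()
        deliver_to_bpel(item, invoke, jms_queue, holding_queue, now=due)
```

With BPEL permanently down, an item is delivered four times (once initially, then three requeues) and ends up in the holding queue.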

An Oracle I/PM node failure affects the Agent processes for that node. Any unfinished transactions are abandoned and lost. During a failover, the user sessions in the Oracle I/PM UI for that node are lost, and users must log in again and restart their work. Because each Oracle I/PM cluster node is independent, failover is managed by the load balancing router and not by Oracle I/PM directly.

Because all the Oracle I/PM configuration information is stored in the database and all the nodes are replicas of each other, the configuration information is immediately available to the new node after failover.

These are the implications of an Oracle I/PM node failure on external resources:

-

For JMS transactions, the assumption is that atomic actions done to JMS are internally transactional, and that any messages received from the queue but not completed will be resubmitted. BPEL Agent and Input Agent failure recovery relies on persistence of the local messages to a file system. Messages held on the failed node are processed when that node restarts; other messages are picked up by the nodes that are still running.

-

For database transactions, the database is not responsible for a client failure, except that it forces an implicit rollback when the disconnect occurs. A database outage is handled by the database's own facilities (for example, failover when an Oracle RAC database is used, and no failover when a single-node database is used). If a node fails in the middle of a database access, the user will most likely need to log in again and retry the UI operation.

-

User sessions within Oracle I/PM are lost during an Oracle I/PM server failure. If you are uploading documents through the Oracle I/PM UI and an Oracle I/PM server failure occurs, it is possible that you will not receive confirmation of successful manual document uploads. In this case, it is recommended that you search for the existence of the documents that you were uploading when the server failure occurred before you attempt to upload them again; this will help avoid the creation of duplicate documents.

If an external application accesses an Oracle I/PM cluster through a Web service invocation, the front-end load balancer is expected to provide the appropriate failover logic when an Oracle I/PM server fails. If the application interacts with the Oracle I/PM system by pushing files directly to the input directory, it is the application's responsibility to retry if a failure occurs in the file system.

If an Input definition file is being processed during the time of the outage, Oracle I/PM will maintain the file details and upon restart of that specific node, the local JMS queue will respawn that JMS message for retry. Upon retry, the Input Ingestor will attempt to determine where specifically in the file the shutdown occurred, to pick up where it left off so that no documents from the batch are lost. The definition file is maintained in the Stage directory until the specific node that was processing the definition file is able to fully restart and finish the file.
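The resume-where-it-left-off behavior can be pictured with a simple checkpointing loop. This is a minimal sketch that assumes a hypothetical JSON checkpoint file recording how many documents have been ingested. It is not the Input Ingestor's actual mechanism, which this guide does not describe. Note one limitation: a failure between ingesting a document and writing the checkpoint would ingest that document twice on restart.

```python
import json
import os


def process_input_file(lines, checkpoint_path, ingest, fail_at=None):
    """Ingest each document line, recording progress in a checkpoint file
    so that a restart resumes where the previous run stopped.

    fail_at: optional index used only to simulate a node failure.
    """
    done = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            done = json.load(f)["done"]
    for index in range(done, len(lines)):
        if fail_at is not None and index == fail_at:
            raise RuntimeError("simulated node failure")
        ingest(lines[index])
        # Record progress after each document is ingested.
        with open(checkpoint_path, "w") as f:
            json.dump({"done": index + 1}, f)
    # The whole file is finished; the checkpoint is no longer needed.
    if os.path.exists(checkpoint_path):
        os.remove(checkpoint_path)
```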

The input file is the smallest unit of work that the Input Agent can schedule and process. There are multiple elements to be taken into consideration to achieve the highest performance, scalability, and high availability:

-

All of the machines in an Oracle I/PM cluster share a common input directory.

-

Input files from this directory are distributed to each machine via a JMS queue.

-

The frequency with which the directory is polled for new files is configurable through the CheckInterval MBean configuration setting.

-

Each machine has a specified number of Input Ingestors, which are responsible for parsing the Input Definition files. The number of ingestors is controlled through the Work Manager for the Input Agent and is managed by WebLogic Server.
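The polling and distribution described in this list can be sketched as follows. It assumes each poller claims a file by renaming it into a hypothetical stage directory before queuing it, so that a file is handed out only once even when several nodes poll the same shared directory. An in-process queue.Queue stands in for the JMS queue. This illustrates the pattern only; it is not how Oracle I/PM implements it.

```python
import os
import queue
import time


def poll_once(input_dir, stage_dir, work_queue: queue.Queue):
    """One polling pass over the shared input directory.

    Each new file is claimed by renaming it into the stage directory before
    it is queued. os.rename is atomic on POSIX within one file system, so only
    one poller can claim a given file.
    """
    claimed = []
    for name in sorted(os.listdir(input_dir)):
        src = os.path.join(input_dir, name)
        dst = os.path.join(stage_dir, name)
        try:
            os.rename(src, dst)
        except FileNotFoundError:
            continue  # another poller claimed it first
        work_queue.put(dst)
        claimed.append(name)
    return claimed


def poll_loop(input_dir, stage_dir, work_queue: queue.Queue,
              check_interval_s, passes, sleep=time.sleep):
    # check_interval_s plays the role of the CheckInterval setting.
    for _ in range(passes):
        poll_once(input_dir, stage_dir, work_queue)
        sleep(check_interval_s)
```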

The optimum performance will be achieved when:

-

Each Oracle I/PM cluster node has the maximum affordable number of parsing agents configured via the Work Manager without compromising the performance of the other I/PM activities, such as the user interface and Web services.

-

The inbound flow of documents is partitioned into input files containing the appropriate number of documents. On average there should be two input files queued for every parsing agent within the cluster.

-

If one or more machines within a cluster fails, active machines will continue processing the input files. Input files from a failed machine will remain in limbo until the server is restarted. Smaller input files ensure that machine failures do not place large numbers of documents into this limbo state.

For example, consider 10,000 inbound documents per hour being processed by two servers. A configuration of 2 parsing agents per server produces acceptable overall performance and ingests 2 documents per second per agent. Four parsing agents at 2 documents per second each process 8 documents per second, or 28,800 documents per hour. Note that a single input file of 10,000 documents will not be processed within an hour, because a single parsing agent working at 7,200 documents per hour cannot complete it. However, if you divide the single input file into 8 input files of 1,250 documents, all 4 parsing agents are fully used, and the 10,000 documents are completed within the one-hour period. Also, if one of the servers fails, the other can continue processing the work remaining on its parsing agents until that work is completed.
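The arithmetic in this example can be checked with a small helper. The sketch assumes files are split evenly and distributed evenly across agents. Because an input file is the smallest unit of work, the busiest agent, which processes whole files only, determines the finish time. The function name is illustrative.

```python
import math


def ingest_hours(docs, files, servers, agents_per_server, docs_per_sec_per_agent):
    """Estimate wall-clock hours to ingest `docs` split evenly into `files` files.

    One input file is processed by exactly one parsing agent, so the busiest
    agent (ceil(files / agents) whole files) sets the finish time.
    """
    agents = servers * agents_per_server
    docs_per_file = math.ceil(docs / files)
    files_per_busiest_agent = math.ceil(files / agents)
    seconds = files_per_busiest_agent * docs_per_file / docs_per_sec_per_agent
    return seconds / 3600
```

With the figures from the example, a single 10,000-document file takes about 1.39 hours because only one agent can work on it. Eight files of 1,250 documents finish in 1,250 seconds, or about 21 minutes.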

Outages in Components on Which Oracle I/PM Depends

Oracle I/PM integrates with Oracle SOA Suite. If the SOA server is taken down, Oracle I/PM will experience failures. Oracle I/PM will retry actions three times. Each attempt is followed by a configurable delay time before it is repeated. If after the third attempt the action cannot be completed, then these requests are queued in a database table to be resubmitted at a later time. The resubmission is accomplished through WLST commands.

Oracle I/PM is not dependent on Oracle WebCenter.

Other Oracle ADF applications do not affect the operation of Oracle I/PM.

The Oracle I/PM UI allows you to specify a list of Oracle UCM servers to which it connects: a primary server and, optionally, multiple secondary servers. If the primary UCM server fails, Oracle I/PM tries the next UCM server in the list. If all the UCM servers are down, an exception is returned and traced in the Oracle I/PM server's log.
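The primary/secondary behavior can be sketched as an ordered failover loop. The sketch assumes a hypothetical `send(server, request)` transport that raises ConnectionError when a server is unreachable. The host names, port, and request string are illustrative only.

```python
class RepositoryUnavailable(Exception):
    """Raised when no configured UCM server accepted the request."""


def call_with_failover(servers, request, send, log):
    """Try the primary server first, then each secondary in list order.

    servers: ordered list, primary first (hypothetical host:port strings).
    send: callable(server, request) that raises ConnectionError on failure.
    log: callable used to trace errors when every server has failed.
    """
    errors = []
    for server in servers:
        try:
            return send(server, request)
        except ConnectionError as exc:
            errors.append(f"{server}: {exc}")
    # Every server failed: trace the errors and surface an exception.
    for line in errors:
        log(line)
    raise RepositoryUnavailable("all UCM servers are down")
```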

11.1.2.3 Creation of Oracle I/PM Artifacts in a Cluster

Searches, inputs, applications (all Oracle I/PM structural configurations) are stored within the Oracle I/PM database. They become immediately available to all machines in the cluster, including the Web UI layers. The Web UI does not automatically refresh when a new entity is created in another Oracle I/PM server in the cluster or in the same server by a different user session; however, you can refresh your web browser to cause a navigation bar refresh for the imaging application.

11.1.2.4 Troubleshooting Oracle I/PM

This section describes how to troubleshoot the Oracle I/PM server, Advanced Viewer, and Input Agent in a high availability environment.

Additional Oracle I/PM troubleshooting information can be found in Oracle Fusion Middleware Administrator's Guide for Oracle Imaging and Process Management.

Oracle I/PM Server Troubleshooting

Oracle I/PM logs all server messages to the diagnostic log of the WebLogic Server on which it is deployed. For the default location, see Section 11.1.1.1.6, "Oracle I/PM Log File Location." You can use Oracle Enterprise Manager to search and parse these log files.

Advanced Viewer Troubleshooting

Oracle I/PM primarily provides server-side logging, although it also has a client-side component, the Advanced Viewer. This Java applet uses the standard Java logging utilities; however, these utilities are disabled on the client. You can direct the logging output to either a file or the Java Console by making the appropriate changes to the logging.properties file for the Java plug-in.

Input Agent Troubleshooting

If Oracle Universal Content Management fails while the Input Agent for Oracle I/PM is uploading documents, a message such as the following is generated:

Filing Invoices Input completed successfully with 48 documents processed successfully out of 50 documents.

In addition, an exception similar to this is generated:

[2009-07-01T16:43:01.549-07:00] [IPM_server2] [ERROR] [TCM-00787]
[oracle.imaging.repository.ucm] [tid: [ACTIVE] .ExecuteThread: '10' for queue:
'weblogic.kernel.Default (self-tuning)'] [userId: <anonymous>] [ecid:
0000I8sgmWtCgon54nM6Ui1AIvEo00005N,0] [APP: imaging#11.1.1.1.0]
A repository error has occurred.
Contact your system administrator for assistance.
[[oracle.imaging.ImagingException: TCM-00787: A repository error has occurred.
Contact your system administrator for assistance.
stackTraceId: 5482-1246491781549
faultType: SYSTEM
faultDetails:
ErrorCode = oracle.stellent.ridc.protocol.ServiceException,
ErrorMessage = Network message format error.
at oracle.imaging.repository.ucm.UcmErrors.convertRepositoryError(UcmErrors.java:109)
...

The Input Agent handles errors by placing the failed documents in an error file, so this behavior and the error messages that are generated under these circumstances are expected.

11.2 Oracle Universal Content Management High Availability

This section provides an introduction to Oracle Universal Content Management (Oracle UCM) and describes how to design and deploy a high availability environment for Oracle UCM.

11.2.1 Oracle Universal Content Management Component Architecture

Figure 11-3 shows the Oracle UCM single instance architecture.

Figure 11-3 Oracle Universal Content Management Single Instance Architecture


An instance of Oracle UCM runs within an Oracle WebLogic Managed Server environment. WebLogic Server is responsible for the lifecycle of the Oracle UCM application, such as starting and stopping it.

There are two entry points into the Oracle UCM application. One is a listening TCP socket, which can accept connections from clients such as Oracle I/PM or Oracle WebCenter. The second is an HTTP listening port, which can accept Web services invocations. The HTTP port is the same port as the Managed Server listen port.

The UCM Server also contains several embedded applications that can be run as applets within the client's browser. They include a Repository Manager (RM) for file management and indexing functions, and a Workflow Admin for setting up user workflows. For more information on any of these applications, refer to the Oracle Fusion Middleware Application Administrator's Guide for Content Server.

The UCM Server has three types of persistent stores: the repository (which can be stored in an Oracle database), the search index (which can be stored in an Oracle database), and metadata and web layout information (which can be stored on the file system).

11.2.1.1 Oracle UCM Component Characteristics

Oracle UCM is a servlet that resides in WebLogic Server. A servlet request is a wrapper of a UCM request.

Oracle UCM includes various standalone administration utilities, including BatchLoader and IdcAnalyzer.

JMS and JTA are not used with Oracle UCM.

11.2.1.1.1 Oracle UCM State Information

A request in Oracle UCM is stateless. The session can be persisted in the database to retain authentication information.

11.2.1.1.2 Oracle UCM Runtime Processes

Oracle UCM has a service-oriented architecture. Each request comes into the system as a service. The Shared Services layer parses each request and hands it to the appropriate handler. Each handler typically accesses the underlying repository to process the request. The three types of repositories and the data that they store are shown in Figure 11-3.
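The parse-and-dispatch step can be modeled with a small registry. This is a hypothetical sketch: it assumes requests arrive as query strings carrying an IdcService parameter, and the handler names other than PING_SERVER are invented for illustration. It does not reproduce Oracle UCM's Shared Services layer.

```python
from urllib.parse import parse_qsl


class SharedServices:
    """Hypothetical model of the dispatch layer: parse a request, pick a handler."""

    def __init__(self):
        self.handlers = {}  # service name -> callable(params)

    def register(self, service_name, handler):
        self.handlers[service_name] = handler

    def handle(self, raw_query):
        # Assumed request format: "IdcService=NAME&key=value&...".
        params = dict(parse_qsl(raw_query))
        service = params.pop("IdcService", None)
        handler = self.handlers.get(service)
        if handler is None:
            return {"status": 404, "error": f"unknown service {service}"}
        # Each request is served entirely by its handler; no session state is kept.
        return {"status": 200, "result": handler(params)}
```

Usage: `register("PING_SERVER", lambda p: "ok")` followed by `handle("IdcService=PING_SERVER")` returns `{"status": 200, "result": "ok"}`.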

11.2.1.1.3 Oracle UCM Process Lifecycle

You can start and stop Oracle UCM as a WebLogic Server Managed Server. The UCM Administration Server can also be used to start, stop, and configure the UCM instance; the UCM Administration Server still works in the WebLogic Server environment, although it has been deprecated in favor of the WebLogic Administration Console. The load balancer can use the PING_SERVER service to monitor the status of the UCM instance.
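A load balancer health check based on PING_SERVER can be approximated as follows. The URL layout (an `idcplg` path under the `cs` web root, with an `IdcService` parameter) is an assumption; verify it against your own installation. The host and port in the usage line are examples only. The check treats any HTTP 200 response as healthy, and any connection error, timeout, or HTTP error as unhealthy.

```python
import urllib.request


def ping_url(host, port, web_root="cs"):
    # Assumed URL layout; check the web root of your own installation.
    return f"http://{host}:{port}/{web_root}/idcplg?IdcService=PING_SERVER"


def is_ucm_healthy(url, timeout_s=5, opener=urllib.request.urlopen):
    """Return True if the PING_SERVER request answers with HTTP 200."""
    try:
        with opener(url, timeout=timeout_s) as response:
            return response.status == 200
    except OSError:
        # URLError, HTTPError, refused connections, and timeouts are all OSErrors.
        return False
```

Usage: `is_ucm_healthy(ping_url("ucm1", 16200))`. The `opener` parameter lets the check be exercised without a live server.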

During startup, Oracle UCM loads initialization files and data definitions, initializes database connections, and loads localization strings. Through the Oracle UCM internal component architecture, the startup sequence also discovers internal components that are installed and enabled on the system, and initializes those components. The Search/Indexing engine and file storage infrastructure are also initialized.

A client request is typically serviced entirely by one Oracle UCM instance. The existence of other instances in a cluster does not affect the client request.

11.2.1.1.4 Oracle UCM Configuration Artifacts

Initialization files are stored on the file system, in Oracle UCM system directories.

11.2.1.1.5 Oracle UCM Deployment Artifacts

Oracle UCM uses nostage deployment. That is, all deployment files are local.

11.2.1.1.6 Oracle UCM External Dependencies

Oracle UCM requires WebLogic Server and an external Oracle database.

The following clients depend on Oracle UCM:

-

Oracle Universal Records Management (Oracle URM)

-

Oracle Imaging and Process Management (Oracle I/PM)

-

Oracle WebCenter

Client connections are short-lived and last only for the duration of a sessionless service request.

Clients can connect to Oracle UCM using the HTTP, SOAP/Web Services, JCR, and VCR protocols.

11.2.1.1.7 Oracle UCM Log File Locations

Oracle UCM is a J2EE application deployed on WebLogic Server. Log messages are logged in the server log file of the WebLogic Server that the application is deployed on. The default location of the server log is:

WL_HOME/user_projects/domains/domainName/servers/serverName/logs/serverName-diagnostic.log

Oracle UCM can also keep logs in:

WebLayoutDir/groups/secure/logs

Oracle UCM trace files can be configured to be captured and stored in:

IntraDocDir/data/trace

To view log files using the Oracle UCM GUI, choose the UCM menu and then choose Administration > Logs.

To view trace files using the Oracle UCM GUI, choose the UCM menu and then choose System Audit Information. Click the View Server Output and View Trapped Output links to view current tracing and captured tracing.

11.2.2 Oracle UCM High Availability Concepts

This section provides conceptual information about using Oracle UCM in a high availability two-node cluster.

11.2.2.1 Oracle UCM High Availability Architecture

Figure 11-4 shows a two-node active-active Oracle Universal Content Management cluster.

Figure 11-4 Oracle Universal Content Management Two-Node Cluster


In the Oracle UCM high availability configuration shown in Figure 11-4:

-

Each node runs independently against the shared file system, the same database schema, and the same search indexes. Each client request is served completely by a single node.

-

Oracle UCM can run with a WebLogic Server cluster or an external load balancer. Oracle UCM is also transparent to Oracle RAC and multi data source configuration.

-

Oracle UCM nodes can be scaled independently, and there is limited inter-node communication. Nodes communicate via writing and reading to a shared file system. This shared file system must support synchronized write operations.

11.2.2.1.1 Starting and Stopping the Cluster

When the cluster starts, each Oracle UCM node goes through its normal initialization sequence: it parses, prepares, and caches its resources, prepares its connections, and so on. If a node is part of a cluster, in-memory replication is initiated when other cluster members are available. Any single member of the cluster, or all members, can be started at any time.

Shutting down a cluster member makes only that member unavailable to service requests. When a server is shut down gracefully, it finishes processing current requests, signals its unavailability, releases all shared resources, and closes its file and database connections. All session state is also replicated, allowing users originally connected to this cluster member to fail over to another member of the cluster.

11.2.2.1.2 Cluster-Wide Configuration Changes

At the cluster level, new Oracle UCM features or customization of behaviors can be introduced through Oracle UCM internal components. Nodes need to be restarted to pick up these new changes.

For example, the metadata model can change system-wide: a metadata field can be added, modified, or deleted, which changes the system behavior driven by that field. Such a change is picked up automatically by the cluster nodes through notification between nodes.

For changes to take effect on each cluster member, the first node must have the shared folders configured. As long as all the shared folders have the same mount point on each cluster node, the other nodes do not need to make manual changes using WebLogic Server pack/unpack.

11.2.2.2 Oracle UCM and Inbound Refinery High Availability Architecture

Oracle UCM can be configured with one or more Inbound Refinery instances to provide document conversions.

Inbound Refinery is a conversion server that manages file conversions for electronic documents such as documents, digital images, and motion video. It also provides thumbnailing functionality for documents and images, the ability to extract and use EXIF data from digital images and XMP data from electronic files generated from programs such as Adobe Photoshop and Adobe Illustrator, and storyboarding for video. For more information about Inbound Refinery, refer to Oracle Fusion Middleware Administrator's Guide for Conversion.

The number of Inbound Refinery instances to install depends on the number of conversions that need to be supported. For availability, it is recommended that at least two Inbound Refinery instances be installed. All of the Inbound Refinery instances should be made available as providers to all members of a Content Server cluster.

11.2.2.2.1 Content Server and Inbound Refinery Communication

All communication between a Content Server and an Inbound Refinery is initiated by the Content Server. This includes:

-

Regular checks of all available Inbound Refinery instances to determine their status.

-

The initiation of a job request.

-

Retrieval of completed jobs.

The status includes information on whether the Inbound Refinery is available to accept requests. This will depend on how busy the Inbound Refinery is. If no Inbound Refinery instances are available, the request is retried.

When a job is complete, this is reported in the response to the Content Server's status request, and the Content Server then initiates download of the completed job.

11.2.2.2.2 Content Server Clusters and Inbound Refinery Instances

In a Content Server cluster, the list of all available Inbound Refinery instances is shared among members of the cluster.

That is, when a new Inbound Refinery instance is added, it becomes available for any Content Server cluster member to use.

However, each individual Content Server communicates independently with all the available Inbound Refinery instances. There is no shared status in a Content Server cluster.

Likewise, all Inbound Refinery instances operate completely independently, responding to requests from Content Servers. Inbound Refinery instances do not share any information.

11.2.2.2.3 Inbound Refinery Instances and Load Balancers

Load balancers cannot be used in front of a collection of Inbound Refinery instances for the following reasons:

-

A Content Server expects to be able to contact an Inbound Refinery directly to get status and availability information.

-

When a job is finished, a Content Server must be able to access the one Inbound Refinery that has the specific job and initiate a download.

11.2.2.2.4 Inbound Refinery Availability

Requests to Inbound Refinery do not occur in real-time since all requests are asynchronous requests mediated by Content Server. Users access Content Servers, and Content Servers then delegate requests to available Inbound Refinery instances. Enough Inbound Refinery instances should be configured so that if one or more fail, there are always other Inbound Refinery instances available to service requests.

A Content Server will never mark an Inbound Refinery as "failed." If unable to contact an Inbound Refinery, then Content Server will choose another available Inbound Refinery and will continue to check the availability of all Inbound Refinery instances. It is the responsibility of a system administrator to manually remove failed Inbound Refinery instances from the Content Server lists.
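The polling behavior described in the preceding sections can be summarized as a small model. The Python sketch below is a conceptual simulation, not the Content Server implementation. RefineryProvider and ContentServerNode are hypothetical classes, and a ConnectionError stands in for an Inbound Refinery that cannot be contacted. The model shows four behaviors. Each Content Server node keeps its own record of which refinery holds which job. Unreachable refineries are skipped but never removed from the provider list. When no refinery is accepting work, the node returns None so the caller can retry. A finished job is downloaded only from the refinery that owns it, which is why a load balancer cannot sit in front of the refineries.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class RefineryProvider:
    """Hypothetical stand-in for one Inbound Refinery instance."""
    name: str
    reachable: bool = True
    accepting: bool = True
    queued: List[str] = field(default_factory=list)
    finished: List[str] = field(default_factory=list)

    def status(self) -> Tuple[bool, List[str]]:
        """Answer a Content Server status check: (accepting work?, finished job ids)."""
        if not self.reachable:
            raise ConnectionError(f"{self.name} cannot be contacted")
        return self.accepting, list(self.finished)


class ContentServerNode:
    """One Content Server cluster member; its job records are private to it."""

    def __init__(self, providers: List[RefineryProvider]) -> None:
        self.providers = providers           # provider list shared across the cluster
        self.job_owner: Dict[str, str] = {}  # job id -> refinery that holds the job

    def submit(self, job_id: str) -> Optional[str]:
        """Send the job to the first refinery that is reachable and accepting."""
        for provider in self.providers:
            try:
                accepting, _ = provider.status()
            except ConnectionError:
                continue  # never marked as failed; simply try the next refinery
            if accepting:
                provider.queued.append(job_id)
                self.job_owner[job_id] = provider.name
                return provider.name
        return None  # no refinery available; the caller retries later

    def collect(self) -> List[str]:
        """Download finished jobs, each from the refinery that ran it."""
        downloaded: List[str] = []
        for provider in self.providers:
            try:
                _, finished = provider.status()
            except ConnectionError:
                continue
            for job_id in finished:
                if self.job_owner.get(job_id) == provider.name:
                    downloaded.append(job_id)
                    del self.job_owner[job_id]
        return downloaded
```

In this model, removing a refinery that stays unreachable is left to the administrator, matching the manual cleanup described above.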

11.2.2.3 Oracle URM High Availability

Oracle URM and Oracle UCM share the same architecture. As such, all high availability considerations that apply to Oracle UCM also apply to Oracle URM.

When configuring high availability, there are only two differences to note between Oracle UCM and Oracle URM:

-

Oracle URM will store its file system metadata in a different directory than Oracle UCM.

-

When configuring an HTTP Server in front of an Oracle URM cluster, a different session cookie name must be used than that of Oracle UCM.

These two configuration differences are described in more detail in Section 11.3, "Oracle ECM High Availability Configuration Steps."

11.2.2.4 Protection from Failure and Expected Behaviors

The following features help protect an Oracle UCM node from failure:

-

Load balancing can be achieved by using an external load balancer. The session does not need additional sticky state data other than its authentication information.

-

The load balancer can be configured in front of Oracle UCM. Multi data sources can be configured with Oracle UCM to support Oracle RAC. Timeouts and retries are also supported.

-

Oracle UCM uses standard WebLogic Server persistent sessions.

-

Oracle UCM does not use EJBs.

-

Node failure can be detected using the Oracle UCM PING_SERVER service. When a node is restarted, it does not affect other nodes in the cluster.

-

Failover is handled either by the load balancer in front of the Oracle UCM nodes or by the WebLogic Server plug-in for Apache. Requests in progress on the failed node fail, but subsequent requests are rerouted to active nodes. Unfinished transactions do not complete.

-

Configuration information from the node is not lost, because it is stored on the shared file system and in the database.

-

When a node fails, a database connection between the node and the database should be reclaimed by the database.

-

Although instances do not normally hang, a hang can occur because of unknown deadlock conditions in Oracle UCM or in customized versions of Oracle UCM.

-

Users with active sessions should not experience any disruption as their sessions fail over to an active server. This includes users with UCM administration applets open.

-

After the installation of Oracle UCM is complete, set the following value in the config.cfg file to enable retry logic during an Oracle RAC failover:

ServiceAllowRetry=true

If this value is not set, users will need to manually retry any operation that was in progress when the failover began.

The value can be set through the Oracle UCM Content Server administration pages at the following URL:

http://<hostname>:<port>/cs

Go to Administration > Admin Server > General Configuration and add ServiceAllowRetry=true to Additional Configuration Variables. Save the change and restart all the UCM Managed Servers. The new value should then appear in the config.cfg file at the following location:

SHARED_DISK_LOCATION_FOR_UCM/ucm/cs/config/config.cfg
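The Admin Server page is the documented way to set ServiceAllowRetry. If you script the change instead, for example while building several environments, the following Python sketch shows an idempotent edit of config.cfg. It is an illustration under stated assumptions. set_config_value is a hypothetical helper. The sketch assumes that config.cfg holds one key=value entry per line with # comments. It also assumes that you back up the file first, edit it while the UCM Managed Servers are stopped, and start them afterward so the value is read.

```python
from pathlib import Path


def set_config_value(cfg_path: Path, key: str, value: str) -> bool:
    """Ensure exactly one uncommented 'key=value' line; return True if the file changed."""
    wanted = f"{key}={value}"
    lines = cfg_path.read_text().splitlines() if cfg_path.exists() else []
    new_lines, found = [], False
    for line in lines:
        stripped = line.strip()
        is_entry = stripped and not stripped.startswith("#")
        if is_entry and stripped.split("=", 1)[0].strip() == key:
            if not found:
                new_lines.append(wanted)  # replace the first definition in place
            found = True                  # and drop any later duplicates
            continue
        new_lines.append(line)
    if not found:
        new_lines.append(wanted)          # key absent: append it
    if new_lines == lines:
        return False                      # already set; nothing to write
    cfg_path.write_text("\n".join(new_lines) + "\n")
    return True
```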

11.2.2.5 Troubleshooting Oracle UCM High Availability

To troubleshoot Oracle UCM, look in the log files and then in the trace files.

See Section 11.2.1.1.7, "Oracle UCM Log File Locations" for information on the location of Oracle UCM log and trace files and information on how to view log and trace files using the Oracle UCM GUI.

11.3 Oracle ECM High Availability Configuration Steps

In a high availability environment such as the one shown in Figure 11-5, it is recommended that Oracle ECM products be set up in a clustered deployment, where the clustered instances access an Oracle Real Application Clusters (Oracle RAC) database content repository and a shared disk is used to store the common configuration.

A hardware load balancer routes requests to multiple Oracle HTTP Server instances which, in turn, route requests to the clustered Oracle ECM servers.

This section provides the installation and configuration steps for the Oracle ECM high availability configuration shown in Figure 11-5.

Note:

Oracle strongly recommends reading the release notes for any additional installation and deployment considerations prior to starting the setup process.

Figure 11-5 Oracle ECM High Availability Architecture

Description of "Figure 11-5 Oracle ECM High Availability Architecture"

The configuration will include the following products:

-

Oracle I/PM

-

Oracle UCM

-

Oracle Universal Records Management (Oracle URM)

-

Oracle Inbound Refinery (IBR)

All products will be installed on the same machines, with the exception of Inbound Refinery, which is assumed to be on remote machines.

If you do not want to install Oracle I/PM or Oracle URM, ignore the references to these products in the configuration steps.

11.3.1 Shared Storage

Cluster members require shared storage to access the same configuration files. This should be set up to be accessible from all nodes in the cluster.

When configuring Oracle UCM/URM/IBR clusters, follow these requirements:

-

For Oracle UCM clusters, all members of the cluster must point to the same configuration directory. This directory must be on a shared disk accessible to all members of the cluster.

-

For Oracle URM clusters, all members of the cluster must point to the same configuration directory. This directory must be on a shared disk accessible to all members of the cluster.

-

For Oracle IBR, each member of the IBR cluster must have its own separate configuration directory. This directory can be on local disk or shared disk. If the latter, care must be taken that the same directory is not used by multiple IBRs.

Take care if you install UCM and IBR on the same machines: the configuration directories for all products are in the same subdirectory. By default, this directory is under DOMAIN_HOME, but you can configure any other directory.

If IBR servers share the /ibr directory, an error appears when you try to start the second server:

<@csRefineryFileSystemLocked=Inbound Refinery {1} on machine {2} cannot start because the file {3} exists.

The most likely cause of this failure to start is that this IBR is configured as a cluster node; the IBR does not support a clustered environment.

To disable this check, set EnableReserveDirectoryCheck=false. However, do not use this setting as a way to run multiple IBR nodes against the same file system location.
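Because a shared IBR directory produces the csRefineryFileSystemLocked error only when the second server starts, it can help to check the planned layout in advance. This Python sketch uses a hypothetical function, find_shared_ibr_dirs, that groups IBR Managed Servers by normalized configuration directory and reports any directory claimed by more than one server. It compares path strings only, so it is meaningful for directories on shared storage mounted at the same path on every host. Two identical paths on separate local disks do not actually conflict.

```python
import posixpath
from collections import defaultdict
from typing import Dict, List


def find_shared_ibr_dirs(ibr_dirs: Dict[str, str]) -> Dict[str, List[str]]:
    """Map each configuration directory used by more than one IBR server to those servers."""
    by_dir: Dict[str, List[str]] = defaultdict(list)
    for server, path in ibr_dirs.items():
        # normpath makes '/shared/ibr/' and '/shared/./ibr' compare equal
        by_dir[posixpath.normpath(path)].append(server)
    return {d: sorted(servers) for d, servers in by_dir.items() if len(servers) > 1}
```

An empty result means every IBR server has its own directory, as required above.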

11.3.2 Configuring the Oracle Database

Configure the Oracle RAC database before completing this procedure.

The Repository Creation Utility (RCU) ships on its own CD as part of the Oracle Fusion Middleware 11g kit.

To run RCU and create the Oracle UCM schemas in an Oracle RAC database repository, follow these steps on any available host:

-

Issue this command:

prompt> RCU_HOME/bin/rcu &

-

On the Welcome screen, click Next.

-

On the Create Repository screen, select the Create operation to load component schemas into an existing database.

Click Next.

-

On the Database Connection Details screen, enter connection information for the existing database as follows:

Database Type: Oracle Database

Host Name: Name of the computer on which the database is running. For an Oracle RAC database, specify the VIP name or one node name. Example: ECMDBHOST1-VIP or ECMDBHOST2-VIP

Port: The port number for the database. Example: 1521

Service Name: The service name of the database. Example: ecmha.mycompany.com

Username: SYS

Password: The SYS user password

Role: SYSDBA

Click Next.

If you entered everything correctly, a dialog box will display with the words "Operation Completed." If so, click OK. If not, you will see an error under Messages, such as "Unable to connect to the database" or "Invalid username/password."

-

On the Select Components screen, create a new prefix and select the components to be associated with this deployment:

Create a New Prefix: ecm

Components: Select only the components for which you intend to create schemas. Under Oracle AS Repository Components > Enterprise Content Management, choose these components:

-

Oracle Content Server 11g - Complete

-

Oracle Universal Records Management 11g

-

Oracle Imaging and Process Management

Click Next.

If you entered everything correctly, a dialog box will display with the words "Operation Completed." If so, click OK. If not, you will see an error under Messages that you will need to resolve before continuing.

-

On the Schema Passwords screen, ensure that Use same passwords for all schemas is selected. This password will be used in a later step for Content Server to connect to this database schema.

Click Next.

-

On the Map Tablespaces screen, click Next.

When the warning dialog box appears, click OK.

The tablespace creation results should appear in a dialog with the text "Operation completed." Click OK.

-

On the Summary screen, click Create.

-

On the Completion Summary screen, click Close.
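The connection details entered in RCU (the VIP host names, port 1521, and the ecmha.mycompany.com service) can also be combined into a standard Oracle Net connect descriptor. This is handy for testing the RAC service from a JDBC client before running the Configuration Wizard. This Python sketch only builds the string. rac_jdbc_url is a hypothetical helper, and the LOAD_BALANCE and FAILOVER settings are illustrative choices rather than values this procedure requires. The Configuration Wizard builds its own data source settings from the values entered on its Configure JDBC Component Schema screen, so this string is not an input to that step.

```python
from typing import Sequence


def rac_jdbc_url(hosts: Sequence[str], port: int, service_name: str) -> str:
    """Build a thin-driver URL that lists every RAC VIP, with client-side failover."""
    addresses = "".join(
        f"(ADDRESS=(PROTOCOL=TCP)(HOST={host})(PORT={port}))" for host in hosts
    )
    return (
        "jdbc:oracle:thin:@(DESCRIPTION="
        f"(ADDRESS_LIST=(LOAD_BALANCE=on)(FAILOVER=on){addresses})"
        f"(CONNECT_DATA=(SERVICE_NAME={service_name})))"
    )
```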

11.3.3 Installing and Configuring ECMHOST1

This section describes the installation and configuration steps for ECMHOST1.

11.3.3.1 Installing Oracle WebLogic Server on ECMHOST1

On ECMHOST1, start the Oracle WebLogic Server installation by running the installer executable file.

See "Understanding Your Installation Starting Point" in Oracle Fusion Middleware Installation Planning Guide for the version of Oracle WebLogic Server to use with the latest version of Oracle Fusion Middleware.

Then follow these steps in the installer to install Oracle WebLogic Server:

-

On the Welcome screen, click Next.

-

On the Choose Middleware Home Directory screen, choose a directory on your computer into which the Oracle WebLogic software is to be installed.

For the Middleware Home Directory, specify this value:

/u01/app/oracle/product/fmw

Click Next.

-

On the Register for Security Updates screen, enter your "My Oracle Support" User Name and Password.

-

On the Choose Install Type screen, indicate whether you want to perform a typical or a custom installation.

Choose Typical or Custom.

Click Next.

-

On the Choose Product Installation Directories screen, specify the following values:

WebLogic Server:

/u01/app/oracle/product/fmw/wlserver_10.3

Oracle Coherence:

/u01/app/oracle/product/fmw/coherence_3.5.2

-

On the Installation Summary screen, the window contains a list of the components you selected for installation, along with the approximate amount of disk space to be used by the selected components once installation is complete.

Click Next.

-

On the Installation Complete screen, deselect the Run Quickstart checkbox.

Click Done.

11.3.3.2 Installing Oracle ECM on ECMHOST1

Follow these steps to start the Oracle Enterprise Content Management (Oracle ECM) installer:

-

On UNIX (Linux in the following example):

./runInstaller -jreLoc file-specification-for-jdk6

Ensure that your DISPLAY environment variable is set correctly before issuing the command above.

On Windows:

setup.exe

Specify the JRE location, for example:

C:\Oracle\Middleware\jdk160_11

Follow these steps in the ECM installer to install Oracle Enterprise Content Management on the system:

-

If this is the first installation, the Specify Oracle Inventory screen opens.

Accept the defaults and click OK.

If you are installing as a normal (non-root) user, the Inventory Location Confirmation dialog box opens. Follow its directions, or select Continue Installation with local inventory and click OK.

-

On the Welcome screen, click Next.

-

On the Prerequisite Checks screen, the installer completes the prerequisite check. If any fail, fix them and restart your installation.

Click Next.

-

On the Specify Installation Location screen, enter these values:

For the Middleware Home Directory, specify this value:

/u01/app/oracle/product/fmw

For the Oracle Home Directory, enter the name of the subdirectory where Oracle ECM will be installed (or use the default). This will be referred to as the ECM_HOME:

/u01/app/oracle/product/fmw/ecm

Click Next.

-

On the Installation Summary screen, review the selections to ensure that they are correct (if they are not, click Back to modify selections on previous screens), and click Install.

-

On the Installation Progress screen on UNIX systems, a dialog box appears that prompts you to run the oracleRoot.sh script. Open a window and run the script, following the prompts in the window.

Click Next.

-

On the Installation Complete screen, click Finish to confirm your choice to exit.

Note:

Before you run the Configuration Wizard by following the instructions in Section 11.3.3.3, "Create a High Availability Domain," ensure that you have applied the latest Oracle Fusion Middleware patch set and other known patches to your Middleware Home so that you have the latest version of Oracle Fusion Middleware.

See "Understanding Your Installation Starting Point" in Oracle Fusion Middleware Installation Planning Guide for the steps you must perform to get the latest version of Oracle Fusion Middleware.

11.3.3.3 Create a High Availability Domain

This section describes how to run the Oracle Fusion Middleware Configuration Wizard to create and configure a high availability domain into which you can deploy applications and libraries.

To start the Oracle Fusion Middleware Configuration Wizard:

-

On UNIX (Linux in the following example):

ECM_HOME/common/bin/config.sh

Ensure that your DISPLAY environment variable is set correctly before issuing the command above.

On Windows:

ECM_HOME\common\bin\config.cmd

Follow these steps in the Configuration Wizard to create and configure the Oracle ECM domain on the computer:

-

On the Welcome screen, select Create a new WebLogic domain.

Click Next.

-

On the Select Domain Source screen, select the applications that you want to install, for example:

-

Oracle Universal Records Management - 11.1.1.0

-

Oracle Universal Content Management - Inbound Refinery - 11.1.1.0

-

Oracle Universal Content Management - Content Server - 11.1.1.0

-

Oracle Imaging and Process Management - 11.1.1.0

Other products such as Oracle JRF and Oracle Enterprise Manager will also be automatically selected.

-

On the Specify Domain Name and Location screen, make these entries:

-

Change the domain name to ecmdomain or use the default, base_domain. A directory will be created for this domain, for example: MW_HOME/user_projects/domains/ecmdomain

-

Domain Location: accept the default

-

Application Location: accept the default

Click Next.

-

On the Configure Administrator Username and Password screen:

-

In the Username field, enter a user name for the domain administrator or use the default user name, weblogic.

-

In the Password field, enter a user password that is at least 8 characters, for example: welcome1

-

In the Confirm User Password field, enter the user password again.

Click Next.

-

On the Configure Server Start Mode and JDK screen:

-

WebLogic Domain Startup Mode: Select Production Mode.

-

JDK Selection: Choose a JDK from the list of available JDKs or accept the default.

Click Next.

-

On the Configure JDBC Component Schema screen:

-

Select all the schemas and choose to configure them as Oracle RAC.

-

For each database schema listed under Component Schema:

-

Click the checkbox in front of the schema to select that row.

-

Verify the values in the input field near the top: Enter the schema name, password, Oracle RAC hosts, ports, and service names.

-

Click Next.

-

On the Test Component Schema screen, the Configuration Wizard tests every schema listed on the previous screen. If all of them are specified correctly, you will see checkmarks in the Status column. If any are incorrect, the Status column will show a stop sign and the error messages will appear in the Connection Result Log. If this happens, click Previous to go back and fix the problem or problems.

After every schema is specified correctly, click Next.

-

On the Select Optional Configuration screen, select JMS Distributed Destination and Administration Server, and Managed Servers, Clusters and Machines. Click Next.

-

In the Select JMS Distributed Destination Type screen, select UDD from the drop-down list for the JMS modules of all Oracle Fusion Middleware components.

Click Next.

-

In the Configure the Administration Server screen, enter the following values:

-

Name: AdminServer

-

Listen address: Enter a host or virtual hostname.

-

Listen port: 7001

-

SSL listen port: Not applicable.

-

SSL enabled: Leave this checkbox unselected.

Click Next.

-

On the Configure Managed Servers screen, add an additional Managed Server for each of the existing servers. For example, add a WLS_UCM2 server to match WLS_UCM1. The Listen Port for the second Managed Server should be the same as that of the first. Set the Listen Address for each server to the host name of the machine on which that Managed Server runs.

For example:

WLS_UCM1

Listen Address: ECMHOST1

Listen Port: 16200

WLS_UCM2

Listen Address: ECMHOST2

Listen Port: 16200

Do this for all the Managed Servers.

The Oracle I/PM servers require virtual host names as their listen addresses. These virtual host names and the corresponding virtual IPs are required to enable server migration for the I/PM component. You must enable a VIP mapping to ECMHOST1VHN1 on ECMHOST1 and to ECMHOST2VHN1 on ECMHOST2, and the host names must resolve correctly in the network used by the topology (either through a DNS server or through hosts-file resolution).

The Inbound Refineries should be configured to reside on separate remote servers, for example: IBR_Server1 on ECMHOST3 and IBR_Server2 on ECMHOST4.

-

On the Configure Clusters screen, create a cluster for each pair of servers. For example, for UCM:

-

Name: UCM_Cluster

-

Cluster Messaging Mode: unicast

-

Multicast Address: Not applicable

-

Multicast Port: Not applicable

-

Cluster Address: Not applicable

Add similarly for other products: IPM_Cluster, URM_Cluster, and so on.

Click Next.

-

On the Assign Servers to Clusters screen, assign each pair of Managed Servers to the newly created cluster:

UCM_Cluster:

-

WLS_UCM1

-

WLS_UCM2

Do this for all the clusters you created in the previous step in this section, such as IPM_Cluster, URM_Cluster, and IBR_Cluster.

Click Next.

-

On the Configure Machines screen, click the Unix Machine tab, and add the following machines:

-

ECMHOST1

-

ECMHOST2

-

ECMHOST3 (if Inbound Refinery is being configured)

-

ECMHOST4 (if Inbound Refinery is being configured)

-

On the Assign Servers to Machines screen:

-

To the ECMHOST1 machine, assign the AdminServer and all *1 Servers (WLS_UCM1, WLS_IPM1, WLS_URM1).

-

To the ECMHOST2 machine, assign all the *2 Servers (WLS_UCM2, WLS_IPM2, WLS_URM2).

-

If configuring Inbound Refinery, add WLS_IBR1 to ECMHOST3 and WLS_IBR2 to ECMHOST4.

-

On the Configuration Summary screen, click Create to create the domain.
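The cluster and machine assignments above are easy to get wrong by hand. The following Python sketch records the example topology as plain data and checks two properties. First, every server in a cluster is assigned to a machine. Second, each cluster spans at least two machines, so that losing one host leaves a member running. The dictionaries and function names are hypothetical. The Inbound Refinery servers are listed as standalone servers on ECMHOST3 and ECMHOST4 rather than as a cluster.

```python
from typing import Dict, List

# Hypothetical description of the example domain built above.
CLUSTERS: Dict[str, List[str]] = {
    "UCM_Cluster": ["WLS_UCM1", "WLS_UCM2"],
    "URM_Cluster": ["WLS_URM1", "WLS_URM2"],
    "IPM_Cluster": ["WLS_IPM1", "WLS_IPM2"],
}
MACHINES: Dict[str, str] = {
    "AdminServer": "ECMHOST1",
    "WLS_UCM1": "ECMHOST1", "WLS_URM1": "ECMHOST1", "WLS_IPM1": "ECMHOST1",
    "WLS_UCM2": "ECMHOST2", "WLS_URM2": "ECMHOST2", "WLS_IPM2": "ECMHOST2",
    "WLS_IBR1": "ECMHOST3", "WLS_IBR2": "ECMHOST4",
}


def unassigned_servers(clusters: Dict[str, List[str]], machines: Dict[str, str]) -> List[str]:
    """Clustered servers that have no machine assignment."""
    return sorted(s for members in clusters.values() for s in members if s not in machines)


def single_host_clusters(clusters: Dict[str, List[str]], machines: Dict[str, str]) -> List[str]:
    """Clusters whose assigned members all run on fewer than two machines."""
    risky = []
    for name, members in clusters.items():
        hosts = {machines[s] for s in members if s in machines}
        if len(hosts) < 2:
            risky.append(name)
    return sorted(risky)
```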

11.3.3.4 Start the Administration Server and the Managed Servers on ECMHOST1

Start the Administration Server:

cd DOMAIN_HOME/bin

./startWebLogic.sh &

Configure and start the Node Manager:

ECMHOST1> MW_HOME/oracle_common/common/bin/setNMProps.sh

ECMHOST1> MW_HOME/wlserver_10.3/server/bin/startNodeManager.sh

Access the Administration Console at http://ECMHOST1:7001/console. In the Administration Console, start the WLS_UCM1, WLS_URM1, and WLS_IPM1 managed servers.

11.3.3.5 Disabling Host Name Verification for the Administration Server and the Managed Servers for ECMHOST1 and ECMHOST2

This step is required if you have not set up SSL communication between the Administration Server and the Node Manager. If SSL is not set up, you receive an error message unless you disable host name verification.

You can re-enable host name verification when you have set up SSL communication between the Administration Server and the Node Manager.

To disable host name verification on ECMHOST1:

-

In Oracle WebLogic Server Administration Console, select Servers, and then AdminServer.

-

Select SSL, and then Advanced.

-

In the Change Center, click Lock & Edit.

-

Set Hostname Verification to None.

-

Select WLS_UCM1, SSL, and then Advanced.

-

Set Hostname Verification to None.

-

Repeat Steps 5 and 6 for WLS_URM1 and WLS_IPM1.

-

When prompted, save the changes and activate them.

-

Restart the Administration Server and all the Managed Servers (for example, WLS_UCM1, WLS_URM1, and WLS_IPM1).

To disable host name verification on ECMHOST2:

-

In the Change Center of the Oracle WebLogic Server Administration Console, click Lock & Edit.

-

Select WLS_UCM2, SSL, and then Advanced.

-

Set Hostname Verification to None.

-

Repeat Steps 2 and 3 for WLS_URM2 and WLS_IPM2.

-

Save the changes and activate them.

-

Restart the Administration Server and all the Managed Servers (for example: WLS_UCM2, WLS_URM2, and WLS_IPM2).

11.3.3.6 Configure the WLS_UCM1 Managed Server

Access the WLS_UCM1 configuration page at http://ECMHOST1:16200/cs.

After you log in, the configuration page opens. Oracle UCM configuration files must be on a shared disk. This example assumes that the shared disk is at /u01/app/oracle/admin/domainName/ucm_cluster.

Set the following values on the configuration page:

-

Content Server Instance Folder: /u01/app/oracle/admin/domainName/ucm_cluster/cs

-

Native File Repository Location: /u01/app/oracle/admin/domainName/ucm_cluster/cs/vault

-

Web Layout Folder: /u01/app/oracle/admin/domainName/ucm_cluster/cs/weblayout

-

Server Socket: Port 4444

-

Socket Connection Address Security Filter: Set to a pipe-delimited list of localhost and HTTP Server Hosts: 127.0.0.1|WEBHOST1|WEBHOST2

-

WebServer HTTP Address: Set to the host and port of the HTTP load balancer:

ecm.mycompany.com:80

To apply these updates, click Submit and restart the WLS_UCM1 managed server.
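The Socket Connection Address Security Filter is a pipe-delimited list, so it is worth confirming that it covers every host that must reach the server socket (localhost and both web tier hosts). This Python sketch assembles the value and tests candidate addresses against it. build_filter and is_allowed are hypothetical helpers. The wildcard matching (for example, 10.0.*.*) is an assumption modeled with Python's fnmatch, and Content Server itself remains the authority on how the filter is evaluated.

```python
from fnmatch import fnmatchcase
from typing import Iterable


def build_filter(entries: Iterable[str]) -> str:
    """Join allowed hosts or addresses into the pipe-delimited filter value."""
    return "|".join(e.strip() for e in entries if e.strip())


def is_allowed(address: str, filter_value: str) -> bool:
    """Assumed semantics: exact match, or '*' and '?' wildcards such as 10.0.*.*"""
    patterns = [p.strip() for p in filter_value.split("|") if p.strip()]
    return any(fnmatchcase(address, p) for p in patterns)
```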

11.3.3.7 Configure the WLS_URM1 Managed Server

Access the WLS_URM1 configuration page at http://ECMHOST1:16250/urm.

After you log in, the configuration page opens. The Oracle URM configuration files must be on a shared disk. This example assumes that the shared disk is at /u01/app/oracle/admin/domainName/ucm_cluster.

Set the following values on the configuration page:

-

Content Server Instance Folder: /u01/app/oracle/admin/domainName/ucm_cluster/urm

-

Native File Repository Location: /u01/app/oracle/admin/domainName/ucm_cluster/urm/vault

-

Web Layout Folder: /u01/app/oracle/admin/domainName/ucm_cluster/urm/weblayout

-

Server Socket: Port 4445

-

Socket Connection Address Security Filter: Set to a pipe-delimited list of localhost and HTTP Server Hosts: 127.0.0.1|WEBHOST1|WEBHOST2

-

WebServer HTTP Address: Set to the host and port of the HTTP load balancer:

ecm.mycompany.com:80

After making these updates, click Submit and then restart the WLS_URM1 managed server.
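Oracle UCM and Oracle URM use the same shared disk but separate instance folders and server socket ports. The following Python sketch derives the folder values entered on the two configuration pages from one shared root, so that they stay consistent across WLS_UCM1/WLS_UCM2 and WLS_URM1/WLS_URM2. It also checks that the two products do not collide. instance_settings and conflicting_keys are hypothetical helpers, and SHARED_ROOT uses the example path from this section, in which domainName is still a placeholder.

```python
from pathlib import PurePosixPath
from typing import Dict, List

# Example shared-disk root from this section; domainName is a placeholder.
SHARED_ROOT = PurePosixPath("/u01/app/oracle/admin/domainName/ucm_cluster")
LOAD_BALANCER = "ecm.mycompany.com:80"


def instance_settings(instance: str, socket_port: int) -> Dict[str, str]:
    """Values to enter on the configuration page for one product (cs or urm)."""
    base = SHARED_ROOT / instance
    return {
        "Content Server Instance Folder": str(base),
        "Native File Repository Location": str(base / "vault"),
        "Web Layout Folder": str(base / "weblayout"),
        "Server Socket Port": str(socket_port),
        "WebServer HTTP Address": LOAD_BALANCER,
    }


def conflicting_keys(a: Dict[str, str], b: Dict[str, str]) -> List[str]:
    """Settings that must differ between the two products but do not."""
    must_differ = ("Content Server Instance Folder", "Native File Repository Location",
                   "Web Layout Folder", "Server Socket Port")
    return [k for k in must_differ if a[k] == b[k]]
```

Both members of a cluster use the same dictionary; only the two products differ.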

11.3.4 Installing and Configuring WEBHOST1

This section describes the installation and configuration steps to perform on WEBHOST1.

11.3.4.1 Installing Oracle HTTP Server on WEBHOST1

This section describes how to install Oracle HTTP Server on WEBHOST1.

-

Ensure that the system, patch, kernel and other requirements are met. These are listed in the Oracle Fusion Middleware Installation Guide for Oracle Web Tier in the Oracle Fusion Middleware documentation library for the platform and version you are using.

-

Ensure that port 7777 is not in use by any service on WEBHOST1 by issuing these commands for the operating system you are using. If a port is not in use, no output is returned from the command.

On UNIX:

netstat -an | grep "7777"

On Windows:

netstat -an | findstr :7777

-

If the port is in use (if the command returns output identifying the port), you must free the port.

On UNIX:

Remove the entries for port 7777 in the /etc/services file and restart the services, or restart the computer.

On Windows:

Stop the component that is using the port.

-

Copy the staticports.ini file from the Disk1/stage/Response directory to a temporary directory.

-

Edit the staticports.ini file that you copied to the temporary directory to assign the following custom port (uncomment the line where you specify the port number for Oracle HTTP Server):

# The port for Oracle HTTP server
Oracle HTTP Server port = 7777

-

Start the Oracle Universal Installer for Oracle Fusion Middleware 11g Web Tier Utilities CD installation as follows:

On UNIX, issue this command:

runInstaller

On Windows, double-click setup.exe.

The runInstaller and setup.exe files are in the ../install/platform directory, where platform is a platform such as Linux or Win32.

-

On the Specify Inventory Directory screen, enter values for the Oracle Inventory Directory and the Operating System Group Name. For example:

Specify the Inventory Directory: /u01/app/oraInventory

Operating System Group Name: oinstall

A dialog box opens with the following message:

"Certain actions need to be performed with root privileges before the install can continue. Please execute the script /u01/app/oraInventory/createCentralInventory.sh now from another window and then press "Ok" to continue the install. If you do not have the root privileges and wish to continue the install select the "Continue installation with local inventory" option"

Log in as root and run the /u01/app/oraInventory/createCentralInventory.sh script.

This sets the required permissions for the Oracle Inventory Directory and then brings up the Welcome screen.

Note:

The Oracle Inventory screen is not shown if an Oracle product was previously installed on the host. If the Oracle Inventory screen is not displayed for this installation, check the following:

-

The /etc/oraInst.loc file exists.

-

If the file exists, the Inventory directory listed in it is valid.

-

The user performing the installation has write permissions for the Inventory directory.

-

On the Welcome screen, click Next.

-

On the Select Installation Type screen, select Install and Configure and click Next.

-

On the Prerequisite Checks screen, the installer completes the prerequisite check. If any fail, fix them and restart your installation.

Click Next.

-

On the Specify Installation Location screen:

-

On WEBHOST1, set the Location to:

/u01/app/oracle/admin

Click Next.

-

-

On the Configure Components screen:

-

Select Oracle HTTP Server.

-

Select Associate Selected Components with WebLogic Domain.

Click Next.

-

-

On the Specify WebLogic Domain screen:

Enter the connection details for the Administration Server of the WebLogic domain. Note that the Administration Server must be running.

-

Domain Host Name: ECMHOST1

-

Domain Port No: 7001

-

User Name: weblogic

-

Password: ******

Click Next.

-

-

On the Specify Component Details screen:

-

Enter the following values for WEBHOST1:

-

Instance Home Location: /u01/app/oracle/admin/ohs_inst1

-

Instance Name: ohs_inst1

-

OHS Component Name: ohs1

-

Click Next.

-

-

On the Specify Webtier Port Details screen:

-

Select Specify Custom Ports. To take the port values from a file, select Specify Ports using Configuration File and use the Browse function to select the staticports.ini file that you edited earlier.

-

Enter the Oracle HTTP Server port, for example, 7777.

Click Next.

-

-

On the Oracle Configuration Manager screen, enter the following:

-

Email Address: Provide the email address for your My Oracle Support account.

-

Oracle Support Password: Provide the password for your My Oracle Support account.

-

I wish to receive security updates via My Oracle Support: Select this check box.

-

-

On the Installation Summary screen, ensure that the selections are correct. If they are not, click Back and make the necessary fixes. After ensuring that the selections are correct, click Next.

-

On the Installation Progress screen on UNIX systems, a dialog appears that prompts you to run the oracleRoot.sh script. Open a window and run the script, following the prompts in the window.

Click Next.

-

On the Configuration Progress screen, multiple configuration assistants are launched in succession; this process can be lengthy.

-

On the Configuration Completed screen, click Finish to exit.
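The checks listed in the Note about the Oracle Inventory screen can also be run from a shell before you start the installer. The following Bash function is a minimal sketch, not an Oracle-supplied tool: the name check_inventory is hypothetical, and it assumes the pointer file contains an inventory_loc=<path> line, as /etc/oraInst.loc normally does.

```bash
#!/usr/bin/env bash
# Sketch: check the Oracle central inventory pointer before running the
# installer. check_inventory is a hypothetical helper, not an Oracle tool.
#
# Usage: check_inventory [pointer_file]   (default: /etc/oraInst.loc)
# Prints the inventory directory and returns 0 when all checks pass,
# returns 2 when no pointer file exists, and 1 on any other problem.
check_inventory() {
  local loc_file=${1:-/etc/oraInst.loc}
  local inv_dir

  # Check 1: the pointer file exists.
  if [ ! -f "$loc_file" ]; then
    echo "no inventory pointer at $loc_file" >&2
    return 2
  fi

  # Check 2: the inventory_loc entry names an existing directory.
  inv_dir=$(sed -n 's/^inventory_loc=//p' "$loc_file" | head -n 1)
  if [ -z "$inv_dir" ] || [ ! -d "$inv_dir" ]; then
    echo "inventory directory '$inv_dir' in $loc_file is not valid" >&2
    return 1
  fi

  # Check 3: the current user can write to the inventory directory.
  if [ ! -w "$inv_dir" ]; then
    echo "no write permission on $inv_dir" >&2
    return 1
  fi

  echo "$inv_dir"
}
```

Running check_inventory with no argument inspects /etc/oraInst.loc. A return status of 2 means no pointer file exists yet, which is the case in which the installer does show the Specify Inventory Directory screen.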

11.3.4.2 Configuring Oracle HTTP Server on WEBHOST1

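After installing Oracle HTTP Server on WEBHOST1, add the following lines to the ORACLE_INSTANCE/config/OHS/ohs1/mod_wl_ohs.conf file, where ORACLE_INSTANCE is the instance home location entered during installation (for example, /u01/app/oracle/admin/ohs_inst1):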

# UCM
<Location /cs>
    WebLogicCluster ecmhost1:16200,ecmhost2:16200
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

<Location /adfAuthentication>
    WebLogicCluster ecmhost1:16200,ecmhost2:16200
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

# URM
<Location /urm>
    WebLogicCluster ecmhost1:16250,ecmhost2:16250
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

<Location /urm/adfAuthentication>
    WebLogicCluster ecmhost1:16250,ecmhost2:16250
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

# IBR
<Location /ibr>
    WebLogicCluster ecmhost1:16300,ecmhost2:16300
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

<Location /ibr/adfAuthentication>
    WebLogicCluster ecmhost1:16300,ecmhost2:16300
    SetHandler weblogic-handler
    WLCookieName JSESSIONID
</Location>

# I/PM Application
<Location /imaging>
    SetHandler weblogic-handler
    WebLogicCluster ECMHOST1VHN1:16000,ECMHOST2VHN1:16000
</Location>

# AXF WS Invocation
<Location /axf-ws>
    SetHandler weblogic-handler
    WebLogicCluster ECMHOST1VHN1:16000,ECMHOST2VHN1:16000
</Location>

These lines configure Oracle HTTP Server on WEBHOST1 to route requests to the clustered applications on ECMHOST1 and ECMHOST2.
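Each clustered context root in mod_wl_ohs.conf repeats the same directives, so additional blocks, such as the /_dav (WebDAV) and /idcws (web services) locations that Oracle UCM also uses, can be generated rather than typed by hand. The following Bash function is a hypothetical helper, not part of Oracle HTTP Server; it emits the same directives used for the UCM, URM, and IBR blocks.

```bash
#!/usr/bin/env bash
# Sketch: print a mod_wl_ohs <Location> block that forwards one context
# root to a WebLogic cluster. wl_location is a hypothetical helper.
#
# Usage: wl_location CONTEXT_ROOT HOST:PORT[,HOST:PORT...]
wl_location() {
  local path=${1:?context root, for example /cs}
  local cluster=${2:?comma-separated host:port list}
  printf '<Location %s>\n' "$path"
  printf '    WebLogicCluster %s\n' "$cluster"
  printf '    SetHandler weblogic-handler\n'
  printf '    WLCookieName JSESSIONID\n'
  printf '</Location>\n'
}
```

For example, wl_location /idcws ecmhost1:16200,ecmhost2:16200 >> mod_wl_ohs.conf appends a block for the Oracle UCM cluster; restart Oracle HTTP Server afterwards so that the change takes effect.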

Note:

The value of WLCookieName is different in earlier software releases: it was IDCCS_SESSIONID in earlier releases but is JSESSIONID in this release.

After adding these lines, restart Oracle HTTP Server, and then ensure that the applications can be accessed at:

http://WEBHOST1:7777/cs
http://WEBHOST1:7777/urm
http://WEBHOST1:7777/imaging

Note:

More Location tags may need to be added depending on how the applications will be used. For example, Oracle UCM also requires access at /_dav for WebDAV access and /idcws for web services access. Consult the product documentation for more details.

11.3.5 Configuring the Load Balancer

Configure the load balancer so that the virtual host (for example, ucm.mycompany.com) routes to the available Oracle HTTP Servers using round-robin load balancing.

You should also configure the load balancer to monitor the HTTP and HTTPS listen ports for each Oracle HTTP Server.

Verify that Oracle Content Server can be accessed at http://WEBHOST1:7777/cs.
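The load balancer's own monitor configuration is product specific. As a rough illustration of what such a monitor checks, the following Bash sketch tests whether a TCP connection to each Oracle HTTP Server listen port succeeds. The function names are hypothetical, and it assumes bash with /dev/tcp support and the coreutils timeout command.

```bash
#!/usr/bin/env bash
# Sketch: TCP reachability probe for Oracle HTTP Server listen ports,
# similar in spirit to a load balancer health monitor.
# Assumes bash (for /dev/tcp) and the coreutils timeout command.

# port_open HOST PORT [SECONDS]: return 0 if a TCP connection succeeds
# within SECONDS (default 3), non-zero otherwise.
port_open() {
  local host=${1:?host} port=${2:?port} secs=${3:-3}
  # Inside bash -c, $0 is the host and $1 is the port.
  timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}

# monitor_ohs HOST:PORT...: print UP or DOWN for each listen port.
monitor_ohs() {
  local target host port
  for target in "$@"; do
    host=${target%:*}
    port=${target##*:}
    if port_open "$host" "$port"; then
      echo "UP   $target"
    else
      echo "DOWN $target"
    fi
  done
}
```

For example, monitor_ohs WEBHOST1:7777 WEBHOST2:7777 prints one UP or DOWN line per server; add each server's HTTPS listen port to the list if one is configured. A TCP probe only shows that the port accepts connections, so an HTTP-level request for /cs is a stricter check.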

11.3.6 Installing and Configuring ECMHOST2

This section describes the installation and configuration steps to perform on ECMHOST2.

11.3.6.1 Installing Oracle WebLogic Server on ECMHOST2

To install Oracle WebLogic Server on ECMHOST2, perform the steps in Section 11.3.3.1, "Installing Oracle WebLogic Server on ECMHOST1" on ECMHOST2.

11.3.6.2 Installing ECM on ECMHOST2

To install Oracle ECM on ECMHOST2, perform the steps in Section 11.3.3.2, "Installing Oracle ECM on ECMHOST1" on ECMHOST2.

11.3.6.3 Using pack and unpack to Join the Domain on ECMHOST1

The pack and unpack commands are used to enable the WLS_UCM2 managed server on ECMHOST2 to join the ECM domain on ECMHOST1.

First, execute the pack command on ECMHOST1:

pack -managed=true -domain=/u01/app/oracle/product/WLS/11G/user_projects/domains/ecmdomain -template=ecm_template.jar -template_name="my ecm domain"

After copying the ecm_template.jar file to ECMHOST2, execute the unpack command on ECMHOST2:

unpack -domain=/u01/app/oracle/product/WLS/11G/user_projects/domains/ecmdomain -template=ecm_template.jar
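The pack, copy, and unpack sequence can be wrapped in a small script. This is a sketch under assumptions that the steps above do not state: pack.sh and unpack.sh (found in WL_HOME/common/bin) are on the PATH, the template is copied with scp, and the domain path is identical on both hosts. The function names are hypothetical. Setting DRY_RUN=1 prints each command instead of running it.

```bash
#!/usr/bin/env bash
# Sketch: extend the ECM domain to ECMHOST2 with pack and unpack.
# Assumes pack.sh/unpack.sh are on PATH and scp access to ECMHOST2.
DOMAIN_HOME=${DOMAIN_HOME:-/u01/app/oracle/product/WLS/11G/user_projects/domains/ecmdomain}
TEMPLATE=${TEMPLATE:-ecm_template.jar}

# Print the command instead of running it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# On ECMHOST1: write a managed-server template of the domain.
pack_domain() {
  run pack.sh -managed=true -domain="$DOMAIN_HOME" \
    -template="$TEMPLATE" -template_name="my ecm domain"
}

# On ECMHOST1: copy the template to the target host's home directory.
copy_template() {
  local target=${1:?target host, for example ECMHOST2}
  run scp "$TEMPLATE" "$target:$TEMPLATE"
}

# On ECMHOST2: create the managed-server domain directory from the template.
unpack_domain() {
  run unpack.sh -domain="$DOMAIN_HOME" -template="$TEMPLATE"
}
```

Run pack_domain and copy_template ECMHOST2 on ECMHOST1, then run unpack_domain on ECMHOST2 from the directory that holds ecm_template.jar (with the scp target used here, the remote home directory).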

11.3.6.4 Start Node Manager and the WLS_UCM2 Server on ECMHOST2

To start Node Manager on ECMHOST2, run these commands:

ECMHOST2> MW_HOME/oracle_common/common/bin/setNMProps.sh
ECMHOST2> WL_HOME/server/bin/startNodeManager.sh

Access the Administration Console at http://ECMHOST1:7001/console. In the Administration Console, start the WLS_UCM2 managed server.

11.3.6.5 Start the Managed Servers on ECMHOST2

Access the Administration Console at http://ECMHOST1:7001/console. In the Administration Console, start the WLS_UCM2, WLS_URM2, and WLS_IPM2 managed servers.

11.3.6.6 Configure the WLS_UCM2 Managed Server

Access the WLS_UCM2 configuration page at http://ECMHOST2:16200/cs.

After you log in, the configuration page opens. The Oracle UCM configuration files must be on a shared disk. This example assumes that the shared disk is at /orashare/orcl/ucm.

Set the following values on the configuration page:

-

Content Server Instance Folder: /orashare/orcl/ucm/cs/

-

Native File Repository Location: /orashare/orcl/ucm/cs/vault/

-

WebLayout Folder: /orashare/orcl/ucm/cs/weblayout/

-

Server Socket Port: 4444

-

Socket Connection Address Security Filter: Set to a pipe-delimited list of localhost and the Oracle HTTP Server hosts: 127.0.0.1|WEBHOST1|WEBHOST2

-

WebServer HTTP Address: Set to the host and port of the load balancer HTTP address:

ucm.mycompany.com:80

After making these updates, click Submit and then restart the WLS_UCM2 managed server.
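Because the Oracle UCM configuration files must be on a shared disk, it can help to confirm on ECMHOST2 that the folders entered above are visible and writable before clicking Submit. The sketch below assumes the shared disk is mounted at /orashare/orcl/ucm on ECMHOST2 and that the folders already exist from the configuration of the first managed server; the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: helpers for the WLS_UCM2 configuration page values.
# security_filter and check_shared_dirs are hypothetical names.

# Join the arguments with "|" to build the Socket Connection Address
# Security Filter value.
security_filter() {
  local IFS='|'
  echo "$*"
}

# Confirm that each shared-disk folder exists and is writable by the
# current user; print a message for every folder that fails.
check_shared_dirs() {
  local dir rc=0
  for dir in "$@"; do
    if [ ! -d "$dir" ]; then
      echo "missing: $dir" >&2
      rc=1
    elif [ ! -w "$dir" ]; then
      echo "not writable: $dir" >&2
      rc=1
    fi
  done
  return "$rc"
}
```

For example, check_shared_dirs /orashare/orcl/ucm/cs/ /orashare/orcl/ucm/cs/vault/ /orashare/orcl/ucm/cs/weblayout/ returns 0 only if all three folders are usable, and security_filter 127.0.0.1 WEBHOST1 WEBHOST2 prints 127.0.0.1|WEBHOST1|WEBHOST2.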

11.3.6.7 Configure the WLS_URM2 Managed Server

Access the WLS_URM2 configuration page at http://ECMHOST2:16250/urm.

After you log in, the configuration page opens. The Oracle URM configuration files must be on a shared disk. This example assumes that the shared disk is at /u01/app/oracle/admin/domainName/ucm_cluster.

Set the following values on the configuration page:

-

Content Server Instance Folder: /u01/app/oracle/admin/domainName/ucm_cluster/urm

-

Native File Repository Location: /u01/app/oracle/admin/domainName/ucm_cluster/urm/vault

-

WebLayout Folder: /u01/app/oracle/admin/domainName/ucm_cluster/urm/weblayout

-

Server Socket Port: 4445

-

Socket Connection Address Security Filter: Set to a pipe-delimited list of localhost and the Oracle HTTP Server hosts: 127.0.0.1|WEBHOST1|WEBHOST2

-

WebServer HTTP Address: Set to the host and port of the load balancer HTTP address:

ecm.mycompany.com:80

After making these updates, click Submit and then restart the WLS_URM2 managed server.

11.3.7 Installing and Configuring WEBHOST2

This section describes the installation and configuration steps to perform on WEBHOST2.

11.3.7.1 Installing Oracle HTTP Server on WEBHOST2

This section describes how to install Oracle HTTP Server on WEBHOST2.

-

Ensure that the system, patch, kernel and other requirements are met. These are listed in the Oracle Fusion Middleware Installation Guide for Oracle Web Tier in the Oracle Fusion Middleware documentation library for the platform and version you are using.

-

Ensure that port 7777 is not in use by any service on WEBHOST2 by issuing the following command for the operating system you are using. If the port is not in use, the command returns no output.

On UNIX:

netstat -an | grep "7777"

On Windows:

netstat -an | findstr :7777

-

If the port is in use (if the command returns output identifying the port), you must free the port.

On UNIX:

Remove the entries for port 7777 in the /etc/services file and restart the services, or restart the computer.

On Windows:

Stop the component that is using the port.

-

Copy the staticports.ini file from the Disk1/stage/Response directory to a temporary directory.

-

Edit the staticports.ini file that you copied to the temporary directory to assign the following custom port (uncomment the line where you specify the port number for Oracle HTTP Server):

# The port for Oracle HTTP server
Oracle HTTP Server port = 7777
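On UNIX, the port check and the staticports.ini edit from these steps (the same steps apply on WEBHOST1) can be scripted. This is a sketch: the function names are hypothetical, port_in_use considers only listening sockets, and set_ohs_port assumes the commented entry in staticports.ini has the form #Oracle HTTP Server port = <value>. The sed -i.bak form works with both GNU and BSD sed.

```bash
#!/usr/bin/env bash
# Sketch: scripted port check and staticports.ini edit (hypothetical names).

# Read `netstat -an` output on stdin and succeed if a listening socket
# uses the given port. The port must follow ":" or "." at the end of an
# address field, so a port such as 17777 does not count as 7777.
port_in_use() {
  local port=${1:?port number}
  awk -v p="$port" '
    toupper($0) ~ /LISTEN/ {
      for (i = 1; i <= NF; i++)
        if ($i ~ ("[:.]" p "$")) found = 1
    }
    END { exit !found }'
}

# Uncomment (or overwrite) the Oracle HTTP Server entry in a copy of
# staticports.ini; the previous contents are kept with a .bak suffix.
set_ohs_port() {
  local file=${1:?path to staticports.ini} port=${2:-7777}
  sed -i.bak "s/^#*[[:space:]]*Oracle HTTP Server port[[:space:]]*=.*/Oracle HTTP Server port = $port/" "$file"
}
```

For example, netstat -an | port_in_use 7777 && echo "port 7777 is in use" reports a conflict, and set_ohs_port /tmp/staticports.ini 7777 edits a copy placed in /tmp. Unlike a plain grep for "7777", port_in_use does not match ports such as 17777.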

-

Start the Oracle Universal Installer for Oracle Fusion Middleware 11g Web Tier Utilities CD installation as follows:

On UNIX, issue this command:

runInstaller

On Windows, double-click setup.exe.

The runInstaller and setup.exe files are in the ../install/platform directory, where platform is a platform such as Linux or Win32. This displays the Specify Oracle Inventory screen.

-

On the Specify Inventory Directory screen, enter values for the Oracle Inventory Directory and the Operating System Group Name. For example:

Specify the Inventory Directory: /u01/app/oraInventory

Operating System Group Name: oinstall

A dialog box appears with the following message:

"Certain actions need to be performed with root privileges before the install can continue. Please execute the script /u01/app/oraInventory/createCentralInventory.sh now from another window and then press "Ok" to continue the install. If you do not have the root privileges and wish to continue the install select the "Continue installation with local inventory" option"

Log in as root and run the /u01/app/oraInventory/createCentralInventory.sh script.

This sets the required permissions for the Oracle Inventory Directory and then brings up the Welcome screen.

Note:

The Oracle Inventory screen is not shown if an Oracle product was previously installed on the host. If the Oracle Inventory screen is not displayed for this installation, check that:

-

The /etc/oraInst.loc file exists

-

If the file exists, the Inventory directory listed in it is valid

-

The user performing the installation has write permissions for the Inventory directory

-

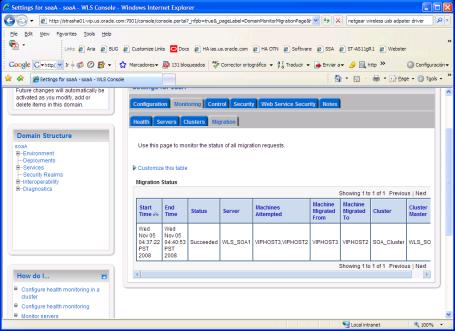

-