| Oracle® Fusion Middleware Deployment Planning Guide for Oracle Directory Server Enterprise Edition 11g Release 1 (11.1.1.7.0) Part Number E28974-01 |


High availability implies an agreed minimum "up time" and level of performance for your directory service. Agreed service levels vary from organization to organization. Service levels might depend on factors such as the time of day systems are accessed, whether or not systems can be brought down for maintenance, and the cost of downtime to the organization. Failure, in this context, is defined as anything that prevents the directory service from providing this minimum level of service.
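As a rough illustration of what an agreed minimum "up time" means in practice, the following sketch converts an availability target into a yearly downtime budget. The function name, the 365-day year, and the sample targets are assumptions made for this example, not values from this guide.

```python
# Minutes in a non-leap year; the 365-day year is an assumption of this sketch.
MINUTES_PER_YEAR = 365 * 24 * 60


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Return the yearly downtime budget implied by an availability target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


# Hypothetical service-level targets, chosen only for illustration.
for target in (99.0, 99.9, 99.99):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% availability allows about {minutes:.1f} minutes of downtime per year")
```

A calculation of this kind can help when negotiating service levels, because it shows how quickly the budget for maintenance and unplanned outages shrinks as the target rises.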

This chapter covers the following topics:

Directory Server Enterprise Edition deployments that provide high availability can quickly recover from failures. With a high availability deployment, component failures might impact individual directory queries but should not result in complete system failure. A single point of failure (SPOF) is a system component which, upon failure, renders an entire system unavailable or unreliable. When you design a highly available deployment, you identify potential SPOFs and investigate how these SPOFs can be mitigated.

SPOFs can be divided into three categories:

Hardware failures, for example, server crashes, network failures, power failures, or disk drive crashes

Software failures, for example, Directory Server or Directory Proxy Server crashes

Database corruption

You can ensure that failure of a single component does not cause an entire directory service to fail by using redundancy. Redundancy involves providing redundant software components, hardware components, or both. Examples of this strategy include deploying multiple, replicated instances of Directory Server on separate hosts, or using redundant arrays of independent disks (RAID) for storage of Directory Server databases. Redundancy with replicated Directory Servers is the most efficient way to achieve high availability.

The more common approach to providing a highly available directory service is to use redundant server components and replication. Redundant solutions are usually less expensive, easier to implement, and easier to manage. Note that replication, as part of a redundant solution, has numerous functions other than availability. While the main advantage of replication is the ability to split the read load across multiple servers, this advantage causes additional overhead in terms of server management. Replication also offers scalability on read operations and, with proper design, scalability on write operations, within certain limits. For an overview of replication concepts, see Chapter 7, Directory Server Replication, in Oracle Directory Server Enterprise Edition Reference.

During a failure, a redundant system might provide poor availability. Imagine, for example, an environment in which the load is shared between two redundant server components. The failure of one server component might put an excessive load on the other server, making this server respond more slowly to client requests. A slow response might be considered a failure for clients that rely on quick response times. In other words, the availability of the service, even though the service is operational, might not meet the availability requirements of the client.
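The two-server example above can be sketched numerically. The capacity and load figures below are hypothetical, and the even split of load across surviving servers is an assumption of this sketch rather than a description of any particular load balancer.

```python
def utilization_after_failure(total_load: float, capacity_per_server: float,
                              servers: int, failed: int = 1) -> float:
    """Return the utilization of each surviving server, assuming an even split."""
    survivors = servers - failed
    if survivors <= 0:
        raise ValueError("no servers left to carry the load")
    return total_load / (survivors * capacity_per_server)


# Hypothetical figures: two servers, each able to handle 1000 operations
# per second, sharing a total load of 1400 operations per second.
before = utilization_after_failure(1400, 1000, servers=2, failed=0)
after = utilization_after_failure(1400, 1000, servers=2, failed=1)
print(f"before failure: {before:.0%}, after failure: {after:.0%}")
```

A utilization above 100 percent after a failure suggests that the surviving server cannot meet the response time that clients expect, even though the service is technically operational.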

In terms of the SPOFs that are described at the beginning of this chapter, redundancy handles failure in the following ways:

Single hardware failure. A single hardware failure is fatal to a machine. Therefore, even if you have redundant hardware, manual intervention is required to repair the failure.

Directory Server or Directory Proxy Server failure. The server is automatically restarted.

Database corruption. Depending on the architecture, a redundant solution should be able to survive database corruption.

This section provides basic information about hardware redundancy. Many publications provide comprehensive information about using hardware redundancy for high availability. In particular, see "Blueprints for High Availability" published by John Wiley & Sons, Inc. (http://www.amazon.com/exec/obidos/tg/detail/-/0471430269/qid=1105613280/sr=8-1/ref=sr_8_xs_ap_i1_xgl14/002-6680176-0680863?v=glance&s=books&n=507846)

Hardware SPOFs can be broadly categorized as follows:

Network failures

Failure of the physical servers on which Directory Server or Directory Proxy Server is running

Load balancer failures

Storage subsystem failures

Power supply failures

Failure at the network level can be mitigated by having redundant network components. When designing your deployment, consider having redundant components for the following:

Internet connection

Network interface card

Network cabling

Network switches

Gateways and routers

You can mitigate the load balancer as an SPOF by including a redundant load balancer in your architecture.

In the event of database corruption, you must have a database failover strategy to ensure availability. You can mitigate against SPOFs in the storage subsystem by using redundant server controllers. You can also use redundant cabling between controllers and storage subsystems, redundant storage subsystem controllers, or redundant arrays of independent disks.

If you have only one power supply, loss of this supply could make your entire service unavailable. To prevent this situation, consider providing redundant power supplies for hardware, where possible, and diversifying power sources. Additional methods of mitigating SPOFs in the power supply include using surge protectors, multiple power providers, and local battery backups, and generating power locally.

Failure of an entire data center can occur if, for example, a natural disaster strikes a particular geographic region. In this instance, a well-designed multiple data center replication topology can prevent an entire distributed directory service from becoming unavailable. For more information, see Using Replication and Redundancy for High Availability.

Failure in Directory Server or Directory Proxy Server can include the following:

Excessive response time

Write overload

Maximized file descriptors

Maximized file system

Poor storage configuration

Too many indexes

Read overload

Cache issues

CPU constraints

Replication issues

Synchronicity

Replication propagation delay

Replication flow

Replication overload

Large wildcard searches

These SPOFs can be mitigated by having redundant instances of Directory Server and Directory Proxy Server. Redundancy at the software level involves the use of replication. Replication ensures that the redundant servers remain synchronized, and that requests can be rerouted with no downtime. For more information, see Using Replication and Redundancy for High Availability.

Replication can be used to prevent the loss of a single server from causing your directory service to become unavailable. A reliable replication topology ensures that the most recent data is available to clients across data centers, even in the case of a server failure. At a minimum, your local directory tree needs to be replicated to at least one backup server. Some directory architects say that you should replicate three times per physical location for maximum data reliability. In deciding how much to use replication for fault tolerance, consider the quality of the hardware and networks used by your directory. Unreliable hardware requires more backup servers.

Do not use replication as a replacement for a regular data backup policy. For information about backing up directory data, see Designing Backup and Restore Policies and Chapter 8, Directory Server Backup and Restore, in Administrator's Guide for Oracle Directory Server Enterprise Edition.

LDAP client applications are usually configured to search one LDAP server only. Custom client applications can be written to rotate through LDAP servers that are located at different DNS host names. Otherwise, LDAP client applications can only be configured to look at a single DNS host name for Directory Server. You can use Directory Proxy Server, DNS round robins, or network sorts to provide failover to backup Directory Servers. For information about setting up and using DNS round robins or network sorts, see your DNS documentation. For information about how Directory Proxy Server is used in this context, see Using Directory Proxy Server as Part of a Redundant Solution.
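A custom client that rotates through several host names might follow the pattern sketched below. The host names are hypothetical, and `fake_connect` is a stand-in for a real LDAP connection routine so that the example stays self-contained. It is not part of any LDAP library.

```python
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


def connect_with_failover(hosts: Iterable[str],
                          connect: Callable[[str], T]) -> T:
    """Try each host in order and return the first successful connection."""
    errors = []
    for host in hosts:
        try:
            return connect(host)
        except OSError as exc:  # covers refused connections and timeouts
            errors.append(f"{host}: {exc}")
    raise ConnectionError("all directory servers failed: " + "; ".join(errors))


# Stand-in for a real LDAP connection routine (hypothetical).
# ds1.example.com is treated as unavailable for the demonstration.
def fake_connect(host: str) -> str:
    if host == "ds1.example.com":
        raise ConnectionRefusedError("connection refused")
    return f"connected to {host}"


result = connect_with_failover(["ds1.example.com", "ds2.example.com"], fake_connect)
print(result)
```

Failover logic of this kind must be repeated in every client application, which is one reason to place the logic in Directory Proxy Server or in the network layer instead.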

To maintain the ability to read data in the directory, a suitable load balancing strategy must be put in place. Both software and hardware load balancing solutions exist to distribute read load across multiple replicas. Each of these solutions can also determine the state of each replica and manage its participation in the load balancing topology. The solutions might vary in terms of completeness and accuracy.

To maintain write failover over geographically distributed sites, you can use multiple data center replication over a WAN. This entails setting up at least two master servers in each data center, and configuring the servers to be fully meshed over the WAN. This strategy prevents loss of service if any of the masters in the topology fails. Write operations must be routed to an alternative server if a writable server becomes unavailable. Various methods can be used to reroute write operations, including Directory Proxy Server.

The following sections describe how replication and redundancy are used to ensure high availability:

Redundant replication agreements enable rapid recovery in the event of failure. The ability to enable and disable replication agreements means that you can set up replication agreements that are used only if the original replication topology fails. Although this intervention is manual, the strategy is much less time consuming than waiting to set up the replication agreement when it is needed. The use of redundant replication agreements is explained and illustrated in Sample Topologies Using Redundancy for High Availability.

Promoting or demoting a replica changes its role in the replication topology. In a very large topology that contains dedicated consumers and hubs, online promotion and demotion of replicas can form part of a high availability strategy. Imagine, for example, a multi-master replication scenario, with two hubs configured for additional load balancing and failover. If one master goes offline, you can promote one of the hubs to a master to maintain optimal read-write availability. When the master replica comes back online, a simple demotion back to a hub replica returns you to the original topology.

For more information, see Promoting or Demoting Replicas in Administrator's Guide for Oracle Directory Server Enterprise Edition.

Directory Proxy Server is designed to support high availability directory deployments. The proxy provides automatic load balancing as well as automatic failover and fail back among a set of replicated Directory Servers. Should one or more Directory Servers in the topology become unavailable, the load is proportionally redistributed among the remaining servers.

Directory Proxy Server actively monitors the Directory Servers to ensure that the servers are still online. The proxy also examines the status of each operation that is performed. Servers might not all be equivalent in throughput and performance. If a primary server becomes unavailable, traffic that is temporarily redirected to a secondary server is directed back to the primary server as soon as the primary server becomes available.
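The idea of proportional redistribution and fail back can be illustrated with a short sketch. This is not the algorithm that Directory Proxy Server implements. The server names and relative weights are hypothetical, and the function only shows how shares change when a server leaves and rejoins the pool.

```python
from typing import Dict, Set


def traffic_shares(weights: Dict[str, float], available: Set[str]) -> Dict[str, float]:
    """Split traffic among available servers in proportion to their weights."""
    live = {name: weight for name, weight in weights.items() if name in available}
    total = sum(live.values())
    if total == 0:
        raise RuntimeError("no directory server is available")
    return {name: weight / total for name, weight in live.items()}


# Hypothetical relative weights: ds1 can take twice the load of ds2 or ds3.
weights = {"ds1": 2, "ds2": 1, "ds3": 1}

normal = traffic_shares(weights, {"ds1", "ds2", "ds3"})
degraded = traffic_shares(weights, {"ds1", "ds3"})            # ds2 is down
restored = traffic_shares(weights, {"ds1", "ds2", "ds3"})     # ds2 is back

print("normal:  ", normal)
print("degraded:", degraded)
print("restored:", restored)
```

Because the shares are recomputed from the set of available servers, traffic returns to a recovered server as soon as it is marked available again.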

Note that when data is distributed, multiple disconnected replication topologies must be managed, which makes administration more complex. In addition, Directory Proxy Server relies heavily on the proxy authorization control to manage user authorization. A specific administrative user must be created on each Directory Server that is involved in the distribution. These administrative users must be granted proxy access control rights.

Directory Proxy Server can also be used to protect a replicated directory service from failure due to a faulty client application. To improve availability, a limited set of masters or replicas is assigned to each application.

Suppose a faulty application causes a server shutdown when the application performs a specific action. If the application fails over to each successive replica, a single problem with one application can result in failure of the entire replicated topology. To avoid such a scenario, you can restrict failover and load balancing of each application to a limited number of replicas. The potential failure is then limited to this set of replicas, and the impact of the failure on other applications is reduced.

The following sample topologies show how redundancy is used to provide continued service in the event of failure.

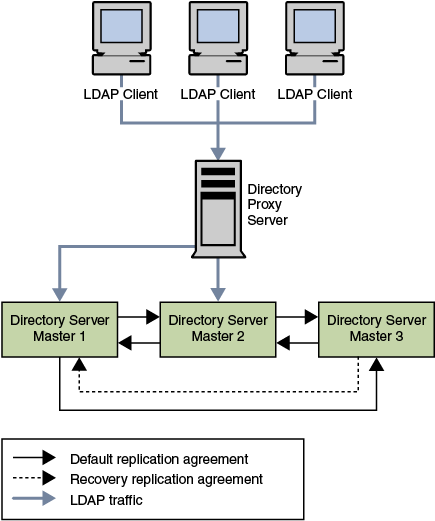

The data center that is illustrated in the following figure has a multi-master topology with three masters. In this scenario, the third master is used only for availability in case of failure. Read and write operations are routed to Masters 1 and 2 by Directory Proxy Server, unless a problem occurs. To speed up recovery and to minimize the number of replication agreements, recovery replication agreements are created. These agreements are disabled by default but can be enabled rapidly in the event of a failure.

Figure 12-1 Multi-Master Replication in a Single Data Center

In the scenario depicted in Figure 12-1, various components might become unavailable. These potential points of failure and the related recovery actions are described in this table.

Table 12-1 Single Data Center Failure Matrix

| Failed Component | Action |
|---|---|
| Master 1 | Read and write operations are rerouted to Masters 2 and 3 through Directory Proxy Server while Master 1 is repaired. The recovery replication agreement between Master 2 and Master 3 is enabled so that updates to Master 3 are replicated to Master 2. |
| Master 2 | Read and write operations are rerouted to Masters 1 and 3 while Master 2 is repaired. The recovery replication agreement between Master 1 and Master 3 is enabled so that updates to Master 3 are replicated to Master 1. |
| Master 3 | Because Master 3 is a backup server only, the directory service is not affected if this master fails. Master 3 can be taken offline and repaired without interruption to service. |
| Directory Proxy Server | Failure of Directory Proxy Server results in severe service interruption. A redundant instance of Directory Proxy Server is advisable in this topology. For an example of such a topology, see Using Multiple Directory Proxy Servers. |
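The failure matrix in Table 12-1 can also be expressed as a lookup structure, for example in an operations runbook script. The sketch below is one possible encoding. The `Recovery` type, the field names, and the (supplier, consumer) ordering of the agreement are assumptions of this example.

```python
from typing import Dict, List, NamedTuple, Optional, Tuple


class Recovery(NamedTuple):
    route_to: List[str]                          # masters that serve reads and writes
    enable_agreement: Optional[Tuple[str, str]]  # (supplier, consumer) to enable
    note: str


# Encodes Table 12-1. Field names and ordering are assumptions of this sketch.
FAILURE_MATRIX: Dict[str, Recovery] = {
    "Master 1": Recovery(["Master 2", "Master 3"], ("Master 3", "Master 2"),
                         "Repair Master 1 while traffic is rerouted"),
    "Master 2": Recovery(["Master 1", "Master 3"], ("Master 3", "Master 1"),
                         "Repair Master 2 while traffic is rerouted"),
    "Master 3": Recovery(["Master 1", "Master 2"], None,
                         "Backup server only; service is not affected"),
    "Directory Proxy Server": Recovery([], None,
                                       "Severe interruption; deploy a redundant proxy"),
}


def recovery_for(component: str) -> Recovery:
    """Return the recovery action for a failed component."""
    try:
        return FAILURE_MATRIX[component]
    except KeyError:
        raise ValueError(f"unknown component: {component}") from None


action = recovery_for("Master 1")
print(action.route_to, action.enable_agreement)
```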

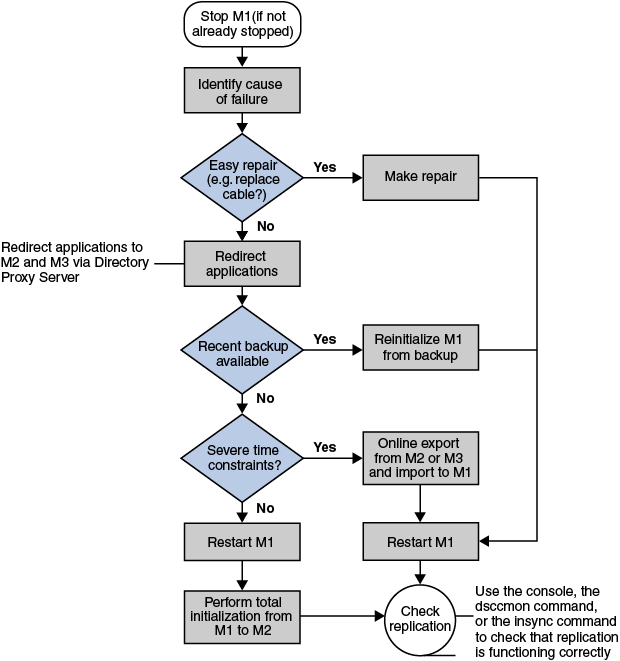

In a single data center with three masters, read and write capability is maintained if one master fails. This section describes a sample recovery strategy that can be applied to reinstate the failed component.

The following flowchart and procedure assume that one component, Master 1, has failed. If two masters fail simultaneously, read and write operations must be routed to the remaining master while the problems are fixed.

Figure 12-2 Single Data Center Sample Recovery Procedure

1. If Master 1 is not already stopped, stop it.

2. Identify the cause of the failure.

   To troubleshoot a serious failure, follow these steps.

   1. Ensure that any applications that access Master 1 are redirected to point to Master 2 or Master 3, through Directory Proxy Server.

   2. Check the availability of a recent backup.

      - If a recent backup is available, reinitialize Master 1 from the backup and go to Step 3.

      - If a recent backup is not available, do one of the following:

        - Restart Master 1 and perform a total initialization from Master 2 or from Master 3 to Master 1.

          For details on this procedure, see Initializing Replicas in Administrator's Guide for Oracle Directory Server Enterprise Edition.

        - If performing a total initialization will take too long, perform an online export from Master 2, or Master 3, and an import to Master 1.

3. Start Master 1, if it is not already started.

4. If Master 1 is in read-only mode, set it to read/write mode.

5. Check that replication is functioning correctly.

   You can use DSCC, dsccmon view-suffixes, or the insync command to check replication.

   For more information, see Getting Replication Status in Administrator's Guide for Oracle Directory Server Enterprise Edition, dsccmon, and insync.
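The decision points in the recovery procedure above can be summarized in a short sketch. The function and parameter names are hypothetical, and the strings describe actions for an administrator to perform. They are not commands.

```python
from typing import List


def recovery_steps(recent_backup: bool, total_init_too_slow: bool = False) -> List[str]:
    """Return the ordered recovery actions for a failed Master 1."""
    steps = [
        "Stop Master 1 if it is not already stopped",
        "Identify the cause of the failure",
        "Redirect applications from Master 1 to Master 2 or Master 3",
    ]
    if recent_backup:
        steps.append("Reinitialize Master 1 from the recent backup")
    elif total_init_too_slow:
        steps.append("Export online from Master 2 or Master 3 and import to Master 1")
    else:
        steps.append("Restart Master 1 and perform a total initialization "
                     "from Master 2 or Master 3")
    steps += [
        "Start Master 1 if it is not already started",
        "Set Master 1 to read/write mode if it is read-only",
        "Check that replication is functioning correctly",
    ]
    return steps


# Example: no recent backup, and a total initialization is acceptable.
for number, step in enumerate(recovery_steps(recent_backup=False), start=1):
    print(f"{number}. {step}")
```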

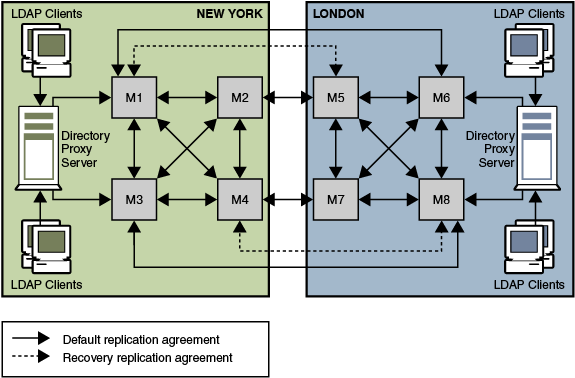

Generally in a deployment with two data centers, the same recovery strategy can be applied as described for a single data center. If one or more masters become unavailable, Directory Proxy Server automatically reroutes local reads and writes to the remaining masters.

As in the single data center scenario described previously, recovery replication agreements can be enabled. These agreements ensure that both data centers continue to receive replicated updates in the event of failure. This recovery strategy is illustrated in Figure 12-3.

An alternative to using recovery replication agreements is to use a fully meshed topology in which every master replicates its changes to every other master. While fewer replication agreements might be easier to manage, no technical reason exists for not using a fully meshed topology.
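To get a sense of how many agreements a fully meshed topology requires, the following sketch enumerates them for a hypothetical set of four masters. It assumes that each replication agreement is one-directional, from a supplier to a consumer, so that a full mesh of n masters needs n × (n − 1) agreements.

```python
from itertools import permutations
from typing import List, Sequence, Tuple


def full_mesh_agreements(masters: Sequence[str]) -> List[Tuple[str, str]]:
    """Return every directed (supplier, consumer) pair in a full mesh."""
    return list(permutations(masters, 2))


# Hypothetical topology with four masters.
masters = ["Master 1", "Master 2", "Master 3", "Master 4"]
agreements = full_mesh_agreements(masters)

print(f"{len(masters)} masters need {len(agreements)} agreements")
for supplier, consumer in agreements[:3]:
    print(f"{supplier} -> {consumer}")
```

The count grows quadratically with the number of masters, which is the management cost to weigh against the simplicity of not needing recovery agreements.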

The only SPOF in this scenario would be the Directory Proxy Server in each data center. Redundant Directory Proxy Servers can be deployed to eliminate this problem, as shown in Figure 12-4.

Figure 12-3 Recovery Replication Agreements For Two Data Centers

The recovery strategy depends on which combination of components fails. However, after you have a basic strategy in place to cope with multiple failures, you can apply that strategy if other components fail.

In the sample topology depicted in Figure 12-3, assume that Master 1 and Master 3 in the New York data center fail.

In this scenario, Directory Proxy Server automatically reroutes reads and writes in the New York data center to Master 2 and Master 4. This ensures that local read and write capability is maintained at the New York site.

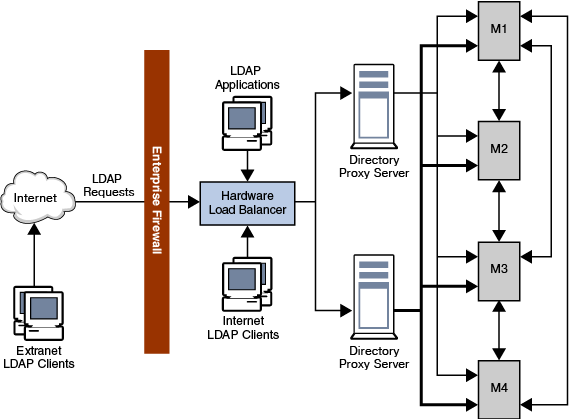

The deployment shown in the following figure includes an enterprise firewall that rejects outside access to internal LDAP services. Client LDAP requests that are initiated internally go through Directory Proxy Server by way of a network load balancer, ensuring high availability at the IP level. Direct access to the Directory Servers is prevented, except for the host that is running Directory Proxy Server. Two Directory Proxy Servers are deployed to prevent the proxy from becoming an SPOF.

A fully meshed multi-master topology ensures that all masters can be used at any time in the event of failure of any other master. For simplicity, not all replication agreements are shown in this diagram.

Figure 12-4 Internal High Availability Configuration

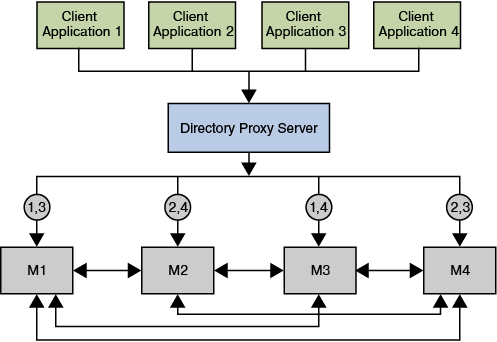

In the scenario illustrated in the following figure, a bug in Application 1 causes Directory Server to fail. The proxy configuration ensures that LDAP requests from Application 1 are only ever sent to Master 1 and to Master 3. When the bug occurs, Masters 1 and 3 fail. However, Applications 2, 3, and 4 are not disabled, because they can still reach a functioning Directory Server.

Figure 12-5 Using Application Isolation in a Scaled Deployment
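The effect of application isolation can be checked with a small sketch. Only the assignment of Application 1 to Master 1 and Master 3 comes from the scenario above. The replica sets for Applications 2, 3, and 4, and the existence of Masters 2 and 4, are hypothetical values chosen for this example.

```python
from typing import Dict, Iterable, List


def disabled_applications(app_replicas: Dict[str, List[str]],
                          failed: Iterable[str]) -> List[str]:
    """Return the applications whose entire replica set has failed."""
    failed_set = set(failed)
    return sorted(app for app, replicas in app_replicas.items()
                  if set(replicas) <= failed_set)


app_replicas = {
    "Application 1": ["Master 1", "Master 3"],  # from the scenario
    "Application 2": ["Master 2", "Master 3"],  # hypothetical assignment
    "Application 3": ["Master 2", "Master 4"],  # hypothetical assignment
    "Application 4": ["Master 1", "Master 4"],  # hypothetical assignment
}

# The bug in Application 1 brings down both of its assigned masters.
print(disabled_applications(app_replicas, failed=["Master 1", "Master 3"]))
```

With these assignments, only Application 1 loses service, because every other application still has at least one functioning master in its set.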