10 Configuring Server Migration

This chapter describes how to configure server migration in accordance with the EDG recommendations. It contains the following sections:

-

Section 10.2, "Setting Up a User and Tablespace for the Server Migration Leasing Table"

-

Section 10.3, "Creating a Multi-Data Source Using the Oracle WebLogic Server Administration Console"

-

Section 10.5, "Setting Environment and Superuser Privileges for the wlsifconfig.sh Script"

10.1 About Configuring Server Migration

The procedures described in this chapter must be performed for various components of the enterprise deployment topology outlined in Section 1.7, "Enterprise Deployment Reference Topology." Variables are used in this chapter to distinguish between component-specific items:

-

WLS_SERVER1 and WLS_SERVER2: these refer to the managed WebLogic servers for the enterprise deployment component

-

HOST1 and HOST2: these refer to the host machines for the enterprise deployment component

-

CLUSTER: this refers to the cluster associated with the enterprise deployment component.

The values to be used for these variables are provided in the component-specific chapters in this EDG.

In this enterprise topology, you must configure server migration for the WLS_SERVER1 and WLS_SERVER2 managed servers. The WLS_SERVER1 managed server is configured to restart on HOST2 should a failure occur. The WLS_SERVER2 managed server is configured to restart on HOST1 should a failure occur. For this configuration, the WLS_SERVER1 and WLS_SERVER2 servers listen on specific floating IP addresses that are failed over by WebLogic Server migration. Configuring server migration for the WLS managed servers consists of the steps described in the following sections.

10.2 Setting Up a User and Tablespace for the Server Migration Leasing Table

The first step is to set up a user and tablespace for the server migration leasing table:

Note:

If other servers in the same domain have already been configured with server migration, the same tablespace and data sources can be used. In that case, the data sources and multi-data source for database leasing do not need to be re-created, but they will have to be retargeted to the cluster being configured with server migration.

-

Create a tablespace called 'leasing'. For example, log on to SQL*Plus as the sysdba user and run the following command:

SQL> create tablespace leasing logging datafile 'DB_HOME/oradata/orcl/leasing.dbf' size 32m autoextend on next 32m maxsize 2048m extent management local;

-

Create a user named 'leasing' and assign to it the leasing tablespace:

SQL> create user leasing identified by welcome1;
SQL> grant create table to leasing;
SQL> grant create session to leasing;
SQL> alter user leasing default tablespace leasing;
SQL> alter user leasing quota unlimited on LEASING;

-

Create the leasing table using the leasing.ddl script:

-

Copy the leasing.ddl file located in either the WL_HOME/server/db/oracle/817 or the WL_HOME/server/db/oracle/920 directory to your database node.

-

Connect to the database as the leasing user.

-

Run the leasing.ddl script in SQL*Plus:

SQL> @Copy_Location/leasing.ddl;
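The tablespace and user statements earlier in this section can also be collected into one SQL*Plus script. The following is a minimal sketch, not part of the EDG procedure: the function name write_leasing_setup_sql and its three arguments are hypothetical, and the statements are copied from the examples above.

```shell
#!/bin/sh
# Sketch: write the tablespace and user statements from this section into a
# single SQL*Plus script. The function name and arguments are hypothetical.
write_leasing_setup_sql() {
    datafile=$1   # data file path, for example DB_HOME/oradata/orcl/leasing.dbf
    password=$2   # password for the leasing user
    outfile=$3    # file that receives the generated script
    cat > "$outfile" <<EOF
create tablespace leasing logging datafile '$datafile' size 32m autoextend on next 32m maxsize 2048m extent management local;
create user leasing identified by $password;
grant create table to leasing;
grant create session to leasing;
alter user leasing default tablespace leasing;
alter user leasing quota unlimited on LEASING;
exit
EOF
}
```

For example, write_leasing_setup_sql 'DB_HOME/oradata/orcl/leasing.dbf' welcome1 /tmp/leasing_setup.sql produces a file that can be reviewed and then run from SQL*Plus as the sysdba user. The leasing.ddl script must still be run separately as the leasing user.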

10.3 Creating a Multi-Data Source Using the Oracle WebLogic Server Administration Console

The second step is to create a multi-data source for the leasing table from the Oracle WebLogic Server Administration Console. You create a data source for each of the Oracle RAC database instances during the process of setting up the multi-data source, both for these data sources and the global leasing multi-data source.

Please note the following considerations when creating a data source:

-

Make sure that this is a non-XA data source.

-

The names of the multi-data sources are in the format of <MultiDS>-rac0, <MultiDS>-rac1, and so on.

-

Use Oracle's Driver (Thin) Version 9.0.1, 9.2.0, 10, 11.

-

Data sources do not require support for global transactions. Therefore, do not use any type of distributed transaction emulation or participation algorithm for the data source (do not choose the Supports Global Transactions option, the Logging Last Resource, Emulate Two-Phase Commit, or One-Phase Commit options of the Supports Global Transactions option), and specify a service name for your database.

-

Target these data sources to the cluster assigned to the enterprise deployment component (CLUSTER; see the component-specific chapters in this guide).

-

Make sure the initial connection pool capacity of the data sources is set to 0 (zero). To do this, select Services and then Data Sources. In the Summary of JDBC Data Sources screen, click the data source name in the list, then click the Connection Pool tab, and enter 0 (zero) in the Initial Capacity field. Click Save.

Perform these steps to create a multi-data source:

-

Log in to the Oracle WebLogic Server Administration Console.

-

In the Domain Structure area on the left, expand the Services node and then select the Data Sources node. The Summary of JDBC Data Source page opens.

-

Click Lock & Edit.

-

In the Summary of JDBC Data Source page, click New and choose Multi Data Source from the list. The Create a New JDBC Multi Data Source page opens.

-

Enter leasing as the name.

-

Enter jdbc/leasing as the JNDI name.

-

Select Failover as algorithm (default).

-

Click Next.

-

Select the cluster assigned to the enterprise deployment component as the target. (See the CLUSTER variable in the component-specific chapters in this guide.)

-

Click Next.

-

Select non-XA driver (the default).

-

Click Next.

-

Click Create New Data Source.

-

Enter leasing-rac0 as the name. Enter jdbc/leasing-rac0 as the JNDI name. Enter oracle as the database type. For the driver type, select Oracle driver (Thin) for RAC Service-Instance connections, Versions: 10 and later.

Note:

When creating the multi-data sources for the leasing table, enter names in the format of <MultiDS>-rac0, <MultiDS>-rac1, and so on.

-

Click Next.

-

Deselect Supports Global Transactions.

-

Click Next.

-

Enter the service name, database name, host name, host port, database user name, and password for your leasing schema.

-

Click Next.

-

Click Test Configuration and verify that the connection works.

-

Click Next.

-

Target the data source to the cluster assigned to the enterprise deployment component (CLUSTER), and click Finish.

-

Select the data source and add it to the right screen.

-

Click Create a New Data Source for the second instance of your Oracle RAC database and repeat the preceding steps, targeting the data source to the cluster assigned to the enterprise deployment component (CLUSTER).

-

Add the second data source to your multi-data source.

-

Click Activate Changes.

10.4 Editing Node Manager's Properties File

The third step is to edit Node Manager's properties file. This needs to be done for the node managers in both nodes where server migration is being configured:

Interface=eth0
NetMask=255.255.255.0
UseMACBroadcast=true

-

Interface: This property specifies the interface name for the floating IP (for example, eth0).

Do not specify the sub-interface, such as eth0:1 or eth0:2. This interface is to be used without :0 or :1. Node Manager's scripts traverse the different :X-enabled IPs to determine which to add or remove. For example, the valid values in Linux environments are eth0, eth1, eth2, eth3, ethn, depending on the number of interfaces configured.

-

NetMask: This property specifies the net mask for the interface for the floating IP. The net mask should be the same as the net mask on the interface; 255.255.255.0 is used as an example in this document.

-

UseMACBroadcast: This property specifies whether or not to use a node's MAC address when sending ARP packets, that is, whether or not to use the -b flag in the arping command.

Verify in Node Manager's output (shell where Node Manager is started) that these properties are being used, or problems may arise during migration. You should see something like this in Node Manager's output:

...
StateCheckInterval=500
Interface=eth0
NetMask=255.255.255.0
...
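Before starting Node Manager, the properties file itself can be checked for the three properties described above. The following is a minimal sketch under stated assumptions: the function name check_nm_properties is hypothetical, and the parsing only handles plain key=value lines, not every form a Java properties file allows.

```shell
#!/bin/sh
# Sketch: confirm that a nodemanager.properties file sets the three
# migration-related properties. The function name and the simple
# "key=value" parsing are assumptions made for illustration.
check_nm_properties() {
    props_file=$1
    status=0
    for key in Interface NetMask UseMACBroadcast; do
        # Take the last "key=value" line for this key, ignoring leading spaces.
        value=$(sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*//p" "$props_file" | tail -n 1)
        if [ -z "$value" ]; then
            echo "missing: $key"
            status=1
        else
            echo "$key=$value"
        fi
        # Interface must be the base interface (eth0), not a sub-interface (eth0:1).
        if [ "$key" = Interface ]; then
            case $value in
                *:*) echo "invalid Interface (sub-interface): $value"; status=1 ;;
            esac
        fi
    done
    return $status
}
```

Calling check_nm_properties /path/to/nodemanager.properties prints each property it finds and returns a non-zero status if a property is missing or if Interface names a sub-interface. It does not replace checking Node Manager's own output, which shows the values actually in use.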

Note:

The steps below are not required if the server properties (start properties) have been properly set and Node Manager can start the servers remotely.

-

Set the following property in the nodemanager.properties file:

-

StartScriptEnabled: Set this property to 'true'. This is required for Node Manager to start the managed servers using start scripts.

-

Start Node Manager on HOST1 and HOST2 by running the startNodeManager.sh script, which is located in the WL_HOME/server/bin directory.

Note:

When running Node Manager from a shared storage installation, multiple nodes are started using the same nodemanager.properties file. However, each node may require different NetMask or Interface properties. In this case, specify individual parameters on a per-node basis using environment variables. For example, to use a different interface (eth3) in HOSTn, use the Interface environment variable as follows:

HOSTn> export JAVA_OPTIONS=-DInterface=eth3

and start Node Manager after the variable has been set in the shell.

10.5 Setting Environment and Superuser Privileges for the wlsifconfig.sh Script

The fourth step is to set environment and superuser privileges for the wlsifconfig.sh script (for the 'oracle' user):

-

Ensure that your PATH environment variable includes these files:

-

Grant sudo configuration for the wlsifconfig.sh script.

-

Configure sudo to work without a password prompt.

-

For security reasons, sudo should be restricted to the subset of commands required to run the wlsifconfig.sh script. For example, perform these steps to set the environment and superuser privileges for the wlsifconfig.sh script:

-

Grant sudo privilege to the WebLogic user ('oracle') with no password restriction, and grant execute privilege on the /sbin/ifconfig and /sbin/arping binaries.

-

Make sure the script is executable by the WebLogic user ('oracle'). The following is an example of an entry inside /etc/sudoers granting sudo execution privilege for oracle and also over ifconfig and arping:

oracle ALL=NOPASSWD: /sbin/ifconfig,/sbin/arping

Note:

Ask the system administrator for the sudo and system rights as appropriate to this step.
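A proposed sudoers entry can be sanity-checked before it is handed to the system administrator. The following is a minimal sketch, assuming the entry has the single-line shape shown in the example above (user, host specification, NOPASSWD: tag, comma-separated commands). The function name sudoers_line_grants is hypothetical, and this is only a shape check, not a sudoers parser.

```shell
#!/bin/sh
# Sketch: return 0 if one sudoers-style line grants USER passwordless access
# to BINARY. Only the "USER HOST=NOPASSWD: CMD[,CMD...]" shape is handled;
# aliases, groups, and multi-line entries are out of scope.
sudoers_line_grants() {
    printf '%s\n' "$1" | awk -v user="$2" -v bin="$3" '
        {
            # The first field must be the user and the NOPASSWD tag must be present.
            if ($1 != user || index($0, "NOPASSWD:") == 0) exit 1
            cmds = $0
            sub(/^.*NOPASSWD:[ \t]*/, "", cmds)   # keep only the command list
            gsub(/[ \t]+$/, "", cmds)             # drop trailing whitespace
            n = split(cmds, list, /[ \t]*,[ \t]*/)
            for (i = 1; i <= n; i++) if (list[i] == bin) found = 1
            exit(found ? 0 : 1)
        }'
}
```

For example, sudoers_line_grants 'oracle ALL=NOPASSWD: /sbin/ifconfig,/sbin/arping' oracle /sbin/arping succeeds, while the same call with a binary that is not listed fails. On the host itself, running sudo -n -l /sbin/ifconfig as the oracle user is a more direct check that the installed policy allows the command without a password prompt.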

10.6 Configuring Server Migration Targets

The fifth step is to configure server migration targets. You first assign all the available nodes for the cluster's members and then specify candidate machines (in order of preference) for each server that is configured with server migration. Follow these steps to configure migration in a cluster:

-

Log in to the Oracle WebLogic Server Administration Console (http://Host:Admin_Port/console). Typically, Admin_Port is 7001 by default.

-

In the Domain Structure window, expand Environment and select Clusters. The Summary of Clusters page opens.

-

Click the cluster for which you want to configure migration (CLUSTER) in the Name column of the table.

-

Click the Migration tab.

-

Click Lock & Edit.

-

In the Available field, select the machine to which to allow migration and click the right arrow. In this case, select HOST1 and HOST2.

-

Select the data source to be used for automatic migration. In this case, select the leasing data source.

-

Click Save.

-

Click Activate Changes.

-

Click Lock & Edit.

-

Set the candidate machines for server migration. You must perform this task for all of the managed servers as follows:

-

In the Domain Structure window of the Oracle WebLogic Server Administration Console, expand Environment and select Servers.

Tip:

Click Customize this table in the Summary of Servers page and move Current Machine from the Available window to the Chosen window to view the machine on which the server is running. This will be different from the configuration if the server gets migrated automatically.

-

Select the server for which you want to configure migration.

-

Click the Migration tab.

-

In the Available field, located in the Migration Configuration section, select the machines to which to allow migration and click the right arrow. For WLS_SERVER1, select HOST2. For WLS_SERVER2, select HOST1.

-

Select Automatic Server Migration Enabled. This enables Node Manager to start a failed server on the target node automatically.

-

Click Save.

-

Click Activate Changes.

-

Restart the administration server, node managers, and the servers for which server migration has been configured.

10.7 Testing the Server Migration

The sixth and final step is to test the server migration. Perform these steps to verify that server migration is working properly:

-

Stop the WLS_SERVER1 managed server. To do this, run this command:

HOST1> kill -9 pid

where pid specifies the process ID of the managed server. You can identify the pid in the node by running this command:

HOST1> ps -ef | grep WLS_SERVER1

-

Watch the Node Manager console. You should see a message indicating that WLS_SERVER1's floating IP has been disabled.

-

Wait for Node Manager to try a second restart of WLS_SERVER1. It waits for a fence period of 30 seconds before trying this restart.

-

Once Node Manager restarts the server, stop it again. Node Manager should now log a message indicating that the server will not be restarted again locally.

-

Watch the local Node Manager console. Thirty seconds after the last attempt to restart WLS_SERVER1 on node 1, Node Manager on node 2 should report that the floating IP for WLS_SERVER1 is being brought up and that the server is being restarted on this node.

-

Access the soa-infra console using the same IP address.
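The pid lookup in the first step of this test can be scripted so that the grep process itself never appears in the result. The following is a minimal sketch, assuming the Linux ps -ef column layout with the PID in the second column. The function name pids_for_server is hypothetical.

```shell
#!/bin/sh
# Sketch: read `ps -ef` output on stdin and print the PID (second column) of
# every process whose line mentions the given server name.
# The name is passed through the environment so that it does not appear on
# awk's own command line, which would make awk match itself.
pids_for_server() {
    SERVER_NAME=$1 awk '
        NR > 1 && index($0, ENVIRON["SERVER_NAME"]) > 0 { print $2 }'
}
```

For example, ps -ef | pids_for_server WLS_SERVER1 prints the candidate process IDs on HOST1, which can then be passed to kill -9 as described above. The match is a plain substring test, so a name such as WLS_SERVER1 would also match WLS_SERVER10; review the output before killing anything.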

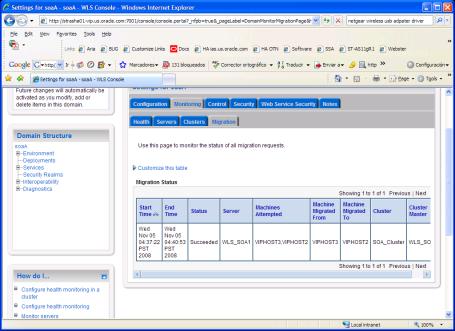

Verification from the Administration Console

Migration can also be verified in the Administration Console:

-

Log in to the Administration Console.

-

Click Domain in the left pane of the console.

-

Click the Monitoring tab and then the Migration subtab.

The Migration Status table provides information on the status of the migration (Figure 10-1).

Figure 10-1 Migration Status Screen in the Administration Console

Note:

After a server is migrated, to fail it back to its original node or machine, stop the managed server from the Oracle WebLogic Administration Console and then start it again. The appropriate Node Manager will start the managed server on the machine to which it was originally assigned.