28 Working with AIA Design Patterns

This chapter provides an overview of AIA message processing patterns, AIA assets centralization patterns, and AIA assets extensibility patterns.

Note:

Composite Business Processes (CBP) will be deprecated from the next release. Oracle advises you to use BPM for modeling human/system interactions.

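This chapter includes the following sections:

- Section 28.1, "AIA Message Processing Patterns"
- Section 28.2, "AIA Assets Centralization Patterns"
- Section 28.3, "AIA Assets Extensibility Patterns"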

28.1 AIA Message Processing Patterns

This section discusses AIA message processing patterns and provides solutions to specific problems that you may encounter.

This section includes the following topics:

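- Section 28.1.1, "Synchronous Request-Reply Pattern: How to get Synchronous Response in AIA"
- Section 28.1.2, "Asynchronous Fire-and-Forget Pattern"
- Section 28.1.3, "Guaranteed Delivery Pattern: How to Ensure Guaranteed Delivery in AIA"
- Section 28.1.4, "Service Routing Pattern: How to Route the Messages to Appropriate Service Provider in AIA"
- Section 28.1.5, "Competing Consumers Pattern: How are Multiple Consumers used to Improve Parallelism and Efficiency?"
- Section 28.1.6, "Asynchronous Delayed-Response Pattern: How does the Service Provider Communicate with the Requester when Synchronous Communication is not Feasible?"
- Section 28.1.7, "Asynchronous Request Response Pattern: How does the Service Provider Notify the Requester Regarding the Errors?"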

28.1.1 Synchronous Request-Reply Pattern: How to get Synchronous Response in AIA

Many use cases warrant consumers to send requests synchronously to service providers and get immediate responses to each of their requests. These use cases need the consumers to wait until the responses are received before proceeding to their next tasks.

For example, Customer Relationship Management (CRM) applications may provide features that allow customer service representatives and systems to send requests to providers for tasks such as account balance retrieval, credit check, ATP (available to promise) calculation, and so on. Because CRM applications use the responses in subsequent tasks, users cannot perform other tasks until the responses are received.

AIA recommends using the synchronous request and response service interface in all the composites involved in processing or connecting the back-end systems. There should not be any singleton service in the services involved in the query processing. The general recommendation is for all of the intermediary services and the service exposed by the provider application to implement a request-response based interface - a two-way operation. Even though it is technically possible to design all services but the initial caller to implement two one-way requests, this implementation technique should be avoided if possible.

Implementation should strive to ensure that no persistence of state information (dehydration) or audit details is done by any of the intermediary services or by the ultimate service provider. These techniques help keep the latency as low as possible.

AIA also recommends that the intermediary services are co-located to eliminate the overheads normally caused by marshalling and un-marshalling of SOAP documents.

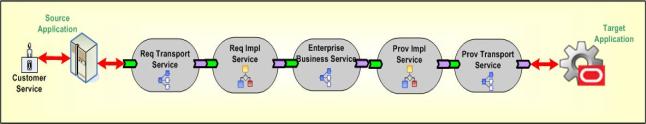

Figure 28-1 illustrates how a task implemented as an Enterprise Business Service (EBS) is invoked synchronously by the requester application.

This pattern has the following implications:

- All the resources are locked until the response from the service provider goes back to the originating system or user.
- A transaction timeout or increased latency may result if any of the services or participating applications takes longer to process the request.
- Service providers must always be available and ready to fulfill the service requests.
- Service providers that retrieve and collate data in real time from multiple back-end systems to generate a response message could take an enormous toll on the overall resources and increase the latency. The synchronous request-response design pattern should not be used to implement tasks that involve complex real-time processing. Work must be offloaded; the design must be modified to accommodate the staging of quasi-prepared data so that the real-time processing can be made as light as possible.
- Services involved in implementing the synchronous request-response pattern should refrain from doing work for each of the repeatable child nodes present in the request message. A high number of child nodes in the payload in a production environment can have an adverse impact on the system.

28.1.2 Asynchronous Fire-and-Forget Pattern

The requester application should be able to continue its processing after submitting a request regardless of whether the service providers needed to perform the tasks are immediately available or not. Besides that, the user initiating the request does not have a functional need to wait until the request is fully processed. The bottom line is, the service requesters and service providers must have the capability to produce and consume messages at their own pace without directly depending upon each other to be present.

Also, the composite business processes should be able to support the infrastructure services like error handling and business activity monitoring services in a decoupled fashion without depending on the participating application or the AIA functional process flows.

For example, order capture applications should be able to keep taking orders regardless of whether the back end systems such as order fulfillment systems are available at that time. You do not want the order capturing capability to be stopped because of non-availability of any of the services or applications participating in the execution of order fulfillment process.

AIA recommends the fire-and-forget pattern using queuing technology with a database or file as a persistence store to decouple the participating application from the integration layer. The queue acts as a staging area allowing the requester applications to place the request messages. The request messages are subsequently forwarded to service providers on behalf of requester as and when the service providers are ready to process them.

It is highly recommended that the enqueueing of the request message into the queue is within the same transaction initiated by the requester application to perform its work. This ensures that the request message is enqueued into the queue only when the participating application's transaction is successful. The request message is not enqueued in situations where the transaction is not successful. Care must be taken to ensure that the services residing between two consecutive milestones are enlisting themselves into a single global transaction.
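The transactional enqueue described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not an AIA implementation: the standard-library `sqlite3` module stands in for the queue's persistence store, and the table names (`orders`, `stock`, `order_queue`) and the `capture_order` function are hypothetical. The business writes and the enqueue share one transaction, so a failure in any statement rolls back all of them.

```python
import sqlite3


def create_store():
    """Create an in-memory database holding the business tables and the queue table."""
    conn = sqlite3.connect(":memory:")
    with conn:
        conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, item TEXT NOT NULL)")
        conn.execute(
            "CREATE TABLE stock (item TEXT PRIMARY KEY, "
            "qty INTEGER NOT NULL CHECK (qty >= 0))"
        )
        conn.execute(
            "CREATE TABLE order_queue (msg_id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "payload TEXT NOT NULL)"
        )
        conn.execute("INSERT INTO stock (item, qty) VALUES ('router', 1)")
    return conn


def capture_order(conn, order_id, item):
    """Record the order, enqueue the request, and reserve stock in one transaction."""
    with conn:  # commits on success, rolls back if any statement raises
        conn.execute("INSERT INTO orders (id, item) VALUES (?, ?)", (order_id, item))
        conn.execute(
            "INSERT INTO order_queue (payload) VALUES (?)",
            (f"fulfill-order:{order_id}",),
        )
        conn.execute("UPDATE stock SET qty = qty - 1 WHERE item = ?", (item,))


def queued_payloads(conn):
    """Return the payloads currently waiting in the queue, oldest first."""
    rows = conn.execute("SELECT payload FROM order_queue ORDER BY msg_id")
    return [row[0] for row in rows]


conn = create_store()
capture_order(conn, 1, "router")      # succeeds: order stored and message enqueued
try:
    capture_order(conn, 2, "router")  # stock would go negative, so the transaction fails
except sqlite3.IntegrityError:
    pass                              # the order row and the queued message are both rolled back
print(queued_payloads(conn))
```

Only the message for the first order remains in the queue; the failed second order leaves neither an order row nor a request message behind.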

Refer to Section 27.5, "Implementing Error Handling and Recovery for the Asynchronous Message Exchange Pattern to Ensure Guaranteed Message Delivery" to understand how milestones are used as intermediate reliability artifacts to ensure the guaranteed delivery of messages.

AIA recommends having a queue per business object. All requests emanating from a requester application for a business object use the same queue.

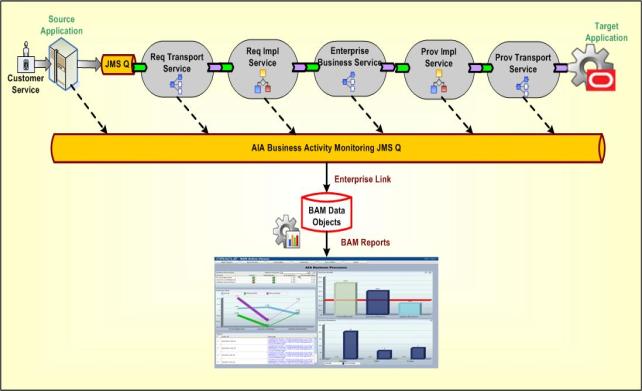

Figure 28-2 shows how JMS queues are used to decouple the source application from the integration layer processing. The source application submits the message to the JMS queue and continues with its other processing without waiting for a response from the integration layer.

The AIA composite business process may not be up and running while the application continues to produce the messages in the JMS queue. When the AIA composite business process comes up, it starts consuming the messages and processes them.

Figure 28-2 also shows how the monitoring services can be decoupled from the main AIA functional flows with the help of the JMS queue. All the AIA services and the participating applications can fire a monitoring message into the queue and forget about how it is processed by the downstream applications. In this pattern the services or participating applications do not expect any response. Similarly, the AIA infrastructure services capture the system or business errors from the composite business processes and publish them to an AIA error topic, which is used by the error handling framework for further processing (resubmission, notification, logging, and so on).

The default implementation does not have inherent support for notifying the requester application of success or the failure of messages. Even though the middleware systems provide the ability to monitor and administer the flow of in-flight message transmissions, there will be use cases where requester applications either want to be notified or have a logical reversal of work done programmatically.

For more information about how compensation handlers can be implemented for this message exchange pattern, see Chapter 15, "Designing Application Business Connector Services" and Chapter 16, "Constructing the ABCS."

28.1.3 Guaranteed Delivery Pattern: How to Ensure Guaranteed Delivery in AIA

Message delivery cannot be guaranteed when the participating systems and the services integrating those systems interact in an unreliable fashion.

For example, the Order Capture system might submit an order fulfillment request that triggers a business process, which in turn invokes the services provided by multiple disparate systems to fulfill the request. Expecting all of the service providers to be always available might be unrealistic.

A milestone is a message checkpoint where the enriched message is preserved after completing a certain amount of business functionality at this logical point or is submitted to the participating application for further processing of that message in an end-to-end composite business process. A milestone helps to commit the business transactions done to that logical point and also releases the resources used in the AIA services and the participating application.

AIA recommends introducing milestones at appropriate logical points in an end-to-end integration having the composite business processes, business activity services, or data services designed and implemented for integrating disparate systems to address a business requirement. Queues with database or file as persistence store are used to implement milestones.

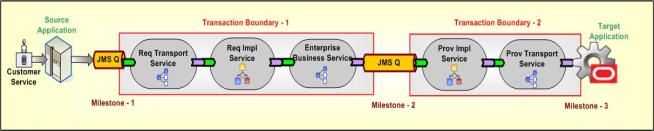

Figure 28-3 shows how to ensure guaranteed delivery with the help of milestones for a simple use case where the customer service representative updates the billing profile on the source application and the updated profile is reflected in the back-end billing systems.

AIA recommends that the source application push the billing profile information to Milestone-1 (JMS Queue) in a transactional mode to ensure the guaranteed delivery of the message. This transaction is outside the AIA transaction boundaries and is not shown in the diagram. After the billing profile information is stored in Milestone-1, the source application resources can be released because AIA ensures the guaranteed delivery of that message to the target application.

The implementation service has the message enrichment logic (if needed) and the Enterprise Business Service (EBS) routes the enriched message to appropriate providers or Milestone-2 (optional for very simple and straightforward use cases such as no enrichment or processing requirements for implementation service).

All the enriched messages are either transferred to Milestone-2 or rolled back to Milestone-1 for corrective action or resubmission. To achieve this, all the services present between Milestone-1 and Milestone-2 must enlist themselves in a single global transaction. After the messages are transferred to Milestone-2, all the Milestone-1 implementation service instances are released to accommodate new requests.
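The all-or-nothing transfer between two milestones can be sketched in Python as follows. This is a simplified illustration only: two `sqlite3` tables stand in for the persistent queues, a single local transaction stands in for the global transaction, and the names `milestone_1`, `milestone_2`, `enrich`, and `advance` are hypothetical.

```python
import sqlite3


def create_milestones():
    """Create two tables that stand in for the Milestone-1 and Milestone-2 queues."""
    conn = sqlite3.connect(":memory:")
    with conn:
        conn.execute("CREATE TABLE milestone_1 (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
        conn.execute("CREATE TABLE milestone_2 (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
    return conn


def enrich(payload):
    """Stand-in for the implementation service's enrichment logic."""
    if payload.startswith("bad"):
        raise ValueError("enrichment failed")
    return payload + "|enriched"


def advance(conn, msg_id):
    """Move one message from milestone_1 to milestone_2, or leave it untouched."""
    try:
        with conn:  # one transaction: both statements commit, or neither does
            row = conn.execute(
                "SELECT payload FROM milestone_1 WHERE id = ?", (msg_id,)
            ).fetchone()
            if row is None:
                return False
            conn.execute("DELETE FROM milestone_1 WHERE id = ?", (msg_id,))
            conn.execute(
                "INSERT INTO milestone_2 (id, payload) VALUES (?, ?)",
                (msg_id, enrich(row[0])),
            )
        return True
    except ValueError:
        return False  # rolled back: the message is still in milestone_1 for resubmission


store = create_milestones()
with store:
    store.execute("INSERT INTO milestone_1 VALUES (1, 'billing-profile-A')")
    store.execute("INSERT INTO milestone_1 VALUES (2, 'bad-profile-B')")
results = [advance(store, 1), advance(store, 2)]
print(results)
```

The first message reaches the second table in enriched form, while the message that fails enrichment stays in the first table, where it remains available for corrective action.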

The provider implementation service picks up the billing profile information and ensures the message is delivered to the target application. The target application may or may not have the capability of participating in the transaction between Milestone-2 and Milestone-3 so the implementation service should be designed to take care of the compensatory transactions.

AIA recommends that the target application build the XA capability to ensure guaranteed delivery. If the target application does not have the XA capability, the implementation service should be designed for manual compensations or semi-automatic or automatic compensations based on the target system's capability to acknowledge the business message delivered from AIA.

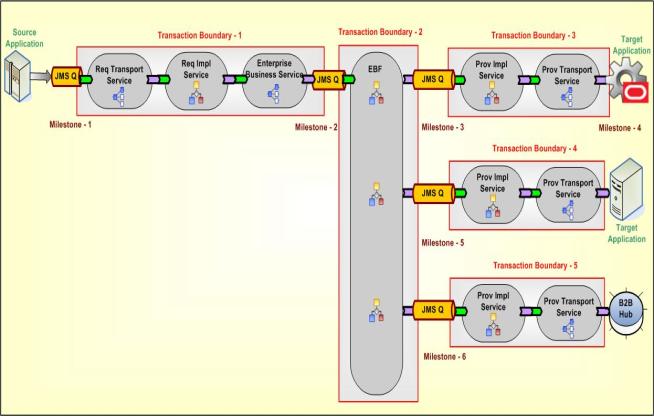

Figure 28-4 shows the usage of milestones to ensure guaranteed delivery for a complex use case where the source system submits an order provisioning request to AIA.

Introducing a milestone adds processing overhead and could impact the performance of composite business processes, business activity services, or data services. So selecting the number of milestones is a challenge for the designers. However, minimizing the milestones, thereby adding more work to a single transaction, could also have a detrimental effect.

Ensuring reliable messaging between milestones, and between an application and a milestone, adds transactional overhead in exchange for guaranteed delivery. This could impact runtime performance in some situations (if the amount of work to be done between two milestones is too large) and may also introduce additional process design requirements for compensation.

28.1.4 Service Routing Pattern: How to Route the Messages to Appropriate Service Provider in AIA

Without a routing layer, requester applications must build tightly coupled services whenever they send information to a specific target system. The logic to identify the service provider, and in some cases the service provider instance (when multiple application instances exist for the same provider), has to be embedded within the caller service. This decision logic must then change whenever a new service provider is added to the mix or an existing service provider is retired from it.

For example, a customer might have three billing system implementations: two instances of vendor A's billing system, one dedicated to customers who reside in North America and the second dedicated to customers residing in Asia-Pacific, and one instance of vendor B's billing system dedicated to customers residing in other parts of the world. The requester service must have the decision logic to discern to whom to delegate the request.

AIA recommends content-based service routing to appropriate target service implementations to further process this message and send it to the target applications. AIA recommends externalizing the decision logic to determine the right service provider from the actual requester application or service. This decision logic is incorporated in the routing service. This allows for declaratively changing the message path when unforeseen conditions occur. The Enterprise Business Service acts as a routing service. This facilitates one-to-many message routing based on the content-based filtering. A message originating from a requester can go to all the targets, some targets, or just one target based on the business rules or content filters.

The routing service evaluates the rule and deciphers the actual service provider that must be used for processing a specific message. Using this approach, the clients or service requesters are totally unaware of the actual service providers. Similarly, the underlying service providers are oblivious to the client applications that made the request. This enables you to introduce new providers and retire existing providers without making any changes to the actual requester service.
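The following Python sketch illustrates the idea of content-based routing with externalized rules, using the three billing systems from the earlier example. It is an illustration only and does not reflect how Mediator routing rules are defined; the `RoutingRule` class, the `region` field, and the target names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RoutingRule:
    """A content filter paired with the provider that handles matching messages."""
    name: str
    matches: Callable[[dict], bool]
    target: str


# The rules live outside the requester, so providers can be added or retired here.
RULES = [
    RoutingRule("north-america", lambda m: m["region"] == "NA", "vendorA-billing-na"),
    RoutingRule("asia-pacific", lambda m: m["region"] == "APAC", "vendorA-billing-apac"),
    RoutingRule(
        "rest-of-world",
        lambda m: m["region"] not in {"NA", "APAC"},
        "vendorB-billing",
    ),
]


def route(message, rules=RULES):
    """Return the target of every rule whose filter matches (one-to-many routing)."""
    return [rule.target for rule in rules if rule.matches(message)]


# Adding a provider is a rule change; the requester code is untouched.
rules_with_audit = RULES + [RoutingRule("audit-copy", lambda m: True, "audit-service")]

print(route({"region": "NA"}))
print(route({"region": "EMEA"}))
print(route({"region": "APAC"}, rules_with_audit))
```

Because the requester only hands the message to `route`, it never names a provider, which is the loose coupling that the routing service is meant to provide.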

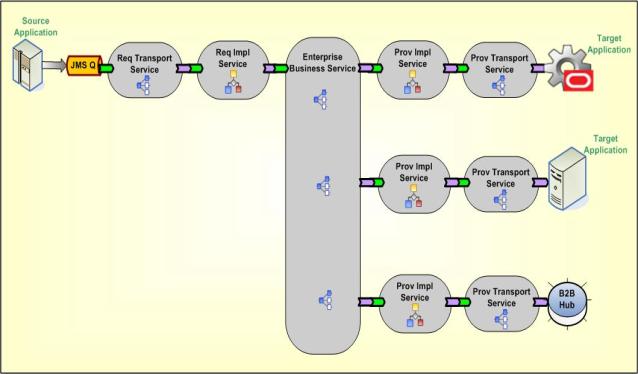

Figure 28-5 illustrates how a request sent by Req Impl Service (the requester service) is sent to one of two target applications or to a trading partner (B2B) through content-based routing by the routing service.

For the requester service to be loosely coupled with the actual service providers, the routing service should act as the abstraction layer; therefore, its service interface has to be carefully designed. Its interface can emulate the actual service provider's interface if only one unique service provider exists or it can have a canonical interface.

Even though Mediator technologies allow routing rules to be added declaratively, the different routing paths must be tested and validated before they are deployed in production.

Managing the decision logic locally in each of the routing services can lead to duplication and conflict so having them managed in a centralized rule management system such as Oracle Business Rules Engine is an option to be considered.

28.1.5 Competing Consumers Pattern: How are Multiple Consumers used to Improve Parallelism and Efficiency?

When the requester application produces a huge number of business messages that must be handled and sent to the target application, consuming all the messages fast enough to meet the expected throughput is a challenge. The challenge becomes more pronounced when the processing of each message involves interacting with a multitude of systems, so that the consumer is blocked for a significant period while waiting for them to complete their work.

For example, the order capture application submits orders in the order processing queue for the fulfillment of orders. Each of the order fulfillment requests must be picked from the queue and handed over to the order orchestration engine for decomposition. Only upon the acknowledgment can the next message be picked up from the order processing queue. Unplanned outages of the order orchestration platform can result in a large accumulation of order fulfillment requests and serial processing of these requests upon availability of platform can cause an inordinate amount of delay.

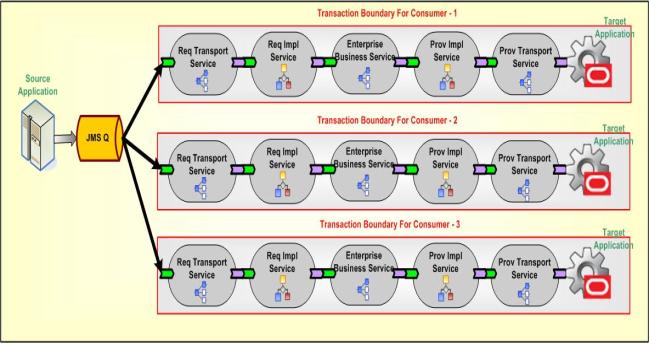

AIA recommends setting up multiple consumers or listeners connected to the source queue. AIA assumes that the requester application has published messages into the source queue.

Refer to Section 28.1.2, "Asynchronous Fire-and-Forget Pattern" for more details.

Figure 28-6 shows that setting up multiple listeners triggers consumption of multiple messages in parallel. Even though multiple consumers are created to receive the message from a single queue (Point-to-Point Channel), only one of the consumers can receive the message upon the delivery by JMS. Even though all of the consumers compete with each other to be the receiver, only one of them ends up receiving the message. Based on the business requirements, this pattern can be used across the nodes with each consumer set up on each node in an HA environment, or on a cluster to improve the scalability, or within the AIA artifact.

This solution only works for the queue and cannot be used with the Topic (Publish-Subscribe channel). In the case of Topic, each of the consumers receives a copy of the message.
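The point-to-point behavior described above can be sketched in Python with the standard-library `queue` and `threading` modules. This is an in-process analogy, not JMS code: `queue.Queue` stands in for the source queue, and the `run_consumers` function and message names are hypothetical.

```python
import queue
import threading


def run_consumers(messages, consumer_count):
    """Let several consumers compete for messages from one queue."""
    source = queue.Queue()
    for message in messages:
        source.put(message)

    # Each consumer records what it received in its own list.
    received = {consumer_id: [] for consumer_id in range(consumer_count)}

    def consume(consumer_id):
        while True:
            try:
                message = source.get_nowait()  # only one consumer gets each message
            except queue.Empty:
                return
            received[consumer_id].append(message)

    threads = [
        threading.Thread(target=consume, args=(consumer_id,))
        for consumer_id in range(consumer_count)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return received


messages = [f"order-{n}" for n in range(20)]
received = run_consumers(messages, consumer_count=3)
delivered = sorted(m for batch in received.values() for m in batch)
print(len(delivered))
```

How the messages are split among the consumers varies from run to run, but every message is delivered exactly once across all of them.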

Since multiple consumers are processing the messages in parallel, the messages are not processed in a specific order. If they must be processed in sequence without compromising parallelism and efficiency for a functional reason, then you must introduce a staging area to hold these messages until a contiguous sequence is received before delivering them to the ultimate receivers.
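One way to picture such a staging area is the following Python sketch. It assumes that every message carries a consecutive sequence number, which the text above does not specify; the `Resequencer` class and its `accept` method are hypothetical.

```python
class Resequencer:
    """Hold out-of-order messages until a contiguous run can be released."""

    def __init__(self, first_sequence=1):
        self._next = first_sequence  # sequence number expected next
        self._held = {}              # staged messages keyed by sequence number

    def accept(self, sequence, payload):
        """Stage one message and return the messages that are now deliverable in order."""
        self._held[sequence] = payload
        released = []
        while self._next in self._held:
            released.append(self._held.pop(self._next))
            self._next += 1
        return released


staging = Resequencer()
first = staging.accept(2, "update-address")   # held: message 1 has not arrived yet
second = staging.accept(1, "create-account")  # releases messages 1 and 2 together
third = staging.accept(4, "close-account")    # held: message 3 is missing
fourth = staging.accept(3, "update-plan")     # releases messages 3 and 4 together
print(first, second, third, fourth)
```

The consumers still process messages in parallel; only the final hand-off to the receiver waits for the gaps in the sequence to be filled.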

28.1.6 Asynchronous Delayed-Response Pattern: How does the Service Provider Communicate with the Requester when Synchronous Communication is not Feasible?

When a service provider has to take a large amount of time to process a request, how can the requester receive the outcome without keeping the requester in a suspended mode during the entire processing?

For example, the order capture application submits a request for fulfillment of an order. The order fulfillment process itself could take anywhere from several minutes to several days depending upon the complexity of tasks involved. Even though the order capture application would like to know the outcome of the fulfillment request, it cannot afford to wait idly until the process is completed to know the outcome.

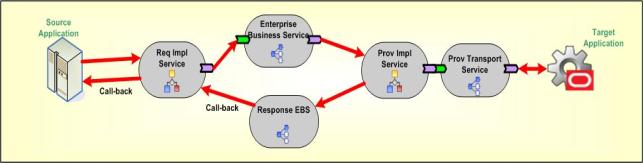

AIA recommends using the asynchronous delayed response pattern where the requester submits a request to the integration layer and sets up a callback response address by populating the metadata section in the request message (WSA Headers). The callback response address points to the end point location of the original caller's service that is contacted by service provider after the completion. Since multiple intermediary services are involved before sending the message to the ultimate receiver, sending the callback address using the metadata section in the message is needed. In addition to the callback address, the requester is expected to send correlation details that allow the service provider to send this information in future conversations so that the requesters are able to associate the response messages with the original requests.

Since a request-delayed response pattern is asynchronous in nature, the requester does not wait for the response after sending the request message. In the flow implementing this pattern as shown in Figure 28-7, it is recommended that all of the services involved have two one-way operations. A separate thread must be invoked to initiate the response message. When the AIA provider service processes the message after getting a reply from the provider participating application, it creates a response thread by invoking the response EBS. The response EBS uses the WSA headers to identify the requesting service and processes the response to invoke the callback address given by the requesting application. The EBS has a pair of operations to support two one-way calls: one for sending the request and another for receiving the response. Both operations are independent and atomic. A correlation mechanism is used to establish the application or caller's context.

Provider applications must have the capability to persist the correlation details and the callback address sent by the requester application and need these details populated in the response message.
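The callback address and correlation handling can be sketched in Python as follows. This is a simplified model rather than a web service implementation: plain dictionaries stand in for messages, the header keys `message_id`, `reply_to`, and `relates_to` are loosely modeled on the WS-Addressing MessageID, ReplyTo, and RelatesTo headers, and the `Requester` class, `provider_process` function, and callback URL are hypothetical.

```python
import uuid


class Requester:
    """Send one-way requests and match later callbacks to them."""

    def __init__(self, callback_address):
        self.callback_address = callback_address
        self.pending = {}  # correlation id -> original request body

    def build_request(self, body):
        """Create a request that carries the callback address and a correlation id."""
        correlation_id = str(uuid.uuid4())
        self.pending[correlation_id] = body
        return {
            "headers": {"message_id": correlation_id, "reply_to": self.callback_address},
            "body": body,
        }

    def on_callback(self, response):
        """Associate a response with its original request by correlation id."""
        original = self.pending.pop(response["headers"]["relates_to"])
        return original, response["body"]


def provider_process(request):
    """Return the callback address and a response that echoes the correlation id."""
    headers = request["headers"]
    response = {
        "headers": {"relates_to": headers["message_id"]},
        "body": f"fulfilled:{request['body']}",
    }
    return headers["reply_to"], response


requester = Requester("http://requester.example/callback")
request_a = requester.build_request("order-A")
request_b = requester.build_request("order-B")

# The provider finishes the second request first; correlation still matches correctly.
address_b, response_b = provider_process(request_b)
matched_b = requester.on_callback(response_b)
address_a, response_a = provider_process(request_a)
matched_a = requester.on_callback(response_a)
print(matched_b, matched_a)
```

The provider never needs to know the requester in advance: everything it needs to reply travels in the message headers, which is why the provider must persist those details until the work is complete.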

28.1.7 Asynchronous Request Response Pattern: How does the Service Provider Notify the Requester Regarding the Errors?

Integration architects should understand the transactional requirements, the participating applications' capability of participating in a global transaction, and the concept of milestones before deciding upon the asynchronous request-response pattern implementation. The following sections present implementation solution guidelines for three different scenarios.

Solution: Success scenario calls the same requester but separate service for the Error Handling

In this solution, the interaction is divided into two transactions; that is, the error handling part is separated from the requester ABCS implementation. A new service should be constructed to accept the error message from the response EBS and the error response service should forward it to the source participating application.

Figure 28-8 shows that if the request is processed successfully, then it is routed back to the requester ABCS to complete the remaining process activities. If there is any error while processing the response, it follows the normal error handling mechanism and a notification is sent back to the source participating application. If the error is sent back to the caller, then a web service call to the error response service should be made in the error handling block of the requester ABCS implementation.

Solution: Using JMS Queue as a milestone

In this solution, the interaction is divided into three transactions. The JMS queue / milestone should be used to enable the transaction boundaries. Figure 28-9 depicts the error scenario. The success scenario will not have the "JMS-2" queue. Instead the provider ABCS directly invokes the response EBS. So, for the success scenario, the whole interaction is completed in two transactions.

Solution: Using parallel routing rule and Web Service call

In this solution, the interaction is divided into three transactions. Figure 28-10 depicts the error scenario. The parallel routing rule in the mediator (EBS) and a web service call from the provider ABCS reference to response EBS should be used to enable the transaction boundaries. The success scenario directly calls the response EBS using an optimized reference component binding. So, there would be only two transaction boundaries for the success case.

28.2 AIA Assets Centralization Patterns

This section describes how to avoid redundant data model representation and service contract representation in AIA.

This section includes the following topics:

- Section 28.2.1, "How to Avoid Redundant Data Model Representation in AIA"
- Section 28.2.2, "How to Avoid Redundant Service Contracts Representation in AIA"

28.2.1 How to Avoid Redundant Data Model Representation in AIA

The service contracts between the applications and the AIA interfaces must use similar business documents or data sets, which results in redundant representations of the data model. Such a data model is very difficult to change and to govern.

AIA recommends separation of the data model or schemas physically from the service contracts or the implementations. These schemas should be centralized at a location which could be referenced easily during design or runtime.

All the EBOs (Enterprise Business Objects), ABOs (Application Business Objects), and any other utility schemas should be maintained at a central location in a structured way.

As the EBO schemas are used for various business processes in common, a thorough analysis should be done before coming up with a canonical data model.

- A change in the data model or schema should be treated very carefully because even a small change could impact multiple dependent service contracts.
- Versioning policies should be clearly defined; otherwise, a minor change may lead to redundant service implementations.
- Governing the shared data models or common assets is a challenge.

28.2.2 How to Avoid Redundant Service Contracts Representation in AIA

The granularity of the service contract can be at the operation level or at the entity level. An entity-level definition can have various operations defined in the same service contract, but the implementation can be at the operation level, which results in multiple implementation artifacts that use the same service contract. This causes redundant representations of the service contract across the implementations.

AIA recommends that the service contracts (WSDL) be separated physically from the implementations. These service contracts should be centralized at a location which can be referenced easily during design or runtime.

All the EBS (Enterprise Business Service), ABCS (Application Business Connector Service), and any other utility service contracts should be maintained at a central location in a structured way.

- A change in the service contract should be treated very carefully because even a small change could impact multiple dependent service implementations.
- Governing the shared service contracts or common assets is a challenge.

28.3 AIA Assets Extensibility Patterns

This section discusses the extensibility patterns for AIA assets and describes the problems and solutions for extending AIA artifacts.

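This section includes the following topics:

- Section 28.3.1, "Extending Existing Schemas in AIA"
- Section 28.3.2, "Extending AIA Services"
- Section 28.3.3, "Extending Existing Transformations in AIA"
- Section 28.3.4, "Extending the Business Processes in AIA"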

28.3.1 Extending Existing Schemas in AIA

The delivered schemas may not be sufficient for some of your specific business operations, so you may want to add elements to the existing schemas in an upgrade-safe manner. This is not possible by simply adding the customer-specific elements to an existing schema, so a schema extension model is needed.

To allow you to extend the EBO or Enterprise Business Message (EBM) schemas in a nonintrusive manner, the complex type defined for every business component and the common component present in each of the EBO or EBM schemas has an extension placeholder element called Custom at the end. The data type for this custom element is defined in the schema modules allocated for holding customer extensions in the custom EBO schema files.

In Example 28-1, the Custom element added at the end of the complex type in Schema-A acts as a placeholder that brings in the customer extensions added in Custom Schema-A.

Here, Schema-A is "AIAComponents\EnterpriseObjectLibrary\Industry\Telco\EBO\CustomerParty\V2\CustomerPartyEBO.xsd".

Example 28-1 Custom Element Acting as Placeholder

    <xsd:complexType name="CustomerPartyAccountContactCreditCardType">
      <xsd:sequence>
        <xsd:element ref="corecom:Identification" minOccurs="0"/>
        <xsd:element ref="corecom:CreditCard" minOccurs="0"/>
        <xsd:element name="Custom"
                     type="corecustomerpartycust:CustomCustomerPartyAccountContactCreditCardType"
                     minOccurs="0"/>
      </xsd:sequence>
      <xsd:attribute name="actionCode" type="corecom:ActionCodeType"
                     use="optional"/>
    </xsd:complexType>

Example 28-2 shows Custom Schema-A: "AIAComponents\EnterpriseObjectLibrary\Industry\Telco\Custom\EBO\CustomerParty\V2\CustomCustomerPartyEBO.xsd"

Example 28-2 Adding an Extension to EBO or EBM Schemas

    <!-- ============================================================ -->
    <!-- ============== CustomerParty Custom Components ============== -->
    <!-- ============================================================ -->
    <xsd:complexType name="CustomCustomerPartyAccountAttachmentType"/>
    <xsd:complexType name="CustomCustomerPartyAccountContactType"/>
    <xsd:complexType name="CustomCustomerPartyAccountContactCreditCardType">
      <!-- CUSTOMERS CAN ADD EXTENSIONS HERE ... -->
    </xsd:complexType>
    <xsd:complexType name="CustomCustomerPartyAccountContactUsageType"/>

28.3.2 Extending AIA Services

The delivered services may not be sufficient to address some of your specific business functionality. You may want to add some validation logic, enrich the existing content in the service, or add some processing logic in between the existing service implementation. Adding this logic in the existing service code would not ensure upgrade safety because the upgrade process would overwrite the existing service code with new functionality added as part of the upgrade. So, there is a need for a service extension model.

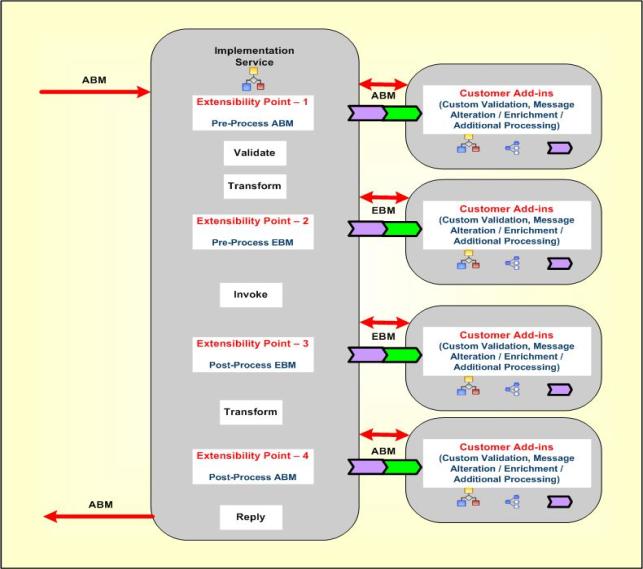

AIA recommends introducing various extension points at logical points during the service implementation. Figure 28-11 illustrates that there are four logical extension points identified in the ABCS to perform customer-specific validations, content enrichment or message alteration, and any additional logic that you would like to implement with the given payload at that extension point. Typically there are two pre-processing and two post-processing extension points identified: pre-processing ABM, post-processing ABM, pre-processing EBM and post-processing EBM.

The number of extension points may be more or less than four depending on the type of service implementation and the type of message exchange pattern used by that service.

These extension points can be enabled or disabled based on whether you want to implement those custom extension points.
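The idea of switchable extension points can be sketched in Python as follows. This is a conceptual illustration only and does not show how an ABCS is built or configured: the `run_abcs` function, the hook names, the dictionary-based configuration, and the order in which the hooks fire are all assumptions made for the example.

```python
def run_abcs(abm, extensions, enabled):
    """Process one request, calling each extension hook only if it is enabled."""

    def extend(point, payload):
        hook = extensions.get(point)
        if hook is not None and enabled.get(point, False):
            return hook(payload)
        return payload  # disabled or not implemented: pass the payload through

    abm = extend("pre_process_abm", abm)
    ebm = {"ebm": dict(abm)}                             # stand-in for ABM-to-EBM transformation
    ebm = extend("pre_process_ebm", ebm)
    ebm_reply = {"ebm": {**ebm["ebm"], "status": "OK"}}  # stand-in for invoking the EBS
    ebm_reply = extend("post_process_ebm", ebm_reply)
    abm_reply = dict(ebm_reply["ebm"])                   # stand-in for EBM-to-ABM transformation
    return extend("post_process_abm", abm_reply)


def require_account_id(abm):
    """Hypothetical customer-specific validation plugged into the first extension point."""
    if "account_id" not in abm:
        raise ValueError("account_id is required")
    return {**abm, "validated": True}


extensions = {"pre_process_abm": require_account_id}
with_extension = run_abcs({"account_id": "A-1"}, extensions, {"pre_process_abm": True})
without_extension = run_abcs({"account_id": "A-1"}, extensions, {"pre_process_abm": False})
print(with_extension, without_extension)
```

The delivered flow in `run_abcs` never changes; customer logic lives entirely in the hooks, and switching a flag off restores the delivered behavior.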

28.3.3 Extending Existing Transformations in AIA

The delivered transformations for the existing schemas may not be sufficient for some of your specific business operations. You may want to add elements to the existing schemas and then add transformation maps for the newly added elements to transfer the information from one application to the other in an upgrade-safe manner. This is not possible by simply adding the customer-specific transformations to the existing XSL files, so a transformation extension model is needed.

To extend the transformations in a nonintrusive manner, the call template statement is defined for every business component mapping present in each of the XSL transformation files which calls the "Custom Template" defined in the custom XSL transformation.

Example 28-3 shows how to add new transformations for the custom elements added by the customer. The main XSL mapping, "XformListOfCmuAccsyncAccountIoToCreateCustomerPartyListEBM.xsl", imports the custom XSL file and has a call-template statement to the extension template for each complex type or business component:

Example 28-3 Adding New Transformations for Customer-defined Custom Elements

    <?xml version = '1.0' encoding = 'UTF-8'?>
    ..………………………………………..
    xmlns:dvm="http://www.oracle.com/XSL/Transform/java/oracle.tip.dvm.LookupValue">
    <xsl:import href="XformListOfCmuAccsyncAccountIoToCreateCustomerPartyListEBM_Custom.xsl"/>
    ..………………………………………..
    <xsl:value-of select="aia:getServiceProperty($ConfigServiceName,
        'Routing.CustomerPartyEBS.CreateCustomerPartyList.CAVS.EndpointURI', false())"/>
    </corecom:DefinitionID>
    </xsl:if>
    <xsl:call-template name="MessageProcessingInstructionType_ext">
      <xsl:with-param name="currentNode" select="."/>
    </xsl:call-template>
    </corecom:MessageProcessingInstruction>

Example 28-4 shows the custom XSL file that has the template definition where you can add your custom mappings for the newly added custom elements or existing elements which are not mapped in the main transformation.

Example 28-4 Custom XSL Template Definition

    <!-- HEADER CUSTOMIZATION -->
    <xsl:template name="MessageProcessingInstructionType_ext">
      <xsl:param name="currentNode"/>
      <!-- Customers add transformations here -->
    </xsl:template>
    <xsl:template name="EBMHeaderType_ext">
      <xsl:param name="currentNode"/>
      <!-- Customers add transformations here -->
    </xsl:template>
    <!-- DATA AREA CUSTOMIZATION -->
    <xsl:template name="ContactType_ext">
      <xsl:param name="currentNode"/>
      <!-- Customers add transformations here -->
    </xsl:template>

28.3.4 Extending the Business Processes in AIA

The delivered composite business processes or Enterprise Business Flows may not be sufficient to address some of your specific business functionality. You may want to add more processing steps, message alteration, or additional processing logic between the existing composite business process implementation. Adding this logic in the existing process orchestration would not ensure upgrade safety so you need a process extension model.

AIA recommends introducing extension points at logical points during the service implementation. There are no fixed logical extension points identified for the Composite Business Processes (CBP) or Enterprise Business Flows (EBF). The number of extension points depends on the complexity of the business process and the number of message types that the process is handling in the implementation.

It is recommended to have one pre-processing and one post-processing extension point for each message type at various logical points in CBP or EBF implementation. The extension process should always be a synchronous process using the same message payload for request and response for that extension point.

These extension points can be enabled or disabled based on whether you want to implement those custom extension points.