Migrate to Autonomous AI Database

Oracle Zero Downtime Migration (ZDM) can be used to facilitate complex database migrations with maximum availability and minimal disruption. It uses the best practices of Oracle’s Maximum Availability Architecture (MAA) during the migration process. This topic outlines the use of Oracle Zero Downtime Migration for migrations to Autonomous AI Database. This capability supports moving databases across different platforms and versions, allowing deployment in multicloud environments.

Target Environment: Autonomous AI Database

Autonomous AI Database is a database service that automatically manages tasks such as provisioning, security, patching, and tuning. This automation reduces operational costs and helps prevent human error. When you deploy Autonomous AI Database on Oracle AI Database@AWS, it uses a native connection with low latency to AWS applications. This setup helps maintain high performance and makes it easier to integrate with other AWS services.

Key Benefits of Using Oracle Zero Downtime Migration for Autonomous AI Database Migrations

Table 1-3

| Feature | Description |
| --- | --- |
| Operational Simplicity | Oracle Zero Downtime Migration provides an end-to-end, automated, and streamlined orchestration process, often described as a single-button experience, to reduce the complexity of fleet-wide migrations. |
| Minimal Risk | The tool aligns with MAA best practices and includes intelligent pre-validation checks to reduce the risk of migration failures. |
| High Flexibility | Oracle Zero Downtime Migration's logical migration paths support moving databases between both identical and different database versions and platforms, enabling modernization during the migration. |
| Integrated Performance | You can benefit from the superior, automated management of Autonomous AI Database combined with the low-latency network performance provided by the Oracle AI Database@AWS infrastructure. |
| Cost-Effectiveness | Oracle Zero Downtime Migration is available at no cost, allowing organizations to leverage its advanced automation without an additional licensing expense. |

Supported Migration Workflows

Oracle Zero Downtime Migration supports two workflows that are designed to help move databases between different source and target environments.

- Logical Online Migration

  - Downtime Profile: Minimal (near zero).

  - Compatibility: Supports migrations between the same or different database versions and platforms.

  - Process:

    - Initial Load: Oracle Data Pump is used to export the source data.

    - Staging: Oracle Data Pump dump files are temporarily stored on a managed NFS file share, specifically Amazon FSx for OpenZFS.

    - Synchronization: Oracle GoldenGate is deployed to maintain continuous synchronization, capturing and replicating transactions from the source to the target Autonomous AI Database to ensure data consistency until the final cutover is performed.

- Logical Offline Migration

  - Downtime Profile: Offline (requires a planned application maintenance window).

  - Compatibility: Supports migrations between the same or different database versions and platforms.

  - Process:

    - Export/Import: Oracle Data Pump performs a complete export of the source database and the subsequent import into the target Autonomous AI Database.

    - Staging: Oracle Data Pump dump files are stored on a managed NFS file share such as Amazon FSx for OpenZFS.

    - Cutover: This method is used when the required application downtime is acceptable, because it does not employ Oracle GoldenGate for continuous synchronization.

Note

Logical migration methods are recommended for migrating to Autonomous AI Database because they support moving data across different platforms and database versions, which is often needed when using a managed cloud service.
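The choice between the two workflows described above comes down to whether a planned maintenance window is acceptable. The following minimal Python sketch captures that decision rule. The `Workflow` class and the `choose_workflow` helper are hypothetical names used only for illustration; they are not part of Oracle Zero Downtime Migration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    downtime: str
    components: tuple


# Components as listed for each workflow in this topic.
LOGICAL_ONLINE = Workflow(
    name="Logical Online Migration",
    downtime="minimal (near zero)",
    components=("Oracle Data Pump", "NFS staging share", "Oracle GoldenGate"),
)
LOGICAL_OFFLINE = Workflow(
    name="Logical Offline Migration",
    downtime="planned maintenance window",
    components=("Oracle Data Pump", "NFS staging share"),
)


def choose_workflow(maintenance_window_acceptable: bool) -> Workflow:
    """Hypothetical helper: pick the offline path only when downtime is acceptable."""
    return LOGICAL_OFFLINE if maintenance_window_acceptable else LOGICAL_ONLINE


if __name__ == "__main__":
    selected = choose_workflow(maintenance_window_acceptable=False)
    print(selected.name, "uses", ", ".join(selected.components))
```

The offline path needs fewer moving parts because it skips continuous synchronization, which is why it fits only when the application can be stopped for the duration of the export and import.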

For more information on Oracle Zero Downtime Migration, see the following resources:

- Announcing Oracle ZDM Migrations to Autonomous AI Database on Oracle Database@AWS

- Oracle Database on AWS

- Oracle Zero Downtime Migration Documentation

- NFS Options for ZDM Migration to Oracle Database on AWS

- Oracle Zero Downtime Migration – Logical Offline Migration to Oracle Autonomous AI Database on Dedicated Infrastructure on Oracle Database@AWS

- Logical Online Migration

Amazon S3 serves as a flexible storage layer for Oracle Database workloads beyond direct migration use cases. It can store and manage a wide variety of database-related files, including data files, dump files, CSV, JSON, and Parquet files, logs, reports, and backup artifacts. Amazon S3 can also be used for features such as external tables, enabling Oracle Database to read and process data directly from object storage without persisting it in the database. Because Autonomous AI Database does not provide direct access to the underlying VM cluster, the Amazon S3 File Gateway approach is not applicable and is therefore not covered in this guide. Instead, the following solution shows how to integrate Amazon S3 by using the DBMS_CLOUD package, allowing you to securely access, query, load, and unload data stored in Amazon S3. The key scenarios where this solution applies are outlined in the following section.

Use Cases

- Table-level export and import: Using Oracle Data Pump with DBMS_CLOUD or custom wrappers to move individual tables or schemas.

- File-based ingestion: Loading CSV, JSON, and Parquet files into Autonomous AI Database using DBMS_CLOUD.COPY_DATA.

- External tables: Querying data directly from Amazon S3 without physically importing it.

- Incremental loads: Staging delta files in Amazon S3 and applying them to Autonomous AI Database tables.

- Analytics pipelines: Integrating Amazon S3 data lakes with Autonomous AI Database for hybrid workloads.

Solution Overview

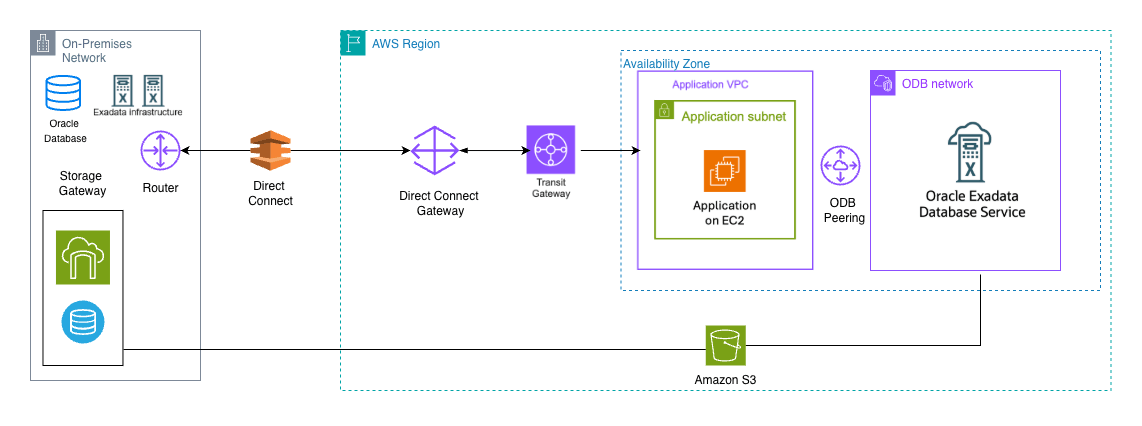

This solution shows how you can use Amazon S3 as a staging area for logical backups and migration files, making it easier to move Oracle Database into Autonomous AI Database in Oracle AI Database@AWS.

- Source: Oracle Database running on‑premises or in the cloud.

- Target: Autonomous AI Database deployed in Oracle AI Database@AWS.

- Workflow: Files are staged in an Amazon S3 bucket from the source systems, and Autonomous AI Database accesses the bucket through the DBMS_CLOUD package by using a stored credential.

- Purpose: The Amazon S3 bucket acts as a staging area for export dump files or other migration artifacts, which are then imported into the target Autonomous AI Database environment.

This architecture supports hybrid workloads, enabling enterprises to migrate, back up, and analyze data with minimal friction.

Prerequisites

The following prerequisites are required to migrate to Autonomous AI Database using Amazon S3.

- Source database: Running on a compatible Oracle Database deployment, such as Exadata Database, Oracle Real Application Clusters, or a standalone instance.

- Target Autonomous AI Database: Deployed on Oracle AI Database@AWS.

- Amazon S3 Bucket: The source and target should have access to Amazon S3.

- Network connectivity: Appropriate network routes established between on‑premises systems and the AWS region hosting Autonomous AI Database.

- Prepare the Environment to Load Data from Amazon S3

  - An Amazon S3 bucket contains a data file that you want to import, for example, data.txt. The sample file in this example has the following contents:

        Direct Sales
        Tele Sales
        Catalog
        Internet
        Partners

  - Sign in to the AWS Management Console.

  - Grant access privileges to the AWS IAM user for the Amazon S3 bucket.

  - Create an access key for the user and obtain an object URL for the data file stored in the Amazon S3 bucket.


- Create Cloud Credentials in Autonomous AI Database

  - To access data from the Amazon S3 bucket, which is not publicly accessible, you must create a credential to authenticate with Amazon S3. Log in to <your PDB name> with the database user <your db user name> and run the following script to create credentials based on the access key ID and secret access key for the IAM user <your iam user>:

        BEGIN
          DBMS_CLOUD.CREATE_CREDENTIAL(
            credential_name => 'AWS_S3_CRED',
            username        => 'AWS_ACCESS_KEY_ID',      -- replace with the IAM user's access key ID
            password        => 'AWS_SECRET_ACCESS_KEY'   -- replace with the IAM user's secret access key
          );
        END;
        /

    For detailed information about the parameters, see CREATE_CREDENTIAL Procedure.


- Validate Files in Amazon S3 Using DBMS_CLOUD

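  - List the objects that are visible to Autonomous AI Database by using the stored credential.

        -- LIST_OBJECTS takes the bucket location in location_uri;
        -- the name prefix is applied as a filter in the WHERE clause.
        SELECT *
          FROM DBMS_CLOUD.LIST_OBJECTS(
                 credential_name => 'AWS_S3_CRED',
                 location_uri    => 'https://s3.amazonaws.com/mybucket/'
               )
         WHERE object_name LIKE 'sample%';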


- Load Data from Amazon S3 into Autonomous AI Database

  Note

  The target table must exist with the correct format and schema before loading data.

  - Stage files in Amazon S3: Upload CSV, JSON, or Parquet files to the Amazon S3 bucket and obtain the Amazon S3 file URI path.

    Note

    An Amazon S3 file URI path uses the format s3://bucket-name/key-name.

  - Load data using DBMS_CLOUD.COPY_DATA:

        BEGIN
          DBMS_CLOUD.COPY_DATA(
            table_name      => 'SAMPLE',
            credential_name => 'AWS_S3_CRED',
            file_uri_list   => 'https://s3.amazonaws.com/mybucket/sample.csv',
            format          => json_object('delimiter' value ',')
          );
        END;
        /

    For detailed information about the parameters, see COPY_DATA Procedure.


- Validate Data Load

  - Run the following query to confirm ingestion and ensure data integrity.

        SELECT * FROM <Table_Name>;


- Automate and Optimize

  - Enable compression: Store files in compressed formats such as GZIP to reduce transfer costs.

  - Monitor performance: Use Autonomous AI Database monitoring tools and Amazon CloudWatch for end-to-end visibility.

Automation supports repeatability, and monitoring helps optimize throughput, latency, and costs.
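As one way to make the staging step repeatable, the following Python sketch compresses a file with gzip before upload and translates an Amazon S3 URI of the form s3://bucket-name/key-name into the path-style HTTPS form that the COPY_DATA example uses. The helper names `gzip_file` and `s3_uri_to_https` are hypothetical, and the global `s3.amazonaws.com` endpoint is assumed only because the example in this topic uses it; a regional bucket may need a different URL form. Loading a compressed file would likely also require a matching compression setting in the COPY_DATA format options, so check the COPY_DATA Procedure documentation before relying on it.

```python
import gzip
import shutil
from pathlib import Path
from urllib.parse import quote


def gzip_file(source: Path) -> Path:
    """Write a gzip-compressed copy next to `source` and return its path."""
    target = source.with_name(source.name + ".gz")
    with source.open("rb") as src, gzip.open(target, "wb") as dst:
        shutil.copyfileobj(src, dst)
    return target


def s3_uri_to_https(s3_uri: str) -> str:
    """Translate s3://bucket-name/key-name into a path-style HTTPS URL.

    Assumption: the global endpoint shown in this topic's examples is used.
    """
    prefix = "s3://"
    if not s3_uri.startswith(prefix):
        raise ValueError(f"not an Amazon S3 URI: {s3_uri!r}")
    bucket, _, key = s3_uri[len(prefix):].partition("/")
    if not bucket or not key:
        raise ValueError(f"bucket or key missing in: {s3_uri!r}")
    # Percent-encode the key but keep "/" so that prefixes stay readable.
    return f"https://s3.amazonaws.com/{bucket}/{quote(key)}"


if __name__ == "__main__":
    print(s3_uri_to_https("s3://mybucket/sample.csv"))
```

The returned URL is the value that would be passed as file_uri_list, so a scheduled job can derive it from the bucket and key names instead of hard-coding it.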

Advantages of this integration

- Seamless data ingestion: Simplifies moving files from AWS workloads into Oracle AI Database@AWS.

- Cost efficiency: Loading directly from Amazon S3 reduces the need for complex ETL pipelines.

- Security: IAM‑based credentials and Oracle’s built‑in encryption help ensure compliance.

- Scalability: Amazon S3 provides virtually unlimited storage, and Autonomous AI Database supports elastic compute scaling.

Conclusion:

By combining Amazon S3 with Autonomous AI Database in Oracle AI Database@AWS, you can build a robust, secure, and scalable data pipeline. This approach streamlines ingestion, supports hybrid workloads, and leverages the strengths of both Oracle and AWS ecosystems.


As organizations adopt hybrid and cloud-first strategies, integrating Oracle Autonomous AI Database with cloud-native storage solutions supports flexibility, scalability, and operational efficiency. Amazon Elastic File System provides a fully managed, scalable, and elastic network file system that can be integrated with Oracle AI Database@AWS. Amazon Elastic File System enables the use of shared storage for backups, schema exports, external tables, and large-scale analytics workloads without affecting database performance or security.

This guide provides step-by-step instructions for connecting Amazon Elastic File System to Autonomous AI Database, performing read and write operations, and using EFS for database export and external table use cases.

Solution Overview

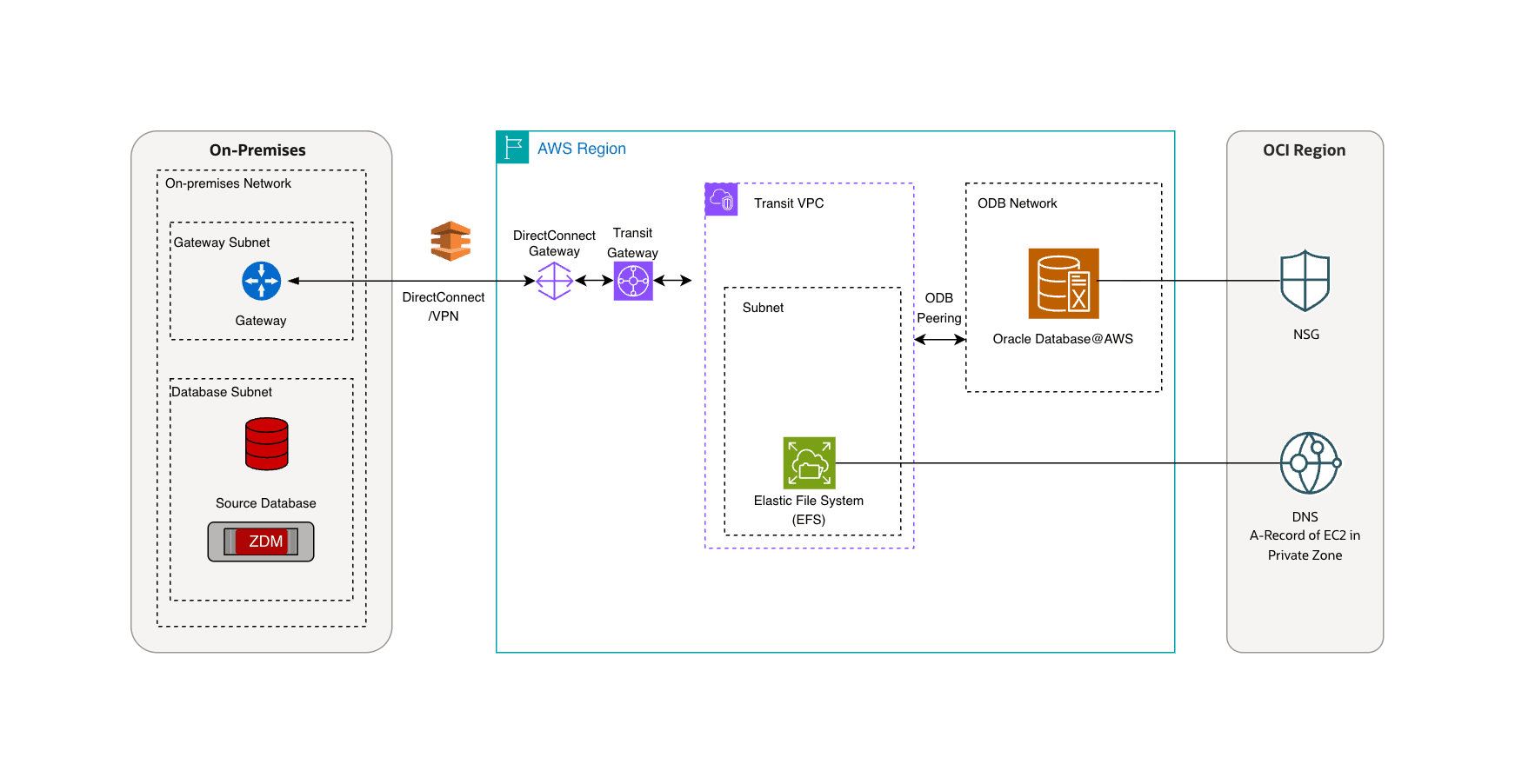

This solution shows how you can use Amazon Elastic File System as a landing zone for logical backups, making it easier to migrate Oracle Database to Autonomous AI Database on Oracle AI Database@AWS.

- Source: Oracle Database running on‑premises or in the cloud.

- Target: Autonomous AI Database deployed in Oracle AI Database@AWS.

- Workflow: The EFS share is provisioned and mounted both on the source systems and on Oracle Exadata Infrastructure hosting Autonomous AI Database.

- Purpose: The mounted EFS acts as a staging area for export dump files or other migration artifacts, which are then seamlessly imported into the target Autonomous AI Database environment.

Prerequisites

The following prerequisites are required to migrate to Autonomous AI Database using Amazon Elastic File System.

- Source database: Running on a compatible Oracle Database deployment, such as Exadata Database, Oracle Real Application Clusters, or a standalone instance.

- Target Autonomous AI Database: Deployed on Oracle AI Database@AWS.

- Amazon Elastic File System: EFS mount target created in the same Availability Zone as your target Autonomous AI Database.

- Network connectivity: Appropriate network routes established between on‑premises systems and the AWS region.

- DNS resolver: It must be able to resolve the EFS DNS name to the IP address of the EFS mount target.

- Port requirements: TCP port 2049 must be open for inbound traffic to the Autonomous VM Cluster VPC.
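Before attaching the file system, it can help to confirm the port requirement from a host that shares the same network path, such as a source system that also mounts the share. The following Python sketch is one way to do that with a plain TCP connection attempt. The function name `nfs_port_reachable` is hypothetical, and a successful connection shows only that the port is open from the host where the script runs, not from the Autonomous VM Cluster itself.

```python
import socket


def nfs_port_reachable(host: str, port: int = 2049, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # Placeholder host name; replace it with your own EFS DNS name.
    efs_host = "fs-0586ab576856f1d00.efs.us-east-1.amazonaws.com"
    print("NFS port open:", nfs_port_reachable(efs_host))
```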

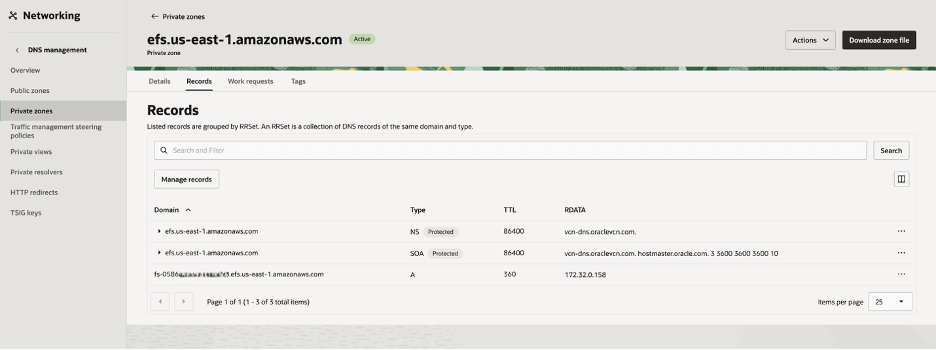

DNS Setup in OCI

To resolve the NFS server name, create an A record in OCI DNS:

- From the Autonomous AI Database details page in Oracle AI Database@AWS, select the Manage in OCI button.

- From the OCI portal, navigate to the Networking section, and then select Virtual cloud networks.

- Select your VCN from the list, and then from the Details tab, select the DNS Resolver link.

- From the Private resolvers Details tab, select the Default private view link.

- Select the Private zones tab, and then select the Create zone button to create a zone, for example, efs.us-east-1.amazonaws.com.

- After creating a zone successfully, select the Records tab to add a record, for example, fs-0586XXXXXXXXXXXXX.efs.us-east-1.amazonaws.com pointing to the actual IP address of the AWS‑managed NFS server.

- Select the Review changes button, and then select the Publish changes button to apply your changes.

- Update the Network Security Group (NSG) in OCI to allow traffic from the VPC where the NFS server resides.

- After these steps are complete, the FQDN resolves from the database in OCI to the Amazon EFS endpoint.
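The zone and record names in the steps above follow the Amazon EFS DNS naming pattern, file-system-id.efs.aws-region.amazonaws.com. The following Python sketch derives both names from a file system ID and a region, and checks what a local resolver returns for a name. The helper names are hypothetical, and the resolution check reflects only the resolver of the host that runs the script, not the OCI private view.

```python
import socket


def efs_zone_name(region: str) -> str:
    """Private zone name to create in OCI DNS, for example efs.us-east-1.amazonaws.com."""
    return f"efs.{region}.amazonaws.com"


def efs_record_name(file_system_id: str, region: str) -> str:
    """Fully qualified name of the A record for the file system."""
    return f"{file_system_id}.{efs_zone_name(region)}"


def resolve_ipv4(hostname: str) -> list:
    """Return the sorted IPv4 addresses the local resolver gives for `hostname`."""
    try:
        infos = socket.getaddrinfo(
            hostname, None, family=socket.AF_INET, type=socket.SOCK_STREAM
        )
    except socket.gaierror:
        return []
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    name = efs_record_name("fs-0586ab576856f1d00", "us-east-1")
    print(name, "->", resolve_ipv4(name) or "not resolvable from this host")
```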

These are the steps to migrate to Autonomous AI Database using Amazon Elastic File System.

- Configure Network Access to EFS

  - Add the EFS host to the Access Control List (ACL) to allow the database to connect and resolve the EFS endpoint by using the following PL/SQL script:

        BEGIN
          DBMS_NETWORK_ACL_ADMIN.APPEND_HOST_ACE(
            host => 'fs-0586ab576856f1d00.efs.us-east-1.amazonaws.com',
            ace  => xs$ace_type(
                      privilege_list => xs_name_list('connect','resolve'),
                      principal_name => 'ADMIN',
                      principal_type => xs_acl.ptype_db
                    )
          );
        END;
        /
        COMMIT;

  - Verify connectivity:

        SELECT utl_inaddr.get_host_address(
                 'fs-0586ab576856f1d00.efs.us-east-1.amazonaws.com'
               ) AS ip_address
          FROM dual;

  - The output returns the IP address of your EFS mount target.


- Create a Database Directory for Amazon Elastic File System

  - Define a directory object in the database that points to the EFS file system path:

        CREATE DIRECTORY EFS_DIR AS 'efs';


- Attach the Amazon Elastic File System

  - Attach the EFS mount to the database using NFSv4:

        BEGIN
          DBMS_CLOUD_ADMIN.ATTACH_FILE_SYSTEM(
            file_system_name     => 'AWS_EFS',
            file_system_location => 'fs-0586ab576856f1d00.efs.us-east-1.amazonaws.com:/',
            directory_name       => 'EFS_DIR',
            description          => 'AWS EFS attached via NFSv4',
            params               => JSON_OBJECT('nfs_version' VALUE 4)
          );
        END;
        /


- Verify the Directory

  - Confirm that the directory is correctly mapped to the Amazon Elastic File System mount path:

        SELECT directory_name, directory_path
          FROM dba_directories
         WHERE directory_name = 'EFS_DIR';


- Test Read/Write Access Using UTL_FILE

  - Write a File:

        DECLARE
          f UTL_FILE.FILE_TYPE;
        BEGIN
          f := UTL_FILE.FOPEN('EFS_DIR', 'test_file.txt', 'w');
          UTL_FILE.PUT_LINE(f, 'Hello from ADB-D to EFS!');
          UTL_FILE.FCLOSE(f);
        END;
        /

  - Read the File Back:

        -- Run SET SERVEROUTPUT ON first so that the DBMS_OUTPUT line is displayed.
        DECLARE
          f   UTL_FILE.FILE_TYPE;
          txt VARCHAR2(32767);
        BEGIN
          f := UTL_FILE.FOPEN('EFS_DIR', 'test_file.txt', 'r');
          UTL_FILE.GET_LINE(f, txt);
          DBMS_OUTPUT.PUT_LINE('Read from EFS: ' || txt);
          UTL_FILE.FCLOSE(f);
        END;
        /


- Export Database Schema to Amazon Elastic File System

  - Use Oracle Data Pump (expdp) to export the schema directly to the Amazon Elastic File System directory.

        expdp admin/DBatAWS_1234@demofspdb_tp schemas=TEST directory=EFS_DIR dumpfile=test.dmp logfile=test.log

  - Verify exported files:

        SELECT OBJECT_NAME FROM DBMS_CLOUD.LIST_FILES('EFS_DIR');


- Import Database Schema from EFS into Autonomous AI Database

  - Once the schema dump files are available on Amazon Elastic File System, you can import directly into the target database:

        impdp admin/DBatAWS_1234@demofspdb_tp schemas=TEST directory=EFS_DIR dumpfile=test.dmp logfile=import_test.log

  - Verify imported objects:

        -- USER_TABLES has no OWNER column, so query ALL_TABLES to filter by schema.
        SELECT table_name FROM all_tables WHERE owner = 'TEST';

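The export and import commands above pass every option on the command line. As a hedged alternative, the same options can be kept in a Data Pump parameter file and referenced with the parfile parameter, which makes repeated runs easier to review. The following Python sketch renders such a file; the `render_parfile` helper and the file name export_test.par are hypothetical, and the values mirror the example commands in this topic.

```python
from typing import Iterable


def render_parfile(
    schemas: Iterable[str], directory: str, dumpfile: str, logfile: str
) -> str:
    """Render Data Pump options as parameter file text, one option per line."""
    lines = [
        "schemas=" + ",".join(schemas),
        f"directory={directory}",
        f"dumpfile={dumpfile}",
        f"logfile={logfile}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    text = render_parfile(["TEST"], "EFS_DIR", "test.dmp", "test.log")
    with open("export_test.par", "w", encoding="utf-8") as handle:
        handle.write(text)
    print(text, end="")
```

With the file in place, the export could be started as `expdp admin@demofspdb_tp parfile=export_test.par`. Omitting the password from the connect string causes Data Pump to prompt for it, which keeps it out of the shell history.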

Best Practices

- Use NFSv4: Ensures better performance and compatibility with Autonomous AI Database.

- Enable Multi-AZ Mount Targets: Increases availability and fault tolerance.

- Secure Access with IAM and VPC Security Groups: Protects data in transit and at rest.

- Monitor Usage: Regularly check Amazon Elastic File System performance metrics to prevent bottlenecks for large workloads.

Conclusion

Integrating Amazon Elastic File System with Autonomous AI Database on Oracle AI Database@AWS provides a flexible, scalable, and secure storage solution. By following the steps outlined above, you can use EFS for database exports, external tables, and general file storage. This enables seamless hybrid cloud workflows while maintaining Oracle’s high availability and performance standards. This setup helps you efficiently manage large datasets, streamline migrations, and improve business continuity in the cloud.