Exadata VM Cluster

To modify an existing Exadata VM Cluster, follow these steps.

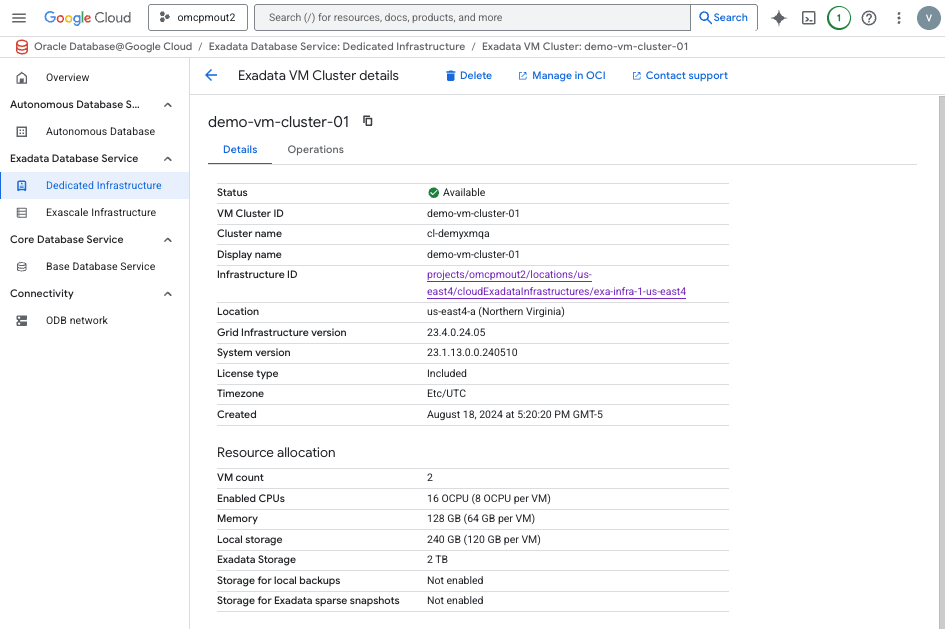

- From the Oracle AI Database@Google Cloud dashboard, select Exadata Database Service > Dedicated infrastructure from the left menu.

- Select the Exadata VM Cluster tab from the Exadata Database Service list.

- Select the Exadata VM Cluster from the list.

- Select the Manage in OCI link.

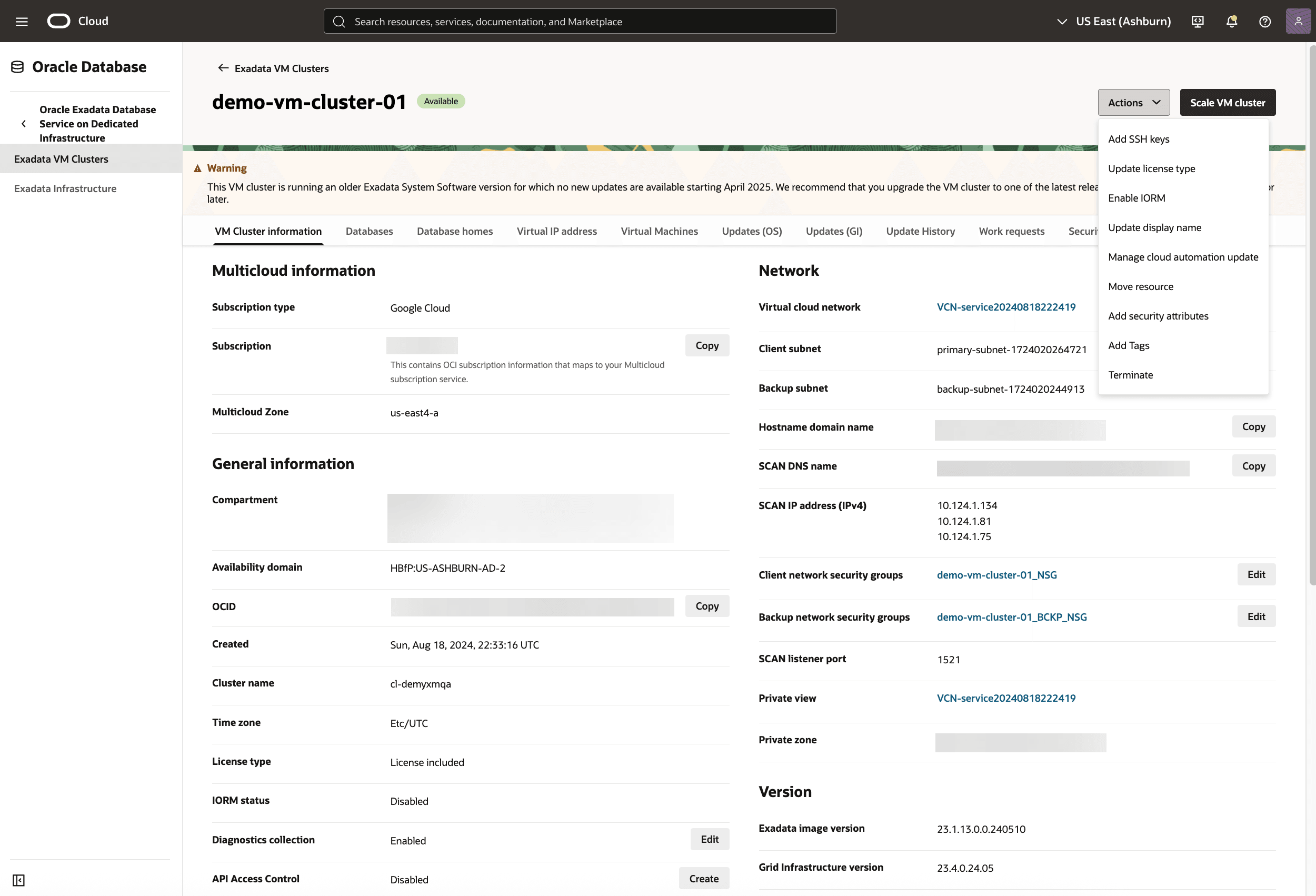

- To change scaling options, in the OCI console for the selected Exadata VM Cluster, select the Scale VM cluster button.

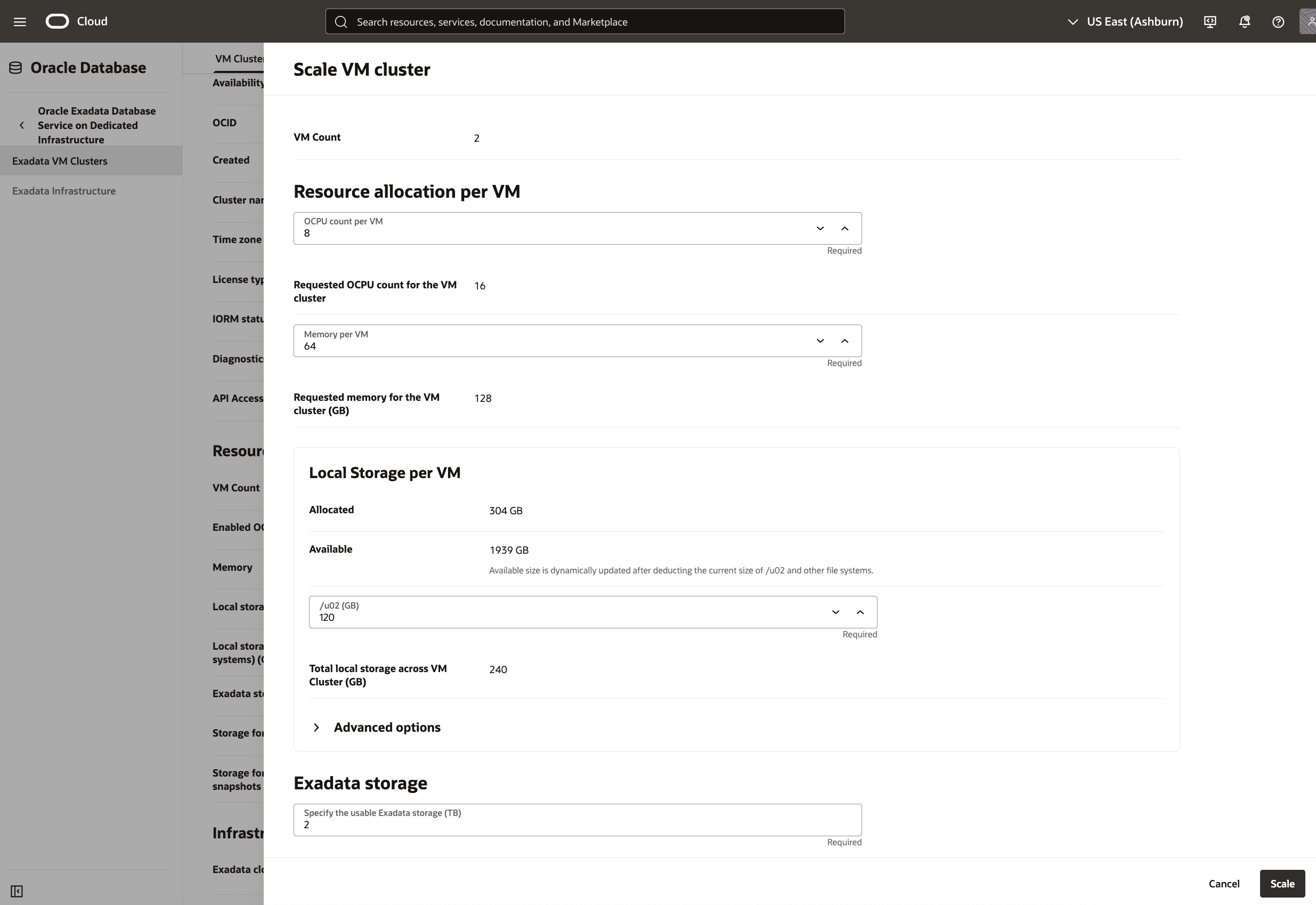

- From the Scale VM cluster page, you can scale your resource usage. The VM count shows you the current Exadata VM Cluster count.

- In the Resource allocation per VM section, you can modify the following values.

- In the OCPU count per VM field, you can enter a value. This value is constrained by your Exadata Infrastructure.

- The Requested OCPU count for the VM cluster is a read-only field.

- In the Memory per VM field, you can enter a value. This value is constrained by your Exadata Infrastructure.

- The Requested memory for the VM cluster (GB) is a read-only field.

- In the Local Storage per VM section, you can modify the following values.

- The Allocated and Available fields are read-only. These values are constrained by your Exadata Infrastructure.

- In the /u02 (GB) field, you can enter a value. The prompt shows you the range of valid values. This value is constrained by your Exadata Infrastructure.

- The Total local storage across VM Cluster (GB) is a read-only calculated value.

- In the Advanced options section, which is optional and collapsed by default, you can enter values for / (GB) (root), /var (GB), /home (GB), /tmp (GB), /u01 (GB), /var/log (GB), and /var/log/audit (GB). These values are constrained by your Exadata Infrastructure and your previous selections.

- In the Exadata storage section, you can modify the following values.

- In the Specify the usable Exadata storage field, you can enter a value. The prompt shows you the range of valid values. This value is constrained by your Exadata Infrastructure.

- The Usable storage allocation field is a read-only calculated field.

- Select the Scale button when finished.

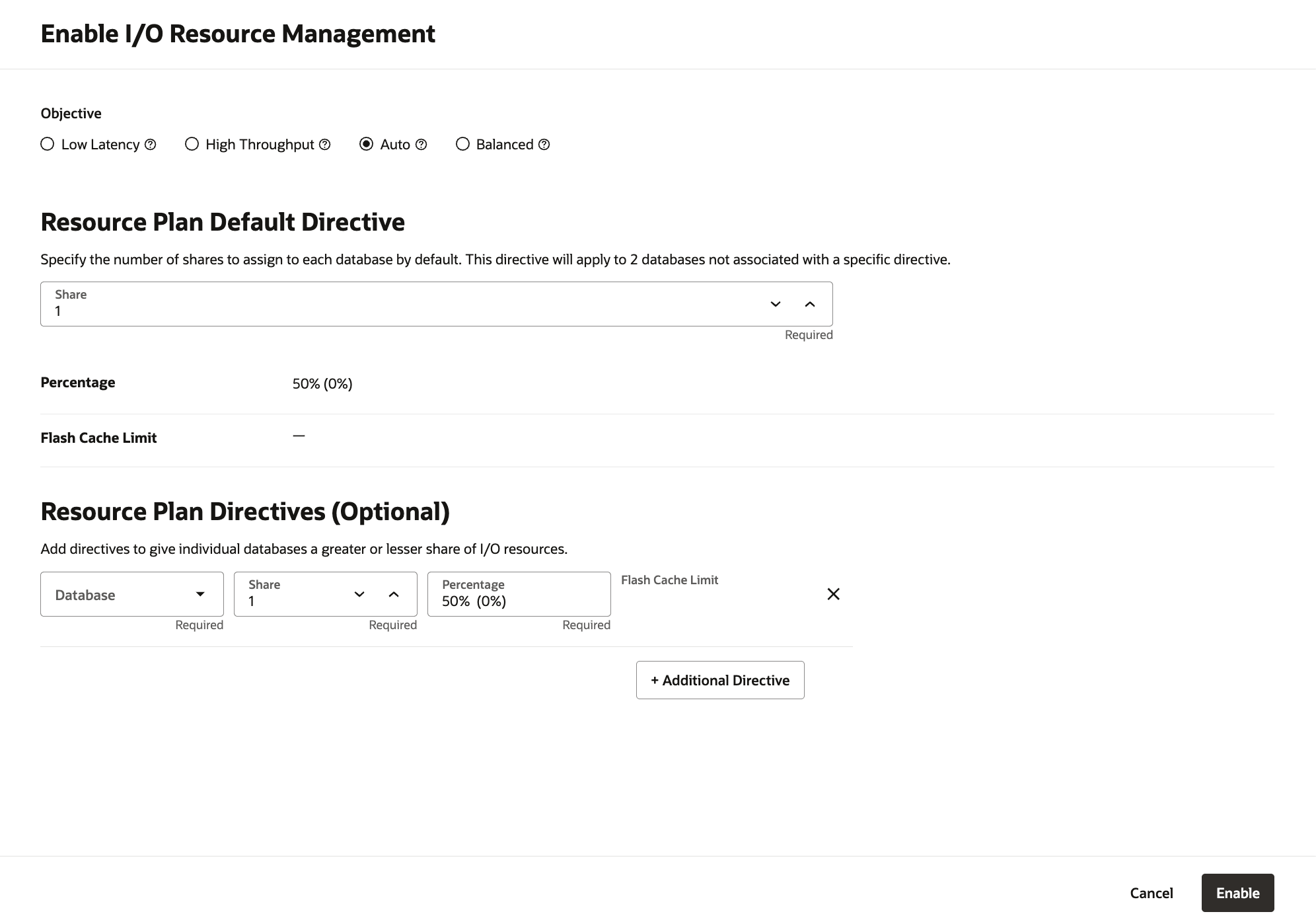

- To change the I/O resource management, in the OCI console for the selected Exadata VM Cluster, select the Actions button and the Enable IORM item from the menu. From the Enable I/O Resource Management page, you can change the following options.

Note

The Enable I/O Resource Management screen allows administrators to control how Exadata VM Cluster storage I/O resources are allocated across multiple databases. This ensures fair resource usage, prevents one database from monopolizing I/O, and aligns system performance with workload priorities. IORM does not set hard limits, but uses relative shares to prioritize databases. If a database is idle, others can use its share.

- In the Objective radio group, select Low Latency, High Throughput, Auto, or Balanced as desired. For more on each of these, select the information icon beside the value.

- In the Resource Plan Default Directive section, set the Share value. This value specifies the number of shares to assign to each database by default.

- The Percentage and Flash Cache Limit are read-only calculated fields.

- The Resource Plan Directives (Optional) section allows you to add directives for individual databases to allocate a greater or lesser share of I/O resources. By default, there are no directives. Select + Additional Directive to add one or more.

- If a directive is added, the Database field allows you to select an available database. The Share field allows you to increase or decrease that database's resources. The Percentage field is calculated based on your input. The Flash Cache Limit allows you to cap this value, if available for your database.

- Select the Enable button to continue.
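To make the relative-share idea concrete, the sketch below works out percentages from a set of shares using only built-in Terraform functions. It assumes that a database's percentage is its share divided by the sum of all shares; this page does not state the formula, so treat that as an assumption. The database names and share values are hypothetical.

```hcl
locals {
  # Hypothetical shares: two databases with explicit directives,
  # plus one database left on a default share of 1.
  shares = {
    salesdb  = 4
    reportdb = 2
    devdb    = 1
  }

  # Sum of every share in the plan.
  total_shares = sum(values(local.shares))

  # Assumed formula: percentage = share / total shares * 100, rounded down.
  percentages = {
    for name, share in local.shares :
    name => floor(share / local.total_shares * 100)
  }
}

output "iorm_percentages" {
  value = local.percentages
}
```

This file needs no provider. Running `terraform init` and then `terraform plan` in a directory that contains only this file shows the computed map, which makes it easy to see how raising one database's share lowers the relative portion of the others.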

- To add a tag to your Exadata VM Cluster, in the OCI console for the selected Exadata VM Cluster, select the Actions button and the Add Tags item from the menu. From the Add Tags page, you can set the following.

- The Namespace drop-down has a single value, None (free-form tag).

- The Key and Value fields can be used as needed.

- For more information on what you can do with tags, see Overview of Tagging.

- If you want to enter another tag, select the Add tag button.

- When you have added the tag(s) you want, select the Add button.
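If the cluster is managed with the OCI Terraform provider, as described later on this page, a free-form tag can also be recorded in the configuration. The fragment below is a sketch, not a complete resource: it assumes the None (free-form tag) namespace corresponds to the `freeform_tags` argument that appears in the generated sample configuration, and the key and value are hypothetical.

```hcl
resource "oci_database_cloud_vm_cluster" "oci_exa_vm_cluster" {
  # ... all other generated arguments stay exactly as imported ...

  # Hypothetical free-form tag; replace the key and value with your own.
  freeform_tags = {
    "environment" = "demo"
  }
}
```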



You can modify an Exadata VM Cluster in Google Cloud using the OCI Terraform provider.

Prerequisites

- Terraform or OpenTofu installed

- OCI credentials configured

- HashiCorp OCI provider version >= 7.0

- Exadata VM Cluster is created using Terraform
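The provider version prerequisite can be written as a version constraint instead of an exact pin. The block below is a minimal sketch that combines the `>= 7.0` requirement listed above with the `oracle/oci` source used in the sample configuration on this page; whether to pin an exact version instead is a project decision.

```hcl
terraform {
  required_providers {
    oci = {
      source  = "oracle/oci"
      # Accept any provider release at or above the stated minimum.
      version = ">= 7.0"
    }
  }
}
```

A range constraint lets `terraform init` select a newer release, while an exact pin such as the `7.29.0` in the sample gives reproducible runs.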

To modify an Exadata VM Cluster, perform the following steps:

- Create a Modify configuration file and add the import block with the Exadata VM Cluster id. The id value can be found in the output block of the Create Exadata VM Cluster configuration.

  Sample Terraform Configuration using OCI Terraform Provider

  ```hcl
  # Set OCI Provider source and version
  terraform {
    required_providers {
      # OCI Provider
      oci = {
        source  = "oracle/oci"
        version = "7.29.0"
      }
    }
  }

  # Configure the OCI Provider
  provider "oci" {
    tenancy_ocid     = "ocid1.tenancy.oc1..aaaaaaaa2pg53mzroh6r2ub72r5mxxxxxxxxxxxxxpylciiqdihofe3dq"
    user_ocid        = "ocid1.user.oc1..aaaaaaaaa6wyl3kzbgogsqpsixxxxxxxxxxxxxxxxuwzkemcrgue5q"
    private_key_path = "C:\\OCI-Terraform\\private-key.pem" # This example is for Windows OS
    fingerprint      = "6c:c7:a7:60:f2:99:62:b9:8e:01:fc:e8:9b:eb:61:61"
    region           = "us-desmoines-1"
  }

  # Import Exadata VM Cluster Configuration
  import {
    to = oci_database_cloud_vm_cluster.oci_exa_vm_cluster
    id = "ocid1.cloudvmcluster.oc1.us-desmoines-1.anrxkljrou6knayaa7xxxxxxxxxxxxunyiz4f6sdpfe5ov2tu3wvbyrq" # Replace the OCID value
  }
  ```

- Run the following commands in the Terminal window:

  ```shell
  terraform init
  terraform validate
  terraform plan -generate-config-out="C:\GoogleCloud-Terraform\Working-Directory\generated_exa_vm_cluster.tf" # An example on Windows OS
  ```

- Open the generated_exa_vm_cluster.tf file in a text editor and copy the content.

- Append the generated_exa_vm_cluster.tf content to the Modify configuration file. Remove the import block.

- Run the following command in the Terminal window:

  ```shell
  terraform import oci_database_cloud_vm_cluster.oci_exa_vm_cluster "ocid1.cloudvmcluster.oc1.us-desmoines-1.anrxkljrou6knayaa7xxxxxxxxxxxxunyiz4f6sdpfe5ov2tu3wvbyrq" # Replace the OCID value
  ```

- Run the following command in the Terminal window to confirm the imported state file and the generated configuration file resources are in sync:

  ```shell
  terraform plan
  ```

- Update the properties/values in the Modify configuration file as required.

  Note

  Modifying the configuration of the Exadata VM Cluster using the OCI Terraform provider puts the Create configuration file out of sync. To avoid a forced replacement or destroy caused by this drift, add the following lifecycle block to the Create configuration file.

  ```hcl
  lifecycle {
    ignore_changes = [
      properties[0].db_server_ocids,
      properties[0].cpu_core_count,
      properties[0].memory_size_gb,
      properties[0].db_node_storage_size_gb,
      properties[0].data_storage_size_tb,
      properties[0].local_backup_enabled,
      properties[0].sparse_diskgroup_enabled
    ]
  }
  ```

  Sample Terraform Configuration using OCI Terraform Provider

  ```hcl
  # __generated__ by Terraform
  # Please review these resources and move them into your main configuration files.

  # __generated__ by Terraform from "ocid1.cloudvmcluster.oc1.us-desmoines-1.anrxkljrou6knayaa7xxxxxxxxxxxxunyiz4f6sdpfe5ov2tu3wvbyrq"
  resource "oci_database_cloud_vm_cluster" "oci_exa_vm_cluster" {
    backup_network_nsg_ids          = ["ocid1.networksecuritygroup.oc1.us-desmoines-1.aaaaaaaa5spgwe4zrtgf3dghs3xxxxxxxxxxxpxqp2ar32faknvrpojpptaxa"]
    backup_subnet_id                = "ocid1.subnet.oc1.us-desmoines-1.aaaaaaaa4vmrgp2dcluqgxxxxxxxfbiurd7ttktoa5gwxx2ka"
    cloud_exadata_infrastructure_id = "ocid1.cloudexadatainfrastructure.oc1.us-desmoines-1.anrxkljrou6knayackcbpbksxfkxxxxxxxxxxxmfgw6cdmw55ystti7a"
    cluster_name                    = "cl-u3wvbyrq"
    compartment_id                  = "ocid1.compartment.oc1..aaaaaaaaqn5kip2ivclikxxxxxxxxxxxxrhoovfswhzc5rykq3wfkrdoa"
    cpu_core_count                  = 48
    create_async                    = null
    data_storage_percentage         = 80
    data_storage_size_in_tbs        = 4
    db_node_storage_size_in_gbs     = 120
    db_servers                      = ["ocid1.dbserver.oc1.us-desmoines-1.anrxkljr6v2haxaahos55yinuxxxxxxxxqfumsycui7quarv4cjasaq", "ocid1.dbserver.oc1.us-desmoines-1.anrxkljr6v2haxaars7dueg46sm4xxxxxxxxxxxdozenln67rxv4r3wceh6b3q"]
    defined_tags = {
      "Oracle-Tags.CreatedBy" = "ocid1.multicloudlink.oc1.iad.amaaaaaaou6knayafyn3zgqe4cwafxxxxxxxxxxx6ygzgwiln3zdhyypbtq"
      "Oracle-Tags.CreatedOn" = "2026-01-02T05:25:45.792Z"
    }
    display_name                = "demo-exadata-vm-cluster-tf"
    domain                      = "mauingybxr.v6a1fdff9.oraclevcn.com"
    freeform_tags               = {}
    gi_version                  = "23.26.0.0.0"
    hostname                    = "vm-znkeq"
    is_local_backup_enabled     = false
    is_sparse_diskgroup_enabled = false
    license_model               = "BRING_YOUR_OWN_LICENSE"
    memory_size_in_gbs          = 60
    nsg_ids                     = ["ocid1.networksecuritygroup.oc1.us-desmoines-1.aaaaaaaadmguhpdoarhi7gqtltbmxxxxxxxxx4s3jebilpquzvufst2gq"]
    ocpu_count                  = 6
    scan_listener_port_tcp      = 1521
    scan_listener_port_tcp_ssl  = 2484
    security_attributes         = {}
    ssh_public_keys             = ["ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC2Xwmyn1cVUhDhqyb/B0gYQwlR88gnEJOAg+bhUvGjMB0gVCxxxxxxxxxxxYGPdrrCH/dzWcfysXzSs8XZpVmv4yYhpMC4C/4hQkBjwqgsBii5tJP7EFQjb2TfQPYbTkrn4SBNZhR1lYHr543yhB1Va7tjJNZz7 first.last@email.com"]
    subnet_id                   = "ocid1.subnet.oc1.us-desmoines-1.aaaaaaaahawbny2yivfdvfxxxxxxxxxxxxxkwo6qq27i5cazf4vevnjupa"
    system_version              = "25.1.10.0.0.251020"
    tde_key_store_type          = "OCI"
    time_zone                   = "UTC"
    vm_cluster_type             = "REGULAR"
    cloud_automation_update_details {
      is_early_adoption_enabled = false
      is_freeze_period_enabled  = false
      apply_update_time_preference {
        apply_update_preferred_end_time   = "06:00"
        apply_update_preferred_start_time = "00:00"
      }
    }
    data_collection_options {
      is_diagnostics_events_enabled = true
      is_health_monitoring_enabled  = true
      is_incident_logs_enabled      = true
    }
    file_system_configuration_details {
      file_system_size_gb = 50
      mount_point         = "$GRID_HOME"
    }
    file_system_configuration_details {
      file_system_size_gb = 15
      mount_point         = "/"
    }
    file_system_configuration_details {
      file_system_size_gb = 1
      mount_point         = "/boot"
    }
    file_system_configuration_details {
      file_system_size_gb = 20
      mount_point         = "/crashfiles"
    }
    file_system_configuration_details {
      file_system_size_gb = 4
      mount_point         = "/home"
    }
    file_system_configuration_details {
      file_system_size_gb = 3
      mount_point         = "/tmp"
    }
    file_system_configuration_details {
      file_system_size_gb = 20
      mount_point         = "/u01"
    }
    file_system_configuration_details {
      file_system_size_gb = 5
      mount_point         = "/var"
    }
    file_system_configuration_details {
      file_system_size_gb = 18
      mount_point         = "/var/log"
    }
    file_system_configuration_details {
      file_system_size_gb = 3
      mount_point         = "/var/log/audit"
    }
    file_system_configuration_details {
      file_system_size_gb = 9
      mount_point         = "reserved"
    }
    file_system_configuration_details {
      file_system_size_gb = 16
      mount_point         = "swap"
    }
  }
  ```

- Run the following command in the Terminal window:

  ```shell
  terraform apply
  ```
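As an illustration of the step that updates properties in the Modify configuration file, the change can be as small as editing one or two arguments of the imported resource. The fragment below is a sketch rather than a complete resource. The argument names come from the generated sample configuration, the new values are hypothetical, and valid ranges depend on your Exadata Infrastructure.

```hcl
resource "oci_database_cloud_vm_cluster" "oci_exa_vm_cluster" {
  # ... all other generated arguments stay exactly as imported ...

  # Hypothetical new values; the generated sample had 60 and 120.
  memory_size_in_gbs          = 90
  db_node_storage_size_in_gbs = 180
}
```

Running `terraform plan` before `terraform apply` should then show an in-place update limited to the edited arguments. If the plan proposes replacing the resource instead, stop and review the change before applying it.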