Data Flow

Data Flow is a fully managed, serverless Apache Spark service used to run large-scale distributed data processing for analytics. It enables teams to execute batch ETL/ELT pipelines, data preparation, feature engineering, and aggregations without provisioning or managing Spark clusters. Data Flow provisions compute on demand for each run, auto-scales, and then releases resources when jobs complete—supporting cost-efficient execution for intermittent and scheduled workloads. This subject area enables tracking Data Flow pools and their details.

Business Questions

The subject area can answer the following business questions:

- What's the Data Flow pool count?

- What's the number of nodes currently in active use for a pool?

- What's the number of runs currently using a pool?

- How many Data Flow pools are active today?

- How has the pool count changed over time (monthly)?

- What's the pool count across compartments?

Logical Model

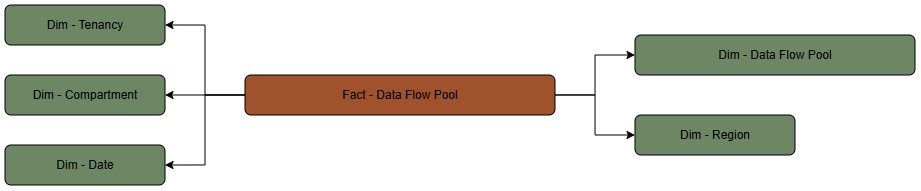

The Data Flow subject area is based on a relationship-driven logical model.

This diagram shows how the Data Flow pool fact table is related to its dimension tables:

Metric Details

The fact folders in this subject area include the following metrics:

| Metric | Description |

|---|---|

| Data Flow Pool Count | COUNT(ocira_fact_key) from the fact view's ocira_fact_key (maps to ocira$fact_key) |

| Idle Timeout in Minutes | Provides the idle timeout configured for the pool |

| Pool Metrics Active Runs Count | Provides the number of runs currently using the pool |

| Pool Metrics Actively Used Node Count | Provides the number of nodes currently in active use for this pool |