Process Large Data Sets Asynchronously with Different Bulk Operations

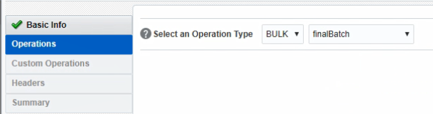

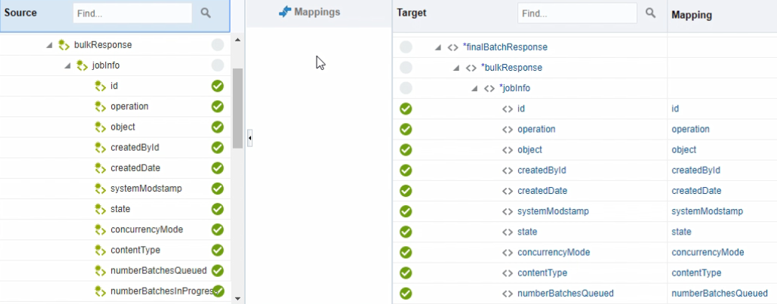

The Salesforce Bulk API enables you to process large data sets asynchronously with different bulk operations. For every bulk operation, the Salesforce application creates a job that is processed in batches.

A job contains one or more batches, and each batch is processed independently. A batch is a nonempty CSV, XML, or JSON file that contains at most 10,000 records and is no larger than 10 MB. Because the batches are processed in parallel, they can complete in any order. A batch can contain a maximum of 10,000,000 characters. Each record can contain up to 5,000 fields and a maximum of 400,000 characters across all its fields, and each field can contain up to 32,000 characters.
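To illustrate how a large CSV input maps onto independent batches, the following is a minimal Python sketch that splits rows into CSV chunks under a record-count limit and a byte-size limit. It is not part of the Salesforce Adapter, which performs batching for you. The function name `split_into_batches` and its parameters are hypothetical, and the limits are passed in as arguments so you can set them to the values documented for your Bulk API version. The small limits and the `Name` and `Phone` columns in the usage lines are for demonstration only.

```python
import csv
import io
from typing import Iterable, Iterator, List


def split_into_batches(
    header: List[str],
    rows: Iterable[List[str]],
    max_records: int,
    max_bytes: int,
) -> Iterator[str]:
    """Yield CSV documents that each repeat the header and stay within
    max_records rows and max_bytes of UTF-8 encoded text."""

    def encode(row: List[str]) -> str:
        # Let the csv module handle quoting of commas, quotes, and newlines.
        buf = io.StringIO()
        csv.writer(buf, lineterminator="\n").writerow(row)
        return buf.getvalue()

    header_line = encode(header)
    header_size = len(header_line.encode("utf-8"))
    lines: List[str] = []
    size = header_size

    for row in rows:
        line = encode(row)
        line_size = len(line.encode("utf-8"))
        if header_size + line_size > max_bytes:
            raise ValueError("a single record exceeds the batch size limit")
        # Close the current batch before it would break either limit.
        if lines and (len(lines) >= max_records or size + line_size > max_bytes):
            yield header_line + "".join(lines)
            lines, size = [], header_size
        lines.append(line)
        size += line_size

    if lines:  # a batch must be nonempty, so never emit a header-only chunk
        yield header_line + "".join(lines)


# Usage with deliberately small, illustrative limits.
rows = [[f"Account {i}", "555-0100"] for i in range(5)]
batches = list(split_into_batches(["Name", "Phone"], rows, max_records=2, max_bytes=1024))
print(len(batches))  # 3
```

Each yielded chunk is a complete CSV document with its own header row, which reflects the fact that batches are processed independently and in no guaranteed order.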

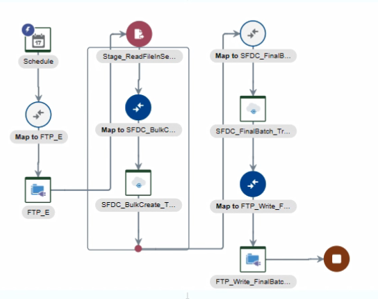

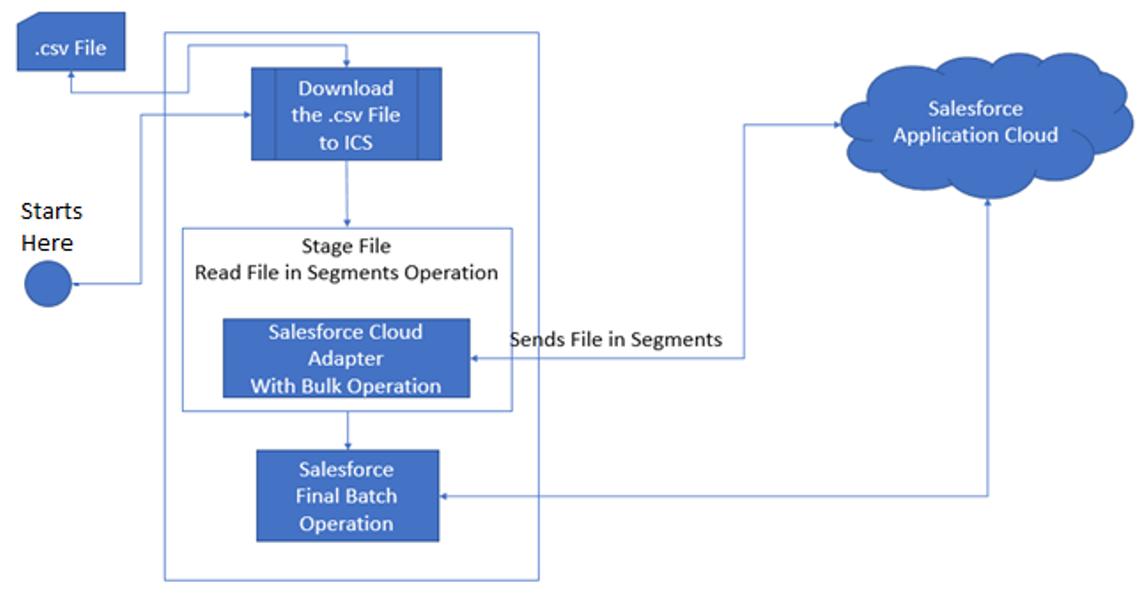

This use case describes how to configure the Salesforce Adapter to create a large number of account records in Salesforce Cloud.

To perform this operation, you create FTP Adapter and Salesforce Adapter connections in Oracle Integration.

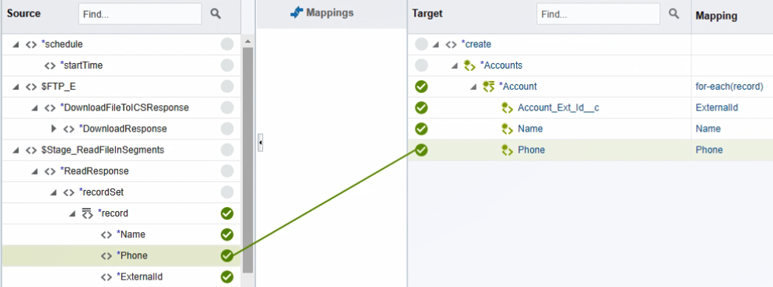

In this use case, a CSV file is used as input, but you can also use other file formats. The Salesforce Adapter transforms the file contents into a format that Salesforce recognizes.

Description of the illustration bulk_operations.png