Load Data from Amazon Simple Storage Service into Oracle Fusion Data Intelligence (Preview)

As a service administrator, you can use the Oracle Fusion Data Intelligence extract service to acquire data from Amazon Simple Storage Service (AWS S3) and use it to create data augmentations.

Ensure that AWS S3 is enabled on the Enable Features page prior to creating this connection. See Make Preview Features Available.

- In Oracle Fusion Data Intelligence, create the AWS S3 data connection using these instructions:

- In Oracle Fusion Data Intelligence Console, click Data Configuration under Application Administration.

- On the Data Configuration page, click Manage Connections.

- On the Manage Connections page, click Create and then click Connection.

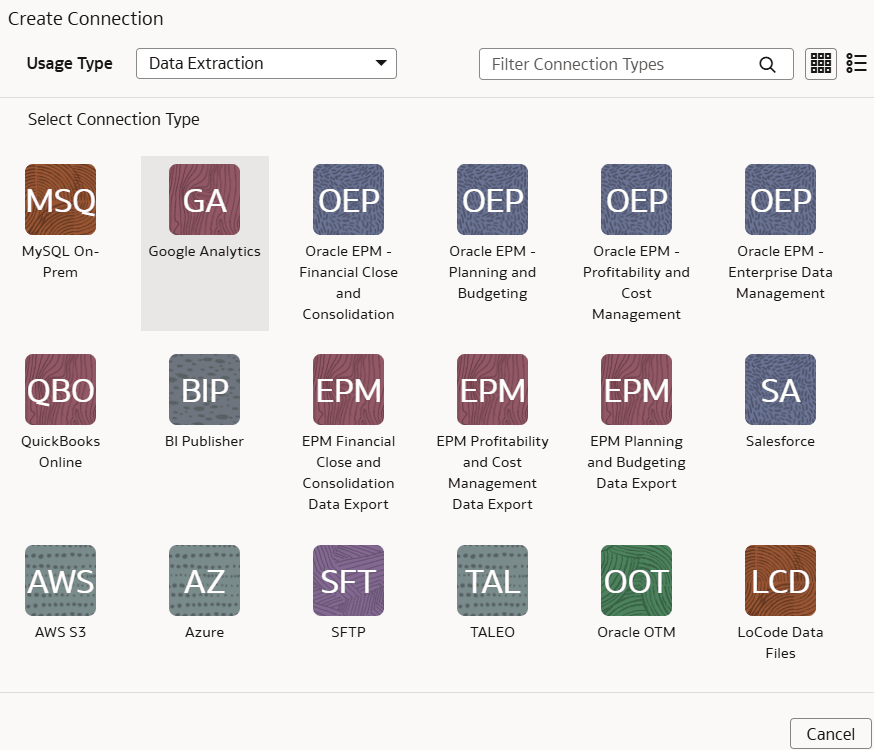

- In Create Connection, select Data Extraction in Usage Type, and then select AWS S3 as the connection type.

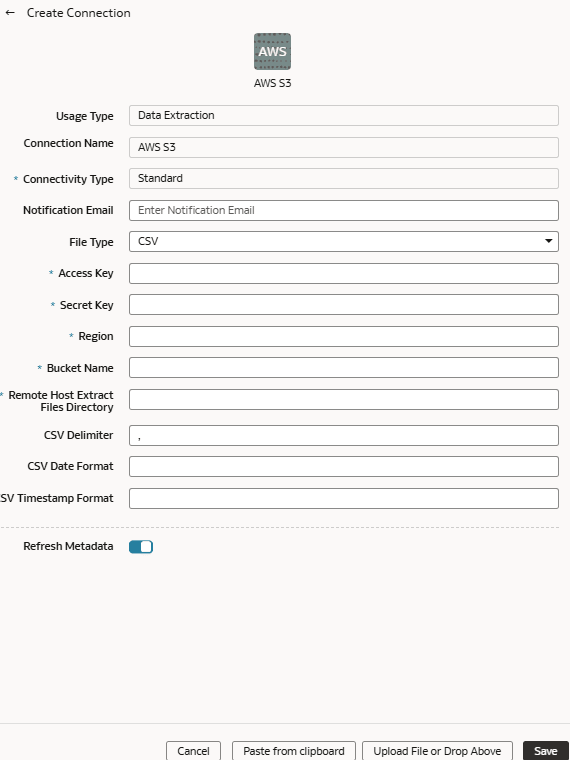

- In the dialog for the AWS S3 connection, select Standard in Connectivity Type, enter an email address to receive notifications in Notification Email, and provide applicable details of your AWS S3.

- Verify that the Refresh Metadata toggle is enabled to ensure that the metadata is refreshed when you save the connection. You can later refresh the metadata from the Actions menu on the Manage Connections page, if required.

Note:

You can’t create augmentations for AWS S3 unless you perform a metadata extract.

- Click Save.

- In Oracle Object Storage Service:

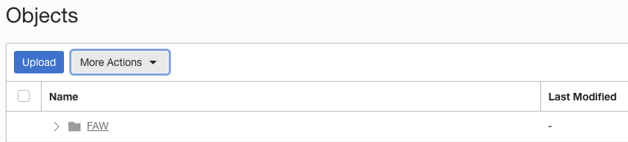

- Create the folder structure in the Bucket using these guidelines:

- The base folder in the bucket must match the details provided in the connection.

- Inside the base folder, place each file in its own folder.

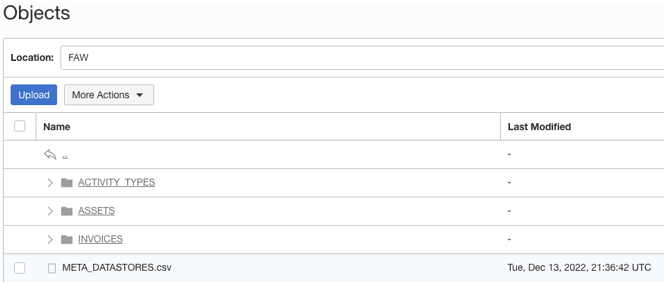

- Ensure that the data store name (Data_Store_Name, which is the same as the folder name) exactly matches the prefix of the file names in the target folder.

See the "To create a folder or subfolder" section in Using the Console.

- Inside the base folder, create the metadata file for the Data Store List. This file lists the supported data stores. Each data store is a folder that has the actual file used in data augmentation, for example, ASSETS. Ensure that the file name and folder name match and there aren’t any special characters (including space) in the datastore, folder or file names.

- Create the metadata file for each data file under the data store folder using these guidelines:

The META_DATASTORES.csv must have these columns:

- DATA_STORE_NAME - A mandatory column to identify the data store name.

- DATA_STORE_LABEL - An optional column that describes the data store.
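As a minimal sketch of how the Data Store List file could be produced, the following Python snippet builds the content of META_DATASTORES.csv from a list of name and label pairs. The comma delimiter, the header row, and the sample labels are assumptions made for illustration; only the two column names come from this topic.

```python
import csv
import io


def build_datastore_list(datastores):
    """Return META_DATASTORES.csv content for (name, label) pairs.

    Assumption: the file is comma-delimited and starts with a header row.
    """
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["DATA_STORE_NAME", "DATA_STORE_LABEL"])
    for name, label in datastores:
        if not name:
            # DATA_STORE_NAME is the mandatory column.
            raise ValueError("DATA_STORE_NAME is mandatory")
        # DATA_STORE_LABEL is optional, so an empty value is written if absent.
        writer.writerow([name, label or ""])
    return buf.getvalue()


# Hypothetical data stores; list every data store, including existing ones.
content = build_datastore_list(
    [("ASSETS", "Asset records"), ("ACTIVITY_TYPES", None)]
)
print(content)
```

The returned string can then be saved as META_DATASTORES.csv and uploaded to the base folder of the bucket.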

Note:

If you add new datastores to an existing source connection, make sure that META_DATASTORES.csv contains the full list of datastores, including the older existing datastores.

Each folder must have:

- A data file that has the actual data that gets loaded into Fusion Data Intelligence. The file name must be prefixed with the data store name (DATA_STORE_NAME).

- A metadata file for the list of columns, which contains all the column information for the data. The file name must be prefixed with META_DATASTORES_<DATA_STORE_NAME>_COL.

- For the columns in this metadata, ensure the following:

- If the column name is ABC, then the metadata can be ABC or "ABC"; the double quotes are ignored.

- If the column name is "ABC", then the metadata must be ""ABC""; the first double quotes are ignored.
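One way to read the quoting rule above is that a single enclosing pair of double quotes is dropped from the metadata value. The following Python sketch illustrates that interpretation; the function name `resolve_column_name` is hypothetical, and the snippet is not the service's actual parsing logic.

```python
def resolve_column_name(metadata_value: str) -> str:
    """Drop one enclosing pair of double quotes, if present.

    Assumption: "the double quotes are ignored" means exactly one outer
    pair of straight double quotes is removed from the metadata value.
    """
    if (
        len(metadata_value) >= 2
        and metadata_value[0] == '"'
        and metadata_value[-1] == '"'
    ):
        return metadata_value[1:-1]
    return metadata_value


# ABC and "ABC" both refer to the column named ABC.
print(resolve_column_name('ABC'))
print(resolve_column_name('"ABC"'))
# ""ABC"" refers to the column whose name includes the quotes: "ABC".
print(resolve_column_name('""ABC""'))
```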

Example

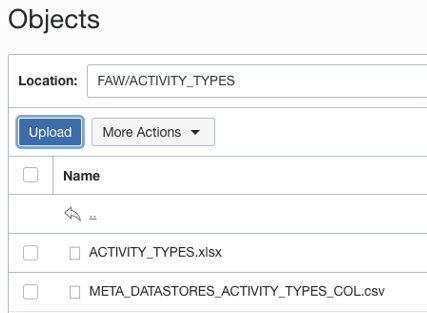

In the image, the folder name is ACTIVITY_TYPES. Hence, the data store name is ACTIVITY_TYPES. You can confirm this from the META_DATASTORES.csv file. In this example, the file is named ACTIVITY_TYPES.xlsx or ACTIVITY_TYPES.csv. The metadata file must be META_DATASTORES_ACTIVITY_TYPES_COL.csv.
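The naming convention in this example can be sketched as a small Python helper that derives the expected object names for a data store. The base folder name `fdi_base`, the function name `expected_layout`, and the rule that names may contain only letters, digits, and underscores are assumptions for illustration; this topic only states that special characters and spaces are not allowed.

```python
import re

# Assumption: "no special characters" is approximated as letters, digits,
# and underscores only.
NAME_PATTERN = re.compile(r"[A-Za-z0-9_]+")


def expected_layout(base_folder, data_store_name, data_extension="csv"):
    """Return the object names expected for one data store."""
    if not NAME_PATTERN.fullmatch(data_store_name):
        raise ValueError(f"Invalid data store name: {data_store_name!r}")
    folder = f"{base_folder}/{data_store_name}"
    return {
        # The Data Store List sits directly inside the base folder.
        "datastore_list": f"{base_folder}/META_DATASTORES.csv",
        # The data file name is prefixed with the data store name.
        "data_file": f"{folder}/{data_store_name}.{data_extension}",
        # The column metadata file lives in the data store folder.
        "column_metadata": f"{folder}/META_DATASTORES_{data_store_name}_COL.csv",
    }


layout = expected_layout("fdi_base", "ACTIVITY_TYPES")
for role, key in layout.items():
    print(role, "->", key)
```

Comparing these expected names against the actual contents of the bucket before testing the connection can help catch mismatched folder and file names early.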

The META_DATASTORES_ACTIVITY_TYPES_COL.csv has these columns:

- DATA_STORE_NAME - This is a mandatory column.

- COLUMN_NAME - This is a mandatory column.

- COLUMN_LABEL - This is a non-mandatory column.

- DATA_TYPE - This is a mandatory column.

- WIDTH - This column identifies the string length.

- PRECISION - The value in this column must be of a numeric data type.

- SCALE - The value in this column must be of a numeric data type.

- KEY_SEQUENCE - This is a mandatory column that identifies the primary key definition. If you’re using a composite primary key, then use the column order numbers as values.
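The column list above can be turned into a file with a short Python sketch such as the following. The sample column names, the data type names `NUMBER` and `VARCHAR2`, the comma delimiter, the header row, and leaving KEY_SEQUENCE blank for non-key columns are all assumptions for illustration; check the values your environment accepts before using them.

```python
import csv
import io

COLUMNS = [
    "DATA_STORE_NAME", "COLUMN_NAME", "COLUMN_LABEL", "DATA_TYPE",
    "WIDTH", "PRECISION", "SCALE", "KEY_SEQUENCE",
]
# Columns whose values this topic marks as mandatory for every row.
MANDATORY = ("DATA_STORE_NAME", "COLUMN_NAME", "DATA_TYPE")


def build_column_metadata(rows):
    """Return META_DATASTORES_<DATA_STORE_NAME>_COL.csv content."""
    buf = io.StringIO()
    # restval="" leaves unspecified optional values empty.
    writer = csv.DictWriter(
        buf, fieldnames=COLUMNS, restval="", lineterminator="\n"
    )
    writer.writeheader()
    for row in rows:
        missing = [c for c in MANDATORY if not row.get(c)]
        if missing:
            raise ValueError(f"Missing mandatory values: {missing}")
        writer.writerow(row)
    return buf.getvalue()


# Hypothetical columns for the ACTIVITY_TYPES data store.
col_content = build_column_metadata([
    {"DATA_STORE_NAME": "ACTIVITY_TYPES", "COLUMN_NAME": "ACTIVITY_TYPE_ID",
     "COLUMN_LABEL": "Activity Type ID", "DATA_TYPE": "NUMBER",
     "PRECISION": 18, "SCALE": 0, "KEY_SEQUENCE": 1},
    {"DATA_STORE_NAME": "ACTIVITY_TYPES", "COLUMN_NAME": "ACTIVITY_TYPE_NAME",
     "COLUMN_LABEL": "Activity Type Name", "DATA_TYPE": "VARCHAR2",
     "WIDTH": 100},
])
print(col_content)
```

For a composite primary key, each key column would carry its position in the key (1, 2, and so on) in KEY_SEQUENCE.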


- On the Manage Connections page, select Actions for the AWS S3 connection and then select Test Connection.

- To delete a connection, on the Manage Connections page, click Actions for the applicable connection and select Delete.

Note:

Ensure that you delete the functional areas, data augmentations, and custom data configurations related to the data connection before deleting it. You can't update or load data from deleted data connections to the warehouse.

- After the connections are successfully established, navigate to the Data Configuration page, select the connection in Data Source, then click the Data Augmentation tile, and create a data augmentation using the AWS S3 data. Select the applicable AWS S3 source tables. See Augment Your Data.