SQL Tuning

After you have determined the overall locking and I/O strategies, make sure that the individual SQL statements run as efficiently as possible.

The following sections describe how to streamline your SQL statements:

- Collect and Evaluate Sampling of Runtimes for SQL Statements
- Creating Tables Without Indexes for Performance When Loading Data
- Control the Invalidation of Commands in the SQL Command Cache
- Store Data Efficiently with Column-Based Compression of Tables
- Control Read Optimization During Concurrent Write Operations

Tune Statements and Use Indexes

Performance impact: Large

If only col1 from table t1 is needed, use the statement:

SELECT col1 FROM t1...

instead of using:

SELECT * FROM t1...

See The TimesTen Query Optimizer for a description of how to view the plan that TimesTen uses to run a statement. Alternatively, you can use the ttIsql showplan command to view the plan. View the plan for each frequently run statement in the application. If indexes are not used to evaluate predicates, consider creating new indexes or rewriting the statement or query so that indexes can be used. For example, indexes can only be used to evaluate WHERE clauses when single columns appear on one side of a comparison predicate (equalities and inequalities), or in a BETWEEN predicate.

If this comparison predicate is evaluated often, it would therefore make sense to rewrite

WHERE c1+10 < c2+20

to

WHERE c1 < c2+10

and create an index on c1.
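The rewrite above is only safe if both predicates select the same rows. The following minimal Python sketch checks that by brute force over a small range of integers. The function names and the value range are chosen for illustration, and the check ignores overflow behavior of fixed-width SQL integer types.

```python
# Brute-force check that the rewritten predicate is equivalent to the
# original one for a range of integer values (illustrative range only).

def original(c1, c2):
    # WHERE c1+10 < c2+20
    return c1 + 10 < c2 + 20


def rewritten(c1, c2):
    # WHERE c1 < c2+10
    return c1 < c2 + 10


values = range(-50, 51)
mismatches = [
    (a, b) for a in values for b in values if original(a, b) != rewritten(a, b)
]
print(len(mismatches))  # prints 0
```

Because c1 now stands alone on one side of the comparison, the predicate has the form that the text describes as eligible for an index on c1.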

The presence of indexes does slow down write operations such as UPDATE, INSERT, DELETE and CREATE VIEW. If an application does few reads but many writes to a table, an index on that table may hurt overall performance rather than help it.

Occasionally, the system may create a temporary index to speed up query evaluation. If this happens frequently, it is better for the application itself to create the index. The CMD_TEMP_INDEXES column in the MONITOR table indicates how often a temporary index was created during query evaluation.

If you have implemented time-based aging for a table or cache group, create an index on the timestamp column for better performance of aging. See Time-Based Aging.

Collect and Evaluate Sampling of Runtimes for SQL Statements

Performance impact: Variable

Instead of tracing, the built-in procedures sample the runtime of SQL statements during processing. The built-in procedures measure the runtime of SQL statements by timing the processing within the SQLExecute API.

You can configure the sampling rate and how the runtimes are collected with the ttStatsConfig built-in procedure and the following name-value pairs:

Note:

See ttStatsConfig in the Oracle TimesTen In-Memory Database Reference.

Table 10-1 ttStatsConfig Parameter and Value Descriptions

| Parameter | Description |
|---|---|
| SQLCmdSampleFactor | Configures how often a SQL statement runtime sample is taken. The default is 0, which means that the sampling is turned off. For example, when set to 10, TimesTen captures the wall clock time of the SQL statement runtime for every 10th statement. |
| ConnSampleFactor | Configures how often a SQL statement sample is taken for an individual connection. The value includes two parameters separated by a comma within quotes, so that it appears as a single value. The first number is the connection ID; the second is the same as the sampling factor described for SQLCmdSampleFactor. |
| SQLCmdHistogramReset | When set to a nonzero value, clears the SQL runtime histogram data. |
| StatsLevel | Sets the level of statistics to be taken. Values include TYPICAL and NONE. |

The following are examples of how to set the name-value pairs with the ttStatsConfig built-in procedure:

Note:

You can achieve the best results by choosing representative connections with the ConnSampleFactor parameter in the ttStatsConfig built-in procedure, rather than sampling all transactions. Sampling all transactions with a small sample factor can affect your performance negatively.

For meaningful results, the database should remain in memory since unloading and re-loading the database empties the SQL command cache.

Sample every 5th statement on connection 1.

Command> call ttStatsConfig('ConnSampleFactor', '1,5');
< CONNSAMPLEFACTOR, 1,5 >
1 row found.

Turn off sampling on connection 1.

Command> call ttStatsConfig('ConnSampleFactor', '1,0');
< CONNSAMPLEFACTOR, 1,0 >
1 row found.

Sample every command.

Command> call ttStatsConfig('SqlCmdSampleFactor',1);
< SQLCMDSAMPLEFACTOR, 1 >
1 row found.

Check whether sampling is enabled.

Command> call ttStatsConfig('SqlCmdSampleFactor');
< SQLCMDSAMPLEFACTOR, 1 >
1 row found.

Check the current database statistics collection level.

Command> call ttStatsConfig('StatsLevel');
< STATSLEVEL, TYPICAL >
1 row found.

Turn off database statistics collection by setting the level to NONE.

Command> call ttStatsConfig('StatsLevel','None');
< STATSLEVEL, NONE >
1 row found.

Once you have configured the statistics that you want collected, the collected statistics are displayed with the ttSQLCmdCacheInfo built-in procedure. To display the runtime histogram at either the command or database levels, use the ttSQLExecutionTimeHistogram built-in procedure.

The ttSQLCmdCacheInfo built-in procedure displays the following information relating to SQL runtime statistics:

- FETCHCOUNT: Number of fetch executions performed internally for this statement.
- STARTTIME: The timestamp when the statement started.
- MAXEXECUTETIME: The maximum wall clock runtime in seconds of this statement.
- LASTEXECUTETIME: Last measured runtime in seconds of the statement.
- MINEXECUTETIME: The minimum runtime in seconds of the statement.

In the following example, the display shows these statistics in the FETCHCOUNT through MINEXECUTETIME fields:

Command> vertical call ttSQLCmdCacheInfo(135680792);

SQLCMDID: 135680792
PRIVATE_COMMAND_CONNECTION_ID: -1
EXECUTIONS: 97414
PREPARES: 50080
REPREPARES: 1
FREEABLE: 1
SIZE: 3880
OWNER: SALES
QUERYTEXT: select min(unique2) from big1
FETCHCOUNT: 40
STARTTIME: 2018-04-10 13:10:46.808000
MAXEXECUTETIME: .001319
LASTEXECUTETIME: .000018
MINEXECUTETIME: .000017
EXECLOC: 0
GRIDCMDID: 00000000000000000000
TEMPSPACEUSAGE: 0
MAXTEMPSPACEUSAGE: 0

1 row found.

See ttSQLCmdCacheInfo in the Oracle TimesTen In-Memory Database Reference.

The ttSQLExecutionTimeHistogram built-in procedure displays a histogram of SQL runtimes for either a single SQL command or all SQL commands in the command cache, assuming that sampling is enabled where SQLCmdSampleFactor is greater than zero.

The histogram displays a single row for each bucket of the histogram. Each row includes the following information:

- The number of SQL statement runtime operations that have been measured since either the TimesTen database was started or after the ttStatsConfig built-in procedure was used to reset statistics.
- Accumulated wall clock runtime.
- The runtime limit that denotes each time frame.
- The number of SQL statements that ran in that time frame.

The following example of the ttSQLExecutionTimeHistogram built-in procedure shows that a total of 1919 statements ran. The total time for all 1919 statements to run was 1.090751 seconds. This example shows that SQL statements ran in the following time frames:

- 278 statements ran in a time frame that was less than or equal to .00001562 seconds.
- 1484 statements ran in a time frame that was greater than .00001562 seconds and less than or equal to .000125 seconds.
- 35 statements ran in a time frame that was greater than .000125 seconds and less than or equal to .001 seconds.
- 62 statements ran in a time frame that was greater than .001 seconds and less than or equal to .008 seconds.
- 60 statements ran in a time frame that was greater than .008 seconds and less than or equal to .064 seconds.

Command> call ttSQLExecutionTimeHistogram;
< 1919, 1.090751, .00001562, 278 >
< 1919, 1.090751, .000125, 1484 >
< 1919, 1.090751, .001, 35 >
< 1919, 1.090751, .008, 62 >
< 1919, 1.090751, .064, 60 >
< 1919, 1.090751, .512, 0 >
< 1919, 1.090751, 4.096, 0 >
< 1919, 1.090751, 32.768, 0 >
< 1919, 1.090751, 262.144, 0 >
< 1919, 1.090751, 9.999999999E+125, 0 >
10 rows found.
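To read the histogram programmatically, each row can be turned into a (lower limit, upper limit, count) bucket, where the lower limit of a bucket is the upper limit of the previous row. The following Python sketch does this for the rows shown above. The tuple layout and the function name are assumptions made for illustration, and the rows are copied by hand rather than fetched through a driver.

```python
# Rows as (measured statements, accumulated seconds, upper limit, count),
# copied from the ttSQLExecutionTimeHistogram output above.
rows = [
    (1919, 1.090751, 0.00001562, 278),
    (1919, 1.090751, 0.000125, 1484),
    (1919, 1.090751, 0.001, 35),
    (1919, 1.090751, 0.008, 62),
    (1919, 1.090751, 0.064, 60),
    (1919, 1.090751, 0.512, 0),
    (1919, 1.090751, 4.096, 0),
    (1919, 1.090751, 32.768, 0),
    (1919, 1.090751, 262.144, 0),
    (1919, 1.090751, 9.999999999e125, 0),
]


def to_buckets(histogram_rows):
    """Convert histogram rows into (lower, upper, count) buckets."""
    buckets = []
    lower = 0.0
    for _total, _seconds, upper, count in histogram_rows:
        buckets.append((lower, upper, count))
        lower = upper  # the next bucket starts where this one ends
    return buckets


buckets = to_buckets(rows)
total_statements = rows[0][0]

# The per-bucket counts should add up to the total in the first column.
print(sum(count for _, _, count in buckets) == total_statements)  # prints True
```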

Creating Tables Without Indexes for Performance When Loading Data

Performance impact: Variable

If you are planning to load your tables with data, consider creating your tables without indexes. After the data is loaded, you can then create your indexes. This reduces the time it takes to load the data into the tables. The exception is if you are using foreign keys and reference tables.

Select the Appropriate Type of Index

Performance impact: Variable

The following details when it is appropriate to use each type of index.

Hash indexes are useful for finding rows with an exact match on one or more columns, that is, for equality searches. A hash index is created with either of the following:

- You can create a hash index or a unique hash index with the CREATE [UNIQUE] HASH INDEX statement.
- You can create a unique hash index when creating your table with the CREATE TABLE... UNIQUE HASH ON statement. The unique hash index is specified over the primary key columns of the table.

Note:

If you are planning to load your tables with data, consider creating your tables without indexes. After the data is loaded, you can then create your indexes. This reduces the time it takes to load the data into the tables. The exception is if you are using foreign keys and reference tables.

Range indexes are created by default with the CREATE TABLE statement or created with the CREATE [UNIQUE] INDEX statement. Range indexes can speed up exact key lookups but are more flexible and can speed up other queries as well. Select a range index if your queries include LESS THAN or GREATER THAN comparisons. Range indexes are effective for high-cardinality data: that is, data with many possible values, such as CUSTOMER_NAME or PHONE_NUMBER. Range indexes are optimized for in-memory data management.

Range indexes can also be used to speed up "prefix" queries. A prefix query has equality conditions on all but the last key column that is specified. The last column of a prefix query can have either an equality condition or an inequality condition.

Consider the following table and index definitions:

Command> CREATE TABLE T(i1 tt_integer, i2 tt_integer, i3 tt_integer, ...);
Command> CREATE INDEX IXT on T(i1, i2, i3);

The index IXT can be used to speed up the following queries:

Command> SELECT * FROM T WHERE i1>12;
Command> SELECT * FROM T WHERE i1=12 and i2=75;
Command> SELECT * FROM T WHERE i1=12 and i2 BETWEEN 10 and 20;
Command> SELECT * FROM T WHERE i1=12 and i2=75 and i3>30;

The index IXT is not used for the following queries, because the prefix property is not satisfied:

Command> SELECT * FROM T WHERE i2=12;

There is no equality condition for i1.

The index IXT is used, but matching only occurs on the first two columns for queries like the following:

Command> SELECT * FROM T WHERE i1=12 and i2<50 and i3=630;
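The prefix rule illustrated by these queries can be modeled with a few lines of Python. This is a simplified sketch of the rule as described in this section, not the TimesTen optimizer itself. The function name and the "eq"/"range" labels are assumptions made for illustration.

```python
def matched_prefix(index_columns, predicates):
    """Return the leading index columns that a query can match on.

    predicates maps a column name to "eq" (equality condition) or
    "range" (inequality or BETWEEN condition). Matching stops at the
    first index column without a condition, or right after the first
    column that has a range condition.
    """
    matched = []
    for column in index_columns:
        kind = predicates.get(column)
        if kind is None:
            break
        matched.append(column)
        if kind == "range":
            break
    return matched


ixt = ["i1", "i2", "i3"]

print(matched_prefix(ixt, {"i1": "range"}))                          # ['i1']
print(matched_prefix(ixt, {"i1": "eq", "i2": "eq", "i3": "range"}))  # ['i1', 'i2', 'i3']
print(matched_prefix(ixt, {"i2": "eq"}))                             # []
print(matched_prefix(ixt, {"i1": "eq", "i2": "range", "i3": "eq"}))  # ['i1', 'i2']
```

The last call corresponds to the query on i1=12, i2<50, and i3=630, where matching only occurs on the first two columns.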

Range indexes have a dynamic structure that adjusts itself automatically to accommodate changes in table size. A range index can be either unique or non-unique and can be declared over nullable columns. It also allows the indexed column values to be changed once a record is inserted. A range index is likely to be more compact than an equivalent hash index.

Size Hash Indexes Appropriately

Performance impact: Variable

The size of a hash index is determined by the PAGES parameter specified in the UNIQUE HASH ON clause of the CREATE TABLE and CREATE INDEX statements.

The value for PAGES should be the expected number of rows in the table divided by 256; for example, a 256,000 row table should have PAGES = 1000. A smaller value may result in a greater number of hash collisions, decreasing performance, while a larger value may provide somewhat increased performance at the cost of extra space used by the index.
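The sizing rule can be written as a small helper. The following Python sketch applies the rows-divided-by-256 rule from the text. Rounding up to a whole page and using a minimum of one page are assumptions added here, and the function name is illustrative.

```python
import math

ROWS_PER_PAGE = 256  # divisor given in the sizing rule above


def hash_index_pages(expected_rows):
    """Suggest a PAGES value for a table with the expected number of rows."""
    if expected_rows < 0:
        raise ValueError("expected_rows must not be negative")
    # Assumption: round up so that a partial page still counts as a page,
    # and never suggest fewer than one page.
    return max(1, math.ceil(expected_rows / ROWS_PER_PAGE))


print(hash_index_pages(256_000))  # prints 1000
```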

If the number of rows in the table varies dramatically, and if performance is the primary consideration, it is best to create a large index. If the size of a table cannot be accurately predicted, consider using a range index. Also, consider the use of unique indexes when the indexed columns are large CHAR or binary values or when many columns are indexed. Unique indexes may be faster than hash indexes in these cases.

If the performance of record inserts degrades as the size of the table gets larger, it is very likely that you have underestimated the expected size of the table. You can resize the hash index by using the ALTER TABLE statement to reset the PAGES value in the UNIQUE HASH ON clause. See information about SET PAGES in the ALTER TABLE section in the Oracle TimesTen In-Memory Database SQL Reference.

Use Foreign Key Constraint Appropriately

Performance impact: Variable

A foreign key constraint does not affect the performance of SELECT queries, but it slows down the INSERT and UPDATE operations on the table that the foreign key is defined on and the UPDATE and DELETE operations on the table referenced by the foreign key.

The slowdown is proportional to the number of foreign keys that either reference or are defined on the table.

Compute Exact or Estimated Statistics

Performance impact: Large

Note:

You should compute statistics before preparing your statements, since the information is likely to result in a more efficient query optimizer plan. When gathering statistics, you need to determine when and how often to gather new statistics as performance is affected by the statistics collection process. The frequency of collection should balance the task of providing accurate statistics for the optimizer against the processing overhead incurred by the statistics collection process.

Since computing statistics is a time-consuming operation, you should compute statistics with the following guidelines:

- Update statistics after loading your database or after major application upgrades.
- Do not update statistics during a heavy transaction load.
- Update statistics when there is substantial creation or alteration on tables, columns, or PL/SQL objects.

  If you have created or altered a substantial number of tables, columns, or PL/SQL objects in your database, you should update the data dictionary optimizer statistics for the following system tables: SYS.TABLES, SYS.COLUMNS, and SYS.OBJ$.
- When you substantially modify tables in batch operations, such as a bulk load or bulk delete, you can gather statistics on these tables as part of the batch operation.
- Update statistics infrequently, such as once a week or once a month, when tables are only incrementally modified.
- Update statistics as part of a regularly run script or batch job during low transaction load times.
- When updating the statistics for multiple large tables, see Update Table Statistics for Large Tables in Parallel.

Note:

For performance reasons, TimesTen does not hold a lock on tables or rows when computing statistics.

Use the following for computing statistics: ttIsql statsupdate command, ttOptUpdateStats, or ttOptEstimateStats. Providing an empty string as the table name updates statistics for all tables in the current user's schema.

- The statsupdate command within ttIsql evaluates every row of the table(s) in question and computes exact statistics.
- The ttOptUpdateStats built-in procedure evaluates every row of the table(s) in question and computes exact statistics.
- The ttOptEstimateStats procedure evaluates only a sampling of the rows of the table(s) in question and produces estimated statistics. This can be faster, but may result in less accurate statistics. Computing statistics with a sample of 10 percent is about ten times faster than computing exact statistics and generally results in the same execution plans.

Note:

See ttIsql and Built-In Procedures in the Oracle TimesTen In-Memory Database Reference.

Update Table Statistics for Large Tables in Parallel

Performance impact: Large

Call the ttOptUpdateStats built-in procedure in parallel when updating the statistics for multiple large tables.

Note:

A TimesTen table is considered a small table when it contains less than 1 million rows. A TimesTen table is considered a large table when it contains over 100 million rows.

Call the ttOptUpdateStats built-in procedure for all of the large tables where you want to update table statistics. Make sure to call each ttOptUpdateStats built-in procedure in parallel. See ttOptUpdateStats in the Oracle TimesTen In-Memory Database Reference.

Command> call ttOptUpdateStats('table1',0,0);

Command> call ttOptUpdateStats('table2',0,0);

...

...

Command> call ttOptUpdateStats('finaltable',0,0);

Once the ttOptUpdateStats built-in procedure calls have completed, determine how many transactions are accessing the large TimesTen tables for which you updated table statistics. During low transaction load times run the ttOptCmdCacheInvalidate('',1) built-in procedure. See ttOptCmdCacheInvalidate in the Oracle TimesTen In-Memory Database Reference. During high transaction load times run the following built-in procedures and make sure to call each ttOptCmdCacheInvalidate built-in procedure in parallel:

Command> call ttOptCmdCacheInvalidate('table1',1);

Command> call ttOptCmdCacheInvalidate('table2',1);

...

...

Command> call ttOptCmdCacheInvalidate('finaltable',1);

The table statistics of your tables are now up to date and compiled commands in the SQL command cache are invalidated.
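One way to issue the ttOptUpdateStats calls in parallel from an application is to run each call in its own worker thread. The following Python sketch shows that pattern under stated assumptions: the `execute` callback is hypothetical and stands in for whatever driver call your application uses to run a statement on its own connection, and the table names are the placeholders from the examples above.

```python
from concurrent.futures import ThreadPoolExecutor


def update_stats_call(table):
    # Same argument pattern as the ttIsql examples above: (table, 0, 0).
    return f"call ttOptUpdateStats('{table}',0,0)"


def run_in_parallel(tables, execute):
    """Run one ttOptUpdateStats call per table, each in its own thread.

    `execute` is a hypothetical caller-supplied function that opens its
    own connection, runs the statement text it receives, and returns a
    result. Results come back in the same order as `tables`.
    """
    statements = [update_stats_call(table) for table in tables]
    with ThreadPoolExecutor(max_workers=max(1, len(statements))) as pool:
        return list(pool.map(execute, statements))


if __name__ == "__main__":
    # Stand-in for a real driver call; it only echoes the statement text.
    print(run_in_parallel(["table1", "table2", "finaltable"], lambda sql: sql))
```

The same pattern applies to the per-table ttOptCmdCacheInvalidate calls during high transaction load times.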

Create Script to Regenerate Current Table Statistics

Performance impact: Variable

You can generate a set of statements with the ttOptStatsExport built-in procedure from which you can restore the table statistics to the current state.

When you apply these statements, you re-create the same environment. Re-creating the table statistics can be useful for diagnosing SQL performance.

Call the ttOptStatsExport built-in procedure to return the set of statements required to restore the table statistics to the current state. If no table is specified, ttOptStatsExport returns the set of statements required to restore the table statistics for all user tables that the calling user has permission to access.

Note:

See ttOptStatsExport in the Oracle TimesTen In-Memory Database Reference.

The following example returns a set of built-in procedure commands that would be required to run to restore the statistics for the employees table:

Command> call ttOptStatsExport('hr.employees');

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'EMPLOYEE_ID', 0, (6, 0, 107, 107,

(20, 20, 1 ,100, 120, 101), (20, 20, 1 ,121, 141, 122), (20, 20, 1 ,142, 162,

143), (20, 20, 1 ,163, 183, 164), (20, 20, 1 ,184, 204, 185), (1, 1, 1 ,205, 206,

205))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'FIRST_NAME', 0, (1, 0, 89, 107,

(89, 107, 0, 'Adam', 'Winston', 'Adam'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'LAST_NAME', 0, (1, 0, 97, 107, (97,

107, 0, 'Abel', 'Zlotkey', 'Abel'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'EMAIL', 0, (6, 0, 107, 107, (20,

20, 1, 'ABANDA', 'DGREENE', 'ABULL'), (20, 20, 1, 'DLEE', 'JKING', 'DLORENTZ'),

(20, 20, 1, 'JLANDRY', 'LOZER', 'JLIVINGS'), (20, 20, 1, 'LPOPP', 'RMATOS',

'LSMITH'), (20, 20, 1, 'RPERKINS', 'WGIETZ', 'SANDE'), (1, 1, 1, 'WSMITH',

'WTAYLOR', 'WSMITH'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'PHONE_NUMBER', 0, (1, 0, 103, 107,

(103, 107, 0, '011.44.1343.329268', '650.509.4876', '011.44.1343.329268'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'HIRE_DATE', 0, (1, 0, 90, 107, (90,

107, 0 ,'1987-06-17 00:00:00', '2000-04-21 00:00:00', '1987-06-17 00:00:00'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'JOB_ID', 0, (4, 0, 19, 107, (11,

16, 5, 'AC_ACCOUNT', 'PR_REP', 'FI_ACCOUNT'), (3, 11, 30, 'PU_CLERK', 'SA_REP',

'SA_REP'), (1, 20, 20, 'SH_CLERK', 'ST_CLERK', 'ST_CLERK'), (0, 0, 5, 'ST_MAN',

'ST_MAN', 'ST_MAN'))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'SALARY', 0, (1, 0, 57, 107, (57,

107, 0 ,2100, 24000, 2100))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'COMMISSION_PCT', 0, (1, 72, 7, 107,

(7, 35, 0 ,0.1, 0.4, 0.1))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'MANAGER_ID', 0, (1, 1, 18, 107,

(18, 106, 0 ,100, 205, 100))); >

< call ttoptsetcolIntvlstats('HR.EMPLOYEES', 'DEPARTMENT_ID', 0, (3, 1, 11, 107,

(4, 10, 45 ,10, 50, 50), (2, 6, 34 ,60, 80, 80), (2, 5, 6 ,90, 110, 100))); >

< call ttoptsettblstats('HR.EMPLOYEES', 107, 0); >

12 rows found.

Control the Invalidation of Commands in the SQL Command Cache

Performance impact: Variable

Note:

See Using the Query Optimizer to Choose Optimal Plan for more information on how commands are automatically invalidated.

When you compute statistics, the process of updating and compiling commands may compete for the same locks on certain tables. If statistics are collected in multiple transactions and commands are invalidated after each statistics update, the following issues may occur:

- A join query that references multiple tables might be invalidated and recompiled more than once.
- Locks needed for recompilation could interfere with updating statistics, which could result in a deadlock.

You can avoid these issues by controlling when commands are invalidated in the SQL command cache. In addition, you may want to hold off invalidation of all commands if you know that the table and index cardinalities will be changing significantly.

You can control invalidation of the commands, as follows:

- Compute statistics without invalidating the commands in the SQL command cache. Set the invalidate option to 0 in either the ttIsql statsupdate command, the ttOptUpdateStats built-in procedure, or the ttOptEstimateStats built-in procedure.
- Once all statistics have been computed, manually invalidate the commands in the SQL command cache with the ttOptCmdCacheInvalidate built-in procedure.

The ttOptCmdCacheInvalidate built-in procedure can invalidate commands associated solely with a table or all commands within the SQL command cache. In addition, you can specify whether the invalidated commands are to be recompiled or marked as unusable.

Note:

For complete details on when to optimally calculate statistics, see Compute Exact or Estimated Statistics. In addition, see ttIsql, ttOptUpdateStats, ttOptEstimateStats, or ttOptCmdCacheInvalidate in the Oracle TimesTen In-Memory Database Reference.

Avoid ALTER TABLE

Performance impact: Variable

The ALTER TABLE statement enables applications to add columns to a table and to drop columns from a table. Although the ALTER TABLE statement itself runs very quickly in most cases, the modifications it makes to the table can cause subsequent DML statements and queries on the table to run more slowly.

The actual performance degradation that the application experiences varies with the number of times the table has been altered and with the particular operation being performed on the table.

Dropping VARCHAR2 and VARBINARY columns is slower than dropping columns of other data types since a table scan is required to free the space allocated to the existing VARCHAR2 and VARBINARY values in the column to be dropped.

Avoid Nested Queries

Performance impact: Variable

The following are examples of nested queries that may need to be materialized and result in multiple rows:

- Aggregate nested query with GROUP BY
- Nested queries that reference ROWNUM
- Union, intersect, or minus nested queries
- Nested queries with ORDER BY

For example, the following aggregate nested query is expensive to evaluate:

Command> SELECT * FROM (SELECT SUM(x1) sum1 FROM t1 GROUP BY y1), (SELECT sum(x2) sum2 FROM t2 GROUP BY y2) WHERE sum1=sum2;

The following is an example of a nested query that references ROWNUM:

Command> SELECT * FROM (SELECT rownum rc, x1 FROM t1 WHERE x1>100), (SELECT ROWNUM rc, x2 FROM t2 WHERE x2>100) WHERE x1=x2;

The following is an example of a union nested query:

Command> SELECT * FROM (SELECT x1 FROM t1 UNION SELECT x2 FROM t2), (SELECT x3 FROM t3 GROUP BY x3) WHERE x1=x3;

See Subqueries in the Oracle TimesTen In-Memory Database SQL Reference.

Prepare Statements in Advance

Performance impact: Large

For example, if your application generates statements like:

SELECT A FROM B WHERE C = 10;
SELECT A FROM B WHERE C = 15;

You can replace these statements with the single statement:

SELECT A FROM B WHERE C = ?;

TimesTen shares prepared statements automatically after they have been committed. As a result, an application request to prepare a statement for execution may be completed very quickly if a prepared version of the statement already exists in the system. Also, repeated requests to run the same statement can avoid the prepare overhead by sharing a previously prepared version of the statement.

Even though TimesTen allows prepared statements to be shared, it is still a good practice for performance reasons to use parameterized statements. Using parameterized statements can further reduce prepare overhead, in addition to any savings from sharing statements.
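The parameterization technique itself is independent of the database. The following Python sketch demonstrates it with the standard library sqlite3 module as a stand-in, because it runs without a TimesTen installation. It is not TimesTen code, and the table contents are invented for illustration. One statement text with a `?` placeholder is reused for both values instead of generating two different statements.

```python
import sqlite3

# sqlite3 is used here only as a stand-in database for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE B (A TEXT, C INTEGER)")
conn.executemany(
    "INSERT INTO B (A, C) VALUES (?, ?)",
    [("ten", 10), ("fifteen", 15)],
)

# One parameterized statement replaces the two literal statements.
query = "SELECT A FROM B WHERE C = ?"
results = {c: conn.execute(query, (c,)).fetchall() for c in (10, 15)}
conn.close()

print(results)  # prints {10: [('ten',)], 15: [('fifteen',)]}
```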

Avoid Unnecessary Prepare Operations

Performance impact: Large

But if connections are relatively short, a better strategy is to establish a long-duration connection that prepares the statements and runs them on behalf of all threads or processes. The trade-off here is between communication overhead and prepare overhead, and can be examined for each application. Prepared statements are invalidated when a connection is closed.

See ttSQLCmdCacheInfoGet in the Oracle TimesTen In-Memory Database Reference.

Store Data Efficiently with Column-Based Compression of Tables

Performance impact: Large

This mechanism provides space reduction for tables by eliminating the redundant storage of duplicate values within columns and improves the performance of SQL queries that perform full table scans.

When compressing columns of a TimesTen table, consider the following:

- Compress a column if values are repeated throughout such as the name of countries or states.
- Compress a column group if you often access multiple columns together.
- Do not compress columns that contain data types that require a small amount of storage such as TT_TINYINT.
- TimesTen does not compress NULL values.

You can define one or more columns in a table to be compressed together, which is called a compressed column group. You can define one or more compressed column groups in each table.

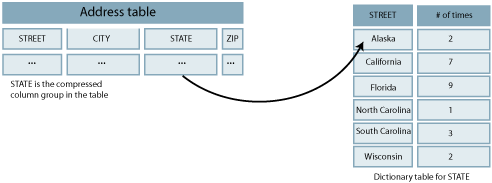

A dictionary table is created for each compressed column group that contains a column with all the distinct values of the compressed column group. The compressed column group now contains a pointer to the row in the dictionary table for the appropriate value. The width of this pointer can be 1, 2, or 4 bytes long depending on the maximum number of entries you defined for the dictionary table. So if the sum of the widths of the columns in a compressed column group is wider than the 1, 2, or 4 byte pointer width, and if there are a lot of duplicate values of those column values, you have reduced the amount of space used by the table.

Figure 10-1 shows the compressed column group in the table pointing to the appropriate row in the dictionary table.

Figure 10-1 Column-Based Compression of Tables


The dictionary table has a column of pointers to each of the distinct values. When the user configures the maximum number of distinct entries for the compressed column group, the size of the compressed column group is set as follows:

- 1 byte for a maximum number of entries of 255 (2^8 - 1). When the maximum number is between 1 and 255, the dictionary size is set to 255 (2^8 - 1) values and the compressed column group pointer column is 1 byte.
- 2 bytes for a maximum number of entries of 65,535 (2^16 - 1). When the maximum number is between 256 and 65,535, the dictionary size is set to 65,535 (2^16 - 1) values and the compressed column group pointer column is 2 bytes.
- 4 bytes for a maximum number of entries of 4,294,967,295 (2^32 - 1). When the maximum number is between 65,536 and 4,294,967,295, the dictionary size is set to 4,294,967,295 (2^32 - 1) values and the compressed column group pointer column is 4 bytes. This is the default.
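The pointer width rule, together with a rough space estimate, can be sketched in Python as follows. The pointer widths follow the three cases listed above. The saving estimate is a deliberately simplified model that counts only column data, pointers, and one dictionary entry per distinct value, and ignores any other storage overhead. The function names and the sample figures are assumptions made for illustration.

```python
def pointer_width_bytes(max_entries):
    """Pointer width for a compressed column group, per the rule above."""
    if not 1 <= max_entries <= 2**32 - 1:
        raise ValueError("max_entries must be between 1 and 2**32 - 1")
    if max_entries <= 2**8 - 1:
        return 1
    if max_entries <= 2**16 - 1:
        return 2
    return 4


def estimated_saving_bytes(rows, distinct_values, group_width, max_entries):
    """Rough saving: uncompressed column data minus pointers plus dictionary.

    Simplified model (assumption): ignores per-row and per-table overhead.
    """
    uncompressed = rows * group_width
    compressed = rows * pointer_width_bytes(max_entries) + distinct_values * group_width
    return uncompressed - compressed


# Hypothetical table: 1,000,000 rows, a 32-byte column group,
# 200 distinct values, dictionary limited to 255 entries.
print(estimated_saving_bytes(1_000_000, 200, 32, 255))  # prints 30993600
```

A negative result indicates that, under this model, the column group is too narrow or has too few duplicates for compression to save space.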

Compressed column groups can be added at the time of table creation or added later using ALTER TABLE. You can drop the entire compressed column group with the ALTER TABLE statement. See ALTER TABLE and CREATE TABLE in the Oracle TimesTen In-Memory Database SQL Reference.

You can call the ttSize built-in procedure to review the level of compression that TimesTen achieved on your compressed table. For more information on the ttSize built-in procedure, see ttSize in the Oracle TimesTen In-Memory Database Reference.

Control Read Optimization During Concurrent Write Operations

Performance impact: Variable

Read optimization is requested with a transaction-level optimizer hint such as ttOptSetFlag('tblLock',1) or with statement-level optimizer hints such as /*+ tt_tbllock(1) tt_rowlock(0) */.

Write operations that operate concurrently with read optimized queries may result in contention.

You can control read optimization during periods of concurrent write operations with the ttDBWriteConcurrencyModeSet built-in procedure. This built-in procedure enables you to switch between a standard mode and an enhanced write concurrent mode. In the standard mode, the optimizer respects read optimization hints. In the enhanced write concurrent mode, the optimizer ignores read optimization hints and does not use shared read table locks or write table locks.

Note:

For more information about table locking, see Locking Granularities.

For more information about optimizer hints, see Use Optimizer Hints to Modify the Execution Plan.

For more information about transaction level optimizer hints, see ttOptSetFlag in the Oracle TimesTen In-Memory Database Reference.

Set the mode of the ttDBWriteConcurrencyModeSet built-in procedure to 1 to enable the enhanced write concurrent mode and disable read optimization. Set the mode to 0 to disable the enhanced write concurrent mode and re-enable read optimization.

When the mode is set to 1, all transaction and statement table lock optimizer hints are ignored. This affects the following:

- Shared read table-level locks for SELECT query and subqueries that are triggered by optimizer hints.
- Write table locks for DML statements that are triggered by optimizer hints.

Regardless of the mode setting, table locks that are not triggered by optimizer hints are not affected.

Set the wait of the ttDBWriteConcurrencyModeSet built-in procedure to 0 to perform a mode switch without notifications. Set the wait of the ttDBWriteConcurrencyModeSet built-in procedure to 1 to force the built-in procedure to wait until the mode transition is complete.

Running certain SQL statements causes the mode of the ttDBWriteConcurrencyModeSet built-in procedure to remain in transition. Such SQL statements must match the following two conditions:

- Affected by the write concurrency mode.
- Compiled in a different write concurrency mode.

The mode of the ttDBWriteConcurrencyModeSet built-in procedure remains in transition until all such SQL statements complete. The ttDBWriteConcurrencyModeSet built-in procedure uses lock acquisition to wait during the mode transition. An error is returned if the ttDBWriteConcurrencyModeSet built-in procedure is not granted a lock within the timeout interval of the current connection.

Note:

See ttDBWriteConcurrencyModeSet, ttLockWait, and ttDBWriteConcurrencyModeGet in the Oracle TimesTen In-Memory Database Reference.

Choose SQL and PL/SQL Timeout Values

Performance impact: Variable

- SQLQueryTimeout or SQLQueryTimeoutMSec: Controls how long a SQL statement runs before timing out.

  By default, SQL statements do not time out. In some cases, you may want to specify a value for either the SQLQueryTimeout or SQLQueryTimeoutMSec connection attribute to set the time limit in seconds or milliseconds within which the database should run SQL statements. (Note that this applies to any SQL statement, not just queries.)

  Both SQLQueryTimeout and SQLQueryTimeoutMSec attributes are internally mapped to one timeout value in milliseconds. If different values are specified for these attributes, only one value is retained. See SQLQueryTimeout and SQLQueryTimeoutMSec in the Oracle TimesTen In-Memory Database Reference.

- PLSQL_TIMEOUT: Controls how long a PL/SQL block runs before timing out.

  By default, PL/SQL program units (PL/SQL procedures, anonymous blocks and functions) are allowed to run for 30 seconds before being automatically terminated. In some cases, you may want to modify the PLSQL_TIMEOUT connection attribute value to allow PL/SQL program units additional time to run. You can also modify this attribute with an ALTER SESSION statement during runtime.

  See PLSQL_TIMEOUT in the Oracle TimesTen In-Memory Database Reference.

- TTC_Timeout: Controls how long a TimesTen client waits for a response from the TimesTen Server when the client has requested the server to run a SQL statement or PL/SQL block.

- TTC_ConnectTimeout: Controls how long the client waits for a SQLDriverConnect or SQLDisconnect request. It overrides the value of TTC_Timeout for those requests.

If you use a TimesTen client/server, then SQLQueryTimeout (or SQLQueryTimeoutMSec) and PLSQL_TIMEOUT (relevant for PL/SQL) should be set to significantly lower values than TTC_Timeout, to avoid the possibility of the client mistaking a long-running SQL statement or PL/SQL block for a non-responsive server.

If you use PL/SQL, the relationship between SQLQueryTimeout (or SQLQueryTimeoutMSec) and PLSQL_TIMEOUT depends on how many SQL statements you use in your PL/SQL blocks. If none, there is no relationship. If the maximum number of SQL statements in a PL/SQL block is n, then PLSQL_TIMEOUT should presumably equal at least n x SQLQueryTimeout (or n x SQLQueryTimeoutMSec / 1000, because PLSQL_TIMEOUT is specified in seconds), including consideration of processing time in PL/SQL.

Note:

If SQLQueryTimeout (or SQLQueryTimeoutMSec) and PLSQL_TIMEOUT are not set sufficiently less than TTC_Timeout, and the client mistakes a long-running SQL statement or PL/SQL block for a non-responsive server and terminates the connection, the server will cancel the SQL statement or PL/SQL block as soon as it notices the termination of the connection.
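The relationships between these timeout values can be checked with a small helper before you deploy a configuration. The following Python sketch is a minimal illustration under stated assumptions: all values are positive and expressed in seconds (divide a SQLQueryTimeoutMSec value by 1000 first), "lower than TTC_Timeout" is tested as strictly lower rather than "significantly" lower, and the function names and sample values are hypothetical.

```python
def min_plsql_timeout_s(n_statements, sql_query_timeout_s):
    """Lower bound for PLSQL_TIMEOUT with up to n SQL statements per block."""
    return n_statements * sql_query_timeout_s


def timeout_warnings(sql_query_timeout_s, plsql_timeout_s, ttc_timeout_s, n_statements=0):
    """Return a list of warnings for a client/server timeout configuration.

    Assumption: every argument is a positive number of seconds.
    """
    warnings = []
    if sql_query_timeout_s >= ttc_timeout_s:
        warnings.append("SQLQueryTimeout is not lower than TTC_Timeout")
    if plsql_timeout_s >= ttc_timeout_s:
        warnings.append("PLSQL_TIMEOUT is not lower than TTC_Timeout")
    if plsql_timeout_s < min_plsql_timeout_s(n_statements, sql_query_timeout_s):
        warnings.append("PLSQL_TIMEOUT is lower than n x SQLQueryTimeout")
    return warnings


# Hypothetical settings: 5 s per statement, 30 s per PL/SQL block,
# 60 s client timeout, at most 4 SQL statements per PL/SQL block.
print(timeout_warnings(5, 30, 60, n_statements=4))   # prints []
print(timeout_warnings(10, 30, 60, n_statements=4))  # prints ['PLSQL_TIMEOUT is lower than n x SQLQueryTimeout']
```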