3 UDR Benchmark Testing

This chapter describes UDR, SLF, and EIR test scenarios.

3.1 Test Scenario 1: SLF Call Deployment Model

This section provides information about SLF call deployment model test scenarios.

3.1.1 SLF Call Model: 24.2K TPS for Performance-Medium Resource Profile for SLF Lookup

This test scenario describes performance and capacity of SLF functionality offered by UDR and provides the benchmarking results for various deployment sizes.

- Signaling (SLF Lookup): 24.2K TPS

- Provisioning: 1260 TPS

- Total Subscribers: 17M

- Profile Size: 450 bytes

Table 3-1 Traffic Model Details

| Request Type | Details | TPS |
|---|---|---|
| Lookup 24.2K | SLF Lookup GET Requests | 24.2K |
| Provisioning (1.26K using ProvGw, one site) | CREATE | 126 |
| | DELETE | 126 |
| | UPDATE | 504 |
| | GET | 504 |

Note:

- To run this model, one UDR site is brought down, and the 24.2K lookup traffic and 1.26K provisioning traffic are run from one site.

- The values provided are for a single-site deployment.
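The per-operation provisioning rates are consistent with the 1.26K TPS total if the mix is taken to be 10% CREATE, 10% DELETE, 40% UPDATE, and 40% GET. That split is inferred from the table values and is not a stated configuration. A minimal sketch of the arithmetic, with hypothetical names:

```python
# Assumed provisioning mix (inferred from the traffic model table,
# not a documented configuration).
PROVISIONING_TPS = 1260
MIX = {"CREATE": 0.10, "DELETE": 0.10, "UPDATE": 0.40, "GET": 0.40}


def split_tps(total_tps, mix):
    """Return per-operation TPS for a traffic mix whose shares sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic mix shares must sum to 1")
    return {op: round(total_tps * share) for op, share in mix.items()}


per_op = split_tps(PROVISIONING_TPS, MIX)
print(per_op)
```

The per-operation rates add back up to 1260 TPS, which is a quick way to check a traffic profile before running it.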

Table 3-2 Testcase Parameters

| Input Parameter Details | Configuration Values |

|---|---|

| UDR Version Tag | 24.2.4 |

| Target TPS | 24.2K Lookup + 1.26K Provisioning |

| Traffic Profile | SLF 24.2K Profile |

| Notification Rate | OFF |

| UDR Response Timeout | 5s |

| Client Timeout | 30s |

| Signaling Requests Latency Recorded on Client | 10ms |

| Provisioning Requests Latency Recorded on Client | 30ms |

Table 3-3 Consolidated Resource Requirement

| Resource | CPU | Memory | Ephemeral Storage | PVC |

|---|---|---|---|---|

| cnDBTier | 90 | 283 GB | 16 GB | 438 |

| SLF | 187 | 119 GB | 32 GB | NA |

| ProvGw | 32 | 30 GB | 10 GB | NA |

| Buffer | 50 | 50 GB | 20 GB | 200 |

| Total | 359 | 482 GB | 78 GB | 638 |

Note:

All values are inclusive of ASM sidecar.
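The Total row of the consolidated resource table is the column-wise sum of the component rows. The sketch below reproduces that sum from the listed values. The dictionary layout and function name are illustrative, and NA entries in the PVC column are counted as 0.

```python
# Rows of the consolidated resource table:
# (CPU, memory in GB, ephemeral storage in GB, PVC as listed).
# PVC is "NA" for SLF and ProvGw; it is treated as 0 here.
ROWS = {
    "cnDBTier": (90, 283, 16, 438),
    "SLF": (187, 119, 32, 0),
    "ProvGw": (32, 30, 10, 0),
    "Buffer": (50, 50, 20, 200),
}


def column_totals(rows):
    """Sum each resource column across all component rows."""
    return tuple(sum(column) for column in zip(*rows.values()))


totals = column_totals(ROWS)
print(totals)  # (359, 482, 78, 638)
```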

Note:

- The same resources and usage are applicable to cnDBTier2.

- For cnDBTier, you must use ocudr_slf_37msub_dbtier and ocudr_udr_10msub_dbtier custom value files for SLF and UDR respectively. For more information, see Cloud Native Core, Unified Data Repository Installation, Upgrade, and Fault Recovery Guide.

Table 3-4 cnDBTier Resources and Usage

| Microservice Name | Container Name | Number of Pods | CPU Allocation Per Pod (cnDBTier1) | Memory Allocation Per Pod (cnDBTier1) | Ephemeral Storage Per Pod | PVC Allocation Per Pod | Total Resources (cnDBTier) | CPU Usage (cnDBTier) | Memory Usage | PVC Usage |
|---|---|---|---|---|---|---|---|---|---|---|
| Management node (ndbmgmd) | mysqlndbcluster | 2 | 2 CPUs | 11.25 GB | 1 GB | 16 GB | 6 CPUs; 31 GB Memory; Ephemeral Storage: 2 GB; PVC Allocation: 32 GB | Minimal resources are used | 40 MB/pod | |
| | istio-proxy | | 1 CPU | 4 GB | | | | | | |
| Data node (ndbmtd) | mysqlndbcluster | 4 | 4 CPUs | 33 GB | 1 GB | 25 GB (Backup: 56 GB) | 28 CPUs; 156 GB Memory; Ephemeral Storage: 4 GB; PVC Allocation: 324 GB | 3 CPU/pod | 20 GB/pod | 9 GB/pod (Backup: 103 MB/pod) |
| | istio-proxy | | 2 CPUs | 4 GB | | | | | | |
| | db-backup-executor-svc | | 100m CPU | 256 Mi | | | | | | |
| APP SQL node (ndbappmysqld) | mysqlndbcluster | 5 | 4 CPUs | 4 GB | 1 GB | 10 GB | 35 CPUs; 40 GB Memory; Ephemeral Storage: 5 GB; PVC Allocation: 50 GB | 4 CPU/pod | 1 GB/pod | 205 MB/pod |
| | istio-proxy | | 3 CPUs | 4 GB | | | | | | |
| SQL node (Used for Replication) (ndbmysqld) | mysqlndbcluster | 2 | 4 CPUs | 16 GB | 1 GB | 16 GB | 13 CPUs; 41 GB Memory; Ephemeral Storage: 2 GB; PVC Allocation: 32 GB | Minimal resources are used | 2.2 GB/pod | |
| | istio-proxy | | 2 CPUs | 4 GB | | | | | | |
| | init-sidecar | | 100m CPU | 256 MB | | | | | | |
| DB Monitor Service (db-monitor-svc) | db-monitor-svc | 1 | 4 CPUs | 4 GB | 1 GB | NA | 5 CPUs; 5 GB Memory; Ephemeral Storage: 1 GB | Minimal resources are used | Minimal resources are used | |
| | istio-proxy | | 1 CPU | 1 GB | | | | | | |
| DB Backup Manager Service (backup-manager-svc) | backup-manager-svc | 1 | 100m CPU | 128 MB | 1 GB | NA | 2 CPUs; 2 GB Memory; Ephemeral Storage: 1 GB | Minimal resources are used | Minimal resources are used | |
| | istio-proxy | | 1 CPU | 1 GB | | | | | | |
| Replication Service (db-replication-svc) | db-replication-svc | 1 | 2 CPUs | 12 GB | 1 GB | NA | 3 CPUs; 13 GB Memory; Ephemeral Storage: 1 GB | Minimal resources are used | Minimal resources are used | |
| | istio-proxy | | 200m CPU | 500 MB | | | | | | |

Table 3-5 SLF Resources and Usage

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage | CPU Utilization (HPA) |
|---|---|---|---|---|---|---|---|---|
| Ingress-gateway-sig | ingressgateway-sig | 8 | 6 CPUs | 4 GB | 80 CPUs; 40 GB Memory; Ephemeral Storage: 8 GB | 3 CPU/pod | 2 GB/pod | 49% |
| | istio-proxy | | 4 CPUs | 1 GB | | 2.1 CPU/pod | 350 MB/pod | |
| Ingress-gateway-prov | ingressgateway-prov | 2 | 4 CPUs | 4 GB | 12 CPUs; 10 GB Memory; Ephemeral Storage: 2 GB | 0.9 CPU/pod | 1.4 GB/pod | 24% |
| | istio-proxy | | 2 CPUs | 1 GB | | 0.65 CPU/pod | 300 MB/pod | |
| Nudr-dr-service | nudr-drservice | 6 | 6 CPUs | 4 GB | 54 CPUs; 30 GB Memory; Ephemeral Storage: 6 GB | 3.1 CPU/pod | 1.7 GB/pod | 55% |
| | istio-proxy | | 3 CPUs | 1 GB | | 2 CPU/pod | 325 MB/pod | |
| Nudr-dr-provservice | nudr-dr-provservice | 2 | 4 CPUs | 4 GB | 12 CPUs; 10 GB Memory; Ephemeral Storage: 2 GB | 0.9 CPU/pod | 1.5 GB/pod | 22% |
| | istio-proxy | | 2 CPUs | 1 GB | | 0.5 CPU/pod | 300 MB/pod | |
| Nudr-nrf-client-nfmanagement | nrf-client-nfmanagement | 2 | 1 CPU | 1 GB | 4 CPUs; 4 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| Nudr-egress-gateway | egressgateway | 2 | 1 CPU | 1 GB | 4 CPUs; 4 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| Nudr-config | nudr-config | 2 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| Nudr-config-server | nudr-config-server | 2 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| alternate-route | alternate-route | 2 | 1 CPU | 1 GB | 4 CPUs; 4 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| app-info | app-info | 2 | 1 CPU | 1 GB | 4 CPUs; 4 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| perf-info | perf-info | 2 | 1 CPU | 1 GB | 4 CPUs; 4 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
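For the rows checked here, the Total Resources values in Table 3-5 equal the number of pods multiplied by the combined per-pod allocation of the application container and its istio-proxy sidecar. This is an observation from the listed numbers, not a documented sizing formula. The helper name below is hypothetical.

```python
def total_allocation(pods, containers):
    """Total CPU and memory (GB) for a microservice.

    containers is a list of (cpu, memory_gb) tuples, one per container
    in a single pod (application container plus sidecars).
    """
    cpu = pods * sum(c for c, _ in containers)
    memory_gb = pods * sum(m for _, m in containers)
    return cpu, memory_gb


# Ingress-gateway-sig: 8 pods, 6 CPUs / 4 GB app container, 4 CPUs / 1 GB sidecar.
ingress_sig = total_allocation(8, [(6, 4), (4, 1)])
# Nudr-dr-service: 6 pods, 6 CPUs / 4 GB app container, 3 CPUs / 1 GB sidecar.
dr_service = total_allocation(6, [(6, 4), (3, 1)])

print(ingress_sig)  # (80, 40)
print(dr_service)   # (54, 30)
```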

Note:

The same resources and usage are used for Site2.

The following table describes Provision Gateway resources and their utilization (Provisioning Latency: 30ms):

Table 3-6 Provision Gateway Resources and their Utilization

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage | CPU Utilization (HPA) |
|---|---|---|---|---|---|---|---|---|
| provgw-ingress-gateway | ingressgateway | 2 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | 0.7 CPU/pod | 1.6 GB/pod | 45% |
| | istio-proxy | | 1 CPU | 1 GB | | 0.5 CPU/pod | 300 MB/pod | |
| provgw-egress-gateway | egressgateway | 2 | 3 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | 0.8 CPU/pod | 1 GB/pod | 51% |
| | istio-proxy | | 1 CPU | 1 GB | | 0.6 CPU/pod | 300 MB/pod | |
| provgw-service | provgw-service | 2 | 3 CPUs | 2 GB | 8 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | 1 CPU/pod | 1.2 GB/pod | 40% |
| | istio-proxy | | 1 CPU | 1 GB | | 0.6 CPU/pod | 300 MB/pod | |
| provgw-config | provgw-config | 2 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. Utilization data is not captured. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |
| provgw-config-server | provgw-config-server | 2 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. Utilization data is not captured. | | |
| | istio-proxy | | 1 CPU | 1 GB | | | | |

Table 3-7 Result and Observation

| Parameter | Values |

|---|---|

| Test Duration | 8hr |

| TPS Achieved | 24.2k SLF Lookup + 1.26k Provisioning |

| Success Rate | 100% |

| Average UDR processing time (Request and Response) | 20ms |

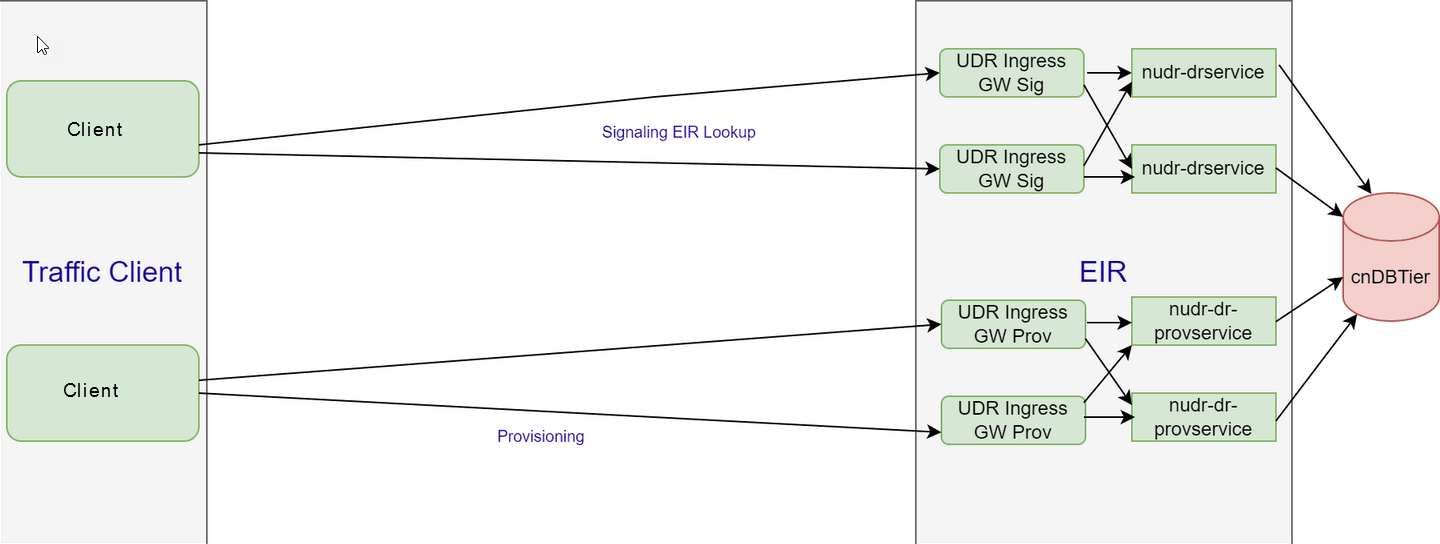

3.2 Test Scenario 2: EIR Deployment Model

Performance Requirement - 300K subscriber DB size with 10K EIR lookup TPS

This test scenario describes performance and capacity improvements of EIR functionality offered by UDR and provides the benchmarking results for various deployment sizes.

- TLS

- OAuth2.0

- Default Response set to EQUIPMENT_UNKNOWN

- Header Validations like XFCC, server header, and user agent header

EIR is benchmarked for compute and storage resources under the following conditions:

- Signaling (EIR Lookup): 10K TPS

- Total Subscribers: 300K

- Profile Size: 130 bytes

- Average HTTP Provisioning Request Packet Size: NA

- Average HTTP Provisioning Response Packet Size: NA

Figure 3-1 EIR Deployment Model

The following table describes the benchmarking parameters and their values:

Table 3-8 Traffic Model Details

| Request Type | Details | TPS |

|---|---|---|

| Lookup 10k | EIR EIC | 10k |

The following table describes the testcase parameters and their values:

Table 3-9 Testcase Parameters

| Input Parameter Details | Configuration Values |

|---|---|

| UDR Version Tag | 22.3.0 |

| Target TPS | 10k Lookup |

| Traffic Profile | 10k EIR EIC |

| Notification Rate | OFF |

| EIR Response Timeout | 5s |

| Client Timeout | 10s |

| Signaling Requests Latency Recorded on Client | NA |

| Provisioning Requests Latency Recorded on Client | NA |

Table 3-10 Consolidated Resource Requirement

| Resource | CPUs | Memory |

|---|---|---|

| EIR | 32 | 30 GB |

| cnDBTier | 177 | 616 GB |

| Total | 600 | 903 GB |

Table 3-11 cnDBTier Resources and their Utilization

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage |
|---|---|---|---|---|---|---|---|
| Management node | mysqlndbcluster | 2 | 4 CPUs | 4 GB | 4 CPUs; 8 GB Memory | 0.013 CPU/pod | 0.031 GB/pod |
| Data node | mysqlndbcluster | 4 | 16 CPUs | 32 GB | 64 CPUs; 128 GB Memory | 1 CPU/pod | 15.5 GB/pod |
| APP SQL node | mysqlndbcluster | 3 | 16 CPUs | 32 GB | 48 CPUs; 96 GB Memory | 4.1 CPU/pod | 0.8 GB/pod |
| SQL node (Used for Replication) | mysqlndbcluster | 2 | 2 CPUs | 4 GB | 4 CPUs; 8 GB Memory | 0.02 CPU/pod | 0.6 GB/pod |
| DB Monitor Service | db-monitor-svc | 1 | 500m CPU | 500 MB | 1 CPU; 1 GB Memory | Minimal resources are used. Utilization is not captured. | |
| DB Backup Manager Service | replication-svc | 1 | 250m CPU | 320 MB | 1 CPU; 1 GB Memory | Minimal resources are used. Utilization is not captured. | |

Table 3-12 EIR Resources and their Utilization (Lookup Latency: 16.9ms) without Aspen Service Mesh (ASM) Enabled

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage |
|---|---|---|---|---|---|---|---|
| Ingress-gateway-sig | Ingress-gateway-sig | 5 | 6 CPUs | 4 GB | 30 CPUs; 20 GB Memory | 3 CPU/pod | 2 GB/pod |
| Ingress-gateway-prov | Ingress-gateway-prov | 2 | 6 CPUs | 4 GB | 12 CPUs; 8 GB Memory | 0.07 CPU/pod | 0.9 GB/pod |
| Nudr-dr-service | nudr-drservice | 6 | 4 CPUs | 4 GB | 24 CPUs; 24 GB Memory | 3 CPU/pod | 1.2 GB/pod |
| Nudr-dr-provservice | nudr-dr-provservice | 2 | 4 CPUs | 4 GB | 8 CPUs; 8 GB Memory | 0.02 CPU/pod | 0.5 GB/pod |
| Nudr-egress-gateway | egressgateway | 1 | 2 CPUs | 2 GB | 2 CPUs; 2 GB Memory | 0.04 CPU/pod | 0.4 GB/pod |
| Nudr-config | nudr-config | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Nudr-config-server | nudr-config-server | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | Minimal resources are used. Utilization is not captured. | |

Note:

The following table provides the results and observations from the performance test, which can be used as a benchmark when scaling up EIR performance:

Table 3-13 Result and Observation

| Parameter | Values |

|---|---|

| Test Duration | 8hr |

| TPS Achieved | 10k |

| Success Rate | 100% |

| Average EIR processing time (Request and Response) | 16.9ms |

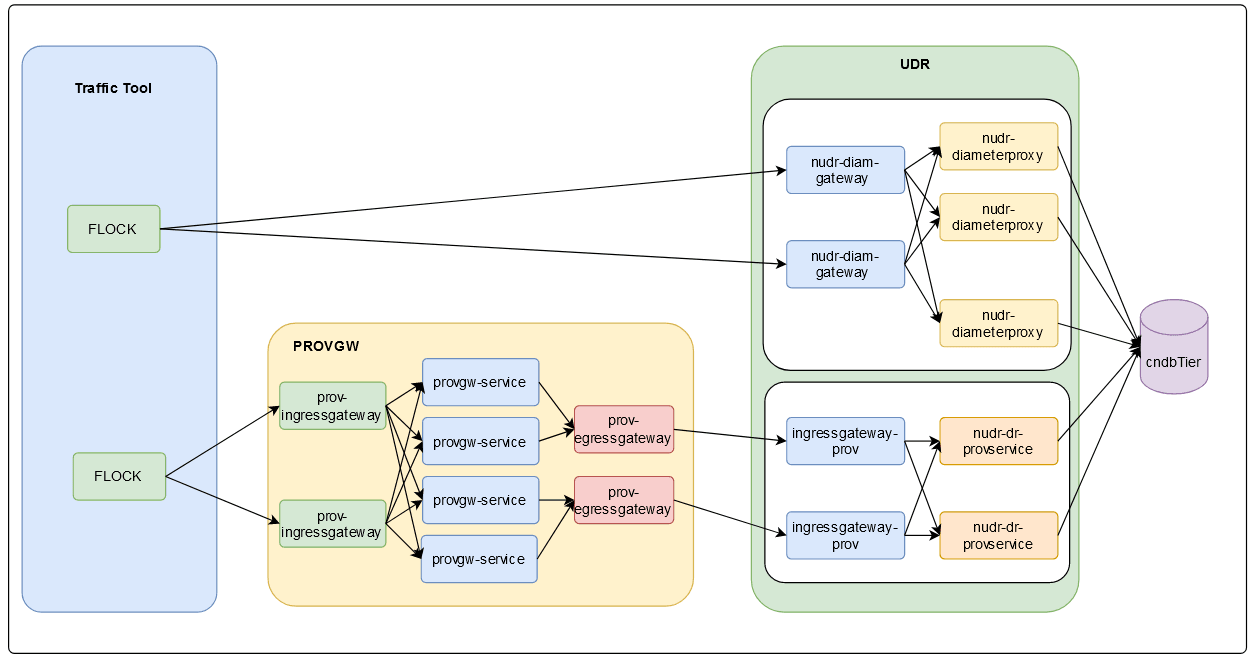

3.3 Test Scenario 3: SOAP and Diameter Deployment Model

2K SOAP provisioning TPS for ProvGw for Medium profile + Diameter 25K with Large profile

- TLS

- OAuth2.0

- Header Validations like XFCC, server header, and user agent header

UDR is benchmarked for compute and storage resources under the following conditions:

- Signaling: 10K TPS

- Provisioning: 2K TPS

- Total Subscribers: 1M - 10M range used for Diameter Sh and 1M range used for SOAP/XML

- Profile Size: 2.2KB

- Average HTTP Provisioning Request Packet Size: NA

- Average HTTP Provisioning Response Packet Size: NA

Figure 3-2 SOAP and Diameter Deployment Model

The following table describes the benchmarking parameters and their values:

Table 3-14 Traffic Model Details

| Request Type | Details | TPS |

|---|---|---|

| Diameter Sh Traffic | Sh Traffic | 25K |

| Provisioning (2K using ProvGw) | SOAP Traffic | 2K |

Table 3-15 SOAP Traffic Model

| Request Type | SOAP Traffic % |

|---|---|

| GET | 33% |

| DELETE | 11% |

| POST | 11% |

| PUT | 45% |

Table 3-16 Diameter Traffic Model

| Request Type | Diameter Traffic % |

|---|---|

| SNR | 25% |

| PUR | 50% |

| UDR | 25% |
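The percentages in the SOAP and Diameter traffic models can be converted to per-request-type rates by applying them to the 2K SOAP and 25K Diameter totals from the traffic model details. The sketch below does this with integer arithmetic. The function and variable names are illustrative.

```python
SOAP_TPS = 2000
DIAMETER_TPS = 25000

# Percent shares as listed in the SOAP and Diameter traffic model tables.
SOAP_MIX = {"GET": 33, "DELETE": 11, "POST": 11, "PUT": 45}
DIAMETER_MIX = {"SNR": 25, "PUR": 50, "UDR": 25}


def split_by_percent(total_tps, mix_percent):
    """Convert integer percent shares into per-request-type TPS."""
    if sum(mix_percent.values()) != 100:
        raise ValueError("percent shares must sum to 100")
    return {name: total_tps * pct // 100 for name, pct in mix_percent.items()}


soap = split_by_percent(SOAP_TPS, SOAP_MIX)
diameter = split_by_percent(DIAMETER_TPS, DIAMETER_MIX)
print(soap)      # {'GET': 660, 'DELETE': 220, 'POST': 220, 'PUT': 900}
print(diameter)  # {'SNR': 6250, 'PUR': 12500, 'UDR': 6250}
```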

The following table describes the testcase parameters and their values:

Table 3-17 Testcase Parameters

| Input Parameter Details | Configuration Values |

|---|---|

| UDR Version Tag | 22.2.0 |

| Target TPS | 25K + 2K |

| Traffic Profile | 25K Sh + 2K SOAP |

| Notification Rate | OFF |

| UDR Response Timeout | 5s |

| Client timeout | 10s |

| Signaling Requests Latency Recorded on Client | NA |

| Provisioning Requests Latency Recorded on Client | NA |

Note:

PNR scenarios are not tested because a server stub is not used.

Table 3-18 cnDBTier Resources and their Utilization

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage |
|---|---|---|---|---|---|---|---|
| Management node | mysqlndbcluster | 3 | 4 CPUs | 10 GB | 12 CPUs; 30 GB Memory | 0.2 CPU/pod | 0.2 GB/pod |
| Data node | mysqlndbcluster | 4 | 15 CPUs | 98 GB | 64 CPUs; 408 GB Memory | 5.8 CPU/pod | 92 GB/pod |
| | db-backup-executor-svc | | 100m CPU | 128 MB | | NA | NA |
| APP SQL node | mysqlndbcluster | 4 | 16 CPUs | 16 GB | 64 CPUs; 64 GB Memory | 9.5 CPU/pod | 8.8 GB/pod |
| SQL node (Used for Replication) | mysqlndbcluster | 4 | 8 CPUs | 16 GB | 49 CPUs; 81 GB Memory | Utilization data is not available for this service. Because of resource constraints, pods are not used. | |
| DB Monitor Service | db-monitor-svc | 1 | 200m CPU | 500 MB | 3 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| DB Backup Manager Service | replication-svc | 1 | 200m CPU | 500 MB | 3 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |

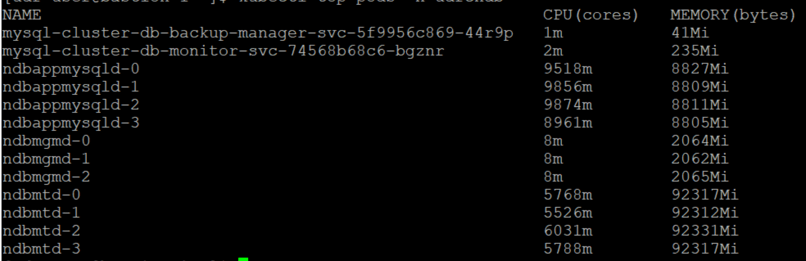

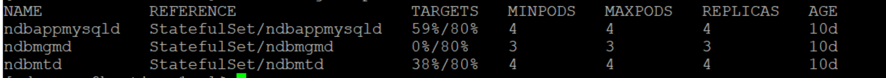

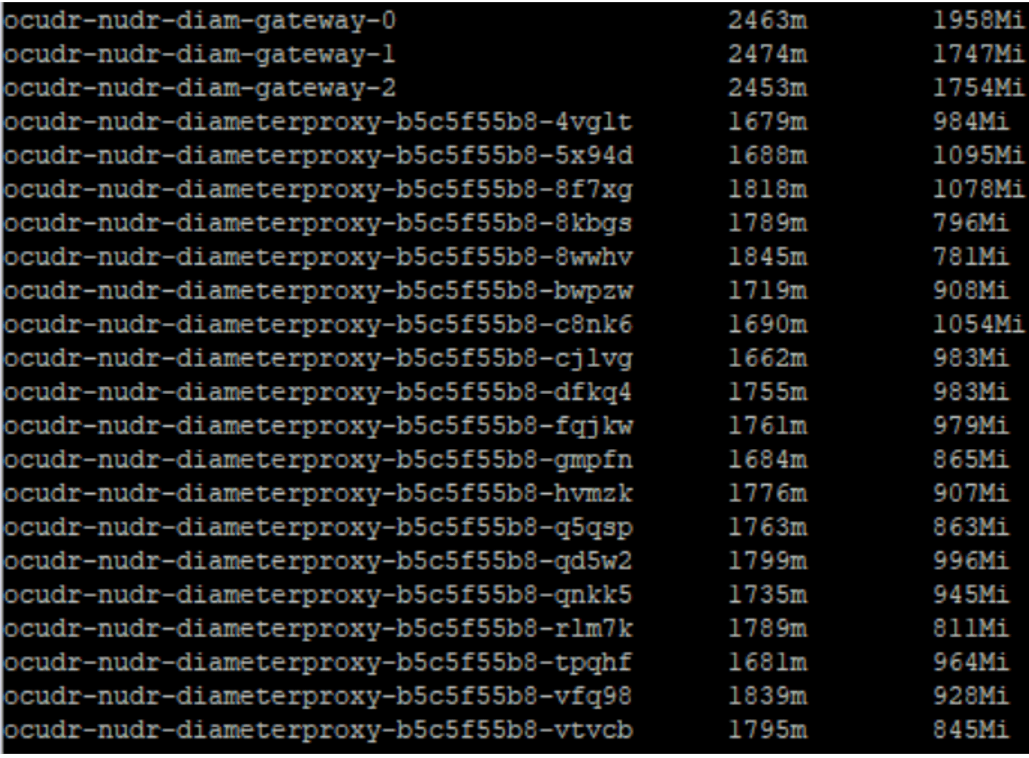

cnDBTier Usage

Results for Kubectl top pods on cndbtier is shown below:

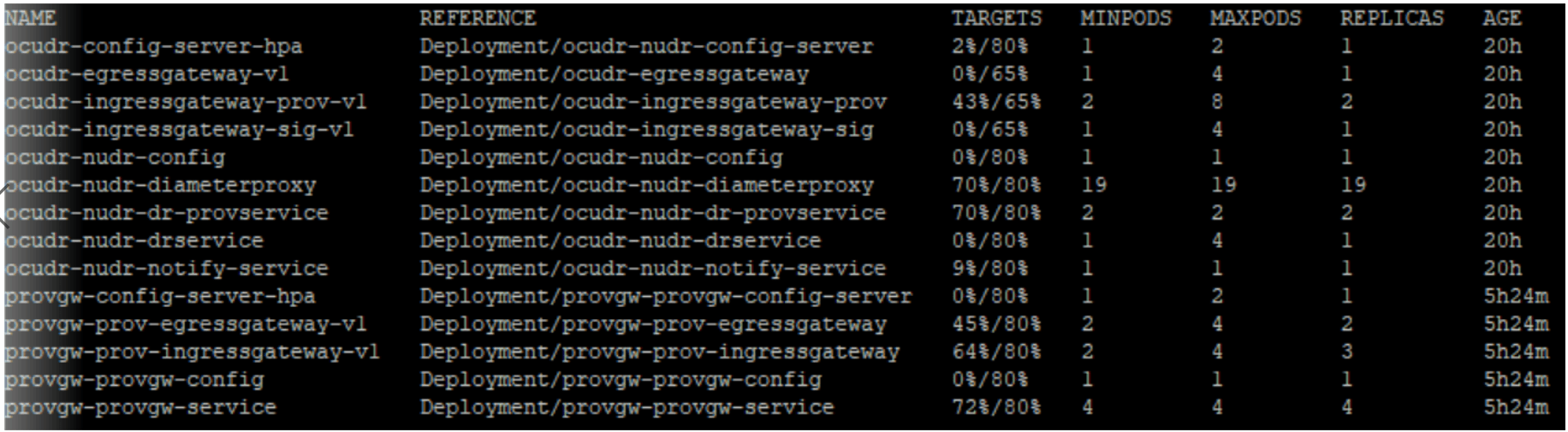

Results for Kubectl get hpa on cndbtier is shown below:

- Data memory usage: 72GB (5.164GB used)

- DB Reads per second: 52k

- DB Writes per second: 24k

Table 3-19 UDR Resources and their Utilization (Request Latency: 40ms)

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage |
|---|---|---|---|---|---|---|---|
| nudr-diameterproxy | nudr-diameterproxy | 19 | 2.5 CPUs | 4 GB | 47.5 CPUs; 76 GB Memory | 1.75 CPU/pod | 1 GB/pod |
| nudr-diam-gateway | nudr-diam-gateway | 3 | 6 CPUs | 4 GB | 18 CPUs; 12 GB Memory | 2.5 CPU/pod | 2 GB/pod |
| Ingress-gateway-sig | ingressgateway-sig | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Ingress-gateway-prov | ingressgateway-prov | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | 1 CPU/pod | 1 GB/pod |
| Nudr-dr-service | nudr-drservice | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Nudr-dr-provservice | nudr-dr-provservice | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | 1.4 CPU/pod | 1 GB/pod |
| Nudr-nrf-client-nfmanagement | nrf-client-nfmanagement | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Nudr-egress-gateway | egressgateway | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Nudr-config | nudr-config | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| Nudr-config-server | nudr-config-server | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| alternate-route | alternate-route | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| app-info | app-info | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| perf-info | perf-info | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |

Resource Utilization

Diameter resource utilization is shown below:

UDR HPA resource utilization is shown below:

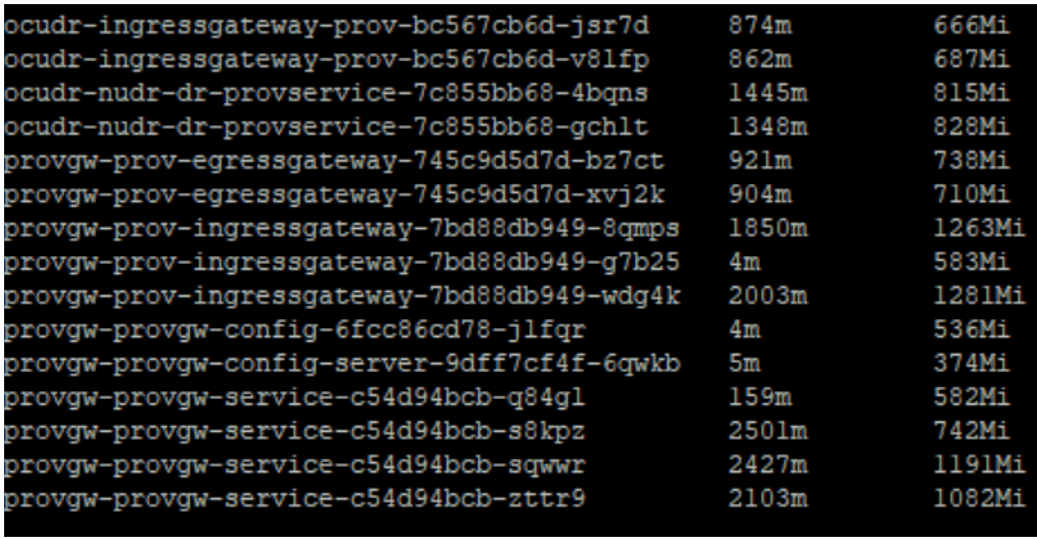

The following table describes provision gateway resources and their utilization:

Table 3-20 Provision Gateway Resources and their Utilization (Provisioning Request Latency: 40ms)

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Total Resources | CPU Usage | Memory Usage |
|---|---|---|---|---|---|---|---|
| provgw-ingress-gateway | ingressgateway | 3 | 2 CPUs | 2 GB | 6 CPUs; 6 GB Memory | 1.3 CPU/pod | 1 GB/pod |
| provgw-egress-gateway | egressgateway | 2 | 2 CPUs | 2 GB | 4 CPUs; 4 GB Memory | 0.9 CPU/pod | 700 Mi/pod |
| provgw-service | provgw-service | 4 | 2.5 CPUs | 3 GB | 10 CPUs; 12 GB Memory | 1.75 CPU/pod | 1 GB/pod |
| provgw-config | provgw-config | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |
| provgw-config-server | provgw-config-server | 2 | 1 CPU | 1 GB | 2 CPUs; 2 GB Memory | Minimal resources are used. Utilization is not captured. | |

Provisioning Gateway resource utilization is shown below:

Table 3-21 cnUDR and ProvGw Resources Calculation

| Resources | cnUDR: Core services used for traffic runs (Nudr-diamgw, Nudr-diamproxy, Nudr-ingressgateway-prov and Nudr-dr-prov) at 70% usage | cnUDR: Other Microservices | cnUDR: Total | ProvGw: Core services used for traffic runs (ProvGw-ingressgateway, ProvGw-provgw service and ProvGw-egressgateway) at 70% usage | ProvGw: Other Microservices | ProvGw: Total |
|---|---|---|---|---|---|---|
| CPU | 73.5 | 24 | 97.5 | 20 | 4 | 24 |
| Memory in GB | 96 | 24 | 120 | 22 | 4 | 26 |
| Disk Volume (Ephemeral storage) in GB | 26 | 16 | 42 | 9 | 4 | 13 |

Table 3-22 cnDbTier Resources Calculation

| Resources | SQL nodes (at actual usage) | SQL nodes (Overhead/Buffer resources at 20%) | Data nodes (at actual usage) | Data nodes (Overhead/Buffer resources at 10%) | MGM nodes and other resources (Default resources) | Total |
|---|---|---|---|---|---|---|
| CPU | 76 | 16 | 23.2 | 5 | 18 | 138.5 |
| Memory in GB | 70.4 | 14 | 368 | 36 | 34 | 522 |
| Disk Volume (Ephemeral storage) in GB | 8 | NA | 960 (ndbdisksize = 240*4) | NA | 20 | 988 |

Table 3-23 Total Resources Calculation

| Resources | Total |

|---|---|

| CPU | 260 |

| Memory in GB | 668 GB |

| Disk Volume (Ephemeral storage) in GB | 104 GB |
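The CPU and memory totals in Table 3-23 match the sum of the cnUDR, ProvGw, and cnDbTier totals from Tables 3-21 and 3-22. A short sketch of that check follows. The data layout is illustrative, and only CPU and memory are covered.

```python
# Per-component totals taken from Tables 3-21 and 3-22.
COMPONENT_TOTALS = {
    "cnUDR": {"CPU": 97.5, "Memory (GB)": 120},
    "ProvGw": {"CPU": 24, "Memory (GB)": 26},
    "cnDbTier": {"CPU": 138.5, "Memory (GB)": 522},
}


def grand_totals(components):
    """Sum each resource across all components."""
    totals = {}
    for resources in components.values():
        for name, value in resources.items():
            totals[name] = totals.get(name, 0) + value
    return totals


totals = grand_totals(COMPONENT_TOTALS)
print(totals)  # {'CPU': 260.0, 'Memory (GB)': 668}
```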

The following table provides the results and observations from the performance test, which can be used as a benchmark when scaling up UDR performance:

Table 3-24 Result and Observation

| Parameter | Values |

|---|---|

| Test Duration | 18hr |

| TPS Achieved | 10K |

| Success Rate | 100% |

| Average UDR processing time (Request and Response) | 40ms |

3.4 Test Scenario 4: Policy Data Traffic Deployment Model

This section provides information about policy data traffic deployment model test scenarios.

3.4.1 Policy Data: 17.2K N36, 300 TPS Notifications and 500 TPS Provisioning

You can perform benchmark tests on UDR for compute and storage resources by considering the following conditions:

- Signaling: 17.2K TPS

- Provisioning: 500 TPS

- Total Subscribers: 10M

The following table describes the benchmarking parameters and their values:

Table 3-25 Traffic Model Details

| Request Type | Details | TPS |
|---|---|---|
| N36 traffic (100%): 17.2K TPS for sm-data and subs-to-notify POST/DELETE | subs-to-notify POST | 3K (17.45%) |
| | sm-data GET | 4.7K (27.3%) |
| | subs-to-notify DELETE | 3K (17.45%) |
| | sm-data PATCH | 6.5K (37.8%) |
| 500 TPS Provisioning: Policy Data PUT Operation | UPDATE | 300 (60%) |
| | GET | 100 (20%) |
| | CREATE | 50 (10%) |
| | DELETE | 50 (10%) |
| Notifications (triggered from 300 PUT provisioning traffic) | POST Operation (Egress) | 300 |
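The percentage shares in the traffic model can be recomputed from the listed per-operation rates, which is a useful consistency check on a traffic profile. The sketch below derives shares rounded to one decimal place. The function name and dictionary keys are illustrative.

```python
def shares(rates):
    """Return each operation's share of the total rate, in percent."""
    total = sum(rates.values())
    return {op: round(100 * rate / total, 1) for op, rate in rates.items()}


# Per-operation rates (TPS) as listed in the traffic model.
N36_RATES = {
    "subs-to-notify POST": 3000,
    "sm-data GET": 4700,
    "subs-to-notify DELETE": 3000,
    "sm-data PATCH": 6500,
}
PROVISIONING_RATES = {"UPDATE": 300, "GET": 100, "CREATE": 50, "DELETE": 50}

n36 = shares(N36_RATES)
prov = shares(PROVISIONING_RATES)
print(sum(N36_RATES.values()), n36)
print(sum(PROVISIONING_RATES.values()), prov)
```

The N36 rates sum to 17,200 TPS and the provisioning rates to 500 TPS, matching the stated totals. With the listed rates, the provisioning GET share works out to 20%.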

Table 3-26 Testcase Parameters

| Input Parameter Details | Configuration Values |

|---|---|

| UDR Version Tag | 24.2.0 |

| Target TPS | 17.2K Signaling |

| Notification Rate | 300 |

| UDR Response Timeout | 2700ms |

| Signaling Requests Latency Recorded on Client | 19ms |

| Provisioning Requests Latency Recorded on Client | 24ms |

Table 3-27 Consolidated Resource Requirement

| Resource | CPU | Memory | Ephemeral Storage | PVC |

|---|---|---|---|---|

| cnDBTier | 92 CPUs | 485 GB | 21 GB | 1404 GB |

| UDR | 215 CPUs | 156 GB | 48 GB | NA |

| Buffer | 50 CPUs | 50 GB | 20 GB | 200 GB |

| Total | 357 CPUs | 691 GB | 89 GB | 1604 GB |

Table 3-28 cnDBTier Resources and their Utilization

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Ephemeral Storage Per Pod | PVC Allocation Per Pod | Total Resources | CPU Usage | Memory Usage | PVC Usage |
|---|---|---|---|---|---|---|---|---|---|---|
| Management node (ndbmgmd) | mysqlndbcluster | 2 | 2 CPUs | 9 GB | 1 GB | 15 GB | 4 CPUs; 18 GB Memory; Ephemeral Storage: 2 GB; PVC Allocation: 30 GB | Minimal resources are used. | 70 MB/pod | |
| Data node (ndbmtd) | mysqlndbcluster | 4 | 4 CPUs | 93 GB | 1 GB | 132 GB (Backup: 164 GB) | 16 CPUs; 372 GB Memory; Ephemeral Storage: 4 GB; PVC Allocation: 1184 GB | 2 CPU/pod | 77.5 GB/pod | 33 GB/pod |
| APP SQL node (ndbappmysqld) | mysqlndbcluster | 10 | 6 CPUs | 4 GB | 1 GB | 2 GB | 60 CPUs; 40 GB Memory; Ephemeral Storage: 10 GB; PVC Allocation: 20 GB | 4.8 CPU/pod | 2 GB/pod | 200 MB/pod |
| SQL node (ndbmysqld, used for replication) | mysqlndbcluster | 2 | 4 CPUs | 24 GB | 1 GB | 13 GB | 8 CPUs; 48 GB Memory; Ephemeral Storage: 2 GB; PVC Allocation: 26 GB | Minimal resources are used. | 2 GB/pod | |
| DB Monitor Service | db-monitor-svc | 1 | 4 CPUs | 4 GB | 1 GB | NA | 4 CPUs; 4 GB Memory; Ephemeral Storage: 1 GB | Minimal resources are used. | Minimal resources are used. | |
| DB Backup Manager Service | backup-manager-svc | 1 | 100m CPU | 128 MB | 1 GB | NA | 1 CPU; 128 MB Memory; Ephemeral Storage: 1 GB | Minimal resources are used. | Minimal resources are used. | |
| Replication service (multi-site cases) | replication-svc | 1 | 2 CPUs | 2 GB | 1 GB | 143 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 1 GB; PVC Allocation: 143 GB | Minimal resources are used. | NA | |

Table 3-29 UDR Resources and their Utilization (Average Latency: 19ms for N36 and 24ms for Provisioning)

| Microservice name | Container name | Number of Pods | CPU Allocation Per Pod | Memory Allocation Per Pod | Ephemeral Storage Per Pod | Total Resources | CPU Usage | Memory Usage | CPU Utilization |
|---|---|---|---|---|---|---|---|---|---|
| Ingress-gateway-sig | ingressgateway-sig | 9 | 6 CPUs | 4 GB | 1 GB | 54 CPUs; 36 GB Memory; Ephemeral Storage: 9 GB | 2.4 CPU/pod | 1.7 GB/pod | 39% |
| Ingress-gateway-prov | ingressgateway-prov | 2 | 4 CPUs | 4 GB | 1 GB | 8 CPUs; 8 GB Memory; Ephemeral Storage: 2 GB | 0.5 CPU/pod | 1.1 GB/pod | 11% |
| Nudr-dr-service | nudr-drservice | 17 | 6 CPUs | 4 GB | 1 GB | 102 CPUs; 68 GB Memory; Ephemeral Storage: 17 GB | 3 CPU/pod | 2 GB/pod | 48% |
| Nudr-dr-provservice | nudr-dr-provservice | 2 | 4 CPUs | 4 GB | 1 GB | 8 CPUs; 8 GB Memory; Ephemeral Storage: 2 GB | 1.4 CPU/pod | 1.9 GB/pod | 34% |
| Nudr-notify-service | nudr-notify-service | 3 | 6 CPUs | 5 GB | 1 GB | 18 CPUs; 15 GB Memory; Ephemeral Storage: 3 GB | 4.2 CPU/pod | 2.2 GB/pod | 70% |
| Nudr-egress-gateway | egressgateway | 2 | 6 CPUs | 4 GB | 1 GB | 12 CPUs; 8 GB Memory; Ephemeral Storage: 2 GB | 0.75 CPU/pod | 1.2 GB/pod | 10% |
| Nudr-config | nudr-config | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| Nudr-config-server | nudr-config-server | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| Alternate-route | alternate-route | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| Nudr-nrf-client-nfmanagement-service | nrf-client-nfmanagement | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| App-info | app-info | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| Perf-info | perf-info | 2 | 1 CPU | 1 GB | 1 GB | 2 CPUs; 2 GB Memory; Ephemeral Storage: 2 GB | Minimal resources are used. | | |
| Nudr-dbcr-auditor-service | nudr-dbcr-auditor-service | 1 | 1 CPU | 1 GB | 1 GB | 1 CPU; 1 GB Memory; Ephemeral Storage: 1 GB | Minimal resources are used. | | |

Table 3-30 Result and Observation

| Parameter | Values |

|---|---|

| Test Duration | 4h30m |

| TPS Achieved | 17.2K Signaling |

| Success rate | 100% |