2 Troubleshooting OCNADD

Note:

kubectl commands might vary based on the platform deployment. Replace kubectl with the Kubernetes environment-specific command line tool to configure Kubernetes resources through the kube-api server. The instructions provided in this document are as per the Oracle Communications Cloud Native Environment (OCCNE) version of the kube-api server.

2.1 Generic Checklist

The following sections provide generic checklists for troubleshooting OCNADD.

Deployment Checklist

- Failure in Certificate or Secret generation.

Certificate generation may fail when the Country, State, or Organization name differs between the CA and the service certificates.

Problem

The certificate generation script reports an error if any of the following fields in the requested service certificate does not match the CA that is used to sign the service certificates:

- countryName

- stateOrProvinceName

- organizationName

Error Code/Error Message

The countryName field is different between CA certificate (US) and the request (IN)

A similar error message is reported for the State or Organization name.

Solution

- Navigate to "ssl_certs/default_values/" and edit the "values" file.

- Update the following values under the "[global]" section: countryName, stateOrProvinceName, and organizationName.

- The values must exactly match your CA configuration. For example, if your CA has the country name "US", the state "NY", and the organization name "ORACLE", set the values under the [global] section as follows:

[global]
countryName=US
stateOrProvinceName=NY
localityName=BLR
organizationName=ORACLE
organizationalUnitName=CGBU
defaultDays=365

- Rerun the script and verify the certificate and secret generation.

- Run the following command to verify that the OCNADD deployments, pods, and services are created, running, and available:

# kubectl -n <namespace> get deployments,pods,svc

Verify the output, and check the following columns:

- READY, STATUS, and RESTARTS

- PORT(S) of service

Note:

It is normal to observe Kafka broker restarts during deployment.

- Verify that the correct image is used and the correct environment variables are set in the deployment.

To check, run the following command:

# kubectl -n <namespace> get deployment <deployment-name> -o yaml

- Check if the microservices can access each other through a REST interface.

To check, run the following command:

# kubectl -n <namespace> exec <pod name> -- curl <uri>

Example:

kubectl exec -it pod/ocnaddconfiguration-6ffc75f956-wnvzx -n ocnadd-system -- curl 'http://ocnaddadminservice:9181/ocnadd-admin-svc/v1/topic'

Note:

These commands are in their simple format and return a response only if the ocnaddconfiguration and ocnadd-admin-svc pods are deployed.

The list of URIs for all the microservices:

- http://ocnaddconfiguration:<port>/ocnadd-configuration/v1/subscription

- http://ocnaddalarm:<port>/ocnadd-alarm/v1/alarm?&startTime=<start-time>&endTime=<end-time>

Use the offset date-time format for <start-time> and <end-time>, for example, 2022-07-12T05:37:26.954477600Z.

- <ip>:<port>/ocnadd-admin-svc/v1/topic/

- <ip>:<port>/ocnadd-admin-svc/v1/describe/topic/<topicName>

- <ip>:<port>/ocnadd-admin-svc/v1/alter

- <ip>:<port>/ocnadd-admin-svc/v1/broker/expand/entry

- <ip>:<port>/ocnadd-admin-svc/v1/broker/health
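Before rerunning the certificate generation script, the countryName, stateOrProvinceName, and organizationName comparison described in the certificate checklist item can be checked offline. The following Python sketch assumes that the "values" file is INI-style (as in the [global] example) and that you supply the CA subject fields yourself, for example by reading them from `openssl x509 -noout -subject -in <ca-cert>`. The function names are hypothetical and are not part of the OCNADD script; only the message text mirrors the error quoted above.

```python
import configparser

# Fields that must match between the CA and the requested service certificate.
FIELDS_TO_MATCH = ("countryName", "stateOrProvinceName", "organizationName")


def load_global_section(values_text: str) -> dict:
    """Parse the [global] section of an INI-style values file (assumed format)."""
    parser = configparser.ConfigParser()
    parser.optionxform = str  # keep camelCase keys such as countryName as written
    parser.read_string(values_text)
    return dict(parser["global"])


def find_mismatches(ca_subject: dict, values_text: str) -> list:
    """Return one message per field whose value differs from the CA subject."""
    requested = load_global_section(values_text)
    messages = []
    for field in FIELDS_TO_MATCH:
        ca_value = ca_subject.get(field)
        req_value = requested.get(field)
        if ca_value != req_value:
            messages.append(
                f"The {field} field is different between CA certificate "
                f"({ca_value}) and the request ({req_value})"
            )
    return messages


if __name__ == "__main__":
    ca = {"countryName": "US", "stateOrProvinceName": "NY", "organizationName": "ORACLE"}
    values = "[global]\ncountryName=IN\nstateOrProvinceName=NY\norganizationName=ORACLE\n"
    for message in find_mismatches(ca, values):
        print(message)
```

An empty result means the three fields already agree with the CA, so the script should not report this particular error.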

Application Checklist

Logs Verification

# kubectl -n <namespace> logs -f <pod name>

Use the -f option to follow the logs, or use grep to obtain a specific pattern in the log output.

# kubectl -n ocnadd-system logs -f $(kubectl -n ocnadd-system get pods -o name |cut -d'/' -f 2|grep nrfaggregation)

The above command displays the logs of the ocnaddnrfaggregation service.

# kubectl logs -n ocnadd-system <pod name> | grep <pattern>

Note:

These commands are in their simple format and display the logs only if at least one nrfaggregation pod is deployed.
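The `get pods -o name | cut -d'/' -f 2 | grep nrfaggregation` pipeline used above can also be reproduced in Python when you need to pick one pod explicitly, for example when several nrfaggregation pods are running: `kubectl logs` expects a single pod, and an extra positional name may be interpreted as a container name. This is a sketch that assumes kubectl is on the PATH; it uses a plain substring match instead of grep's regular expressions, and the helper names are hypothetical.

```python
import subprocess


def pod_names_matching(get_pods_output: str, pattern: str) -> list:
    """Equivalent of `cut -d'/' -f 2 | grep <pattern>` on `kubectl get pods -o name` output."""
    names = []
    for line in get_pods_output.splitlines():
        line = line.strip()
        if not line:
            continue
        # `-o name` prints entries such as "pod/<pod-name>"; keep the part after "/".
        name = line.split("/", 1)[-1]
        if pattern in name:
            names.append(name)
    return names


def list_pods(namespace: str) -> str:
    """Return raw `kubectl get pods -o name` output (requires kubectl and cluster access)."""
    result = subprocess.run(
        ["kubectl", "-n", namespace, "get", "pods", "-o", "name"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

For example, `pod_names_matching(list_pods("ocnadd-system"), "nrfaggregation")` returns a list, from which you can pass exactly one name to `kubectl -n ocnadd-system logs -f <pod name>`.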

Kafka Consumer Rebalancing

The Kafka consumers can rebalance in the following scenarios:

- The number of partitions changes for any of the subscribed topics.

- A subscribed topic is created or deleted.

- An existing member of the consumer group is shut down or fails.

- In the Kafka consumer application:

  - Stream threads inside the consumer application skip sending heartbeats to Kafka.

  - A batch of messages takes longer to process, which increases the time between two polls.

  - Any stream thread in any of the consumer application pods dies because of an error and is replaced with a new Kafka Streams thread.

  - Any stream thread is stuck and not processing any messages.

- A new member is added to the consumer group (for example, new consumer pod spins up).

When rebalancing is triggered, some offsets may not yet have been committed by the consumer threads, because offsets are committed periodically. As a result, the messages corresponding to the uncommitted offsets can be sent again (duplicated) when rebalancing completes and the consumers resume consuming from the partitions. This is normal behavior for a Kafka consumer application. However, frequent rebalancing in the Kafka consumer applications can cause the message counts in the Kafka consumer application and the third-party application to mismatch.
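The duplicate delivery described above can be illustrated with a small simulation. The Python sketch below models a single partition whose consumer commits offsets after every `commit_interval` processed messages and is rebalanced after `rebalance_after` messages; the new owner resumes from the last committed offset. It is a simplified, hypothetical model of periodic commits, not the OCNADD consumer implementation.

```python
def simulate_redelivery(num_messages: int, commit_interval: int, rebalance_after: int) -> list:
    """Return the offsets delivered downstream, in order, for one partition."""
    delivered = []
    committed = 0  # next offset to read, as last committed to Kafka
    position = 0
    # Phase 1: the original consumer processes messages until the rebalance.
    while position < min(rebalance_after, num_messages):
        delivered.append(position)
        position += 1
        if position % commit_interval == 0:
            committed = position  # periodic commit
    # Phase 2: the partition is reassigned and consumption restarts at `committed`.
    position = committed
    while position < num_messages:
        delivered.append(position)
        position += 1
    return delivered


def count_duplicates(delivered: list) -> int:
    """Number of deliveries beyond the first for any offset."""
    return len(delivered) - len(set(delivered))
```

With 10 messages, a commit every 4 messages, and a rebalance after 6 messages, offsets 4 and 5 are delivered twice, so the downstream application sees 12 messages while Kafka holds 10. If the rebalance happens right after a commit (for example, after 8 messages), no duplicates occur.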

Data Feed not accepting updated endpoint

Problem

If a Data feed is created for synthetic packets with an incorrect endpoint, updating the endpoint afterward has no effect.

Solution

Delete and recreate the data feed for synthetic packets with the correct endpoint.

Kafka Performance Impact (due to disk limitation)

Problem

This issue occurs when the source topics (SCP, NRF, and SEPP) and the MAIN topic are created with Replication Factor = 1.

With a low-performance disk, the Egress MPS rate drops or fluctuates, and the following traces appear in the Kafka broker logs:

Shrinking ISR from 1001,1003,1002 to 1001. Leader: (highWatermark: 1326, endOffset: 1327). Out of sync replicas: (brokerId: 1003, endOffset: 1326) (brokerId: 1002, endOffset: 1326). (kafka.cluster.Partition)

ISR updated to 1001,1003 and version updated to 28 (kafka.cluster.Partition)

Solution

The following steps can be performed (or verified) to optimize the Egress MPS rate:

- Try to increase the disk performance in the cluster where OCNADD is deployed.

- If the disk performance cannot be increased, perform the following steps for OCNADD:

  - Navigate to the Kafka Helm chart values file (<helm-chart-path>/ocnadd/charts/ocnaddkafka/values.yaml).

  - Change the following parameters in values.yaml:

    - offsetsTopicReplicationFactor: 1

    - transactionStateLogReplicationFactor: 1

  - Scale down the Kafka and Zookeeper deployment by modifying the following lines in the Helm chart:

    ocnaddkafka:
      enabled: false

  - Perform a Helm upgrade for OCNADD:

    helm upgrade <release name> <chart path> -n <namespace>

  - Delete the PVCs for Kafka and Zookeeper using the following commands:

    - kubectl delete pvc -n <namespace> kafka-volume-kafka-broker-0

    - kubectl delete pvc -n <namespace> kafka-volume-kafka-broker-1

    - kubectl delete pvc -n <namespace> kafka-volume-kafka-broker-2

    - kubectl delete pvc -n <namespace> kafka-broker-security-zookeeper-0

    - kubectl delete pvc -n <namespace> kafka-broker-security-zookeeper-1

    - kubectl delete pvc -n <namespace> kafka-broker-security-zookeeper-2

  - Set the following parameter back to true:

    ocnaddkafka:
      enabled: true

  - Perform a Helm upgrade for OCNADD:

    helm upgrade <release name> <chart path> -n <namespace>

Note:

The following points are to be considered while applying the above procedure:

- If a Kafka broker becomes unavailable, you may experience an impact on the traffic on the Ingress side.

- Verify the Kafka broker logs or describe the Kafka/zookeeper pod which is unavailable and take the necessary action based on the error reported.
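When reviewing the broker logs for the "Shrinking ISR" traces shown in the Problem above, counting how often each broker drops out of the in-sync replica set can help identify a slow disk on a specific node. The Python sketch below assumes the log lines follow the "Shrinking ISR from <ids> to <ids>." form quoted in this section and that the logs have been saved as text (for example, with kubectl logs). The function name is hypothetical.

```python
import re
from collections import Counter

# Matches lines such as: "Shrinking ISR from 1001,1003,1002 to 1001. Leader: ..."
SHRINK_RE = re.compile(r"Shrinking ISR from (?P<old>[\d,]+) to (?P<new>[\d,]+)\.")


def dropped_replicas(log_text: str) -> Counter:
    """Count, per broker ID, how many times the broker was removed from an ISR."""
    counts = Counter()
    for match in SHRINK_RE.finditer(log_text):
        old = match.group("old").split(",")
        new = set(match.group("new").split(","))
        for broker in old:
            if broker not in new:
                counts[int(broker)] += 1
    return counts
```

A broker that dominates the counts is a good first candidate for disk performance checks.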

500 Server Error on GUI while creating/deleting the Data Feed

Problem

Occasionally, due to network issues, the user may observe a "500 Server Error" while creating/deleting the Data Feed.

Solution

- Delete and recreate the feed if it is not created properly.

- Retry the action after logging out of the GUI and logging back in.

- Retry creating/deleting the feed after some time.

Kafka resources reaching more than 90% utilization

Problem

Kafka resources (CPU, memory) reach more than 90% utilization due to a higher MPS rate or a slow disk I/O rate.
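Before increasing the limits, it can help to confirm which resource is actually above the 90% mark. The Python sketch below compares a usage value (for example, from `kubectl top pod`, which requires metrics-server) with the configured limit. It handles only common Kubernetes quantity suffixes (m for millicores; Ki, Mi, Gi, Ti, K, M, G, T for memory), and the function names are hypothetical.

```python
from decimal import Decimal

# Subset of Kubernetes memory quantity suffixes (binary and decimal).
_MEMORY_SUFFIXES = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40,
    "K": 10**3, "M": 10**6, "G": 10**9, "T": 10**12,
}


def parse_cpu(quantity: str) -> Decimal:
    """'5' -> 5 cores, '500m' -> 0.5 cores."""
    if quantity.endswith("m"):
        return Decimal(quantity[:-1]) / 1000
    return Decimal(quantity)


def parse_memory(quantity: str) -> int:
    """'24Gi' -> bytes; only the suffixes listed above are recognized."""
    # Check two-letter suffixes first so that "Gi" is not mistaken for "G".
    for suffix in sorted(_MEMORY_SUFFIXES, key=len, reverse=True):
        if quantity.endswith(suffix):
            return int(Decimal(quantity[: -len(suffix)]) * _MEMORY_SUFFIXES[suffix])
    return int(quantity)


def over_threshold(usage: str, limit: str, kind: str, threshold: str = "0.90") -> bool:
    """True if usage/limit exceeds the threshold; kind is 'cpu' or 'memory'."""
    parse = parse_cpu if kind == "cpu" else parse_memory
    return Decimal(parse(usage)) / Decimal(parse(limit)) > Decimal(threshold)
```

For example, a broker using 4600m of CPU against the cpu: 5 limit is at 92% and is reported as over the threshold, while 20Gi of memory against 24Gi (about 83%) is not.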

Solution

Add additional resources to the following parameters that are reaching high utilization.

File name: ocnadd-custom-values.yaml

Parameter name: ocnaddkafka.ocnadd.kafkaBroker.resource

kafkaBroker:
  name: kafka-broker
  resource:
    limit:
      cpu: 5          # change to the required number of CPUs
      memory: 24Gi    # change to the required memory size

2.2 Helm Install and Upgrade Failure

This section describes the various helm installation or upgrade failure scenarios and the respective troubleshooting procedures:

2.2.1 Incorrect Image Name in ocnadd/values File

Problem

helm install fails if an incorrect image name is provided in the ocnadd/values.yaml file or if the image is missing in the image repository.

Error Code or Error Message

When you run kubectl get pods -n <ocnadd_namespace>, the status of the pods might be ImagePullBackOff or ErrImagePull.

Solution

Perform the following steps to verify and correct the image name:

- Edit the ocnadd/values.yaml file and provide the release-specific image names and tags.

- Run the helm install command.

- Run the kubectl get pods -n <ocnadd_namespace> command to verify that all the pods are in the Running state.

2.2.2 Docker Registry is Configured Incorrectly

Problem

helm install might fail if the docker registry is not configured on all primary and secondary nodes.

Error Code or Error Message

When you run kubectl get pods -n <ocnadd_namespace>, the status of the pods might be ImagePullBackOff or ErrImagePull.

Solution

Configure the docker registry on all primary and secondary nodes. For information about docker registry configuration, see Oracle Communications Cloud Native Environment Installation Guide.

2.2.3 Continuous Restart of Pods

Problem

helm install might fail if the MySQL primary or secondary hosts are not configured properly in ocnadd/values.yaml.

Error Code or Error Message

When you run kubectl get pods -n <ocnadd_namespace>, the restart count of the pods increases continuously, or a Prometheus alert is raised for continuous pod restarts.

Solution

- Verify MySQL connectivity. The MySQL server(s) may not be configured properly. For more information about the MySQL configuration, see Oracle Communications Network Analytics Data Director Installation Guide.

- Describe the pod to check for more details on the error, and troubleshoot further based on the reported error.

- Check the pod log for any errors, and troubleshoot further based on the reported error.
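To confirm that restarts are really continuing rather than left over from an earlier event, two snapshots of `kubectl get pods` taken a few minutes apart can be compared. The Python sketch below assumes the default table layout; newer kubectl versions may print the RESTARTS value as "4 (30s ago)", and the sketch reads only the leading count. The function names and pod names in the test are hypothetical.

```python
def restart_counts(get_pods_output: str) -> dict:
    """Map pod name -> RESTARTS count from `kubectl get pods` table output."""
    lines = [line for line in get_pods_output.splitlines() if line.strip()]
    idx = lines[0].split().index("RESTARTS")
    counts = {}
    for line in lines[1:]:
        cols = line.split()
        # The first token of the RESTARTS column is the count, e.g. "4" in "4 (30s ago)".
        counts[cols[0]] = int(cols[idx])
    return counts


def restarting_pods(before: str, after: str) -> dict:
    """Return pod -> number of new restarts between two snapshots."""
    old, new = restart_counts(before), restart_counts(after)
    return {
        pod: new[pod] - old[pod]
        for pod in new
        if pod in old and new[pod] > old[pod]
    }
```

Pods returned by `restarting_pods` are the ones to describe and whose logs should be checked, as listed above.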

2.2.4 Adapter Pods Do Not Receive Update Notification

Problem

Update notifications from the Configuration Service or GUI do not reach the Adapter pods.

Error Code or Error Message

When an update notification is triggered from the GUI or Configuration Service, the configuration changes do not take effect on the Adapter.

Solution

- Check the Adapter pod logs with the following command:

kubectl logs <adapter app name> -n <namespace>

- If the update notification logs are not present in the Adapter logs, run the following command so that the Adapter re-reads the configuration:

kubectl rollout restart deploy <adapter app name> -n <namespace>

2.2.5 Adapter Deployment Removed during Upgrade or Rollback

Problem

The Adapter (data feed) is deleted during upgrade or rollback.

Error Code or Error Message

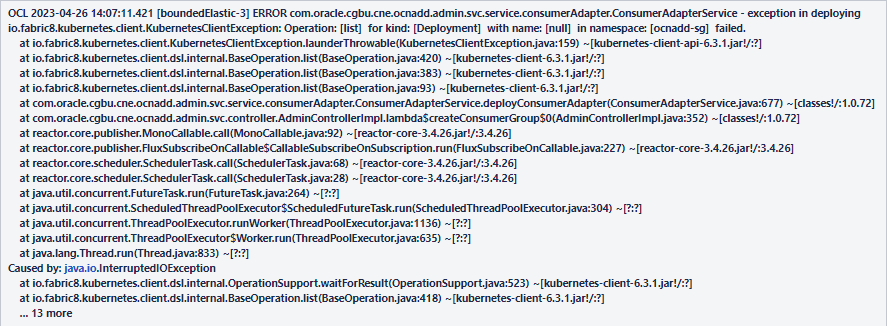

Figure 2-1 Error Message

Solution

- Run the following command to verify the data feeds:

kubectl get po -n <namespace>

- If data feeds are missing, check the admin service log for the above-mentioned error message by running the following command:

kubectl logs <admin svc pod name> -n <namespace>

- If the error message is present, run the following command:

kubectl rollout restart deploy <configuration app name> -n <namespace>

2.2.6 Values.yaml File Parse Failure

This section explains the troubleshooting procedure in case of failure while parsing the ocnadd/values.yaml file.

Problem

Unable to parse the ocnadd/values.yaml file or any other values file while running helm install.

Error Code or Error Message

Error: failed to parse ocnadd/values.yaml: error converting YAML to JSON: yaml

Symptom

If the above-mentioned error is received when parsing the ocnadd/values.yaml file, it indicates that the file could not be parsed for one of the following reasons:

- The tree structure may not have been followed

- There may be a tab space in the file

Solution

Download the latest OCNADD custom templates zip file from MOS. For more information, see Oracle Communications Network Analytics Data Director Installation Guide.
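Because YAML does not allow tab characters in indentation, a quick scan for tabs in leading whitespace can locate the second cause listed above before you replace the file. The Python sketch below reports 1-based line numbers; tabs inside values are not flagged. The function name is hypothetical, and checking the tree structure itself would require a YAML parser, which this sketch does not use.

```python
def find_tab_indentation(text: str) -> list:
    """Return 1-based line numbers whose leading whitespace contains a tab."""
    issues = []
    for number, line in enumerate(text.splitlines(), start=1):
        # Leading whitespace is everything stripped by lstrip(" \t").
        indent = line[: len(line) - len(line.lstrip(" \t"))]
        if "\t" in indent:
            issues.append(number)
    return issues
```

For example, `find_tab_indentation(open("ocnadd/values.yaml").read())` lists the lines to re-indent with spaces.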

2.2.7 Kafka Brokers Continuously Restart after Reinstallation

Problem

When OCNADD is reinstalled in the same namespace without deleting the PVCs that were used for the first installation, the Kafka brokers go into the CrashLoopBackOff status and keep restarting.

Error Code or Error Message

When you run kubectl get pods -n <ocnadd_namespace>, the broker pod's status might be Error or CrashLoopBackOff, and the pod might keep restarting continuously with "no disk space left on the device" errors in the pod logs.

Solution

- Delete the StatefulSet (STS) deployments of the brokers. Run kubectl get sts -n <ocnadd_namespace> to obtain the StatefulSets in the namespace.

- Delete the STS deployments of the services with the disk full issue. For example, run the following command:

kubectl delete sts -n <ocnadd_namespace> kafka-broker1 kafka-broker2

- Delete the PVCs in the namespace that are used by the Kafka brokers. Run kubectl get pvc -n <ocnadd_namespace> to get the PVCs in that namespace. The number of PVCs used is based on the number of brokers deployed. Therefore, select the PVCs that have kafka-broker or zookeeper in their name, and delete them. To delete the PVCs, run:

kubectl delete pvc -n <ocnadd_namespace> <pvcname1> <pvcname2>

For example:

For a three-broker setup in the namespace ocnadd-deploy, delete the following PVCs (the names are separated by spaces):

kubectl delete pvc -n ocnadd-deploy broker1-pvc-kafka-broker1-0 broker2-pvc-kafka-broker2-0 broker3-pvc-kafka-broker3-0 kafka-broker-security-zookeeper-0 kafka-broker-security-zookeeper-1 kafka-broker-security-zookeeper-2

2.2.8 Kafka Brokers Continuously Restart After the Disk is Full

Problem

This issue occurs when the disk space is full on the broker or zookeeper.

Error Code or Error Message

When you run kubectl get pods -n <ocnadd_namespace>, the broker pod's status might be Error or CrashLoopBackOff, and the pod might keep restarting continuously.

Solution

- Delete the StatefulSet (STS) deployments of the brokers:

  - Get the StatefulSets in the namespace with the following command:

    kubectl get sts -n <ocnadd_namespace>

  - Delete the STS deployments of the services with the disk full issue:

    kubectl delete sts -n <ocnadd_namespace> <sts1> <sts2>

    For example, for a three-broker setup:

    kubectl delete sts -n ocnadd-deploy kafka-broker1 kafka-broker2 kafka-broker3 zookeeper

- Delete the PVCs in that namespace that are used by the removed Kafka brokers:

  - Get the PVCs in that namespace:

    kubectl get pvc -n <ocnadd_namespace>

    The number of PVCs used is based on the number of brokers you deploy. Select the PVCs that have kafka-broker or zookeeper in their name, and delete them.

  - To delete the PVCs, run:

    kubectl delete pvc -n <ocnadd_namespace> <pvcname1> <pvcname2>

    For example, for a three-broker setup in the namespace ocnadd-deploy, delete the following PVCs:

    kubectl delete pvc -n ocnadd-deploy broker1-pvc-kafka-broker1-0 broker2-pvc-kafka-broker2-0 broker3-pvc-kafka-broker3-0 kafka-broker-security-zookeeper-0 kafka-broker-security-zookeeper-1 kafka-broker-security-zookeeper-2

- After the STS and PVCs are deleted for the services, edit the broker values file at <chartpath>/charts/ocnaddkafka/values.yaml to increase the PV size of the brokers. To increase the storage, edit the pvcClaimSize field for each broker. If any formatting or indentation issues occur while editing, refer to the files in <chartpath>/charts/ocnaddkafka/default. For the recommended PVC storage, see Oracle Communications Network Analytics Data Director Planning Guide.

- Upgrade the Helm chart after increasing the PV size:

  helm upgrade <release-name> <chartpath> -n <namespace>

- Create the required topics.
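The PVC selection rule above (keep only names that contain kafka-broker or zookeeper) can be applied to `kubectl get pvc -n <ocnadd_namespace> -o name` output before anything is deleted. The Python sketch below only builds the argument list for a single space-separated `kubectl delete pvc` call so that it can be reviewed first; it does not run it. The function names and the unrelated PVC name in the test are hypothetical.

```python
def select_broker_pvcs(get_pvc_output: str) -> list:
    """Pick PVC names containing 'kafka-broker' or 'zookeeper' from `-o name` output."""
    names = []
    for line in get_pvc_output.splitlines():
        line = line.strip()
        if not line:
            continue
        # `-o name` prints "persistentvolumeclaim/<name>"; keep the part after "/".
        name = line.split("/", 1)[-1]
        if "kafka-broker" in name or "zookeeper" in name:
            names.append(name)
    return names


def delete_command(namespace: str, pvc_names: list) -> list:
    """Argument list for one `kubectl delete pvc` call with space-separated names."""
    return ["kubectl", "delete", "pvc", "-n", namespace, *pvc_names]
```

Printing the list with `" ".join(...)` gives a command you can check against the expected broker and Zookeeper PVCs, since PVC deletion removes the stored Kafka data.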

2.2.9 Kafka Brokers Restart on Installation

Problem

Kafka brokers restart during OCNADD installation.

Error Code or Error Message

The output of the command kubectl get pods -n <ocnadd_namespace> shows that the broker pods have restarted.

Solution

The Kafka brokers wait for a maximum of 3 minutes for the Zookeepers to come online before they start. If the Zookeeper cluster does not come online within this interval, the broker starts before Zookeeper and errors out because it cannot access Zookeeper. Zookeeper may start after the 3-minute interval if the node takes more time to pull the images, for example, due to network issues. Therefore, this issue may be observed whenever Zookeeper does not come online within the given time.
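The behavior described above is a bounded wait: poll a dependency until it is ready or a deadline passes. The Python sketch below illustrates that pattern with a 180-second default matching the 3-minute interval. It is not the broker's actual startup logic; `is_ready` is a hypothetical callable you would supply (for example, a TCP check against the Zookeeper service), and the clock and sleep functions are injectable so the behavior can be tested without waiting.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float = 180.0,
    interval_s: float = 5.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll `is_ready` until it returns True or `timeout_s` seconds elapse.

    Returns True if the dependency came up in time. False means the wait
    expired; a broker started at that point would fail to reach Zookeeper.
    """
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

A Zookeeper that becomes ready after 60 seconds is detected in time, while one that needs 200 seconds (for example, because of a slow image pull) exceeds the window, which corresponds to the restart scenario in this section.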

2.2.10 Database Goes into the Deadlock State

Problem

MySQL locks get stuck.

Error Code or Error Message

ERROR 1213 (40001): Deadlock found when trying to get lock; try restarting the transaction.

Symptom

Unable to access MySQL.

Solution

Perform the following steps to remove the deadlock:

- Run the following command on each SQL node. This command retrieves the list of commands to kill each connection:

SELECT CONCAT('KILL ', id, ';') FROM INFORMATION_SCHEMA.PROCESSLIST WHERE `User` = '<DbUsername>' AND `db` = '<DbName>';

Example:

select CONCAT('KILL ', id, ';') FROM INFORMATION_SCHEMA.PROCESSLIST where `User` = 'ocnadduser' AND `db` = 'ocnadddb';
+--------------------------+
| CONCAT('KILL ', id, ';') |
+--------------------------+
| KILL 204491;             |
| KILL 200332;             |
| KILL 202845;             |
+--------------------------+
3 rows in set (0.00 sec)

- Run the kill command on each SQL node.

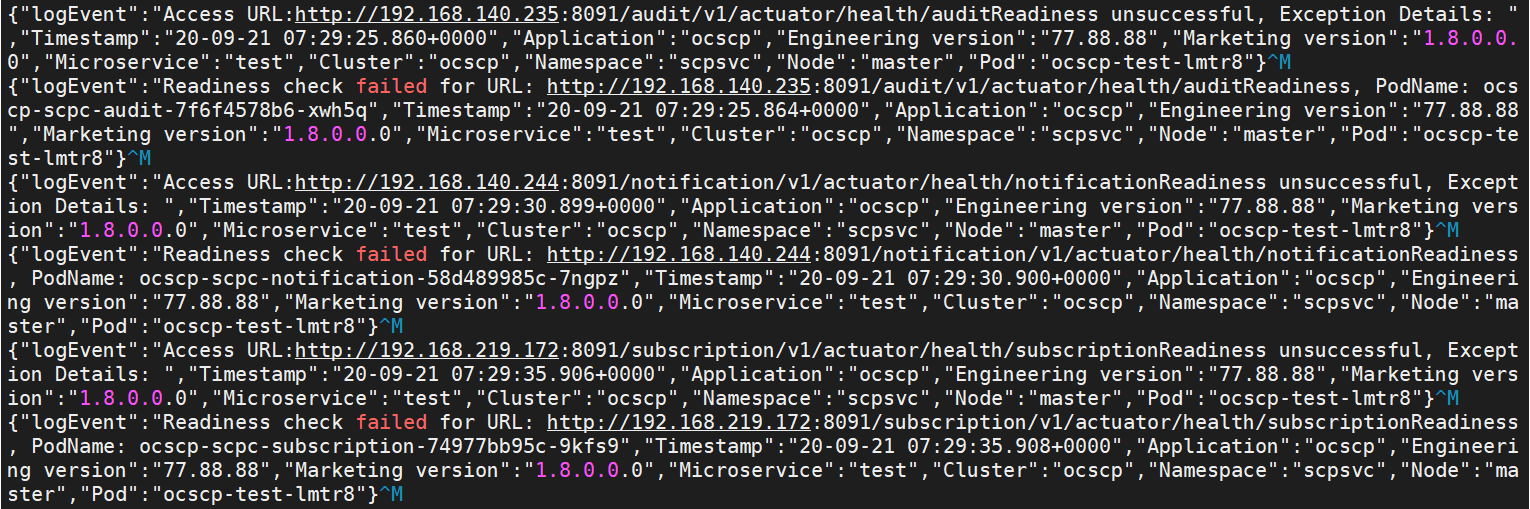
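If the process list is collected by a script rather than in the mysql client, the same KILL statements can be generated from the rows. The Python sketch below assumes each row is a dict with hypothetical keys 'id', 'user', and 'db' that mirror the ID, USER, and DB columns of INFORMATION_SCHEMA.PROCESSLIST. It also lets you exclude the current session (the value of MySQL's CONNECTION_ID()), which the SQL query above would otherwise include if it runs as the same user on the same database.

```python
from typing import Iterable, List, Optional


def kill_statements(
    rows: Iterable[dict], user: str, db: str, exclude_id: Optional[int] = None
) -> List[str]:
    """Build 'KILL <id>;' statements for the sessions of `user` on schema `db`."""
    statements = []
    for row in rows:
        if row["user"] == user and row["db"] == db and row["id"] != exclude_id:
            # int() guards against non-numeric IDs ending up in the SQL text.
            statements.append(f"KILL {int(row['id'])};")
    return statements
```

With the three ocnadduser sessions from the example above, the function returns the same three KILL statements shown in the query output.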

2.2.11 Readiness Probe Failure

The helm install might fail due to a readiness probe URL failure.

If the following error appears, check the readiness probe URL for correctness in the respective microservice Helm chart under the charts folder:

Low Resources

Helm install might fail due to low resources, and the following error may appear:

In this case, check the CPU and memory availability in the Kubernetes cluster.

2.2.12 Synthetic Feed Unable to Forward Packets After Rollback to 23.1.0

Scenario

- Synthetic data feeds are created in release 23.1.0 and verified with end-to-end traffic flow.

- The user performs an upgrade to release 23.2.0. The synthetic data feeds created in release 23.1.0 work as expected.

- The user has to perform a rollback to release 23.1.0 due to an unrecoverable error.

- The thread configuration of the TCP synthetic data feed is modified, resulting in traffic failure to the third-party consumers.

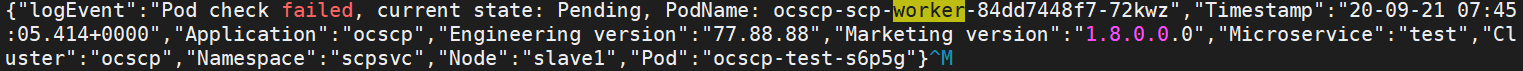

Error Code or Error Message

The following error message is observed in the synthetic adapter logs:

Figure 2-2 Error Message

Solution

- Delete the existing synthetic data feed.

- Create a new synthetic feed with the same name and attributes, with "Connection Failure setting" set to "Resume from point of failure".

- Verify the end-to-end traffic flow between OCNADD and the corresponding third party application.

2.2.13 The Pending Rollback Issue Due to PreRollback Database Rollback Job Failure

Scenario: Rollback to a previous release fails due to a pre-rollback-db job failure, and OCNADD enters the pending-rollback status.

Problem: In this case, OCNADD gets stuck and cannot proceed with any other operation, such as install, upgrade, or rollback.

Error Logs - Example with 23.2.0.0.1:

>helm rollback ocnadd 1 -n <namespace>

Error: job failed: BackoffLimitExceeded

$ helm history ocnadd -n ocnadd-deploy

REVISION UPDATED STATUS CHART APP VERSION DESCRIPTION

1 Fri Jul 14 10:38:31 2023 superseded ocnadd-23.2.0 23.2.0 Install complete

2 Fri Jul 14 11:38:31 2023 superseded ocnadd-23.2.0 23.2.0.0.1 Upgrade complete

3 Fri Jul 14 11:40:17 2023 superseded ocnadd-23.3.0 23.3.0.0.0 Upgrade complete

4 Mon Jul 17 07:22:04 2023 pending-rollback ocnadd-23.2.0 23.2.0.0.1 Rollback to 1

$ helm upgrade ocnadd <helm chart> -n ocnadd-deploy

Error: UPGRADE FAILED: another operation (install/upgrade/rollback) is in progress

Solution:

To resolve the pending-rollback issue, delete the secrets related to the 'pending-rollback' revision. Follow these steps:

- Get the secrets using kubectl:

$ kubectl get secrets -n ocnadd-deploy
NAME                                     TYPE                                  DATA   AGE
adapter-secret                           Opaque                                8      14d
certdbfilesecret                         Opaque                                1      14d
db-secret                                Opaque                                6      47h
default-token-dmrqq                      kubernetes.io/service-account-token   3      14d
egw-secret                               Opaque                                8      14d
jaas-secret                              Opaque                                1      4d19h
kafka-broker-secret                      Opaque                                8      14d
ocnadd-deploy-admin-sa-token-mfh6l       kubernetes.io/service-account-token   3      4d19h
ocnadd-deploy-cache-sa-token-g7rd2       kubernetes.io/service-account-token   3      4d19h
ocnadd-deploy-gitlab-admin-token-qfmmf   kubernetes.io/service-account-token   3      4d19h
ocnadd-deploy-kafka-sa-token-2qqs9       kubernetes.io/service-account-token   3      4d19h
ocnadd-deploy-sa-ocnadd-token-tj2zf      kubernetes.io/service-account-token   3      47h
ocnadd-deploy-zk-sa-token-9x2rb          kubernetes.io/service-account-token   3      4d19h
ocnaddadminservice-secret                Opaque                                8      14d
ocnaddalarm-secret                       Opaque                                8      14d
ocnaddcache-secret                       Opaque                                8      14d
ocnaddconfiguration-secret               Opaque                                8      14d
ocnaddhealthmonitoring-secret            Opaque                                8      14d
ocnaddnrfaggregation-secret              Opaque                                8      14d
ocnaddscpaggregation-secret              Opaque                                8      14d
ocnaddseppaggregation-secret             Opaque                                8      14d
ocnaddthirdpartyconsumer-secret          Opaque                                8      14d
ocnadduirouter-secret                    Opaque                                8      14d
oraclenfproducer-secret                  Opaque                                8      14d
regcred-sim                              kubernetes.io/dockerconfigjson        1      8d
secret                                   Opaque                                7      4d19h
sh.helm.release.v1.ocnadd.v1             helm.sh/release.v1                    1      4d19h
sh.helm.release.v1.ocnadd.v2             helm.sh/release.v1                    1      4d19h
sh.helm.release.v1.ocnadd.v3             helm.sh/release.v1                    1      4d19h
sh.helm.release.v1.ocnadd.v4             helm.sh/release.v1                    1      47h
sh.helm.release.v1.ocnaddsim.v1          helm.sh/release.v1                    1      8d
zookeeper-secret                         Opaque                                8      14d

- Delete Secrets Related to Pending-Rollback Revision: In this case, the secret of revision 4, that is, 'sh.helm.release.v1.ocnadd.v4', must be deleted because the data director entered the 'pending-rollback' status in revision 4:

kubectl delete secrets sh.helm.release.v1.ocnadd.v4 -n ocnadd-deploy

Sample output:

secret "sh.helm.release.v1.ocnadd.v4" deleted

- Check Helm History: Verify that the pending-rollback status has been cleared using the following command:

helm history ocnadd -n ocnadd-deploy

Sample output:

REVISION UPDATED STATUS CHART APP VERSION DESCRIPTION
1 Fri Jul 14 10:38:31 2023 superseded ocnadd-23.2.0 23.2.0 Install complete
2 Fri Jul 14 11:38:31 2023 superseded ocnadd-23.2.0 23.2.0.0.1 Upgrade complete
3 Fri Jul 14 11:40:17 2023 superseded ocnadd-23.3.0-rc.2 23.3.0.0.0 Upgrade complete

- Restore Database Backup: Restore the database backup taken before the upgrade started. Follow the "Create OCNADD Restore Job" section of the "Fault Recovery" chapter in the Oracle Communications Network Analytics Data Director Installation, Upgrade and Fault Recovery Guide.

- Perform Rollback: Perform the rollback again using the following command:

helm rollback <release name> <revision number> -n <namespace>

For example:

helm rollback ocnadd 1 -n ocnadd-deploy --no-hooks

- Verification: Verify that end-to-end traffic is running between the DD and the corresponding third-party application.
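Identifying which release secret to delete follows directly from the Helm history: Helm 3 stores each revision in a Secret named sh.helm.release.v1.<release>.v<revision>, as the listing above shows. The Python sketch below assumes the history is captured with `helm history <release> -n <namespace> -o json`, whose entries carry `revision` and `status` fields. The function name is hypothetical, and the sketch only computes names; review them before running kubectl delete secrets.

```python
import json


def secrets_to_delete(release: str, history_json: str, status: str = "pending-rollback") -> list:
    """Return Helm release Secret names for revisions that are stuck in `status`."""
    names = []
    for entry in json.loads(history_json):
        if entry.get("status") == status:
            names.append(f"sh.helm.release.v1.{release}.v{int(entry['revision'])}")
    return names
```

For the history shown at the start of this section, the function returns only the revision 4 secret, which matches the manual step above.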

2.2.14 Upgrade Fails Due to Unsupported Changes

Problem

The upgrade from the source release to the target release fails due to unsupported changes in the target release. During the upgrade, the database job succeeds, but the upgrade itself fails with an error.

Note:

This is not a generic issue; however, it may occur if users do not sync up the target release charts.

Example:

Scenario: If there is a PVC size mismatch from the source release to the target release.

Error Message in Helm History: Example with 23.2.0.0.1 to 23.3.0

Error: UPGRADE FAILED: cannot patch "zookeeper" with kind StatefulSet: StatefulSet.apps "zookeeper" is invalid: spec: Forbidden: updates to statefulset spec for fields other than 'replicas', 'template', 'updateStrategy', 'persistentVolumeClaimRetentionPolicy' and 'minReadySeconds' are forbidden

Run the following command (with 23.2.0.0.1):

helm history <release-name> -n <namespace>

Sample output with version 23.2.0.0.1:

REVISION UPDATED STATUS CHART APP VERSION DESCRIPTION

1 Tue Aug 1 07:01:29 2023 superseded ocnadd-23.2.0 23.2.0 Install complete

2 Tue Aug 1 07:08:29 2023 deployed ocnadd-23.2.0 23.2.0.0.1 Upgrade complete

3 Tue Aug 1 07:24:08 2023 failed ocnadd-23.3.0 23.3.0.0.0 Upgrade "ocnadd" failed: cannot patch "zookeeper" with kind StatefulSet: StatefulSet.apps "zookeeper" is invalid: spec: Forbidden: updates to statefulset spec for fields other than 'replicas', 'template', 'updateStrategy', 'persistentVolumeClaimRetentionPolicy' and 'minReadySeconds' are forbidden

Solution

Perform the following steps:

- Correct and sync the target release charts as per the source release, and ensure that no new feature of the new release is enabled.

- Perform an upgrade. For more information about the upgrade procedure, see "Upgrading OCNADD" in the Oracle Communications Network Analytics Data Director Installation, Upgrade, and Fault Recovery Guide.
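When syncing the target charts, it helps to know which StatefulSet spec fields differ in a way that cannot be patched. The Python sketch below compares two top-level spec dicts and reports changed fields outside the updatable set named in the error message above. It assumes you obtain the current spec (for example, from `kubectl get sts zookeeper -n <namespace> -o json`) and the target spec (for example, from the rendered target chart) yourself; the function name is hypothetical, and only top-level fields are compared.

```python
from typing import Any, Dict, List

# Spec fields that the error message lists as updatable on an existing StatefulSet.
UPDATABLE_FIELDS = {
    "replicas",
    "template",
    "updateStrategy",
    "persistentVolumeClaimRetentionPolicy",
    "minReadySeconds",
}


def forbidden_changes(current_spec: Dict[str, Any], target_spec: Dict[str, Any]) -> List[str]:
    """Return top-level spec fields that differ and cannot be patched in place."""
    fields = set(current_spec) | set(target_spec)
    return sorted(
        field for field in fields
        if field not in UPDATABLE_FIELDS and current_spec.get(field) != target_spec.get(field)
    )
```

In the PVC size mismatch scenario, the difference shows up under volumeClaimTemplates, so aligning that value with the source release removes the forbidden change.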

2.2.15 Upgrade Failed from Source Release to Target Release Due to Helm Hook Upgrade Database Job

Problem

The upgrade from the patch source release to the target release fails due to the Helm hook upgrade DB job (the upgrade job fails).

Error Code or Error Message: Example with 23.2.0.0.1 to 23.3.0

Error: UPGRADE FAILED: pre-upgrade hooks failed: job failed: BackoffLimitExceeded

Run the following command:

helm history <release-name> -n <namespace>

Sample Output:

REVISION UPDATED STATUS CHART APP VERSION DESCRIPTION

1 Tue Aug 1 07:01:27 2023 superseded ocnadd-23.2.0 23.2.0 Install complete

2 Tue Aug 1 07:08:29 2023 deployed ocnadd-23.2.0 23.2.0.0.1 Upgrade complete

3 Tue Aug 1 07:24:08 2023 failed ocnadd-23.3.0 23.3.0.0.0 Upgrade "ocnadd" failed: pre-upgrade hooks failed: job failed: BackoffLimitExceeded

Solution

Roll back to the patch release, correct the errors, and then run the upgrade again.

- Run helm rollback to the previous release revision:

helm rollback <helm release name> <revision number> -n <namespace>

- Restore the database backup taken before the upgrade. For more information, see the procedure "Create OCNADD Restore Job" in the "Fault Recovery" section in the Oracle Communications Network Analytics Data Director Installation, Upgrade, and Fault Recovery Guide.

- Correct the upgrade issue and run a fresh upgrade. For more information, see "Upgrading OCNADD" in the Oracle Communications Network Analytics Data Director Installation, Upgrade, and Fault Recovery Guide.
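The <revision number> for the rollback is usually the most recent revision that is still in the deployed state, which is revision 2 in the sample history above. The Python sketch below picks it from `helm history <release> -n <namespace> -o json` output, assuming the entries carry `revision` and `status` fields. The function name is hypothetical; confirm the revision against the history before rolling back.

```python
import json
from typing import Optional


def last_deployed_revision(history_json: str) -> Optional[int]:
    """Return the highest revision whose status is 'deployed', or None if there is none."""
    revisions = [
        int(entry["revision"])
        for entry in json.loads(history_json)
        if entry.get("status") == "deployed"
    ]
    return max(revisions) if revisions else None
```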

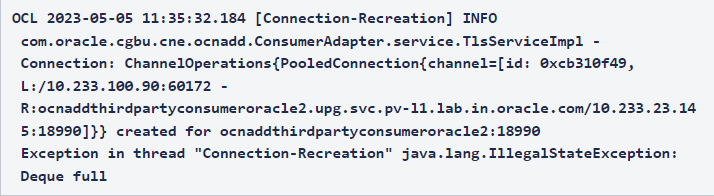

2.2.16 Configuration Service Displays Error Logs

Problem

When the ocnaddconfiguration pod and the adapter or aggregation pods are restarted, notifications reach the adapter or aggregation pods, but error logs are present in the Configuration Service. This is observed if the old pod's IP entry is not deleted from the database.

Note:

Ensure that the configuration pod and the aggregation or adapter pods are not deleted simultaneously.

Error Code or Error Message

Configuration Service Error Log

Error has been observed at the following site(s): *__checkpoint ? Request to POST http://10.233.122.199:9182/ocnadd-consumeradapter/v2/notifications [DefaultWebClient] Original Stack Trace: at org.springframework.web.reactive.function.client.ExchangeFunctions$DefaultExchangeFunction.lambda$wrapException$9(ExchangeFunctions.java:136) ~[spring-webflux-6.0.7.jar!/:6.0.7] at reactor.core.publisher.MonoErrorSupplied.subscribe(MonoErrorSupplied.java:55) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.Mono.subscribe(Mono.java:4485) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onError(FluxOnErrorResume.java:103) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxPeek$PeekSubscriber.onError(FluxPeek.java:222) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxPeek$PeekSubscriber.onError(FluxPeek.java:222) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxPeek$PeekSubscriber.onError(FluxPeek.java:222) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoNext$NextSubscriber.onError(MonoNext.java:93) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoFlatMapMany$FlatMapManyMain.onError(MonoFlatMapMany.java:204) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.SerializedSubscriber.onError(SerializedSubscriber.java:124) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxRetryWhen$RetryWhenMainSubscriber.whenError(FluxRetryWhen.java:225) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxRetryWhen$RetryWhenOtherSubscriber.onError(FluxRetryWhen.java:274) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.onError(FluxContextWrite.java:121) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxConcatMapNoPrefetch$FluxConcatMapNoPrefetchSubscriber.maybeOnError(FluxConcatMapNoPrefetch.java:326) ~[reactor-core-3.5.4.jar!/:3.5.4] at 
reactor.core.publisher.FluxConcatMapNoPrefetch$FluxConcatMapNoPrefetchSubscriber.onNext(FluxConcatMapNoPrefetch.java:211) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.onNext(FluxContextWrite.java:107) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.SinkManyEmitterProcessor.drain(SinkManyEmitterProcessor.java:471) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.SinkManyEmitterProcessor$EmitterInner.drainParent(SinkManyEmitterProcessor.java:615) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxPublish$PubSubInner.request(FluxPublish.java:602) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.request(FluxContextWrite.java:136) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxConcatMapNoPrefetch$FluxConcatMapNoPrefetchSubscriber.request(FluxConcatMapNoPrefetch.java:336) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.request(FluxContextWrite.java:136) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.Operators$DeferredSubscription.request(Operators.java:1717) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxRetryWhen$RetryWhenMainSubscriber.onError(FluxRetryWhen.java:192) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoCreate$DefaultMonoSink.error(MonoCreate.java:201) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.netty.http.client.HttpClientConnect$MonoHttpConnect$ClientTransportSubscriber.onError(HttpClientConnect.java:311) ~[reactor-netty-http-1.1.5.jar!/:1.1.5] at reactor.core.publisher.MonoCreate$DefaultMonoSink.error(MonoCreate.java:201) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.netty.http.client.Http2ConnectionProvider$DisposableAcquire.onError(Http2ConnectionProvider.java:281) ~[reactor-netty-http-1.1.5.jar!/:1.1.5] at reactor.netty.http.client.Http2Pool$Borrower.fail(Http2Pool.java:813) 
~[reactor-netty-http-1.1.5.jar!/:1.1.5] at reactor.netty.http.client.Http2Pool.lambda$drainLoop$2(Http2Pool.java:443) ~[reactor-netty-http-1.1.5.jar!/:1.1.5] at reactor.core.publisher.FluxDoOnEach$DoOnEachSubscriber.onError(FluxDoOnEach.java:186) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxMap$MapConditionalSubscriber.onError(FluxMap.java:265) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoCreate$DefaultMonoSink.error(MonoCreate.java:201) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.netty.resources.DefaultPooledConnectionProvider$DisposableAcquire.onError(DefaultPooledConnectionProvider.java:162) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.netty.internal.shaded.reactor.pool.AbstractPool$Borrower.fail(AbstractPool.java:475) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.netty.internal.shaded.reactor.pool.SimpleDequePool.lambda$drainLoop$9(SimpleDequePool.java:429) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.core.publisher.FluxDoOnEach$DoOnEachSubscriber.onError(FluxDoOnEach.java:186) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoCreate$DefaultMonoSink.error(MonoCreate.java:201) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.netty.resources.DefaultPooledConnectionProvider$PooledConnectionAllocator$PooledConnectionInitializer.onError(DefaultPooledConnectionProvider.java:560) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.core.publisher.MonoFlatMap$FlatMapMain.secondError(MonoFlatMap.java:241) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoFlatMap$FlatMapInner.onError(MonoFlatMap.java:315) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onError(FluxOnErrorResume.java:106) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.Operators.error(Operators.java:198) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.MonoError.subscribe(MonoError.java:53) ~[reactor-core-3.5.4.jar!/:3.5.4] at 
reactor.core.publisher.Mono.subscribe(Mono.java:4485) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onError(FluxOnErrorResume.java:103) ~[reactor-core-3.5.4.jar!/:3.5.4] at reactor.netty.transport.TransportConnector$MonoChannelPromise.tryFailure(TransportConnector.java:587) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.netty.transport.TransportConnector$MonoChannelPromise.setFailure(TransportConnector.java:541) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at reactor.netty.transport.TransportConnector.lambda$doConnect$7(TransportConnector.java:265) ~[reactor-netty-core-1.1.5.jar!/:1.1.5] at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:590) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:583) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:559) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.notifyListeners(DefaultPromise.java:492) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.setValue0(DefaultPromise.java:636) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.setFailure0(DefaultPromise.java:629) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.DefaultPromise.tryFailure(DefaultPromise.java:118) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe$1.run(AbstractNioChannel.java:262) ~[netty-transport-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.PromiseTask.runTask(PromiseTask.java:98) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.ScheduledFutureTask.run(ScheduledFutureTask.java:153) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at 
io.netty.util.concurrent.AbstractEventExecutor.runTask(AbstractEventExecutor.java:174) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:167) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:470) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:569) ~[netty-transport-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:997) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at java.lang.Thread.run(Thread.java:833) ~[?:?] Caused by: io.netty.channel.ConnectTimeoutException: connection timed out: /10.233.122.199:9182 at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe$1.run(AbstractNioChannel.java:261) ~[netty-transport-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.PromiseTask.runTask(PromiseTask.java:98) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.ScheduledFutureTask.run(ScheduledFutureTask.java:153) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.AbstractEventExecutor.runTask(AbstractEventExecutor.java:174) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:167) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:470) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:569) 
~[netty-transport-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:997) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30) ~[netty-common-4.1.90.Final.jar!/:4.1.90.Final] at java.lang.Thread.run(Thread.java:833) ~[?:?]

Solution

Ensure that the following restart sequence is maintained:

- Once the adapter pods are running, restart them; after they are running again, restart the configuration pod.

- If the previous step is unsuccessful, restart the configuration service again.

- If the issue still persists, it is a known issue and can be ignored, because these error logs do not impact the call flow.
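The restart sequence above can be sketched with kubectl as follows. This is a minimal, hypothetical helper, not an OCNADD-provided script. It assumes the adapter and configuration services run as Kubernetes Deployments and uses `kubectl rollout restart` and `kubectl rollout status` to restart each one and wait for it. The namespace and deployment names are placeholders, so list the real ones with `kubectl -n <namespace> get deployments`. As noted at the start of this chapter, replace `kubectl` with your platform's command-line tool if it differs.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the restart sequence: adapters first, then the
# configuration service. Namespace and deployment names are placeholders.
set -euo pipefail

# restart_and_wait NAMESPACE DEPLOYMENT
# Waits until the deployment is running, triggers a rolling restart,
# then blocks until the restarted pods are ready again.
restart_and_wait() {
  local ns="$1" deploy="$2"
  kubectl -n "$ns" rollout status "deployment/${deploy}" --timeout=300s
  kubectl -n "$ns" rollout restart "deployment/${deploy}"
  kubectl -n "$ns" rollout status "deployment/${deploy}" --timeout=300s
}

# restart_sequence NAMESPACE CONFIG_DEPLOYMENT ADAPTER_DEPLOYMENT...
# Restarts every adapter deployment in turn, then the configuration service.
restart_sequence() {
  local ns="$1" config="$2"
  shift 2
  local adapter
  for adapter in "$@"; do
    restart_and_wait "$ns" "$adapter"
  done
  restart_and_wait "$ns" "$config"
}
```

For example, `restart_sequence <namespace> <configuration-deployment> <adapter-deployment-1> <adapter-deployment-2>`. If the error logs continue, running `restart_and_wait <namespace> <configuration-deployment>` again corresponds to the second step.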

2.2.17 Webhook Failure During Installation or Upgrade

Problem

The installation or upgrade fails because of a webhook failure.

Error Code or Error Message

Sample error log:

Error: INSTALLATION FAILED: Internal error occurred: failed calling webhook "prometheusrulemutate.monitoring.coreos.com": failed to call webhook: Post "https://occne-kube-prom-stack-kube-operator.occne-infra.svc:443/admission-prometheusrules/mutate?timeout=10s": context deadline exceeded

Solution

Retry the installation or upgrade using Helm.
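Because the sample error is a timeout ("context deadline exceeded") while calling the webhook, the retry can be wrapped in a small loop. The following is a hypothetical sketch, not an OCNADD-provided script: `retry_cmd` is an illustrative helper name, and the attempt count and delay are assumptions. Pass it the same `helm install` or `helm upgrade` command that originally failed.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: re-run a Helm command a limited number of times,
# pausing between attempts so a transient webhook timeout can clear.
# The attempt count and delay used below are illustrative assumptions.

# retry_cmd MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or MAX_ATTEMPTS is reached.
# Returns 0 on success, otherwise the exit status of the last attempt.
retry_cmd() {
  local max="$1" delay="$2"
  shift 2
  local attempt status=1
  for (( attempt = 1; attempt <= max; attempt++ )); do
    "$@" && return 0
    status=$?
    echo "Attempt ${attempt}/${max} failed with status ${status}." >&2
    if (( attempt < max )); then
      sleep "$delay"
    fi
  done
  return "$status"
}
```

For example, `retry_cmd 3 30 helm upgrade <release-name> <chart> -n <namespace>`. Before retrying a failed installation, check `helm list -n <namespace> --all`, because `helm install` refuses to reuse a release name that is still in use.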