Appendix

Server Status Indicators (LEDs)

These sections describe Oracle Communications Server E6-2L status indicators (LEDs) located on the front and back panels, including indicators located on components and ports.

System-Level Status Indicators

System-level status indicators (LEDs) are located on both the server front panel and the back panel. For the location of the status indicators, see Front Panel Components and Back Panel Components. The following table describes these indicators.

Table A-1 System-Level Status Indicators

| Status Indicator Name | Location | Color | State and Meaning |
|---|---|---|---|
| Locate Button/LED | Front and back panel | White | Indicates the location of the server. OFF – Server is operating normally. FAST BLINK (250 ms) – Use Oracle ILOM to activate this LED indicator to enable you to locate a particular system quickly and easily. Pressing the Locate button toggles the LED indicator fast blink on or off. |
| Fault-Service Required | Front and back panel | Amber | Indicates the fault state of the server. OFF – The server is operating normally. STEADY ON – A fault is present on the server. This LED indicator lights whenever a fault indicator lights for a replaceable component on the server. Note: When this LED indicator is lit, a system console message might appear that includes a recommended service action. |
| OK / System OK | Front and back panel | Green | Indicates the operational state of the chassis. OFF – AC power is not present or the Oracle ILOM boot is not complete. STANDBY BLINK (on for 100 ms, off for 2900 ms) – Standby power is on, but the chassis power is off and the Oracle ILOM SP is running. SLOW BLINK (1000 ms) – Startup sequence was initiated on the host. This pattern begins soon after you power on the server. This status indicates either: power-on self-test (POST) code checkpoint tests are running on the server host system, or the host is transitioning from the powered-on state to the standby state on shutdown. STEADY ON – The server is powered on, and all host POST code checkpoint tests are complete. The server is in one of the following states: the server host is booting the operating system (OS), or the server host is running the OS. |
| SP OK | Front panel | Green | Indicates the state of the service processor. OFF – Service processor (SP) is not running. SLOW BLINK – SP is booting. STEADY ON – SP is fully operational. |
| Top Fan | Front panel | Amber | Indicates that one or more of the internal fan modules failed. OFF – Indicates steady state; no service is required. STEADY ON – Indicates service required. |
| Rear Power Supply Fault | Front panel | Amber | Indicates that one of the server power supplies failed. PS0 and PS1 are located on the server back panel. OFF – Indicates steady state; no service is required. STEADY ON – Indicates service required; service the power supply. |
| Temp-Fault / System Over Temperature Warning | Front panel | Amber | Indicates a warning for an overtemperature condition. OFF – Normal operation; no service is required. STEADY ON – The system is experiencing an overtemperature warning condition. Note: This is a warning indication, not a fatal overtemperature. Failure to correct this might result in the system overheating and shutting down unexpectedly. |
| DO NOT SERVICE | Front panel | White | Indicates that the system is not ready to service. OFF – Normal operation. STEADY ON – The system is not ready for service. Note: The DO NOT SERVICE indicator is application specific. This indicator is only illuminated on demand by the Host application. |

Power Supply Status Indicators

There are two status indicators (LEDs) on each power supply. Power supply status indicators are visible from the back of the server.

Table A-2 Power Supply Status Indicators

| Status Indicator Name | Color | State and Meaning |
|---|---|---|
| AC OK/DC OK | Green | OFF – No AC power is present. SLOW BLINK – Normal operation. Input power is within specification. DC output voltage is not enabled. STEADY ON – Normal operation. Input AC power and DC output voltage are within specification. |
| Fault-Service Required | Amber | OFF – Normal operation. No service action is required. STEADY ON – The power supply (PS) detected a PS fan failure, PS overtemperature, PS overcurrent, or PS overvoltage or undervoltage. |

Fan Module Status Indicators

Each fan module has one status indicator (LED). Fan LEDs are located on the chassis fan tray adjacent to and aligned with the fan modules, and are visible when the server top cover is removed.

Table A-3 Fan Module Status Indicators

| Status Indicator Name | Color | State and Meaning |
|---|---|---|
| Fan Module Fault | Amber | OFF – The fan module is correctly installed and operating within specification. STEADY ON – The fan module is faulty. The front TOP FAN LED and the front and back panel Fault-Service Required LEDs are also lit if the system detects a fan module fault. |

Storage Drive Status Indicators

There are three status indicators (LEDs) on each drive.

Table A-4 Storage Drive Status Indicators

| Status Indicator Name | Color | State and Meaning |
|---|---|---|
| OK/Activity | Green | OFF – Power is off or installed drive is not recognized by the system. STEADY ON – The drive is engaged and is receiving power. RANDOM BLINK – There is disk activity. Status indicator LED blinks on and off to indicate activity. |
| Fault-Service Required | Amber | OFF – The storage drive is operating normally. STEADY ON – The system detected a fault with the storage drive. |
| OK to Remove | Blue | STEADY ON – The storage drive can be removed safely during a hot-plug operation. OFF – The storage drive is not prepared for removal. |

Network Management Port Status Indicators

The server has one 100/1000BASE-T Ethernet management domain interface, labeled NET MGT. There are two status indicators (LEDs) on this port. NET MGT indicators are visible from the back of the server.

Table A-5 Network Management Port Status Indicators

| Status Indicator Name | Location | Color | State and Meaning |

|---|---|---|---|

| Activity | Top left | Green | ON – Link up. OFF – No link or down link. BLINKING – Packet activity. |

| Link speed | Top right | Green | ON – 1000BASE-T link. OFF – 100BASE-T link. |

Ethernet Port Status Indicators

The server has one Ethernet port labeled 1GbE (NET 0). Two NET 0 status indicators (LEDs) are visible from the back of the server.

See 100/1000BASE-T Gigabit Ethernet port.

Table A-6 Ethernet Port Status Indicators

| Status Indicator Name | Location | Color | State and Meaning |

|---|---|---|---|

| Activity | Bottom left | Green | ON – Link up. OFF – No activity. BLINKING – Packet activity. |

| Link speed | Bottom right | Bi-colored: Amber/Green | OFF – 100BASE-T link (if link up). Green ON – 1000BASE-T link. |

Motherboard Status Indicators

The motherboard contains the following status indicators (LEDs).

Table A-7 Motherboard Status Indicators

| Status Indicator | Description |

|---|---|

| DIMM Fault Status Indicators | Each of the 24 DIMM slots on the motherboard has an amber fault status indicator (LED) associated with it. If Oracle ILOM determines that a DIMM is faulty, pressing the Fault Remind button on the motherboard I/O card signals the service processor to light the fault LED associated with the failed DIMM. See Replace DIMMs. |

| Processor Fault Status Indicators | The motherboard includes a fault status indicator (LED) adjacent to each of the two processor sockets. These LEDs indicate when a processor fails. Pressing the Fault Remind button on the motherboard I/O card signals the service processor to light the fault status indicators associated with the failed processors. See Replace Processors. |

| Fault Remind Status Indicator | This status indicator (LED) is located next to the Fault Remind button and is powered from the super capacitor that powers the fault LEDs on the motherboard. This LED lights to indicate that the fault remind circuitry is working properly in cases where no components failed and, as a result, none of the component fault LEDs illuminate. See Use the Server Fault Remind Button. |

| STBY PWRGD Status Indicator | This green status indicator (LED) is labeled STBY PWRGD and is located on the motherboard near the back of the server. STBY PWRGD LED lights to inform a service technician that the motherboard is receiving Standby power from at least one of the power supplies. This LED helps prevent service actions on the server internal components while the AC power cords are installed and power is being supplied to the server. |

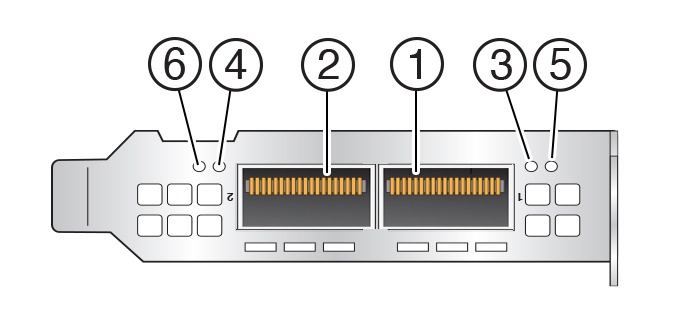

Mellanox ConnectX-6 Dx SmartNIC Status Indicators

The Mellanox ConnectX-6 Dx Dual Port RoCE SmartNIC status indicators are defined as follows:

| Callout | Description |

|---|---|

| 1 | Port 1 |

| 2 | Port 2 |

| 3 | Port 1 status indicator: OFF = Link has not been established; SOLID GREEN = Valid link has been established; BLINKING GREEN = Link activity; BLINKING AMBER = 1 Hz blink indicates a beacon command is being run to locate the adapter card; 4 Hz blink indicates a problem with the physical link |

| 4 | Port 2 status indicator: OFF = Link has not been established; SOLID GREEN = Valid link has been established; BLINKING GREEN = Link activity; BLINKING AMBER = 1 Hz blink indicates a beacon command is being run to locate the adapter card; 4 Hz blink indicates a problem with the physical link |

| 5 & 6 | Not used. |

Boot Process and Normal Operating State Indicators

A normal server boot process involves two indicators, the service processor SP OK LED indicator and the System OK LED indicator.

When AC power is connected to the server, the server boots into standby power mode:

- The SP OK LED blinks slowly (0.5 seconds on, 0.5 seconds off) while the SP is starting, and the System OK LED remains off until the SP is ready.

- After a few minutes, the main System OK LED slowly flashes the standby blink pattern (0.1 seconds on, 2.9 seconds off), indicating that the SP (and Oracle ILOM) is ready for use. In Standby power mode, the server is not initialized or fully powered on at this point.

When powering on the server (either by the On/Standby button or Oracle ILOM), the server boots to full power mode:

- The System OK LED blinks slowly (0.5 seconds on, 0.5 seconds off), and the SP OK LED remains lit (no blinking).

- When the server successfully boots, the System OK LED remains lit. When the System OK LED and the SP OK LED indicators remain lit, the server is in Main power mode.

The green System OK LED indicator and the green SP OK indicator remain lit (no blinking) when the server is in a normal operating state.
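The System OK blink patterns described in this section can be told apart by their on and off times alone. The following Python sketch is illustrative only and is not part of Oracle ILOM or any Oracle tool. The names `SystemOkState` and `classify_system_ok` and the 50 ms tolerance are assumptions made for the example; the timings are the ones stated above.

```python
from enum import Enum


class SystemOkState(Enum):
    """States of the System OK LED as described in this appendix."""
    OFF = "AC power not present or Oracle ILOM boot not complete"
    STANDBY_BLINK = "standby power on, SP running, host powered off"
    SLOW_BLINK = "host startup (POST) or shutdown in progress"
    STEADY_ON = "host powered on, POST complete"


def classify_system_ok(on_ms: float, off_ms: float,
                       tolerance_ms: float = 50.0) -> SystemOkState:
    """Map one observed on/off cycle of the System OK LED to a state.

    on_ms and off_ms are hypothetical measurements of how long the LED
    stayed lit and dark in one cycle. Pass off_ms=0 for a steadily lit
    LED and on_ms=0 for a dark one. tolerance_ms is an assumed margin.
    """
    def near(value: float, target: float) -> bool:
        return abs(value - target) <= tolerance_ms

    if on_ms == 0:
        return SystemOkState.OFF
    if off_ms == 0:
        return SystemOkState.STEADY_ON
    if near(on_ms, 100) and near(off_ms, 2900):   # 0.1 s on, 2.9 s off
        return SystemOkState.STANDBY_BLINK
    if near(on_ms, 500) and near(off_ms, 500):    # 0.5 s on, 0.5 s off
        return SystemOkState.SLOW_BLINK
    raise ValueError(f"unrecognized pattern: {on_ms} ms on, {off_ms} ms off")
```

For example, `classify_system_ok(100, 2900)` returns `SystemOkState.STANDBY_BLINK`, which corresponds to Standby power mode with the SP ready.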

System Specifications

These sections describe specifications for Oracle Communications Server E6-2L.

Server Physical Specifications

The following table lists physical specifications for Oracle Communications Server E6-2L.

Table B-1 Physical Specifications

| Dimension | Server Specification | Measurements |

|---|---|---|

| Height | 2-rack unit (2U) nominal | 86.9 mm (3.42 inches) |

| Width | Server chassis | 445 mm (17.52 inches) |

| Depth | Maximum overall | 756 mm (29.76 inches) |

| Weight | Fully populated server | 34 kg (76 lbs) |

Dimensions do not include PSU handles.

Electrical Requirements

The server uses high-line AC power. The server can operate effectively over a range of voltages and frequencies.

The following table contains the power supply specifications for Oracle Communications Server E6-2L.

The power dissipation numbers listed in the following table are the maximum rated power numbers for the power supply used in the server. The numbers are not a rating of the actual power consumption of the server. For up-to-date information about server power consumption, go to Oracle Power Calculator.

Table B-2 Electrical Requirements

| Parameter | Specification |

|---|---|

| Electrical ratings | 200-240V~, 10A, 50/60Hz (x2) |

| Voltage (nominal) | 200-240 VAC |

| Input current (maximum) | 10.0A at 200-240 VAC |

| Frequency (nominal) | 50/60 Hz (47-63 Hz range) |

| Maximum power consumption | 1400W at AC 200V-240V |

| Maximum heat output | 11,600 BTU/Hr |

Table B-3 Power Supply Specifications

| Parameter | Power Supply Specification |

|---|---|

| Oracle Communications Server E6-2L | Two hot-swappable and highly redundant 1400W A271 or A271A power supplies (PS0 and PS1). Note: The A271 and A271A power supplies are functionally identical. Either is supported in the system. |

| A271 Power Supply | A271 - Delta Electronics, Model AWF2DC1400W, Input rated 200 - 240 V~, 10A Max, 50/60 Hz. Output: V1 rated 12.1 Vdc, 116A Max., Vsb rated 12.1 Vdc, 3A Max, 1400W maximum |

| A271A Power Supply | A271A - Flextronics, Model SUN-S-1400ADU00, Input rated 200 - 240 V~, 10A Max, 50/60 Hz. Output: V1 rated 12.1 Vdc, 116A Max., Vsb rated 12.1 Vdc, 3A Max, 1400W maximum |

To protect your server from disturbances to the input power source, use a dedicated power distribution system, power-conditioning equipment, and lightning arresters or power cables for protection from electrical storms.

See the following additional power specifications.

Facility Power Guidelines

Electrical work and installations must comply with applicable local, state, or national electrical codes. To determine the type of power that is supplied to the building, contact your facilities manager or qualified electrician.

To prevent failures:

- Design the input power sources to ensure adequate power is provided to the power distribution units (PDUs).

- Use dedicated AC breaker panels for all power circuits that supply power to the PDU.

- When planning for power distribution requirements, balance the power load between available AC supply branch circuits.

- In the United States and Canada, ensure that the current load of the overall system AC input does not exceed 80 percent of the branch circuit AC current rating.
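The 80 percent guideline in the last item reduces to a simple check. The following Python sketch is a minimal illustration, not an electrical design tool. The function names are assumptions made for the example, and the 15 A and 12 A branch ratings used in the note below are hypothetical values. The 10 A load is the maximum input current listed in Table B-2.

```python
def min_branch_rating_amps(load_amps: float, max_fraction: float = 0.8) -> float:
    """Smallest branch circuit rating that keeps the load at or below
    max_fraction of the rating (0.8 for the 80 percent guideline)."""
    if load_amps < 0 or not 0 < max_fraction <= 1:
        raise ValueError("load must be >= 0 and fraction in (0, 1]")
    return load_amps / max_fraction


def load_within_limit(load_amps: float, branch_rating_amps: float,
                      max_fraction: float = 0.8) -> bool:
    """True if the load does not exceed max_fraction of the branch rating."""
    return load_amps <= branch_rating_amps * max_fraction
```

A 10 A load needs a branch circuit rated for at least 12.5 A under this rule, so `load_within_limit(10, 15)` is true and `load_within_limit(10, 12)` is false. Local electrical codes take precedence over this arithmetic.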

PDU power cords for Oracle racks are 4 meters (13.12 feet) long, and 1 to 1.5 meters (3.3 to 4.9 feet) of the cord might be routed in the rack cabinet. The installation site AC power receptacle must be within 2 meters (6.6 feet) of the rack.

Grounding Guidelines

Use the following guidelines for grounding the server:

- The rack must use grounding-type power cords. For example, Oracle Communications Server E6-2L uses three-wire, grounding-type power cords.

- Always connect the grounding-type power cords to grounded power outlets.

- Because different grounding methods are used, depending on location, verify the grounding type. For the correct grounding method, refer to local electrical codes.

- Ensure that a facility administrator or qualified electrical engineer verifies the grounding method for the building and performs the grounding work.

Circuit Breaker and UPS Guidelines

To prevent failures:

- Ensure that the design of your power system provides adequate power to the server.

- Use dedicated AC breaker panels for all power circuits that supply power to the server.

- Ensure that electrical work and installations comply with applicable local, state, or national electrical codes.

- Ensure that the electrical circuits are grounded to Earth.

- Provide a stable power source, such as an uninterruptible power supply (UPS), to reduce the possibility of component failures. If computer equipment is subjected to repeated power interruptions and fluctuations, then it is susceptible to a higher rate of component failure.

Environmental Requirements

The following table describes environmental specifications for Oracle Communications Server E6-2L.

Table B-4 Environmental Requirements

| Specification | Operating | Nonoperating |

|---|---|---|

| Ambient temperature (does not apply to removable media) | Maximum range: 5°C to 35°C (41°F to 95°F) up to 900 meters (2,953 feet). Optimal: 21°C to 23°C (69.8°F to 73.4°F). Maximum ambient operating temperature is derated by 1 degree C per 300 meters of elevation above 900 meters, to a maximum altitude of 3,000 meters. | –40°C to 68°C (–40°F to 154°F) |

| Relative humidity | 10% to 90% noncondensing, short term –5°C to 55°C (23°F to 113°F). 5% to 90% noncondensing, with a maximum of 0.024 kg of water per kg of dry air (0.053 lbs water/2.205 lbs dry air) | Maximum wet bulb of 93% noncondensing 35°C (95°F) |

| Altitude | Up to 3,000 meters (9,840 feet). In China markets, regulations might limit installations to a maximum altitude of 2,000 meters (6,562 feet). | Maximum 12,000 meters (39,370 feet) |

| Acoustic noise | Acoustic noise emission declaration based on the measured Sound Power LWAd (1 Bel = 10 dB), listed by fan speed as % Pulse Width Modulation (PWM). 4-drive Sound Power (Bels): 50% PWM: 8.4, 60% PWM: 8.8, 70% PWM: 9.1, 80% PWM: 9.6, 90% PWM: 9.8, 100% PWM: 10.1. 12-drive Sound Power (Bels): 40% PWM: 7.9, 50% PWM: 8.58, 60% PWM: 8.96, 70% PWM: 9.3, 80% PWM: 9.5 | Not applicable |

| Vibration | 0.15 G (z-axis), 0.10 G (x-, y-axes), 5-500 Hz swept sine. IEC 60068-2-6 Test FC | 0.5 G (z-axis), 0.25 G (x-, y-axes), 5-500 Hz swept sine. IEC 60068-2-6 Test FC |

| Shock | 3.5 G, 11 ms half-sine. IEC 60068-2-27 Test Ea | Roll-off: 1.25-inch roll-off free fall, front to back rolling directions. Threshold: 13-mm threshold height at 0.65 m/s impact velocity. ETE-1010-02 Rev A |
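The altitude derating in the ambient temperature row can be worked through as follows. This Python sketch is an illustration under stated assumptions: it treats the derating as linear and continuous (1 degree C per 300 meters above 900 meters), which the table does not state explicitly, and the function name `max_operating_temp_c` is hypothetical.

```python
def max_operating_temp_c(altitude_m: float) -> float:
    """Maximum ambient operating temperature in degrees C at an altitude.

    Uses the Table B-4 figures: 35 C up to 900 m, derated by 1 C per
    300 m above 900 m, valid to a maximum altitude of 3,000 m.
    Linear interpolation between 300 m steps is an assumption.
    """
    if altitude_m > 3000:
        raise ValueError("above the 3,000 m maximum operating altitude")
    excess_m = max(0.0, altitude_m - 900.0)
    return 35.0 - excess_m / 300.0
```

Under these assumptions, the limit is 35°C at 900 meters, 33°C at 1,500 meters, and 28°C at the 3,000 meter maximum.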

Humidity Guidelines

The server ambient relative humidity range of 45 to 50 percent is acceptable for safe data processing operations and is the recommended optimal range. An ambient relative humidity optimal range of 45 to 50 percent can:

- Help protect computer systems from corrosion problems associated with high humidity levels.

- Provide the greatest operating time buffer in the event of air conditioner control failure.

- Help to avoid failures or temporary malfunctions caused by intermittent interference from static discharges that might occur when relative humidity is too low. Electrostatic discharge (ESD) is easily generated and not easily dissipated in areas where the relative humidity level is below 35 percent. ESD risk becomes critical when relative humidity levels drop below 30 percent.

Temperature Guidelines

An ambient temperature range of 21° to 23° Celsius (70° to 74° Fahrenheit) is optimal for server reliability and operator comfort. Most computer equipment can operate in a wide temperature range, but approximately 22° Celsius (72° Fahrenheit) is recommended because it is easier to maintain safe humidity levels. Operating in this temperature range provides a safety buffer in the event that the air conditioning system is not running for a period of time.

Ventilation and Cooling Requirements

Always provide adequate space in front of and behind the rack to allow for proper ventilation of rackmounted servers. Do not obstruct the front or back of the rack with equipment or objects that might prevent air from flowing through the rack. Rackmountable servers and equipment, including Oracle Communications Server E6-2L, draw cool air in through the front of the rack and release warm air out the back of the rack. There is no airflow requirement for the left and right sides due to front-to-back cooling.

If the rack is not completely filled with components, then cover the empty sections with filler panels. Gaps between components can adversely affect airflow and cooling in the rack.

The servers function while installed in a natural convection airflow. Follow these environmental specifications for optimal ventilation:

- Ensure that air intake is in the front of the system, and the air outlet is in the back. Take care to prevent recirculation of exhaust air in a rack or cabinet.

- Allow a minimum clearance of 123.2 cm (48.5 inches) in the front of the system, and 91.4 cm (36 inches) in the back.

- Ensure that airflow is unobstructed through the chassis. Oracle Communications Server E6-2L uses internal fans that can achieve between 130 CFM and 160 CFM (depending on configuration), within the specified range of operating conditions.

- Ensure that ventilation openings, such as cabinet doors for both the inlet and exhaust of the server, are unobstructed. For example, Oracle Rack Cabinet 1242 is optimized for cooling. Both the front and back doors have 80 percent perforations that provide a high level of airflow through the rack.

- Ensure that the clearance between the server and the cabinet doors is a minimum of 2.5 cm (1 inch) at the front of the server and 8 cm (3.15 inches) at the back of the server when mounted. To improve cooling performance, these clearance values are based on the inlet and exhaust impedance (available open area) and assume a uniform distribution of the open area across the inlet and exhaust areas. The combination of inlet and exhaust restrictions, such as cabinet doors and the distance of the server from the doors, can affect the cooling performance of the server. You must evaluate these restrictions. Server placement is particularly important for high-temperature environments.

- Manage cables to minimize interference with the server exhaust vent.

Agency Compliance

Oracle Communications Server E6-2L complies with the following specifications.

Table B-5 Agency Compliance

| Category | Relevant Standards |

|---|---|

| Regulations | Product Safety: UL/CSA 60950-1, EN 60950-1, IEC 60950-1 CB Scheme with all country differences. Product Safety: UL/CSA 62368-1, EN 62368-1, IEC 62368-1 CB Scheme with all country differences. EMC Emissions: FCC 47 CFR 15, ICES-003, EN55022, EN55032, KN32, EN61000-3-2, EN61000-3-3. EMC Immunity: EN 55024, KN35 |

| Certifications | North America Safety (NRTL), CE (European Union), International CB Scheme, BIS (India), BSMI (Taiwan), CCC (PRC), EAC (EAEU including Russia), KC (Korea), RCM (Australia), VCCI (Japan), UKCA (United Kingdom) |

| European Union Directives | 2014/35/EU Low Voltage Directive; 2014/30/EU EMC Directive; 2011/65/EU RoHS Directive; 2012/19/EU WEEE Directive; 2009/125/EC Ecodesign Energy Related Products Directive |

Footnote 2

All standards and certifications referenced are to the latest official version. For additional detail, contact your sales representative.

Footnote 3

Other country regulations/certifications may apply.

Footnote 4

Regulatory and certification compliance were obtained for the shelf-level systems only.

Refer to safety information in Oracle Server Safety and Compliance Guide and in Important Safety Information for Oracle's Hardware Systems.

960GB NVMe M.2 Solid State Drive Specification

This section provides the specification for 960GB NVMe M.2 Solid State Drives.

The following tables list 960GB NVMe M.2 Solid State Drive specifications for Oracle Communications Server E6-2L.

- 960GB, M.2, NVMe Solid State Drive 8212586 Specification

- 960GB, M.2, NVMe Solid State Drive 8213400 Specification

960GB M.2, NVMe Solid State Drive 8212586 Specification

NVMe Storage Drive 8212586 specifications are listed in the following table.

Table B-6 960GB, M.2, NVMe Solid State Drive 8212586 Specification

| Specification | Value |

|---|---|

| Device name | Product Identifier: SAMSUNG MZ1L2960HCJR-00A07. Oracle Part Number: raw: 8213397, generic: 8212582, assy: 8221518. Device Identification: PCIe Vendor ID: 0x144d; PCIe Device ID: 0xa80a; PCIe Subsystem VID: 0x144d; PCIe Subsystem ID: 0xaa89 |

| Manufacturing name | Samsung PM9A3 960 GB NVMe Solid-State Drive |

| Form factors | M.2 22110 |

| PCIe interface | PCIe Gen4 Interface, Single port x4 lanes |

| NAND | Samsung V6 128L TLC V-NAND; die size: 32 GiB; 4 ODP packages; 2 planes; page size: 16 KB; number of dies: 32 NAND dies (32 * 32 GiB = 1024 GiB) |

| Flash Controller | Samsung Elpis controller; 8 NAND channels; DRAM: DDR4, up to 3200 Mbps; NAND channel: Toggle 4.0, 1200 Mbps |

| Features | TCG-OPAL 2.0 |

| Product Compliance | NVM Express Specification Rev. 1.4; PCI Express Base Specification Rev. 4; Enterprise SSD Form Factor Version 1.0a; NVMe-MI Rev 1.1 (excluding VPD data) |

| Certifications and declarations | cUL-us, CE, TUV, CB, BSMI, KC, VCCI, RCM, FCC, IC |

Table B-7 Drive Usage Information

| Usage | Description |

|---|---|

| Operating temperature (composite device temperature reported via SMART) | 0 to 70 degrees Celsius |

| Non-Operating temperature | -40 to 85 degrees Celsius |

| Maximum temperature (SMART trip) | Warning at 77 degrees Celsius (composite), thermal throttling when approaching critical 85 degrees Celsius. Thermal Shutdown at 93 degrees Celsius |

| Other environmental factors | Conforms to IEC standards |

| Error rates | Uncorrectable Bit Error Rate (UBER): 1 sector per 10^17 bits read |

| Data retention | 3 months powered off at 40 degrees Celsius at end of rated endurance |

| Endurance | Drive Writes Per Day (DWPD) for 5 years: 1 (at 960 GB). Petabytes written (PBW) at 4 KB random write: 1.75 PBW |
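The endurance figures in the last row are related by simple arithmetic: one full drive write per day over five years at 960 GB comes to about 1.75 petabytes. The following Python sketch shows the calculation. The function name `petabytes_written` is hypothetical, and the use of 365-day years and decimal units (1 PB = 1,000,000 GB) is an assumption.

```python
def petabytes_written(capacity_gb: float, dwpd: float, years: float,
                      days_per_year: int = 365) -> float:
    """Total data written over the rated life, in decimal petabytes.

    capacity_gb: user capacity in GB (960 for this drive)
    dwpd: rated drive writes per day (1 for this drive)
    years: rating period in years (5 for this drive)
    """
    total_gb = capacity_gb * dwpd * days_per_year * years
    return total_gb / 1_000_000  # GB to PB, decimal units assumed


lifetime_pb = petabytes_written(capacity_gb=960, dwpd=1, years=5)
```

Here `lifetime_pb` is 960 × 365 × 5 = 1,752,000 GB expressed in petabytes, which agrees with the rated value when rounded to two decimal places.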

Table B-8 Drive Reliability

| Attribute | Value |

|---|---|

| Component Design Life (Useful life) | 5 years |

| MTBF | 2,000,000 hours |

| Expected AFR (Annualized Failure Rate) | 0.44% for normal 24x7 operating conditions |

Table B-9 Drive Capacity and Performance

| Attribute | Value |

|---|---|

| Capacity, formatted | 960 GB. Capacity format: 960197124096 bytes. Sector size (LBA size): 512 bytes per sector. Total addressable LBAs: 1,875,385,008. Maximum user capacity is 960 GB |

| Capacity, raw NAND | 1024 GiB |

| Random 4 KB Read | IOPS - QD32, 4 threads - 550k IOPS. Latency - QD=1, 1 thread, 75 us (typ) |

| Random 4 KB Write | IOPS - QD32, 4 threads - 60k IOPS. Latency - QD=1, 1 thread, 30 us (typ) |

| Sequential Read | 128KB, QD 32, 1 Thread : 3.5 GB/s |

| Sequential Write | 128KB, QD 32, 1 Thread : 1.4 GB/s |

| Interface data transfer rate | Interface data rate: PCIe Gen4. Data transfer rate: 16 GT/sec. Interface drivers/receivers: M.2, 1x4 lanes |
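The formatted capacity in the table above follows from the LBA count and the sector size, and comparing it with the raw NAND capacity gives the over-provisioning share. The Python sketch below is illustrative. The function names are hypothetical, and computing over-provisioning as a fraction of raw capacity is an assumption based on the "(to raw)" wording used elsewhere in this specification.

```python
SECTOR_BYTES = 512            # LBA size from the table
TOTAL_LBAS = 1_875_385_008    # total addressable LBAs from the table
RAW_NAND_GIB = 1024           # raw NAND capacity from the table


def formatted_bytes(total_lbas: int, sector_bytes: int) -> int:
    """Formatted capacity in bytes: number of LBAs times the LBA size."""
    return total_lbas * sector_bytes


def over_provisioning_fraction(user_bytes: int, raw_gib: int) -> float:
    """Share of raw NAND not exposed to the host (relative to raw)."""
    raw_bytes = raw_gib * 2**30   # GiB to bytes
    return (raw_bytes - user_bytes) / raw_bytes


user_bytes = formatted_bytes(TOTAL_LBAS, SECTOR_BYTES)
op_fraction = over_provisioning_fraction(user_bytes, RAW_NAND_GIB)
```

The product is 960,197,124,096 bytes, which matches the listed capacity format, and `op_fraction` comes to roughly 0.127, or about 13 percent of raw capacity.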

Table B-10 Drive Electrical Specifications

| Attribute | Value |

|---|---|

| Power On to Ready (no rebuild, no pending sanitize action) | 5 seconds (CSTS.Ready =1 ; may not be ready for IO) |

| Supply Voltage / Tolerance | 3.3 V +5%/-5%. DC to 100 kHz: 300 mVp-p max. 100 kHz to 20 MHz: 50 mVp-p max. Minimum off time: 10 ms |

| Inrush Current | 3.3 V, 1.0 A |

| Power Consumption | < 8.2 W. Active read: 7.5 W. Active write: 6.5 W. Idle: < 5 W (in power-saving idle mode, which is activated when no host command is received for 10 seconds) |

| Power Requirements | Refer to vendor product specification. |

Table B-11 Drive Physical Characteristics

| Attribute | Value |

|---|---|

| Height | 3.80 mm +/-0.18 mm |

| Width | 22.00 mm +/-0.15 mm |

| Length | 110.00 +/- 0.15 mm Max |

| Mass | Up to 20 g |

Table B-12 Solid-State Drive Characteristics

| Attribute | Value |

|---|---|

| NAND Technology | Samsung V6 128L TLC V-NAND |

| Over Provisioning | 13% (to raw) at 960GB |

| Number of NAND Channels | 8 |

| NAND Die Count | 32 |

| NAND Transfer Rate | Toggle 4.0 - 1200 Mbps |

| Sectors per page | 32 |

| Page size | 16 KB + 2048 B |

| Pages per Erase Block | 3096 Pages/Blocks |

| Number of Blocks per Plane | 340 |

| Number of Planes per die | 2 |

| Erase Block Size | 48 MB |

| TREAD (typ) | 41 us |

| TPROG (typ) | 0.7 ms |

| Program/Erase Cycles | 7K |

Table B-13 Drive Characteristics

| Attribute | Value |

|---|---|

| Minimum operating system versions | Refer to the server product notes for minimum operating system versions, hardware, firmware, and software compatibility. |

| Life monitoring capability | Provides alerts for proactive replacement of the drive before the endurance is depleted. Provides endurance remaining in NVMe SMART logs. SSD supports the standard method defined by NVMe for Solid State Drive to report NAND wear through the “Get Log” command SMART/Health Information Percentage Used field. The units are whole percentage of wear. Percentage Used: Contains a vendor specific estimate of the percentage of NVM subsystem life used based on the actual usage and the manufacturer’s prediction of NVM life. A value of 100 indicates that the estimated endurance of the NVM in the NVM subsystem has been consumed, but may not indicate an NVM subsystem failure. The value is allowed to exceed 100. Percentages greater than 254 are represented as 255. This value is updated once per power-on hour (when the controller is not in a sleep state). Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |

| End-to-End data path protection | Device does not support Metadata formats – only supports 512B and 4KB LBA formats |

| Enhanced power-loss data protection | Energy storage components complete buffered writes to the persistent flash storage in case of a sudden power loss. |

| Power loss protection capacitor self-test | Supports testing of the power loss capacitor. Power is monitored using SMART (Self-Monitoring, Analysis, and Reporting Technology) attribute critical warning. |

| Out-of-Band Management (SMBUS) | Managed through the SMBUS. Provides out-of-band management by means of SMBUS interface. SMBUS access includes temperature sensor via MI basic but excludes the VPD page which is blank at FFs as the device does not support a VPD write protection scheme. |

| Hot-plug Support | Device advanced power loss protection provides robust data integrity. During IOs, the storage drive integrated monitoring enables the integrity of already committed data on the media and commits acknowledged writes to the media. |
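The Percentage Used field described under life monitoring capability can be interpreted with a few lines of code. The following Python sketch only decodes a value that has already been read from the SMART/Health Information log; it does not issue the Get Log command. The function name `interpret_percentage_used` and the wording of the returned messages are assumptions made for the example.

```python
def interpret_percentage_used(raw_value: int) -> str:
    """Describe an NVMe SMART 'Percentage Used' value (0 to 255).

    Per the description above: 100 means the estimated endurance has
    been consumed (not necessarily a failure), values may exceed 100,
    and anything greater than 254 is reported as 255.
    """
    if not 0 <= raw_value <= 255:
        raise ValueError("Percentage Used is a single byte (0 to 255)")
    if raw_value == 255:
        return "more than 254% of estimated endurance used"
    if raw_value >= 100:
        return (f"{raw_value}% used: estimated endurance consumed, "
                "not necessarily a failure")
    return f"{raw_value}% used, {100 - raw_value}% of estimated endurance remaining"
```

A monitoring script could call this on each poll and raise an alert as the value approaches 100, which is the proactive replacement scenario the table describes.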

960GB M.2, NVMe Solid State Drive 8213400 Specification

NVMe Storage Drive 8213400 specifications are listed in the following table.

Table B-14 960GB, M.2, NVMe Solid State Drive 8213400 Specification

| Specification | Value |

|---|---|

| Device name | Product Identifier: Micron_7450_MTFDKBG960TFR. Oracle Part Number: raw: 8213398, generic: 8212582, assy: 8221518. Device Identification: PCIe Vendor ID: 0x1344; PCIe Device ID: 0x51C3; PCIe Subsystem VID: 0x1344; PCIe Subsystem ID: 0x2100 |

| Manufacturing name | Micron 7450 PRO 960GB NVMe Solid-State Drive |

| Form factors | M.2 22x110 M key |

| PCIe interface | PCIe Gen4 Interface, Single port x4 lanes |

| NAND | Micron 5th Gen 176L 3DNAND TLC (2ndnode RG); die size: 64 GiB; 4 QDP packages; 4 planes; page size: 16 KB; number of dies: 16 NAND dies (16 * 64 GiB = 1024 GiB) |

| Flash Controller | Micron Tomcat controller; 8 NAND channels; DRAM: DDR4, up to 3200 Mbps; NAND channel: ONFI 4.2 |

| Features | Supports UEFI boot |

| Product Compliance | NVM Express Specification Rev. 1.4b; PCI Express Base Specification Rev. 4; Enterprise SSD Form Factor Version 1.0a; NVMe-MI Rev 1.1b |

| Certifications and declarations | cUL-us, CE, TUV, CB, BSMI, KC, VCCI, RCM, FCC, IC |

Table B-15 Drive Usage Information

| Usage | Description |

|---|---|

| Operating temperature (composite device temperature reported via SMART) | 0 to 70 degrees Celsius |

| Non-Operating temperature | -40 to 85 degrees Celsius |

| Maximum temperature (SMART trip) | Warning at 77 degrees Celsius (composite), thermal throttling when approaching critical 85 degrees Celsius. Thermal Shutdown at 93 degrees Celsius |

| Other environmental factors | Conforms to IEC standards |

| Error rates | Uncorrectable Bit Error Rate (UBER): 1 sector per 10^17 bits read |

| Data retention | 3 months powered off at 40 degrees Celsius at end of rated endurance |

| Endurance | Drive Writes Per Day (DWPD) for 5 years: 1 (at 960 GB). Petabytes written (PBW) at 4 KB random write: 1.7 PBW |

Table B-16 Drive Reliability

| Attribute | Value |

|---|---|

| Component Design Life (Useful life) | 5 years |

| MTBF | 2,000,000 hours |

| Expected AFR (Annualized Failure Rate) | 0.44% for normal 24x7 operating conditions |

Table B-17 Drive Capacity and Performance

| Attribute | Value |

|---|---|

| Capacity, formatted | 960 GB. Capacity format: 960197124096 bytes. Sector size (LBA size): 512 bytes per sector. Total addressable LBAs: 1,875,385,008. Maximum user capacity is 960 GB |

| Capacity, raw NAND | 1024 GiB |

| Random 4 KB Read | IOPS - 4k, 256 OIO - 520k IOPS. Latency - QD=1, 1 thread, 80 us (typ) |

| Random 4 KB Write | IOPS - 4k, 128 OIO - 82k IOPS. Latency - QD=1, 1 thread, 15 us (typ) |

| Sequential Read | 128KB, QD 32, 1 Thread : 5.0 GB/s |

| Sequential Write | 128KB, QD 32, 1 Thread : 1.4 GB/s |

| Interface data transfer rate | Interface data rate: PCIe Gen4; data transfer rate: 16 GT/sec; interface drivers/receivers M.2: 1x4 lanes |
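The formatted capacity in the table follows directly from the LBA count and the sector size. A minimal sketch of that check, with an illustrative function name:

```python
def formatted_capacity_bytes(lba_count: int, sector_size: int = 512) -> int:
    """Formatted capacity in bytes: number of addressable LBAs times sector size."""
    return lba_count * sector_size


if __name__ == "__main__":
    capacity = formatted_capacity_bytes(1_875_385_008)
    print(f"{capacity:,} bytes ({capacity / 1e9:.1f} GB)")
```

The same calculation applies to any drive in this appendix that lists both a total addressable LBA count and a 512-byte sector size.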

Table B-18 Drive Electrical Specifications

| Attribute | Value |

|---|---|

| Power On to Ready | 20 seconds (CSTS.Ready=1; may not be ready for IO) |

| Supply Voltage / Tolerance | 3.3 V +5%/-5%; DC to 100 kHz: 300 mVp-p max; 100 kHz to 20 MHz: 50 mVp-p max; minimum off time: 1 s |

| Inrush Current | 3.3 V, 2.5 A |

| Power Consumption | < 8.2 W; Active Read: 7 W; Active Write: 5.7 W; Idle: < 2.9 W |

| Power Requirements | Refer to vendor product specification. |

Table B-19 Drive Physical Characteristics

| Attribute | Value |

|---|---|

| Height | 3.80 mm +/-0.18 mm |

| Width | 22.00 mm +/-0.15 mm |

| Length | 110.00 mm +/-0.15 mm (maximum) |

| Mass | Up to 14 g |

Table B-20 Solid-State Drive Characteristics

| Attribute | Value |

|---|---|

| NAND Technology | Micron 5th Gen 176L 3DNAND TLC (2ndnode RG) |

| Over Provisioning | 13% (to raw) at 960GB |

| Number of NAND Channels | 8 |

| NAND Die Count | 16 |

| NAND Transfer Rate | ONFI 4.2 |

| Sectors per page | 32 |

| Page size | 16 KB + 2048 B |

| Pages per Erase Block | 2112 pages per block |

| Number of Blocks per Plane | 552 |

| Number of Planes per die | 4 |

| Erase Block Size | 33 MB |

| TREAD (typ) | TBA us |

| TPROG (typ) | TBA ms |

| Program/Erase Cycles | 10K |

Table B-21 Drive Characteristics

| Attribute | Value |

|---|---|

| Minimum operating system versions | Refer to the server product notes for minimum operating system versions, hardware, firmware, and software compatibility. |

| Life monitoring capability | Provides alerts for proactive replacement of the drive before the endurance is depleted. Provides endurance remaining in NVMe SMART logs. SSD supports the standard method defined by NVMe for Solid State Drive to report NAND wear through the “Get Log” command SMART/Health Information Percentage Used field. The units are whole percentage of wear. Percentage Used: Contains a vendor specific estimate of the percentage of NVM subsystem life used based on the actual usage and the manufacturer’s prediction of NVM life. A value of 100 indicates that the estimated endurance of the NVM in the NVM subsystem has been consumed, but may not indicate an NVM subsystem failure. The value is allowed to exceed 100. Percentages greater than 254 are represented as 255. This value is updated once per power-on hour (when the controller is not in a sleep state). Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |

| End-to-End data path protection | The device does not support metadata formats. It supports only 512 B and 4 KB LBA formats. |

| Enhanced power-loss data protection | Energy storage components complete buffered writes to the persistent flash storage in case of a sudden power loss. |

| Power loss protection capacitor self-test | Supports testing of the power loss capacitor. Power is monitored using SMART (Self-Monitoring, Analysis, and Reporting Technology) attribute critical warning. |

| Out-of-Band Management (SMBUS) | Managed through the SMBUS. Provides out-of-band management by means of SMBUS interface. SMBUS access includes temperature sensor via MI basic. |

| Hot-plug Support | The device's advanced power-loss protection provides robust data integrity. During I/O, the drive's integrated monitoring preserves the integrity of data already committed to the media and commits acknowledged writes to the media. The M.2 form factor does not support hot-plug. |
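The life monitoring row above refers to the Percentage Used field of the NVMe SMART/Health Information log page. The following sketch decodes the first bytes of that log page as laid out in the NVM Express specification (byte 0: Critical Warning; bytes 1 to 2: Composite Temperature in kelvins, little-endian; byte 3: Available Spare; byte 4: Available Spare Threshold; byte 5: Percentage Used). How the raw page is obtained from the drive is outside the scope of this example, and the names `SmartHealth`, `parse_smart_log`, and the 90 percent replacement threshold are illustrative assumptions, not Oracle or vendor recommendations.

```python
import struct
from dataclasses import dataclass


@dataclass
class SmartHealth:
    critical_warning: int
    composite_temp_c: int
    available_spare_pct: int
    available_spare_threshold_pct: int
    percentage_used: int


def parse_smart_log(page: bytes) -> SmartHealth:
    """Decode the first six bytes of an NVMe SMART/Health Information log page."""
    if len(page) < 6:
        raise ValueError("SMART log page is too short")
    # "<" selects little-endian with no padding: u8, u16, u8, u8, u8
    warning, temp_k, spare, threshold, used = struct.unpack_from("<BHBBB", page, 0)
    # Whole-degree kelvin-to-Celsius conversion
    return SmartHealth(warning, temp_k - 273, spare, threshold, used)


def should_plan_replacement(health: SmartHealth, used_limit: int = 90) -> bool:
    """Hypothetical policy: flag the drive once wear reaches used_limit percent."""
    return health.percentage_used >= used_limit or health.critical_warning != 0


if __name__ == "__main__":
    # Synthetic page: no warnings, 318 K (45 C), 100% spare, 10% threshold, 37% used
    sample = bytes([0]) + (318).to_bytes(2, "little") + bytes([100, 10, 37]) + bytes(506)
    print(parse_smart_log(sample))
```

Because Percentage Used can exceed 100 and saturates at 255, a monitoring policy should compare against a limit rather than test for equality.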

480GB NVMe M.2 Solid State Drive Specification

This section provides the specification for 480GB NVMe M.2 Solid State Drives.

The following tables list 480GB NVMe M.2 Solid State Drive specifications for Oracle Server E6-2L.

- 480GB, M.2, NVMe Solid State Drive 8214990 Specification

- 480GB, M.2, NVMe Solid State Drive 8214991 Specification

480GB M.2, NVMe Solid State Drive 8214990 Specification

NVMe Storage Drive 8214990 specifications are listed in the following table.

Table B-22 480GB, M.2, NVMe Solid State Drive 8214990 Specification

| Specification | Value |

|---|---|

| Device name | Product Identifier: MICRON_7450_MTFDKBA480TFR; Oracle Part Number: 8214990; Device Identification: PCIe Vendor ID: 0x1344; PCIe Device ID: 0x51C3; Subsystem PCIe Vendor ID: 0x1344; Subsystem ID: 0x1100 |

| Manufacturing name | Micron 7450 480GB M.2 NVMe Solid State Drive |

| Form factors | M.2 2280 M key |

| PCIe interface | PCIe Gen4 Interface, single port, x4 lanes |

| Features | NVMe PCIe Gen4 Interface; NVMe-MI (MCTP); VPD per NVMe-MI Ver 1.0a specification |

| Product Compliance | NVM Express Specification Rev. 1.4b; PCI Express Base Specification Rev. 4.0; Enterprise SSD Form Factor Version 1.0a; NVMe-MI Rev 1.1b |

| Product ecological compliance | RoHS |

| Certifications and declarations | cUL-us, CE, TUV-GS, CB, BSMI, KCC, Morocco, VCCI, RCM, FCC, IC |

Table B-23 Drive Usage Information

| Usage | Description |

|---|---|

| Operating temperature (composite device temperature reported via SMART) | 0 to 70 degrees Celsius |

| Non-Operating temperature | -40 to 85 degrees Celsius |

| Maximum temperature (SMART trip) | Warning at 77 degrees Celsius (composite), thermal throttling when approaching critical 85 degrees Celsius. Thermal Shutdown at 93 degrees Celsius |

| Error rates | Uncorrectable Bit Error Rate (UBER): 1 sector per 10^17 bits read |

| Data retention | 3 months powered off at 40 degrees Celsius at end of rated endurance |

| Endurance | Drive Writes Per Day (DWPD) for 5 years: 1. PBW (at 4 KB Random Write): 0.8 PB. Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |

Table B-24 Drive Reliability

| Attribute | Value |

|---|---|

| Component Design Life (Useful life) | 5 years |

| MTBF | 2,000,000 hours |

| Expected AFR (Annualized Failure Rate) | 0.44% for normal 24x7 operating conditions |

Table B-25 Drive Capacity and Performance

| Attribute | Value |

|---|---|

| Capacity, formatted | Default Formatted Capacity: 480,103,981,056 bytes Sector Size (LBA size): 512 bytes per sector |

| Capacity, unformatted | Unformatted Capacity (Total User Addressable LBA): 937,703,088 (max 480 GB) |

| Capacity, raw NAND | 512 GiB |

| Random 4 KB Read | Random 4 KB read: 280K IOPS; typical 4 KB random read latency (QD=1, Worker=1): 80 us |

| Random 4 KB Write | Random 4 KB write: 40K IOPS; typical 4 KB random write latency (QD=1, Worker=1): 15 us |

| Sequential Read | 128 KB, QD 128, Worker=1: 5.0 GB/s |

| Sequential Write | 128 KB, QD 128, Worker=1: 0.7 GB/s |

| Interface data transfer rate | Interface data rate: PCIe Gen 4; data transfer rate: 16 GT/sec; interface drivers/receivers SFF: 1x4 lanes |
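For context on the interface row, the theoretical link bandwidth of a PCIe Gen4 x4 connection can be estimated from the 16 GT/sec transfer rate. This sketch assumes the 128b/130b line encoding used by PCIe Gen3 and later and ignores packet and protocol overhead, so real throughput is lower. The function name is illustrative.

```python
def pcie_link_gbytes_per_sec(gt_per_sec: float, lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s with 128b/130b encoding.

    Ignores TLP/DLLP protocol overhead, so achievable throughput is lower.
    """
    payload_bits_per_sec = gt_per_sec * 1e9 * lanes * 128 / 130
    return payload_bits_per_sec / 8 / 1e9


if __name__ == "__main__":
    print(f"{pcie_link_gbytes_per_sec(16, 4):.2f} GB/s")
```

The result, a little under 8 GB/s, is an upper bound on the link. The sequential read figures in the table sit below it, as expected.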

Table B-26 Drive Electrical Specifications

| Attribute | Value |

|---|---|

| Power On to Ready | 20 seconds (CSTS.Ready=1; may not be ready for IO) |

| Supply Voltage / Tolerance | 3.3 V +5%/-5% |

| Inrush Current | 3.3 V, 2.5 A |

| Power Consumption | Active Read: 7 W; Active Write: 5.7 W; Idle: < 2.9 W |

| Power Requirements | Refer to vendor product specification. |

Table B-27 Drive Physical Characteristics

| Attribute | Value |

|---|---|

| Height | 3.80 mm +/-0.18 mm |

| Width | 22.00 mm +/-0.15 mm |

| Length | 80.00 mm +/- 0.15 mm |

| Mass | Up to 11 g |

Table B-28 Drive Characteristics

| Attribute | Value |

|---|---|

| Minimum operating system versions | Refer to the server product notes for minimum operating system versions, hardware, firmware, and software compatibility. |

| Life monitoring capability | Provides alerts for proactive replacement of the drive before the endurance is depleted. Provides endurance remaining in NVMe SMART logs. SSD supports the standard method defined by NVMe for Solid State Drive to report NAND wear through the “Get Log” command SMART/Health Information Percentage Used field. The units are whole percentage of wear. Percentage Used: Contains a vendor specific estimate of the percentage of NVM subsystem life used based on the actual usage and the manufacturer’s prediction of NVM life. A value of 100 indicates that the estimated endurance of the NVM in the NVM subsystem has been consumed, but may not indicate an NVM subsystem failure. The value is allowed to exceed 100. Percentages greater than 254 are represented as 255. This value is updated once per power-on hour (when the controller is not in a sleep state). Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |

| Enhanced power-loss data protection | Energy storage components complete buffered writes to the persistent flash storage in case of a sudden power loss. |

| Power loss protection capacitor self-test | Supports testing of the power loss capacitor. Power is monitored using SMART (Self-Monitoring, Analysis, and Reporting Technology) attribute critical warning. |

| Out-of-Band Management (SMBUS) | Managed through the SMBUS. Provides out-of-band management by means of SMBUS interface. SMBUS access includes NVMe-MI, the VPD page and temperature sensor. |

| Management utilities | For more information about management utilities, refer to the server documentation. |

480GB M.2, NVMe Solid State Drive 8214991 Specification

NVMe Storage Drive 8214991 specifications are listed in the following table.

Table B-29 480GB, M.2, NVMe Solid State Drive 8214991 Specification

| Specification | Value |

|---|---|

| Device name | Product Identifier: SAMSUNG MZVL2480HBJD-00A07; Oracle Part Number: 8214991; Device Identification: PCIe Vendor ID: 0x144D; PCIe Device ID: 0xA80A; Subsystem PCIe Vendor ID: 0x144D; Subsystem ID: 0xA81C |

| Manufacturing name | Samsung PM9A3 480GB M.2 NVMe Solid-State Drive |

| Form factors | M.2 2280 M key |

| PCIe interface | PCIe Gen4 Interface, Single port x4 lanes |

| Features | NVMe PCIe Gen4 Interface; NVMe-MI; VPD is blank (“FFs”) as supplied; TCG Opal 2.0 compliant |

| Product Compliance | NVM Express Specification Rev. 1.4; PCI Express Base Specification Rev. 4.0; Enterprise SSD Form Factor Version 1.0a; NVMe-MI Rev 1.1 (excluding VPD data) |

| Product ecological compliance | RoHS |

| Certifications and declarations | cUL, CE, TUV-GS, CB, BSMI, KC, VCCI, Morocco, RCM, FCC, IC |

Table B-30 Drive Usage Information

| Attribute | Value |

|---|---|

| Operating temperature (Composite, as reported via SMART) | 0 to 70 degrees Celsius |

| Non-Operating temperature | -40 to 85 degrees Celsius |

| Maximum temperature (SMART trip) | Warning at 77 degrees Celsius (composite). Thermal throttling in stages until critical 85 degrees Celsius. Thermal Shutdown at 99 degrees Celsius. |

| Error rates | Uncorrectable Bit Error Rate (UBER): 1 sector per 10^17 bits read |

| Data retention | 3 months powered off at 40 degrees Celsius at end of rated endurance |

| Endurance | Drive Writes Per Day (DWPD) for 5 years: 1. PBW (at 4 KB Random Write): 0.8 PB. Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |
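The SMART trip temperatures listed for the two 480GB drives can be turned into a simple classification of a reported composite temperature. This is a sketch only: the warning (77), critical (85), and shutdown (93 or 99) values come from the usage tables, while treating each threshold as inclusive and keying the shutdown value by Oracle part number are assumptions made for illustration.

```python
from enum import Enum


class ThermalState(Enum):
    NORMAL = "normal"
    WARNING = "warning"
    CRITICAL = "critical"
    SHUTDOWN = "shutdown"


WARNING_C = 77
CRITICAL_C = 85
# Thermal shutdown temperature per Oracle part number, from the usage tables
SHUTDOWN_C = {"8214990": 93, "8214991": 99}


def thermal_state(composite_temp_c: float, part_number: str) -> ThermalState:
    """Classify a composite temperature (degrees Celsius) for a given drive."""
    if composite_temp_c >= SHUTDOWN_C[part_number]:
        return ThermalState.SHUTDOWN
    if composite_temp_c >= CRITICAL_C:
        return ThermalState.CRITICAL
    if composite_temp_c >= WARNING_C:
        return ThermalState.WARNING
    return ThermalState.NORMAL


if __name__ == "__main__":
    print(thermal_state(80, "8214990").value)
```

A reading of 95 degrees Celsius, for example, would classify as shutdown on part 8214990 but only as critical on part 8214991.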

Table B-31 Drive Reliability

| Attribute | Value |

|---|---|

| Component Design Life (Useful life) | 5 years |

| MTBF | 2,000,000 hours |

| Expected AFR (Annualized Failure Rate) | 0.44% for normal 24x7 operating conditions |

Table B-32 Drive Capacity and Performance

| Attribute | Value |

|---|---|

| Capacity, formatted | Default Formatted Capacity: 480,103,981,056 bytes; Sector Size (LBA size): 512 bytes per sector |

| Capacity, unformatted | Unformatted Capacity (Total User Addressable LBA): 937,703,088 (max 480 GB) |

| Capacity, raw NAND | 512 GiB |

| Random 4 KB Read | 4 KB random read: 130K IOPS (up to); latency (QD=1, 1 thread): 75 us (typical) |

| Random 4 KB Write | 4 KB random write: 20K IOPS (up to); latency (QD=1, 1 thread): 50 us (typical) |

| Sequential Read | 128 KB, QD 64, 1 thread: 1.5 GB/s (up to) |

| Sequential Write | 128 KB, QD 64, 1 thread: 400 MB/s (up to) |

| Interface data transfer rate | Interface data rate: PCIe Gen 4; data transfer rate: 16 GT/sec; interface drivers/receivers SFF: 1x4 lanes |

Table B-33 Drive Electrical Specifications

| Attribute | Value |

|---|---|

| Power On to Ready | 5 seconds (CSTS.Ready=1; may not be ready for IO) |

| Supply Voltage / Tolerance | 3.3 V +5%/-5% |

| Inrush Current | 3.3 V, 1.0 A |

| Power Consumption | < 8.2 W; Active Read: 3.6 W; Active Write: 3.4 W; Idle: < 2.5 W |

| Power Requirements | Refer to vendor product specification. |

Table B-34 Drive Physical Characteristics

| Attribute | Value |

|---|---|

| Height | 3.80 mm +/-0.18 mm |

| Width | 22.00 mm +/-0.15 mm |

| Length | 80.00 mm +/-0.15 mm |

| Mass | 15 g Max |

Table B-35 Drive Characteristics

| Attribute | Value |

|---|---|

| Minimum operating system versions | Refer to the server product notes for minimum operating system versions, hardware, firmware, and software compatibility. |

| Life monitoring capability | Provides alerts for proactive replacement of the drive before the endurance is depleted. Provides endurance remaining in NVMe SMART logs. SSD supports the standard method defined by NVMe for Solid State Drive to report NAND wear through the “Get Log” command SMART/Health Information Percentage Used field. The units are whole percentage of wear. Percentage Used: Contains a vendor specific estimate of the percentage of NVM subsystem life used based on the actual usage and the manufacturer’s prediction of NVM life. A value of 100 indicates that the estimated endurance of the NVM in the NVM subsystem has been consumed, but may not indicate an NVM subsystem failure. The value is allowed to exceed 100. Percentages greater than 254 are represented as 255. This value is updated once per power-on hour (when the controller is not in a sleep state). Refer to the JEDEC JESD218A standard for SSD device life and endurance measurement techniques. |

| Enhanced power-loss data protection | Energy storage components complete buffered writes to the persistent flash storage in case of a sudden power loss. |

| Power loss protection capacitor self-test | Supports testing of the power loss capacitor. Power is monitored using SMART (Self-Monitoring, Analysis, and Reporting Technology) attribute critical warning. |

| Out-of-Band Management (SMBUS) | Managed through the SMBUS. Provides out-of-band management by means of SMBUS interface. SMBUS access includes NVMe-MI, the VPD page and temperature sensor. On this device the VPD is supplied as blank “FFs”. |

| Management utilities | For more information about management utilities, refer to the server documentation. |

22TB Hard Disk Drive Specification

This section provides the specification for 22TB Hard Disk Drives.

The following tables list 22TB Hard Disk Drive (HDD) specifications.

Table B-36 22TB HDD Power Requirements

| Condition | +5 VDC (+/-5%) | +12 VDC (+/-5%) |

|---|---|---|

| Motor start (Surge current 20 sec) Amp. peak | 0.94 A | 2.00 A |

| Idle power (Average) | 0.41 A | 0.33 A |

| Maximum peak operational power | 1.09 A | 2.00 A (10.0 W max workload) |
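The currents in the power requirements table can be converted to approximate power by multiplying each rail's current by its nominal voltage and summing the two rails. This is a minimal sketch that assumes nominal 5 V and 12 V rails (ignoring the +/-5% tolerance). The function name is illustrative.

```python
def hdd_power_watts(amps_5v: float, amps_12v: float) -> float:
    """Approximate drive power from per-rail currents at nominal rail voltages."""
    return 5.0 * amps_5v + 12.0 * amps_12v


if __name__ == "__main__":
    print(f"Idle (average): {hdd_power_watts(0.41, 0.33):.2f} W")
    print(f"Motor start (peak): {hdd_power_watts(0.94, 2.00):.2f} W")
```

Under these assumptions, average idle power is about 6 W. The motor-start figure is a short-duration peak, and it assumes both rails peak at the same instant, so treat it as an upper bound when sizing supply current.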

Table B-37 22TB HDD Drive Physical Characteristics

| Attribute | Value |

|---|---|

| Height | 26.1 mm, 1.03 in. |

| Width | 101.6 +/-0.25 mm, 4.00 +/-0.006 in. |

| Depth | 147 mm, 5.787 +/-0.006 in. |

| Weight | Up to 0.670 kg, 23.6336 oz |

Table B-38 22TB HDD Drive Usage

| Usage | Description |

|---|---|

| Useful life | 5 years Minimum |

| Expected annualized failure rate (AFR) for normal 24/7 operating conditions | 0.35% |

| Operating temperature (Case) | 0 to 50 degrees Celsius Note - Derating of AFR applies above 40 degrees Celsius. |

| Maximum temperature | 60 degrees Celsius (maximum sustained temperature) Note - Drive may be rendered unusable above 65 degrees Celsius. |

| Other environmental factors | Conforms to IEC standards |

Table B-39 22TB HDD Drive Capacity and Performance

| Attribute | Value |

|---|---|

| Capacity, formatted | 22,000,969,973,760 bytes (512 bytes per sector) |

| Average latency | 4.16 msec (7,200 rpm) |

| Seek times (read/write) | Typical |

| Single track seek | 0.25/0.35 msec |

| Average seek | 6.79/7.82 msec |

| Maximum seek | 13.63/14.68 msec |

| Random 2 KB reads per sec | Typical |

| Queue=1, 100% Volume (RR) | 87 IOPS |

| Queue=32, 100% Volume (RR) | 210 IOPS |

| HDA data transfer rate | Typical |

| Sustained sequential read | 257 MiB/s (OD) / 151 MiB/s (ID) |

| Interface data transfer rate | 12 Gbps/6 Gbps/3 Gbps/1.5 Gbps (auto negotiation, dual port, full duplex) SAS-3 |

| Maximum instantaneous data transfer rate | 1200 Mbytes per sec (12 Gbps mode) |

| Power On to Ready | 25 sec (typical) |

| Spin down | 30 sec (typical) |
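Two of the figures in the capacity and performance table follow from basic relationships. Average rotational latency is the time for half a revolution, and the maximum instantaneous transfer rate follows from the SAS-3 line rate, which uses 8b/10b encoding. The following sketch illustrates both; the function names are illustrative.

```python
def average_rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency: time for half a revolution, in milliseconds."""
    return 0.5 * 60_000.0 / rpm


def sas_payload_mbytes_per_sec(line_rate_gbps: float) -> float:
    """Payload rate in MB/s for a link using 8b/10b encoding (as SAS-3 does)."""
    return line_rate_gbps * 1000 * 8 / 10 / 8


if __name__ == "__main__":
    print(f"{average_rotational_latency_ms(7200):.3f} ms")
    print(f"{sas_payload_mbytes_per_sec(12):.0f} MB/s")
```

At 7,200 rpm, half a revolution takes about 4.167 ms, so the 4.16 msec in the table appears to be that value truncated. A 12 Gbps link with 8b/10b encoding carries 1200 Mbytes per second, as listed.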

Note - Most computer equipment can operate in a wide range (20 to 80 percent).

Server Installation Information

Receiving and Unpacking Requirements

When the server is unloaded at your site:

- Leave the server in its shipping carton until it arrives at its installation location.

- Use a separate area to remove the packaging material to reduce particle contamination before the server is taken to the data center.

- Ensure that there is enough clearance and clear pathways to move the server from the unpacking area to the installation location.

- Ensure that the entire access route to the installation location is free of raised-pattern flooring that causes vibration.

Maintenance Space Requirements

The maintenance area for the rackmounted Oracle Communications Server E6-2L must have the required access space. The following table lists the maintenance access requirements for the server when it is installed in a rack.

Table C-1 Maintenance Space Requirements

| Location | Maintenance Access Requirement |

|---|---|

| Back of the server | 91.4 cm (36 inches) |

| Area above the rack | 91.4 cm (36 inches) |

| Front of the server | 123.2 cm (48.5 inches) |

Rack Space Requirements

Oracle Communications Server E6-2L is a 2U server. For physical dimensions, see Server Physical Specifications.

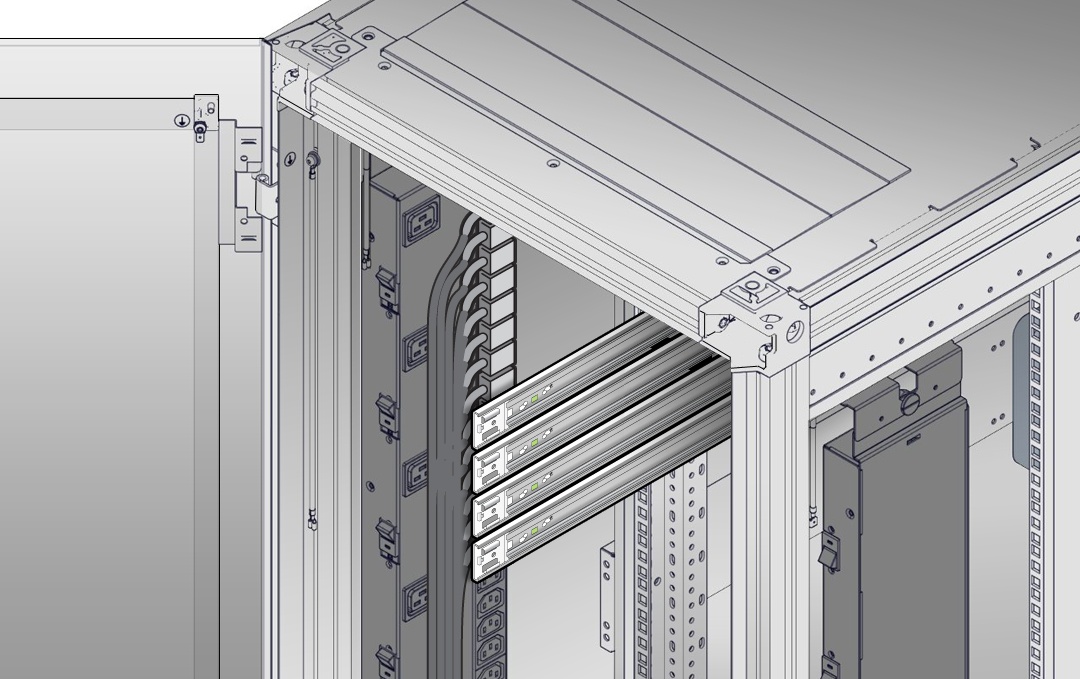

You can install the server into a four-post rack cabinet that conforms to ANSI/EIA 310-D-1992 or IEC 60297 standards, such as Oracle Rack Cabinet 1242. See Rack Compatibility.

The minimum ceiling height for the cabinet is 230 cm (90 inches), measured from the true floor or raised floor, whichever is higher. An additional 91.4 cm (36 inches) of ceiling height is required for top clearance. The space above the cabinet and its surroundings must not restrict the movement of cool air between the air conditioner and the cabinet, or the movement of hot air coming out of the top of the cabinet.

Rack Compatibility

The rack into which you install Oracle Communications Server E6-2L must meet the requirements listed in the following table. Oracle Rack Cabinet 1242 and Sun Rack II are compatible with Oracle Server E6-2L. For information about the racks, see Rackmount the Server.

Table C-2 Rack Compatibility

| Item | Requirement |

|---|---|

| Structure | Four-post rack (mounting at both front and back). Supported rack types: square hole (9.5 mm) and round hole (M6 or 1/4-20 threaded only). Two-post racks are not compatible. |

| Rack horizontal opening and unit vertical pitch | Conforms to ANSI/EIA 310-D-1992 or IEC 60297 standards. |

| Distance between front and back mounting planes | Minimum 61 cm and maximum 91.5 cm (24 inches to 36 inches). |

| Clearance depth in front of front mounting plane | Distance to front cabinet door is at least 2.54 cm (1 inch). |

| Clearance depth behind front mounting plane | Distance to back cabinet door is at least 90 cm (35.43 inches) with the cable management arm, or 80 cm (31.5 inches) without the cable management arm. |

| Clearance width between front and back mounting planes | Distance between structural supports and cable troughs is at least 45.6 cm (18 inches). |

| Minimum clearance for service access | Clearance, front of server: 123.2 cm (48.5 inches); clearance, back of server: 91.4 cm (36 inches) |
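The dimensional requirements in the rack compatibility table lend themselves to a simple pre-installation check. The following is a hypothetical sketch: the limits are taken from the table, but the `Rack` structure, its field names, and the `rack_violations` function are illustrative and not part of any Oracle tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rack:
    posts: int
    mounting_plane_distance_cm: float  # front to back mounting planes
    front_door_clearance_cm: float     # in front of front mounting plane
    back_door_clearance_cm: float      # behind front mounting plane
    clearance_width_cm: float          # between structural supports and cable troughs


def rack_violations(rack: Rack, with_cma: bool = True) -> List[str]:
    """Return a list of requirements from the rack compatibility table that are not met."""
    problems = []
    if rack.posts != 4:
        problems.append("rack must be a four-post rack")
    if not 61.0 <= rack.mounting_plane_distance_cm <= 91.5:
        problems.append("mounting plane distance must be 61 cm to 91.5 cm")
    if rack.front_door_clearance_cm < 2.54:
        problems.append("front door clearance must be at least 2.54 cm")
    # The table requires more depth when the cable management arm is installed
    min_back = 90.0 if with_cma else 80.0
    if rack.back_door_clearance_cm < min_back:
        problems.append(f"back door clearance must be at least {min_back:g} cm")
    if rack.clearance_width_cm < 45.6:
        problems.append("clearance width must be at least 45.6 cm")
    return problems


if __name__ == "__main__":
    candidate = Rack(4, 75.0, 5.0, 85.0, 48.0)
    for issue in rack_violations(candidate, with_cma=True):
        print(issue)
```

In this example, the candidate rack fails only the back door clearance check when the cable management arm is used, and passes without it.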

The following table contains Oracle Rack Cabinet 1242 rack specifications:

Table C-3 Oracle Rack Cabinet 1242 Rack Specifications

| Requirement | Specification |

|---|---|

| Usable rack units | 42 |

| Height | 199.9 cm (78.74 inches) |

| Width (with side panels) | 60 cm (23.62 inches) |

| Maximum dynamic load | 1005 kg (2215 lbs) |

The following table contains Sun Rack II Model 1242 and Sun Rack II Model 1042 rack specifications:

Table C-4 Sun Rack II Model 1242 and Sun Rack II Model 1042 Rack Specifications

| Requirement | Specification |

|---|---|

| Usable rack units | 42 |

| Height | 199.8 cm (78.66 inches) |

| Width (with side panels) | 60 cm (23.62 inches) |

| Depth Model 1242 | 120 cm (47.24 inches) |

| Depth Model 1042 | 105.8 cm (41.66 inches) |

| Weight Model 1242 | 150.6 kg (332 lbs) |

| Weight Model 1042 | 123.4 kg (272 lbs) |

| Maximum dynamic load | 1005 kg (2215 lbs) |

Depth is measured from front door handle to back door handle.

Installation Procedure

This topic provides an overview of the Oracle Communications Server E6-2L installation procedure. Review the entire installation procedure and find links to more information about each step.

The following list summarizes the tasks that you must perform to properly install Oracle Server E6-2L.

1. Review Known Issues for any late-breaking information about the server. Refer to Oracle AMD-Based Cloud Servers Product Notes.

2. Confirm Installation Prerequisites. Prepare to install the server.

3. Install the server hardware into a rack. See Rackmount the Server. To rackmount the server, secure the rack to the floor, stabilize the rack, and install the mounting brackets and slide rails.

4. Connect to the system. Attach cables and power cords to the server.

5. Power on the server.

6. Connect to Oracle Integrated Lights Out Manager (ILOM).

7. Install the operating system.

Installation Prerequisites

Prepare to install the server. Before you start the Rackmount Procedures or Reinstall the Server Into the Rack, ensure that the following tasks are complete.

- Review the server:

  - Features

  - Components

  - Specifications

  - Site Planning Checklists

  - Management

- Confirm that your site meets the required electrical and environmental requirements. See Site Planning Checklists.

- Familiarize yourself with safety precautions and electrostatic discharge (ESD).

- Before installing the server, read the safety information in Oracle Server Safety and Compliance Guide and in Important Safety Information for Oracle's Hardware Systems.

- Assemble the required tools and equipment for installation.

- Confirm that you received all the items you ordered. See Shipping Inventory.

- Install any separately shipped optional components.

Safety Precautions

This section describes safety precautions you must follow when installing the server into a rack.

Communicate instructions: When performing a two-person procedure, communicate your intentions clearly to the other person before, during, and after each step to minimize confusion.

Elevated operating ambient temperature: If you install the server in a closed or multi-unit rack assembly, the operating ambient temperature of the rack environment might be higher than the room ambient temperature. Install the equipment in an environment compatible with the maximum ambient temperature (Tma) specified for the server. For server environmental requirements, see Environmental Requirements.

Reduced airflow: Install the equipment in a rack so that it does not compromise the amount of airflow required for safe operation of the equipment.

Mechanical loading: Mount the equipment in the rack so that it does not cause a hazardous condition due to uneven mechanical loading.

Circuit overloading: Consider the connection of the equipment to the supply circuit and the effect that overloading the circuits might have on over-current protection and supply wiring. Also consider the equipment nameplate power ratings used when you address this concern.

Reliable earthing: Maintain reliable earthing of rackmounted equipment. Pay attention to supply connections other than direct connections to the branch circuit (for example, use of power strips).

Mounted equipment: Do not use slide-rail-mounted equipment as a shelf or a workspace.

ESD Precautions

Electronic equipment is susceptible to damage by static electricity. To prevent electrostatic discharge (ESD) when you install or service the server:

- Use a grounded antistatic wrist strap, foot strap, or equivalent safety equipment.

- Place components on an antistatic surface, such as an antistatic discharge mat or an antistatic bag.

- Wear an antistatic grounding wrist strap connected to a metal surface on the chassis when you work on system components.

Before installing the server, read the safety information in Oracle Server Safety and Compliance Guide and in Important Safety Information for Oracle's Hardware Systems.

Tools and Equipment For Installation

To install and maintain the server, you must have the following service tools:

- Phillips (+) No. 1, No. 2

- Torx (6 Lobe) T15, T20, T25, T30

- Antistatic wrist strap

- Antistatic mat

You must provide a system console device, such as a laptop (running terminal emulation software), workstation, or terminal server.

Shipping Inventory

Inspect the shipping cartons for evidence of physical damage. If a shipping carton appears damaged, request that the carrier agent be present when the carton is opened. Keep all contents and packing material for the agent inspection.

The carton contains these components:

- Power cords, packaged separately with the country kit

- Rackmount kit, containing rack rails, mounting brackets, screws, and the Rackmounting Template

- Legal and safety documents

Rackmount the Server

To rackmount the server, secure the rack to the floor, stabilize the rack, and install the mounting brackets and slide rails. Then, install the server into the rack.

Rack Mount Instructions

A) Elevated Operating Ambient - If installed in a closed or multi-unit rack assembly, the operating ambient temperature of the rack environment may be greater than room ambient. Therefore, consideration should be given to installing the equipment in an environment compatible with the maximum ambient temperature (Tma) specified by the manufacturer.

B) Reduced Air Flow - Installation of the equipment in a rack should be such that the amount of air flow required for safe operation of the equipment is not compromised.

C) Mechanical Loading - Mounting of the equipment in the rack should be such that a hazardous condition is not achieved due to uneven mechanical loading.

D) Circuit Overloading - Consideration should be given to the connection of the equipment to the supply circuit and the effect that overloading of the circuits might have on overcurrent protection and supply wiring. Appropriate consideration of equipment nameplate ratings should be used when addressing this concern.

E) Reliable Earthing - Reliable earthing of rack-mounted equipment should be maintained. Particular attention should be given to supply connections other than direct connections to the branch circuit (e.g. use of power strips).

Equipment is intended for installation in a Restricted Access Area.

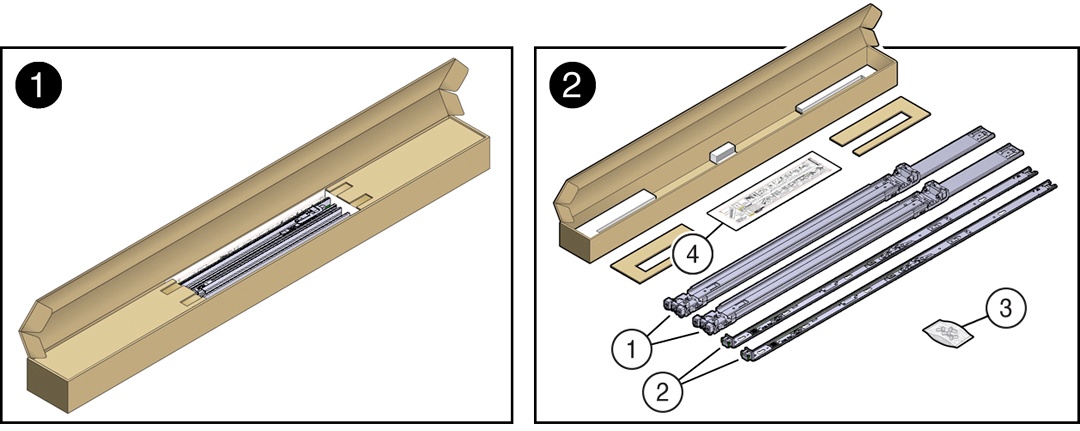

Rackmount Kit Contents

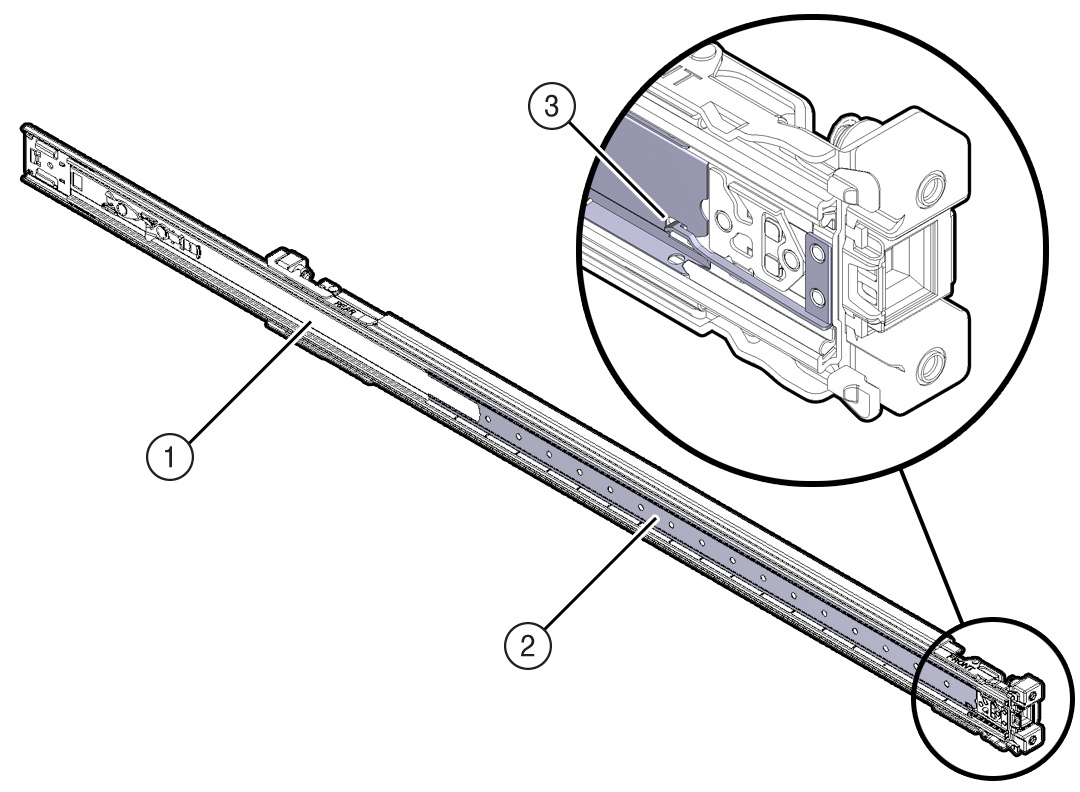

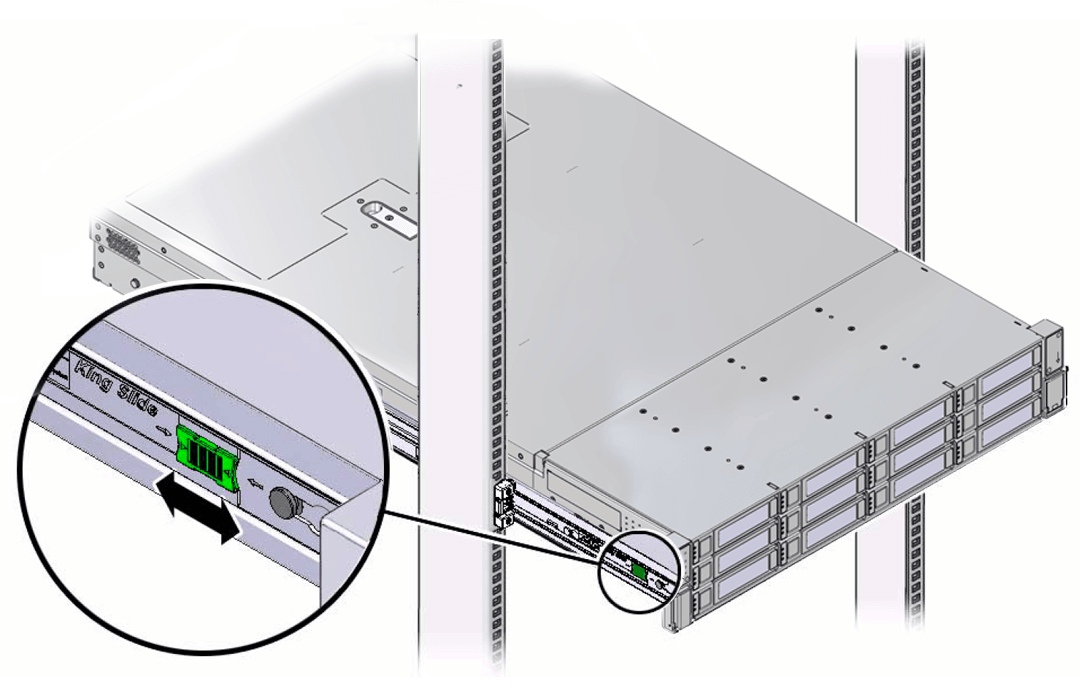

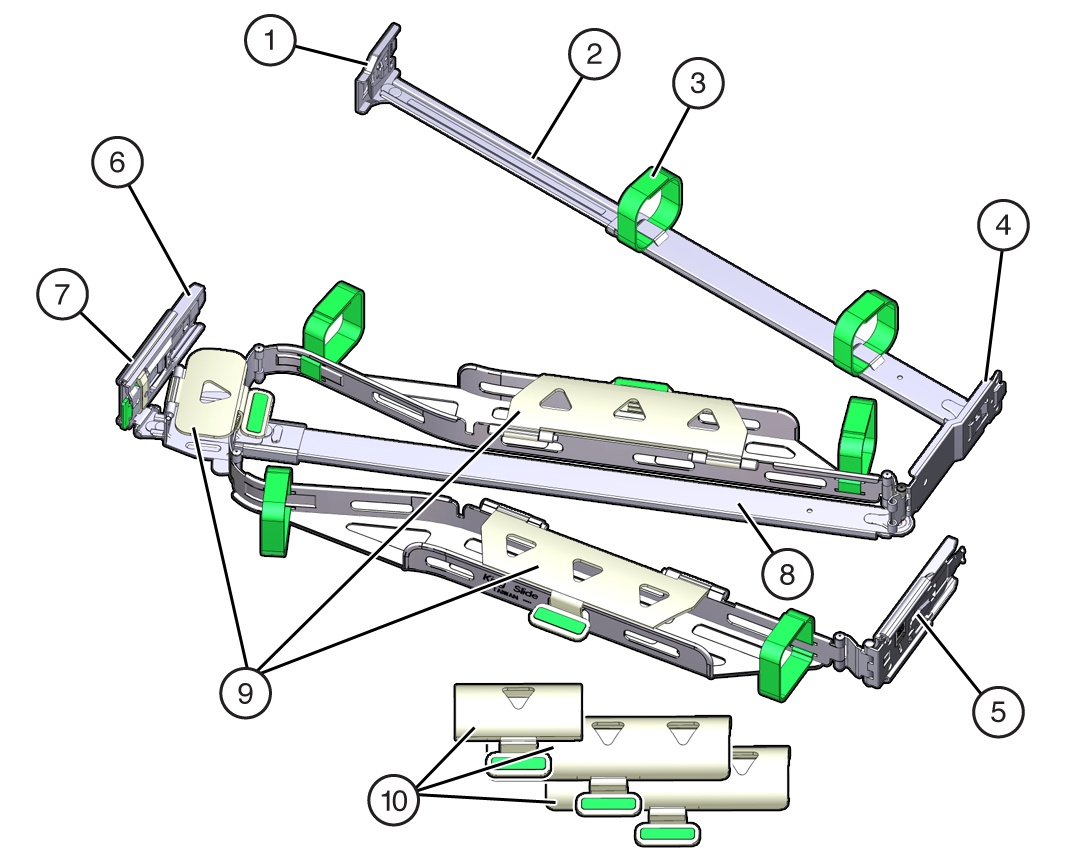

The following figure shows the Rackmount Kit contents. For additional instructions on how to install your server in a four-post rack using the slide-rail and cable management arm options, refer to the Rackmounting Template.

| Call Out | Description |

|---|---|

| 1 | Slide-rails |

| 2 | Mounting brackets |

| 3 | Four M4 x 5 fine-pitch mounting bracket securing screws (optional) |

| 4 | Rackmounting Template |

Stabilize the Rack

Refer to your rack documentation for detailed instructions for the following steps.

1. Open and remove the front and back doors from the rack cabinet, only if they impinge on the mounting bay.

2. To prevent the rack cabinet from tipping during the installation, fully extend the rack cabinet anti-tilt bar, which is located at the bottom front of the rack cabinet.

3. If the rack includes leveling feet beneath the rack cabinet to prevent the rack from rolling, extend these leveling feet fully downward and lock to the floor.

Related Rack Documentation

Install Mounting Brackets on the Server

To install the mounting brackets on the sides of the server:

1. Position a mounting bracket against the chassis so that the slide-rail lock is at the server front, and the five keyhole openings on the mounting bracket are aligned with the five locating pins on the side of the chassis.

| Call Out | Description |

|---|---|

| 1 | Chassis front |

| 2 | Slide-rail lock |

| 3 | Mounting bracket |

| 4 | Mounting bracket clip |

2. When the heads of the five chassis locating pins protrude through the five keyhole openings in the mounting bracket, pull the mounting bracket toward the front of the chassis until the mounting bracket clip locks into place with an audible click.

3. Verify that the back locating pin is engaged with the mounting bracket clip.

4. Repeat Step 1 through Step 3 to install the other mounting bracket on the other side of the server.

Mark the Rackmount Location

Identify the location in the rack where you want to place the server. Oracle Server E6-2L requires two rack units (2U). Use the Rackmounting Template to identify the correct mounting holes for the slide-rails.

1. Ensure that there are at least two rack units (2U) of vertical space in the rack cabinet to install the server. See Rack Compatibility.

2. Place the Rackmounting Template against the front rails, and measure up from the bottom of the Rackmounting Template. The bottom edge of the Rackmounting Template card corresponds to the bottom edge of the server.

3. Mark the mounting holes for the front slide-rails.

4. Mark the mounting holes for the back slide-rails.

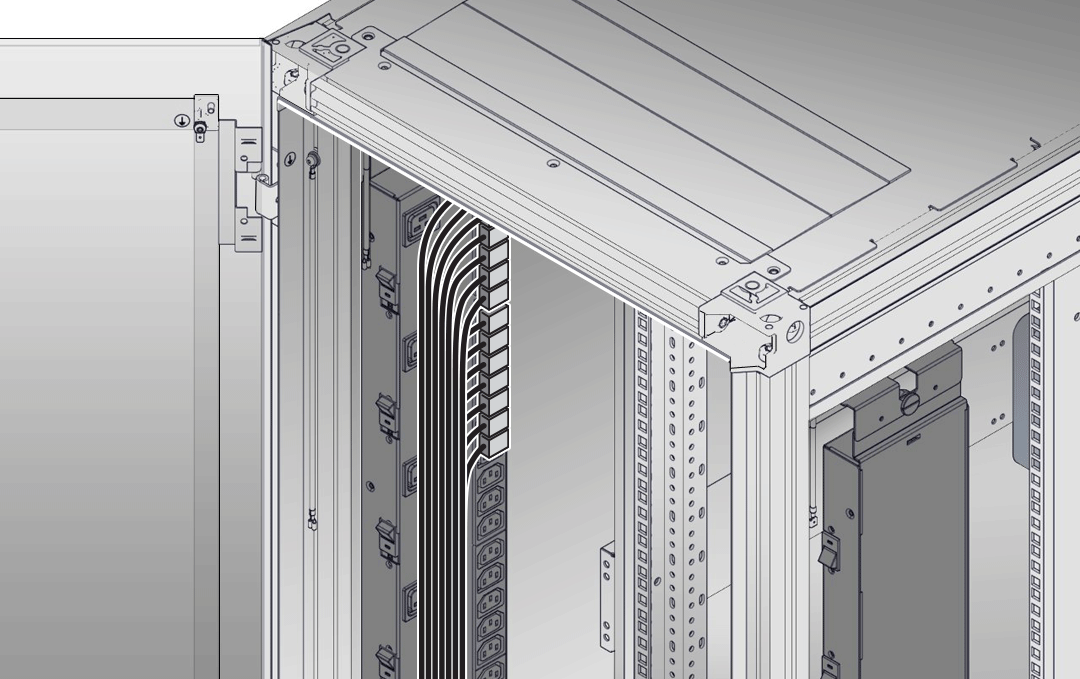

Install AC Power Cables and Slide-Rails

1. Before you install the slide-rails into the rack, install server right-angle AC power cables into the left-side and right-side PDU electrical sockets. Use part number 7079727 - Pwrcord, Jmpr, Bulk, SR2, 2m, C14RA, 10A, C13, 2-meter, right-angle AC power cable for this procedure.

2. Install the slide-rails into the rack. See Attach the Slide-Rails.

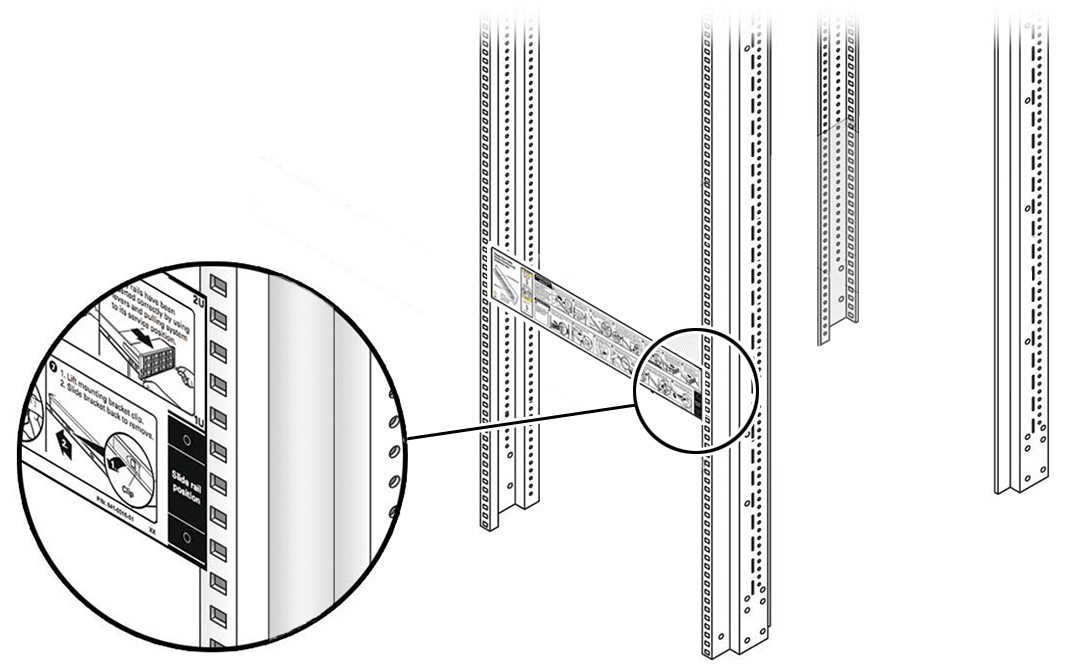

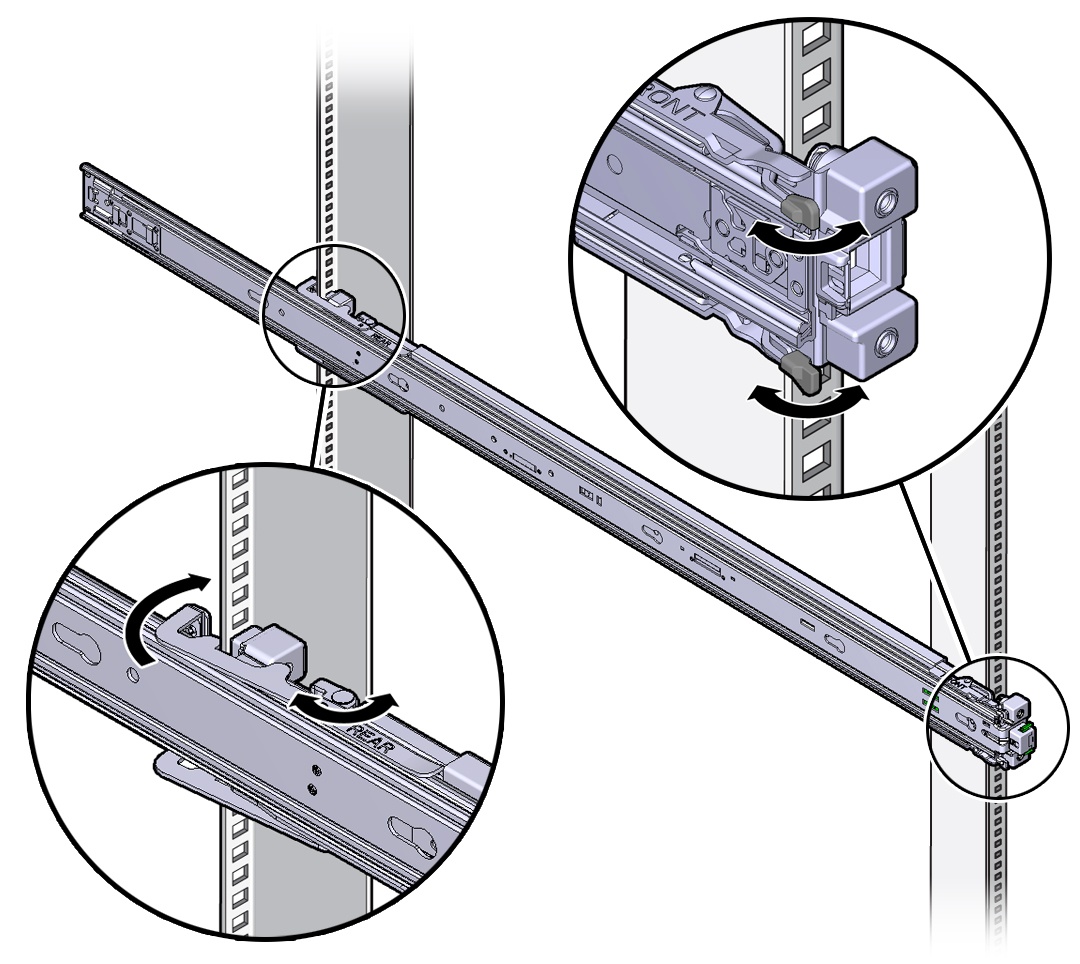

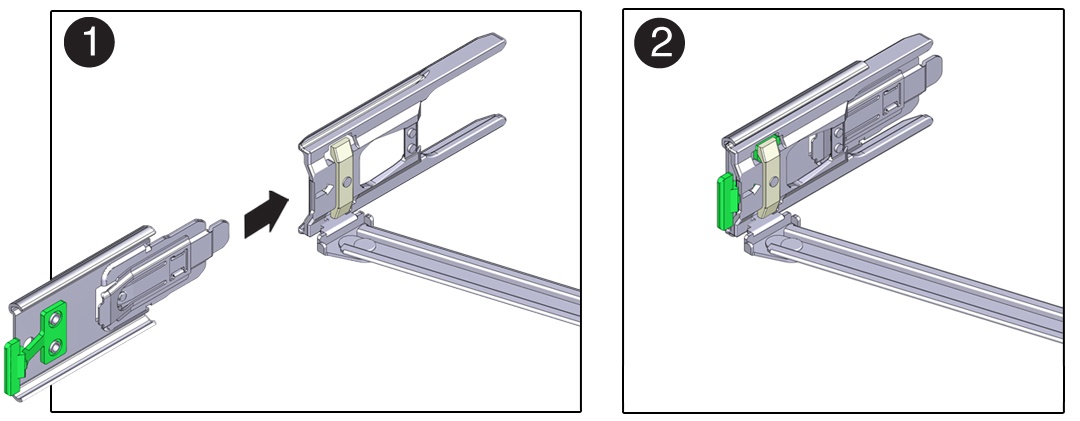

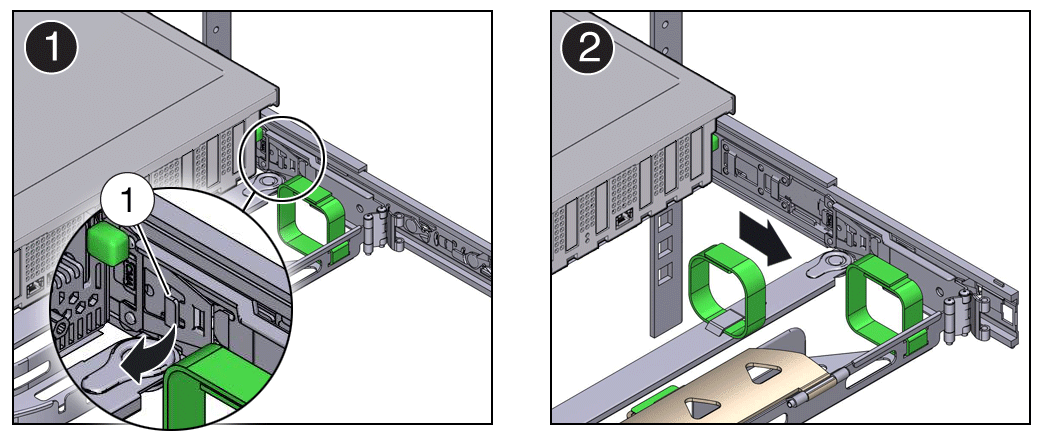

Attach the Slide-Rails

Use this procedure to attach slide-rail assemblies to the rack.

Before you install the slide-rails, be sure to install the server right-angle AC power cables (part number 7079727 - Pwrcord, Jmpr, Bulk, SR2, 2m, C14RA, 10A, C13). In the 1000 mm rack, the standard rail kit slide-rails obstruct access to the front of the 15kVA, 22kVA, and 24kVA Power Distribution Unit (PDU) electrical sockets. If you use the standard AC power cables, first plug them in, and then install the slide-rails into the rack. After you install the slide-rails, you cannot disconnect or remove the standard AC power cables from the PDU but you can remove them from the system.

1. Orient the slide-rail assembly so that the ball-bearing track is forward and locked in place.

| Call Out | Description |

|---|---|

| 1 | Slide-rail |

| 2 | Ball-bearing track |

| 3 | Ball-bearing locking mechanism |

2. Starting with either the left or right side of the rack, align the back of the slide-rail assembly against the inside of the back rack rail, and push until the assembly locks into place with an audible click.

3. Align the front of the slide-rail assembly against the outside of the front rack rail, and push until the assembly locks into place with an audible click.

4. Repeat Step 1 through Step 3 to attach the slide-rail assembly to the other side of the rack.

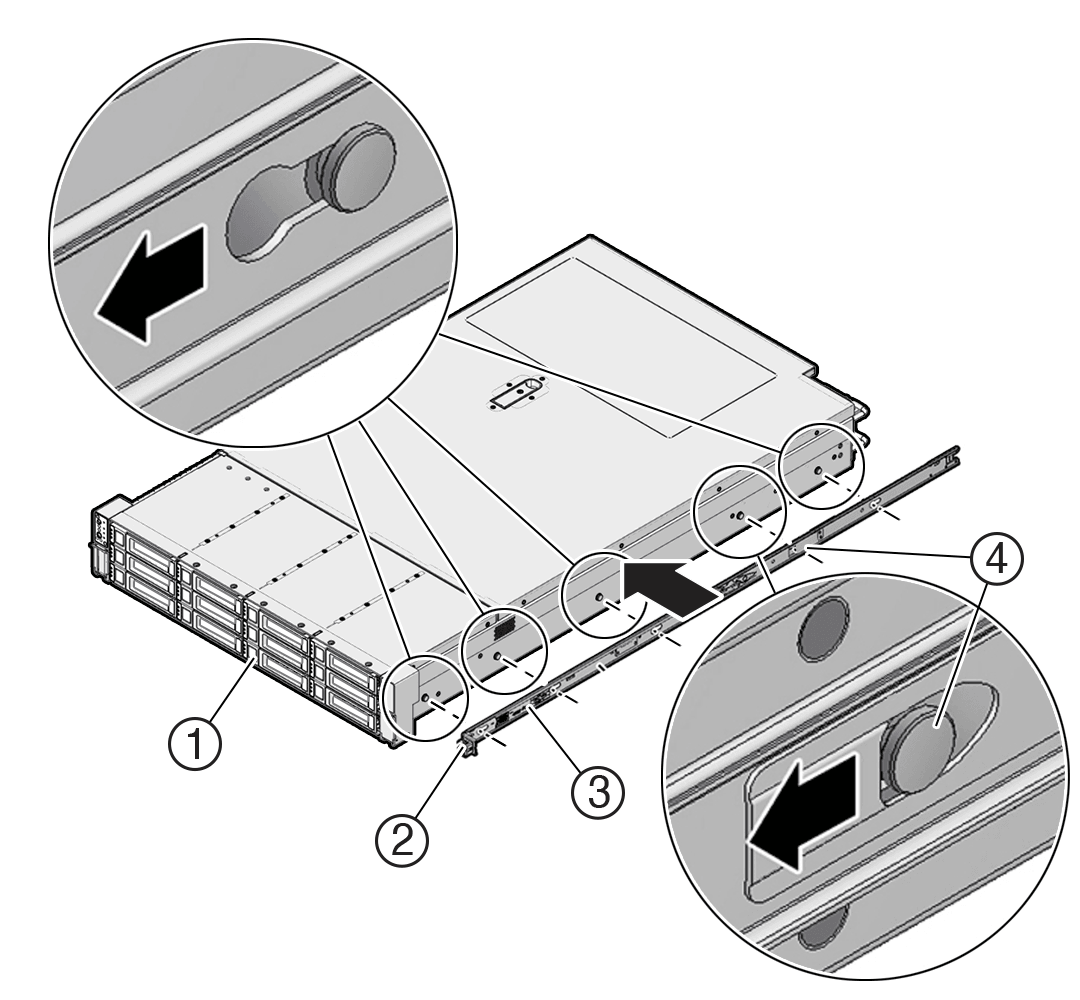

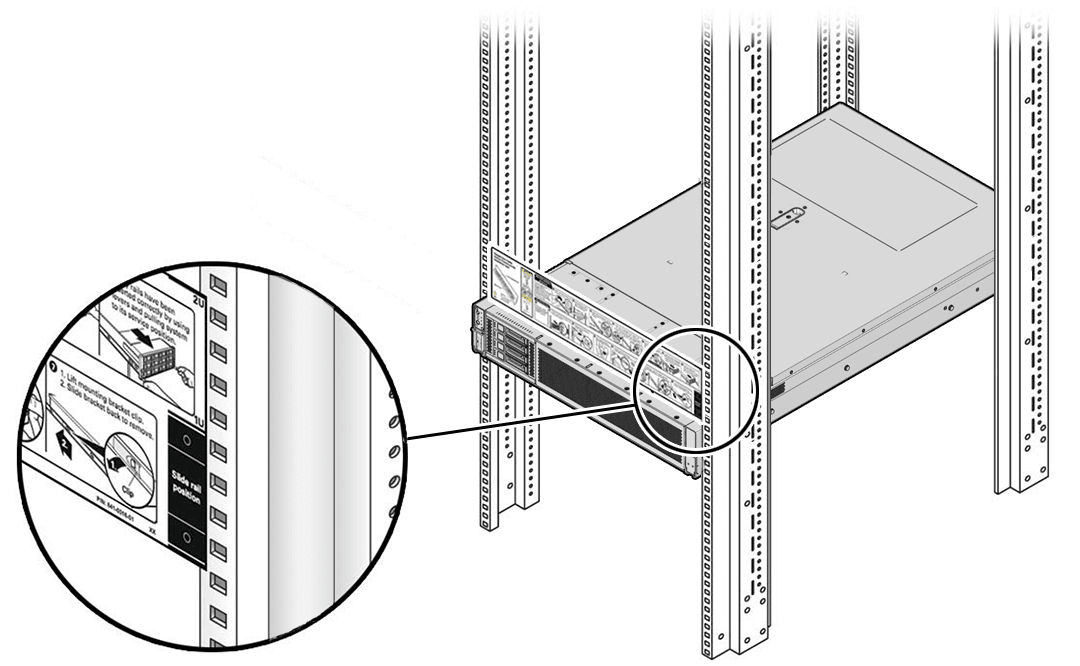

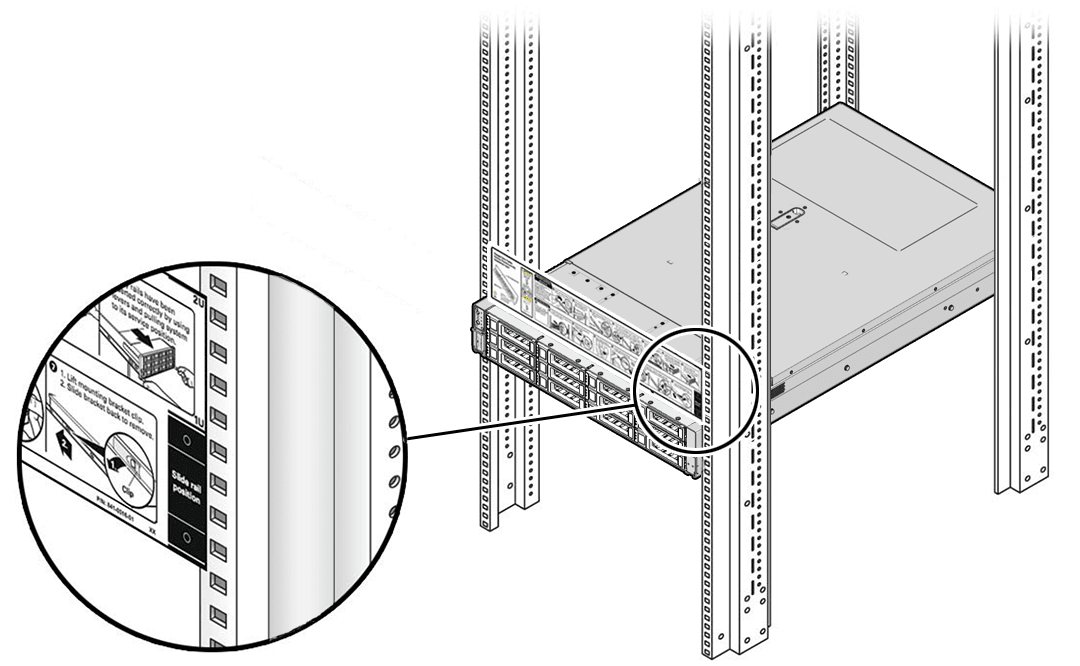

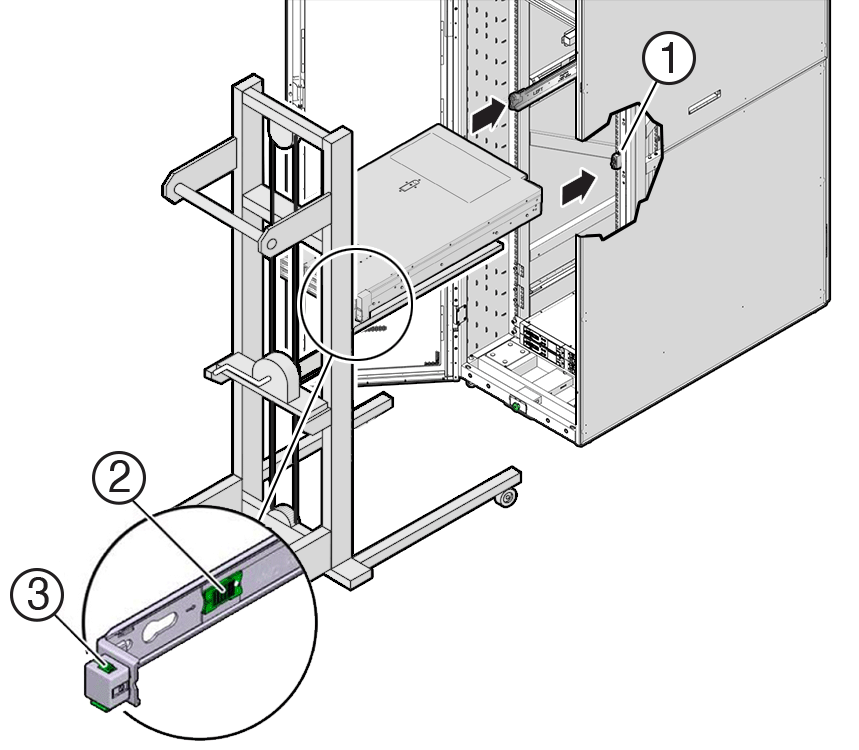

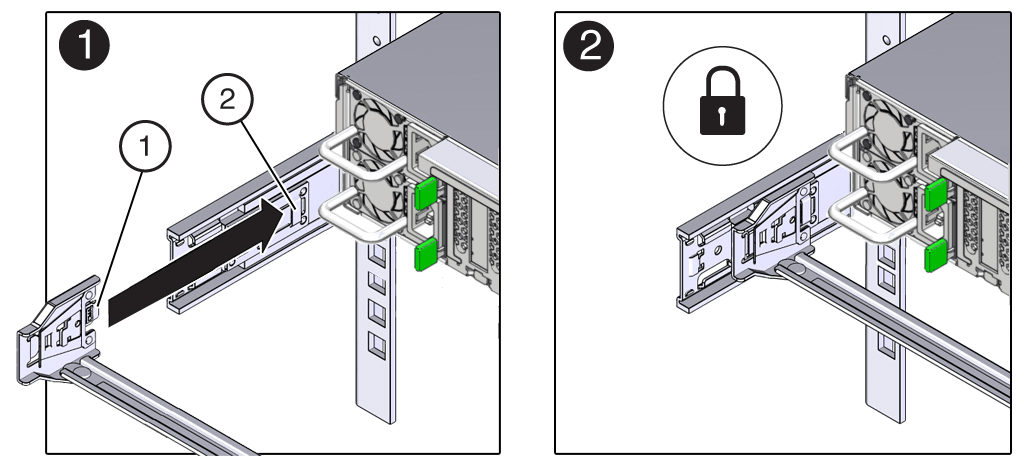

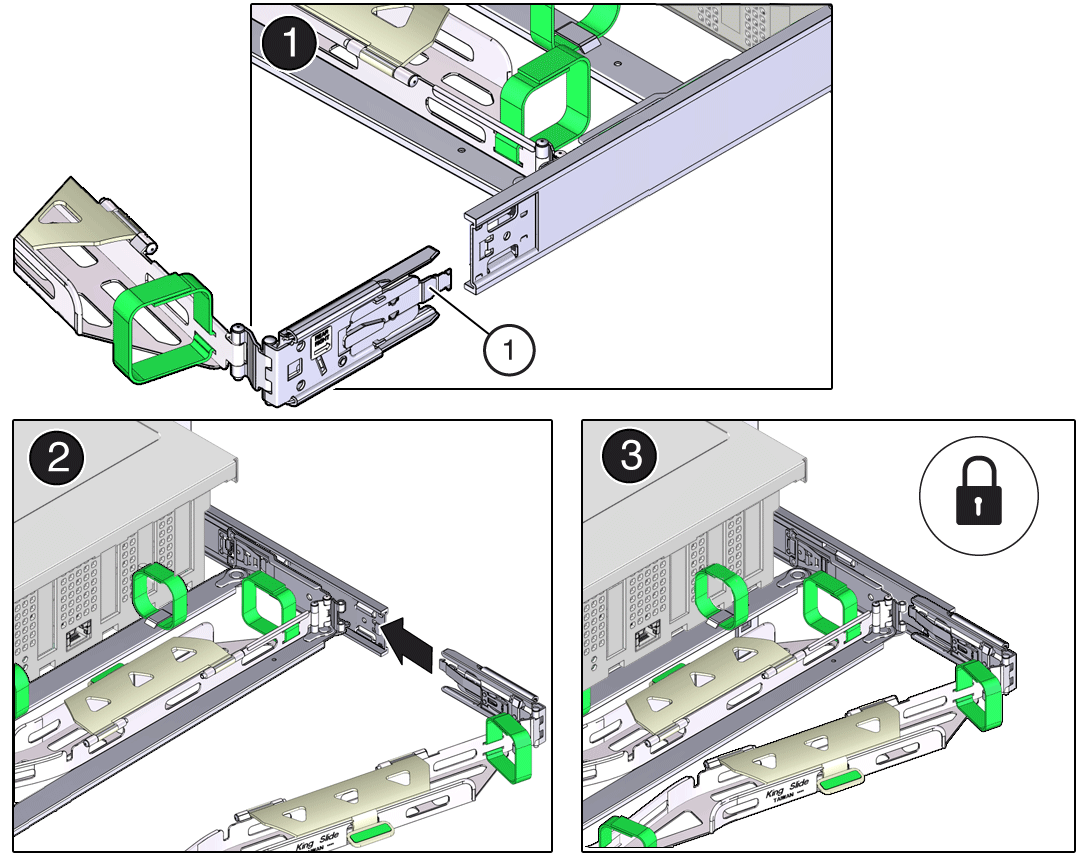

Install the Server Into the Slide-Rail Assemblies

Use this procedure to install the server chassis with mounting brackets into the slide-rail assemblies that are mounted to the rack.

Always load equipment into a rack from the bottom up so that the rack does not become top-heavy and tip over. Extend the rack anti-tilt bar to prevent the rack from tipping during equipment installation.

-

Push the slide-rails as far as possible into the slide-rail assemblies in the rack.

-

Position the server so that the back ends of the mounting brackets are aligned with the slide-rail assemblies that are mounted in the rack.

-

Insert the mounting brackets into the slide-rails, and then push the server into the rack until the mounting brackets are flush with the slide-rail stops (approximately 30 cm or 12 inches).

| Call Out | Description |
|---|---|
| 1 | Inserting mounting bracket into slide-rail |
| 2 | Slide-rail release button |
| 3 | Slide-rail lock |

-

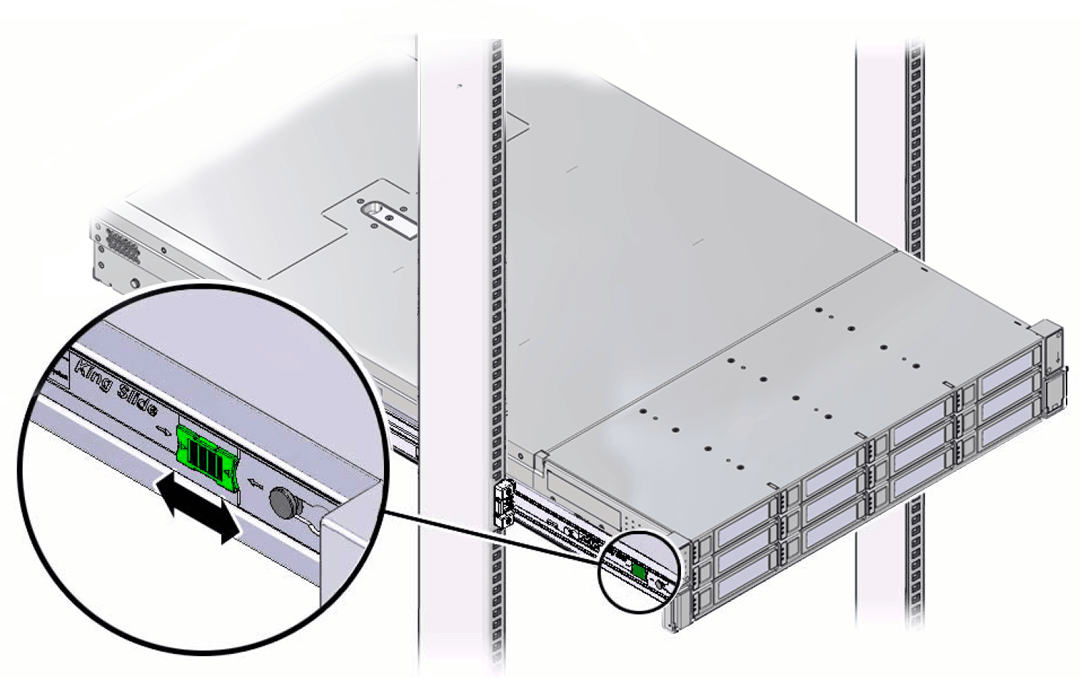

Simultaneously push and hold the green slide-rail release buttons on each mounting bracket while you push the server into the rack. Continue pushing the server into the rack until the slide-rail locks (on the front of the mounting brackets) engage the slide-rail assemblies with an audible click.

Caution:

Before you install the optional cable management arm, verify that the server is securely mounted in the rack and that the slide-rail locks are engaged with the mounting brackets.

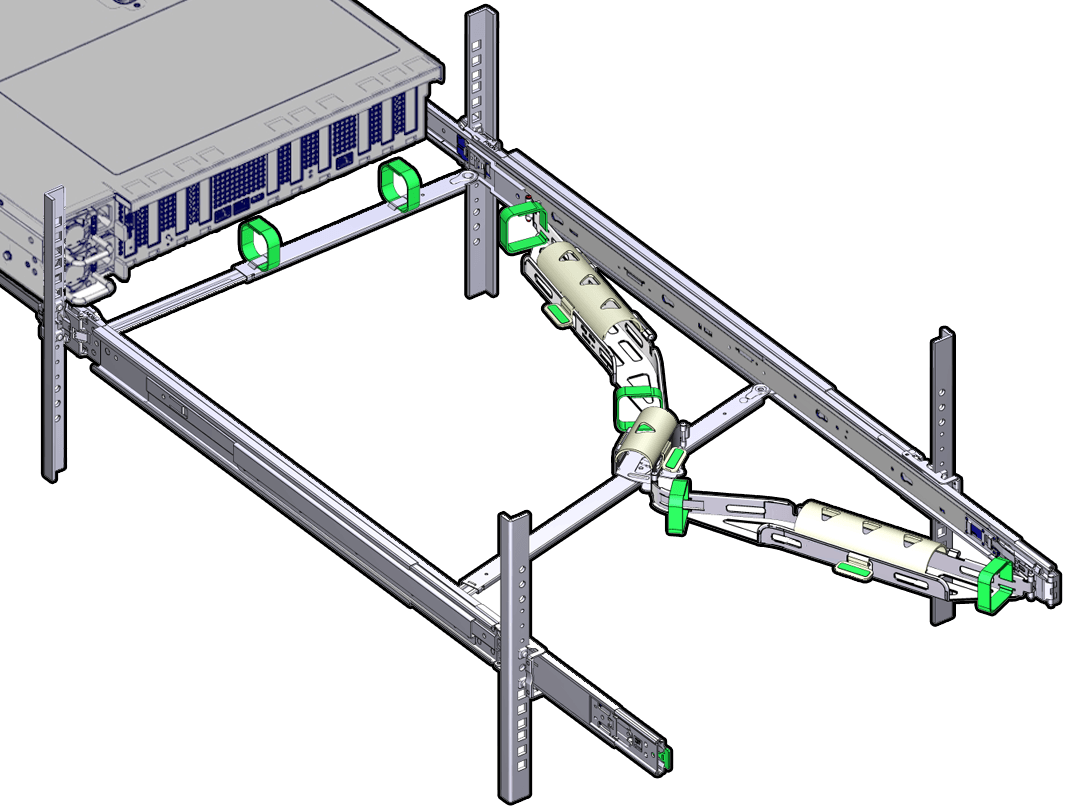

Install the Cable Management Arm (Optional)

Follow this procedure to install the cable management arm (CMA), which you can use to manage cables connected to the back of the server.

Before you install the CMA, ensure that the right-angle AC power cables are long enough to connect to the rack-mounted servers when routed through the CMA.

-

Unpack the CMA, which contains the following components.

| Call Out | Description |
|---|---|
| 1 | Connector A |
| 2 | Front slide bar |
| 3 | Velcro straps (6) |
| 4 | Connector B |
| 5 | Connector C |
| 6 | Connector D |
| 7 | Slide-rail latching bracket (used with connector D) |
| 8 | Back slide bar |
| 9 | Server flat cable covers |
| 10 | Server round cable covers (optional) |

-

Prepare the CMA for installation.

-

Ensure that you install the flat cable covers for your server on the CMA.

-

Ensure that the six Velcro straps are threaded into the CMA. Ensure that the two Velcro straps located on the front slide bar are threaded through the opening in the top of the slide bar, as shown in the illustration in Step 1. This prevents the Velcro straps from interfering with the expansion and contraction of the slide bar when the server is extended out of the rack and returned to the rack.

-

To make it easier to install the CMA, extend the server approximately 13 cm (5 inches) out of the front of the rack.

-

Take the CMA to the back of the equipment rack, and ensure that you have adequate room to work at the back of the server. References to "left" or "right" in this procedure assume that you are facing the back of the equipment rack. Throughout this installation procedure, support the CMA and do not allow it to hang under its own weight until it is secured at all four attachment points.

-

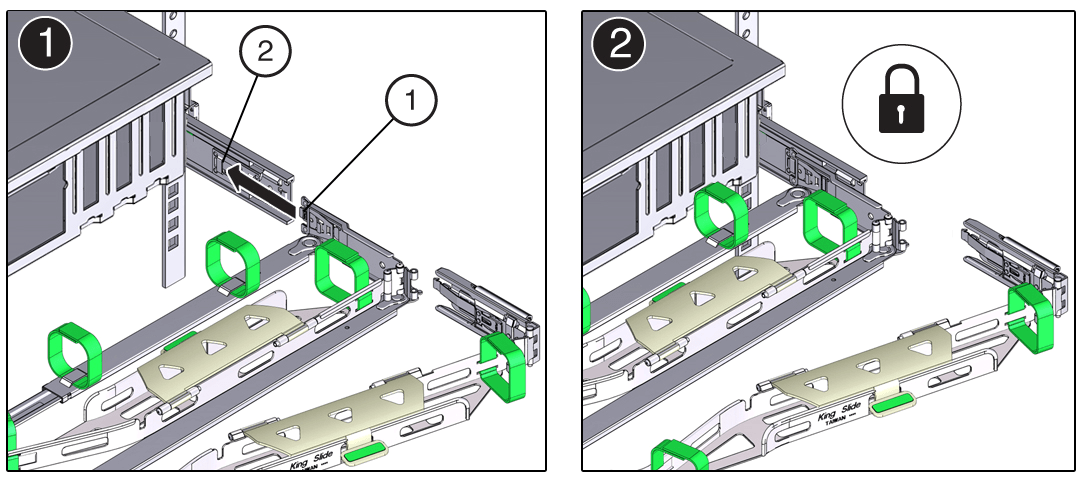

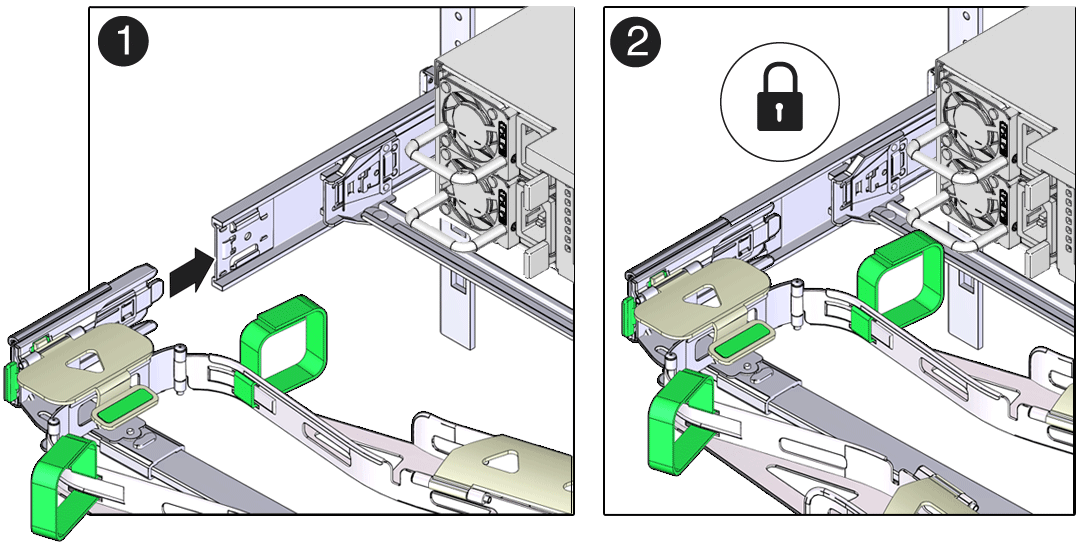

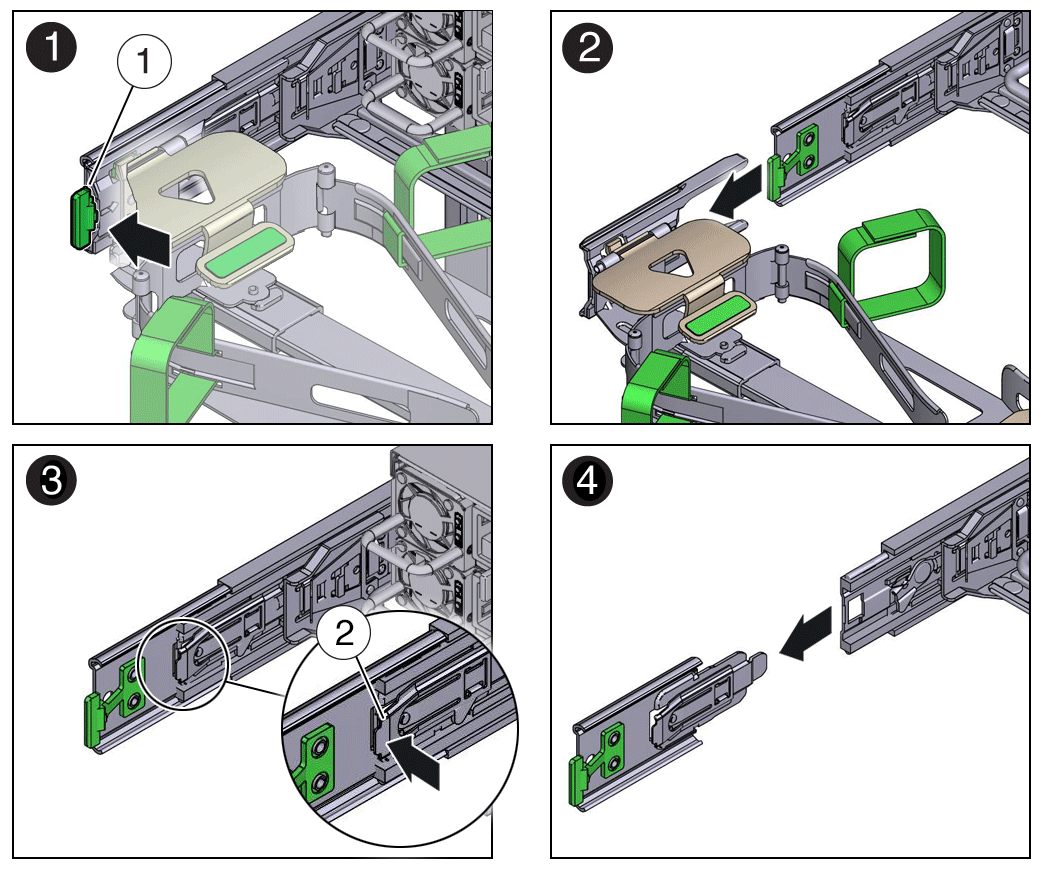

To install CMA connector A into the left slide-rail:

-

Insert CMA connector A into the front slot on the left slide-rail until it locks into place with an audible click [1 and 2]. The connector A tab (callout 1) goes into the slide-rail front slot (callout 2).

-

Gently tug on the left side of the front slide bar to verify that connector A is properly seated.

| Call Out | Description |
|---|---|
| 1 | Connector A tab |
| 2 | Left slide-rail front slot |

-

To install CMA connector B into the right slide-rail:

-

Insert CMA connector B into the front slot on the right slide-rail until it locks into place with an audible click [1 and 2]. The connector B tab (callout 1) goes into the slide-rail front slot (callout 2).

-

Gently tug on the right side of the front slide bar to verify that connector B is properly seated.

| Call Out | Description |
|---|---|
| 1 | Connector B tab |
| 2 | Right slide-rail front slot |

-

To install CMA connector C into the right slide-rail:

-

Align connector C with the slide-rail so that the locking spring (callout 1) is positioned on the inside (server side) of the right slide-rail [1].

| Call Out | Description |
|---|---|
| 1 | Connector C locking spring |

-

Insert connector C into the right slide-rail until it locks into place with an audible click [2 and 3].

-

Gently tug on the right side of the CMA back slide bar to verify that connector C is properly seated.

-

To prepare CMA connector D for installation, remove the tape that secures the slide-rail latching bracket to connector D, and ensure that the latching bracket is properly aligned with connector D [1 and 2]. The CMA is shipped with the slide-rail latching bracket taped to connector D. You must remove the tape before you install this connector.

-

To install CMA connector D into the left slide-rail:

-

While holding the slide-rail latching bracket in place, insert connector D and its associated slide-rail latching bracket into the left slide-rail until connector D locks into place with an audible click [1 and 2]. When inserting connector D into the slide-rail, the preferred and easier method is to install connector D and the latching bracket as one assembly into the slide-rail.

-

Gently tug on the left side of the CMA back slide bar to verify that connector D is properly seated. The slide-rail latching bracket has a green release tab. Use the tab to release and remove the latching bracket so that you can remove connector D.

-

Gently tug on the four CMA connection points to ensure that the CMA connectors are fully seated before you allow the CMA to hang by its own weight.

-

To verify that the slide-rails and the CMA are operating properly before routing cables through the CMA:

-

Ensure that the rack anti-tilt bar is extended to prevent the rack from tipping forward when the server is extended. For instructions to stabilize the rack, see Stabilize the Rack.

-

Slowly pull the server out of the rack until the slide-rails reach their stops.

-

Inspect the attached cables for any binding or kinks.

-

Verify that the CMA extends fully with the slide-rails.

-

To return the server to the rack:

-

Simultaneously pull and hold the two green release tabs (one on each side of the server) toward the front of the server while you push the server into the rack. As you push the server into the rack, verify that the CMA retracts without binding.

-

To pull the green release tabs, place your finger in the center of each tab, not on the end, and apply pressure as you pull the tab toward the front of the server.

-

Continue pushing the server into the rack until the slide-rail locks (on the front of the server) engage the slide-rail assemblies. You hear a click when the server is in the normal rack position.

-

Connect cables to the server, as required. See Reconnect Power and Data Cables.

-

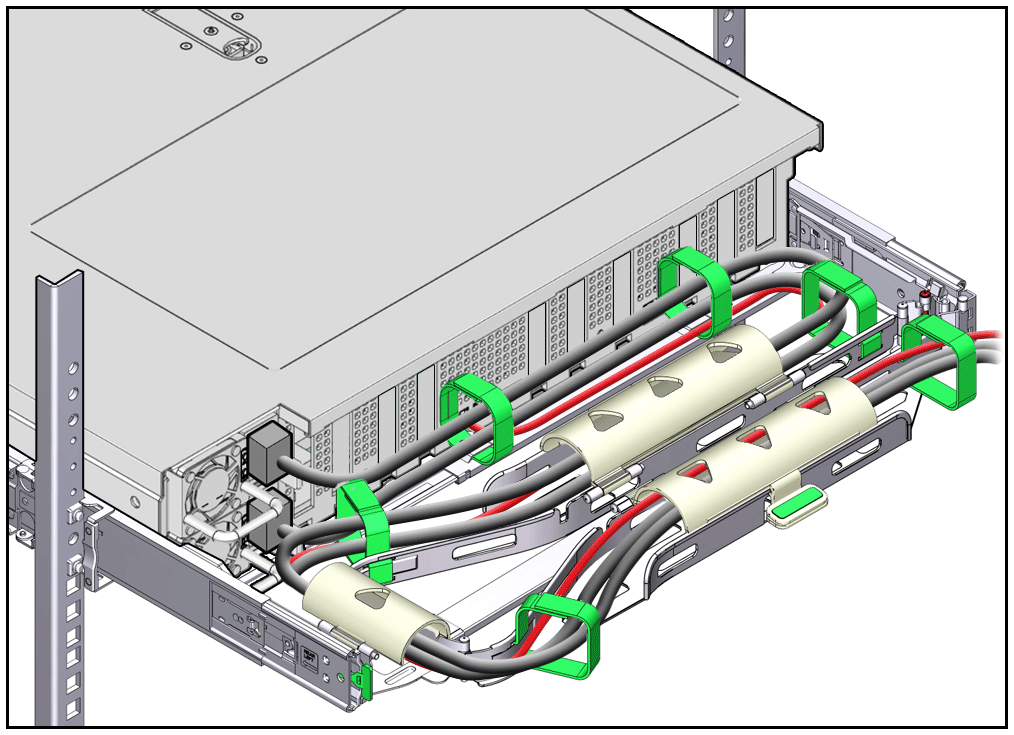

Open the CMA cable covers, route the server cables through the CMA cable troughs (in the order specified in the following steps), close the cable covers, and secure the cables with the six Velcro straps.

-

First through the front-most cable trough.

-

Then through the small cable trough.

-

Then through the back-most cable trough.

-

Ensure that the secured cables do not extend above the top or below the bottom of the server to which they are attached. Otherwise, the cables might snag on other equipment installed in the rack when the server is extended from the rack or returned to the rack.

-

If necessary, bundle the cables with additional Velcro straps to ensure that they stay clear of other equipment. If you need to install additional Velcro straps, wrap the straps around the cables only, not around any of the CMA components. Otherwise, expansion and contraction of the CMA slide bars might be hindered when the server is extended from the rack and returned to the rack.

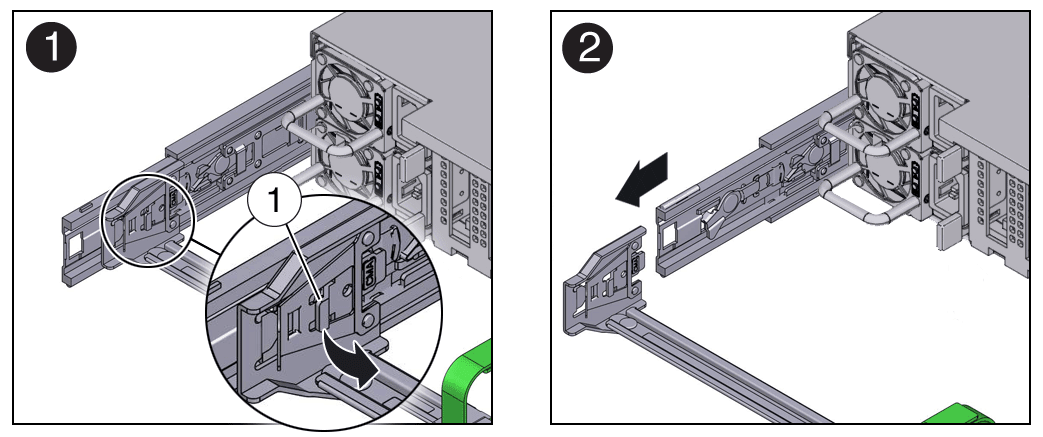

Remove the Cable Management Arm

Follow this procedure to remove the cable management arm (CMA).

Before you begin this procedure, refer to the illustration provided in Step 1 in the procedure Install the Cable Management Arm to identify CMA connectors A, B, C, and D. Disconnect the CMA connectors in the reverse of the order in which you installed them, that is, disconnect connector D first, followed by C, B, and A.

Throughout this procedure, after you disconnect any of the four CMA connectors, do not allow the CMA to hang under its own weight.

References to “left” or “right” in this procedure assume that you are facing the back of the equipment rack.

-

To prevent the rack from tipping forward when the server is extended, ensure that the rack anti-tilt bar is extended. For instructions to stabilize the rack, see Stabilize the Rack.

-

To make it easier to remove the CMA, extend the server approximately 13 cm (5 inches) out of the front of the rack.

-

To remove the cables from the CMA:

-

Disconnect all cables from the back of the server.

-

If applicable, remove any additional Velcro straps that were installed to bundle the cables.

-

Unwrap the six Velcro straps that are securing the cables.

-

Open the three cable covers to the fully opened position.

-

Remove the cables from the CMA and set them aside.

-

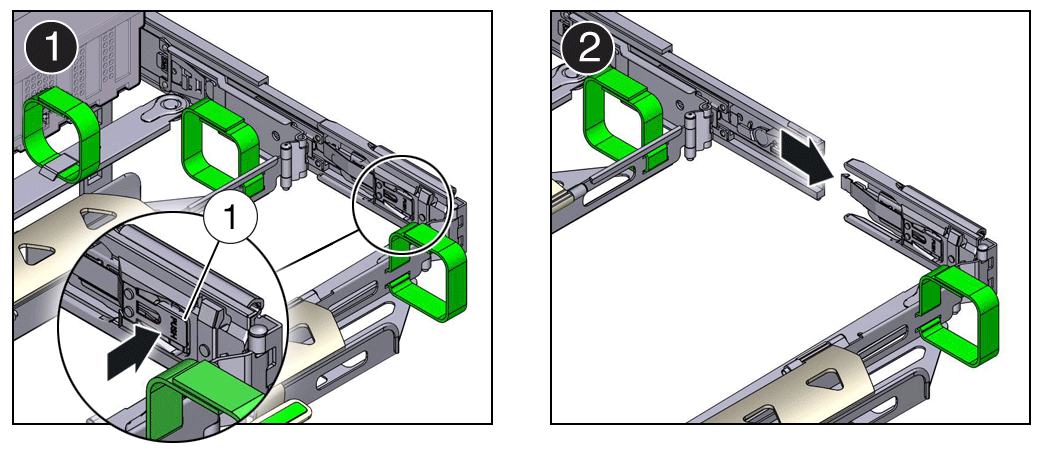

To disconnect connector D:

-

Press the green release tab (callout 1) on the slide-rail latching bracket toward the left, and slide connector D out of the left slide-rail [1 and 2]. When you slide connector D out of the left slide-rail, the slide-rail latching bracket portion of the connector remains in place. You disconnect the latching bracket in the next step. After you disconnect connector D, do not allow the CMA to hang under its own weight. Throughout the remainder of this procedure, support the CMA until all the remaining connectors are disconnected and the CMA can be placed on a flat surface.

| Call Out | Description |
|---|---|
| 1 | Connector D release tab (green) |
| 2 | Slide-rail latching bracket release tab (labeled PUSH) |

-

Use your right hand to support the CMA and use your left thumb to push in (toward the left) on the slide-rail latching bracket release tab labeled PUSH (callout 2), and pull the latching bracket out of the left slide-rail and put it aside [3 and 4].

-

To disconnect connector C:

-

Place your left arm under the CMA to support it.

-

Use your right thumb to push in (toward the right) on the connector C release tab labeled PUSH (callout 1), and pull connector C out of the right slide-rail [1 and 2].

| Call Out | Description |
|---|---|
| 1 | Connector C release tab (labeled PUSH) |

-

To disconnect connector B:

-

Place your right arm under the CMA to support it and grasp the back end of connector B with your right hand.

-

Use your left thumb to pull the connector B release lever to the left, away from the right slide-rail (callout 1), and use your right hand to pull the connector out of the slide-rail [1 and 2].

| Call Out | Description |
|---|---|
| 1 | Connector B release lever |

-

To disconnect connector A:

-

Place your left arm under the CMA to support it and grasp the back end of connector A with your left hand.

-

Use your right thumb to pull the connector A release lever to the right, away from the left slide-rail (callout 1), and use your left hand to pull the connector out of the slide-rail [1 and 2].

| Call Out | Description |
|---|---|
| 1 | Connector A release lever |

-

Remove the CMA from the rack and place it on a flat surface.

-

Go to the front of the server and push it back into the rack.

Operating System Installation Process

Each operating system has specific steps to follow to complete the installation. Refer to the OS documentation. The general process for all operating system installations is as follows.

-

Review the server Known Issues.

-

Confirm that the operating system version is supported on the server.

-

Install the server hardware.

-

Connect to the system.

-