Data Loading Workflow

The primary job of a Data Administrator is to prepare, upload, and load data into the application staging tables.

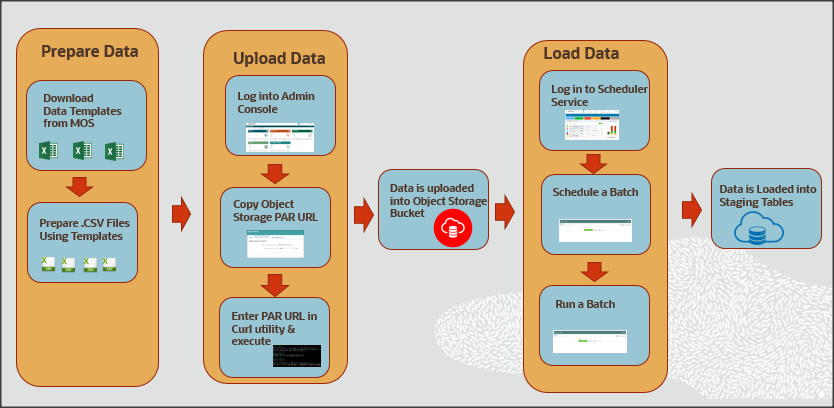

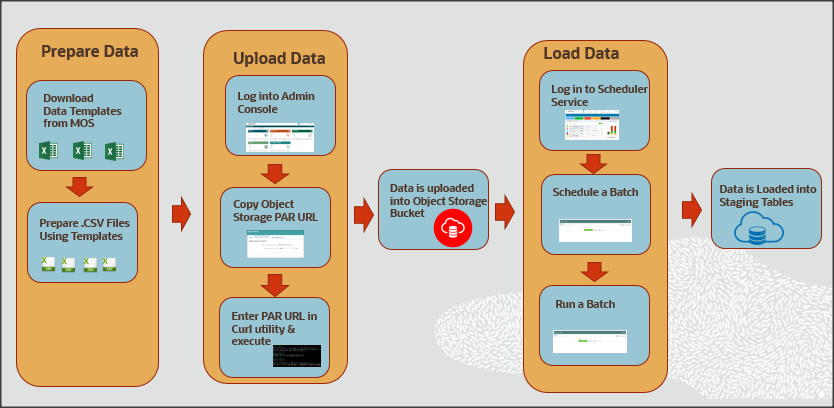

The following illustration provides the workflow of the OFS FCCM Cloud Service Data Loading Service.

Figure 1-1 Data Loading Workflow

As a Data Administrator, you must download the specified data templates from the My Oracle Support page and then, every day, export the bank's data into those templates in the .csv format using the ETL process. If a .csv file is larger than 100 MiB, it is recommended that you split it into two or more files. For example, <filename>_1.csv, <filename>_2.csv, <filename>_3.csv, and so on. Smaller files upload faster and help to load data swiftly into the application staging tables.
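The splitting step described above can be sketched in Python as follows. This is a minimal illustration, not part of the service: the function name `split_csv` is hypothetical, and the sketch assumes that the first line of the file is a header row that each part should repeat and that no field contains an embedded line break. Neither assumption is stated in this guide, so check them against the data templates before relying on the output.

```python
from pathlib import Path

MAX_PART_BYTES = 100 * 1024 * 1024  # 100 MiB, the threshold mentioned above


def split_csv(source, max_bytes=MAX_PART_BYTES):
    """Split a .csv file into <stem>_1.csv, <stem>_2.csv, ... beside the original.

    Assumption: the first line is a header that is copied into every part.
    Returns the list of files to upload (the original file if no split is needed).
    """
    source = Path(source)
    if source.stat().st_size <= max_bytes:
        return [source]

    parts = []
    out = None
    written = 0
    with source.open("rb") as src:
        header = src.readline()
        for line in src:
            # Start a new part when this row would push the current part over
            # the limit, unless the current part holds only the header.
            over_limit = written + len(line) > max_bytes and written > len(header)
            if out is None or over_limit:
                if out is not None:
                    out.close()
                part = source.with_name(f"{source.stem}_{len(parts) + 1}{source.suffix}")
                out = part.open("wb")
                out.write(header)
                written = len(header)
                parts.append(part)
            out.write(line)
            written += len(line)
    if out is not None:
        out.close()
    return parts or [source]
```

Working in binary mode copies each row byte for byte, so quoting and encoding are left exactly as the ETL process produced them.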

- Log in to the Admin Console and go to the Object Storage Standard pane.

- Copy the Pre-Authenticated Request (PAR) URL of the Object Storage Standard bucket.

- Open an HTTP utility such as cURL, enter the data file path, the PAR URL, and the name of the .csv file, and then run the command. The data is uploaded into the Object Storage Standard bucket.
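The upload step above can also be scripted. The following Python sketch is one possible equivalent of the cURL call, under the assumption that the bucket accepts an HTTP PUT to the PAR URL with the file name appended as the object name; confirm this against the PAR URL you copy from the Admin Console. The helper names `build_object_url` and `upload_csv` are hypothetical, and the URL in the usage note is a placeholder.

```python
import urllib.request
from pathlib import Path
from urllib.parse import quote


def build_object_url(par_url, object_name):
    """Append the object (file) name to the bucket PAR URL."""
    return par_url.rstrip("/") + "/" + quote(object_name)


def upload_csv(par_url, csv_path, timeout=300):
    """PUT one .csv file into the bucket and return the HTTP status code.

    Assumption: the PAR URL permits writes and takes the object name as the
    final path segment.
    """
    csv_path = Path(csv_path)
    request = urllib.request.Request(
        build_object_url(par_url, csv_path.name),
        data=csv_path.read_bytes(),  # whole file in memory; parts stay at or below 100 MiB
        headers={"Content-Type": "text/csv"},
        method="PUT",
    )
    # urlopen raises urllib.error.HTTPError if the server rejects the upload.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

For example, `upload_csv("https://<PAR URL>", "customers_1.csv")` would send the file under the object name `customers_1.csv`. Uploading a file with the same name again replaces the earlier object, which matches the overwrite behavior described in Table 1-1.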

The Object Storage Standard bucket stores data for seven days. After seven days, data is auto-archived in the Object Storage Archive bucket.

To process data files from the Object Storage Standard bucket into the staging tables, log in to Scheduler Service, go to Schedule Batch, and then select the AMLDataloading batch. Run the batch as required, for example, daily or weekly. When the batch completes successfully, the business data is loaded into the application staging tables.

Note:

The PAR URL is refreshed after seven days.

The following table serves as a quick reference to the Data Loading Workflow.

Table 1-1 Data Loading Workflow

| Workflow | Description |
|---|---|
| Preparing Data | Prepare the business data in the required format using the specified templates to load into the application staging area. This section also explains the type of data files you are required to create, the size of data files, and the template in which you must provide the data. |
| Uploading Data Files | After you prepare data in the required templates in the .csv format, use the PAR URL provided for the Object Storage to access the bucket. Enter the .csv file path, the PAR URL, and the .csv file name in an HTTP utility such as cURL to upload data files into the Object Storage. The PAR URL that you use to access the Object Storage is refreshed every seven days. Multiple users can load data into the Object Storage concurrently from different locations. You can modify the .csv data files and upload them using the same PAR URL. The modified data files overwrite the previously loaded data files in the Object Storage. |
| Loading Data Files | Data that is uploaded into the Object Storage is loaded into the application staging tables. The Scheduler Service allows you to process data from the Object Storage to the staging tables by scheduling and running batches. |