Using systemd to Manage cgroups v2

The preferred method of managing resource allocation with cgroups v2 is

to use the control group functionality provided by systemd.

By default, systemd creates a cgroup folder for each

systemd service set up on the host. systemd names

these folders using the format servicename.service,

where servicename is the name of the service associated with the

folder.

To see a list of the cgroup folders systemd creates for

the services, run the ls command on the

system.slice branch of the cgroup file

system as shown in the following sample code block:

ls /sys/fs/cgroup/system.slice/

... ... ...

app_service1.service cgroup.subtree_control httpd.service

app_service2.service chronyd.service ...

... crond.service ...

cgroup.controllers dbus-broker.service ...

cgroup.events dtprobed.service ...

cgroup.freeze firewalld.service ...

... gssproxy.service ...

... ... ...

The folders app_service1.service and app_service2.service represent custom application services you might have on your system.

In addition to service control groups, systemd also creates a

cgroup folder for each user on the host. To see the

cgroups created for each user you can run the ls

command on the user.slice branch of the cgroup

file system as shown in the following sample code block:

ls /sys/fs/cgroup/user.slice/

cgroup.controllers cgroup.subtree_control user-1001.slice

cgroup.events cgroup.threads user-982.slice

cgroup.freeze cgroup.type ...

... ... ...

... ... ...

... ... ...

In the preceding code block:

- Each user cgroup folder is named using the format user-UID.slice. For example, the control group user-1001.slice is for the user whose UID is 1001.
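As a rough illustration of this naming convention, the following shell sketch recovers the UID from a user slice folder name. The function name slice_uid is hypothetical and is not part of systemd. The loop reports whichever user-*.slice folders exist on the host, and prints nothing if there are none.

```shell
# Print the UID encoded in a user slice name such as user-1001.slice.
# slice_uid is a hypothetical helper for illustration, not a systemd tool.
slice_uid() {
    local name
    name="${1##*/}"        # drop any leading directory components
    name="${name#user-}"   # drop the "user-" prefix
    printf '%s\n' "${name%.slice}"   # drop the ".slice" suffix
}

# Report the UID for each user slice present on this host, if any.
for dir in /sys/fs/cgroup/user.slice/user-*.slice; do
    if [ -d "$dir" ]; then
        echo "$dir belongs to UID $(slice_uid "$dir")"
    fi
done
```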

systemd provides high-level access to the cgroups and

kernel resource controller features so you do not have to access the file system

directly. For example, to set the CPU weight of a service called

app_service1.service, you might choose to run

the systemctl set-property command as follows:

sudo systemctl set-property app_service1.service CPUWeight=150

Thus, systemd enables you to manage resource distribution at an

application level, rather than the process PID level used when configuring

cgroups without using systemd functionality.
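For comparison, the following shell sketch shows the kind of direct file system access that systemctl set-property saves you from. It assumes the service runs under system.slice and that the CPU controller is enabled for it, in which case cgroups v2 exposes the weight in a file named cpu.weight. The helper name service_cgroup_file is hypothetical.

```shell
# Build the path of a cgroup v2 interface file for a service in system.slice.
# service_cgroup_file is a hypothetical helper used only for this illustration.
service_cgroup_file() {
    printf '/sys/fs/cgroup/system.slice/%s/%s\n' "$1" "$2"
}

weight_file=$(service_cgroup_file app_service1.service cpu.weight)

# Read the weight only if the file exists on this host.
if [ -r "$weight_file" ]; then
    echo "CPU weight from the cgroup file system: $(cat "$weight_file")"
else
    echo "$weight_file not found; the service or CPU controller is not present here"
fi

# The systemd-level equivalent, which does not depend on the cgroup path:
#   systemctl show --property=CPUWeight app_service1.service
```

The systemd command keeps working if the service is later moved to a different slice, whereas the hard-coded path does not.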

About Slices and Resource Allocation in systemd

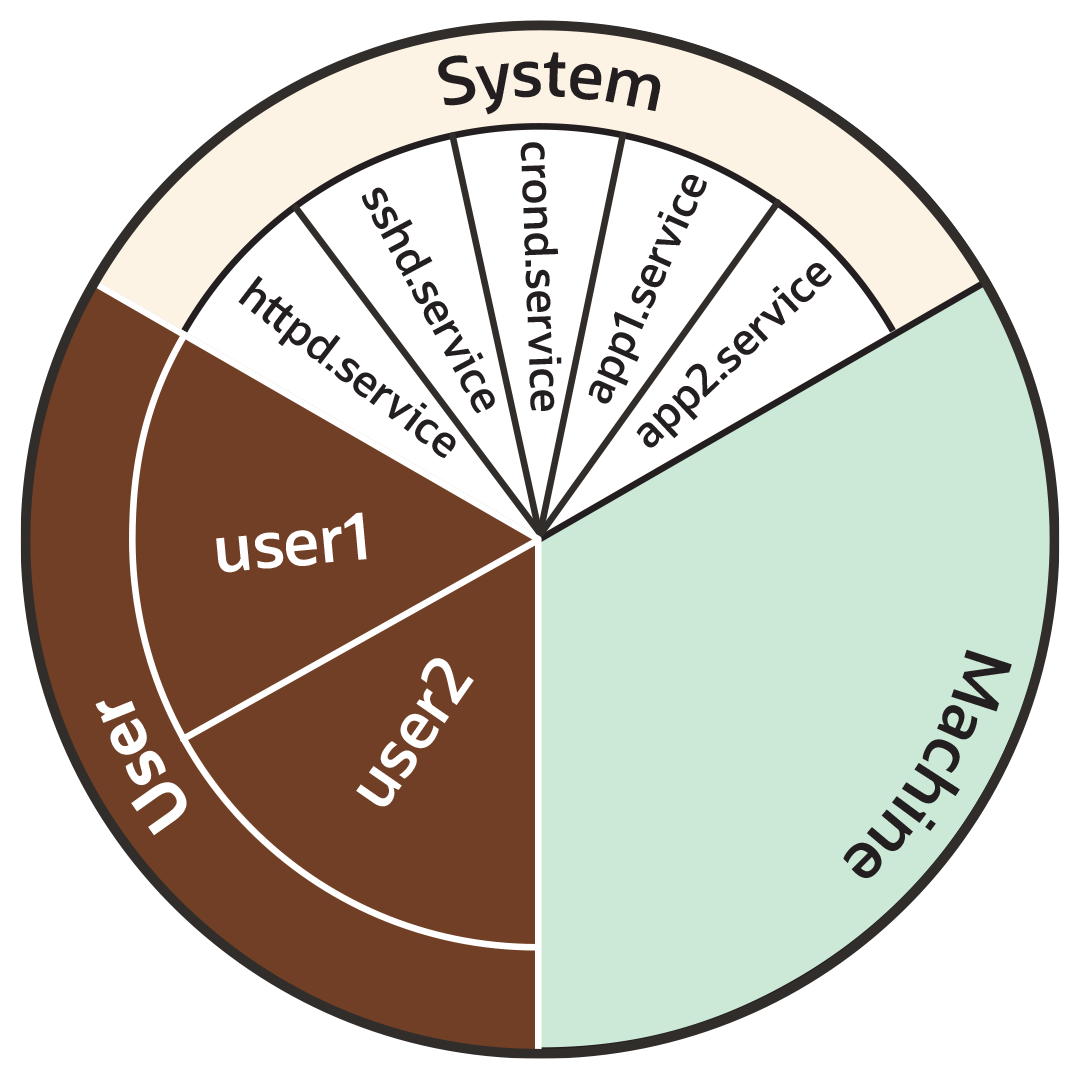

This section looks at the way systemd initially divides each of the

default kernel controllers, for example CPU, memory

and blkio, into portions called "slices" as illustrated by the

following example pie chart:

Note:

You can also create your own custom slices for resource distribution, as shown in section Setting Resource Controller Options and Creating Custom Slices.Figure 7-1 Pie chart illustrating distribution in a resource controller, such as CPU or Memory

As the preceding pie chart shows, by default each resource controller is divided equally between the following 3 slices:

- System (system.slice).

- User (user.slice).

- Machine (machine.slice).

The following list looks at each slice more closely. For the purposes of discussion, the examples in the list focus on the CPU controller.

- System (system.slice) - This resource slice is used for managing resource allocation amongst daemons and service units.

  As shown in the preceding example pie chart, the system slice is divided into further sub-slices. For example, in the case of CPU resources, we might have sub-slice allocations within the system slice that include the following:

  - httpd.service (CPUWeight=100)

  - sshd.service (CPUWeight=100)

  - crond.service (CPUWeight=100)

  - app1.service (CPUWeight=100)

  - app2.service (CPUWeight=100)

  The services app1.service and app2.service represent custom application services you might have running on your system.

- User (user.slice) - This resource slice is used for managing resource allocation amongst user sessions. A single slice is created for each UID irrespective of how many logins the associated user has active on the server. Continuing with our pie chart example, the sub-slices might be as follows:

  - user1 (CPUWeight=100, UID=982)

  - user2 (CPUWeight=100, UID=1001)

- Machine (machine.slice) - This slice of the resource is used for managing resource allocation amongst hosted virtual machines, such as KVM guests, and Linux Containers. The machine slice is only present on a server if the server is hosting virtual machines or Linux Containers.

Note:

Share allocations do not set a maximum limit for a resource.

For instance, in the preceding examples, the slice user.slice has 2

users: user1 and user2. Each user is allocated

an equal share of the CPU resource available to the parent

user.slice. However, if the processes associated with

user1 are idle, and do not require any CPU resource, then its

CPU share is available for allocation to user2 if needed. In such

a situation, user2 might even be allocated the entire CPU

resource apportioned to the parent user.slice if it is not required by

other users.

To cap the CPU resource, you would need to set the CPUQuota property to

the required percentage.
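The effect of weights can be worked through with simple arithmetic: each active sibling receives its weight divided by the sum of the weights of all siblings that currently want CPU time. The following shell sketch assumes that simplified model and uses a hypothetical helper named cpu_share. The real scheduler also reacts to load, so treat the figures as an approximation.

```shell
# Print the percentage of the parent's CPU time a control group can expect.
# Usage: cpu_share OWN_WEIGHT ACTIVE_WEIGHT...
# The first argument is the group's own weight. The remaining arguments are
# the weights of every sibling currently competing for CPU time, including
# the group itself. cpu_share is a hypothetical helper for illustration.
cpu_share() {
    local own total w
    own="$1"; shift
    total=0
    for w in "$@"; do total=$((total + w)); done
    awk -v own="$own" -v total="$total" 'BEGIN { printf "%.1f\n", 100 * own / total }'
}

cpu_share 100 100 100   # user1 and user2 both busy: prints 50.0
cpu_share 100 100       # user1 idle, only user2 competing: prints 100.0
```

A weight therefore only matters while siblings compete. A CPUQuota setting, by contrast, caps the group even when its siblings are idle.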

Slices, Services, and Scopes in the cgroup Hierarchy

The pie chart analogy used in the preceding sections is a helpful way to conceptualize

the division of resources into slices. However, in terms of structural organization, the

control groups are arranged in a hierarchy. You can view the systemd

control group hierarchy on your system by running the systemd-cgls

command as follows:

Tip:

To see the entire cgroup hierarchy, starting from the root

slice -.slice, as in the following example, ensure you run

systemd-cgls from outside of the control group mount point

/sys/fs/cgroup/. If you run the command from within

/sys/fs/cgroup/, the output starts from the

cgroup location from which the command was run. See

systemd-cgls(1) for more information.

systemd-cgls

Control group /:
-.slice
...
├─user.slice (#1429)
│ → user.invocation_id: 604cf5ef07fa4bb4bb86993bb5ec15e0
│ ├─user-982.slice (#4131)
│ │ → user.invocation_id: 9d0d94d7b8a54bcea2498048911136c8
│ │ ├─session-c1.scope (#4437)
│ │ │ ├─2416 /usr/bin/sudo -u ocarun /usr/libexec/oracle-cloud-agent/plugins/runcommand/runcommand
│ │ │ └─2494 /usr/libexec/oracle-cloud-agent/plugins/runcommand/runcommand
│ │ └─user@982.service … (#4199)
│ │   → user.delegate: 1
│ │   → user.invocation_id: 37c7aed7aa6e4874980b79616acf0c82
│ │   └─init.scope (#4233)
│ │     ├─2437 /usr/lib/systemd/systemd --user
│ │     └─2445 (sd-pam)
│ └─user-1001.slice (#7225)
│   → user.invocation_id: ce93ad5f5299407e9477964494df63b7
│   ├─session-2.scope (#7463)
│   │ ├─20304 sshd: oracle [priv]
│   │ ├─20404 sshd: oracle@pts/0
│   │ ├─20405 -bash
│   │ ├─20441 systemd-cgls
│   │ └─20442 less
│   └─user@1001.service … (#7293)
│     → user.delegate: 1
│     → user.invocation_id: 70284db060c1476db5f3633e5fda7fba
│     └─init.scope (#7327)
│       ├─20395 /usr/lib/systemd/systemd --user
│       └─20397 (sd-pam)
├─init.scope (#19)
│ └─1 /usr/lib/systemd/systemd --switched-root --system --deserialize 28
└─system.slice (#53)
  ...
  ├─dbus-broker.service (#2737)
  │ → user.invocation_id: 2bbe054a2c4d49809b16cb9c6552d5a6
  │ ├─1450 /usr/bin/dbus-broker-launch --scope system --audit
  │ └─1457 dbus-broker --log 4 --controller 9 --machine-id 852951209c274cfea35a953ad2964622 --max-bytes 536870912 --max-fds 4096 --max-matches 131072 --audit
  ...
  ├─chronyd.service (#2805)
  │ → user.invocation_id: e264f67ad6114ad5afbe7929142faa4b
  │ └─1482 /usr/sbin/chronyd -F 2
  ├─auditd.service (#2601)
  │ → user.invocation_id: f7a8286921734949b73849b4642e3277
  │ ├─1421 /sbin/auditd
  │ └─1423 /usr/sbin/sedispatch
  ├─tuned.service (#3349)
  │ → user.invocation_id: fec7f73678754ed687e3910017886c5e
  │ └─1564 /usr/bin/python3 -Es /usr/sbin/tuned -l -P
  ├─systemd-journald.service (#1837)
  │ → user.invocation_id: bf7fb22ba12f44afab3054aab661aedb
  │ └─1068 /usr/lib/systemd/systemd-journald
  ├─atd.service (#3961)
  │ → user.invocation_id: 1c59679265ab492482bfdc9c02f5eec5
  │ └─2146 /usr/sbin/atd -f
  ├─sshd.service (#3757)
  │ → user.invocation_id: 57e195491341431298db233e998fb180
  │ └─2097 sshd: /usr/sbin/sshd -D [listener] 0 of 10-100 startups
  ├─crond.service (#3995)
  │ → user.invocation_id: 4f5b380a53db4de5adcf23f35d638ff5
  │ └─2150 /usr/sbin/crond -n
  ...

The preceding sample output shows how all "*.slice" control groups

reside under the root slice -.slice. Beneath the root slice you can

see the user.slice and system.slice control groups,

each with their own child cgroup sub-slices.

Examining the systemd-cgls command output, you can see how, with the exception of the root slice -.slice, all processes are on leaf nodes. This arrangement is enforced by cgroups v2, in a rule called the "no internal processes" rule. See cgroups(7) for more information about the "no internal processes" rule.

The output in the preceding systemd-cgls command example also shows how slices can have descendant child control groups that are systemd scopes. systemd scopes are reviewed in the following section.

systemd Scopes

A systemd scope is a systemd unit type that groups together system service worker processes that have been launched independently of systemd. The scope units are transient cgroups created programmatically using the bus interfaces of systemd.

For example, in the following sample code, the user with UID 1001 has run the systemd-cgls command, and the output shows that session-2.scope has been created for processes the user has spawned independently of systemd (including the process for the command itself, 21380 sudo systemd-cgls):

Note:

In the following example, the command has been run from within the control group mount point /sys/fs/cgroup/. Hence,

instead of the root slice, the output starts from the cgroup location

from which the command was run.

sudo systemd-cgls

Working directory /sys/fs/cgroup:
...
├─user.slice (#1429)
│ → user.invocation_id: 604cf5ef07fa4bb4bb86993bb5ec15e0
│ → trusted.invocation_id: 604cf5ef07fa4bb4bb86993bb5ec15e0
...
│ └─user-1001.slice (#7225)
│   → user.invocation_id: ce93ad5f5299407e9477964494df63b7
│   → trusted.invocation_id: ce93ad5f5299407e9477964494df63b7
│   ├─session-2.scope (#7463)
│   │ ├─20304 sshd: oracle [priv]
│   │ ├─20404 sshd: oracle@pts/0
│   │ ├─20405 -bash
│   │ ├─21380 sudo systemd-cgls
│   │ ├─21382 systemd-cgls
│   │ └─21383 less
│   └─user@1001.service … (#7293)
│     → user.delegate: 1
│     → trusted.delegate: 1
│     → user.invocation_id: 70284db060c1476db5f3633e5fda7fba
│     → trusted.invocation_id: 70284db060c1476db5f3633e5fda7fba
│     └─init.scope (#7327)
│       ├─20395 /usr/lib/systemd/systemd --user
│       └─20397 (sd-pam)

Setting Resource Controller Options and Creating Custom Slices

systemd provides the following methods for setting resource controller

options, such as CPUWeight, CPUQuota, and so on, to

customize resource allocation on your system:

- Using service unit files.

- Using drop-in files.

- Using the systemctl set-property command.

The following sections provide example procedures for using each of these methods to configure resources and slices in your system.

Using Service Unit Files

To set options in a service unit file, perform the following steps:

- Create file /etc/systemd/system/myservice1.service with the following content:

      [Service]
      Type=oneshot
      ExecStart=/usr/lib/systemd/generate_load.sh
      TimeoutSec=0
      StandardOutput=tty
      RemainAfterExit=yes

      [Install]
      WantedBy=multi-user.target

- The service created in the preceding step requires a bash script /usr/lib/systemd/generate_load.sh. Create the file with the following content:

      #!/bin/bash
      for i in {1..4};do while : ; do : ; done & done

- Make the script runnable:

      sudo chmod +x /usr/lib/systemd/generate_load.sh

- Enable and start the service:

      sudo systemctl enable myservice1 --now

- Run the systemd-cgls command and confirm the service myservice1 is running under system.slice:

      systemd-cgls

      Control group /:
      -.slice
      ...
      ├─user.slice (#1429)
      ...
      └─system.slice (#53)
        ...
        ├─myservice1.service (#7939)
        │ → user.invocation_id: e227f8f288444fed92a976d391e6a897
        │ ├─22325 /bin/bash /usr/lib/systemd/generate_load.sh
        │ ├─22326 /bin/bash /usr/lib/systemd/generate_load.sh
        │ ├─22327 /bin/bash /usr/lib/systemd/generate_load.sh
        │ └─22328 /bin/bash /usr/lib/systemd/generate_load.sh
        ├─pmie.service (#4369)
        │ → user.invocation_id: 68fcd40071594481936edf0f1d7a8e12
        ...

- Create a custom slice for the service.

  Add the line Slice=my_custom_slice.slice to the [Service] section in the myservice1.service file, created in a previous step, as shown in the following code block:

      [Service]
      Slice=my_custom_slice.slice
      Type=oneshot
      ExecStart=/usr/lib/systemd/generate_load.sh
      TimeoutSec=0
      StandardOutput=tty
      RemainAfterExit=yes

      [Install]
      WantedBy=multi-user.target

  Attention:

  Use underscores instead of dashes to separate terms in slice names.

  In systemd, a dash in a slice name is a special character: dashes in slice names are used to describe the full cgroup path to the slice (starting from the root slice). For example, if you specify a slice name as "my-custom-slice.slice", instead of creating a slice of that name, systemd creates the following cgroups path underneath the root slice: my.slice/my-custom.slice/my-custom-slice.slice.

- After editing the file, ensure systemd reloads its configuration files and then restart the service:

      sudo systemctl daemon-reload
      sudo systemctl restart myservice1

- Run the systemd-cgls command and confirm the service myservice1 is now running under custom slice my_custom_slice:

      systemd-cgls

      Control group /:
      -.slice
      ...
      ├─user.slice (#1429)
      ...
      ├─my_custom_slice.slice (#7973)
      │ → user.invocation_id: a8a493a8db1342be85e2cdf1e80255f8
      │ └─myservice1.service (#8007)
      │   → user.invocation_id: 9a4a6171f2844e479d4a0f347aac38ce
      │   ├─22385 /bin/bash /usr/lib/systemd/generate_load.sh
      │   ├─22386 /bin/bash /usr/lib/systemd/generate_load.sh
      │   ├─22387 /bin/bash /usr/lib/systemd/generate_load.sh
      │   └─22388 /bin/bash /usr/lib/systemd/generate_load.sh
      ├─init.scope (#19)
      │ └─1 /usr/lib/systemd/systemd --switched-root --system --deserialize 28
      └─system.slice (#53)
        ├─irqbalance.service (#2907)
        │ → user.invocation_id: 00d64c9b9d224f179496a83536dd60bb
        │ └─1464 /usr/sbin/irqbalance --foreground
        ...
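The dash rule described in the Attention note of the preceding procedure can be sketched in a few lines of shell. The function below is a hypothetical helper, not a systemd command. It expands a slice name into the nested cgroup path that the rule implies, and it does not handle the root slice -.slice.

```shell
# Expand a slice unit name into the nested cgroup path implied by its dashes.
# slice_to_path is a hypothetical helper for illustration only.
slice_to_path() {
    local name prefix path part
    local IFS='-'              # split the name on dashes
    name="${1%.slice}"         # strip the ".slice" suffix
    prefix=""
    path=""
    for part in $name; do
        prefix="${prefix:+$prefix-}$part"      # my, my-custom, my-custom-slice
        path="${path:+$path/}$prefix.slice"    # append one path level per prefix
    done
    printf '%s\n' "$path"
}

slice_to_path my-custom-slice.slice   # prints my.slice/my-custom.slice/my-custom-slice.slice
slice_to_path my_custom_slice.slice   # prints my_custom_slice.slice
```

The same expansion explains why user-1001.slice appears beneath user.slice in the systemd-cgls output.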

Using Drop-in Files

To use a drop-in file to configure resources, perform the following steps:

- Create the directory for your service drop-in file.

  Tip:

  The "drop-in" directory for drop-in files for a service is located at /etc/systemd/system/service_name.service.d where service_name is the name of the service.

  Continuing with our example with service myservice1, we would run the following command:

      sudo mkdir -p /etc/systemd/system/myservice1.service.d/

- Create 2 drop-in files called 00-slice.conf and 10-CPUSettings.conf in the myservice1.service.d directory created in the preceding step.

  Note:

  - Multiple drop-in files with different names are applied in lexicographic order.

  - These drop-in files take precedence over the service unit file.

  Then complete the following steps:

  - Add the following contents to 00-slice.conf:

        [Service]
        Slice=my_custom_slice2.slice
        MemoryAccounting=yes
        CPUAccounting=yes

  - And add the following contents to 10-CPUSettings.conf:

        [Service]
        CPUWeight=200

- Create a second service (myservice2) and assign it a different CPUWeight from that assigned to myservice1:

  - Create file /etc/systemd/system/myservice2.service with the following contents:

        [Service]
        Slice=my_custom_slice2.slice
        Type=oneshot
        ExecStart=/usr/lib/systemd/generate_load2.sh
        TimeoutSec=0
        StandardOutput=tty
        RemainAfterExit=yes

        [Install]
        WantedBy=multi-user.target

  - The service created in the preceding step requires a bash script /usr/lib/systemd/generate_load2.sh. Create the file with the following content:

        #!/bin/bash
        for i in {1..4};do while : ; do : ; done & done

  - Make the script runnable:

        sudo chmod +x /usr/lib/systemd/generate_load2.sh

  - Create a drop-in file /etc/systemd/system/myservice2.service.d/10-CPUSettings.conf for myservice2 with the following contents:

        [Service]
        CPUWeight=400

- Ensure systemd reloads its configuration files, restart myservice1, and enable and start myservice2:

      sudo systemctl daemon-reload
      sudo systemctl restart myservice1
      sudo systemctl enable myservice2 --now

- Run the systemd-cgtop command to display control groups ordered by their resource usage. You can see from the following sample output how, in addition to the resource usage of each slice, the systemd-cgtop command displays the resource usage breakdown within each slice, so you can use it to confirm your CPU weight has been divided as expected.

      systemd-cgtop

      Control Group                                Tasks   %CPU   Memory  Input/s Output/s
      /                                              228  198.8   712.5M        -        -
      my_custom_slice2.slice                           8  198.5     1.8M        -        -
      my_custom_slice2.slice/myservice2.service        4  132.8   944.0K        -        -
      my_custom_slice2.slice/myservice1.service        4   65.6   976.0K        -        -
      user.slice                                      18    0.9    43.9M        -        -
      user.slice/user-1001.slice                       6    0.9    13.7M        -        -
      user.slice/user-1001.slice/session-2.scope       4    0.9     9.4M        -        -
      system.slice                                    60    0.0   690.8M        -        -

Using systemctl set-property

The systemctl set-property command places the configuration files under the following location:

/etc/systemd/system.control

Caution:

You must not manually edit the files that the systemctl set-property command creates.

Note:

The systemctl set-property command does not recognize every resource-control property used in the service unit and drop-in files covered earlier in this topic.

The following procedure demonstrates how you can use the systemctl

set-property command to configure resource allocation:

- Continuing with our example, create another service file at location /etc/systemd/system/myservice3.service with the following content:

      [Service]
      Type=oneshot
      ExecStart=/usr/lib/systemd/generate_load3.sh
      TimeoutSec=0
      StandardOutput=tty
      RemainAfterExit=yes

      [Install]
      WantedBy=multi-user.target

- Set the slice for the service to be my_custom_slice2 (the same slice used by the services created in earlier steps) by adding the following line to the [Service] section in the myservice3.service file:

      Slice=my_custom_slice2.slice

  Note:

  The slice must be set in the service-unit file because the systemctl set-property command does not recognize the Slice property.

- The service created in the preceding step requires a bash script /usr/lib/systemd/generate_load3.sh. Create the file with the following content:

      #!/bin/bash
      for i in {1..4};do while : ; do : ; done & done

- Make the script runnable:

      sudo chmod +x /usr/lib/systemd/generate_load3.sh

- Ensure systemd reloads its configuration files, and then enable and start the service:

      sudo systemctl daemon-reload
      sudo systemctl enable myservice3 --now

- Optionally, run the systemd-cgtop command to confirm that all 3 services, myservice1, myservice2, and myservice3, are running in the same slice.

- Use the systemctl set-property command to set the CPUWeight for myservice3 to 800:

      sudo systemctl set-property myservice3.service CPUWeight=800

- You can optionally confirm that a drop-in file has been created for you under /etc/systemd/system.control/myservice3.service.d. However, you must not edit the file:

      cat /etc/systemd/system.control/myservice3.service.d/50-CPUWeight.conf

      # This is a drop-in unit file extension, created via "systemctl set-property"
      # or an equivalent operation. Do not edit.
      [Service]
      CPUWeight=800

- Ensure systemd reloads its configuration files, and restart all the services:

      sudo systemctl daemon-reload
      sudo systemctl restart myservice1
      sudo systemctl restart myservice2
      sudo systemctl restart myservice3

- Run the systemd-cgtop command to confirm your CPU weight has been divided as expected:

      systemd-cgtop

      Control Group                                Tasks   %CPU   Memory  Input/s Output/s
      /                                              235  200.0   706.1M        -        -
      my_custom_slice2.slice                          12  198.4     2.9M        -        -
      my_custom_slice2.slice/myservice3.service        4  112.7   976.0K        -        -
      my_custom_slice2.slice/myservice2.service        4   56.9   996.0K        -        -
      my_custom_slice2.slice/myservice1.service        4   28.8   988.0K        -        -
      user.slice                                      18    0.9    44.1M        -        -
      user.slice/user-1001.slice                       6    0.9    13.9M        -        -
      user.slice/user-1001.slice/session-2.scope       4    0.9     9.5M        -        -
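To check the result against the configured weights, you can compare each service's share of the slice's CPU usage with its share of the total weight. The following shell sketch does this with awk, using the %CPU figures from the preceding sample output and the weights 200, 400, and 800 set in this topic. The helper name compare_shares is hypothetical, and the figures on your system will differ.

```shell
# Compare observed CPU usage with the split implied by CPUWeight values.
# Input columns: service name, configured CPUWeight, observed %CPU.
# compare_shares is a hypothetical helper for illustration only.
compare_shares() {
    awk '
        { name[NR] = $1; weight[NR] = $2; cpu[NR] = $3
          wsum += $2; csum += $3 }
        END {
            for (i = 1; i <= NR; i++)
                printf "%s expected=%.1f%% observed=%.1f%%\n",
                       name[i], 100 * weight[i] / wsum, 100 * cpu[i] / csum
        }'
}

# The %CPU values below are copied from the sample systemd-cgtop output.
compare_shares <<'EOF'
myservice3.service 800 112.7
myservice2.service 400 56.9
myservice1.service 200 28.8
EOF
```

For the sample figures, the expected shares are 57.1%, 28.6%, and 14.3%, against observed shares of 56.8%, 28.7%, and 14.5%, so the CPU time follows the 800:400:200 weight ratio closely.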