Learn about detecting anomalies to predict failure

Established methods for asset maintenance are either reactive (replace on failure) or prescriptive (replace based on usage or time). In addition to the actual cost of replacing or repairing the asset, organizations must bear the costs associated with reliability, downtime, and supply chain backlog. An anomaly detection service that provides early warning of impending failure can reduce these costs.

Oracle Cloud Infrastructure Anomaly Detection service helps you detect anomalies in time series data without the need for statisticians or machine learning experts. It provides prebuilt algorithms, and it addresses data issues automatically. It is a cloud-native service accessible over REST APIs and can connect to many data sources. The OCI Console, CLI, and SDK make it easy to use in end-to-end solutions.

Architecture

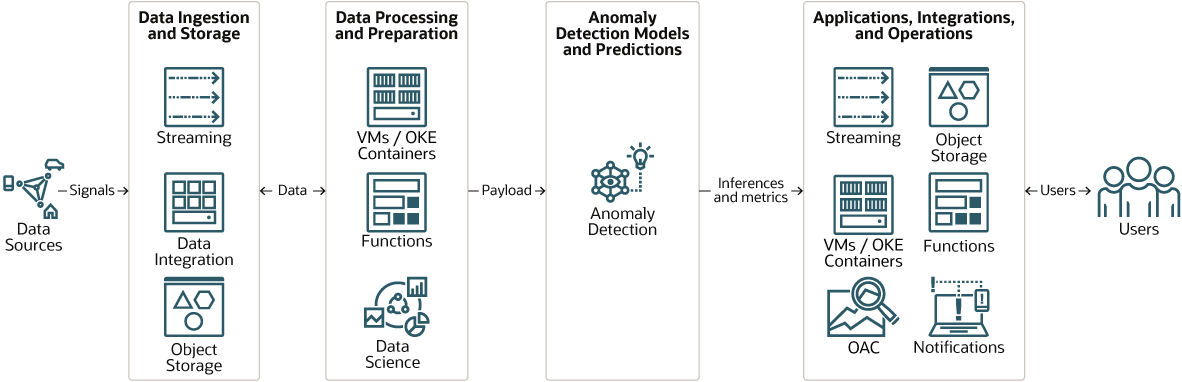

This architecture shows where the Anomaly Detection service fits in the workflow.

Depending on requirements, data is ingested using OCI Streaming, OCI Data Integration service, or both. The system can handle both batch and streaming workloads.

The workflow has two main phases: training and detection. During the training phase, data is cleansed and prepared for training, and then the model is trained and deployed. In the detection phase, the Anomaly Detection service detects anomalies in production data. The anomalies are reported, and actions are taken based on the predictions.

Here is a description of the process, in broad terms:

- Data is ingested from one or more data sources and stored in Object Storage.

- One or more tools are used to prepare the data for training the model during the training phase, and for any pre-processing that might be required for the production phase. The results are stored in Object Storage (not shown).

- The Anomaly Detection service builds the model during the training phase, and runs the anomaly detection algorithms during the production phase.

- The results of the anomaly detection process are sent to one or more apps that consume the data and prepare it for presentation to end users.
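The preparation step is not specified in detail here. As a minimal sketch, assuming the ingested data is CSV text whose first column is named `timestamp` (ISO 8601 strings) and whose remaining columns are numeric signals, cleansing might drop incomplete rows, remove duplicate timestamps, and sort by time. The column layout and the `prepare_rows` helper are illustrative assumptions, not the service's documented input format.

```python
import csv
import io
from datetime import datetime


def prepare_rows(csv_text):
    """Return (header, rows) with bad and duplicate rows removed, sorted by time.

    Assumption for this sketch: the first column is 'timestamp' (ISO 8601)
    and every other column is a numeric signal.
    """
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    if header[0] != "timestamp":
        raise ValueError("expected the first column to be 'timestamp'")

    seen = set()
    rows = []
    for record in reader:
        # Skip rows with the wrong number of fields or any empty field.
        if len(record) != len(header) or any(v.strip() == "" for v in record):
            continue
        try:
            ts = datetime.fromisoformat(record[0])
            values = [float(v) for v in record[1:]]
        except ValueError:
            continue  # unparseable timestamp or non-numeric signal value
        if ts in seen:
            continue  # keep only the first row for each timestamp
        seen.add(ts)
        rows.append((ts, values))

    rows.sort(key=lambda row: row[0])
    return header, rows


# Hypothetical sample: out of order, one gap, one duplicate, one bad value.
raw = """timestamp,temperature,pressure
2024-01-01T00:02:00,21.5,101.2
2024-01-01T00:00:00,21.0,101.3
2024-01-01T00:01:00,,101.1
2024-01-01T00:02:00,21.5,101.2
2024-01-01T00:03:00,abc,101.0
"""

header, rows = prepare_rows(raw)
for ts, values in rows:
    print(ts.isoformat(), values)
```

Dropping rows is only one possible policy. Depending on the workload, interpolating missing values may be preferable to discarding them.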

Overview

The core algorithm of the Anomaly Detection service is an Oracle-patented multivariate time-series anomaly detection algorithm called the Multivariate State Estimation Technique (MSET).

MSET is a nonlinear, nonparametric anomaly detection machine learning technique that calibrates the expected behavior of a system based on historical data from the normal operational sequence of monitored signals. It incorporates the learned behavior of a system into a persistent model that represents the normal estimated behavior. It was originally developed by Oracle Labs and has been successfully used in several industries for prognosis analysis.
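To make the idea of learning normal behavior from historical data more concrete, the following is a minimal sketch of auto-associative kernel regression, a much simpler and publicly documented nonparametric technique. It is not MSET and does not reflect how the service is implemented. The function names, the Gaussian kernel, the bandwidth, and the leave-one-out threshold are all assumptions chosen for illustration. The sketch stores vectors observed during normal operation, estimates what a new observation should look like from similar stored vectors, and flags the observation when the residual is large.

```python
import math


def estimate(memory, observation, bandwidth=1.0):
    """Kernel-weighted average of the stored 'normal' vectors."""
    weights = []
    for vec in memory:
        dist_sq = sum((a - b) ** 2 for a, b in zip(vec, observation))
        weights.append(math.exp(-dist_sq / (2.0 * bandwidth ** 2)))
    total = sum(weights)
    if total == 0.0:
        # Observation is far from every stored vector; fall back to the mean.
        weights = [1.0] * len(memory)
        total = float(len(memory))
    return [
        sum(w * vec[i] for w, vec in zip(weights, memory)) / total
        for i in range(len(observation))
    ]


def residual_norm(memory, observation, bandwidth=1.0):
    """Euclidean distance between an observation and its estimate."""
    est = estimate(memory, observation, bandwidth)
    return math.sqrt(sum((o - e) ** 2 for o, e in zip(observation, est)))


def leave_one_out_threshold(memory, bandwidth=1.0):
    """Largest residual seen when each stored vector is estimated from the rest."""
    worst = 0.0
    for i, vec in enumerate(memory):
        others = memory[:i] + memory[i + 1:]
        worst = max(worst, residual_norm(others, vec, bandwidth))
    return worst


# Hypothetical normal behavior: the second signal is always twice the first.
memory = [(k / 2.0, float(k)) for k in range(21)]
threshold = leave_one_out_threshold(memory)

for obs in [(5.2, 10.4), (5.0, 20.0)]:
    label = "anomaly" if residual_norm(memory, obs) > threshold else "normal"
    print(obs, label)
```

In this toy data, the second observation has plausible values for each signal taken alone but breaks the relationship between them, which is the kind of deviation a multivariate method is meant to catch.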

Anomaly Detection Service Concepts

- Project: Projects are collaborative workspaces for organizing data assets, models, deployments, and detection portals.

- Data Asset: A data asset is an abstracted representation of a data source, and the underlying data is located in Object Storage. It can hold training data, which is cleaned and prepared for the model training phase, or production data, which is presented to the Anomaly Detection service after a model is trained and deployed.

- Model: The machine learning model that is created from the training data asset.

- Deployment: When training is complete, the model is deployed, which makes it available for use in the anomaly detection process.

- Detection: This is the process of presenting production data to the deployed model to find anomalies in the production data.

Anomaly Detection Process

At a high level, here is the process for completing a full cycle with the Anomaly Detection service.

- Create a project. A project is a place where you collect and organize different assets, models, and deployments in the same workspace.

- Create a data asset. A data asset points to data in Object Storage: the training data used to build the model, or the production data that is presented to the Anomaly Detection service for analysis.

- Train a model. After specifying a training data asset and the training parameters, train an anomaly detection model. Training can take five minutes or more depending on the size of the data asset and on the false alarm probability that you choose.

- Deploy a model. After a model is trained, deploy it.

- Detect anomalies in new data. Send production data with the same attributes as the training data to the deployment endpoint, or upload it to the deployment UI.

Note that one project can have multiple data assets, multiple models, and multiple deployments.
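The relationships in this cycle can be sketched with a small in-memory model. This is an illustration only: the `Project`, `DataAsset`, and `Model` classes and the `check_before_detection` helper are hypothetical and are not part of the OCI SDK, and deployment is reduced to a boolean flag. The sketch shows one project holding several assets and models, and a check that production data carries the same attributes as the training data before detection is attempted.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    name: str
    signal_names: list  # column names other than the timestamp


@dataclass
class Model:
    name: str
    training_asset: DataAsset
    deployed: bool = False  # simplification: a deployment is just a flag here


@dataclass
class Project:
    name: str
    data_assets: list = field(default_factory=list)
    models: list = field(default_factory=list)

    def add_data_asset(self, name, signal_names):
        asset = DataAsset(name, list(signal_names))
        self.data_assets.append(asset)
        return asset

    def train_model(self, name, training_asset):
        model = Model(name, training_asset)
        self.models.append(model)
        return model


def check_before_detection(model, production_signal_names):
    """Raise if the model is not deployed or the production signals differ."""
    if not model.deployed:
        raise RuntimeError(f"model {model.name!r} is not deployed")
    expected = set(model.training_asset.signal_names)
    actual = set(production_signal_names)
    if expected != actual:
        missing = sorted(expected - actual)
        extra = sorted(actual - expected)
        raise ValueError(f"signal mismatch: missing={missing}, unexpected={extra}")


# Walk through the cycle: project, data asset, training, deployment, detection.
project = Project("pump-monitoring")
asset = project.add_data_asset("pump-training", ["temperature", "pressure"])
model = project.train_model("pump-model-v1", asset)
model.deployed = True
check_before_detection(model, ["pressure", "temperature"])  # column order is ignored
print("production data matches the training attributes")
```

Running a check like this on the client side surfaces attribute mismatches before any data is sent for detection.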