Oracle® Java Micro Edition Connected Device Configuration Runtime Guide Release 1.1.2 for Oracle Java Micro Edition Embedded Client A12345-01


The CDC Java runtime environment includes cvm, the CDC application launcher, for loading and executing Java applications. This chapter describes basic use of the cvm command to launch different kinds of Java applications, in addition to more advanced topics like memory management and dynamic compiler policies.

Note: For the Oracle Java Micro Edition (Java ME) Embedded Client, cvm is located in InstallDir/Oracle_JavaME_Embedded_Client/binaries/bin. See the Oracle Java Micro Edition Embedded Client Reference Guide for detailed information about using cvm for the Oracle Java ME Embedded Client.

cvm, the CDC application launcher, is similar to java, the Java SE application launcher. For the Oracle Java ME Embedded Client, see the Oracle Java Micro Edition Embedded Client Reference Guide for detailed information about using cvm to launch Java applications.

Many of cvm's command-line options are borrowed from java. The basic method of launching a Java application is to specify the top-level application class containing the main() method on the cvm command line. For example,

% cvm HelloWorld

By default, cvm looks for the top-level application class in the current directory. Alternatively, the synonymous -cp and -classpath command-line options specify a list of locations where cvm searches for application classes instead of the current directory. For example,

% cvm -cp /mylib/archive.zip HelloWorld

Here cvm searches for HelloWorld in the archive file /mylib/archive.zip. See Section 3.3, "Class Search Path Basics" for more information about class search paths.
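The commands above assume a top-level application class named HelloWorld. The guide does not list its source, so the following is only a minimal sketch of what such a class might look like; the greeting() helper is a hypothetical addition that keeps the message easy to check.

```java
// HelloWorld.java: a minimal top-level application class (illustrative sketch).
class HelloWorld {
    // Hypothetical helper: builds the message so it can be checked without capturing output.
    static String greeting() {
        return "Hello, world";
    }

    // Like java, cvm starts execution at the main() method of the named class.
    public static void main(String[] args) {
        System.out.println(greeting());
    }
}
```

After compiling the class with javac, it can be launched from the directory that contains HelloWorld.class with the cvm HelloWorld command shown earlier.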

The -help option displays a brief description of the available command-line options. Appendix A provides a complete description of the command-line options available for cvm.

Managed application models allow developers to offload the tasks of deployment and resource management to a separate application manager. The CDC Java runtime environment includes sample application managers for an xlet application model.

The xlet application model does not require an explicit dependency on AWT, which makes xlets appropriate for embedded device scenarios like set-top boxes and PDAs.

The CDC Java runtime environment includes a simple xlet manager named com.sun.xlet.XletRunner. Xlets can be graphical, in which case the xlet manager displays each xlet in its own frame, or they can be non-graphical. The basic command syntax to launch XletRunner is:

% cvm com.sun.xlet.XletRunner { \

-name xletName \

(-path xletPath | -codebase urlPath) \

-args arg1 arg2 arg3 ...} \

...

% cvm com.sun.xlet.XletRunner -filename optionsFile

% cvm com.sun.xlet.XletRunner -usage

Table 3-1 describes XletRunner's command-line options:

Table 3-1 XletRunner Command-Line Options

| Option | Description |
|---|---|
| -name xletName | Required. Identifies the top-level Java class that implements the … |
| -path xletPath | Required (or substituted with the …). Note: The xlet must not be found in the system class path, especially when running more than one xlet, because xlets must be loaded by their own class loader. |
| -codebase urlPath | Optional. Specifies the location of the target xlet with a URL. The … |
| -args arg1 [arg2] [arg3] ... | Optional. Passes additional runtime arguments to the xlet. Multiple arguments are separated by spaces. |
| -filename optionsFile | Optional. Reads options from an ASCII file rather than from the command line. The … |
| -usage | Display a usage string describing … |

Here are some command-line examples for launching xlets with com.sun.xlet.XletRunner:

To run MyXlet in Myclasses.jar:

% cvm com.sun.xlet.XletRunner -name basis.MyXlet -path Myclasses.jar

To run an xlet with multiple command-line arguments:

% cvm com.sun.xlet.XletRunner \

-name MyXlet \

-path . \

-args top bottom right left

To run more than one xlet, repeat the XletRunner options:

% cvm com.sun.xlet.XletRunner -name ServerXlet -path ./server -name ClientXlet -path ./client

To run an xlet whose compiled code is at the URL http://myurl.com/xlets/MyXlet.class:

% cvm com.sun.xlet.XletRunner -name MyXlet -codebase http://myurl.com/xlets/

To run an xlet in a jar file named xlet.jar with the arguments colorMap and blue, use the following command line:

% cvm com.sun.xlet.XletRunner -name StockTickerXlet -path xlet.jar -args colorMap blue

To run an xlet with command-line options in an argument file:

% cvm com.sun.xlet.XletRunner -filename myArgsFile

myArgsFile contains a text line with valid XletRunner options:

-name StockTickerXlet -path Myxlet.jar -args colorMap blue

The Java runtime environment uses various search paths to locate classes, resources and native objects at runtime. This section describes the two most important search paths: the Java class search path and the native method search path.

Java applications are collections of Java classes and application resources that are built on one system and then potentially deployed on many different target platforms. Because the file systems on these target platforms can vary greatly from the development system, Java runtime environments use the Java class search path as a flexible mechanism for balancing the needs of platform-independence against the realities of different target platforms.

The Java class search path mechanism allows the Java virtual machine to locate and load classes from different locations that are defined at runtime on a target platform. For example, the same application could be organized in one way on a MacOS system and another on a Linux system. Preparing an application's classes for deployment on different target systems is part of the development process. Arranging them for a specific target system is part of the deployment process.

The Java class search path defines a list of locations that the Java virtual machine uses to find Java classes and application resources. A location can be either a file system directory or a jar or Zip archive file. Locations in the Java class search path are delimited by a platform-dependent path separator defined by the path.separator system property. The Linux default is the colon ":" character.

The Java SE documentation (see Footnote 1) describes three related Java class search paths:

The system or bootstrap classes comprise the Java platform. The system class search path is a mechanism for locating these system classes. The default system search path is based on a set of jar files located in JRE/lib.

The extension classes extend the Java platform with optional packages like the JDBC Optional Package. The extension class search path is a mechanism for locating these optional packages. cvm uses the -Xbootclasspath command-line option to statically specify an extension class search path at launch time and the sun.boot.class.path system property to dynamically specify an extension class search path. The CDC default extension class search path is CVM/lib, except for some of the provider implementations for the security optional packages described in Table 2-2 which are stored in CVM/lib/ext. The Java SE default extension class search path is JRE/lib/ext.

The user classes are defined and implemented by developers to provide application functionality. The user class search path is a mechanism for locating these application classes. Java virtual machine implementations like the CDC Java runtime environment can provide different mechanisms for specifying a user class search path. cvm uses the -classpath command-line option to statically specify a user class search path at launch time and the java.class.path system property to dynamically specify a user class search path. The Java SE application launcher also uses the CLASSPATH environment variable, which is not supported by the CDC Java runtime environment.
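To see how these pieces fit together, the sketch below reads the path.separator and java.class.path system properties named above and prints each location in the user class search path. The class name ClassPathLister and its split() helper are hypothetical; only the two system properties come from this guide.

```java
import java.util.StringTokenizer;

// Illustrative sketch: lists the locations in the user class search path.
class ClassPathLister {
    // Splits a search path into its locations using the platform path separator.
    static String[] split(String path, String separator) {
        if (path == null || path.length() == 0) {
            return new String[0];
        }
        StringTokenizer tokens = new StringTokenizer(path, separator);
        String[] locations = new String[tokens.countTokens()];
        for (int i = 0; i < locations.length; i++) {
            locations[i] = tokens.nextToken();
        }
        return locations;
    }

    public static void main(String[] args) {
        String separator = System.getProperty("path.separator");
        String userPath = System.getProperty("java.class.path");
        String[] locations = split(userPath, separator);
        for (int i = 0; i < locations.length; i++) {
            System.out.println(i + ": " + locations[i]);
        }
    }
}
```

The same split() call could be applied to the value of sun.boot.class.path, assuming that property is set on the target runtime.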

The CDC HotSpot Implementation virtual machine uses the Java Native Interface (JNI; see Footnote 2) as its native method support framework. The JNI specification leaves the platform-level implementation of native methods up to the designers of a Java virtual machine implementation. For the Linux-based CDC Java runtime environment described in this runtime guide, a JNI native method is implemented as a Linux shared library that can be found in the native library search path defined by the java.library.path system property.

Note: The standard mechanism for specifying the native library search path is the java.library.path system property. However, the Linux dynamic linking loader may cause other shared libraries to be loaded implicitly. In this case, the directories in the LD_LIBRARY_PATH environment variable are searched without using the java.library.path system property. One example of this issue is the location of the Qt shared library. If the target Linux platform has one version of the Qt shared library in /usr/lib and the CDC Java runtime environment uses another version located elsewhere, the directory containing that other version must be specified in the LD_LIBRARY_PATH environment variable.

Here is a simple example of how to build and use an application with a native method. The mechanism described below is very similar to the Java SE mechanism.

Compile a Java application containing a native method.

% javac -bootclasspath lib/btclasses.zip HelloJNI.java

Generate the JNI stub file for the native method.

% javah -bootclasspath lib/btclasses.zip HelloJNI

Compile the native method library.

% gcc HelloJNI.c -shared -I${CDC_SRC}/src/share/javavm/export \

-I${CDC_SRC}/src/linux/javavm/include -o libHelloJNI.so

This step requires the CDC-based JNI header files in the CDC source release.

Relocate the native method library in the test directory.

% mkdir test

% mv libHelloJNI.so test

Launch the application.

% cvm -Djava.library.path=test HelloJNI

If the native method implementation is not found in the native method search path, the CDC Java runtime environment throws an UnsatisfiedLinkError.
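The steps above refer to HelloJNI.java without showing it. A minimal sketch of the Java side might look like the following; the native method name sayHello and the tryLoad() helper are assumptions made for illustration, and the matching C implementation in HelloJNI.c is not shown.

```java
// HelloJNI.java: illustrative sketch of a class with one native method.
class HelloJNI {
    // Declared here, implemented in C in libHelloJNI.so (method name is hypothetical).
    native void sayHello();

    // Loads a native library by its platform-independent name.
    // Returns false instead of propagating the error when the library is not found.
    static boolean tryLoad(String libraryName) {
        try {
            System.loadLibrary(libraryName);
            return true;
        } catch (UnsatisfiedLinkError e) {
            System.err.println("Cannot load " + libraryName + ": " + e.getMessage());
            return false;
        }
    }

    public static void main(String[] args) {
        // On Linux, "HelloJNI" resolves to libHelloJNI.so on java.library.path.
        if (tryLoad("HelloJNI")) {
            new HelloJNI().sayHello();
        }
    }
}
```

Catching UnsatisfiedLinkError here is a design choice for the sketch: it turns a missing library into a readable message rather than a stack trace, which can help when checking the java.library.path setting.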

The CDC Java runtime environment uses memory to operate the Java virtual machine and to create, store, and use objects and resources. This section provides an overview of how memory is used by the Java virtual machine. Of course, the actual memory requirements of a specific Java application running on a specific Java runtime environment hosted on a specific target platform can only be determined by application profiling. This section, however, provides useful guidelines.

When it launches, the CDC Java runtime environment uses the native platform's memory allocation mechanism to allocate memory for native objects and reserve a pool of memory, called the Java heap, for Java objects and resources.

The size of the Java heap can grow and shrink within the boundaries specified by the -Xmxsize, -Xmssize and -Xmnsize command-line options described in Table A-1.

If the requested Java heap size is larger than the available memory on the device, the Java runtime environment exits with an error message:

% cvm -Xmx23000M MyApp

Cannot start VM (error parsing heap size command line option -Xmx)

Cannot start VM (out of memory while initializing)

Cannot start VM (CVMinitVMGlobalState failed)

Could not create JVM.

If there isn't enough memory to create a Java heap of the requested size, the Java runtime environment exits with an error message:

% cvm -Xmx2300M MyApp

Cannot start VM (unable to reserve GC heap memory from OS)

Cannot start VM (out of memory while initializing)

Cannot start VM (CVMinitVMGlobalState failed)

Could not create JVM.

If the application launches and later needs more memory than is available in the Java heap, the CDC Java runtime environment throws an OutOfMemoryError.

The heap grows and shrinks between the -Xmn and -Xmx values based on heap utilization. This is true for Linux ports, but not all ports.

For example,

% cvm -Xms10M -Xmn5M -Xmx15M MyApp

launches the application MyApp and sets the initial Java heap size to 10 MB, with a low water mark of 5 MB and a high water mark of 15 MB.
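One way to observe the effect of these options from inside an application is to query java.lang.Runtime. The sketch below is an illustration only: the class name HeapReport is hypothetical, and how closely the reported values track the -Xms, -Xmn and -Xmx settings on a given CDC port is an assumption that should be checked on the target device.

```java
// Illustrative sketch: reports the Java heap figures visible to an application.
class HeapReport {
    // Bytes currently occupied by objects (committed heap minus free space).
    static long usedBytes(Runtime rt) {
        return rt.totalMemory() - rt.freeMemory();
    }

    // One-line summary of used, committed, and maximum heap sizes in bytes.
    static String describe(Runtime rt) {
        return "used=" + usedBytes(rt)
            + " committed=" + rt.totalMemory()
            + " max=" + rt.maxMemory();
    }

    public static void main(String[] args) {
        System.out.println(describe(Runtime.getRuntime()));
    }
}
```

Running it with different heap options, for example cvm -Xms10M -Xmn5M -Xmx15M HeapReport, gives a quick check of what the application actually sees.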

When a Java application creates an object, the Java runtime environment allocates memory out of the Java heap. And when the object is no longer needed, the memory should be recycled for later use by other objects and resources. Conventional application platforms require a developer to track memory usage. Java technology uses an automatic memory management system that transfers the burden of managing memory from the developer to the Java runtime environment.

The Java runtime environment detects when an object or resource is no longer being used by a Java application, labels it as "garbage" and later recycles its memory for other objects and resources. This garbage collection (GC) system frees the developer from the responsibility of manually allocating and freeing memory, which is a major source of bugs with conventional application platforms.

GC has some additional costs, including runtime overhead and memory footprint overhead. However, these costs are small in comparison to the benefits of application reliability and developer productivity.

The Java Virtual Machine Specification does not specify any particular GC behavior and early Java virtual machine implementations used simple and slow GC algorithms. More recent implementations like the Java HotSpot Implementation virtual machine provide GC algorithms tuned to the needs of desktop and server Java applications. And now the CDC HotSpot Implementation includes a GC framework that has been optimized for the needs of connected devices.

The major features of the GC framework in the CDC HotSpot Implementation are:

Exactness. Exact GC is based on the ability to track all pointers to objects in the Java heap. Doing so removes the need for object handles, reduces object overhead, increases the completeness of object compaction and improves reliability and performance.

Default Generational Collector. The CDC HotSpot Implementation Java virtual machine includes a generational collector that supports most application scenarios, including the following:

general-purpose

excellent performance

robustness

reduced GC pause time

reduced total time spent in GC

Pluggability. While the default generational collector serves as a general-purpose garbage collector, the GC plug-in interface allows support for device-specific needs. Runtime developers can use the GC plug-in interface to add new garbage collectors at build-time without modifying the internals of the Java virtual machine. In addition, starter garbage collector plug-ins are available from Java Partner Engineering.

Note: Needing an alternate GC plug-in is rare. If an application has an object allocation and longevity profile that differs significantly from typical applications (to the extent that the application profile cannot be catered to by setting the GC arguments), and this difference turns out to be a performance bottleneck for the application, then an alternate GC implementation may be appropriate.

The default generational collector manages memory in the Java heap. Figure 3-1 shows how the Java heap is organized into two heap generations, a young generation and a tenured generation. The generational collector is really a hybrid collector in that each generation has its own collector. This is based on the observation that most Java objects are short-lived. The generational collector is designed to collect these short-lived objects as rapidly as possible while promoting more stable objects to the tenured generation where objects are collected less frequently.

The young generation is based on a technique called copying semispace. The young generation is divided into two equivalent memory pools, the from-space and the to-space. Initially, objects are allocated out of the from-space. When the from-space becomes full, the system pauses and the young generation begins a collection cycle where only the live objects in the from-space are copied to the to-space. The two memory pools then reverse roles and objects are allocated from the "new" from-space. Only surviving objects are copied. If they survive a certain number of collection cycles (the default is 2), then they are promoted to the tenured generation.

The benefit of the copying semispace technique is that copying live objects across semispaces is faster than relocating them within the same semispace. This requires more memory, so there is a trade-off between the size of the young generation and GC performance.
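The young-generation cycle described above can be modeled in a few lines. The following is a toy sketch written for a desktop JDK, not the VM's implementation: objects are plain records with a live flag, "copying" is moving a reference between lists, and promotion on the second survived cycle is one interpretation of the default of 2 mentioned in the text. All names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model of a copying semispace young generation with promotion.
class SemispaceSketch {
    static final int PROMOTION_AGE = 2; // assumed reading of "the default is 2"

    static final class Obj {
        final String name;
        boolean live = true; // stands in for reachability from the roots
        int age = 0;         // number of young collections survived
        Obj(String name) { this.name = name; }
    }

    List<Obj> fromSpace = new ArrayList<Obj>();
    List<Obj> toSpace = new ArrayList<Obj>();
    final List<Obj> tenured = new ArrayList<Obj>();

    // New objects are always allocated out of the from-space.
    Obj allocate(String name) {
        Obj o = new Obj(name);
        fromSpace.add(o);
        return o;
    }

    // Copies only live objects, promotes old enough survivors, then swaps the spaces.
    void collectYoung() {
        for (Obj o : fromSpace) {
            if (!o.live) {
                continue; // dead objects are never copied
            }
            o.age++;
            if (o.age >= PROMOTION_AGE) {
                tenured.add(o);
            } else {
                toSpace.add(o);
            }
        }
        fromSpace.clear();
        List<Obj> swap = fromSpace;
        fromSpace = toSpace;
        toSpace = swap;
    }

    public static void main(String[] args) {
        SemispaceSketch heap = new SemispaceSketch();
        Obj shortLived = heap.allocate("temp");
        heap.allocate("cache");
        shortLived.live = false;
        heap.collectYoung(); // "temp" is dropped, "cache" survives once
        heap.collectYoung(); // "cache" survives again and is promoted
        System.out.println("young=" + heap.fromSpace.size()
            + " tenured=" + heap.tenured.size());
    }
}
```

The model also shows why the technique costs memory: the to-space sits empty between collections so that survivors always have somewhere to be copied.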

The tenured generation is based on a technique called mark compact. The tenured generation contains all objects that have survived several copying cycles in the young generation. When the tenured generation reaches a certain threshold, the system pauses and it begins a full collection cycle where both generations go through a collection cycle. The young generation goes through the stages outlined above. Objects in the tenured generation are scanned from their "roots" and determined to be live or dead. Next, the memory for dead objects is released and the tenured generation goes through a compacting phase where objects are relocated within the tenured generation.

The default generational garbage collector reduces performance overhead and helps collect short-lived objects rapidly, which increases heap efficiency.

The relative sizes of the generations can affect GC performance. The -Xgc:youngGen command-line option controls the size of the young object portion of the heap. See Table A-3 for more information about GC command-line options.

youngGen should not be too small. If it is too small, partial GCs may happen too frequently. This causes unnecessary pauses and retains more objects in the tenured generation than is necessary because they don't have time to age and die out between GC cycles.

The default size of youngGen is about 1/8 of the overall Java heap size.

youngGen should not be too large. If it is too large, even partial GCs may result in lengthy pauses because the number of live objects to be copied between semispaces or generations will be larger.

By default, the CDC Java runtime environment caps youngGen size to 1 MB unless it is explicitly specified on the command line.

The total heap size needs to be large enough to cater for the needs of the application. This is very application-dependent and can only be estimated.
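As a rough worked example of the two defaults above (about 1/8 of the heap, capped at 1 MB), the sketch below estimates the default young generation size for a given heap size. The exact computation used by the VM is not described in this guide, so the formula and the names here are assumptions.

```java
// Illustrative estimate of the default youngGen size; not the VM's actual formula.
class YoungGenEstimate {
    static final long ONE_MB = 1024L * 1024L;

    // Roughly 1/8 of the heap, capped at 1 MB when youngGen is not set explicitly.
    static long defaultYoungGenBytes(long heapBytes) {
        long eighth = heapBytes / 8;
        return Math.min(eighth, ONE_MB);
    }

    public static void main(String[] args) {
        long[] heaps = { 4 * ONE_MB, 15 * ONE_MB };
        for (int i = 0; i < heaps.length; i++) {
            System.out.println(heaps[i] + " -> " + defaultYoungGenBytes(heaps[i]));
        }
    }
}
```

Under these assumptions a 4 MB heap gets a 512 KB young generation, while a 15 MB heap would get 1.875 MB by the 1/8 rule and is therefore capped at 1 MB.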

The CDC HotSpot Implementation virtual machine includes a mechanism called class preloading that streamlines VM launch and reduces runtime memory requirements. The CDC build system includes a special build tool called JavaCodeCompact that performs many of the steps at build-time that the VM would normally perform at runtime. This saves runtime overhead because class loading is done only once at build-time instead of multiple times at runtime. And because the resulting class data can be stored in a format that allows the VM to execute in place from a read-only file system (for example, Flash memory), it saves memory.

Note: It is important to understand that decisions about class preloading are generally made at build-time. See the companion document CDC Build Guide for information about how to use JavaCodeCompact to include Java class files with the list of files preloaded by JavaCodeCompact with the CDC Java runtime environment's executable image.

Java class verification is usually performed at class loading time to ensure that a class is well-behaved. This has both performance and security benefits. This section describes a performance optimization that avoids the overhead of Java class verification for some application classes.

One way to avoid the overhead of Java class verification is to turn it off completely:

% cvm -Xverify:none -cp MyApp.jar MyApp

This approach has the benefit of more quickly loading the application's classes. But it also turns off important security mechanisms that may be needed by applications that perform remote class loading.

Another approach is based on using JavaCodeCompact to preload an application's Java classes at build time. The application's classes load faster at runtime and other classes can be loaded remotely with the security benefits of class verification.

Note: JavaCodeCompact assumes the classes it processes are valid and secure. Other means of determining class integrity should be used at build-time.

The companion document CDC Build Guide describes how to use JavaCodeCompact to preload an application's classes so that they are included with the CDC Java runtime environment's binary executable image. Once built, the mechanism for running a preloaded application is very simple. Just identify the application without using -cp to specify the user Java class search path.

% cvm -Xverify:remote MyApp

The remote option indicates that preloaded and system classes will not be verified. Because this is the default value for the -Xverify option, it can be safely omitted. It is shown here to fully describe the process of preloading an application's classes.

The -Xjit:maxWorkingMemorySize command-line option sets the maximum working memory size for the dynamic compiler. Note that the 512 KB default can be misleading. Under most circumstances the working memory for the dynamic compiler is substantially less and is furthermore temporary. For example, when a method is identified for compiling, the dynamic compiler allocates a temporary amount of working memory that is proportional to the size of the target method. After compiling and storing the method in the code buffer, the dynamic compiler releases this temporary working memory.

The average method needs less than 30 KB, but large methods with lots of inlining can require much more. However, since 95% of all methods use 30 KB or less, this is rarely an issue. Setting the maximum working memory size to a lower threshold should not adversely affect performance for the majority of applications.

This section shows how to use cvm command-line options that control the behavior of the CDC HotSpot Implementation Java virtual machine's dynamic compiler for different purposes:

Optimizing a specific application's performance.

Configuring the dynamic compiler's performance for a target device.

Exercising runtime behavior to aid the porting process.

Using these options effectively requires an understanding of how a dynamic compiler operates and the kind of situations it can exploit. During its operation the CDC HotSpot Implementation virtual machine instruments the code it executes to look for popular methods. Improving the performance of these popular methods accelerates overall application performance.

The following subsections describe how the dynamic compiler operates and provide some examples of performance tuning. For a complete description of the dynamic compiler-specific command-line options, see Appendix A.

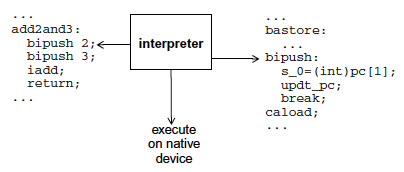

The CDC HotSpot Implementation virtual machine offers two mechanisms for method execution: the interpreter and the dynamic compiler. The interpreter is a straightforward mechanism for executing a method's bytecodes. For each bytecode, the interpreter looks in a table for the equivalent native instructions, executes them and advances to the next bytecode. Shown in Figure 3-2, this technique is predictable and compact, yet slow.

Figure 3-2 Interpreter-Based Method Execution

The dynamic compiler is an alternate mechanism that offers significantly faster runtime execution. Because the compiler operates on a larger block of instructions, it can use more aggressive optimizations and the resulting compiled methods run much faster than the bytecode-at-a-time technique used by the interpreter. This process occurs in two stages. First, the dynamic compiler takes the entire method's bytecodes, compiles them as a group into native code and stores the resulting native code in an area of memory called the code cache as shown in Figure 3-3.

Then the next time the method is called, the runtime system executes the compiled method's native instructions from the code cache as shown in Figure 3-4.

The dynamic compiler cannot compile every method because the overhead would be too great and the start-up time for launching an application would be too noticeable. Therefore, a mechanism is needed to determine which methods get compiled and for how long they remain in the code cache.

Because compiling every method is too expensive, the dynamic compiler identifies important methods that can benefit from compilation. The CDC HotSpot Implementation Java virtual machine has a runtime instrumentation system that measures statistics about methods as they are executed. cvm combines these statistics into a single popularity index for each method. When the popularity index for a given method reaches a certain threshold, the method is compiled and stored in the code cache.

The runtime statistics kept by cvm can be used in different ways to handle various application scenarios. To do this, cvm exposes certain weighting factors as command-line options. By changing the weighting factors, cvm can change the way it performs in different application scenarios. A specific combination of these options expresses a dynamic compiler policy for a target application. An example of these options and their use is provided in Section 3.5.2.1, "Managing the Popularity Threshold".

The dynamic compiler has options for specifying code quality based on various forms of inlining. These provide space-time trade-offs: aggressive inlining provides faster compiled methods, but consumes more space in the code cache. An example of the inlining options is provided in Section 3.5.2.2, "Managing Compiled Code Quality".

Compiled methods are not kept in the code cache indefinitely. If the code cache becomes full or nearly full, the dynamic compiler decompiles methods by releasing their memory and allowing the interpreter to execute them again. An example of how to manage the code cache is provided in Section 3.5.2.3, "Managing the Code Cache".

The cvm application launcher has a group of command-line options that control how the dynamic compiler behaves. Taken together, these options form dynamic compiler policies that target application or device specific needs. The most common are space-time trade-offs. For example, one policy might cause the dynamic compiler to compile early and often while another might set a higher threshold because memory is limited or the application is short-lived.

Table A-7 describes the dynamic compiler-specific command-line options and their defaults. These defaults provide the best overall performance based on experience with a large set of applications and benchmarks and should be useful for most application scenarios. They might not provide the best performance for a specific application or benchmark. Finding alternate values requires experimentation, a knowledge of the target application's runtime behavior and requirements in addition to an understanding of the dynamic compiler's resource limitations and how it operates.

The following examples show how to experiment with these options to tune the dynamic compiler's performance.

When the popularity index for a given method reaches a certain threshold, it becomes a candidate for compiling. cvm provides four command-line options that influence when a given method is compiled: the popularity threshold and three weighting factors that are combined into a single popularity index:

climit, the popularity threshold. The default is 20000.

bcost, the weight of a backwards branch. The default is 4.

icost, the weight of an interpreted to interpreted method call. The default is 20.

mcost, the weight of transitioning between a compiled method and an interpreted method and vice versa. The default is 50.

Each time a method is called, its popularity index is incremented by an amount based on the icost and mcost weighting factors. The default value for climit is 20000. By setting climit at different levels between 0 and 65535, you can find a popularity threshold that produces good results for a specific application.

The following example uses the -Xjit:option command-line option syntax to set an alternate climit value:

% cvm -Xjit:climit=10000 MyTest

Setting the popularity threshold lower than the default causes the dynamic compiler to more eagerly compile methods. Since this usually causes the code cache to fill up faster than necessary, this approach is often combined with a larger code cache size to avoid compilation/decompilation thrashing.
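To get a feel for these numbers, the sketch below models the popularity index as a simple counter. It is a simplification under stated assumptions: each event adds exactly one weight, and a method becomes a candidate once the index reaches climit. The VM's actual bookkeeping is not specified here, and the class and method names are hypothetical.

```java
// Toy model of the popularity index; default values are taken from the list above.
class PopularityModel {
    final int climit; // popularity threshold
    final int bcost;  // weight of a backwards branch
    final int icost;  // weight of an interpreted-to-interpreted call
    final int mcost;  // weight of a compiled/interpreted transition
    private int index = 0;

    PopularityModel(int climit, int bcost, int icost, int mcost) {
        this.climit = climit;
        this.bcost = bcost;
        this.icost = icost;
        this.mcost = mcost;
    }

    static PopularityModel withDefaults() {
        return new PopularityModel(20000, 4, 20, 50);
    }

    void backwardBranch() { index += bcost; }
    void interpretedCall() { index += icost; }
    void mixedCall() { index += mcost; }

    // Assumption: the method becomes a candidate when the index reaches climit.
    boolean isCompilationCandidate() { return index >= climit; }

    // Number of identical events needed to reach the threshold (rounded up).
    static int eventsToReach(int climit, int cost) {
        return (climit + cost - 1) / cost;
    }

    public static void main(String[] args) {
        System.out.println("interpreted calls at climit=20000: " + eventsToReach(20000, 20));
        System.out.println("interpreted calls at climit=10000: " + eventsToReach(10000, 20));
    }
}
```

In this model, the default settings require 1000 interpreted-to-interpreted calls, 400 mixed calls, or 5000 backwards branches before a method qualifies, and halving climit to 10000 halves each of those counts.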

The dynamic compiler can choose to inline methods for providing better code quality and improving the speed of a compiled method. Usually this involves a space-time trade-off. Method inlining consumes more space in the code cache but improves performance. For example, suppose a method to be compiled includes an instruction that invokes an accessor method returning the value of a single variable.

public void popularMethod() {

...

int i = getX();

...

}

public int getX() {

return X;

}

getX() has overhead like creating a stack frame. By copying the method's instructions directly into the calling method's instruction stream, the dynamic compiler can avoid that overhead.
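The effect of inlining can be pictured at the source level. In the sketch below, sumWithCall() is what a developer writes and sumInlined() is a hand-written approximation of what the compiled code does once getX() has been inlined. The class and method names are hypothetical, and the real transformation happens on compiled code rather than on Java source.

```java
// Source-level illustration of inlining an accessor method.
class InliningIllustration {
    private final int x;

    InliningIllustration(int x) {
        this.x = x;
    }

    int getX() {
        return x;
    }

    // As written: every loop iteration invokes the accessor.
    int sumWithCall(int n) {
        int sum = 0;
        for (int i = 0; i < n; i++) {
            sum += getX();
        }
        return sum;
    }

    // After inlining: the accessor body replaces the call, so no stack frame is created.
    int sumInlined(int n) {
        int sum = 0;
        for (int i = 0; i < n; i++) {
            sum += x;
        }
        return sum;
    }

    public static void main(String[] args) {
        InliningIllustration p = new InliningIllustration(3);
        System.out.println(p.sumWithCall(4) == p.sumInlined(4));
    }
}
```

Both methods compute the same result; the difference is only in the per-call overhead that the second form avoids.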

cvm has several options for controlling method inlining, including the following:

maxInliningCodeLength sets a limit on the bytecode size of methods to inline. This value is used as a threshold that proportionally decreases with the depth of inlining. Therefore, shorter methods are inlined at deeper depths. In addition, if the inlined method is less than value/2, the dynamic compiler allows unquick opcodes in the inlined method.

minInliningCodeLength sets the floor value for maxInliningCodeLength when its size is proportionally decreased at greater inlining depths.

maxInliningDepth limits the number of levels that methods can be inlined.

For example, the following command line specifies a larger maximum method size.

% cvm -Xjit:inline=all,maxInliningCodeLength=80 MyTest

On some systems, the benefits of compiled methods must be balanced against the limited memory available for the code cache. cvm offers several command-line options for managing code cache behavior. The most important is the size of the code cache, which is specified with the codeCacheSize option.

For example, the following command line specifies a code cache that is half the default size.

% cvm -Xjit:codeCacheSize=256k MyTest

A smaller code cache causes the dynamic compiler to decompile methods more frequently. Therefore, you might also want to use a higher compilation threshold in combination with a lower code cache size.

The build option CVM_TRACE_JIT=true allows the dynamic compiler to generate a status report for when methods are compiled and decompiled. The command-line option -Xjit:trace=status enables this reporting, which can be useful for tuning the codeCacheSize option.

Ahead-of-time compilation (AOTC) refers to compiling Java bytecode into native machine code beforehand, for example during VM build time or install time. In CDC-HI, AOTC happens when the VM is being executed for the first time on the target platform. A set of Java methods is compiled during VM startup and the compiled code is saved into a file. During subsequent executions of CVM the saved AOTC code is found and executed like dynamically compiled code.

AOTC is run in two basic stages: an initial run to compile a method list specified in a text file and subsequent runs that use that precompiled method list.

Initial run. AOTC is enabled with the -aot=true command-line option. The first time cvm is executed, the command line must also include the aotMethodList=file option to specify the location of the method list file. These methods are compiled and stored in the cvm.aot file. The aotFile=file command-line option can be used to specify an alternate location for the precompiled methods.

Subsequent runs. When cvm is run again, it must also use the -aot=true command-line option, and aotFile=file if that option was used in the initial run.

If it becomes necessary to recompile the method list, this can be done with the recompileAOT=boolean command-line option.

See Table A-7 for a description of the AOTC command-line options.

A good way to produce a method list such as methodsList.txt is to start by building a VM with CVM_TRACE_JIT=true and running with -Xjit:trace=status. This shows all the methods being compiled while running a particular application. Note that non-romized methods should not be included in the method list.

Adding or removing methods in methodsList.txt does not cause the AOTC code to be regenerated. To regenerate the precompiled AOTC code, use the recompileAOT=boolean command-line option or delete the bin/cvm.aot file.

Footnote Legend

Footnote 1: See the tools documentation at http://download.oracle.com/javase/1.4.2/docs/tooldocs/tools.html for a description of the J2SDK tools and how they use Java class search paths.