Example

Configuring Host-Based Data Replication With Sun StorageTek Availability Suite Software

This appendix describes how to configure host-based replication without Oracle Solaris Cluster Geographic Edition. To simplify the configuration and operation of host-based replication between clusters, use Oracle Solaris Cluster Geographic Edition instead. See Understanding Data Replication.

The example in this appendix shows how to configure host-based data replication between clusters using the Availability Suite feature of Oracle Solaris software. The example illustrates a complete cluster configuration for an NFS application that provides detailed information about how individual tasks can be performed. All tasks should be performed in the global cluster. The example does not include all of the steps that are required by other applications or other cluster configurations.

If you use role-based access control (RBAC) to access the cluster nodes, ensure that you can assume an RBAC role that provides authorization for all Oracle Solaris Cluster commands. This series of data replication procedures requires the following Oracle Solaris Cluster RBAC authorizations:

solaris.cluster.modify

solaris.cluster.admin

solaris.cluster.read

See Securing Users and Processes in Oracle Solaris 11.2 for more information about using RBAC roles. See the Oracle Solaris Cluster man pages for the RBAC authorization that each Oracle Solaris Cluster subcommand requires.

Understanding Sun StorageTek Availability Suite Software in a Cluster

This section introduces disaster tolerance and describes the data replication methods that Sun StorageTek Availability Suite software uses.

Disaster tolerance is the ability to restore an application on an alternate cluster when the primary cluster fails. Disaster tolerance is based on data replication and takeover. A takeover relocates an application service to a secondary cluster by bringing online one or more resource groups and device groups.

If data is replicated synchronously between the primary and secondary cluster, then no committed data is lost when the primary site fails. However, if data is replicated asynchronously, then some data may not have been replicated to the secondary cluster before the primary site failed, and thus is lost.

Data Replication Methods Used by Sun StorageTek Availability Suite Software

This section describes the remote mirror replication method and the point-in-time snapshot method used by Sun StorageTek Availability Suite software. This software uses the sndradm and iiadm commands to replicate data. For more information, see the sndradm(1M) and iiadm(1M) man pages.

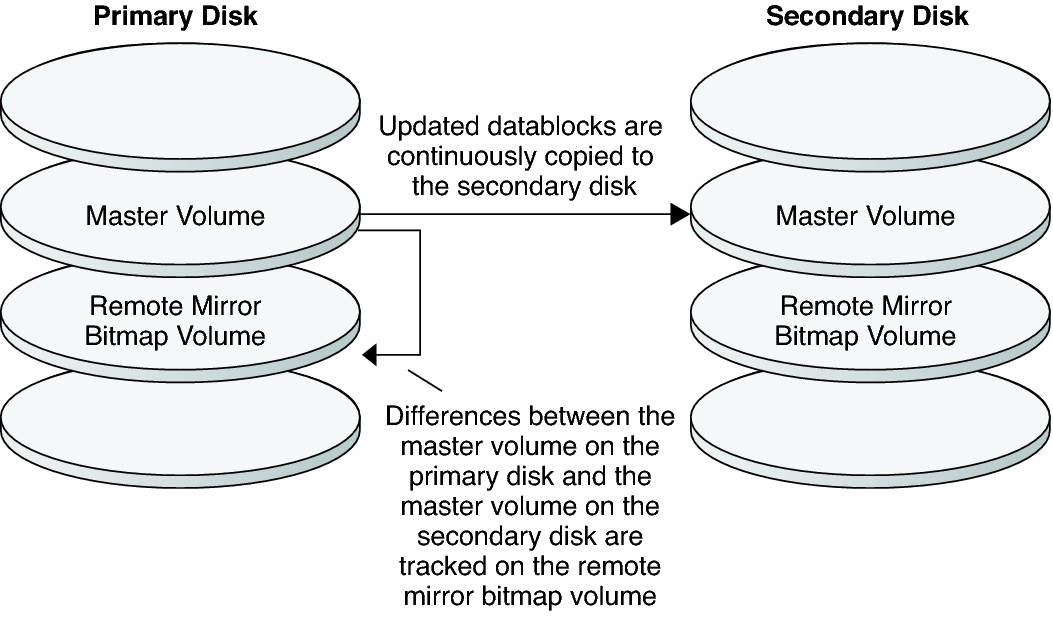

Remote Mirror Replication

Figure A–1 shows remote mirror replication. Data from the master volume of the primary disk is replicated to the master volume of the secondary disk through a TCP/IP connection. A remote mirror bitmap tracks differences between the master volume on the primary disk and the master volume on the secondary disk.

Figure A-1 Remote Mirror Replication

In synchronous data replication, a write operation is not confirmed as complete until the remote volume has been updated.

In asynchronous data replication, a write operation is confirmed as complete before the remote volume is updated. Asynchronous data replication provides greater flexibility over long distances and low bandwidth.

Remote mirror replication can be performed synchronously in real time, or asynchronously. Each volume set in each cluster can be configured individually, for synchronous replication or asynchronous replication.

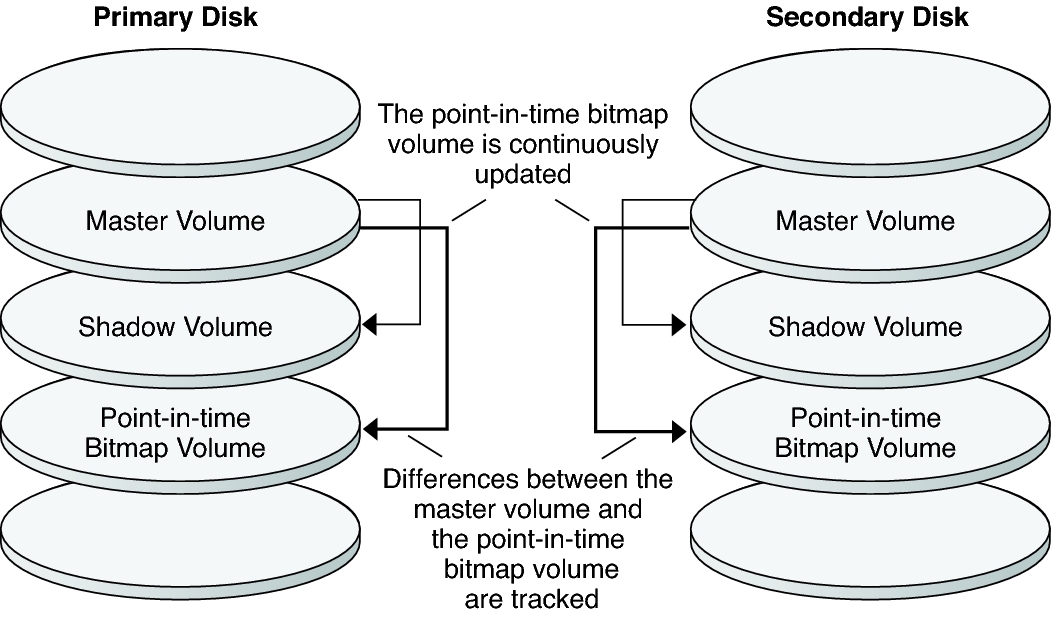

Point-in-Time Snapshot

Figure A–2 shows a point-in-time snapshot. Data from the master volume of each disk is copied to the shadow volume on the same disk. The point-in-time bitmap tracks differences between the master volume and the shadow volume. When data is copied to the shadow volume, the point-in-time bitmap is reset.

Figure A-2 Point-in-Time Snapshot

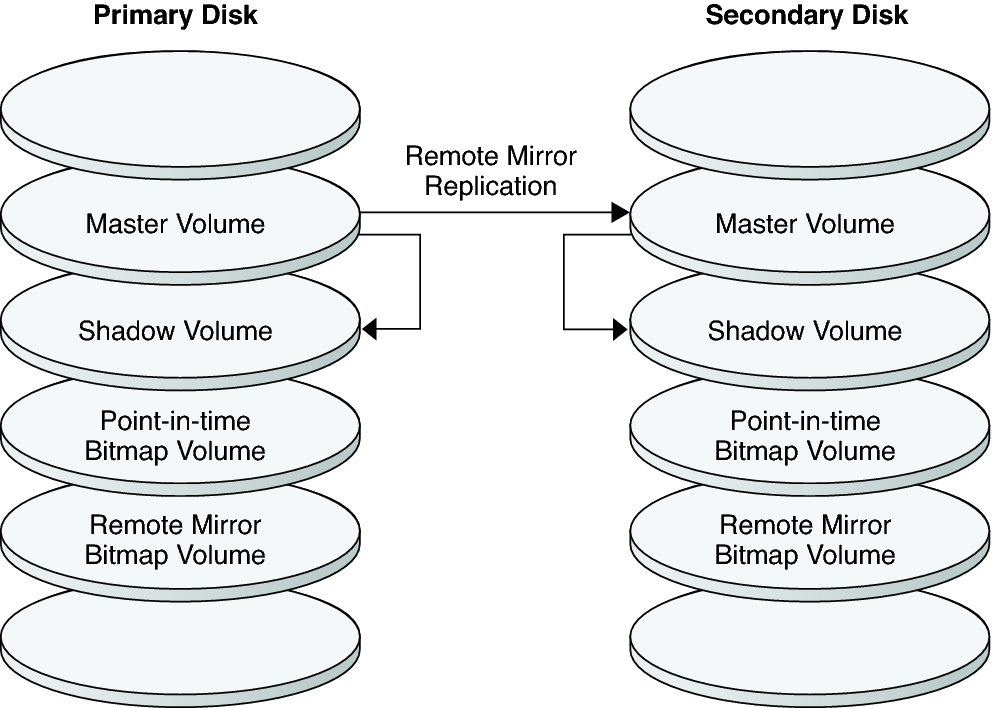

Replication in the Example Configuration

Figure A–3 illustrates how remote mirror replication and point-in-time snapshot are used in this example configuration.

Figure A-3 Replication in the Example Configuration

Guidelines for Configuring Host-Based Data Replication Between Clusters

This section provides guidelines for configuring data replication between clusters. This section also contains tips for configuring replication resource groups and application resource groups. Use these guidelines when you are configuring data replication for your cluster.

This section discusses the following topics:

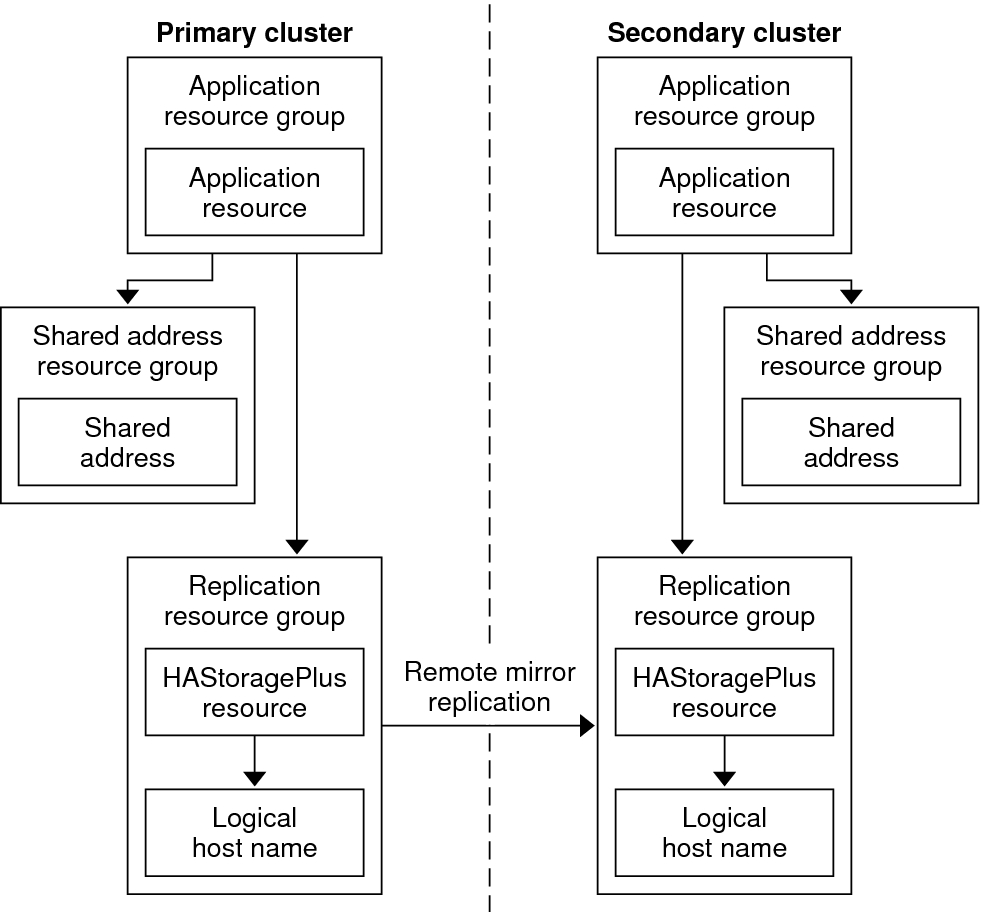

Configuring Replication Resource Groups

Replication resource groups collocate the device group under Sun StorageTek Availability Suite software control with a logical hostname resource. A logical hostname must exist on each end of the data replication stream, and must be on the same cluster node that acts as the primary I/O path to the device. A replication resource group must have the following characteristics:

Be a failover resource group

A failover resource can run on only one node at a time. When a failover occurs, failover resources take part in the failover.

Have a logical hostname resource

A logical hostname is hosted on one node of each cluster (primary and secondary) and is used to provide source and target addresses for the Sun StorageTek Availability Suite software data replication stream.

Have an HAStoragePlus resource

The HAStoragePlus resource enforces the failover of the device group when the replication resource group is switched over or failed over. Oracle Solaris Cluster software also enforces the failover of the replication resource group when the device group is switched over. In this way, the replication resource group and the device group are always colocated, or mastered by the same node.

The following extension properties must be defined in the HAStoragePlus resource:

GlobalDevicePaths. This extension property defines the device group to which a volume belongs.

AffinityOn property = True. This extension property causes the device group to switch over or fail over when the replication resource group switches over or fails over. This feature is called an affinity switchover.

For more information about HAStoragePlus, see the SUNW.HAStoragePlus(5) man page.

Be named after the device group with which it is colocated, followed by -stor-rg

For example, devgrp-stor-rg.

Be online on both the primary cluster and the secondary cluster
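The naming rule in the list above can be captured in a small helper. This is a minimal sketch, not part of the product: the function name repl_rg_name is hypothetical, and it only builds the name string that the clresourcegroup commands later in this appendix expect.

```shell
#!/bin/sh
# Derive a replication resource group name from a device group name,
# following the devicegroupname-stor-rg convention described above.
# repl_rg_name is a hypothetical helper name used for illustration only.
repl_rg_name() {
    # $1: device group name (for example, devgrp)
    if [ -z "$1" ]; then
        echo "usage: repl_rg_name devicegroupname" >&2
        return 1
    fi
    printf '%s-stor-rg\n' "$1"
}

repl_rg_name devgrp
```

Deriving the name in one place helps keep the primary and secondary clusters consistent, because both must use the same replication resource group name format.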

Configuring Application Resource Groups

To be highly available, an application must be managed as a resource in an application resource group. An application resource group can be configured for a failover application or a scalable application.

The ZPoolsSearchDir extension property must be defined in the HAStoragePlus resource. This extension property is required to use the ZFS file system.

Application resources and application resource groups configured on the primary cluster must also be configured on the secondary cluster. Also, the data accessed by the application resource must be replicated to the secondary cluster.

This section provides guidelines for configuring the following application resource groups:

Configuring Resource Groups for a Failover Application

In a failover application, an application runs on one node at a time. If that node fails, the application fails over to another node in the same cluster. A resource group for a failover application must have the following characteristics:

Have an HAStoragePlus resource to enforce the failover of the file system or zpool when the application resource group is switched over or failed over.

The device group is colocated with the replication resource group and the application resource group. Therefore, the failover of the application resource group enforces the failover of the device group and replication resource group. The application resource group, the replication resource group, and the device group are mastered by the same node.

Note, however, that a failover of the device group or the replication resource group does not cause a failover of the application resource group.

If the application data is globally mounted, the presence of an HAStoragePlus resource in the application resource group is not required but is advised.

If the application data is mounted locally, the presence of an HAStoragePlus resource in the application resource group is required.

For more information about HAStoragePlus, see the SUNW.HAStoragePlus(5) man page.

Be online on the primary cluster and offline on the secondary cluster.

The application resource group must be brought online on the secondary cluster when the secondary cluster takes over as the primary cluster.

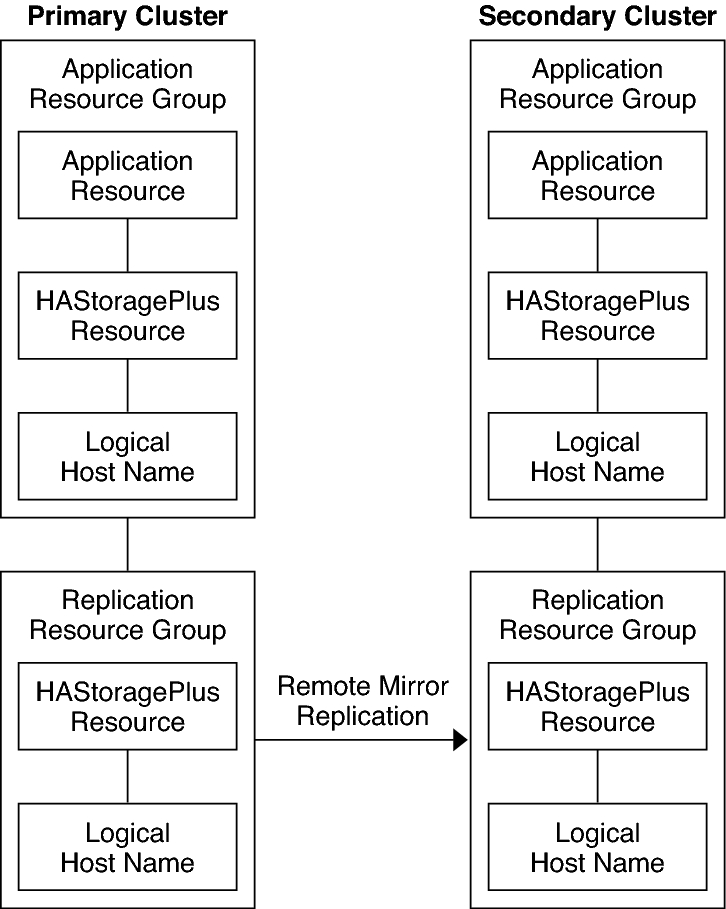

Figure A–4 illustrates the configuration of an application resource group and a replication resource group in a failover application.

Figure A-4 Configuration of Resource Groups in a Failover Application

Configuring Resource Groups for a Scalable Application

In a scalable application, an application runs on several nodes to create a single, logical service. If a node that is running a scalable application fails, failover does not occur. The application continues to run on the other nodes.

When a scalable application is managed as a resource in an application resource group, it is not necessary to collocate the application resource group with the device group. Therefore, it is not necessary to create an HAStoragePlus resource for the application resource group.

A resource group for a scalable application must have the following characteristics:

Have a dependency on the shared address resource group

The nodes that are running the scalable application use the shared address to distribute incoming data.

Be online on the primary cluster and offline on the secondary cluster

Figure A–5 illustrates the configuration of resource groups in a scalable application.

Figure A-5 Configuration of Resource Groups in a Scalable Application

Guidelines for Managing a Takeover

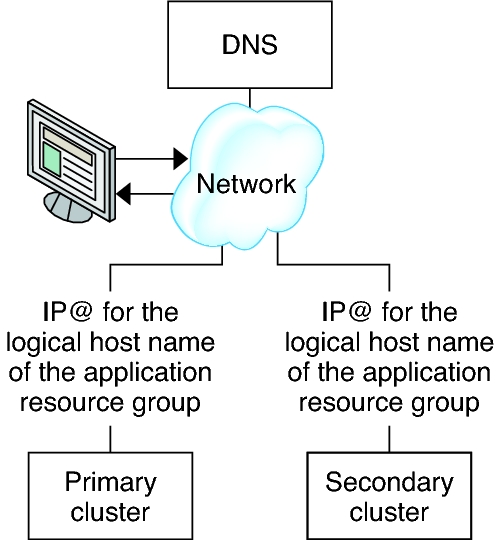

If the primary cluster fails, the application must be switched over to the secondary cluster as soon as possible. To enable the secondary cluster to take over, the DNS must be updated.

Clients use DNS to map an application's logical hostname to an IP address. After a takeover, where the application is moved to a secondary cluster, the DNS information must be updated to reflect the mapping between the application's logical hostname and the new IP address.

Figure A-6 DNS Mapping of a Client to a Cluster

To update the DNS, use the nsupdate command. For information, see the nsupdate(1M) man page. For an example of how to manage a takeover, see Example of How to Manage a Takeover.
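As an illustration of the DNS update, the following sketch prints an nsupdate batch that replaces the address record of a logical hostname. The function name dns_takeover_batch, the server name, the domain, the address, and the TTL are all hypothetical placeholders, and a real takeover may also need reverse (PTR) records and authentication that this sketch omits.

```shell
#!/bin/sh
# Print an nsupdate batch that repoints a hostname at a new IPv4 address.
# Every value passed in below is a hypothetical placeholder.
dns_takeover_batch() {
    # $1: DNS server to send the update to
    # $2: fully qualified logical hostname
    # $3: new IPv4 address
    # $4: TTL in seconds
    printf 'server %s\n' "$1"
    printf 'update delete %s A\n' "$2"
    printf 'update add %s %s A %s\n' "$2" "$4" "$3"
    printf 'send\n'
}

dns_takeover_batch ns1.example.com lhost-nfsrg-prim.example.com 192.0.2.20 3600
```

The function only writes the batch to standard output, so it can be reviewed first and then piped to nsupdate once the values are confirmed for your site.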

After repair, the primary cluster can be brought back online. To switch back to the original primary cluster, perform the following tasks:

Synchronize the primary cluster with the secondary cluster to ensure that the primary volume is up-to-date. You can achieve this by stopping the resource group on the secondary node, so that the replication data stream can drain.

Reverse the direction of data replication so that the original primary is now, once again, replicating data to the original secondary.

Start the resource group on the primary cluster.

Update the DNS so that clients can access the application on the primary cluster.

Task Map: Example of a Data Replication Configuration

Table A–1 lists the tasks in this example of how data replication was configured for an NFS application by using Sun StorageTek Availability Suite software.


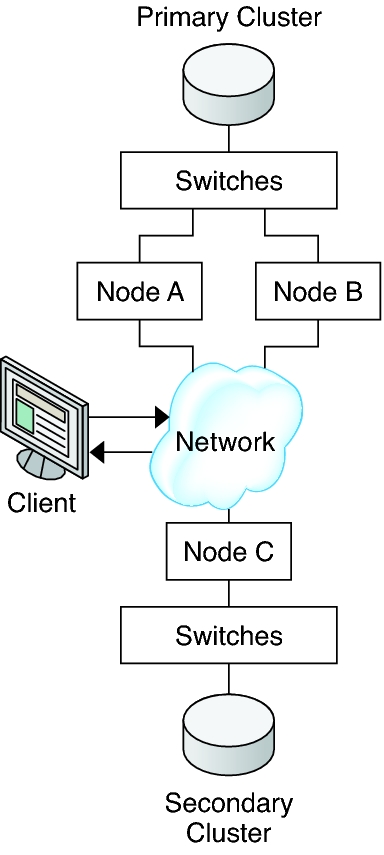

Connecting and Installing the Clusters

Figure A–7 illustrates the cluster configuration the example configuration uses. The secondary cluster in the example configuration contains one node, but other cluster configurations can be used.

Figure A-7 Example Cluster Configuration

Table A–2 summarizes the hardware and software that the example configuration requires. The Oracle Solaris OS, Oracle Solaris Cluster software, and volume manager software must be installed on the cluster nodes before Sun StorageTek Availability Suite software and software updates are installed.


Example of How to Configure Device Groups and Resource Groups

This section describes how device groups and resource groups are configured for an NFS application. For additional information, see Configuring Replication Resource Groups and Configuring Application Resource Groups.

This section contains the following procedures:

How to Configure a Device Group on the Primary Cluster

How to Configure a Device Group on the Secondary Cluster

How to Configure the File System on the Primary Cluster for the NFS Application

How to Configure the File System on the Secondary Cluster for the NFS Application

How to Create a Replication Resource Group on the Primary Cluster

How to Create a Replication Resource Group on the Secondary Cluster

How to Create an NFS Application Resource Group on the Primary Cluster

How to Create an NFS Application Resource Group on the Secondary Cluster

The following table lists the names of the groups and resources that are created for the example configuration.


With the exception of devgrp-stor-rg, the names of the groups and resources are example names that can be changed as required. The replication resource group must have a name with the format devicegroupname-stor-rg.

For information about Solaris Volume Manager software, see Chapter 4, Configuring Solaris Volume Manager Software, in Oracle Solaris Cluster Software Installation Guide.

How to Configure a Device Group on the Primary Cluster

Before You Begin

Ensure that you have completed the following tasks:

Read the guidelines and requirements in the following sections:

Set up the primary and secondary clusters as described in Connecting and Installing the Clusters.

- Access nodeA by assuming the role that provides solaris.cluster.modify RBAC authorization.

The node nodeA is the first node of the primary cluster. For a reminder of which node is nodeA, see Figure A–7.

- Create a metaset to contain the NFS data and associated replication.

nodeA# metaset -s nfsset -a -h nodeA nodeB

- Add disks to the metaset.

nodeA# metaset -s nfsset -a /dev/did/dsk/d6 /dev/did/dsk/d7

- Add mediators to the metaset.

nodeA# metaset -s nfsset -a -m nodeA nodeB

- Create the required volumes (or metadevices).

Create two components of a mirror:

nodeA# metainit -s nfsset d101 1 1 /dev/did/dsk/d6s2
nodeA# metainit -s nfsset d102 1 1 /dev/did/dsk/d7s2

Create the mirror with one of the components:

nodeA# metainit -s nfsset d100 -m d101

Attach the other component to the mirror and allow it to synchronize:

nodeA# metattach -s nfsset d100 d102

Create soft partitions from the mirror, following these examples:

d200 - The NFS data (master volume):

nodeA# metainit -s nfsset d200 -p d100 50G

d201 - The point-in-time copy volume for the NFS data:

nodeA# metainit -s nfsset d201 -p d100 50G

d202 - The point-in-time bitmap volume:

nodeA# metainit -s nfsset d202 -p d100 10M

d203 - The remote shadow bitmap volume:

nodeA# metainit -s nfsset d203 -p d100 10M

d204 - The volume for the Oracle Solaris Cluster SUNW.NFS configuration information:

nodeA# metainit -s nfsset d204 -p d100 100M

- Create file systems for the NFS data and the configuration volume.

nodeA# yes | newfs /dev/md/nfsset/rdsk/d200
nodeA# yes | newfs /dev/md/nfsset/rdsk/d204
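The soft-partition layout in this procedure follows a regular pattern, which the following dry-run sketch makes explicit. It only prints the metainit commands and does not execute them. The function name soft_partition_cmds is hypothetical, and the volume names and sizes are the example values from this procedure.

```shell
#!/bin/sh
# Dry-run sketch: print (do not execute) the metainit commands that carve
# the example soft partitions out of a mirror.
soft_partition_cmds() {
    # $1: disk set name (nfsset in this example)
    # $2: mirror volume that backs the soft partitions (d100 in this example)
    while read -r vol size; do
        printf 'metainit -s %s %s -p %s %s\n' "$1" "$vol" "$2" "$size"
    done <<EOF
d200 50G
d201 50G
d202 10M
d203 10M
d204 100M
EOF
}

soft_partition_cmds nfsset d100
```

Keeping the volume list in one table makes it easier to confirm that the master volume (d200) and the point-in-time copy volume (d201) have the same size.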

Next Steps

Go to How to Configure a Device Group on the Secondary Cluster.

How to Configure a Device Group on the Secondary Cluster

Before You Begin

Complete the procedure How to Configure a Device Group on the Primary Cluster.

- Access nodeC by assuming the role that provides solaris.cluster.modify RBAC authorization.

- Create a metaset to contain the NFS data and associated replication.

nodeC# metaset -s nfsset -a -h nodeC

- Add disks to the metaset.

In the example below, assume that the disk DID numbers are different.

nodeC# metaset -s nfsset -a /dev/did/dsk/d3 /dev/did/dsk/d4

Note - Mediators are not required on a single node cluster.

- Create the required volumes (or metadevices).

Create two components of a mirror:

nodeC# metainit -s nfsset d101 1 1 /dev/did/dsk/d3s2
nodeC# metainit -s nfsset d102 1 1 /dev/did/dsk/d4s2

Create the mirror with one of the components:

nodeC# metainit -s nfsset d100 -m d101

Attach the other component to the mirror and allow it to synchronize:

nodeC# metattach -s nfsset d100 d102

Create soft partitions from the mirror, following these examples:

d200 - The NFS data master volume:

nodeC# metainit -s nfsset d200 -p d100 50G

d201 - The point-in-time copy volume for the NFS data:

nodeC# metainit -s nfsset d201 -p d100 50G

d202 - The point-in-time bitmap volume:

nodeC# metainit -s nfsset d202 -p d100 10M

d203 - The remote shadow bitmap volume:

nodeC# metainit -s nfsset d203 -p d100 10M

d204 - The volume for the Oracle Solaris Cluster SUNW.NFS configuration information:

nodeC# metainit -s nfsset d204 -p d100 100M

- Create file systems for the NFS data and the configuration volume.

nodeC# yes | newfs /dev/md/nfsset/rdsk/d200
nodeC# yes | newfs /dev/md/nfsset/rdsk/d204
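The mirror-creation steps are identical on both clusters apart from the DID disk names. The following dry-run sketch prints the command sequence for a given pair of disks without executing anything. The function name mirror_cmds is hypothetical, and the slice s2 and volume names d100 through d102 are taken from this example.

```shell
#!/bin/sh
# Dry-run sketch: print the commands that build the two-way mirror d100
# from two DID disks. Nothing is executed.
mirror_cmds() {
    # $1: disk set name
    # $2, $3: DID disk names, such as d3 and d4
    printf 'metainit -s %s d101 1 1 /dev/did/dsk/%ss2\n' "$1" "$2"
    printf 'metainit -s %s d102 1 1 /dev/did/dsk/%ss2\n' "$1" "$3"
    printf 'metainit -s %s d100 -m d101\n' "$1"
    printf 'metattach -s %s d100 d102\n' "$1"
}

mirror_cmds nfsset d3 d4
```

Calling the same function with d6 and d7 would print the primary-cluster commands, which shows that only the disk arguments differ between the two procedures.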

Next Steps

Go to How to Configure the File System on the Primary Cluster for the NFS Application.

How to Configure the File System on the Primary Cluster for the NFS Application

Before You Begin

Complete the procedure How to Configure a Device Group on the Secondary Cluster.

- On nodeA and nodeB, assume the role that provides solaris.cluster.admin RBAC authorization.

- On nodeA and nodeB, create a mount-point directory for the NFS file system.

For example:

nodeA# mkdir /global/mountpoint

- On nodeA and nodeB, configure the master volume to not be mounted automatically on the mount point.

Add or replace the following text in the /etc/vfstab file on nodeA and nodeB. The text must be on a single line.

/dev/md/nfsset/dsk/d200 /dev/md/nfsset/rdsk/d200 /global/mountpoint ufs 3 no global,logging

- On nodeA and nodeB, create a mount point for metadevice d204.

The following example creates the mount point /global/etc.

nodeA# mkdir /global/etc

- On nodeA and nodeB, configure metadevice d204 to be mounted automatically on the mount point.

Add or replace the following text in the /etc/vfstab file on nodeA and nodeB. The text must be on a single line.

/dev/md/nfsset/dsk/d204 /dev/md/nfsset/rdsk/d204 /global/etc ufs 3 yes global,logging

- Mount metadevice d204 on nodeA.

nodeA# mount /global/etc

- Create the configuration files and information for the Oracle Solaris Cluster HA for NFS data service.

- Create a directory called /global/etc/SUNW.nfs on nodeA.

nodeA# mkdir -p /global/etc/SUNW.nfs

- Create the file /global/etc/SUNW.nfs/dfstab.nfs-rs on nodeA.

nodeA# touch /global/etc/SUNW.nfs/dfstab.nfs-rs

- Add the following line to the /global/etc/SUNW.nfs/dfstab.nfs-rs file on nodeA.

share -F nfs -o rw -d "HA NFS" /global/mountpoint

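The two /etc/vfstab entries in this procedure differ only in the volume, the mount point, and the mount-at-boot field. The following sketch builds such an entry as a single line. The function name vfstab_entry is hypothetical, and the fixed values (ufs, fsck pass 3, and the global,logging mount options) are the ones used in this example.

```shell
#!/bin/sh
# Build one /etc/vfstab line for a metadevice in a disk set.
# The line has seven fields: device to mount, device to fsck, mount point,
# file system type, fsck pass, mount at boot, and mount options.
vfstab_entry() {
    # $1: disk set, $2: volume, $3: mount point, $4: mount at boot (yes or no)
    case $4 in
        yes|no) ;;
        *) echo "mount-at-boot must be yes or no" >&2; return 1 ;;
    esac
    printf '/dev/md/%s/dsk/%s /dev/md/%s/rdsk/%s %s ufs 3 %s global,logging\n' \
        "$1" "$2" "$1" "$2" "$3" "$4"
}

vfstab_entry nfsset d200 /global/mountpoint no
vfstab_entry nfsset d204 /global/etc yes
```

The function prints to standard output only. Review the result before you add it to /etc/vfstab on each node.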

Next Steps

Go to How to Configure the File System on the Secondary Cluster for the NFS Application.

How to Configure the File System on the Secondary Cluster for the NFS Application

Before You Begin

Complete the procedure How to Configure the File System on the Primary Cluster for the NFS Application.

- On nodeC, assume the role that provides solaris.cluster.admin RBAC authorization.

- On nodeC, create a mount-point directory for the NFS file system.

For example:

nodeC# mkdir /global/mountpoint

- On nodeC, configure the master volume to be mounted automatically on the mount point.

Add or replace the following text in the /etc/vfstab file on nodeC. The text must be on a single line.

/dev/md/nfsset/dsk/d200 /dev/md/nfsset/rdsk/d200 /global/mountpoint ufs 3 yes global,logging

- Mount metadevice d204 on nodeC.

nodeC# mount /global/etc

- Create the configuration files and information for the Oracle Solaris Cluster HA for NFS data service.

- Create a directory called /global/etc/SUNW.nfs on nodeC.

nodeC# mkdir -p /global/etc/SUNW.nfs

- Create the file /global/etc/SUNW.nfs/dfstab.nfs-rs on nodeC.

nodeC# touch /global/etc/SUNW.nfs/dfstab.nfs-rs

- Add the following line to the /global/etc/SUNW.nfs/dfstab.nfs-rs file on nodeC.

share -F nfs -o rw -d "HA NFS" /global/mountpoint

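The dfstab steps in this procedure can be combined into one helper, sketched below. The function name write_dfstab is hypothetical. The demonstration writes into a scratch directory created with mktemp instead of /global/etc, so it can be tried without touching a cluster file system.

```shell
#!/bin/sh
# Create <Pathprefix>/SUNW.nfs/dfstab.<resource> containing one share entry.
# write_dfstab is a hypothetical helper name used for illustration only.
write_dfstab() {
    # $1: Pathprefix directory, $2: NFS resource name, $3: path to share
    dir=$1/SUNW.nfs
    file=$dir/dfstab.$2
    mkdir -p "$dir" || return 1
    printf 'share -F nfs -o rw -d "HA NFS" %s\n' "$3" > "$file" || return 1
    # Report the file that was written.
    printf '%s\n' "$file"
}

# Demonstration against a scratch directory rather than /global/etc.
demo_dir=$(mktemp -d) || exit 1
write_dfstab "$demo_dir" nfs-rs /global/mountpoint
```

On a cluster node, the first argument would be the Pathprefix directory of the application resource group, which is /global/etc in this example.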

Next Steps

Go to How to Create a Replication Resource Group on the Primary Cluster.

How to Create a Replication Resource Group on the Primary Cluster

Before You Begin

Complete the procedure How to Configure the File System on the Secondary Cluster for the NFS Application.

Ensure that the /etc/netmasks file has IP-address subnet and netmask entries for all logical hostnames. If necessary, edit the /etc/netmasks file to add any missing entries.

- Access nodeA as the role that provides solaris.cluster.modify, solaris.cluster.admin, and solaris.cluster.read RBAC authorization.

- Register the SUNW.HAStoragePlus resource type.

nodeA# clresourcetype register SUNW.HAStoragePlus

- Create a replication resource group for the device group.

nodeA# clresourcegroup create -n nodeA,nodeB devgrp-stor-rg

- -n nodeA,nodeB

Specifies that cluster nodes nodeA and nodeB can master the replication resource group.

- devgrp-stor-rg

The name of the replication resource group. In this name, devgrp specifies the name of the device group.

- Add a SUNW.HAStoragePlus resource to the replication resource group.

nodeA# clresource create -g devgrp-stor-rg -t SUNW.HAStoragePlus \
-p GlobalDevicePaths=nfsset \
-p AffinityOn=True \
devgrp-stor

- -g

Specifies the resource group to which the resource is added.

- -p GlobalDevicePaths=

Specifies the device group that Sun StorageTek Availability Suite software relies on.

- -p AffinityOn=True

Specifies that the SUNW.HAStoragePlus resource must perform an affinity switchover for the global devices and cluster file systems defined by -p GlobalDevicePaths=. Therefore, when the replication resource group fails over or is switched over, the associated device group is switched over.

For more information about these extension properties, see the SUNW.HAStoragePlus(5) man page.

- Add a logical hostname resource to the replication resource group.

nodeA# clreslogicalhostname create -g devgrp-stor-rg lhost-reprg-prim

The logical hostname for the replication resource group on the primary cluster is named lhost-reprg-prim.

- Enable the resources, manage the resource group, and bring the resource group online.

nodeA# clresourcegroup online -emM -n nodeA devgrp-stor-rg

- -e

Enables associated resources.

- -M

Manages the resource group.

- -n

Specifies the node on which to bring the resource group online.

- Verify that the resource group is online.

nodeA# clresourcegroup status devgrp-stor-rg

Examine the resource group state field to confirm that the replication resource group is online on nodeA.
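The steps in this procedure can be summarized as a dry-run sketch that prints the command sequence for a given device group, disk set, node list, and logical hostname. Nothing is executed. The function name repl_rg_cmds is hypothetical, and it assumes that the resource group is brought online on the first node in the list, as in this example.

```shell
#!/bin/sh
# Dry-run sketch: print the command sequence that creates a replication
# resource group and brings it online. Nothing is executed.
repl_rg_cmds() {
    # $1: device group label used in the names (devgrp)
    # $2: disk set passed to GlobalDevicePaths (nfsset)
    # $3: comma-separated node list (nodeA,nodeB)
    # $4: logical hostname (lhost-reprg-prim)
    rg=$1-stor-rg
    first_node=${3%%,*}   # assumption: bring the group online on the first node
    printf 'clresourcetype register SUNW.HAStoragePlus\n'
    printf 'clresourcegroup create -n %s %s\n' "$3" "$rg"
    printf 'clresource create -g %s -t SUNW.HAStoragePlus -p GlobalDevicePaths=%s -p AffinityOn=True %s-stor\n' \
        "$rg" "$2" "$1"
    printf 'clreslogicalhostname create -g %s %s\n' "$rg" "$4"
    printf 'clresourcegroup online -emM -n %s %s\n' "$first_node" "$rg"
    printf 'clresourcegroup status %s\n' "$rg"
}

repl_rg_cmds devgrp nfsset nodeA,nodeB lhost-reprg-prim
```

Printing the plan first makes it easy to compare the primary and secondary clusters, where only the node list and the logical hostname are expected to differ.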

Next Steps

Go to How to Create a Replication Resource Group on the Secondary Cluster.

How to Create a Replication Resource Group on the Secondary Cluster

Before You Begin

Complete the procedure How to Create a Replication Resource Group on the Primary Cluster.

Ensure that the /etc/netmasks file has IP-address subnet and netmask entries for all logical hostnames. If necessary, edit the /etc/netmasks file to add any missing entries.

- Access nodeC as the role that provides solaris.cluster.modify, solaris.cluster.admin, and solaris.cluster.read RBAC authorization.

- Register SUNW.HAStoragePlus as a resource type.

nodeC# clresourcetype register SUNW.HAStoragePlus

- Create a replication resource group for the device group.

nodeC# clresourcegroup create -n nodeC devgrp-stor-rg

- create

Creates the resource group.

- -n

Specifies the node list for the resource group.

- devgrp

The name of the device group.

- devgrp-stor-rg

The name of the replication resource group.

- Add a SUNW.HAStoragePlus resource to the replication resource group.

nodeC# clresource create -g devgrp-stor-rg \
-t SUNW.HAStoragePlus \
-p GlobalDevicePaths=nfsset \
-p AffinityOn=True \
devgrp-stor

- create

Creates the resource.

- -t

Specifies the resource type.

- -p GlobalDevicePaths=

Specifies the device group that Sun StorageTek Availability Suite software relies on.

- -p AffinityOn=True

Specifies that the SUNW.HAStoragePlus resource must perform an affinity switchover for the global devices and cluster file systems defined by -p GlobalDevicePaths=. Therefore, when the replication resource group fails over or is switched over, the associated device group is switched over.

- devgrp-stor

The HAStoragePlus resource for the replication resource group.

For more information about these extension properties, see the SUNW.HAStoragePlus(5) man page.

- Add a logical hostname resource to the replication resource group.

nodeC# clreslogicalhostname create -g devgrp-stor-rg lhost-reprg-sec

The logical hostname for the replication resource group on the secondary cluster is named lhost-reprg-sec.

- Enable the resources, manage the resource group, and bring the resource group online.

nodeC# clresourcegroup online -eM -n nodeC devgrp-stor-rg

- online

Brings online.

- -e

Enables associated resources.

- -M

Manages the resource group.

- -n

Specifies the node on which to bring the resource group online.

- Verify that the resource group is online.

nodeC# clresourcegroup status devgrp-stor-rg

Examine the resource group state field to confirm that the replication resource group is online on nodeC.

Next Steps

Go to How to Create an NFS Application Resource Group on the Primary Cluster.

How to Create an NFS Application Resource Group on the Primary Cluster

This procedure describes how application resource groups are created for NFS. This procedure is specific to this application and cannot be used for another type of application.

Before You Begin

Complete the procedure How to Create a Replication Resource Group on the Secondary Cluster.

Ensure that the /etc/netmasks file has IP-address subnet and netmask entries for all logical hostnames. If necessary, edit the /etc/netmasks file to add any missing entries.

- Access nodeA as the role that provides solaris.cluster.modify, solaris.cluster.admin, and solaris.cluster.read RBAC authorization.

- Register SUNW.nfs as a resource type.

nodeA# clresourcetype register SUNW.nfs

- If SUNW.HAStoragePlus has not been registered as a resource type, register it.

nodeA# clresourcetype register SUNW.HAStoragePlus

- Create an application resource group for the NFS service.

nodeA# clresourcegroup create \
-p Pathprefix=/global/etc \
-p Auto_start_on_new_cluster=False \
-p RG_affinities=+++devgrp-stor-rg \
nfs-rg

- Pathprefix=/global/etc

Specifies the directory into which the resources in the group can write administrative files.

- Auto_start_on_new_cluster=False

Specifies that the application resource group is not started automatically.

- RG_affinities=+++devgrp-stor-rg

Specifies the resource group with which the application resource group must be collocated. In this example, the application resource group must be collocated with the replication resource group devgrp-stor-rg.

If the replication resource group is switched over to a new primary node, the application resource group is automatically switched over. However, attempts to switch over the application resource group to a new primary node are blocked because that action breaks the collocation requirement.

- nfs-rg

The name of the application resource group.

- Add a SUNW.HAStoragePlus resource to the application resource group.

nodeA# clresource create -g nfs-rg \
-t SUNW.HAStoragePlus \
-p FileSystemMountPoints=/global/mountpoint \
-p AffinityOn=True \
nfs-dg-rs

- create

Creates the resource.

- -g

Specifies the resource group to which the resource is added.

- -t SUNW.HAStoragePlus

Specifies that the resource is of the type SUNW.HAStoragePlus.

- -p FileSystemMountPoints=/global/mountpoint

Specifies that the mount point for the file system is global.

- -p AffinityOn=True

Specifies that the application resource must perform an affinity switchover for the global devices and cluster file systems defined by -p FileSystemMountPoints. Therefore, when the application resource group fails over or is switched over, the associated device group is switched over.

- nfs-dg-rs

The name of the HAStoragePlus resource for the NFS application.

For more information about these extension properties, see the SUNW.HAStoragePlus(5) man page.

- Add a logical hostname resource to the application resource group.

nodeA# clreslogicalhostname create -g nfs-rg lhost-nfsrg-prim

The logical hostname of the application resource group on the primary cluster is named lhost-nfsrg-prim.

- Create the dfstab.resource-name configuration file and place it in the SUNW.nfs subdirectory under the Pathprefix directory of the containing resource group.

- Create a directory called SUNW.nfs on nodeA.

nodeA# mkdir -p /global/etc/SUNW.nfs

- Create a dfstab.resource-name file on nodeA.

nodeA# touch /global/etc/SUNW.nfs/dfstab.nfs-rs

- Add the following line to the /global/etc/SUNW.nfs/dfstab.nfs-rs file on nodeA.

share -F nfs -o rw -d "HA NFS" /global/mountpoint

- Bring the application resource group online.

nodeA# clresourcegroup online -eM -n nodeA nfs-rg

- online

Brings the resource group online.

- -e

Enables the associated resources.

- -M

Manages the resource group.

- -n

Specifies the node on which to bring the resource group online.

- nfs-rg

The name of the resource group.

- Verify that the application resource group is online.

nodeA# clresourcegroup status

Examine the resource group state field to determine whether the application resource group is online for nodeA and nodeB.

Next Steps

Go to How to Create an NFS Application Resource Group on the Secondary Cluster.

How to Create an NFS Application Resource Group on the Secondary Cluster

Before You Begin

Complete the procedure How to Create an NFS Application Resource Group on the Primary Cluster.

Ensure that the /etc/netmasks file has IP-address subnet and netmask entries for all logical hostnames. If necessary, edit the /etc/netmasks file to add any missing entries.

- Access nodeC as the role that provides solaris.cluster.modify, solaris.cluster.admin, and solaris.cluster.read RBAC authorization.

- Register SUNW.nfs as a resource type.

nodeC# clresourcetype register SUNW.nfs

- If SUNW.HAStoragePlus has not been registered as a resource type, register it.

nodeC# clresourcetype register SUNW.HAStoragePlus

- Create an application resource group for the device group.

nodeC# clresourcegroup create \
-p Pathprefix=/global/etc \
-p Auto_start_on_new_cluster=False \
-p RG_affinities=+++devgrp-stor-rg \
nfs-rg

- create

Creates the resource group.

- -p

Specifies a property of the resource group.

- Pathprefix=/global/etc

Specifies a directory into which the resources in the group can write administrative files.

- Auto_start_on_new_cluster=False

Specifies that the application resource group is not started automatically.

- RG_affinities=+++devgrp-stor-rg

Specifies the resource group where the application resource group must be collocated. In this example, the application resource group must be collocated with the replication resource group devgrp-stor-rg.

If the replication resource group is switched over to a new primary node, the application resource group is automatically switched over. However, attempts to switch over the application resource group to a new primary node are blocked because that breaks the collocation requirement.

- nfs-rg

The name of the application resource group.

- Add a SUNW.HAStoragePlus resource to the application resource group.

nodeC# clresource create -g nfs-rg \
-t SUNW.HAStoragePlus \
-p FileSystemMountPoints=/global/mountpoint \
-p AffinityOn=True \
nfs-dg-rs

- create

Creates the resource.

- -g

Specifies the resource group to which the resource is added.

- -t SUNW.HAStoragePlus

Specifies that the resource is of the type SUNW.HAStoragePlus.

- -p

Specifies a property of the resource.

- FileSystemMountPoints=/global/mountpoint

Specifies that the mount point for the file system is global.

- AffinityOn=True

Specifies that the application resource must perform an affinity switchover for the global devices and cluster file systems defined by -p FileSystemMountPoints=. Therefore, when the application resource group fails over or is switched over, the associated device group is switched over.

- nfs-dg-rs

The name of the HAStoragePlus resource for the NFS application.

- Add a logical hostname resource to the application resource group.

nodeC# clreslogicalhostname create -g nfs-rg \
lhost-nfsrg-sec

The logical hostname of the application resource group on the secondary cluster is named lhost-nfsrg-sec.

- Add an NFS resource to the application resource group.

nodeC# clresource create -g nfs-rg \
-t SUNW.nfs -p Resource_dependencies=nfs-dg-rs nfs-rs

- If the global volume is mounted on the primary cluster, unmount the global volume from the secondary cluster.

nodeC# umount /global/mountpoint

If the volume is mounted on a secondary cluster, the synchronization fails.
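The unmount in the last step can be guarded so that it runs only when the file system is actually mounted. The following is a minimal sketch and not part of the original procedure: is_mounted is a hypothetical helper, and it assumes that each line of mount output begins with the mount point, as on Oracle Solaris. The sample line is illustrative only.

```shell
#!/bin/sh
# is_mounted MOUNTPOINT
# Reads mount-style lines on standard input and succeeds when MOUNTPOINT
# is the first field of any line. Hypothetical helper; assumes the
# Solaris layout "mount_point on resource options".
is_mounted() {
    while read -r mp rest; do
        if [ "$mp" = "$1" ]; then
            return 0
        fi
    done
    return 1
}

# Illustrative input only; on nodeC the input would come from "mount".
sample='/global/mountpoint on /dev/md/nfsset/dsk/d200 read/write'
if printf '%s\n' "$sample" | is_mounted /global/mountpoint; then
    echo "/global/mountpoint is mounted"
fi
```

On nodeC, the guard could then be written as: mount | is_mounted /global/mountpoint && umount /global/mountpoint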

Next Steps

Go to Example of How to Enable Data Replication.

Example of How to Enable Data Replication

This section describes how data replication is enabled for the example configuration. This section uses the Sun StorageTek Availability Suite software commands sndradm and iiadm. For more information about these commands, see the Sun StorageTek Availability Suite documentation.

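This section contains the following procedures:

- How to Enable Replication on the Primary Cluster

- How to Enable Replication on the Secondary Cluster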

How to Enable Replication on the Primary Cluster

- Access nodeA as the role that provides solaris.cluster.read RBAC authorization.

- Flush all transactions.

nodeA# lockfs -a -f

- Confirm that the logical host names lhost-reprg-prim and lhost-reprg-sec are online.

nodeA# clresourcegroup status
nodeC# clresourcegroup status

Examine the state field of the resource group.

- Enable remote mirror replication from the primary cluster to the secondary cluster.

This step enables replication from the master volume (d200) on the primary cluster to the master volume (d200) on the secondary cluster. It also enables replication to the remote mirror bitmap on d203.

If the primary cluster and secondary cluster are unsynchronized, run this command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -e lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

If the primary cluster and secondary cluster are synchronized, run this command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -E lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

- Enable autosynchronization.

Run this command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -a on lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

This step enables autosynchronization. When the active state of autosynchronization is set to on, the volume sets are resynchronized if the system reboots or a failure occurs.

- Verify that the cluster is in logging mode.

Use the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -P

The output should resemble the following:

/dev/md/nfsset/rdsk/d200 -> lhost-reprg-sec:/dev/md/nfsset/rdsk/d200 autosync: off, max q writes:4194304, max q fbas:16384, mode:sync,ctag: devgrp, state: logging

In logging mode, the state is logging, and the active state of autosynchronization is off. When the data volume on the disk is written to, the bitmap file on the same disk is updated.

- Enable point-in-time snapshot.

Use the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/iiadm -e ind \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d201 \
/dev/md/nfsset/rdsk/d202
nodeA# /usr/sbin/iiadm -w \
/dev/md/nfsset/rdsk/d201

This step enables the master volume on the primary cluster to be copied to the shadow volume on the same cluster. The master volume, shadow volume, and point-in-time bitmap volume must be in the same device group. In this example, the master volume is d200, the shadow volume is d201, and the point-in-time bitmap volume is d202.

- Attach the point-in-time snapshot to the remote mirror set.

Use the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -I a \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d201 \
/dev/md/nfsset/rdsk/d202

This step associates the point-in-time snapshot with the remote mirror volume set. Sun StorageTek Availability Suite software ensures that a point-in-time snapshot is taken before remote mirror replication can occur.
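Every sndradm command in this example repeats the same volume set arguments. The following sketch shows one way to define the set once and reuse it. The helper names rm_set and sndr_cmd and the variable names are hypothetical, not part of Availability Suite. The helper only prints each command so that it can be reviewed before it is run.

```shell
#!/bin/sh
# Sketch: keep the remote mirror volume set in one place so that every
# sndradm invocation uses identical arguments.
# rm_set, sndr_cmd, and the variable names are hypothetical.

PRIM_HOST=lhost-reprg-prim
SEC_HOST=lhost-reprg-sec
MASTER=/dev/md/nfsset/rdsk/d200      # master volume, same path on both clusters
RM_BITMAP=/dev/md/nfsset/rdsk/d203   # remote mirror bitmap volume

# Print the volume set arguments as they appear in this example.
rm_set() {
    echo "$PRIM_HOST $MASTER $RM_BITMAP $SEC_HOST $MASTER $RM_BITMAP ip sync"
}

# Print (do not run) an sndradm command for the given option string.
sndr_cmd() {
    echo "/usr/sbin/sndradm -n $1 $(rm_set)"
}

sndr_cmd -e        # enable replication when the clusters are unsynchronized
sndr_cmd "-a on"   # enable autosynchronization
```

Printing rather than executing keeps the sketch safe to try on any host. The printed lines match the commands in the steps above, joined onto one line.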

Next Steps

Go to How to Enable Replication on the Secondary Cluster.

How to Enable Replication on the Secondary Cluster

Before You Begin

Complete the procedure How to Enable Replication on the Primary Cluster.

- Access nodeC as the root role.

- Flush all transactions.

nodeC# lockfs -a -f

- Enable remote mirror replication from the primary cluster to the secondary cluster.

Use the following command for Sun StorageTek Availability Suite software:

nodeC# /usr/sbin/sndradm -n -e lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

The primary cluster detects the presence of the secondary cluster and starts synchronization. For information about the status of the clusters, refer to the Sun StorageTek Availability Suite system log under /var/adm.

- Enable independent point-in-time snapshot.

Use the following command for Sun StorageTek Availability Suite software:

nodeC# /usr/sbin/iiadm -e ind \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d201 \
/dev/md/nfsset/rdsk/d202
nodeC# /usr/sbin/iiadm -w \
/dev/md/nfsset/rdsk/d201

- Attach the point-in-time snapshot to the remote mirror set.

Use the following command for Sun StorageTek Availability Suite software:

nodeC# /usr/sbin/sndradm -I a \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d201 \
/dev/md/nfsset/rdsk/d202

Next Steps

Go to Example of How to Perform Data Replication.

Example of How to Perform Data Replication

This section describes how data replication is performed for the example configuration. This section uses the Sun StorageTek Availability Suite software commands sndradm and iiadm. For more information about these commands, see the Sun StorageTek Availability Suite documentation.

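This section contains the following procedures:

- How to Perform a Remote Mirror Replication

- How to Perform a Point-in-Time Snapshot

- How to Verify That Replication Is Configured Correctly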

How to Perform a Remote Mirror Replication

In this procedure, the master volume of the primary disk is replicated to the master volume on the secondary disk. The master volume is d200 and the remote mirror bitmap volume is d203.

- Access nodeA as the root role.

- Verify that the cluster is in logging mode.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -P

The output should resemble the following:

/dev/md/nfsset/rdsk/d200 -> lhost-reprg-sec:/dev/md/nfsset/rdsk/d200 autosync: off, max q writes:4194304, max q fbas:16384, mode:sync,ctag: devgrp, state: logging

In logging mode, the state is logging, and the active state of autosynchronization is off. When the data volume on the disk is written to, the bitmap file on the same disk is updated.

- Flush all transactions.

nodeA# lockfs -a -f

- Repeat Step 1 through Step 3 on nodeC.

- Copy the master volume of nodeA to the master volume of nodeC.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -m lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

- Wait until the replication is complete and the volumes are synchronized.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -w lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

- Confirm that the cluster is in replicating mode.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -P

The output should resemble the following:

/dev/md/nfsset/rdsk/d200 -> lhost-reprg-sec:/dev/md/nfsset/rdsk/d200 autosync: on, max q writes:4194304, max q fbas:16384, mode:sync,ctag: devgrp, state: replicating

In replicating mode, the state is replicating, and the active state of autosynchronization is on. When the primary volume is written to, the secondary volume is updated by Sun StorageTek Availability Suite software.
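The mode checks in this procedure compare two fields of the sndradm -P output: state and autosync. The following sketch shows how those fields might be extracted in a script. The helper names sndr_field and check_mode are hypothetical, and the parsing assumes that the output resembles the sample shown in this procedure.

```shell
#!/bin/sh
# sndr_field NAME
# Prints the word that follows "NAME:" in sndradm -P style text read from
# standard input. Hypothetical helper.
sndr_field() {
    sed -n "s/.*$1: *\([A-Za-z]*\).*/\1/p"
}

# check_mode STATE AUTOSYNC
# Succeeds when the text on standard input reports the expected state and
# autosynchronization setting.
check_mode() {
    out=$(cat)
    state=$(printf '%s\n' "$out" | sndr_field state)
    auto=$(printf '%s\n' "$out" | sndr_field autosync)
    [ "$state" = "$1" ] && [ "$auto" = "$2" ]
}

# Sample text copied from this procedure, on one line.
sample='/dev/md/nfsset/rdsk/d200 -> lhost-reprg-sec:/dev/md/nfsset/rdsk/d200 autosync: on, max q writes:4194304, max q fbas:16384, mode:sync,ctag: devgrp, state: replicating'

if printf '%s\n' "$sample" | check_mode replicating on; then
    echo "replicating with autosynchronization on"
fi
```

On nodeA, the same check could be applied to live output with: /usr/sbin/sndradm -P | check_mode replicating on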

Next Steps

Go to How to Perform a Point-in-Time Snapshot.

How to Perform a Point-in-Time Snapshot

In this procedure, a point-in-time snapshot is used to synchronize the shadow volume of the primary cluster to the master volume of the primary cluster. The master volume is d200, the remote mirror bitmap volume is d203, and the shadow volume is d201.

Before You Begin

Complete the procedure How to Perform a Remote Mirror Replication.

- Access nodeA as the role that provides solaris.cluster.modify and solaris.cluster.admin RBAC authorization.

- Disable the resource that is running on nodeA.

nodeA# clresource disable nfs-rs

- Change the primary cluster to logging mode.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -l lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

When the data volume on the disk is written to, the bitmap file on the same disk is updated. No replication occurs.

- Synchronize the shadow volume of the primary cluster to the master volume of the primary cluster.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/iiadm -u s /dev/md/nfsset/rdsk/d201
nodeA# /usr/sbin/iiadm -w /dev/md/nfsset/rdsk/d201

- Synchronize the shadow volume of the secondary cluster to the master volume of the secondary cluster.

Run the following command for Sun StorageTek Availability Suite software:

nodeC# /usr/sbin/iiadm -u s /dev/md/nfsset/rdsk/d201
nodeC# /usr/sbin/iiadm -w /dev/md/nfsset/rdsk/d201

- Restart the application on nodeA.

nodeA# clresource enable nfs-rs

- Resynchronize the secondary volume with the primary volume.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -u lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync
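The order of the nodeA commands in this procedure matters: the application is stopped and the set is placed in logging mode before the shadow volume is updated, and replication resumes only afterward. The following sketch shows that ordering with a dry-run wrapper. The names run, snapshot_begin, snapshot_finish, and DRY_RUN are hypothetical. The nodeC step is not scripted here because it runs on a different node.

```shell
#!/bin/sh
# Sketch of the nodeA side of the point-in-time snapshot procedure.
# With DRY_RUN=1 (the default here) each command is printed, not executed.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Remote mirror volume set and shadow volume from this example.
RM_SET="lhost-reprg-prim /dev/md/nfsset/rdsk/d200 /dev/md/nfsset/rdsk/d203 lhost-reprg-sec /dev/md/nfsset/rdsk/d200 /dev/md/nfsset/rdsk/d203 ip sync"
SHADOW=/dev/md/nfsset/rdsk/d201

# Stop the application, switch to logging mode, update the shadow volume,
# and wait for the update to finish. $RM_SET is left unquoted on purpose
# so that it splits into separate arguments.
snapshot_begin() {
    run clresource disable nfs-rs &&
    run /usr/sbin/sndradm -n -l $RM_SET &&
    run /usr/sbin/iiadm -u s "$SHADOW" &&
    run /usr/sbin/iiadm -w "$SHADOW"
}

# Restart the application and resynchronize the secondary volume.
snapshot_finish() {
    run clresource enable nfs-rs &&
    run /usr/sbin/sndradm -n -u $RM_SET
}

snapshot_begin
echo "(update the shadow volume on nodeC here, then continue)"
snapshot_finish
```

Chaining the commands with && stops the sequence at the first failure when the commands are actually executed, which avoids resuming replication after a failed snapshot update.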

Next Steps

Go to How to Verify That Replication Is Configured Correctly.

How to Verify That Replication Is Configured Correctly

Before You Begin

Complete the procedure How to Perform a Point-in-Time Snapshot.

- Access nodeA and nodeC as the role that provides solaris.cluster.admin RBAC authorization.

- Verify that the primary cluster is in replicating mode, with autosynchronization on.

Use the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -P

The output should resemble the following:

/dev/md/nfsset/rdsk/d200 -> lhost-reprg-sec:/dev/md/nfsset/rdsk/d200 autosync: on, max q writes:4194304, max q fbas:16384, mode:sync,ctag: devgrp, state: replicating

In replicating mode, the state is replicating, and the active state of autosynchronization is on. When the primary volume is written to, the secondary volume is updated by Sun StorageTek Availability Suite software.

- If the primary cluster is not in replicating mode, put it into replicating mode.

Use the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -u lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

- Create a directory on a client machine.

- Mount the primary volume on the application directory and display the mounted directory.

- Unmount the primary volume from the application directory.

client-machine# umount /dir

- Take the application resource group offline on the primary cluster.

nodeA# clresource disable -g nfs-rg +
nodeA# clresourcegroup offline nfs-rg

- Change the primary cluster to logging mode.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -l lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

When the data volume on the disk is written to, the bitmap file on the same disk is updated. No replication occurs.

- Ensure that the PathPrefix directory is available.

nodeC# mount | grep /global/etc

- Confirm that the file system is fit to be mounted on the secondary cluster.

nodeC# fsck -y /dev/md/nfsset/rdsk/d200

- Bring the application into a managed state, and bring it online on the secondary cluster.

nodeC# clresourcegroup online -eM nodeC nfs-rg

- Access the client machine as the root role.

You see a prompt that resembles the following:

client-machine#

- Mount the application directory that was created in Step 4 to the application directory on the secondary volume.

client-machine# mount -o rw lhost-nfsrg-sec:/global/mountpoint /dir

- Display the mounted directory.

client-machine# ls /dir

- Ensure that the directory displayed in Step 5 is the same as the directory displayed in Step 6.

- Return the application on the primary volume to the mounted application directory.

- Take the application resource group offline on the secondary volume.

nodeC# clresource disable -g nfs-rg +
nodeC# clresourcegroup offline nfs-rg

- Ensure that the global volume is unmounted from the secondary volume.

nodeC# umount /global/mountpoint

- Bring the application resource group into a managed state, and bring it online on the primary cluster.

nodeA# clresourcegroup online -eM nodeA nfs-rg

- Change the primary volume to replicating mode.

Run the following command for Sun StorageTek Availability Suite software:

nodeA# /usr/sbin/sndradm -n -u lhost-reprg-prim \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 lhost-reprg-sec \
/dev/md/nfsset/rdsk/d200 \
/dev/md/nfsset/rdsk/d203 ip sync

When the primary volume is written to, the secondary volume is updated by Sun StorageTek Availability Suite software.
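This procedure asks you to confirm that the directory listing seen through the primary cluster matches the listing seen through the secondary cluster. Because the same client directory is mounted at two different times, one approach is to save each listing to a file and compare the files afterward. The following sketch illustrates that idea. The helper names save_listing and listings_match and the scratch paths are hypothetical.

```shell
#!/bin/sh
# save_listing DIR FILE: record the names in DIR, one per line, in FILE.
save_listing() {
    ls "$1" > "$2"
}

# listings_match FILE_A FILE_B: succeed when the saved listings are identical.
listings_match() {
    cmp -s "$1" "$2"
}

# Self-contained demonstration in a scratch directory. On the client
# machine, the first listing would be saved while /dir is mounted from
# lhost-nfsrg-prim and the second while it is mounted from lhost-nfsrg-sec.
scratch=$(mktemp -d)
mkdir "$scratch/dir"
touch "$scratch/dir/data1" "$scratch/dir/data2"
save_listing "$scratch/dir" "$scratch/primary.lst"
save_listing "$scratch/dir" "$scratch/secondary.lst"
if listings_match "$scratch/primary.lst" "$scratch/secondary.lst"; then
    echo "listings match"
else
    echo "listings differ"
fi
rm -rf "$scratch"
```

Comparing names alone confirms only that the same files are visible. Comparing file contents would need an additional check.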

See also

Example of How to Manage a Takeover

Example of How to Manage a Takeover

This section describes how to update the DNS entries. For additional information, see Guidelines for Managing a Takeover.

This section contains the following procedure:

How to Update the DNS Entry

For an illustration of how DNS maps a client to a cluster, see Figure A–6.

- Start the nsupdate command.

For more information, see the nsupdate(1M) man page.

- Remove the current DNS mapping between the logical hostname of the application resource group and the cluster IP address for both clusters.

> update delete lhost-nfsrg-prim A
> update delete lhost-nfsrg-sec A
> update delete ipaddress1rev.in-addr.arpa ttl PTR lhost-nfsrg-prim
> update delete ipaddress2rev.in-addr.arpa ttl PTR lhost-nfsrg-sec

- ipaddress1rev

The IP address of the primary cluster, in reverse order.

- ipaddress2rev

The IP address of the secondary cluster, in reverse order.

- ttl

The time to live, in seconds. A typical value is 3600.

- Create a new DNS mapping between the logical hostname of the application resource group and the cluster IP address, for both clusters.

Map the primary logical hostname to the IP address of the secondary cluster and map the secondary logical hostname to the IP address of the primary cluster.

> update add lhost-nfsrg-prim ttl A ipaddress2fwd
> update add lhost-nfsrg-sec ttl A ipaddress1fwd
> update add ipaddress2rev.in-addr.arpa ttl PTR lhost-nfsrg-prim
> update add ipaddress1rev.in-addr.arpa ttl PTR lhost-nfsrg-sec

- ipaddress2fwd

The IP address of the secondary cluster, in forward order.

- ipaddress1fwd

The IP address of the primary cluster, in forward order.
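The reverse-order addresses used in the PTR records can be derived from the forward addresses. The following sketch does so for IPv4 and prints the update lines from this procedure with the placeholders filled in. The helper names reverse_ip and swap_updates are hypothetical, and the addresses 192.0.2.10 and 198.51.100.20 are documentation-range stand-ins for the real cluster addresses.

```shell
#!/bin/sh
# reverse_ip A.B.C.D: print the IPv4 address with its octets reversed.
reverse_ip() {
    echo "$1" | awk -F. '{ print $4 "." $3 "." $2 "." $1 }'
}

# swap_updates PRIMARY_IP SECONDARY_IP TTL
# Print the delete and add lines from this procedure, mapping each
# logical hostname to the other cluster's address.
swap_updates() {
    rev1=$(reverse_ip "$1")
    rev2=$(reverse_ip "$2")
    cat <<EOF
update delete lhost-nfsrg-prim A
update delete lhost-nfsrg-sec A
update delete ${rev1}.in-addr.arpa $3 PTR lhost-nfsrg-prim
update delete ${rev2}.in-addr.arpa $3 PTR lhost-nfsrg-sec
update add lhost-nfsrg-prim $3 A $2
update add lhost-nfsrg-sec $3 A $1
update add ${rev2}.in-addr.arpa $3 PTR lhost-nfsrg-prim
update add ${rev1}.in-addr.arpa $3 PTR lhost-nfsrg-sec
EOF
}

# Hypothetical addresses and the typical TTL mentioned above.
swap_updates 192.0.2.10 198.51.100.20 3600
```

The output is intended for review before it is typed or pasted at the nsupdate prompt. The sketch does not contact a DNS server.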