5 Using Oracle XQuery for Hadoop

This chapter explains how to use Oracle XQuery for Hadoop to extract and transform large volumes of semistructured data.

5.1 What Is Oracle XQuery for Hadoop?

Oracle XQuery for Hadoop is a transformation engine for semistructured big data. Oracle XQuery for Hadoop runs transformations expressed in the XQuery language by translating them into a series of MapReduce jobs, which are executed in parallel on an Apache Hadoop cluster. You can focus on data movement and transformation logic, instead of the complexities of Java and MapReduce, without sacrificing scalability or performance.

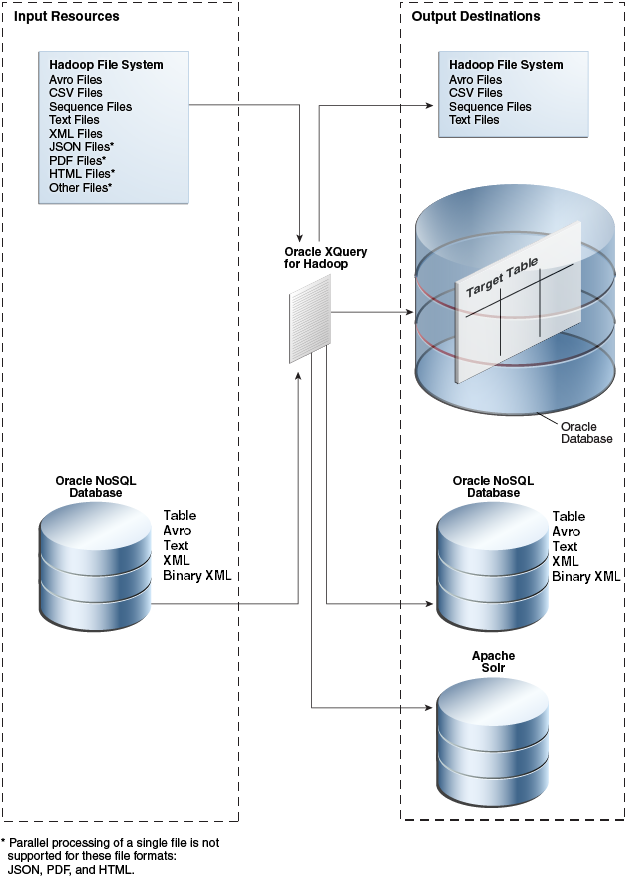

The input data can be located in a file system accessible through the Hadoop File System API, such as the Hadoop Distributed File System (HDFS), or stored in Oracle NoSQL Database. Oracle XQuery for Hadoop can write the transformation results to Hadoop files, Oracle NoSQL Database, or Oracle Database.

Oracle XQuery for Hadoop also provides extensions to Apache Hive to support massive XML files.

Oracle XQuery for Hadoop is based on mature industry standards including XPath, XQuery, and XQuery Update Facility. It is fully integrated with other Oracle products, which enables Oracle XQuery for Hadoop to:

- Load data efficiently into Oracle Database using Oracle Loader for Hadoop.

- Provide read and write support to Oracle NoSQL Database.

The following figure provides an overview of the data flow using Oracle XQuery for Hadoop.

Figure 5-1 Oracle XQuery for Hadoop Data Flow


5.2 Getting Started With Oracle XQuery for Hadoop

Oracle XQuery for Hadoop is designed for use by XQuery developers. If you are already familiar with XQuery, then you are ready to begin. However, if you are new to XQuery, then you must first acquire the basics of the language. This guide does not attempt to cover this information.

See Also:

- XQuery Tutorial at http://www.w3schools.com/xml/xquery_intro.asp

- XQuery 3.1: An XML Query Language at https://www.w3.org/TR/xquery-31/

5.2.2 Example: Hello World!

Follow these steps to create and run a simple query using Oracle XQuery for Hadoop:

- Create a text file named hello.txt in the current directory that contains the line Hello.

$ echo "Hello" > hello.txt

- Copy the file to HDFS:

$ hdfs dfs -copyFromLocal hello.txt

- Create a query file named hello.xq in the current directory with the following content:

import module "oxh:text";

for $line in text:collection("hello.txt")

return text:put($line || " World!")

- Run the query:

$ hadoop jar $OXH_HOME/lib/oxh.jar hello.xq -output ./myout -print

13/11/21 02:41:57 INFO hadoop.xquery: OXH: Oracle XQuery for Hadoop 4.2.0 ((build 4.2.0-cdh5.0.0-mr1 @mr2). Copyright (c) 2014, Oracle. All rights reserved.

13/11/21 02:42:01 INFO hadoop.xquery: Submitting map-reduce job "oxh:hello.xq#0" id="3593921f-c50c-4bb8-88c0-6b63b439572b.0", inputs=[hdfs://bigdatalite.localdomain:8020/user/oracle/hello.txt], output=myout

. . .

- Check the output file:

$ hdfs dfs -cat ./myout/part-m-00000

Hello World!

5.3 About the Oracle XQuery for Hadoop Functions

Oracle XQuery for Hadoop reads from and writes to big data sets using collection and put functions:

- A collection function reads data from Hadoop files or Oracle NoSQL Database as a collection of items. A Hadoop file is one that is accessible through the Hadoop File System API. On Oracle Big Data Appliance and most Hadoop clusters, this file system is Hadoop Distributed File System (HDFS).

- A put function adds a single item to a data set stored in Oracle Database, Oracle NoSQL Database, or a Hadoop file.

The following is a simple example of an Oracle XQuery for Hadoop query that reads items from one source and writes to another:

for $x in collection(...) return put($x)

Oracle XQuery for Hadoop comes with a set of adapters that you can use to define put and collection functions for specific formats and sources. Each adapter has two components:

- A set of built-in put and collection functions that are predefined for your convenience.

- A set of XQuery function annotations that you can use to define custom put and collection functions.

Other commonly used functions are also included in Oracle XQuery for Hadoop.

5.3.1 About the Adapters

Following are brief descriptions of the Oracle XQuery for Hadoop adapters.

- Avro File Adapter

-

The Avro file adapter provides access to Avro container files stored in HDFS. It includes collection and put functions for reading from and writing to Avro container files.

- JSON File Adapter

-

The JSON file adapter provides access to JSON files stored in HDFS. It contains a collection function for reading JSON files, and a group of helper functions for parsing JSON data directly. You must use another adapter to write the output.

- Oracle Database Adapter

-

The Oracle Database adapter loads data into Oracle Database. This adapter supports a custom put function for direct output to a table in an Oracle database using JDBC or OCI. If a live connection to the database is not available, the adapter also supports output to Data Pump or delimited text files in HDFS; the files can be loaded into the Oracle database with a different utility, such as SQL*Loader, or using external tables. This adapter does not move data out of the database, and therefore does not have collection or get functions.

See "Software Requirements" for the supported versions of Oracle Database.

- Oracle NoSQL Database Adapter

-

The Oracle NoSQL Database adapter provides access to data stored in Oracle NoSQL Database. The data can be read from or written as Table, Avro, XML, binary XML, or text. This adapter includes collection, get, and put functions.

- Sequence File Adapter

-

The sequence file adapter provides access to Hadoop sequence files. A sequence file is a Hadoop format composed of key-value pairs.

This adapter includes collection and put functions for reading from and writing to HDFS sequence files that contain text, XML, or binary XML.

- Solr Adapter

-

The Solr adapter provides functions to create full-text indexes and load them into Apache Solr servers.

- Text File Adapter

-

The text file adapter provides access to text files, such as CSV files. It contains collection and put functions for reading from and writing to text files.

The JSON file adapter extends the support for JSON objects stored in text files.

- XML File Adapter

-

The XML file adapter provides access to XML files stored in HDFS. It contains collection functions for reading large XML files. You must use another adapter to write the output.

5.3.2 About Other Modules for Use With Oracle XQuery for Hadoop

You can use functions from these additional modules in your queries:

- Standard XQuery Functions

-

The standard XQuery math functions are available.

- Hadoop Functions

-

The Hadoop module is a group of functions that are specific to Hadoop.

- Duration, Date, and Time Functions

-

This group of functions parses duration, date, and time values.

- String-Processing Functions

-

These functions add and remove white space that surrounds data values.

5.4 Creating an XQuery Transformation

This section describes how to create XQuery transformations using Oracle XQuery for Hadoop.

5.4.1 XQuery Transformation Requirements

You create a transformation for Oracle XQuery for Hadoop the same way as any other XQuery transformation, except that you must comply with these additional requirements:

- The main XQuery expression (the query body) must be in one of the following forms:

FLWOR1

or

(FLWOR1, FLWOR2,... , FLWORN)

In this syntax, FLWOR is a top-level XQuery "For, Let, Where, Order by, Return" expression.

- Each top-level FLWOR expression must have a for clause that iterates over an Oracle XQuery for Hadoop collection function. This for clause cannot have a positional variable.

See Oracle XQuery for Hadoop Reference for the collection functions.

- Each top-level FLWOR expression can have optional let, where, and group by clauses. Other types of clauses are invalid, such as order by, count, and window clauses.

- Each top-level FLWOR expression must return one or more results from calling an Oracle XQuery for Hadoop put function. See Oracle XQuery for Hadoop Reference for the put functions.

- The query body must be an updating expression. Because all put functions are classified as updating functions, all Oracle XQuery for Hadoop queries are updating queries.

In Oracle XQuery for Hadoop, a %*:put annotation indicates that the function is updating. The %updating annotation or updating keyword is not required with it.

See Also:

- "FLWOR Expressions" in XQuery 3.1: An XML Query Language at https://www.w3.org/TR/xquery-31/#id-flwor-expressions

- For a description of updating expressions, "Extensions to XQuery 1.0" in W3C XQuery Update Facility 1.0 at http://www.w3.org/TR/xquery-update-10/#dt-updating-expression

5.4.2 About XQuery Language Support

Oracle XQuery for Hadoop supports W3C XQuery 3.1, except for the following:

- FLWOR window clause

- FLWOR count clause

- namespace constructors

- fn:parse-ietf-date

- fn:transform

- higher order XQuery functions

For the language, see W3C XQuery 3.1: An XML Query Language at https://www.w3.org/TR/xquery-31/.

For the functions, see W3C XPath and XQuery Functions and Operators at https://www.w3.org/TR/xpath-functions-31/.

5.4.3 Accessing Data in the Hadoop Distributed Cache

You can use the Hadoop distributed cache facility to access auxiliary job data. This mechanism can be useful in a join query when one side is a relatively small file. The query might execute faster if the smaller file is accessed from the distributed cache.

To place a file into the distributed cache, use the -files Hadoop command line option when calling Oracle XQuery for Hadoop. For a query to read a file from the distributed cache, it must call the fn:doc function for XML, and either fn:unparsed-text or fn:unparsed-text-lines for text files. See Example 5-7.

5.4.4 Calling Custom Java Functions from XQuery

Oracle XQuery for Hadoop is extensible with custom external functions implemented in the Java language. A Java implementation must be a static method with the parameter and return types as defined by the XQuery API for Java (XQJ) specification.

A custom Java function binding is defined in Oracle XQuery for Hadoop by annotating an external function definition with the %ora-java:binding annotation. This annotation has the following syntax:

%ora-java:binding("java.class.name[#method]")

- java.class.name

-

The fully qualified name of a Java class that contains the implementation method.

- method

-

A Java method name. It defaults to the XQuery function name. Optional.

See Example 5-8 for an example of %ora-java:binding.

All JAR files that contain custom Java functions must be listed in the -libjars command line option. For example:

hadoop jar $OXH_HOME/lib/oxh.jar -libjars myfunctions.jar query.xq

5.4.5 Accessing User-Defined XQuery Library Modules and XML Schemas

Oracle XQuery for Hadoop supports user-defined XQuery library modules and XML schemas when you comply with these criteria:

- Locate the library module or XML schema file in the same directory where the main query resides on the client calling Oracle XQuery for Hadoop.

- Import the library module or XML schema from the main query using the location URI parameter of the import module or import schema statement.

- Specify the library module or XML schema file in the -files command line option when calling Oracle XQuery for Hadoop.

For an example of using user-defined XQuery library modules and XML schemas, see Example 5-9.

See Also:

"Location URIs" in XQuery 3.1: An XML Query Language at https://www.w3.org/TR/xquery-31/

5.4.6 XQuery Transformation Examples

For these examples, the following text files are in HDFS. The files contain a log of visits to different web pages. Each line represents a visit to a web page and contains the time, user name, page visited, and the status code.

mydata/visits1.log

2013-10-28T06:00:00, john, index.html, 200

2013-10-28T08:30:02, kelly, index.html, 200

2013-10-28T08:32:50, kelly, about.html, 200

2013-10-30T10:00:10, mike, index.html, 401

mydata/visits2.log

2013-10-30T10:00:01, john, index.html, 200

2013-10-30T10:05:20, john, about.html, 200

2013-11-01T08:00:08, laura, index.html, 200

2013-11-04T06:12:51, kelly, index.html, 200

2013-11-04T06:12:40, kelly, contact.html, 200

Example 5-1 Basic Filtering

This query selects the page visits made by user kelly and writes those lines into a text file:

import module "oxh:text";

for $line in text:collection("mydata/visits*.log")

let $split := fn:tokenize($line, "\s*,\s*")

where $split[2] eq "kelly"

return text:put($line)

The query creates text files in the output directory that contain the following lines:

2013-11-04T06:12:51, kelly, index.html, 200

2013-11-04T06:12:40, kelly, contact.html, 200

2013-10-28T08:30:02, kelly, index.html, 200

2013-10-28T08:32:50, kelly, about.html, 200
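For readers who are new to XQuery, the same filter can be mirrored in plain Python. This is only an illustrative sketch of the query's logic, not Oracle XQuery for Hadoop code: the in-memory `visits` list and the `filter_by_user` helper are assumptions made for the example, whereas the real query reads its lines from HDFS.

```python
import re

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()


def filter_by_user(lines, user):
    """Keep the lines whose second comma-separated field equals user."""
    kept = []
    for line in lines:
        # Same pattern as fn:tokenize($line, "\s*,\s*") in the query.
        split = re.split(r"\s*,\s*", line)
        # XQuery sequences are 1-based, so $split[2] is split[1] here.
        if split[1] == user:
            kept.append(line)
    return kept


kelly_lines = filter_by_user(visits, "kelly")
```

The order of the kept lines follows the input list here; in a distributed run the order across output files depends on how the work is split among tasks.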

Example 5-2 Group By and Aggregation

The next query computes the number of page visits per day:

import module "oxh:text";

for $line in text:collection("mydata/visits*.log")

let $split := fn:tokenize($line, "\s*,\s*")

let $time := xs:dateTime($split[1])

let $day := xs:date($time)

group by $day

return text:put($day || " => " || fn:count($line))

The query creates text files that contain the following lines:

2013-10-28 => 3

2013-10-30 => 3

2013-11-01 => 1

2013-11-04 => 2
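The grouping step can be checked by hand with a small Python sketch. It is an analogy for the query's logic rather than Oracle XQuery for Hadoop code; the `visits` list and the `visits_per_day` helper are hypothetical names introduced for the example.

```python
import re
from collections import Counter
from datetime import datetime

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()


def visits_per_day(lines):
    """Count lines per calendar day, like 'group by $day' with fn:count."""
    counts = Counter()
    for line in lines:
        split = re.split(r"\s*,\s*", line)
        # Parse the timestamp, then truncate it to a date (xs:date($time)).
        day = datetime.fromisoformat(split[0]).date()
        counts[day.isoformat()] += 1
    return dict(counts)


per_day = visits_per_day(visits)
```

Each key of `per_day` corresponds to one line of the query output, with the count as the value.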

Example 5-3 Inner Joins

This example queries the following text file in HDFS, in addition to the other files. The file contains user profile information such as user ID, full name, and age, separated by colons (:).

mydata/users.txt

john:John Doe:45

kelly:Kelly Johnson:32

laura:Laura Smith:

phil:Phil Johnson:27

The following query performs a join between users.txt and the log files. It computes how many times users older than 30 visited each page.

import module "oxh:text";

for $userLine in text:collection("mydata/users.txt")

let $userSplit := fn:tokenize($userLine, "\s*:\s*")

let $userId := $userSplit[1]

let $userAge := xs:integer($userSplit[3][. castable as xs:integer])

for $visitLine in text:collection("mydata/visits*.log")

let $visitSplit := fn:tokenize($visitLine, "\s*,\s*")

let $visitUserId := $visitSplit[2]

where $userId eq $visitUserId and $userAge gt 30

group by $page := $visitSplit[3]

return text:put($page || " " || fn:count($userLine))

The query creates text files that contain the following lines:

about.html 2

contact.html 1

index.html 4
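The join and aggregation above can be traced with a plain Python sketch. It is not Oracle XQuery for Hadoop code: the `users` and `visits` lists and the `page_counts_for_users_over` helper are assumptions for illustration, and a set lookup stands in for the equijoin, which is equivalent here only because each user ID appears once in users.txt.

```python
import re
from collections import Counter

# Sample lines copied from mydata/users.txt.
users = """
john:John Doe:45
kelly:Kelly Johnson:32
laura:Laura Smith:
phil:Phil Johnson:27
""".strip().splitlines()

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()


def page_counts_for_users_over(age_limit):
    """Count visits per page, restricted to users older than age_limit."""
    older = set()
    for line in users:
        user_id, _name, age = re.split(r"\s*:\s*", line)
        # Like the 'castable as xs:integer' guard: skip missing ages.
        if age.isdigit() and int(age) > age_limit:
            older.add(user_id)

    counts = Counter()
    for line in visits:
        _time, user_id, page, _code = re.split(r"\s*,\s*", line)
        if user_id in older:  # the equijoin condition on user ID
            counts[page] += 1
    return dict(counts)


page_counts = page_counts_for_users_over(30)
```

Laura is excluded because her age field is empty, and mike is excluded because he has no entry in users.txt.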

The next query computes the number of visits for each user who visited any page; it omits users who never visited any page.

import module "oxh:text";

for $userLine in text:collection("mydata/users.txt")

let $userSplit := fn:tokenize($userLine, "\s*:\s*")

let $userId := $userSplit[1]

for $visitLine in text:collection("mydata/visits*.log")

[$userId eq fn:tokenize(., "\s*,\s*")[2]]

group by $userId

return text:put($userId || " " || fn:count($visitLine))

The query creates text files that contain the following lines:

john 3

kelly 4

laura 1

Note:

When the results of two collection functions are joined, only equijoins are supported. If one or both sources are not from a collection function, then any join condition is allowed.

Example 5-4 Left Outer Joins

This example is similar to the second query in Example 5-3, but also counts users who did not visit any page.

import module "oxh:text";

for $userLine in text:collection("mydata/users.txt")

let $userSplit := fn:tokenize($userLine, "\s*:\s*")

let $userId := $userSplit[1]

for $visitLine allowing empty in text:collection("mydata/visits*.log")

[$userId eq fn:tokenize(., "\s*,\s*")[2]]

group by $userId

return text:put($userId || " " || fn:count($visitLine))

The query creates text files that contain the following lines:

john 3

kelly 4

laura 1

phil 0
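The difference between the inner join and the left outer join can be seen side by side in a short Python sketch. This is an analogy only, not Oracle XQuery for Hadoop code; the `users` and `visits` lists and the `visit_counts` helper are hypothetical, with the `include_non_visitors` flag playing the role of `allowing empty`.

```python
import re
from collections import Counter

# Sample lines copied from mydata/users.txt.
users = """
john:John Doe:45
kelly:Kelly Johnson:32
laura:Laura Smith:
phil:Phil Johnson:27
""".strip().splitlines()

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()


def visit_counts(include_non_visitors):
    """Count visits per known user; optionally keep users with no visits."""
    per_user = Counter(re.split(r"\s*,\s*", v)[1] for v in visits)
    result = {}
    for line in users:
        user_id = re.split(r"\s*:\s*", line)[0]
        n = per_user.get(user_id, 0)
        # Inner join drops users without a match; outer join keeps them.
        if n > 0 or include_non_visitors:
            result[user_id] = n
    return result


inner = visit_counts(include_non_visitors=False)
outer = visit_counts(include_non_visitors=True)
```

Only `outer` contains an entry for phil, with a count of zero.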

Example 5-5 Semijoins

The next query finds users who have ever visited a page:

import module "oxh:text";

for $userLine in text:collection("mydata/users.txt")

let $userId := fn:tokenize($userLine, "\s*:\s*")[1]

where some $visitLine in text:collection("mydata/visits*.log")

satisfies $userId eq fn:tokenize($visitLine, "\s*,\s*")[2]

return text:put($userId)

The query creates text files that contain the following lines:

john

kelly

laura
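A semijoin returns each user at most once, no matter how many visits match. The following Python sketch illustrates that behavior under the same sample data; it is not Oracle XQuery for Hadoop code, and the `users`, `visits`, and `users_who_visited` names are assumptions for the example.

```python
import re

# Sample lines copied from mydata/users.txt.
users = """
john:John Doe:45
kelly:Kelly Johnson:32
laura:Laura Smith:
phil:Phil Johnson:27
""".strip().splitlines()

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()

# The set of user IDs that appear in at least one visit line.
visitor_ids = {re.split(r"\s*,\s*", v)[1] for v in visits}

# 'some ... satisfies' becomes a membership test: one result per user.
users_who_visited = [
    user_id
    for user_id in (re.split(r"\s*:\s*", u)[0] for u in users)
    if user_id in visitor_ids
]
```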

Example 5-6 Multiple Outputs

The next query finds web page visits with a 401 code and writes them to trace* files using the XQuery text:trace() function. It writes the remaining visit records into the default output files.

import module "oxh:text";

for $visitLine in text:collection("mydata/visits*.log")

let $visitCode := xs:integer(fn:tokenize($visitLine, "\s*,\s*")[4])

return if ($visitCode eq 401) then text:trace($visitLine) else text:put($visitLine)

The query generates a trace* text file that contains the following line:

2013-10-30T10:00:10, mike, index.html, 401

The query also generates default output files that contain the following lines:

2013-10-30T10:00:01, john, index.html, 200

2013-10-30T10:05:20, john, about.html, 200

2013-11-01T08:00:08, laura, index.html, 200

2013-11-04T06:12:51, kelly, index.html, 200

2013-11-04T06:12:40, kelly, contact.html, 200

2013-10-28T06:00:00, john, index.html, 200

2013-10-28T08:30:02, kelly, index.html, 200

2013-10-28T08:32:50, kelly, about.html, 200
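The routing of each record to one of two outputs can be sketched in Python as follows. This is an illustration of the branching logic only, not Oracle XQuery for Hadoop code; the `visits` list and the `route` helper are hypothetical, and two in-memory lists stand in for the trace* and default output files.

```python
import re

# Sample lines copied from mydata/visits1.log and mydata/visits2.log.
visits = """
2013-10-28T06:00:00, john, index.html, 200
2013-10-28T08:30:02, kelly, index.html, 200
2013-10-28T08:32:50, kelly, about.html, 200
2013-10-30T10:00:10, mike, index.html, 401
2013-10-30T10:00:01, john, index.html, 200
2013-10-30T10:05:20, john, about.html, 200
2013-11-01T08:00:08, laura, index.html, 200
2013-11-04T06:12:51, kelly, index.html, 200
2013-11-04T06:12:40, kelly, contact.html, 200
""".strip().splitlines()


def route(lines):
    """Split lines into (default_out, trace_out) by status code."""
    default_out, trace_out = [], []
    for line in lines:
        code = int(re.split(r"\s*,\s*", line)[3])
        # 401 responses go to the trace output, everything else to default.
        if code == 401:
            trace_out.append(line)
        else:
            default_out.append(line)
    return default_out, trace_out


default_out, trace_out = route(visits)
```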

Example 5-7 Accessing Auxiliary Input Data

The next query is an alternative version of the second query in Example 5-3, but it uses the fn:unparsed-text-lines function to access a file in the Hadoop distributed cache:

import module "oxh:text";

for $visitLine in text:collection("mydata/visits*.log")

let $visitUserId := fn:tokenize($visitLine, "\s*,\s*")[2]

for $userLine in fn:unparsed-text-lines("users.txt")

let $userSplit := fn:tokenize($userLine, "\s*:\s*")

let $userId := $userSplit[1]

where $userId eq $visitUserId

group by $userId

return text:put($userId || " " || fn:count($visitLine))

The hadoop command to run the query must use the Hadoop -files option. See "Accessing Data in the Hadoop Distributed Cache."

hadoop jar $OXH_HOME/lib/oxh.jar -files users.txt query.xq

The query creates text files that contain the following lines:

john 3

kelly 4

laura 1

Example 5-8 Calling a Custom Java Function from XQuery

The next query formats input data using the java.lang.String#format method.

import module "oxh:text";

declare %ora-java:binding("java.lang.String#format")

function local:string-format($pattern as xs:string, $data as xs:anyAtomicType*) as xs:string external;

for $line in text:collection("mydata/users*.txt")

let $split := fn:tokenize($line, "\s*:\s*")

return text:put(local:string-format("%s,%s,%s", $split))

The query creates text files that contain the following lines:

john,John Doe,45

kelly,Kelly Johnson,32

laura,Laura Smith,

phil,Phil Johnson,27

See Also:

Java Platform Standard Edition 7 API Specification for Class String at http://docs.oracle.com/javase/7/docs/api/java/lang/String.html#format(java.lang.String, java.lang.Object...)
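To see why laura's output line ends with a trailing comma, it can help to reproduce the tokenize-and-format step outside Hadoop. The Python sketch below is an analogy, not the Java binding itself: Python's printf-style `%` operator stands in for java.lang.String#format, and the `users` list and `string_format` helper are hypothetical names.

```python
import re

# Sample lines copied from mydata/users.txt.
users = """
john:John Doe:45
kelly:Kelly Johnson:32
laura:Laura Smith:
phil:Phil Johnson:27
""".strip().splitlines()


def string_format(pattern, data):
    """Apply a printf-style pattern to a sequence of values."""
    return pattern % tuple(data)


# Splitting "laura:Laura Smith:" yields a trailing empty string,
# so the third %s is filled with "".
formatted = [
    string_format("%s,%s,%s", re.split(r"\s*:\s*", line)) for line in users
]
```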

Example 5-9 Using User-Defined XQuery Library Modules and XML Schemas

This example uses a library module named mytools.xq:

module namespace mytools = "urn:mytools";

declare %ora-java:binding("java.lang.String#format")

function mytools:string-format($pattern as xs:string, $data as xs:anyAtomicType*) as xs:string external;

The next query is equivalent to the previous one, but it calls a string-format function from the mytools.xq library module:

import module namespace mytools = "urn:mytools" at "mytools.xq";

import module "oxh:text";

for $line in text:collection("mydata/users*.txt")

let $split := fn:tokenize($line, "\s*:\s*")

return text:put(mytools:string-format("%s,%s,%s", $split))

The query creates text files that contain the following lines:

john,John Doe,45

kelly,Kelly Johnson,32

laura,Laura Smith,

phil,Phil Johnson,27

Example 5-10 Filtering Dirty Data Using a Try/Catch Expression

The XQuery try/catch expression can be used to broadly handle cases where input data is in an unexpected form, corrupted, or missing. The next query reads an input file, ages.txt, that contains a user name followed by the user's age.

USER AGE

------------------

john 45

kelly

laura 36

phil OLD!

Notice that the first two lines of this file contain header text and that the entries for Kelly and Phil have missing and dirty age values. For each user in this file, the query writes out the user name and whether the user is over 40 or not.

import module "oxh:text";

for $line in text:collection("ages.txt")

let $split := fn:tokenize($line, "\s+")

return

try {

let $user := $split[1]

let $age := $split[2] cast as xs:integer

return

if ($age gt 40) then

text:put($user || " is over 40")

else

text:put($user || " is not over 40")

} catch * {

text:trace($err:code || " : " || $line)

}

The query generates an output text file that contains the following lines:

john is over 40

laura is not over 40

The query also generates a trace* file that contains the following lines:

err:FORG0001 : USER AGE

err:XPTY0004 : ------------------

err:XPTY0004 : kelly

err:FORG0001 : phil OLD!
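The two error codes in the trace file correspond to two different failures: a value that cannot be cast to an integer, and a missing value. A Python sketch of the same pattern makes the distinction visible. It is an analogy and not Oracle XQuery for Hadoop code; the `ages` list and the `classify` helper are hypothetical, and the Python exception names stand in for the XQuery error codes.

```python
import re

# Sample content of ages.txt, including the header and dirty rows.
ages = """
USER AGE
------------------
john 45
kelly
laura 36
phil OLD!
""".strip().splitlines()


def classify(lines):
    """Return (output, trace): clean results and rejected input lines."""
    output, trace = [], []
    for line in lines:
        split = re.split(r"\s+", line)
        try:
            user = split[0]
            # IndexError if the age is missing, ValueError if not numeric.
            age = int(split[1])
            if age > 40:
                output.append(f"{user} is over 40")
            else:
                output.append(f"{user} is not over 40")
        except (IndexError, ValueError) as exc:
            # Record the failure type with the offending line, as the
            # query does with $err:code and text:trace.
            trace.append(f"{type(exc).__name__} : {line}")
    return output, trace


output, trace = classify(ages)
```

In this sketch `ValueError` plays the role of err:FORG0001 (invalid cast) and `IndexError` the role of err:XPTY0004 (no age value present).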

5.5 Running Queries

To run a query, call the oxh utility using the hadoop jar command. The following is the basic syntax:

hadoop jar $OXH_HOME/lib/oxh.jar [generic options] query.xq -output directory [-clean] [-ls] [-print] [-sharelib hdfs_dir] [-skiperrors] [-version]

5.5.1 Oracle XQuery for Hadoop Options

- query.xq

-

Identifies the XQuery file. See "Creating an XQuery Transformation."

- -clean

-

Deletes all files from the output directory before running the query. If you use the default directory, Oracle XQuery for Hadoop always cleans the directory, even when this option is omitted.

- -exportliboozie directory

-

Copies Oracle XQuery for Hadoop dependencies to the specified directory. Use this option to add Oracle XQuery for Hadoop to the Hadoop distributed cache and the Oozie shared library. External dependencies are also copied, so ensure that environment variables such as KVHOME, OLH_HOME, and OXH_SOLR_MR_HOME are set for use by the related adapters (Oracle NoSQL Database, Oracle Database, and Solr).

- -ls

-

Lists the contents of the output directory after the query executes.

- -output directory

-

Specifies the output directory of the query. The put functions of the file adapters create files in this directory. Written values are spread across one or more files. The number of files created depends on how the query is distributed among tasks. The default output directory is /tmp/oxh-user_name/output.

See "About the Oracle XQuery for Hadoop Functions" for a description of put functions.

- -print

-

Prints the contents of all files in the output directory to the standard output (your screen). When printing Avro files, each record prints as JSON text.

- -sharelib hdfs_dir

-

Specifies the HDFS folder location containing Oracle XQuery for Hadoop and third-party libraries.

- -skiperrors

-

Turns on error recovery, so that an error does not halt processing.

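All errors that occur during query processing are counted, and the total is logged at the end of the query. The error messages of the first 20 errors per task are also logged. See the oracle.hadoop.xquery.skiperrors.counters, oracle.hadoop.xquery.skiperrors.max, and oracle.hadoop.xquery.skiperrors.log.max configuration properties in "Oracle XQuery for Hadoop Configuration Properties."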

- -version

-

Displays the Oracle XQuery for Hadoop version and exits without running a query.

5.5.2 Generic Options

You can include any generic hadoop command-line option. Oracle XQuery for Hadoop implements the org.apache.hadoop.util.Tool interface and follows the standard Hadoop methods for building MapReduce applications.

The following generic options are commonly used with Oracle XQuery for Hadoop:

- -conf job_config.xml

-

Identifies the job configuration file. See "Oracle XQuery for Hadoop Configuration Properties."

When you work with the Oracle Database or Oracle NoSQL Database adapters, you can set various job properties in this file. See "Oracle Loader for Hadoop Configuration Properties and Corresponding %oracle-property Annotations" and "Oracle NoSQL Database Adapter Configuration Properties".

- -D property=value

-

Identifies a configuration property. See "Oracle XQuery for Hadoop Configuration Properties."

- -files

-

Specifies a comma-delimited list of files that are added to the distributed cache. See "Accessing Data in the Hadoop Distributed Cache."
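When queries are launched from scripts, the argument order in the basic syntax matters: generic Hadoop options come before the query file, and the Oracle XQuery for Hadoop options come after it. The Python sketch below assembles such a command line as an argument list. The `build_oxh_command` helper, its parameter names, and the `/opt/oxh` installation path are hypothetical; only the option names are taken from the syntax described in this section.

```python
def build_oxh_command(oxh_home, query, output, generic_options=(),
                      clean=False, ls=False, print_output=False,
                      skiperrors=False):
    """Build an argument list for running a query with 'hadoop jar'."""
    argv = ["hadoop", "jar", f"{oxh_home}/lib/oxh.jar"]
    # Generic Hadoop options (-conf, -D, -files, ...) precede the query.
    argv += list(generic_options)
    argv += [query, "-output", output]
    # Optional Oracle XQuery for Hadoop flags follow the output directory.
    if clean:
        argv.append("-clean")
    if ls:
        argv.append("-ls")
    if print_output:
        argv.append("-print")
    if skiperrors:
        argv.append("-skiperrors")
    return argv


# Example: the command for Example 5-7, with an assumed install path.
command = build_oxh_command(
    "/opt/oxh", "query.xq", "./myout",
    generic_options=["-files", "users.txt"],
    print_output=True,
)
```

The resulting list could be passed to a process launcher such as `subprocess.run`; building a list rather than a single string avoids shell quoting problems with paths that contain spaces.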

See Also:

For full descriptions of the generic options, go to

5.5.3 About Running Queries Locally

When developing queries, you can run them locally before submitting them to the cluster. A local run enables you to see how the query behaves on small data sets and diagnose potential problems quickly.

In local mode, relative URIs resolve against the local file system instead of HDFS, and the query runs in a single process.

To run a query in local mode:

- Set the Hadoop -jt and -fs generic arguments to local. This example runs the query described in "Example: Hello World!" in local mode:

$ hadoop jar $OXH_HOME/lib/oxh.jar -jt local -fs local ./hello.xq -output ./myoutput -print

- Check the result file in the local output directory of the query, as shown in this example:

$ cat ./myoutput/part-m-00000

Hello World!

5.6 Running Queries from Apache Oozie

Apache Oozie is a workflow tool that enables you to run multiple MapReduce jobs in a specified order and, optionally, at a scheduled time. Oracle XQuery for Hadoop provides an Oozie action node that you can use to run Oracle XQuery for Hadoop queries from an Oozie workflow.

5.6.1 Getting Started Using the Oracle XQuery for Hadoop Oozie Action

Follow these steps to execute your queries in an Oozie workflow:

5.6.2 Supported XML Elements

The Oracle XQuery for Hadoop action extends Oozie's Java action. It supports the following optional child XML elements with the same syntax and semantics as the Java action:

- archive

- configuration

- file

- job-tracker

- job-xml

- name-node

- prepare

See Also:

The Java action description in the Oozie Specification at

https://oozie.apache.org/docs/4.0.0/WorkflowFunctionalSpec.html#a3.2.7_Java_Action

In addition, the Oracle XQuery for Hadoop action supports the following elements:

- script: The location of the Oracle XQuery for Hadoop query file. Required.

The query file must be in the workflow application directory. A relative path is resolved against the application directory.

Example:

<script>myquery.xq</script>

- output: The output directory of the query. Required.

The output element has an optional clean attribute. Set this attribute to true to delete the output directory before the query is run. If the output directory already exists and the clean attribute is either not set or set to false, an error occurs. The output directory cannot exist when the job runs.

Example:

<output clean="true">/user/jdoe/myoutput</output>

Any error raised while running the query causes Oozie to perform the error transition for the action.

5.6.3 Example: Hello World

This example uses the following files:

- workflow.xml: Describes an Oozie action that sets two configuration values for the query in hello.xq: an HDFS file and the string World!

The HDFS input file is /user/jdoe/data/hello.txt and contains this string:

Hello

See Example 5-11.

- hello.xq: Runs a query using Oracle XQuery for Hadoop.

See Example 5-12.

- job.properties: Lists the job properties for Oozie. See Example 5-13.

To run the example, use this command:

oozie job -oozie http://example.com:11000/oozie -config job.properties -run

After the job runs, the /user/jdoe/myoutput output directory contains a file with the text "Hello World!"

Example 5-11 The workflow.xml File for Hello World

This file is named /user/jdoe/hello-oozie-oxh/workflow.xml. It uses variables that are defined in the job.properties file.

<workflow-app xmlns="uri:oozie:workflow:0.4" name="oxh-helloworld-wf">

<start to="hello-node"/>

<action name="hello-node">

<oxh xmlns="oxh:oozie-action:v1">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<!--

The configuration can be used to parameterize the query.

-->

<configuration>

<property>

<name>myinput</name>

<value>${nameNode}/user/jdoe/data/hello.txt</value>

</property>

<property>

<name>mysuffix</name>

<value> World!</value>

</property>

</configuration>

<script>hello.xq</script>

<output clean="true">${nameNode}/user/jdoe/myoutput</output>

</oxh>

<ok to="end"/>

<error to="fail"/>

</action>

<kill name="fail">

<message>OXH failed: [${wf:errorMessage(wf:lastErrorNode())}]</message>

</kill>

<end name="end"/>

</workflow-app>

Example 5-12 The hello.xq File for Hello World

This file is named /user/jdoe/hello-oozie-oxh/hello.xq.

import module "oxh:text";

declare variable $input := oxh:property("myinput");

declare variable $suffix := oxh:property("mysuffix");

for $line in text:collection($input)

return

text:put($line || $suffix)

Example 5-13 The job.properties File for Hello World

oozie.wf.application.path=hdfs://example.com:8020/user/jdoe/hello-oozie-oxh

nameNode=hdfs://example.com:8020

jobTracker=hdfs://example.com:8032

oozie.use.system.libpath=true

5.7 Oracle XQuery for Hadoop Configuration Properties

Oracle XQuery for Hadoop uses the generic methods of specifying configuration properties in the hadoop command. You can use the -conf option to identify configuration files, and the -D option to specify individual properties. See "Running Queries."

See Also:

Hadoop documentation for job configuration files at

| Property | Description |
|---|---|
| oracle.hadoop.xquery.lib.share | Type: String. Default Value: Not defined. Description: Identifies an HDFS directory that contains the libraries for Oracle XQuery for Hadoop and third-party software. For example: http://path/to/shared/folder. All HDFS files must be in the same directory. Alternatively, use the -sharelib command line option. Pattern Matching: You can use pattern matching characters in a directory name. If multiple directories match the pattern, then the directory with the most recent modification timestamp is used. To specify a directory name, use alphanumeric characters and, optionally, special pattern matching characters. Oozie libraries: The value |
| oracle.hadoop.xquery.output | Type: String. Default Value: /tmp/oxh-user_name/output. Description: Sets the output directory for the query. This property is equivalent to the -output command line option. |
| oracle.hadoop.xquery.scratch | Type: String. Default Value: Description: Sets the HDFS temp directory for Oracle XQuery for Hadoop to store temporary files. |
| oracle.hadoop.xquery.timezone | Type: String. Default Value: Client system time zone. Description: The XQuery implicit time zone, which is used in a comparison or arithmetic operation when a date, time, or datetime value does not have a time zone. The value must be in the format described by the Java |
| oracle.hadoop.xquery.skiperrors | Type: Boolean. Default Value: Description: Set to |
| oracle.hadoop.xquery.skiperrors.counters | Type: Boolean. Default Value: Description: Set to |
| oracle.hadoop.xquery.skiperrors.max | Type: Integer. Default Value: Unlimited. Description: Sets the maximum number of errors that a single MapReduce task can recover from. |
| oracle.hadoop.xquery.skiperrors.log.max | Type: Integer. Default Value: 20. Description: Sets the maximum number of errors that a single MapReduce task logs. |
| log4j.logger.oracle.hadoop.xquery | Type: String. Default Value: Not defined. Description: Configures the |

5.8 Third-Party Licenses for Bundled Software

Oracle XQuery for Hadoop depends on the following third-party products:

Unless otherwise specifically noted, or as required under the terms of the third party license (e.g., LGPL), the licenses and statements herein, including all statements regarding Apache-licensed code, are intended as notices only.

5.8.1 Apache Licensed Code

The following is included as a notice in compliance with the terms of the Apache 2.0 License, and applies to all programs licensed under the Apache 2.0 license:

You may not use the identified files except in compliance with the Apache License, Version 2.0 (the "License").

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

A copy of the license is also reproduced below.

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and limitations under the License.

5.8.2 Apache License

Version 2.0, January 2004

http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

-

Definitions

"License" shall mean the terms and conditions for use, reproduction, and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all other entities that control, are controlled by, or are under common control with that entity. For the purposes of this definition, "control" means (i) the power, direct or indirect, to cause the direction or management of such entity, whether by contract or otherwise, or (ii) ownership of fifty percent (50%) or more of the outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications, including but not limited to software source code, documentation source, and configuration files.

"Object" form shall mean any form resulting from mechanical transformation or translation of a Source form, including but not limited to compiled object code, generated documentation, and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or Object form, made available under the License, as indicated by a copyright notice that is included in or attached to the work (an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object form, that is based on (or derived from) the Work and for which the editorial revisions, annotations, elaborations, or other modifications represent, as a whole, an original work of authorship. For the purposes of this License, Derivative Works shall not include works that remain separable from, or merely link (or bind by name) to the interfaces of, the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including the original version of the Work and any modifications or additions to that Work or Derivative Works thereof, that is intentionally submitted to Licensor for inclusion in the Work by the copyright owner or by an individual or Legal Entity authorized to submit on behalf of the copyright owner. For the purposes of this definition, "submitted" means any form of electronic, verbal, or written communication sent to the Licensor or its representatives, including but not limited to communication on electronic mailing lists, source code control systems, and issue tracking systems that are managed by, or on behalf of, the Licensor for the purpose of discussing and improving the Work, but excluding communication that is conspicuously marked or otherwise designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity on behalf of whom a Contribution has been received by Licensor and subsequently incorporated within the Work.

-

Grant of Copyright License. Subject to the terms and conditions of this License, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable copyright license to reproduce, prepare Derivative Works of, publicly display, publicly perform, sublicense, and distribute the Work and such Derivative Works in Source or Object form.

-

Grant of Patent License. Subject to the terms and conditions of this License, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable (except as stated in this section) patent license to make, have made, use, offer to sell, sell, import, and otherwise transfer the Work, where such license applies only to those patent claims licensable by such Contributor that are necessarily infringed by their Contribution(s) alone or by combination of their Contribution(s) with the Work to which such Contribution(s) was submitted. If You institute patent litigation against any entity (including a cross-claim or counterclaim in a lawsuit) alleging that the Work or a Contribution incorporated within the Work constitutes direct or contributory patent infringement, then any patent licenses granted to You under this License for that Work shall terminate as of the date such litigation is filed.

-

Redistribution. You may reproduce and distribute copies of the Work or Derivative Works thereof in any medium, with or without modifications, and in Source or Object form, provided that You meet the following conditions:

-

You must give any other recipients of the Work or Derivative Works a copy of this License; and

-

You must cause any modified files to carry prominent notices stating that You changed the files; and

-

You must retain, in the Source form of any Derivative Works that You distribute, all copyright, patent, trademark, and attribution notices from the Source form of the Work, excluding those notices that do not pertain to any part of the Derivative Works; and

-

If the Work includes a "NOTICE" text file as part of its distribution, then any Derivative Works that You distribute must include a readable copy of the attribution notices contained within such NOTICE file, excluding those notices that do not pertain to any part of the Derivative Works, in at least one of the following places: within a NOTICE text file distributed as part of the Derivative Works; within the Source form or documentation, if provided along with the Derivative Works; or, within a display generated by the Derivative Works, if and wherever such third-party notices normally appear. The contents of the NOTICE file are for informational purposes only and do not modify the License. You may add Your own attribution notices within Derivative Works that You distribute, alongside or as an addendum to the NOTICE text from the Work, provided that such additional attribution notices cannot be construed as modifying the License.

You may add Your own copyright statement to Your modifications and may provide additional or different license terms and conditions for use, reproduction, or distribution of Your modifications, or for any such Derivative Works as a whole, provided Your use, reproduction, and distribution of the Work otherwise complies with the conditions stated in this License.

-

Submission of Contributions. Unless You explicitly state otherwise, any Contribution intentionally submitted for inclusion in the Work by You to the Licensor shall be under the terms and conditions of this License, without any additional terms or conditions. Notwithstanding the above, nothing herein shall supersede or modify the terms of any separate license agreement you may have executed with Licensor regarding such Contributions.

-

Trademarks. This License does not grant permission to use the trade names, trademarks, service marks, or product names of the Licensor, except as required for reasonable and customary use in describing the origin of the Work and reproducing the content of the NOTICE file.

-

Disclaimer of Warranty. Unless required by applicable law or agreed to in writing, Licensor provides the Work (and each Contributor provides its Contributions) on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied, including, without limitation, any warranties or conditions of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A PARTICULAR PURPOSE. You are solely responsible for determining the appropriateness of using or redistributing the Work and assume any risks associated with Your exercise of permissions under this License.

-

Limitation of Liability. In no event and under no legal theory, whether in tort (including negligence), contract, or otherwise, unless required by applicable law (such as deliberate and grossly negligent acts) or agreed to in writing, shall any Contributor be liable to You for damages, including any direct, indirect, special, incidental, or consequential damages of any character arising as a result of this License or out of the use or inability to use the Work (including but not limited to damages for loss of goodwill, work stoppage, computer failure or malfunction, or any and all other commercial damages or losses), even if such Contributor has been advised of the possibility of such damages.

-

Accepting Warranty or Additional Liability. While redistributing the Work or Derivative Works thereof, You may choose to offer, and charge a fee for, acceptance of support, warranty, indemnity, or other liability obligations and/or rights consistent with this License. However, in accepting such obligations, You may act only on Your own behalf and on Your sole responsibility, not on behalf of any other Contributor, and only if You agree to indemnify, defend, and hold each Contributor harmless for any liability incurred by, or claims asserted against, such Contributor by reason of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work

To apply the Apache License to your work, attach the following boilerplate notice, with the fields enclosed by brackets "[]" replaced with your own identifying information. (Don't include the brackets!) The text should be enclosed in the appropriate comment syntax for the file format. We also recommend that a file or class name and description of purpose be included on the same "printed page" as the copyright notice for easier identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

This product includes software developed by The Apache Software Foundation (http://www.apache.org/) (listed below):

5.8.3 ANTLR 3.2

[The BSD License]

Copyright © 2010 Terence Parr

All rights reserved.

Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met:

-

Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer.

-

Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution.

-

Neither the name of the author nor the names of its contributors may be used to endorse or promote products derived from this software without specific prior written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

5.8.4 Apache Ant 1.9.8

Copyright 1999-2008 The Apache Software Foundation

This product includes software developed by The Apache Software Foundation (http://www.apache.org).

This product includes also software developed by:

-

the W3C consortium (http://www.w3c.org)

-

the SAX project (http://www.saxproject.org)

The <sync> task is based on code Copyright (c) 2002, Landmark Graphics Corp that has been kindly donated to the Apache Software Foundation.

Portions of this software were originally based on the following:

-

software copyright (c) 1999, IBM Corporation, http://www.ibm.com.

-

software copyright (c) 1999, Sun Microsystems, http://www.sun.com.

-

voluntary contributions made by Paul Eng on behalf of the Apache Software Foundation that were originally developed at iClick, Inc., software copyright (c) 1999

W3C® SOFTWARE NOTICE AND LICENSE

http://www.w3.org/Consortium/Legal/2002/copyright-software-20021231

This work (and included software, documentation such as READMEs, or other related items) is being provided by the copyright holders under the following license. By obtaining, using and/or copying this work, you (the licensee) agree that you have read, understood, and will comply with the following terms and conditions.

Permission to copy, modify, and distribute this software and its documentation, with or without modification, for any purpose and without fee or royalty is hereby granted, provided that you include the following on ALL copies of the software and documentation or portions thereof, including modifications:

-

The full text of this NOTICE in a location viewable to users of the redistributed or derivative work.

-

Any pre-existing intellectual property disclaimers, notices, or terms and conditions. If none exist, the W3C Software Short Notice should be included (hypertext is preferred, text is permitted) within the body of any redistributed or derivative code.

-

Notice of any changes or modifications to the files, including the date changes were made. (We recommend you provide URIs to the location from which the code is derived.)

THIS SOFTWARE AND DOCUMENTATION IS PROVIDED "AS IS," AND COPYRIGHT HOLDERS MAKE NO REPRESENTATIONS OR WARRANTIES, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO, WARRANTIES OF MERCHANTABILITY OR FITNESS FOR ANY PARTICULAR PURPOSE OR THAT THE USE OF THE SOFTWARE OR DOCUMENTATION WILL NOT INFRINGE ANY THIRD PARTY PATENTS, COPYRIGHTS, TRADEMARKS OR OTHER RIGHTS.

COPYRIGHT HOLDERS WILL NOT BE LIABLE FOR ANY DIRECT, INDIRECT, SPECIAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF ANY USE OF THE SOFTWARE OR DOCUMENTATION.

The name and trademarks of copyright holders may NOT be used in advertising or publicity pertaining to the software without specific, written prior permission. Title to copyright in this software and any associated documentation will at all times remain with copyright holders.

This formulation of W3C's notice and license became active on December 31 2002. This version removes the copyright ownership notice such that this license can be used with materials other than those owned by the W3C, reflects that ERCIM is now a host of the W3C, includes references to this specific dated version of the license, and removes the ambiguous grant of "use". Otherwise, this version is the same as the previous version and is written so as to preserve the Free Software Foundation's assessment of GPL compatibility and OSI's certification under the Open Source Definition. Please see our Copyright FAQ for common questions about using materials from our site, including specific terms and conditions for packages like libwww, Amaya, and Jigsaw. Other questions about this notice can be directed to site-policy@w3.org.

Joseph Reagle <site-policy@w3.org>

This license came from: http://www.megginson.com/SAX/copying.html

However please note future versions of SAX may be covered under http://saxproject.org/?selected=pd

SAX2 is Free!

I hereby abandon any property rights to SAX 2.0 (the Simple API for XML), and release all of the SAX 2.0 source code, compiled code, and documentation contained in this distribution into the Public Domain. SAX comes with NO WARRANTY or guarantee of fitness for any purpose.

David Megginson, david@megginson.com

2000-05-05

5.8.5 Apache Xerces 2.11

Xerces Copyright © 1999-2016 The Apache Software Foundation. All rights reserved. Licensed under the Apache 1.1 License Agreement.

The names "Xerces" and "Apache Software Foundation" must not be used to endorse or promote products derived from this software or be used in a product name without prior written permission. For written permission, please contact apache@apache.org email address.

This software consists of voluntary contributions made by many individuals on behalf of the Apache Software Foundation. For more information on the Apache Software Foundation, please see http://www.apache.org website.

The Apache Software License, Version 1.1

Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met:

-

Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer.

-

Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution.

-

The end-user documentation included with the redistribution, if any, must include the acknowledgements set forth above in connection with the software (“This product includes software developed by the ….”) Alternately, this acknowledgement may appear in the software itself, if and wherever such third-party acknowledgements normally appear.

-

The names identified above with the specific software must not be used to endorse or promote products derived from this software without prior written permission. For written permission, please contact apache@apache.org email address.

-

Products derived from this software may not be called "Apache" nor may "Apache" appear in their names without prior written permission of the Apache Group.

THIS SOFTWARE IS PROVIDED "AS IS" AND ANY EXPRESSED OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE APACHE SOFTWARE FOUNDATION OR ITS CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

5.8.6 Stax2 API 3.1.4

Copyright © 2004–2010, Woodstox Project (http://woodstox.codehaus.org/)

All rights reserved.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS," AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

5.8.7 Woodstox XML Parser 5.0.2

This copy of Woodstox XML processor is licensed under the Apache (Software) License, version 2.0 ("the License"). See the License for details about distribution rights, and the specific rights regarding derivative works.

You may obtain a copy of the License at:

http://www.apache.org/licenses/

A copy is also included with both the downloadable source code package and jar that contains class bytecodes, as file "ASL 2.0". In both cases, that file should be located next to this file: in source distribution the location should be "release-notes/asl"; and in jar "META-INF/"

This product currently only contains code developed by authors of specific components, as identified by the source code files.

Since product implements StAX API, it has dependencies to StAX API classes.

For additional credits (generally to people who reported problems) see CREDITS file.