6 Using the In-Memory Analyst (PGX)

The in-memory analyst feature of Oracle Spatial and Graph supports a set of analytical functions.

This chapter provides examples using the in-memory analyst (also referred to as Property Graph In-Memory Analytics, and often abbreviated as PGX in the Javadoc, command line, path descriptions, error messages, and examples). It contains the following major topics.

- Reading a Graph into Memory

This topic provides an example of reading graph interactively into memory using the shell interface. - Configuring the In-Memory Analyst

You can configure the in-memory analyst engine and its run-time behavior by assigning a single JSON file to the in-memory analyst at startup. - Reading Custom Graph Data

You can read your own custom graph data. This example creates a graph, alters it, and shows how to read it properly. - Storing Graph Data on Disk

After reading a graph into memory using either Java or the Shell, you can store it on disk in different formats. You can then use the stored graph data as input to the in-memory analyst at a later time. - Executing Built-in Algorithms

The in-memory analyst contains a set of built-in algorithms that are available as Java APIs. - Creating Subgraphs

You can create subgraphs based on a graph that has been loaded into memory. - Using Pattern-Matching Queries with Graphs

You can issue a pattern-matching query against an in-memory graph, and then work with the results of that query. - Starting the In-Memory Analyst Server

Big Data Spatial and Graph bundles a preconfigured version of Apache Tomcat that allows you to start the in-memory analyst server by running a script. - Deploying to Jetty

You can deploy the in-memory analyst to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server. This example shows how to deploy the in-memory analyst as a web application with Eclipse Jetty. - Deploying to Apache Tomcat

You can deploy the in-memory analyst to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic. This example shows how to deploy the in-memory analyst as a web application with Apache Tomcat. - Deploying to Oracle WebLogic Server

You can deploy the in-memory analysts to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server. This example shows how to deploy the in-memory analyst as a web application with Oracle WebLogic Server. - Connecting to the In-Memory Analyst Server

After the property graph in-memory analyst is deployed as a server, and installed in a Hadoop cluster -- or on a client system without Hadoop as a web application on Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server -- you can connect to the in-memory analyst server. - Using the In-Memory Analyst in Distributed Mode

The in-memory analyst can be run in shared memory mode or distributed mode. - Reading and Storing Data in HDFS

The in-memory analyst supports the Hadoop Distributed File System (HDFS). This example shows how to read and access graph data in HDFS using the in-memory analyst APIs. - Running the In-Memory Analyst as a YARN Application

In this example you will learn how to start, stop and monitor in-memory analyst servers on a Hadoop cluster via Hadoop NextGen MapReduce (YARN) scheduling. - Using Oracle Two-Tables Relational Format

When using a relational data model, graph data can be represented with two relational tables. One table is for nodes and their properties; the other table is for edges and their properties. - Using the In-Memory Analyst to Analyze Graph Data in Apache Spark

The property graph feature in Oracle Big Data Spatial and Graph enables integration of in-memory analytics and Apache Spark. - Using the In-Memory Analyst Zeppelin Interpreter

The in-memory analyst provides an interpreter implementation for Apache Zeppelin. This tutorial topic explains how to install the in-memory analyst interpreter into your local Zeppelin installation and to perform some simple operations. - Using the In-Memory Analyst Enterprise Scheduler

The in-memory analyst enterprise scheduler provides advanced scheduling features.

6.1 Reading a Graph into Memory

This topic provides an example of reading graph interactively into memory using the shell interface.

These are the major steps:

6.1.1 Connecting to an In-Memory Analyst Server Instance

To start the in-memory analyst:

If the in-memory analyst software is installed correctly, you will see an engine-running log message and the in-memory analyst shell prompt (pgx>):

The variables instance, session, and analyst are ready to use.

In the preceding example in this topic, the shell started a local instance because the pgx command did not specify a remote URL.

6.1.2 Using the Shell Help

The in-memory analyst shell provides a help system, which you access using the :help command.

6.1.3 Providing Graph Metadata in a Configuration File

An example graph is included in the installation directory, under /opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/. It uses a configuration file that describes how the in-memory analyst reads the graph.

pgx> cat /opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json

===> {

"uri": "sample.adj",

"format": "adj_list",

"node_props": [{

"name": "prop",

"type": "integer"

}],

"edge_props": [{

"name": "cost",

"type": "double"

}],

"separator": " "

}

The uri field provides the location of the graph data. This path resolves relative to the parent directory of the configuration file. When the in-memory analyst loads the graph, it searches the examples/graphs directory for a file named sample.adj.

The other fields indicate that the graph data is provided in adjacency list format, and consists of one node property of type integer and one edge property of type double.

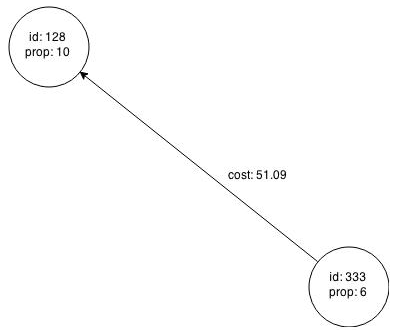

This is the graph data in adjacency list format:

pgx> cat /opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj

===> 128 10 1908 27.03 99 8.51

99 2 333 338.0

1908 889

333 6 128 51.09

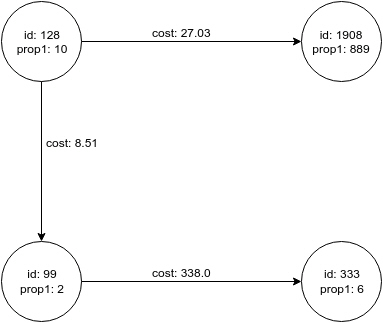

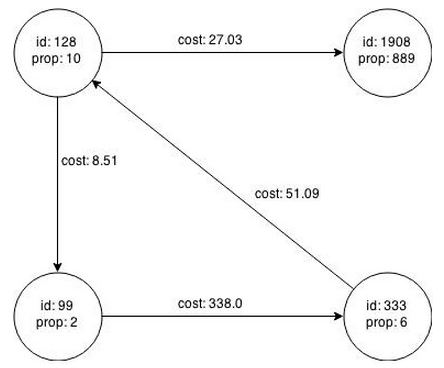

Figure 6-1 shows a property graph created from the data:

Figure 6-1 Property Graph Rendered by sample.adj Data

Description of "Figure 6-1 Property Graph Rendered by sample.adj Data"

6.1.4 Reading Graph Data into Memory

To read a graph into memory, you must pass the following information:

-

The path to the graph configuration file that specifies the graph metadata

-

A unique alphanumeric name that you can use to reference the graph

An error results if you previously loaded a different graph with the same name.

To specify the path to the file:

-

If the vertices and edges are specified in one file, use

uri:{ "uri": "path/to/file.format", ...} -

To specify multiple files (for example, ADJ_LIST, EDGE_LIST), use

uris:{ "uris": ["file1.format", "file2.format"] ...}Note that most plain-text formats can be parsed in parallel by thee in-memory analyst.

-

If the file format is different depending on whether it contains vertices or edges (for example, FLAT_FILE, TWO_TABLES), use

vertex_urisandedge_uris:{ "vertex_uris": ["vertices1.format", "vertices2.format"], "edge_uris": ["edges1.format", "edges2.format"] ...}

Supported File Systems

The in-memory analyst supports loading from graph configuration files and graph data files over various protocols and virtual file systems. The type of file system or protocol is determined by the scheme of the uniform resource identifier (URI):

-

Local file system (

file:). This is also the default if the given URI does not contain any scheme. -

classpath (

classpath:orres:) -

HDFS (

hdfs:) -

HTTP (

http:orhttps:) -

Various archive formats (

zip:,jar:,tar:,tgz:,tbz2:,gz:, andbz2:)The URI format is

scheme://arch-file-uri[!absolute-path]. For example:jar:../lib/classes.jar!/META-INF/graph.jsonPaths may be nested. For example:

tar:gz:http://anyhost/dir/mytar.tar.gz!/mytar.tar!/path/in/tar/graph.dataTo use the exclamation point (!) as a literal file-name character, it must be escaped using:

%21;

Note that relative paths are always resolved relative to the parent directory of the configuration file.

Example: Using the Shell to Read a Graph

pgx> graph = session.readGraphWithProperties("/opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json", "sample");

==> PgxGraph[name=sample,N=4,E=4,created=1476225669037]

pgx> graph.getNumVertices()

==> 4

Example: Using Java to Read a Graph

import oracle.pgx.api.*;

ServerInstance instance = Pgx.getInstance(Pgx.EMBEDDED_URL);

// ServerInstance instance = Pgx.getInstance("http://my-server:7007"); // remote instance

PgxSession session = instance.createSession("my-session");

PgxGraph graph = session.readGraphWithProperties("/opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json");

Example: Using JavaScript to Read a Graph

const pgx = require('oracle-pgx-client');

let p = pgx.connect("http://my-server:7007");

let json = {

"uri": "sample.adj",

"format": "adj_list",

"node_props": [{

"name": "prop",

"type": "integer"

}],

"edge_props": [{

"name": "cost",

"type": "double"

}],

"separator": " "

}

p.then(function(session) {

return session.readGraphWithProperties(json);

}).then(function(graph) {

// do something with graph

});

The following topics contain additional examples of reading a property graph into memory.

6.1.4.1 Read a Graph Stored in Apache HBase into Memory

To read a property graph stored in Apache HBase, you can create a JSON based configuration file as follows. Note that the quorum, client port, graph name, and other information must be customized for your own setup.

% cat /tmp/my_graph_hbase.json

{

"format": "pg",

"db_engine": "hbase",

"zk_quorum": "scaj31bda07,scaj31bda08,scaj31bda09",

"zk_client_port": 2181,

"name": "connections",

"node_props": [{

"name": "country",

"type": "string"

}],

"edge_props": [{

"name": "label",

"type": "string"

}, {

"name": "weight",

"type": "float"

}],

"loading": {

"load_edge_label": true

}

}

EOF

With the following command, the property graph connections will be read into memory:

pgx> session.readGraphWithProperties("/tmp/my_graph_hbase.json", "connections")

==> PGX Graph named connections ...

Note that when dealing with a large graph, it may become necessary to tune parameters like number of IO workers, number of workers for analysis, task timeout, and others. See Configuring the In-Memory Analyst.

6.1.4.2 Read a Graph Stored in Oracle NoSQL Database into Memory

To read a property graph stored in Oracle NoSQL Database, you can create a JSON based configuration file as follows. Note that the hosts, store name, graph name, and other information must be customized for your own setup.

% cat /tmp/my_graph_nosql.json

{

"format": "pg",

"db_engine": "nosql",

"hosts": [

"zathras01:5000"

],

"store_name": "kvstore",

"name": "connections",

"node_props": [{

"name": "country",

"type": "string"

}],

"loading": {

"load_edge_label": true

},

"edge_props": [{

"name": "label",

"type": "string"

}, {

"name": "weight",

"type": "float"

}]

}

Then, read the configuration file into memory. The following example snippet read the file into memory, generates an undirected graph (named U) from the original data, and counts the number of triangles.

pgx> g = session.readGraphWithProperties("/tmp/my_graph_nosql.json", "connections")

pgx> analyst.countTriangles(g, false)

==> 8

6.1.4.3 Read a Graph Stored in the Local File System into Memory

The following command uses the configuration file from "Providing Graph Metadata in a Configuration File" and the name my-graph:

pgx> g = session.readGraphWithProperties("/opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json", "my-graph")

6.2 Configuring the In-Memory Analyst

You can configure the in-memory analyst engine and its run-time behavior by assigning a single JSON file to the in-memory analyst at startup.

This file can include the parameters shown in the following table. Some examples follow the table.

To specify the specify the configuration file, see Specifying the Configuration File to the In-Memory Analyst.

Note:

-

Relative paths in parameter values are always resolved relative to the configuration file in which they are specified. For example, if the configuration file is

/pgx/conf/pgx.confand if a file in a parameter value is specified asgraph-configs/my-graph.bin.json, then the file path is resolved to/pgx/conf/graph-configs/my-graph.bin.json. -

The parameter default values are optimized to deliver the best performance across a wide set of algorithms. Depending on your workload. you may be able to improve performance further by experimenting with different strategies, sizes, and thresholds.

Table 6-1 Configuration Parameters for the In-Memory Analyst

| Parameter | Type | Description | Default |

|---|---|---|---|

|

admin_request_cache_timeout |

integer |

After how many seconds admin request results get removed from the cache. Requests which are not done or not yet consumed are excluded from this timeout. Note: this is only relevant if PGX is deployed as a webapp. |

60 |

|

allow_idle_timeout_overwrite |

boolean |

If true, sessions can overwrite the default idle timeout. |

true |

|

allow_local_filesystem |

boolean |

(This flag reduces security, enable it only if you know what you're doing!) Allow loading from local filesystem, if in client/server mode. WARNING: This should only be enabled if you want to explicitly allow users of the PGX remote interface to access files on the local file system. |

false |

|

allow_task_timeout_overwrite |

boolean |

If true, sessions can overwrite the default task timeout |

true |

|

allow_user_auto_refresh |

boolean |

If true, users may enable auto refresh for graphs they load. If false, only graphs mentioned in graphs can have auto refresh enabled. |

false |

|

bfs_iterate_que_task_size |

integer |

Task size for BFS iterate QUE phase. |

128 |

|

bfs_threshold_read_based |

integer |

Threshold of BFS traversal level items to switch to read-based visiting strategy. |

1024 |

|

bfs_threshold_single_threaded |

integer |

Until what number of BFS traversal level items vertices are visited single-threaded. |

128 |

|

cctrace |

boolean |

If true, log every call to a Control or Core interface. |

false |

|

cctrace_out |

string |

[relevant for cctrace] When cctrace is enabled, specifies a path to a file where cctrace should log to. If null, it will log to stderr. If it is the special value :log: it will use the default PGX logging facility |

null |

|

character_set |

string |

Standard character set to use throughout PGX. UTF-8 is the default. Note: Some formats may not be compatible. |

utf-8 |

|

cni_diff_factor_default |

integer |

Default diff factor value used in the common neighbor iterator implementations. |

8 |

|

cni_small_default |

integer |

Default value used in the common neighbor iterator implementations, to indicate below which threshold a subarray is considered small. |

128 |

|

cni_stop_recursion_default |

integer |

Default value used in the common neighbor iterator implementations, to indicate the minimum size where the binary search approach is applied. |

96 |

|

dfs_threshold_large |

integer |

Value that determines at which number of visited vertices the DFS implementation will switch to data structures that are optimized for larger numbers of vertices. |

4096 |

|

enable_csrf_token_checks |

boolean |

If true, the PGX webapp will verify the Cross-Site Request Forgery (CSRF) token cookie and request parameters sent by the client exist and match. This is to prevent CSRF attacks. |

true |

|

enable_solaris_studio_labeling |

boolean |

[relevant when profiling with solaris studio] When enabled, label experiments using the 'er_label' command. |

false |

|

explicit_spin_locks |

boolean |

true means spin explicitly in a loop until lock becomes available. false means using JDK locks which rely on the JVM to decide whether to context switch or spin. Setting this value to true usually results in better performance. |

true |

|

graphs |

array of string |

List of paths to graph configurations to be registered at startup. |

[] |

|

max_active_sessions |

integer |

Maximum number of sessions allowed to be active at a time. |

1024 |

|

max_off_heap_size |

integer |

Maximum amount of off-heap memory (in megabytes) that PGX is allowed to allocate before an OutOfMemoryError will be thrown. Note: this limit is not guaranteed to never be exceeded, because of rounding and synchronization trade-offs. It only serves as threshold when PGX starts to reject new memory allocation requests. |

<available-physical-memory> |

|

max_queue_size_per_session |

integer |

The maximum number of pending tasks allowed to be in the queue, per session. If a session reaches the maximum, new incoming requests of that sesssion get rejected. A negative value means no limit. |

-1 |

|

max_snapshot_count |

integer |

Number of snapshots that may be loaded in the engine at the same time. New snapshots can be created via auto or forced update. If the number of snapshots of a graph reaches this threshold, no more auto-updates will be performed, and a forced update will result in an exception until one or more snapshots are removed from memory. A value of zero indicates to support an unlimited amount of snapshots. |

0 |

|

memory_cleanup_interval |

integer |

Memory cleanup interval in seconds. |

600 |

|

ms_bfs_frontier_type_strategy |

enum[auto_grow, short, int] |

The type strategy to use for MS-BFS frontiers. |

auto_grow |

|

num_spin_locks |

integer |

Number of spin locks each generated app will create at instantiation. Trade-off: a small number implies less memory consumption; a large number implies faster execution (if algorithm uses spin locks). |

1024 |

|

num_workers_analysis |

integer |

Number of worker threads to use for analysis tasks. |

<no-of-cpus> |

|

num_workers_fast_track_analysis |

integer |

Number of worker threads to use for fast-track analysis tasks. |

1 |

|

num_workers_io |

integer |

Number of worker threads to use for I/O tasks (load/refresh/write from/to disk). This value will not affect file-based loaders, because they are always single-threaded. Database loaders will open a new connection for each I/O worker. |

<no-of-cpus> |

|

pattern_matching_semantic |

enum[isomorphism, homomorphism] |

The graph pattern-matching semantic, which is either homomorphism or isomorphism. |

homomorphism |

|

parallelization_strategy |

enum[segmented, task_stealing, task_stealing_counted, rts] |

Parallelization strategy to use: segmented = split work into segments, use 1 thread per segment; task_stealing = F/J pool using recursive actions; task_stealing_counted = F/J pool using counted completers to reduce joins; rts = experimental run-time system. |

task_stealing_counted |

|

random_generator_strategy |

enum[non_deterministic, deterministic] |

Mmethod of generating random numbers in the in-memory analyst. |

non_deterministic |

|

random_seed |

long |

[relevant for deterministic random number generator only] Seed for the deterministic random number generator used in the in-memory analyst. The default is -24466691093057031. |

-24466691093057031 |

|

release_memory_threshold |

number |

Threshold percentage (decimal fraction) of used memory after which the engine starts freeing unused graphs. Examples: A value of 0.0 means graphs get freed as soon as their reference count becomes zero. That is, all sessions which loaded that graph were destroyed/timed out. A value of 1.0 means graphs never get freed, and the engine will throw OutOfMemoryErrors as soon as a graph is needed which does not fit in memory anymore. A value of 0.7 means the engine keeps all graphs in memory as long as total memory consumption is below 70% of total available memory, even if there is currently no session using them. When consumption exceeds 70% and another graph needs to get loaded, unused graphs get freed until memory consumption is below 70% again. |

0.85 |

|

session_idle_timeout_secs |

integer |

Timeout of idling sessions in seconds. Zero (0) means no timeout |

0 |

|

session_task_timeout_secs |

integer |

Timeout in seconds to interrupt long-running tasks submitted by sessions (algorithms, I/O tasks). Zero (0) means no timeout. |

0 |

|

small_task_length |

integer |

Task length if the total amount of work is smaller than default task length (only relevant for task-stealing strategies). |

128 |

|

spark_streams_interface |

string |

The name of an interface will be used for spark data communication. |

null |

|

strict_mode |

boolean |

If true, exceptions are thrown and logged with ERROR level whenever the engine encounters configuration problems, such as invalid keys, mismatches, and other potential errors. If false, the engine logs problems with ERROR/WARN level (depending on severity) and makes best guesses and uses sensible defaults instead of throwing exceptions. |

true |

|

task_length |

integer |

Default task length (only relevant for task-stealing strategies). Should be between 100 and 10000. Trade-off: a small number implies more fine-grained tasks are generated, higher stealing throughput; a large number implies less memory consumption and GC activity. |

4096 |

|

tmp_dir |

string |

Temporary directory to store compilation artifacts and other temporary data. If set to <system-tmp-dir>, uses the standard tmp directory of the underlying system (/tmp on Linux). |

<system-tmp-dir> |

|

use_string_pool |

boolean |

If true, the in-memory analyst will store string properties in a pool in order to consume less memory on string properties. |

true |

Example 6-1 Minimal In-Memory Analyst Configuration

The following example causes the in-memory analyst to initialize its analysis thread pool with 32 workers. (Default values are used for all other parameters.)

{ "num_workers_analysis": 32 }

Example 6-2 In-Memory Analyst Configuration with Two Fixed Graphs

The following example specifies additional parameters, including the graphs parameter to load two fixed graphs into memory during in-memory analyst startup. This feature helps to avoid redundancy when you need the same graph configuration pre-loaded and for standalone use later to reference the graph.

{

"num_workers_analysis": 32,

"num_workers_fast_track_analysis": 32,

"memory_cleanup_interval": 600,

"max_active_sessions": 1,

"release_memory_threshold": 0.2,

"graphs": ["graph-configs/my-graph.bin.json", "graph-configs/my-other-graph.adj.json"]

}

Example 6-3 In-Memory Analyst Configuration with Non-Default Run-Time Values

The following example specifies some parameters to configure in-memory analyst run-time behavior.

{

"num_workers_analysis": 32,

"num_spin_locks": 128,

"task_length": 1024,

"array_factory_strategy": "java_arrays"

}

6.2.1 Specifying the Configuration File to the In-Memory Analyst

The in-memory analyst configuration file is parsed by the in-memory analyst at startup-time whenever ServerInstance#startEngine (or any of its variants) is called. You can write the path to your configuration file to the in-memory analyst or specify it programmatically. This topic identifies several ways to specify the file

Programmatically

All configuration fields exist as Java enums. Example:

Map<PgxConfig.Field, Object> pgxCfg = new HashMap<>(); pgxCfg.put(PgxConfig.Field.NUM_WORKERS_ANALYSIS, 32); ServerInstance instance = ... instance.startEngine(pgxCfg);

All parameters not explicitly set will get default values.

Explicitly Using a File

Instead of a map, you can write the path to an in-memory analyst configuration JSON file. Example:

instance.startEngine("path/to/pgx.conf"); // file on local file system

instance.startEngine("hdfs:/path/to/pgx.conf"); // file on HDFS (required $HADOOP_CONF_DIR on the classpath)

instance.startEngine("classpath:/path/to/pgx.conf"); // file on current classpath

For all other protocols, you can write directly in the input stream to a JSON file. Example:

InputStream is = ... instance.startEngine(is);

Implicitly Using a File

If startEngine() is called without an argument, the in-memory analyst looks for a configuration file at the following places, stopping when it finds the file:

-

File path found in the Java system property

pgx_conf. Example:java -Dpgx_conf=conf/my.pgx.config.json ... -

A file named

pgx.confin the root directory of the current classpath -

A file named

pgx.confin the root directory relative to the currentSystem.getProperty("user.dir")directory

Note: Providing a configuration is optional. A default value for each field will be used if the field cannot be found in the given configuration file, or if no configuration file is provided.

Using the Local Shell

To change how the shell configures the local in-memory analyst instance, edit $PGX_HOME/conf/pgx.conf. Changes will be reflected the next time you invoke $PGX_HOME/bin/pgx.

You can also change the location of the configuration file as in the following example:

./bin/pgx --pgx_conf path/to/my/other/pgx.conf

Setting System Properties

Any parameter can be set using Java system properties by writing -Dpgx.<FIELD>=<VALUE> arguments to the JVM that the in-memory analyst is running on. Note that setting system properties will overwrite any other configuration. The following example sets the maximum off-heap size to 256 GB, regardless of what any other configuration says:

java -Dpgx.max_off_heap_size=256000 ...

Setting Environment Variables

Any parameter can also be set using environment variables by adding 'PGX_' to the environment variable for the JVM in which the in-memory analyst is executed. Note that setting environment variables will overwrite any other configuration; but if a system property and an environment variable are set for the same parameter, the system property value is used. The following example sets the maximum off-heap size to 256 GB using an environment variable:

PGX_MAX_OFF_HEAP_SIZE=256000 java ...

6.3 Reading Custom Graph Data

You can read your own custom graph data. This example creates a graph, alters it, and shows how to read it properly.

This graph uses the adjacency list format, but the in-memory analyst supports several graph formats.

The main steps are the following.

6.3.1 Creating a Simple Graph File

This example creates a small, simple graph in adjacency list format with no vertex or edge properties. Each line contains the vertex (node) ID, followed by the vertex IDs to which its outgoing edges point:

1 2 2 3 4 3 4 4 2

In this list, a single space separates the individual tokens. The in-memory analyst supports other separators, which you can specify in the graph configuration file.

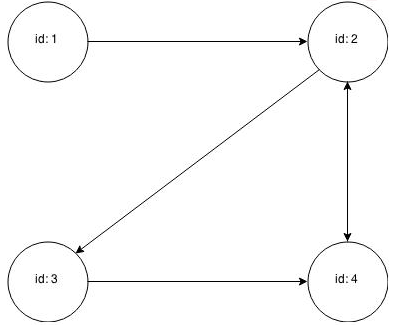

Figure 6-2 shows the data rendered as a property graph with 4 vertices and 5 edges. (There are two edges between vertex 2 and vertex 4, each pointing in a direction opposite form the other.)

Reading a graph into the in-memory analyst requires a graph configuration. You can provide the graph configuration using either of these methods:

-

Write the configuration settings in JSON format into a file

-

Using a Java

GraphConfigBuilderobject.

The following examples show both methods.

JSON Configuration

{

"uri": "graph.adj",

"format":"adj_list",

"separator":" "

}

Java Configuration

import oracle.pgx.config.FileGraphConfig;

import oracle.pgx.config.Format;

import oracle.pgx.config.GraphConfigBuilder;

FileGraphConfig config = GraphConfigBuilder

.forFileFormat(Format.ADJ_LIST)

.setUri("graph.adj")

.setSeparator(" ")

.build();

6.3.2 Adding a Vertex Property

The graph in "Creating a Simple Graph File" consists of vertices and edges, without vertex or edge properties. Vertex properties are positioned directly after the source vertex ID in each line. The graph data would look like this if you added a double vertex (node) property with values 0.1, 2.0, 0.3, and 4.56789 to the graph:

1 0.1 2 2 2.0 3 4 3 0.3 4 4 4.56789 2

Note:

The in-memory analyst supports only homogeneous graphs, in which all vertices have the same number and type of properties.

For the in-memory analyst to read the modified data file, you must add a vertex (node) property in the configuration file or the builder code. The following examples provide a descriptive name for the property and set the type to double.

JSON Configuration

{

"uri": "graph.adj",

"format":"adj_list",

"separator":" ",

"node_props":[{

"name":"double-prop",

"type":"double"

}]

}

Java Configuration

import oracle.pgx.common.types.PropertyType;

import oracle.pgx.config.FileGraphConfig;

import oracle.pgx.config.Format;

import oracle.pgx.config.GraphConfigBuilder;

FileGraphConfig config = GraphConfigBuilder.forFileFormat(Format.ADJ_LIST)

.setUri("graph.adj")

.setSeparator(" ")

.addNodeProperty("double-prop", PropertyType.DOUBLE)

.build();

6.3.3 Using Strings as Vertex Identifiers

The previous examples used integer vertex (node) IDs. The default in In-Memory Analytics is integer vertex IDs, but you can define a graph to use string vertex IDs instead.

This data file uses "node 1", "node 2", and so forth instead of just the digit:

"node 1" 0.1 "node 2" "node 2" 2.0 "node 3" "node 4" "node 3" 0.3 "node 4" "node 4" 4.56789 "node 2"

Again, you must modify the graph configuration to match the data file:

JSON Configuration

{

"uri": "graph.adj",

"format":"adj_list",

"separator":" ",

"node_props":[{

"name":"double-prop",

"type":"double"

}],

"node_id_type":"string"

}

Java Configuration

import oracle.pgx.common.types.IdType;

import oracle.pgx.common.types.PropertyType;

import oracle.pgx.config.FileGraphConfig;

import oracle.pgx.config.Format;

import oracle.pgx.config.GraphConfigBuilder;

FileGraphConfig config = GraphConfigBuilder.forFileFormat(Format.ADJ_LIST)

.setUri("graph.adj")

.setSeparator(" ")

.addNodeProperty("double-prop", PropertyType.DOUBLE)

.setNodeIdType(IdType.STRING)

.build();

Note:

string vertex IDs consume much more memory than integer vertex IDs.

Any single or double quotes inside the string must be escaped with a backslash (\).

Newlines (\n) inside strings are not supported.

6.3.4 Adding an Edge Property

This example adds an edge property of type string to the graph. The edge properties are positioned after the destination vertex (node) ID.

"node1" 0.1 "node2" "edge_prop_1_2" "node2" 2.0 "node3" "edge_prop_2_3" "node4" "edge_prop_2_4" "node3" 0.3 "node4" "edge_prop_3_4" "node4" 4.56789 "node2" "edge_prop_4_2"

The graph configuration must match the data file:

JSON Configuration

{

"uri": "graph.adj",

"format":"adj_list",

"separator":" ",

"node_props":[{

"name":"double-prop",

"type":"double"

}],

"node_id_type":"string",

"edge_props":[{

"name":"edge-prop",

"type":"string"

}]

}

Java Configuration

import oracle.pgx.common.types.IdType;

import oracle.pgx.common.types.PropertyType;

import oracle.pgx.config.FileGraphConfig;

import oracle.pgx.config.Format;

import oracle.pgx.config.GraphConfigBuilder;

FileGraphConfig config = GraphConfigBuilder.forFileFormat(Format.ADJ_LIST)

.setUri("graph.adj")

.setSeparator(" ")

.addNodeProperty("double-prop", PropertyType.DOUBLE)

.setNodeIdType(IdType.STRING)

.addEdgeProperty("edge-prop", PropertyType.STRING)

.build();

6.4 Storing Graph Data on Disk

After reading a graph into memory using either Java or the Shell, you can store it on disk in different formats. You can then use the stored graph data as input to the in-memory analyst at a later time.

Storing graphs over HTTP/REST is currently not supported.

The options include the following.

6.4.1 Storing the Results of Analysis in a Vertex Property

These examples read a graph into memory and analyze it using the Pagerank algorithm. This analysis creates a new vertex property to store the PageRank values.

Using the Shell to Run PageRank

pgx> g = session.readGraphWithProperties("/opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json", "my-graph")

==> ...

pgx> rank = analyst.pagerank(g, 0.001, 0.85, 100)

Using Java to Run PageRank

PgxGraph g = session.readGraphWithProperties("/opt/oracle/oracle-spatial-graph/property_graph/examples/pgx/graphs/sample.adj.json", "my-graph");

VertexProperty<Integer, Double> rank = session.createAnalyst().pagerank(g, 0.001, 0.85, 100);

Using JavaScript to Run PageRank

let p = pgx.connect(url, options);

p.then(function(session) {

return session.readGraphWithProperties(jsonContent);

}).then(function(graph) {

return graph.session.analyst.pagerank(graph);

});

6.4.2 Storing a Graph in Edge-List Format on Disk

This example stores the graph, the result of the Pagerank analysis, and all original edge properties as a file in edge-list format on disk.

To store a graph, you must specify:

-

The graph format

-

A path where the file will be stored

-

The properties to be stored. Specify VertexProperty.

ALLor EdgeProperty.ALLto store all properties, or VertexProperty.NONEor EdgePropery.NONEto store no properties. To specify individual properties, pass in the VertexProperty or EdgeProperty objects you want to store. -

A flag that indicates whether to overwrite an existing file with the same name

The following examples store the graph data in /tmp/sample_pagerank.elist, with the /tmp/sample_pagerank.elist.json configuration file. The return value is the graph configuration for the stored file. You can use it to read the graph again.

Using the Shell to Store a Graph

pgx> config = g.store(Format.EDGE_LIST, "/tmp/sample_pagerank.elist", [rank], EdgeProperty.ALL, false)

==> {"uri":"/tmp/sample_pagerank.elist","edge_props":[{"type":"double","name":"cost"}],"vertex_id_type":"integer","loading":{},"format":"edge_list","attributes":{},"vertex_props":[{"type":"double","name":"pagerank"}],"error_handling":{}}

Using Java to Store a Graph

import oracle.pgx.api.*; import oracle.pgx.config.*; FileGraphConfig config = g.store(Format.EDGE_LIST, "/tmp/sample_pagerank.elist", Collections.singletonList(rank), EdgeProperty.ALL, false);

Using JavaScript to Store a Graph

let p = graph.store({format: 'EDGE_LIST', targetPath: '/tmp/sample_pagerank.elist'});

6.5 Executing Built-in Algorithms

The in-memory analyst contains a set of built-in algorithms that are available as Java APIs.

This topic describes the use of the in-memory analyst using Triangle Counting and Pagerank analytics as examples.

6.5.1 About the In-Memory Analyst

The in-memory analyst contains a set of built-in algorithms that are available as Java APIs. The details of the APIs are documented in the Javadoc that is included in the product documentation library. Specifically, see the Analyst class Method Summary for a list of the supported in-memory analyst methods.

For example, this is the Pagerank procedure signature:

/** * Classic pagerank algorithm. Time complexity: O(E * K) with E = number of edges, K is a given constant (max * iterations) * * @param graph * graph * @param e * maximum error for terminating the iteration * @param d * damping factor * @param max * maximum number of iterations * @return Vertex Property holding the result as a double */ public <ID> VertexProperty<ID, Double> pagerank(PgxGraph graph, double e, double d, int max);

6.5.2 Running the Triangle Counting Algorithm

For triangle counting, the sortByDegree boolean parameter of countTriangles() allows you to control whether the graph should first be sorted by degree (true) or not (false). If true, more memory will be used, but the algorithm will run faster; however, if your graph is very large, you might want to turn this optimization off to avoid running out of memory.

Using the Shell to Run Triangle Counting

pgx> analyst.countTriangles(graph, true) ==> 1

Using Java to Run Triangle Counting

import oracle.pgx.api.*; Analyst analyst = session.createAnalyst(); long triangles = analyst.countTriangles(graph, true);

Using JavaScript to Run Triangle Counting

p.then(function(graph) {

return graph.session.analyst.countTriangles(graph, true);

})

The algorithm finds one triangle in the sample graph.

Tip:

When using the in-memory analyst shell, you can increase the amount of log output during execution by changing the logging level. See information about the :loglevel command with :h :loglevel.

6.5.3 Running the Pagerank Algorithm

Pagerank computes a rank value between 0 and 1 for each vertex (node) in the graph and stores the values in a double property. The algorithm therefore creates a vertex property of type double for the output.

In the in-memory analyst, there are two types of vertex and edge properties:

-

Persistent Properties: Properties that are loaded with the graph from a data source are fixed, in-memory copies of the data on disk, and are therefore persistent. Persistent properties are read-only, immutable and shared between sessions.

-

Transient Properties: Values can only be written to transient properties, which are session private. You can create transient properties by calling

createVertexPropertyandcreateEdgePropertyonPgxGraphobjects.

This example obtains the top three vertices with the highest Pagerank values. It uses a transient vertex property of type double to hold the computed Pagerank values. The Pagerank algorithm uses the following default values for the input parameters: error (tolerance = 0.001, damping factor = 0.85, and maximum number of iterations = 100.

Using the Shell to Run Pagerank

pgx> rank = analyst.pagerank(graph, 0.001, 0.85, 100); ==> ... pgx> rank.getTopKValues(3) ==> 128=0.1402019732468347 ==> 333=0.12002296283541904 ==> 99=0.09708583862990475

Using Java to Run Pagerank

import java.util.Map.Entry;

import oracle.pgx.api.*;

Analyst analyst = session.createAnalyst();

VertexProperty<Integer, Double> rank = analyst.pagerank(graph, 0.001, 0.85, 100);

for (Entry<Integer, Double> entry : rank.getTopKValues(3)) {

System.out.println(entry.getKey() + "=" + entry.getValue());

}

Using JavaScript to Run Pagerank

p.then(function(graph) {

return graph.session.analyst.pagerank(graph, {e: 0.001, d: 0.85, max: 100});

});

6.6 Creating Subgraphs

You can create subgraphs based on a graph that has been loaded into memory.

You can use filter expressions or create bipartite subgraphs based on a vertex (node) collection that specifies the left set of the bipartite graph.

For information about reading a graph into memory, see Reading Graph Data into Memory.

- About Filter Expressions

- Using a Simple Edge Filter to Create a Subgraph

- Using a Simple Vertex Filter to Create a Subgraph

- Using a Complex Filter to Create a Subgraph

- Combining Expression Filters

- Using an Expression Filter to Create a Set of Vertices or Edges

- Using a Vertex Set to Create a Bipartite Subgraph

6.6.1 About Filter Expressions

Filter expressions are expressions that are evaluated for either each vertex or each edge. The expression can define predicates that an edge must fulfill to be contained in the result, in this case a subgraph.

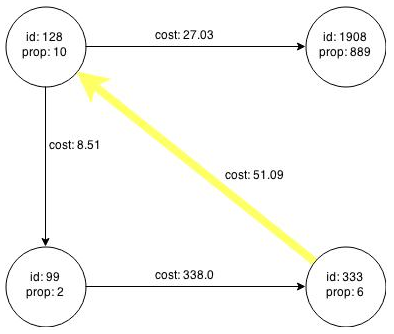

Consider the graph in Figure 6-1, which consists of four vertices (nodes) and four edges. For an edge to match the filter expression src.prop1 == 10, the source vertex prop property must equal 10. Two edges match that filter expression, as shown in Figure 6-3.

The following edge filter expression:

src.prop1 == 10

specifies that each edge where the source vertex’s property named prop1 has the value 10 will match the filter expression. In this case the following edges match the expression:

-

The edge across the top (

cost: 27.03) from vertexid: 128 to vertexid: 1908 -

The edge on the left (

cost: 8.51) from vertexid: 128 to vertexid: 99

Figure 6-4 shows the subgraph that results when the preceding filter expression is applied. This subgraph includes the vertex with id: 128, the left and top edges, and the destination vertex of each edge (vertices with id: 1908 and id: 99).

Figure 6-4 Subgraph Created by the Simple Edge Filter

Description of "Figure 6-4 Subgraph Created by the Simple Edge Filter"

The following vertex filter expression:

vertex.prop1 < 10

specifies that each vertex where the property named prop1 has a value less than 10 will match the filter expression. In this case the following edge matches the expression:

-

The edge across the bottom (

cost: 338.0) from vertexid: 99 to vertexid: 333

Filter Expression Examples

-

Date. The following expression accesses the property

dateof an edge and checks if it is equal to03/27/2007 06:00.edge.date = date('2007-03-27 06:00:00') -

In/out degree.

inDegree()returns the number of incoming edges of a vertex, whileoutDegree()returns the number of outgoing edges of the vertex. In the following examples, src denotes the source (out) vertex of the current edge, while dst denotes the destination (in) vertex.src.inDegree() > 3 dst.outDegree() < 5

-

Label.

hasLabelreturnstrueif a vertex has a particular label. The following returnstrueif a vertex has acitylabel and if its population is greater than 10000.vertex.hasLabel('city') && (vertex.population > 10000) -

Label.

labelreturns the label of an edge. The following example returnstrueif an edge label is eitherfriend_oforclicked_by.edge.label() = 'friend_of' || edge.label() = 'clicked_by'

-

Type Conversion: The following example converts the value of the cost property of the source vertex to an integer.

(int) src.cost

-

Arithmetic Support: The following examples show arithmetic expressions that can be used in filter expressions.

1 + 5 -vertex.degree() edge.cost * 2 > 5 src.value * 2.5 = (dst.inDegree() + 5) / dst.outDegree()

6.6.2 Using a Simple Edge Filter to Create a Subgraph

The following examples create the first subgraph described in About Filter Expressions.

Using the Shell to Create a Subgraph

subgraph = graph.filter(new EdgeFilter("src.prop1 == 10"))

Using Java to Create a Subgraph

import oracle.pgx.api.*;

import oracle.pgx.api.filter.*;

PgxGraph graph = session.readGraphWithProperties(...);

PgxGraph subgraph = graph.filter(new EdgeFilter("src.prop1 == 10"));

Using JavaScript to create a Subgraph

return graph.filter(pgx.createEdgeFilter("src.prop1 == 10"));

6.6.3 Using a Simple Vertex Filter to Create a Subgraph

The following examples create the second subgraph described in About Filter Expressions.

Using the Shell to Create a Subgraph

subgraph = graph.filter(new VertexFilter("vertex.prop1 < 10"))

Using Java to Create a Subgraph

import oracle.pgx.api.*;

import oracle.pgx.api.filter.*;

PgxGraph graph = session.readGraphWithProperties(...);

PgxGraph subgraph = graph.filter(new VertexFilter("src.prop1 < 10"));

Using JavaScript to create a Subgraph

return graph.filter(pgx.createVertexFilter("vertex.prop1 < 10"));

6.6.4 Using a Complex Filter to Create a Subgraph

This example uses a slightly more complex filter. It uses the outDegree function, which calculates the number of outgoing edges for an identifier (source src or destination dst). The following filter expression matches all edges with a cost property value greater than 50 and a destination vertex (node) with an outDegree greater than 1.

dst.outDegree() > 1 && edge.cost > 50

One edge in the sample graph matches this filter expression, as shown in Figure 6-5.

Figure 6-5 Edges Matching the outDegree Filter

Description of "Figure 6-5 Edges Matching the outDegree Filter"

Figure 6-6 shows the graph that results when the filter is applied. The filter excludes the edges associated with vertixes 99 and 1908, and so excludes those vertices also.

Figure 6-6 Graph Created by the outDegree Filter

Description of "Figure 6-6 Graph Created by the outDegree Filter"

6.6.5 Combining Expression Filters

You can combine vertex filters with edge filters.

Both filters are evaluated separately and afterwards merged by creating either of the following:

-

A union of the results

-

An intersection of the results

Creating a Union of Two Filters

If you perform a union of the edge filter:

src.prop1 == 10

and the vertex filter:

vertex.prop1 < 10

Then the result is shown in the following graph.

Shell Example:

edgeFilter = new EdgeFilter("src.prop1 == 10")

vertexFilter = new VertexFilter("vertex.prop1 < 10")

filter = edgeFilter.union(vertexFilter)

subgraph = g.filter(filter)

Java Example:

import oracle.pgx.api.filter.*;

...

EdgeFilter edgeFilter = new EdgeFilter("src.prop1 == 10");

VertexFilter vertexFilter = new VertexFilter("vertex.prop1 < 10");

GraphFilter filter = edgeFilter.union(vertexFilter);

PgxGraph subgraph = g.filter(filter);

JavaScript Example:

return p.then(function(graph) {

let edgeFilter = pgx.createEdgeFilter("src.prop1 == 10");

let vertexFilter = pgx.createVertexFilter("vertex.prop1 < 10");

let filter = edgeFilter.union(vertexFilter);

return graph.filter(filter);

});

Creating an Intersection of Two Filters

Creating the intersection of the filters mentioned in the union example will result in the following graph, which consists only of a single vertex.

Shell Example:

edgeFilter = new EdgeFilter("src.prop1 == 10")

vertexFilter = new VertexFilter("vertex.prop1 < 10")

filter = edgeFilter.intersect(vertexFilter)

subgraph = g.filter(filter)

Java Example:

import oracle.pgx.filter.expressions.*;

...

EdgeFilter edgeFilter = new EdgeFilter("src.prop1 == 10");

VertexFilter vertexFilter = new VertexFilter("vertex.prop1 < 10");

GraphFilter filter = edgeFilter.intersect(vertexFilter);

PgxGraph subgraph = g.filter(filter);

JavaScript Example:

return p.then(function(graph) {

let edgeFilter = pgx.createEdgeFilter("src.prop1 == 10");

let vertexFilter = pgx.createVertexFilter("vertex.prop1 < 10");

let filter = edgeFilter.intersect(vertexFilter);

return graph.filter(filter);

});

6.6.6 Using an Expression Filter to Create a Set of Vertices or Edges

In addition to using expression filters to create a subgraph (discussed in other topics), you can use them to select just a set of vertices or edges from a graph.

For example, you can create a vertex set on the sample graph from About Filter Expressions using the following vertex filter expression:

vertex.prop1 < 10

This yields the following set: vertices with ID values 99 and 333.

Example 6-4 Creating a Vertex Set

Shell Example:

vertices = g.getVertices( new VertexFilter("vertex.prop1 < 10") )

==> PgxVertex with ID 99

==> PgxVertex with ID 333

Java Example:

import oracle.pgx.api.*;

import oracle.pgx.filter.expressions.*;

...

VertexSet<Integer> = g.getVertices( new VertexFilter("vertex.prop1 < 10") );

Example 6-5 Creating an EdgeSet

Shell Example:

edges = g.getEdges( new EdgeFilter("src.prop1 == 10") )

==> PgxEdge with ID 0

==> PgxEdge with ID 1

Java Example:

import oracle.pgx.api.*;

import oracle.pgx.filter.expressions.*;

...

EdgeSet = g.getEdges( new EdgeFilter("src.prop1 == 10") );

6.6.7 Using a Vertex Set to Create a Bipartite Subgraph

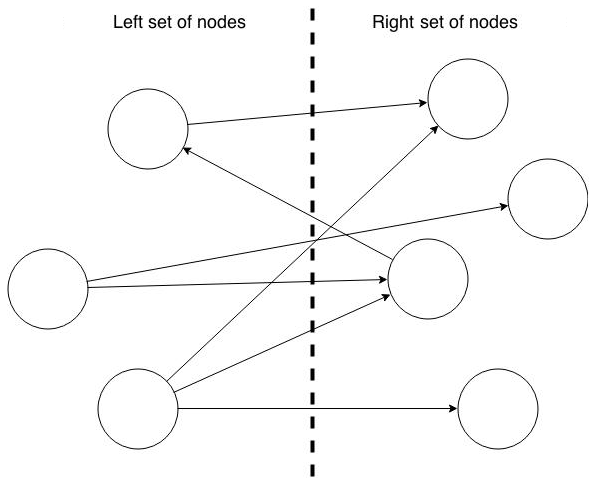

You can create a bipartite subgraph by specifying a set of vertices (nodes), which are used as the left side. A bipartite subgraph has edges only between the left set of vertices and the right set of vertices. There are no edges within those sets, such as between two nodes on the left side. In the in-memory analyst, vertices that are isolated because all incoming and outgoing edges were deleted are not part of the bipartite subgraph.

The following figure shows a bipartite subgraph. No properties are shown.

The following examples create a bipartite subgraph from the simple graph created in Figure 6-1. They create a vertex collection and fill it with the vertices for the left side.

Using the Shell to Create a Bipartite Subgraph

pgx> s = graph.createVertexSet() ==> ... pgx> s.addAll([graph.getVertex(333), graph.getVertex(99)]) ==> ... pgx> s.size() ==> 2 pgx> bGraph = graph.bipartiteSubGraphFromLeftSet(s) ==> PGX Bipartite Graph named sample-sub-graph-4

Using Java to Create a Bipartite Subgraph

import oracle.pgx.api.*; VertexSet<Integer> s = graph.createVertexSet(); s.addAll(graph.getVertex(333), graph.getVertex(99)); BipartiteGraph bGraph = graph.bipartiteSubGraphFromLeftSet(s);

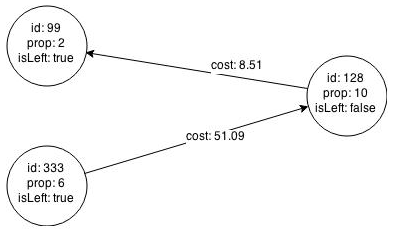

When you create a subgraph, the in-memory analyst automatically creates a Boolean vertex (node) property that indicates whether the vertex is on the left side. You can specify a unique name for the property.

The resulting bipartite subgraph looks like this:

Vertex 1908 is excluded from the bipartite subgraph. The only edge that connected that vertex extended from 128 to 1908. The edge was removed, because it violated the bipartite properties of the subgraph. Vertex 1908 had no other edges, and so was removed also.

6.7 Using Pattern-Matching Queries with Graphs

You can issue a pattern-matching query against an in-memory graph, and then work with the results of that query.

Data Sets for the Pattern-Matching Query Examples

The pattern-matching examples use two different data sets:

-

Relationships between politicians, athletes, celebrities, and companies

-

An electrical network with devices, connections and switches

Submitting the Pattern-Matching Queries Using PGQL

You can submit a graph pattern-matching query in the Property Graph Suery Language (PGQL), a SQL-like declarative language that allows you to express a pattern consisting of vertices and edges, plus constraints on the properties of the vertices and edges.

To submit a query to the in-memory analyst, you can use the queryPgql() Java method of PgxGraph (the type of object you get when you load a graph using the session), or you can use the equivalent JavaScript function. Java example:

The following topics use pattern matching in queries.

6.7.1 Example: The Enemy of My Enemy is My Friend

The example in this topic describes a graph pattern inspired by the famous ancient proverb, The enemy of my enemy is my friend. Specifically, the graph includes two entities that are connected by two edges of the feuds edge label. Vertices represent people or clans or countries. A pair of vertices that are feuding with each other will have an edge with the feuds edge label.

Such a query is written in PGQL as follows:

SELECT x.name, z.name

WHERE

(x) -[e1:feuds]-> (y),

(y) -[e2:feuds]-> (z),

x != y

ORDER BY x.name, z.name

Note that in the preceding query, the results are ordered by x.name and then z.name.

Submit the query to PGX:

Shell Example:

pgx> resultSet = connectionsGraph.queryPgql("SELECT x.name, z.name WHERE (x) -[e1:feuds]-> (y), (y) -[e2:feuds]-> (z), x != z ORDER BY x.name, z.name")

Java Example:

import oracle.pgx.api.*;

...

PgqlResultSet resultSet = connectionsGraph.queryPgql("SELECT x.name, z.name WHERE (x) -[e1:feuds]-> (y), (y) -[e2:feuds]-> (z), x != z ORDER BY x.name, z.name");

JavaScript Example:

return p.then(function(graph) {

return graph.queryPgql("SELECT x.name, z.name WHERE (x) -[e1:feuds]-> (y), (y) -[e2:feuds]-> (z), x != z ORDER BY x.name, z.name");

});

PgqlResultSet manages a result set of a query. A result set contains multiple results (such a query may match many sub-graphs). Each result consists of a list of result elements. The order of result elements follows the order of variables in the SELECT clause of a query.

Iterating over a query results means iterating over a set of PgqlResultElement instances. A PgqlResultElement maintains the type and variable name of a result element.

You can get the list of PgqlResultElement instances as follows:

Shell Example:

pgx> resultElements = resultSet.getPgqlResultElements()

Java Example:

import oracle.pgx.api.*; import java.util.List; ... List<PgqlResultElement> resultElements = resultSet.getPgqlResultElements();

JavaScript Example:

return p.then(function(resultSet) {

console.log(resultSet.resultElements);

});

Get the type and variable name of the first result element:

Shell Example:

pgx> resultElement = resultElements.get(0) pgx> type = resultElement.getElementType() // STRING pgx> varName = resultElement.getVarName() // x.name

Java Example:

import oracle.pgx.api.*; ... PgqlResultElement resultElement = resultElements.get(0); PqglResultElement.Type = resultElement.getElementType(); // STRING String varName = resultElement.getVarName(); // x.name

JavaScript Example:

return p.then(function(resultSet) {

console.log(resultSet.resultElements[0].varName);

console.log(resultSet.resultElements[0].elementType);

});

Iterate over a result set using the for-each style for loop. In the loop, you get a PgqlResult instance that contains a query result.

Shell Example:

pgx> resultSet.getResults().each { \

// the variable 'it' is implicitly declared to references each PgqlResult instance

}

Java Example:

import oracle.pgx.api.*;

...

for (PgqlResult result : resultSet.getResults()) {

...

}

JavaScript Example:

return p.then(function(resultSet) {

return resultSet.iterate(function(row) {

console.log(row);

});

});

In the shell, you can conveniently print out the result set in textual format using print method of PqglResultSet.

pgx> resultSet.print(10) // print the first 10 results

You will see the following results:

-------------------------------- | x.name | z.name | ================================ | ABC | CBS | | ABC | NBC | | Alibaba | Beyonce | | Alibaba | Google | | Alibaba | eBay | | Amazon | Carl Icahn | | Amazon | Facebook | | Amazon | Tencent | | Angela Merkel | Barack Obama | | Angela Merkel | John Kerry | --------------------------------

You can also get a handle of individual PgqlResult instances or their elements.

By the index of the result element:

pgx> nameX = it.getString(0) pgx> nameZ = it.getString(1)

By the variable name of the result element:

pgx> nameX = it.getString("x.name")

pgx> nameZ = it.getString("z.name")

You can also get a result element without knowing its type:

pgx> nameX = it.get(0)

// or

pgx> nameX = it.get("x.name")

In JavaScript, you can access result elements by the variable name like this:

return p.then(function(resultSet) {

return resultSet.iterate(function(row) {

console.log(row['n']);

console.log(row['n.pagerank']);

});

});

6.7.2 Example: Top 10 Most Collaborative People

This example finds the top 10 most collaborative people in the graph in a decreasing order of the number of collaborators. Such a query exploits various features of PGQL, which include grouping, aggregating, ordering, and limiting the graph patterns found in the WHERE clause. The following query string expresses a user's inquiry in PGQL.

pgx> resultSet = connectionsGraph.queryPgql("SELECT x.name, COUNT(*) AS num_collaborators WHERE (x) -[:collaborates]-> () GROUP BY x ORDER BY DESC(num_collaborators) LIMIT 10")

The preceding query does the following:

-

Find all collaboration relationship patterns from the graph by matching the `collaborates' edge label.

-

Group the found patterns by its source vertex.

-

Apply the count aggregation to each group to find the number of collaborators.

-

Order the groups by the number of collaborators in a decreasing order.

-

Take only the first 10 results.

The print() method shows the name and the number of collaborators of the top 10 collaborative people in the graph.

pgx> resultSet.print()

You will see the following results.

--------------------------------------------------- | x.name | num_collaborators | =================================================== | Barack Obama | 10 | | Charlie Rose | 4 | | Omar Kobine Layama | 3 | | Dieudonne Nzapalainga | 3 | | Nicolas Guerekoyame Gbangou | 3 | | NBC | 3 | | Pope Francis | 3 | | Beyonce | 2 | | Eric Holder | 2 | | Tom Steyer | 2 | ---------------------------------------------------

6.7.3 Example: Transitive Connectivity Between Electrical Devices

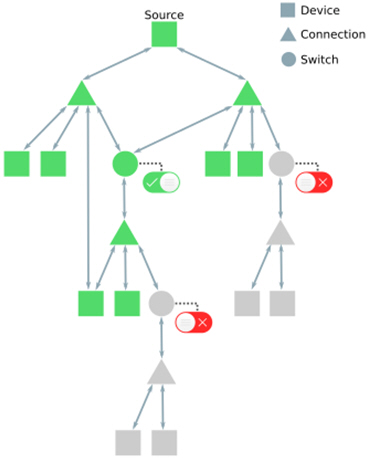

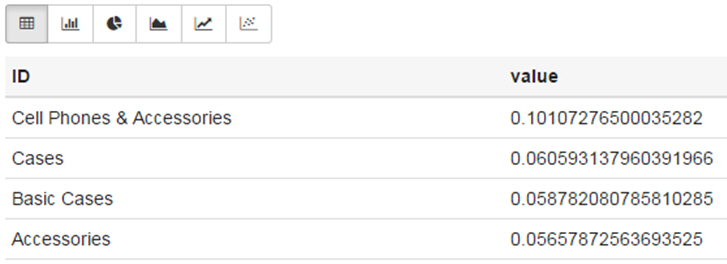

This example tests for reachability between vertices. It uses the electrical network graph in the following figure.

The example seeks to determine whether every Device in the graph is transitively connected to every other Device. Note that devices are connected by Connection vertices and Switch vertices.

First, find out how many devices there are in the graph by submitting the following PGQL query:

SELECT COUNT(*) AS numDevices WHERE (n:Device)

The result is 6031:

-------------- | numDevices | ============== | 6031 | --------------

For each device, count the number of devices that can be reached by following zero or more Connection or Switch vertices (and necessary edges). This query can be expressed in PGQL as follows:

PATH connects_to := () <- (/*:Connection|Switch*/) -> () SELECT n.nickname AS device, count(m) AS reachabilityCount WHERE (n:Device) -/:connects_to*/-> (m:Device) GROUP BY n ORDER BY COUNT(m), n.nickname

In the preceding query, express connectivity between two neighboring devices/connections is expressed using a path pattern connects_to. A Kleene star (*) expresses that the path pattern may repeatedly match zero or more times, with the goal of determining transitive connectivity. (The labels in the path pattern are commented out because the in-memory analyst does not yet support this feature.)

The query uses GROUP BY to make a group for each of the source devices n, and then counts the number of reachable destination devices m. The first 20 results are as follows:

------------------------------------------------ | device | reachabilityCount | ================================================ | 190-7361-M1089120 | 6031 | | 190-8581-D5587291-3_INT | 6031 | | 190-8593-D5860423-3_INT | 6031 | | 196-29518-L3122816 | 6031 | | 196-29519-L3066815 | 6031 | | 196-29520-L3160109 | 6031 | | 196-29521-N1136355 | 6031 | | 196-31070-D5861005-2_INT | 6031 | | 196-35541-M1108317 | 6031 | | 196-35813-N1140519 | 6031 | | 196-36167-L3011298 | 6031 | | 198-5320-221-311359 | 6031 | | 221-240988-L3141411 | 6031 | | 221-240991-L3066817 | 6031 | | 221-242079-L3011293 | 6031 | | 221-282818-N1230123 | 6031 | | 221-282819-N1230122 | 6031 | | 221-306686-L2970258 | 6031 | | 221-306687-L2916625 | 6031 | | 221-308718-L2803199 | 6031 | ------------------------------------------------

Because the results are sorted by increasing reachabilityCount and because even the first device in the results transitively connects to every device in the graph (reachabilityCount = 6031), you now know that all the devices in the graph are fully reachable from each other.

6.8 Starting the In-Memory Analyst Server

Big Data Spatial and Graph bundles a preconfigured version of Apache Tomcat that allows you to start the in-memory analyst server by running a script.

If you need to configure the server before starting it, see Configuring the In-Memory Analyst Server.

You can start the server by running the following script: /opt/oracle/oracle-spatial-graph/property_graph/pgx/bin/start-server

6.8.1 Configuring the In-Memory Analyst Server

You can configure the in-memory analyst server by modifying the /opt/oracle/oracle-spatial-graph/property_graph/pgx/conf/server.conf file. The following table shows the valid configuration options, which can be specified in JSON format:

Table 6-2 Configuration Options for In-Memory Analyst Server

| Option | Type | Description | Default |

|---|---|---|---|

|

authorization |

string |

File that maps clients to roles for authorization. |

server.auth.conf |

|

ca_certs |

array of string |

List of trusted certificates (PEM format). If 'enable_tls' is set to false, this option has no effect. |

[See information after this table.] |

|

enable_client_authentication |

boolean |

If true, the client is authenticated during TLS handshake. See the TLS protocol for details. This flag does not have any effect if 'enable_tls' is false. |

true |

|

enable_tls |

boolean |

If true, the server enables transport layer security (TLS). |

true |

|

port |

integer |

Port that the PGX server should listen on |

7007 |

|

server_cert |

string |

The path to the server certificate to be presented to TLS clients (PEM format). If 'enable_tls' is set to false, this option has no effect |

null |

|

server_private_key |

string |

the private key of the server (PKCS#8, PEM format). If 'enable_tls' is set to false, this option has no effect |

null |

The in-memory analyst web server enables two-way SSL/TLS (Transport Layer Security) by default. The server enforces TLS 1.2 and disables certain cipher suites known to be vulnerable to attacks. Upon a TLS handshake, both the server and the client present certificates to each other, which are used to validate the authenticity of the other party. Client certificates are also used to authorize client applications.

The following is an example server.conf configuration file:

{

"port": 7007,

"server_cert": "certificates/server_certificate.pem",

"server_private_key": "certificates/server_key.pem",

"ca_certs": [ "certificates/ca_certificate.pem" ],

"authorization": "auth/server.auth.conf",

"enable_tls": true,

"enable_client_authentication": true

}

The following is an example server.auth.conf configuration file: mapping client (applications) identified by their certificate DN string to roles:

{

"authorization": [{

"dn": "CN=Client, OU=Development, O=Oracle, L=Belmont, ST=California, C=US",

"admin": false

}, {

"dn": "CN=Admin, OU=Development, O=Oracle, L=Belmont, ST=California, C=US",

"admin": true

}]

}

You can turn off client-side authentication or SSL/TLS authentication entirely in the server configuration. However, we recommend having two-way SSL/TLS enabled for any production usage.

6.9 Deploying to Jetty

You can deploy the in-memory analyst to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server. This example shows how to deploy the in-memory analyst as a web application with Eclipse Jetty.

Note:

These steps are meant only for testing the in-memory analyst. For any serious deployment, you should configure Jetty to enforce proper authentication and authorization, store the credentials securely, and only allow connections over HTTPS.

-

Copy the in-memory analyst web application archive (WAR) file into the Jetty

webappsdirectory (replace<VERSION>with the actual version number):cp $PGX_HOME/server/shared-mem/pgx-webapp-<VERSION>.war.war $JETTY_HOME/webapps/pgx.war

-

Ensure that port 8080 is not already in use, and then start Jetty:

cd $JETTY_HOME java -jar start.jar

-

Verify that Jetty is working:

cd $PGX_HOME ./bin/pgx --base_url http://localhost:8080/pgx

-

(Optional) Modify the in-memory analyst configuration files.

The configuration file

(pgx.conf) and the logging parameters (log4j.xml) for the in-memory analyst engine are in the WAR file underWEB-INF/classes.After you make any changes, restart the server to enable the changes.

See Also:

The Jetty documentation for configuration and use at http://eclipse.org/jetty/documentation/

6.10 Deploying to Apache Tomcat

You can deploy the in-memory analyst to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic. This example shows how to deploy the in-memory analyst as a web application with Apache Tomcat.

Note:

These steps are meant only for testing the in-memory analyst. For any serious deployment, you should configure Apache Tomcat to enforce proper authentication and authorization, store the credentials securely, and only allow connections over HTTPS.

6.11 Deploying to Oracle WebLogic Server

You can deploy the in-memory analysts to Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server. This example shows how to deploy the in-memory analyst as a web application with Oracle WebLogic Server.

Note:

These steps are meant only for testing the in-memory analyst. For any serious deployment, you should configure WebLogic Server to enforce proper authentication and authorization, store the credentials securely, and only allow connections over HTTPS.

6.11.1 Installing Oracle WebLogic Server

To download and install the latest version of Oracle WebLogic Server, see

http://www.oracle.com/technetwork/middleware/weblogic/documentation/index.html

6.11.2 Deploying the In-Memory Analyst

To deploy the in-memory analyst to Oracle WebLogic, use commands like the following. Substitute your administrative credentials and WAR file for the values shown in this example:

. $MW_HOME/user_projects/domains/mydomain/bin/setDomainEnv.sh . $MW_HOME/wlserver/server/bin/setWLSEnv.sh java weblogic.Deployer -adminurl http://localhost:7001 -username username -password password -deploy -source $PGX_HOME/server/pgx-webapp-wls.war

If the script runs successfully, you will see a message like this one:

Target state: deploy completed on Server myserver

6.12 Connecting to the In-Memory Analyst Server

After the property graph in-memory analyst is deployed as a server, and installed in a Hadoop cluster -- or on a client system without Hadoop as a web application on Eclipse Jetty, Apache Tomcat, or Oracle WebLogic Server -- you can connect to the in-memory analyst server.

6.12.1 Connecting with the In-Memory Analyst Shell

The simplest way to connect to an in-memory analyst instance is to specify the base URL of the server.

You can use the --base_url command line argument to connect to a server running on that base URL. For example, if the server has SSL/TLS disabled, does not require any authentication, and is running on http://localhost:8080/pgx, you can connect to it using PGX shell by entering the following:

cd $PGX_HOME ./bin/pgx --base_url http://scott:tiger@localhost:8080/pgx

You can connect to a remote instance the same way.

If the server requires BASIC auth, you can specify the username and password as in the following example:

./bin/pgx --base_url http://localhost:8080/pgx --username scott --password <password-for-scott>

If the server has SSL/TLS enabled, you can specify the path to the trust store (in JKS format) which is used to validate the server certificate with the --truststore option.

./bin/pgx --base_url https://localhost:8080/pgx --truststore path/to/truststore.jks

If the server has two-way SSL/TLS enabled, you can specify the keystore file containing the client certificate and the keystore password with the --keystore and –-password options.

./bin/pgx --base_url https://localhost:8080/pgx --truststore path/to/truststore.jks --keystore path/to/keystore.jks --password <password>

6.12.1.1 About Logging HTTP Requests

The in-memory analyst shell suppresses all debugging messages by default. To see which HTTP requests are executed, set the log level for oracle.pgx to DEBUG, as shown in this example:

pgx> :loglevel oracle.pgx DEBUG ===> log level of oracle.pgx logger set to DEBUG pgx> session.readGraphWithProperties("sample_http.adj.json", "sample") 10:24:25,056 [main] DEBUG RemoteUtils - Requesting POST http://scott:tiger@localhost:8080/pgx/core/session/session-shell-6nqg5dd/graph HTTP/1.1 with payload {"graphName":"sample","graphConfig":{"uri":"http://path.to.some.server/pgx/sample.adj","separator":" ","edge_props":[{"type":"double","name":"cost"}],"node_props":[{"type":"integer","name":"prop"}],"format":"adj_list"}} 10:24:25,088 [main] DEBUG RemoteUtils - received HTTP status 201 10:24:25,089 [main] DEBUG RemoteUtils - {"futureId":"87d54bed-bdf9-4601-98b7-ef632ce31463"} 10:24:25,091 [pool-1-thread-3] DEBUG PgxRemoteFuture$1 - Requesting GET http://scott:tiger@localhost:8080/pgx/future/session/session-shell-6nqg5dd/result/87d54bed-bdf9-4601-98b7-ef632ce31463 HTTP/1.1 10:24:25,300 [pool-1-thread-3] DEBUG RemoteUtils - received HTTP status 200 10:24:25,301 [pool-1-thread-3] DEBUG RemoteUtils - {"stats":{"loadingTimeMillis":0,"estimatedMemoryMegabytes":0,"numEdges":4,"numNodes":4},"graphName":"sample","nodeProperties":{"prop":"integer"},"edgeProperties":{"cost":"double"}}

6.12.2 Connecting with Java

You can specify the base URL when you initialize the in-memory analyst using Java. An example is as follows. A URL to an in-memory analyst server is provided to the getInstance API call.

import oracle.pg.nosql.*;

import oracle.pgx.api.*;

PgNosqlGraphConfig cfg = GraphConfigBuilder.forNosql().setName("mygraph").setHosts(...).build();

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(cfg);

ServerInstance remoteInstance = Pgx.getInstance("http://scott:tiger@hostname:port/pgx");

PgxSession session = remoteInstance.createSession("my-session");

PgxGraph graph = session.readGraphWithProperties(opg.getConfig());

To specify the trust store, key store, and keystore password when connecting with Java, you must set the javax.net.ssl.trustStore, javax.net.ssl.keyStore, and javax.net.ssl.keyStorePassword system properties, respectively.

6.12.3 Connecting with JavaScript

You can specify the base URL when you initialize the in-memory analyst using JavaScript. An example is as follows. A URL to an in-memory analyst server is provided to the connect API call.

const pgx = require('oracle-pgx-client'); // oracle-pgx-client npm package

const fs = require('fs');

// options to connect to pgx server

let options = {

clientKey: fs.readFileSync('client_key.pem'),

clientCert: fs.readFileSync('client_cert.pem'),

caCert: fs.readFileSync('ca_cert.pem'),

passphrase: 'passphrase',

};

// connect to pgx server

let p = pgx.connect(url, options).then(function(session) {

return session.readGraphWithProperties(…); // load graph from pgx server

}).then(function(graph) {

return graph.session.analyst.pagerank(graph); // run pagerank algorithm in pgx server

}).catch(function(err) {

console.log("error: " + err);

});

6.13 Using the In-Memory Analyst in Distributed Mode

The in-memory analyst can be run in shared memory mode or distributed mode.

-

Shared memory mode

Multiple threads work in parallel on in-memory graph data stored in a single node (a single, shared memory space). In shared memory mode, the size of the graph is constrained by the physical memory size and by other applications running on the same node.

-

Distributed mode

To overcome the limitations of shared memory mode, you can run the in-memory analyst in distributed mode, in which multiple nodes (computers) form a cluster, partition a large property graph across distributed memory, and work together to provide efficient and scalable graph analytics.

For using the in-memory analyst feature in distributed mode, the following requirements apply to each node in the cluster:

-

GNU Compiler Collection (GCC) 4.8.2 or later

C++ standard libraries built upon 3.4.20 of the GNU C++ API are needed.

-

Ability to open a TCP port

Distributed in-memory analyst requires a designated TCP port to be open for initial handshaking. The default port number is 7777, but you can set it using the run-time parameter

pgx_side_channel_port. -

Ability to use InfiniBand or UDP on Ethernet

Data communication among nodes mainly uses InfiniBand (IB) or UDP on Ethernet. When using Ethernet, the machines in the cluster need to accept UDP packets from other computers.

-

JDK8 or later

To start the in-memory analyst in distributed mode, do the following. (For this example, assume that four nodes (computers) have been allocated for this purpose, and that they have the host names hostname0, hostname1, hostname2, and hostname3.)

On each of the nodes, log in and perform the following operations (modifying the details for your actual environment):

export PGX_HOME=/opt/oracle/oracle-spatial-graph/property_graph/pgx export LD_LIBRARY_PATH=$PGX_HOME/server/distributed/lib:$JAVA_HOME/jre/lib/amd64/server:$LD_LIBRARY_PATH cd $PGX_HOME/server/distributed ./bin/node ./package/main/ClusterHost.js -server_config=./package/options.json -pgx_hostnames=hostname0,hostname1,hostname2,hostname3

After the operations have successfully completed on all four nodes. you can see a log message similar to the following:

17:11:42,709 [hostname0] INFO pgx.dist.cluster_host - PGX.D Server listening on http://hostname0:8023/pgx

The distributed in-memory analyst in now up and running. It provides service through the following endpoint: http://hostname0:8023/pgx

This endpoint can be consumed in the same manner as a remotely deployed shared-memory analyst. You can use Java APIs, Groovy shells, and the PGX shell. An example of using the PGX shell is as follows:

cd $PGX_HOME ./bin/pgx --base_url=http://hostname0:8023/pgx

The following example uses the service from a Groovy shell for Oracle NoSQL Database:

opg-nosql> session=Pgx.createSession("http://hostname0:8023/pgx", "session-id-123");

opg-nosql> analyst=session.createAnalyst();

opg-nosql> pgxGraph = session.readGraphWithProperties(opg.getConfig());

The following is an example options.json file:

$ cat ./package/options.json

{

"pgx_use_infiniband": 1,

"pgx_command_queue_path": ".",

"pgx_builtins_path": "./lib",

"pgx_executable_path": "./bin/pgxd",

"java_class_path": "./jlib/*",

"pgx_httpserver_port": 8023,

"pgx_httpserver_enable_csrf_token": 1,

"pgx_httpserver_enable_ssl": 0,

"pgx_httpserver_client_auth": 1,

"pgx_httpserver_key": "<INSERT_VALUE_HERE>/server_key.pem",

"pgx_httpserver_cert": "<INSERT_VALUE_HERE>/server_cert.pem",

"pgx_httpserver_ca": "<INSERT_VALUE_HERE>/server_cert.pem",

"pgx_httpserver_auth": "<INSERT_VALUE_HERE>/server.auth.json",

"pgx_log_configure": "./package/log4j.xml",

"pgx_ranking_query_max_cache_size": 1048576,

"zookeeper_timeout": 10000,

"pgx_partitioning_strategy": "out_in",

"pgx_partitioning_ignore_ghostnodes": false,

"pgx_ghost_min_neighbors": 5000,

"pgx_ghost_max_node_counts": 40000,

"pgx_use_bulk_communication": true,

"pgx_num_worker_threads": 28

}

6.14 Reading and Storing Data in HDFS

The in-memory analyst supports the Hadoop Distributed File System (HDFS). This example shows how to read and access graph data in HDFS using the in-memory analyst APIs.

Graph configuration files are parsed on the client side. The graph data and configuration files must be stored in HDFS. You must install a Hadoop client on the same computer as In-Memory Analytics. See Oracle Big Data Appliance Software User's Guide.

Note:

The in-memory analyst engine runs in memory on one node of the Hadoop cluster only.