CHAPTER 3

Managing Disk Volumes

This chapter describes redundant array of independent disks (RAID) concepts, and how to configure and manage RAID disk volumes using the Sun Netra T6340 server module on-board serial attached SCSI (SAS) disk controller.

| Note - The server module can be configured with a RAID Host Bus Adapter (HBA). To manage the HBA and its disk volumes, see the documentation for your HBA. |

To configure and use RAID disk volumes on the server module, you must install the appropriate patches. For current patch information, see the latest product notes for your system.

Installation procedures for patches are included in text README files that accompany the patches.

The on-board disk controller treats disk volumes as logical disk devices comprising one or more complete physical disks.

Once you create a volume, the operating system uses and maintains the volume as if it were a single disk. By providing this logical volume management layer, the software overcomes the restrictions imposed by physical disk devices.

The on-board disk controller can create as many as two hardware RAID volumes. The controller supports either two-disk RAID 1 (integrated mirror, or IM) volumes, or up to eight-disk RAID 0 (integrated stripe, or IS) volumes.

| Note - Due to the volume initialization that occurs on the disk controller when a new volume is created, properties of the volume such as geometry and size are unknown. RAID volumes created using the hardware controller must be configured and labeled using format(1M) prior to use with the Solaris Operating System. See To Configure and Label a Hardware RAID Volume for Use in the Solaris Operating System, or the format(1M) man page for further details. |

Volume migration (relocating all RAID volume disk members from one server module to another server module) is not supported. If you must perform this operation, contact your service provider.

RAID technology enables the construction of a logical volume, made up of several physical disks, in order to provide data redundancy, increased performance, or both. The on-board disk controller supports both RAID 0 and RAID 1 volumes.

This section describes the RAID configurations supported by the on-board disk controller: integrated stripe (IS, RAID 0) volumes and integrated mirror (IM, RAID 1) volumes.

Integrated stripe volumes are configured by initializing the volume across two or more physical disks and striping the data written to the volume across those disks, so that each physical disk receives a share of the data in turn.

Integrated stripe volumes provide for a logical unit (LUN) that is equal in capacity to the sum of all its member disks. For example, a three-disk IS volume configured on 72-gigabyte drives will have a capacity of 216 gigabytes.
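The capacity rule can be checked with a short calculation. The following Python sketch is illustrative only: the function name `is_volume_capacity_gb` is hypothetical, and it assumes the capacity is the plain sum of the member disks, as stated above.

```python
from typing import Sequence


def is_volume_capacity_gb(member_sizes_gb: Sequence[int]) -> int:
    """Return the capacity of an IS (RAID 0) volume in gigabytes."""
    if len(member_sizes_gb) < 2:
        raise ValueError("an IS volume needs at least two member disks")
    # Striping stores no redundant copy, so every member adds its full size.
    return sum(member_sizes_gb)


# The three-disk example from the text: 3 x 72 GB.
print(is_volume_capacity_gb([72, 72, 72]))
```

For the three 72-gigabyte drives in the example, the function returns 216.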

FIGURE 3-1 Graphical Representation of Disk Striping

IS volumes are likely to provide better performance than IM volumes or single disks. Under certain workloads, particularly some write or mixed read-write workloads, I/O operations complete faster because the I/O operations are being handled in a round-robin fashion, with each sequential block being written to each member disk in turn.
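The round-robin placement described above can be sketched as simple modular arithmetic. This is a simplified model, not the controller's firmware logic: it assumes a stripe unit of exactly one block, and the function name `stripe_location` is hypothetical.

```python
def stripe_location(block: int, num_disks: int) -> tuple:
    """Map a logical block number to (member disk index, block offset on that disk)."""
    if num_disks < 2:
        raise ValueError("striping needs at least two member disks")
    if block < 0:
        raise ValueError("block numbers start at 0")
    # Consecutive blocks go to consecutive disks; after the last disk,
    # placement wraps back to the first disk at the next offset.
    return block % num_disks, block // num_disks


# Six sequential blocks on a three-disk IS volume.
layout = [stripe_location(block, 3) for block in range(6)]
print(layout)
```

In this model, blocks 0, 1, and 2 land on disks 0, 1, and 2 at offset 0, and blocks 3, 4, and 5 land on the same disks at offset 1, which is why sequential I/O can be spread across all members.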

Disk mirroring (RAID 1) is a technique that uses data redundancy (two complete copies of all data stored on two separate disks) to protect against loss of data due to disk failure. One logical volume is duplicated on two separate disks.

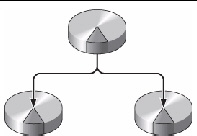

FIGURE 3-2 Graphical Representation of Disk Mirroring

Whenever the operating system needs to write to a mirrored volume, both disks are updated. The disks are maintained at all times with exactly the same information. When the operating system needs to read from the mirrored volume, the OS reads from whichever disk is more readily accessible at the moment, which can result in enhanced performance for read operations.
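The write-to-both, read-from-either behavior can be illustrated with a toy model. The class below is a hypothetical sketch, not the controller's implementation; the `busy` and `failed` flags are assumptions introduced only to show how a read might be served by whichever member is available.

```python
class MirroredVolume:
    """Toy model of a two-disk IM (RAID 1) volume."""

    def __init__(self):
        # Each member disk is modeled as a dict mapping block number -> data.
        self.disks = [{}, {}]
        self.failed = [False, False]

    def write(self, block, data):
        # Every write goes to both working members so the copies stay identical.
        for index, disk in enumerate(self.disks):
            if not self.failed[index]:
                disk[block] = data

    def read(self, block, busy=(False, False)):
        # Prefer a working member that is not busy; otherwise use any working one.
        working = [i for i in (0, 1) if not self.failed[i]]
        if not working:
            raise IOError("both member disks have failed")
        idle = [i for i in working if not busy[i]]
        chosen = (idle or working)[0]
        return chosen, self.disks[chosen][block]


volume = MirroredVolume()
volume.write(7, b"payload")
print(volume.read(7, busy=(True, False)))
```

Because both members hold the same data, the read still succeeds from the second disk when the first is busy or has failed.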

The SAS controller supports mirroring and striping using the Solaris OS raidctl utility.

A hardware RAID volume created under the raidctl utility behaves slightly differently than a volume created using volume management software. Under a software volume, each device has its own entry in the virtual device tree, and read-write operations are performed to both virtual devices. Under hardware RAID volumes, only one device appears in the device tree. Member disk devices are invisible to the operating system and are accessed only by the SAS controller.

To perform a disk hot-plug procedure, you must know the physical or logical device name for the drive that you want to install or remove. If your system encounters a disk error, you can often find messages about failing or failed disks on the system console. This information is also logged in the /var/adm/messages files.

These error messages typically refer to a failed hard drive by its physical device name (such as /devices/pci@1f,700000/scsi@2/sd@1,0) or by its logical device name (such as c1t1d0). In addition, some applications might report a disk slot number (0 through 3).
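When scanning console or log messages for disk names, a small parser for the logical device name format can help. The following Python sketch is illustrative: the function names are hypothetical, and it assumes the usual Solaris reading of a name such as c1t1d0 as controller 1, target 1, disk 0.

```python
import re

# cN = controller, tN = target, dN = disk; for example c1t1d0.
LOGICAL_NAME = re.compile(r"\bc(\d+)t(\d+)d(\d+)")


def parse_logical_name(name):
    """Return (controller, target, disk) for a name like 'c1t1d0', else None."""
    match = LOGICAL_NAME.fullmatch(name)
    if match is None:
        return None
    controller, target, disk = (int(part) for part in match.groups())
    return controller, target, disk


def find_logical_names(message):
    """Return every logical device name mentioned in a message line."""
    return [match.group(0) for match in LOGICAL_NAME.finditer(message)]


print(parse_logical_name("c1t1d0"))
```

A slice path such as /dev/dsk/c1t1d0s0 also yields c1t1d0 from `find_logical_names`, because the pattern stops before the slice suffix.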

You can use TABLE 3-1 to associate internal disk slot numbers with the logical and physical device names for each hard drive.

TABLE 3-1 Disk Slot Numbers, Logical Device Names, and Physical Device Names

To Create a Hardware Mirrored Volume

1. Verify which hard drive corresponds with which logical device name and physical device name, using the raidctl command:

    # raidctl
    Controller: 1
        Disk: 0.0.0
        Disk: 0.1.0
        Disk: 0.2.0
        Disk: 0.3.0
        Disk: 0.4.0
        Disk: 0.5.0
        Disk: 0.6.0
        Disk: 0.7.0

See Physical Disk Slot Numbers, Physical Device Names, and Logical Device Names for Non-RAID Disks.

The preceding example indicates that no RAID volume exists. In another case:

    # raidctl
    Controller: 1
        Volume:c1t0d0
        Disk: 0.0.0
        Disk: 0.1.0
        Disk: 0.2.0
        Disk: 0.3.0
        Disk: 0.4.0
        Disk: 0.5.0
        Disk: 0.6.0
        Disk: 0.7.0

In this example, a single volume (c1t0d0) has been enabled.
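A listing like the ones in this step can also be read by a script. The following Python sketch is a minimal parser written against the sample output shown here; the function name `parse_raidctl_listing` is hypothetical, and the parser assumes one `Controller:`, `Volume:`, or `Disk:` entry per line.

```python
SAMPLE_LISTING = """\
Controller: 1
    Volume:c1t0d0
    Disk: 0.0.0
    Disk: 0.1.0
    Disk: 0.2.0
    Disk: 0.3.0
    Disk: 0.4.0
    Disk: 0.5.0
    Disk: 0.6.0
    Disk: 0.7.0
"""


def parse_raidctl_listing(text):
    """Group Volume and Disk entries under the Controller they follow."""
    controllers = {}
    current = None
    for raw_line in text.splitlines():
        key, separator, value = raw_line.strip().partition(":")
        if not separator:
            continue  # skip lines that are not "Key: value" pairs
        value = value.strip()
        if key == "Controller":
            current = controllers.setdefault(value, {"volumes": [], "disks": []})
        elif key == "Volume" and current is not None:
            current["volumes"].append(value)
        elif key == "Disk" and current is not None:
            current["disks"].append(value)
    return controllers


listing = parse_raidctl_listing(SAMPLE_LISTING)
print(listing["1"]["volumes"])
```

An empty `volumes` list for a controller corresponds to the first example in this step, where no RAID volume exists.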

The on-board SAS controller can configure as many as two RAID volumes. Prior to volume creation, ensure that the member disks are available and that there are not two volumes already created.
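The checks described above can be collected into one pre-creation test. This Python sketch is an illustration, not part of any Sun tool: the function name `creation_problems` is hypothetical, and the limits it encodes (two volumes, two-disk RAID 1, two to eight disks for RAID 0) are taken from this chapter.

```python
MAX_VOLUMES = 2  # the on-board controller supports at most two RAID volumes


def creation_problems(existing_volumes, raid_level, members, available_disks):
    """Return a list of reasons why a new volume should not be created."""
    problems = []
    if len(existing_volumes) >= MAX_VOLUMES:
        problems.append("two RAID volumes already exist")
    if raid_level == 1 and len(members) != 2:
        problems.append("a RAID 1 (IM) volume needs exactly two disks")
    elif raid_level == 0 and not 2 <= len(members) <= 8:
        problems.append("a RAID 0 (IS) volume needs two to eight disks")
    elif raid_level not in (0, 1):
        problems.append("only RAID 0 and RAID 1 are supported")
    missing = [disk for disk in members if disk not in available_disks]
    if missing:
        problems.append("unavailable member disks: " + ", ".join(missing))
    return problems


print(creation_problems(["c1t0d0"], 1, ["c1t2d0", "c1t3d0"],
                        ["c1t2d0", "c1t3d0", "c1t4d0"]))
```

An empty list means that none of these checks failed. It does not replace the confirmation prompt that raidctl itself displays.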

The Disk Status column displays the status of each physical disk. Each member disk might be GOOD, indicating that it is online and functioning properly, or it might be FAILED, indicating that the disk has hardware or configuration issues that need to be addressed.

For example, an IM with a secondary disk that has been removed from the chassis appears as:

See the raidctl(1M) man page for additional details regarding volume and disk status.

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

2. Type the following command:

The creation of the RAID volume is interactive, by default. For example:

    # raidctl -c c1t0d0 c1t1d0
    Creating RAID volume c1t0d0 will destroy all data on member disks, proceed (yes/no)? yes
    ...
    Volume c1t0d0 is created successfully!
    #

As an alternative, you can use the -f option to force the creation if you are sure of the member disks, and sure that the data on both member disks can be lost. For example:

When you create a RAID mirror, the secondary drive (in this case, c1t1d0) disappears from the Solaris device tree.
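If volume creation is scripted, the command line from this step can be assembled as an argument list. The following Python sketch only builds the list and does not run anything. The function name `build_create_command` is hypothetical, and the position of the -f option relative to -c is an assumption; check the raidctl(1M) man page for the exact synopsis on your system.

```python
def build_create_command(members, raid_level=None, force=False):
    """Build a raidctl volume-creation command as an argument list."""
    if len(members) < 2:
        raise ValueError("at least two member disks are required")
    command = ["raidctl", "-c"]
    if force:
        # Assumption: -f may follow -c; it suppresses the yes/no prompt.
        command.append("-f")
    if raid_level is not None:
        command += ["-r", str(raid_level)]
    return command + list(members)


# Interactive mirror creation, as in the example in this step.
print(build_create_command(["c1t0d0", "c1t1d0"]))
```

Because the -f option skips the confirmation that protects the data on the member disks, a script should use `force=True` only after it has verified the member disks.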

3. To check the status of a RAID mirror, type the following command:

The preceding example indicates that the RAID mirror is still resynchronizing with the backup drive.

The following example shows that the RAID mirror is synchronized and online.

The disk controller synchronizes IM volumes one at a time. If you create a second IM volume before the first IM volume completes its synchronization, the first volume’s RAID status will indicate SYNC, and the second volume’s RAID status will indicate OPTIMAL. Once the first volume has completed, its RAID status changes to OPTIMAL, and the second volume automatically starts synchronizing, with a RAID status of SYNC.
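The one-at-a-time synchronization order can be modeled in a few lines. This Python sketch is a simplified illustration of the behavior described above, not controller code; the function name `sync_statuses` is hypothetical.

```python
def sync_statuses(volumes, completed):
    """Return the RAID status reported for each IM volume.

    volumes   -- volume names in creation order
    completed -- set of volume names that have finished synchronizing
    """
    statuses = {}
    sync_slot_taken = False
    for name in volumes:
        if name in completed:
            statuses[name] = "OPTIMAL"
        elif not sync_slot_taken:
            # Only one volume synchronizes at a time.
            statuses[name] = "SYNC"
            sync_slot_taken = True
        else:
            # A volume waiting for its turn reports OPTIMAL until it starts.
            statuses[name] = "OPTIMAL"
    return statuses


print(sync_statuses(["c1t0d0", "c1t2d0"], completed=set()))
```

An OPTIMAL status on a recently created second volume therefore does not by itself show that the volume has finished synchronizing.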

Under RAID 1 (disk mirroring), all data is duplicated on both drives. If a disk fails, replace it with a working drive and restore the mirror. For instructions, see To Perform a Mirrored Disk Hot-Plug Operation.

For more information about the raidctl utility, see the raidctl(1M) man page.

To Create a Hardware Mirrored Volume of the Default Boot Device

Due to the volume initialization that occurs on the disk controller when a new volume is created, the volume must be configured and labeled using the format(1M) utility prior to use with the Solaris Operating System (see To Configure and Label a Hardware RAID Volume for Use in the Solaris Operating System). Because of this limitation, raidctl(1M) blocks the creation of a hardware RAID volume if any of the member disks currently have a file system mounted.
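A script can anticipate this restriction by checking for mounted file systems before it attempts to create a volume. The following Python sketch is illustrative: the function name `mounted_member_disks` is hypothetical, and it assumes that mounted devices are given as Solaris slice paths such as /dev/dsk/c1t0d0s0.

```python
def mounted_member_disks(members, mounted_devices):
    """Return the member disks that back at least one mounted file system."""
    blocked = []
    for disk in members:
        # Slices of a disk are named <disk>sN, for example c1t0d0s0.
        # Including the "s" keeps c1t0d1 from matching c1t0d10.
        prefix = "/dev/dsk/" + disk + "s"
        if any(device.startswith(prefix) for device in mounted_devices):
            blocked.append(disk)
    return blocked


mounted = ["/dev/dsk/c1t0d0s0", "/dev/dsk/c1t2d0s7"]
print(mounted_member_disks(["c1t0d0", "c1t1d0"], mounted))
```

A non-empty result identifies disks that raidctl would refuse to use as members, which is the situation the boot device procedure in this section works around.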

This section describes the procedure required to create a hardware RAID volume containing the default boot device. Since the boot device always has a mounted file system when booted, an alternate boot medium must be employed, and the volume created in that environment. One alternate medium is a network installation image in single-user mode. (Refer to the Solaris 10 Installation Guide for information about configuring and using network-based installations.)

1. Determine which disk is the default boot device.

From the OpenBoot ok prompt, type the printenv command, and if necessary the devalias command, to identify the default boot device. For example:

2. Type the boot net -s command.

3. Once the system has booted, use the raidctl(1M) utility to create a hardware mirrored volume, using the default boot device as the primary disk.

See To Create a Hardware Mirrored Volume. For example:

    # raidctl -c -r 1 c1t0d0 c1t1d0
    Creating RAID volume c1t0d0 will destroy all data on member disks, proceed (yes/no)? yes
    ...
    Volume c1t0d0 is created successfully!
    #

4. Install the Solaris OS on the volume using any supported method.

The hardware RAID volume c1t0d0 appears as a disk to the Solaris installation program.

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

To Create a Hardware Striped Volume

1. Verify which hard drive corresponds with which logical device name and physical device name.

See Disk Slot Numbers, Logical Device Names, and Physical Device Names.

To verify the current RAID configuration, type:

    # raidctl
    Controller: 1
        Disk: 0.0.0
        Disk: 0.1.0
        Disk: 0.2.0
        Disk: 0.3.0
        Disk: 0.4.0
        Disk: 0.5.0
        Disk: 0.6.0
        Disk: 0.7.0

The preceding example indicates that no RAID volume exists.

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

2. Type the following command:

The creation of the RAID volume is interactive, by default. For example:

When you create a RAID striped volume, the other member drives (in this case, c1t2d0 and c1t3d0) disappear from the Solaris device tree.

As an alternative, you can use the -f option to force the creation if you are sure of the member disks and sure that the data on all other member disks can be lost. For example:

3. To verify the existence of the RAID volume, type the following command:

    # raidctl -l
    Controller: 1
        Volume:c1t3d0
        Disk: 0.0.0
        Disk: 0.1.0
        Disk: 0.2.0
        Disk: 0.3.0
        Disk: 0.4.0
        Disk: 0.5.0
        Disk: 0.6.0
        Disk: 0.7.0

4. To check the status of a RAID striped volume, type the following command:

The example shows that the RAID striped volume is online and functioning.

Under RAID 0 (disk striping), there is no replication of data across drives. The data is written to the RAID volume across all member disks in a round-robin fashion. If any one disk is lost, all data on the volume is lost. For this reason, RAID 0 cannot be used to ensure data integrity or availability, but can be used to increase write performance in some scenarios.
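The difference in fault tolerance between the two volume types can be stated as a short predicate. This Python sketch is an illustration only; the function name `volume_survives` is hypothetical, and the disk names in the example are placeholders.

```python
def volume_survives(raid_level, members, failed):
    """Return True if the volume's data is still available after disk failures."""
    lost = set(members) & set(failed)
    if raid_level == 0:
        # Striping: every member holds unique data, so any loss is fatal.
        return not lost
    if raid_level == 1:
        # Mirroring: the data survives while at least one member still works.
        return len(lost) < len(set(members))
    raise ValueError("only RAID 0 and RAID 1 are modeled")


print(volume_survives(0, ["c1t1d0", "c1t2d0", "c1t3d0"], ["c1t2d0"]))
print(volume_survives(1, ["c1t0d0", "c1t1d0"], ["c1t1d0"]))
```

The first call reports that a striped volume is lost after a single disk failure, while the second reports that a mirror remains available.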

For more information about the raidctl utility, see the raidctl(1M) man page.

To Configure and Label a Hardware RAID Volume for Use in the Solaris Operating System

After creating a RAID volume using raidctl, use format(1M) to configure and label the volume before attempting to use it in the Solaris Operating System.

1. Start the format utility.

The format utility might generate messages about corruption of the current label on the volume, which you are going to change. You can safely ignore these messages.

2. Select the disk name that represents the RAID volume that you have configured.

In this example, c1t2d0 is the logical name of the volume.

3. Type the type command at the format prompt, then select 0 (zero) to autoconfigure the volume.

4. Use the partition command to partition, or slice, the volume according to your desired configuration.

See the format(1M) man page for additional details.

5. Write the new label to the disk using the label command.

6. Verify that the new label has been written by printing the disk list using the disk command.

Note that c1t2d0 now has a type indicating it is an LSILOGIC-LogicalVolume.

The volume can now be used in the Solaris OS.

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

To Delete a Hardware RAID Volume

1. Verify which hard drive corresponds with which logical device name and physical device name.

See Disk Slot Numbers, Logical Device Names, and Physical Device Names.

2. To determine the name of the RAID volume, type:

In this example, the RAID volume is c1t1d0.

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

3. To delete the volume, type the following command:

If the RAID volume is an IS volume, the deletion of the RAID volume is interactive. For example:

    # raidctl -d c1t0d0
    Deleting volume c1t0d0 will destroy all data it contains, proceed (yes/no)? yes
    ...
    Volume c1t0d0 is deleted successfully!
    #

The deletion of an IS volume results in the loss of all data that it contains. As an alternative, you can use the -f option to force the deletion if you are sure that you no longer need the IS volume or the data it contains. For example:

4. To confirm that you have deleted the RAID array, type the following command:

For more information, see the raidctl(1M) man page.

To Perform a Mirrored Disk Hot-Plug Operation

1. Verify which hard drive corresponds with which logical device name and physical device name.

See Disk Slot Numbers, Logical Device Names, and Physical Device Names.

2. To confirm a failed disk, type the following command:

If the Disk Status is FAILED, then the drive can be removed and a new drive inserted. Upon insertion, the new disk should be GOOD and the volume should be SYNC.

This example indicates that the disk mirror has degraded due to a failure in disk c1t2d0 (0.1.0).

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

3. Remove the hard drive, as described in your server module service manual.

There is no need to use a software command to bring the drive offline when the drive has failed.

4. Install a new hard drive, as described in your server module service manual.

The RAID utility automatically restores the data to the disk.

5. To check the status of a RAID rebuild, type the following command:

This example indicates that RAID volume c1t1d0 is resynchronizing.

If you type the command again once synchronization has completed, it indicates that the RAID mirror is finished resynchronizing and is back online:

For more information, see the raidctl(1M) man page.

To Perform a Nonmirrored Disk Hot-Plug Operation

1. Verify which hard drive corresponds with which logical device name and physical device name.

See Disk Slot Numbers, Logical Device Names, and Physical Device Names. Ensure that no applications or processes are accessing the hard drive.

2. Type the following command:

| Note - The logical device names might appear differently on your system, depending on the number and type of add-on disk controllers installed. |

The -al options return the status of all SCSI devices, including buses and USB devices. In this example, no USB devices are connected to the system.

Note that while you can use the Solaris OS cfgadm install_device and cfgadm remove_device commands to perform a hard drive hot-plug procedure, these commands issue the following warning message when you invoke them on a bus containing the system disk:

This warning is issued because these commands attempt to quiesce the (SAS) SCSI bus, but the server firmware prevents it. This warning message can be safely ignored, but the following step avoids this warning message altogether.

3. Remove the hard drive from the device tree.

This example removes c1t3d0 from the device tree. The blue OK-to-Remove LED lights.

4. Verify that the device has been removed from the device tree.

Note that c1t3d0 is now unavailable and unconfigured. The corresponding hard drive OK-to-Remove LED is lit.

5. Remove the hard drive, as described in your server module service manual.

The blue OK-to-Remove LED is extinguished when you remove the hard drive.

6. Install a new hard drive, as described in your server module service manual.

7. Configure the new hard drive.

The green Activity LED flashes as the new disk at c1t3d0 is added to the device tree.

8. Verify that the new hard drive is in the device tree.

Note that c1t3d0 is now listed as configured.

Copyright © 2010, Oracle and/or its affiliates. All rights reserved.