Oracle Solaris Cluster 3.3 With SCSI JBOD Storage Device Manual
1. Installing a SCSI JBOD Storage Device

2. Maintaining a SCSI JBOD Storage Device

FRUs That Do Not Require Oracle Solaris Cluster Maintenance Procedures

Sun StorEdge 3120 Storage Array FRUs

Sun StorEdge 3310 and 3320 SCSI Storage Array FRUs

SPARC: Sun StorEdge D1000 Storage Array FRUs

SPARC: Sun StorEdge Netra D130/S1 Storage Array FRUs

SPARC: Sun StorEdge D2 Storage Array FRUs

SPARC: Sun StorEdge Multipack Storage Array FRUs

Disconnecting and Reconnecting a Node from Shared Storage

How to Disconnect the Node from Shared Storage

How to Reconnect the Node to Shared Storage

How to Replace a Host Adapter When Using Failover and Scalable Data Services Only

How to Replace a Host Adapter When Using Oracle Real Application Clusters Only

How to Replace a Disk Drive Without Oracle Real Application Clusters

SPARC: How to Replace a Disk Drive With Oracle Real Application Clusters

The maintenance procedures in FRUs That Do Not Require Oracle Solaris Cluster Maintenance Procedures are performed the same as in a noncluster environment. Table 2-1 lists the procedures that require cluster-specific steps.

Table 2-1 Task Map: Maintaining a Storage Array


Each storage device has a different set of FRUs that do not require cluster-specific procedures. Choose among the following storage devices:

Sun StorEdge 3120

Sun StorEdge 3310 and 3320 SCSI

(SPARC) Sun StorEdge D1000

(SPARC) Sun StorEdge D130/S1

(SPARC) Sun StorEdge D2

(SPARC) Sun StorEdge Multipack

The following is a list of administrative tasks that require no cluster-specific procedures. See the Sun StorEdge 3000 Family FRU Installation Guide for the procedures for the following FRUs. For a URL to this storage documentation, see Related Documentation.

The following is a list of administrative tasks that require no cluster-specific procedures. See the Sun StorEdge 3000 Family FRU Installation Guide for instructions on replacing the following FRUs. For a URL to this storage documentation, see Related Documentation.

The following is a list of administrative tasks that require no cluster-specific procedures. See the Sun StorEdge A1000 and D1000 Installation, Operations, and Service Manual for the procedures for the following FRUs. For a URL to this storage documentation, see Related Documentation.

The following is a list of administrative tasks that require no cluster-specific procedures. See the Netra st D130 Installation and Maintenance Manual for the procedures for the following FRUs. For a URL to this storage documentation, see Related Documentation.

The following is a list of administrative tasks that require no cluster-specific procedures. See the Sun StorEdge D2 Array Installation, Operation, and Service Manual for the procedures for the following FRUs. For a URL to this storage documentation, see Related Documentation.

The following is a list of administrative tasks that require no cluster-specific procedures. See the SPARCstorage MultiPack Service Manual for the procedures for the following FRUs. For a URL to this storage documentation, see Related Documentation.

Removing a storage array enables you to downsize or reallocate your existing storage pool.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You plan to remove the references to the disk drives in the array.

You do not need to replace the storage array's chassis.

If you need to replace your storage array's chassis, see How to Replace the Chassis.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify role-based access control (RBAC) authorization.

To determine whether the affected array contains a quorum device, use the following command.

# clquorum show

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

If a volume manager does manage the disk drives, run the appropriate volume manager commands to remove the disk drives from any diskset or disk group. For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation. See the following paragraph for additional Veritas Volume Manager commands that are required.

Note - Disk drives that were managed by Veritas Volume Manager must be completely removed from Veritas Volume Manager control before you can remove the disk drives from the Oracle Solaris Cluster environment. After you delete the disk drives from any disk group, use the following commands on both nodes to remove the disk drives from Veritas Volume Manager control.

# vxdisk offline cNtXdY
# vxdisk rm cNtXdY

# cfgadm -al

# cfgadm -c unconfigure cN::dsk/cNtXdY

# devfsadm -C

# cldevice clear
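The device-cleanup commands above can be previewed for specific disk drives before you run anything. The following is a minimal sketch, not part of the original procedure: `print_unconfigure_commands` is a hypothetical helper that only prints the commands, derives the controller name (cN) from each cNtXdY disk name, and assumes the command order shown in this section. The disk names are placeholders. It does not cover the Veritas Volume Manager commands, which apply only to disk drives under Veritas Volume Manager control.

```shell
# Hypothetical dry-run helper: prints the cleanup commands, runs nothing.
# Arguments: one or more disk names in cNtXdY form (placeholders here).
print_unconfigure_commands() {
    echo "cfgadm -al"
    for disk in "$@"; do
        # Strip everything from the first "t" to get the controller, e.g. c2.
        ctrl=${disk%%t*}
        echo "cfgadm -c unconfigure ${ctrl}::dsk/${disk}"
    done
    echo "devfsadm -C"
    echo "cldevice clear"
}

# Example with two placeholder disk names on controller c2.
print_unconfigure_commands c2t1d0 c2t2d0
```

Review the printed commands against your storage documentation before you run them as superuser on the affected node.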

For the procedure about how to power off a storage array, see your storage documentation. For a list of storage documentation, see Related Documentation.

For the procedure about how to remove a storage array, see your storage documentation. For a list of storage documentation, see Related Documentation.

Note - If no other parallel SCSI devices are connected to the nodes, you can delete the contents of the nvramrc script. Then, at the OpenBoot PROM, run setenv use-nvramrc? false. Afterward, reset the scsi-initiator-id to 7 as outlined in Installing a Storage Array.

For the procedure about how to remove a host adapter, see your host adapter and server documentation.

# cldevice list -v

You might need to replace a chassis if the chassis fails. With this procedure, you are able to replace the chassis and retain the storage array's disk drives and the references to those disk drives. By retaining the storage array's disk drives, you save time because you no longer need to resynchronize your mirrors or restore your data.

If you need to replace the entire storage array, see How to Remove a Storage Array.

Before You Begin

This procedure relies on the following assumptions.

You plan to retain the disk drives in the storage array that you need to replace.

You plan to retain the references to the disk drives.

Your replacement chassis is unpacked and ready to be placed into the cluster.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

You are not using a StorEdge D2, a StorEdge 3310 SCSI, or a StorEdge 3320 SCSI storage array in split-bus mode.

If you are running your storage array in split-bus mode, you must shut down the entire cluster to replace the chassis of the storage array.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

If a volume manager manages the disk drives, run the appropriate volume manager commands to remove the disk drives from any diskset or disk group. For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Note - Disk drives that were managed by Veritas Volume Manager must be completely removed from Veritas Volume Manager control before you can remove the disk drives from the Oracle Solaris Cluster environment. After you delete the disk drives from any disk group, use the following commands on both nodes to remove the disk drives from Veritas Volume Manager control.

# vxdisk offline cNtXdY
# vxdisk rm cNtXdY

You can disconnect the cables in any order.

For more information, see your storage documentation. For a list of storage documentation, see Related Documentation.

You can connect the cables in any order.

Ensure the cable does not exceed bus-length limitations. For more information on bus-length limitations, see your hardware documentation.

Move your other components to the new chassis as well. For the procedures about how to replace your storage array's components, see your storage documentation. For a list of storage documentation, see Related Documentation.

For the procedure about how to shut down and power off the storage array, see the documentation that came with your array.

# devfsadm

# cldevice populate

For the procedure about how to shut down and power off a node, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Use this procedure to temporarily disconnect a node from shared storage. You need to temporarily disconnect a node from shared storage if you intend to replace an HBA.

This procedure relies on the following assumptions.

You intend to reconnect the shared storage to the same host adapter to which the storage array was connected before you disconnected the SCSI cable.

This procedure defines Node A as the node you want to disconnect from the shared storage. Node B is the remaining node.

This procedure assumes that you might have more than one storage array connected to Node A.

These procedures provide the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

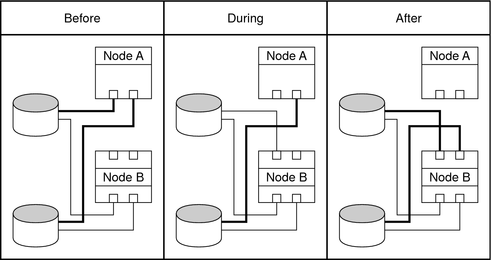

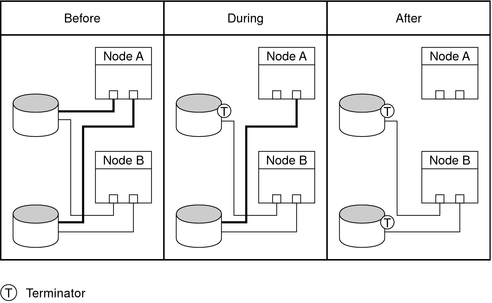

You must maintain proper SCSI-bus termination during this procedure. The process by which you disconnect the node from shared storage depends on whether you have host adapters available on Node B (see Figure 2-1). If you do not have host adapters available on Node B and your storage device does not have auto-termination, you must use terminators (see Figure 2-2).

Note - To determine the specific terminator that your storage array supports, see your storage documentation. For a list of storage documentation, see Related Documentation.

Record this information because you use this information in How to Reconnect the Node to Shared Storage to return resource groups and device groups to this node.

Use the following commands:

# clresourcegroup status -n NodeA
# cldevicegroup status -n NodeA

# clnode evacuate NodeA

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

To shut down and power off a node, see your Oracle Solaris Cluster system administration documentation.

Figure 2-1 Disconnecting the Node from Shared Storage by Using Host Adapters on Node B

Figure 2-2 Disconnecting the Node from Shared Storage by Using Terminators

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.


Caution - Connect this storage array to the same host adapter to which the storage array was connected before you disconnected the SCSI cable.

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Perform the following step for each device group you want to return to the original node.

# cldevicegroup switch -n NodeA devicegroup1[ devicegroup2 ...]

-n NodeA

The node to which you are restoring device groups.

devicegroup1[ devicegroup2 ...]

The device group or groups that you are restoring to the node.

Perform the following step for each resource group you want to return to the original node.

# clresourcegroup switch -n NodeA resourcegroup1[ resourcegroup2 ...]

-n NodeA

For failover resource groups, the node to which the groups are returned. For scalable resource groups, the node list to which the groups are returned.

resourcegroup1[ resourcegroup2 ...]

The resource group or groups that you are returning to the node or nodes.
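Because the group names come from the status output that you recorded before you evacuated the node, it can help to assemble the two switch commands from those recorded lists. The following is a minimal sketch under that assumption: `print_switch_commands` is a hypothetical helper that only prints the commands in the syntax shown above, and the group names in the example are placeholders, not names from this manual.

```shell
# Hypothetical dry-run helper: prints the switch commands, runs nothing.
# $1 = node name, $2 = space-separated device groups,
# $3 = space-separated resource groups (either list may be empty).
print_switch_commands() {
    node=$1
    dgs=$2
    rgs=$3
    if [ -n "$dgs" ]; then
        echo "cldevicegroup switch -n $node $dgs"
    fi
    if [ -n "$rgs" ]; then
        echo "clresourcegroup switch -n $node $rgs"
    fi
}

# Example with placeholder group names recorded earlier.
print_switch_commands NodeA "dg-example-1 dg-example-2" "rg-example-1"
```

Printing the device group command first mirrors the order of the steps above, in which device groups are returned before resource groups.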

Use this procedure to replace a failed SCSI cable in a running cluster.

Before You Begin

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.


To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

You need to replace a host adapter if your host adapter fails, if it becomes unstable, or if you want to upgrade to a newer version. These procedures define Node A as the node with the host adapter that you plan to replace.

Choose the procedure that corresponds to your cluster configuration.

x86 only - If your cluster is x86 based, Oracle RAC services are not supported. Follow the instructions in How to Replace a Host Adapter When Using Failover and Scalable Data Services Only.


Note - The first three procedures in this section assume that you are using the recommended HBA configuration: two redundant hardware paths to shared data. If you choose to use a single HBA configuration, see Configuring Cluster Nodes With a Single, Dual-Port HBA in Oracle Solaris Cluster 3.3 Hardware Administration Manual for the risks and restrictions of that configuration and use How to Replace a Host Adapter When Using a Single, Dual-Port HBA to Provide Both Paths to Shared Data.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You are not using Oracle Real Application Clusters. If you are using Oracle Real Application Clusters, follow the instructions in How to Replace a Host Adapter When Using Oracle Real Application Clusters Only or How to Replace a Host Adapter When Using Both Failover and Scalable Data Services and Oracle Real Application Clusters.

Except for the failed host adapter, your cluster is fully operational and all nodes are powered on.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

Record this information because you use this information later in this procedure to return resource groups and device groups to Node A.

# clresourcegroup status -n NodeA
# cldevicegroup status -n NodeA

# clnode evacuate NodeA

To determine whether the affected device contains a quorum device, use the following command.

# clquorum show

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Record this information because you use it in Step 16 of this procedure to reattach submirrors on the storage array. To determine which submirrors or plexes are affected, see your Solaris Volume Manager or Veritas Volume Manager documentation.

For more information on DR, see your Oracle Solaris Cluster system administration documentation.

To shut down and power off a node, see your Oracle Solaris Cluster system administration documentation.

To remove and add host adapters, see the documentation that shipped with your nodes.

For more information on DR, see your Oracle Solaris Cluster system administration documentation.

For instructions on setting SCSI initiator IDs in x86 based systems, see x86: How to Install a Storage Array in a New x86 Based Cluster.

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

The Oracle Enterprise Manager Ops Center 2.5 software helps you patch and monitor your data center assets. Oracle Enterprise Manager Ops Center 2.5 helps improve operational efficiency and ensures that you have the latest software patches for your software. Contact your Oracle representative to purchase Oracle Enterprise Manager Ops Center 2.5.

Additional information for using the Oracle patch management tools is provided in Oracle Solaris Administration Guide: Basic Administration at http://docs.sun.com. Refer to the version of this manual for the Oracle Solaris OS release that you have installed.

If you must apply a patch when a node is in noncluster mode, you can apply it in a rolling fashion, one node at a time, unless instructions for a patch require that you shut down the entire cluster. Follow the procedures in How to Apply a Rebooting Patch (Node) in Oracle Solaris Cluster System Administration Guide to prepare the node and to boot it in noncluster mode. For ease of installation, consider applying all patches at the same time. That is, apply all patches to the node that you place in noncluster mode.

For required firmware, see the Sun System Handbook.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Perform the following step for each device group you want to return to the original node.

# cldevicegroup switch -n NodeA devicegroup1[ devicegroup2 ...]

-n NodeA

The node to which you are restoring device groups.

devicegroup1[ devicegroup2 ...]

The device group or groups that you are restoring to the node.

Perform the following step for each resource group you want to return to the original node.

# clresourcegroup switch -n NodeA resourcegroup1[ resourcegroup2 ...]

-n NodeA

For failover resource groups, the node to which the groups are returned. For scalable resource groups, the node list to which the groups are returned.

resourcegroup1[ resourcegroup2 ...]

The resource group or groups that you are returning to the node or nodes.

To add and remove quorum devices, see your Oracle Solaris Cluster system administration documentation.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You are using Oracle Real Application Clusters only. If you are using other failover or scalable data services, follow the instructions in How to Replace a Host Adapter When Using Failover and Scalable Data Services Only or How to Replace a Host Adapter When Using Both Failover and Scalable Data Services and Oracle Real Application Clusters.

Except for the failed host adapter, your cluster is fully operational and all nodes are powered on.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

# ps -ef | grep oracle

To shut down and restart an Oracle instance in the RAC environment, refer to your Oracle documentation.

To determine whether the affected device contains a quorum device, use the following command.

# clquorum show

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Veritas Volume Manager documentation.

Record this information because you use it in Step 15 of this procedure to reattach plexes on the storage array. To determine which plexes are affected, see your Veritas Volume Manager documentation.

For more information on DR, see your Oracle Solaris Cluster system administration documentation.

To shut down and power off a node, see your Oracle Solaris Cluster system administration documentation.

To remove and add host adapters, see the documentation that shipped with your nodes.

For more information on DR, see your Oracle Solaris Cluster system administration documentation.

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

The Oracle Enterprise Manager Ops Center 2.5 software helps you patch and monitor your data center assets. Oracle Enterprise Manager Ops Center 2.5 helps improve operational efficiency and ensures that you have the latest software patches for your software. Contact your Oracle representative to purchase Oracle Enterprise Manager Ops Center 2.5.

Additional information for using the Oracle patch management tools is provided in Oracle Solaris Administration Guide: Basic Administration at http://docs.sun.com. Refer to the version of this manual for the Oracle Solaris OS release that you have installed.

If you must apply a patch when a node is in noncluster mode, you can apply it in a rolling fashion, one node at a time, unless instructions for a patch require that you shut down the entire cluster. Follow the procedures in How to Apply a Rebooting Patch (Node) in Oracle Solaris Cluster System Administration Guide to prepare the node and to boot it in noncluster mode. For ease of installation, consider applying all patches at the same time. That is, apply all patches to the node that you place in noncluster mode.

For required firmware, see the Sun System Handbook.

For more information, see your Veritas Volume Manager documentation.

To shut down and restart an Oracle instance in the RAC environment, refer to the Oracle 9iRAC Administration Guide.

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You are using both Oracle Real Application Clusters and failover or scalable data services.

If you are using only Oracle Real Application Clusters, follow the instructions in How to Replace a Host Adapter When Using Oracle Real Application Clusters Only. If you are using only failover and scalable data services, follow the instructions in How to Replace a Host Adapter When Using Failover and Scalable Data Services Only.

Except for the failed host adapter, your cluster is operational and all nodes are powered on.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

# ps -ef | grep oracle

To shut down and restart an Oracle instance in the RAC environment, refer to the Oracle 9iRAC Administration Guide.

Record this information because you use it later in this procedure to return resource groups and device groups to Node A.

# clresourcegroup status -n NodeA
# cldevicegroup status -n NodeA

# clnode evacuate NodeA

To determine whether the affected device contains a quorum device, use the following command.

# clquorum show

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Veritas Volume Manager documentation.

Record this information because you use it in Step 17 of this procedure to reattach plexes on the storage array. To determine which plexes are affected, see your Veritas Volume Manager documentation.

For more information on DR, see your Oracle Solaris Cluster system administration documentation.

To shut down and power off a node, see your Oracle Solaris Cluster system administration documentation.

To remove and add host adapters, see the documentation that shipped with your nodes.

For more information, see your Oracle Solaris Cluster system administration documentation.

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

The Oracle Enterprise Manager Ops Center 2.5 software helps you patch and monitor your data center assets. Oracle Enterprise Manager Ops Center 2.5 helps improve operational efficiency and ensures that you have the latest software patches for your software. Contact your Oracle representative to purchase Oracle Enterprise Manager Ops Center 2.5.

Additional information for using the Oracle patch management tools is provided in Oracle Solaris Administration Guide: Basic Administration at http://docs.sun.com. Refer to the version of this manual for the Oracle Solaris OS release that you have installed.

If you must apply a patch when a node is in noncluster mode, you can apply it in a rolling fashion, one node at a time, unless instructions for a patch require that you shut down the entire cluster. Follow the procedures in How to Apply a Rebooting Patch (Node) in Oracle Solaris Cluster System Administration Guide to prepare the node and to boot it in noncluster mode. For ease of installation, consider applying all patches at the same time. That is, apply all patches to the node that you place in noncluster mode.

For required firmware, see the Sun System Handbook.

For more information, see your Veritas Volume Manager documentation.

Perform the following step for each device group you want to return to the original node.

# cldevicegroup switch -n NodeA devicegroup1[ devicegroup2 ...]

-n NodeA

The node to which you are restoring device groups.

devicegroup1[ devicegroup2 ...]

The device group or groups that you are restoring to the node.

Perform the following step for each resource group you want to return to the original node.

# clresourcegroup switch -n NodeA resourcegroup1[ resourcegroup2 ...]

-n NodeA

For failover resource groups, the node to which the groups are returned. For scalable resource groups, the node list to which the groups are returned.

resourcegroup1[ resourcegroup2 ...]

The resource group or groups that you are returning to the node or nodes.

To shut down and restart an Oracle instance in the RAC environment, refer to the Oracle 9iRAC Administration Guide.

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

Example 2-1 SPARC: Replacing a Host Adapter in a Running Cluster

In the following example, a two-node cluster is running Oracle Real Application Clusters and Veritas Volume Manager. In this situation, you begin the host adapter replacement by determining the Oracle instance name.

# ps -ef | grep oracle
oracle 14716 14414 0 14:05:47 console 0:00 grep oracle
oracle 14438 1 0 13:05:44 ? 0:02 ora_lmon_tpcc1
. . .
oracle 14434 1 0 13:05:43 ? 0:00 ora_pmon_tpcc1
oracle 14458 1 0 13:05:50 ? 0:00 ora_d000_tpcc1

This output identifies the Oracle Real Application Clusters instance as tpcc1.
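Reading the instance name off the process list can also be scripted. The following is a minimal sketch, assuming that each running instance has a process monitor named ora_pmon_ followed by the instance name, as in the output above. `oracle_instances` is a hypothetical helper, not an Oracle or Oracle Solaris Cluster command. The sample input repeats the process lines from this example.

```shell
# Hypothetical helper: read `ps -ef` output on stdin and print each
# instance name taken from a process named ora_pmon_<instance>.
oracle_instances() {
    awk '{ name = $NF }
         name ~ /^ora_pmon_/ { sub(/^ora_pmon_/, "", name); print name }' | sort -u
}

# On a live node you would run: ps -ef | oracle_instances
# Here the helper is fed the sample output from this example.
oracle_instances <<'EOF'
oracle 14716 14414 0 14:05:47 console 0:00 grep oracle
oracle 14438 1 0 13:05:44 ? 0:02 ora_lmon_tpcc1
oracle 14434 1 0 13:05:43 ? 0:00 ora_pmon_tpcc1
oracle 14458 1 0 13:05:50 ? 0:00 ora_d000_tpcc1
EOF
# prints: tpcc1
```

Matching only the last field avoids counting the grep process itself, whose command line also contains the word oracle.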

Shutting down the Oracle Real Application Clusters instance on Node A involves several steps, as shown in the following example.

# su - oracle
Sun Microsystems Inc. SunOS 5.9 Generic May 2002
$ ksh
$ ORACLE_SID=tpcc1
$ ORACLE_HOME=/export/home/oracle/OraHome1
$ export ORACLE_SID ORACLE_HOME
$ sqlplus " /as sysdba "
SQL*Plus: Release 9.2.0.4.0 - Production on Mon Jan 5 14:12:28 2004
Copyright (c) 1982, 2002, Oracle Corporation. All rights reserved.
Connected to:
Oracle9i Enterprise Edition Release 9.2.0.4.0 - 64bit Production
With the Partitioning, Real Application Clusters, OLAP and Oracle Data Mining options
JServer Release 9.2.0.4.0 - Production
SQL> shutdown immediate ;
Database closed.
Database dismounted.
ORACLE instance shut down.
SQL> exit
Disconnected from Oracle9i Enterprise Edition Release 9.2.0.4.0 - 64bit Production
With the Partitioning, Real Application Clusters, OLAP and Oracle Data Mining options
JServer Release 9.2.0.4.0 - Production
$ lsnrctl
LSNRCTL for Solaris: Version 9.2.0.4.0 - Production on 05-JAN-2004 14:15:09
Copyright (c) 1991, 2002, Oracle Corporation. All rights reserved.
Welcome to LSNRCTL, type "help" for information.
LSNRCTL> stop
Connecting to (DESCRIPTION=(ADDRESS=(PROTOCOL=IPC)(KEY=EXTPROC)))
The command completed successfully
LSNRCTL>

After you have stopped the Oracle Real Application Clusters instance, check for a quorum device and, if necessary, reconfigure the quorum device.

When you are certain that the node with the failed adapter does not contain a quorum device, proceed to determine the affected plexes.

Record this information for use in reestablishing the original storage configuration. In the following example, c2 is the controller with the failed host adapter.

# vxprint -ht -g tpcc | grep c2
dm tpcc01 c2t0d0s2 sliced 4063 8374320 -
dm tpcc02 c2t1d0s2 sliced 4063 8374320 -
dm tpcc03 c2t2d0s2 sliced 4063 8374320 -
dm tpcc04 c2t3d0s2 sliced 4063 8374320 -
dm tpcc09 c2t8d0s2 sliced 4063 8374320 -
dm tpcc10 c2t9d0s2 sliced 4063 8374320 -
sd tpcc02-01 control_001-01 tpcc02 0 41040 0 c2t1d0 ENA
. . .
sd tpcc03-06 temp_0_0-02 tpcc03 2967840 276480 0 c2t2d0 ENA
sd tpcc03-04 ware_0_0-02 tpcc03 2751840 95040 0 c2t2d0 ENA

From this output, you can easily determine which plexes and subdisks are affected by the failed adapter. These are the plexes you detach from the storage array.
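The affected plexes can also be pulled out of this output with a short filter. The following is a minimal sketch that assumes the subdisk (sd) record layout shown in the sample above, with the plex name in the third column and the device name in the eighth. `plexes_on_controller` is a hypothetical helper, not a Veritas Volume Manager command. Verify the column positions against the output of your own Veritas Volume Manager release before you rely on it.

```shell
# Hypothetical helper: read `vxprint -ht` output on stdin and print the
# plexes that have a subdisk on the given controller (for example, c2).
plexes_on_controller() {
    # Match devices that start with "<controller>t" so c2 does not match c20.
    awk -v ctrl="$1" '$1 == "sd" && index($8, ctrl "t") == 1 { print $3 }' | sort -u
}

# On a live node you would run: vxprint -ht -g tpcc | plexes_on_controller c2
# Here the helper is fed lines from the sample output in this example.
plexes_on_controller c2 <<'EOF'
dm tpcc01 c2t0d0s2 sliced 4063 8374320 -
sd tpcc02-01 control_001-01 tpcc02 0 41040 0 c2t1d0 ENA
sd tpcc03-06 temp_0_0-02 tpcc03 2967840 276480 0 c2t2d0 ENA
sd tpcc03-04 ware_0_0-02 tpcc03 2751840 95040 0 c2t2d0 ENA
EOF
```

With the sample lines, the helper lists control_001-01, temp_0_0-02, and ware_0_0-02. Record the list so that you can reattach the same plexes after the host adapter is replaced.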

# /usr/sbin/vxplex -g tpcc det control_001-02
# /usr/sbin/vxplex -g tpcc det temp_0_0-02

After the plexes are detached, you can safely shut down the node, if necessary.

Proceed with replacing the failed host adapter, following instructions that accompanied that device.

After you replace the failed host adapter, and Node A is in cluster mode, reattach the plexes and replace any quorum device to reestablish your original cluster configuration.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You are using a single, dual-port HBA to provide the redundant paths to your shared data.

For an explanation of the limitations and risks of using this configuration, see Configuring Cluster Nodes With a Single, Dual-Port HBA in Oracle Solaris Cluster 3.3 Hardware Administration Manual.

Except for the failed host adapter, your cluster is operational and all nodes are powered on.

Your nodes are not configured for dynamic reconfiguration.

You cannot use dynamic reconfiguration for this procedure when using a single HBA.

Node A is the node with the failed host adapter.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

Record this information because you use it in Step 17 of this procedure to return resource groups and device groups to Node A.

# clresourcegroup status -n NodeA
# cldevicegroup status -n NodeA

To shut down and restart an Oracle instance in the RAC environment, refer to the Oracle 9iRAC Administration Guide.

To shut down a cluster, see your Oracle Solaris Cluster system administration documentation.

To remove and add host adapters, see the documentation that shipped with your nodes.

For the procedure about booting cluster nodes, see Chapter 3, Shutting Down and Booting a Cluster, in Oracle Solaris Cluster System Administration Guide.

The Oracle Enterprise Manager Ops Center 2.5 software helps you patch and monitor your data center assets. Oracle Enterprise Manager Ops Center 2.5 helps improve operational efficiency and ensures that you have the latest software patches for your software. Contact your Oracle representative to purchase Oracle Enterprise Manager Ops Center 2.5.

Additional information for using the Oracle patch management tools is provided in Oracle Solaris Administration Guide: Basic Administration at http://docs.sun.com. Refer to the version of this manual for the Oracle Solaris OS release that you have installed.

If you must apply a patch when a node is in noncluster mode, you can apply it in a rolling fashion, one node at a time, unless instructions for a patch require that you shut down the entire cluster. Follow the procedures in How to Apply a Rebooting Patch (Node) in Oracle Solaris Cluster System Administration Guide to prepare the node and to boot it in noncluster mode. For ease of installation, consider applying all patches at the same time. That is, apply all patches to the node that you place in noncluster mode.

For required firmware, see the Sun System Handbook.

For more information, see your volume manager software documentation.

Perform the following step for each device group you want to return to the original node.

# cldevicegroup switch -n NodeA devicegroup

Perform the following step for each resource group you want to return to the original node.

# clresourcegroup switch -n NodeA resourcegroup

-n NodeA
For failover resource groups, the node to which the groups are returned. For scalable resource groups, the node list to which the groups are returned.

resourcegroup
The resource group that is returned to the node or nodes.
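When several groups must be returned, the per-group switch commands can be generated from the list that you recorded earlier. The following is a minimal sketch under stated assumptions: the function name switch_back_cmds and the group names are hypothetical, and the function prints the commands rather than running them.

```shell
#!/bin/sh
# Hypothetical helper: reads group names from stdin, one per line, and prints
# one switch command per group.
# $1 = cldevicegroup or clresourcegroup, $2 = node to return the groups to.
switch_back_cmds() {
    cmd=$1
    node=$2
    while read -r group; do
        # Skip blank lines in the recorded list.
        [ -n "$group" ] || continue
        printf '%s switch -n %s %s\n' "$cmd" "$node" "$group"
    done
}

# Example with made-up group names; review the output before you run it.
printf 'dg-schost-1\ndg-schost-2\n' | switch_back_cmds cldevicegroup NodeA
printf 'rg-example\n' | switch_back_cmds clresourcegroup NodeA
```

Return the device groups before the resource groups, in the same order as the steps above.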

To shut down and restart an Oracle instance in the RAC environment, refer to the Oracle 9iRAC Administration Guide.

Adding a disk drive enables you to increase your storage space after a storage array has been added to your cluster.

| Caution - (Sun StorEdge Multipack Enclosures Only) SCSI-reservations failures have been observed when clustering storage arrays that contain a particular model of Quantum disk drive: SUN4.2G VK4550J. Do not use this particular model of Quantum disk drive for clustering with storage arrays. If you must use this model of disk drive, you must set the scsi-initiator-id of Node A to 6. If you use a six-slot storage array, you must set the storage array for the 9-through-14 SCSI target address range. For more information, see the Sun StorEdge MultiPack Storage Guide. |

Before You Begin

This procedure relies on the following prerequisites and assumptions.

Your storage array contains an empty disk slot.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

Identify the empty disk slot and note the target number. Refer to your storage array documentation.

For the procedure about how to install a disk drive, see your storage array documentation.

# cfgadm -c configure cN

# devfsadm

Depending on the number of devices that are connected to the node, the devfsadm command can require at least five minutes to complete.

# ls -l /dev/rdsk

If a volume management daemon such as vold is running on your node, and you have a CD-ROM drive that is connected to the node, a device busy error might be returned even if no disk is in the drive. This error is an expected behavior.

# cldevice populate

The new device ID that is assigned to the new disk drive might not be in sequential order in the storage array.

# cldevice list -v

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

Removing a disk drive can allow you to downsize or reallocate your existing storage pool. You might want to perform this procedure in the following scenarios.

You no longer need to make data accessible to a particular node.

You want to migrate a portion of your storage to another storage array.

For conceptual information about quorum, quorum devices, global devices, and device IDs, see the Oracle Solaris Cluster concepts documentation.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

You do not need to remove the entire storage array.

If you need to remove the storage array, see How to Remove a Storage Array.

You do not need to replace the storage array's chassis.

If you need to replace your storage array's chassis, see How to Replace the Chassis.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

If the disk error message reports the drive problem by device ID, use the cldevice list command to determine the Oracle Solaris device name. To list all configurable hardware information, use the cfgadm -al command.

# cldevice list -v
# cfgadm -al

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show +

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

# cfgadm -c unconfigure cN::dsk/cNtXdY

For the procedure about how to remove a disk drive, see your storage documentation. For a list of storage documentation, see Related Documentation.

# devfsadm -C

# cldevice clear

You need to replace a disk drive if the disk drive fails or when you want to upgrade to a higher-quality or larger disk.

For conceptual information about quorum, quorum devices, global devices, and device IDs, see the Oracle Solaris Cluster concepts documentation.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

Your cluster is operational.

Your system is not running Oracle Real Application Clusters.

If your system is running Oracle Real Application Clusters, see SPARC: How to Replace a Disk Drive With Oracle Real Application Clusters.

(Solaris Volume Manager Only) If the disk drive failure prevents Solaris Volume Manager from reading the disk label, you have a backup of the disk-partitioning information.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

# cldevice show -v cNtNdN

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show +

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

You saved this information when you performed one of the following tasks:

Installed your storage array in an initial cluster as outlined in Step 10 of SPARC: How to Install a Storage Array in a New SPARC Based Cluster

Added the storage array to an operational cluster.

| Caution - Do not save disk partitioning information under /tmp because you will lose this file when you reboot. Instead, save this file under /usr/tmp. |

# prtvtoc /dev/rdsk/cNtNdNsN > filename

Use this information when you partition the new disk drive.

# cldevicegroup status devgroup1

# vxdisk offline cNtNdN
# vxdisk rm cNtNdN

# cfgadm -x replace_device cN::dsk/cNtNdN

When prompted, type y to suspend activity on the SCSI bus.

# mv /usr/lib/rcm/scripts/es_rcm.pl /usr/lib/rcm/scripts/DONTUSE

# mv /etc/rcm/scripts/es_rcm.pl /etc/rcm/scripts/DONTUSE

# cfgadm -x replace_device cN::dsk/cNtNdN

# mv /usr/lib/rcm/scripts/DONTUSE /usr/lib/rcm/scripts/es_rcm.pl

# mv /etc/rcm/scripts/DONTUSE /etc/rcm/scripts/es_rcm.pl
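The rename, retry, and restore sequence above can be wrapped so that the scripts are restored even when the retried command fails. The following is a minimal sketch, not a documented tool. The function name with_rcm_script_disabled is hypothetical, and the sketch assumes a POSIX shell and that no unrelated file named DONTUSE already exists in the script directories.

```shell
#!/bin/sh
# Hypothetical wrapper: moves es_rcm.pl aside in each listed directory, runs
# the given command, then restores the script and returns the command status.
with_rcm_script_disabled() {
    dirs=$1      # space-separated list of RCM script directories
    shift
    for d in $dirs; do
        [ -f "$d/es_rcm.pl" ] && mv "$d/es_rcm.pl" "$d/DONTUSE"
    done
    status=0
    "$@" || status=$?
    for d in $dirs; do
        [ -f "$d/DONTUSE" ] && mv "$d/DONTUSE" "$d/es_rcm.pl"
    done
    return $status
}

# Usage on a cluster node (shown as a comment because it changes the system):
# with_rcm_script_disabled "/usr/lib/rcm/scripts /etc/rcm/scripts" \
#     cfgadm -x replace_device cN::dsk/cNtNdN
```

Restoring the scripts in the same function avoids leaving them renamed if the operator is interrupted between steps.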

After replacing the disk, warning messages might be displayed. Ignore these messages.

# devfsadm

Depending on the number of devices that are connected to the node, the devfsadm(1M) command can require at least five minutes to complete.

# fmthard -s filename /dev/rdsk/cNtNdNsN

# cldevice repair DID_number

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

To add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

You need to replace a disk drive if the disk drive fails or when you want to upgrade to a higher-quality or larger disk.

For conceptual information about quorum, quorum devices, global devices, and device IDs, see the Oracle Solaris Cluster concepts documentation.

While performing this procedure, ensure that you use the correct controller number. Controller numbers can be different on each node.

Note - If the following warning message is displayed, ignore the message. Continue with the next step.

vxvm:vxconfigd: WARNING: no transactions on slave
vxvm:vxassist: ERROR: Operation must be executed on master

Before You Begin

This procedure relies on the following prerequisites and assumptions.

Your cluster is operational.

Your system is running Oracle Real Application Clusters.

If your system is not running Oracle Real Application Clusters, see How to Replace a Disk Drive Without Oracle Real Application Clusters.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

# vxdisk list

You use this instance number in Step 12.

# ls -l /dev/dsk/cWtXdYsZ
# cat /etc/path_to_inst | grep "device_path"

Note - Ensure that you do not use the ls command output as the device path. The following example demonstrates how to find the device path and how to identify the sd instance number by using the device path.

# ls -l /dev/dsk/c4t0d0s2
lrwxrwxrwx 1 root root 40 Jul 31 12:02 /dev/dsk/c4t0d0s2 -> ../../devices/pci@4,2000/scsi@1/sd@0,0:cb41d
# cat /etc/path_to_inst | grep "/pci@4,2000/scsi@1/sd@0,0"
"/node@2/pci@4,2000/scsi@1/sd@0,0" 60 "sd"

On Oracle Solaris 10, the node@2 portion of this output is not present, because Oracle Solaris 10 does not add this prefix for cluster nodes.
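The lookup in the example above can be sketched as two small shell functions. This is an illustration under stated assumptions, not part of the procedure: the function names device_path and sd_instance are hypothetical, the second line of the sample file is made up, and the sketch assumes a POSIX shell and a POSIX awk (on Oracle Solaris, nawk or /usr/xpg4/bin/awk rather than the legacy /usr/bin/awk).

```shell
#!/bin/sh
# Strip the leading ../../devices and the trailing :minor-name from the
# symbolic link target that ls -l reports for a /dev/dsk entry.
device_path() {
    printf '%s\n' "$1" | sed -e 's|^.*/devices/|/|' -e 's|:[^:/]*$||'
}

# Print the sd instance number for a device path, reading a file in
# /etc/path_to_inst format. The path is matched at the end of the first
# field so that an optional /node@N prefix is tolerated.
sd_instance() {
    path=$1
    inst_file=$2
    awk -v p="$path" '
        {
            dev = $1; gsub(/"/, "", dev)    # remove the surrounding quotes
            drv = $3; gsub(/"/, "", drv)
            n = length(dev) - length(p)
            if (drv == "sd" && n >= 0 && substr(dev, n + 1) == p) print $2
        }
    ' "$inst_file"
}

# Demonstration with a temporary file instead of the real /etc/path_to_inst.
inst_file=$(mktemp)
cat > "$inst_file" <<'EOF'
"/node@2/pci@4,2000/scsi@1/sd@0,0" 60 "sd"
"/node@2/pci@4,2000/scsi@1/sd@1,0" 61 "sd"
EOF
path=$(device_path '../../devices/pci@4,2000/scsi@1/sd@0,0:cb41d')
sd_instance "$path" "$inst_file"    # prints 60, as in the example above
rm -f "$inst_file"
```

On a live node, pass /etc/path_to_inst as the second argument and confirm the result against the manual lookup before you use the instance number.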

You will use the disk ID number in Step 15 and Step 16.

# cldevice show -v cNtXdY

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show +

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

# vxdisk offline cXtYdZ
# vxdisk rm cXtYdZ

# vxdisk list

For the procedure about how to remove a disk drive, see your storage documentation. For a list of storage documentation, see Related Documentation.

# cfgadm -c unconfigure cX::dsk/cXtYdZ

# devfsadm -C

# cfgadm -al | grep cXtYdZ
# ls /dev/dsk/cXtYdZ
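The check against the cfgadm listing can also be scripted so that it returns a clear success or failure status. The following is a minimal sketch. The function name disk_absent is hypothetical, the sample listing is illustrative rather than captured from a real system, and the sketch assumes a POSIX grep that supports the -F option (on Oracle Solaris, /usr/xpg4/bin/grep).

```shell
#!/bin/sh
# Hypothetical helper: reads cfgadm -al style output on stdin and succeeds
# (exit status 0) only if the given disk name does not appear in it.
disk_absent() {
    disk=$1
    if grep -F -- "$disk" >/dev/null; then
        return 1    # the disk is still listed
    fi
    return 0
}

# Illustrative sample listing, not taken from a real system.
sample='Ap_Id                Type       Receptacle  Occupant    Condition
c1                   scsi-bus   connected   configured  unknown
c1::dsk/c1t3d0       disk       connected   configured  unknown'

if printf '%s\n' "$sample" | disk_absent c1t4d0; then
    echo "c1t4d0 is no longer listed"
fi
```

On a cluster node, the equivalent check would be cfgadm -al | disk_absent cXtYdZ, with the disk name substituted for the placeholder.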

For the procedure about how to add a disk drive, see your storage documentation. For a list of storage documentation, see Related Documentation.

Use the instance number that you identified in Step 2.

# cfgadm -c configure cX::sd_instance_Number
# devfsadm

# ls /dev/dsk/cXtYdZ

Use the device ID number that you identified in Step 3.

# cldevice repair

Use the device ID number that you identified in Step 3.

# cldevice show | grep DID_number

# vxdctl enable

# vxdisk list |grep cXtYdZ

# vxdctl -c mode

Depending on your configuration and volume layout, select the appropriate Veritas Volume Manager menu item to recover the failed disk.

# vxdiskadm
# vxtask list

Upgrade your disk drive firmware if you want to apply bug fixes or enable new functionality.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

Your cluster is operational.

Your nodes are not configured with dynamic reconfiguration functionality.

If your nodes are configured for dynamic reconfiguration, see the Oracle Solaris Cluster system administration documentation, and skip steps that instruct you to shut down the node.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

To determine whether a quorum device will be affected by this procedure, use the following command.

# clquorum show

For procedures about how to add and remove quorum devices, see the Oracle Solaris Cluster system administration documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

For more information about how to install firmware, see the patch installation instructions.

For more information, see your Solaris Volume Manager or Veritas Volume Manager documentation.

Upgrade your host adapter firmware if you want to apply bug fixes or enable new functionality.

Before You Begin

This procedure relies on the following prerequisites and assumptions.

Your cluster is operational.

This procedure defines Node A as the node on which you are upgrading the host adapter firmware.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

# clresourcegroup status -n NodeA
# cldevicegroup status -n NodeA

# clnode evacuate NodeA

For the procedure about how to upgrade your host adapter firmware, see the patch documentation.

Perform the following step for each device group you want to return to the original node.

# cldevicegroup switch -n NodeA devicegroup

Perform the following step for each resource group you want to return to the original node.

# clresourcegroup switch -n NodeA resourcegroup

-n NodeA
For failover resource groups, the node to which the groups are returned. For scalable resource groups, the node list to which the groups are returned.

resourcegroup
The resource group that is returned to the node or nodes.