Oracle Solaris Cluster With Network-Attached Storage Device Manual, Oracle Solaris Cluster 4.0


Maintaining a Sun ZFS Storage Appliance NAS Device in an Oracle Solaris Cluster Environment

How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance

How to Restore Cluster Configuration After Sun ZFS Storage Appliance NAS Device Maintenance

How to Remove a Sun ZFS Storage Appliance NAS Device From a Cluster

How to Add Sun ZFS Storage Appliance Directories and Projects to a Cluster

How to Remove Sun ZFS Storage Appliance Directories and Projects From a Cluster

This section contains procedures for maintaining Sun ZFS Storage Appliance NAS devices that are attached to a cluster. If a maintenance procedure might jeopardize the device's availability to the cluster, you must first perform the steps in How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance. After completing the maintenance procedure, perform the steps in How to Restore Cluster Configuration After Sun ZFS Storage Appliance NAS Device Maintenance to return the cluster to its original configuration.

How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance

Follow the instructions in this procedure whenever the Sun ZFS Storage Appliance NAS device maintenance you are performing might affect the device's availability to the cluster nodes.

Note - If your cluster requires a quorum device (for example, a two-node cluster) and you are maintaining the only shared storage device in the cluster, your cluster is in a vulnerable state throughout the maintenance procedure. Loss of a single node during the procedure causes the other node to panic and your entire cluster becomes unavailable. Limit the amount of time for performing such procedures. To protect your cluster against such vulnerability, add a shared storage device to the cluster.

Before You Begin

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

If you have data services using NFS file systems from the Sun ZFS Storage Appliance, bring the data services offline and disable the resources for the applications using those file systems. On each node, ensure that no existing processes are still using any of the NFS file systems from the device.

If a resource of type SUNW.ScalMountPoint manages the file system, disable that resource to unmount the file system.

Note - For more information on disabling a resource, see How to Disable a Resource and Move Its Resource Group Into the UNMANAGED State in Oracle Solaris Cluster Data Services Planning and Administration Guide.

If that resource is not configured, use the Oracle Solaris umount(1M) command. If the file system cannot be unmounted because it is still busy, check for applications or processes that are still using that file system, as described earlier in this procedure. You can also force the unmount by using the -f option with the umount command.
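The check for file systems that are still mounted from the device can be sketched in shell. This is a minimal illustration, not part of the documented procedure: the function name nfs_mounts_from is hypothetical, and the sketch assumes the standard /etc/mnttab field order (resource, mount point, file system type, options, time). It prints the mount point of every NFS file system served by the named host.

```shell
# Hypothetical helper: reads mnttab-format lines on standard input and
# prints the mount points of NFS file systems served by the host in $1.
# Assumed field order: resource, mount point, fstype, options, time.
nfs_mounts_from() {
    host=$1
    while read -r special mountp fstype rest; do
        # Skip everything that is not an NFS mount.
        case $fstype in
            nfs) ;;
            *) continue ;;
        esac
        # Keep only resources of the form host:/exported/path.
        case $special in
            "$host":*) printf '%s\n' "$mountp" ;;
        esac
    done
}

# Example use on a cluster node (not executed here):
#   nfs_mounts_from device1.us.example.com < /etc/mnttab
```

Each mount point that the function prints could then be passed to fuser -c to list the processes that are still using that file system before you unmount it.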

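Display the quorum configuration to determine whether a LUN on this device is configured as a quorum device.

# clquorum show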

See Chapter 6, Administering Quorum, in Oracle Solaris Cluster System Administration Guide for instructions on adding and removing quorum devices.

How to Restore Cluster Configuration After Sun ZFS Storage Appliance NAS Device Maintenance

Follow the instructions in this procedure after performing any Sun ZFS Storage Appliance NAS device maintenance that might affect the device's availability to the cluster nodes.

If you have configured a resource of type SUNW.ScalMountPoint for the file system, enable the resource and bring its resource group online.

If you want to use a LUN on this device as a quorum device, configure the LUN by following the steps in How to Add a Sun ZFS Storage Appliance NAS Quorum Device in Oracle Solaris Cluster System Administration Guide.

Remove any extraneous quorum device that you configured in How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance.

How to Remove a Sun ZFS Storage Appliance NAS Device From a Cluster

Before You Begin

This procedure relies on the following assumptions:

Your cluster is operating.

You have prepared the cluster by performing the steps in How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance.

You have removed any device directories from the cluster by performing the steps in How to Remove Sun ZFS Storage Appliance Directories and Projects From a Cluster.

Note - When you remove the device from the cluster configuration, the data on the device is no longer available to the cluster. Ensure that other shared storage in the cluster can continue to serve the data when the Sun ZFS Storage Appliance NAS device is removed. When the device is removed, change the following items in the cluster configuration:

Change the NFS file system entries in the /etc/vfstab file for that device, and unconfigure any SUNW.ScalMountPoint resources.

Reconfigure applications or data services with dependencies on these file systems to use other storage devices, or remove them from the cluster.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

Remove the device from the cluster configuration.

# clnasdevice remove myfiler

myfiler: Enter the name of the Sun ZFS Storage Appliance NAS device that you are removing.

For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

To remove the device from a zone cluster, specify the -Z option.

# clnasdevice remove -Z zcname myfiler

-Z zcname: Enter the name of the zone cluster where the Sun ZFS Storage Appliance NAS device is being removed.

Confirm that the device has been removed.

# clnasdevice list

For a zone cluster, specify the -Z option.

# clnasdevice list -Z zcname

Note - You can also perform zone cluster-related commands inside the zone cluster by omitting the -Z option. For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

How to Add Sun ZFS Storage Appliance Directories and Projects to a Cluster

Before You Begin

Perform the steps in this procedure only if the directory or project is meant to be protected by cluster fencing, which restricts nodes that leave the cluster to read-only access.

This procedure relies on the following assumptions:

Your cluster is operating.

The Sun ZFS Storage Appliance NAS device is properly configured.

See Requirements, Recommendations, and Restrictions for Sun ZFS Storage Appliance NAS Devices for the details about required device configuration.

You have added the device to the cluster by performing the steps in How to Install a Sun ZFS Storage Appliance in a Cluster.

An NFS file system or directory from the Sun ZFS Storage Appliance is already created in a project, which is itself in one of the storage pools of the device. For a directory (that is, the NFS file system) to be used by the cluster, you must perform the configuration at the project level, as described below.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

After you have identified the appropriate project, click Edit for that project.

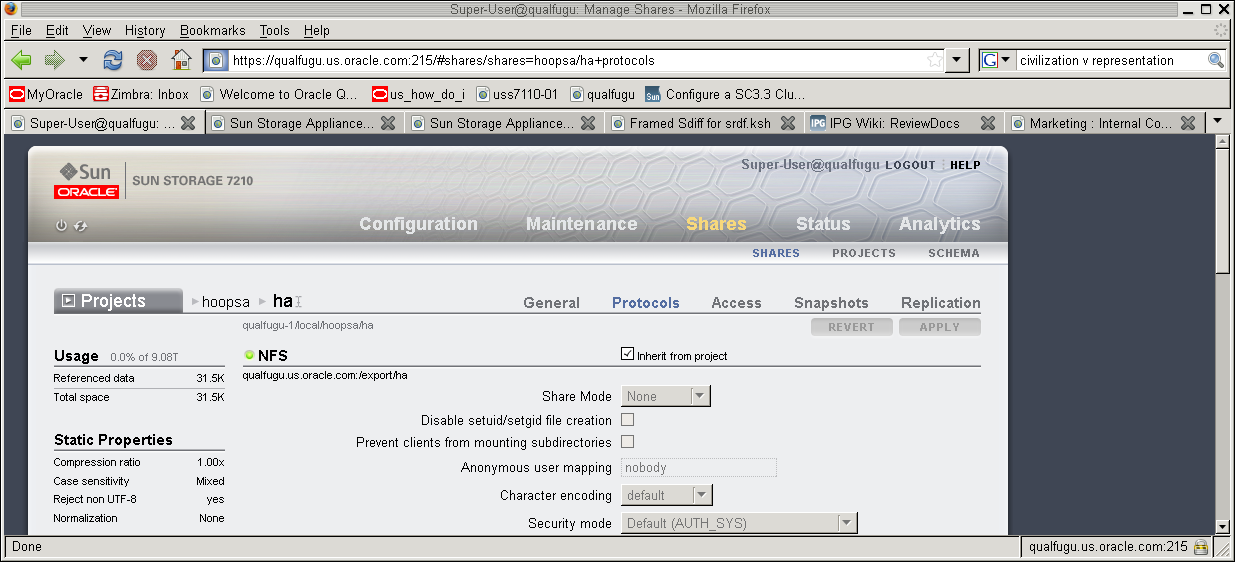

In the Sun ZFS Storage Appliance GUI, select the Protocols tab in the Edit Project page.

If you are adding multiple directories within the same project, verify that each directory that needs to be protected by cluster fencing has the Inherit from project property set.

Use the clnasdevice show -v command to determine whether the project has already been configured with the cluster.

# clnasdevice show -v

=== NAS Devices ===

Nas Device:                   device1.us.example.com
  Type:                       sun_uss
  userid:                     osc_agent
  nodeIPs{node1}              10.111.11.111
  nodeIPs{node2}              10.111.11.112
  nodeIPs{node3}              10.111.11.113
  nodeIPs{node4}              10.111.11.114
  Project:                    pool-0/local/projecta
  Project:                    pool-0/local/projectb

Add the projects to the cluster configuration.

# clnasdevice add-dir -d project1,project2 myfiler

-d project1,project2: Enter the project or projects that you are adding. Specify the full path name of the project, including the pool. For example, pool-0/local/projecta.

myfiler: Enter the name of the NAS device containing the projects.

For example:

# clnasdevice add-dir -d pool-0/local/projecta device1.us.example.com
# clnasdevice add-dir -d pool-0/local/projectb device1.us.example.com
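The check-then-add sequence above can be sketched as a small shell helper. This is an illustration only: the function name project_is_configured is hypothetical, and the sketch assumes that clnasdevice show -v prints one "Project:" line per configured project, as in the sample output in this procedure.

```shell
# Hypothetical helper: reads `clnasdevice show -v` output on standard input
# and succeeds (exit status 0) if the project path in $1 appears on a
# "Project:" line. Assumes the output layout shown in this procedure.
project_is_configured() {
    wanted=$1
    found=1
    while read -r label value rest; do
        if [ "$label" = "Project:" ] && [ "$value" = "$wanted" ]; then
            found=0
        fi
    done
    return "$found"
}

# Example use on a cluster node (not executed here): add the project only
# if it is not already configured.
#   clnasdevice show -v | project_is_configured pool-0/local/projecta ||
#       clnasdevice add-dir -d pool-0/local/projecta device1.us.example.com
```

Matching on the whole project path, rather than searching for a substring, avoids treating pool-0/local/projecta as configured when only a longer name that contains it is listed.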

To list the projects on the device that are not yet configured with the cluster, together with their file systems, use the clnasdevice find-dir -v command. For example:

# clnasdevice find-dir -v

=== NAS Devices ===

Nas Device:                   device1.us.example.com
  Type:                       sun_uss
  Unconfigured Project:       pool-0/local/projecta
    File System:              /export/projecta/filesystem-1
    File System:              /export/projecta/filesystem-2
  Unconfigured Project:       pool-0/local/projectb
    File System:              /export/projectb/filesystem-1

For more information about the clnasdevice command, see the clnasdevice(1CL) man page.
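Finding the project that owns a particular NFS file system can also be scripted. The following is a minimal sketch, assuming that clnasdevice find-dir -v lists each file system on a "File System:" line beneath the project it belongs to, as in the sample output in this procedure. The function name project_for_filesystem is hypothetical.

```shell
# Hypothetical helper: reads `clnasdevice find-dir -v` (or `show -v -d all`)
# output on standard input and prints the project that contains the file
# system path in $1. Returns nonzero if the file system is not listed.
project_for_filesystem() {
    wanted=$1
    current=
    match=
    while read -r w1 w2 w3 rest; do
        case "$w1 $w2" in
            "Unconfigured Project:") current=$w3 ;;   # project not yet in the cluster
            "Project: "*)            current=$w2 ;;   # project already configured
            "File System:")
                # Remember the project of the first matching file system.
                if [ -z "$match" ] && [ "$w3" = "$wanted" ]; then
                    match=$current
                fi ;;
        esac
    done
    [ -n "$match" ] && printf '%s\n' "$match"
}

# Example use on a cluster node (not executed here):
#   clnasdevice find-dir -v | project_for_filesystem /export/projecta/filesystem-2
```

The printed project path is the value to pass to the -d option of clnasdevice add-dir.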

To add the projects to a zone cluster, specify the -Z option.

# clnasdevice add-dir -d project1,project2 -Z zcname myfiler

-Z zcname: Enter the name of the zone cluster where the NAS projects are being added.

Confirm that the directories and projects have been configured.

# clnasdevice show -v -d all

For example:

# clnasdevice show -v -d all

=== NAS Devices ===

Nas Device:                   device1.us.example.com
  Type:                       sun_uss
  nodeIPs{node1}              10.111.11.111
  nodeIPs{node2}              10.111.11.112
  nodeIPs{node3}              10.111.11.113
  nodeIPs{node4}              10.111.11.114
  userid:                     osc_agent
  Project:                    pool-0/local/projecta
    File System:              /export/projecta/filesystem-1
    File System:              /export/projecta/filesystem-2
  Project:                    pool-0/local/projectb
    File System:              /export/projectb/filesystem-1

For a zone cluster, specify the -Z option.

# clnasdevice show -v -Z zcname

Note - You can also perform zone cluster-related commands inside the zone cluster by omitting the -Z option. For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

After you confirm that a project name is associated with the desired NFS file system, use that project name in the configuration command.

# mkdir -p /path-to-mountpoint

path-to-mountpoint: Name of the directory on which to mount the project.

If you are using your Sun ZFS Storage Appliance NAS device for Oracle RAC or HA Oracle, consult your Oracle database guide or log in to My Oracle Support for a current list of supported files and mount options. After you log in to My Oracle Support, click the Knowledge tab and search for Bulletin 359515.1.

When mounting Sun ZFS Storage Appliance NAS directories, select the mount options appropriate to your cluster applications. Mount the directories on each node that will access the directories. Oracle Solaris Cluster places no additional restrictions or requirements on the options that you use.

For more information, see Configuring Failover and Scalable Data Services on Shared File Systems in Oracle Solaris Cluster Data Services Planning and Administration Guide.
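Where the NFS mounts are recorded in /etc/vfstab, each entry follows the standard seven-field vfstab layout: device to mount, device to fsck, mount point, file system type, fsck pass, mount at boot, and mount options. The sketch below formats such an entry. The function name vfstab_entry is hypothetical, and the mount-at-boot value of no and the rw option in the example are placeholder assumptions; choose the values that suit your cluster applications.

```shell
# Hypothetical helper: prints one tab-separated /etc/vfstab line for an NFS
# file system.
#   $1  NAS device host name
#   $2  exported file system path on the device
#   $3  local mount point
#   $4  mount options (application specific)
# The "device to fsck" and "fsck pass" fields do not apply to NFS and are
# set to "-". The mount-at-boot field is fixed to "no" here as an assumption.
vfstab_entry() {
    printf '%s:%s\t-\t%s\tnfs\t-\tno\t%s\n' "$1" "$2" "$3" "$4"
}

# Example (prints the line; it does not modify /etc/vfstab):
#   vfstab_entry device1.us.example.com /export/projecta/filesystem-1 \
#       /path-to-mountpoint rw
```

Printing the line instead of appending it directly lets you review the entry before adding it to /etc/vfstab on each node.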

How to Remove Sun ZFS Storage Appliance Directories and Projects From a Cluster

Before You Begin

This procedure relies on the following assumptions:

Your cluster is operating.

You have prepared the cluster by performing the steps in How to Prepare the Cluster for Sun ZFS Storage Appliance NAS Device Maintenance.

Note - When you remove the directories, the data on those directories is not available to the cluster. Ensure that other device projects or shared storage in the cluster can continue to serve the data when these directories are removed. When the directory is removed, change the following items in the cluster configuration:

Change the NFS file system entries in the /etc/vfstab file for that device, and unconfigure any SUNW.ScalMountPoint resources.

Reconfigure applications or data services with dependencies on these file systems to use other storage devices, or remove them from the cluster.

This procedure provides the long forms of the Oracle Solaris Cluster commands. Most commands also have short forms. Except for the forms of the command names, the commands are identical.

To perform this procedure, become superuser or assume a role that provides solaris.cluster.read and solaris.cluster.modify RBAC authorization.

# umount /mount-point

Skip this step if you are using the automounter.

Remove the projects from the cluster configuration.

# clnasdevice remove-dir -d project1 myfiler

-d project1: Enter the project or projects that you are removing.

myfiler: Enter the name of the Sun ZFS Storage Appliance NAS device containing the projects.

To remove all of this device's projects, specify all for the -d option:

# clnasdevice remove-dir -d all myfiler

For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

To remove the projects from a zone cluster, specify the -Z option.

# clnasdevice remove-dir -d project1 -Z zcname myfiler

-Z zcname: Enter the name of the zone cluster where the Sun ZFS Storage Appliance NAS projects are being removed.

To remove all of this device's projects, specify all for the -d option:

# clnasdevice remove-dir -d all -Z zcname myfiler

For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

Confirm that the projects have been removed.

# clnasdevice show -v

For a zone cluster, specify the -Z option.

# clnasdevice show -v -Z zcname

Note - You can also perform zone cluster-related commands inside the zone cluster by omitting the -Z option. For more information about the clnasdevice command, see the clnasdevice(1CL) man page.

See Also

To remove the device, see How to Remove a Sun ZFS Storage Appliance NAS Device From a Cluster.