A DevOps Engineer's Guide to OCI

Oracle Cloud Infrastructure (OCI) provides developers with tools to build, manage, and automate cloud native applications. This guide gives an overview of those tools and how they apply within the DevOps life cycle.

The DevOps Life Cycle

DevOps is a way of working that encourages multiple teams, mainly Development and Operations, to collaborate and achieve continuous delivery in the software development life cycle. Different tools contribute to different steps in the life cycle.

On the Development side, you need tools to help you with the following steps: plan, code, build, and test.

On the Operations side, you need tools to help you with the following steps: release, deploy, operate, and monitor.

Many of the tools that OCI provides for DevOps use are closely connected and address multiple steps in the life cycle. The sections in this guide group related steps and information accordingly.

- Plan and manage your infrastructure with configuration management (CM) and infrastructure-as-code (IaC) tools.

- Code for constant change by using application design strategies and tools.

- Build, test, release, and deploy with CI/CD tools.

- Operate with confidence by using OCI security.

- Monitor with observability tools.

The DevOps Approach

Automation is used throughout the DevOps life cycle. It reduces the need for manual tasks and intervention and increases the frequency of deliveries. Configuration management and infrastructure-as-code make much of this automation possible.

Configuration management (CM) is the process of applying programmatic methods to ensure an application's implementation, function, and performance.

Infrastructure-as-code (IaC) uses human-readable code to define, provision, and manage infrastructure. IaC is a key component of the continuous delivery model.

In OCI, Ansible and Terraform are the most commonly used tools that help with CM and IaC. Ansible is primarily a CM tool used for infrastructure configuration, patching, and application deployment and maintenance. Terraform is an IaC tool used for infrastructure provisioning and decommissioning. They are frequently used together.

Configuration Management

The OCI DevOps service is designed to integrate seamlessly with two CM tools: Ansible and Chef.

Ansible

The OCI Ansible Collection automates infrastructure provisioning and configuring of OCI resources, such as compute, load balancing, and database services.

OCI Ansible modules are a set of interpreters that help Ansible make calls against OCI API endpoints. At a basic level, Ansible brings a server or a list of hosts to a known state by using the following concepts:

- Inventory: Describes where to run; can be static or dynamic

- Task: A call to an Ansible module

- Play: A series of Ansible tasks or roles mapped to a group of hosts in the inventory; tasks run in order

- Playbook: A series of plays, written in YAML, that describe what to run

- Role: A standard structure for specifying tasks and variables; enables modularity and reuse
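The relationship between inventory, tasks, plays, and playbooks can be sketched in a few lines of Python. This is only a conceptual model, not the Ansible API: the `Task`, `Play`, `ensure`, and `run_playbook` names and the host data are hypothetical. It illustrates the idea of bringing hosts to a known state, where running the same playbook a second time changes nothing.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-ins for Ansible concepts; this is not the Ansible API.
HostState = dict[str, str]


@dataclass
class Task:
    name: str
    module: Callable[[HostState], bool]  # returns True if it changed the host


@dataclass
class Play:
    hosts: str          # inventory group to target
    tasks: list[Task]   # run in order


def ensure(key: str, value: str) -> Callable[[HostState], bool]:
    """Build an idempotent 'module' that sets key=value only when needed."""
    def module(state: HostState) -> bool:
        if state.get(key) == value:
            return False
        state[key] = value
        return True
    return module


def run_playbook(playbook: list[Play],
                 inventory: dict[str, dict[str, HostState]]) -> dict[str, int]:
    """Run plays in order and return the number of changes made per host."""
    changes: dict[str, int] = {}
    for play in playbook:
        for host, state in inventory[play.hosts].items():
            for task in play.tasks:
                changes[host] = changes.get(host, 0) + int(task.module(state))
    return changes


# Static inventory: one group with two hosts, one already partly configured.
inventory = {"web": {"web1": {}, "web2": {"nginx": "installed"}}}
playbook = [Play(hosts="web", tasks=[
    Task("install nginx", ensure("nginx", "installed")),
    Task("start nginx", ensure("nginx_service", "started")),
])]

first_run = run_playbook(playbook, inventory)
second_run = run_playbook(playbook, inventory)
print(first_run)   # changes on the first run
print(second_run)  # no changes once hosts are in the known state
```

The second run reports zero changes for every host, which is the property that makes repeated runs of a playbook safe.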

Chef

Chef is an automation tool for CM that focuses on the delivery and management of entire IT stacks. With the OCI DevOps service, users can manage OCI resources by using the Chef Knife Plug-in.

Puppet is another common CM tool used to design, deploy, configure, and manage servers. You can integrate Puppet with OCI, but it requires manual scripting because OCI does not have a direct plug-in.

Infrastructure-as-Code

OCI uses Terraform for IaC. Terraform has a declarative language that lets you codify infrastructure and an engine that uses those configurations to manage infrastructure.
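To illustrate what "declarative" means here, the following minimal sketch compares a desired configuration with the recorded state and derives the actions an engine would need to take. It is a simplified model and not Terraform's implementation; the `plan` function and the resource names and attributes are hypothetical.

```python
def plan(desired: dict[str, dict], state: dict[str, dict]) -> dict[str, list[str]]:
    """Compare desired resources with recorded state and list the actions needed."""
    return {
        "create": sorted(name for name in desired if name not in state),
        "update": sorted(name for name in desired
                         if name in state and desired[name] != state[name]),
        "delete": sorted(name for name in state if name not in desired),
    }


# Hypothetical resources: what the configuration declares versus what exists.
desired = {"vcn": {"cidr": "10.0.0.0/16"}, "instance": {"shape": "large"}}
state = {"instance": {"shape": "small"}, "bucket": {"tier": "standard"}}

actions = plan(desired, state)
print(actions)
```

You describe the end result, and the engine works out which resources to create, change, or remove to reach it.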

You can use the OCI Terraform Provider and the Terraform CLI to draft and apply configurations from your local machine that manage OCI infrastructure. However, that approach has two problems:

- Lack of version control: You need to keep track of the various versions of the code in case you need to roll back or branch.

- Collaboration: You must centralize configurations and plans to ensure that everyone stays in sync.

Instead of using Terraform directly, use OCI Resource Manager, a cloud-based Terraform host for centralized source control, state management, and job queuing. Resource Manager makes it easy for DevOps personnel to manage infrastructure, or stacks, by hiding Terraform in templates.

Resource Manager also includes Terraform-based automation such as resource discovery and drift detection. Because it's not always realistic to draft Terraform configurations first and then provision infrastructure, you can create resources in the Console and then use Resource Manager Resource Discovery to generate the stack and configuration. Drift detection reports can determine if provisioned resources have different states than those defined in the stack's last-run configuration.
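The idea behind a drift report can be sketched as a comparison between the attributes in the last-run configuration and the attributes of the provisioned resources. This is an illustrative model only, not how Resource Manager is implemented; the `detect_drift` function, the status labels, and the resource data are assumptions made for the example.

```python
def detect_drift(configured: dict[str, dict], actual: dict[str, dict]) -> dict[str, dict]:
    """Report resources that are missing or whose attributes differ from the configuration."""
    report: dict[str, dict] = {}
    for name, attrs in configured.items():
        if name not in actual:
            report[name] = {"status": "DELETED"}
            continue
        changed = {
            key: {"expected": value, "actual": actual[name].get(key)}
            for key, value in attrs.items()
            if actual[name].get(key) != value
        }
        if changed:
            report[name] = {"status": "MODIFIED", "attributes": changed}
    return report


# Hypothetical stack configuration and the state found in the tenancy.
configured = {"instance": {"shape": "small", "name": "app"}, "bucket": {"tier": "standard"}}
actual = {"instance": {"shape": "large", "name": "app"}}

drift = detect_drift(configured, actual)
print(drift)
```

Resources that match their configuration are left out of the report, so an empty result means that nothing has drifted.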

Microservices let you design an application as a collection of loosely coupled services that use the "share-nothing" model and run as stateless processes. This approach makes it easier to scale and maintain the application.

In a microservices architecture, each microservice owns a simple task, and communicates with clients or other microservices by using lightweight communication mechanisms such as REST API requests. Applications that are designed as microservices have the following characteristics:

- Easy to maintain and independently deployable

- Easily scalable and highly available

- Loosely coupled with other services

- Developed using the programming language and framework that best suits the problem
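As a rough illustration of a stateless microservice that owns one simple task and answers REST requests, the following sketch uses only the Python standard library. The price catalog, the URL paths, and the `route`, `PriceHandler`, and `serve` names are hypothetical. The routing logic is a pure function, so any instance of the service can answer any request.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

PRICES = {"widget": 4.5, "gadget": 12.0}  # hypothetical read-only catalog


def route(method: str, path: str) -> tuple[int, dict]:
    """Pure router: the response depends only on the request, not on session state."""
    if method == "GET" and path == "/health":
        return 200, {"status": "ok"}
    if method == "GET" and path.startswith("/prices/"):
        item = path.removeprefix("/prices/")
        if item in PRICES:
            return 200, {"item": item, "price": PRICES[item]}
        return 404, {"error": f"unknown item: {item}"}
    return 404, {"error": "not found"}


class PriceHandler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper that serializes the router's result as JSON."""

    def do_GET(self) -> None:
        status, payload = route("GET", self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Start the service on localhost; blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), PriceHandler).serve_forever()
```

Calling `serve()` starts the service locally. Because no state is kept between requests, scaling out is a matter of running more copies behind a load balancer.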

Containerization is a common approach to microservice architecture. Containers use OS virtualization and hold only the application and its related binaries, which results in quick startup and increased security.

Containerization and Docker

Docker is an open source project and containerization platform that standardizes the packaging of applications and their dependencies into containers, which share the same host OS. Use Docker containers for fast, consistent delivery of your applications, responsive deployment and scaling, and portability.

A Docker image is a read-only template with instructions for creating a Docker container. A Docker image holds the application that you want Docker to run as a container, along with any dependencies. To create a Docker image, you first create a Dockerfile to describe that application, then build the Docker image from the Dockerfile.

Docker images can be stored in a registry such as OCI Container Registry. Without a registry, it's hard for development teams to maintain a consistent set of Docker images for their containerized applications. Without a managed registry, it's hard to enforce access rights and security policies for images.

Kubernetes

Deploying containerized applications creates a new problem: managing thousands of containers. Kubernetes is an open source tool that automatically orchestrates the container life cycle, distributing the containers across the hosting infrastructure. Kubernetes scales resources up or down, depending on demand. It provisions, schedules, deletes, and monitors the health of the containers.
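The orchestration behavior described above is often explained as a reconciliation loop: compare the desired number of containers with the healthy ones that are running, then create or delete containers to close the gap. The sketch below models a single pass of such a loop. It is a conceptual illustration, not Kubernetes code, and the `reconcile` function and container names are hypothetical.

```python
def reconcile(desired_replicas: int, containers: dict[str, bool]) -> dict[str, list[str]]:
    """Return the actions needed to reach the desired number of healthy containers.

    `containers` maps a container name to True (healthy) or False (unhealthy).
    """
    unhealthy = sorted(name for name, ok in containers.items() if not ok)
    healthy = sorted(name for name, ok in containers.items() if ok)
    surplus = healthy[desired_replicas:]               # healthy containers beyond the target
    missing = max(0, desired_replicas - len(healthy))  # healthy containers still needed
    return {
        "delete": unhealthy + surplus,
        "create": [f"replacement-{i}" for i in range(missing)],
    }


# One container has failed its health check, so it is replaced.
scale_up = reconcile(3, {"a": True, "b": False, "c": True})
# Demand dropped, so the surplus container is removed.
scale_down = reconcile(1, {"a": True, "b": True})
print(scale_up)
print(scale_down)
```

Running the same pass repeatedly keeps the actual state converging on the desired state, whether the gap comes from failures or from a change in demand.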

OCI Kubernetes Engine

OCI Kubernetes Engine (OKE) is a fully managed, scalable, and highly available service that you can use to deploy your containerized applications to the cloud.

Although you don’t need to use a managed Kubernetes service, OCI Kubernetes Engine is an easy way to run highly available clusters with the control, security, and predictable performance of OCI that gives DevOps teams greater visibility and control.

- Access your clusters using kubectl, which is included in Cloud Shell, or use a local installation of kubectl.

- Increase the availability of applications using clusters that span multiple availability domains (data centers) in any commercial region by taking advantage of autoscaling Kubernetes node pools and pods.

- Easily and quickly upgrade container clusters, with zero downtime, to keep them up to date with the latest stable version of Kubernetes.

- Observe Kubernetes clusters by using metrics, logs, and work requests.

Functions

Functions hosts applications while abstracting away the underlying servers. The serverless and elastic architecture of Functions means that there's no infrastructure administration or software administration for you to perform. You don't provision or maintain compute instances, and operating system software patches and upgrades are applied automatically. Functions ensures that your app is highly available, scalable, secure, and monitored. You can write code in Java, Python, Node.js, Go, Ruby, and C# (and for advanced use cases, bring your own Dockerfile and GraalVM). You can then deploy your code, call it directly, or trigger it in response to events.

Continuous integration and continuous delivery or deployment (CI/CD) is a DevOps best practice.

Continuous integration is the practice of developers integrating all of their work together as soon as possible in the life cycle. Incremental, frequent code changes are built, tested, and revised as needed based on constant feedback. A change to the code should automatically trigger standardized build-and-test steps that ensure that the code changes being merged into the repository are error-free and work with the existing code.

Continuous delivery or deployment is the practice of quickly getting code changes from developers to users. After the code passes unit, integration, acceptance, and other tests, it's released to production in either an automated continuous deployment or a manual continuous delivery process.
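The gating behavior of a CI/CD workflow can be sketched as an ordered list of stages in which a failure stops everything that follows. This is a minimal model under stated assumptions, not the OCI DevOps service: the `run_pipeline` function and the stage names are hypothetical, and each stage is reduced to a callable that returns success or failure.

```python
from typing import Callable


def run_pipeline(stages: list[tuple[str, Callable[[], bool]]]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure.

    Returns (succeeded, names of the stages that ran).
    """
    ran: list[str] = []
    for name, step in stages:
        ran.append(name)
        if not step():
            return False, ran
    return True, ran


# Hypothetical stages; the integration tests fail, so deploy never runs.
stages = [
    ("build", lambda: True),
    ("unit tests", lambda: True),
    ("integration tests", lambda: False),
    ("deploy", lambda: True),
]

result = run_pipeline(stages)
print(result)
```

Because the deploy stage is reached only when every earlier stage passes, a change that breaks a test cannot reach production through the pipeline.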

OCI DevOps

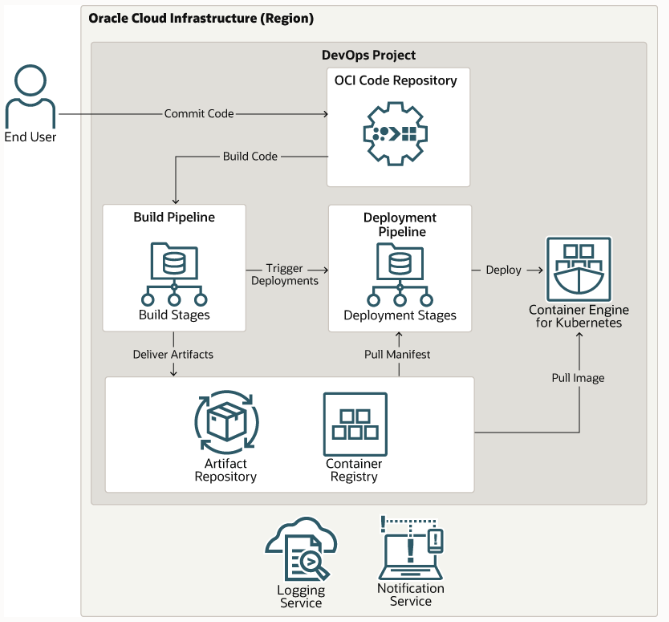

The build and deployment pipelines are the heart of the DevOps CI/CD workflow.

The OCI DevOps service lets you visually script build and deployment pipelines that automate calls to more focused tools. DevOps has the flexibility to integrate with your existing CI/CD workflows.

To explore CI/CD in OCI, you can build a CD pipeline by using DevOps or develop, debug, and deploy applications on Kubernetes Engine.

DevOps Projects

The first step in building and deploying applications using the OCI DevOps service is to create a DevOps project. A DevOps project is a logical grouping of the DevOps resources needed to implement a CI/CD workflow. As part of creating the project in the Console, or by using an API or CLI, you create resources such as code repositories, artifacts, build pipelines, deployment pipelines, and environments, which the following sections describe.

DevOps projects let you manage your software delivery life cycle resources in one place and share them easily. When you use OCI DevOps and artifact repositories together, you can be confident that the software version intended for release is what is deployed, and that you can roll back to a known previous version.

Code

In the DevOps service, you can create your own private code repositories in an OCI code repository, which works like Git. Commits, updates, branches, and storage usage are visible in the Console, and an Oracle Cloud Identifier (OCID) is assigned to the repository when you create it. You can also edit, clone, and delete repositories.

Alternatively, you can use external code repositories such as GitHub and GitLab, or mirror an external code repository from GitHub, GitLab, or Bitbucket Cloud to an OCI code repository. If you're using self-hosted repositories (GitLab Server and Bitbucket Server) with private IP addresses, you can still access them from your pipelines.

Artifacts

An artifact is a reference to any binary, package, Kubernetes manifest, container image, or other file that makes up your application and will be delivered to a target deployment environment. Artifacts generated by your build and used in your deployment are stored in registries. OCI has two types of registries for storing, sharing, and managing artifacts:

- Container Registry is an open standards-based, managed service that stores multiple versions of Docker or other container images, plus related files like manifests and Helm charts. These images can be pushed to a Kubernetes cluster for deployment by using Kubernetes Engine (OKE).

- Artifact Registry stores software packages, libraries, .zip files, and other types of files used for deploying applications. These files can be called when the deployment pipeline is triggered.

You can also use external artifact registries like Sonatype or Artifactory and mirror them on OCI's Artifact Registry. This means you can continue using your current setup with OCI DevOps.

Build and Deployment Pipelines

OCI DevOps build and deployment pipelines reduce change-driven errors and decrease the time you spend on builds and deployments. Developers commit source code to a code repository, build and test software artifacts with a build runner, deliver artifacts to OCI repositories, and then run a deployment to OCI platforms.

You can use DevOps to implement various deployment pipeline strategies, including a blue-green strategy (which consists of two identical production environments), a canary strategy, and a rolling strategy.
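As one illustration of these strategies, a rolling deployment updates the fleet in batches so that only part of it is out of service at any time. The sketch below computes those batches. It is a simplified model, not the OCI DevOps implementation, and the `rolling_batches` function, the instance names, and the percentage are hypothetical.

```python
def rolling_batches(instances: list[str], batch_percent: int) -> list[list[str]]:
    """Split instances into batches so that about batch_percent of the fleet updates at once."""
    if not 0 < batch_percent <= 100:
        raise ValueError("batch_percent must be between 1 and 100")
    # Always update at least one instance per batch, even for small fleets.
    size = max(1, len(instances) * batch_percent // 100)
    return [instances[i:i + size] for i in range(0, len(instances), size)]


fleet = ["i1", "i2", "i3", "i4", "i5"]
batches = rolling_batches(fleet, 40)
print(batches)
```

A canary strategy can be seen as the special case where the first batch is very small and is observed for a while before the remaining batches proceed.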

For detailed pipeline instructions, see Managing Build Pipelines and Managing Deployment Pipelines.

Logs from your build runs and deployments are stored in the OCI Logging service for audit and governance. Your team can also receive notifications from events in your DevOps pipelines through the OCI Notifications service.

You can also use the Compute Jenkins Plug-in to centralize build automation and scale deployments, deliver your artifacts to OCI Artifact Registry, and trigger DevOps deployment pipelines.

An environment is a collection of the compute resources to which artifacts are deployed, and it's the target platform for your application. Target runtime environment types supported in OCI include VM or bare metal Compute instances running Oracle Linux or CentOS, Kubernetes Engine, and Functions. Which environment you choose for deployment depends on your needs regarding flexibility, security, speed, and other requirements:

- Bare metal hosts offer the most control and security, at the highest cost. They're a good option for projects that require a more constant, larger, or separate and secure space. See Overview of Compute.

- VMs allow for more versatility, scaling, and resource sharing. Shared applications and physical hardware provide cost savings, disaster recovery speed, and faster provisioning. See Overview of Compute.

- Kubernetes Engine is ideal for high-density environments and for small and medium deployments where you need to do more with fewer resources. See Application Design.

- Functions is best for dynamic workloads where usage changes frequently, because you pay for services only as you use them.

You must create your Kubernetes Engine cluster, function, or Compute instance before you create a DevOps environment. You can then create references to different destination environments for DevOps deployment.

DevSecOps is an approach to culture, automation, and platform design that integrates security as a shared responsibility throughout the entire DevOps life cycle.

Continuous security has three major elements:

- As new features are added, the security posture must be continually refined and updated. New or modified security controls might be added (or some might be removed). These controls must be continually tested during the development and deployment cycles using automated security test suites.

- Cloud native applications must be built for resiliency. Because even carefully designed and tested software can contain security vulnerabilities, a robust security monitoring system must identify intrusions and infections in real time. Automated incident responses must protect services.

- As new features are released in production through the CI/CD pipeline, security compliance checkers and application vulnerability assessment systems must measure and record any new risk exposure. Risks that are identified during new feature integration require revisiting the security controls framework, which must be modified to mitigate those risks.

IAM

Vault

As a best practice, DevOps engineers use OCI Vault to help improve the overall security posture for their application and service deployments.

You can use the Vault service to centrally manage encryption keys and secrets. Secrets are credentials such as passwords, tokens, or any other confidential data that you need to use with other OCI services and external applications or systems. Storing secrets in a vault provides greater security than you might achieve by storing them elsewhere, such as in code or configuration files.
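The practice of keeping secrets out of code and configuration files can be sketched as follows. This example does not call the Vault service: `fake_vault` is a hypothetical stand-in for a real lookup, the secret identifier and value are made up, and the sketch assumes that the secret content arrives base64-encoded and must be decoded before use.

```python
import base64
from typing import Callable


def read_secret(secret_id: str, fetch_bundle: Callable[[str], str]) -> str:
    """Fetch a secret at run time and decode it, so no credential lives in code or config."""
    encoded = fetch_bundle(secret_id)
    return base64.b64decode(encoded).decode("utf-8")


def fake_vault(secret_id: str) -> str:
    """Stand-in for a vault lookup; a real fetcher would call the Vault service."""
    store = {
        "ocid1.vaultsecret.example": base64.b64encode(b"s3cr3t-token").decode("ascii"),
    }
    return store[secret_id]


# The application refers to the secret by identifier, never by value.
db_password = read_secret("ocid1.vaultsecret.example", fake_vault)
```

Injecting the fetcher as a parameter keeps the application code unchanged when the stand-in is replaced by a real vault client, and it makes the logic easy to test.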

Vault integrates directly with OCI services, SDKs, and API clients, and handles the encryption of password credentials used with any external application.

Security Best Practices for Kubernetes Engine

When using OCI Kubernetes Engine, follow these security best practices:

- Control access to your provisioned clusters by using both IAM policies and Kubernetes role-based access control (RBAC) rules. See About Access Control and Kubernetes Engine (OKE).

- Encrypt sensitive data objects (such as authentication tokens and credentials) as Kubernetes secrets when they are stored in etcd.

- Use pod security policies to limit or restrict access to resources. See Using Pod Security Policies with Kubernetes Engine (OKE).

- Use node pool security for clusters and pods.

- Define the network access rules for communications to and from pods.

- Define multi-tenancy usage models for Kubernetes clusters (isolation).

- Enhance container image security by using signature verification and vulnerability scanning. See Signing Images for Security and Scanning Images for Vulnerabilities.

When using OCI Functions, follow these security best practices:

- Control both invoke and management access to functions and applications by using IAM.

- Enhance container image security by using signature verification and vulnerability scanning. See Signing Images for Security and Scanning Images for Vulnerabilities.

- Follow the guidance for controlling access.

DevOps tools should help you form a complete understanding of the workings of your system and choose the right metrics to monitor proactively. You should find issues early in the process, and be able to monitor health constantly.

DevOps tools should also help you maintain the end-to-end security of information systems, whether it's the physical data center, networks, or applications.

With the right approach to observability, you can reduce downtime, shorten mean time to detection (MTTD) and mean time to resolution (MTTR), and increase customer satisfaction and service delivery.
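MTTD and MTTR are simple averages over incident timestamps, as the following sketch shows. Definitions vary between teams, so this example assumes that both are measured from the moment the incident started. The `mean_times` function and the incident data are made up for illustration.

```python
from datetime import datetime, timedelta


def mean_times(incidents: list[dict[str, datetime]]) -> tuple[timedelta, timedelta]:
    """Return (MTTD, MTTR), both measured from the start of each incident."""
    if not incidents:
        raise ValueError("at least one incident is required")
    count = len(incidents)
    mttd = sum((i["detected"] - i["started"] for i in incidents), timedelta()) / count
    mttr = sum((i["resolved"] - i["started"] for i in incidents), timedelta()) / count
    return mttd, mttr


# Made-up sample incidents.
incidents = [
    {"started": datetime(2024, 1, 1, 10, 0),
     "detected": datetime(2024, 1, 1, 10, 10),
     "resolved": datetime(2024, 1, 1, 11, 0)},
    {"started": datetime(2024, 1, 2, 12, 0),
     "detected": datetime(2024, 1, 2, 12, 20),
     "resolved": datetime(2024, 1, 2, 12, 30)},
]

mttd, mttr = mean_times(incidents)
print(mttd, mttr)
```

Tracking both values over time shows whether changes to monitoring and alerting are shortening the path from failure to fix.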

Key OCI Observability Services

Monitoring helps you gain insights into OCI workloads with DevOps metrics for health and performance. You can configure alarms with thresholds to detect and respond to infrastructure and application anomalies.
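The logic of a threshold alarm can be sketched as a check over the most recent datapoints of a metric. This is a conceptual model and not the Monitoring service's query language or API; the `alarm_state` function, the state names, and the sample CPU values are assumptions made for the example.

```python
def alarm_state(values: list[float], threshold: float, datapoints_to_alarm: int) -> str:
    """Return "FIRING" if the last N datapoints all exceed the threshold, else "OK"."""
    if datapoints_to_alarm < 1:
        raise ValueError("datapoints_to_alarm must be at least 1")
    recent = values[-datapoints_to_alarm:]
    # Require a full window so that a single early spike does not fire the alarm.
    breached = len(recent) == datapoints_to_alarm and all(v > threshold for v in recent)
    return "FIRING" if breached else "OK"


# Hypothetical CPU utilization samples, in percent.
sustained = alarm_state([50, 85, 92, 95], threshold=80, datapoints_to_alarm=3)
spike = alarm_state([50, 85, 70, 95], threshold=80, datapoints_to_alarm=3)
print(sustained, spike)
```

Requiring several consecutive breaches is a common way to avoid alerting on short spikes while still catching sustained anomalies.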

Although OCI metrics are visible in charts through the Console, you can use the Grafana Plug-in to view metrics from resources across providers on a single Grafana dashboard.

Logging can ingest log data from hundreds of sources. You can generate logs such as audit logs and networking flow logs and use them to diagnose security issues. DevOps logs include the logs emitted by all of the resources in a DevOps project.

Log Analytics is a machine learning-based cloud solution that monitors, aggregates, indexes, and analyzes all log data from on-premises and multicloud environments. It enables you to search, explore, and correlate this data to troubleshoot problems faster, derive operational insights, and make better decisions.

Notifications is a highly available, low-latency publish/subscribe service that sends alerts and messages to functions, email, and message delivery partners, including Slack and PagerDuty.
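The publish/subscribe pattern behind this kind of service can be sketched with an in-memory broker. This illustrates the pattern only and is not the Notifications API; the `Broker` class, the topic name, and the message are hypothetical.

```python
from typing import Callable


class Broker:
    """Minimal in-memory publish/subscribe broker."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[str], None]]] = {}

    def subscribe(self, topic: str, handler: Callable[[str], None]) -> None:
        """Register a handler that receives every message published to the topic."""
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, message: str) -> int:
        """Deliver the message to each subscriber and return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = Broker()
inbox: list[str] = []
broker.subscribe("deployments", inbox.append)                      # for example, an email endpoint
broker.subscribe("deployments", lambda m: inbox.append(m.upper()))  # for example, a chat endpoint

delivered = broker.publish("deployments", "build 42 deployed")
print(delivered, inbox)
```

The publisher does not need to know who is subscribed, which is what lets you add or remove delivery endpoints without changing the pipeline that sends the message.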

Events tracks resource changes by using events that comply with the Cloud Native Computing Foundation (CNCF) CloudEvents standard. It eliminates the complexity of manually tracking changes across cloud resources. DevOps events are JSON files that are emitted with some service operations and carry information about that operation. You can define rules that trigger a specific action when an event occurs.
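Rule matching on JSON events can be sketched as follows. The event payload, the `eventType` field, the event type strings, and the action names are assumptions made for the example and are not taken from the Events service documentation; `matching_actions` is a hypothetical helper.

```python
import json


def matching_actions(event_json: str, rules: list[dict]) -> list[str]:
    """Return the actions of every rule whose event types include the event's type."""
    event = json.loads(event_json)
    return [rule["action"] for rule in rules
            if event.get("eventType") in rule["event_types"]]


# Hypothetical rules that map event types to actions.
rules = [
    {"event_types": {"deployment.succeeded"}, "action": "notify-team"},
    {"event_types": {"deployment.failed", "build.failed"}, "action": "open-incident"},
]

# Hypothetical event emitted by a failed build.
event_json = json.dumps({"eventType": "build.failed", "data": {"resourceName": "api-build"}})

actions = matching_actions(event_json, rules)
print(actions)
```

In practice, the action would be something like sending a notification or invoking a function rather than returning a string.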

DevOps Services and Tools

- Application Dependency Management detects security vulnerabilities in application dependencies.

- Application Performance Monitoring provides a comprehensive set of features to monitor applications and diagnose performance issues.

- Artifact Registry provides repositories for storing, sharing, and managing software development packages.

- Kubernetes Engine (OKE) helps you define and create Kubernetes clusters to enable the deployment, scaling, and management of containerized applications.

- Container Registry lets you store, share, and manage container images (such as Docker images) in an Oracle-managed registry.

- Console Dashboards lets you create custom dashboards in the Console to monitor resources, diagnostics, and key metrics for your tenancy.

- DevOps is a CI/CD service that automates the delivery and deployment of software.

- Events helps you create automation based on the state changes of resources throughout your tenancy.

- Functions is a serverless platform that lets you create, run, and scale business logic without managing any infrastructure.

- IAM uses identity domains to provide identity and access management features such as authentication, single sign-on (SSO), and identity life cycle management.

- Logging provides a highly scalable and fully managed single interface for all the logs in your tenancy.

- Log Analytics lets you index, enrich, aggregate, explore, search, analyze, correlate, visualize, and monitor all log data from your applications and system infrastructure.

- Monitoring lets you query metrics and manage alarms. Metrics and alarms help monitor the health, capacity, and performance of your cloud resources.

- Notifications broadcasts messages to distributed components through a publish-subscribe pattern, delivering secure, highly reliable, low-latency, and durable messages.

- Resource Manager automates deployment and operations for all OCI resources using the IaC model.

- Streaming provides a fully managed, scalable, and durable solution for ingesting and consuming high-volume data streams in real time.

- Vault is an encryption management service that stores and manages encryption keys and secrets to securely access resources.

- Vulnerability Scanning helps improve security posture by routinely checking hosts and container images for potential vulnerabilities.