Edit and Test Agents Faster with Playground in AI Agent Studio

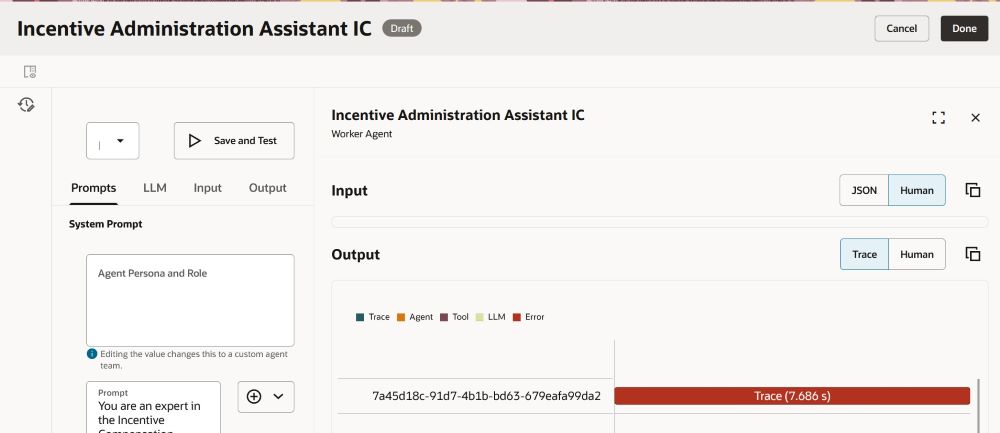

You can now use Edit in Playground to refine and test your AI agents and large language model (LLM) nodes directly within AI Agent Studio. You can isolate and verify specific logic, instructions, or nodes independently rather than running an entire agent flow from start to finish. Using this feature, you can do the following tasks:

- Accelerate iteration by testing individual nodes (supervisor, non-reusable workers, agent nodes, and LLM nodes) without running the full workflow.

- Improve quality and control by adjusting prompts and parameters and validating outcomes in real time.

- Apply your edits and validate them using Save and Run.

This feature is available for both supervisor and workflow agent teams:

- Supervisor Agents: Test the supervisor agent and any non-reusable worker agents.

- Workflow Agents: Test individual agent nodes and LLM nodes.

Using the playground layout, you can modify prompt logic and LLM parameters, and then run the agent team with those modifications to see the results immediately. You can also use dynamic prompt insertion to browse and add expressions on the fly, and track each change in Run History.

Playground Layout

Steps to enable and configure

You must have access to AI Agent Studio to use this feature.

Key resources

Edit and Test AI Agents Iteratively in Playground

Access requirements

See Access Requirements for AI Agent Studio.