5 Use the Vector Index Service

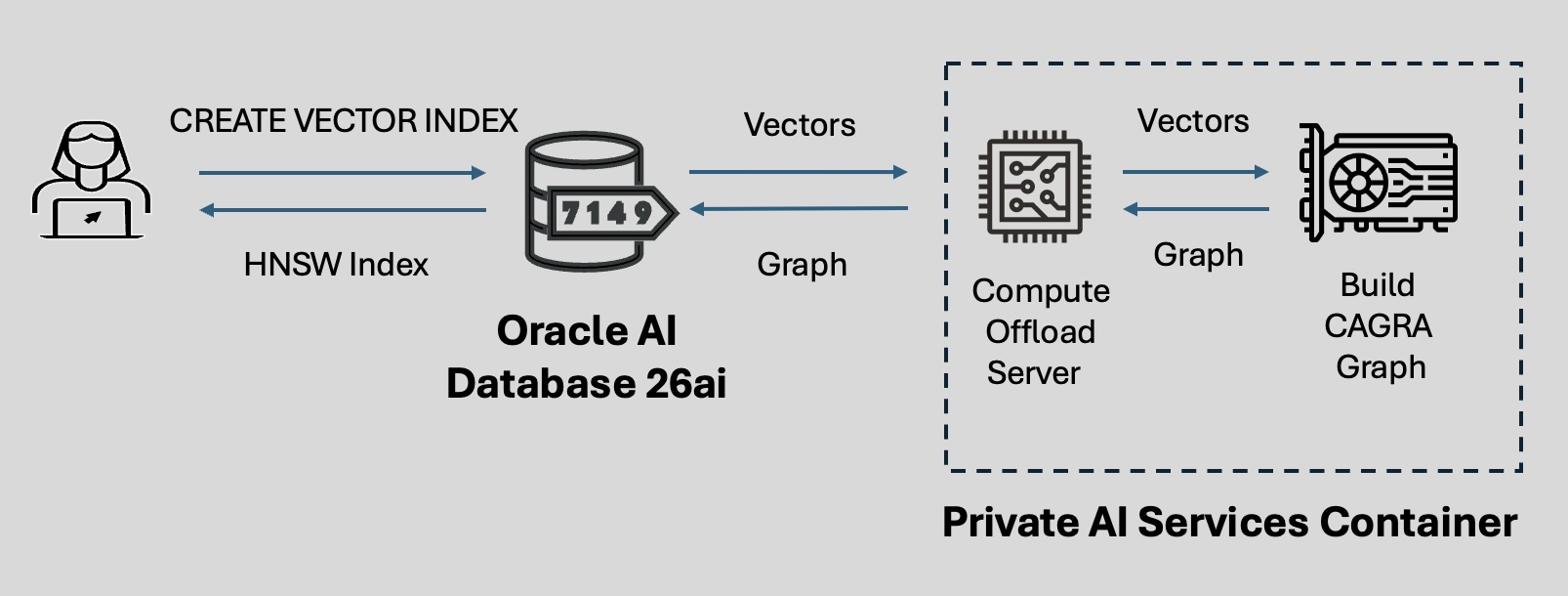

The Private AI Services Container allows you to offload the creation of Hierarchical Navigable Small World (HNSW) indexes to an external container equipped with a powerful NVIDIA GPU. This offloading is completely transparent, maintaining simplicity while delivering enhanced performance.

Vector indexes play a crucial role in approximate search of vector data. Constructing these indexes is compute-intensive and time-consuming. With the vector index service of the Private AI Services Container, this computational expense is offloaded to an external container.

When you send a request to create an HNSW vector index, the database intelligently compiles and redirects the task, along with the necessary vector data, to a new Compute Offload Server instance running in the Private AI Services Container. This server instance leverages the computational power of an NVIDIA GPU and employs the CAGRA algorithm, part of the NVIDIA cuVS library, to rapidly generate a graph index. Once the CAGRA index is created, it is automatically transferred back to the Oracle AI Database instance, where it is converted into an HNSW graph index. The final HNSW index is then readily available for subsequent vector search operations within the database instance.

The following is an example of the syntax used to create an HNSW vector index by offloading the computation to a GPU-powered container:

    CREATE VECTOR INDEX gist_idx ON gist(embedding)
    ORGANIZATION INMEMORY NEIGHBOR GRAPH
    WITH DISTANCE EUCLIDEAN
    PARAMETERS (
      TYPE HNSW,
      NEIGHBORS 32,
      EFCONSTRUCTION 200,
      OFFLOAD_CREDENTIAL_NAME mycredname,
      OFFLOAD_URL 'https://<container_url>:<port>/v1/index'
    ) PARALLEL 4;

This approach to index creation combines the versatile converged data management capabilities of Oracle AI Database with the computational power of GPUs. By integrating GPU acceleration into the database and offloading the computationally intensive task of index creation to specialized hardware, processing speed and efficiency can be significantly improved, while maintaining the ease of use and reliability of Oracle AI Database.

The container is only used during index creation. Once the index is created and transferred back to the database instance, the container is no longer needed. All subsequent DML statements and queries will be executed on the base table and the HNSW index stored in the database.
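As an illustration of a query that runs entirely in the database after the index has been built, the following sketch searches the `gist` table from the example above. The `id` column, the `:query_vector` bind variable, and the row limit of 10 are assumptions made for illustration and do not come from this guide.

```sql
-- Sketch only: approximate nearest-neighbor search on the indexed column.
-- Assumes gist has an id column (hypothetical) and that :query_vector is
-- bound to a vector with the same dimensions as gist.embedding.
SELECT id
FROM   gist
ORDER  BY VECTOR_DISTANCE(embedding, :query_vector, EUCLIDEAN)
FETCH  APPROX FIRST 10 ROWS ONLY;
```

The query uses the same distance metric as the index definition (EUCLIDEAN), and the APPROX keyword requests an approximate search, which is what generally allows the optimizer to consider the HNSW index. No connection to the container is involved at query time.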

Restrictions

The following are not currently supported by the vector index service of the Private AI Services Container:

- Concurrent offloads

- Distributed HNSW indexes, Online HNSW indexes, and indexes with included columns

- Manhattan, Hamming, Dot Product, and Jaccard distance metrics

- Vectors with the dimension format FLOAT64 or BINARY

- Scalar quantized HNSW indexes

See Also:

For additional information that is helpful to understanding the use and implementation of the Private AI Services Container vector index service, see the following:

- Oracle AI Database AI Vector Search User's Guide for more information about HNSW vector indexes.

- Oracle AI Database SQL Language Reference for the full syntax and parameters of the CREATE VECTOR INDEX DDL.

- Configure the Vector Index Service

  To use the Private AI Services Container vector index service, you must configure the container and your Oracle AI Database.

- Considerations for the Vector Index Service