13 Debugging and Troubleshooting

This chapter provides information about debugging and troubleshooting issues that you may face while setting up the UIM cloud native environment and creating UIM cloud native instances.

- Setting Up Java Flight Recorder (JFR)

- Troubleshooting Issues with Traefik, UIM UI, and WebLogic Administration Console

- Common Problems and Solutions

- Known Issues

Setting Up Java Flight Recorder (JFR)

The Java Flight Recorder (JFR) is a tool that collects diagnostic data about running Java applications. UIM cloud native leverages JFR. See Java Platform, Standard Edition Java Flight Recorder Runtime Guide for details about JFR.

You can change the JFR settings provided with the toolkit by updating the appropriate values in the instance specification.

To analyze the output produced by the JFR, use Java Mission Control. See Java Platform, Standard Edition Java Mission Control User's Guide for details about Java Mission Control.

JFR is turned off by default in all managed servers. You can enable this feature by setting the enabled flag to true. You can also change the default value of the max_age parameter in the instance specification:

```yaml
# Java Flight Recorder (JFR) Settings
jfr:
  enabled: true
  max_age: 4h
```

Persisting JFR Data

JFR data can be persisted outside of the container by redirecting it to persistent storage through a PV and PVC. See "Setting Up Persistent Storage" for details.
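If you need to create a PVC for this purpose, the following shell function is a minimal sketch that prints a PersistentVolumeClaim manifest you can review and then pipe to kubectl apply. The function name, the storage class name (nfs), the 10Gi default size, and the ReadWriteMany access mode are assumptions for illustration, not values required by the toolkit; "Setting Up Persistent Storage" remains the reference for the supported setup.

```shell
# Print a PersistentVolumeClaim manifest for JFR and log storage.
# Assumed defaults (hypothetical, adjust to your cluster):
#   size 10Gi, storage class "nfs", access mode ReadWriteMany.
pvc_manifest() {
  name=$1                   # PVC name, for example storage-pvc
  namespace=$2              # project namespace
  size=${3:-10Gi}
  storage_class=${4:-nfs}
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
  namespace: ${namespace}
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ${storage_class}
  resources:
    requests:
      storage: ${size}
EOF
}
```

For example, `pvc_manifest storage-pvc project | kubectl apply -f -` creates the claim; the name you choose is the value to set in storageVolume.pvc.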

Once the storage has been set up, enable the storageVolume. The default storage volume type is emptydir. Once enabled, JFR data is redirected automatically.

```yaml
storageVolume:
  type: emptydir # Acceptable values are pvc and emptydir
  volumeName: storage-volume
  # pvc: storage-pvc # Specify this only if the type is pvc
  isBlockVolume: false # Set this to true if a block volume is used
```

With emptydir, the corresponding logs are deleted along with the pod. To retain the logs:

- Set the storageVolume.type to pvc.

- Uncomment storageVolume.pvc.

- Specify the name of the PVC that you created.

```yaml
# The storage volume must specify the PVC to be used for persistent storage.
storageVolume:
  type: pvc # Acceptable values are pvc and emptydir
  volumeName: storage-volume
  pvc: storage-pvc # Specify this only if the type is pvc
  isBlockVolume: false # Set this to true if a block volume is used
```

Troubleshooting Issues with Traefik, UIM UI, and WebLogic Administration Console

This section describes how to troubleshoot issues with access to the UIM UI clients, WLST, and WebLogic Administration Console.

It is assumed that Traefik is the Ingress controller being used and the domain name suffix is uim.org. You can modify the instructions to suit any other domain name suffix that you may have chosen.

Table 13-1 URLs for Accessing UIM Clients

| Client | If Not Using Oracle Cloud Infrastructure Load Balancer | If Using Oracle Cloud Infrastructure Load Balancer |
|---|---|---|
| UIM Web Client | http://instance.project.uim.org:30305/Inventory | http://instance.project.uim.org:80/Inventory |
| WLST | http://t3.instance.project.uim.org:30305 | http://t3.instance.project.uim.org:80 |
| WebLogic Admin Console | http://admin.instance.project.uim.org:30305/console | http://admin.instance.project.uim.org:80/console |
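To avoid typos when adapting the table to your own names, the following shell function sketches how the three URLs are composed from the instance, project, domain name suffix, and port (30305 for the Traefik NodePort, 80 with an Oracle Cloud Infrastructure load balancer, as in Table 13-1). The function name is hypothetical and not part of the toolkit.

```shell
# Print the UIM Web Client, WLST, and Admin Console URLs from Table 13-1.
# Arguments: instance project [domain_suffix] [port]
uim_urls() {
  instance=$1
  project=$2
  suffix=${3:-uim.org}
  port=${4:-30305}
  host="${instance}.${project}.${suffix}"
  printf 'http://%s:%s/Inventory\n' "$host" "$port"
  printf 'http://t3.%s:%s\n' "$host" "$port"
  printf 'http://admin.%s:%s/console\n' "$host" "$port"
}
```

For example, `curl -s -o /dev/null -w '%{http_code}\n' "$(uim_urls instance project | head -n 1)"` prints the HTTP status code returned for the UIM Web Client URL, which helps tell a 503 apart from a 404.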

Error: Http 503 Service Unavailable (for UIM UI Clients)

This error occurs if the managed servers are not running.

To resolve this issue:

- Check the status of the managed servers and ensure that at least one managed server is up and running:

  ```
  kubectl -n project get pods
  ```

- Log in to the WebLogic Admin Console, navigate to the Deployments section, and check if the State column for oracle.communications.inventory shows Active. The value in the Targets column indicates the name of the cluster.

If the application is not Active, check the managed server logs for errors. For example, the UIM DB connection pool may not have been created. The following could be the reasons for this:

- DB connectivity could not be established due to reasons such as an expired password, a locked account, and so on.

- The DB schema health check policy failed.

Resolution: To resolve this issue, address the errors that prevent the application from becoming Active. Depending on the nature of the corrective action you take, you may have to perform one of the following procedures:

- Restart the instance by running restart-instance.sh.

- Delete the instance and create a new one by running delete-instance.sh followed by create-instance.sh.
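The first check in this procedure can be scripted. The following sketch reads `kubectl -n project get pods` output and counts managed server pods that are Running with all containers ready. It assumes managed server pods are named project-instance-ms1, project-instance-ms2, and so on; adjust the prefix if your pod names differ. The function name is hypothetical.

```shell
# Count Running, fully ready managed server pods in "kubectl get pods" output.
# Arguments: project instance. Reads the kubectl output on stdin.
# Assumption: managed server pods are named <project>-<instance>-ms<N>.
running_ms_count() {
  awk -v prefix="$1-$2-ms" '
    NR > 1 && index($1, prefix) == 1 {
      split($2, ready, "/")               # READY column, for example 1/1
      if ($3 == "Running" && ready[1] == ready[2] && ready[2] > 0) n++
    }
    END { print n + 0 }'
}
```

For example, `kubectl -n project get pods | running_ms_count project instance`; a result of 0 matches the 503 symptom described above.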

Security Warning in Mozilla Firefox

If you use Mozilla Firefox to connect to a UIM cloud native instance via HTTP, your connection may fail with a security warning. You may notice that the URL you entered automatically changes to https://. This can happen even if HTTPS is disabled for the UIM instance. If HTTPS is enabled, it only happens if you are using a self-signed (or otherwise untrusted) certificate.

If you wish to continue with the connection to the UIM instance using HTTP, in the configuration settings for your Firefox browser (URL: "about:config"), set the network.stricttransportsecurity.preloadlist parameter to FALSE.

Error: Http 404 Page not found

This is the most common problem that you may encounter.

To resolve this issue:

- Check the Domain Name System (DNS) configuration.

  Note:

  These steps apply to local DNS resolution via the hosts file. For any other DNS resolution, such as corporate DNS, follow the corresponding steps.

  The hosts configuration file is located at:

  - On Windows: C:\Windows\System32\drivers\etc\hosts

  - On Linux: /etc/hosts

  Check if the following entry exists in the hosts configuration file of the client machine from which you are trying to connect to UIM:

  - Local installation of Kubernetes without Oracle Cloud Infrastructure load balancer:

    ```
    Kubernetes_Cluster_Master_IP instance.project.uim.org t3.instance.project.uim.org admin.instance.project.uim.org
    ```

  - If Oracle Cloud Infrastructure load balancer is used:

    ```
    Load_balancer_IP instance.project.uim.org t3.instance.project.uim.org admin.instance.project.uim.org
    ```

- Check the browser settings and ensure that *.uim.org is added to the No proxy list, if your proxy cannot route to it.

- Check if the Traefik pod is running. If it is not, install or update the Traefik Helm chart:

  ```
  kubectl -n traefik get pod
  NAME                                READY   STATUS    RESTARTS   AGE
  traefik-operator-657b5b6d59-njxwg   1/1     Running   0          128m
  ```

- Check if the Traefik service is running. If it is not, install or update the Traefik Helm chart:

  ```
  kubectl -n traefik get svc
  NAME                         TYPE           CLUSTER-IP      EXTERNAL-IP     PORT(S)                      AGE
  oci-lb-service-traefik       LoadBalancer   10.96.136.31    100.77.18.141   80:31115/TCP                 20d    <---- Expected in OCI environment only
  traefik-operator             NodePort       10.98.176.16    <none>          443:30443/TCP,80:30305/TCP   141m
  traefik-operator-dashboard   ClusterIP      10.103.29.101   <none>          80/TCP                       141m
  ```

- Check if Ingress is configured by running the following command:

  ```
  kubectl -n project get ingressroute
  NAME                       AGE
  project-instance-traefik   89m
  ```

  If Ingress is not configured, install it by running the following command:

  ```
  $UIM_CNTK/scripts/create-ingress.sh -p project -i instance -s specPath
  ```

- Check if the Traefik back-end systems are registered by using one of the following options:

  - Run the following commands to check if your project namespace is being monitored by Traefik. Absence of your project namespace means that your managed server back-end systems are not registered with Traefik.

    ```
    $ cd $UIM_CNTK
    $ source scripts/common-utils.sh
    $ find_namespace_list 'namespaces' traefik traefik-operator
    "traefik","project_1", "project_2"
    ```

  - Check the Traefik Dashboard. First, add the following DNS entry in your hosts configuration file. Add the same entry regardless of whether you are using Oracle Cloud Infrastructure load balancer or not.

    ```
    Kubernetes_Access_IP traefik.uim.org
    ```

    Navigate to http://traefik.uim.org:30305/dashboard/ and check the back-end systems that are registered. If you cannot find your project namespace, install or upgrade the Traefik Helm chart. See "Installing the Traefik Ingress Controller as Alternate (Deprecated)".

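Several of these checks compare command output against expected values. The following shell functions are a minimal sketch of those comparisons, assuming the output formats shown above; the function names are hypothetical and are not part of the toolkit. They print the hosts entry to add, verify that a hosts file already contains the three UIM host names, extract the HTTP NodePort of the traefik-operator service, and check whether a namespace appears in the find_namespace_list output.

```shell
# Print the hosts-file line for an instance. Arguments: ip instance project [suffix]
hosts_entry() {
  host="$2.$3.${4:-uim.org}"
  printf '%s %s t3.%s admin.%s\n' "$1" "$host" "$host" "$host"
}

# Succeed only if every UIM host name appears on a non-comment line of the file.
# Arguments: hosts_file instance project [suffix]
hosts_has_entries() {
  base="$2.$3.${4:-uim.org}"
  for name in "$base" "t3.$base" "admin.$base"; do
    awk -v h="$name" '
      $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
      END { exit !found }' "$1" || { echo "missing: $name" >&2; return 1; }
  done
}

# Print the node port mapped to port 80 of the traefik-operator NodePort service.
# Reads "kubectl -n traefik get svc" output on stdin.
traefik_http_nodeport() {
  awk '$1 == "traefik-operator" && $2 == "NodePort" {
    n = split($5, ports, ",")
    for (i = 1; i <= n; i++)
      if (ports[i] ~ /^80:/) { split(ports[i], p, "[:/]"); print p[2] }
  }'
}

# Succeed if namespace $2 is in the list printed by find_namespace_list ($1).
ns_in_list() {
  printf '%s\n' "$1" | tr ',' '\n' | tr -d ' "' | grep -qx -- "$2"
}
```

For example, `kubectl -n traefik get svc | traefik_http_nodeport` should print 30305 for the output shown above, and `hosts_has_entries /etc/hosts instance project` reports any host name that is still missing.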

Reloading Instance Backend Systems

If your instance's ingress is present, yet Traefik does not recognize the URLs of your instance, try to unregister and register your project namespace again. You can do this by using the unregister-namespace.sh and register-namespace.sh scripts in the toolkit.

Note:

Unregistering a project namespace will stop access to any existing instances in that namespace that were working prior to the unregistration.

Debugging Traefik Access Logs

To increase the log level and debug Traefik access logs:

Note:

While upgrading the Traefik operator, set the Traefik chart version according to the UIM Compatibility Matrix.

- Run the following command:

  ```
  $ helm upgrade traefik-operator traefik/traefik --version 15.1.0 --namespace traefik --reuse-values --set logs.access.enabled=true
  ```

  A new instance of the Traefik pod is created automatically.

- Look for the pod that was created most recently and follow its logs:

  ```
  $ kubectl get po -n traefik
  NAME                        READY   STATUS    RESTARTS   AGE
  traefik-operator-pod_name   1/1     Running   0          5s
  $ kubectl -n traefik logs -f traefik-operator-pod_name
  ```

- Enabling access logs generates large amounts of information in the logs. After debugging is complete, disable access logging by running the following command:

  ```
  $ helm upgrade traefik-operator traefik/traefik --version 15.1.0 --namespace traefik --reuse-values --set logs.access.enabled=false
  ```
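Because the enable and disable commands differ in only one value, a small wrapper can reduce mistakes. This is a sketch, not a toolkit script: the function name is hypothetical, the chart version is passed explicitly because it must follow the UIM Compatibility Matrix, and setting HELM=echo prints the command instead of running it.

```shell
# Toggle Traefik access logs with the helm upgrade command shown above.
# Arguments: on|off chart_version (per the UIM Compatibility Matrix).
# Set HELM=echo for a dry run.
traefik_access_logs() {
  case $1 in
    on) enabled=true ;;
    off) enabled=false ;;
    *) echo "usage: traefik_access_logs on|off chart_version" >&2; return 2 ;;
  esac
  if [ -z "$2" ]; then
    echo "chart version is required" >&2
    return 2
  fi
  ${HELM:-helm} upgrade traefik-operator traefik/traefik --version "$2" \
    --namespace traefik --reuse-values --set "logs.access.enabled=$enabled"
}
```

To follow the newest Traefik pod without copying its name, one option is `kubectl -n traefik logs -f "$(kubectl -n traefik get pods --sort-by=.metadata.creationTimestamp -o name | tail -n 1)"`.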

Cleaning Up Traefik

Note:

Cleanup is not usually required and should be performed only as a last resort. Before cleaning up, make a note of the project namespaces that Traefik monitors. Once Traefik is re-installed, run $UIM_CNTK/scripts/register-namespace.sh for each of the previously monitored project namespaces.

Warning: Uninstalling Traefik in this manner will interrupt access to all UIM instances in the monitored project namespaces.

```
helm uninstall traefik-operator -n traefik
```

Cleaning up Traefik does not impact actively running UIM instances. However, they cannot be accessed during that time. Once the Traefik chart is re-installed with all the monitored namespaces, and these namespaces are successfully registered as Traefik back-end systems, UIM instances can be accessed again.

Setting up Logs

As described earlier in this guide, UIM and WebLogic logs can be stored in the individual pods or in a location provided via a Kubernetes Persistent Volume. The PV approach is strongly recommended, both to allow for proper preservation of logs (as pods are ephemeral) and to avoid straining the in-pod storage in Kubernetes.

Note:

Replace ms1 with the appropriate managed server or with "admin".

When a PV is configured, stdout logs are available at the following path starting from the root of the PV storage:

project-instance/server/<servername>/logs

When a PV is configured, main logs are available at the following path starting from the root of the PV storage:

project-instance/server/introspector/logs

The following logs are available in the location (within the pod or in PV) based on the specification:

- admin.log: Main log file of the admin server

- admin.out: stdout from the admin server

- admin_nodemanager.log: Main log from the Node Manager on the admin server

- admin_nodemanager.out: stdout from the Node Manager on the admin server

- admin_access.log: Log of HTTP/HTTPS access to the admin server

- ms1.log: Main log file of the ms1 managed server

- ms1.out: stdout from the ms1 managed server

- ms1_nodemanager.log: Main log from the Node Manager on the ms1 managed server

- ms1_nodemanager.out: stdout from the Node Manager on the ms1 managed server

- ms1_access.log: Log of HTTP/HTTPS access to the ms1 managed server

All the logs in the above list for "ms1" are repeated for each running managed server, with the logs being named for their originating managed server in each case.

In addition to these logs:

- Each configured JMS server has its own log file named <server>_ms<x>-jms_messages.log (for example, uim_jms_server_ms2-jms_messages.log). By default, the JMS queue and topic logs are disabled. You can enable them temporarily from the WebLogic Administration Console.

- When custom templates for a SAF agent are configured, the SAF agent has a log file named <server>_ms<x>-jms_messages.log (for example, uim_saf_agent_ms1-jms_messages.log).
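When you look for a specific server's files, the naming pattern above can be generated rather than typed. The following function is a hypothetical helper that assumes exactly the file names listed above; it prints the five standard file names for each server you pass (for example, admin and ms1). It does not cover the JMS and SAF agent logs.

```shell
# Print the standard log file names listed above for each server name given.
# Example: server_log_names admin ms1 ms2
server_log_names() {
  for server in "$@"; do
    for suffix in .log .out _nodemanager.log _nodemanager.out _access.log; do
      printf '%s%s\n' "$server" "$suffix"
    done
  done
}
```

For example, `for f in $(server_log_names ms1); do find "$PV_ROOT/project-instance" -name "$f"; done` locates the ms1 files, where PV_ROOT is a placeholder for the mount point of your PV storage.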

UIM Cloud Native and Oracle Enterprise Manager

UIM cloud native instances contain a deployment of the Oracle Enterprise Manager application, reachable at the admin server URL with the path "/em". However, the use of Enterprise Manager in this Kubernetes context is not supported. Do not use the Enterprise Manager to monitor UIM. Use standard Kubernetes pod-based monitoring and UIM cloud native logs and metrics to monitor UIM.

Recovering a UIM Cloud Native Database Schema

When the UIM DB Installer fails during an installation, it exits with an error message. You must then find and resolve the issue that caused the failure. You can re-run the DB Installer after the issue (for example, a space or permissions issue) is rectified. You do not have to roll back the DB.

Note:

Remember to uninstall the failed DB Installer Helm chart before rerunning it. Contact Oracle Support for further assistance.

It is recommended that you always run the DB Installer with the logs directed to a Persistent Volume so that you can examine the log for errors. The log file is located at: filestore/project-instance/uim-dbinstaller/logs/DbVersionController.log.

- Delete the new schema or use a new schema user name for the subsequent installation.

- Restart the installation of the database schema from the beginning.

- Fix the issue. Use the information in the log or error messages to fix the issue before you restart the upgrade process. For information about troubleshooting log or error messages, see UIM Cloud Native System Administrator's Guide.
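To find the failure quickly in DbVersionController.log, a simple pattern search is often enough. The patterns below (Oracle ORA- error codes, ERROR, and Exception) are assumptions about what the DB Installer writes, not a documented log format; widen or narrow them as needed. The function name is hypothetical.

```shell
# Print matching lines, with line numbers, from a DB Installer log.
# Argument: path to DbVersionController.log
# Assumption: failures show up as ORA-nnnnn codes, "ERROR", or "Exception".
dbinstaller_errors() {
  grep -n -E 'ORA-[0-9]{5}|ERROR|Exception' "$1"
}
```

For example, `dbinstaller_errors filestore/project-instance/uim-dbinstaller/logs/DbVersionController.log | head -n 20` shows the first matches, which usually point to the root cause.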

Common Problems and Solutions

This section describes some common problems that you may experience because you have run a script or a command erroneously or you have not properly followed the recommended procedures and guidelines regarding setting up your cloud environment, components, tools, and services in your environment. This section provides possible solutions for such problems.

Domain Introspection Pod Does Not Start

Upon running create-instance.sh or upgrade-instance.sh, no change is observed. Running kubectl get pods -n project --watch shows that the introspector pod did not start at all.

The following are the potential causes and mitigations for this issue:

- WebLogic Kubernetes Operator (WKO) is not up or not healthy: Confirm if WKO is up by running kubectl get pods -n $WLSKO_NS. There should be one WKO pod in the Running state. If there is a pod, check its logs. If a pod is not there, check if WKO is uninstalled. You may need to terminate an unhealthy pod or reinstall WKO.

- Project is not registered with WKO: Run the following command:

  ```
  kubectl get cm -n $WLSKO_NS -o yaml weblogic-operator-cm | grep domainNamespaces
  ```

  Your project namespace must be in the list that the command returns. If it is not listed, run $UIM_CNTK/scripts/register-namespace.sh.

Other causes are infrastructure-related issues such as worker capacity and user RBAC.

If the introspector does not start, the operator may not be monitoring your namespace, or your namespace may not have the label that the operator is monitoring. See https://oracle.github.io/weblogic-kubernetes-operator/managing-operators/common-mistakes/#forgetting-to-configure-the-operator-to-monitor-a-namespace for more information on operator namespace monitoring.
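The registration check can be made explicit. This sketch parses the domainNamespaces line returned by the command above and succeeds only if your namespace is in it. The function name is hypothetical, and it assumes a comma-separated value as printed by grep. If your operator selects namespaces by label instead, inspect the namespace labels with kubectl get namespace project --show-labels.

```shell
# Succeed if namespace $1 appears in a "domainNamespaces: a,b,c" line on stdin.
# Assumption: the value is a comma-separated list, optionally quoted.
ns_registered_with_wko() {
  sed -n 's/.*domainNamespaces:[[:space:]]*//p' |
    tr -d '"' | tr ',' '\n' | tr -d ' ' | grep -qx -- "$1"
}
```

For example: `kubectl get cm -n "$WLSKO_NS" -o yaml weblogic-operator-cm | grep domainNamespaces | ns_registered_with_wko project || echo "project is not registered"`.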

Domain Introspection Pod Status

```
kubectl get pods -n namespace

## healthy status looks like this
NAME                                READY   STATUS    RESTARTS   AGE
project-instance-introspect-hzh9t   1/1     Running   0          3s
```

```
NAME                                READY   STATUS         RESTARTS   AGE
project-instance-introspect-r2d6j   0/1     ErrImagePull   0          5s

### OR

NAME                                READY   STATUS             RESTARTS   AGE
project-instance-introspect-r2d6j   0/1     ImagePullBackOff   0          45s
```

This shows that the introspection pod status is not healthy. If the image can be pulled, it is possible that it took a long time to pull the image.

To resolve this issue, verify the image name and tag, and verify that the pod can access the image from the repository.

You can also try the following:

- Increase the value of podStartupDeadlineSeconds in the instance specification. Start with a very high timeout value and then monitor the average time it takes, because it depends on the speed with which the images are downloaded and how busy your cluster is. Once you have a good idea of the average time, reduce the timeout to a value that covers the average time plus some buffer.

- Pull the container image manually on all Kubernetes nodes where the UIM cloud native pods can be started up.
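A quick way to spot the image-pull states above is to filter the pod listing for the introspector pod. The following sketch assumes the pod naming shown in the examples (an -introspect- infix) and the default kubectl column layout; the function name and the hint texts are illustrative.

```shell
# Print each introspector pod with its STATUS and a short hint.
# Reads "kubectl get pods -n project" output on stdin.
# Assumption: introspector pod names contain "-introspect-".
introspector_hint() {
  awk '$1 ~ /-introspect-/ {
    hint = "ok"
    if ($3 == "ErrImagePull" || $3 == "ImagePullBackOff")
      hint = "check image name, tag, and registry access"
    else if ($3 == "Pending" || $3 == "ContainerCreating")
      hint = "still starting; see podStartupDeadlineSeconds"
    else if ($3 != "Running" && $3 != "Completed")
      hint = "inspect with kubectl describe pod"
    print $1 ": " $3 " (" hint ")"
  }'
}
```

For example, `kubectl get pods -n project | introspector_hint`. For image-pull states, `kubectl describe pod pod_name -n project` usually shows the registry error in its Events section.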

Domain Introspection Errors Out

Sometimes, the domain introspector pod runs but ends with an error. To view its logs, run:

```
kubectl logs introspector_pod -n project
```

The following are the possible causes for this issue:

- RCU schema is pre-existing: If the logs show the following, then the RCU schema could be pre-existing:

  ```
  WLSDPLY-12409: createDomain failed to create the domain: Failed to write domain to /u01/oracle/user_projects/domains/domain: wlst.writeDomain(/u01/oracle/user_projects/domains/domain) failed : Error writing domain: 64254: Error occurred in "OPSS Processing" phase execution 64254: Encountered error: oracle.security.opss.tools.lifecycle.LifecycleException: Error during configuring DB security store. Exception oracle.security.opss.tools.lifecycle.LifecycleException: The schema FMW1_OPSS is already in use for security store(s). Please create a new schema.. 64254: Check log for more detail.
  ```

  This could happen because the database was reused or cloned from a UIM cloud native instance. If this is so, and you wish to reuse the RCU schema as well, provide the required secrets. For details, see "Reusing the Database State".

  If you do not have the secrets required to reuse the RCU instance, you must use the UIM cloud native DB Installer to create a new RCU schema in the DB. Use this new schema in your rcudbsecret. If you have login details for the old RCU users in your rcudbsecret, you can use the UIM cloud native DB Installer to delete and re-create the RCU schema in place. Either of these options gives you a clean slate for your next attempt.

  Finally, it is possible that this was a clean RCU schema but the introspector ran into an issue after RCU data population but before it could generate the wallet secret (opssWF). If this is the case, debug the introspector failure and then use the UIM cloud native DB Installer to delete and re-create the RCU schema in place before the next attempt.

- Fusion Middleware cannot access the RCU: If the introspector logs show the following error, then Fusion Middleware could not access the schema inside the RCU DB.

  ```
  WLSDPLY-12409: createDomain failed to create the domain: Failed to get FMW infrastructure database defaults from the service table: Failed to get the database defaults: Got exception when auto configuring the schema component(s) with data obtained from shadow table: Failed to build JDBC Connection object:
  ```

  Typically, this happens when wrong values are entered while creating secrets for this deployment. Less often, the cause is a corrupted or invalid RCU DB. Re-create your secrets, verifying the credentials, and drop and re-create the RCU DB.
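To tell these two causes apart quickly, you can save the introspector log to a file and search it for the messages quoted above. The following sketch does this; the function name and the labels it prints are hypothetical, and only the quoted message fragments come from this section.

```shell
# Classify an introspector log by the failure messages quoted above.
# Argument: file containing "kubectl logs introspector_pod -n project" output.
introspector_cause() {
  if grep -q 'is already in use for security store' "$1"; then
    echo "rcu-schema-in-use"           # see "RCU schema is pre-existing"
  elif grep -q 'Failed to get FMW infrastructure database defaults' "$1"; then
    echo "rcu-not-accessible"          # see "Fusion Middleware cannot access the RCU"
  elif grep -q 'WLSDPLY-12409' "$1"; then
    echo "createDomain-failed-other"   # createDomain failed for another reason
  else
    echo "unknown"
  fi
}
```

For example: `kubectl logs introspector_pod -n project > introspector.log && introspector_cause introspector.log`.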

Recovery After Introspection Error

If the introspection fails during instance creation, once you have gathered the required information and have a solution, delete the instance and then re-run the instance creation script after fixing the specification, model extension, or other environmental cause of the failure.

If the introspection fails while upgrading a running instance, then do the following:

- Make the change to fix the introspection failure. Trigger an instance upgrade. If this results in successful introspection, the recovery process stops here.

- If the instance upgrade in the first step fails to trigger a fresh introspection, then do the following:

  - Roll back to the last good Helm release. First, run the helm history -n project project-instance command to identify the version in the output that matches the running instance (that is, before the upgrade that led to the introspection failure). The timestamp on each version helps you identify the version. Once you know the "good" version, roll back to that version by running:

    ```
    helm rollback -n project project-instance version
    ```

    Monitor the pods in the instance to ensure only the Admin server and the appropriate number of Managed Server pods are running.

  - Upgrade the instance with the fixed specification.

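Because rolling back to the wrong revision can make things worse, a wrapper that accepts only an explicit revision number can help. This is a hypothetical sketch around the helm rollback command above. Helm treats revision 0 (or an omitted revision) as "the previous release", which may not be the good one, so the sketch rejects it. Set HELM=echo to print the command instead of running it.

```shell
# Roll back project-instance to an explicit, known-good Helm revision.
# Arguments: project instance revision (taken from "helm history").
# Set HELM=echo for a dry run.
rollback_instance() {
  project=$1
  instance=$2
  revision=$3
  case $revision in
    ''|*[!0-9]*) echo "revision must be a number from helm history" >&2; return 2 ;;
  esac
  if [ "$revision" -eq 0 ]; then
    echo "revision 0 means the previous release; pass the good revision explicitly" >&2
    return 2
  fi
  ${HELM:-helm} rollback -n "$project" "$project-$instance" "$revision"
}
```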

Instance Deletion Errors with Timeout

You use the delete-instance.sh script to delete an instance that is no longer required. The script attempts to do this in a graceful manner and is configured to wait up to 10 minutes for any running pods to shut down. If the pods remain after this time, the script times out and exits with an error after showing the details of the leftover pods.

The leftover pods can be UIM pods (Admin Server, Managed Server) or the DBInstaller pod.

For the leftover UIM pods, see the WKO logs to identify why cleanup has not run. Delete the pods manually if necessary, using the kubectl delete commands.

A leftover DBInstaller pod occurs only if install-uimdb.sh was interrupted or failed in its last run. This should have been identified and handled at that time. However, to complete the cleanup, run helm ls -n project to find the failed DBInstaller release, and then run helm uninstall -n project release. Monitor the pods in the project namespace until the DBInstaller pod disappears.
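The DBInstaller cleanup can be sketched as follows. It relies on helm ls --failed -q, which lists only the names of failed releases, and then uninstalls those whose names match a pattern. The function name is hypothetical, and the default pattern dbinstaller is an assumption about how the release is named, so check the helm ls output first. HELM can point at a stub for testing.

```shell
# Uninstall failed Helm releases in a project namespace whose names match a pattern.
# Arguments: project [pattern]. The default pattern "dbinstaller" is an assumption.
cleanup_failed_releases() {
  project=$1
  pattern=${2:-dbinstaller}
  for release in $(${HELM:-helm} ls -n "$project" --failed -q | grep -- "$pattern"); do
    echo "uninstalling failed release: $release" >&2
    ${HELM:-helm} uninstall -n "$project" "$release"
  done
}
```

Afterwards, `kubectl get pods -n project --watch` lets you confirm that the DBInstaller pod disappears.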

Changing the WebLogic Kubernetes Operator Log Level

Some situations, such as certain kinds of introspection failures or unexpected behavior from the operator, may require analysis of the WKO logs. The default log level for the operator is INFO.

For information about changing the log level for debugging, see the documentation at: https://oracle.github.io/weblogic-kubernetes-operator/managing-operators/troubleshooting/#operator-and-conversion-webhook-logging-level.

Deleting and Re-creating the WLS Operator

You may need to delete a WLS operator and re-create it. You do this when you want to use a new version of the operator where upgrade is not possible, or when the installation is corrupted.

When the operator is removed, the existing UIM cloud native instances continue to function. However, they cannot process updates (when you run upgrade-instance.sh) or respond to Kubernetes events such as the termination of a pod.

Review the WKO troubleshooting documentation to avoid errors while installing the operator. To uninstall the operator, see "Uninstall the Operator".

Register and unregister namespaces by using the register-namespace.sh and unregister-namespace.sh scripts from the CNTK. You can install the operator by following the instructions in the WKO documentation and then register all the projects again, one after the other, as described in "Registering the Namespace".


Normally, the remove-operator.sh script fails if there are UIM cloud native projects registered with the operator. But you can use the -f flag to force the removal. When this is done, the script prints out the list of registered projects and reminds you to manually re-register them (by running register-namespace.sh) after reinstalling the operator.

You can install the operator as usual and then register all the projects again, one by one by running register-namespace.sh -p project -t wlsko.
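If you have many projects to re-register, a loop saves effort. The following sketch calls register-namespace.sh once per project with the -p and -t options shown above and stops at the first failure. It assumes UIM_CNTK is set; the function name and the REGISTER override (for substituting a stub in a dry run) are hypothetical. The -t value wlsko comes from this section; other targets depend on what your toolkit version supports.

```shell
# Re-register projects with a target after reinstalling it.
# Arguments: target project... (for example: wlsko project_1 project_2)
# REGISTER (hypothetical) overrides the script path for dry runs.
reregister_projects() {
  target=$1
  shift
  reg=${REGISTER:-$UIM_CNTK/scripts/register-namespace.sh}
  for project in "$@"; do
    "$reg" -p "$project" -t "$target" || return 1
  done
}
```

For example, `reregister_projects wlsko project_1 project_2` uses the list of projects that remove-operator.sh -f printed.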

Lost or Missing opssWF and opssWP Contents

For a UIM instance to successfully connect to a previously initialized set of DB schemas, it needs to have the opssWF (OPSS Wallet File) and opssWP (OPSS Wallet-file Password) secrets in place. The $UIM_CNTK/scripts/manage-instance-credentials.sh script can be used to set these up if they are not already present.

If these secrets or their contents are lost, you can delete and recreate the RCU schemas (using $UIM_CNTK/scripts/install-uimdb.sh with command code 2). This deletes data (such as some user preferences, MDS, and so on) stored in the RCU schemas and requires redeploying cartridges. Alternatively, if there is a WebLogic domain currently running against that DB (or its clone), you can run the "exportEncryptionKey" offline WLST command to dump out the "ewallet.p12" file. This command also takes a new encryption password. See "Oracle Fusion Middleware WLST Command Reference for Infrastructure Security" for details. The contents of the resulting ewallet.p12 file can be used to recreate the opssWF secret, and the encryption password can be used to recreate the opssWP secret. This method is also suitable when a DB (or the clone of a DB) from a traditional UIM installation needs to be brought into UIM cloud native.

Clock Skew or Delay

When submitting a JMS message over the Web Service queue, you might see the following in the SOAP response:

```
Security token failed to validate.
weblogic.xml.crypto.wss.SecurityTokenValidateResult@5f1aec15[status: false][msg UNT
Error:Message older than allowed MessageAge]
```

Oracle recommends synchronizing the time across all machines that are involved in communication. See "Synchronizing Time Across Servers" for more details. Implement Network Time Protocol (NTP) across the hosts involved, including the Kubernetes cluster hosts.
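Before adjusting any timeouts, it can help to confirm whether each host's clock is actually synchronized. On systemd-based hosts, timedatectl reports this; the following sketch reads that output and succeeds only when synchronization is reported. It accepts both the "System clock synchronized:" label and the older "NTP synchronized:" label. The function name is hypothetical.

```shell
# Succeed if "timedatectl" output on stdin reports a synchronized clock.
# Accepts "System clock synchronized: yes" and the older "NTP synchronized: yes".
clock_synced() {
  awk -F': *' '/(System clock|NTP) synchronized:/ { s = $2 }
    END { exit !(s == "yes") }'
}
```

For example, `for h in node1 node2; do ssh "$h" timedatectl | clock_synced || echo "$h: clock not synchronized"; done`, where node1 and node2 stand for your cluster hosts.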

It is also possible to fix this temporarily through configuration by adding the following properties to java_options in the project specification. Set at the project level, as shown here, they apply to all managed servers. The values are in milliseconds; 72000000 ms is 20 hours.

```yaml
managedServers:
  project:
    #JAVA_OPTIONS for all managed servers at project level
    java_options: -Dweblogic.wsee.security.clock.skew=72000000 -Dweblogic.wsee.security.delay.max=72000000
```

Cartridge Deployment Error

When the secret for the encrypted WebLogic password, <project>-<instance>-weblogic-encrypted-credentials, is incorrect, you may find the following errors during cartridge deployment:

```
[deployCartridge] Deployment of ora_uim_baseextpts (7.4.2.0.0) failed due to :
[deployCartridge] Exception: EJB Exception: ; nested exception is:
[deployCartridge] java.lang.NoClassDefFoundError: org/springframework/context/ApplicationContext
```

To resolve this issue:

- Delete the secret: <project>-<instance>-weblogic-encrypted-credentials.

- Generate the WebLogic encrypted password as follows:

  ```
  $ $UIM_CNTK/scripts/install-uimdb.sh -p project -i instance -s $SPEC_PATH -c 8
  ```

- Restart the managed servers as follows:

  ```
  $ $UIM_CNTK/scripts/restart-instance.sh -p project -i instance -s $SPEC_PATH -r ms
  ```
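The three steps can be run in sequence with a small wrapper such as the following sketch. It assumes UIM_CNTK and SPEC_PATH are set as in the commands above and that the secret lives in the project namespace; the function name is hypothetical. Setting RUN=echo prints each command instead of executing it, and the chain stops at the first failing step.

```shell
# Delete the encrypted-credentials secret, regenerate it, and restart the
# managed servers. Arguments: project instance.
# Assumptions: UIM_CNTK and SPEC_PATH are set; the secret is in the project
# namespace. Set RUN=echo for a dry run.
fix_encrypted_credentials() {
  project=$1
  instance=$2
  $RUN kubectl -n "$project" delete secret "$project-$instance-weblogic-encrypted-credentials" &&
    $RUN "$UIM_CNTK/scripts/install-uimdb.sh" -p "$project" -i "$instance" -s "$SPEC_PATH" -c 8 &&
    $RUN "$UIM_CNTK/scripts/restart-instance.sh" -p "$project" -i "$instance" -s "$SPEC_PATH" -r ms
}
```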

Show the encrypted merged model json file for Model in Image

The WebLogic Kubernetes Operator provides a script that shows the encrypted merged model JSON file for Model in Image:

```
~/weblogic-kubernetes-operator/operator/integration-tests/bash/show_merged_model.sh
```

Run the script with -h to list all the required parameters.

Sample usage:

```
show_merged_model.sh -i model-in-image:v1 -n sample-domain1-ns -p weblogic -d domain1
```

Upgrading WebLogic Operator

To upgrade the WebLogic Operator, you can use one of the following approaches:

- Operator Upgrade: Follow the Operator documentation for a standard upgrade process. Ensure that the target version is compatible with the current version within your Kubernetes cluster.

  Here are some points you need to consider before using this approach:

  - You do not need any additional Kubernetes resources.

  - You do not need to register the namespace again.

  - You cannot test with a canary namespace.

  - It is very challenging to roll back to the previous version.

- Phased Cutover Approach: Install the new WKO (WebLogic Kubernetes Operator) in a new namespace and configure it with a fresh label selector. Transition UIM namespaces by removing the old label and adding the new label to each namespace. Once all namespaces have successfully transitioned and are stable, uninstall the old WKO.

  Here are some points you need to consider before using this approach:

  - You can test with a canary namespace before full deployment.

  - You can perform a phased cutover while accommodating program timelines.

  - This approach supports an easy backout option by reverting the label change on UIM namespaces.

  - This approach requires modification of all UIM namespaces to use the new WKO.

  - Extra Kubernetes resources are in use until the old WKO is uninstalled.
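For the phased cutover, moving one namespace from the old operator to the new one can be done with a single kubectl label command that removes the old label and adds the new one. In the following sketch, the function name and the label keys and values (for example, wko-old=enabled and wko-new=enabled) are hypothetical; use whatever each operator's domainNamespaceLabelSelector Helm value is set to, with domainNamespaceSelectionStrategy set to LabelSelector. Swapping the two label arguments gives the backout. Set KUBECTL=echo to print the command.

```shell
# Move a namespace from the old WKO label selector to the new one.
# Arguments: namespace old_label new_label (each as key=value; hypothetical).
# Set KUBECTL=echo for a dry run.
cutover_namespace() {
  ns=$1
  old_key=${2%%=*}          # "wko-old=enabled" -> "wko-old"
  new_label=$3
  ${KUBECTL:-kubectl} label namespace "$ns" "$old_key-" "$new_label" --overwrite
}
```

For example, `cutover_namespace project_1 wko-old=enabled wko-new=enabled` moves a canary namespace; `cutover_namespace project_1 wko-new=enabled wko-old=enabled` moves it back.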

Known Issues

This section describes known issues that you may come across, their causes, and the resolutions.

SituationalConfig NullPointerException

In the managed server logs, you might notice a stack trace that indicates a NullPointerException in situational config.

This exception can be safely ignored.

Connectivity Issues During Cluster Re-size

When the cluster size changes, whether from the termination and re-creation of a pod, through an explicit upgrade to the cluster size, or due to a rolling restart, transient errors are logged as the system adjusts.

These transient errors can usually be ignored and stop after the cluster has stabilized with the correct number of Managed Servers in the Ready state.

If the error messages persist after the Ready state is achieved, look for secondary symptoms of a real problem, such as orders that are inexplicably stuck or otherwise processing abnormally. Examples of these connectivity error messages include:

- An MDB is unable to connect to a JMS destination. The specific MDB and JMS destination can vary, such as:

  - The Message-Driven EJB inventoryQueueListener is unable to connect to the JMS destination inventoryWSQueue.

  - The Message-Driven EJB ActivityListenerBean is unable to connect to the JMS destination inventoryActivityQueue.

- Failed to Initialize JNDI context. Connection refused; No available router to destination. This type of error is seen in an instance where SAF is configured.

- Failed to process events for event type[AutomationEvents].

- Consumer destination was closed.
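To judge whether these messages persist after all Managed Servers are Ready, you can count them in a managed server log, then count again a few minutes later and compare. The following sketch matches fragments of the messages listed above, case-insensitively; the exact text in your logs may differ, so treat the patterns as a starting point. The function name is hypothetical.

```shell
# Count lines in a managed server log that match the connectivity messages above.
# Argument: log file (for example ms1.log). Prints the count; grep exits 1 when
# the count is 0. The patterns are fragments of the messages listed above.
count_connectivity_errors() {
  grep -c -i -E 'is unable to connect to the JMS destination|Failed to Initialize JNDI context|Failed to process events for event type|Consumer destination was closed' "$1"
}
```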

Upgrade Instance failed with spec.persistentvolumesource: Forbidden: is immutable after creation.

```
Error: UPGRADE FAILED: cannot patch "<project>-<instance>-nfs-pv" with kind
PersistentVolume: PersistentVolume "<project>-<instance>-nfs-pv" is invalid: spec.persistentvolumesource:
Forbidden: is immutable after creation
Error in upgrading UIM helm chart
```

To resolve this issue:

- Disable NFS by setting the nfs.enabled parameter to false and run the upgrade-instance script. This removes the PV from the instance.

- Enable it again by setting nfs.enabled to true with the new NFS values, and then run upgrade-instance.

JMS Servers for Managed Servers are Reassigned to Remaining Managed Servers

When scaling down, the JMS servers for managed servers that no longer exist are reassigned to the remaining managed servers.

For example, when only one managed server is running, the WebLogic console shows the following server details for a JMS server:

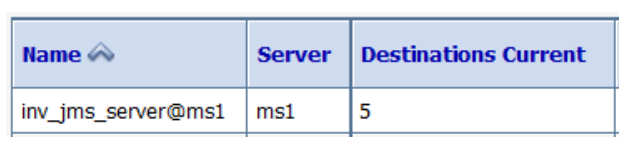

Figure 13-1 Server Details for a JMS Server with One Managed Server

Notice that uim_jms_server@ms1 is targeting

ms1.

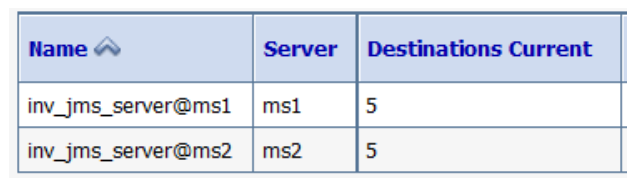

When scaled to 2 Managed Servers, the WebLogic console shows the following server details:

Figure 13-2 Server Details of WebLogic Console with Two Managed Servers

Notice that uim_jms_server@ms1 is targeting

ms1 and uim_jms_server@ms2 is targeting ms2.

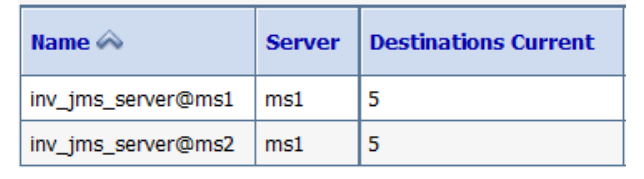

After scaling back to 1 managed server, the WebLogic console shows the following server details:

Figure 13-3 Server Details of WebLogic Console with One Managed Server

Notice that the JMS Server uim_jms_server@ms2 is not deleted and is targeting ms1.

This is expected behavior. It is a WebLogic feature and does not indicate an inconsistency in functionality.