3 AI Foundation Applications Standalone Processes

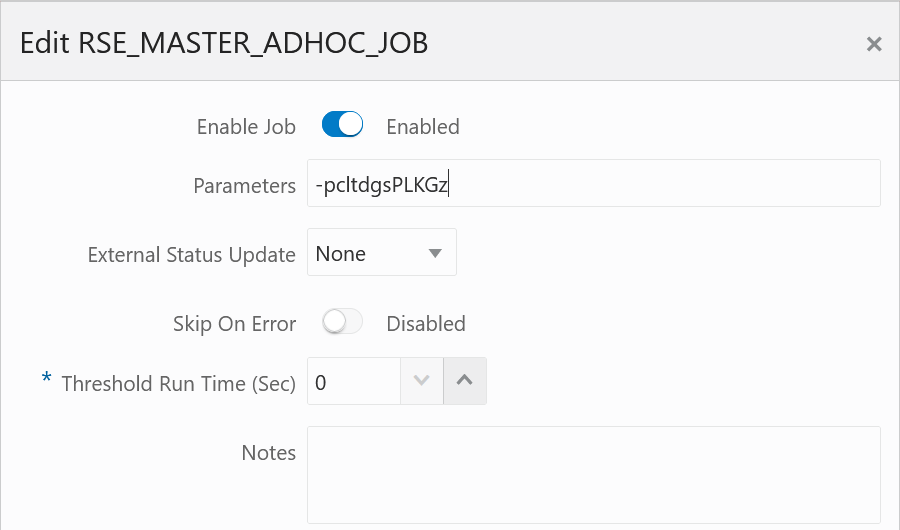

The primary function of standalone processes in the AI Foundation Applications (AIF APPS schedule in POM) is to move data from the data warehouse or external sources into the application data models, or to move data out of the platform to send it elsewhere. These process flows differ from the AIF DATA jobs in that most processes contain only one POM job. That job contains many individual programs in it, but the execution flow is determined by parameters passed into the job. This is done by editing the job’s parameters from the Batch Monitoring screen in POM:

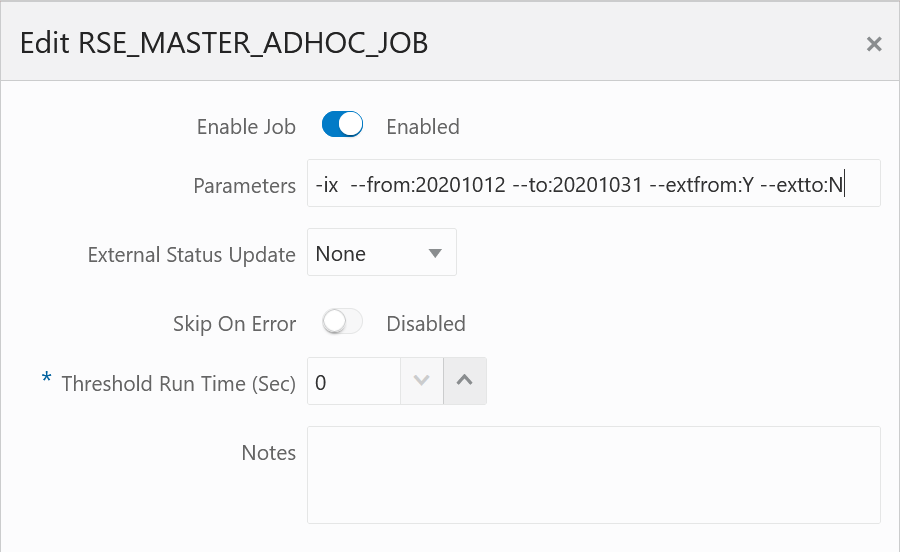

Each letter in the parameter string refers to a specific program or step in the execution flow, which is covered in more detail in the sections of this chapter. When additional parameters are used, such as start and end dates, they are written with double hyphens and colons, for example --from:20170101 --to:20170201.

This chapter includes the following programs:

Customer Metrics - Base Calculation

| Module Name | RSE_CUST_ENG_METRIC_BASE_ADHOC |

| Description | Calculate base values for customer engagement metrics. |

| Dependencies | RSE_SLS_TXN_ADHOC |

| Business Activity | Analytical Batch Processing |

Design Overview

This process aggregates sales transaction data for use in customer engagement metric calculations. The process runs for a range of weeks, depending on which weeks of sales have already been processed. It writes the results to a database table for downstream consumption. The RSE_SLS_TXN_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Customer Metrics - Final Calculation

| Module Name | RSE_CUST_ENG_METRIC_CALC_ADHOC |

| Description | Finalize the customer engagement metrics calculation. |

| Dependencies | RSE_CUST_ENG_METRIC_BASE_ADHOC |

| Business Activity | Analytical Batch Processing |

Design Overview

This process calculates customer engagement metrics based on numerous inputs, including sales transaction aggregates (for behavioral and predictive metrics) and product attributes (for attribute loyalty metrics). Currently, supported product attributes must have a group type of BRAND, STYLE, COLOR, LOC_LOYALTY, or PRICE_EFF_LOYALTY, as defined in RSE_BUSINESS_OBJECT_ATTR_MD. The RSE_CUST_ENG_METRIC_BASE_ADHOC job is normally a prerequisite for this, as it calculates the aggregated customer sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Customer Metrics - Loyalty Score

| Module Name | RSE_CUST_ATTR_LOY_ADHOC |

| Description | Calculate customer loyalty score metrics. |

| Dependencies | RSE_CUST_ENG_METRIC_BASE_ADHOC |

| Business Activity | Analytical Batch Processing |

Design Overview

This process calculates customer engagement loyalty data based on numerous inputs, including sales transactions and product attributes. Currently, supported product attributes must have a group type of BRAND, STYLE, COLOR, LOC_LOYALTY, or PRICE_EFF_LOYALTY, as defined in RSE_BUSINESS_OBJECT_ATTR_MD. The RSE_CUST_ENG_METRIC_BASE_ADHOC job is normally a prerequisite for this, as it calculates the aggregated customer sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Data Cleanup Utility

| Module Name | AIF_APPS_MAINT_DATA_CLEANUP_ADHOC_PROCESS |

| Description | Erases database tables within the AIF Apps database schema (RASE01) |

| Dependencies | None |

| Business Activity | Initial Data Loads |

Design Overview

As you load and reload data into AIF applications, you may run into conflicts or constraint violations that require you to purge the old data causing the issues. An ad hoc process is available in the AIF APPS schedule to facilitate this cleanup activity. The job invokes the rse_data_cleanup.ksh program. The cleanup performed is determined by the parameters passed into the POM job for each execution. Initially, no parameter is set on the job in POM; the user enters parameters according to their requirements, and the job truncates tables based on those parameters.

Use the parameter descriptions below to identify the parameters you need to use.

-h <input value>

This parameter indicates which hierarchy type will be cleaned up (PRODUCT, LOCATION, CALENDAR, PROMOTION, or CUSTSEG).

Valid values:

- ALL - All hierarchy records will be cleaned up. This includes the Product, Location, Calendar, Promotion, and Customer Segment hierarchies. Alternate hierarchies are also included for location and product.
- PRODUCT - Product Hierarchy Data
- LOCATION - Location Hierarchy Data
- CALENDAR - Calendar Hierarchy Data
- PROMOTION - Promotion Hierarchy Data
- CUSTSEG - Customer Segment Hierarchy Data

Note: If this parameter is not indicated, no hierarchies will be cleaned up, even if you are using the other parameters to clean app data.

-r <input value>

This parameter indicates whether all tables referencing the hierarchy IDs directly or indirectly will also be deleted.

Valid values:

- Y (Yes)
- N (No)

Default value is Y to ensure no stranded records will remain. This means any data referencing a hierarchy ID will be purged along with the hierarchy itself. All affected tables will be deleted in full; no data will be preserved.

-a <input value>

This parameter indicates whether AIF application tables (such as for PMO, SPO, and so on) will be deleted.

Global Values:

- ALL - All application tables will be cleaned up.
- NONE - Application tables will NOT be cleaned up.

Default value is ALL to avoid any stranded records that will no longer work after data is purged. This parameter can also be used to clean up specific application tables that reference the hierarchies (directly or indirectly).

Application Values:

- CDT (Customer Decision Tree)
- CIS (Advanced Clustering & Segmentation)
- DT (Demand Transference)
- IO (IPO - Inventory Optimization)
- MBA (Affinity Analysis / Market Basket Analysis)
- PMO (Lifecycle Pricing Optimization, PMO_* tables)
- PRO (Lifecycle Pricing Optimization, PRO_* tables)
- RODS (Retail Operational Data Store)
- SO (Space Optimization)
- SPO (Size Profile Optimization)

-o <input value>

This parameter indicates whether ONLY the application data will be deleted, but not any hierarchies.

Valid values:

- Y (Yes)
- N (No)

Note: The Application Data parameter (-a) should also be indicated. Default value is N if not indicated. PMO and RODS don’t have specific app tables, hence they are not covered by this option. All affected tables will be deleted in full; no data will be preserved.
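As a rough illustration of how the four parameters combine, the following Python sketch assembles a parameter string from the values documented above and rejects anything else. The function build_cleanup_params and its argument names are hypothetical helpers written for this example; they are not part of rse_data_cleanup.ksh or POM. Only the flags and their valid values are taken from the descriptions above.

```python
# Hypothetical helper: builds the parameter string to paste into the POM job.
# Only the flags (-h, -r, -a, -o) and their values come from this section.

HIERARCHIES = {"ALL", "PRODUCT", "LOCATION", "CALENDAR", "PROMOTION", "CUSTSEG"}
APPLICATIONS = {"ALL", "NONE", "CDT", "CIS", "DT", "IO", "MBA",
                "PMO", "PRO", "RODS", "SO", "SPO"}
YES_NO = {"Y", "N"}


def build_cleanup_params(hierarchy=None, references=None, apps=None, apps_only=None):
    """Return a parameter string such as '-h ALL -r Y -a ALL -o N'.

    Arguments left as None are omitted so the job's documented defaults apply.
    """
    checks = [
        ("-h", hierarchy, HIERARCHIES),
        ("-r", references, YES_NO),
        ("-a", apps, APPLICATIONS),
        ("-o", apps_only, YES_NO),
    ]
    parts = []
    for flag, value, allowed in checks:
        if value is None:
            continue
        if value not in allowed:
            raise ValueError(f"{value!r} is not a documented value for {flag}")
        parts.append(f"{flag} {value}")
    if apps_only == "Y" and apps is None:
        # The note on -o says the -a parameter should also be indicated.
        raise ValueError("-o Y should be accompanied by -a")
    return " ".join(parts)


print(build_cleanup_params("ALL", "Y", "ALL", "N"))
print(build_cleanup_params(apps="CIS", apps_only="Y"))
```

Validating the string before pasting it into POM is a convenience of this sketch; the job itself decides what is actually truncated.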

Examples

To clean up all the hierarchy tables together with dependent tables and app tables, add the following as the parameter in POM:

-h ALL -r Y -a ALL -o N

To clean up only the location hierarchy tables together with AIF Apps dependent tables and app tables, add the following as the parameter in POM:

-h LOCATION -r Y -a ALL -o N

To clean up only the application tables:

-a ALL -o Y

To clean up only a specific application (in this example, the CIS - Clustering application tables):

-a CIS -o Y

Fake Customer Identification

| Module Name | RSE_FAKE_CUST_ADHOC |

| Description | Identify fake customers by looking through sales transaction data, so they can be automatically excluded from some applications. |

| Dependencies | RSE_SLS_TXN_ADHOC |

| Business Activity | Sales Preprocessing |

Design Overview

This process analyzes sales transaction data looking for “fake” customers, which usually represent excessive sales attributed to a single customer ID. This could be caused by store cards used at the register, corporate cards used by many people, or wholesale transactions involving large numbers of sales. These kinds of transactions can have negative effects on processes like Demand Transference because they are not representative of real customer activity. The threshold for identifying a customer as fake is set using the RSE_CONFIG property FAKE_CUST_DAY_TXN_THRESHOLD.
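The idea of a threshold on transactions per customer can be sketched in Python as follows. This is only one plausible reading of the property name: it assumes the threshold is a count of transactions per customer ID per day, and that a customer is flagged when any single day exceeds it. The function find_fake_customers, the sample data, and the value 3 are all hypothetical; the actual logic of RSE_FAKE_CUST_ADHOC is not described in this section.

```python
from collections import Counter

# Assumed stand-in for the RSE_CONFIG property FAKE_CUST_DAY_TXN_THRESHOLD.
FAKE_CUST_DAY_TXN_THRESHOLD = 3


def find_fake_customers(transactions, threshold=FAKE_CUST_DAY_TXN_THRESHOLD):
    """Return customer IDs with more than `threshold` transactions on any one day.

    `transactions` is an iterable of (customer_id, day) pairs, one per transaction.
    """
    per_customer_day = Counter(transactions)
    return {cust for (cust, _day), count in per_customer_day.items() if count > threshold}


# C1 has five transactions on one day; C2 has one transaction on each of two days.
sample = [("C1", "20210502")] * 5 + [("C2", "20210502"), ("C2", "20210503")]
print(find_fake_customers(sample))  # {'C1'}
```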

Running this routine requires parameters to specify the start and end date range for which data should be re-processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

File Export Execution

| Module Name | RSE_POST_EXPORT_ADHOC |

| Description | Runs the export processes for any prepared AI Foundation export files, which includes file movement, zipping, and export to SFTP. |

| Dependencies | RSE_EXPORT_PREP_ADHOC |

| Business Activity | Outbound Integrations |

Design Overview

This process moves, zips, and exports files from the AI Foundation applications based on the file export type. It accepts a single input parameter for the file frequency type, using one of DAILY, WEEKLY, QUARTERLY, INTRADAY, or ADHOC. This process is the second step in the data flow and assumes files have already been prepared for export using the dependent process (RSE_EXPORT_PREP_ADHOC).
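The two-step flow and its single frequency parameter can be sketched in Python as below. The function export_run_plan is a hypothetical planning helper, not a POM or AIF API. The sketch assumes the parameter is passed as the bare frequency value, which this section implies but does not show, and upper-casing the input is a convenience of the sketch.

```python
# Frequency types listed in this section.
FREQUENCY_TYPES = ("DAILY", "WEEKLY", "QUARTERLY", "INTRADAY", "ADHOC")


def export_run_plan(frequency):
    """Return the ordered (job, parameter) pairs for one export of `frequency`."""
    freq = frequency.strip().upper()
    if freq not in FREQUENCY_TYPES:
        raise ValueError(f"unknown file frequency type: {frequency!r}")
    # Preparation runs first; the post-export job then moves, zips, and sends.
    return [("RSE_EXPORT_PREP_ADHOC", freq), ("RSE_POST_EXPORT_ADHOC", freq)]


for job, param in export_run_plan("weekly"):
    print(job, param)
```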

File Export Preparation

| Module Name | RSE_EXPORT_PREP_ADHOC |

| Description | Export preparation job for a specific group of AI Foundation export files. |

| Dependencies | None |

| Business Activity | Outbound Integrations |

Design Overview

This process prepares a set of export files from the AI Foundation applications based on the file export type. It accepts a single input parameter for the file frequency type, using one of DAILY, WEEKLY, QUARTERLY, INTRADAY, or ADHOC. This is the first step in the data flow and does not perform the file movement to SFTP; it only prepares the files of the specified type so that the RSE_POST_EXPORT_ADHOC process can consume them.

Forecast Aggregates

| Module Name | PMO_ACTIVITY_LOAD_ADHOC_PROCESS |

| Description | Refresh the |

| Dependencies | None |

| Business Activity | Initial Data Loads |

Design Overview

This process regenerates aggregate data on the PMO_ACTIVITIES table for a subset of forecast run types and time periods. You may want to use this process if you have loaded new historical data into AIF and want it reflected on your existing forecast run types. Make sure the run types are active in the UI before attempting to run this process on them.

The process has two jobs: PMO_ACTIVITY_STG_ADHOC_JOB and PMO_ACTIVITY_LOAD_ADHOC_JOB. All of the parameters must be provided on the STG job.

Options:

- -n: Number of weeks to process
- -f: Force updates to existing data
- -s: Start date in YYYYMMDD format
- -e: End date in YYYYMMDD format
- -S: Start calendar day ID
- -E: End calendar day ID
- -w: Calendar week ID to process
- -N: New Forecast Run Type Aggregation Flag
- -r: Forecast Run Type ID
- -?: Display this usage information
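A date-range parameter string for the STG job can be assembled and checked with a short Python sketch such as the one below. The helper names check_yyyymmdd and build_activity_params are hypothetical, and the Y and N values for -f and -N simply mirror the example string given later in this section; the other options in the list are not covered.

```python
from datetime import datetime


def check_yyyymmdd(value):
    """Return `value` parsed as a date, or raise ValueError if it is not YYYYMMDD."""
    if len(value) != 8 or not value.isdigit():
        raise ValueError(f"{value!r} is not in YYYYMMDD format")
    return datetime.strptime(value, "%Y%m%d")  # also rejects impossible dates


def build_activity_params(start, end, force="Y", new_run_type="N"):
    """Return a string such as '-s 20210502 -e 20210807 -f Y -N N'."""
    if check_yyyymmdd(start) > check_yyyymmdd(end):
        raise ValueError("start date is after end date")
    for flag, value in (("-f", force), ("-N", new_run_type)):
        if value not in ("Y", "N"):
            raise ValueError(f"{flag} expects Y or N in this sketch, got {value!r}")
    return f"-s {start} -e {end} -f {force} -N {new_run_type}"


print(build_activity_params("20210502", "20210807"))
```

The explicit length check is there because strptime on its own accepts some unpadded values, such as a seven-digit date.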

Running this routine requires parameters to specify the start and end date range for weeks of data to process. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y -N N

Lifecycle Pricing Optimization Run

| Module Name | PRO_OPT_ADHOC |

| Description | Runs the LPO optimization process outside of the normal batch. |

| Dependencies | None |

| Business Activity | Analytical Batch Processing |

Design Overview

This process triggers the lifecycle pricing optimization batch processing outside of the normal batch window. All of the necessary steps to calculate optimization results are included in the ad hoc job and no parameters are used. The process triggers the Java libraries on the application server that are responsible for the optimization.

Location Ranging

| Module Name | DT_LOC_RANGE_ADHOC |

| Description | Refresh Location Ranging data for Demand Transference. |

| Dependencies | DT_PROD_LOC_RANGE_ADHOC |

| Business Activity | Application Setup |

Design Overview

This process calculates SKU Counts for the available ranges of products, for a given CM Group, Store Location, and Week, which may be needed during implementation of Demand Transference when using CM Groups.

Running this routine requires parameters to specify the start and end date range for weeks of data to process. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Master Data Load - AA

| Module Name | MBA_MASTER_ADHOC_PROCESS |

| Description | Run the Affinity Analysis/Market Basket Analysis master script. This is the best way to execute all the initial processing steps for the MBA application module. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Affinity Analysis (also known as Market Basket Analysis or MBA) application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -e: Execute MBA ETL routines
- -c: Execute ARM configuration load routines
- -a: Execute ARM processes
- -r: Execute RI ARM processes
- -b: Execute Baseline processes
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Example

-Aa will result in running all steps except -a
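The toggle behaviour described above can be modelled with a small Python sketch. It assumes that -A switches every step on and that each step letter flips that step's current state, which matches the -Aa example; the function resolve_steps is hypothetical and does not handle -R.

```python
# Step letters for the MBA master script, in the order listed above.
MBA_STEPS = "ecarb"


def resolve_steps(param, steps=MBA_STEPS):
    """Return the step letters enabled by a parameter string such as '-Aa'."""
    enabled = set()
    for ch in param.replace("-", "").replace(" ", ""):
        if ch == "A":
            enabled = set(steps)   # -A switches every step on
        elif ch in steps:
            enabled ^= {ch}        # any step letter toggles that step
        else:
            raise ValueError(f"unknown step letter: {ch!r}")
    # Report the enabled steps in their listed order.
    return "".join(s for s in steps if s in enabled)


print(resolve_steps("-Aa"))  # ecrb
print(resolve_steps("-ec"))  # ec
```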

Master Data Load - AC

| Module Name | CIS_MASTER_ADHOC_PROCESS |

| Description | Run the Advanced Clustering/Customer Segmentation master script. This is the best option to run all initial processing steps for the AC/CS modules. NOTE: When running through POM, if any -- options are required, use : instead of = to separate the option from the value. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Advanced Clustering and Customer Segmentation applications. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -a: Attribute Maintenance
- -h: Product/Attribute Share Processing
- -t: Loading cluster templates
- -v: Set up a new version
- -s: Update sales data for use by any versions
- -m: Market Sales Aggregation load
- -c: Update new versions with all the attribute summary information
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Example

- -Ah will result in running all steps except -h

Optional date flags:

- --from: Start date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --from:20170101. Must be accompanied by the end date and optionally by the extfrom flag
- --to: End date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --to:20170201. Must be accompanied by the start date and optionally by the extto flag
- --extfrom: Optional flag to indicate if the start date must be extended to the start of the week. Accepts Y or N (default). For example, --extfrom:Y, with no spaces
- --extto: Optional flag to indicate if the end date must be extended to the end of the week. Accepts Y or N (default). For example, --extto:Y, with no spaces
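Because the date flags use a colon with no spaces, building them programmatically can help avoid formatting mistakes. The Python sketch below is one way to do that; build_date_flags is a hypothetical helper, and appending its result after the step letters with a single space follows the pattern shown elsewhere in this chapter (-a --from:20210502 --to:20210807) rather than anything stated for this job specifically.

```python
def build_date_flags(start, end, extend_start=None, extend_end=None):
    """Return e.g. '--from:20170101 --to:20170201 --extfrom:Y'."""
    for value in (start, end):
        if len(value) != 8 or not value.isdigit():
            raise ValueError(f"{value!r} is not in YYYYMMDD format")
    # --from and --to must always be given together.
    flags = [f"--from:{start}", f"--to:{end}"]
    for name, value in (("extfrom", extend_start), ("extto", extend_end)):
        if value is None:
            continue  # omitted flags fall back to the documented default of N
        if value not in ("Y", "N"):
            raise ValueError(f"--{name} accepts Y or N, got {value!r}")
        flags.append(f"--{name}:{value}")
    return " ".join(flags)


# Step letters first, then the date flags.
print("-s " + build_date_flags("20170101", "20170201", extend_start="Y"))
```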

Master Data Load - AE

| Module Name | AE_MASTER_ADHOC_PROCESS |

| Description | Run the Attribute Extraction master script. This is the best way to trigger all initial processing for the AE application. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Attribute Extraction application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -G: Global Lists of Strings loading
- -C: Product Categories loading
- -P: Product loading
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Example

-AGP will result in running all steps except -G and -P

Master Data Load - Common

| Module Name | RSE_MASTER_ADHOC_PROCESS |

| Description | Run the AI Foundation Cloud Services common master script. This is the first step that should be run once data has been loaded into RI, and is ready to initialize data needed by all the other application modules. |

| Dependencies | None |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Retail AI Foundation Cloud Services foundation data tables. This process is generally required as the first step in loading data to any AI Foundation application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -p: Product Hierarchy
- -c: CM Group Product Hierarchy
- -l: Location Hierarchy
- -t: Trade Area Location Hierarchy
- -X: Alternate (Flex) Product & Location Hierarchy
- -d: Calendar Hierarchy
- -r: Promotion Hierarchy
- -g: Customer Segment Hierarchy
- -s: Consumer segment data
- -P: Product Attributes
- -L: Location attributes
- -K: Like Location / Product data load
- -G: Customer Segment Attributes
- -z: Price zone ETL
- -h: Holiday data load
- -i: Inventory data load
- -x: Sales transaction data
- -f: Fake customer data load
- -k: Fake customer data identification
- -w: Weekly Aggregate Sales data (Load or Calc)
- -a: Aggregate Sales data processing
- -F: Forecast Aggregate Sales data processing
- -C: Price and Cost data load
- -u: UDA load
- -E: Export Group Setup
- -W: Weather Driven Demand data load
- -T: Weekly Return transactions
- -e: Weekly Return Aggregation
- -S: Weekly Sales Return Price Consolidation
- -m: Customer Engagement Attribute
- -o: Forecast Plan Load
- -b: Budget Allocation Load
- -O: Order Cost data Load
- -n: Promotion data Load
- -D: Daily data Load
- -U: Supplier, Supplier Item, Daily Supplier Cost, Supplier Inv Mgmt Load
- -N: Season Phase Item Load
- -J: Rules Engine data for PRO
- -j: Rules Engine data for IO
- -q: Group Flex Load
- -H: Buyer, Allocation, Purchase Order, Transfer Loads
- -M: Forecast Spread Profiles Load
- -V: Forecast Lifecycle Classification Load
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. The -A flag runs all steps except those whose letters follow it, while the -R flag resumes from the step whose letter follows it. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Examples:

- -Act will result in running all steps except -c and -t
- -Rc -t will result in running all steps starting with c, but excluding step t
- -pldgxwa will result in extracting the product, location, calendar, customer segment, and sales data from the data warehouse and populating all the core AIF aggregates for sales (this is a common set of load steps for first-time runs)
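The resume behaviour in the examples above can be modelled in Python as follows. The sketch uses only the first eight step letters from the option list and assumes that the listed order is the execution order, as the reference to the options "for order" suggests. The function resolve_resume is hypothetical and only models the examples; it is not the master script's actual parser.

```python
# The first eight common master script steps, in the order they are listed above.
STEP_ORDER = "pcltXdrg"


def resolve_resume(param, order=STEP_ORDER):
    """Resolve strings like '-Rc -t' or '-Act' into the enabled step letters."""
    enabled = set()
    letters = iter(param.replace("-", "").replace(" ", ""))
    for ch in letters:
        if ch == "A":
            enabled = set(order)                      # all steps on
        elif ch == "R":
            start = next(letters, None)               # letter to resume from
            if start is None or start not in order:
                raise ValueError("-R must be followed by a known step letter")
            enabled = set(order[order.index(start):])
        elif ch in order:
            enabled ^= {ch}                           # toggle a single step
        else:
            raise ValueError(f"unknown step letter: {ch!r}")
    return "".join(s for s in order if s in enabled)


print(resolve_resume("-Rc -t"))  # clXdrg
print(resolve_resume("-Act"))    # plXdrg
```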

Additional optional flags may be specified after the sequence of steps is provided, as listed below. Date ranges will apply to any step that extracts historical data, such as sales and inventory loads. If no date range is provided, then the job will attempt to determine the range of dates in the data warehouse and extract that entire range. If a step has already extracted data from the data warehouse once, then you must specify dates on additional runs of that step to ensure only that date range is re-extracted.

- --alt_prod_hier: Run only the alternate (flex) product hierarchy load steps
- --alt_loc_hier: Run only the alternate (flex) location hierarchy load steps
- --alt_hier_setup: Run only the alternate (flex) hierarchy setup steps
- --prioritizefiles: Specifies that data files should be prioritized as the source for a load instead of RI, where it is possible to get data from either source
- --from: Start date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --from:20170101. Must be accompanied by the end date and optionally by the extfrom flag
- --to: End date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --to:20170201. Must be accompanied by the start date and optionally by the extto flag
- --extfrom: Optional flag to indicate whether the start date must be extended to the start of the week. Accepts Y or N (default). For example, --extfrom:Y, with no spaces
- --extto: Optional flag to indicate whether the end date must be extended to the end of the week. Accepts Y or N (default). For example, --extto:Y, with no spaces

Master Data Load - DT

| Module Name | DT_MASTER_ADHOC_PROCESS |

| Description | Run the Demand Transference master script. This is the best way to run all the initial processing steps needed by the DT application module. NOTE: When running through POM, if any -- options are required, use : instead of = to separate the option from the value. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Demand Transference application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -r: Load Store Sku Ranging Data
- -l: Aggregate Location Ranging Statistics
- -b: Calculate Baseline
- -i: Update model intervals
- -g: Run Group Load
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Examples

- -Ab will result in running all steps except -b

Optional date flags:

- --from: Start date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --from:20170101. Must be accompanied by the end date and optionally by the extfrom flag
- --to: End date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --to:20170201. Must be accompanied by the start date and optionally by the extto flag
- --extfrom: Optional flag to indicate if the start date must be extended to the start of the week. Accepts Y or N (default). For example, --extfrom:Y, with no spaces
- --extto: Optional flag to indicate if the end date must be extended to the end of the week. Accepts Y or N (default). For example, --extto:Y, with no spaces

Master Data Load - Forecast Estimation

| Module Name | PMO_MASTER_ADHOC_PROCESS |

| Description | Run the master script for processing forecast input loads and calculations. This is the way to prepare certain inputs for the forecast such as returns and activities tables. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Forecasting module, and runs in addition to the common master data load (RSE_MASTER_ADHOC_PROCESS). It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -a: Activities
- -d: Return Data Preparation
- -c: Return Calculation
- -h: Holiday load
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

The activities load (-a) supports date parameters when you are reloading data for a specific historical period. Both parameters should be provided when used.

- --from: Start date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --from:20170101. Must be accompanied by the end date and optionally by the extfrom flag
- --to: End date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --to:20170201. Must be accompanied by the start date and optionally by the extto flag

Examples

-Adh will result in running all steps except -d and -h

-a --from:20210502 --to:20210807 will process the historical activities data between 2021-05-02 and 2021-08-07

Master Data Load - IO

| Module Name | IO_MASTER_ADHOC_PROCESS |

| Description | Run the Inventory Planning Optimization-Inventory Optimization master script. This is the best option for running all the initial processing steps needed by the Inventory Planning Optimization-Inventory Optimization application module. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Inventory Planning Optimization-Inventory Optimization application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -a: Replenishment Attributes at Product/Location or Group level
- -s: Seasons
- -r: Strategy Rules
- -C: Supply chain for Distribution Networks
- -S: Review Schedule
- -L: Lead Time of Internal Locations
- -P: Supply chain for Procurement Networks
- -Q: Supplier Review Cycles
- -T: Lead Time of Suppliers
- -M: Internal Order Multiples
- -m: Supplier Order Multiples
- -l: Rounding Levels
- -I: Load IO rules data to rules engine
- -N: Load N-tier interface data to rules engine
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Example

-AaP will result in running all steps except -a and -P

Master Data Load - LPO

| Module Name | PRO_MASTER_ADHOC_PROCESS |

| Description | Run the Lifecycle Pricing Optimization master script. This is the best option for running all the initial processing steps for the LPO application module. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Lifecycle Pricing Optimization application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -b: Baseline
- -c: Customer Segment Lifetime Value
- -i: Inventory Aggregation
- -f: Lifecycle Fatigue
- -p: Promotion
- -l: Promotion Lift
- -C: Price and Cost for Promotion/Markdown Recommendations
- -e: Price Elasticity
- -L: Price Ladder
- -r: Sales Return
- -s: Season
- -P: Season Product
- -d: Season Period
- -E: Markdown Day of Week
- -y: Seasonality
- -D: Model Dates
- -O: Country Locale
- -F: Forecast Adjustment
- -W: Days of Week Profile
- -u: Load Optimization rules for Promotion/Markdown Recommendations (using interface)
- -G: Load Optimization rules for Regular Recommendations (using interface)
- -M: Future Markdowns
- -U: Product Location CDA Flex Facts for Promotion/Markdown Recommendations
- -g: Product Location CDA Flex Facts for Regular Recommendations
- -n: Inventory Aggregation for Regular Recommendations
- -o: Price and Cost for Regular Recommendations
- -q: Activities aggregation for Regular Recommendations
- -S: Load Trailing metrics for Promotion/Markdown Recommendations
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without having to specify all the other switches.

Example

-AbP will result in running all steps except -b and -P

Master Data Load - SO

| Module Name | SO_MASTER_ADHOC_PROCESS |

| Description | Run the Space Optimization master script. This is the best way to run all the initial steps for the SO application module. |

| Dependencies | RSE_MASTER_ADHOC_PROCESS |

| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Assortment & Space Optimization application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -F: Assortment Finalization
- -a: Assortment
- -h: Placeholder Product Loading
- -M: Product Cluster mapping
- -C: Assortment product location forecast and price/cost
- -f: Assortment Forecast loading
- -r: Replenishment Parameters
- -S: Product Stacking Height Limit
- -p: POG Loading
- -b: Bay/Fixture Loading
- -y: Display Style Loading
- -c: Product Fixture Configuration Loading
- -P: Perform Product Attribute maintenance
- -m: Assortment Mapping
- -v: Global Validation
- -s: Assortment to POG mapping
- -g: POG Set location creation
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without specifying all of the other switches.

Example

-AaP will result in running all steps except -a and -P
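The toggle behavior described above can be illustrated with a short Python sketch. It is not the actual master script: the function name resolve_steps is hypothetical, and the parsing rules are an assumption based only on the usage text (the listed order of the options is treated as the execution order, -A turns every step on, -R followed by a step letter turns on that step and all later ones, and any other step letter flips that one step).

```python
# Step switches of the SO master process, in the order they are listed above.
# Treating this listing order as the execution order is an assumption.
SO_STEPS = "FahMCfrSpbycPmvsg"


def resolve_steps(switches: str, steps: str = SO_STEPS) -> list[str]:
    """Return the step letters that would run for a switch string such as '-AaP'."""
    enabled = dict.fromkeys(steps, False)
    # Hyphens and spaces are ignored so that '-R v' and '-Rv' read the same way.
    chars = iter(switches.replace("-", "").replace(" ", ""))
    for ch in chars:
        if ch == "A":
            # -A: turn every step on.
            enabled = dict.fromkeys(steps, True)
        elif ch == "R":
            # -R <Option>: turn on the named step and every step after it.
            start = next(chars, None)
            if start is None or start not in enabled:
                raise ValueError("-R must be followed by a step option")
            for step in steps[steps.index(start):]:
                enabled[step] = True
        elif ch in enabled:
            # Any other step letter toggles that single step on/off.
            enabled[ch] = not enabled[ch]
        else:
            raise ValueError(f"unknown switch: -{ch}")
    return [step for step in steps if enabled[step]]


print("".join(resolve_steps("-AaP")))
```

Under these assumptions, "-AaP" enables everything and then switches -a and -P back off, which matches the example in the usage text.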

Master Data Load - SPO

| Module Name | SPO_MASTER_ADHOC_PROCESS |
|---|---|
| Description | Run the Size Profile Optimization master script. This is the best way to run all the initial processing steps needed by the SPO application module. NOTE: when running through POM, if any -- options are required, use : instead of = to separate the option from the value. |
| Dependencies | RSE_MASTER_ADHOC_PROCESS |
| Business Activity | Initial Data Loads |

Design Overview

This process controls the master set of batch programs for loading data into the Size Profile Optimization application. It accepts one or more single-character parameters to control which steps in the process are executed. Multiple steps executed in sequence should be passed as one string.

Options:

- -A: Process all steps
- -R <Option>: Resume processing all steps, starting with the step associated with the provided option (see the options below for order)
- -S: Season Data Load
- -r: Size Range Data Load
- -s: Size Data Load
- -p: Product Size Data Load
- -l: Sub-Size Range Product Location Data Load
- -?: Display this usage information

Options -A and -R enable processing of the appropriate steps. Any switch provided more than once, or after -A or -R, toggles that switch on or off. This makes it possible to exclude a small number of steps from processing without specifying all of the other switches.

Example

-Ar will result in running all steps except -r

The process also accepts the following date options:

- --from: Start date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --from:20170101. Must be accompanied by the end date and optionally by the extfrom flag.
- --to: End date of the data processing timeframe. Must be provided in YYYYMMDD format with no spaces. For example, --to:20170201. Must be accompanied by the start date and optionally by the extto flag.
- --extfrom: Optional flag to indicate if the start date must be extended to the start of the week. Accepts Y or N (default). For example, --extfrom:Y, with no spaces.
- --extto: Optional flag to indicate if the end date must be extended to the end of the week. Accepts Y or N (default). For example, --extto:Y, with no spaces.
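As a rough illustration of the rules above, the following Python sketch assembles and validates a parameter string in the colon form used in POM. The helper names are hypothetical. Two details are assumptions rather than documented behavior: that the single-character switches and the double-hyphen options are separated by spaces, and that the extfrom and extto flags are only meaningful when the dates are also given.

```python
from datetime import datetime
from typing import Optional


def _check_date(value: str) -> str:
    """Validate a YYYYMMDD string and return it unchanged."""
    if len(value) != 8 or not value.isdigit():
        raise ValueError(f"expected YYYYMMDD, got {value!r}")
    datetime.strptime(value, "%Y%m%d")  # rejects impossible dates such as 20170230
    return value


def spo_parameters(switches: str,
                   date_from: Optional[str] = None,
                   date_to: Optional[str] = None,
                   ext_from: Optional[str] = None,
                   ext_to: Optional[str] = None) -> str:
    """Assemble a parameter string for the SPO master process (POM colon form)."""
    parts = [f"-{switches}"]
    # --from and --to must accompany each other.
    if (date_from is None) != (date_to is None):
        raise ValueError("--from and --to must be provided together")
    if date_from is not None:
        parts.append(f"--from:{_check_date(date_from)}")
        parts.append(f"--to:{_check_date(date_to)}")
    for name, flag in (("extfrom", ext_from), ("extto", ext_to)):
        if flag is None:
            continue  # optional flag omitted; the process default (N) applies
        if date_from is None:
            # Assumption: the week-extension flags require the date range.
            raise ValueError(f"--{name} requires --from and --to")
        if flag not in ("Y", "N"):
            raise ValueError(f"--{name} accepts Y or N, got {flag!r}")
        parts.append(f"--{name}:{flag}")
    return " ".join(parts)


print(spo_parameters("Ar", "20170101", "20170201", ext_from="Y"))
```

Validating the string before pasting it into the job parameters catches a missing end date or a malformed date before the job is submitted.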

Product Location Ranging

| Module Name | DT_PROD_LOC_RANGE_ADHOC |
|---|---|
| Description | Refresh Product Location Ranging data for Demand Transference. |
| Dependencies | W_RTL_IT_LC_D_JOB (in RI) |
| Business Activity | Application Setup |

Design Overview

This process extracts the item/location ranging information from the Retail Insights table W_RTL_IT_LC_D. This process is also performed in the DT master batch process, but it can be run on its own if you are modifying the data and need to reload it.

Running this routine requires parameters to specify the start and end date range for which data should be re-processed from the W_RTL_IT_LC_D table or from AI Foundation sales tables. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Aggregation - Cumulative Sales

| Module Name | PMO_CUMUL_SLS_ADHOC_PROCESS |
|---|---|
| Description | Creates aggregate cumulative sales data for Lifecycle Pricing Optimization. |
| Dependencies | RSE_MASTER_ADHOC_PROCESS |
| Business Activity | Initial Data Loads |

Design Overview

This process allows the user to execute the cumulative sales aggregation for the Lifecycle Pricing Optimization application in an ad hoc manner. When the user creates a new forecast run type, this aggregation is automatically called as part of “Start Data Aggregation”. This requires that sales aggregations have already been performed using the RSE_MASTER ad hoc process, and that inventory position/receipts data has already been loaded into the data warehouse and AIF (so that first receipt dates can be used).

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

The process has the following list of supported options. All job parameters are passed into the PMO_CUMUL_SLS_SETUP_ADHOC_JOB process when invoking it from Postman.

- -n: Number of weeks to process
- -f: Force update of existing data, Y or N (default)
- -s: Start date (yyyymmdd)
- -e: End date (yyyymmdd)
- -S: Start calendar day ID
- -E: End calendar day ID
- -w: Calendar Week ID to process
- -N: New Forecast Run Type Aggregation Flag, Y or N

Sales Aggregation - Customer Segment

| Module Name | RSE_WKLY_SLS_CUST_SEG_ADHOC |
|---|---|
| Description | Aggregates Sales Transaction data to Weekly Customer Segment Sales tables. |
| Dependencies | RSE_SLS_TXN_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales data by customer segment for use in AI Foundation applications. The RSE_SLS_TXN_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Aggregation - Product

| Module Name | RSE_WKLY_SLS_PR_AGGR_ADHOC |
|---|---|
| Description | Calculates Product-based sales aggregate tables. |
| Dependencies | RSE_WKLY_SLS_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales data by product for use in AI Foundation applications. The RSE_WKLY_SLS_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Aggregation - Product Attribute

| Module Name | RSE_WKLY_SLS_PH_ATTR_AGGR_ADHOC |
|---|---|
| Description | Calculates Product Attribute-based sales aggregate tables. |
| Dependencies | RSE_WKLY_SLS_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales data by product attribute and product hierarchy levels for use in AI Foundation applications. The RSE_WKLY_SLS_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Aggregation - Product Hierarchy

| Module Name | RSE_WKLY_SLS_PH_AGGR_ADHOC |
|---|---|
| Description | Calculates Product Hierarchy-based sales aggregate tables. |
| Dependencies | RSE_WKLY_SLS_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales data by product hierarchy levels for use in AI Foundation applications. The RSE_WKLY_SLS_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Aggregation - Weekly

| Module Name | RSE_WKLY_SLS_ADHOC |
|---|---|
| Description | Aggregates Sales Transaction data to week level tables. |
| Dependencies | RSE_SLS_TXN_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales transaction data to week-level tables for use in AI Foundation applications. The RSE_SLS_TXN_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data.
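The prerequisite relationships among the sales jobs in this chapter form a small dependency graph, which helps when deciding what must be rerun after reloading sales. The Python sketch below copies the job names from the Dependencies rows of this chapter. The function run_order is hypothetical, and the Retail Insights job that feeds RSE_SLS_TXN_ADHOC is left out for brevity. It uses the standard library graphlib module (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# job -> prerequisite jobs, as stated in the Dependencies rows of this chapter.
# The RI job feeding RSE_SLS_TXN_ADHOC is intentionally omitted.
DEPENDENCIES = {
    "RSE_SLS_TXN_ADHOC": set(),
    "RSE_WKLY_SLS_ADHOC": {"RSE_SLS_TXN_ADHOC"},
    "RSE_WKLY_SLS_CUST_SEG_ADHOC": {"RSE_SLS_TXN_ADHOC"},
    "RSE_WKLY_SLS_PR_AGGR_ADHOC": {"RSE_WKLY_SLS_ADHOC"},
    "RSE_WKLY_SLS_PH_ATTR_AGGR_ADHOC": {"RSE_WKLY_SLS_ADHOC"},
    "RSE_WKLY_SLS_PH_AGGR_ADHOC": {"RSE_WKLY_SLS_ADHOC"},
    "AC_PROD_ATTR_LOC_SHARE_ADHOC": {"RSE_WKLY_SLS_ADHOC"},
}


def run_order(targets: list[str]) -> list[str]:
    """Return the targets plus all of their prerequisites, prerequisites first."""
    needed: dict[str, set[str]] = {}
    stack = list(targets)
    while stack:
        job = stack.pop()
        if job not in needed:
            # Collect this job's prerequisites and walk them in turn.
            needed[job] = DEPENDENCIES.get(job, set())
            stack.extend(needed[job])
    return list(TopologicalSorter(needed).static_order())


for job in run_order(["AC_PROD_ATTR_LOC_SHARE_ADHOC"]):
    print(job)
```

The ordering only reflects the documented prerequisites. Whether a prerequisite actually needs to be rerun depends on whether its data has changed.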

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Forecast Aggregation - Product Attribute (Legacy)

| Module Name | RSE_SLSFC_PH_ATTR_AGGR_ADHOC |
|---|---|
| Description | Calculates Product Attribute-based sales forecast aggregate tables. |
| Dependencies | RSE_SLSFC_PH_AGGR_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales forecast data by product attribute and product hierarchy levels for use in AI Foundation applications. The RSE_SLSFC_PH_AGGR_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales forecast data.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Note: This is a legacy process which uses a forecast interface from the data warehouse that has been deprecated.

Sales Forecast Aggregation - Product Hierarchy (Legacy)

| Module Name | RSE_SLSFC_PH_AGGR_ADHOC |
|---|---|
| Description | Calculates Product Hierarchy-based sales forecast aggregate tables. |
| Dependencies | None |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales forecast data by product hierarchy levels for use in AI Foundation applications.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Note: This is a legacy process which uses a forecast interface from the data warehouse that has been deprecated.

Sales Shares - Product Attribute

| Module Name | AC_PROD_ATTR_LOC_SHARE_ADHOC |
|---|---|
| Description | Calculate product attribute sales shares for use in Advanced Clustering. |
| Dependencies | RSE_WKLY_SLS_ADHOC |
| Business Activity | Initial Data Loads |

Design Overview

This process aggregates sales shares by product attribute for use in the Advanced Clustering application, specifically for use in clustering by product attribute. The RSE_WKLY_SLS_ADHOC job is normally a prerequisite for this, as it is used to refresh or load additional sales data at week level.

You also must choose which attribute mode is applicable for AC. If it is specified as CDT in RSE_CONFIG property PERF_CIS_APPROACH, then this program will expect additional information for CDT-like attribute groups in RSE_PROD_ATTR_GRP and RSE_PROD_ATTR_GRP_VALUE_MAP. It will also use sales data from RSE_SLS_PH_ATTR_LC_WK_A. For any other configuration, these tables are not required and a more generic approach will be taken.
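The configuration rule above can be restated as a small Python lookup, which may be handy as a pre-run checklist. The function name is hypothetical, and the exact-match comparison against the string CDT is an assumption about how the property value is stored.

```python
def expected_ac_inputs(perf_cis_approach: str) -> list[str]:
    """Tables the attribute share process expects for a PERF_CIS_APPROACH value."""
    if perf_cis_approach == "CDT":  # assumption: value stored exactly as 'CDT'
        return [
            "RSE_PROD_ATTR_GRP",            # CDT-like attribute groups
            "RSE_PROD_ATTR_GRP_VALUE_MAP",  # attribute group value mappings
            "RSE_SLS_PH_ATTR_LC_WK_A",      # sales data used in CDT mode
        ]
    # Any other configuration takes the generic approach; no extra tables needed.
    return []


print(expected_ac_inputs("CDT"))
```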

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y

Sales Transaction Load

| Module Name | RSE_SLS_TXN_ADHOC |
|---|---|
| Description | Performs bulk retrieval of Sales Transaction data. |
| Dependencies | W_RTL_SLS_TRX_IT_LC_DY_F_JOB (in RI) |
| Business Activity | Initial Data Loads |

Design Overview

This process extracts sales transactions from Retail Insights for use in all AI Foundation applications. The W_RTL_SLS_TRX_IT_LC_DY_F table in the data warehouse is the source of this data, and the data warehouse must be populated with sales before this program runs.

Running this process requires parameters to specify the start and end date range for which data should be processed. The -s parameter is for the Start Date and the -e parameter provides the End Date. Both are in format YYYYMMDD. For example:

-s YYYYMMDD -e YYYYMMDD -f Y
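For jobs that take the -s/-e date range, a concrete parameter string can be generated from calendar dates rather than typed by hand. The Python sketch below uses a hypothetical helper name. Including -f Y by default simply mirrors the example above. This chapter describes -f only in the cumulative sales options (force update of existing data), so treating it the same way for other jobs is an assumption.

```python
from datetime import date


def date_range_parameters(start: date, end: date, force: bool = True) -> str:
    """Format the -s/-e (and optional -f Y) parameters in YYYYMMDD form."""
    if start > end:
        raise ValueError("start date must not be after end date")
    params = f"-s {start:%Y%m%d} -e {end:%Y%m%d}"
    if force:
        # Assumption: -f Y forces an update of existing data, as documented
        # for the cumulative sales process in this chapter.
        params += " -f Y"
    return params


print(date_range_parameters(date(2023, 1, 1), date(2023, 1, 28)))
```

Building the string from date objects avoids malformed values such as a missing leading zero in the month or day.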