14 Sales Posting

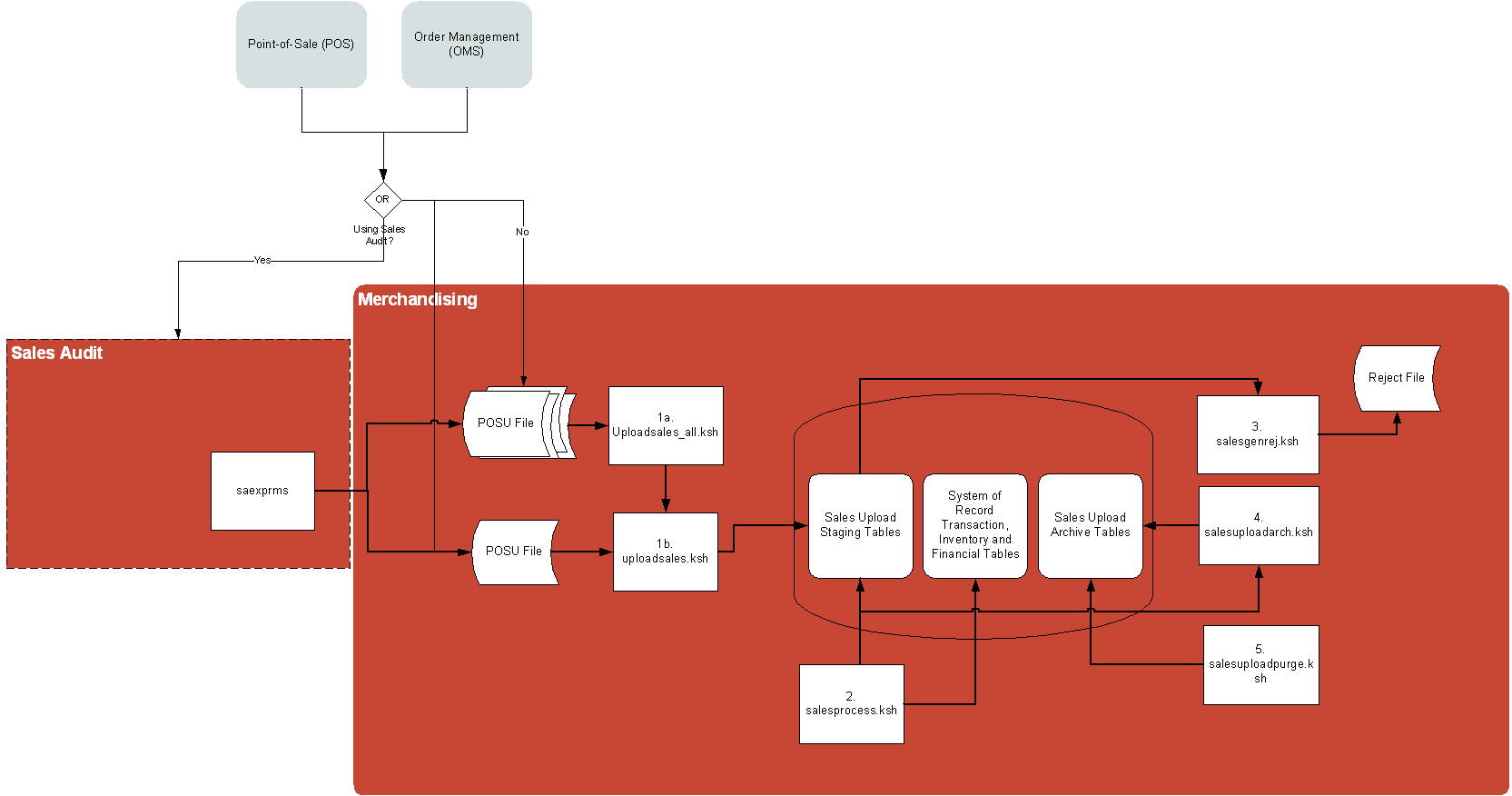

If not using Sales Audit, such as if you are using a 3rd party sales auditing solution, sales and returns from your point-of-sale (POS) and order management (OMS) solutions can be integrated directly to Merchandising using the Upload Sales job.

Once the data is staged in Merchandising, other modules take over the posting of that data to sales transaction, sales history, and stock-on-hand tables. This is the processing used by data integrated from the Oracle Retail Sales Audit solution (as part of the Merchandising Foundation Cloud Service), as well.

The Sales Posting process consists of a number of related programs.

-

Upload POSU File for Processing (uploadsales.ksh) reads the input file and writes its contents to a series of staging tables. Process Multiple POSU Files (uploadsales_all.ksh) wraps uploadsales.ksh to process multiple files.

-

Main Processing of Staged Sales/Returns (salesprocess.ksh) reads the staged data and performs major validation, financial and inventory processing.

-

Reject POSU Transactions (salesgenrej.ksh) creates a reject file for transactions that fail validation.

-

Archive Successfully Posted Transactions (salesuploadarch.ksh) archives successfully processed transactions and clears them out of the staging tables.

-

Purge Aged Archived POSU Transactions (salesuploadpurge.ksh) purges transactions from the archive tables after the transactions age out of the system.

Program Summary

The following batch designs are included in this chapter

-

Archive Successfully Posted Transactions (salesuploadarch.ksh)

-

Main Processing of Staged Sale/Return Transactions (salesprocess.ksh)

-

Purge Aged Archived POSU Transactions (salesuploadpurge.ksh)

-

Purge FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS Tables (file_upload_errors_purge.ksh)

These integration programs are described in the Merchandising Operations Guide Volume 2:

-

uploadsales.ksh (Upload POSU File for Processing)

-

uploadsales_all.ksh (Process Multiple POSU Files)

-

salesgenrej.ksh (Reject POSU Transactions)

Archive Successfully Posted Transactions (salesuploadarch.ksh)

|

Module Name |

salesuploadarch.ksh |

|

Description |

Archive Successfully Posted Transactions |

|

Functional Area |

Sales Processing |

|

Module Type |

Admin |

|

Module Technology |

Ksh |

|

Catalog ID |

RMS340 |

|

Wrapper Script |

rmswrap_shell.ksh |

Design Overview

The purpose of this module is to archive the successfully posted transactions, and clear the staging table.

Main Processing of Staged Sale/Return Transactions (salesprocess.ksh)

|

Module Name |

salesprocess.ksh |

|

Description |

Main Processing of Staged Sale/Return Transactions |

|

Functional Area |

Sales Posting |

|

Module Type |

Business Processing |

|

Module Technology |

ksh |

|

Catalog ID |

RMS151 |

|

Wrapper Script |

rmswrap_shell.ksh |

Design Overview

The purpose of the SALESPROCESS.KSH module is to process sales and return details from an external point of sale system (either POS or OMS). The sales/return transactions will be validated against Oracle Retail item/store relations to ensure the sale is valid, but this validation process can be eliminated if the sales that are being passed in, have been screened by sales auditing. The following common functions will be performed on each sales/return record read from the input file:

-

Read sales/return transaction record

-

Lock associated record in Merchandising

-

Validate item sale

-

Check whether TAX maintenance is required, and if so determine the TAX amount for the sale.

-

Write all financial transactions for the sale and any relevant markdowns to the stock ledger.

-

Post item/location/week sales to the relevant sales history tables

-

Perform last sales processing to maintain accurate sales information in the system

POSU Chunking

Table 14-1 Concurrent Threads and Chunk Size

| MAX_CONCURRENT_THREADS | MAX_CHUNK_SIZE |

|---|---|

|

2 |

3 |

Number of Threads: 11

|

Thread 1 |

Chunk 1 |

THEAD 1 |

Item 1 |

|

Thread 1 |

Chunk 1 |

THEAD 2 |

Item 1 |

|

Thread 1 |

Chunk 1 |

THEAD 3 |

Item 2 |

|

Thread 1 |

Chunk 1 |

THEAD 4 |

Item 2 |

|

Thread 1 |

Chunk 1 |

THEAD 5 |

Item 3 |

|

Thread 2 |

Chunk 2 |

THEAD 6 |

Item 5 |

|

Thread 2 |

Chunk 2 |

THEAD 7 |

Item 6 |

|

Thread 2 |

Chunk 2 |

THEAD 8 |

Item 7 |

|

Thread 3 |

Chunk 3 |

THEAD 9 |

Item 8 |

|

Thread 3 |

Chunk 3 |

THEAD 10 |

Item 9 |

|

Thread 3 |

Chunk 3 |

THEAD 11 |

Item 10 |

In this run, threads would be allocated first to chunks 1 and 2. The other threads would only be picked up once either thread 1 or 2 has finished its processing.

Restart/Recovery

The logical unit of work for salesprocess.ksh is a set of a single or multiple valid item sales transactions at a given store location. This set is defined as a chunk. Based on the example above, if for some reason, chunk 2 raised an error, THEAD 4, 5, and 6 wouldn't be posted in Merchandising. Other chunks, if there are no errors, would be processed. User has to correct the transaction details and upload the updated POSU file that includes the affected THEAD lines for reprocessing.

Locking Strategy

Since the sales upload processes are run multiple times a day in a trickle-polling system, a locking mechanism is put in place to allow on-line transactions and the salesprocess.ksh module to run at the same time.

Because multithreading logic based on chunks is applied, it is possible that a record is locked by another thread. Without a mechanism to perform waiting/retrying, record locking errors would happen more frequently.

In the table RMS_PLSQL_BATCH_CONFIG, RETRY_LOCK_ATTEMPTS is the number of times the thread will try to acquire the lock for a table and RETRY_WAIT_TIME is the number of seconds the thread will wait before it retries. Once the number of retries is equal to the limit defined, the whole chunk wouldn't be processed. This would create a reject file with which you can use to upload again to the staging table.

Performance Considerations

The number of threads, the amount of waiting time, number for retries, and average volume of data should be considered.

Be careful when increasing the number of threads. When the number exceeds the capacity of the server, new jobs wouldn't be able to start when this program is running and would impact other users of the system.

Because this is multithreaded and can be executed during the store day, it is prone to locking errors. Record locking errors would happen if the thread reached the maximum number of retries (RETRY_LOCK_ATTEMPT) to fetch the lock. To prevent this, increase the value of the retries and let the value of RETRY_WAIT_TIME remain at 1. This means that it would retry every second until the maximum number of retries have been reached.

It is also important to know the average volume of data. It is a determinant of what would be the chunk size. If the chunk is too small, it couldn't utilize processing the records in bulk. If the chunk size is too large, in such that, all records would be in one chunk, it wouldn't utilize the multithreaded approach and thus, be inefficient.

I/O Specification

|

Integration Type |

Upload to Merchandising |

|

File Name |

N/A; at this point, the POSU data has already been uploaded to the staging tables |

|

Integration Contract |

IntCon000103 |

The module will have the ability to re-process a POSU reject file directly. The file format will therefore be identical to the input file layout for the uploadsales.ksh process. A reject line counter will be kept in the program and is required to ensure that the file line count in the trailer record matches the number of rejected records. If no errors occur, no reject files would be generated.

Design Assumptions

Tax Handling:

POS can send either transactional level tax details in TTAX lines or item-level tax details in IGTAX lines through the RTLOG file to Sales Audit. These tax details will be passed on to Merchandising in the TTAX lines of the POSU file. Even though POS can pass multiple IGTAX/TTAX lines to Sales Audit and from Sales Audit to Merchandising, Merchandising only supports one tax code per item. If multiple taxes for an item are sent from POS to Sales Audit, they will be summed to a single tax in Merchandising sales upload process and assigned one of the applicable tax codes when writing tran_data 88.

Financial Transactions

salesprocess.ksh writes transaction records to the TRAN_DATA table primarily through its write_tran_data function. From the entire list of valid transaction codes (For the full list of transaction codes, see the chapter “General ledger batch" in this volume of the Merchandising Operations Guide), for the column TRAN_CODE, salesupload.ksh writes the following:

Table 14-2 Transaction Records

| Transaction Code | Description |

|---|---|

|

01 |

Net Sales (retail & cost) |

|

02 |

Net sales (retail & cost) where - retail is always VAT exclusive, written only if system_options.stkldgr_vat_incl_retl_ind = Y |

|

03 |

Non-inventory Items Sales/Returns |

|

04 |

Customer Returns (retail & cost) |

|

05 |

Non-inventory VAT Exclusive Sales |

|

06 |

Deal Income (sales) |

|

11 |

Markup (retail only) |

|

12 |

Markup cancel (retail only) |

|

13 |

Permanent Markdown (retail only) |

|

14 |

Markdown cancel (retail only) |

|

15 |

Promotional Markdown (retail only), including ‘in-store' markdown |

|

20 |

Purchases (retail & cost) |

|

24 |

Return to Vendor (RTV) from inventory (retail & cost) |

|

60 |

Employee discount (retail only) |

Note:

Where value-added-tax is enabled (system_options table, stkldgr_vat_incl_retl_ind column shows ‘Y') and the retail accounting method is also enabled, salesupload.ksh writes an additional transaction record for code 02.

Any items sold on consignment are written as a code 20 (Purchases) as well as a 01 (Net Sales) along with all other applicable transactions, like returns. The 20 reflects the fact that the item is purchased at the time it is sold, in other words, a consignment sale.

Purge Aged Archived POSU Transactions (salesuploadpurge.ksh)

|

Module Name |

salesuploadpurge.ksh |

|

Description |

Purge Aged Archived POSU Transactions |

|

Functional Area |

Sales Processing |

|

Module Type |

Admin |

|

Module Technology |

Ksh |

|

Catalog ID |

RMS341 |

|

Wrapper Script |

rmswrap_shell.ksh |

Design Overview

The purpose of this module is delete the archive tables for the rejects, retry records and the posted transaction based on the given retention period.

Purge FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS Tables (file_upload_errors_purge.ksh)

|

Module Name |

file_upload_errors_purge.ksh |

|

Description |

Purge FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS Tables. |

|

Functional Area |

Administration |

|

Module Type |

Admin |

|

Module Technology |

Ksh |

|

Catalog ID |

N/A |

|

Wrapper Script |

rmswrap_shell.ksh |

Design Overview

The purpose of this program is to purge FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS tables regularly in Merchandising.

To validate the status of sales file upload process in Merchandising, the error handling in sales upload process has been enhanced to capture the following attributes of file upload status in FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS tables.

-

Filename

-

Status

-

# of lines in file

-

# of Records uploaded

-

# of Records failed processing

-

Date/Time process started

-

Date/Time processing completes

-

Location (store or warehouse where file originated). For stock counts this would be the physical warehouse.

If errors are identified, the error message, line text and line ID are captured in the FILE_UPLOAD_ERRORS table. The FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS tables are replicated thru golden gate, so that customer can verify the upload file results through DAS views.

The file_upload_errors_purge.ksh script is scheduled to run as part of the nightly batch, to purge FILE_UPLOAD_STATUS and FILE_UPLOAD_ERRORS tables regularly in Merchandising based on the retention days input parameter.