9 Managing and Monitoring

This topic includes the following:

Managing Tasks

Tasks are processes that are special runs, such as a one-time data synchronization, or direct file extraction. Tasks are useful in managing Oracle GoldenGate, because they allow you to load data that may have been missed due to a variety of system errors. You can define a task with the GGSCI commands:

GGSCI> ADD EXTRACT group_name, SOURCEISTABLE GGSCI> ADD REPLICAT group_name, SPECIALRUN

When you define a task, you must include the task type parameter in the parameter file. For the Extract parameter file, include SOURCEISTABLE or SOURCEISFILE. For the Replicat parameter file include SPECIALRUN.

Manager can purge tasks. To purge tasks enter parameters such as:

PURGEOLDTASKS EXTRACT wildcard_spec, AFTER number HOURS, USESTOPSTATUS PURGEOLDTASKS REPLICAT wildcard_spec, AFTER number DAYS, USESTOPSTATUS PURGEOLDTASKS ER wildcard_spec, AFTER number HOURS, USESTOPSTATUS

Getting Information on Tasks

You can retrieve information about a task using the INFO and STATUS commands with the TASKS or ALLPROCESSES options:

GGSCI> INFO EXTRACT *, TASKS GGSCI> INFO REPLICAT *, ALLPROCESSES GGSCI> STATUS ER *, ALLPROCESSES

TASKS reports on SPECIALRUN or SOURCEISFILE tasks. ALLPROCESSES reports on all processes.

Managing Tasks Using the Process Name

Tasks defined with SPECIALRUN, SOURCEISFILE, or SOURCEISTABLE do not require a group name. Even without this name, it is possible to communicate with these running tasks by using the SEND PROCESS command. The syntax for this uses the process name instead of a group name as shown below.

GGSCI> SEND PROCESS process_name {text | WAKE | BREAK}

The text option can be any one of the subset of GGSCI commands that are recognized by the receiving process.

See SEND PROCESS for more details.

Managing Oracle GoldenGate Trails

Oracle GoldenGate trails can be managed by allocating optimal storage for the trail files and setting parameters for cleaning up trail files that are no longer needed.

Initial Allocation of Storage for Trails

To prevent trail activity from interfering with business applications, use a separate disk managed by a disk process different than that of the application.

To ensure there is enough disk space for the trail files, follow these guidelines:

-

For trails on the source system, there should be enough space to handle data accumulation if the network connection fails. In a failure, reading from a trail terminates but the primary Extract group reading from logs or audit file continues extracting data. It is not good practice to stop the primary Extract group to prevent further accumulation. The logs could recycle or the audit files could be off-loaded.

-

For trails on the target system, data will accumulate because data is extracted and transferred across the network faster than it can be applied to the target database.

Ongoing Trail Management

Oracle GoldenGate provides options that let you manage your trails in two ways.

-

Based on the number and size of the files.

The

MEGABYTES, MAXFILES, andEXTENTSoptions specify how large each trail file may become, and how many files may exist before Extract stops with an error. -

With the

PURGEOLDEXTRACTSparameter.This lets you purge old extracted data you no longer need. This can be based on rules you set up.

-

The

MINKEEPHOURS,MINKEEPDAYSoptions set the time to keep files.MINKEEPFILESsets the minimum number of files to keep. -

In the Manager only, the

USECHECKPOINTSoption uses checkpoints to determine whether processing is complete. You can also set theCHECKMINUTESparameter to control how often the process checks the parameters to determine if anything must be purged.

-

Setting the Size of the Trail

Two options for managing trail size are MEGABYES and MAXFILES. MEGABYTES lets you specify how large your trail file gets before your data rolls to another trail file. It is useful if you want to equally distribute data between your files. The default size is 134 megabytes and the largest size supported is two gigabytes. MAXFILES lets you specify the number of trail files Oracle GoldenGate creates. The default is 100 files. Allowing multiple files lets data roll over when one file is full, which prevents errors. The syntax for using MEGABYTES and MAXFILES is:

GGSCI> ADD EXTTRAIL trail_name, EXTRACT group_name, MEGABYTES num, MAXFILES num

Trails that either reside on the local node or on a node that is connected by Expand are considered local for NonStop. For these trails, you can also control size by setting the files' primary, secondary and maximum number of extents. The syntax for this is:

GGSCI> ADD EXTTRAIL trail_name, EXTRACT group_name [, EXTENTS (primary, secondary, max)]

The defaults for EXTENTS are (64, 128, 512).

From GGSCI, an INFO of the trail will show the current trail settings.

Example 9-1 Showing Trail Settings

GGSCI> INFO EXTTRAIL GGSDAT.ET

Extract file: \NY.$DATA04.GGSDAT.ET

Extract group: EXTSQL

Owner: 150,110

Security: NUNU

Current seqno: 0

Current rba: 2280

Primary extent: 64

Secondary extent: 128

Max extents: 512

Max files: 100Setting the PURGEOLDEXTRACTS rules

You can set PURGEOLDEXTRACT in the Manager, Extract, or Replicat, but only Manager has options.

In the Manager

You can set options for purging trails with the PURGEOLDEXTRACTS in the Manager's parameter file.

-

Use

USECHECKPOINTSto purge when all processes are finished with a file as indicated by checkpoints. This is the default, but it can be turned off with theNOUSECHECKPOINTSoption. -

MINKEEPHOURSorMINKEEPDAYSto keep filesnhours or days.MINKEEPFILESto keep at leastnfiles including the active file. The default is1.

Only one of the three MINKEEP options should be set. If more than one is entered the system will select one based on the following:

-

If both

MINKEEPHOURSandMINKEEPDAYSare specified, only the last setting will be used and the other will be ignored. -

If both

MINKEEP{HOURS|DAYS} andMINKEEPFILESare specifiedMINKEEP{HOURS|DAYS}will be used andMINKEEPFILESwill be ignored.

In Extract or Replicat

You cannot set any options for the Extract and Replicat PURGEOLDEXTRACTS parameter. In this case the trail is purged as soon as the process moves to the next trail.

Manager Purge Trail Processing

If PURGEOLDEXTRACTS is set in the Manager parameter file, when the Manager reaches CHECKMINUTES the purge rules are evaluated as explained below.

Example 9-2 Purge Processing Examples

-

Trail files

AA000000,AA000001, andAA000002exist. The Replicat has been down for four hours and has not completed processing any of the filesThe Manager parameters include:

PURGEOLDEXTRACTS $DATA1.DB.AA*, USECHECKPOINTS, MINKEEPHOURS 2

Result: The time files that are not accessed must be retained has been exceeded. No files will be purged, however, because checkpoints indicate that the files have not been fully processed by Replicat.

-

Trail files

AA000000,AA000001, andAA000002exist. The Replicat has been down for four hours and has not completed processing.The Manager parameters include:

PURGEOLDEXTRACTS $DATA1.DB.AA*, NOUSECHECKPOINTS, MINKEEPHOURS 2

Result: All trail files will be purged since the minimums have been met.

-

The following is an example of why only one of the

MINKEEPoptions should be setReplicat and Extract have completed processing. There has been no access to the trail files for the last five hours. Trail files

AA000000,AA000001, andAA000002exist.The Manager parameters include:

PURGEOLDEXTRACTS $DATA1.DB.AA*, USECHECKPOINTS, MINKEEPHOURS 4, MINKEEPFILES 4

Result:

USECHECKPOINTSrequirements have been met so the minimum rules will be considered when deciding whether to purgeAA000002.There will only be two files if

AA000002is purged, which will violate theMINKEEPFILESparameter. Since bothMINKEEPFILESandMINKEEPHOURShave been entered, however,MINKEEPFILESis ignored. The file will be purged because it has not been modified for 5 hours, which meets theMINKEEPHOURSrequirement of 4 hours.The Manager process determines which files to purge based on the Extract processes configured on the local system. If at least one Extract process reads the trail file, Manager applies the specified rules.

For more information see, PURGEOLDEXTRACTS for Extract and Replicat and ADD EXTTRAIL.

Recommendations for Managing Trail Purges

Consider the following recommendations for managing Oracle GoldenGate trails.

-

For setting the purge rules, it is recommended that: you

-

Specify

PURGEOLDEXTRACTSin the Manager parameter file so you manage your trails from a single location. -

Purge trail files through Extract or Replicat only when one process is processing the trail, such as a data pump. Use Manager to purge trail files that are being processed by both Extract and Replicat.

-

Use

USECHECKPOINTSto ensure that the checkpoints of both Extract and Replicat are considered and reduce the chance of data loss. -

Be aware that

PURGEOLDEXTRACTSin Extract or Replicat can remove trails still needed by the Coordinator. If you use the Coordinator, specifyPURGEOLDEXTRACTSin the Manager to manage the Coordinator checkpoints.

-

-

The rules should be assigned to the process that resides where the trail must be cleaned.

For example, if there are three nodes:

\Awhere the Extract is running and extracting the data;\Bwhere a subset of the data is replicated and\Cwhere another part of the data is replicated, it is the Manager on\Athat should be assigned the parameters that define how to manage the trails.For

USECHECKPOINTS, this Manager will need to know the location of the checkpoint files on\Band\C, but this can be accomplished withREMOTECHKPTas shown below.GGSCI> ADD REMOTECHKPT \node.$volume.subvolume.REPCTXT

Oracle GoldenGate Self Describing Trail Files

The default behavior is to store and forward metadata from the source to the target and encapsulates it in each of the trail files.

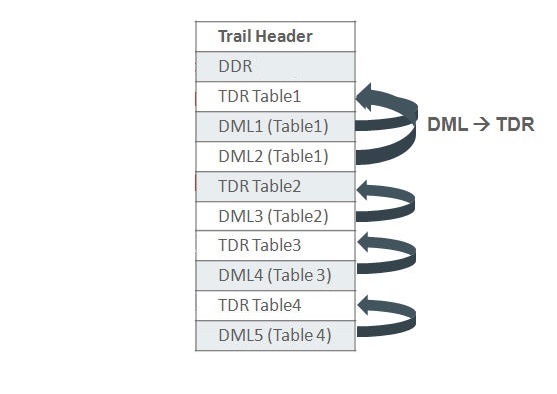

Metadata records are as follows:

-

Database Definition Record (DDR)

A DDR provides information about the specific database, such as character set and time zone. Extract writes a DDR to the trail following the file header to store the database metadata for the source database.

-

Table Definition Record (TDR)

A TDR provides details about the definition about a table and the columns that it contains. The content of this record is similar, though not identical, to a record in a

sourcedeffile that was created usingDEFGEN. Extract writes a new TDR when the output trail rolls over to a new file or the source table definition has changed.The metadata records in a self-describing trail file format operate as follows:

The metadata records in a self-describing trail file format operate as follows:

Using self-described trail files eliminates the need for SOURCEDEFS and ASSUMETARGETDEFS so parameter files are simpler and it is easier to configure. This feature also provides:

-

A reduction in trail file size due to object name compression.

-

No necessity to create and maintain source definitions files.

-

Replicating OpenSys databases to HP-NonStop no longer requires

TARGETDEFSand the mapping of column names, as well as mapping ANSI names to three part Tandem names. -

No necessity to create and maintain source definitions files.

Understanding the Self-Describing Trail Behavior

OpenSys (Non Enscribe or SQL/MP) source databases

When performing table maintenance on objects that are part of an extract group only after the remote Extract has completely output all the data change records to the trail and the Extract process is stopped. Then after both the source and target changes are completed, restart the Extract. There is no need to regenerate TARGETDEFS using DEFGEN on NSK and moving them to OpenSys, this assumes that since it is not required to map the OpenSys table names to NSK (Tandem) names or colmap individual columns, that all of it was already removed from the Extract parameter file. In the case of adding a new table, if the parameter files already wildcards this name, simply create the target table and then the source.

SQL/MP to SQL/MP

If replicating new columns, then no action is required to update metadata. For Drop and Create table statements, you must perform those database actions on the target and then the source outside of Oracle GoldenGate replication, but no other action is required. This assumes that the qualified table names are either, already in the parameter file, or fit a wildcard specification.

Enscribe to Enscribe

- Logger Capture

- The logger process has not been changed and will continue write trails without a file header or metadata this is equivalent to format release 9.5. There is no means to change this; therefore, Oracle recommends that if you are not already using Extract to pump logger trails, you should start using them. The Extract pump needs the source

DICTIONARYand each file statement needs aDEForTARGETDEFoption on theFILEparameter to create metadata. - TMF Extract Capture

-

The TMF based Extract writes the metadata based on a provided

DICTIONARYand specificDEForTARGETDEFoption on theFILEparameter.If you have not provided the recordDEFfor a file, then a fabricated metadata record is generated. There is an info message in Extract as follows:2018-08-07 04:44:50 OGG INFO 103 No columns loaded for \NODE.$DISK.SUBVOL.FILE, fabricating metadata TDR record.

Regardless of the method of capture, you can only use fabricated Enscribe metadata, when Extract or Replicat has No Colmaps, Filters, @functions(), or anything else that requires a real column information. Typically, you should use the fabricated metadata only when the source and target are the same files and no DEF or TARGETDEFS is used. Otherwise attempting to do so causes an abend.

In the following example, the source metadata was fabricated, while the target has a TARGETDEFS, it abends attempting to colmap default names.

MAP \NODE.$DISK.SUBVOL1.FILE, target \NODE.$DISK.SUBVOL2.FILE, targetdef REC-DEF; Metadata loaded from trail for file \NODE.$DISK.SUBVOL1.FILE Building Map ID 1 for \NODE.$DISK.SUBVOL1.FILE 2018-08-07 04:44:49 OGG WARNING 101 Source metadata found in trail for \NODE.$DISK.SUBVOL1.FILE was fabricated and may not match the target \NODE.$DISK.SUBVOL2.FILE. Error with default mapping. No matching fields found in source and target 2018-08-07 04:44:50 OGG ERROR 101 Error in COLMAP clause from compile_map. 2018-08-07 04:44:50 OGG ERROR 191 REPLICAT abending.

Managing Log Trails

Unlike trails that are created externally, if a trail created by Logger runs out of space there are no audit records to be reprocessed once the problem is fixed. This can cause data loss, so it is important to have adequate space available for log trails. The following steps help do this.

-

Include adequate trail space when the Logger process is added.

Log trail files are pre-allocated during

ADDLOGGERso this ensures that the space is available before the logging process begins. -

Monitor and adjust the trail space as needed.

During processing, adjust the number and size of each log trail as needed by editing the Logger parameter file with the command

EDITPARAMLOGPARM. Then the number of files can be increased or decreased by changing theNUMFILESoption, and the size of each trail file can be adjusted by changing theMEGABYTESorEXTENTS. The changes will be activated when anALTERLOGGERcommand is issued. -

Monitor the impact of the trail space on your system.

Manage creates the next log trail file if it is not available when it is time to rollover. This helps ensure that data will not be lost, but it also means that you may have more log trail files than specified in the

NUMFILESofLOGPARM.

Monitoring Processing

You can monitor the state of Oracle GoldenGate processing with the following tools.

Each of these tools is discussed in greater detail below.

Error Handling

There are several error handling parameters available in Oracle GoldenGate. In addition, Oracle GoldenGate provides error-handling options for Replicat and TCP/IP processing.

Error Handling Parameters

Error handling parameters let you insert missing records, prevent duplicate records from being loaded. For a complete list, see Oracle GoldenGate Parameters.

Handling Replicat Errors

To control the way that Replicat responds to errors, use the REPERROR parameter in the Replicat parameter file. This parameter handles most errors in a default fashion (for example, to cease processing), and you can specify REPERROR options to handle other errors in a specific manner, or to ignore them altogether.

REPERROR provides the following options:

TCP/IP Error Handling

The TCPERRS file in the Oracle GoldenGate installation location contains preset TCP/IP errors and instructions for how Oracle GoldenGate generally reacts to them. If a response is not explicitly defined in this file, Oracle GoldenGate responds to TCP/IP errors by exiting.

Note:

The Manager process is an exception. When Manager has an IP error it retries every 60 seconds and does not abend. It does not use the TCPERRS file to determine the number of retries or the delay.

Example 9-3 Sample of the TCPERRS File

# # TCP/IP error handling parameters # Default error response is abend # # error Response Delay (csecs) Max Retries ECONNABORTED RETRY 1000 10 ECONNREFUSED RETRY 1000 12 ECONNRESET RETRY 500 10 ENETDOWN RETRY 3000 50 ENETRESET RETRY 1000 10 ENOBUFS RETRY 100 60 ENOTCONN RETRY 100 10 EPIPE RETRY 500 10 ESHUTDOWN RETRY 1000 10 ETIMEDOUT RETRY 1000 10 NODYNPORTS RETRY 100 10

Altering TCP/IP Error Handling Parameters

To alter the instructions or add instructions for new errors, open the file in a text editor and change any of the values in the following columns:

-

Error column: Specifies a TCP/IP error for which you are defining a response.

-

Response column: Controls whether Oracle GoldenGate tries to connect again after the defined error.

-

Delay column: Controls how long Oracle GoldenGate waits before attempting to connect again.

-

Max Retries column: Controls the number of times that Oracle GoldenGate attempts to connect again before aborting.

See Oracle GoldenGate Parameters for details about the TCP/IP error messages, their causes, effects, and recovery.

Using Discard Files

Two types of discard files are available with Oracle GoldenGate for HP NonStop:

-

Discard files identify a record and operation that failed., providing information to help troubleshoot the error.

-

SQL discard files provide SQLCI formatted input to process the operation to an SQL/MP database once the error is corrected.

Using the Discard File

The discard file logs information about operations that failed. Extract discard records have a header and a data portion; the discard file is entry-sequenced. Replicat produces discard records in an external, easy-to-understand format

Full record images are provided when IO-TYPE is Delete, Insert or Update. Each record has the same format as if retrieved from a program reading the original file or table directly. For SQL tables, datetime fields, nulls and other fields are output exactly as a program would SELECT them into an application buffer. Even though datetime fields are represented internally as an eight byte timestamp, their external form can be up to 26 bytes expressed as a string. Enscribe records are retrieved as they exist in the original file.

Full record images are output unless the original file has the AUDITCOMPRESS attribute set to ON. When AUDITCOMPRESS is ON, compressed update records are generated whenever the original file receives an update operation. (A full image can be retrieved by Extract using the FETCHCOMPS parameter.)

When the operation type is Insert or Update, the image is the contents of the record after the change is made. When the operation type is Delete, the image is the contents of the record before the change.

To control how discard files are generated, use the DISCARDROLLOVER parameter in the Extract or Replicat parameter file. The parameter has provisions for specifying when new files are created.

Generating Discard Files

To generate a discard file, use the DISCARDFILE parameter in the Extract or Replicat parameter file.

If there is no DISCARDFILE parameter for Replicat, a discard file is created by default when Replicat is started from GGSCI (as opposed to when it is run from the TACL prompt.) The default discard file will have the following characteristics:

-

The file name is derived by appending a D to up to 7 characters of the Replicat group name. For example, the Replicat

GROUPAwill create discard fileGROUPADand ReplicatGROUPABwill create discard fileGROUPABD. If the Replicat group name is more than 7 characters, the process name is used instead. ReplicatGROUPABCwith process name $ABR00will create discard fileABR00D. -

The file is created in the report file location.

-

The extents are set to (4, 4, 100)

Note:

Discard files that have been created by default cannot be rolled over.

To specify a non-default location or characteristic use the DISCARDFILE parameter in the Replicat parameter file.

Using the SQL Formatted Discard File

SQL discard files provide SQLCI formatted input for failed replication operations to a target SQL/MP database. Specify SQLFORMATDISCARDFILE in the Replicat parameter file to generate the SQL discard file.

To use the SQL formatted discard file the target must be SQL/MP. The source of the operation can be any database and platform that Oracle GoldenGate supports.

The SQLCI formatted input will only process DML records once the original problem has been corrected.

To control how SQL formatted discard files are generated, use the parameter SQLFORMATDISCARDROLLOVER. This parameter specifies when new files are created.

SQL DIscard File Example

The following is an example of a section of a SQL formatted discard file record:

SET SESSION ERROR ABORT ON; BEGIN WORK; -- Problem with Insert Record, Seqno 0, RBA 1234 Error -8227 INSERT INTO \NY.$DATA2.SALES11.CUSTOMER & (CUST_CODE, NAME, CITY, STATE) VALUES & (“A543", “Advantage Software ",& “SEATTLE “, “WA"); COMMIT WORK;

The following is an example of the corresponding section from the discard file record:

*** ERROR from SQL [-8227]: The row being inserted or updated in underlying *** table \NY.$DATA2.SALES11.CUSTOMER contains a key value that must be *** unique but is already present in a row. Error -82227, guardian 10 occurred with insert record (target format). . . *--- 2013-08-28 09:46:13.775946 ----* 0, 4:CUST_CODE = ‘A543' 1, 12:NAME = ‘Advantage Software' 2, 46:CITY = ‘SEATTLE' 3, 70:STATE = ‘WA' *--- End Discard Record --- * Process Abending: 2013-08-28 09:46:14

Editing the SQL Discard File

The SQL discard file is an edit file that can be changed if needed. To change the file, first do a rollover on the file to trigger Replicat to close it and begin to use a new one. Then edit the file.

When you edit the SQL formatted discard file input, you must make sure your statements are valid for SQLCI:

-

Commands greater than 132 bytes must force a line break.

-

Strings must be enclosed in quotation marks. If embedded quotes are part of the data, the outermost set of quotation marks must be of the opposite type (single or double.)

-

Quoted strings longer than the maximum size must be broken into the 132 byte segments surrounded by quotation marks and terminated by the (&) continuation symbol. Note that the quotation marks and & symbol are all counted in the 132 byte limit.

-

The requirements for date-time and timestamp syntax are specific to the metadata.

Conflict Detection with SQLEXEC

SQLEXEC works on SQL for NonStop databases to call queries you specify. This lets you leverage application rules to resolve conflicts between incoming source records. To do this, add a SQLEXEC parameter in a MAP statement in the Replicat parameter file.

Some applications that support distributed processing provide their own methods for handling conflicts. Such methods include IP persistent routers or application privileges that prevent multiple users from modifying the same data. These rules can be combined with the use of SQLEXEC procedures.

A SQLEXEC Example

The following is an example of basic conflict detection based on a timestamp. It raises an exception based on a user-defined error number passed to Replicat and a SQL query to check the value of the TIMESTAMP column. If the timestamp is newer than the one on the existing record, the update is performed. Otherwise, the operation is ignored (because the newer timestamp is considered more valid) and a message is generated to the Replicat process report.

Example 9-4 REPLICAT Parameter File for Conflict Detection

REPERROR (9999, EXCEPTION) MAP $DATA.MASTER.SRC, TARGET $DATA.MASTER.TAR, SQLEXEC (ID check, QUERY " SELECT TIMESTAMP FROM TARGTAB" WHERE PKCOL =?P1 ", ERROR IGNORE); PARAMS (P1 = PKCOL)), FILTER (CREATED_BY <> "DBA"), FILTER (ON UPDATE, BEFORE.TIMESTAMP < CHECK.TIMESTAMP, RAISEERROR 9999); INSERTALLRECORDS MAP $DATA.MASTER.SRC, TARGET $DATA.MASTER.TAREXEC, EXCEPTIONSONLY, COLMAP (USEDEFAULTS, ERRTYPE = "UPDATE FILTER FAILED.");

In the example, the query is run under the logical name of check. Values retrieved from this query can be utilized anywhere in the MAP statement by referencing check.column name.

The FILTER statements in the example parameter file are processed in the order that they are written. If, in the first FILTER statement, the value of the CREATED_BY column in the record being applied by Replicat is equal to the DBA account, the operation is accepted for processing by the second FILTER statement. Otherwise, it is ignored.

In this example, SQLEXEC also detects database errors, but ignores them and continues processing. This is the default action for ERROR.

SQLEXEC (ID check, QUERY " SELECT TIMESTAMP FROM TARGTAB" WHERE PKCOL =?P1 ',ERROR IGNORE);

However, SQLEXEC could perform any of the following:

| Syntax | Description |

|---|---|

ERROR REPORT |

Write the database error to a report. |

ERROR RAISE |

Enable the same error handling capabilities available for table replication errors. |

ERROR FINAL |

Enable the same error handling capabilities as |

ERROR FATAL |

Abend the process immediately. |

In the second FILTER statement, the ON UPDATE clause directs the filter to run only for update statements. It compares the value of BEFORE.TIMESTAMP (the timestamp of the row that Replicat is attempting to apply) to CHECK.TIMESTAMP (the timestamp of the row already in the database). If the row in the database is newer than the row being applied, then the filter raises an error and the update is ignored.

In the example, the error correction was implemented with RAISEERROR in the SQLEXEC clause in the first MAP statement, but it could have been implemented in the second MAP statement by replacing the COLMAP clause with a SQLEXEC clause.

To handle specific issues, additional SQLEXEC statements could be performed after the filter or even between the filter statements for increased control.

Using the Event Log

The Oracle GoldenGate event log shows processing events, messages, errors, and warnings generated by Oracle GoldenGate. Although this information is also recorded in the NonStop Event Management System (EMS), viewing the Oracle GoldenGate log is sometimes more convenient. Use GGSCI VIEW GGSEVT command to view the event log.

Using the Process Report

Oracle GoldenGate generates a report about Manager, Logger, Extract, Replicat, and Syncfile at the end of each run. The report provides information about run-time parameters and statistics, including process events, and operations that were performed. The name of a process report is either MANAGER for the Manager process, or it is the same as the group name for Extract and Replicat. By default, reports are created in the subvolume GGSRPT. For example, a report for an Extract group EXTORD would have a report named GGSRPT.EXTORD.

Generate process reports with the SEND EXTRACT group_name command and the following options:

| Report | Option | Description |

|---|---|---|

|

The end of an audit trail |

Queries Extract to determine whether all records in the TMF audit trails have been processed. This command indicates whether more Extract or Replicat activity must occur before a scheduled switch between databases. Until |

|

|

Processing status |

Returns a detailed status of the processing state, including current position and activity. |

|

|

Processing statistics |

|

Generates an interim statistical report to the report file, including the number of inserts, updates, and deletes since the last report (default) or according to report options that can be entered. For more information, see Send Report. |

|

TCP/IP statistics |

Retrieves TCP/IP statistics, such as the quantity and byte length of inbound and outbound messages, the number of messages received and sent, wait times, process CPU time, and byte transmit averages. Time accumulates when Extract is waiting on a socket send or receive and all times are reported in microseconds. Resets the TCP/IP statistics so the next report displays fresh statistics |

Note:

In Oracle GoldenGate for NonStop, several additional reporting options are available. For specifics, see Report commands.

Example 9-5 Sample Report

RMTTRAIL $DATA10.LOGGER.R1000038, RBA 5348453 Session Index 1 Stats started 2011/01/10 11:46:18.804165 0:00:41.522086 Local address 192.0.2.2:1000 Remote address 192.0.2.2:1000 Inbound Msgs 199 Bytes 2337, 57 bytes/second Outbound Msgs 200 Bytes 5389492, 131451 bytes/second Recvs 199 Sends 200 Avg bytes per recv 11, per msg 11 Avg bytes per send 26947, per msg 26947 Recv Wait Time 17592208, per msg 88403, per recv 88403 Send Wait Time 774603, per msg 3873, per send 3873 Process CPU Time 0:00:07.715372

Viewing Process Reports

To view a process report, view the file directly from the operating system's command shell, or use the VIEW REPORT command in GGSCI. You also can view process reports from the Activity Console by clicking More Info beneath the name of the process.

Storing Process Reports

By default, process reports are stored in the GGSRPT subvolume of the Oracle GoldenGate installation volume. You can designate an alternative file name by using the REPORT option of the ADD EXTRACT and ADD REPLICAT commands when you create the group from the GGSCI interface. Specify the fully qualified file name.

Once paired with Extract or Replicat, the report file may remain in its original location, or you may change its location with the ALTER command, such as:

ALTER EXTRACT group_name REPORT filename

Managing Process Reports

Whenever a process starts, a new report file is created, and a sequence number is appended to the name of the old file. The naming sequence goes from no sequence number (current), to 0 (the most recent) to 9 (the oldest), for example: $DATA.GGSRPT.EXTORD, $DATA.GGSRPT.EXTORD0, $DATA.GGSRPT.EXTORD1 and so forth. When the file number reaches nine, the oldest file is deleted to make room for a new file, so there are never more than 11 files on the system at one time (the current report plus the ten aged reports).

To prevent the size of the report file from becoming too large, use the REPORTROLLOVER parameter in the Extract and Replicat parameter files. This parameter forces the report files to age on a regular schedule. Options are available to age the current file on a specific day and/or a specific time.

To minimize the impact of errors on the size of the Replicat report file, use the WARNRATE parameter in the Replicat parameter file. This parameter conserves the size of the report file and the event log by issuing a warning only after a specific number of errors have been generated, instead of after each one. This parameter is useful if you expect a certain number of errors and can tolerate them. The default for this parameter is to warn after 100 errors.

Generating Run-time Statistics

Run-time statistics show the current state of processing. By default, run-time statistics are written to the existing process report at the end of each run. To control when run-time statistics are generated, use the REPORT parameter. This parameter has options for controlling the day and time that statistics are generated.

To generate interim run-time statistics, use the SEND EXTRACT or SEND REPLICAT GGSCI command with the REPORT option syntax as shown below.

GGSCI> SEND {EXTRACT|REPLICAT} group_name

REPORT [time_option [RESET | FILE filename | TABLE name]]

The time_option controls the time span covered by the report, such as since the start of Extract or since the last report request. RESET sets the counters for that time_option to zero. FILE or TABLE limits the report to counts for name. For more information, see SEND REPORT.

To generate run-time statistics and also cause the report file to roll over to a new one, add the ROLLREPORT option to the command, for example:

GGSCI> SEND EXTRACT EXTORD, REPORT GGSCI> SEND EXTRACT EXTORD, ROLLREPORT

Viewing Record Counts

You can produce record counts at designated times during processing by using the REPORTCOUNT parameter in the Extract and Replicat parameter files. Results are printed to the process report file and to screen.

The record count shows the number of records extracted and replicated since the Extract or Replicat process started. Counts can be obtained at regular intervals or each time a specific number of records is processed.

The STATS Command

To generate a statistical report for Extract or Replicat, specify the LAGSTATS parameter. Oracle GoldenGate measures lag in bytes and time:

-

Lag in bytes is the difference between the position of the Extract program in the source at the time of the last checkpoint, and the current end-of-file. A lag value of

UNKNOWNindicates that the process may have recently started and hasn't yet processed records, or that the source system's clock may be ahead of the target system's clock due to a reason other than time zone differences. -

Time lag reflects the lag in seconds at the time the last checkpoint was written. For example, if it is now 15:00:00, the last checkpoint was at 14:59:00 and the timestamp of the last record processed by the Replicat program was 14:58:00, the lag is reported as 00:01:00 (one minute, the difference between 14:58 and 14:59).

The report includes the following general performance categories:

-

General statistics

-

Lag statistics

-

Extract's processing in the Oracle GoldenGate trail

-

Audit trail reading statistics for Extract (when applicable)

-

Output statistics for Extract only

The following table describes each item in the lag statistics report.

| Item | Description |

|---|---|

|

Last Record Timestamp |

The timestamp of the source record (when the source record was input or committed to the target database). |

|

Configured Interval |

Determined by the |

|

Actual Duration |

The duration of time measured. |

|

Records Processed |

Number of records output or replicated during the period. |

|

Records per Second |

Records processed per second during the interval. |

|

Source Records per Second |

The estimated rate of records read for either the TMF audit trails or logger processes. |

|

Last lag |

The time lag of the last record measured between (1) the records update into the source database and (2) the actual processing of the record by Extract or Replicat. |

|

Min lag |

Smallest value of Last lag during the interval. |

|

Average lag |

Average time lag calculated by adding the maximum lag during the interval to the minimum lag and dividing the result by 2. |

|

Peak lag |

Peak time lag during the interval for all records processed and the timestamp of the peak. |

|

Last est. record lag |

An estimate of the number of records the component is behind the current record. |

|

Pct Below Lag of mi:ss:mmm |

The percentage of times lag was below the time threshold you specified. This is an optional statistic, which can occur up to five times. To generate the statistic, specify the |

|

Pct CPU Busy |

The amount of time the CPU in which Extract or Replicat was running was busy during the interval. |

|

PCT Process Busy |

The amount of time Extract or Replicat was busy during the interval. |

|

At EOF? |

Whether more data was available to process the last time more data was requested by Extract or Replicat from the audit or Oracle GoldenGate trails. |

|

Trail Reads per Second |

When reading Oracle GoldenGate trails, the number of attempted block reads per second. |

|

Bytes per Trail Read |

When reading Oracle GoldenGate trails, the number of bytes read per successful read. |

|

Records per Block Read |

When reading Oracle GoldenGate trails, the number of records read per successful read. This indicates the blocking factor on input. |

|

Wait per Block Read |

When reading Oracle GoldenGate trails, the amount of time Extract or Replicat waits, on average, to complete the read. |

|

Audit Bytes per Second |

The number of bytes of audit processed per second (TMF Extract only). |

|

Pct EOF Trail Reads |

For TMF Extract, the percentage of times Extract reached the end of file, compared with the number of records processed. For Replicat or Extract reading Oracle GoldenGate trails, the number of times the process read at the end of file, compared with the total number of blocks it attempted to read. |

|

Transactions per Second |

For TMF Extract, the number of transactions processed per second. |

|

Transactions Aborted |

For TMF Extract, the number of transactions aborted during the interval. |

|

Audit positions |

The number of times during the interval that Extract requested Audserv to position for read. |

|

Audit position seconds |

The elapsed time in seconds required for Audserv to position for read. |

|

Audserv requests |

The number of data requests to Audserv during the interval. |

|

Audserv request wait seconds |

The elapsed time for the Audserv to fulfill data requests during the interval. |

|

Long transactions |

The number of long transactions during the interval. |

|

Long transaction seconds |

The elapsed time for the long transactions that occurred during the interval. |

|

Output Bytes per Second |

For Extract, the bytes of data output to the extract trails per second. |

|

Output Blocks per Second |

For Extract, the number of blocks of data written to the Oracle GoldenGate trails per second. |

|

Records per Block Written |

For Extract, the average number of records in each block written. |

|

Bytes per Block Written |

For Extract, the average number of bytes in each block written. |

|

Wait per Block Written |

For Extract, the amount of time waiting for the last write or TCP/IP send to complete before sending the next block of data. This statistic can indicate whether the network might be introducing a delay to Extract. |

|

Average Record Flush Delay |

For Extract, the estimated average amount of time a record was held in its buffers before flushing. |

|

Pct Output/Input |

For Extract, the ratio of bytes output compared with input bytes processed. Useful for estimating potential bandwidth required by Extract based on amount of TMF audit generated. |

Collecting Events from Other Systems

Event messages created by the Collector and Replicat on Windows and UNIX systems can be captured and sent back to EMS on NonStop systems. This feature enables centralized viewing of Oracle GoldenGate messages across platforms.

To collect events from other systems:

-

Run Collector on NonStop to collect and distribute EMS messages. For each EMSCLNT process, run one Collector process. The following example runs Collector and outputs its messages to

$0.TACL> ASSIGN STDERR, $0 TACL> RUN SERVER /NOWAIT/ –p 7880

-

Run the EMSCLNT utility on the remote target. EMSCLNT reads a designated error log and runs indefinitely, waiting for more messages to send. When EMSCLNT receives a message, it sends the message to a TCP/IP collector process on NonStop. See the examples for running EMSCLNT on other operating systems for syntax information.

Running EMSCLNT on Other Operating Systems

This Unix example reads the file ggslog.err for error messages. Error messages are sent to the collector to the NonStop at IP address 192.0.2.2 listening on port 7850. The Collector on NonStop writes formatted messages to EMS Collector $0.

> $emsclnt –h 192.0.2.2 –p 7850 –f ggserr.log –c $0

This Windows example (from the DOS prompt) reads the file d:\ggserrs\log.txt for error messages. Error messages are sent to the Collector on host ggs2 listening on port 9876. The Collector on NonStop writes formatted messages to EMS Collector $P0.

> emsclnt –h ggs2 –p 9876 –f c:\ggs\ggserr.log –c $P0

| Argument | Description |

|---|---|

–h ggs2 |

The node on which the collector is being run. Can be a name or IP address. This is a required parameter. |

–p 9876 |

The port at which the collector is listening for messages. This is a required parameter. |

–f c:\ggs\ggserr.log |

The error file from which EMSCLNT retrieves error messages. This is a required parameter. |

–c $P0 |

The collector to which EMS messages should be written on the NonStop (default is $0). |