7 Managing Oracle Cluster File System Version 2

WARNING:

Oracle Linux 7 is now in Extended Support. See Oracle Linux Extended Support and Oracle Open Source Support Policies for more information.

Migrate applications and data to Oracle Linux 8 or Oracle Linux 9 as soon as possible.

This chapter describes how to configure and use the Oracle Cluster File System Version 2 (OCFS2) file system.

About OCFS2

Oracle Cluster File System version 2 (OCFS2) is a general-purpose, high-performance, high-availability, shared-disk file system intended for use in clusters. It is also possible to mount an OCFS2 volume on a standalone, non-clustered system.

Although it might seem that there is no benefit in mounting ocfs2 locally as compared to alternative file systems such as ext4 or btrfs, you can use the reflink command with OCFS2 to create copy-on-write clones of individual files in a similar way to using the cp --reflink command with the btrfs file system. Typically, such clones allow you to save disk space when storing multiple copies of very similar files, such as VM images or Linux Containers. In addition, mounting a local OCFS2 file system allows you to subsequently migrate it to a cluster file system without requiring any conversion.

Note that when using the reflink command, the resulting file system behaves like a clone of the original file system. This means that their UUIDs are identical. When using reflink to create a clone, you must change the UUID using the tunefs.ocfs2 command. See Querying and Changing Volume Parameters for more information.

The following examples are typical uses of OCFS2:

- Oracle VM to host shared access to virtual machine images.
- Oracle VM and VirtualBox to allow Linux guest machines to share a file system.
- Oracle Real Application Cluster (RAC) in database clusters.
- Oracle E-Business Suite in middleware clusters.

OCFS2 has a large number of features that make it suitable for deployment in an enterprise-level computing environment:

- Support for ordered and write-back data journaling that provides file system consistency in the event of power failure or system crash.
- Block sizes ranging from 512 bytes to 4 KB, and file-system cluster sizes ranging from 4 KB to 1 MB (both in increments of powers of 2). The maximum supported volume size is 16 TB, which corresponds to a cluster size of 4 KB. A volume size as large as 4 PB is theoretically possible for a cluster size of 1 MB, although this limit has not been tested.
- Extent-based allocations for efficient storage of very large files.
- Optimized allocation support for sparse files, inline-data, unwritten extents, hole punching, reflinks, and allocation reservation for high performance and efficient storage.
- Indexing of directories to allow efficient access to a directory even if it contains millions of objects.
- Metadata checksums for the detection of corrupted inodes and directories.
- Extended attributes to allow an unlimited number of name:value pairs to be attached to file system objects such as regular files, directories, and symbolic links.
- Advanced security support for POSIX ACLs and SELinux in addition to the traditional file-access permission model.
- Support for user and group quotas.
- Support for heterogeneous clusters of nodes with a mixture of 32-bit and 64-bit, little-endian (x86, x86_64, ia64) and big-endian (ppc64) architectures.
- An easy-to-configure, in-kernel cluster-stack (O2CB) with a distributed lock manager (DLM), which manages concurrent access from the cluster nodes.
- Support for buffered, direct, asynchronous, splice and memory-mapped I/O.
- A tool set that uses similar parameters to the ext3 file system.
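The two volume size limits quoted in the feature list are consistent with a volume that can address at most 2^32 clusters: 2^32 x 4 KB = 16 TB and 2^32 x 1 MB = 4 PB. The following shell sketch reproduces that arithmetic. The function name max_volume_bytes is hypothetical, and the 2^32 cluster count is an assumption inferred from the two figures, not a value taken from the OCFS2 tools.

```shell
# Sketch: largest volume size for a given cluster size, ASSUMING that a
# volume can address at most 2^32 clusters (inferred from the 16 TB and
# 4 PB figures). max_volume_bytes is a hypothetical helper, not part of
# ocfs2-tools.
max_volume_bytes() {
    cluster_bytes=$1
    echo $(( (1 << 32) * cluster_bytes ))
}

# 4 KB clusters give 16 TB; 1 MB clusters give 4 PB (printed in bytes).
max_volume_bytes 4096
max_volume_bytes 1048576
```

The results are printed in bytes, so 16 TB appears as 2^44 and 4 PB as 2^52.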

For more information about OCFS2, see https://oss.oracle.com/projects/ocfs2/documentation/.

Creating a Local OCFS2 File System

To create an OCFS2 file system that will be locally mounted and not associated with a cluster, use the following command:

sudo mkfs.ocfs2 -M local --fs-features=local -N 1 [options] device

For example, create a locally mountable OCFS2 volume on /dev/sdc1 with one node slot and the label localvol:

sudo mkfs.ocfs2 -M local --fs-features=local -N 1 -L "localvol" /dev/sdc1

You can use the tunefs.ocfs2 utility to convert a local OCFS2 file system to cluster use, for example:

sudo umount /dev/sdc1
sudo tunefs.ocfs2 -M cluster --fs-features=clusterinfo -N 8 /dev/sdc1

This example also increases the number of node slots from 1 to 8 to allow up to eight nodes to mount the file system.

Installing and Configuring OCFS2

This section describes procedures for setting up a cluster to use OCFS2.

Preparing a Cluster for OCFS2

For best performance, each node in the cluster should have at least two network interfaces. One interface is connected to a public network to allow general access to the systems. The other interface is used for private communication between the nodes, including the cluster heartbeat, which determines how the cluster nodes coordinate their access to shared resources and how they monitor each other's state. These interfaces must be connected via a network switch. Ensure that all network interfaces are configured and working before continuing to configure the cluster.

You have a choice of two cluster heartbeat configurations:

- Local heartbeat thread for each shared device. In this mode, a node starts a heartbeat thread when it mounts an OCFS2 volume and stops the thread when it unmounts the volume. This is the default heartbeat mode. There is a large CPU overhead on nodes that mount a large number of OCFS2 volumes as each mount requires a separate heartbeat thread. A large number of mounts also increases the risk of a node fencing itself out of the cluster due to a heartbeat I/O timeout on a single mount.
- Global heartbeat on specific shared devices. You can configure any OCFS2 volume as a global heartbeat device provided that it occupies a whole disk device and not a partition. In this mode, the heartbeat to the device starts when the cluster comes online and stops when the cluster goes offline. This mode is recommended for clusters that mount a large number of OCFS2 volumes. A node fences itself out of the cluster if a heartbeat I/O timeout occurs on more than half of the global heartbeat devices. To provide redundancy against failure of one of the devices, you should therefore configure at least three global heartbeat devices.

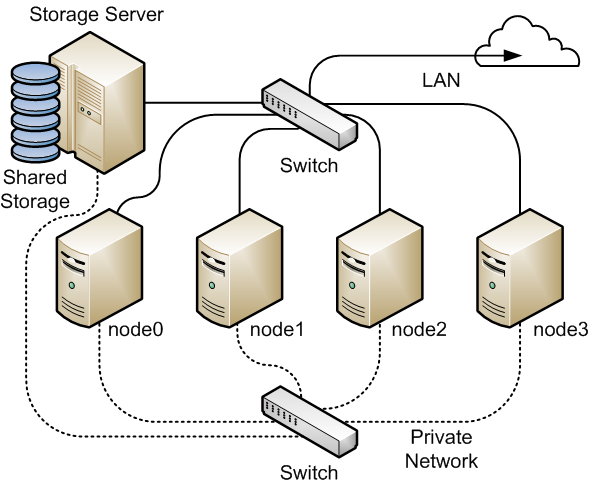

Figure 7-1 shows a cluster of four nodes connected via a network switch to a LAN and a network storage server. The nodes and the storage server are also connected via a switch to a private network that they use for the local cluster heartbeat.

Figure 7-1 Cluster Configuration Using a Private Network

It is possible to configure and use OCFS2 without using a private network but such a configuration increases the probability of a node fencing itself out of the cluster due to an I/O heartbeat timeout.

Configuring the Firewall

Configure or disable the firewall on each node to allow access on the interface that the cluster will use for private cluster communication. By default, the cluster uses both TCP and UDP over port 7777.

To allow incoming TCP connections and UDP datagrams on port 7777, use the following commands:

sudo firewall-cmd --zone=zone --add-port=7777/tcp --add-port=7777/udp
sudo firewall-cmd --permanent --zone=zone --add-port=7777/tcp --add-port=7777/udp

Configuring the Cluster Software

Ideally, each node should be running the same version of the OCFS2 software and a compatible version of the Oracle Linux Unbreakable Enterprise Kernel (UEK). It is possible for a cluster to run with mixed versions of the OCFS2 and UEK software, for example, while you are performing a rolling update of a cluster. The cluster node that is running the lowest version of the software determines the set of usable features.

Install the following packages on each node:

- kernel-uek
- ocfs2-tools

Note:

If you want to use the global heartbeat feature, you must install ocfs2-tools-1.8.0-11 or later.

Creating the Configuration File for the Cluster Stack

You can create the configuration file by using the o2cb command or a text editor.

- Use the following command to create a cluster definition.

  sudo o2cb add-cluster cluster_name

  For example, to define a cluster named mycluster with four nodes:

  sudo o2cb add-cluster mycluster

  The command creates the configuration file /etc/ocfs2/cluster.conf if it does not already exist.

- For each node, use the following command to define the node.

  sudo o2cb add-node cluster_name node_name --ip ip_address

  The name of the node must be the same as the value of the system's HOSTNAME that is configured in /etc/sysconfig/network. The IP address is the one that the node will use for private communication in the cluster.

  For example, to define a node named node0 with the IP address 10.1.0.100 in the cluster mycluster:

  sudo o2cb add-node mycluster node0 --ip 10.1.0.100

  Note that OCFS2 only supports IPv4 addresses.

- If you want the cluster to use global heartbeat devices, use the following commands.

  sudo o2cb add-heartbeat cluster_name device1
  .
  .
  .
  sudo o2cb heartbeat-mode cluster_name global

  Note:

  You must configure global heartbeat to use whole disk devices. You cannot configure a global heartbeat device on a disk partition.

  For example, to use /dev/sdd, /dev/sdg, and /dev/sdj as global heartbeat devices:

  sudo o2cb add-heartbeat mycluster /dev/sdd
  sudo o2cb add-heartbeat mycluster /dev/sdg
  sudo o2cb add-heartbeat mycluster /dev/sdj
  sudo o2cb heartbeat-mode mycluster global

- Copy the cluster configuration file /etc/ocfs2/cluster.conf to each node in the cluster.

  Note:

  Any changes that you make to the cluster configuration file do not take effect until you restart the cluster stack.

The following sample configuration file /etc/ocfs2/cluster.conf defines a 4-node cluster named mycluster with a local heartbeat.

node:
	name = node0
	cluster = mycluster
	number = 0
	ip_address = 10.1.0.100
	ip_port = 7777

node:
	name = node1
	cluster = mycluster
	number = 1
	ip_address = 10.1.0.101
	ip_port = 7777

node:
	name = node2
	cluster = mycluster
	number = 2
	ip_address = 10.1.0.102
	ip_port = 7777

node:
	name = node3
	cluster = mycluster
	number = 3
	ip_address = 10.1.0.103
	ip_port = 7777

cluster:
	name = mycluster
	heartbeat_mode = local
	node_count = 4

If you configure the cluster to use a global heartbeat, the file also includes entries for the global heartbeat devices:

node:
	name = node0
	cluster = mycluster
	number = 0
	ip_address = 10.1.0.100
	ip_port = 7777

node:
	name = node1
	cluster = mycluster
	number = 1
	ip_address = 10.1.0.101
	ip_port = 7777

node:
	name = node2
	cluster = mycluster
	number = 2
	ip_address = 10.1.0.102
	ip_port = 7777

node:
	name = node3
	cluster = mycluster
	number = 3
	ip_address = 10.1.0.103
	ip_port = 7777

cluster:
	name = mycluster
	heartbeat_mode = global
	node_count = 4

heartbeat:
	cluster = mycluster
	region = 7DA5015346C245E6A41AA85E2E7EA3CF

heartbeat:
	cluster = mycluster
	region = 4F9FBB0D9B6341729F21A8891B9A05BD

heartbeat:
	cluster = mycluster
	region = B423C7EEE9FC426790FC411972C91CC3

The cluster heartbeat mode is now shown as global, and the heartbeat regions are represented by the UUIDs of their block devices.

If you edit the configuration file manually, ensure that you use the following layout:

- The cluster:, heartbeat:, and node: headings must start in the first column.
- Each parameter entry must be indented by one tab space.
- A blank line must separate each section that defines the cluster, a heartbeat device, or a node.
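As a sketch of these layout rules, the following shell function prints one node: stanza with the heading in the first column, each parameter indented by a single tab, and a trailing blank line. The function name print_node_stanza is hypothetical and is not part of ocfs2-tools; in practice, the o2cb add-node command writes these entries for you.

```shell
# Sketch: emit one node stanza in the cluster.conf layout.
# print_node_stanza is a hypothetical helper, not part of ocfs2-tools.
# Arguments: cluster name, node name, node number, IP address,
# and an optional port (defaults to 7777).
print_node_stanza() {
    cluster=$1 name=$2 number=$3 ip=$4 port=${5:-7777}
    printf 'node:\n'                        # heading starts in the first column
    printf '\tname = %s\n' "$name"          # each parameter is indented by one tab
    printf '\tcluster = %s\n' "$cluster"
    printf '\tnumber = %s\n' "$number"
    printf '\tip_address = %s\n' "$ip"
    printf '\tip_port = %s\n' "$port"
    printf '\n'                             # blank line separates sections
}

# Print the stanzas for the first two nodes of the sample cluster.
print_node_stanza mycluster node0 0 10.1.0.100
print_node_stanza mycluster node1 1 10.1.0.101
```

Using printf with an explicit \t avoids the common problem of an editor silently replacing the required tab with spaces.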

Configuring the Cluster Stack

- Run the following command on each node of the cluster:

  sudo /sbin/o2cb.init configure

  The following table describes the values for which you are prompted.

  | Prompt | Description |
  |---|---|
  | Load O2CB driver on boot (y/n) | Whether the cluster stack driver should be loaded at boot time. The default response is n. |
  | Cluster stack backing O2CB | The name of the cluster stack service. The default and usual response is o2cb. |
  | Cluster to start at boot (Enter "none" to clear) | Enter the name of your cluster that you defined in the cluster configuration file, /etc/ocfs2/cluster.conf. |
  | Specify heartbeat dead threshold (>=7) | The number of 2-second heartbeats that must elapse without response before a node is considered dead. To calculate the value to enter, divide the required threshold time period by 2 and add 1. For example, to set the threshold time period to 120 seconds, enter a value of 61. The default value is 31, which corresponds to a threshold time period of 60 seconds. Note: If your system uses multipathed storage, the recommended value is 61 or greater. |
  | Specify network idle timeout in ms (>=5000) | The time in milliseconds that must elapse before a network connection is considered dead. The default value is 30,000 milliseconds. Note: For bonded network interfaces, the recommended value is 30,000 milliseconds or greater. |
  | Specify network keepalive delay in ms (>=1000) | The maximum delay in milliseconds between sending keepalive packets to another node. The default and recommended value is 2,000 milliseconds. |
  | Specify network reconnect delay in ms (>=2000) | The minimum delay in milliseconds between reconnection attempts if a network connection goes down. The default and recommended value is 2,000 milliseconds. |

  To verify the settings for the cluster stack, enter the /sbin/o2cb.init status command:

  sudo /sbin/o2cb.init status

  Driver for "configfs": Loaded
  Filesystem "configfs": Mounted
  Stack glue driver: Loaded
  Stack plugin "o2cb": Loaded
  Driver for "ocfs2_dlmfs": Loaded
  Filesystem "ocfs2_dlmfs": Mounted
  Checking O2CB cluster "mycluster": Online
  Heartbeat dead threshold: 61
  Network idle timeout: 30000
  Network keepalive delay: 2000
  Network reconnect delay: 2000
  Heartbeat mode: Local
  Checking O2CB heartbeat: Active

  In this example, the cluster is online and is using local heartbeat mode. If no volumes have been configured, the O2CB heartbeat is shown as Not active rather than Active.

  The next example shows the command output for an online cluster that is using three global heartbeat devices:

  sudo /sbin/o2cb.init status

  Driver for "configfs": Loaded
  Filesystem "configfs": Mounted
  Stack glue driver: Loaded
  Stack plugin "o2cb": Loaded
  Driver for "ocfs2_dlmfs": Loaded
  Filesystem "ocfs2_dlmfs": Mounted
  Checking O2CB cluster "mycluster": Online
  Heartbeat dead threshold: 61
  Network idle timeout: 30000
  Network keepalive delay: 2000
  Network reconnect delay: 2000
  Heartbeat mode: Global
  Checking O2CB heartbeat: Active
  7DA5015346C245E6A41AA85E2E7EA3CF /dev/sdd
  4F9FBB0D9B6341729F21A8891B9A05BD /dev/sdg
  B423C7EEE9FC426790FC411972C91CC3 /dev/sdj

- Configure the o2cb and ocfs2 services so that they start at boot time after networking is enabled:

  sudo systemctl enable o2cb
  sudo systemctl enable ocfs2

  These settings allow the node to mount OCFS2 volumes automatically when the system starts.
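The rule for the heartbeat dead threshold prompt (divide the required time period by 2 and add 1) can be sketched in shell arithmetic. The function name hb_dead_threshold is hypothetical and is not part of ocfs2-tools, and the sketch assumes that the time period is given as an even number of seconds.

```shell
# Sketch: convert a time period in seconds to an O2CB heartbeat dead
# threshold. Heartbeats occur every 2 seconds, so the threshold is
# seconds / 2 + 1. hb_dead_threshold is a hypothetical helper.
hb_dead_threshold() {
    seconds=$1
    threshold=$(( seconds / 2 + 1 ))
    # The configure prompt accepts only values of 7 or greater.
    if [ "$threshold" -lt 7 ]; then
        echo "threshold $threshold is below the minimum of 7" >&2
        return 1
    fi
    echo "$threshold"
}

hb_dead_threshold 60     # the default 60-second time period
hb_dead_threshold 120    # the 120-second example from the prompt table
```

You would enter the printed value at the "Specify heartbeat dead threshold" prompt of /sbin/o2cb.init configure.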

Configuring the Kernel for Cluster Operation

Configure the following kernel settings on each node for cluster operation:

| Kernel Setting | Description |
|---|---|
| panic | Specifies the number of seconds after a panic before a system will automatically reset itself. If the value is 0, the system hangs, which allows you to collect detailed information about the panic for troubleshooting. This is the default value. To enable automatic reset, set a non-zero value. If you require a memory image (… |
| panic_on_oops | Specifies that a system must panic if a kernel oops occurs. If a kernel thread required for cluster operation crashes, the system must reset itself. Otherwise, another node might not be able to tell whether a node is slow to respond or unable to respond, causing cluster operations to hang. |

To set panic and panic_on_oops, enter the following commands on each node:

sudo sysctl kernel.panic=30
sudo sysctl kernel.panic_on_oops=1

To make the change persist across reboots, add the following entries to the /etc/sysctl.conf file:

# Define panic and panic_on_oops for cluster operation
kernel.panic=30
kernel.panic_on_oops=1

Starting and Stopping the Cluster Stack

The following list shows the commands that you can use to perform various operations on the cluster stack.

- /sbin/o2cb.init status: Check the status of the cluster stack.
- /sbin/o2cb.init online: Start the cluster stack.
- /sbin/o2cb.init offline: Stop the cluster stack.
- /sbin/o2cb.init unload: Unload the cluster stack.

Creating OCFS2 Volumes

The following table describes options that you can specify to the mkfs.ocfs2 command when you create an OCFS2 volume.

| Command Option | Description |
|---|---|
| -b block-size, --block-size block-size | Specifies the unit size for I/O transactions to and from the file system, and the size of inode and extent blocks. The supported block sizes are 512 (512 bytes), 1K, 2K, and 4K. The default and recommended block size is 4K (4 kilobytes). |
| -C cluster-size, --cluster-size cluster-size | Specifies the unit size for space used to allocate file data. The supported cluster sizes are 4K, 8K, 16K, 32K, 64K, 128K, 256K, 512K, and 1M (1 megabyte). The default cluster size is 4K (4 kilobytes). |
| --fs-feature-level=feature-level | Allows you to select a set of file-system features, such as max-features. |
| --fs-features=feature | Allows you to enable or disable individual features such as support for sparse files, unwritten extents, and backup superblocks. For more information, see the … |
| -J size=journal-size, --journal-options size=journal-size | Specifies the size of the write-ahead journal. If not specified, the size is determined from the file system usage type that you specify to the -T option, and, otherwise, from the volume size. The default size of the journal is 64M (64 MB) for … |
| -L volume-label, --label volume-label | Specifies a descriptive name for the volume that allows you to identify it easily on different cluster nodes. |
| -N number, --node-slots number | Determines the maximum number of nodes that can concurrently access a volume, which is limited by the number of node slots for system files such as the file-system journal. For best performance, set the number of node slots to at least twice the number of nodes. If you subsequently increase the number of node slots, performance can suffer because the journal will no longer be contiguously laid out on the outer edge of the disk platter. |
| -T file-system-usage-type | Specifies the type of usage for the file system, such as datafiles. |

For example, create an OCFS2 volume on /dev/sdc1 labeled as myvol using all the default settings for generic usage on file systems that are no larger than a few gigabytes. The default values are a 4 KB block and cluster size, eight node slots, a 256 MB journal, and support for default file-system features.

sudo mkfs.ocfs2 -L "myvol" /dev/sdc1

Create an OCFS2 volume on /dev/sdd2 labeled as dbvol for use with database files. In this case, the cluster size is set to 128 KB and the journal size to 32 MB.

sudo mkfs.ocfs2 -L "dbvol" -T datafiles /dev/sdd2

Create an OCFS2 volume on /dev/sde1 with a 16 KB cluster size, a 128 MB journal, 16 node slots, and support enabled for all features except refcount trees.

sudo mkfs.ocfs2 -C 16K -J size=128M -N 16 --fs-feature-level=max-features --fs-features=norefcount /dev/sde1

Note:

Do not create an OCFS2 volume on an LVM logical volume. LVM is not cluster-aware.

You cannot change the block and cluster size of an OCFS2 volume after it has been created. You can use the tunefs.ocfs2 command to modify other settings for the file system with certain restrictions. For more information, see the tunefs.ocfs2(8) manual page.

If you intend the volume to store database files, do not specify a cluster size that is smaller than the block size of the database.

The default cluster size of 4 KB is not suitable if the file system is larger than a few gigabytes. The following table suggests minimum cluster size settings for different file system size ranges:

| File System Size | Suggested Minimum Cluster Size |
|---|---|
| 1 GB - 10 GB | 8K |
| 10 GB - 100 GB | 16K |
| 100 GB - 1 TB | 32K |
| 1 TB - 10 TB | 64K |
| 10 TB - 16 TB | 128K |
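The table can be turned into a small lookup, sketched here as a shell function. The function name suggest_cluster_size is hypothetical and is not part of ocfs2-tools. The sketch makes two assumptions that the table does not state: sizes are whole gigabytes with 1 TB counted as 1024 GB, and a size that falls exactly on a range boundary is placed in the lower range.

```shell
# Sketch: suggest a minimum cluster size for a file system size in GB,
# following the table of suggested minimum cluster sizes.
# suggest_cluster_size is a hypothetical helper.
# ASSUMPTIONS: 1 TB = 1024 GB, and a size on a range boundary (for
# example, exactly 10 GB) is placed in the lower range.
suggest_cluster_size() {
    size_gb=$1
    if   [ "$size_gb" -lt 1 ];     then echo 4K    # below the table: default size
    elif [ "$size_gb" -le 10 ];    then echo 8K
    elif [ "$size_gb" -le 100 ];   then echo 16K
    elif [ "$size_gb" -le 1024 ];  then echo 32K
    elif [ "$size_gb" -le 10240 ]; then echo 64K
    elif [ "$size_gb" -le 16384 ]; then echo 128K
    else
        echo "size exceeds the 16 TB supported maximum" >&2
        return 1
    fi
}

suggest_cluster_size 500     # a 500 GB volume
suggest_cluster_size 4096    # a 4 TB volume
```

The printed value uses the same notation as the -C option of mkfs.ocfs2, so it could be passed to that option when you create the volume.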

Mounting OCFS2 Volumes

Specify the _netdev option in /etc/fstab if you want the system to mount an OCFS2 volume at boot time after networking is started, and to unmount the file system before networking is stopped, as shown in the following example:

myocfs2vol /dbvol1 ocfs2 _netdev,defaults 0 0

Note:

The file system will not mount unless you have enabled the o2cb and ocfs2 services to start after networking is started. See Configuring the Cluster Stack.

Querying and Changing Volume Parameters

You can use the tunefs.ocfs2 command to query or change volume parameters. For example, to find out the label, UUID and the number of node slots for a volume:

sudo tunefs.ocfs2 -Q "Label = %V\nUUID = %U\nNumSlots = %N\n" /dev/sdb

Label = myvol
UUID = CBB8D5E0C169497C8B52A0FD555C7A3E
NumSlots = 4

Generate a new UUID for a volume:

sudo tunefs.ocfs2 -U /dev/sdb
sudo tunefs.ocfs2 -Q "Label = %V\nUUID = %U\nNumSlots = %N\n" /dev/sdb

Label = myvol
UUID = 48E56A2BBAB34A9EB1BE832B3C36AB5C
NumSlots = 4

Troubleshooting OCFS2

The following sections describe some techniques that you can use for investigating any problems that you encounter with OCFS2.

Recommended Tools for Debugging

If you want to capture an oops trace, it is recommended that you set up netconsole on the nodes.

To capture network traffic on port 7777 for the private network interface em2, you could use a command such as the following:

sudo tcpdump -i em2 -C 10 -W 15 -s 10000 -Sw /tmp/`hostname -s`_tcpdump.log -ttt 'port 7777' &

You can use the debugfs.ocfs2 command, which is similar in behavior to the debugfs command for the ext3 file system, and allows you to trace events in the OCFS2 driver, determine lock statuses, walk directory structures, examine inodes, and so on.

For more information, see the debugfs.ocfs2(8) manual page.

The o2image command saves an OCFS2 file system's metadata (including information about inodes, file names, and directory names) to an image file on another file system. As the image file contains only metadata, it is much smaller than the original file system. You can use debugfs.ocfs2 to open the image file, and analyze the file system layout to determine the cause of a file system corruption or performance problem.

For example, the following command creates the image /tmp/sda2.img from the OCFS2 file system on the device /dev/sda2:

sudo o2image /dev/sda2 /tmp/sda2.img

For more information, see the o2image(8) manual page.

Mounting the debugfs File System

OCFS2 uses the debugfs file system to allow access from user space to information about its in-kernel state. You must mount the debugfs file system to be able to use the debugfs.ocfs2 command.

To mount the debugfs file system, add the following line to /etc/fstab:

debugfs /sys/kernel/debug debugfs defaults 0 0

Then, run the mount -a command.

Configuring OCFS2 Tracing

The following table shows commands that you can use to configure tracing in OCFS2.

| Command | Description |
|---|---|
| debugfs.ocfs2 -l | List all trace bits and their statuses. |
| debugfs.ocfs2 -l SUPER allow | Enable tracing for the superblock. |
| debugfs.ocfs2 -l SUPER off | Disable tracing for the superblock. |
| debugfs.ocfs2 -l SUPER deny | Disallow tracing for the superblock, even if implicitly enabled by another tracing mode setting. |
| debugfs.ocfs2 -l HEARTBEAT ENTRY EXIT allow | Enable heartbeat tracing. |
| debugfs.ocfs2 -l HEARTBEAT off ENTRY EXIT deny | Disable heartbeat tracing. |
| debugfs.ocfs2 -l ENTRY EXIT NAMEI INODE allow | Enable tracing for the file system. |
| debugfs.ocfs2 -l ENTRY EXIT deny NAMEI INODE allow | Disable tracing for the file system. |
| debugfs.ocfs2 -l ENTRY EXIT DLM DLM_THREAD allow | Enable tracing for the DLM. |
| debugfs.ocfs2 -l ENTRY EXIT deny DLM DLM_THREAD allow | Disable tracing for the DLM. |

One method for obtaining a trace is to enable the trace, sleep for a short while, and then disable the trace. As shown in the following example, to avoid seeing unnecessary output, you should reset the trace bits to their default settings after you have finished.

sudo debugfs.ocfs2 -l ENTRY EXIT NAMEI INODE allow && sleep 10 && sudo debugfs.ocfs2 -l ENTRY EXIT deny NAMEI INODE off

To limit the amount of information displayed, enable only the trace bits that you believe are relevant to understanding the problem.

If you believe a specific file system command, such as mv, is causing an error, the following example shows the commands that you can use to help you trace the error.

sudo debugfs.ocfs2 -l ENTRY EXIT NAMEI INODE allow
sudo mv source destination &
CMD_PID=$(jobs -p %-)
echo $CMD_PID
sudo debugfs.ocfs2 -l ENTRY EXIT deny NAMEI INODE off

As the trace is enabled for all mounted OCFS2 volumes, knowing the correct process ID can help you to interpret the trace.

For more information, see the debugfs.ocfs2(8) manual page.

Debugging File System Locks

If an OCFS2 volume hangs, you can use the following steps to help determine which locks are busy and which processes are likely to be holding them.

- Mount the debug file system.

  sudo mount -t debugfs debugfs /sys/kernel/debug

- Dump the lock statuses for the file system device (/dev/sdx1 in this example).

  echo "fs_locks" | debugfs.ocfs2 /dev/sdx1 >/tmp/fslocks

  Lockres: M00000000000006672078b84822 Mode: Protected Read
  Flags: Initialized Attached
  RO Holders: 0 EX Holders: 0
  Pending Action: None Pending Unlock Action: None
  Requested Mode: Protected Read Blocking Mode: Invalid

  The Lockres field is the lock name used by the DLM. The lock name is a combination of a lock-type identifier, an inode number, and a generation number. The following list shows the possible lock types:

  - D: File data
  - M: Metadata
  - R: Rename
  - S: Superblock
  - W: Read-write

- Use the Lockres value to obtain the inode number and generation number for the lock.

  echo "stat <M00000000000006672078b84822>" | sudo debugfs.ocfs2 -n /dev/sdx1

  Inode: 419616 Mode: 0666 Generation: 2025343010 (0x78b84822)
  ...

- Determine the file system object to which the inode number relates by using the following command.

  echo "locate <419616>" | debugfs.ocfs2 -n /dev/sdx1

  419616 /linux-2.6.15/arch/i386/kernel/semaphore.c

- Obtain the lock names that are associated with the file system object.

  echo "encode /linux-2.6.15/arch/i386/kernel/semaphore.c" | debugfs.ocfs2 -n /dev/sdx1

  M00000000000006672078b84822
  D00000000000006672078b84822
  W00000000000006672078b84822

  In this example, a metadata lock, a file data lock, and a read-write lock are associated with the file system object.

- Determine the DLM domain of the file system.

  echo "stats" | debugfs.ocfs2 -n /dev/sdx1 | grep UUID: | while read a b ; do echo $b ; done

  82DA8137A49A47E4B187F74E09FBBB4B

- Use the values of the DLM domain and the lock name with the following command, which enables debugging for the DLM.

  echo R 82DA8137A49A47E4B187F74E09FBBB4B M00000000000006672078b84822 > /proc/fs/ocfs2_dlm/debug

- Examine the debug messages.

  sudo dmesg | tail

  struct dlm_ctxt: 82DA8137A49A47E4B187F74E09FBBB4B, node=3, key=965960985
  lockres: M00000000000006672078b84822, owner=1, state=0 last used: 0, on purge list: no
  granted queue:
  type=3, conv=-1, node=3, cookie=11673330234144325711, ast=(empty=y,pend=n), bast=(empty=y,pend=n)
  converting queue:
  blocked queue:

  The DLM supports 3 lock modes: no lock (type=0), protected read (type=3), and exclusive (type=5). In this example, the lock is owned by node 1 (owner=1) and node 3 has been granted a protected-read lock on the file-system resource.

- Run the following command, and look for processes that are in an uninterruptible sleep state as shown by the D flag in the STAT column.

  ps -e -o pid,stat,comm,wchan=WIDE-WCHAN-COLUMN

  At least one of the processes that are in the uninterruptible sleep state will be responsible for the hang on the other node.

If the hang involves a user lock (flock()), the problem could lie in the application. If possible, kill the holder of the lock. If the hang is due to lack of memory or fragmented memory, you can free up memory by killing non-essential processes. The most immediate solution is to reset the node that is holding the lock. The DLM recovery process can then clear all the locks that the dead node owned, which allows the cluster to continue to operate.
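The lock name from the procedure above can also be split into its parts without debugfs.ocfs2, which is a quick way to cross-check the inode and generation numbers. The following shell sketch assumes the layout that the example implies: the first character is the lock type, the last eight hexadecimal digits are the generation number, and the digits in between are the inode number. This layout is inferred from the sample output, not taken from the OCFS2 source, and the function name decode_lockres is hypothetical.

```shell
# Sketch: split an OCFS2 lock name into lock type, inode number, and
# generation number. decode_lockres is a hypothetical helper.
# ASSUMPTION (inferred from the documented example): first character is
# the lock type, the last eight hex digits are the generation number,
# and the hex digits in between are the inode number.
decode_lockres() {
    name=$1
    rest=${name#?}                  # everything after the type character
    lock_type=${name%"$rest"}       # the single leading type character
    inode_hex=${rest%????????}      # all but the last eight digits
    gen_hex=${rest#"$inode_hex"}    # the last eight digits
    # Convert both hexadecimal fields to decimal.
    echo "$lock_type $((0x$inode_hex)) $((0x$gen_hex))"
}

decode_lockres M00000000000006672078b84822
```

For the lock name in the example, the decoded inode and generation numbers agree with the values that the stat command reports (419616 and 2025343010).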

Configuring the Behavior of Fenced Nodes

By default, a fenced node resets itself. To configure a fenced node to panic instead, enter the following command:

echo panic > /sys/kernel/config/cluster/cluster_name/fence_method

In the previous command, cluster_name is the name of the cluster. To set the value after each reboot of the system, add this line to the /etc/rc.local file. To restore the default behavior, use the value reset instead of panic.

Use Cases for OCFS2

The following sections describe some typical use cases for OCFS2.

Load Balancing

You can use OCFS2 nodes to share resources between client systems. For example, the nodes could export a shared file system by using Samba or NFS. To distribute service requests between the nodes, you can use round-robin DNS, a network load balancer, or specify which node should be used on each client.

Oracle Real Application Cluster (RAC)

Oracle RAC uses its own cluster stack, Cluster Synchronization Services (CSS). You can use O2CB in conjunction with CSS, but you should note that each stack is configured independently for timeouts, nodes, and other cluster settings. You can use OCFS2 to host the voting disk files and the Oracle cluster registry (OCR), but not the grid infrastructure user's home, which must exist on a local file system on each node.

As both CSS and O2CB use the lowest node number as a tie breaker in quorum calculations, you should ensure that the node numbers are the same in both clusters. If necessary, edit the O2CB configuration file /etc/ocfs2/cluster.conf to make the node numbering consistent, and update this file on all nodes. The change takes effect when the cluster is restarted.

Oracle Databases

Specify the noatime option when mounting volumes that host Oracle datafiles, control files, redo logs, voting disk, and OCR. The noatime option disables unnecessary updates to the access time on the inodes.

Specify the nointr mount option to prevent signals interrupting I/O transactions that are in progress.

By default, the init.ora parameter filesystemio_options directs the database to perform direct I/O to the Oracle datafiles, control files, and redo logs. You should also specify the datavolume mount option for the volumes that contain the voting disk and OCR. Do not specify this option for volumes that host the Oracle user's home directory or Oracle E-Business Suite.

To avoid database blocks becoming fragmented across a disk, ensure that the file system cluster size is at least as big as the database block size, which is typically 8 KB. If you specify the file system usage type as datafiles to the mkfs.ocfs2 command, the file system cluster size is set to 128 KB.

OCFS2 does not update the modification time (mtime) on the disk when performing non-extending direct I/O writes. The value of mtime is updated in memory, but OCFS2 does not write the value to disk unless an application extends or truncates the file, or performs an operation to change the file metadata, such as using the touch command. This behavior can result in different nodes reporting different time stamps for the same file. You can use the following command to view the on-disk timestamp of a file:

sudo debugfs.ocfs2 -R "stat /file_path" device | grep "mtime:"