Set Up a CAE Environment Using Altair HyperWorks with Oracle Cloud Guard

Free your engineers from on-premises hardware restrictions so that they can run massive engineering simulations anywhere in the world from almost any device.

Altair HyperWorks is an engineering software suite running on Oracle Cloud Infrastructure that lets engineers build models and perform engineering analysis and design optimization for different performance requirements. Oracle Cloud Infrastructure provides remote direct memory access (RDMA)-enabled cluster networking and bare-metal high-performance computing (HPC) instances. Oracle Cloud Infrastructure now combines its proven HPC instance with a low-latency network that can span more than 20,000 cores.

In addition, Altair provides a managed service, Altair HyperWorks Unlimited, that is similar to this deployment and offers:

- Reduced design times: Companies can reduce design times and bring products to the market faster by accessing software and hardware on demand.

- No waiting: With this on-demand solution from Oracle and Altair, engineers don’t have to wait in job queues or endure long HPC hardware procurement cycles.

- Flexible licensing: Altair’s innovative licensing model allows customers to use unlimited software licenses in the managed service environment.

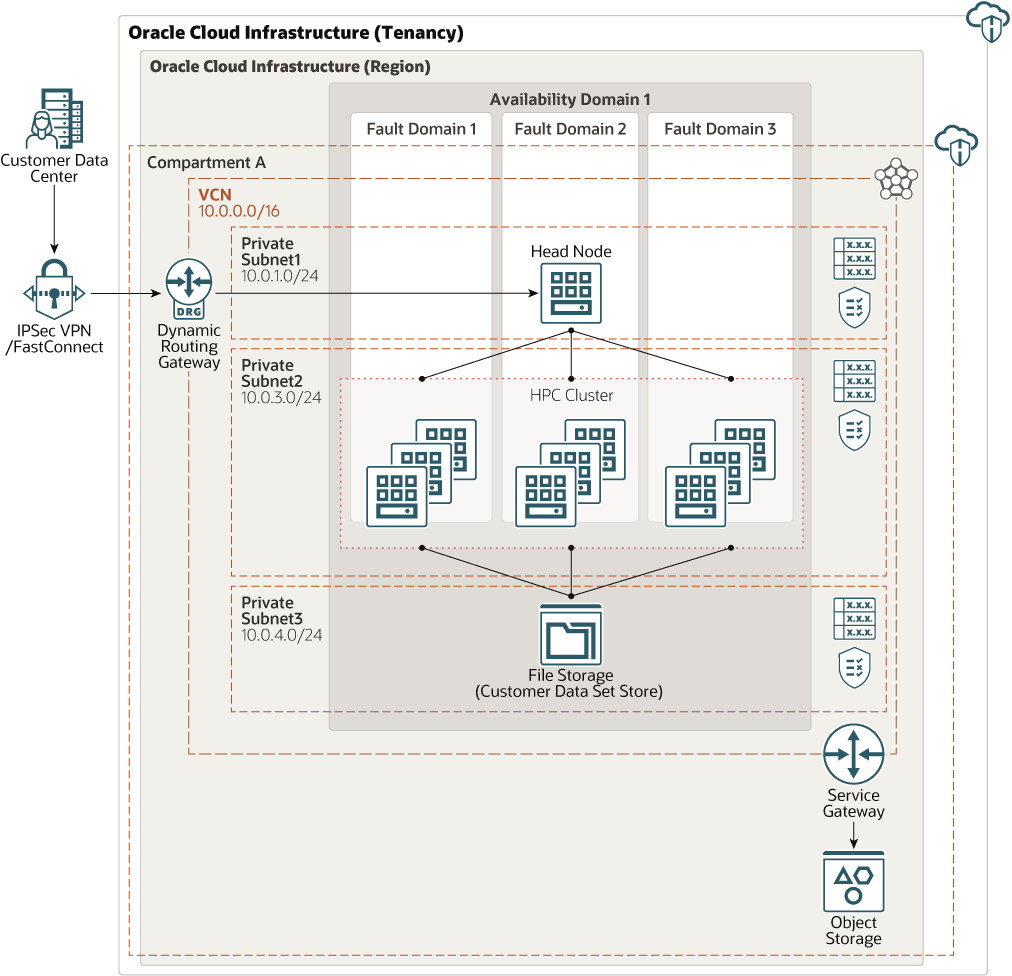

Architecture

This architecture deploys a head node, which runs the scheduler, provisions and deprovisions high-performance computing (HPC) compute node clusters, and preprocesses some customer data. The HPC compute node cluster does the work, and the results are stored in file storage.
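The job lifecycle described above can be sketched in a few lines of Python. This is a minimal illustration, not Altair's or Oracle's implementation: the `HeadNode` class, the node names, and the `/mnt/fss` mount path are hypothetical, and the methods only record events instead of calling any cloud API. The point it shows is that deprovisioning is tied to job completion, including failed jobs.

```python
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class HeadNode:
    """Hypothetical stand-in for the head node; it only records what it does."""
    events: list = field(default_factory=list)

    @contextmanager
    def cluster(self, node_count: int):
        # Provision the HPC compute nodes before the job starts.
        self.events.append(f"provision {node_count} nodes")
        try:
            yield [f"hpc-node-{i}" for i in range(node_count)]
        finally:
            # Deprovision even if the job fails, so idle nodes are not left running.
            self.events.append(f"deprovision {node_count} nodes")

    def run_job(self, dataset: str, node_count: int) -> str:
        # Preprocessing happens on the head node itself.
        self.events.append(f"preprocess {dataset}")
        with self.cluster(node_count) as nodes:
            self.events.append(f"solve {dataset} on {len(nodes)} nodes")
            result_path = f"/mnt/fss/results/{dataset}.out"  # hypothetical mount path
            self.events.append(f"store {result_path}")
        return result_path


head = HeadNode()
path = head.run_job("crash_model", node_count=4)
print(path)
print(head.events)
```

Using a context manager keeps the provision and deprovision steps paired, which matters when compute nodes are billed only while they exist.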

This architecture is deployed in a private subnet of a virtual cloud network (VCN). The customer network can access the head node only through IPSec VPN or FastConnect, which provides controlled access to the head node. For specific use cases, however, customers can choose to deploy this architecture in a public subnet, where the head node is also accessible from the public internet.

The architecture also uses Oracle Cloud Guard, which continuously monitors configurations and activities to identify threats and automatically acts to remedy issues at a compartment level.

The architecture uses a region with one availability domain and regional subnets. You can use the same architecture in a region with multiple availability domains. We recommend that you use regional subnets for your deployment, regardless of the number of availability domains.

Note:

If you use FastConnect, mount the file storage to the on-premises node for faster transfer of the data set. If you use an IPSec VPN, transfer data to object storage first (because of speed and connection variations) and then transfer it to file storage.

The architecture has the following components:

- Region

An Oracle Cloud Infrastructure region is a localized geographic area that contains one or more data centers, called availability domains. Regions are independent of other regions, and vast distances can separate them (across countries or even continents).

- Availability domains

Availability domains are standalone, independent data centers within a region. The physical resources in each availability domain are isolated from the resources in the other availability domains, which provides fault tolerance. Availability domains don’t share infrastructure such as power or cooling, or the internal availability domain network. So, a failure at one availability domain is unlikely to affect the other availability domains in the region.

- Fault domains

A fault domain is a grouping of hardware and infrastructure within an availability domain. Each availability domain has three fault domains with independent power and hardware. When you distribute resources across multiple fault domains, your applications can tolerate physical server failure, system maintenance, and power failures inside a fault domain.

- Virtual cloud network (VCN) and subnets

A VCN is a customizable, software-defined network that you set up in an Oracle Cloud Infrastructure region. Like traditional data center networks, VCNs give you complete control over your network environment. A VCN can have multiple non-overlapping CIDR blocks that you can change after you create the VCN. You can segment a VCN into subnets, which can be scoped to a region or to an availability domain. Each subnet consists of a contiguous range of addresses that don't overlap with the other subnets in the VCN. You can change the size of a subnet after creation. A subnet can be public or private.

- Head node

Use a web-based portal to connect to the head node and schedule HPC jobs. The job request comes through FastConnect or IPSec VPN to the head node. The head node also sends the customer data set to file storage and can do some preprocessing on the data.

The head node provisions HPC node clusters and deprovisions them when jobs complete.

- HPC cluster node

The head node provisions and deprovisions these compute nodes, which form RDMA-enabled clusters. They process the data stored in file storage and return the results to file storage.

- Cloud Guard

You can use Oracle Cloud Guard to monitor and maintain the security of your resources in the cloud. Cloud Guard examines your resources for security weaknesses related to configuration, and monitors operators and users for risky activities. When any security issue or risk is identified, Cloud Guard recommends corrective actions and assists you in taking those actions, based on security recipes that you can define.

- File storage

The File Storage service file system is mounted on both the head node and HPC cluster nodes. It stores the customer data set and the results after the HPC cluster nodes process the data.

- Security list

For each subnet, you can create security rules that specify the source, destination, and type of traffic that must be allowed in and out of the subnet.
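To make the security list idea concrete, the following sketch models ingress rules as plain Python data and checks whether a given connection would be admitted. It assumes that rules only allow traffic and that anything unmatched is denied. The `IngressRule` class, the `is_allowed` helper, the on-premises CIDR, and the chosen ports are all hypothetical and are not part of any Oracle SDK.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class IngressRule:
    source_cidr: str   # where the traffic may come from
    protocol: str      # for example "tcp"
    port_min: int
    port_max: int


def is_allowed(rules, source_ip: str, protocol: str, port: int) -> bool:
    """Return True if any rule admits the traffic; anything unmatched is denied."""
    addr = ip_address(source_ip)
    return any(
        addr in ip_network(r.source_cidr)
        and r.protocol == protocol
        and r.port_min <= port <= r.port_max
        for r in rules
    )


# Hypothetical rules for the head node subnet: HTTPS and SSH from on-premises only.
ON_PREM = "192.168.0.0/16"
head_node_rules = [
    IngressRule(ON_PREM, "tcp", 443, 443),
    IngressRule(ON_PREM, "tcp", 22, 22),
]

print(is_allowed(head_node_rules, "192.168.10.5", "tcp", 443))  # on-premises client
print(is_allowed(head_node_rules, "203.0.113.7", "tcp", 443))   # public internet client
```

Restricting the source to the on-premises range mirrors the private deployment, in which the head node is reachable only through IPSec VPN or FastConnect.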

Recommendations

Your requirements might differ from the architecture described here. Use the following recommendations as a starting point.

- VCN

When you create a VCN, determine the number of CIDR blocks required and the size of each block based on the number of resources that you plan to attach to subnets in the VCN. Use CIDR blocks that are within the standard private IP address space.

Select CIDR blocks that don't overlap with any other network (in Oracle Cloud Infrastructure, your on-premises data center, or another cloud provider) to which you intend to set up private connections.

After you create a VCN, you can change, add, and remove its CIDR blocks.

When you design the subnets, consider your traffic flow and security requirements. Attach all the resources within a specific tier or role to the same subnet, which can serve as a security boundary.

Use regional subnets.

- Security lists

Use security lists to define ingress and egress rules that apply to the entire subnet.

- Cloud Guard

Clone and customize the default recipes provided by Oracle to create custom detector and responder recipes. These recipes enable you to specify what types of security violations generate a warning and what actions are allowed to be performed on them. For example, you might want to detect Object Storage buckets that have visibility set to public.

Apply Cloud Guard at the tenancy level to cover the broadest scope and to reduce the administrative burden of maintaining multiple configurations.

You can also use the Managed List feature to apply certain configurations to detectors.

- Head node

Use the VM.DenseIO2.24 Compute shape. It provides locally attached NVMe storage, which speeds preprocessing of data.

The head node exists only in compartments with Cloud Guard enabled. You can clone and modify the default detector and responder recipes for specific requirements, but we recommend that you use the default recipes as is.

- HPC cluster node

Use the BM.HPC2.36 Compute shape. This shape has 36 cores from two 3.7 GHz Intel Xeon Gold 6154 processors, 384 GB of RAM, and 6.4 TB of local NVMe storage. By using powerful NVIDIA GPUs available on Oracle Cloud Infrastructure, you can post-process results on the cloud through remote visualization.

The HPC cluster node exists only in compartments with Cloud Guard enabled. You can clone and modify the default detector and responder recipes for specific requirements, but we recommend that you use the default recipes as is.
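The VCN recommendations above lend themselves to a quick check before you create any resources. The following sketch uses only Python's standard `ipaddress` module; the function name `check_cidr_plan` and the example address ranges are hypothetical. It assumes that "standard private IP address space" means the three RFC 1918 ranges.

```python
from ipaddress import ip_network

# The RFC 1918 private ranges.
RFC1918 = [ip_network(c) for c in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def check_cidr_plan(vcn_cidr: str, subnet_cidrs, other_networks):
    """Return a list of problems found in a proposed VCN address plan."""
    problems = []
    vcn = ip_network(vcn_cidr)
    if not any(vcn.subnet_of(block) for block in RFC1918):
        problems.append(f"{vcn} is outside the private address space")
    # The VCN must not overlap any network it will be privately connected to.
    for other in map(ip_network, other_networks):
        if vcn.overlaps(other):
            problems.append(f"{vcn} overlaps connected network {other}")
    # Each subnet must sit inside the VCN and must not overlap another subnet.
    subnets = [ip_network(c) for c in subnet_cidrs]
    for i, subnet in enumerate(subnets):
        if not subnet.subnet_of(vcn):
            problems.append(f"subnet {subnet} is not inside {vcn}")
        for earlier in subnets[:i]:
            if subnet.overlaps(earlier):
                problems.append(f"subnet {subnet} overlaps subnet {earlier}")
    return problems


on_prem = ["192.168.0.0/16"]  # hypothetical on-premises network
good = check_cidr_plan("10.0.0.0/16", ["10.0.0.0/24", "10.0.1.0/24"], on_prem)
bad = check_cidr_plan("192.168.0.0/20", ["192.168.0.0/24", "192.168.0.128/25"], on_prem)
print(good)
for problem in bad:
    print(problem)
```

An empty list means the plan passed these particular checks; it does not validate routing, gateways, or service limits.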

Considerations

Consider the following when deploying this reference architecture.

- Performance

To get the best performance, choose a compute shape whose network bandwidth matches the demands of your workload.

- Availability

Consider using a high-availability option based on your deployment requirements and region. Options include distributing resources across multiple availability domains in a region and across fault domains.

- Cost

A bare metal GPU instance provides the necessary compute power, but at a higher cost. Evaluate your requirements to choose the appropriate compute shape.

- Monitoring and alerts

Set up monitoring and alerts on CPU and memory usage for your nodes, so that you can scale the shape up or down as needed.
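As a rough illustration of the monitoring consideration, the sketch below turns CPU and memory utilization samples into a scaling hint. The function name `scaling_hint` and the 80 percent and 20 percent thresholds are illustrative assumptions, not Oracle guidance, and the samples are hard-coded rather than read from a monitoring service.

```python
from statistics import mean


def scaling_hint(cpu_samples, mem_samples, high=80.0, low=20.0) -> str:
    """Suggest a shape change from average utilization percentages.

    The thresholds are illustrative assumptions, not Oracle guidance.
    """
    cpu, mem = mean(cpu_samples), mean(mem_samples)
    if cpu > high or mem > high:
        return "scale up"      # either resource is saturated
    if cpu < low and mem < low:
        return "scale down"    # both resources are mostly idle
    return "keep"


print(scaling_hint([92, 88, 95], [60, 65, 62]))
print(scaling_hint([10, 12, 8], [15, 14, 16]))
print(scaling_hint([50, 55, 45], [40, 42, 38]))
```

Scaling up when either metric is high but scaling down only when both are low is a deliberately conservative choice that avoids shrinking a node that is still memory-bound.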