Set Up an Open-source Machine-Learning and AI Environment

Quickly set up a machine learning and artificial intelligence (AI) environment by using a preconfigured GPU stack with preinstalled common IDEs, notebooks, and frameworks so you can start producing results.

Oracle’s preconfigured environment for deep learning is useful across many industries and a wide range of applications, such as:

- Natural language processing

- Image recognition and classification

- Fraud detection for financial services

- Recommendation engines for online retailers

- Risk management

This preconfigured environment includes a virtual machine (VM) with an NVIDIA GPU and CUDA and cuDNN drivers, common Python and R integrated development environments (IDEs), Jupyter Notebooks, and open source machine learning (ML) and deep learning (DL) frameworks.

To control costs, you can scale your Compute resources by using autoscaling, or you can stop the Compute instance when it isn't needed. The VM includes basic sample data and code for you to test and explore.

The AI Datascience VM for Oracle Cloud Infrastructure image is available in Oracle Cloud Marketplace.

Architecture

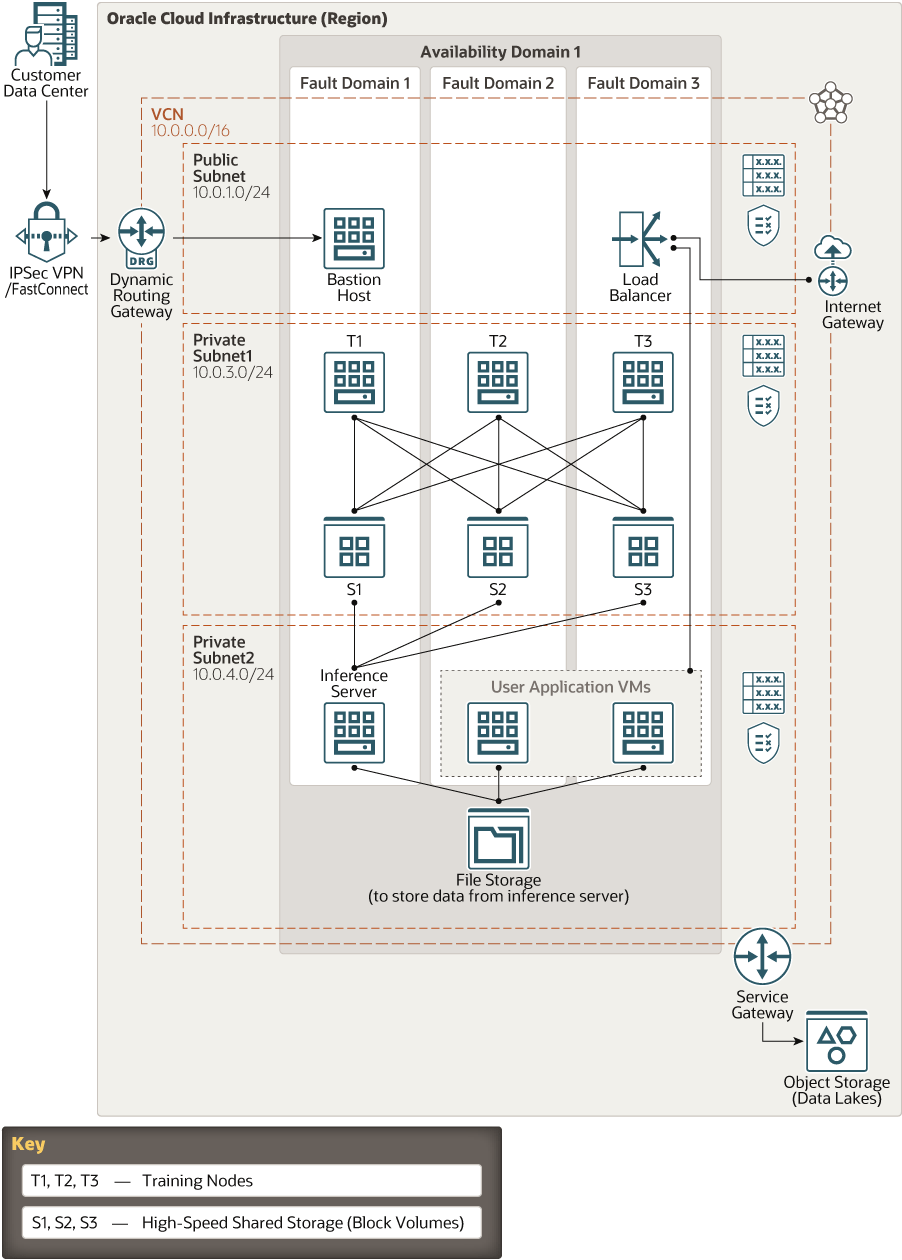

This reference architecture shows how to implement a machine learning and artificial intelligence (AI) environment in a region on Oracle Cloud Infrastructure.

This reference architecture deploys a bastion host, a training node, an inference node, a user application VM, and other components on Oracle Cloud Infrastructure. The architecture uses a region with one availability domain and regional subnets. You can use the same architecture in a region with multiple availability domains.

This architecture has the following components:

- Bastion host

Compute instance that provides access to other Compute instances in a private subnet.

- Training node

Compute instance in which customers develop and verify the model of their application, such as a neural network simulation. Training nodes are powerful instances that retrieve data from Object Storage, perform operations on the data according to the model being used, and store the data in attached shared block volume storage.

- Inference server

Compute instance that takes the data that the training nodes processed and stored on the block volumes, and prepares it for consumption by user applications. Inference servers store their processed data in File Storage.

- User application VM

This VM runs the user application and accesses the data processed by the inference server that’s stored in shared File Storage.

- Load balancer

The load balancer distributes incoming traffic to user application VMs.

- File storage

The file system is mounted on the inference server and user application VMs.

- Object storage

Object Storage is used as a data lake to store data that’s used by the training nodes.

- Block volume

The Oracle Cloud Infrastructure Block Volume service lets you dynamically provision and manage block storage volumes. You can create, attach, connect, and move volumes, as well as change volume performance to meet your storage, performance, and application requirements. After you attach and connect a volume to an instance, you can use the volume like a regular hard drive. You can also disconnect a volume and attach it to another instance without losing data. Use block volumes to store journal or log files.

- Virtual cloud network (VCN) and subnets

Every Compute instance is deployed in a VCN that can be segmented into subnets.

- Security lists

For each subnet, you can create security rules that specify the source, destination, and type of traffic that must be allowed in and out of the subnet.

- Availability domains

Availability domains are standalone, independent data centers within a region. The physical resources in each availability domain are isolated from the resources in the other availability domains, which provides fault tolerance. Availability domains don’t share infrastructure such as power or cooling, or the internal availability domain network, so a failure at one availability domain is unlikely to affect the other availability domains in the region.

- Fault domains

A fault domain is a grouping of hardware and infrastructure within an availability domain. Each availability domain has three fault domains with independent power and hardware. When you distribute resources across multiple fault domains, your applications can tolerate physical server failure, system maintenance, and power failures inside a fault domain.
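In practice, the fault-domain guidance means spreading each role's instances across the three fault domains. The following Python sketch shows one way to plan that placement round-robin and to check how many instances a single fault-domain failure would take down. The instance names and both helper functions are hypothetical. The fault-domain names follow OCI's FAULT-DOMAIN-1 to FAULT-DOMAIN-3 convention. In a real deployment you set the fault domain when you launch each instance; you wouldn't compute it in a separate script.

```python
from collections import Counter

# OCI names the three fault domains in an availability domain like this.
FAULT_DOMAINS = ["FAULT-DOMAIN-1", "FAULT-DOMAIN-2", "FAULT-DOMAIN-3"]


def assign_fault_domains(instance_names):
    """Map each instance to a fault domain, round-robin, so the instances
    of one role are spread as evenly as possible."""
    return {
        name: FAULT_DOMAINS[i % len(FAULT_DOMAINS)]
        for i, name in enumerate(instance_names)
    }


def worst_case_loss(placement):
    """Number of instances lost if the most heavily used fault domain fails."""
    counts = Counter(placement.values())
    return max(counts.values(), default=0)


if __name__ == "__main__":
    # Hypothetical instance names; plan each role separately.
    training = assign_fault_domains(["training-1", "training-2", "training-3"])
    apps = assign_fault_domains(["app-1", "app-2"])
    print(training)
    print("training nodes lost in worst case:", worst_case_loss(training))
    print("app VMs lost in worst case:", worst_case_loss(apps))
```

Planning each role separately keeps, for example, the two user application VMs in different fault domains, so a single fault-domain failure removes at most one of them.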

Recommendations

Your requirements might differ from the architecture described here. Use the following recommendations as a starting point.

- Bastion host

Use a VM.Standard1.1 Compute shape. This host is used to access other Compute nodes and isn’t involved in data processing or other tasks.

- Training node

Use the BM.GPU3.8 shape, which provides 2x25 Gbps of network bandwidth and eight NVIDIA V100 GPUs, enough for data science applications. This node trains and verifies the application's model, so it needs substantial GPU power. Start with up to three nodes, and use the autoscaling feature to scale up or down as necessary.

- Inference server

Use the BM.GPU2.2 shape, which provides 2x25 Gbps of network bandwidth and two NVIDIA P100 GPUs, enough for data science applications. Inference is typically less compute-intensive than training, so this node needs less GPU power than the training nodes. Start with one node, and use the autoscaling feature to scale up as necessary.

- User application VM

Use the VM.Standard2.2 shape. These nodes only run the user application, so a VM shape is sufficient. Start with two VM nodes, and use the autoscaling feature to scale up or down as necessary.

- Load balancer

The load balancer distributes incoming traffic to user application VMs. Use the 100-Mbps shape.

- File storage

File Storage scales automatically as needed.

- Object storage

Use a single private bucket as the data lake, and use pre-authenticated requests to grant access to individual data lake objects.

- Block volume

In addition to locally attached storage, use at least three 1-TB block volumes with the multiple-attach feature, so that several instances can share the additional storage.

- VCN

  - When you create a VCN, determine the number of CIDR blocks required and the size of each block based on the number of resources that you plan to attach to subnets in the VCN. Use CIDR blocks that are within the standard private IP address space.

  - Select CIDR blocks that don't overlap with any other network (in Oracle Cloud Infrastructure, your on-premises data center, or another cloud provider) to which you intend to set up private connections.

  - After you create a VCN, you can change, add, and remove its CIDR blocks.

  - When you design the subnets, consider your functionality and security requirements. Attach all the compute instances within the same tier or role to the same subnet.

  - Use regional subnets.

- Security lists

Use security lists to define ingress and egress rules that apply to the entire subnet. For example, this architecture allows ICMP internally for the entire private subnet.
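You can check the VCN and security-list recommendations before deployment with Python's standard `ipaddress` module. The following sketch does three things. It checks that a proposed VCN CIDR block lies within the RFC 1918 private ranges and doesn't overlap networks you plan to connect to. It carves one /24 subnet per tier. It also models a single ICMP ingress rule for the private subnet. The CIDR values, tier names, helper functions, and the `IngressRule` type are illustrative assumptions. Real OCI security lists also have ports, stateful or stateless settings, and egress rules, which this simplified model ignores.

```python
import ipaddress
from dataclasses import dataclass

# RFC 1918 ranges, the "standard private IP address space".
PRIVATE_RANGES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]

ICMP = 1  # IANA protocol number for ICMP


def validate_vcn_cidr(cidr, connected_networks):
    """Return a list of problems with a proposed IPv4 VCN CIDR block."""
    vcn = ipaddress.ip_network(cidr)
    problems = []
    if not any(vcn.subnet_of(r) for r in PRIVATE_RANGES):
        problems.append(f"{vcn} is outside the RFC 1918 private ranges")
    for other in connected_networks:
        if vcn.overlaps(ipaddress.ip_network(other)):
            problems.append(f"{vcn} overlaps {other}")
    return problems


def carve_subnets(cidr, tiers, new_prefix=24):
    """Allocate one subnet per tier, in order, from the VCN CIDR block."""
    available = ipaddress.ip_network(cidr).subnets(new_prefix=new_prefix)
    allocation = {}
    for tier in tiers:
        subnet = next(available, None)
        if subnet is None:
            raise ValueError(f"{cidr} has no room left for the {tier} subnet")
        allocation[tier] = str(subnet)
    return allocation


@dataclass(frozen=True)
class IngressRule:
    source: str    # source CIDR block
    protocol: int  # IANA protocol number


def is_allowed(rules, src_ip, protocol):
    """True if any ingress rule admits traffic from src_ip with this protocol."""
    addr = ipaddress.ip_address(src_ip)
    return any(
        r.protocol == protocol and addr in ipaddress.ip_network(r.source)
        for r in rules
    )


if __name__ == "__main__":
    print(validate_vcn_cidr("10.0.0.0/16", ["192.168.0.0/16"]))
    subnets = carve_subnets("10.0.0.0/16", ["bastion", "private"])
    rules = [IngressRule(source=subnets["private"], protocol=ICMP)]
    print(subnets)
    print(is_allowed(rules, "10.0.1.25", ICMP))
```

For `10.0.0.0/16`, the validator returns an empty list because the block lies inside `10.0.0.0/8` and doesn't overlap `192.168.0.0/16`. The ICMP rule then admits traffic only from hosts inside the private subnet (`10.0.1.0/24` in this example).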

Considerations

Consider the following points when deploying this reference architecture.

- Performance

To get the best performance, choose a Compute shape whose GPU, CPU, and network bandwidth suit your workload.

- Availability

Consider using a high-availability option based on your deployment requirements and region. Options include distributing instances across multiple availability domains in a region, and across fault domains within an availability domain.

- Cost

Bare metal GPU instances provide the necessary compute power, but at a higher cost. Evaluate your requirements to choose the appropriate Compute shape.

- Monitoring and alerts

Set up monitoring and alerts on CPU and memory usage for your nodes, so that you can scale the shape up or down as needed.
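You can express the monitoring guidance as a simple threshold rule. Add a node when CPU or memory usage is high, and remove a node when both are low. Keep the count between a minimum and a maximum, such as the up-to-three training nodes recommended earlier. The following Python sketch covers only that decision logic. The 80% and 20% thresholds, the minimum of one node, and the `ScalingPolicy` type are hypothetical. In practice, you'd configure this behavior through OCI autoscaling and alarm settings, not in your own code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    min_nodes: int
    max_nodes: int
    scale_out_at: float = 80.0  # percent; hypothetical threshold
    scale_in_at: float = 20.0   # percent; hypothetical threshold


def desired_node_count(policy, current, cpu_pct, mem_pct):
    """Suggest a node count from the latest CPU and memory readings.

    Scale out if either metric is high; scale in only if both are low,
    then clamp the result to the policy's min/max node count.
    """
    busiest = max(cpu_pct, mem_pct)
    if busiest >= policy.scale_out_at:
        target = current + 1
    elif busiest <= policy.scale_in_at:
        target = current - 1
    else:
        target = current
    return max(policy.min_nodes, min(policy.max_nodes, target))


if __name__ == "__main__":
    # Training nodes: at most three, per the recommendations.
    training = ScalingPolicy(min_nodes=1, max_nodes=3)
    print(desired_node_count(training, current=2, cpu_pct=91.0, mem_pct=40.0))
```

The rule scales in only when both metrics are low, so it doesn't remove a node that is still memory-bound even when its CPU is idle.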

Deploy

The Terraform code for this reference architecture is available as a stack in Oracle Cloud Marketplace.

- Go to Oracle Cloud Marketplace.

- Click Get App.

- Follow the on-screen prompts.