Learn About Setting Up a Kubernetes Cluster in the Cloud

A Kubernetes-based topology in the cloud contains numerous components, including networking resources, compute instances, and the Kubernetes nodes. To deploy and manage such a complex topology efficiently, define your cloud infrastructure as code (IaC) in Terraform configuration files.

To change the topology, version the appropriate Terraform modules, update the resource definitions, and apply the revised configuration. When necessary, you can roll back to a previous version of the infrastructure easily.

Use the Terraform building blocks provided in this solution to deploy the infrastructure required for a cloud-based Kubernetes environment.

Before You Begin

See Learn about designing a Kubernetes topology for containerized applications in the cloud.

Architecture

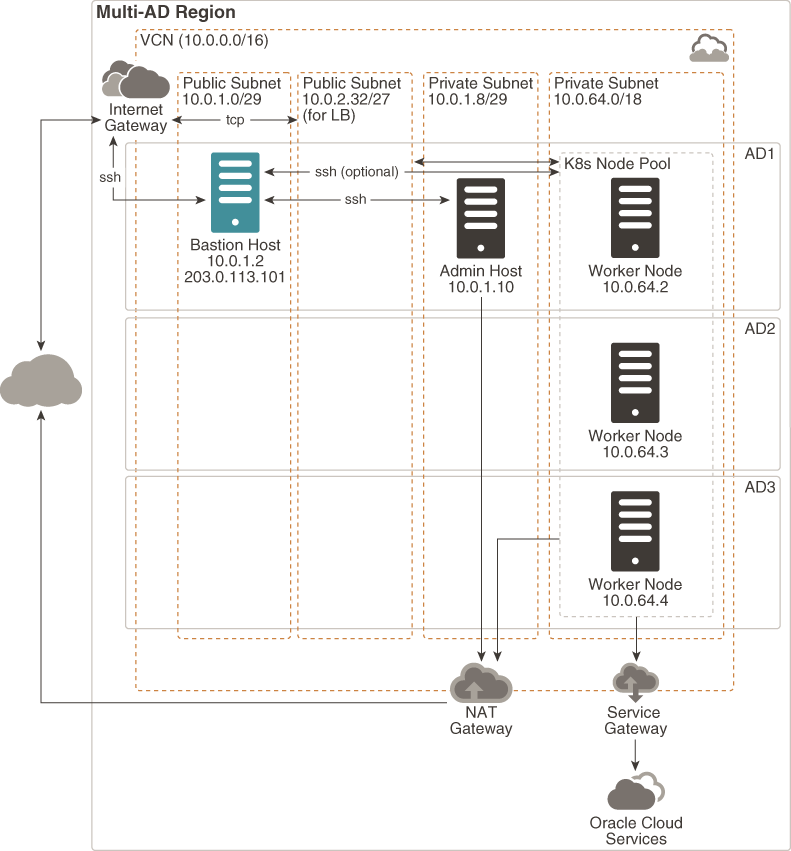

The sample topology for which this solution provides Terraform code contains a single virtual cloud network (VCN) with the required networking and Kubernetes resources, all in a single Oracle Cloud Infrastructure region.

Note:

The Terraform code includes input variables that you can use to tune the architecture to the networking requirements of your containerized workloads, the size and number of node pools required, your fault-tolerance constraints, and so on.

The architecture has the following components:

- Virtual cloud network (VCN)

All the resources in the topology are in a single network. You define the CIDR prefix for the network (default: 10.0.0.0/16).

- Subnets

The VCN in the sample topology contains four subnets. You define the sizes of the subnets.

| Subnet | Default CIDR Prefix | Description |
|---|---|---|
| Bastion subnet | 10.0.1.0/29 | A public subnet for the optional bastion host. |
| Load balancer subnet | 10.0.2.32/27 if public (10.0.2.0/27 if private) | A subnet for the load balancer nodes. You choose whether the subnet is public or private. |
| Admin subnet | 10.0.1.8/29 | A private subnet for the optional admin host, which contains the tools necessary to manage the Kubernetes cluster, such as kubectl, helm, and the Oracle Cloud Infrastructure CLI. |
| Worker-nodes subnet | 10.0.64.0/18 | A subnet for the Kubernetes worker nodes. You choose whether the subnet is public or private. |

All the subnets are regional; that is, they span all the availability domains in the region (abbreviated as AD1, AD2, and AD3 in the architecture diagram), so they are protected against availability-domain failure. You can use a regional subnet for the resources that you deploy to any availability domain in the region.
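To sanity-check a subnet plan before you pass it to the Terraform input variables, you can test that every subnet fits inside the VCN prefix and that no two subnets overlap. The following Python sketch does this with the standard ipaddress module, using the default prefixes listed in this section. The check_plan and usable_addresses helpers are hypothetical, and the figure of three reserved addresses per subnet is an assumption based on OCI networking documentation that you should confirm for your tenancy.

```python
import ipaddress
from itertools import combinations

# Default prefixes from this section. The load balancer subnet uses the
# public default (10.0.2.32/27); use 10.0.2.0/27 for a private one.
VCN_CIDR = ipaddress.ip_network("10.0.0.0/16")
SUBNETS = {
    "bastion": ipaddress.ip_network("10.0.1.0/29"),
    "load_balancer": ipaddress.ip_network("10.0.2.32/27"),
    "admin": ipaddress.ip_network("10.0.1.8/29"),
    "workers": ipaddress.ip_network("10.0.64.0/18"),
}
# Assumption: OCI reserves three addresses per subnet (the first two and the last).
RESERVED_PER_SUBNET = 3


def check_plan(vcn, subnets):
    """Return a list of problems with the subnet plan (empty if it is valid)."""
    problems = []
    # Every subnet must lie entirely inside the VCN prefix.
    for name, net in subnets.items():
        if not net.subnet_of(vcn):
            problems.append(f"{name} ({net}) is outside the VCN {vcn}")
    # No two subnets may share any address.
    for (a, net_a), (b, net_b) in combinations(subnets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} ({net_a}) overlaps {b} ({net_b})")
    return problems


def usable_addresses(net):
    """Addresses left for instances after the assumed reservation."""
    return net.num_addresses - RESERVED_PER_SUBNET


if __name__ == "__main__":
    issues = check_plan(VCN_CIDR, SUBNETS)
    print("plan OK" if not issues else "\n".join(issues))
    for name, net in SUBNETS.items():
        print(f"{name:14} {str(net):15} usable={usable_addresses(net)}")
```

With the defaults, the plan passes, and the /29 bastion and admin subnets leave only five usable addresses each, which is enough for a single host but little else.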

- Network gateways

- Service gateway (optional)

The service gateway enables resources in the VCN to access Oracle services such as Oracle Cloud Infrastructure Object Storage, Oracle Cloud Infrastructure File Storage, and Oracle Cloud Infrastructure Database privately; that is, without exposing the traffic to the public internet. Connections over the service gateway can be initiated only from the resources within the VCN, not from the services that those resources communicate with.

- NAT gateway (optional)

The NAT gateway enables compute instances that are attached to private subnets in the VCN to access the public internet. Connections through the NAT gateway can be initiated only from the resources within the VCN, not from the public internet.

- Internet gateway

The internet gateway enables connectivity between the public internet and any resources in public subnets within the VCN.

- Bastion host (optional)

The bastion host is a compute instance that serves as the entry point to the topology from outside the cloud.

The bastion host is typically provisioned in a DMZ. It enables you to protect sensitive resources by placing them in private networks that can't be accessed directly from outside the cloud. You expose a single, known entry point that you can audit regularly. This way, you avoid exposing the more sensitive components of the topology without compromising access to them.

The bastion host in the sample topology is attached to a public subnet and has a public IP address. An ingress security rule is configured to allow SSH connections to the bastion host from the public internet. For an additional level of security, you can restrict SSH access to the bastion host to a specific block of IP addresses.
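The difference between the default rule and a restricted one comes down to whether the source address of a connection falls inside the rule's CIDR block. The following Python sketch models that check with the standard ipaddress module. The IngressRule class and is_allowed function are hypothetical simplifications (TCP only, single ports, no stateless rules), the open rule is modeled as a 0.0.0.0/0 source, and 203.0.113.0/24 is a documentation range standing in for your own address block.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    """Simplified, hypothetical model of an ingress security rule (TCP only)."""
    source: ipaddress.IPv4Network
    port: int


def is_allowed(rules, src_ip, port):
    """Return True if some rule admits a TCP connection from src_ip to port."""
    addr = ipaddress.ip_address(src_ip)
    return any(addr in rule.source and rule.port == port for rule in rules)


# Sample topology as described: SSH allowed from the whole public internet.
OPEN_SSH = [IngressRule(ipaddress.ip_network("0.0.0.0/0"), 22)]
# Hardened variant: SSH only from a placeholder block (TEST-NET-3).
RESTRICTED_SSH = [IngressRule(ipaddress.ip_network("203.0.113.0/24"), 22)]

if __name__ == "__main__":
    for label, rules in [("open", OPEN_SSH), ("restricted", RESTRICTED_SSH)]:
        print(label,
              is_allowed(rules, "203.0.113.25", 22),
              is_allowed(rules, "198.51.100.7", 22))
```

Under the restricted rule, a connection from 198.51.100.7 is rejected while one from inside 203.0.113.0/24 is still admitted, which is the narrowing this paragraph recommends.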

You can access Oracle Cloud Infrastructure instances in private subnets through the bastion host. To do this, enable ssh-agent forwarding, which allows you to connect to the bastion host and then access the next server by forwarding the credentials from your computer. You can also access the instances in the private subnet by using dynamic SSH tunneling. The dynamic tunnel provides a SOCKS proxy on the local port, but the connections originate from the remote host.

- Load balancer nodes (not included in the sample code)

The load balancer nodes intercept and distribute traffic to the available Kubernetes nodes running your containerized applications. If the applications must be accessible from the public internet, then use public load balancers; otherwise, use private load balancers, which don't have a public IP address. The architecture doesn't show any load balancer nodes.

- Admin host (optional)

By using an admin host, you can avoid installing and running infrastructure-management tools such as kubectl, helm, and the Oracle Cloud Infrastructure CLI outside the cloud. The admin host in the sample topology is in a private subnet and can be accessed through the bastion host. To run the Oracle Cloud Infrastructure CLI on the admin host, you must designate it as an instance principal.

- Kubernetes worker nodes

The Kubernetes worker nodes are the compute instances on which you can deploy your containerized applications. In the sample topology, all the worker nodes are in a single node pool and are attached to a private subnet. Based on your requirements, you can customize the number of node pools, the size of each pool, and whether to use a public subnet.

If the worker nodes are attached to a private subnet, then they can't be accessed directly from the public internet. Users can access the containerized applications through the load balancer.

If you enable SSH access to the worker nodes, then administrators can create SSH connections to the worker nodes through the bastion host.

The architecture shows three worker nodes, each in a distinct availability domain within the region: AD1, AD2, and AD3.

Note:

If the region in which you want to deploy your containerized applications contains a single availability domain, then the worker nodes are distributed across the fault domains (FD) within the availability domain.
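The distribution described above can be illustrated with a simple round-robin assignment. This Python sketch is hypothetical: in practice, Container Engine for Kubernetes places nodes according to each node pool's placement configuration, and real availability domain names are longer than the AD1, AD2, and AD3 labels used in the diagram. The spread_nodes function is an illustration only; the fault domain names FAULT-DOMAIN-1 through FAULT-DOMAIN-3 follow OCI's naming.

```python
FAULT_DOMAINS = ("FAULT-DOMAIN-1", "FAULT-DOMAIN-2", "FAULT-DOMAIN-3")


def spread_nodes(node_count, availability_domains, fault_domains=FAULT_DOMAINS):
    """Assign each node an (availability domain, fault domain) pair by round-robin.

    With several availability domains, nodes rotate across them and the fault
    domain is left unspecified (None). With a single availability domain,
    nodes rotate across that domain's fault domains instead.
    """
    if not availability_domains:
        raise ValueError("need at least one availability domain")
    placements = []
    for i in range(node_count):
        if len(availability_domains) > 1:
            ad = availability_domains[i % len(availability_domains)]
            placements.append((ad, None))
        else:
            fd = fault_domains[i % len(fault_domains)]
            placements.append((availability_domains[0], fd))
    return placements


if __name__ == "__main__":
    # Three-AD region, as in the architecture diagram.
    print(spread_nodes(3, ["AD1", "AD2", "AD3"]))
    # Single-AD region: nodes spread across fault domains.
    print(spread_nodes(4, ["AD1"]))
```

Either way, losing one availability domain (or one fault domain in a single-AD region) removes only a fraction of the worker nodes.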

About Required Services and Permissions

This solution requires the following services and permissions:

| Service | Permissions Required |
|---|---|
| Oracle Cloud Infrastructure Identity and Access Management | Manage dynamic groups and policies. |
| Oracle Cloud Infrastructure Networking | Manage VCNs, subnets, internet gateways, NAT gateways, service gateways, route tables, and security lists. |
| Oracle Cloud Infrastructure Compute | Manage compute instances. |
| Oracle Cloud Infrastructure Container Engine for Kubernetes | Manage clusters and node pools. See Policy Configuration for Cluster Creation and Deployment. |
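The permissions in the table are granted through IAM policy statements. The following Python sketch renders one statement per table row in the form "Allow group <group> to manage <resource-type> in <location>". The group and compartment names are hypothetical, the mapping from table rows to the dynamic-groups, policies, virtual-network-family, instance-family, and cluster-family resource types is an assumption to verify against the OCI policy reference, and granting the IAM permissions at tenancy scope is one choice among several.

```python
# Hypothetical names; replace them with your own group and compartment.
GROUP = "k8s-admins"
COMPARTMENT = "k8s-sandbox"

# Assumed mapping of the table rows to OCI resource types, with the scope
# at which each statement is granted. Dynamic groups exist only at the
# tenancy level; the other choices are illustrative.
RESOURCE_SCOPES = [
    ("dynamic-groups", "tenancy"),          # IAM: dynamic groups
    ("policies", "tenancy"),                # IAM: policies
    ("virtual-network-family", "compartment"),  # Networking
    ("instance-family", "compartment"),     # Compute
    ("cluster-family", "compartment"),      # Container Engine for Kubernetes
]


def render_policy(group, compartment, resource_scopes=RESOURCE_SCOPES):
    """Return one 'Allow group ... to manage ...' statement per resource type."""
    statements = []
    for resource, scope in resource_scopes:
        where = "tenancy" if scope == "tenancy" else f"compartment {compartment}"
        statements.append(f"Allow group {group} to manage {resource} in {where}")
    return statements


if __name__ == "__main__":
    print("\n".join(render_policy(GROUP, COMPARTMENT)))
```

Generating the statements from a single list keeps the policy aligned with the table if you later tighten a verb (for example, from manage to use) or move a statement to a narrower compartment.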