18 Tuning the Performance of Oracle GoldenGate

Topics:

18.1 Using Multiple Process Groups

Typically, only one Extract group is required to efficiently capture from a database. However, depending on the redo (transactional) values, or the data and operation types, you may find that you are required to add one or more Extract group to the configuration.

Similarly, only one Replicat group is typically needed to apply data to a target database if using Replicat in coordinated mode. (See About Coordinated Replicat Mode for more information.) However, even in some cases when using Replicat in coordinated mode, you may be required to use multiple Replicat groups. If you are using Replicat in classic mode and your applications generate a high transaction volume, you probably will need to use parallel Replicat groups.

Because each Oracle GoldenGate component — Extract, data pump, trail, Replicat — is an independent module, you can combine them in ways that suit your needs. You can use multiple trails and parallel Extract and Replicat processes (with or without data pumps) to handle large transaction volume, improve performance, eliminate bottlenecks, reduce latency, or isolate the processing of specific data.

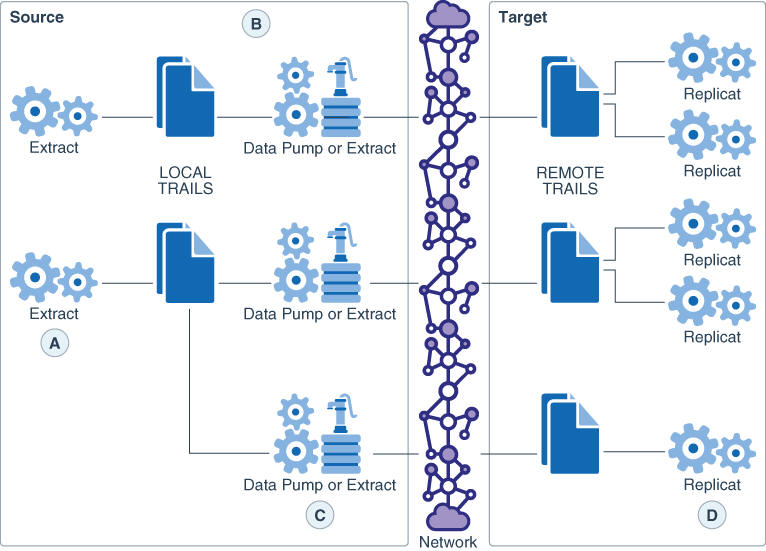

Figure 18-1 shows some of the ways that you can configure Oracle GoldenGate to improve throughput speed and overcome network bandwidth issues.

Figure 18-1 Load-balancing configurations that improve performance

-

A: Parallel Extracts divide the load. For example, by schema or to isolate tables that generate fetches.

-

B: A data pump with local trail can be used for filtering, conversion, and network false tolerance.

-

C: Multiple data pumps work around network per-process bandwidth limitations to enable TCP/IP throughput. Divide the TABLE parameter statements among them.

-

D: Parallel Replicats increase throughput to the database. Any trail can be read by one or more Replicats. Divide MAP statements among them.

18.1.1 Considerations for Using Multiple Process Groups

Before configuring multiple processing groups, review the following considerations to ensure that your configuration produces the desired results and maintains data integrity.

18.1.1.1 Maintaining Data Integrity

Not all workloads can be partitioned across multiple groups and still preserve the original transaction atomicity. You must determine whether the objects in one group will ever have dependencies on objects in any other group, transactional or otherwise. For example, tables for which the workload routinely updates the primary key cannot easily be partitioned in this manner. DDL replication (if supported for the database) is not viable in this mode, nor is the use of some SQLEXEC or EVENTACTIONS features that base their actions on a specific record.

If your tables do not have any foreign- key dependencies or updates to primary keys, you may be able to use multiple processes. Keep related DML together in the same process stream to ensure data integrity.

18.1.1.2 Number of Groups

The number of concurrent Extract and Replicat process groups that can run on a system depends on how much system memory is available. Each Extract and Replicat process needs approximately 25-55 MB of memory, or more depending on the size of the transactions and the number of concurrent transactions.

The Oracle GoldenGate GGSCI command interface fully supports up to 5,000 concurrent Extract and Replicat groups per instance of Oracle GoldenGate Manager. At the supported level, all groups can be controlled and viewed in full with GGSCI commands such as the INFO and STATUS commands. Beyond the supported level, group information is not displayed and errors can occur. Oracle GoldenGate recommends keeping the number of Extract and Replicat groups (combined) at the default level of 300 or below in order to manage your environment effectively. The number of groups is controlled by the MAXGROUPS parameter.

Note:

When creating the groups, keep tables that have relational constraints to each other in the same group.

18.1.1.3 Memory

The system must have sufficient swap space for each Oracle GoldenGate Extract and Replicat process that will be running. To determine the required swap space:

- Start up one Extract or Replicat.

- Run GGSCI.

- View the report file and find the line

PROCESS VM AVAIL FROM OS (min). - Round up the value to the next full gigabyte if needed. For example, round up 1.76GB to 2 GB.

- Multiply that value by the number of Extract and Replicat processes that will be running. The result is the maximum amount of swap space that could be required

See the CACHEMGR parameter in Reference for Oracle GoldenGate for more information about how memory is managed.

18.1.1.4 Isolating Processing-Intensive Tables

You can use multiple process groups to support certain kinds of tables that tend to interfere with normal processing and cause latency to build on the target. For example:

-

Extract may need to perform a fetch from the database because of the data type of the column, because of parameter specifications, or to perform SQL procedures. When data must be fetched from the database, it affects the performance of Extract. You can get fetch statistics from the

STATS EXTRACTcommand if you include theSTATOPTIONS REPORTFETCHparameter in the Extract parameter file. You can then isolate those tables into their own Extract groups, assuming that transactional integrity can be maintained. -

In its classic mode, Replicat process can be a source of performance bottlenecks because it is a single-threaded process that applies operations one at a time by using regular SQL. Even with

BATCHSQLenabled (see Reference for Oracle GoldenGate) Replicat may take longer to process tables that have large or long-running transactions, heavy volume, a very large number of columns that change, and LOB data. You can then isolate those tables into their own Replicat groups, assuming that transactional integrity can be maintained.

18.1.2 Using Parallel Replicat Groups on a Target System

This section contains instructions for creating a configuration that pairs one Extract group with multiple Replicat groups. Although it is possible for multiple Replicat processes to read a single trail (no more than three of them to avoid disk contention) it is recommended that you pair each Replicat with its own trail and corresponding Extract process.

-

Refer to Reference for Oracle GoldenGate for Windows and UNIX for command and parameter syntax.

-

For detailed instructions on configuring change synchronization, see Configuring Online Change Synchronization.

18.1.2.2 To Create the Replicat Groups

- On the target, create a Replicat checkpoint table. For instructions, see Creating a Checkpoint Table. All Replicat groups can use the same checkpoint table.

- On the target, use the

ADD REPLICATcommand to create a Replicat group for each trail that you created. Use theEXTTRAILargument of ADD REPLICAT to link the Replicat group to the appropriate trail. - On the target, use the

EDIT PARAMScommand to create a Replicat parameter file for each Replicat group that contains the parameters required for your database environment. AllMAPstatements for a given Replicat group must specify the same objects that are contained in the trail that is linked to that group. - In the Manager parameter file on the target system, use the

PURGEOLDEXTRACTSparameter to control the purging of files from the trails.

18.1.3 Using Multiple Extract Groups with Multiple Replicat Groups

Multiple Extract groups write to their own trails. Each trail is read by a dedicated Replicat group.

-

Refer to Reference for Oracle GoldenGate for Windows and UNIX for command and parameter syntax.

-

For detailed instructions on configuring change synchronization, see Configuring Online Change Synchronization.

18.1.3.1 To Create the Extract Groups

Note:

This configuration includes data pumps.

- On the source, use the

ADD EXTRACTcommand to create the primary Extract groups. - On the source, use the

ADD EXTTRAILcommand to specify a local trail for each of the Extract groups that you created. - On the source create a data-pump Extract group to read each local trail that you created.

- On the source, use the

ADD RMTTRAILcommand to specify a remote trail for each of the data-pumps that you created. - On the source, use the

EDIT PARAMScommand to create an Extract parameter file for each primary Extract group and each data-pump Extract group.

18.1.3.2 To Create the Replicat Groups

- On the target, create a Replicat checkpoint table. For instructions, see Creating a Checkpoint Table. All Replicat groups can use the same checkpoint table.

- On the target, use the

ADD REPLICATcommand to create a Replicat group for each trail. Use theEXTTRAILargument ofADD REPLICATto link the group to the trail. - On the target, use the

EDIT PARAMScommand to create a Replicat parameter file for each Replicat group. AllMAPstatements for a given Replicat group must specify the same objects that are contained in the trail that is linked to the group. - In the Manager parameter files on the source system and the target system, use the

PURGEOLDEXTRACTSparameter to control the purging of files from the trails.

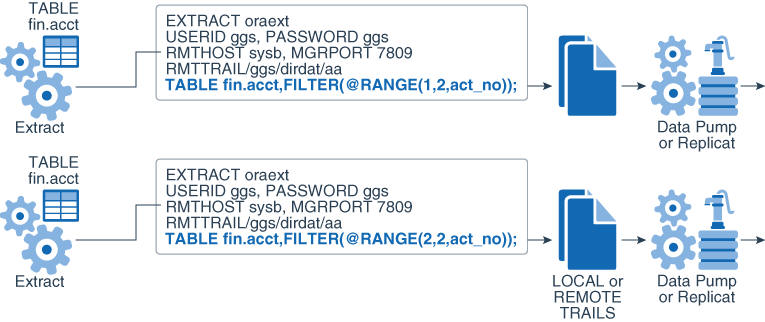

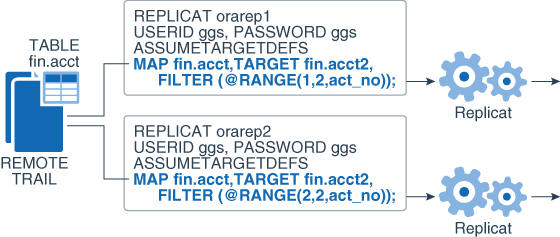

18.2 Splitting Large Tables Into Row Ranges Across Process Groups

You can use the @RANGE function to divide the rows of any table across two or more Oracle GoldenGate processes. It can be used to increase the throughput of large and heavily accessed tables and also can be used to divide data into sets for distribution to different destinations. Specify each range in a FILTER clause in a TABLE or MAP statement.

@RANGE is safe and scalable. It preserves data integrity by guaranteeing that the same row will always be processed by the same process group.

It might be more efficient to use the primary Extract or a data pump to calculate the ranges than to use Replicat. To calculate ranges, Replicat must filter through the entire trail to find data that meets the range specification. However, your business case should determine where this filtering is performed.

Figure 18-2 Dividing rows of a table between two Extract groups

Description of "Figure 18-2 Dividing rows of a table between two Extract groups"

Figure 18-3 Dividing rows of a table between two Replicat groups

Description of "Figure 18-3 Dividing rows of a table between two Replicat groups"

18.3 Configuring Oracle GoldenGate to Use the Network Efficiently

Inefficiencies in the transfer of data across the network can cause lag in the Extract process and latency on the target. If not corrected, it can eventually cause process failures.

When you first start a new Oracle GoldenGate configuration:

-

Establish benchmarks for what you consider to be acceptable lag and throughput volume for Extract and for Replicat. Keep in mind that Extract will normally be faster than Replicat because of the kind of tasks that each one performs. Over time you will know whether the difference is normal or one that requires tuning or troubleshooting.

-

Set a regular schedule to monitor those processes for lag and volume, as compared to the benchmarks. Look for lag that remains constant or is growing, as opposed to occasional spikes. Continuous, excess lag indicates a bottleneck somewhere in the Oracle GoldenGate configuration. It is a critical first indicator that Oracle GoldenGate needs tuning or that there is an error condition.

To view volume statistics, use the STATS EXTRACT or STATS REPLICAT command. To view lag statistics, use the LAG EXTRACT or LAG REPLICAT command. See Reference for Oracle GoldenGate for Windows and UNIX for more information.

18.3.1 Detecting a Network Bottleneck that is Affecting Oracle GoldenGate

To detect a network bottleneck that is affecting the throughput of Oracle GoldenGate, follow these steps.

There is a network bottleneck if the status of Replicat is either in delay mode or at the end of the trail file and either of the following is true:

-

You are only using a primary Extract and its write checkpoint is not increasing or is increasing too slowly. Because this Extract process is responsible for sending data across the network, it will eventually run out of memory to contain the backlog of extracted data and abend.

-

You are using a data pump, and its write checkpoint is not increasing, but the write checkpoint of the primary Extract is increasing. In this case, the primary Extract can write to its local trail, but the data pump cannot write to the remote trail. The data pump will abend when it runs out of memory to contain the backlog of extracted data. The primary Extract will run until it reaches the last file in the trail sequence and will abend because it cannot make a checkpoint.

Note:

Even when there is a network outage, Replicat will process in a normal manner until it applies all of the remaining data from the trail to the target. Eventually, it will report that it reached the end of the trail file.

18.3.2 Working Around Bandwidth Limitations by Using Data Pumps

Using parallel data pumps may enable you to work around bandwidth limitations that are imposed on a per-process basis in the network configuration. You can use parallel data pumps to send data to the same target system or to different target systems. Data pumps also remove TCP/IP responsibilities from the primary Extract, and their local trails provide fault tolerance.

18.3.3 Reducing the Bandwidth Requirements of Oracle GoldenGate

Use the compression options of the RMTHOST parameter to compress data before it is sent across the network. Weigh the benefits of compression against the CPU resources that are required to perform the compression. See Reference for Oracle GoldenGate for more information.

18.3.4 Increasing the TCP/IP Packet Size

Use the TCPBUFSIZE option of the RMTHOST parameter to control the size of the TCP socket buffer that Extract maintains. By increasing the size of the buffer, you can send larger packets to the target system. See Reference for Oracle GoldenGate for more information.

Use the following steps as a guideline to determine the optimum buffer size for your network.

The maximum socket buffer size for non-Windows systems is usually limited by default. Ask your system administrator to increase the default value on the source and target systems so that Oracle GoldenGate can increase the buffer size configured with TCPBUFSIZE.

18.4 Eliminating Disk I/O Bottlenecks

I/O activity can cause bottlenecks for both Extract and Replicat.

-

A regular Extract generates disk writes to a trail and disk reads from a data source.

-

A data pump and Replicat generate disk reads from a local trail.

-

Each process writes a recovery checkpoint to its checkpoint file on a regular schedule.

18.4.1 Improving I/O performance Within the System Configuration

If there are I/O waits on the disk subsystems that contain the trail files, put the trails on the fastest disk controller possible.

Check the RAID configuration. Because Oracle GoldenGate writes data sequentially, RAID 0+1 (striping and mirroring) is a better choice than RAID 5, which uses checksums that slow down I/O and are not necessary for these types of files.

18.4.2 Improving I/O Performance Within the Oracle GoldenGate Configuration

You can improve I/O performance by making configurations changes within Oracle GoldenGate. Try increasing the values of the following parameters.

-

Use the

CHECKPOINTSECSparameter to control how often Extract and Replicat make their routine checkpoints.Note:

CHECKPOINTSECSis not valid for an integrated Replicat on an Oracle database system. -

Use the

GROUPTRANSOPSparameter to control the number of SQL operations that are contained in a Replicat transaction when operating in its normal mode. Increasing the number of operations in a Replicat transaction improves the performance of Oracle GoldenGate by reducing the number of transactions executed by Replicat, and by reducing I/O activity to the checkpoint file and the checkpoint table, if used. Replicat issues a checkpoint whenever it applies a transaction to the target, in addition to its scheduled checkpoints.Note:

GROUPTRANSOPSis not valid for an integrated Replicat on an Oracle database system, unless the inbound server parameterparallelismis set to 1. -

Use the

EOFDELAYorEOFDELAYCSECSparameter to control how often Extract, a data pump, or Replicat checks for new data after it has reached the end of the current data in its data source. You can reduce the system I/O overhead of these reads by increasing the value of this parameter.

Note:

Increasing the values of these parameters improves performance, but it also increases the amount of data that must be reprocessed if the process fails. This has an effect on overall latency between source and target. Some testing will help you determine the optimal balance between recovery and performance.

18.5 Managing Virtual Memory and Paging

Because Oracle GoldenGate replicates only committed transactions, it stores the operations of each transaction in a managed virtual-memory pool known as a cache until it receives either a commit or a rollback for that transaction. One global cache operates as a shared resource of an Extract or Replicat process. The Oracle GoldenGate cache manager takes advantage of the memory management functions of the operating system to ensure that Oracle GoldenGate processes work in a sustained and efficient manner. The CACHEMGR parameter controls the amount of virtual memory and temporary disk space that is available for caching uncommitted transaction data that is being processed by Oracle GoldenGate.

When a process starts, the cache manager checks the availability of resources for virtual memory, as shown in the following example:

CACHEMGR virtual memory values (may have been adjusted)CACHESIZE: 32GCACHEPAGEOUTSIZE (normal): 8M PROCESS VM AVAIL FROM OS (min): 63.97GCACHESIZEMAX (strict force to disk): 48G

If the current resources are not sufficient, a message like the following may be returned:

2013-11-11 14:16:22 WARNING OGG-01842 CACHESIZE PER DYNAMIC DETERMINATION (32G) LESS THAN RECOMMENDED: 64G (64bit system)vm found: 63.97GCheck swap space. Recommended swap/extract: 128G (64bit system).

If the system exhibits excessive paging and the performance of critical processes is affected, you can reduce the CACHESIZE option of the CACHEMGR. parameter. You can also control the maximum amount of disk space that can be allocated to the swap directory with the CACHEDIRECTORY option. For more information about CACHEMGR, see Reference for Oracle GoldenGate.

18.6 Optimizing Data Filtering and Conversion

Heavy amounts of data filtering or data conversion add processing overhead. The following are suggestions for minimizing the impact of this overhead on the other processes on the system.

-

Avoid using the primary Extract to filter and convert data. Keep it dedicated to data capture. It will perform better and is less vulnerable to any process failures that result from those activities. The objective is to make certain the primary Extract process is running and keeping pace with the transaction volume.

-

Use Replicat or a data-pump to perform filtering and conversion. Consider any of the following configurations:

-

Use a data pump on the source if the system can tolerate the overhead. This configuration works well when there is a high volume of data to be filtered, because it uses less network bandwidth. Only filtered data gets sent to the target, which also can help with security considerations.

-

Use a data pump on an intermediate system. This configuration keeps the source and target systems free of the overhead, but uses more network bandwidth because unfiltered data is sent from the source to the intermediate system.

-

Use a data pump or Replicat on the target if the system can tolerate the overhead, and if there is adequate network bandwidth for sending large amounts of unfiltered data.

-

-

If you have limited system resources, a least-best option is to divide the filtering and conversion work between Extract and Replicat.

18.7 Tuning Replicat Transactions

Replicat uses regular SQL, so its performance depends on the performance of the target database and the type of SQL that is being applied (inserts, versus updates or deletes). However, you can take certain steps to maximize Replicat efficiency.

18.7.1 Tuning Coordination Performance Against Barrier Transactions

In a coordinated Replicat configuration, barrier transactions such as updates to the primary key cause an increased number of commits to the database, and they interrupt the benefit of the GROUPTRANSOPS feature of Replicat. When there is a high number of barrier transactions in the overall workload of the coordinated Replicat, using a high number of threads can actually degrade Replicat performance.

To maintain high performance when large numbers of barrier transactions are expected, you can do the following:

-

Reduce the number of active threads in the group. This reduces the overall number of commits that Replicat performs.

-

Move the tables that account for the majority of the barrier transactions, and any tables with which they have dependencies, to a separate coordinated Replicat group that has a small number of threads. Keep the tables that have minimal barrier transactions in the original Replicat group with the higher number of threads, so that parallel performance is maintained without interruption by barrier transactions.

-

(Oracle RAC) In a new Replicat configuration, you can increase the

PCTFREEattribute of the Replicat checkpoint table. However, this must be done before Replicat is started for the first time. The recommended value ofPCTFREEis 90.

18.7.2 Applying Similar SQL Statements in Arrays

Use the BATCHSQL parameter to increase the performance of Replicat. BATCHSQL causes Replicat to organize similar SQL statements into arrays and apply them at an accelerated rate. In its normal mode, Replicat applies one SQL statement at a time.

When Replicat is in BATCHSQL mode, smaller row changes will show a higher gain in performance than larger row changes. At 100 bytes of data per row change, BATCHSQL has been known to improve the performance of Replicat by up to 300 percent, but actual performance benefits will vary, depending on the mix of operations. At around 5,000 bytes of data per row change, the benefits of using BATCHSQL diminish.

The gathering of SQL statements into batches improves efficiency but also consumes memory. To maintain optimum performance, use the following BATCHSQL options:

BATCHESPERQUEUE BYTESPERQUEUE OPSPERBATCH OPSPERQUEUE

As a benchmark for setting values, assume that a batch of 1,000 SQL statements at 500 bytes each would require less than 10 megabytes of memory.

You can use BATCHSQL with the BATCHTRANSOPS option to tune array sizing. BATCHTRANSOPS controls the maximum number of batch operations that can be grouped into a transaction before requiring a commit. The default for non-integrated Replicat is 1000. The default for integrated Replicat is 50. If there are many wait dependencies when using integrated Replicat, try reducing the value of BATCHTRANSOPS. To determine the number of wait dependencies, view the TOTAL_WAIT_DEPS column of the V$GG_APPLY_COORDINATOR database view in the Oracle database.

See Reference for Oracle GoldenGate for additional usage considerations and syntax.

18.7.3 Preventing Full Table Scans in the Absence of Keys

If a target table does not have a primary key, a unique key, or a unique index, Replicat uses all of the columns to build its WHERE clause. This is, essentially, a full table scan.

To make row selection more efficient, use a KEYCOLS clause in the TABLE and MAP statements to identify one or more columns as unique. Replicat will use the specified columns as a key. The following example shows a KEYCOLS clause in a TABLE statement:

TABLE hr.emp, KEYCOLS (FIRST_NAME, LAST_NAME, DOB, ID_NO);

For usage guidelines and syntax, see the TABLE and MAP parameters in Reference for Oracle GoldenGate.

18.7.4 Splitting Large Transactions

If the target database cannot handle large transactions from the source database, you can split them into a series of smaller ones by using the Replicat parameter MAXTRANSOPS. See Reference for Oracle GoldenGate for more information.

Note:

MAXTRANSOPS is not valid for an integrated Replicat on an Oracle database system.

18.7.5 Adjusting Open Cursors

The Replicat process maintains cursors for cached SQL statements and for SQLEXEC operations. Without enough cursors, Replicat must age more statements. By default, Replicat maintains as many cursors as allowed by the MAXSQLSTATEMENTS parameter. You might find that the value of this parameter needs to be increased. If so, you might also need to adjust the maximum number of open cursors that are permitted by the database. See Reference for Oracle GoldenGate for more information.

18.7.6 Improving Update Speed

Excessive block fragmentation causes Replicat to apply SQL statements at a slower than normal speed. Reorganize heavily fragmented tables, and then stop and start Replicat to register the new object ID.

18.7.7 Set a Replicat Transaction Timeout

Use the TRANSACTIONTIMEOUT parameter to prevent an uncommitted Replicat target transaction from holding locks on the target database and consuming its resources unnecessarily. You can change the value of this parameter so that Replicat can work within existing application timeouts and other database requirements on the target.

TRANSACTIONTIMEOUT limits the amount of time that Replicat can hold a target transaction open if it has not received the end-of-transaction record for the last source transaction in that transaction. By default, Replicat groups multiple source transactions into one target transaction to improve performance, but it will not commit a partial source transaction and will wait indefinitely for that last record. The Replicat parameter GROUPTRANSOPS controls the minimum size of a grouped target transaction.

The following events could last long enough to trigger TRANSACTIONTIMEOUT:

-

Network problems prevent trail data from being delivered to the target system.

-

Running out of disk space on any system, preventing trail data from being written.

-

Collector abends (a rare event).

-

Extract abends or is terminated in the middle of writing records for a transaction.

-

An Extract data pump abends or is terminated.

-

There is a source system failure, such as a power outage or system crash.

See Reference for Oracle GoldenGate for more information.