13 Common Configuration and Management Tasks for an Enterprise Deployment

This section describes the configuration and management tasks that you may need to perform on the enterprise deployment environment.

- Verifying Manual Failover of the Administration Server

In case a host computer fails, you can fail over the Administration Server to another host. The steps to verify the failover and failback of the Administration Server from BIHOST1 and BIHOST2 are detailed in the following sections.

- Enabling SSL Communication Between the Middle Tier and the Hardware Load Balancer

It is important to understand how to enable SSL communication between the middle tier and the hardware load balancer.

- Performing Backups and Recoveries for an Enterprise Deployment

It is recommended that you follow the below mentioned guidelines for making sure you back up the necessary directories and configuration data for an Oracle Business Intelligence enterprise deployment.

- Using JDBC Persistent Stores for TLOGs and JMS in an Enterprise Deployment

The guidelines for when to use JDBC persistent stores for transaction logs (TLOGs) and JMS, and the procedures for configuring the persistent stores in a supported database are explained in this section.

13.1 Verifying Manual Failover of the Administration Server

In case a host computer fails, you can fail over the Administration Server to another host. The steps to verify the failover and failback of the Administration Server from BIHOST1 and BIHOST2 are detailed in the following sections.

Assumptions:

- The Administration Server is configured to listen on ADMINVHN, and not on localhost or ANY address.

  For more information about the ADMINVHN virtual IP address, see Reserving the Required IP Addresses for an Enterprise Deployment.

- These procedures assume that the Administration Server domain home (ASERVER_HOME) has been mounted on both host computers. This ensures that the Administration Server domain configuration files and the persistent stores are saved on the shared storage device.

- The Administration Server is failed over from BIHOST1 to BIHOST2, and the two nodes have these IPs:

  - BIHOST1: 100.200.140.165

  - BIHOST2: 100.200.140.205

  - ADMINVHN: 100.200.140.206. This is the virtual IP where the Administration Server is running, assigned to a virtual sub-interface (for example, eth0:1), to be available on BIHOST1 or BIHOST2.

- Oracle WebLogic Server and Oracle Fusion Middleware components have been installed on BIHOST2 as described in the specific configuration chapters in this guide.

  Specifically, both host computers use the exact same path to reference the binary files in the Oracle home.

- Failing Over the Administration Server to a Different Host

The following procedure shows how to fail over the Administration Server to a different node (BIHOST2). Note that even after failover, the Administration Server will still use the same Oracle WebLogic Server machine (which is a logical machine, not a physical machine).

- Validating Access to the Administration Server on BIHOST2 Through Oracle HTTP Server

After you perform a manual failover of the Administration Server, it is important to verify that you can access the Administration Server, using the standard administration URLs.

- Configuring Roles for Administration of an Enterprise Deployment

In order to manage each product effectively within a single enterprise deployment domain, you must understand which products require specific administration roles or groups, and how to add a product-specific administration role to the Enterprise Deployment Administration group.

- Failing the Administration Server Back to BIHOST1

After you have tested a manual Administration Server failover, and after you have validated that you can access the administration URLs after the failover, you can then migrate the Administration Server back to its original host.

13.1.1 Failing Over the Administration Server to a Different Host

The following procedure shows how to fail over the Administration Server to a different node (BIHOST2). Note that even after failover, the Administration Server will still use the same Oracle WebLogic Server machine (which is a logical machine, not a physical machine).

This procedure assumes you’ve configured a per-domain Node Manager for the enterprise topology. For more information, see About the Node Manager Configuration in a Typical Enterprise Deployment.

To fail over the Administration Server to a different host:

- Stop the Administration Server.

- Stop the Node Manager in the Administration Server domain directory (ASERVER_HOME).

- Migrate the ADMINVHN virtual IP address to the second host:

  - Run the following command as root on BIHOST1 (where X:Y is the current interface used by ADMINVHN):

    /sbin/ifconfig ethX:Y down

  - Run the following command as root on BIHOST2:

    /sbin/ifconfig <interface:index> ADMINVHN netmask <netmask>

    Note:

    The index should be different from that of BI Server 2 on BIHOST2.

    For example:

    /sbin/ifconfig eth0:1 100.200.140.206 netmask 255.255.255.0

    Note:

    Ensure that the netmask and interface match the available network configuration on BIHOST2.

- Update the routing tables using arping, for example:

  /sbin/arping -q -U -c 3 -I eth0 100.200.140.206

- Start the Node Manager in the Administration Server domain home on BIHOST2.

- Start the Administration Server on BIHOST2.

- Test that you can access the Administration Server on BIHOST2 as follows:

  - Ensure that you can access the Oracle WebLogic Server Administration Console using the following URL:

    http://ADMINVHN:7001/console

  - Check that you can access and verify the status of components in Fusion Middleware Control using the following URL:

    http://ADMINVHN:7001/em
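The virtual IP migration part of this procedure can be sketched as a small dry-run helper. This is only an illustration, not an Oracle-supplied tool: the function name `vip_migration_commands` and its parameters are hypothetical, and the interface names are the example values used in this section. The helper validates the addresses and prints the commands in order, so that an administrator can review them before running them as root on the indicated host.

```python
import ipaddress
import shlex


def vip_migration_commands(vip, netmask, old_iface, new_iface, arp_iface):
    """Return (host, argv) pairs for moving ADMINVHN from BIHOST1 to BIHOST2.

    Nothing is executed here; the caller decides how to run each command.
    """
    # Fail early on malformed values instead of passing them to ifconfig.
    ipaddress.ip_address(vip)
    ipaddress.IPv4Network(f"0.0.0.0/{netmask}")
    return [
        # Release the virtual sub-interface on the original host.
        ("BIHOST1", ["/sbin/ifconfig", old_iface, "down"]),
        # Bring the virtual IP up on the failover host.
        ("BIHOST2", ["/sbin/ifconfig", new_iface, vip, "netmask", netmask]),
        # Announce the new location of the virtual IP to the network.
        ("BIHOST2", ["/sbin/arping", "-q", "-U", "-c", "3", "-I", arp_iface, vip]),
    ]


if __name__ == "__main__":
    # Example values taken from this section; adjust them to your network.
    for host, argv in vip_migration_commands(
        "100.200.140.206", "255.255.255.0", "eth0:1", "eth0:1", "eth0"
    ):
        print(f"[{host}] {shlex.join(argv)}")
```

Keeping the helper as a dry run is deliberate: the Administration Server and Node Manager must already be stopped before the interface is brought down, and that ordering is easier to enforce by hand than in a script.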

13.1.2 Validating Access to the Administration Server on BIHOST2 Through Oracle HTTP Server

After you perform a manual failover of the Administration Server, it is important to verify that you can access the Administration Server, using the standard administration URLs.

From the load balancer, access the following URLs to ensure that you can access the Administration Server when it is running on BIHOST2:

- http://admin.example.com/console

  This URL should display the WebLogic Server Administration Console.

- http://admin.example.com/em

  This URL should display Oracle Enterprise Manager Fusion Middleware Control.
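The two URL checks above could also be scripted. The following is a minimal sketch using only the Python standard library; the function names `admin_urls` and `check_urls` are hypothetical, and the host name is the example value from this section. It treats any 2xx or 3xx response as reachable, which confirms that the load balancer routes to the Administration Server but does not verify that you can log in.

```python
from urllib.request import urlopen

# Paths of the Administration Console and Fusion Middleware Control.
ADMIN_PATHS = ("/console", "/em")


def admin_urls(base_url):
    """Build the administration URLs for a front-end address."""
    return [base_url.rstrip("/") + path for path in ADMIN_PATHS]


def check_urls(urls, fetch=None, timeout=10):
    """Return {url: reachable} for each URL.

    `fetch` maps a URL to an HTTP status code; it can be replaced in tests.
    """
    def default_fetch(url):
        with urlopen(url, timeout=timeout) as response:
            return response.status

    fetch = fetch or default_fetch
    results = {}
    for url in urls:
        try:
            results[url] = 200 <= fetch(url) < 400
        except OSError:
            # URLError and HTTPError are subclasses of OSError.
            results[url] = False
    return results


if __name__ == "__main__":
    for url, ok in check_urls(admin_urls("http://admin.example.com")).items():
        print(("OK   " if ok else "FAIL ") + url)
```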

13.1.3 Configuring Roles for Administration of an Enterprise Deployment

In order to manage each product effectively within a single enterprise deployment domain, you must understand which products require specific administration roles or groups, and how to add a product-specific administration role to the Enterprise Deployment Administration group.

Each enterprise deployment consists of multiple products. Some of the products have specific administration users, roles, or groups that are used to control administration access to each product.

However, for an enterprise deployment, which consists of multiple products, you can use a single LDAP-based authorization provider and a single administration user and group to control access to all aspects of the deployment. For more information about creating the authorization provider and provisioning the enterprise deployment administration user and group, see Creating a New LDAP Authenticator and Provisioning a New Enterprise Deployment Administrator User and Group.

To manage each product effectively within the single enterprise deployment domain, you must know which products require specific administration roles or groups, how to add any product-specific administration roles to the single, common enterprise deployment administration group, and, if necessary, how to add the enterprise deployment administration user to any required product-specific administration groups.

For more information, see the following topics.

13.1.3.1 Adding the Enterprise Deployment Administration User to a Product-Specific Administration Group

For products with a product-specific administration group, use the following procedure to add the enterprise deployment administration user (weblogic_bi) to the group. This will allow you to manage the product using the enterprise manager administrator user:

13.2 Enabling SSL Communication Between the Middle Tier and the Hardware Load Balancer

It is important to understand how to enable SSL communication between the middle tier and the hardware load balancer.

Note:

The following steps are applicable if the hardware load balancer is configured with SSL and the front-end address of the system has been secured accordingly.

- When is SSL Communication Between the Middle Tier and Load Balancer Necessary?

- Generating Self-Signed Certificates Using the utils.CertGen Utility

- Creating an Identity Keystore Using the utils.ImportPrivateKey Utility

- Creating a Trust Keystore Using the Keytool Utility

- Importing the Load Balancer Certificate into the Trust Store

- Adding the Updated Trust Store to the Oracle WebLogic Server Start Scripts

- Configuring Node Manager to Use the Custom Keystores

- Configuring WebLogic Servers to Use the Custom Keystores

13.2.1 When is SSL Communication Between the Middle Tier and Load Balancer Necessary?

In an enterprise deployment, there are scenarios where the software running on the middle tier must access the front-end SSL address of the hardware load balancer. In these scenarios, an appropriate SSL handshake must take place between the load balancer and the invoking servers. This handshake is not possible unless the Administration Server and Managed Servers on the middle tier are started using the appropriate SSL configuration.

13.2.2 Generating Self-Signed Certificates Using the utils.CertGen Utility

This section describes the procedure for creating self-signed certificates on BIHOST1. Create these certificates using the network name or alias of the host.

The directory where keystores and trust keystores are maintained must be on shared storage that is accessible from all nodes so that when the servers fail over (manually or with server migration), the appropriate certificates can be accessed from the failover node. Oracle recommends using central or shared stores for the certificates used for different purposes (for example, SSL set up for HTTP invocations). For more information, see the information on filesystem specifications for the KEYSTORE_HOME location provided in Understanding the Recommended Directory Structure for an Enterprise Deployment.

For information on using trust CA certificates instead, see the information about configuring identity and trust in Oracle Fusion Middleware Administering Security for Oracle WebLogic Server.

About Passwords

The passwords used in this guide are used only as examples. Use secure passwords in a production environment. For example, use passwords that include both uppercase and lowercase characters as well as numbers.

To create self-signed certificates:

13.2.3 Creating an Identity Keystore Using the utils.ImportPrivateKey Utility

This section describes how to create an Identity Keystore on BIHOST1.example.com.

In previous sections, you created certificates and keys that reside on shared storage. In this section, the certificates and private keys created earlier for all hosts and ADMINVHN are imported into a new Identity Store. Make sure that you use a different alias for each certificate/key pair imported.

Note:

The Identity Store is created (if none exists) when you import a certificate and the corresponding key into the Identity Store using the utils.ImportPrivateKey utility.

13.2.4 Creating a Trust Keystore Using the Keytool Utility

To create the Trust Keystore on BIHOST1.example.com.

13.2.5 Importing the Load Balancer Certificate into the Trust Store

For the SSL handshake to behave properly, the load balancer's certificate needs to be added to the WebLogic Server trust store. To add it, follow these steps:

13.2.6 Adding the Updated Trust Store to the Oracle WebLogic Server Start Scripts

The setDomainEnv.sh script is provided by Oracle WebLogic Server and is used to start the Administration Server and the Managed Servers in the domain. To ensure that each server accesses the updated trust store, edit the setDomainEnv.sh script in each of the domain home directories in the enterprise deployment.

13.2.7 Configuring Node Manager to Use the Custom Keystores

To configure the Node Manager to use the custom keystores, add the following lines to the end of the nodemanager.properties files located in both the ASERVER_HOME/nodemanager and MSERVER_HOME/nodemanager directories on all nodes:

KeyStores=CustomIdentityAndCustomTrust
CustomIdentityKeyStoreFileName=Identity KeyStore
CustomIdentityKeyStorePassPhrase=Identity KeyStore Passwd
CustomIdentityAlias=Identity Key Store Alias
CustomIdentityPrivateKeyPassPhrase=Private Key used when creating Certificate

Make sure to use the correct value for CustomIdentityAlias for Node Manager's listen address. For example, in the BIHOST1 MSERVER_HOME, use the alias BIHOST1 and in the ASERVER_HOME on BIHOST1, use the alias ADMINVHN according to the steps in Creating an Identity Keystore Using the utils.ImportPrivateKey Utility.

Example for BIHOST1:

KeyStores=CustomIdentityAndCustomTrust
CustomIdentityKeyStoreFileName=KEYSTORE_HOME/appIdentityKeyStore.jks
CustomIdentityKeyStorePassPhrase=password
CustomIdentityAlias=BIHOST1
CustomIdentityPrivateKeyPassPhrase=password

The passphrase entries in the nodemanager.properties file are encrypted when you start Node Manager as described in Starting the Node Manager on BIHOST1. For security reasons, minimize the time the entries in the nodemanager.properties file are left unencrypted. After you edit the file, restart Node Manager as soon as possible so that the entries are encrypted.
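Because the alias differs between the ASERVER_HOME and MSERVER_HOME copies of nodemanager.properties, it can help to generate the lines rather than edit them by hand. The sketch below is illustrative only: the function names `node_manager_alias` and `keystore_properties` are hypothetical, and the alias rule encoded here is the one described above (ADMINVHN for ASERVER_HOME, the host alias for MSERVER_HOME).

```python
def node_manager_alias(domain_home, host):
    """Pick the CustomIdentityAlias for a Node Manager.

    The ASERVER_HOME Node Manager listens on ADMINVHN; the MSERVER_HOME
    Node Manager on each host uses that host's own alias.
    """
    if domain_home == "ASERVER_HOME":
        return "ADMINVHN"
    if domain_home == "MSERVER_HOME":
        return host
    raise ValueError(f"unknown domain home: {domain_home}")


def keystore_properties(keystore_file, alias, keystore_pass, key_pass):
    """Render the custom keystore lines for nodemanager.properties."""
    lines = [
        "KeyStores=CustomIdentityAndCustomTrust",
        f"CustomIdentityKeyStoreFileName={keystore_file}",
        f"CustomIdentityKeyStorePassPhrase={keystore_pass}",
        f"CustomIdentityAlias={alias}",
        f"CustomIdentityPrivateKeyPassPhrase={key_pass}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example values from this section; "password" is a placeholder only.
    alias = node_manager_alias("MSERVER_HOME", "BIHOST1")
    print(keystore_properties(
        "KEYSTORE_HOME/appIdentityKeyStore.jks", alias, "password", "password"
    ), end="")
```

The generated text still contains clear-text passphrases, so the guidance about restarting Node Manager promptly applies to it as well.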

Note:

The CustomIdentityAlias value will need to be corrected every time the domain is extended after this configuration is performed. An unpack operation will replace the CustomIdentityAlias with the Administration Server's value when the domain configuration is written.

13.2.8 Configuring WebLogic Servers to Use the Custom Keystores

To configure the identity and trust keystores:

13.3 Performing Backups and Recoveries for an Enterprise Deployment

Follow the guidelines in this section to make sure that you back up the necessary directories and configuration data for an Oracle Business Intelligence enterprise deployment.

Note:

Some of the static and run-time artifacts listed in this section are hosted from Network Attached Storage (NAS). If possible, back up and recover these volumes from the NAS filer directly rather than from the application servers.

For general information about backing up and recovering Oracle Fusion Middleware products, see the following sections in Oracle Fusion Middleware Administering Oracle Fusion Middleware:

Table 13-1 lists the static artifacts to back up in a typical Oracle Business Intelligence enterprise deployment.

Table 13-1 Static Artifacts to Back Up in the Oracle Business Intelligence Enterprise Deployment

| Type | Host | Tier |
|---|---|---|
| Database Oracle home | DBHOST1 and DBHOST2 | Data Tier |
| Oracle Fusion Middleware Oracle home | WEBHOST1 and WEBHOST2 | Web Tier |
| Oracle Fusion Middleware Oracle home | BIHOST1 and BIHOST2 (or NAS Filer) | Application Tier |
| Installation-related files | WEBHOST1, WEBHOST2, and shared storage | N/A |

Table 13-2 lists the runtime artifacts to back up in a typical Oracle Business Intelligence enterprise deployment.

Table 13-2 Run-Time Artifacts to Back Up in the Oracle Business Intelligence Enterprise Deployment

| Type | Host | Tier |
|---|---|---|
| Administration Server domain home (ASERVER_HOME) | BIHOST1 (or NAS Filer) | Application Tier |
| Application home (APPLICATION_HOME) | BIHOST1 (or NAS Filer) | Application Tier |
| Oracle RAC databases | DBHOST1 and DBHOST2 | Data Tier |
| Scripts and Customizations | Per host | Application Tier |
| Deployment Plan home (DEPLOY_PLAN_HOME) | BIHOST1 (or NAS Filer) | Application Tier |
| Singleton Data Directory (SDD) | BIHOST1 and BIHOST2 | Application Tier |
| OHS Configuration directory | WEBHOST1 and WEBHOST2 | Web Tier |
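Where a filer-level backup is not available, a file-system artifact from the tables above could be archived from the host itself. The following is a minimal sketch under that assumption, using only the Python standard library; the function name `backup_directory`, the label argument, and the archive naming scheme are hypothetical choices made for this illustration, not an Oracle-documented procedure. It does not cover the databases, which need their own backup tooling.

```python
import tarfile
import time
from pathlib import Path


def backup_directory(source, dest_dir, label):
    """Archive one directory (for example ASERVER_HOME) into dest_dir.

    Returns the path of the timestamped .tar.gz archive that was written.
    """
    source = Path(source)
    dest_dir = Path(dest_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    archive = dest_dir / f"{label}-{stamp}.tar.gz"
    with tarfile.open(str(archive), "w:gz") as tar:
        # Store paths relative to the directory name, not the absolute path.
        tar.add(str(source), arcname=source.name)
    return archive
```

A call such as `backup_directory("/path/to/aserver_home", "/path/to/backups", "ASERVER_HOME")` would write one archive per run; both paths here are placeholders.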

13.4 Using JDBC Persistent Stores for TLOGs and JMS in an Enterprise Deployment

The guidelines for when to use JDBC persistent stores for transaction logs (TLOGs) and JMS, and the procedures for configuring the persistent stores in a supported database are explained in this section.

- About JDBC Persistent Stores for JMS and TLOGs

Oracle Fusion Middleware supports both database-based and file-based persistent stores for Oracle WebLogic Server transaction logs (TLOGs) and JMS. Before deciding on a persistent store strategy for your environment, consider the advantages and disadvantages of each approach.

- Products and Components that use JMS Persistence Stores and TLOGs

- Performance Impact of the TLOGs and JMS Persistent Stores

One of the primary considerations when selecting a storage method for Transaction Logs and JMS persistent stores is the potential impact on performance. This topic provides some guidelines and details to help you determine the performance impact of using JDBC persistent stores for TLOGs and JMS.

- Roadmap for Configuring a JDBC Persistent Store for TLOGs

The following topics describe how to configure a database-based persistent store for transaction logs.

- Roadmap for Configuring a JDBC Persistent Store for JMS

The following topics describe how to configure a database-based persistent store for JMS.

- Creating a User and Tablespace for TLOGs

Before you can create a database-based persistent store for transaction logs, you must create a user and tablespace in a supported database.

- Creating a User and Tablespace for JMS

Before you can create a database-based persistent store for JMS, you must create a user and tablespace in a supported database.

- Creating GridLink Data Sources for TLOGs and JMS Stores

Before you can configure database-based persistent stores for JMS and TLOGs, you must create two data sources: one for the TLOGs persistent store and one for the JMS persistent store.

- Assigning the TLOGs JDBC store to the Managed Servers

After you create the tablespace and user in the database and create the data source, you can assign the TLOGs persistent store to each of the required Managed Servers.

- Creating a JDBC JMS Store

After you create the JMS persistent store user and tablespace in the database, and after you create the data source for the JMS persistent store, you can then use the Administration Console to create the store.

- Assigning the JMS JDBC store to the JMS Servers

After you create the JMS tablespace and user in the database, create the JMS data source, and create the JDBC store, you can then assign the JMS persistent store to each of the required JMS Servers.

- Creating the Required Tables for the JMS JDBC Store

The final step in using a JDBC persistent store for JMS is to create the required JDBC store tables. Perform this task before restarting the Managed Servers in the domain.

13.4.1 About JDBC Persistent Stores for JMS and TLOGs

Oracle Fusion Middleware supports both database-based and file-based persistent stores for Oracle WebLogic Server transaction logs (TLOGs) and JMS. Before deciding on a persistent store strategy for your environment, consider the advantages and disadvantages of each approach.

Note:

Regardless of which storage method you choose, Oracle recommends that for transaction integrity and consistency, you use the same type of store for both JMS and TLOGs.

When you store your TLOGs and JMS data in an Oracle database, you can take advantage of the replication and high availability features of the database. For example, you can use Oracle Data Guard to simplify cross-site synchronization. This is especially important if you are deploying Oracle Fusion Middleware in a disaster recovery configuration.

Storing TLOGs and JMS data in a database also means you don’t have to identify a specific shared storage location for this data. Note, however, that shared storage is still required for other aspects of an enterprise deployment. For example, it is necessary for Administration Server configuration (to support Administration Server failover), for deployment plans, and for adapter artifacts, such as the File/FTP Adapter control and processed files.

If you are storing TLOGs and JMS stores on a shared storage device, then you can protect this data by using the appropriate replication and backup strategy to guarantee zero data loss, and you will potentially realize better system performance. However, the file system protection will always be inferior to the protection provided by an Oracle Database.

For more information about the potential performance impact of using a database-based TLOGs and JMS store, see Performance Impact of the TLOGs and JMS Persistent Stores.

13.4.2 Products and Components that use JMS Persistence Stores and TLOGs

To determine which installed FMW products and components use persistent stores, open the WebLogic Server Console and, in the Domain Structure navigation, go to DomainName > Services > Persistent Stores. The list shows the name of each store, the store type (usually FileStore), the targeted Managed Server, and whether the target is migratable.

The persistent stores with migratable targets are the appropriate candidates for JDBC persistent stores. The listed stores that pertain to MDS are outside the scope of this chapter and should not be considered.

13.4.3 Performance Impact of the TLOGs and JMS Persistent Stores

One of the primary considerations when selecting a storage method for Transaction Logs and JMS persistent stores is the potential impact on performance. This topic provides some guidelines and details to help you determine the performance impact of using JDBC persistent stores for TLOGs and JMS.

Performance Impact of Transaction Logs Versus JMS Stores

For transaction logs, the impact of using a JDBC store is relatively small, because the logs are very transient in nature. Typically, the effect is minimal when compared to other database operations in the system.

On the other hand, JMS database stores can have a higher impact on performance if the application is JMS intensive.

Factors that Affect Performance

There are multiple factors that can affect the performance of a system when it is using JMS DB stores for custom destinations. The main ones are:

- Custom destinations involved and their type

- Payloads being persisted

- Concurrency on the SOA system (producers and consumers for the destinations)

Depending on the effect of each one of the above, different settings can be configured in the following areas to improve performance:

- Data types used for the JMS table (RAW versus LOBs)

- Segment definition for the JMS table (partitions at index and table level)

Impact of JMS Topics

If your system uses Topics intensively, then as concurrency increases, the performance degradation with an Oracle RAC database will increase more than for Queues. In tests conducted by Oracle with JMS, the average performance degradation for different payload sizes and different concurrency was less than 30% for Queues. For topics, the impact was more than 40%. Consider the importance of these destinations from the recovery perspective when deciding whether to use database stores.

Impact of Data Type and Payload Size

When choosing to use the RAW or SecureFiles LOB data type for the payloads, consider the size of the payload being persisted. For example, when payload sizes range between 100b and 20k, then the amount of database time required by SecureFiles LOB is slightly higher than for the RAW data type.

More specifically, when the payload size reaches around 4k, SecureFiles tends to require more database time. This is because 4k is where writes move out-of-row. At around 20k payload size, SecureFiles starts being more efficient. When payload sizes increase to more than 20k, the database time becomes worse for payloads set to the RAW data type.

One additional advantage for SecureFiles is that the database time incurred stabilizes with payload increases starting at 500k. In other words, at that point it is not relevant (for SecureFiles) whether the database is storing 500k, 1MB, or 2MB payloads, because the write is asynchronous and the contention is the same in all cases.

The effect of concurrency (producers and consumers) on the queue’s throughput is similar for both RAW and SecureFiles until the payload sizes reach 50k. For small payloads, the effect of varying concurrency is practically the same, with slightly better scalability for RAW. Scalability is better for SecureFiles when the payloads are above 50k.
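The payload-size guidance above can be condensed into a rule of thumb. The sketch below is only an illustration of that rule: the function name `suggest_payload_type` is hypothetical, the single 20k crossover is a simplification of the ranges described in this topic, and "k" is assumed to mean 1024 bytes. Real sizing decisions should be based on measurements in your own environment.

```python
KIB = 1024

# Approximate payload size at which SecureFiles starts to need less
# database time than RAW, according to the guidance in this topic.
SECUREFILES_CROSSOVER_BYTES = 20 * KIB


def suggest_payload_type(payload_bytes):
    """Suggest a data type for the JMS table from the typical payload size."""
    if payload_bytes < 0:
        raise ValueError("payload size cannot be negative")
    if payload_bytes < SECUREFILES_CROSSOVER_BYTES:
        return "RAW"
    return "SecureFiles LOB"
```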

Impact of Concurrency, Worker Threads, and Database Partitioning

Concurrency and worker threads defined for the persistent store can cause contention in the RAC database at the index and global cache level. Using a reverse index when enabling multiple worker threads in a single server or using multiple Oracle WebLogic Server clusters can reduce this contention. However, if the Oracle Database partitioning option is available, use global hash partitioning for indexes instead. This reduces the contention on the index and the global cache buffer waits, which in turn improves the response time of the application. Partitioning works well in all cases, some of which will not see significant improvements with a reverse index.

13.4.4 Roadmap for Configuring a JDBC Persistent Store for TLOGs

The following topics describe how to configure a database-based persistent store for transaction logs.

13.4.5 Roadmap for Configuring a JDBC Persistent Store for JMS

The following topics describe how to configure a database-based persistent store for JMS.

13.4.6 Creating a User and Tablespace for TLOGs

Before you can create a database-based persistent store for transaction logs, you must create a user and tablespace in a supported database.

13.4.7 Creating a User and Tablespace for JMS

Before you can create a database-based persistent store for JMS, you must create a user and tablespace in a supported database.

13.4.8 Creating GridLink Data Sources for TLOGs and JMS Stores

Before you can configure database-based persistent stores for JMS and TLOGs, you must create two data sources: one for the TLOGs persistent store and one for the JMS persistent store.

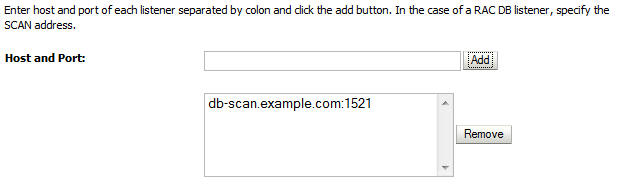

For an enterprise deployment, you should use GridLink data sources for your TLOGs and JMS stores. To create a GridLink data source:

13.4.9 Assigning the TLOGs JDBC store to the Managed Servers

After you create the tablespace and user in the database and create the data source, you can assign the TLOGs persistent store to each of the required Managed Servers.

1. Log in to the Oracle WebLogic Server Administration Console.

2. In the Change Center, click Lock and Edit.

3. In the Domain Structure tree, expand Environment, then Servers.

4. Click the name of the Managed Server you want to use the TLOGs store.

5. Select the Configuration > Services tab.

6. Under Transaction Log Store, select JDBC from the Type menu.

7. From the Data Source menu, select the data source you created for the TLOGs persistent store.

8. In the Prefix Name field, specify a prefix name to form a unique JDBC TLOG store name for each configured JDBC TLOG store.

9. Click Save.

10. Repeat steps 3 to 9 for each of the additional Managed Servers in the cluster.

11. To activate these changes, in the Change Center of the Administration Console, click Activate Changes.
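The Prefix Name must yield a distinct store name for each Managed Server. One way to plan the values before entering them in the console is sketched below. The function name `tlog_prefixes`, the `TLOG_{server}_` pattern, and the server names in the usage note are hypothetical choices for this illustration; use whatever naming convention your site has adopted.

```python
def tlog_prefixes(server_names, template="TLOG_{server}_"):
    """Map each Managed Server name to a JDBC TLOG store prefix.

    Raises ValueError if the template does not produce a distinct
    prefix for every server.
    """
    prefixes = {name: template.format(server=name) for name in server_names}
    if len(set(prefixes.values())) != len(prefixes):
        raise ValueError("prefix names must be unique for each JDBC TLOG store")
    return prefixes
```

For example, `tlog_prefixes(["bi_server1", "bi_server2"])` gives one prefix per server, while a template without the `{server}` placeholder is rejected because every server would share the same prefix.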

13.4.10 Creating a JDBC JMS Store

After you create the JMS persistent store user and tablespace in the database, and after you create the data source for the JMS persistent store, you can then use the Administration Console to create the store.

13.4.11 Assigning the JMS JDBC store to the JMS Servers

After you create the JMS tablespace and user in the database, create the JMS data source, and create the JDBC store, you can then assign the JMS persistent store to each of the required JMS Servers.

1. Log in to the Oracle WebLogic Server Administration Console.

2. In the Change Center, click Lock and Edit.

3. In the Domain Structure tree, expand Services, then Messaging, then JMS Servers.

4. Click the name of the JMS Server that you want to use the persistent store.

5. From the Persistent Store menu, select the JMS persistent store you created earlier.

6. Click Save.

7. Repeat steps 3 to 6 for each of the additional JMS Servers in the cluster.

8. To activate these changes, in the Change Center of the Administration Console, click Activate Changes.