11 Working with A/B Testing

The A/B Testing feature lets you experiment with design and content variations on your website pages. This helps you determine which variations produce the best results before you make permanent changes to your website. Use the feature's in-context, visual method to create A/B tests on any of the pages delivered by Oracle WebCenter Sites.

Note:

A/B test functionality is provided through an integration with Google Analytics. To use the A/B test functionality, you must first register with Google for a Google Analytics account that lets you measure the performance of an A/B test. In addition, you acknowledge that as part of an A/B test, anonymous end user information (that is, information of end users who access your website for the test) is delivered to and may be used by Google under Google’s terms that govern that information.

For example, you might use A/B Testing to explore:

- Which banner image resulted in more leads generated from the home page?

- Which featured article resulted in visitors landing on a promoted section of the website?

- Do visitors spend more time on the home page with a red banner displayed versus a blue banner?

- Does adding a testimonial increase the click-through rate?

- Which page layout resulted in more visitors downloading the featured white paper?

11.1 What are the main tasks to create and run an A/B test?

Creating an A/B test involves tasks such as deciding what to compare, what to measure, and how and when to start and end the test. Once these tasks are complete, you can approve the test, see the experiment in Google, and then view the test result in the A/B test report.

Here are the main tasks:

- Select the variants to test.

  You can compare two or more variants, where variant A serves as the control (base) and is compared with other variants B, C, or D. You can run multiple A/B tests at the same time.

- Choose parameters to measure the test.

  You can use a goal to specify the visitor action the test captures and compares for the variants, and then displays as A/B test results. A goal refers to a specific visitor action that you identify for tracking, and you can specify the type of goal that fits your use case. The default goals are Destination, Duration, Pages per session, and Event. For example, if you choose Destination, you can specify a page like surfing.html. The conversion occurs whenever anyone visits this page.

- Create an A/B test and add variants to it.

  On the management system, you can create the test and use the WYSIWYG A/B test mode to add one or more variants to a selected web or mobile page. You can identify a variant by its color-coding, and easily save or discard its tracked changes. In the following example, the first variant (B) displays in green in both the A/B Test panel (B) and in each change (1) on the page. See How do I create A/B tests?.

- Define criteria to start and end the test.

  - Define criteria on how and when the test starts and ends. Google Analytics shows only the duration of the event. For example, if you select the 14th as the start date and the 28th as the end date, Google Analytics shows the duration as 2 weeks. See How to specify the start and end date of an A/B test?.

  - Specify a statistical confidence level for the results, for example, 95 for a 95% confidence level. See How to select the confidence level of an A/B test?.

  - Click Select Goal and choose a goal in the dialog box that appears. See How to specify the goal to track in an A/B test?.

  - Select the visitors or segments to target for the test. You can display the test to a specified percent of the entire visitor pool or to a specified percent of one or more selected visitor segments. See How to specify visitors or segments to target in A/B tests?.

  Figure 11-2 A/B Test Criteria Pane

- Approve the test (and its dependencies) to see the actual Experiment in Google.

- View test results in the A/B Test Report.

  As visitors view variants and conversions take place, you can view the results for individual A/B tests on the report. This maintains the same variant color-coding you saw while creating test variants. The report lets you see if there are measurable differences in visitor actions between variants. A cookie is left so that visitors see the same variant upon returning to the site. See How do I view A/B test results?.

- Optionally update the site to use the winning variant.

  After displaying the winning variant for a while, you might copy or create an A/B Test to include new variants, giving you the option to refine iterations over time.

11.2 What do you need to do before A/B testing?

You need to complete a number of prerequisites before you can create your A/B test. These include enabling the WCS_ABTest asset type, including the A/B code element in the template, and setting the property abtest.delivery.enabled to true on the delivery instance.

- You must enable the WCS_ABTest asset type.

  A WebCenter Sites administrator or developer can enable the WCS_ABTest asset type. By default, it’s enabled only in the "avisports" and "FirstSite II" sample sites.

  See Administering A/B Testing in Administering Oracle WebCenter Sites.

- You must include the A/B code element inside the template before testing. By default, the code element isn’t included in the template.

  A WebCenter Sites developer adds the A/B code element to templates.

  See Template Assets in Developing with Oracle WebCenter Sites.

- The property abtest.delivery.enabled must be set to true on the delivery instance, that is, the instance that delivers the A/B test variants to site visitors. You must not set the property on instances that are only used to create the A/B tests, because this gives false results. The abtest.delivery.enabled property is in the ABTest category of the wcs_properties.json file.

  See A/B Test Properties in Property Files Reference for Oracle WebCenter Sites.

- Any user who is able to “promote the winner” of an A/B test must be given the MarketingAuthor role. Any user who is able to view A/B test reports and stop tests must be given the MarketingEditor role.

  See Configuring Users, Profiles, and Attributes in Administering Oracle WebCenter Sites.

11.3 How do I create A/B tests?

You can create your A/B test by setting up variants of the web page that you want to test and also set the test criteria to get relevant results.

For information about creating A/B tests, see these topics:

11.3.1 How do I work in A/B test mode?

You’ll find that creating A/B tests in the Contributor interface is similar to editing in Web mode. What’s different between Web mode and A/B test pages is that you can make page changes to a test layer of variants instead of on the site pages; you’ll also see an A/B Test panel displaying on the right.

You can enable A/B test mode from the Contributor Web view by opening the browser page or mobile page on which to add a test, and then clicking the A/B test icon in the upper right of the menu bar. (To see the number of tests that exist for this page, hover over the icon.)

If A/B testing is not enabled for a page, you can still create an A/B test for that page; however, you’ll get a warning message.

If an A/B test is not added to a page, you see a control on the right panel with the text “Create a test”.

If one or more A/B tests already exist for a page, the panel at right shows the A/B Test controls and an option to change to the Criteria controls:

- You can use the A/B Test controls to create and edit tests for a page, and to track changes that you make to a page’s variants.

- You can use the Criteria controls to set the criteria for the selected test, such as its start and end determinants, which conversion to measure, and target and confidence information.

The controls in the toolbar also change when in A/B Test mode:

- The toolbar label changes to A/B Test.

- If the test has not yet been published, a Save icon and a Change Page Layout icon are available.

- An Approve icon and a Delete icon are always available.

- There are no Edit, Preview, Checkin/Checkout, or Reports icons.

11.3.2 How do I modify A/B tests?

You can create A/B tests, make copies of them, and select existing tests. You must perform these tasks in A/B Test Mode.

How do I create an A/B test for a page?

- In the list box containing either the text "Create a test" or a list of existing tests, click the + (plus) button.

- In the Create New Test window, enter a name for the test, then click Submit.

- In the A/B Test toolbar, click Save.

How do I copy A/B tests?

- Click the + (plus) button.

- In the Create New Test window, select a test to copy.

- In the top field, you can change the default name assigned.

- Click Submit.

- In the A/B Test toolbar, click Save.

How do I select A/B tests?

- Open the drop-down list of existing tests and select the one you want.

After creating, copying, or selecting a test, you can work with its variants (described in How to add, select, and edit A/B test variants?) and select its criteria. The criteria you select apply to all variants of the test.

11.3.3 How do I set up A/B test variants?

You can set up A/B test variants by adding, selecting, deleting, or editing them, or changing their page layout.

To set up A/B test variants, see these topics:

11.3.3.1 How to add, select, and edit A/B test variants?

You can define the A/B test by selecting a variant and editing its webpage. Use the color-coding and numbering to identify the variant and its changes.

To add, select, and edit test variants:

11.3.4 How do I set up A/B test criteria?

Setting up A/B test criteria involves a number of tasks, including specifying the start and end date of the test, the confidence level, the goal to track, and the visitors or segments to target.

To set up A/B test criteria, see these topics:

11.3.4.1 How to specify the start and end date of an A/B test?

When creating a test, you can specify a start and end date for it. If you don’t specify a start date, the test starts as soon as it’s published. Once a test starts running, you can view its current results, stop it early, and promote its winner.

See How do I view A/B test results? and How do I promote a test variant to the control (base) webpage?. You can’t edit a test after it’s published.

To specify the start and end date of an A/B test:

11.3.4.2 How to select the confidence level of an A/B test?

When creating a test, you can select a confidence level for its results. This number determines the confidence in the significance of the test results, specifically that conversion differences are caused by variant differences themselves rather than random visitor variations. The larger the number of A/B test visitors, the easier it is to determine statistical significance in variant differences.

If you set a confidence level, the A/B test continues until that confidence level is reached, even beyond the specified end point for the test.

See How is the A/B test confidence level calculated?.

To select a test confidence level:

- In A/B test view, click Criteria in the A/B Test panel (see How do I work in A/B test mode?).

- In the Confidence field, select or enter a confidence level percent. You can select percentages ranging from 85% to 99.9%.

- Click Save.
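As a rough illustration of the rule that a test with a confidence level keeps running past its end point until that level is reached, the following Python sketch expresses the decision as a function. The function and parameter names are hypothetical, and the behavior before the end date (the test simply keeps running) is an assumption made for the sketch, not a description of how WebCenter Sites or Google Analytics implements it.

```python
from datetime import datetime
from typing import Optional


def should_keep_running(
    now: datetime,
    end_date: datetime,
    confidence_reached: float,
    confidence_target: Optional[float],
) -> bool:
    """Return True if the test should continue (hypothetical helper).

    Confidence values are fractions, for example 0.95 for 95%.
    """
    # Assumption: before the end date the test always keeps running.
    if now < end_date:
        return True
    # No confidence level set: the end date alone stops the test.
    if confidence_target is None:
        return False
    # Confidence level set: continue past the end date until it is reached.
    return confidence_reached < confidence_target
```

For example, a test whose end date has passed but that has reached only 90% confidence against a 95% target would still be reported as running by this sketch.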

11.3.4.3 How is the A/B test confidence level calculated?

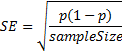

The confidence level is calculated using the Wald method for a binomial distribution. This method consists of two separate calculations. The first calculation determines the confidence interval. The second calculation determines the Z-score, which measures how accurate the results are. For A/B testing, the confidence interval for each variant is based on its conversion rate: the number of visitors who perform the specified action divided by the number of visitors to that page variant.

In the Wald method, the conversion rate is represented by p.

Figure 11-5 Wald Method of Determining Confidence Intervals for Binomial Distributions


The results for each variant are then used to calculate the Z-score. The Z-score measures how accurate the results are. A common Z-score (confidence range) used in A/B testing is a 3% range from the final score. However, this is only a common choice, and any range can be used. The range is then determined for the conversion rate (p) by multiplying the standard error by that percentile range of the standard normal distribution.
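The interval calculation can be sketched in Python as follows. This is a minimal example that assumes the standard textbook form of the Wald interval, p ± z·sqrt(p(1 − p)/n), where n is the number of visitors to the variant and z is the standard normal quantile for the chosen confidence level. The function name and its parameters are hypothetical and are not part of WebCenter Sites.

```python
import math
from statistics import NormalDist  # available in Python 3.8 and later


def wald_interval(conversions: int, visitors: int, confidence: float = 0.95):
    """Return (p, lower, upper) for a variant's conversion rate.

    Hypothetical helper: p is the conversion rate, and the bounds are
    p minus/plus z times the standard error, clipped to [0, 1].
    """
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    p = conversions / visitors
    standard_error = math.sqrt(p * (1 - p) / visitors)
    # Two-sided quantile of the standard normal distribution.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z * standard_error
    return p, max(0.0, p - margin), min(1.0, p + margin)


p, lower, upper = wald_interval(conversions=50, visitors=1000)
print(f"p={p:.3f}, interval=({lower:.4f}, {upper:.4f})")
```

With 50 conversions from 1000 visitors, the conversion rate is 5%, and the 95% interval is roughly 3.6% to 6.4%.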

At this point the results must be determined to be significant; that is, the differences in conversion rates are not due to random variation. The Z-Score is calculated in this way:

The Z-Score is the number of positive standard deviation values between the control and the test mean values. Using the previous standard confidence interval, a statistical significance of 95% is determined when the view event count is greater than 1000 and the Z-Score probability is either greater than 95% or less than 5%.
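The significance check described above can be sketched in Python. The exact Z-score formula the product uses is not shown in this text, so the example assumes the common unpooled two-proportion form: the difference between the variant and control conversion rates divided by the combined standard error. The function names are hypothetical, and treating the view event count as a single number passed in by the caller is also an assumption.

```python
import math
from statistics import NormalDist  # available in Python 3.8 and later


def z_score(control_conv: int, control_views: int,
            variant_conv: int, variant_views: int) -> float:
    """Standard deviations between control and variant conversion rates."""
    p_control = control_conv / control_views
    p_variant = variant_conv / variant_views
    # Unpooled standard error of the difference (assumed form).
    se = math.sqrt(
        p_control * (1 - p_control) / control_views
        + p_variant * (1 - p_variant) / variant_views
    )
    if se == 0:
        return 0.0
    return (p_variant - p_control) / se


def is_significant(z: float, views: int,
                   min_views: int = 1000, level: float = 0.95) -> bool:
    """Apply the rule: more than min_views view events, and the Z-score
    probability is above `level` or below 1 - `level`."""
    probability = NormalDist().cdf(z)
    return views > min_views and (probability > level or probability < 1 - level)


z = z_score(control_conv=50, control_views=2000,
            variant_conv=80, variant_views=2000)
print(round(z, 2), is_significant(z, views=2000))
```

A variant that converts 80 of 2000 visitors against a control that converts 50 of 2000 yields a Z-score of about 2.68, which passes the 95% rule in this sketch.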

11.3.4.4 How to specify the goal to track in an A/B test?

Marketers are required to create and use custom goals in Google Analytics for A/B testing. These goals must be created before setting up A/B tests. You can create goals of your own, or you can use goals created by others.

To specify the goal to track:

11.3.4.5 How to specify visitors or segments to target in A/B tests?

You can define your A/B test to target only a certain percentage of all visitors or a certain percentage of visitors in one or more segments. For example, you might target the test to 50% of all visitors or to 50% of visitors in a segment of visitors 65 years or older.

See Creating Segments.

Within the specified percent, test variants display in equal percentages to the target visitors. For example, if a test targeting 30% of visitors includes two variants (B and C) in addition to the control (A), 10% of visitors would be shown control A, 10% variant B, and 10% variant C.
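The equal split can be checked with a few lines of Python. This is only an arithmetic sketch of the example above; the function name is hypothetical and does not correspond to a WebCenter Sites API.

```python
from typing import Dict, List


def allocate_traffic(target_percent: float, labels: List[str]) -> Dict[str, float]:
    """Split the targeted share of visitors equally across the control
    and all variants (hypothetical helper)."""
    if not labels:
        raise ValueError("at least the control must be listed")
    share = target_percent / len(labels)
    return {label: share for label in labels}


# 30% of visitors, control A plus variants B and C.
print(allocate_traffic(30, ["A", "B", "C"]))
```

The remaining 70% of visitors are outside the test in this example.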

- In A/B test view, click Criteria in the A/B Test panel.

- In the Target field, select Visitors to target any visitors, or Segments to target visitors who are part of a selected segment.

  - Visitors: Enter the percentage of visitors to include in the test in the % of Visitors field that displays.

  - Segments: Select one or more segments:

    - Click the Select Segments button that displays.

    - In the Target field in the Segments window, enter the percentage of visitors in selected segments to include in the test.

    - From the Segment Name column, select one or more segments. Click Sort to sort the segments list by name or modification date. To deselect a segment, click its x in the Selected Segments column.

    - Click Select. Selected segment names display under the Select Segments button.

- Click Save.

11.4 How do I use A/B test results?

You can review your A/B test results in the A/B test report. You can decide whether to promote one of the variant pages you tested to the active website.

To use A/B test results see these topics:

11.4.1 How do I view A/B test results?

Once the test begins, whenever visitors view the control (A) or variant versions of the webpage, their site visit information is captured and these statistics become available in the A/B Test report. This means that, for completed tests, you can compare the relative performance of the base and variant webpage designs. You can then use this information to determine whether or not to promote one of the variants to become the webpage that visitors see.

To view the A/B Test report in the Contributor interface:

11.4.2 How do I approve and publish A/B tests?

Once you’ve completed creating and editing your test's variants and specified its criteria, you’re ready to approve the test for publishing to the delivery system. The test is created in Google Analytics during approval. Once the approval process is complete, you can log into Google and see the experiment and all its settings.

Consider the following:

- Approving the test approves both the test and its variants. As with all webpage approvals, you must approve an asset and all of its dependencies.

- The test must include a goal before you can approve it.

- A/B tests are published to a destination that you select. Before a destination is available to select, it too must be published. If there are no published destinations available, consult your administrator.

- Upon publishing, the test begins at the date and time you specified in the test's criteria. If that date and time has already passed, the test starts immediately.
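The start-time rule in the last item, together with the rule that a test without a start date starts as soon as it is published, can be sketched in Python. The function and parameter names are hypothetical; this only restates the rules and is not how WebCenter Sites is implemented.

```python
from datetime import datetime
from typing import Optional


def effective_start(published_at: datetime,
                    scheduled_start: Optional[datetime] = None) -> datetime:
    """Return when a published test begins (hypothetical helper)."""
    # No start date, or a start date that has already passed:
    # the test starts immediately upon publishing.
    if scheduled_start is None or scheduled_start <= published_at:
        return published_at
    # Otherwise the test waits for the scheduled date and time.
    return scheduled_start
```

For example, a test published on January 20 with a start date of January 14 would begin on January 20, while one scheduled for January 25 would begin on January 25.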

To approve a test's assets for publishing and to start the test:

11.4.3 How do I analyze the A/B test report data?

You can view the A/B test report from the Contributor interface. The report provides the data to compare the relative performance of the test variants' webpage designs to the control (base webpage). You can then use this information to decide whether or not to promote the winning test variant to the control webpage that visitors see.

The A/B test report summarizes the data in a number of different ways to help you make important marketing decisions:

- Summary

- Metrics

- Conversions

- Confidence

The report status and action button are displayed in the header. The example below shows a report that is currently in progress and therefore has the action option of Stop.

Summary Section

Figure 11-7 Summary

| Feature | Description |
|---|---|
| Information area | Shows basic information about the A/B test, such as webpage name, owner, and description. |
| Conversion | Displays the type of conversion that the test is monitoring. |
| Segments | Describes the segmentation if the test includes a segmented user base. All means the user base is not segmented. |
| Stop | Displays when and how the test is set to stop. |
| Chart | Shows the conversion rate for each variant. |

Metrics Bar Section

Figure 11-8 Metrics Bar

| Feature | Description |
|---|---|
| Target | Shows the target percentage of all visitors, the end date, and the confidence percentage for this test. |
| Summary to date | Shows the number of visitors served, the number of conversions, and the confidence percentage reached so far, for all variants combined. If this test allows one variant to be identified as better than the others, this is shown as the winner when the test is complete. |

Conversions Section

Figure 11-9 Conversions

| Feature | Description |
|---|---|
| Select criteria | Normally set to Device. |
| Display | Conversion information for each variant. |

Confidence Section

Figure 11-10 Confidence

The chart shows the number of visitors, the number of conversions, the conversion percentage, the Z-score (see How is the A/B test confidence level calculated?), and the confidence percentage.

11.4.4 How do I promote a test variant to the control (base) webpage?

Once you’ve finished reviewing your A/B test results, you may decide to promote a particular test variant. This action permanently replaces the control (Base webpage) with the winning variant and displays it to all visitors.

To promote the winning test variant:

11.5 How do I delete an A/B test?

Once an A/B test is deleted, the reports generated for that test are also deleted but not the data. If you want to re-use this data, you can create your own reports using the Oracle Endeca Information Discovery (EID) system. A warning message appears if you attempt to delete an A/B test that is currently running.

Note:

The experiment that’s created in Google also remains even though the A/B test has been deleted. If you want to delete this experiment, you’ll have to go to the Google Analytics interface and delete it.

To delete an A/B test, click the Delete option in the A/B test toolbar, select the assets you want to delete, and click Delete. If the A/B test has been published, you’ll get a warning message.