
Oracle Java CAPS Adapter for Batch User's Guide (Java CAPS Documentation)



BatchRecord, the Batch Adapter’s record-processing OTD, allows you to parse (extract) records from an incoming payload or to create an outgoing payload consisting of records. To understand how this OTD operates and how to use it, you first need to know what the terms payload and record mean in this context.

Here, payload refers to the data being processed, held either in an in-memory buffer (a sequence of bytes) or in a stream. A record is not a record in the database sense; it is simply a sequence of bytes with a known, simple structure, for example, a fixed-length or delimited record.

For example, this OTD can parse or create payloads such as the following:

A large data file that contains a number of SAP IDocs, each 1024 bytes long.

A data file that contains a large number of X12 purchase orders, each terminated by a special sequence of bytes.

The record-processing OTD can handle records in the following formats:

Fixed length: Each record in the payload is exactly the same size.

Delimited: Each record is followed by a specific sequence of bytes, for example, CR LF (carriage return followed by line feed).

Single: The entire payload is the record.
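The three formats differ only in how record boundaries are found. The following minimal sketch, assuming the payload is already in memory as a byte array, shows one way to split it in each format. RecordSplitter and its methods are hypothetical names used for illustration; they are not part of the BatchRecord OTD, and the handling of a trailing record with no delimiter is an assumption.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical illustration of the three record formats; NOT the BatchRecord OTD API.
final class RecordSplitter {

    // Fixed length: every record is exactly recordSize bytes.
    static List<byte[]> fixed(byte[] payload, int recordSize) {
        if (recordSize <= 0 || payload.length % recordSize != 0) {
            throw new IllegalArgumentException(
                "payload length " + payload.length + " is not a multiple of " + recordSize);
        }
        List<byte[]> records = new ArrayList<>();
        for (int start = 0; start < payload.length; start += recordSize) {
            records.add(Arrays.copyOfRange(payload, start, start + recordSize));
        }
        return records;
    }

    // Delimited: each record is followed by the delimiter, which is stripped from the result.
    static List<byte[]> delimited(byte[] payload, byte[] delimiter) {
        if (delimiter.length == 0) {
            throw new IllegalArgumentException("delimiter must not be empty");
        }
        List<byte[]> records = new ArrayList<>();
        int start = 0;
        int i = 0;
        while (i <= payload.length - delimiter.length) {
            if (matchesAt(payload, i, delimiter)) {
                records.add(Arrays.copyOfRange(payload, start, i));
                i += delimiter.length;
                start = i;
            } else {
                i++;
            }
        }
        // Assumption: bytes after the last delimiter are returned as a final record.
        if (start < payload.length) {
            records.add(Arrays.copyOfRange(payload, start, payload.length));
        }
        return records;
    }

    // Single: the entire payload is one record.
    static List<byte[]> single(byte[] payload) {
        List<byte[]> records = new ArrayList<>();
        records.add(payload.clone());
        return records;
    }

    private static boolean matchesAt(byte[] data, int offset, byte[] pattern) {
        for (int j = 0; j < pattern.length; j++) {
            if (data[offset + j] != pattern[j]) {
                return false;
            }
        }
        return true;
    }
}
```

In the adapter itself the format is selected by configuration, so a Collaboration would not call a splitter like this directly; the sketch only makes the boundary rules concrete.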

When you use character delimiters with DBCS (double-byte character set) data, specify single-byte characters, or their equivalent hex values, whose values do not coincide with either byte of any double-byte character.
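This rule can be checked mechanically. The sketch below assumes Shift_JIS as the double-byte encoding (other DBCS encodings use different byte ranges) and tests whether each delimiter byte falls in the Shift_JIS lead-byte or trail-byte ranges. For example, the backslash (0x5C) is unsafe because it is the second byte of the character 表 (0x95 0x5C), whereas CR (0x0D) and LF (0x0A) are safe. SjisDelimiterCheck is a hypothetical helper, not part of the adapter.

```java
// Hypothetical helper, assuming Shift_JIS as the DBCS encoding; not part of the adapter.
final class SjisDelimiterCheck {

    // Bytes that can start a double-byte Shift_JIS character (0xF0-0xFC are user-defined).
    private static boolean isLeadByte(int b) {
        return (b >= 0x81 && b <= 0x9F) || (b >= 0xE0 && b <= 0xFC);
    }

    // Bytes that can be the second byte of a double-byte Shift_JIS character.
    private static boolean isTrailByte(int b) {
        return (b >= 0x40 && b <= 0x7E) || (b >= 0x80 && b <= 0xFC);
    }

    // True if no byte of the delimiter can coincide with either byte of a double-byte character.
    static boolean isSafeDelimiter(byte[] delimiter) {
        for (byte d : delimiter) {
            int b = d & 0xFF;  // treat the byte as unsigned
            if (isLeadByte(b) || isTrailByte(b)) {
                return false;
            }
        }
        return delimiter.length > 0;
    }
}
```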

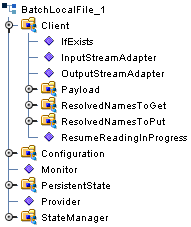

The BatchRecord OTD contains four top-level nodes: Client, Configuration, PersistentState, and StateManager. Expand these nodes to reveal additional sub-nodes.

Figure 9 BatchRecord OTD Structure

Each field node under the Configuration node of the OTD corresponds to one of the adapter’s record-processing configuration parameters.

The following list describes the primary nodes and methods of the record-processing OTD and their functions:

BatchRecord: Represents the OTD’s root node.

Configuration: Each sub-node within this node corresponds to an adapter configuration parameter and contains that parameter’s setting, except for the Parse or Create parameter. See BatchRecord Connectivity Map Properties for details.

Note - For the record-processing OTD, these configuration nodes are read-only. They are provided only so that you can access and check the configuration information at run time.

InputStreamAdapter and OutputStreamAdapter: Allow you to use and control the data-streaming features of the OTD. For details on their operation, see Streaming Data Between Components.

Note - You can transfer data using either the Payload node or data streaming (the InputStreamAdapter and OutputStreamAdapter nodes), but you cannot use both methods in the same OTD.

Record: A properties node that represents either:

The current record just retrieved via the get() method, if the call succeeded

The current record to be added to the data payload when put() is called

Payload: The in-memory buffer containing the data payload byte array you are parsing or creating.

Caution - Use a byte array for the payload in all cases. Failure to do so can cause loss of data.

put(): Adds whatever is currently in the Record node to the data payload. The method returns true if the call is successful.

get(): Retrieves the next record from the data payload (or stream), and populates the Record node with the record retrieved. get() returns true if the call is successful.

finish(): Indicates successful completion of either a parse loop (successive get() calls) or a create loop (successive put() calls).

Note - If an error occurs, call reset() instead. reset() indicates the error and allows the OTD to clean up any internal data structures it no longer needs.

This OTD has the following basic uses:

Parsing a payload: When the payload comes from an external system

Creating a payload: Before sending the payload to an external system

A single instance of the OTD is not designed to serve both purposes at the same time in the same Collaboration. To enforce this restriction, the adapter’s General Settings parameters include a setting called Parse or Create Mode, which you set to either Parse or Create.

The get() and put() methods are the core of the OTD’s functionality. Each record that either method retrieves or adds is assumed to be of the type specified in the adapter configuration, for example, fixed-length or delimited.

The get() method can throw an exception, but generally only when there is a severe failure, such as an attempt to call get() before the payload data (or the stream, if you are streaming) has been set. In normal processing, the best practice is to code the Collaboration to check the return value of every get() call: true means the record was retrieved successfully; false means it was not.
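The following sketch illustrates the recommended pattern: drive the loop from the Boolean value that get() returns, call finish() after a clean run, and call reset() if something goes wrong. FixedLengthParser and ParseLoopExample are hypothetical in-memory stand-ins whose method names mirror those described above; they are not the BatchRecord OTD classes.

```java
import java.util.Arrays;

// Hypothetical stand-in for a parse-mode record OTD with fixed-length records.
final class FixedLengthParser {
    private final int recordSize;
    private byte[] payload;  // null until setPayload() is called
    private int position;
    private byte[] record;   // plays the role of the Record node

    FixedLengthParser(int recordSize) {
        this.recordSize = recordSize;
    }

    void setPayload(byte[] payload) {
        this.payload = payload;
        this.position = 0;
    }

    // Returns true and fills the current record, or false when no full record remains.
    boolean get() {
        if (payload == null) {
            // Severe misuse: get() called before the payload was set.
            throw new IllegalStateException("get() called before payload was set");
        }
        if (position + recordSize > payload.length) {
            record = null;
            return false;  // payload consumed (a trailing partial record is ignored here)
        }
        record = Arrays.copyOfRange(payload, position, position + recordSize);
        position += recordSize;
        return true;
    }

    byte[] getRecord() {
        return record;
    }

    void finish() {  // successful end of the parse loop
        payload = null;
    }

    void reset() {   // error cleanup
        payload = null;
        position = 0;
        record = null;
    }
}

final class ParseLoopExample {
    // Checks get()'s return value on every call instead of relying on exceptions.
    static int processAll(FixedLengthParser parser) {
        int count = 0;
        try {
            while (parser.get()) {
                byte[] rec = parser.getRecord();
                // ... pass rec to the next step of the Collaboration here ...
                count++;
            }
            parser.finish();
        } catch (RuntimeException e) {
            parser.reset();
            throw e;
        }
        return count;
    }
}
```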

The adapter verifies that each call is valid for your mode setting. For example, calling put() in Parse mode causes the adapter to throw an exception with an error message explaining why. Likewise, calling get() in Create mode results in an error.

The adapter enforces these restrictions for the following reasons:

If you are processing an inbound payload, you call get() to extract records from it (parsing). Calling put() at this point makes little sense: it would overwrite the payload and destroy the data you are trying to extract.

Conversely, when you are creating a payload by calling put(), there is nothing to extract or parse, so you cannot call get().
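The mode check described above amounts to a guard at the start of each method. The sketch below is a hypothetical ModeGuard class, assuming the mode is fixed when the OTD instance is configured; the exception type and messages are illustrative and are not the adapter’s actual error messages.

```java
// Hypothetical guard modeling the Parse or Create Mode check; not the adapter's code.
final class ModeGuard {
    enum Mode { PARSE, CREATE }

    private final Mode mode;

    ModeGuard(Mode mode) {
        this.mode = mode;
    }

    // Called at the start of get(): extracting records is only legal in Parse mode.
    void checkGet() {
        if (mode != Mode.PARSE) {
            throw new IllegalStateException("get() is not allowed in Create mode");
        }
    }

    // Called at the start of put(): in Parse mode, put() would overwrite the payload being parsed.
    void checkPut() {
        if (mode != Mode.CREATE) {
            throw new IllegalStateException(
                "put() is not allowed in Parse mode: it would overwrite the payload being parsed");
        }
    }
}
```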

As a result, you can place the OTD on either the source or the destination side of a given Collaboration and use it for either parsing or creating a payload. However, you cannot parse and create with the same OTD instance at the same time. Implement your OTD in a Collaboration using the Java CAPS Collaboration Rules Editor.

To send payload data to an external system, place the OTD on the outbound side of the Collaboration that interfaces with that system. Successive calls to put() build up the payload data in the format defined in the adapter configuration.

Once all the records have been added to the payload, drag and drop the payload onto the node or nodes that represent the Collaboration’s outbound destination. Alternatively, you can set an output stream as the payload’s destination.

When you build a data payload, you must take into account the type and format of the data you are sending. The adapter supports the following formats:

Single Record: This type of payload represents a single record to be sent. Each call to put() appends the data to the payload, so the payload grows by the size of the data being put and remains one contiguous stream of bytes.

Fixed-size Records: This type of payload is made up of records that are all exactly the same size. Calling put() with a record that is not the specified size causes an exception to be thrown.

Delimited Records: This type of payload is made up of records that each end with a delimiter. Each record can be a different size. Do not include the delimiter in the data you pass to put(); the adapter adds delimiters automatically.

User Defined: In this type of payload, the semantics are fully controlled by your own implementation.
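The following sketch shows how put() could grow a payload under each of the first three formats; User Defined is omitted because its behavior is up to your implementation. PayloadBuilder is a hypothetical class, not the BatchRecord OTD, and it uses a ByteArrayOutputStream as the in-memory buffer.

```java
import java.io.ByteArrayOutputStream;

// Hypothetical sketch of payload creation per format; not the BatchRecord OTD implementation.
final class PayloadBuilder {
    enum Format { SINGLE, FIXED, DELIMITED }

    private final Format format;
    private final int recordSize;    // used only for FIXED
    private final byte[] delimiter;  // used only for DELIMITED
    private final ByteArrayOutputStream payload = new ByteArrayOutputStream();

    PayloadBuilder(Format format, int recordSize, byte[] delimiter) {
        this.format = format;
        this.recordSize = recordSize;
        this.delimiter = delimiter == null ? new byte[0] : delimiter.clone();
    }

    boolean put(byte[] record) {
        switch (format) {
            case SINGLE:
                // One contiguous run of bytes: each put() simply appends.
                payload.write(record, 0, record.length);
                break;
            case FIXED:
                if (record.length != recordSize) {
                    throw new IllegalArgumentException(
                        "record is " + record.length + " bytes, expected " + recordSize);
                }
                payload.write(record, 0, record.length);
                break;
            case DELIMITED:
                // The caller passes the bare record; the delimiter is appended here.
                payload.write(record, 0, record.length);
                payload.write(delimiter, 0, delimiter.length);
                break;
        }
        return true;
    }

    byte[] getPayload() {
        return payload.toByteArray();
    }
}
```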

To process payload data inbound from an external system, map the data to the Payload node in the OTD (from the Collaboration Rules Editor). Alternatively, you can specify an input stream as the source.

Either way, each successive call to get() extracts the next record from the payload. The type of record extracted depends on the parameters you set in the adapter’s configuration, for example, fixed size or delimited.

You must design the parsing Collaboration with instructions for handling each extracted record. Typically, each record is sent to another Collaboration, where a custom OTD describes the record format and further processing takes place.

A payload can be fully consumed: after enough successive calls to get(), all the records in the payload have been retrieved, and any further calls to get() return the Boolean value false. You must design the business rules in the Collaboration to handle this case.
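When the source is an input stream rather than the Payload node, get() has to read until it finds the next delimiter or reaches the end of the stream. The sketch below assumes CR LF delimited records; StreamingCrLfReader is a hypothetical class, not the OTD’s InputStreamAdapter. Returning false once the stream is exhausted corresponds to the fully consumed case described above.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.util.Arrays;

// Hypothetical streaming reader for CR LF delimited records; not the BatchRecord OTD.
final class StreamingCrLfReader {
    private final InputStream in;
    private byte[] record;  // plays the role of the Record node

    StreamingCrLfReader(InputStream in) {
        this.in = in;
    }

    // Reads the next record; returns false once the stream is fully consumed.
    boolean get() throws IOException {
        ByteArrayOutputStream buf = new ByteArrayOutputStream();
        int prev = -1;
        int b;
        while ((b = in.read()) != -1) {
            if (prev == '\r' && b == '\n') {
                byte[] withCr = buf.toByteArray();
                record = Arrays.copyOf(withCr, withCr.length - 1);  // drop the buffered CR
                return true;
            }
            buf.write(b);
            prev = b;
        }
        if (buf.size() > 0) {
            record = buf.toByteArray();  // assumption: an unterminated final record is returned
            return true;
        }
        record = null;
        return false;
    }

    byte[] getRecord() {
        return record;
    }
}
```

Because the sketch reads one byte at a time, it is only efficient on a buffered stream, so wrap a raw file or socket stream in a java.io.BufferedInputStream first.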

If you use the record-processing OTD with data streaming, take care not to overwrite the output files. If the OTD continually streams to a BatchLocalFile OTD that uses the same output file name, each new output file can overwrite the previous one.

To avoid this problem, you must either use file sequence numbering or change the output file names in the Collaboration Rules. Sequence numbering allows the BatchLocalFile OTD to distinguish individual files by adding a sequence number to each file name. Alternatively, by using target file names, post-transfer file names, or both, you can give each output file a different name.
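The effect of sequence numbering can be illustrated outside the adapter. In the sketch below, each payload goes to a new file whose name carries an increasing sequence number, and the file is opened with StandardOpenOption.CREATE_NEW so that an existing file is never silently replaced. SequencedWriter and the name.N.ext naming pattern are hypothetical; the BatchLocalFile OTD’s own numbering is configured through its Sequence Numbering properties.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical illustration of sequence-numbered output; not the BatchLocalFile OTD.
final class SequencedWriter {
    private final Path dir;
    private final String baseName;
    private final String extension;
    private long next;

    SequencedWriter(Path dir, String baseName, String extension, long start) {
        this.dir = dir;
        this.baseName = baseName;
        this.extension = extension;
        this.next = start;
    }

    // Writes one payload to a new file such as out.1.dat, out.2.dat, and so on.
    // CREATE_NEW makes the write fail with FileAlreadyExistsException instead of overwriting.
    Path write(byte[] payload) throws IOException {
        Path target = dir.resolve(baseName + "." + next + "." + extension);
        Files.write(target, payload, StandardOpenOption.CREATE_NEW, StandardOpenOption.WRITE);
        next++;
        return target;
    }
}
```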

For more information on how to use these features, see Sequence Numbering and Pre/Post File Transfer Commands.