4 Using Property Graphs in a Big Data Environment

This chapter provides conceptual and usage information about creating, storing, and working with property graph data in a Big Data environment.

4.1 About Property Graphs

Property graphs allow an easy association of properties (key-value pairs) with graph vertices and edges, and they enable analytical operations based on relationships across a massive set of data.

4.1.1 What Are Property Graphs?

A property graph consists of a set of objects or vertices, and a set of arrows or edges connecting the objects. Vertices and edges can have multiple properties, which are represented as key-value pairs.

Each vertex has a unique identifier and can have:

- A set of outgoing edges
- A set of incoming edges
- A collection of properties

Each edge has a unique identifier and can have:

- An outgoing vertex
- An incoming vertex
- A text label that describes the relationship between the two vertices
- A collection of properties

Figure 4-1 illustrates a very simple property graph with two vertices and one edge. The two vertices have identifiers 1 and 2. Both vertices have properties name and age. The edge is from the outgoing vertex 1 to the incoming vertex 2. The edge has a text label knows and a property type identifying the type of relationship between vertices 1 and 2.
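The structure just described can be sketched in a few lines of plain Java. This is only an illustration of the data model, not the Oracle or TinkerPop API: the class SimplePropertyGraph and all of its members are hypothetical names, and the property values (Alice, Bob, 31, 27, friends) and the edge identifier 3 are borrowed from Example 4-1 later in this chapter.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Illustrative in-memory property graph; not part of any Oracle or TinkerPop API. */
final class SimplePropertyGraph {

    static final class Vertex {
        final long id;
        final Map<String, Object> properties = new LinkedHashMap<>();
        final List<Edge> outgoing = new ArrayList<>();
        final List<Edge> incoming = new ArrayList<>();

        Vertex(long id) {
            this.id = id;
        }
    }

    static final class Edge {
        final long id;
        final Vertex out;     // vertex the edge starts from
        final Vertex in;      // vertex the edge points to
        final String label;   // describes the relationship
        final Map<String, Object> properties = new LinkedHashMap<>();

        Edge(long id, Vertex out, Vertex in, String label) {
            this.id = id;
            this.out = out;
            this.in = in;
            this.label = label;
        }
    }

    private final Map<Long, Vertex> vertices = new LinkedHashMap<>();
    private final Map<Long, Edge> edges = new LinkedHashMap<>();

    Vertex addVertex(long id) {
        Vertex v = new Vertex(id);
        vertices.put(id, v);
        return v;
    }

    Edge addEdge(long id, Vertex out, Vertex in, String label) {
        Edge e = new Edge(id, out, in, label);
        edges.put(id, e);
        out.outgoing.add(e);   // register the edge on both endpoints
        in.incoming.add(e);
        return e;
    }

    Vertex getVertex(long id) {
        return vertices.get(id);
    }

    /** Builds the two-vertex, one-edge graph described for Figure 4-1. */
    static SimplePropertyGraph figure41() {
        SimplePropertyGraph g = new SimplePropertyGraph();
        Vertex alice = g.addVertex(1);
        alice.properties.put("name", "Alice");
        alice.properties.put("age", 31);
        Vertex bob = g.addVertex(2);
        bob.properties.put("name", "Bob");
        bob.properties.put("age", 27);
        Edge knows = g.addEdge(3, alice, bob, "knows");
        knows.properties.put("type", "friends");
        return g;
    }

    public static void main(String[] args) {
        SimplePropertyGraph g = figure41();
        for (Edge e : g.edges.values()) {
            System.out.println(e.out.properties.get("name") + " -[" + e.label + " "
                + e.properties + "]-> " + e.in.properties.get("name"));
        }
    }
}
```

Keeping the incoming and outgoing edge lists on each vertex makes neighbor traversal cheap, which is the access pattern most graph analytics rely on.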

No standard is available for the Oracle Big Data Spatial and Graph property graph data model, but it is similar to the W3C standards-based Resource Description Framework (RDF) graph data model. The property graph data model is simpler and much less precise than RDF. These differences make it a good candidate for use cases such as these:

- Identifying influencers in a social network
- Predicting trends and customer behavior
- Discovering relationships based on pattern matching
- Identifying clusters to customize campaigns

4.1.2 What Is Big Data Support for Property Graphs?

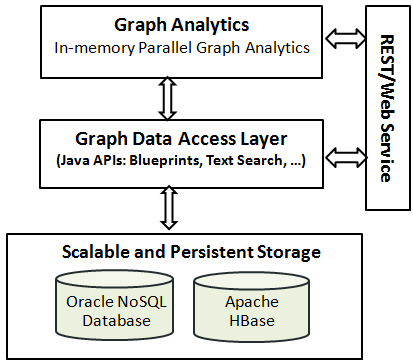

Property graphs are supported for Big Data in Hadoop and in Oracle NoSQL Database. This support consists of a data access layer and an analytics layer. A choice of databases in Hadoop provides scalable and persistent storage management.

Figure 4-2 provides an overview of the Oracle property graph architecture.

Figure 4-2 Oracle Property Graph Architecture


4.1.2.1 In-Memory Analyst

The in-memory analyst layer enables you to analyze property graphs using parallel in-memory execution. It provides over 35 analytic functions, including path calculation, ranking, community detection, and recommendations.

4.1.2.2 Data Access Layer

The data access layer provides a set of Java APIs that you can use to create and drop property graphs, add and remove vertices and edges, search for vertices and edges using key-value pairs, create text indexes, and perform other manipulations. The Java APIs include an implementation of the TinkerPop Blueprints graph interfaces for the property graph data model. The APIs also integrate with Apache Lucene and Apache SolrCloud, which are widely adopted open-source text indexing and search engines.

4.1.2.3 Storage Management

You can store your property graphs in either Oracle NoSQL Database or Apache HBase. Both databases are mature and scalable, and support efficient navigation, querying, and analytics. Both use tables to model the vertices and edges of property graphs.

4.2 About Property Graph Data Formats

The following graph formats are supported: GraphML, GraphSON, GML, and the Oracle flat file format.

4.2.1 GraphML Data Format

The GraphML file format uses XML to describe graphs. Example 4-1 shows a GraphML description of the property graph shown in Figure 4-1.

Example 4-1 GraphML Description of a Simple Property Graph

<?xml version="1.0" encoding="UTF-8"?>

<graphml xmlns="http://graphml.graphdrawing.org/xmlns">

<key id="name" for="node" attr.name="name" attr.type="string"/>

<key id="age" for="node" attr.name="age" attr.type="int"/>

<key id="type" for="edge" attr.name="type" attr.type="string"/>

<graph id="PG" edgedefault="directed">

<node id="1">

<data key="name">Alice</data>

<data key="age">31</data>

</node>

<node id="2">

<data key="name">Bob</data>

<data key="age">27</data>

</node>

<edge id="3" source="1" target="2" label="knows">

<data key="type">friends</data>

</edge>

</graph>

</graphml>
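Because GraphML is plain XML, a file such as Example 4-1 can be inspected with the XML parser that ships with the JDK. The following is a minimal sketch, not the loader used by the product: the class GraphMLReaderSketch and its methods are hypothetical, and it reads only the id, source, target, and label attributes and the data elements that appear in Example 4-1.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical helper that lists the vertices and edges of a GraphML document. */
final class GraphMLReaderSketch {

    // The content of Example 4-1, embedded so that the sketch is self-contained.
    static final String SAMPLE =
        "<?xml version=\"1.0\" encoding=\"UTF-8\"?>"
        + "<graphml xmlns=\"http://graphml.graphdrawing.org/xmlns\">"
        + "<key id=\"name\" for=\"node\" attr.name=\"name\" attr.type=\"string\"/>"
        + "<key id=\"age\" for=\"node\" attr.name=\"age\" attr.type=\"int\"/>"
        + "<key id=\"type\" for=\"edge\" attr.name=\"type\" attr.type=\"string\"/>"
        + "<graph id=\"PG\" edgedefault=\"directed\">"
        + "<node id=\"1\"><data key=\"name\">Alice</data><data key=\"age\">31</data></node>"
        + "<node id=\"2\"><data key=\"name\">Bob</data><data key=\"age\">27</data></node>"
        + "<edge id=\"3\" source=\"1\" target=\"2\" label=\"knows\">"
        + "<data key=\"type\">friends</data></edge>"
        + "</graph></graphml>";

    /** Returns one descriptive line per node element and per edge element. */
    static List<String> describe(String graphml) {
        Document doc;
        try {
            doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new ByteArrayInputStream(graphml.getBytes(StandardCharsets.UTF_8)));
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a parsable GraphML document", e);
        }
        List<String> lines = new ArrayList<>();
        NodeList nodes = doc.getElementsByTagName("node");
        for (int i = 0; i < nodes.getLength(); i++) {
            Element n = (Element) nodes.item(i);
            lines.add("vertex " + n.getAttribute("id") + " " + dataOf(n));
        }
        NodeList edges = doc.getElementsByTagName("edge");
        for (int i = 0; i < edges.getLength(); i++) {
            Element e = (Element) edges.item(i);
            lines.add("edge " + e.getAttribute("id") + " " + e.getAttribute("source")
                + " -" + e.getAttribute("label") + "-> " + e.getAttribute("target")
                + " " + dataOf(e));
        }
        return lines;
    }

    /** Collects the data children of a node or edge as key/value pairs. */
    private static Map<String, String> dataOf(Element parent) {
        Map<String, String> properties = new LinkedHashMap<>();
        NodeList data = parent.getElementsByTagName("data");
        for (int i = 0; i < data.getLength(); i++) {
            Element d = (Element) data.item(i);
            properties.put(d.getAttribute("key"), d.getTextContent());
        }
        return properties;
    }

    public static void main(String[] args) {
        for (String line : describe(SAMPLE)) {
            System.out.println(line);
        }
    }
}
```

The sketch keeps every property value as a string; a fuller reader would consult the key declarations (attr.type) to convert values such as age to numbers.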

4.2.2 GraphSON Data Format

The GraphSON file format is based on JavaScript Object Notation (JSON) for describing graphs. Example 4-2 shows a GraphSON description of the property graph shown in Figure 4-1.

See Also:

"GraphSON Reader and Writer Library" at

https://github.com/tinkerpop/blueprints/wiki/GraphSON-Reader-and-Writer-Library

Example 4-2 GraphSON Description of a Simple Property Graph

{

"graph": {

"mode":"NORMAL",

"vertices": [

{

"name": "Alice",

"age": 31,

"_id": "1",

"_type": "vertex"

},

{

"name": "Bob",

"age": 27,

"_id": "2",

"_type": "vertex"

}

],

"edges": [

{

"type": "friends",

"_id": "3",

"_type": "edge",

"_outV": "1",

"_inV": "2",

"_label": "knows"

}

]

}

}

4.2.3 GML Data Format

The Graph Modeling Language (GML) file format uses ASCII to describe graphs. Example 4-3 shows a GML description of the property graph shown in Figure 4-1.

See Also:

"GML: A Portable Graph File Format" by Michael Himsolt at

Example 4-3 GML Description of a Simple Property Graph

graph [

comment "Simple property graph"

directed 1

IsPlanar 1

node [

id 1

label "1"

name "Alice"

age 31

]

node [

id 2

label "2"

name "Bob"

age 27

]

edge [

source 1

target 2

label "knows"

type "friends"

]

]

4.2.4 Oracle Flat File Format

The Oracle flat file format exclusively describes property graphs. It is more concise and provides better data type support than the other file formats. The Oracle flat file format uses two files for a graph description, one for the vertices and one for edges. Commas separate the fields of the records.

Example 4-4 shows the Oracle flat files that describe the property graph shown in Figure 4-1.

Example 4-4 Oracle Flat File Description of a Simple Property Graph

Vertex file:

    1,name,1,Alice,,
    1,age,2,,31,
    2,name,1,Bob,,
    2,age,2,,27,

Edge file:

1,1,2,knows,type,1,friends,,
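To make the record layout concrete, the following sketch parses the vertex records of Example 4-4. The field meanings are inferred from that example alone and are an assumption, not a format specification: the first field is read as the vertex ID, the second as the property key, the third as a type code (1 for a string held in the fourth field, 2 for an integer held in the fifth field), and the sixth field is left uninterpreted because it is empty in the example. The class and method names are hypothetical.

```java
/** Hypothetical parser for the vertex records shown in Example 4-4. */
final class FlatFileRecordSketch {

    static final class VertexProperty {
        final long vertexId;
        final String key;
        final Object value;

        VertexProperty(long vertexId, String key, Object value) {
            this.vertexId = vertexId;
            this.key = key;
            this.value = value;
        }

        @Override
        public String toString() {
            return "vertex " + vertexId + ": " + key + " = " + value
                + " (" + value.getClass().getSimpleName() + ")";
        }
    }

    static VertexProperty parseVertexLine(String line) {
        // A limit of -1 keeps the trailing empty fields that split() would otherwise drop.
        String[] fields = line.split(",", -1);
        if (fields.length != 6) {
            throw new IllegalArgumentException("Expected 6 fields: " + line);
        }
        long vertexId = Long.parseLong(fields[0]);
        String key = fields[1];
        int typeCode = Integer.parseInt(fields[2]);
        Object value;
        switch (typeCode) {
            case 1:   // assumed: string value in the fourth field
                value = fields[3];
                break;
            case 2:   // assumed: integer value in the fifth field
                value = Integer.valueOf(fields[4]);
                break;
            default:
                throw new IllegalArgumentException(
                    "Type code not covered by this sketch: " + typeCode);
        }
        return new VertexProperty(vertexId, key, value);
    }

    public static void main(String[] args) {
        String[] vertexFile = {
            "1,name,1,Alice,,",
            "1,age,2,,31,",
            "2,name,1,Bob,,",
            "2,age,2,,27,"
        };
        for (String line : vertexFile) {
            System.out.println(parseVertexLine(line));
        }
    }
}
```

Each record carries exactly one property, so a vertex with several properties spans several lines that share the same vertex ID.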

4.4 Using Java APIs for Property Graph Data

Creating a property graph involves using the Java APIs to create the property graph and objects in it.

4.4.1 Overview of the Java APIs

The Java APIs that you can use for property graphs include:

4.4.1.1 Oracle Big Data Spatial and Graph Java APIs

Oracle Big Data Spatial and Graph property graph support provides database-specific APIs for Apache HBase and Oracle NoSQL Database. The data access layer API (oracle.pg.*) implements TinkerPop Blueprints APIs, text search, and indexing for property graphs stored in Oracle NoSQL Database and Apache HBase.

To use the Oracle Big Data Spatial and Graph API, import the classes into your Java program:

import oracle.pg.nosql.*; // or oracle.pg.hbase.*

import oracle.pgx.config.*;

import oracle.pgx.common.types.*;

Also include TinkerPop Blueprints Java APIs.

See Also:

Oracle Big Data Spatial and Graph Java API Reference

4.4.1.2 TinkerPop Blueprints Java APIs

TinkerPop Blueprints supports the property graph data model. The API provides utilities for manipulating graphs, which you use primarily through the Big Data Spatial and Graph data access layer Java APIs.

To use the Blueprints APIs, import the classes into your Java program:

    import com.tinkerpop.blueprints.Vertex;
    import com.tinkerpop.blueprints.Edge;

See Also:

"Blueprints: A Property Graph Model Interface API" at

http://www.tinkerpop.com/docs/javadocs/blueprints/2.3.0/index.html

4.4.1.3 Apache Hadoop Java APIs

The Apache Hadoop Java APIs enable you to write your Java code as a MapReduce program that runs within the Hadoop distributed framework.

To use the Hadoop Java APIs, import the classes into your Java program. For example:

import org.apache.hadoop.conf.Configuration;

See Also:

"Apache Hadoop Main 2.5.0-cdh5.3.2 API" at

4.4.1.4 Oracle NoSQL Database Java APIs

The Oracle NoSQL Database APIs enable you to create and populate a key-value (KV) store, and provide interfaces to Hadoop, Hive, and Oracle NoSQL Database.

To use Oracle NoSQL Database as the graph data store, import the classes into your Java program. For example:

    import oracle.kv.*;
    import oracle.kv.table.TableOperation;

See Also:

"Oracle NoSQL Database Java API Reference" at

4.4.1.5 Apache HBase Java APIs

The Apache HBase APIs enable you to create and manipulate key-value pairs.

To use HBase as the graph data store, import the classes into your Java program. For example:

    import org.apache.hadoop.hbase.*;
    import org.apache.hadoop.hbase.client.*;
    import org.apache.hadoop.hbase.filter.*;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.conf.Configuration;

See Also:

"HBase 0.98.6-cdh5.3.2 API" at

http://archive.cloudera.com/cdh5/cdh/5/hbase/apidocs/index.html?overview-summary.html

4.4.2 Parallel Loading of Graph Data

A Java API is provided for performing parallel loading of graph data.

Given a set of vertex files (or input streams) and a set of edge files (or input streams), the data can be split into multiple chunks and loaded into the database in parallel. The number of chunks is determined by the degree of parallelism (DOP) specified by the user.

Parallelism is achieved with Splitter threads, which split the vertex and edge flat files into multiple chunks, and Loader threads, which load each chunk into the database using separate database connections. Java pipes connect the Splitter and Loader threads: a Splitter writes to a PipedOutputStream, and a Loader reads from the connected PipedInputStream.
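The Splitter and Loader arrangement can be pictured with a small, self-contained sketch built only on java.io pipes. It is not the product's implementation: the class PipedLoadSketch is hypothetical, the round-robin distribution of lines is an assumed splitting policy, and each loader merely counts the records it receives where a real loader would write them to the database.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.PipedInputStream;
import java.io.PipedOutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical illustration of one splitter thread feeding dop loader threads. */
final class PipedLoadSketch {

    /** Distributes the lines over dop pipes and returns how many lines the loaders consumed. */
    static long load(final List<String> lines, final int dop) {
        if (dop <= 0) {
            throw new IllegalArgumentException("dop must be positive");
        }
        final PipedOutputStream[] sinks = new PipedOutputStream[dop];
        final AtomicLong loaded = new AtomicLong();
        List<Thread> loaders = new ArrayList<>();
        try {
            for (int i = 0; i < dop; i++) {
                sinks[i] = new PipedOutputStream();
                final PipedInputStream source = new PipedInputStream(sinks[i]);
                Thread loader = new Thread(() -> {
                    try (BufferedReader in = new BufferedReader(
                            new InputStreamReader(source, StandardCharsets.UTF_8))) {
                        while (in.readLine() != null) {
                            // A real loader would insert the record into the database here.
                            loaded.incrementAndGet();
                        }
                    } catch (IOException e) {
                        throw new UncheckedIOException(e);
                    }
                });
                loader.start();
                loaders.add(loader);
            }
            Thread splitter = new Thread(() -> {
                try {
                    for (int n = 0; n < lines.size(); n++) {
                        byte[] record = (lines.get(n) + "\n").getBytes(StandardCharsets.UTF_8);
                        sinks[n % dop].write(record);   // assumed policy: round-robin
                    }
                } catch (IOException e) {
                    throw new UncheckedIOException(e);
                } finally {
                    // Closing every pipe signals end-of-data to the loaders.
                    for (PipedOutputStream sink : sinks) {
                        try {
                            sink.close();
                        } catch (IOException ignored) {
                            // nothing useful to do while shutting down
                        }
                    }
                }
            });
            splitter.start();
            splitter.join();
            for (Thread loader : loaders) {
                loader.join();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("Interrupted while loading", e);
        }
        return loaded.get();
    }

    public static void main(String[] args) {
        List<String> records = Arrays.asList(
            "1,name,1,Alice,,", "1,age,2,,31,", "2,name,1,Bob,,", "2,age,2,,27,");
        System.out.println("Records loaded: " + load(records, 2));
    }
}
```

A pipe blocks the writer when its buffer is full, so a slow loader naturally throttles the splitter instead of letting unread data accumulate in memory.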

The simplest use of the data loading API is to specify a property graph instance, one vertex file, one edge file, and a DOP.

The following example loads graph data stored in a vertex file and an edge file in the optimized Oracle flat file format, and executes the load with a DOP of 48.

    OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();
    String vfile = "../../data/connections.opv";
    String efile = "../../data/connections.ope";
    opgdl.loadData(opg, vfile, efile, 48);

4.4.2.1 Parallel Data Loading Using Partitions

The data loading API can also load the data into the database using multiple partitions. This API requires the property graph, the vertex file, the edge file, the DOP, the total number of partitions, and the partition offset (from 0 to the total number of partitions - 1). For example, to load the data using two partitions, the partition offsets should be 0 and 1. That is, two data loading API calls are needed to fully load the graph, and the only difference between the two calls is the partition offset (0 and 1).

The following code fragment loads the graph data using four partitions. Each call to the data loader can be processed using a separate Java client, on a single system or from multiple systems.

    OraclePropertyGraph opg = OraclePropertyGraph.getInstance(
        args, szGraphName);

    int totalPartitions = 4;
    int dop = 32; // degree of parallelism for each client

    String szOPVFile = "../../data/connections.opv";
    String szOPEFile = "../../data/connections.ope";

    SimpleLogBasedDataLoaderListenerImpl dll =
        SimpleLogBasedDataLoaderListenerImpl.getInstance(
            100 /* frequency */,
            true /* continue on error */);

    // Run the data loading using 4 partitions (each call can be run from a
    // separate Java client)

    // Partition 1
    OraclePropertyGraphDataLoader opgdlP1 = OraclePropertyGraphDataLoader.getInstance();
    opgdlP1.loadData(opg, szOPVFile, szOPEFile, dop,
        totalPartitions /* total number of partitions, default 1 */,
        0 /* partition to load (from 0 to totalPartitions - 1), default 0 */,
        dll);

    // Partition 2
    OraclePropertyGraphDataLoader opgdlP2 = OraclePropertyGraphDataLoader.getInstance();
    opgdlP2.loadData(opg, szOPVFile, szOPEFile, dop,
        totalPartitions /* total number of partitions, default 1 */,
        1 /* partition to load (from 0 to totalPartitions - 1), default 0 */,
        dll);

    // Partition 3
    OraclePropertyGraphDataLoader opgdlP3 = OraclePropertyGraphDataLoader.getInstance();
    opgdlP3.loadData(opg, szOPVFile, szOPEFile, dop,
        totalPartitions /* total number of partitions, default 1 */,
        2 /* partition to load (from 0 to totalPartitions - 1), default 0 */,
        dll);

    // Partition 4
    OraclePropertyGraphDataLoader opgdlP4 = OraclePropertyGraphDataLoader.getInstance();
    opgdlP4.loadData(opg, szOPVFile, szOPEFile, dop,
        totalPartitions /* total number of partitions, default 1 */,
        3 /* partition to load (from 0 to totalPartitions - 1), default 0 */,
        dll);
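When all partitions are loaded from a single client, the nearly identical calls above can be driven from a loop. The following sketch shows only that control flow, using the standard java.util.concurrent classes. The PartitionLoader interface and the PartitionedLoadSketch class are hypothetical; in real use, the body of the loader would contain one loadData call like those in the preceding fragment, with the partition offset passed in as the argument.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Hypothetical driver that issues one load call per partition offset. */
final class PartitionedLoadSketch {

    /** Stand-in for a single data loader call on one partition. */
    interface PartitionLoader {
        void load(int totalPartitions, int partitionOffset) throws Exception;
    }

    /** Runs the loader for offsets 0 .. totalPartitions - 1 and waits for all of them. */
    static void loadAll(final int totalPartitions, final PartitionLoader loader) {
        if (totalPartitions <= 0) {
            throw new IllegalArgumentException("totalPartitions must be positive");
        }
        ExecutorService pool = Executors.newFixedThreadPool(totalPartitions);
        try {
            List<Future<?>> pending = new ArrayList<>();
            for (int offset = 0; offset < totalPartitions; offset++) {
                final int partition = offset;
                pending.add(pool.submit(() -> {
                    loader.load(totalPartitions, partition);
                    return null;
                }));
            }
            for (Future<?> call : pending) {
                call.get();   // rethrows a failure from any partition
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("Interrupted while loading partitions", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("Partition load failed", e.getCause());
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        loadAll(4, (total, offset) ->
            System.out.println("Loading partition " + offset + " of " + total));
    }
}
```

Because each partition runs its own loader with its own DOP, the total number of concurrent database connections is roughly the number of partitions multiplied by the DOP, which is worth checking against the capacity of the back-end database.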

4.4.2.2 Parallel Data Loading Using Fine-Tuning

The data loading APIs also let you fine-tune which lines of the source vertex and edge files are loaded. You can specify the vertex (or edge) offset line number and the vertex (or edge) maximum line number. Data is loaded from the offset line number until the maximum line number. If the maximum line number is -1, the loading process scans the data until it reaches the end of the file.

The following code fragment loads the graph data using fine-tuning.

    OraclePropertyGraph opg = OraclePropertyGraph.getInstance(
        args, szGraphName);

    int dop = 32; // degree of parallelism for each client

    String szOPVFile = "../../data/connections.opv";
    String szOPEFile = "../../data/connections.ope";

    SimpleLogBasedDataLoaderListenerImpl dll =
        SimpleLogBasedDataLoaderListenerImpl.getInstance(
            100 /* frequency */,
            true /* continue on error */);

    // Run the data loading using fine-tuning
    long lVertexOffsetLines = 0;
    long lEdgeOffsetLines = 0;
    long lVertexMaxLines = 100;
    long lEdgeMaxLines = 100;
    int totalPartitions = 1;
    int idPartition = 0;

    OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();
    opgdl.loadData(opg, szOPVFile, szOPEFile,
        lVertexOffsetLines /* offset of lines to start loading
                              from partition, default 0 */,
        lEdgeOffsetLines /* offset of lines to start loading
                            from partition, default 0 */,
        lVertexMaxLines /* maximum number of lines to load
                           from partition, default -1 (all lines in partition) */,
        lEdgeMaxLines /* maximum number of lines to load
                         from partition, default -1 (all lines in partition) */,
        dop,
        totalPartitions /* total number of partitions, default 1 */,
        idPartition /* partition to load (from 0 to totalPartitions - 1),
                       default 0 */,
        dll);

4.4.2.3 Parallel Data Loading Using Multiple Files

Oracle Big Data Spatial and Graph also supports loading multiple vertex files and multiple edge files into the database. The given vertex files are split into DOP chunks and loaded into the database in parallel using DOP threads. Similarly, the edge files are split and loaded in parallel.

The following code fragment loads multiple vertex and edge files using the parallel data loading APIs. In the example, two string arrays, szOPVFiles and szOPEFiles, hold the input files. Although only one vertex file and one edge file are used in this example, you can supply multiple vertex files and multiple edge files in these two arrays.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(

args, szGraphName);

String[] szOPVFiles = new String[] {"../../data/connections.opv"};

String[] szOPEFiles = new String[] {"../../data/connections.ope"};

// Clear existing vertices/edges in the property graph

opg.clearRepository();

opg.setQueueSize(100); // 100 elements

// This object will handle parallel data loading over the property graph

OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFiles, szOPEFiles, dop);

System.out.println("Total vertices: " + opg.countVertices());

System.out.println("Total edges: " + opg.countEdges());

4.4.2.4 Parallel Retrieval of Graph Data

The parallel property graph query provides a simple Java API to perform parallel scans on vertices (or edges). Parallel retrieval is an optimized solution that takes advantage of the distribution of the data among splits in the back-end database, so that each split is queried using a separate database connection.

Parallel retrieval produces an array where each element holds all the vertices (or edges) from a specific split. The subset of splits queried is delimited by the given start split ID and the size of the connections array provided. The subset therefore covers the splits in the range [start, start - 1 + size of connections array]. Note that an integer ID (in the range [0, N - 1]) is assigned to each split of a vertex table with N splits.
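The range arithmetic can be checked with a short sketch. It is plain Java with no dependency on the property graph APIs; the class SplitRangeSketch and its methods are hypothetical, and clamping the last range to N - 1 when the number of splits is not a multiple of the number of connections is an assumption about how a trailing partial batch is handled.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper that computes which splits each partitioned call covers. */
final class SplitRangeSketch {

    /** Returns the inclusive {first, last} split IDs covered by one call. */
    static int[] rangeFor(int startSplit, int connections, int totalSplits) {
        // [start, start - 1 + connections], clamped to the last valid split ID (assumption).
        int last = Math.min(startSplit - 1 + connections, totalSplits - 1);
        return new int[] { startSplit, last };
    }

    /** Returns the ranges visited when the start split advances by the number of connections. */
    static List<int[]> allRanges(int totalSplits, int connections) {
        if (connections <= 0) {
            throw new IllegalArgumentException("connections must be positive");
        }
        List<int[]> ranges = new ArrayList<>();
        for (int start = 0; start < totalSplits; start += connections) {
            ranges.add(rangeFor(start, connections, totalSplits));
        }
        return ranges;
    }

    public static void main(String[] args) {
        // 10 splits queried with 4 connections need three calls.
        for (int[] range : allRanges(10, 4)) {
            System.out.println(Arrays.toString(range));
        }
    }
}
```

With 10 splits and 4 connections, the calls cover splits 0 to 3, 4 to 7, and 8 to 9, so the number of calls is the number of splits divided by the number of connections, rounded up.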

The following code loads a property graph using Apache HBase, opens an array of connections, and executes a parallel query to retrieve all vertices and edges using the opened connections. The number of calls to the getVerticesPartitioned (getEdgesPartitioned) method is controlled by the total number of splits and the number of connections used.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(

args, szGraphName);

// Clear existing vertices/edges in the property graph

opg.clearRepository();

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// This object will handle parallel data loading

OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Create connections used in parallel query

HConnection[] hConns = new HConnection[dop];

for (int i = 0; i < dop; i++) {

Configuration conf_new =

HBaseConfiguration.create(opg.getConfiguration());

hConns[i] = HConnectionManager.createConnection(conf_new);

}

long lCountV = 0;

// Iterate over all the vertices’ splits to count all the vertices

for (int split = 0; split < opg.getVertexTableSplits();

split += dop) {

Iterable<Vertex>[] iterables

= opg.getVerticesPartitioned(hConns /* Connection array */,

true /* skip store to cache */,

split /* starting split */);

lCountV += consumeIterables(iterables); /* consume iterables using

threads */

}

// Count all vertices

System.out.println("Vertices found using parallel query: " + lCountV);

long lCountE = 0;

// Iterate over all the edges’ splits to count all the edges

for (int split = 0; split < opg.getEdgeTableSplits();

split += dop) {

Iterable<Edge>[] iterables

= opg.getEdgesPartitioned(hConns /* Connection array */,

true /* skip store to cache */,

split /* starting split */);

lCountE += consumeIterables(iterables); /* consume iterables using

threads */

}

// Count all edges

System.out.println("Edges found using parallel query: " + lCountE);

// Close the connections to the database after completed

for (int idx = 0; idx < hConns.length; idx++) {

hConns[idx].close();

}
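The preceding fragment calls a consumeIterables helper that is not shown. One possible shape for such a helper is sketched below, assuming that it only needs to count the elements and that one thread per iterable is acceptable. The class name ConsumeIterablesSketch is hypothetical, and the sketch is demonstrated on plain lists of strings so that it runs without a database.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Hypothetical helper that counts the elements of several iterables in parallel. */
final class ConsumeIterablesSketch {

    static <T> long consumeIterables(Iterable<T>[] iterables) {
        if (iterables == null || iterables.length == 0) {
            return 0;
        }
        // One worker per iterable, mirroring one database connection per split.
        ExecutorService pool = Executors.newFixedThreadPool(iterables.length);
        try {
            List<Future<Long>> counts = new ArrayList<>();
            for (final Iterable<T> iterable : iterables) {
                Callable<Long> task = () -> {
                    long n = 0;
                    if (iterable != null) {
                        for (T ignored : iterable) {
                            n++;   // a real consumer would process the element here
                        }
                    }
                    return n;
                };
                counts.add(pool.submit(task));
            }
            long total = 0;
            for (Future<Long> count : counts) {
                total += count.get();
            }
            return total;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("Interrupted while consuming iterables", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("Failed to consume an iterable", e.getCause());
        } finally {
            pool.shutdown();
        }
    }

    @SuppressWarnings("unchecked")
    public static void main(String[] args) {
        Iterable<String>[] parts = new Iterable[] {
            Arrays.asList("v1", "v2", "v3"),
            Arrays.asList("v4")
        };
        System.out.println("Elements consumed: " + consumeIterables(parts));
    }
}
```

Consuming each iterable on its own thread matters here because every element of the array returned by a partitioned query is backed by a different connection, so iterating them one after another would leave most connections idle.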

4.4.2.5 Using an Element Filter Callback for Subgraph Extraction

Oracle Big Data Spatial and Graph supports easy subgraph extraction using user-defined element filter callbacks. An element filter callback defines a set of conditions that a vertex (or an edge) must meet to be kept in the subgraph. You can define your own element filtering by implementing the VertexFilterCallback and EdgeFilterCallback API interfaces.

The following code fragment implements a VertexFilterCallback that keeps a vertex only if its origin is the United States and it does not have a political role.

/**

* VertexFilterCallback to retrieve a vertex from the United States

* that does not have a political role

*/

private static class NonPoliticianFilterCallback

implements VertexFilterCallback

{

@Override

public boolean keepVertex(OracleVertexBase vertex)

{

String country = vertex.getProperty("country");

String role = vertex.getProperty("role");

if (country != null && country.equals("United States")) {

if (role == null || !role.toLowerCase().contains("political")) {

return true;

}

}

return false;

}

public static NonPoliticianFilterCallback getInstance()

{

return new NonPoliticianFilterCallback();

}

}

The following code fragment implements an EdgeFilterCallback that uses the VertexFilterCallback to keep only edges connected to the given input vertex, and whose connections are not politicians and come from the United States.

/**

* EdgeFilterCallback to retrieve all edges connected to an input

* vertex with "collaborates" label, and whose vertex is from the

* United States with a role different than political

*/

private static class CollaboratorsFilterCallback

implements EdgeFilterCallback

{

private VertexFilterCallback m_vfc;

private Vertex m_startV;

public CollaboratorsFilterCallback(VertexFilterCallback vfc,

Vertex v)

{

m_vfc = vfc;

m_startV = v;

}

@Override

public boolean keepEdge(OracleEdgeBase edge)

{

if ("collaborates".equals(edge.getLabel())) {

if (edge.getVertex(Direction.IN).equals(m_startV) &&

m_vfc.keepVertex((OracleVertex)

edge.getVertex(Direction.OUT))) {

return true;

}

else if (edge.getVertex(Direction.OUT).equals(m_startV) &&

m_vfc.keepVertex((OracleVertex)

edge.getVertex(Direction.IN))) {

return true;

}

}

return false;

}

public static CollaboratorsFilterCallback

getInstance(VertexFilterCallback vfc, Vertex v)

{

return new CollaboratorsFilterCallback(vfc, v);

}

}

Using the filter callbacks previously defined, the following code fragment loads a property graph, creates an instance of the filter callbacks and later gets all of Barack Obama’s collaborators who are not politicians and come from the United States.

    OraclePropertyGraph opg = OraclePropertyGraph.getInstance(
        args, szGraphName);

    // Clear existing vertices/edges in the property graph
    opg.clearRepository();

    String szOPVFile = "../../data/connections.opv";
    String szOPEFile = "../../data/connections.ope";

    // This object will handle parallel data loading
    OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();
    opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

    // VertexFilterCallback to retrieve all people from the United States
    // who are not politicians
    NonPoliticianFilterCallback npvfc = NonPoliticianFilterCallback.getInstance();

    // Initial vertex: Barack Obama
    Vertex v = opg.getVertices("name", "Barack Obama").iterator().next();

    // EdgeFilterCallback to retrieve all collaborators of Barack Obama
    // from the United States who are not politicians
    CollaboratorsFilterCallback cefc =
        CollaboratorsFilterCallback.getInstance(npvfc, v);

    Iterable<Edge> obamaCollabs = opg.getEdges(
        (String[])null /* Match any of the properties */,
        cefc /* Match the EdgeFilterCallback */);
    Iterator<Edge> iter = obamaCollabs.iterator();

    System.out.println("\n\n--------Collaborators of Barack Obama from " +
                       " the US and non-politician\n\n");

    long countV = 0;
    while (iter.hasNext()) {
        Edge edge = iter.next(); // get the edge
        // check if obama is the IN vertex
        if (edge.getVertex(Direction.IN).equals(v)) {
            System.out.println(edge.getVertex(Direction.OUT) + "(Edge ID: " +
                               edge.getId() + ")"); // get out vertex
        }
        else {
            System.out.println(edge.getVertex(Direction.IN) + "(Edge ID: " +
                               edge.getId() + ")"); // get in vertex
        }
        countV++;
    }

By default, all reading operations, such as getting all vertices or all edges (including the parallel approaches), use the filter callbacks associated with the property graph through the methods opg.setVertexFilterCallback(vfc) and opg.setEdgeFilterCallback(efc). If no filter callback is set, then all the vertices (or edges) are retrieved.

The following code fragment uses the default edge filter callback set on the property graph to retrieve the edges.

    // VertexFilterCallback to retrieve all people from the United States
    // who are not politicians
    NonPoliticianFilterCallback npvfc = NonPoliticianFilterCallback.getInstance();

    // Initial vertex: Barack Obama
    Vertex v = opg.getVertices("name", "Barack Obama").iterator().next();

    // EdgeFilterCallback to retrieve all collaborators of Barack Obama
    // from the United States who are not politicians
    CollaboratorsFilterCallback cefc =
        CollaboratorsFilterCallback.getInstance(npvfc, v);

    opg.setEdgeFilterCallback(cefc);

    Iterable<Edge> obamaCollabs = opg.getEdges();
    Iterator<Edge> iter = obamaCollabs.iterator();

    System.out.println("\n\n--------Collaborators of Barack Obama from " +
                       " the US and non-politician\n\n");

    long countV = 0;
    while (iter.hasNext()) {
        Edge edge = iter.next(); // get the edge
        // check if obama is the IN vertex
        if (edge.getVertex(Direction.IN).equals(v)) {
            System.out.println(edge.getVertex(Direction.OUT) + "(Edge ID: " +
                               edge.getId() + ")"); // get out vertex
        }
        else {
            System.out.println(edge.getVertex(Direction.IN) + "(Edge ID: " +
                               edge.getId() + ")"); // get in vertex
        }
        countV++;
    }

4.4.2.6 Using Optimization Flags on Reads over Property Graph Data

Oracle Big Data Spatial and Graph supports optimization flags that improve graph iteration performance. Optimization flags allow vertices (or edges) to be processed as objects with no information or only minimal information, such as ID, label, and incoming or outgoing vertices. This reduces the time required to process each vertex (or edge) during iteration.

The following table shows the optimization flags available when processing vertices (or edges) in a property graph.

| Optimization Flag | Description |
| --- | --- |
| DO_NOT_CREATE_OBJECT | Use a predefined constant object when processing vertices or edges. |
| JUST_EDGE_ID | Construct edge objects with ID only when processing edges. |
| JUST_LABEL_EDGE_ID | Construct edge objects with ID and label only when processing edges. |
| JUST_LABEL_VERTEX_EDGE_ID | Construct edge objects with ID, label, and in/out vertex IDs only when processing edges. |
| JUST_VERTEX_EDGE_ID | Construct edge objects with just ID and in/out vertex IDs when processing edges. |
| JUST_VERTEX_ID | Construct vertex objects with ID only when processing vertices. |

The following code fragment uses a set of optimization flags to retrieve only the IDs of the vertices and edges in the property graph. The objects retrieved by reading all vertices and edges include only the IDs, with no key/value properties or additional information.

    import oracle.pg.common.OraclePropertyGraphBase.OptimizationFlag;

    OraclePropertyGraph opg = OraclePropertyGraph.getInstance(
        args, szGraphName);

    // Clear existing vertices/edges in the property graph
    opg.clearRepository();

    String szOPVFile = "../../data/connections.opv";
    String szOPEFile = "../../data/connections.ope";

    // This object will handle parallel data loading
    OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();
    opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

    // Optimization flag to retrieve only vertices IDs
    OptimizationFlag optFlagVertex = OptimizationFlag.JUST_VERTEX_ID;

    // Optimization flag to retrieve only edges IDs
    OptimizationFlag optFlagEdge = OptimizationFlag.JUST_EDGE_ID;

    // Print all vertices
    Iterator<Vertex> vertices = opg.getVertices(
        (String[])null /* Match any of the properties */,
        null /* Match the VertexFilterCallback */,
        optFlagVertex /* optimization flag */).iterator();

    System.out.println("----- Vertices IDs----");
    long vCount = 0;
    while (vertices.hasNext()) {
        Vertex v = vertices.next(); // the iterator is typed as Iterator<Vertex>
        System.out.println((Long) v.getId());
        vCount++;
    }
    System.out.println("Vertices found: " + vCount);

    // Print all edges
    Iterator<Edge> edges = opg.getEdges(
        (String[])null /* Match any of the properties */,
        null /* Match the EdgeFilterCallback */,
        optFlagEdge /* optimization flag */).iterator();

    System.out.println("----- Edges ----");
    long eCount = 0;
    while (edges.hasNext()) {
        Edge e = edges.next();
        System.out.println((Long) e.getId());
        eCount++;
    }
    System.out.println("Edges found: " + eCount);

By default, all reading operations, such as getting all vertices or all edges (including the parallel approaches), use the optimization flags associated with the property graph through the methods opg.setDefaultVertexOptFlag(optFlagVertex) and opg.setDefaultEdgeOptFlag(optFlagEdge). If the optimization flags for processing vertices and edges are not defined, then all the information about the vertices and edges is retrieved.

The following code fragment uses the default optimization flags set on the property graph to retrieve only the IDs of its vertices and edges.

    import oracle.pg.common.OraclePropertyGraphBase.OptimizationFlag;

    // Optimization flag to retrieve only vertices IDs
    OptimizationFlag optFlagVertex = OptimizationFlag.JUST_VERTEX_ID;

    // Optimization flag to retrieve only edges IDs
    OptimizationFlag optFlagEdge = OptimizationFlag.JUST_EDGE_ID;

    opg.setDefaultVertexOptFlag(optFlagVertex);
    opg.setDefaultEdgeOptFlag(optFlagEdge);

    // Print all vertices
    Iterator<Vertex> vertices = opg.getVertices().iterator();

    System.out.println("----- Vertices IDs----");
    long vCount = 0;
    while (vertices.hasNext()) {
        Vertex v = vertices.next(); // the iterator is typed as Iterator<Vertex>
        System.out.println((Long) v.getId());
        vCount++;
    }
    System.out.println("Vertices found: " + vCount);

    // Print all edges
    Iterator<Edge> edges = opg.getEdges().iterator();

    System.out.println("----- Edges ----");
    long eCount = 0;
    while (edges.hasNext()) {
        Edge e = edges.next();
        System.out.println((Long) e.getId());
        eCount++;
    }
    System.out.println("Edges found: " + eCount);

4.4.2.7 Adding and Removing Attributes of a Property Graph Subgraph

Oracle Big Data Spatial and Graph supports updating attributes (key/value pairs) on a subgraph of vertices or edges by using a user-customized operation callback. An operation callback defines a set of conditions that a vertex (or an edge) must meet to be updated (that is, to have the given attribute and value either added or removed).

You can define your own attribute operations by implementing the VertexOpCallback and EdgeOpCallback API interfaces. You must override the needOp method, which defines the conditions to be satisfied by the vertices (or edges) to be included in the update operation, as well as the getAttributeKeyName and getAttributeKeyValue methods, which return the key name and value, respectively, to be used when updating the elements.
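The division of labor among the three methods can be seen in a simplified analogue that works on plain maps instead of graph elements. Everything in this sketch is hypothetical: OpCallback only mirrors the method names described above, and addAttributeToAll and removeAttributeFromAll show one plausible way that a bulk operation could apply such a callback, not how OraclePropertyGraph implements it.

```java
import java.util.Arrays;
import java.util.Collection;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical, simplified analogue of the operation callback pattern. */
final class OpCallbackSketch {

    /** Mirrors the three methods named in the text, over property maps. */
    interface OpCallback {
        boolean needOp(Map<String, Object> element);
        String getAttributeKeyName(Map<String, Object> element);
        Object getAttributeKeyValue(Map<String, Object> element);
    }

    /** Example callback: tags every element that has a role with a category. */
    static final class RoleTagger implements OpCallback {
        @Override
        public boolean needOp(Map<String, Object> element) {
            return element.get("role") != null;
        }

        @Override
        public String getAttributeKeyName(Map<String, Object> element) {
            return "category";
        }

        @Override
        public Object getAttributeKeyValue(Map<String, Object> element) {
            String role = String.valueOf(element.get("role")).toLowerCase();
            return role.contains("political") ? "political" : "other";
        }
    }

    /** Adds the callback's attribute to every element selected by needOp. */
    static int addAttributeToAll(Collection<Map<String, Object>> elements, OpCallback callback) {
        int updated = 0;
        for (Map<String, Object> element : elements) {
            if (callback.needOp(element)) {
                element.put(callback.getAttributeKeyName(element),
                            callback.getAttributeKeyValue(element));
                updated++;
            }
        }
        return updated;
    }

    /** Removes the callback's attribute from every selected element that has it. */
    static int removeAttributeFromAll(Collection<Map<String, Object>> elements, OpCallback callback) {
        int updated = 0;
        for (Map<String, Object> element : elements) {
            if (callback.needOp(element)
                    && element.remove(callback.getAttributeKeyName(element)) != null) {
                updated++;
            }
        }
        return updated;
    }

    public static void main(String[] args) {
        Map<String, Object> first = new HashMap<>();
        first.put("role", "Political analyst");
        Map<String, Object> second = new HashMap<>();   // no role: skipped by needOp
        List<Map<String, Object>> elements = Arrays.asList(first, second);
        System.out.println("Updated: " + addAttributeToAll(elements, new RoleTagger()));
        System.out.println("Category: " + first.get("category"));
    }
}
```

Separating the selection test (needOp) from the key and value computation lets the same callback drive both the add and the remove operation.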

The following code fragment implements a VertexOpCallback that operates over the obamaCollaborator attribute associated only with Barack Obama collaborators. The value of this property is specified based on the role of the collaborators.

    private static class CollaboratorsVertexOpCallback
    implements VertexOpCallback
    {
        private OracleVertexBase m_obama;
        private List<Vertex> m_obamaCollaborators;

        public CollaboratorsVertexOpCallback(OraclePropertyGraph opg)
        {
            // Get a list of Barack Obama's collaborators
            m_obama = (OracleVertexBase) opg.getVertices("name", "Barack Obama")
                                            .iterator().next();

            Iterable<Vertex> iter = m_obama.getVertices(Direction.BOTH,
                                                        "collaborates");
            m_obamaCollaborators = OraclePropertyGraphUtils.listify(iter);
        }

        public static CollaboratorsVertexOpCallback
        getInstance(OraclePropertyGraph opg)
        {
            return new CollaboratorsVertexOpCallback(opg);
        }

        /**
         * Add attribute if and only if the vertex is a collaborator of Barack
         * Obama
         */
        @Override
        public boolean needOp(OracleVertexBase v)
        {
            return m_obamaCollaborators != null &&
                   m_obamaCollaborators.contains(v);
        }

        @Override
        public String getAttributeKeyName(OracleVertexBase v)
        {
            return "obamaCollaborator";
        }

        /**
         * Define the property's value based on the vertex role
         */
        @Override
        public Object getAttributeKeyValue(OracleVertexBase v)
        {
            String role = v.getProperty("role");
            role = role.toLowerCase();
            if (role.contains("political")) {
                return "political";
            }
            else if (role.contains("actor") || role.contains("singer") ||
                     role.contains("actress") || role.contains("writer") ||
                     role.contains("producer") || role.contains("director")) {
                return "arts";
            }
            else if (role.contains("player")) {
                return "sports";
            }
            else if (role.contains("journalist")) {
                return "journalism";
            }
            else if (role.contains("business") || role.contains("economist")) {
                return "business";
            }
            else if (role.contains("philanthropist")) {
                return "philanthropy";
            }
            return " ";
        }
    }

The following code fragment implements an EdgeOpCallback that operates over the obamaFeud attribute associated only with Barack Obama feuds. The value of this property is specified based on the role of the collaborators.

private static class FeudsEdgeOpCallback
        implements EdgeOpCallback {

    private OracleVertexBase m_obama;
    private List<Edge> m_obamaFeuds;

    public FeudsEdgeOpCallback(OraclePropertyGraph opg) {
        // Get a list of Barack Obama's feuds (edges, not vertices)
        m_obama = (OracleVertexBase) opg.getVertices("name", "Barack Obama")
                                        .iterator().next();
        Iterable<Edge> iter = m_obama.getEdges(Direction.BOTH, "feuds");
        m_obamaFeuds = OraclePropertyGraphUtils.listify(iter);
    }

    public static FeudsEdgeOpCallback getInstance(OraclePropertyGraph opg) {
        return new FeudsEdgeOpCallback(opg);
    }

    /**
     * Add attribute if and only if the edge is in the list of Barack Obama's
     * feuds
     */
    @Override
    public boolean needOp(OracleEdgeBase e) {
        return m_obamaFeuds != null && m_obamaFeuds.contains(e);
    }

    @Override
    public String getAttributeKeyName(OracleEdgeBase e) {
        return "obamaFeud";
    }

    /**
     * Define the property's value based on the in/out vertex role
     */
    @Override
    public Object getAttributeKeyValue(OracleEdgeBase e) {
        // Pick the endpoint that is not Barack Obama
        OracleVertexBase v = (OracleVertexBase) e.getVertex(Direction.IN);
        if (m_obama.equals(v)) {
            v = (OracleVertexBase) e.getVertex(Direction.OUT);
        }
        String role = v.getProperty("role");
        role = role.toLowerCase();
        if (role.contains("political")) {
            return "political";
        } else if (role.contains("actor") || role.contains("singer")
                || role.contains("actress") || role.contains("writer")
                || role.contains("producer") || role.contains("director")) {
            return "arts";
        } else if (role.contains("journalist")) {
            return "journalism";
        } else if (role.contains("player")) {
            return "sports";
        } else if (role.contains("business") || role.contains("economist")) {
            return "business";
        } else if (role.contains("philanthropist")) {
            return "philanthropy";
        }
        return " ";
    }
}
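Both callbacks repeat the same role-to-category chain. As a minimal sketch, that chain could be factored into a shared helper. The class name RoleCategories and the method categorize are hypothetical and not part of the Oracle API, and the null check is an added assumption (the original fragments assume the role property is always present). The branch order follows the vertex callback.

```java
import java.util.Locale;

// Hypothetical helper (not part of the Oracle API): maps a free-text role
// to the category strings used by the callbacks above.
final class RoleCategories {
    private RoleCategories() {}

    static String categorize(String role) {
        if (role == null) {
            return " "; // assumption: treat a missing role like an unmatched one
        }
        String r = role.toLowerCase(Locale.ROOT);
        if (r.contains("political")) {
            return "political";
        } else if (containsAny(r, "actor", "singer", "actress",
                               "writer", "producer", "director")) {
            return "arts";
        } else if (r.contains("player")) {
            return "sports";
        } else if (r.contains("journalist")) {
            return "journalism";
        } else if (containsAny(r, "business", "economist")) {
            return "business";
        } else if (r.contains("philanthropist")) {
            return "philanthropy";
        }
        return " ";
    }

    // True if text contains at least one of the given substrings
    private static boolean containsAny(String text, String... needles) {
        for (String needle : needles) {
            if (text.contains(needle)) {
                return true;
            }
        }
        return false;
    }
}
```

With such a helper, each getAttributeKeyValue implementation would reduce to a single call that passes the role of the relevant vertex.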

Using the operations callbacks defined previously, the following code fragment loads a property graph, creates an instance of the operation callbacks, and later adds the attributes into the pertinent vertices and edges using the addAttributeToAllVertices and addAttributeToAllEdges methods in OraclePropertyGraph.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(
    args, szGraphName);

// Clear existing vertices/edges in the property graph
opg.clearRepository();

String szOPVFile = "../../data/connections.opv";
String szOPEFile = "../../data/connections.ope";

// This object will handle parallel data loading
OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();
opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Create the vertex operation callback
CollaboratorsVertexOpCallback cvoc = CollaboratorsVertexOpCallback.getInstance(opg);

// Add attribute to all people collaborating with Obama based on their role
opg.addAttributeToAllVertices(cvoc, true /** Skip store to Cache */, dop);

// Look up all collaborators of Obama
Iterable<Vertex> collaborators = opg.getVertices("obamaCollaborator", "political");
System.out.println("Political collaborators of Barack Obama " +
                   getVerticesAsString(collaborators));

collaborators = opg.getVertices("obamaCollaborator", "business");
System.out.println("Business collaborators of Barack Obama " +
                   getVerticesAsString(collaborators));

// Add an attribute to all people having a feud with Barack Obama to set
// the type of relation they have
FeudsEdgeOpCallback feoc = FeudsEdgeOpCallback.getInstance(opg);
opg.addAttributeToAllEdges(feoc, true /** Skip store to Cache */, dop);

// Look up all feuds of Obama
Iterable<Edge> feuds = opg.getEdges("obamaFeud", "political");
System.out.println("\n\nPolitical feuds of Barack Obama " +
                   getEdgesAsString(feuds));

feuds = opg.getEdges("obamaFeud", "business");
System.out.println("Business feuds of Barack Obama " +
                   getEdgesAsString(feuds));

The following code fragment defines an implementation of VertexOpCallback that selects the vertices having the value philanthropy for the attribute obamaCollaborator, and then calls the API removeAttributeFromAllVertices to remove that attribute from them. It also defines an implementation of EdgeOpCallback that selects the edges having the value business for the attribute obamaFeud, and then calls the API removeAttributeFromAllEdges to remove that attribute from them.

System.out.println("\n\nRemove 'obamaCollaborator' property from all the " +

"philanthropy collaborators");

PhilanthropyCollaboratorsVertexOpCallback pvoc = PhilanthropyCollaboratorsVertexOpCallback.getInstance();

opg.removeAttributeFromAllVertices(pvoc);

System.out.println("\n\nRemove 'obamaFeud' property from all the " + "business feuds");

BusinessFeudsEdgeOpCallback beoc = BusinessFeudsEdgeOpCallback.getInstance();

opg.removeAttributeFromAllEdges(beoc);

/**

* Implementation of a VertexOpCallback to remove the "obamaCollaborator"

* property from all people collaborating with Barack Obama that have a

* philanthropy role

*/

private static class PhilanthropyCollaboratorsVertexOpCallback implements VertexOpCallback

{

public static PhilanthropyCollaboratorsVertexOpCallback getInstance()

{

return new PhilanthropyCollaboratorsVertexOpCallback();

}

/**

* Remove attribute if and only if the property value for

* obamaCollaborator is philanthropy

*/

@Override

public boolean needOp(OracleVertexBase v)

{

String type = v.getProperty("obamaCollaborator");

return type != null && type.equals("philanthropy");

}

@Override

public String getAttributeKeyName(OracleVertexBase v)

{

return "obamaCollaborator";

}

/**

* Define the property's value. In this case can be empty

*/

@Override

public Object getAttributeKeyValue(OracleVertexBase v)

{

return " ";

}

}

/**

* Implementation of an EdgeOpCallback to remove the "obamaFeud" property

* from all connections in a feud with Barack Obama that have a business role

*/

private static class BusinessFeudsEdgeOpCallback implements EdgeOpCallback

{

public static BusinessFeudsEdgeOpCallback getInstance()

{

return new BusinessFeudsEdgeOpCallback();

}

/**

* Remove attribute if and only if the property value for obamaFeud is

* business

*/

@Override

public boolean needOp(OracleEdgeBase e)

{

String type = e.getProperty("obamaFeud");

return type != null && type.equals("business");

}

@Override

public String getAttributeKeyName(OracleEdgeBase e)

{

return "obamaFeud";

}

/**

* Define the property's value. In this case can be empty

*/

@Override

public Object getAttributeKeyValue(OracleEdgeBase e)

{

return " ";

}

}
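The callback contract used in this section (needOp selects elements, getAttributeKeyName names the attribute, getAttributeKeyValue supplies a value) can be illustrated without a database. The following is a minimal sketch under stated assumptions: ElementOpCallback and CallbackDemo are hypothetical stand-ins, elements are modeled as plain maps, and the loop only approximates what a bulk removal might do. It is not the Oracle implementation.

```java
import java.util.Map;

// Hypothetical stand-in for the callback contract described above;
// elements are modeled as simple property maps.
interface ElementOpCallback {
    boolean needOp(Map<String, Object> element);
    String getAttributeKeyName(Map<String, Object> element);
    Object getAttributeKeyValue(Map<String, Object> element);
}

final class CallbackDemo {
    private CallbackDemo() {}

    // Removes the named attribute from every element the callback selects.
    // Returns the number of elements that were actually modified.
    static int removeAttributeFromAll(Iterable<Map<String, Object>> elements,
                                      ElementOpCallback cb) {
        int changed = 0;
        for (Map<String, Object> element : elements) {
            if (cb.needOp(element)
                    && element.remove(cb.getAttributeKeyName(element)) != null) {
                changed++;
            }
        }
        return changed;
    }

    // Callback that selects elements whose obamaCollaborator is "philanthropy"
    static ElementOpCallback philanthropyOnly() {
        return new ElementOpCallback() {
            @Override
            public boolean needOp(Map<String, Object> element) {
                return "philanthropy".equals(element.get("obamaCollaborator"));
            }

            @Override
            public String getAttributeKeyName(Map<String, Object> element) {
                return "obamaCollaborator";
            }

            @Override
            public Object getAttributeKeyValue(Map<String, Object> element) {
                return " "; // unused for removal
            }
        };
    }
}
```

The value callback is unused during removal, which is why the fragments above return a placeholder string from getAttributeKeyValue.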

4.4.2.8 Getting Property Graph Metadata

You can get graph metadata and statistics, such as all graph names in the database and, for each graph, the minimum and maximum vertex ID, the minimum and maximum edge ID, the vertex property names, the edge property names, and the number of splits in the graph vertex and edge tables that support parallel table scans.

The following code fragment gets the metadata and statistics of the existing property graphs stored in the back-end database (either Oracle NoSQL Database or Apache HBase). The arguments required vary for each database.

// Get all graph names in the database

List<String> graphNames = OraclePropertyGraphUtils.getGraphNames(dbArgs);

for (String graphName : graphNames) {

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(args,

graphName);

System.err.println("\n Graph name: " + graphName);

System.err.println(" Total vertices: " +

opg.countVertices(dop));

System.err.println(" Minimum Vertex ID: " +

opg.getMinVertexID(dop));

System.err.println(" Maximum Vertex ID: " +

opg.getMaxVertexID(dop));

Set<String> propertyNamesV = new HashSet<String>();

opg.getVertexPropertyNames(dop, 0 /* timeout,0 no timeout */,

propertyNamesV);

System.err.println(" Vertices property names: " +

getPropertyNamesAsString(propertyNamesV));

System.err.println("\n\n Total edges: " + opg.countEdges(dop));

System.err.println(" Minimum Edge ID: " + opg.getMinEdgeID(dop));

System.err.println(" Maximum Edge ID: " + opg.getMaxEdgeID(dop));

Set<String> propertyNamesE = new HashSet<String>();

opg.getEdgePropertyNames(dop, 0 /* timeout,0 no timeout */,

propertyNamesE);

System.err.println(" Edge property names: " +

getPropertyNamesAsString(propertyNamesE));

System.err.println("\n\n Table Information: ");

System.err.println("Vertex table number of splits: " +

(opg.getVertexTableSplits()));

System.err.println("Edge table number of splits: " +

(opg.getEdgeTableSplits()));

}
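The fragment above calls getPropertyNamesAsString, which is not defined in this section. A minimal sketch of what such a helper might look like follows. The class name PropertyNameFormatter is hypothetical, and sorting the names is an assumption made here so that the output is deterministic.

```java
import java.util.Set;
import java.util.TreeSet;

// Hypothetical helper: formats a set of property names for display.
final class PropertyNameFormatter {
    private PropertyNameFormatter() {}

    static String getPropertyNamesAsString(Set<String> propertyNames) {
        // Copy into a TreeSet so the names print in a stable, sorted order
        return String.join(", ", new TreeSet<>(propertyNames));
    }
}
```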

4.4.3 Opening and Closing a Property Graph Instance

When describing a property graph, use these Oracle Property Graph methods to open and close the property graph instance properly:

-

OraclePropertyGraph.getInstance: Opens an instance of an Oracle property graph. This method has two parameters, the connection information and the graph name. The format of the connection information depends on whether you use HBase or Oracle NoSQL Database as the backend database.

-

OraclePropertyGraph.clearRepository: Removes all vertices and edges from the property graph instance.

-

OraclePropertyGraph.shutdown: Closes the graph instance.

In addition, you must use the appropriate classes from the Oracle NoSQL Database or HBase APIs.

4.4.3.1 Using Oracle NoSQL Database

For Oracle NoSQL Database, the OraclePropertyGraph.getInstance method uses the KV store name, host computer name, and port number for the connection:

String kvHostPort = "cluster02:5000";
String kvStoreName = "kvstore";
String kvGraphName = "my_graph";

// Use NoSQL Java API
KVStoreConfig kvconfig = new KVStoreConfig(kvStoreName, kvHostPort);

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(kvconfig, kvGraphName);
opg.clearRepository();
// .
// . Graph description
// .

// Close the graph instance
opg.shutdown();

If the in-memory analyst functions are required for your application, then it is recommended that you use GraphConfigBuilder to create a graph config for Oracle NoSQL Database, and instantiate OraclePropertyGraph with the config as an argument.

As an example, the following code snippet constructs a graph config, gets an OraclePropertyGraph instance, loads some data into that graph, and gets an in-memory analyst.

import oracle.pgx.config.*;

import oracle.pgx.api.*;

import oracle.pgx.common.types.*;

...

String[] hhosts = new String[1];

hhosts[0] = "my_host_name:5000"; // need customization

String szStoreName = "kvstore"; // need customization

String szGraphName = "my_graph";

int dop = 8;

PgNosqlGraphConfig cfg = GraphConfigBuilder.forPropertyGraphNosql()

.setName(szGraphName)

.setHosts(Arrays.asList(hhosts))

.setStoreName(szStoreName)

.addEdgeProperty("lbl", PropertyType.STRING, "lbl")

.addEdgeProperty("weight", PropertyType.DOUBLE, "1000000")

.build();

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(cfg);

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// perform a parallel data load

OraclePropertyGraphDataLoader opgdl = OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

...

PgxSession session = Pgx.createSession("session-id-1");

PgxGraph g = session.readGraphWithProperties(cfg);

Analyst analyst = session.createAnalyst();

...

4.4.3.2 Using Apache HBase

For Apache HBase, the OraclePropertyGraph.getInstance method uses the Hadoop nodes and the Apache HBase port number for the connection:

String hbQuorum = "bda01node01.example.com, bda01node02.example.com, bda01node03.example.com";

String hbClientPort = "2181";

String hbGraphName = "my_graph";

// Use HBase Java APIs

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum", hbQuorum);

conf.set("hbase.zookeeper.property.clientPort", hbClientPort);

HConnection conn = HConnectionManager.createConnection(conf);

// Open the property graph

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(conf, conn, hbGraphName);

opg.clearRepository();

// .

// . Graph description

// .

// Close the graph instance

opg.shutdown();

// Close the HBase connection

conn.close();
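The quorum string in the example separates host names with a comma followed by a space, and the client port is held as a string. As a minimal sketch, assuming you prefer to normalize these values before passing them to a configuration, the helper below trims whitespace around each host and validates the port. The class name ZkQuorum and its methods are hypothetical; whether the client tolerates embedded spaces is not stated in this section.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for cleaning up ZooKeeper connection settings.
final class ZkQuorum {
    private ZkQuorum() {}

    // Returns a comma-separated host list with surrounding whitespace removed
    static String normalize(String quorum) {
        List<String> hosts = new ArrayList<>();
        for (String host : quorum.split(",")) {
            String trimmed = host.trim();
            if (!trimmed.isEmpty()) {
                hosts.add(trimmed);
            }
        }
        return String.join(",", hosts);
    }

    // Parses a port string and rejects values outside the valid TCP range
    static int parsePort(String port) {
        int value = Integer.parseInt(port.trim());
        if (value < 1 || value > 65535) {
            throw new IllegalArgumentException("Port out of range: " + value);
        }
        return value;
    }
}
```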

If the in-memory analyst functions are required for your application, then it is recommended that you use GraphConfigBuilder to create a graph config, and instantiate OraclePropertyGraph with the config as an argument.

As an example, the following code snippet sets the configuration for in memory analytics, constructs a graph config for Apache HBase, instantiates an OraclePropertyGraph instance, gets an in-memory analyst, and counts the number of triangles in the graph.

Map<PgxConfig.Field, Object> confPgx = new HashMap<PgxConfig.Field, Object>();

confPgx.put(PgxConfig.Field.ENABLE_GM_COMPILER, false);

confPgx.put(PgxConfig.Field.NUM_WORKERS_IO, dop + 2);

confPgx.put(PgxConfig.Field.NUM_WORKERS_ANALYSIS, 8); // <= # of physical cores

confPgx.put(PgxConfig.Field.NUM_WORKERS_FAST_TRACK_ANALYSIS, 2);

confPgx.put(PgxConfig.Field.SESSION_TASK_TIMEOUT_SECS, 0);// no timeout set

confPgx.put(PgxConfig.Field.SESSION_IDLE_TIMEOUT_SECS, 0); // no timeout set

ServerInstance instance = Pgx.getInstance();

instance.startEngine(confPgx);

int iClientPort = Integer.parseInt(hbClientPort);

int splitsPerRegion = 2;

PgHbaseGraphConfig cfg = GraphConfigBuilder.forPropertyGraphHbase()

.setName(hbGraphName)

.setZkQuorum(hbQuorum)

.setZkClientPort(iClientPort)

.setZkSessionTimeout(60000)

.setMaxNumConnections(dop)

.setSplitsPerRegion(splitsPerRegion)

.addEdgeProperty("lbl", PropertyType.STRING, "lbl")

.addEdgeProperty("weight", PropertyType.DOUBLE, "1000000")

.build();

PgxSession session = Pgx.createSession("session-id-1");

PgxGraph g = session.readGraphWithProperties(cfg);

Analyst analyst = session.createAnalyst();

long triangles = analyst.countTriangles(g, false);
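To make the triangle count concrete, the following is a minimal plain-Java sketch that counts triangles in a small edge list. It treats edges as undirected and ignores self-loops and duplicate edges, which is an assumption made for this illustration and may differ from how the in-memory analyst defines or computes the result. The class name TriangleCounter is hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical illustration: brute-force triangle counting on a small graph.
final class TriangleCounter {
    private TriangleCounter() {}

    // Each edge is a two-element array {source, destination}
    static long countTriangles(int[][] edges) {
        // Build an undirected adjacency map, skipping self-loops
        Map<Integer, Set<Integer>> adjacency = new HashMap<>();
        for (int[] edge : edges) {
            if (edge[0] == edge[1]) {
                continue;
            }
            adjacency.computeIfAbsent(edge[0], k -> new HashSet<>()).add(edge[1]);
            adjacency.computeIfAbsent(edge[1], k -> new HashSet<>()).add(edge[0]);
        }
        // Count each triangle once by requiring u < v < w
        long count = 0;
        for (Map.Entry<Integer, Set<Integer>> entry : adjacency.entrySet()) {
            int u = entry.getKey();
            Set<Integer> neighborsOfU = entry.getValue();
            for (int v : neighborsOfU) {
                if (v <= u) {
                    continue;
                }
                for (int w : adjacency.get(v)) {
                    if (w > v && neighborsOfU.contains(w)) {
                        count++;
                    }
                }
            }
        }
        return count;
    }
}
```

For the edge list (1,2), (2,3), (2,4), (3,4), (4,2), this sketch finds one triangle, formed by vertices 2, 3, and 4.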

4.4.4 Creating the Vertices

To create a vertex, use these Oracle Property Graph methods:

-

OraclePropertyGraph.addVertex: Adds a vertex instance to a graph.

-

OracleVertex.setProperty: Assigns a key-value property to a vertex.

-

OraclePropertyGraph.commit: Saves all changes to the property graph instance.

The following code fragment creates two vertices, v1 and v2, with properties for age, name, weight, height, and female in the opg property graph instance. The v1 properties set the data types explicitly, whereas the age property of v2 is passed as a plain int literal.

// Create vertex v1 and assign it properties as key-value pairs

Vertex v1 = opg.addVertex(1l);

v1.setProperty("age", Integer.valueOf(31));

v1.setProperty("name", "Alice");

v1.setProperty("weight", Float.valueOf(135.0f));

v1.setProperty("height", Double.valueOf(64.5d));

v1.setProperty("female", Boolean.TRUE);

Vertex v2 = opg.addVertex(2l);

v2.setProperty("age", 27);

v2.setProperty("name", "Bob");

v2.setProperty("weight", Float.valueOf(156.0f));

v2.setProperty("height", Double.valueOf(69.5d));

v2.setProperty("female", Boolean.FALSE);
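The difference between Integer.valueOf(31) and the bare literal 27 is only syntactic when the receiving parameter has type Object: Java autoboxes the literal to the matching wrapper class. The following minimal sketch shows the resulting runtime types using a plain map as a stand-in for a vertex's properties. The class name PropertyTypesDemo is hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical illustration of the runtime types produced by autoboxing.
final class PropertyTypesDemo {
    private PropertyTypesDemo() {}

    static Map<String, Object> sampleProperties() {
        Map<String, Object> props = new LinkedHashMap<>();
        props.put("age", 27);          // int literal, boxed to Integer
        props.put("weight", 156.0f);   // float literal, boxed to Float
        props.put("height", 69.5d);    // double literal, boxed to Double
        props.put("female", false);    // boolean literal, boxed to Boolean
        props.put("id", 2L);           // long literal, boxed to Long
        return props;
    }
}
```

Note that a literal without a suffix, such as 156.0, would be boxed to Double rather than Float, so the suffix matters when a specific type is intended.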

4.4.5 Creating the Edges

To create an edge, use these Oracle Property Graph methods:

-

OraclePropertyGraph.addEdge: Adds an edge instance to a graph.

-

OracleEdge.setProperty: Assigns a key-value property to an edge.

The following code fragment creates two vertices (v1 and v2) and one edge (e1).

// Add vertices v1 and v2

Vertex v1 = opg.addVertex(1l);

v1.setProperty("name", "Alice");

v1.setProperty("age", 31);

Vertex v2 = opg.addVertex(2l);

v2.setProperty("name", "Bob");

v2.setProperty("age", 27);

// Add edge e1

Edge e1 = opg.addEdge(1l, v1, v2, "knows");

e1.setProperty("type", "friends");

4.4.6 Deleting the Vertices and Edges

You can remove vertex and edge instances individually, or all of them simultaneously. Use these methods:

-

OraclePropertyGraph.removeEdge: Removes the specified edge from the graph.

-

OraclePropertyGraph.removeVertex: Removes the specified vertex from the graph.

-

OraclePropertyGraph.clearRepository: Removes all vertices and edges from the property graph instance.

The following code fragment removes edge e1 and vertex v1 from the graph instance. When a vertex is removed, its adjacent edges are also deleted from the graph. This is because every edge must have a beginning and an ending vertex. After the beginning or ending vertex is removed, the edge is no longer valid.

// Remove edge e1
opg.removeEdge(e1);

// Remove vertex v1
opg.removeVertex(v1);

The OraclePropertyGraph.clearRepository method can be used to remove all contents from an OraclePropertyGraph instance. However, use it with care because this action cannot be reversed.
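The cascading behavior described in this section, where removing a vertex also removes its adjacent edges, can be sketched with a small in-memory model. TinyGraph is a hypothetical class written only for illustration; it is not how the property graph is stored in the database.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical in-memory model showing cascading edge removal.
final class TinyGraph {
    private final Set<Long> vertices = new HashSet<>();
    // Edge ID mapped to {outgoing vertex ID, incoming vertex ID}
    private final Map<Long, long[]> edges = new HashMap<>();

    void addVertex(long id) {
        vertices.add(id);
    }

    void addEdge(long id, long outVertex, long inVertex) {
        if (!vertices.contains(outVertex) || !vertices.contains(inVertex)) {
            throw new IllegalArgumentException("Both endpoints must exist");
        }
        edges.put(id, new long[] {outVertex, inVertex});
    }

    void removeEdge(long id) {
        edges.remove(id);
    }

    // Removes the vertex and every edge that starts or ends at it
    void removeVertex(long id) {
        edges.values().removeIf(ends -> ends[0] == id || ends[1] == id);
        vertices.remove(id);
    }

    int vertexCount() {
        return vertices.size();
    }

    int edgeCount() {
        return edges.size();
    }
}
```

In this model, removing vertex 1 from a graph with edges 1 to 2 and 2 to 3 leaves two vertices and only the edge from 2 to 3.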

4.4.7 Reading a Graph from a Database into an Embedded In-Memory Analyst

You can read a graph from Apache HBase or Oracle NoSQL Database into an in-memory analyst that is embedded in the same client Java application (a single JVM). For the following Apache HBase example:

-

A correct java.io.tmpdir setting is required.

-

dop + 2 is a workaround for a performance issue before Release 1.1.2. Effective with Release 1.1.2, you can instead specify a dop value directly in the configuration settings.

int dop = 8; // need customization

Map<PgxConfig.Field, Object> confPgx = new HashMap<PgxConfig.Field, Object>();

confPgx.put(PgxConfig.Field.ENABLE_GM_COMPILER, false);

confPgx.put(PgxConfig.Field.NUM_WORKERS_IO, dop + 2); // use dop directly with release 1.1.2 or newer

confPgx.put(PgxConfig.Field.NUM_WORKERS_ANALYSIS, dop); // <= # of physical cores

confPgx.put(PgxConfig.Field.NUM_WORKERS_FAST_TRACK_ANALYSIS, 2);

confPgx.put(PgxConfig.Field.SESSION_TASK_TIMEOUT_SECS, 0); // no timeout set

confPgx.put(PgxConfig.Field.SESSION_IDLE_TIMEOUT_SECS, 0); // no timeout set

PgHbaseGraphConfig cfg = GraphConfigBuilder.forPropertyGraphHbase()

.setName("mygraph")

.setZkQuorum("localhost") // quorum, need customization

.setZkClientPort(2181)

.addNodeProperty("name", PropertyType.STRING, "default_name")

.build();

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(cfg);

ServerInstance localInstance = Pgx.getInstance();

localInstance.startEngine(confPgx);

PgxSession session = localInstance.createSession("session-id-1"); // Put your session description here.

Analyst analyst = session.createAnalyst();

// The following call will trigger a read of graph data from the database

PgxGraph pgxGraph = session.readGraphWithProperties(opg.getConfig());

long triangles = analyst.countTriangles(pgxGraph, false);

System.out.println("triangles " + triangles);


4.4.8 Building an In-Memory Graph

In addition to Reading Graph Data into Memory, you can create an in-memory graph programmatically. This can simplify development when the graph is small or when the content of the graph is highly dynamic. The key Java class is GraphBuilder, which can accumulate a set of vertices and edges added with the addVertex and addEdge APIs. After all changes are made, an in-memory graph instance (PgxGraph) can be created by the GraphBuilder.

The following Java code snippet illustrates a graph construction flow. Note that there are no explicit calls to addVertex, because any vertex that does not already exist will be added dynamically as its adjacent edges are created.

import oracle.pgx.api.*;

PgxSession session = Pgx.createSession("example");

GraphBuilder<Integer> builder = session.newGraphBuilder();

builder.addEdge(0, 1, 2);

builder.addEdge(1, 2, 3);

builder.addEdge(2, 2, 4);

builder.addEdge(3, 3, 4);

builder.addEdge(4, 4, 2);

PgxGraph graph = builder.build();
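To see which vertices the edge list above implies, the following minimal sketch collects the distinct endpoints of the same five edges in plain Java. The class name ImpliedVertices is hypothetical, and this only models the idea that endpoints are created on demand; it does not use the GraphBuilder API.

```java
import java.util.Set;
import java.util.TreeSet;

// Hypothetical illustration: derive the vertex set implied by an edge list.
final class ImpliedVertices {
    private ImpliedVertices() {}

    // Each entry is {source vertex ID, destination vertex ID}
    static Set<Integer> fromEdges(int[][] endpoints) {
        Set<Integer> ids = new TreeSet<>();
        for (int[] edge : endpoints) {
            ids.add(edge[0]);
            ids.add(edge[1]);
        }
        return ids;
    }
}
```

For the endpoints (1,2), (2,3), (2,4), (3,4), and (4,2), the implied vertex set is 1, 2, 3, and 4, so the resulting graph has four vertices and five edges.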

To construct a graph with vertex properties, you can use setProperty against the vertex objects created.

PgxSession session = Pgx.createSession("example");

GraphBuilder<Integer> builder = session.newGraphBuilder();

builder.addVertex(1).setProperty("double-prop", 0.1);

builder.addVertex(2).setProperty("double-prop", 2.0);

builder.addVertex(3).setProperty("double-prop", 0.3);

builder.addVertex(4).setProperty("double-prop", 4.56789);

builder.addEdge(0, 1, 2);

builder.addEdge(1, 2, 3);

builder.addEdge(2, 2, 4);

builder.addEdge(3, 3, 4);

builder.addEdge(4, 4, 2);

PgxGraph graph = builder.build();

To use long integers as vertex and edge identifiers, specify IdType.LONG when getting a new instance of GraphBuilder. For example:

import oracle.pgx.common.types.IdType;

GraphBuilder<Long> builder = session.newGraphBuilder(IdType.LONG);

During edge construction, you can pass previously created vertex objects directly to addEdge instead of vertex identifiers.

VertexBuilder<Long> v1 = builder.addVertex(1l).setProperty("double-prop", 0.5);

VertexBuilder<Long> v2 = builder.addVertex(2l).setProperty("double-prop", 2.0);

builder.addEdge(0, v1, v2);

As with vertices, edges can have properties. The following example sets the edge label by using setLabel:

builder.addEdge(4, v4, v2).setProperty("edge-prop", "edge_prop_4_2").setLabel("label");

4.4.9 Dropping a Property Graph

To drop a property graph from the database, use the OraclePropertyGraphUtils.dropPropertyGraph method. This method has two parameters, the connection information and the graph name.

The format of the connection information depends on whether you use HBase or Oracle NoSQL Database as the backend database. It is the same as the connection information you provide to OraclePropertyGraph.getInstance.

4.4.9.1 Using Oracle NoSQL Database

For Oracle NoSQL Database, the OraclePropertyGraphUtils.dropPropertyGraph method uses the KV store name, host computer name, and port number for the connection. This code fragment deletes a graph named my_graph from Oracle NoSQL Database.

String kvHostPort = "cluster02:5000";
String kvStoreName = "kvstore";
String kvGraphName = "my_graph";

// Use NoSQL Java API
KVStoreConfig kvconfig = new KVStoreConfig(kvStoreName, kvHostPort);

// Drop the graph
OraclePropertyGraphUtils.dropPropertyGraph(kvconfig, kvGraphName);

4.4.9.2 Using Apache HBase

For Apache HBase, the OraclePropertyGraphUtils.dropPropertyGraph method uses the Hadoop nodes and the Apache HBase port number for the connection. This code fragment deletes a graph named my_graph from Apache HBase.

String hbQuorum = "bda01node01.example.com, bda01node02.example.com, bda01node03.example.com";

String hbClientPort = "2181";

String hbGraphName = "my_graph";

// Use HBase Java APIs

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum", hbQuorum);

conf.set("hbase.zookeeper.property.clientPort", hbClientPort);

// Drop the graph

OraclePropertyGraphUtils.dropPropertyGraph(conf, hbGraphName);

4.5 Managing Text Indexing for Property Graph Data

Indexes in Oracle Big Data Spatial and Graph allow fast retrieval of elements by a particular key/value or key/text pair. These indexes are created based on an element type (vertices or edges), a set of keys (and values), and an index type.

Two types of indexing structures are supported by Oracle Big Data Spatial and Graph: manual and automatic.

-

Automatic text indexes provide automatic indexing of vertices or edges by a set of property keys. Their main purpose is to enhance query performance on vertices and edges based on particular key/value pairs.

-

Manual text indexes enable you to define multiple indexes over a designated set of vertices and edges of a property graph. You must specify what graph elements go into the index.

Oracle Big Data Spatial and Graph provides APIs to create manual and automatic text indexes over property graphs for Oracle NoSQL Database and Apache HBase. Indexes are managed using the available search engines, Apache Lucene and SolrCloud. The rest of this section focuses on how to create text indexes using the property graph capabilities of the Data Access Layer.

-

Using Automatic Indexes with the Apache Lucene Search Engine

-

Uploading a Collection's SolrCloud Configuration to Zookeeper

-

Updating Configuration Settings on Text Indexes for Property Graph Data

-

Using Parallel Query on Text Indexes for Property Graph Data

-

Using Native Query Objects on Text Indexes for Property Graph Data

4.5.1 Using Automatic Indexes with the Apache Lucene Search Engine

The supplied examples ExampleNoSQL6 and ExampleHBase6 create a property graph from an input file, create an automatic text index on vertices, and execute some text search queries using Apache Lucene.

The following code fragment creates an automatic index over an existing property graph's vertices with these property keys: name, role, religion, and country. The automatic text index will be stored under four subdirectories under the /home/data/text-index directory. Apache Lucene data types handling is enabled. This example uses a DOP (parallelism) of 4 for re-indexing tasks.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(

args, szGraphName);

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// Do a parallel data loading

OraclePropertyGraphDataLoader opgdl =

OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Create an automatic index using Apache Lucene engine.

// Specify Index Directory parameters (number of directories,

// number of connections to database, batch size, commit size,

// enable datatypes, location)

OracleIndexParameters indexParams =

OracleIndexParameters.buildFS(4, 4, 10000, 50000, true,

"/home/data/text-index");

opg.setDefaultIndexParameters(indexParams);

// specify indexed keys

String[] indexedKeys = new String[4];

indexedKeys[0] = "name";

indexedKeys[1] = "role";

indexedKeys[2] = "religion";

indexedKeys[3] = "country";

// Create auto indexing on above properties for all vertices

opg.createKeyIndex(indexedKeys, Vertex.class);

By default, indexes are configured based on the OracleIndexParameters associated with the property graph using the method opg.setDefaultIndexParameters(indexParams).

Indexes can also be created by specifying a different set of parameters. This is shown in the following code snippet.

// Create an OracleIndexParameters object to get Index configuration (search engine, etc).

OracleIndexParameters indexParams = OracleIndexParameters.buildFS(args);

// Create auto indexing on above properties for all vertices

opg.createKeyIndex("name", Vertex.class, indexParams.getParameters());

The code fragment in the next example executes a query over all vertices to find all matching vertices with the key/value pair name:Barack Obama. This operation will execute a lookup into the text index.

Additionally, wildcard searches are supported by specifying the parameter useWildCards in the getVertices API call. Wildcard search is only supported when automatic indexes are enabled for the specified property key. For details on text search syntax using Apache Lucene, see https://lucene.apache.org/core/2_9_4/queryparsersyntax.html.

// Find all vertices with name Barack Obama.

Iterator<Vertex> vertices = opg.getVertices("name", "Barack Obama").iterator();

System.out.println("----- Vertices with name Barack Obama -----");

int countV = 0;

while (vertices.hasNext()) {

System.out.println(vertices.next());

countV++;

}

System.out.println("Vertices found: " + countV);

// Find all vertices with name including keyword "Obama"

// Wildcard searching is supported.

boolean useWildcard = true;

vertices = opg.getVertices("name", "*Obama*", useWildcard).iterator();

System.out.println("----- Vertices with name *Obama* -----");

countV = 0;

while (vertices.hasNext()) {

System.out.println(vertices.next());

countV++;

}

System.out.println("Vertices found: " + countV);

The preceding code example produces output like the following:

----- Vertices with name Barack Obama -----

Vertex ID 1 {name:str:Barack Obama, role:str:political authority, occupation:str:44th president of United States of America, country:str:United States, political party:str:Democratic, religion:str:Christianity}

Vertices found: 1

----- Vertices with name *Obama* -----

Vertex ID 1 {name:str:Barack Obama, role:str:political authority, occupation:str:44th president of United States of America, country:str:United States, political party:str:Democratic, religion:str:Christianity}

Vertices found: 1
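The effect of a wildcard expression such as *Obama* can be illustrated without a search engine. The following minimal sketch translates the * (any sequence of characters) and ? (any single character) wildcards into a regular expression and matches it against a whole value. The class name WildcardMatcher is hypothetical. This is not how Apache Lucene evaluates queries: Lucene matches against analyzed index terms, so real results can differ in case handling and tokenization.

```java
import java.util.regex.Pattern;

// Hypothetical illustration of wildcard semantics using java.util.regex.
final class WildcardMatcher {
    private WildcardMatcher() {}

    // Converts '*' to ".*" and '?' to "." and quotes everything else literally
    static Pattern compile(String wildcard) {
        StringBuilder regex = new StringBuilder();
        StringBuilder literal = new StringBuilder();
        for (char c : wildcard.toCharArray()) {
            if (c == '*' || c == '?') {
                if (literal.length() > 0) {
                    regex.append(Pattern.quote(literal.toString()));
                    literal.setLength(0);
                }
                regex.append(c == '*' ? ".*" : ".");
            } else {
                literal.append(c);
            }
        }
        if (literal.length() > 0) {
            regex.append(Pattern.quote(literal.toString()));
        }
        return Pattern.compile(regex.toString());
    }

    // True if the entire value matches the wildcard expression
    static boolean matches(String wildcard, String value) {
        return compile(wildcard).matcher(value).matches();
    }
}
```

Under these semantics, *Obama* matches the value Barack Obama, whereas the expression Obama without wildcards does not match the whole value.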


4.5.2 Using Manual Indexes with the SolrCloud Search Engine

The supplied examples ExampleNoSQL7 and ExampleHBase7 create a property graph from an input file, create a manual text index on edges, put some data into the index, and execute some text search queries using Apache SolrCloud.

When using SolrCloud, you must first load a collection's configuration for the text indexes into Apache Zookeeper, as described in Uploading a Collection's SolrCloud Configuration to Zookeeper.

The following code fragment creates a manual text index over an existing property graph using four shards, one shard per node, and a replication factor of 1. The number of shards corresponds to the number of nodes in the SolrCloud cluster.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(args,

szGraphName);

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// Do a parallel data loading

OraclePropertyGraphDataLoader opgdl =

OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Create a manual text index using SolrCloud

// Specify Index Directory parameters: configuration name, Solr Server URL, Solr Node set,

// replication factor, zookeeper timeout (secs),

// maximum number of shards per node,

// number of connections to database, batch size, commit size,

// write timeout (in secs)

String configName = "opgconfig";

String solrServerUrl = "nodea:2181/solr";

String solrNodeSet = "nodea:8983_solr,nodeb:8983_solr," +

"nodec:8983_solr,noded:8983_solr";

int zkTimeout = 15;

int numShards = 4;

int replicationFactor = 1;

int maxShardsPerNode = 1;

OracleIndexParameters indexParams =

OracleIndexParameters.buildSolr(configName,

solrServerUrl,

solrNodeSet,

zkTimeout,

numShards,

replicationFactor,

maxShardsPerNode,

4,

10000,

500000,

15);

opg.setDefaultIndexParameters(indexParams);

// Create a manual index named myIdx over edges

OracleIndex<Edge> index = ((OracleIndex<Edge>) opg.createIndex("myIdx", Edge.class));

Vertex v1 = opg.getVertices("name", "Barack Obama").iterator().next();

Iterator<Edge> edges

= v1.getEdges(Direction.OUT, "collaborates").iterator();

while (edges.hasNext()) {

Edge edge = edges.next();

Vertex vIn = edge.getVertex(Direction.IN);

index.put("collaboratesWith", vIn.getProperty("name"), edge);

}
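The shard settings in this example (four shards, replication factor 1, one shard per node, four nodes) fit together because the total number of shard replicas does not exceed the capacity of the cluster. The following minimal sketch checks that relationship: numShards times replicationFactor must be at most maxShardsPerNode times the number of nodes. The class name ShardPlan is hypothetical, and the check reflects the general SolrCloud collection-creation constraint as understood here; verify it against the documentation for your SolrCloud version.

```java
// Hypothetical sanity check for SolrCloud shard settings.
final class ShardPlan {
    private ShardPlan() {}

    // Counts the non-empty entries in a comma-separated node set
    static int nodeCount(String solrNodeSet) {
        int count = 0;
        for (String node : solrNodeSet.split(",")) {
            if (!node.trim().isEmpty()) {
                count++;
            }
        }
        return count;
    }

    // True if all shard replicas can be placed on the listed nodes
    static boolean fits(int numShards, int replicationFactor,
                        int maxShardsPerNode, String solrNodeSet) {
        long replicasNeeded = (long) numShards * replicationFactor;
        long capacity = (long) maxShardsPerNode * nodeCount(solrNodeSet);
        return replicasNeeded <= capacity;
    }
}
```

With the values from the fragment above, four replicas are needed and the four nodes offer a capacity of four, so the settings fit. Raising the replication factor to 2 without changing the other values would not fit.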

The next code fragment executes a query over the manual index to get all edges with the key/value pair collaboratesWith:Beyonce. Additionally, wildcard searches are supported by specifying the parameter useWildCards in the get API call.

// Find all edges with collaboratesWith Beyonce.

// Wildcard searching is supported using true parameter.

edges = index.get("collaboratesWith", "Beyonce").iterator();

System.out.println("----- Edges with name Beyonce -----");

int countE = 0;

while (edges.hasNext()) {

System.out.println(edges.next());

countE++;

}

System.out.println("Edges found: "+ countE);

// Find all edges with collaboratesWith including Bey*.

// Wildcard searching is supported using true parameter.

edges = index.get("collaboratesWith", "*Bey*", true).iterator();

System.out.println("----- Edges with collaboratesWith Bey* -----");

countE = 0;

while (edges.hasNext()) {

System.out.println(edges.next());

countE++;

}

System.out.println("Edges found: " + countE);

The preceding code example produces output like the following:

----- Edges with name Beyonce -----

Edge ID 1000 from Vertex ID 1 {country:str:United States, name:str:Barack Obama, occupation:str:44th president of United States of America, political party:str:Democratic, religion:str:Christianity, role:str:political authority} =[collaborates]=> Vertex ID 2 {country:str:United States, music genre:str:pop soul , name:str:Beyonce, role:str:singer actress} edgeKV[{weight:flo:1.0}]

Edges found: 1

----- Edges with collaboratesWith Bey* -----

Edge ID 1000 from Vertex ID 1 {country:str:United States, name:str:Barack Obama, occupation:str:44th president of United States of America, political party:str:Democratic, religion:str:Christianity, role:str:political authority} =[collaborates]=> Vertex ID 2 {country:str:United States, music genre:str:pop soul , name:str:Beyonce, role:str:singer actress} edgeKV[{weight:flo:1.0}]

Edges found: 1
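The difference between the two get calls above can be pictured with a small, self-contained sketch. It does not use the Oracle or Lucene APIs: WildcardSketch, toPattern, and filter are hypothetical names, the sample values are made up, and the code models only the general idea that * stands for any run of characters. A real text index may also tokenize or normalize values, which this sketch ignores.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

// Illustrative only: models "*" wildcard matching over plain strings.
final class WildcardSketch {

    // Translate a wildcard expression into a regular expression:
    // '*' matches any run of characters, everything else is literal.
    static Pattern toPattern(String wildcardExpr) {
        StringBuilder regex = new StringBuilder();
        for (char c : wildcardExpr.toCharArray()) {
            if (c == '*') {
                regex.append(".*");
            } else {
                regex.append(Pattern.quote(String.valueOf(c)));
            }
        }
        return Pattern.compile(regex.toString());
    }

    // Return the values that match expr, either literally or as a wildcard
    // expression, depending on the useWildcards flag.
    static List<String> filter(List<String> values, String expr, boolean useWildcards) {
        Pattern pattern = useWildcards ? toPattern(expr) : null;
        List<String> hits = new ArrayList<>();
        for (String value : values) {
            boolean match = (pattern != null)
                    ? pattern.matcher(value).matches()
                    : value.equals(expr);
            if (match) {
                hits.add(value);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        // Hypothetical sample values, not taken from the connections data set.
        List<String> names = Arrays.asList("Beyonce", "Barack Obama", "Bey Logan");
        System.out.println(filter(names, "Beyonce", false)); // [Beyonce]
        System.out.println(filter(names, "*Bey*", true));    // [Beyonce, Bey Logan]
        System.out.println(filter(names, "*Bey*", false));   // []
    }
}
```

The last call shows why the flag matters in this model: without it, the expression *Bey* is compared as a literal string and matches nothing.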

4.5.3 Handling Data Types

Oracle's property graph support indexes and stores an element's key/value pairs based on the value data type. The main purpose of handling data types is to provide extensive query support, such as numeric and date range queries.

By default, a search over a specific key/value pair is matched against the query expression based on the value's data type. For example, to find vertices with the key/value pair age:30, a query is executed over all age fields with the data type integer. If the value is a query expression, you can also specify the data type class of the value to find by calling the API get(String key, Object value, Class dtClass, Boolean useWildcards). If no data type is specified, the query expression is matched against all possible data types.
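The matching rule in this paragraph can be illustrated with a minimal in-memory sketch. It is not how the text index is implemented: TypedLookupSketch, Entry, findByValue, and findByExpression are hypothetical names, and the value's Java class simply stands in for the data type recorded by the index.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Illustrative only: a tiny in-memory stand-in for type-aware lookups.
final class TypedLookupSketch {

    // One indexed key/value pair; the value's runtime class plays the
    // role of the data type recorded by the index.
    static final class Entry {
        final String key;
        final Object value;

        Entry(String key, Object value) {
            this.key = key;
            this.value = value;
        }
    }

    // Match a query expression against values stored under key.
    // A null dtClass means "try every data type".
    static List<Object> findByExpression(List<Entry> entries, String key,
                                         String expr, Class<?> dtClass) {
        List<Object> hits = new ArrayList<>();
        for (Entry e : entries) {
            boolean typeMatches = dtClass == null || dtClass.isInstance(e.value);
            if (e.key.equals(key) && typeMatches
                    && String.valueOf(e.value).equals(expr)) {
                hits.add(e.value);
            }
        }
        return hits;
    }

    // Match a typed value: only entries of the value's own type are considered.
    static List<Object> findByValue(List<Entry> entries, String key, Object value) {
        return findByExpression(entries, key, String.valueOf(value), value.getClass());
    }

    public static void main(String[] args) {
        List<Entry> entries = Arrays.asList(
                new Entry("age", 30),     // Integer
                new Entry("age", "30"),   // String
                new Entry("age", 30.0f)); // Float
        System.out.println(findByValue(entries, "age", 30).size());                       // 1
        System.out.println(findByExpression(entries, "age", "30", null).size());          // 2
        System.out.println(findByExpression(entries, "age", "30", String.class).size());  // 1
    }
}
```

In this model, a lookup for the integer 30 sees only the integer entry, while the untyped expression "30" matches both the integer and the string entry. Naming the data type class narrows the search in the same way.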

When a query uses Boolean operators, you must append the data type's prefix or suffix to each subsequent key/value pair so that the query can find proper matches. The following topics describe how to append this prefix or suffix for Apache Lucene and SolrCloud.

4.5.3.1 Appending Data Type Identifiers on Apache Lucene

When Lucene's data type handling is enabled, you must append the proper data type identifier as a suffix to the key in the query expression. This can be done by calling String.concat() on the key. If Lucene's data type handling is disabled, you must insert the data type identifier as a prefix in the value String. Table 4-1 shows the data type identifiers available for text indexing using Apache Lucene (see also the Javadoc for LuceneIndex).

Table 4-1 Apache Lucene Data Type Identifiers

| Lucene Data Type Identifier | Description |
|---|---|
| TYPE_DT_STRING | String |
| TYPE_DT_BOOL | Boolean |
| TYPE_DT_DATE | Date |
| TYPE_DT_FLOAT | Float |
| TYPE_DT_DOUBLE | Double |
| TYPE_DT_INTEGER | Integer |
| TYPE_DT_SERIALIZABLE | Serializable |

The following code fragment creates a manual index on edges using Lucene's data type handling, adds data, and later executes a query over the manual index to get all edges with the key/value pair collaboratesWith:Beyonce AND country1:United* using wildcards.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(args,

szGraphName);

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// Do a parallel data loading

OraclePropertyGraphDataLoader opgdl =

OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Specify Index Directory parameters (number of directories,

// number of connections to database, batch size, commit size,

// enable datatypes, location)

OracleIndexParameters indexParams =

OracleIndexParameters.buildFS(4, 4, 10000, 50000, true,

"/home/data/text-index");

opg.setDefaultIndexParameters(indexParams);

// Create a manual index named myIdx for edges

OracleIndex<Edge> index = ((OracleIndex<Edge>) opg.createIndex("myIdx", Edge.class));

Vertex v1 = opg.getVertices("name", "Barack Obama").iterator().next();

Iterator<Edge> edges

= v1.getEdges(Direction.OUT, "collaborates").iterator();

while (edges.hasNext()) {

Edge edge = edges.next();

Vertex vIn = edge.getVertex(Direction.IN);

index.put("collaboratesWith", vIn.getProperty("name"), edge);

index.put("country", vIn.getProperty("country"), edge);

}

// Wildcard searching is supported using true parameter.

String key = "country";

key = key.concat(String.valueOf(oracle.pg.text.lucene.LuceneIndex.TYPE_DT_STRING));

String queryExpr = "Beyonce AND " + key + ":United*";

edges = index.get("collaboratesWith", queryExpr, true /*UseWildcard*/).iterator();

System.out.println("----- Edges with query: " + queryExpr + " -----");

countE = 0;

while (edges.hasNext()) {

System.out.println(edges.next());

countE++;

}

System.out.println("Edges found: "+ countE);

The preceding code example might produce output like the following:

----- Edges with query: Beyonce AND country1:United* -----

Edge ID 1000 from Vertex ID 1 {country:str:United States, name:str:Barack Obama, occupation:str:44th president of United States of America, political party:str:Democratic, religion:str:Christianity, role:str:political authority} =[collaborates]=> Vertex ID 2 {country:str:United States, music genre:str:pop soul , name:str:Beyonce, role:str:singer actress} edgeKV[{weight:flo:1.0}]

Edges found: 1

The following code fragment creates an automatic index on vertices, disables Lucene's data type handling, adds data, and later executes a query over the automatic index to get all vertices with the key/value pair country:United* AND role:1*political* using wildcards.

OraclePropertyGraph opg = OraclePropertyGraph.getInstance(args,

szGraphName);

String szOPVFile = "../../data/connections.opv";

String szOPEFile = "../../data/connections.ope";

// Do a parallel data loading

OraclePropertyGraphDataLoader opgdl =

OraclePropertyGraphDataLoader.getInstance();

opgdl.loadData(opg, szOPVFile, szOPEFile, dop);

// Create an automatic index using Apache Lucene engine.

// Specify Index Directory parameters (number of directories,

// number of connections to database, batch size, commit size,

// enable datatypes, location)

OracleIndexParameters indexParams =

OracleIndexParameters.buildFS(4, 4, 10000, 50000, false, "/home/data/text-index");

opg.setDefaultIndexParameters(indexParams);

// specify indexed keys

String[] indexedKeys = new String[4];

indexedKeys[0] = "name";

indexedKeys[1] = "role";

indexedKeys[2] = "religion";

indexedKeys[3] = "country";

// Create auto indexing on above properties for all vertices

opg.createKeyIndex(indexedKeys, Vertex.class);

// Wildcard searching is supported using true parameter.

String value = "*political*";

value = String.valueOf(LuceneIndex.TYPE_DT_STRING) + value;

String queryExpr = "United* AND role:" + value;

vertices = opg.getVertices("country", queryExpr, true /*useWildcard*/).iterator();

System.out.println("----- Vertices with query: " + queryExpr + " -----");

countV = 0;

while (vertices.hasNext()) {

System.out.println(vertices.next());

countV++;

}

System.out.println("Vertices found: " + countV);

The preceding code example might produce output like the following:

----- Vertices with query: United* AND role:1*political* -----

Vertex ID 30 {name:str:Jerry Brown, role:str:political authority, occupation:str:34th and 39th governor of California, country:str:United States, political party:str:Democratic, religion:str:roman catholicism}

Vertex ID 24 {name:str:Edward Snowden, role:str:political authority, occupation:str:system administrator, country:str:United States, religion:str:buddhism}

Vertex ID 22 {name:str:John Kerry, role:str:political authority, country:str:United States, political party:str:Democratic, occupation:str:68th United States Secretary of State, religion:str:Catholicism}

Vertex ID 21 {name:str:Hillary Clinton, role:str:political authority, country:str:United States, political party:str:Democratic, occupation:str:67th United States Secretary of State, religion:str:Methodism}

Vertex ID 19 {name:str:Kirsten Gillibrand, role:str:political authority, country:str:United States, political party:str:Democratic, occupation:str:junior United States Senator from New York, religion:str:Methodism}

Vertex ID 13 {name:str:Ertharin Cousin, role:str:political authority, country:str:United States, political party:str:Democratic}

Vertex ID 11 {name:str:Eric Holder, role:str:political authority, country:str:United States, political party:str:Democratic, occupation:str:United States Deputy Attorney General}

Vertex ID 1 {name:str:Barack Obama, role:str:political authority, occupation:str:44th president of United States of America, country:str:United States, political party:str:Democratic, religion:str:Christianity}

Vertices found: 8

Additionally, Oracle Big Data Spatial and Graph provides a set of utilities to help users write their own Lucene text search queries using the query syntax and data type identifiers required by the automatic and manual text indexes. The method buildSearchTerm(key, value, dtClass) in LuceneIndex creates a query expression of the form field:query_expr by adding the data type identifier to the key (or value) and transforming the value into the required string representation based on the given data type and Apache Lucene's data type handling configuration.

The following code fragment uses the buildSearchTerm method to produce a query expression country1:United* (if Lucene's data type handling is enabled), or country:1United* (if Lucene's data type handling is disabled) used in the previous examples:

String szQueryStrCountry = index.buildSearchTerm("country",

"United*", String.class);

To work with the key and value as individual objects, for example to construct a different Lucene Query such as a WildcardQuery using the required syntax, use the methods appendDatatypesSuffixToKey(key, dtClass) and appendDatatypesSuffixToValue(value, dtClass) in LuceneIndex. These methods append the appropriate data type identifiers and transform the value into the required Lucene string representation based on the given data type.

The following code fragment uses the appendDatatypesSuffixToKey method to generate the field name required in a Lucene text query. If Lucene's data type handling is enabled, the returned string is the key with the String data type identifier appended as a suffix (country1). In any other case, the returned string is the original key (country).

String key = index.appendDatatypesSuffixToKey("country", String.class);

The next code fragment uses the appendDatatypesSuffixToValue method to generate the query body expression required in a Lucene text query. If Lucene's data type handling is disabled, the returned string is the value with the String data type identifier inserted as a prefix (1United*). In all other cases, the returned string is the string representation of the value (United*).

String value = index.appendDatatypesSuffixToValue("United*", String.class);
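The suffix and prefix rules described above can be summarized in a plain-Java sketch that needs no Oracle or Lucene classes. SearchTermSketch and its methods are hypothetical stand-ins, not the LuceneIndex implementation. The only identifier value assumed is "1" for String, as it appears in the country1 and 1United* examples in this section. The sketch handles string values only and does not model the value transformations that the real methods perform for other data types.

```java
// Illustrative only: models where the data type identifier is placed.
final class SearchTermSketch {

    // Assumption: the String identifier renders as "1", as in the
    // country1 and 1United* examples in this section.
    static final String STRING_TYPE_ID = "1";

    // Data type handling enabled: the identifier is a suffix on the key.
    // Disabled: the key is left unchanged.
    static String keyWithType(String key, String typeId, boolean dataTypesEnabled) {
        return dataTypesEnabled ? key + typeId : key;
    }

    // Data type handling disabled: the identifier is a prefix on the value.
    // Enabled: the value is left unchanged.
    static String valueWithType(String value, String typeId, boolean dataTypesEnabled) {
        return dataTypesEnabled ? value : typeId + value;
    }

    // Combine both parts into an expression of the form field:query_expr.
    static String searchTerm(String key, String value, String typeId,
                             boolean dataTypesEnabled) {
        return keyWithType(key, typeId, dataTypesEnabled)
                + ":" + valueWithType(value, typeId, dataTypesEnabled);
    }

    public static void main(String[] args) {
        // Prints country1:United*
        System.out.println(searchTerm("country", "United*", STRING_TYPE_ID, true));
        // Prints country:1United*
        System.out.println(searchTerm("country", "United*", STRING_TYPE_ID, false));
        // Prints Beyonce AND country1:United*
        System.out.println("Beyonce AND "
                + searchTerm("country", "United*", STRING_TYPE_ID, true));
    }
}
```

Keeping the key and value helpers separate mirrors the split between the two append methods, so a caller can reuse either part when building a different kind of query.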

LuceneIndex also supports generating a Term object using the method buildSearchTermObject(key, value, dtClass). Term objects are commonly used by different types of Lucene Query objects to constrain the fields and values of the documents to be retrieved. The following code fragment shows how to create a WildcardQuery object using the buildSearchTermObject method.

Term term = index.buildSearchTermObject("country", "United*", String.class);

Query query = new WildcardQuery(term);

4.5.3.2 Appending Data Type Identifiers on SolrCloud