Release 2 (9.2)

Part Number A96571-01

Home |

Book List |

Contents |

Index |

Master Index |

Feedback |

| Oracle9i Streams Release 2 (9.2) Part Number A96571-01 |

|

This chapter briefly describes the basic concepts and terminology related to Oracle Streams. These concepts are described in more detail in other chapters in this book.

This chapter contains these topics:

Oracle Streams enables the sharing of data and events in a data stream, either within a database or from one database to another. The stream routes specified information to specified destinations. The result is a new feature that provides greater functionality and flexibility than traditional solutions for capturing and managing events, and sharing the events with other databases and applications. Streams allows customers to break the cycle of trading off one solution for another. Oracle Streams provides the capabilities needed to build and operate distributed enterprises and applications, data warehouses, and high availability solutions. You can use all the capabilities of Oracle Streams at the same time. If your needs change, you can implement a new capability of Streams without sacrificing existing capabilities.

Using Oracle Streams, you control what information is put into a stream, how the stream flows or is routed from database to database, what happens to events in the stream as they flow into each database, and how the stream terminates. By configuring specific capabilities of Streams, you can address specific requirements. Based on your specifications, Streams can capture and manage events in the database automatically, including, but not limited to, data manipulation language (DML) changes and data definition language (DDL) changes. You can also put user-defined events into a stream. Then, Streams can propagate the information to other databases or applications automatically. Again, based on your specifications, Streams can apply events at a destination database. Figure 1-1 shows the Streams information flow.

You can use Streams to:

You can configure a background capture process to capture changes made to tables, schemas, or the entire database. A capture process captures changes from the redo log and formats each captured change into a logical change record (LCR). The database where changes are generated in the redo log is called the source database.

A capture process enqueues LCR events into a queue that you specify. The queue can then share the LCR events within the same database or with other databases.

You can also enqueue user events explicitly with a user application. These explicitly enqueued events can be LCRs or user messages.

A background apply process can dequeue events. You can also dequeue events explicitly with a user application.

You can configure an apply process to apply all of the events in a queue or only the events that you specify. You can also configure an apply process to call your own PL/SQL subprograms to process events.

The database where LCR events are applied and other types of events are processed is called the destination database. In some configurations, the source database and the destination database may be the same.

Other capabilities of Streams include the following:

These capabilities are discussed briefly later in this chapter and in detail later in this document.

The following sections briefly describe some of the reasons for using Streams.

Streams allows user applications to enqueue messages of different types, propagate the messages to subscribing queues, notify user applications that messages are ready for consumption, and dequeue messages at the destination database. Streams introduces a new type of queue that stages messages of SYS.AnyData type. Messages of almost any type can be wrapped in a SYS.AnyData wrapper and staged in SYS.AnyData queues. Streams interoperates with Advanced Queuing (AQ), which supports all the standard features of message queuing systems, including multi-consumer queues, publishing and subscribing, content-based routing, internet propagation, transformations, and gateways to other messaging subsystems.

| See Also:

Oracle9i Application Developer's Guide - Advanced Queuing for more information about AQ |

Streams can efficiently capture DML and DDL changes made to database objects and replicate those changes to one or more other databases. The Streams capture process captures changes made to source database objects and formats them into LCRs, which can be propagated to destination databases and then applied by the Streams apply process.

The destination databases can allow DML and DDL changes to the same database objects, and these changes may or may not be propagated to the other databases in the environment. In other words, you can configure a Streams environment with one database that propagates changes, or you can configure an environment where changes are propagated between databases bidirectionally. Also, the tables for which data is shared need not be identical copies at all databases. Both the structure and the contents of these tables can differ at different databases, and the information in these tables can be shared between these databases.

Data warehouse loading is a special case of data replication. Some of the most critical tasks in creating and maintaining a data warehouse include refreshing existing data, and adding new data from the operational databases. Streams can capture changes made to a production system and send those changes to a staging database or directly to a data warehouse or operational data store. Streams capture of redo log information avoids unnecessary overhead on the production systems. Support for data transformations and user-defined apply procedures allows the necessary flexibility to reformat data or update warehouse-specific data fields as data is loaded.

| See Also:

Oracle9i Data Warehousing Guide for more information about data warehouses |

One solution for data protection is to create a local or remote copy of a production database. In the event of human error or a catastrophe, the copy can be used to resume processing. Oracle Data Guard, a data protection feature built on Streams, can be used to create and maintain a a logical standby database, which is a logically equivalent standby copy of a production database. As in the case of Streams replication, a capture process captures changes in the redo log and formats these changes into LCRs. These LCRs are applied at the standby databases. The standby databases are fully open for read/write and may include specialized indexes or other database objects. Therefore, these standby databases can be queried as updates are applied, making Oracle Data Guard a good solution for off loading latency sensitive queries from a production database.

The most notable difference between a logical standby database and a Streams data replication environment is where the changes are captured. It is important to move the updates to the remote site as soon as possible with a logical standby database. Doing so ensures that, in the event of a failure, the exposure to lost transactions is minimal. By directly and synchronously writing the redo logs at the remote database, you can achieve no data loss in the event of a disaster. At the standby system, the changes are captured and directly applied to the standby database with an apply process.

| See Also:

Oracle9i Data Guard Concepts and Administration for more information about logical standby databases |

Changes made to database objects in an Oracle database are logged in the redo log to guarantee recoverability in the event of user error or media failure. A capture process is an Oracle background process that reads the database redo log to capture DML and DDL changes made to database objects. A capture process formats these changes into events called LCRs and enqueues them into a queue. You use rules to specify which changes are captured. Figure 1-2 shows a capture process capturing LCRs.

|

Note: The capture process does not capture some types of DML and DDL changes, and it does not capture changes made in the |

You can specify Streams tags for redo entries generated by a certain session or by an apply process. These tags then become part of the LCRs captured by a capture process. A tag can be used to determine whether a redo entry or an LCR contains a change that originated in the local database or at a different database, so that you can avoid sending LCRs back to the database where they originated. Tags may be used for other LCR tracking purposes as well. You can also use tags to specify the set of destination databases for each LCR.

See Also:

|

Streams uses queues to stage events for propagation or consumption. You can use Streams to propagate events from one queue to another, and these queues can be in the same database or in different databases. The queue from which the events are propagated is called the source queue, and the queue that receives the events is called the destination queue. There can be a one-to-many, many-to-one, or many-to-many relationship between source and destination queues.

Events that are staged in a queue can be consumed by the Streams apply process or by a user-defined subprogram. If you configure a propagation job to propagate changes from a source queue to a destination queue, then you can use rules to specify which changes are propagated. Figure 1-3 shows propagation from a source queue to a destination queue.

Streams enables you to configure an environment where changes are shared through directed networks. A directed network is one in which propagated events may pass through one or more intermediate databases before arriving at a destination database. The events may or may not be processed at an intermediate database. Using Streams, you can choose which events are propagated to each destination database, and you can specify the route events will traverse on their way to a destination database.

Table 1-4 shows an example directed networks environment. Notice that, in this example, the queue at the intermediate database in Chicago is both a source queue and a destination queue.

| See Also:

Chapter 3, "Streams Staging and Propagation" for more information about staging and propagation |

User applications can enqueue events into a queue explicitly. User applications can format these events as LCRs, which allows an apply process to apply them at a destination database. Alternatively, these events can be formatted as user messages for consumption by another user application, which either explicitly dequeues the events or processes the events with callbacks from an apply process.

Events that were explicitly enqueued into a queue can be explicitly dequeued from the same queue. Figure 1-5 shows explicit enqueue and dequeue of events from the same queue. If a user-procedure called by an apply process explicitly enqueues an event into a queue, then the event is a user-enqueued event and can be explicitly dequeued, even if the event was originally a captured event.

When events are propagated between queues, events that were explicitly enqueued into a source queue can be explicitly dequeued from a destination queue by a user application without any intervention from an apply process. Figure 1-6 shows explicit enqueue of events into a source queue, propagation to a destination queue, and then explicit dequeue of events from the destination queue.

| See Also:

"SYS.AnyData Queues and User Messages" for more information about explicit enqueue and dequeue of events |

An apply process is an Oracle background process that dequeues events from a queue and either applies each event directly to a database object or passes the event as a parameter to a user-defined procedure called an apply handler. These apply handlers can include message handlers, DML handlers, and DDL handlers.

Typically, an apply process applies events to the local database where it is running, but, in a heterogeneous database environment, it can be configured to apply events at a remote non-Oracle database. You use rules to specify which events in the queue are applied. Figure 1-7 shows an apply process processing LCRs and user messages.

An apply process detects conflicts automatically when directly applying LCRs. Typically, a conflict results when the same row in the source database and destination database is changed at approximately the same time.

When conflicts occur, you need a mechanism to ensure that the conflict is resolved in accordance with your business rules. Streams offers a variety of prebuilt conflict resolution handlers. Using these prebuilt handlers, you can define a conflict resolution system for each of your databases that resolves conflicts in accordance with your business rules. If you have a unique situation that Oracle's prebuilt conflict resolution handlers cannot resolve, then you can build your own conflict resolution handlers.

If a conflict is not resolved, or if a handler procedure raises an error, then all events in the transaction that raised the error are saved in an error queue for later analysis and possible reexecution.

Streams enables you to control which information to share and where to share it using rules. A rule is specified as a condition that is similar to the condition in the WHERE clause of a SQL query, and you can group related rules together into rule sets. A rule consists of the following components:

TRUE, FALSE, or NULL (unknown).For example, the following rule may be used in Streams to specify that the schema name that owns a table must be hr and the table name must be departments for the condition to evaluate to TRUE:

:dml.get_object_owner() = 'hr' AND :dml.get_object_name() = 'departments'

In a Streams environment, this rule condition may be used in the following ways:

hr.departments tablehr.departments tablehr.departments tableStreams performs tasks based on rules. These tasks include capturing changes with a capture process, propagating changes with a propagation job, and applying changes with an apply process. You can define rules for these tasks at three different levels:

When you define a table rule, the Streams task is performed on the table you specify. For example, you can define a rule that instructs a capture process to capture changes to the hr.employees table. Given this rule, if a row is inserted into the hr.employees table, then the capture process captures the insert, formats it into an LCR, and enqueues the LCR into a queue.

When you define a schema rule, the Streams task is performed on the database objects in the schema you specify, and any database objects added to the schema in the future. For example, you can define two rules that instruct a propagation job to propagate DML and DDL changes to the hr schema from a source queue to a destination queue. Given these rules, suppose the source queue contains LCRs that define the following changes:

The propagation job propagates these changes from the source queue to the destination queue, because both changes are to database objects in the hr schema.

When you define a global rule, the Streams task is performed for all DML changes or all DDL changes to database objects. If it is a global DML capture rule, then a capture process captures all DML changes to the database objects in the database. If it is a global DML propagation or apply rule, then the Streams task is performed for all DML changes in a queue.

|

Note: The capture process does not capture certain types of changes and changes to certain datatypes in table columns. Also, a capture process never captures changes in the |

See Also:

|

A rule-based transformation is any modification to an event that results when a rule evaluates to TRUE. For example, you can use a rule-based transformation when you want to change the datatype of a particular column in a table for an event at a particular database. In this case, the transformation can be a PL/SQL function that takes as input a SYS.AnyData object containing a logical change record (LCR) with a NUMBER datatype for a column and returns a SYS.AnyData object containing an LCR with a VARCHAR2 datatype for the same column.

A transformation can occur at the following times:

Figure 1-8 shows a rule-based transformation during apply.

| See Also:

"Rule-Based Transformations" for more information about rule-based transformations |

In addition to information sharing between Oracle databases, Streams supports information sharing between Oracle databases and non-Oracle databases. The following sections contain an overview of this support.

If an Oracle database is the source and a non-Oracle database is the destination, then the non-Oracle database destination lacks the following Streams mechanisms:

To share DML changes from an Oracle source database to a non-Oracle destination database, the Oracle database functions as a proxy and carries out some of the steps that would normally be done at the destination database. That is, the events intended for the non-Oracle destination database are dequeued in the Oracle database itself, and an apply process at the Oracle database uses Heterogeneous Services to apply the changes to the non-Oracle database across a network connection through a gateway. Figure 1-9 shows an Oracle databases sharing data with a non-Oracle database.

To capture and propagate changes from a non-Oracle database to an Oracle database, a custom application is required. This application gets the changes made to the non-Oracle database by reading from transaction logs, using triggers, or some other method. The application must assemble and order the transactions and must convert each change into a logical change record (LCR). Then, the application must enqueue the LCRs into a queue in an Oracle database by using the PL/SQL interface, where they can be processed by an apply process. Figure 1-10 shows a non-Oracle databases sharing data with an Oracle database.

Figure 1-11 shows how Streams might be configured to share information within a single database, while Figure 1-12 shows how Streams might be configured to share information between two different databases.

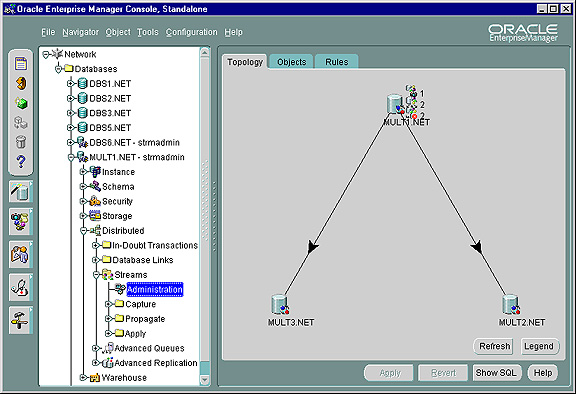

Several tools are available for configuring, administering, and monitoring your Streams environment. Oracle-supplied PL/SQL packages are the primary configuration and management tool, while the Streams tool in Oracle Enterprise Manager provides some configuration, administration, and monitoring capabilities to help you manage your environment. Additionally, Streams data dictionary views keep you informed about your Streams environment.

The following Oracle-supplied PL/SQL packages contain procedures and functions that you can use to configure and manage a Streams environment.

| See Also:

Oracle9i Supplied PL/SQL Packages and Types Reference for more information about these packages |

The DBMS_STREAMS_ADM package provides administrative procedures for adding and removing simple rules for capture, propagation, and apply at the table, schema, and database level. This package also contains procedures for creating queues and for managing Streams metadata, such as data dictionary information. This package is provided as an easy way to complete common tasks in a Streams replication environment. You can use other packages, such as the DBMS_CAPTURE_ADM, DBMS_PROPAGATION_ADM, and DBMS_APPLY_ADM packages, to complete these same tasks, as well as tasks that require additional customization.

The DBMS_CAPTURE_ADM package provides administrative procedures for starting, stopping, and configuring a capture process. The source of the captured changes is the redo logs, and the repository for the captured changes is a queue. This package also provides administrative procedures that prepare database objects at the source database for instantiation at a destination database.

The DBMS_PROPAGATION_ADM package provides administrative procedures for configuring propagation from a source queue to a destination queue.

The DBMS_APPLY_ADM package provides administrative procedures for starting, stopping, and configuring an apply process.

The DBMS_RULE_ADM package provides the administrative interface for creating and managing rules, rule sets, and rule evaluation contexts.

The DBMS_RULE package contains the EVALUATE procedure, which evaluates a rule set. The goal of this procedure is to produce the list of satisfied rules, based on the data.

The DBMS_STREAMS package provides an interface for annotating redo entries generated by a session with a binary tag. This tag affects the behavior of a capture process, a propagation job, or an apply process whose rules include specifications for these binary tags in the LCRs.

Every database in a Streams environment has Streams data dictionary views. These views maintain administrative information about local Streams rules, objects, processes, and jobs. You can use these views to monitor your Streams environment.

See Also:

|

To help configure, administer, and monitor Streams environments, Oracle provides a Streams tool in the Oracle Enterprise Manager Console. You can also use the Streams tool to generate Streams configuration scripts, which you can then modify and run to configure your Streams environment. The Streams tool online help is the primary documentation source for this tool. Figure 1-13 shows the Topology tab in the Streams tool.

Text description of the illustration strms_topology_lrg.jpg

|

Copyright © 2002 Oracle Corporation. All Rights Reserved. |

|