Oracle Solaris 10 8/11 Installation Guide: Live Upgrade and Upgrade Planning (Oracle Solaris 10 8/11 Information Library)

If you create a boot environment from the currently running system, the lucreate command copies the UFS root (/) file system to a ZFS root pool. The copy process might take time, depending on your system.

When you are migrating from a UFS file system, the source boot environment can be a UFS root (/) file system on a disk slice. You cannot create a boot environment on a UFS file system from a source boot environment on a ZFS root pool.

The following commands create a ZFS root pool and then create a new boot environment in that pool from a UFS root (/) file system. A ZFS root pool must exist before the lucreate operation and must be created with slices rather than whole disks to be upgradeable and bootable. The disk must have an SMI label, not an EFI label. For more limitations, see System Requirements and Limitations When Using Live Upgrade.

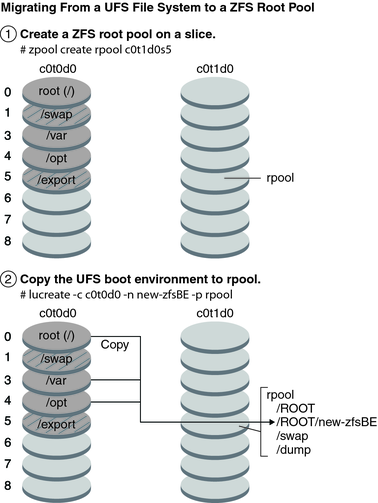

Figure 11-1 shows the zpool command that creates a root pool, rpool, on a separate slice, c0t1d0s5. The disk slice c0t0d0s0 contains a UFS root (/) file system. In the lucreate command, the -c option names the currently running system, c0t0d0, which is a UFS root (/) file system. The -n option assigns the name new-zfsBE to the boot environment to be created. The -p option specifies where to place the new boot environment, rpool. The UFS /export file system and the /swap volume are not copied to the new boot environment.

Figure 11-1 Migrating From a UFS File System to a ZFS Root Pool
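The requirement that the root pool be created on a slice rather than a whole disk can be checked before zpool runs. The following is a minimal sketch, not part of Live Upgrade: the function names is_slice_name and plan_root_pool are hypothetical, the check looks only at the device name (it does not inspect the disk label), and the zpool command is printed rather than executed.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when a device name looks like a disk
# slice (for example c0t1d0s5) and fails when it looks like a whole
# disk (for example c0t1d0). Name-based only; the label is not checked.
is_slice_name() {
    case "$1" in
        c[0-9]*d[0-9]*s[0-9]|c[0-9]*d[0-9]*s1[0-5]) return 0 ;;
        *) return 1 ;;
    esac
}

# Hypothetical dry run: prints the zpool command for a root pool only
# when the device name passes the slice check above.
plan_root_pool() {
    pool=$1
    device=$2
    if is_slice_name "$device"; then
        echo "zpool create $pool $device"
    else
        echo "refusing: $device looks like a whole disk, not a slice" >&2
        return 1
    fi
}

plan_root_pool rpool c0t1d0s5   # prints: zpool create rpool c0t1d0s5
```

Printing the command keeps the sketch safe to run on any system. Whether the disk carries an SMI label still has to be verified separately.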

To specify the creation of a separate dataset for /var in an alternate boot environment, use the -D option of the lucreate command.

lucreate -c c0t0d0 -n new-zfsBE -p rpool -D /var

The following diagram shows the datasets created in rpool as a part of this sample lucreate command.

Figure 11-2 Migrating From a UFS File System to a ZFS Root Pool

If you do not specify -D /var with the lucreate command, no separate dataset is created for /var in the alternate boot environment even if /var is a separate file system in the source boot environment.
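The effect of the -D /var option on the command line can be sketched with a small wrapper. This is an illustrative dry run only: the function name build_lucreate and its yes/no fourth argument are assumptions made for this example, and the command is printed, not run.

```shell
#!/bin/sh
# Hypothetical helper: prints an lucreate command line for migrating a
# UFS root (/) file system into a ZFS root pool. The fourth argument
# ("yes" or anything else) is an assumption of this sketch and decides
# whether a separate /var dataset is requested with -D /var.
build_lucreate() {
    current_be=$1
    new_be=$2
    pool=$3
    separate_var=$4
    cmd="lucreate -c $current_be -n $new_be -p $pool"
    if [ "$separate_var" = "yes" ]; then
        cmd="$cmd -D /var"
    fi
    echo "$cmd"
}

build_lucreate c0t0d0 new-zfsBE rpool yes
# prints: lucreate -c c0t0d0 -n new-zfsBE -p rpool -D /var
```

Passing any value other than yes as the fourth argument yields the command without -D /var, which matches the case where /var stays inside the root dataset.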

Example 11-1 Migrating From a UFS root (/) File System to ZFS Root Pool

This example shows the same commands as in Figure 11-1. The commands create a new root pool, rpool, and create a new boot environment in the pool from a UFS root (/) file system. In this example, the zfs list command shows the ZFS root pool created by the zpool command. The next zfs list command shows the datasets created by the lucreate command.

# zpool create rpool c0t1d0s5
# zfs list
NAME                  USED   AVAIL  REFER  MOUNTPOINT
rpool                 5.97G  23.3G  31K    /rpool

# lucreate -c c0t0d0 -n new-zfsBE -p rpool
# zfs list
NAME                  USED   AVAIL  REFER  MOUNTPOINT
rpool                 5.97G  23.3G  31K    /rpool
rpool/ROOT            4.42G  23.3G  31K    legacy
rpool/ROOT/new-zfsBE  4.42G  23.3G  4.42G  /
rpool/dump            1.03G  24.3G  16K    -
rpool/swap            530M   23.8G  16K    -

The following zfs list command shows the separate dataset created for /var by using the -D /var option in the lucreate command.

# lucreate -c c0t0d0 -n new-zfsBE -p rpool -D /var
# zfs list
NAME                      USED   AVAIL  REFER  MOUNTPOINT
rpool                     5.97G  23.3G  31K    /rpool
rpool/ROOT                4.42G  23.3G  31K    legacy
rpool/ROOT/new-zfsBE      4.42G  23.3G  4.42G  /
rpool/ROOT/new-zfsBE/var  248M   23.3G  248M   /var
rpool/dump                1.03G  24.3G  16K    -
rpool/swap                530M   23.8G  16K    -

The new boot environment, new-zfsBE, is ready to be upgraded and activated.
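Before upgrading, it can be useful to confirm that the expected datasets appear in the zfs list output. The following is a rough sketch under stated assumptions: has_dataset is a hypothetical helper, it reads zfs list style text on standard input, and the sample text fed to it here is the listing from this example rather than output from a live system.

```shell
#!/bin/sh
# Hypothetical helper: reads `zfs list` style output on standard input
# and succeeds when the NAME column contains the requested dataset.
has_dataset() {
    awk -v want="$1" '$1 == want { found = 1 } END { exit !found }'
}

# Sample input copied from the example listing. On a live system the
# check would instead be: zfs list | has_dataset rpool/ROOT/new-zfsBE/var
has_dataset rpool/ROOT/new-zfsBE/var <<'EOF' && echo "separate /var dataset present"
NAME                      USED   AVAIL  REFER  MOUNTPOINT
rpool                     5.97G  23.3G  31K    /rpool
rpool/ROOT                4.42G  23.3G  31K    legacy
rpool/ROOT/new-zfsBE      4.42G  23.3G  4.42G  /
rpool/ROOT/new-zfsBE/var  248M   23.3G  248M   /var
rpool/dump                1.03G  24.3G  16K    -
rpool/swap                530M   23.8G  16K    -
EOF
```

The comparison is an exact match on the first column, so rpool/ROOT/new-zfsBE does not accidentally satisfy a check for rpool/ROOT/new-zfsBE/var.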

You can migrate your UFS file system if your system has Solaris Volume Manager (SVM) volumes. To create a UFS boot environment from an existing SVM configuration, you create a new boot environment from your currently running system. Then create the ZFS boot environment from the new UFS boot environment.

Overview of Solaris Volume Manager (SVM). ZFS uses the concept of storage pools to manage physical storage. Historically, file systems were constructed on top of a single physical device. To address multiple devices and provide for data redundancy, the concept of a volume manager was introduced to provide the image of a single device. Thus, file systems would not have to be modified to take advantage of multiple devices. This design added another layer of complexity. This complexity ultimately prevented certain file system advances because the file system had no control over the physical placement of data on the virtualized volumes.

ZFS storage pools replace SVM. ZFS eliminates volume management altogether. Instead of forcing you to create virtualized volumes, ZFS aggregates devices into a storage pool. The storage pool describes the physical characteristics of the storage, such as device layout and data redundancy, and acts as an arbitrary data store from which file systems can be created. File systems are no longer constrained to individual devices, so they can share space with all other file systems in the pool. You no longer need to predetermine the size of a file system, because file systems grow automatically within the space allocated to the storage pool. When new storage is added, all file systems within the pool can immediately use the additional space without additional work. In many ways, the storage pool acts like a virtual memory system. When a memory DIMM is added to a system, the operating system does not force you to run commands to configure the memory and assign it to individual processes. All processes on the system automatically use the additional memory.

Example 11-2 Migrating From a UFS root (/) File System With SVM Volumes to ZFS Root Pool

When migrating a system with SVM volumes, the SVM volumes are ignored. You can set up mirrors within the root pool as in the following example.

In this example, the first lucreate command, with the -m option, creates a new UFS boot environment, ufsBE, from the currently running system. The disk slice c1t0d0s0 contains a UFS root (/) file system configured with SVM volumes. The zpool command creates a root pool, rpool, as a mirror of the slices c1t0d0s0 and c2t1d0s0. In the second lucreate command, the -n option assigns the name c0t0d0s0 to the boot environment to be created. The -s option identifies the source boot environment, ufsBE, which contains the UFS root (/) file system. The -p option specifies where to place the new boot environment, rpool.

# lucreate -n ufsBE -m /:/dev/md/dsk/d104:ufs
# zpool create rpool mirror c1t0d0s0 c2t1d0s0
# lucreate -n c0t0d0s0 -s ufsBE -p rpool

The boot environment, c0t0d0s0, is ready to be upgraded and activated.
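The two-stage migration (UFS boot environment first, then ZFS boot environment) can be laid out as a dry-run plan. The sketch below is illustrative only: plan_svm_migration is a hypothetical function, its argument order is an assumption of this example, and it assumes the pool name given to zpool create is the same name passed to the -p option. The commands are printed, not executed.

```shell
#!/bin/sh
# Hypothetical dry run: prints the three commands of the migration in
# order. Arguments (an assumption of this sketch):
#   $1 UFS boot environment name   $2 SVM metadevice for root (/)
#   $3 root pool name              $4, $5 slices to mirror
#   $6 ZFS boot environment name
plan_svm_migration() {
    ufs_be=$1
    md_dev=$2
    pool=$3
    slice_a=$4
    slice_b=$5
    zfs_be=$6
    # Stage 1: copy the running system into a new UFS boot environment.
    echo "lucreate -n $ufs_be -m /:$md_dev:ufs"
    # Stage 2: create the mirrored root pool on two slices.
    echo "zpool create $pool mirror $slice_a $slice_b"
    # Stage 3: create the ZFS boot environment from the UFS one.
    echo "lucreate -n $zfs_be -s $ufs_be -p $pool"
}

plan_svm_migration ufsBE /dev/md/dsk/d104 rpool c1t0d0s0 c2t1d0s0 c0t0d0s0
```

Reviewing the printed plan before running anything makes it easier to confirm that the same pool name is used for both the zpool and lucreate steps.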