Oracle® Big Data Appliance Software User's Guide Release 1 (1.1) Part Number E36162-04


This chapter provides information about the software and services installed on Oracle Big Data Appliance.

Cloudera Manager is installed on Oracle Big Data Appliance to help you with Cloudera's Distribution including Apache Hadoop (CDH) operations. Cloudera Manager provides a single administrative interface to all Oracle Big Data Appliance servers configured as part of the Hadoop cluster.

Cloudera Manager simplifies the performance of these administrative tasks:

Monitor jobs and services

Start and stop services

Manage security and Kerberos credentials

Monitor user activity

Monitor the health of the system

Monitor performance metrics

Track hardware use (disk, CPU, and RAM)

Cloudera Manager runs on the Cloudera Manager node (node02) and is available on port 7180.

To use Cloudera Manager:

Open a browser and enter a URL like the following:

http://bda1node02.example.com:7180

In this example, bda1 is the name of the appliance, node02 is the name of the server, example.com is the domain, and 7180 is the default port number for Cloudera Manager.

Log in with a user name and password for Cloudera Manager. Only a user with administrative privileges can change the settings. Other Cloudera Manager users can view the status of Oracle Big Data Appliance.

See Also:

Cloudera Manager User Guide at http://ccp.cloudera.com/display/ENT/Cloudera+Manager+User+Guide, or click Help on the Cloudera Manager Help menu.

In Cloudera Manager, you can choose any of the following pages from the menu bar across the top of the display:

Services: Monitors the status and health of services running on Oracle Big Data Appliance. Click the name of a service to drill down to additional information.

Hosts: Monitors the health, disk usage, load, physical memory, swap space, and other statistics for all servers.

Activities: Monitors all MapReduce jobs running in the selected time period.

Logs: Collects historical information about the systems and services. You can search for a particular phrase for a selected server, service, and time period. You can also select the minimum severity level of the logged messages included in the search: TRACE, DEBUG, INFO, WARN, ERROR, or FATAL.

Events: Records a change in state and other noteworthy occurrences. You can search for one or more keywords for a selected server, service, and time period. You can also select the event type: Audit Event, Activity Event, Health Check, or Log Message.

Reports: Generates reports on demand for disk and MapReduce use.

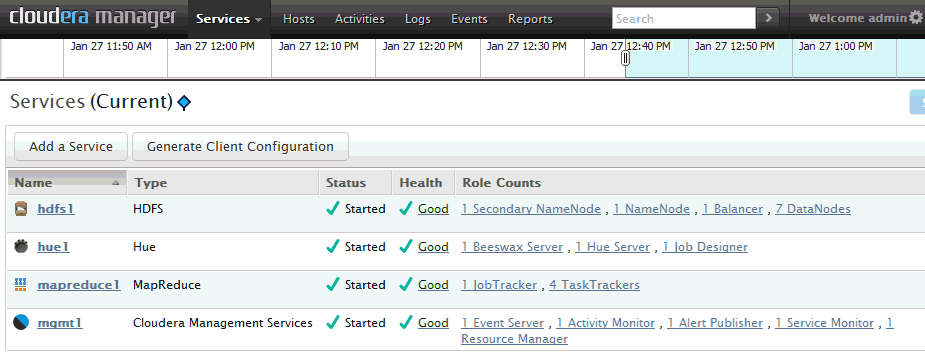

Figure 2-1 shows the opening display of Cloudera Manager, which is the Services page.

Figure 2-1 Cloudera Manager Services Page

As a Cloudera Manager administrator, you can change various properties for monitoring the health and use of Oracle Big Data Appliance, add users, and set up Kerberos security.

To access Cloudera Manager Administration:

Log in to Cloudera Manager with administrative privileges.

Click Welcome admin at the top right of the page.

If you need help from Oracle Support to troubleshoot CDH issues, then you should first collect diagnostic information using Cloudera Manager.

To collect diagnostic information about CDH:

Log in to Cloudera Manager with administrative privileges.

From the Help menu, click Send Diagnostic Data.

Verify that Send Diagnostic Data to Cloudera Automatically is not selected. Keep the other default settings.

Click Collect Host Statistics Globally.

Wait while all statistics are collected on all nodes.

Click Download Result Data and save the ZIP file with the default name. The file name identifies your CDH license.

Go to My Oracle Support at http://support.oracle.com.

Open a Service Request (SR) if you have not already done so.

Upload the ZIP file into the SR. If the file is too large, then upload it to ftp.oracle.com, as described in the next procedure.

To upload the diagnostics to ftp.oracle.com:

Open an FTP client and connect to ftp.oracle.com.

You can use an FTP client such as WinSCP to upload the ZIP file. See Example 2-1 if you are using a command-line FTP client.

Log in as user anonymous and leave the password field blank.

In the bda/incoming directory, create a directory using the SR number for the name, in the format SRnumber. The resulting directory structure looks like this:

bda/incoming/SRnumber

Set the binary option to prevent corruption of binary data.

Upload the diagnostics ZIP file to the SRnumber directory.

Update the SR with the full path and file name.

Example 2-1 shows the commands to upload the diagnostics using the Windows FTP command interface.

Example 2-1 Uploading Diagnostics Using Windows FTP

ftp> open ftp.oracle.com

Connected to bigip-ftp.oracle.com.

220-***********************************************************************

220-Oracle FTP Server

. . .

220-****************************************************************************

220

User (bigip-ftp.oracle.com:(none)): anonymous

331 Please specify the password.

Password:

230 Login successful.

ftp> cd bda/incoming

250 Directory successfully changed.

ftp> mkdir SR12345

257 "/bda/incoming/SR12345" created

ftp> cd SR12345

250 Directory successfully changed.

ftp> bin

200 Switching to Binary mode.

ftp> put D:\Downloads\3609df...c1.default.20122505-15-27.host-statistics.zip

200 PORT command successful. Consider using PASV.

150 Ok to send data.

226 File receive OK.

ftp: 706755 bytes sent in 1.97Seconds 358.58Kbytes/sec.
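The same upload can also be scripted. The following is a minimal sketch in Python using the standard ftplib module, not an Oracle-supplied tool. The function name upload_diagnostics and the sample SR number and file name in the comments are assumptions made for illustration.

```python
import ftplib
import os


def upload_diagnostics(ftp, sr_number, zip_path):
    """Upload a diagnostics ZIP to bda/incoming/SR<number>; return the remote path.

    `ftp` is a connected, logged-in ftplib.FTP object (or any object that
    offers the same cwd, mkd, and storbinary methods).
    """
    sr_dir = f"SR{sr_number}"
    ftp.cwd("bda/incoming")
    ftp.mkd(sr_dir)
    ftp.cwd(sr_dir)
    file_name = os.path.basename(zip_path)
    with open(zip_path, "rb") as handle:
        # storbinary switches the connection to binary mode before sending,
        # which corresponds to the "bin" command in the manual session.
        ftp.storbinary(f"STOR {file_name}", handle)
    return f"/bda/incoming/{sr_dir}/{file_name}"


# Example use (hypothetical SR number and file name):
#
#     with ftplib.FTP("ftp.oracle.com") as ftp:
#         ftp.login(user="anonymous", passwd="")
#         print(upload_diagnostics(ftp, "12345", "host-statistics.zip"))
```

The returned string is the full path and file name that you record in the SR.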

Users can monitor MapReduce jobs without providing a Cloudera Manager user name and password.

Hadoop Map/Reduce Administration monitors the JobTracker, which runs on port 50030 of the JobTracker node (node03) on Oracle Big Data Appliance.

To monitor the JobTracker:

Open a browser and enter a URL like the following:

http://bda1node03.example.com:50030

In this example, bda1 is the name of the appliance, node03 is the name of the server, and 50030 is the default port number for Hadoop Map/Reduce Administration.

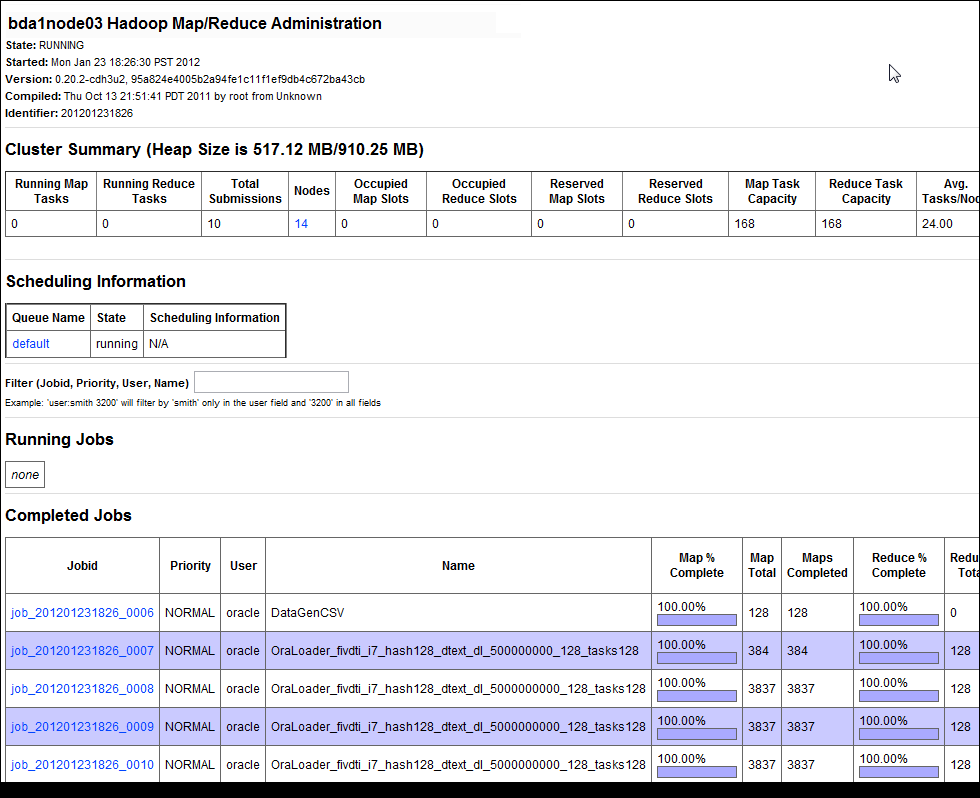

Figure 2-2 shows part of a Hadoop Map/Reduce Administration display.

Figure 2-2 Hadoop Map/Reduce Administration

The Task Tracker Status interface monitors the TaskTracker on a single node. It is available on port 50060 of all noncritical nodes (node04 to node18) in Oracle Big Data Appliance.

To monitor a TaskTracker:

Open a browser and enter the URL for a particular node like the following:

http://bda1node13.example.com:50060

In this example, bda1 is the name of the rack, node13 is the name of the server, and 50060 is the default port number for the Task Tracker Status interface.

Figure 2-3 shows the Task Tracker Status interface.
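The three monitoring URLs described so far follow one pattern: the appliance name, the word node with a two-digit server number, the domain, and the port. The following Python sketch shows that pattern under those assumptions. The function name console_url and the dictionary keys are hypothetical labels, not names used by Cloudera Manager or Hadoop.

```python
# Default ports named in this chapter for the browser-based tools.
WEB_CONSOLE_PORTS = {
    "cloudera_manager": 7180,   # Cloudera Manager node (node02)
    "jobtracker": 50030,        # JobTracker node (node03)
    "tasktracker": 50060,       # any node that runs a TaskTracker
}


def console_url(appliance, node_number, domain, console):
    """Build a monitoring URL such as http://bda1node02.example.com:7180."""
    port = WEB_CONSOLE_PORTS[console]
    return f"http://{appliance}node{node_number:02d}.{domain}:{port}"


print(console_url("bda1", 2, "example.com", "cloudera_manager"))
print(console_url("bda1", 13, "example.com", "tasktracker"))
```

If your site changed a default port, then adjust the dictionary to match.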

Oracle Big Data Appliance supports full local access to all commands and utilities in Cloudera's Distribution including Apache Hadoop (CDH).

You can use a browser on any computer that has access to the client network of Oracle Big Data Appliance to access Cloudera Manager, Hadoop Map/Reduce Administration, the Hadoop Task Tracker interface, and other browser-based Hadoop tools.

To issue Hadoop commands remotely, however, you must connect from a system configured as a CDH client with access to the Oracle Big Data Appliance client network. This section explains how to set up a computer so that you can access HDFS and submit MapReduce jobs on Oracle Big Data Appliance.

To follow these procedures, you must have these access privileges:

Root access to the client system

Login access to Cloudera Manager

If you do not have these access privileges, then contact your system administrator for help.

The system that you use to access Oracle Big Data Appliance must run an operating system that Cloudera supports for CDH3. For the list of supported operating systems, see "Before You Install CDH3 on a Cluster" in the Cloudera CDH3 Installation Guide at

https://ccp.cloudera.com/display/CDHDOC/Before+You+Install+CDH3+on+a+Cluster

To install the CDH client software:

Follow the installation instructions for your operating system provided in the Cloudera CDH3 Installation Guide at

https://ccp.cloudera.com/display/CDHDOC/CDH3+Installation

When you are done installing the Hadoop core and native packages, the system can act as a basic CDH client.

Note:

Be sure to install CDH3 Update 4 (CDH3u4) or a later version.

To provide support for other components, such as Hive, Pig, or Oozie, see the component installation instructions.

After installing CDH, you must configure it for use with Oracle Big Data Appliance.

To configure the Hadoop client:

Open a browser on your client system and connect to Cloudera Manager. It runs on the Cloudera Manager node (node02) and listens on port 7180, as shown in this example:

http://bda1node02.example.com:7180

Log in as admin.

Cloudera Manager opens on the Services tab. Click the Generate Client Configuration button.

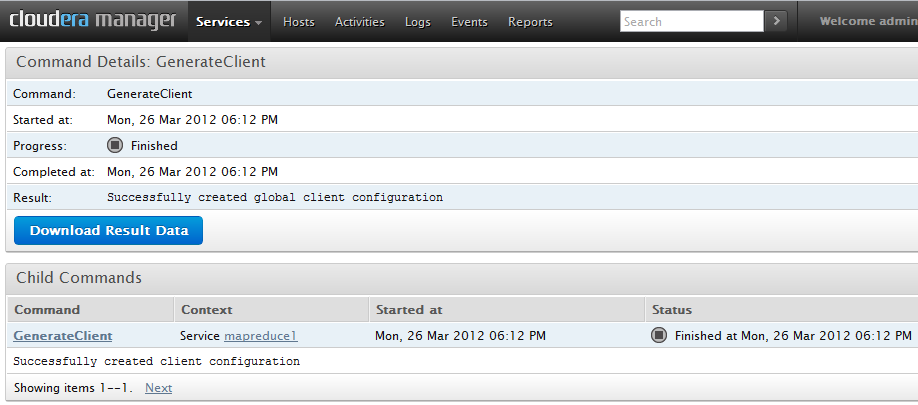

On the Command Details page (shown in Figure 2-4), click Download Result Data to download global-clientconfig.zip.

Unzip global-clientconfig.zip into the /tmp directory on the client system. It creates a hadoop-conf directory containing these files:

core-site.xml

hadoop-env.sh

hdfs-site.xml

log4j.properties

mapred-site.xml

README.txt

ssl-client.xml.example

Open hadoop-env.sh in a text editor and change JAVA_HOME to the correct location on your system:

export JAVA_HOME=full_directory_path

Delete the number sign (#) to uncomment the line, and then save the file.

Copy the configuration files to the Hadoop conf directory:

cd /tmp/hadoop-conf

cp * /usr/lib/hadoop/conf/

Validate the installation by changing to the mapred user and submitting a MapReduce job, such as the one shown here:

su mapred

hadoop jar /usr/lib/hadoop/hadoop-examples.jar pi 10 1000000

Figure 2-4 shows the download page for the client configuration.

Figure 2-4 Cloudera Manager Command Details: GenerateClient Page
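If you configure several client systems, then the JAVA_HOME edit in the preceding procedure can be scripted instead of made by hand. The following Python sketch works on the text of hadoop-env.sh. It assumes that the file contains at most one JAVA_HOME line, commented out or not. The function name set_java_home and the sample paths are illustrative only.

```python
import re

# Matches an "export JAVA_HOME=..." line, with or without a leading "#".
_JAVA_HOME_LINE = re.compile(r"^[ \t]*#?[ \t]*export[ \t]+JAVA_HOME=.*$", re.MULTILINE)


def set_java_home(env_text, java_home):
    """Return the hadoop-env.sh text with an uncommented JAVA_HOME setting."""
    new_line = f"export JAVA_HOME={java_home}"
    if _JAVA_HOME_LINE.search(env_text):
        # Replace the first matching line; a function is used as the
        # replacement so that backslashes in the path are taken literally.
        return _JAVA_HOME_LINE.sub(lambda _match: new_line, env_text, count=1)
    # No JAVA_HOME line was found, so append one.
    return env_text.rstrip("\n") + "\n" + new_line + "\n"


sample = "# The java implementation to use.\n# export JAVA_HOME=/usr/lib/j2sdk1.6-sun\n"
print(set_java_home(sample, "/usr/java/default"))
```

Read /tmp/hadoop-conf/hadoop-env.sh, pass its contents to the function, and write the result back before you copy the configuration files.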

Every open-source package installed on Oracle Big Data Appliance creates one or more users and groups. Most of these users do not have login privileges, shells, or home directories. They are used by daemons and are not intended as an interface for individual users. For example, Hadoop operates as the hdfs user, MapReduce operates as mapred, and Hive operates as hive. Table 2-1 identifies the operating system users and groups that are created automatically during installation of Oracle Big Data Appliance software for use by CDH components and other software packages.

You can use the oracle identity to run Hadoop and Hive jobs immediately after the Oracle Big Data Appliance software is installed. This user account has login privileges, a shell, and a home directory. Oracle NoSQL Database and Oracle Data Integrator run as the oracle user. Its primary group is oinstall.

Note:

Do not delete or modify the users created during installation, because they are required for the software to operate.

When creating additional user accounts, define them as follows:

Table 2-1 Operating System Users and Groups

| User Name | Group | Used By | Login Rights |
|---|---|---|---|
| | | Flume parent and nodes | No |
| | | HBase processes | No |
| | | | No |
| | | | No |
| | | Hue processes | No |
| | | JobTracker, TaskTracker, Hive Thrift daemon | Yes |
| | | | Yes |
| | | Oozie server | No |
| | | Oracle NoSQL Database, Oracle Loader for Hadoop, Oracle Data Integrator, and the Oracle DBA | Yes |
| | | Puppet parent (puppet nodes run as root) | No |
| | | Sqoop metastore | No |
| | -- | Auto Service Request | No |
| | | ZooKeeper processes | No |

CDH provides an optional trash facility, so that when a user deletes a file, it is moved to a trash directory for a set period of time instead of being deleted immediately from the system.

When the trash facility is enabled, you can easily restore files that were previously deleted with the Hadoop rm file-system command. Files deleted by other programs are not copied to the trash directory.

To restore a file from the trash directory:

Check that the deleted file is in the trash. The following example checks for files deleted by the oracle user:

$ hadoop fs -ls .Trash/Current/user/oracle

Found 1 items

-rw-r--r-- 3 oracle hadoop 242510990 2012-08-31 11:20 /user/oracle/.Trash/Current/user/oracle/ontime_s.dat

Move or copy the file to its previous location. The following example moves ontime_s.dat from the trash to the HDFS /user/oracle directory.

$ hadoop fs -mv .Trash/Current/user/oracle/ontime_s.dat /user/oracle/ontime_s.dat
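In the listing above, the trash directory reproduces the original absolute path of the file beneath .Trash/Current. The following Python sketch relies on that observation to work out where each listed file came from and to build the matching hadoop fs -mv command. It is an illustration only: the function names are hypothetical, and the parsing assumes that paths contain no spaces.

```python
TRASH_MARKER = "/.Trash/Current"


def listed_paths(ls_output):
    """Extract the path column from `hadoop fs -ls` output."""
    paths = []
    for line in ls_output.splitlines():
        fields = line.split()
        # Entry lines start with a permission string such as -rw-r--r--
        # or drwxr-xr-x; the "Found N items" header is skipped.
        if len(fields) >= 8 and fields[0][0] in "-d":
            paths.append(fields[-1])
    return paths


def restore_command(trash_path):
    """Return the command that moves a file from the trash to its old location."""
    _, marker, original = trash_path.partition(TRASH_MARKER)
    if not marker or not original.startswith("/"):
        raise ValueError(f"not a trash path: {trash_path}")
    return ["hadoop", "fs", "-mv", trash_path, original]


listing = (
    "Found 1 items\n"
    "-rw-r--r-- 3 oracle hadoop 242510990 2012-08-31 11:20 "
    "/user/oracle/.Trash/Current/user/oracle/ontime_s.dat\n"
)
for path in listed_paths(listing):
    print(" ".join(restore_command(path)))
```

The sketch only prints the commands. Review them before you run them on the cluster.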

In this release of Oracle Big Data Appliance, the trash facility is disabled by default. Complete the following procedure to enable it.

To enable the trash facility:

On each node where you want to enable the trash facility, add the following property description to /etc/hadoop/conf/hdfs-site.xml:

<property>

<name>fs.trash.interval</name>

<value>1</value>

</property>

Note:

You can edit the hdfs-site.xml file once and then use the dcli utility to copy the file to the other nodes. See the Oracle Big Data Appliance Owner's Guide.

Change the trash interval as desired (optional). See "Changing the Trash Interval".

Restart the hdfs1 service:

Open Cloudera Manager. See "Managing CDH Operations".

Locate hdfs1 on the Cloudera Manager Services page.

Expand the hdfs1 Actions menu and choose Restart.

Verify that trash collection is working properly:

Copy a file from the local file system to HDFS. This example copies a data file named ontime_s.dat to the HDFS /user/oracle directory:

$ hadoop fs -put ontime_s.dat /user/oracle

Delete the file from HDFS:

$ hadoop fs -rm ontime_s.dat

Moved to trash: hdfs://bda1node02.example.com/user/oracle/ontime_s.dat

Locate the trash directory in your home Hadoop directory, such as /user/oracle/.Trash. The directory is created when you delete a file for the first time after trash is enabled.

$ hadoop fs -ls .Trash

Found 1 items

drwxr-xr-x - oracle hadoop 0 2012-08-31 11:20 /user/oracle/.Trash/Current

Check that the deleted file is in the trash. The following command lists files deleted by the oracle user:

$ hadoop fs -ls .Trash/Current/user/oracle

Found 1 items

-rw-r--r-- 3 oracle hadoop 242510990 2012-08-31 11:20 /user/oracle/.Trash/Current/user/oracle/ontime_s.dat

If trash collection is not working on a particular node, then verify that fs.trash.interval is set in the /etc/hadoop/conf/hdfs-site.xml file on that node, and then restart the hdfs1 service.

The trash interval is the minimum number of minutes that a file remains in the trash directory before being deleted permanently from the system. The default value is 1440 minutes (24 hours).
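Because the interval is expressed in minutes, it is easy to enter the wrong value when you think in hours or days. The following Python sketch converts a number of hours to minutes and produces the corresponding fs.trash.interval property element. The function name trash_interval_property is an assumption made for illustration.

```python
import xml.etree.ElementTree as ET


def trash_interval_property(hours):
    """Return a <property> element that sets fs.trash.interval, in minutes."""
    minutes = round(hours * 60)
    if minutes < 0:
        raise ValueError("the trash interval cannot be negative")
    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = "fs.trash.interval"
    ET.SubElement(prop, "value").text = str(minutes)
    return ET.tostring(prop, encoding="unicode")


# 24 hours corresponds to the 1440-minute default mentioned above.
print(trash_interval_property(24))
```

The sketch writes the element on a single line. The layout does not affect how Hadoop reads the property.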

To change the trash interval:

Open Cloudera Manager. See "Managing CDH Operations".

On the Services page under Name, click hdfs1.

On the hdfs1 page, click the Configuration subtab.

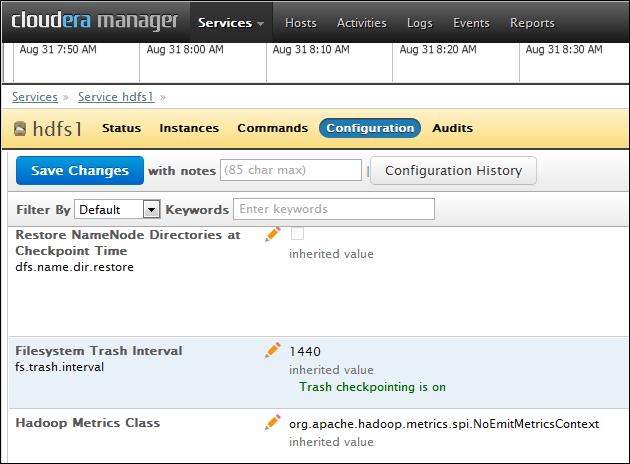

Search for or scroll down to the Filesystem Trash Interval property under NameNode Settings. See Figure 2-5.

Enter a new interval (in minutes) in the value field. A value of 0 disables the trash facility.

Click Save Changes.

Expand the Actions menu at the top of the page and choose Restart.

Figure 2-5 shows the Filesystem Trash Interval property in Cloudera Manager.

Figure 2-5 HDFS Property Settings in Cloudera Manager

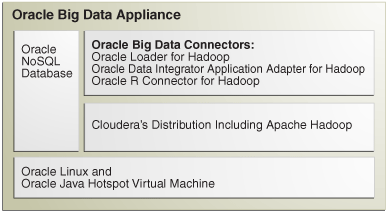

The following sections identify the software installed on Oracle Big Data Appliance and where it runs in the rack. Some components operate with Oracle Database 11.2.0.2 and later releases.

These software components are installed on all 18 servers in Oracle Big Data Appliance Rack. Oracle Linux, required drivers, firmware, and hardware verification utilities are factory installed. All other software is installed on site using the Mammoth Utility.

Note:

You do not need to install software on Oracle Big Data Appliance. Doing so may result in a loss of warranty and support. See the Oracle Big Data Appliance Owner's Guide.

Installed software:

Java HotSpot Virtual Machine 6 Update 29

Cloudera's Distribution including Apache Hadoop Release 3 Update 4 (CDH)

Oracle R Distribution 2.13.2

Oracle Database Instant Client 11.2.0.3

MySQL Database 5.5.17 Advanced Edition

See Also:

Oracle Big Data Appliance Owner's Guide for information about the Mammoth Utility

Figure 2-6 shows the relationships among the major components.

Figure 2-6 Major Software Components of Oracle Big Data Appliance

Each server has 12 disks. The critical information is stored on disks 1 and 2.

Table 2-2 describes how the disks are partitioned.


You can use Cloudera Manager to monitor the services on Oracle Big Data Appliance.

To monitor the services:

In Cloudera Manager, click the Services tab at the top of the page to display the Services page.

Click the name of a service to see its detail pages. The service opens on the Status page.

Click the link to the page that you want to view: Status, Instances, Commands, Configuration, or Audits.

Table 2-3 describes the parent services and those that run without child services. A parent service controls one or more child services.

Services that are always on are required for normal operation. Services that you can switch on and off are optional.

| Service | Role | Description | Default Status |
|---|---|---|---|
| | -- | HBase database | OFF |
| hdfs1 | | Tracks all files stored in the cluster. | Always ON |
| hdfs1 | | Tracks information for the NameNode | Always ON |
| hdfs1 | | Periodically issues the balancer command; although the balancer service is enabled, it does not run all the time | Always ON |
| hive | -- | | Always ON |
| hue1 | Hue Server | GUI for HDFS, MapReduce, and Hive, with shells for Pig, Flume, and HBase | Always ON |
| mapreduce1 | | | Always ON |
| mgmt1 | all | | Always ON |
| MySQL | -- | | ON |
| ODI Agent | -- | Oracle Data Integrator agent, installed on same node as MySQL Database | ON |
| | -- | Workflow and coordination service for Hadoop | OFF |
| ZooKeeper | -- | | OFF |

Table 2-4 describes the child services. A child service is controlled by a parent service.

| Service | Role | Description | Default Status |
|---|---|---|---|
| | -- | Hosts data and processes requests for HBase | OFF |
| hdfs1 | | Stores data in HDFS | Always ON |
| mapreduce1 | | | Always ON |
| NoSQL DB Storage Node | -- | | ON |
| nosqldb | -- | Supports a web console or command-line interface for administering Oracle NoSQL Database | ON |

All services are installed on all servers, but individual services run only on designated nodes in the Hadoop cluster.

Table 2-5 identifies the nodes where the services run on the primary rack. Services that run on all nodes run on all racks of a multirack installation.

Table 2-5 Software Service Locations

| Service | Node Name | Initial Node Position |
|---|---|---|
| | HDFS node | Node01 |
| | JobTracker node | Node03 |
| | All nodes | All nodes |
| | Cloudera Manager node | Node02 |
| | All nodes | All nodes |
| | JobTracker node | Node03 |
| | JobTracker node | Node03 |
| | JobTracker node | Node03 |
| MySQL Backup (Footnote 1) | JobTracker node | Node03 |
| MySQL Primary Server (Footnote 1) | Cloudera Manager node | Node02 |
| | HDFS node | Node01 |
| Oracle Data Integrator Agent (Footnote 2) | JobTracker node | Node03 |
| Oracle NoSQL Database Administration (Footnote 2) | Cloudera Manager node | Node02 |
| Oracle NoSQL Database Server Processes (Footnote 2) | All nodes | All nodes |
| | All nodes | All nodes |
| | HDFS Node | Node01 |
| | Cloudera Manager node | Node02 |
| | All noncritical nodes | Node04 to Node18 |

Footnote 1 If the software was upgraded from version 1.0, then MySQL Backup remains on node02 and MySQL Primary Server remains on node03.

Footnote 2 Started only if requested in the Oracle Big Data Appliance Configuration Worksheets

The NameNode is the most critical process because it keeps track of the location of all data. Without a healthy NameNode, the entire cluster fails. This vulnerability is intrinsic to Apache Hadoop (v0.20.2 and earlier).

Oracle Big Data Appliance protects against catastrophic failure by maintaining four copies of the NameNode logs:

Node01: The working copy of the NameNode snapshot and update logs is stored in /opt/hadoop/dfs/ and is automatically mirrored in a local Linux partition.

Node02: A backup copy of the logs is stored in /opt/shareddir/ and is also automatically mirrored in a local Linux partition.

A backup copy outside of Oracle Big Data Appliance can be configured during the software installation.

The effects of a server failure vary depending on the server's function within the CDH cluster. Sun Fire servers are more robust than commodity hardware, so you should experience fewer hardware failures. This section highlights the most important services that run on the various servers of the primary rack. For a full list, see "Service Locations".

Critical nodes are required for the cluster to operate normally and provide all services to users. In contrast, the cluster continues to operate with no loss of service when a noncritical node fails.

The critical services are installed initially on the first four nodes of the primary rack. Table 2-6 identifies the critical services that run on these nodes. The remaining nodes (initially node05 to node18) only run DataNode and TaskTracker services. If a hardware failure occurs on one of the critical nodes, then the services can be moved to another, noncritical server. For example, if node02 fails, its critical services might be moved to node05. For this reason, Table 2-6 provides names to identify the nodes providing critical services.

The HDFS node (node01) is critically important because it is where the NameNode runs. If this server fails, the effect is downtime for the entire cluster, because the NameNode keeps track of the data locations. However, there are always four copies of the NameNode metadata.

The current state and update logs are written to these locations:

HDFS node (node01): /opt/hadoop/dfs/ on Disk 1 is the working copy with a Linux mirrored partition on Disk 2 providing a second copy.

Backup node (node04): /opt/shareddir/ on Disk 1 is the third copy, which is also duplicated on a mirrored partition on Disk 2. These copies can be written to an external NFS directory instead of the backup node.

The Secondary NameNode runs on the Cloudera Manager node (node02). There are backups for this data like those for the NameNode. If the node fails, then these services are also disrupted:

Cloudera Manager: This tool provides central management for the entire CDH cluster. Without this tool, you can still monitor activities using the utilities described in "Using Hadoop Monitoring Utilities".

MySQL Master Database: Cloudera Manager, Oracle Data Integrator, Hive, and Oozie use MySQL Database. The data is replicated automatically, but you cannot access it when the master database server is down.

Oracle NoSQL Database KV Administration: Oracle NoSQL Database is an optional component of Oracle Big Data Appliance, so the extent of a disruption due to a node failure depends on whether you are using it and how critical it is to your applications.

The JobTracker assigns MapReduce tasks to specific nodes in the CDH cluster. Without the JobTracker node (node03), this critical function is not performed. If the node fails, then these services are also disrupted:

Oracle Data Integrator: This service supports Oracle Data Integrator Application Adapter for Hadoop. You cannot use this connector when the JobTracker node is down.

Hue: Cloudera Manager uses Hadoop User Experience (Hue), and so Cloudera Manager is unavailable when Hue is unavailable.

MySQL Backup Database: MySQL Database continues to run, although there is no backup of the master database.

The backup node (node04) backs up the NameNode and Secondary NameNode for most installations. The backups are important for ensuring the smooth functioning of the cluster, but there is no loss of user services if the backup node fails.

Some installations are configured to use an external NFS directory instead of a backup node. This is a configuration option decided during installation of the appliance. When the backup is stored outside the appliance, the node is noncritical.

The noncritical nodes (node05 to node18) are optional in that Oracle Big Data Appliance continues to operate with no loss of service if a failure occurs. The NameNode automatically replicates the lost data to maintain three copies at all times. MapReduce jobs execute on copies of the data stored elsewhere in the cluster. The only loss is in computational power, because there are fewer servers on which to distribute the work.
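The failure effects described in this section can be summarized as a lookup from node to the roles that become unavailable when that node fails. The following Python sketch records only what this section states, and it assumes that the services are still in their initial node positions. The names CRITICAL_NODE_ROLES and roles_lost are hypothetical.

```python
# Roles that this section associates with each critical node
# (initial positions; services may have been moved after a failure).
CRITICAL_NODE_ROLES = {
    "node01": ["NameNode"],
    "node02": [
        "Secondary NameNode",
        "Cloudera Manager",
        "MySQL Master Database",
        "Oracle NoSQL Database KV Administration",
    ],
    "node03": [
        "JobTracker",
        "Oracle Data Integrator",
        "Hue",
        "MySQL Backup Database",
    ],
    "node04": ["NameNode and Secondary NameNode backups"],
}


def roles_lost(node):
    """Return the roles that stop when the given node fails (empty if noncritical)."""
    return list(CRITICAL_NODE_ROLES.get(node.lower(), []))


print(roles_lost("node03"))
print(roles_lost("node12"))
```

An empty result means that only computational capacity is lost, as described for the noncritical nodes.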

You can take precautions to prevent unauthorized use of the software and data on Oracle Big Data Appliance.


Apache Hadoop is not an inherently secure system. It is protected only by network security. After a connection is established, a client has full access to the system.

Cloudera's Distribution including Apache Hadoop (CDH) supports Kerberos network authentication protocol to prevent malicious impersonation. You must install and configure Kerberos and set up a Kerberos Key Distribution Center and realm. Then you configure various components of CDH to use Kerberos.

CDH provides these security features when configured to use Kerberos:

The CDH master nodes, NameNode, and JobTracker resolve the group name so that users cannot manipulate their group memberships.

Map tasks run under the identity of the user who submitted the job.

Authorization mechanisms in HDFS and MapReduce help control user access to data.

See Also:

http://oracle.cloudera.com for these manuals:

CDH3 Security Guide

Configuring Hadoop Security with Cloudera Manager

Configuring TLS Security for Cloudera Manager

Table 2-7 identifies the port numbers that might be used in addition to those used by CDH. For the full list of CDH port numbers, go to the Cloudera website at

http://ccp.cloudera.com/display/CDHDOC/Configuring+Ports+for+CDH3

To view the ports used on a particular server:

In Cloudera Manager, click the Hosts tab at the top of the page to display the Hosts page.

In the Name column, click a server link to see its detail page.

Scroll down to the Ports section.

See Also:

The Cloudera website for CDH port numbers:

Hadoop Default Ports Quick Reference at

http://www.cloudera.com/blog/2009/08/hadoop-default-ports-quick-reference/

Configuring Ports for CDH3 at

https://ccp.cloudera.com/display/CDHDOC/Configuring+Ports+for+CDH3

The puppet node service (puppetd) runs continuously as root on all servers. It listens on port 8139 for "kick" requests, which trigger it to request updates from the puppet master. It does not receive updates on this port.

The puppet master service (puppetmasterd) runs continuously as the puppet user on the first server of the primary Oracle Big Data Appliance rack. It listens on port 8140 for requests to push updates to puppet nodes.

The puppet nodes generate and send certificates to the puppet master to register initially during installation of the software. For updates to the software, the puppet master signals ("kicks") the puppet nodes, which then request all configuration changes from the puppet master node that they are registered with.

The puppet master sends updates only to puppet nodes that have known, valid certificates. Puppet nodes only accept updates from the puppet master host name they initially registered with. Because Oracle Big Data Appliance uses an internal network for communication within the rack, the puppet master host name resolves using /etc/hosts to an internal, private IP address.
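When you troubleshoot connectivity, it can help to confirm that a service is accepting TCP connections on its expected port, such as 8139 or 8140 for the puppet services or 7180 for Cloudera Manager. The following Python sketch performs that check from any system that can reach the server. The function name is_port_open and the host name in the comment are assumptions for illustration. A successful connection shows only that something is listening on the port, not which service it is.

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


# Example use (hypothetical host name for the first server of the rack):
#
#     print(is_port_open("bda1node01.example.com", 8140))
```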