22 Creating Snap Clones

22.1 About Data Lifecycle Management

The Enterprise Manager Data Lifecycle Management solution provides a complete, end-to-end automated flow for moving data from the production environment to the test environment.

Typically, the database administrator first takes a backup of the production database, creates a test master database from the backup, masks the sensitive data, and finally creates clones from the test master. The clones must then be refreshed as required to keep their data in sync with the production data. With the Data Lifecycle Management solution, this process is completely automated and can be performed either from the Cloning Dashboard or through the Self Service Portal.

22.2 About Snap Clones

Snap Clone provides a storage-agnostic approach to creating rapid, space-efficient clones of large databases. Clones of the production database are often required for test and development purposes, and creating them is difficult and time-consuming, especially for very large databases.

Enterprise Manager offers Snap Clone to address this issue: thin clones can be created from production databases by using the copy-on-write technology available in some storage systems. These clones initially take up little space (about 2 GB of writable space for a thin clone of a multi-terabyte database) and grow as inserts, updates, and deletes are performed. Enterprise Manager offers two Snap Clone solutions:

-

Hardware Solution: Vendor-specific hardware solution that supports NetApp, Oracle Sun ZFS Storage Appliance, EMC VMAX, and EMC VNX.

-

Software Solution: Storage-agnostic software solution that supports all NAS and SAN storage devices. This is supported through the use of the ZFS file system and the CloneDB feature.

The main features of Snap Clone are:

-

Self Service Driven Approach: Empowers the self service user to clone databases as required on an ad-hoc basis.

-

Rapid Cloning: Databases can be cloned in minutes rather than days or weeks.

-

Space Efficient: This feature allows users to significantly reduce the storage footprint.

22.3 Registering and Managing Storage Servers

Note:

If you are creating thin clones from a snap clone based profile, you must register and manage the storage servers such as NetApp, Sun ZFS, or EMC. See Creating Snap Clones for details.


22.3.1 Overview of Registering Storage Servers

Registering a storage server, such as a NetApp, Sun ZFS, or EMC storage server, in Enterprise Manager enables you to provision databases using the snapshot and cloning features provided by the storage.

The registration process validates the storage, and discovers the Enterprise Manager managed database targets on this storage. Once the databases are discovered, you can enable them for Snap Clone. Snap Clone is the process of creating database clones using the Storage Snapshot technology.

Note:

Databases on Windows operating systems are not supported.

22.3.2 Before You Begin

Before you begin, note the following:

-

Windows databases are not discovered as part of storage discovery, because NFS collection is not performed on Windows hosts. NFS collection is also not supported on certain OS releases, so databases on those OS releases cannot be Snap Cloned. For details, see My Oracle Support note 465472.1. In addition, NAS volumes cannot be used on Windows to support Oracle databases.

-

Snap Clone is supported on Sun ZFS Storage 7120, 7320, 7410, 7420 and ZS3 models, NetApp 8 hardware in 7-mode and c-mode, EMC VMAX 10K and VNX 5300, and Solaris ZFS Filesystem.

-

Snap Clone supports Sun ZFS storage on HP-UX hosts only if the OS version is B.11.31 or higher. On earlier OS versions, the Sun ZFS storage may not function properly, and Snap Clone may give unexpected results.

-

By default, Enterprise Manager discovers a maximum of 100 NFS file systems on a target host. This threshold is configurable. If you do not want all the extra file systems to be monitored, you can also provide a list of the file systems to monitor.

The configuration file $agent_inst/sysman/emd/emagent_storage.config for each host agent contains various storage monitoring related parameters. To configure the threshold for the NFS file systems, edit the following parameters:

Collection Size:START
Disks=1000
FileSystems=1000
Volumes=1000
Collection Size:END

If you choose to provide a list of file systems to be monitored, it can be provided between the following lines:

FileSystems:START

FileSystems:END

Restart the Management Agent and refresh the host configuration for the changes to this configuration file to be effective.

-

If the OMS repository runs on Oracle Database 11.1.0.7.0 with the AL32UTF8 character set, apply patch 11893621.
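The emagent_storage.config edit described earlier in this list can also be scripted. The following Python function is a minimal sketch, not an Oracle-supplied tool: it assumes (hypothetically) that each threshold appears as a key=value token between Collection Size:START and Collection Size:END, and that monitored file systems are listed one per line between FileSystems:START and FileSystems:END. The function name update_storage_config is illustrative; back up the file and review the result before restarting the Management Agent.

```python
import re


def update_storage_config(text, limits, filesystems=None):
    """Return a copy of emagent_storage.config text with new thresholds.

    Assumes (hypothetically) that thresholds are key=value tokens inside the
    'Collection Size:START' ... 'Collection Size:END' block, and that the
    monitored file systems are listed one per line between
    'FileSystems:START' and 'FileSystems:END'.
    """
    start, end = "Collection Size:START", "Collection Size:END"
    s = text.index(start) + len(start)
    e = text.index(end, s)
    block = text[s:e]
    for key, value in limits.items():
        # Replace an existing token such as FileSystems=1000.
        block, count = re.subn(rf"\b{re.escape(key)}=\d+", f"{key}={value}", block)
        if count == 0:
            raise KeyError(f"{key} not found in the Collection Size block")
    text = text[:s] + block + text[e:]

    if filesystems is not None:
        fs_start, fs_end = "FileSystems:START", "FileSystems:END"
        fs_s = text.index(fs_start) + len(fs_start)
        fs_e = text.index(fs_end, fs_s)
        # Replace whatever list was there with one path per line.
        listing = "\n" + "\n".join(filesystems) + "\n"
        text = text[:fs_s] + listing + text[fs_e:]
    return text
```

For example, read the file, pass its contents through update_storage_config(text, {"FileSystems": 200}), write the result back, and then restart the Management Agent and refresh the host configuration as described above.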

22.3.3 Prerequisites for Registering Storage Servers

22.3.3.1 Configuring Storage Servers

Before you register a storage server, make sure you have the following privileges and licenses, which are required to use Snap Clone:

Note:

Enterprise Manager Cloud Control 13c supports NetApp, Sun ZFS, Solaris File System (ZFS) and EMC storage servers.

Configuring NetApp Hardware


Obtaining NetApp Hardware Privileges

Privileges is a generic term. NetApp refers to privileges as Capabilities.

For NetApp storage server, to use Snap Clone, assign the following privileges or capabilities to the NetApp storage credentials:

Note:

You can assign these capabilities individually or by using wildcard notations. For example:

'api-volume-*', 'api-*', 'cli-*'

-

api-aggr-list-info

-

api-aggr-options-list-info

-

api-file-delete-file

-

api-file-get-file-info

-

api-file-read-file

-

api-license-list-info

-

api-nfs-exportfs-append-rules

-

api-nfs-exportfs-delete-rules

-

api-nfs-exportfs-list-rules

-

api-nfs-exportfs-modify-rule

-

api-snapshot-create

-

api-snapshot-delete

-

api-snapshot-list-info

-

api-snapshot-reclaimable-info

-

api-snapshot-restore-volume

-

api-snapshot-set-reserve

-

api-system-api-get-elements

-

api-system-api-list

-

api-snapshot-set-schedule

-

api-system-cli

-

api-system-get-info

-

api-system-get-ontapi-version

-

api-system-get-version

-

api-useradmin-group-list

-

api-useradmin-user-list

-

api-volume-clone-create

-

api-volume-clone-split-estimate

-

api-volume-create

-

api-volume-destroy

-

api-volume-get-root-name

-

api-volume-list-info

-

api-volume-list-info-iter-end

-

api-volume-list-info-iter-next

-

api-volume-list-info-iter-start

-

api-volume-offline

-

api-volume-online

-

api-volume-restrict

-

api-volume-set-option

-

api-volume-size

-

cli-filestats

-

login-http-admin
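Because capabilities can be assigned with wildcards, it can help to check whether a set of granted entries covers every capability listed above. This Python sketch treats "*" as a shell-style wildcard through fnmatch.fnmatchcase; that matching rule is an assumption about how NetApp expands patterns, and missing_capabilities is a hypothetical helper, not part of any NetApp or Oracle API.

```python
from fnmatch import fnmatchcase

# The capabilities listed above for the NetApp storage credentials.
REQUIRED = """
api-aggr-list-info api-aggr-options-list-info api-file-delete-file
api-file-get-file-info api-file-read-file api-license-list-info
api-nfs-exportfs-append-rules api-nfs-exportfs-delete-rules
api-nfs-exportfs-list-rules api-nfs-exportfs-modify-rule
api-snapshot-create api-snapshot-delete api-snapshot-list-info
api-snapshot-reclaimable-info api-snapshot-restore-volume
api-snapshot-set-reserve api-snapshot-set-schedule
api-system-api-get-elements api-system-api-list api-system-cli
api-system-get-info api-system-get-ontapi-version api-system-get-version
api-useradmin-group-list api-useradmin-user-list
api-volume-clone-create api-volume-clone-split-estimate api-volume-create
api-volume-destroy api-volume-get-root-name api-volume-list-info
api-volume-list-info-iter-end api-volume-list-info-iter-next
api-volume-list-info-iter-start api-volume-offline api-volume-online
api-volume-restrict api-volume-set-option api-volume-size
cli-filestats login-http-admin
""".split()


def missing_capabilities(granted, required=REQUIRED):
    """Return the required capabilities not matched by any granted entry.

    A granted entry may be an exact name or a pattern such as 'api-volume-*'
    (assumed here to behave like a shell-style wildcard).
    """
    return [cap for cap in required
            if not any(fnmatchcase(cap, pattern) for pattern in granted)]
```

Under this sketch's matching rules, the wildcard example 'api-*', 'cli-*' on its own still leaves login-http-admin uncovered, so that capability needs to be granted separately.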

Obtaining NetApp Hardware Licenses

Snap Clone on a NetApp storage server requires a valid license for the following services:

-

flex_clone

-

nfs

-

snaprestore

Note:

Snap Clone is supported only on NetApp Data ONTAP® 7.2.1.1P1D18 or higher, and Data ONTAP® 8.x (7-mode, c-mode, and v-server mode).

To create a dedicated NetApp user for Enterprise Manager, do the following:

-

Create the role em_smf_admin_role with all the recommended capabilities, such as api-aggr-list-info, api-file-delete-file, and so on.

-

Create the group em_smf_admin_group with the role em_smf_admin_role.

-

Create the user em_smf_admin with the group em_smf_admin_group and a secure password.

Note:

The user em_smf_admin must be a dedicated user to be used by Oracle Enterprise Manager. Oracle does not recommend sharing this account for any other purposes.
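On a NetApp system running Data ONTAP in 7-Mode, the role, group, and user described above are typically created with the useradmin command. The following Python sketch only builds the command strings for review. It assumes the 7-Mode syntax useradmin role add ... -a, useradmin group add ... -r, and useradmin user add ... -g, which should be checked against the documentation for your ONTAP release; cluster-mode (c-mode) systems use different commands and are not covered. useradmin_commands is a hypothetical helper.

```python
def useradmin_commands(capabilities, role="em_smf_admin_role",
                       group="em_smf_admin_group", user="em_smf_admin"):
    """Build Data ONTAP 7-Mode useradmin commands for the dedicated EM user.

    Assumes 7-Mode syntax; verify it for your ONTAP release before running.
    No password is placed on the command line: useradmin is expected to
    prompt for it interactively.
    """
    if not capabilities:
        raise ValueError("at least one capability is required")
    return [
        f"useradmin role add {role} -a {','.join(capabilities)}",
        f"useradmin group add {group} -r {role}",
        f"useradmin user add {user} -g {group}",
    ]
```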

Configuring NetApp 8 Cluster Mode Hardware

This topic discusses how to set up NetApp 8 cluster mode (c-mode) hardware for supporting Snap Clone in Enterprise Manager Cloud Control 13c.

NetApp 8 hardware in 7-mode is already supported for Snap Clone.

To configure NetApp 8 c-mode hardware, refer to the following sections:

NetApp 8 Configuration Supported with Cluster Mode

The configuration supported with c-mode is as follows:

-

Snap Clone features are supported only with SVM (Vserver).

-

Registration of a physical cluster node is not supported.

-

Multiple SVMs can be registered with Enterprise Manager Cloud Control 13c. All the registered SVMs are managed independently.

Preparing the NetApp 8 Storage and SVM

To prepare the NetApp 8 storage and SVM, ensure that the following requirements are met:

-

The NetApp 8 c-mode hardware should have an SVM. If it does not, create an SVM to be registered with Enterprise Manager.

-

The SVM should have a network interface (LIF) with both Management and Data access. The domain name and IP address of this interface should be provided on the Storage Registration page in the Enterprise Manager.

-

There should be at least one aggregate (volume) assigned to the SVM. The aggregates should not be shared between the SVMs.

-

The SVM should have a user account that has the vsadmin-volume role assigned for ontapi access.

The user credentials should be supplied on the Storage Registration page in the Enterprise Manager.

-

The root volume of the SVM should have an export policy with a rule that allows read-only access to all hosts. If you are using NFS v4, grant Superuser access from the Modify Export Rule dialog box.

Note:

-

A directory named em_volumes is created with permissions 0444 inside the root volume of the SVM. This directory is used as the Enterprise Manager name space for the junction point.

-

All the storage volumes created use the junction point /em_volumes in the name space.

Note:

When you register an SVM in Enterprise Manager Cloud Control, the details of all the aggregates assigned to it are fetched. The total size of an aggregate is required to set the quota, perform space computation, and for reporting.

Presently, NetApp does not provide any Data ONTAP API to fetch the total size of an aggregate from an SVM. As a workaround, the available size of an aggregate is treated as its total size and is set as the Storage Ceiling during the first Synchronize run. Storage Ceiling is the maximum amount of space that Enterprise Manager can use in an aggregate.

If the total space of an aggregate is increased on the storage, you can increase the Storage Ceiling until you consume the available space in that aggregate.


Configuring Sun ZFS and ZS3 Hardware


Obtaining Sun ZFS Hardware Privileges

Privileges is a generic term. For example, Sun ZFS refers to privileges as Permissions.

For Sun ZFS storage server, to use Snap Clone, assign the following privileges or permissions to the Sun ZFS storage credentials:

Note:

All the permissions listed must be set to true. The scope must be 'nas' and there must not be any further filters.

-

changeProtocolProps

-

changeSpaceProps

-

clone

-

createShare

-

destroy

-

rollback

-

takeSnap

Obtaining Sun ZFS Hardware Licenses

Snap Clone on Sun ZFS Storage Appliance requires a license for the Clones feature. A restricted-use license for this feature is included with Enterprise Manager Snap Clone.

To create a dedicated Sun ZFS user for Enterprise Manager, do the following:

-

Create the role em_smf_admin_role.

-

Create authorizations for the role em_smf_admin_role.

-

Set the scope to nas.

-

Set the recommended permissions, such as allow_changeProtocolProps, allow_changeSpaceProps, and so on, to true.

-

Create the user em_smf_admin and set its role property to em_smf_admin_role.

Note:

The user em_smf_admin must be a dedicated user to be used by Oracle Enterprise Manager. Oracle does not recommend sharing this account for any other purposes.

Configuring Solaris File System (ZFS) Storage Servers


Obtaining Solaris File System (ZFS) Privileges

Solaris File System (ZFS) refers to privileges as Permissions. For Solaris File System (ZFS) storage server, to use Snap Clone, grant the following permissions on the pool for the Solaris File System (ZFS) user:

-

clone

-

create

-

destroy

-

mount

-

rename

-

rollback

-

share

-

snapshot

-

quota

-

reservation

-

sharenfs

-

canmount

-

recordsize
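The permissions above are delegated with the Solaris zfs allow command. As a sketch, the following Python helpers build that command for a given user and pool from the listed permissions; they return the command rather than running it, so it can be reviewed first. The helper names are hypothetical. The reference command later in this section also delegates logbias, which can be passed through the extra parameter.

```python
import shlex

# Permissions listed above for the Solaris File System (ZFS) user.
ZFS_PERMISSIONS = ["clone", "create", "destroy", "mount", "rename",
                   "rollback", "share", "snapshot", "quota", "reservation",
                   "sharenfs", "canmount", "recordsize"]


def zfs_allow_argv(user, pool, extra=()):
    """Return the argument list for 'zfs allow' delegating the permissions."""
    perms = ",".join([*ZFS_PERMISSIONS, *extra])
    return ["zfs", "allow", user, perms, pool]


def zfs_allow_line(user, pool, extra=()):
    """Render the argument list as a shell-quoted command line for review."""
    return shlex.join(zfs_allow_argv(user, pool, extra))
```

To run the command, pass zfs_allow_argv(...) to subprocess.run with check=True as root on the storage server.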

Obtaining Solaris File System (ZFS) Licenses

Solaris File System (ZFS) does not require any special hardware license. Only Oracle Solaris OS version 11.1 is supported.

Setting Up Solaris File System (ZFS) Storage Servers

Solaris File System (ZFS) storage servers can work with any storage hardware, so you do not need to buy additional storage hardware. Instead, you can attach your existing in-house storage hardware to acquire the Oracle Snap Clone functionality. For example, you can attach LUNs from EMC VMAX or VNX systems, a Hitachi VSP, or an Oracle Pillar Axiom FC array.

The following storage topology figure explains how this works:

Note:

This figure assumes that you have a SAN storage device with 4 x 1TB logical unit devices exposed to the Solaris File System (ZFS) storage server.


Prerequisites for Setting Up Solaris File System (ZFS) Storage Servers

Before you configure a Solaris File System (ZFS) storage server, ensure that you meet the following requirements:

-

Ensure that zfs_arc_max is not set in /etc/system. If it needs to be set, set it to a high value, such as 80% of RAM.

-

Ensure that the storage server is configured with multiple LUNs. Each LUN should be a maximum of 1 TB, and a minimum of two 1 TB LUNs is recommended for Snap Clone. Each LUN should have a mirror LUN that is mounted on the host over a different controller to isolate failures. LUNs can be attached to the Solaris host over Fibre Channel for better performance.

Note:

If Fibre Channel is not available, any direct attached storage or iSCSI based LUNs are sufficient.

-

All LUNs used in a pool should be equal in size. It is preferable to use fewer than 12 LUNs in a pool.

-

Apart from LUNs, the storage needs cache and log devices to improve zpool performance. Ideally, both should be individual flash/SSD devices. If it is difficult to procure individual devices, you can use slices cut from a single device. The log device should be about 32 GB in size and have redundancy and battery backup to prevent data loss. The cache device can be about 128 GB in size and does not need redundancy.
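The LUN prerequisites above can be checked before the pool is created. This Python sketch encodes them as simple rules: at least two mirrored LUN pairs, fewer than 12 LUNs in total (counting mirrors, which is an interpretation), equal LUN sizes, a 1 TB maximum per LUN, and each mirror on a different controller than its primary. The Lun class, the check_layout function, and treating 1 TB as 1024^4 bytes are assumptions for illustration.

```python
from dataclasses import dataclass

TB = 1024 ** 4  # 1 TB taken as 1024^4 bytes (an assumption for this sketch)


@dataclass
class Lun:
    name: str
    size_bytes: int
    controller: str  # controller through which the LUN is attached


def check_layout(pairs, max_lun=1 * TB):
    """Check (primary, mirror) LUN pairs against the prerequisites above.

    Returns a list of problems; an empty list means no rule in this
    sketch was violated.
    """
    problems = []
    luns = [lun for pair in pairs for lun in pair]
    if len(pairs) < 2:
        problems.append("at least 2 LUNs (each with a mirror) are recommended")
    if len(luns) >= 12:
        problems.append("use fewer than 12 LUNs in a pool")
    if len({lun.size_bytes for lun in luns}) > 1:
        problems.append("all LUNs in a pool should be equal in size")
    for lun in luns:
        if lun.size_bytes > max_lun:
            problems.append(f"{lun.name} exceeds the maximum LUN size")
    for primary, mirror in pairs:
        if primary.controller == mirror.controller:
            problems.append(f"{primary.name} and {mirror.name} share a controller")
    return problems
```

For the reference layout later in this section (two 1 TB LUNs on controller c9, mirrored on controller c10), check_layout returns an empty list.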

Requirements for Storage Area Network Storage

The requirements for Storage Area Network (SAN) storage are as follows:

-

It is recommended to create fewer, larger LUNs. The maximum recommended size for a LUN is 3 TB.

-

LUNs should come from different SAN storage pools or an entirely different SAN storage device.

These LUNs are needed for mirroring, to maintain the pool level redundancy. If your SAN storage maintains a hardware level redundancy, then you can skip this requirement.

-

The LUNs should be exposed over Fibre Channel.

Recommendations for Solaris File System (ZFS) Pools

The recommendations for Solaris File System (ZFS) pools are as follows:

-

Create the Storage pool with multiple LUNs of the same size. You can add more disks to the storage pool to increase the size based on your usage.

-

The storage pool created on the Solaris File System (ZFS) storage server should use the LUNs coming from a different SAN storage pool or an entirely different SAN storage device. You can skip this if your SAN storage maintains hardware level redundancy.

-

Use ZFS redundancy, such as mirror, RAIDZ, RAIDZ-2, or RAIDZ-3, so that ZFS can repair data inconsistencies, regardless of whether RAID is implemented at the underlying storage device.

-

For better throughput and performance, use cache and log devices. Ideally, both should be on individual flash/SSD devices. If it is difficult to procure individual devices, you can use slices cut from a single device.

It is recommended that the log device be about 50% of RAM in size and have redundancy and battery backup to prevent data loss. The cache device size can be based on the size of the workload and the pool.

Cache devices do not support redundancy. Using a cache device is optional.

-

When creating the pool, size it to accommodate the test master database along with the cloned databases. A clone coexists with its parent database in the same storage pool, so plan for test master and clone capacity well ahead.

For example, if the test master is 1 TB and you expect to create 10 clones, each differing from the test master by 100 GB, the storage pool should be at least 2.5 TB in size.

-

Maintain the storage pool with at least 20% free space. If the free space falls below this level, then the performance of the pool degrades.
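One way to reproduce the 2.5 TB figure in the sizing example above is to add the expected clone deltas to the test master (1 TB + 10 x 100 GB = 2 TB) and then size the pool so that this usage still leaves 20% of the pool free (2 TB / 0.8 = 2.5 TB). The following Python function is a sketch of that interpretation, not a formula published by Oracle; min_pool_size is a hypothetical name, and all sizes must use the same unit.

```python
def min_pool_size(test_master, clones, delta_per_clone, free_pct=20):
    """Smallest pool size that holds the test master plus the clone deltas
    while keeping free_pct percent of the pool free.

    Integer ceiling division avoids floating-point rounding.
    """
    if not 0 <= free_pct < 100:
        raise ValueError("free_pct must be in the range [0, 100)")
    used = test_master + clones * delta_per_clone
    return -(-used * 100 // (100 - free_pct))  # ceiling of used / (1 - free)
```

For the example above, with sizes in GB, min_pool_size(1000, 10, 100) returns 2500, that is, 2.5 TB.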

Configuring Solaris File System (ZFS) Users and Pools

Create a user that can administer the storage from Enterprise Manager. To do this, run the following commands as the root user:

# /sbin/useradd -d /home/emzfsadm -s /bin/bash emzfsadm

# passwd emzfsadm

Note:

The user name should be at most 8 characters long.

Configure the ZFS pool that hosts the volumes, and grant privileges on this pool to the user you created. The emzfsadm user should have privileges on all the zpools and their mount points in the system.

To configure the ZFS pool, refer to the following table and run the commands that follow it:

Note:

The table displays a reference implementation, and you can choose to change this as required.

| Component | Value |
|---|---|
| Pool name | lunpool |
| Disks (SAN-exposed LUNs over FC/iSCSI) | lun1=c9t5006016E3DE0340Ed0, lun2=c9t5006016E3DE0340Ed1 |
| Disk mirrors (SAN-exposed LUNs over FC/iSCSI) | mir1=c10t5006016E3DE0340Ed2, mir2=c10t5006016E3DE0340Ed3 |
| Flash/SSD disk (log) | ssd1=c4t0d0s0 |
| Flash/SSD disk (cache) | ssd2=c4t0d1s0 |

# zpool create lunpool mirror c9t5006016E3DE0340Ed0 c10t5006016E3DE0340Ed2 mirror c9t5006016E3DE0340Ed1 c10t5006016E3DE0340Ed3 log c4t0d0s0 cache c4t0d1s0

Example format output is as follows:

bash-4.1# /usr/sbin/format

Searching for disks...done

AVAILABLE DISK SELECTIONS:

0. c9t5006016E3DE0340Ed0 <DGC-VRAID-0532-1.00TB>

/pci@78,0/pci8086,3c08@3/pci10df,f100@0/fp@0,0/disk@w5006016e3de0340e,0

1. c9t5006016E3DE0340Ed1 <DGC-VRAID-0532-1.00TB>

/pci@78,0/pci8086,3c08@3/pci10df,f100@0/fp@0,0/disk@w5006016e3de0340e,1

2. c10t5006016E3DE0340Ed2 <DGC-VRAID-0532-1.00TB>

/pci@78,0/pci8086,3c08@3/pci10df,f100@0/fp@0,0/disk@w5006016e3de0340e,2

3. c10t5006016E3DE0340Ed3 <DGC-VRAID-0532-1.00TB>

/pci@78,0/pci8086,3c08@3/pci10df,f100@0/fp@0,0/disk@w5006016e3de0340e,3

[ Find the size of the pool that was created ]

# df -k /lunpool

Filesystem 1024-blocks Used Available Capacity Mounted on

lunpool 1434746880 31 1434746784 1% /lunpool

[ Use the Available size shown here, which df -k reports in 1024-byte blocks, to set the quota ]

# zfs set quota=1434746784K lunpool

# zfs allow emzfsadm clone,create,destroy,mount,rename,rollback,share,snapshot,quota,reservation,sharenfs,canmount,recordsize,logbias lunpool

# chmod A+user:emzfsadm:add_subdirectory:fd:allow /lunpool

# chmod A+user:emzfsadm:delete_child:fd:allow /lunpool
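df -k reports sizes in 1024-byte blocks, while a number passed to zfs set quota without a suffix is interpreted as bytes. This Python sketch reads the Available column for a mount point from df -k output and returns a quota value with an explicit K suffix, so that the units match. quota_from_df is a hypothetical helper, and it assumes each file system is reported on a single line.

```python
def quota_from_df(df_output, mount_point):
    """Return a 'quota=<N>K' property value from 'df -k' output.

    df -k reports sizes in 1024-byte blocks; the explicit K suffix keeps
    zfs from reading the number as bytes.
    """
    for line in df_output.splitlines()[1:]:  # skip the header line
        fields = line.split()
        # Columns: Filesystem, 1024-blocks, Used, Available, Capacity, Mounted on
        if len(fields) >= 6 and fields[-1] == mount_point:
            return f"quota={int(fields[3])}K"
    raise ValueError(f"{mount_point} not found in df output")
```

For the df -k output shown above, quota_from_df(output, "/lunpool") returns quota=1434746784K, which can be passed to zfs set together with the pool name.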


Configuring EMC Storage Servers

Before you use an EMC Symmetrix VMAX Family or an EMC VNX storage server, you must first set up the EMC storage hardware to support Snap Clone in Oracle Enterprise Manager 13c. Ensure that all the requirements in the following sections are met:

Supported Configuration for EMC Storage Servers

Before you configure the EMC Symmetrix VMAX Family or the EMC VNX storage server, check the following list of components that are and are not supported for EMC VMAX and EMC VNX storage.

-

EMC VMAX 10K and VNX 5300 are certified. Higher models in the same series are expected to work.

-

Only Linux and Solaris operating systems are supported. Other operating systems are not yet supported.

-

Multi-pathing is mandatory.

-

Only EMC PowerPath and Solaris MPxIO are supported.

-

Switched fabric is supported. Arbitrated loop is not supported.

-

Emulex (LPe12002-E) host bus adapters are certified. Other adapters are expected to work.

-

SCSI over Fibre Channel is supported. iSCSI and NAS are not yet supported.

-

Oracle Grid Infrastructure 11.2 is supported.

-

Oracle Grid Infrastructure 12.1 with Local ASM Storage option is supported. Flex ASM is not supported.

-

ASM Filter Driver is not supported.

-

ASM support is only for raw devices. File System is not supported.

-

Thin Volumes (TDEV) are supported on VMAX.

-

Only LUNs are supported on VNX. NAS is not yet supported.

Requirements for EMC Symmetrix VMAX Family and Database Servers

The software version requirements for the EMC Symmetrix VMAX Family are:

-

EMC VMAX Enginuity Version: 5876.251.161 and above

-

SMI-S Provider Version: V4.6.1.6 and above

-

Solutions Enabler Version: V7.6-1755 and above

Note:

The EMC VMAX Enginuity version is the Operating System version of the storage.

The SMI-S Provider and Solutions Enabler are installed on a host in the SAN.
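To compare installed versions with the minimums listed above, the version strings can be reduced to tuples of integers. This Python sketch does so by taking every run of digits, so that V7.6-1755 becomes (7, 6, 1755); that parsing rule is an assumption and may not match how EMC orders every release string. version_key and below_minimum are hypothetical helpers.

```python
import re

# Minimum versions from the list above.
MINIMUMS = {
    "Enginuity": "5876.251.161",
    "SMI-S Provider": "V4.6.1.6",
    "Solutions Enabler": "V7.6-1755",
}


def version_key(text):
    """Turn a version string such as 'V7.6-1755' into (7, 6, 1755).

    Every run of digits becomes one integer; this is an assumed parsing
    rule, not an EMC-defined one.
    """
    return tuple(int(part) for part in re.findall(r"\d+", text))


def below_minimum(installed):
    """Return the components whose installed version is below the minimum.

    installed maps the component names used in MINIMUMS to version strings.
    """
    return [name for name, minimum in MINIMUMS.items()
            if version_key(installed[name]) < version_key(minimum)]
```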

The requirements for database server are as follows:

Oracle Database Requirements

-

Oracle Database 10.2.0.5 and higher

Operating System Requirements

-

Oracle Linux 5 update 8 (compatible with RHEL 5 update 8) and above

-

Oracle Linux 6 (compatible with RHEL 6) and above

-

Oracle Solaris 10 and 11

Multipathing Requirements

-

EMC PowerPath Version 5.6 or above as available for Linux Operating System release and kernel version

-

EMC PowerPath Version 5.5 or above as available for Oracle Solaris 11.1 release

-

EMC PowerPath Version 6 is not supported.

-

Solaris MPxIO as available in latest update

Oracle Grid Infrastructure Requirements

-

Oracle Grid Infrastructure 11.2

-

Oracle Grid Infrastructure 12.1. Flex ASM is not supported.

Preparing the Storage Area Network

To prepare the storage area network, follow the configuration steps outlined in each section.

SAN Fabric Configuration

Configure your SAN fabric with multipathing by ensuring the following:

-

You must have redundancy at storage, switch and server level.

-

Perform the zoning such that multiple paths are configured from the storage to the server.

-

Configure the paths such that a failure at a target port, or a switch or a host bus adapter will not cause unavailability of storage LUNs.

-

Configure gatekeepers on the host where EMC SMI-S provider is installed. To configure gatekeepers, refer to the documentation available on the EMC website:

SMI-S Provider

You should install the SMI-S provider and Solutions Enabler on one of the servers in the fabric where the storage is configured. To install and configure the SMI-S provider, refer to the documentation available on the EMC website:

The SMI-S provider URL and login credentials are needed to interact with the storage. An example of an SMI-S Service Provider URL is https://rstx4100smis:5989.

These details are needed when you register a storage server. You are required to do the following:

-

Ensure that the VMAX or VNX storage is discovered by the SMI-S provider.

-

Add the VNX storage systems to the SMI-S provider.

-

Create a user account with administrator privileges in the SMI-S provider to access the VMAX or VNX storage.

-

Set a sync interval of 1 hour.

Understanding VMAX Terminology

The following table outlines VMAX terms that are used in this section. Refer to these terms to gain a better conceptual understanding, before you prepare the EMC VMAX storage.

Table 22-1 VMAX terminologies

| Term | Definition |
|---|---|
| Logical Unit | An I/O device is referred to as a Logical Unit. |
| Logical Unit Number | A unique address associated with a Logical Unit. |
| Initiator | Any Logical Unit that starts a service request to another Logical Unit is referred to as an Initiator. |
| Initiator Group | An initiator group is a logical grouping of up to 32 Fibre Channel initiators (HBA ports), eight iSCSI names, or a combination of both. An initiator group may also contain the name of another initiator group, which allows the groups to be cascaded to a depth of one. |
| Port Group | A port group is a logical grouping of Fibre Channel and/or iSCSI front-end director ports. The only limit on the number of ports in a port group is the number of ports in the Symmetrix system. A port group can also contain a subset of the available ports in order to isolate workloads to specific ports. Note: As a prerequisite, Enterprise Manager expects a port group to be created with the name given in Preparing the EMC VMAX Storage. |
| Storage Group | A storage group is a logical grouping of up to 4,096 Symmetrix devices. |
| Target | Any Logical Unit to which a service request is targeted is referred to as a Target. |
| Masking View | A masking view defines an association between one initiator group, one port group, and one storage group. When a masking view is created, the devices in the storage group are mapped to the ports in the port group and masked to the initiators in the initiator group. |
| SCSI Command | A service request is referred to as a SCSI command. |
| Host Bus Adapter (HBA) | The term host bus adapter (HBA) most often refers to a Fibre Channel interface card. Each HBA has a unique World Wide Name (WWN), which is similar to an Ethernet MAC address in that it uses an OUI assigned by the IEEE. However, WWNs are longer (8 bytes). There are two types of WWNs on an HBA: a node WWN (WWNN), which is shared by all ports on a host bus adapter, and a port WWN (WWPN), which is unique to each port. HBA models are available in different speeds: 1 Gbit/s, 2 Gbit/s, 4 Gbit/s, 8 Gbit/s, 10 Gbit/s, 16 Gbit/s, and 20 Gbit/s. |

For more information on VMAX storage and terminologies, refer to the document EMC Symmetrix VMAX Family with Enginuity available in the EMC website.

Preparing the EMC VMAX Storage

Configure your EMC VMAX appliance such that it is zoned with all the required nodes where you need to provision databases. To prepare the EMC VMAX storage, do the following on the storage server:

-

Ensure that all the Host Initiator ports are available from the storage side.

-

It is recommended to create one initiator group per host, with the corresponding initiators, to increase security. The 'Consistent LUNs' property of the immediate parent initiator group of the initiators should be set to 'No'.

-

Create a port group called ORACLE_EM_PORT_GROUP to be used by Oracle Enterprise Manager for creating masking views. This port group should contain all the target ports that are viewed collectively by all the hosts registered in the Enterprise Manager Cloud Control system. For example, if host1 views storage ports P1 and P2, and host2 views storage ports P3 and P4, then ORACLE_EM_PORT_GROUP should include ports P1, P2, P3, and P4. Include only the target ports that the development infrastructure needs.

-

Create a separate Virtual Provisioning Pool also known as Thin Pool, and dedicate it for Oracle Enterprise Manager.

-

Ensure that the TimeFinder license is enabled to perform VP Snap.
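The rule for ORACLE_EM_PORT_GROUP in the list above is the union of the target ports that each registered host views. As a sketch, the following Python function computes that union from a hypothetical mapping of host names to the ports they view; it does not communicate with the storage array.

```python
def em_port_group(ports_by_host):
    """Union of the storage target ports viewed by each registered host.

    The result is what ORACLE_EM_PORT_GROUP should contain. Ports keep
    their first-seen order, and duplicates are dropped.
    """
    members = []
    for ports in ports_by_host.values():
        for port in ports:
            if port not in members:
                members.append(port)
    return members
```

With the example above, em_port_group({"host1": ["P1", "P2"], "host2": ["P3", "P4"]}) returns ["P1", "P2", "P3", "P4"].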

Preparing the EMC VNX Storage

Configure your EMC VNX appliance such that it is zoned with all the required nodes where you need to provision databases. To prepare the EMC VNX storage, do the following on the storage server:

Note:

EMC VNX Storage supports only LUN creation, cloning, and deletion. It does not support NAS.

-

Ensure that all host initiator ports are available from the storage side.

-

Ensure that the initiators belonging to one host are grouped and named after the Host on the EMC VNX storage.

-

Create one storage group with one host for each of the hosts registered in Enterprise Manager.

For example, if initiators i1 and i2 belong to host1, register the initiators under the name Host1. Create a new storage group SG1 and connect Host1 to it. Similarly, create one storage group for each of the hosts that are to be added to Enterprise Manager.
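The VNX layout described above, with initiators grouped by host and one storage group per host, can be planned before you configure it on the array. This Python sketch takes a hypothetical mapping of initiator IDs to host names and returns one storage group per host; the SG1, SG2, ... names follow the example and are illustrative only.

```python
def plan_storage_groups(initiator_host):
    """Group initiators by host and assign one storage group per host.

    initiator_host maps an initiator ID to the host it belongs to.
    Storage group names (SG1, SG2, ...) are illustrative.
    """
    hosts = {}
    for initiator, host in initiator_host.items():
        hosts.setdefault(host, []).append(initiator)
    return {f"SG{i}": {"host": host, "initiators": inits}
            for i, (host, inits) in enumerate(hosts.items(), start=1)}
```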

Preparing Database Servers

To prepare your server for Enterprise Manager Snap Clone, ensure the following:

-

Servers should be physical and equipped with host bus adapters. NPIV and virtual machines are not supported.

-

Configure your servers with the recommended and supported multipath software. If you use EMC PowerPath, enable the PowerPath license by using the following command:

emcpreg -install

-

If you need the servers to support Oracle Real Application Clusters, then install Oracle Clusterware.

Note:

ASM and Clusterware have to be installed and those components have to be discovered in Enterprise Manager as a target. Once ASM and Clusterware are installed, additional ASM disk groups can be created from Enterprise Manager.

-

Enable privileged host monitoring credentials for all the servers. If a server is part of a cluster, enable privileged host monitoring credentials for that cluster.

For more details on enabling privileged host monitoring credentials, refer to Oracle Enterprise Manager Framework, Host, and Services Metric Reference Manual.

-

If you are using Linux, configure Oracle ASMLib and set the asm_diskstring parameter to a valid ASM path, for example /dev/oracleasm/disks/. Update the boot sequence so that the ASMLib service runs first, followed by the multipath service.

To install Oracle ASMLib, refer to the following website:

http://www.oracle.com/technetwork/server-storage/linux/install-082632.html

To configure Oracle ASMLib on multipath disks, refer to the following website:

http://www.oracle.com/technetwork/server-storage/linux/multipath-097959.html

Setting Privileged Host Monitoring Credentials

You should set the privilege delegation settings before setting Host monitoring credentials. Do the following:

Note:

This is required only on hosts that are used for snap cloning databases on EMC storage.

-

From the Setup menu, select Security, and then select Monitoring Credentials.

-

On the Monitoring Credentials page, select Cluster or Host according to your requirement, and then click Manage Monitoring Credentials.

-

On the Cluster Monitoring Credentials page, select Privileged Host Monitoring Credentials set for the cluster or host and click Set Credentials.

-

In the dialog box that appears, specify the credentials and click Save.

-

After the host monitoring credentials are set for the cluster, refresh the cluster metrics. Verify that the Storage Area Network metrics are collected for the hosts.

22.3.3.2 Customizing Storage Proxy Agents

A Proxy Agent is required when you register a NetApp, Sun ZFS Storage Appliance, or Solaris File System (ZFS) storage server.

Before you register a NetApp storage server, meet the following prerequisites:

Note:

Storage Proxy Agent is supported only on Linux Intel x64 platform.

Acquiring Third Party Licenses

The Storage Management Framework is shipped by default for the Linux x86-64 platform and depends on the following third party modules:

-

Source CPAN (CPAN licensing applies): IO::Tty (version 1.10), XML::Simple (version 2.20), and Net::SSLeay (version 1.52)

-

Open source (owner licensing applies): OpenSSL (version 1.0.1e)

-

Download the NetApp Manageability SDK version 5.0R1 for all the platforms from the following NetApp support site:

http://support.netapp.com/NOW/cgi-bin/software

-

Unzip the 5.1 SDK and package the Perl NetApp Data ONTAP Client SDK as a tar file. Generally, you will find the SDK in the lib/perl/NetApp folder. When extracted, the tar file should have the following structure:

NetApp.tar
    netapp
        NaElement.pm
        NaServer.pm
        NaErrno.pm

For example, the Software Library entity Storage Management Framework Third Party/Storage/NetApp/default should have a single file entry that contains NetApp.tar with the above tar structure.

Note:

Ensure that there is no extra space in any file path name or Software Library name.

-

Once the tar file is ready, create the following folder hierarchy in the Software Library:

Storage Management Framework Third Party/Storage/NetApp

-

Upload the tar file as a Generic Component named default.

Note:

To upload the tar file, you must use the OMS shared file system for the Software Library.

The tar file should be uploaded to this default Software Library entity as a Main File.
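The packaging step described above can be sketched in Python with the standard tarfile module. This is a minimal sketch, not an Oracle-supplied tool: it assumes the SDK has already been unzipped locally and that its lib/perl/NetApp folder holds NaElement.pm, NaServer.pm, and NaErrno.pm. It places every .pm file from that folder under a top-level netapp folder to match the structure shown above. The helper name package_netapp_sdk and all paths are hypothetical.

```python
import tarfile
from pathlib import Path

# Modules that the NetApp.tar structure above lists explicitly.
REQUIRED_MODULES = ("NaElement.pm", "NaServer.pm", "NaErrno.pm")


def package_netapp_sdk(sdk_perl_dir: Path, out_tar: Path) -> list[str]:
    """Hypothetical helper: archive the SDK's Perl modules under 'netapp/'.

    sdk_perl_dir is assumed to be the unzipped SDK's lib/perl/NetApp folder.
    Returns the sorted member names of the written archive.
    """
    missing = [m for m in REQUIRED_MODULES if not (sdk_perl_dir / m).is_file()]
    if missing:
        raise FileNotFoundError(f"missing SDK modules: {missing}")
    with tarfile.open(str(out_tar), "w") as tar:
        for module in sorted(sdk_perl_dir.glob("*.pm")):
            # arcname sets the path stored inside the archive.
            tar.add(str(module), arcname=f"netapp/{module.name}")
    with tarfile.open(str(out_tar)) as tar:
        return sorted(tar.getnames())
```

The resulting NetApp.tar is what you would then upload as the Main File of the default entity, as described in the steps above.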

Overriding the Default SDK

The default SDK is used for all the NetApp storage servers. However, a particular storage server may work only with a certain SDK. In such a case, you can override the SDK for that storage server by uploading an SDK and using it only for that particular storage server.

To override the existing SDK for a storage server, upload the tar file to a Software Library entity. The tar file should have the same structure as the NetApp.tar file described in the previous section.

The Software Library entity name should be the same as the storage server name.

For example, if the storage server name is mynetapp.example.com, then the Software Library entity must be as follows:

Storage Management Framework Third Party/Storage/NetApp/mynetapp.example.com

Note:

A storage-specific SDK is given a higher preference than the default SDK.
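The preference order can be expressed as a small lookup. This sketch only models the documented rule (a storage-specific entity wins over default); it is not how Enterprise Manager implements the lookup, and the function name and the set of existing entity paths are hypothetical.

```python
NETAPP_SDK_ROOT = "Storage Management Framework Third Party/Storage/NetApp"


def resolve_netapp_sdk(storage_server: str, entities: set[str]) -> str:
    """Return the Software Library entity whose SDK applies to storage_server.

    entities is a hypothetical set of existing Software Library entity paths.
    """
    specific = f"{NETAPP_SDK_ROOT}/{storage_server}"
    default = f"{NETAPP_SDK_ROOT}/default"
    if specific in entities:  # a storage-specific SDK has higher preference
        return specific
    if default in entities:
        return default
    raise LookupError(f"no NetApp SDK entity found for {storage_server}")
```

With both a default entity and a mynetapp.example.com entity present, mynetapp.example.com resolves to its own entity, while any other NetApp storage server falls back to default.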

Overriding Third Party Server Components

By default, all the required third-party components are shipped for the Linux Intel 64-bit platform. If you need to override them, package a tar file as follows:

Note:

The tar file should contain a thirdparty folder whose structure should be as mentioned below:

thirdparty
|-- lib
|   |-- engines
|   |   |-- lib4758cca.so
|   |   |-- libaep.so
|   |   |-- libatalla.so
|   |   |-- libcapi.so
|   |   |-- libchil.so
|   |   |-- libcswift.so
|   |   |-- libgmp.so
|   |   |-- libgost.so
|   |   |-- libnuron.so
|   |   |-- libpadlock.so
|   |   |-- libsureware.so
|   |   `-- libubsec.so
|   |-- libcrypto.a
|   |-- libcrypto.so
|   |-- libcrypto.so.1.0.0
|   |-- libssl.a
|   |-- libssl.so
|   `-- libssl.so.1.0.0
`-- pm
    |-- CPAN
    |   |-- IO
    |   |   |-- Pty.pm
    |   |   |-- Tty
    |   |   |   `-- Constant.pm
    |   |   `-- Tty.pm
    |   |-- Net
    |   |   |-- SSLeay
    |   |   |   `-- Handle.pm
    |   |   `-- SSLeay.pm
    |   |-- XML
    |   |   `-- Simple.pm
    |   `-- auto
    |       |-- IO
    |       |   `-- Tty
    |       |       |-- Tty.bs
    |       |       `-- Tty.so
    |       `-- Net
    |           `-- SSLeay
    |               |-- SSLeay.bs
    |               |-- SSLeay.so

Ensure that the tar file is uploaded to the Software Library entity which is named after the platform name, x86_64. The Software Library entity must be under the following:

Storage Management Framework Third Party/Server

When uploaded, the x86_64 entity is copied to all the storage proxy hosts, irrespective of which storage server they process. To use an entity only on a specific storage proxy agent, name the entity after the host name.

For example, Storage Management Framework Third Party/Server/x86_64 will be copied to any storage proxy host that is on an x86_64 platform. Similarly, Storage Management Framework Third Party/Server/myhost.example.com is copied only to myhost.example.com, if it is used as a storage proxy host.

An entity named after the host is given a higher preference than an entity named after the platform.
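The copy rules above can be modeled as follows: for each storage proxy host, a host-named entity is used if one exists, otherwise the platform-named entity. This is an illustrative sketch of the documented precedence only. The function and host names are hypothetical, and returning None is this sketch's own convention for "no override applies, so the shipped components are used".

```python
from typing import Optional

SERVER_ROOT = "Storage Management Framework Third Party/Server"


def override_for_proxy_host(host: str, platform: str,
                            entities: set[str]) -> Optional[str]:
    """Pick the third-party server entity copied to one storage proxy host."""
    # The host name is checked first because it has the higher preference.
    for name in (host, platform):
        candidate = f"{SERVER_ROOT}/{name}"
        if candidate in entities:
            return candidate
    return None  # no override: the default shipped components are used


def plan_copies(proxy_hosts: dict[str, str],
                entities: set[str]) -> dict[str, Optional[str]]:
    """Map each proxy host (given as host -> platform) to the entity it receives."""
    return {host: override_for_proxy_host(host, platform, entities)
            for host, platform in proxy_hosts.items()}
```

With entities for both x86_64 and myhost.example.com uploaded, myhost.example.com receives its host-named entity, and every other x86_64 proxy host receives the x86_64 entity.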

22.3.4 Registering Storage Servers

To register a particular storage server, follow the procedure outlined in the respective section:

22.3.4.3 Registering an EMC Storage Server

To register the storage server, follow these steps:

Note:

Before you register an EMC storage server, the storage server should be prepared. To prepare the storage server refer to Configuring EMC Storage Servers.

22.3.5 Administering the Storage Server

To administer the storage server, refer to the following sections:

22.3.5.1 Synchronizing Storage Servers

When you register a storage server for the first time, a synchronize job runs automatically. However, to discover subsequent changes and newly created storage entities, you should schedule a synchronize job to run at a scheduled time, preferably during a quiet period when no Snap Clone actions are in progress. To do this, follow these steps:

Note:

The Associating Storage Volumes With Targets step relies on both database target metrics and host metrics. The database target (oracle_database/rac_database) should have up-to-date metrics for the Controlfiles, Datafiles and Redologs. The File Systems metric should be up to date for the hosts on which the database is running.

22.3.5.2 Deregistering Storage Servers

To deregister a registered storage server, follow these steps:

Note:

To deregister a storage server, you need the FULL_STORAGE privilege on the storage along with the FULL_JOB privilege on the Synchronization GUID of the storage server.

Note:

Once a storage server is deregistered, the Snap Clone profiles and Service Templates on the storage will no longer be functional, and the relationship between these Profiles, Service Templates and Snap Cloned targets will be lost.

Note:

It is recommended that you delete the volumes created using Enterprise Manager before deregistering a storage server. As a self service user, you should submit deletion requests for the cloned databases.

To delete the volumes that were created by Enterprise Manager for hosting test master databases, click Remove on the Hierarchy tab of the Storage Registration page.

22.3.6 Managing Storage Servers

22.3.6.1 Managing Storage Allocation

You can manage storage allocation by performing the following tasks:

-

Editing the Storage Ceiling

-

Creating Storage Volumes

-

Resizing Volumes of a Database

-

Creating Thin Volumes (for EMC Storage Servers)

Editing the Storage Ceiling

Storage Ceiling is the maximum amount of storage from a project, aggregate, or thin pool that Enterprise Manager is allowed to use. This ensures that Enterprise Manager creates clones in that project only until this limit is reached. When a storage project is discovered for the first time, the entire capacity of the project is set as the ceiling. In the case of Sun ZFS, the quota set on the project is used.

Note:

You must explicitly set the quota property for the Sun ZFS storage project on the storage end, and the quota must be nonzero. Otherwise, Enterprise Manager will not be able to clone on it.
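The ceiling rules in this section can be summarized in a short check. This is a hypothetical model, not Enterprise Manager code: it assumes sizes in GB, treats the discovered project capacity (or, for Sun ZFS, the project quota) as the initial ceiling, and refuses any allocation when that ceiling is zero, mirroring the note above. The class and function names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StorageProject:
    """Hypothetical view of a project, aggregate, or thin pool (sizes in GB)."""
    capacity_gb: float
    em_used_gb: float = 0.0
    zfs_quota_gb: Optional[float] = None  # set only for Sun ZFS projects

    def initial_ceiling_gb(self) -> float:
        # On first discovery, Sun ZFS uses the project quota; others use capacity.
        if self.zfs_quota_gb is not None:
            return self.zfs_quota_gb
        return self.capacity_gb


def fits_under_ceiling(project: StorageProject, ceiling_gb: float,
                       request_gb: float) -> bool:
    """True if Enterprise Manager may create or grow a volume by request_gb."""
    if ceiling_gb <= 0:
        return False  # e.g. a Sun ZFS project without a nonzero quota
    return project.em_used_gb + request_gb <= ceiling_gb
```

Raising the ceiling through Edit Storage Ceiling corresponds to passing a larger ceiling_gb, which lets further clones or resizes pass the check.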

To edit the storage ceiling, do the following:

-

On the Storage Registration page, from the Storage section, select the storage server for which you want to edit the storage ceiling.

-

Select the Contents tab, select the aggregate, and then click Edit Storage Ceiling.

Note:

The Edit Storage Ceiling option enables you to modify the maximum amount of storage that Enterprise Manager can use. You can create clones or resize volumes only until this limit is reached.

-

In the Edit Storage Ceiling dialog box, enter the storage ceiling, and then click OK.

Creating Storage Volumes

To create storage volumes, do the following:

-

On the Storage Registration page, from the Storage section, select the storage server for which you want to create storage volumes.

-

Select the Contents tab, select the aggregate, and then select Create Storage Volumes.

-

On the Create Storage Volumes page, in the Storage Volume Details section, click Add.

-

Select a storage and specify the size in GB. The size cannot exceed the storage size, and it should be large enough to accommodate the test master database without consuming the entire storage.

Next, specify a mount point starting with /.

For example, if the storage is "lunpool", select "lunpool". If "lunpool" is 100GB and the test master database is 10GB, specify 10GB as the size. The mount point should be a meaningful path starting with "/", for example /oracle/oradata.

-

In the Host Details section, specify the following:

-

Host Credentials: Specify the target host credentials of the Oracle software.

-

Storage Purpose: For using Snap Clone, the most important options are as follows:

-

Oracle Datafiles for RAC

-

Oracle Datafiles for Single Instance

Note:

You can also store the OCR and voting disks and the Oracle binaries in the storage volume.

-

-

Platform: Select the supported target platform. The volume will be mounted on the supported target platform.

-

Mount Options: Mount options field is automatically filled based on the values specified for the storage purpose and the platform. Do not edit the mount options.

-

Select NFS v3 or NFS v4.

-

-

Select one or more hosts to perform the mount operations by clicking Add.

If you select Oracle Datafiles for RAC, you would normally specify more than one host. The volume is then mounted on the specified hosts automatically after the completion of the procedure activity.

-

Click Submit.

When you click Submit, a procedure activity is executed. On completion of the procedure activity, the volumes get mounted on the target system. You can now proceed to create a test master database on the mounted volumes on the target system.
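The sizing guidance in this procedure can be captured as input checks to run before clicking Submit. This is a sketch under stated assumptions: sizes are in GB, the function name is hypothetical, and the checks only restate the rules given in the steps above (the size must fit in the selected storage without consuming all of it, must hold the test master database, and the mount point must start with /).

```python
def check_volume_request(storage_gb: float, size_gb: float,
                         test_master_gb: float, mount_point: str) -> list[str]:
    """Return problems with a hypothetical volume request (empty list if OK)."""
    problems = []
    if size_gb > storage_gb:
        problems.append("size exceeds the selected storage")
    elif size_gb == storage_gb:
        problems.append("size would consume the entire storage")
    if size_gb < test_master_gb:
        problems.append("size cannot hold the test master database")
    if not mount_point.startswith("/"):
        problems.append("mount point must start with /")
    return problems
```

For the "lunpool" example (100GB storage, 10GB test master, 10GB size, mount point /oracle/oradata), the function returns an empty list.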

Resizing Volumes of a Database

When a database runs out of space in any of its volumes, you can resize the volume according to your requirement. To resize volume(s) of a clone, follow these steps:

Note:

This is not available for EMC storage servers.

Note:

Resizing of volumes of a Test Master database cannot be done using Enterprise Manager, unless the volumes for the Test Master were created using the Create Volumes UI.

Note:

You need the FULL_STORAGE privilege to resize volumes of a database or a clone. Also, ensure that the underlying storage supports quota management of volumes.

-

On the Storage Registration page, from the Storage section select the required storage server.

-

In the Details section, select the Hierarchy tab, and then select the target.

The Storage Volume Details table displays the details of the target's volumes. This enables you to identify which volume of the target is running out of space.

-

In the Volume Details table, select Resize.

-

On the Resize Storage Volumes page, specify the New Writable Space for the volume or volumes that you want to resize. If you do not want to resize a volume, you can leave the New Writable Space field blank.

-

You can schedule the resize to take place immediately or at a later time.

-

Click Submit.

Note:

You can monitor the resize procedure from the Procedure Activity tab.

Creating Thin Volumes

This section is only for EMC Symmetrix VMAX Family and EMC VNX Storage.

An EMC Symmetrix VMAX Family or an EMC VNX storage enables you to create thin volumes and ASM disks from the created thin volumes. To create thin volumes on an EMC Symmetrix VMAX Family or EMC VNX storage, follow these steps:

Note:

Enterprise Manager enables you to create a thin volume from a thin pool after clusterware and ASM components are installed.

After you install the clusterware and ASM components, the asm_diskstring parameter may be set to Null. This could cause failure during creation of the thin pool.

To prevent this from happening, set the asm_diskstring parameter to a valid disk path and restart the ASM instance.

For example, set the asm_diskstring parameter as:

/dev/oracleasm/disks/*
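Before creating thin volumes, it can help to confirm that the asm_diskstring value actually matches disk devices. The sketch below is a hypothetical pre-check, not an Oracle utility: it assumes you have already read the parameter value (for example /dev/oracleasm/disks/*) and expands it with Python's glob module. It treats the value as a comma-separated list of patterns, which approximates but does not exactly reproduce ASM's own discovery rules.

```python
import glob
from typing import Optional


def asm_diskstring_problem(diskstring: Optional[str]) -> Optional[str]:
    """Return a message if asm_diskstring looks unusable, else None.

    diskstring may be None or empty (the failure case described above) or a
    comma-separated list of path patterns such as '/dev/oracleasm/disks/*'.
    """
    if not diskstring or not diskstring.strip():
        return "asm_diskstring is not set; set it to a valid disk path"
    patterns = [p.strip() for p in diskstring.split(",") if p.strip()]
    matched = [path for pattern in patterns for path in glob.glob(pattern)]
    if not matched:
        return f"no devices match {diskstring!r}"
    return None  # at least one device path matches
```

A None result only means that some paths match; whether they are valid ASM disks is still decided by ASM itself.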

Example 22-1 Understanding Space Utilization on EMC Storage Servers

The writable space implementation on EMC storage servers differs from that of NetApp, Sun ZFS SA, and Sun ZFS storage servers. In NetApp, Sun ZFS SA, and Sun ZFS storage servers, the writable space defined in a service template is allocated from the storage pool to the clone database even if no data is written to the volume. In EMC storage servers (VMAX and VNX), space is only reserved on the storage pool. The space is consumed only when data is written to the volume or LUN.

For example, if you define 10GB writable space in a service template, in NetApp, Sun ZFS SA, and Sun ZFS, space of 10GB will be allocated to the clone database from storage pool even if data is not written to the volume. In an EMC storage, space is consumed only when data is written to the volume or LUN.
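The difference between allocated and consumed writable space can be made concrete with a small function. This is a simplified model of the behavior described above, not vendor code: the storage type labels and the function name are hypothetical, and capping consumption at the writable space is this sketch's own assumption.

```python
# Storage types that allocate a clone's writable space up front (per this section).
ALLOCATE_UP_FRONT = {"netapp", "sun_zfs_sa", "sun_zfs"}
# EMC storage types where space is only reserved and consumed on write.
CONSUME_ON_WRITE = {"emc_vmax", "emc_vnx"}


def pool_space_used_gb(storage_type: str, writable_gb: float,
                       written_gb: float) -> float:
    """Space taken from the storage pool by one clone's writable area."""
    if storage_type in ALLOCATE_UP_FRONT:
        return writable_gb  # allocated even if nothing is written
    if storage_type in CONSUME_ON_WRITE:
        # Assumption: writes cannot exceed the clone's writable space.
        return min(written_gb, writable_gb)
    raise ValueError(f"unknown storage type: {storage_type}")
```

With 10GB of writable space in the service template and no data written yet, a NetApp clone takes 10GB from the pool, while an EMC VMAX clone takes none.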

In Enterprise Manager, to create thin volumes (ASM disk groups/LUNs) up to the maximum size defined for the storage pool, select the Contents tab on the Storage Registration page, and then select Create Thin Volume. The test master database can then be created on the ASM disk groups or LUNs.

The following graphic shows the Test Master database and the created clone database:

The following graphic shows the storage volume of the Test Master database:

The following graphic shows storage volume of the clone database:

Note:

ASM disk groups, as discussed, can be created using the Create Thin Volumes option. However, they can also be created using other methods. The following example illustrates the space usage on EMC VMAX and VNX storage servers:

Let us assume the Storage Pool is of size 1 TB and Storage ceiling is set to 1 TB.

Scenario 1:

Suppose an Enterprise Manager Storage Administrator creates two ASM disk groups, for example DATA and REDO, of 125GB and 75GB respectively, through the Create Thin Volume method, and the Test Master database is created on those disk groups. If the used space on the DATA and REDO disk groups is 100GB and 50GB respectively (leaving 25GB of free space on each), then each clone database created by a self service user will be allocated 25GB of writable space on each of the DATA and REDO disk groups.

New data written to the cloned database is the actual used space and can grow up to 25GB on each disk group (DATA and REDO in this scenario).

Assuming a clone database is created from the 200GB Test Master database, the Enterprise Manager Storage Administrator will still be able to create 600GB of LUNs through the Create Thin Volume method: of the 1 TB storage pool, 200GB is taken by the DATA and REDO disk groups, and the size of the clone database is also deducted from the available space. The self service user will be able to create multiple clones. The number of clone databases that can be created cannot be estimated, as it depends on the amount of new data written to the initial clone database in that storage pool.

Scenario 2:

Suppose instead that the Enterprise Manager Storage Administrator creates two ASM disk groups, DATA and REDO, of 850GB and 150GB respectively, through the Create Thin Volume method, and the Test Master database is created on those disk groups. If the used space on the DATA and REDO disk groups is 750GB and 50GB respectively (leaving 100GB of free space on each), then each clone database created by a self service user will be allocated 100GB of writable space on each of the DATA and REDO disk groups.

New data written to the cloned database is the actual used space and can grow up to 100GB on each disk group (DATA and REDO in this scenario).

As in Scenario 1, the self service user will be able to create multiple clones, but the number of clone databases cannot be estimated. However, the Enterprise Manager Storage Administrator will not be able to create additional disk groups in Scenario 2, because the DATA and REDO disk groups (850GB + 150GB) already take up the entire 1 TB storage pool. This is the major difference compared to Scenario 1.

In both scenarios, only the actual used space of the clones will be subtracted from the Storage Ceiling. The general formula for writable disk space is the difference between the LUN size and the actual space occupied by data.
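The arithmetic behind both scenarios can be checked with a short script. It applies the general formula (writable space = LUN or disk group size minus the space occupied by data). To reproduce the 600GB figure in Scenario 1, it assumes 1 TB = 1000GB and deducts the 200GB clone from the remaining pool, as that scenario describes. The function names are hypothetical.

```python
def writable_gb(size_gb: float, used_gb: float) -> float:
    """General formula: writable space = LUN size - space occupied by data."""
    return size_gb - used_gb


def pool_left_for_new_luns_gb(pool_gb: float, disk_group_sizes: list[float],
                              clone_sizes: tuple[float, ...] = ()) -> float:
    """Pool space still available for new thin volumes (never negative)."""
    return max(0.0, float(pool_gb - sum(disk_group_sizes) - sum(clone_sizes)))


POOL_GB = 1000  # 1 TB storage pool, ceiling also 1 TB (decimal units assumed)

# Scenario 1: DATA 125GB (100GB used), REDO 75GB (50GB used), one 200GB clone.
s1 = {"DATA": writable_gb(125, 100), "REDO": writable_gb(75, 50)}
s1_left = pool_left_for_new_luns_gb(POOL_GB, [125, 75], clone_sizes=(200,))

# Scenario 2: DATA 850GB (750GB used), REDO 150GB (50GB used).
s2 = {"DATA": writable_gb(850, 750), "REDO": writable_gb(150, 50)}
s2_left = pool_left_for_new_luns_gb(POOL_GB, [850, 150])

print(s1, s1_left)  # {'DATA': 25, 'REDO': 25} 600.0
print(s2, s2_left)  # {'DATA': 100, 'REDO': 100} 0.0
```

The 0.0 result for Scenario 2 reflects why no further disk groups can be created there.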

22.3.6.3 Viewing Storage Registration Overview and Hierarchy

To view the storage registration overview, on the Storage Registration page, in the Details section, select the Overview tab. The Overview section provides a summary of storage usage information. It also displays a Snap Clone Storage Savings graph that shows the total space savings by creating the databases as a Snap Clone versus without Snap Clone.

Note:

If you have NetApp volumes with no space guarantee, you may see negative allocated space in the Overview tab. Set the space guarantee to 'volume' to prevent this.

To view the storage registration hierarchy, on the Storage Registration page, in the Details section, select the Hierarchy tab. This displays the storage relationships between the following:

-

Test Master Database

-

Database Profile

-

Snap Clone Database

-

Snap Clone Database Snapshots

You can select a row to display the corresponding Volume or Snapshot Details.

If a database profile or Snap Clone database creation was not successful, and it is not possible to delete the entity from its respective user interface, click Remove to access the Manage Storage page. From this page, you can submit a procedure to dismount volumes and delete the snapshots or volumes created from an incomplete database profile or snap clone database.

Note:

The Manage Storage page only handles cleanup of storage entities and does not remove any database profile or target information from the repository.

The Remove button is enabled only if you have the FULL_STORAGE privilege.

You can also select the Procedure Activity tab on the right panel to see any storage-related procedures run against that storage entity.

To view the NFS Exports, select the Volume Details tab. Select View, Columns, and then select NFS Exports.

The Volume Details tab, under the Hierarchy tab, also has a Synchronize button. This enables you to submit a synchronize target deployment procedure. The deployment procedure collects metrics for a given target and its host, determines which volumes are used by the target, collects the latest information, and updates the storage registration data model. It can be used when a target has recently changed, for example when data files have been added in different locations.

22.3.6.4 Editing Storage Servers

To edit a storage server, on the Storage Registration page, select the storage server and then, click Edit. On the Storage Edit page, you can do the following:

-

Add or remove aliases.

-

Add, remove, or select an Agent that can be used to perform operations on the storage server.

-

Specify a frequency to synchronize storage details with the hardware.

Note:

If the credentials for editing a storage server are not owned by you, an Override Credentials checkbox will be present in the Storage and Agent to Manage Storage sections. You can choose to use the same credentials or you can override the credentials by selecting the checkbox.

22.4 Creating Test Master Databases

This section contains the following topics:

22.4.1 Creating a Discretely Synchronized Test Master

A test master database is a sanitized version of the production database. Production data can be optionally masked before the test master is created. A test master can be created from a snapshot or an RMAN Backup profile taken at a prior point in time and refreshed at specific intervals. This option is useful if the source data has to be masked to hide sensitive data.

To create a test master, follow these steps:

Note:

You can also use the emcli create_clone command to create the test master. See Creating a Database Clone Using EM CLI Verbs for more details.

22.4.2 Using a Physical Standby Database as a Test Master

A test master database is a sanitized version of the production database. A test master can be created from a live standby database by using the Oracle Data Guard feature. Profiles or snapshots can be created from the test master (see Creating a Database Provisioning Profile Using Snapshots) and these profiles can be used to create snap clones (see Requesting a Database). Since the test master is a physical standby database with live data, you must schedule and create profiles and snapshots on a periodic basis to ensure that the latest data is captured in the profile (see Creating and Refreshing Snapshots of the Test Master). Self service users can create multiple snap clones from each profile and refresh their snap clones (see Refreshing a Database) when a new profile or snapshot becomes available.

To create a test master, follow these steps:

- From the Enterprise menu, select Cloud, then select Cloud Home. From the Oracle Cloud menu, select Setup, then select the Database Service family on the left panel. Click the Test Master Databases tab.

- To create a test master from a live standby database, click Add and select a standby database that is to be designated as the test master. The newly added database appears in the Test Master Database page and can be used to create the snap clone database instances.

22.5 Creating Snap Clone Databases

This section contains the following topics:

22.5.1 Creating Snap Clones from the Cloning Dashboard

You can create a snap clone from the Administration Dashboard and promote the snap clone as the Test Master Database. This section outlines the following procedures which you can use to create and manage snap clone databases:

22.5.1.2 Creating an Exadata Test Master Database

A test master database is a sanitized version of the production database. Production data can be optionally masked before the test master is created. A test master can be created from a snapshot or an RMAN Backup profile taken at a prior point in time and refreshed at specific intervals. This option is useful if the source data has to be masked to hide sensitive data.

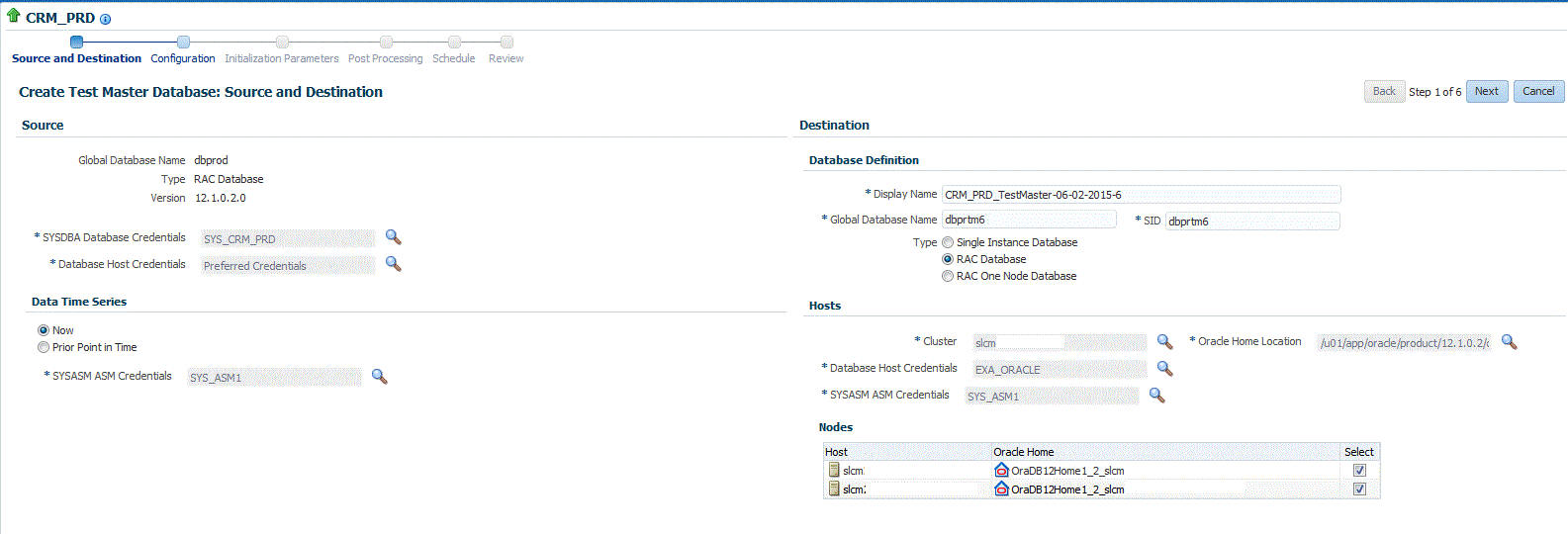

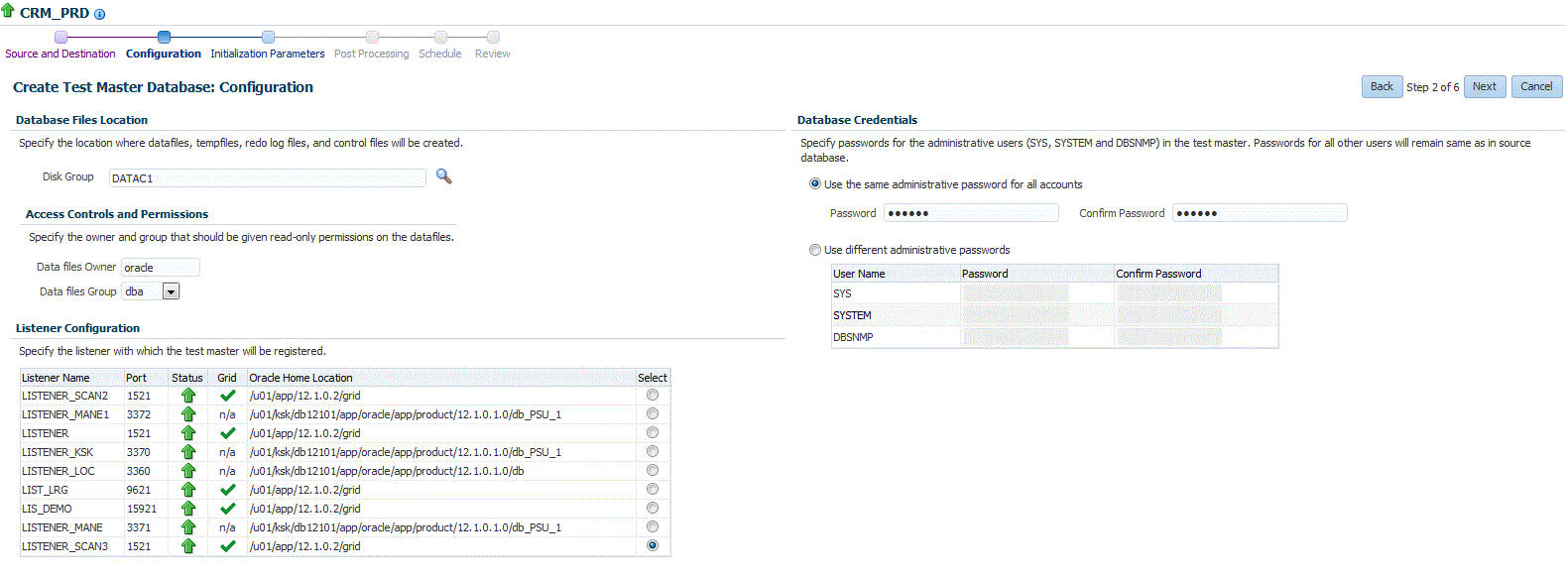

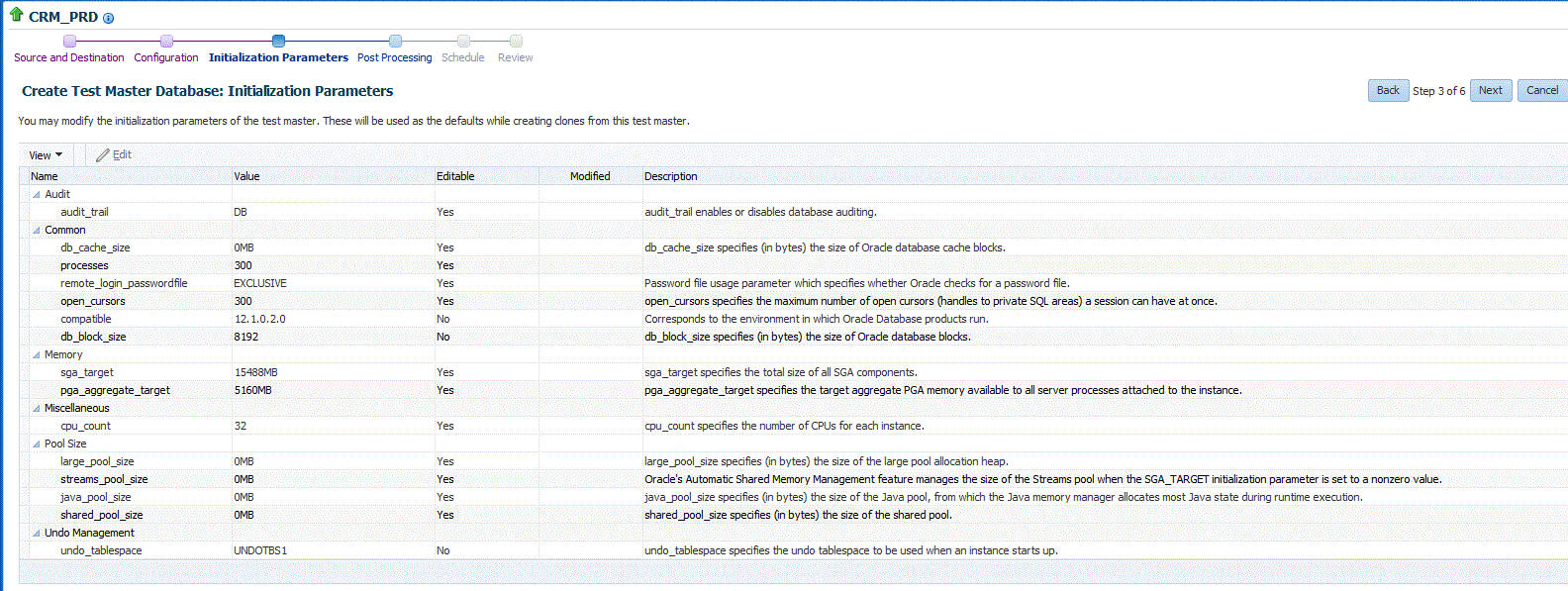

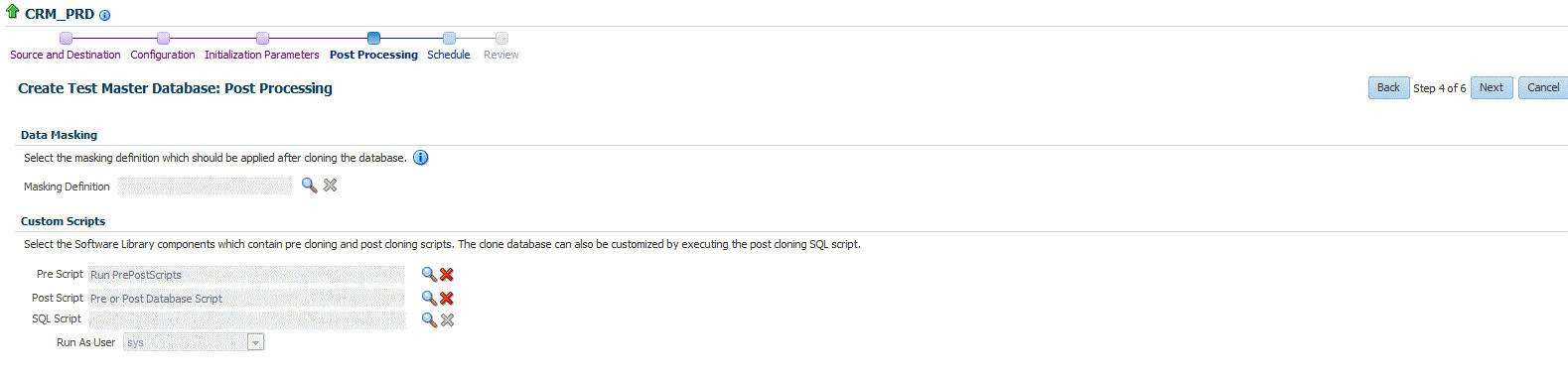

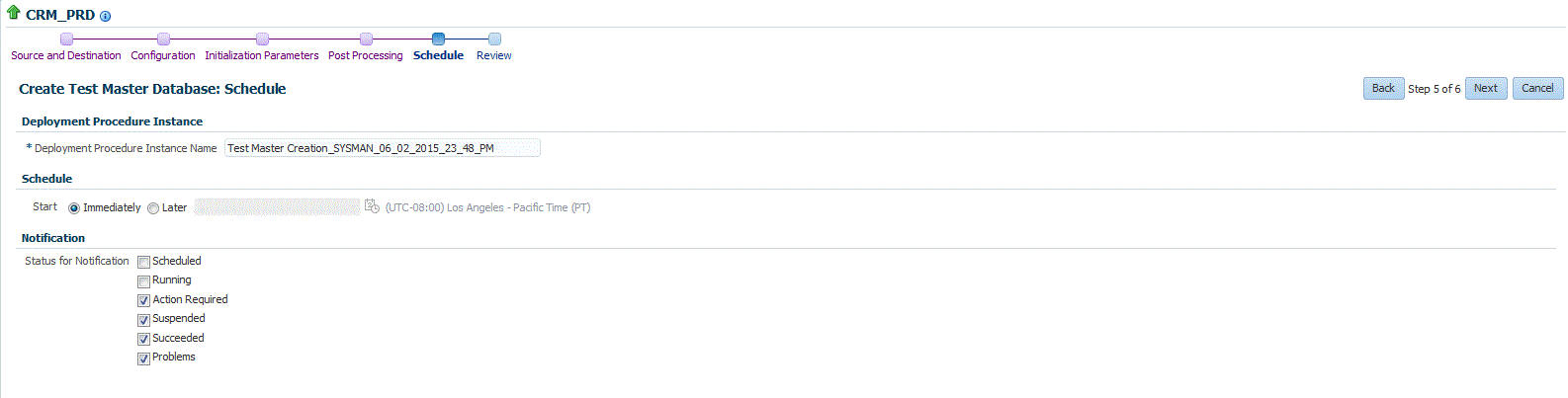

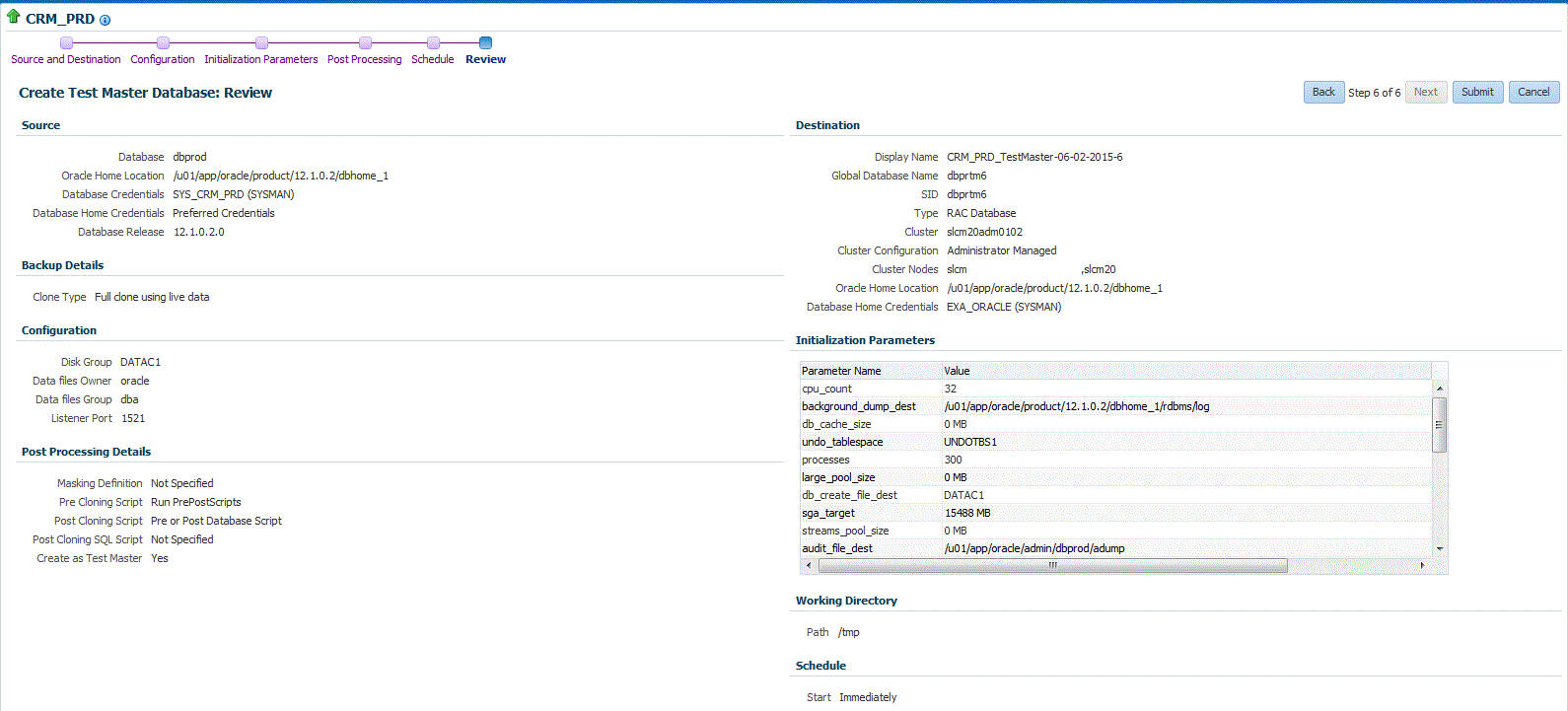

To create a test master, follow these steps:

22.5.1.3 Creating a Test Master Database

To create a Test Master database, you can use either of the following solutions:

22.5.1.3.1 Creating a Test Master Database Using the Clone Wizard

A test master database is a sanitized version of the production database. Production data can be optionally masked before the test master is created. A test master can be created from a snapshot or an RMAN Backup profile taken at a prior point in time and refreshed at specific intervals. This option is useful if the source data has to be masked to hide sensitive data.

To create a test master, follow these steps:

22.5.1.3.2 Creating a Test Master Database Using EM CLI

To create a Test Master database, execute the verb emcli create_clone -inputFile=/tmp/create_test_master.props, where create_test_master.props is the properties file with the parameters and values required to create the Test Master.

Sample properties file (create_test_master.props):

CLONE_TYPE=DUPLICATE
COMMON_DB_DBSNMP_PASSWORD=password
COMMON_DB_SID=clonedb
COMMON_DB_SYSTEM_PASSWORD=sunrise
COMMON_DB_SYS_PASSWORD=sunrise
DATABASE_PASSWORDS=Sumrise1
COMMON_GLOBAL_DB_NAME=clonedb.xyz.com
DB_ADMIN_PASSWORD_SAME=true
DEST_LISTENER_SELECTION=DEST_DB_HOME
HOST_NORMAL_NAMED_CRED=HOST:SYSCO
IS_TESTMASTER_DATABASE=Y
USAGE_MODE = testMaster
CLOUD_TARGET = true
LISTENER_PORT=1526
ORACLE_BASE_LOC=/scratch/app
ORACLE_HOME_LOC=/scratch/app/product/11.2.0./dbhome_1
EM_USER=sys
EM_PWD=Sunrise1
SRC_DB_CRED=DB:SYSCO
SRC_DB_TARGET_NAME=ora.xyz.com
SRC_HOST_NORMAL_NAMED_CRED=HOST:SYSCO
TARGET_HOST_LIST=bl1.xyz.com

To verify the status of the Test Master database creation, execute the EM CLI verb emcli get_instance_status -instance={instance GUID}.
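Because the sample file mixes KEY=value and KEY = value forms, it can be useful to parse and sanity-check a properties file before passing it to the verb. The sketch below is hypothetical: it uses a simplified parser rather than a full Java properties implementation, and the helper names and the list of keys it checks are assumptions drawn from the sample above, not a documented schema. It only builds the command line shown in this section and does not run EM CLI.

```python
from pathlib import Path

# Keys taken from the sample above; treating them as mandatory is an assumption.
EXPECTED_KEYS = ("CLONE_TYPE", "SRC_DB_TARGET_NAME", "TARGET_HOST_LIST",
                 "IS_TESTMASTER_DATABASE")


def parse_properties(text: str) -> dict[str, str]:
    """Parse simple KEY=value lines, allowing spaces around '='."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "!")):
            continue  # skip blank lines and comments
        key, sep, value = line.partition("=")  # split on the first '=' only
        if not sep:
            raise ValueError(f"not a KEY=value line: {raw!r}")
        props[key.strip()] = value.strip()
    return props


def create_clone_command(props_file: Path) -> list[str]:
    """Check the file, then return the emcli argument list (not executed)."""
    props = parse_properties(props_file.read_text())
    missing = [k for k in EXPECTED_KEYS if k not in props]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    if props["IS_TESTMASTER_DATABASE"] != "Y":
        raise ValueError("IS_TESTMASTER_DATABASE should be Y for a test master")
    return ["emcli", "create_clone", f"-inputFile={props_file}"]
```

The returned list could then be passed to a process runner of your choice; keeping the check separate from execution makes it easy to validate files in advance.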

22.5.1.4 Enabling a Test Master Database

To convert a database to a test master database, follow these steps:

22.5.1.6 Creating a Test Master Pluggable Database

To create a Test Master PDB, you can use either of the following solutions:

22.5.1.6.1 Creating a Test Master Pluggable Database Using the Clone Wizard

If you have the 12.1.0.8 Enterprise Manager for Oracle Database plug-in deployed in your system, you can create a test master PDB from a source PDB, using the new Clone PDB Wizard.

To create a test master PDB from a source PDB, follow these steps:

22.5.1.6.2 Creating a Test Master Pluggable Database Using EM CLI

To create a Test Master pluggable database, execute the command emcli pdb_clone_management -input_file=data:/xyz/sdf/pdb_test_master.props, where the sample contents of the pdb_test_master.props file are given below.

Sample properties file to create a Test master PDB:

SRC_PDB_TARGET=cdb_prod_PDB
SRC_HOST_CREDS=NC_HOST_SCY:SYCO
SRC_CDB_CREDS=NC_HOST_SYC:SYCO
SRC_WORK_DIR=/tmp/source
DEST_HOST_CREDS=NC_SLCO_SSH:SYS
DEST_LOCATION=/scratch/sray/app/sray/cdb_tm/HR_TM_PDB6
DEST_CDB_TARGET=cdb_tm
DEST_CDB_TYPE=oracle_database
DEST_CDB_CREDS=NC_HOST_SYC:SYCO
DEST_PDB_NAME=HR_TM_PDB6
IS_CREATE_AS_TESTMASTER=true
MASKING_DEFINITION_NAME=CRM_Masking_Defn

Note:

You will need to add two more parameters (ACL_DF_OWNER=oracle and ACL_DF_GROUP=oinstall) if you need to create the Test Master on Exadata ASM.
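If you generate the PDB properties file from a script, the Exadata ASM note can be applied conditionally. This is a hypothetical helper, not part of EM CLI: it writes the supplied keys in KEY=value form, defaults IS_CREATE_AS_TESTMASTER to true as in the sample above, and appends ACL_DF_OWNER=oracle and ACL_DF_GROUP=oinstall only when the test master is to be created on Exadata ASM. The values passed in are placeholders.

```python
def pdb_test_master_props(settings: dict[str, str], on_exadata_asm: bool) -> str:
    """Render pdb_test_master.props content; key order follows `settings`."""
    props = dict(settings)  # copy so the caller's dict is not modified
    props.setdefault("IS_CREATE_AS_TESTMASTER", "true")
    if on_exadata_asm:
        # Extra parameters required for a test master on Exadata ASM.
        props["ACL_DF_OWNER"] = "oracle"
        props["ACL_DF_GROUP"] = "oinstall"
    return "".join(f"{key}={value}\n" for key, value in props.items())
```

The rendered text would be saved to a file and passed to emcli pdb_clone_management through the -input_file=data:<path> form shown above.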

22.5.1.8 Creating a CloneDB Database

You can create CloneDB databases only when you have RMAN Image backups.

To create a CloneDB database, follow these steps:

22.5.1.9 Managing Clone Databases

The Clone and Refresh page enables you to manage clone databases by adding clone databases, removing clone databases, and promoting clone databases as Test Masters.

To access the Clone and Refresh page, navigate to an Oracle database target home page. On the home page, click Oracle Database, select Provisioning, and then select Clone and Refresh.

Adding Clone Databases

Use the Add button to add clones of the current database that have already been created. To add a database clone instance, click Add. In the Select Targets dialog box that opens, select a database target, and click Select. The database instance gets added to the Clones section in the Database Cloning page.

Removing Clone Databases

Only the databases that are added using the Add button can be removed using the Remove button.

To remove a database clone instance, select the database clone instance that you want to remove from the Clones section, and click Remove.

Promoting Clone Databases as Test Master

To promote a clone database instance as a Test Master, select the clone instance that you want to promote from the Clones section, and click Promote as Test Master.

You can also remove the clone database instance from Test Masters, by selecting the clone database instance from the Clones section, and clicking Remove from Test Masters.

Refresh Clone Databases

To refresh a clone database, select the clone database instance from the Clones section, and then click Refresh. See Refreshing Clone Databases.

Creating Data Profiles

The Data Profiles tab on the Clone and Refresh page displays the data profiles that you have created from the clone database. On the Data Profiles page, you can view the contents of existing data profiles. You can also Edit and Refresh these data profiles.

You can also create a new data profile by clicking Create. This takes you to the Create Provisioning Profile wizard. Refer to Enterprise Manager Cloud Control Administrator's Guide for information on how to create a provisioning profile using this wizard.

22.5.1.10 Refreshing Clone Databases

- From the Targets menu, select Databases.

- On the databases home page, select the database clone instance that you want to refresh from the list of databases.

- On the database target home page, click Oracle Database, select Provisioning, and then select Clone and Refresh.

- On the Clone and Refresh page, select the Refresh tab.

The Refresh page displays the following sections:

-

Drift from Source Database

This section displays the name of the source database from which this database has been cloned. It shows the number of days since the clone database was last refreshed. Click Refresh to refresh the clone database.

-

Database Volume Details

This section displays the storage details for the selected databases. Click Show files to view the layout of the database files in the volumes. A display box appears that shows the storage layout and file layout of the selected database.

-

History

This section displays the past refreshes of the database. It shows the date and time of the refresh, where it has been refreshed from, the owner of the database, and the status of the refresh action.

-

Storage Utilization

This section displays the storage volume of the database, the storage contents, the mount point, the amount of writable storage used, and the synchronization date.

22.5.2 Creating Snap Clones from a Discretely Synchronized Test Master

You can create snap clones from a discretely synchronized test master if the test master is present on a NAS storage device. Table 22-2 lists the steps involved in creating a snap clone using a snapshot profile.

Table 22-2 Creating Snap Clone - Discrete Flow

| Step | Task | Role |
|---|---|---|
| 1 | Follow the steps in the Getting Started section to enable DBaaS. | See Getting Started |
| 2 | Register storage servers. | |
| 3 | Create one or more resource providers. | |
| 4 | Configure the request settings. | |
| 5 | Define quotas for each self service user. | See Defining Quotas |
| 6 | Create a test master database from an RMAN Backup. | |
| 7 | Enable the test master for snap clone. | |
| 8 | Create a snap clone profile from the test master. | See Creating a Database Provisioning Profile Using Snapshots |
| 9 | Create a service template based on the profile you have created. | |
| 10 | Configure the Chargeback Service (optional). | |
| 11 | Select the service template you have created and request a database. | |
| 12 | Refresh the test master and the database instance: | See: |

22.5.2.7 Configuring Chargeback

Optionally, you can configure the chargeback service. See Chargeback Administration.

22.5.2.8 Requesting a Database

The self service user can now select the service template based on the database template profile and create a database. See Requesting a Database.

22.5.2.9 Example: Creating Snap Clones from Discretely Synchronized Test Master

The following example shows how you can create snap clones from a test master database that is refreshed at discrete intervals.

- First, you must make sure that all the prerequisites are met. See Getting Started.

- Next, you must register the storage server. See Registering Storage Servers.

- You must then create one or more PaaS Infrastructure Zones and one or more database pools. See Creating Resource Providers.

- Then, you must define the quota that you wish to allocate to the self service users. See Defining Quotas.

- The next step is to identify the production database (prod1) and create an RMAN backup prod1_backup.

- Create a test master (testmaster1) based on prod1_backup. See Creating a Discretely Synchronized Test Master.

- Next, you must enable testmaster1 for snap clone. This allows creation of snap clones using snapshot technology. See Enabling the Test Master for Snap Clone.

- Next, you must create a profile (snap_profile) that is based on testmaster1. See Creating a Database Provisioning Profile Using Snapshots.

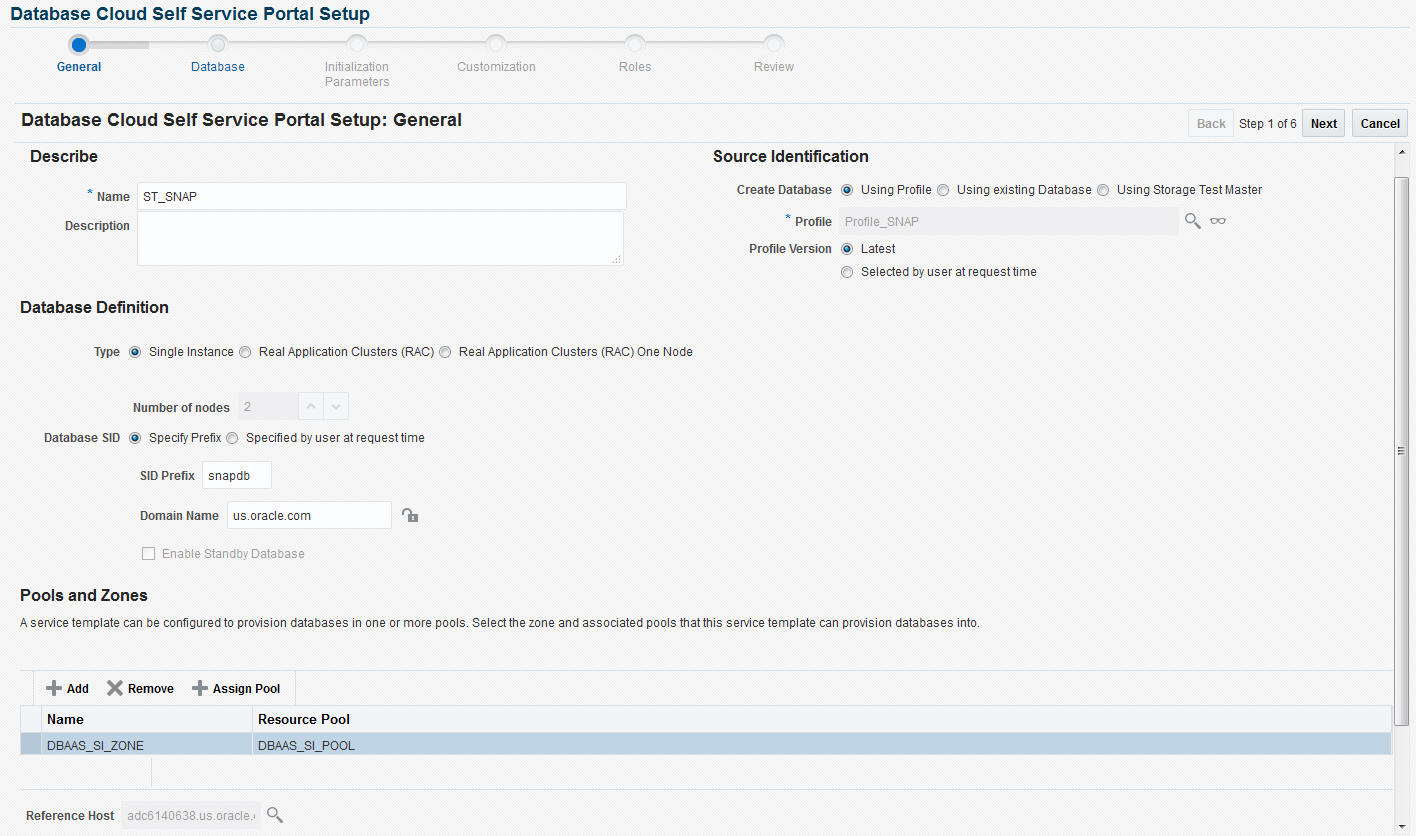

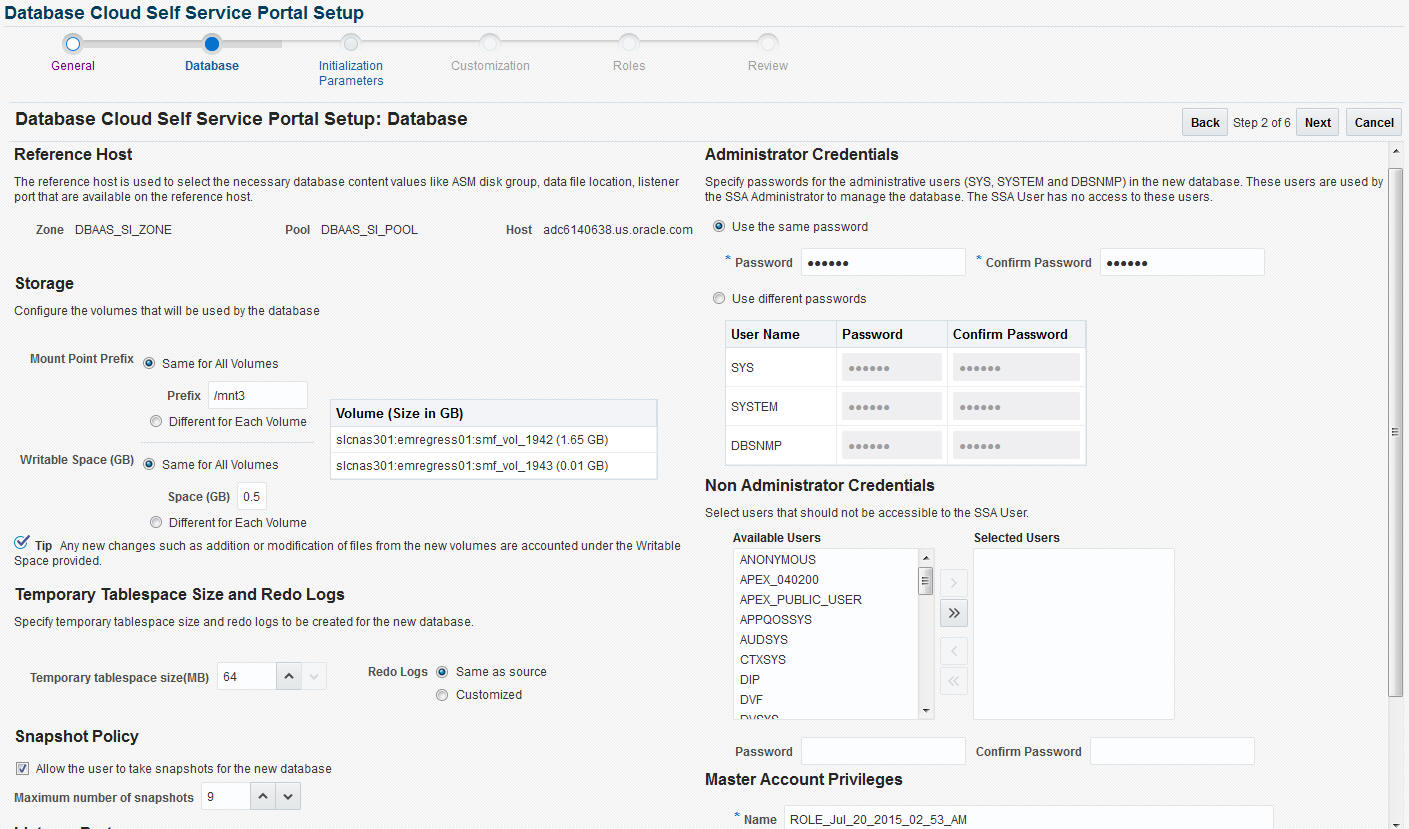

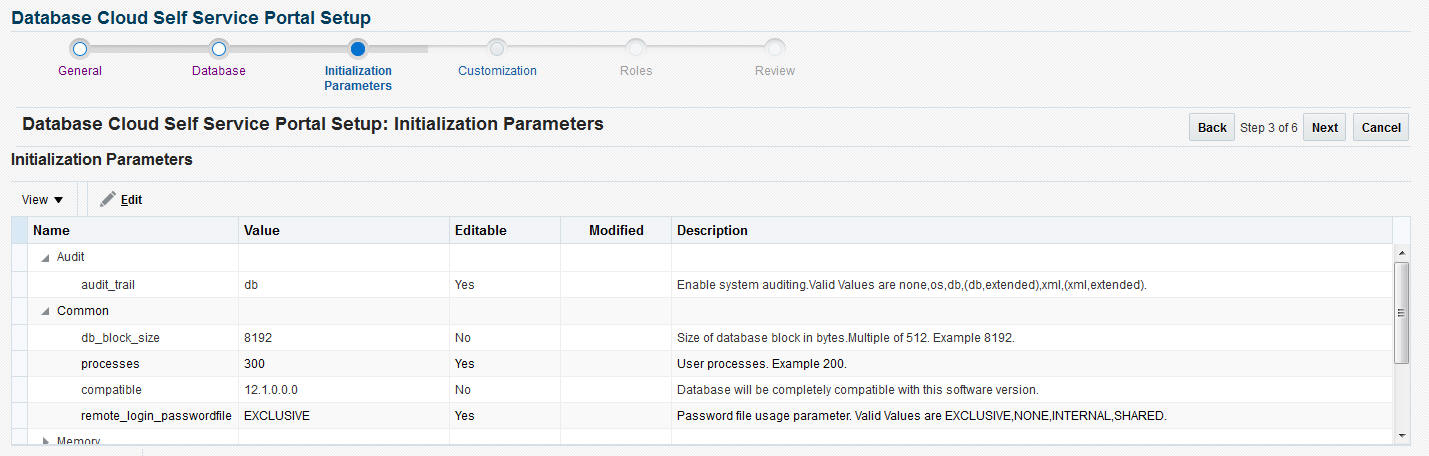

- To make this profile available to the self service user, you must create a service catalog entry or a service template. Create a template called Snap Clone Template1. See Creating a Service Template Using Snap Clone Profile. In the Service Template, the Profile Version field is set to Latest. This will ensure that the self service user will always use the latest version of the profile to create database instances.

- The self service user can then use the Snap Clone Template1 to create the snap clone. See Requesting a Database.

- To get the latest production data, the self service administrator refreshes testmaster1. See Refreshing the Test Master Database.

- Since the test master now contains updated data, a new revision of the profile must be created. See Refreshing the Snap Shot Profile.

- Now that a new revision of the profile (snap_profile) is available, the self service user can refresh their database instance to get the latest production data. See Refreshing a Database. The storage space that was used by the older version of the test master will be reclaimed by the refreshed test master.
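The effect of setting Profile Version to Latest in the service template can be illustrated with a small model. Each refresh of the test master is followed by a new profile revision; new requests resolve to that revision, while existing snap clones stay on their older revision until the self service user refreshes them. This is a hypothetical sketch of the workflow in the example above, not an Enterprise Manager API; the class and revision names are invented.

```python
from dataclasses import dataclass, field


@dataclass
class SnapProfile:
    """Hypothetical profile (e.g. snap_profile) with ordered revisions."""
    name: str
    revisions: list[str] = field(default_factory=list)

    def add_revision(self, revision: str) -> None:
        # A new revision is created after each refresh of the test master.
        self.revisions.append(revision)

    def resolve(self, version: str = "Latest") -> str:
        if not self.revisions:
            raise LookupError(f"{self.name} has no revisions")
        if version == "Latest":
            return self.revisions[-1]  # the most recently created revision
        if version not in self.revisions:
            raise LookupError(f"{self.name} has no revision {version}")
        return version


@dataclass
class SnapClone:
    name: str
    revision: str

    def refresh(self, profile: SnapProfile) -> None:
        # Refreshing a database moves it to the profile's latest revision.
        self.revision = profile.resolve("Latest")
```

Pinning a specific revision instead of Latest would keep new requests on that revision even after the test master is refreshed.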

22.5.3 Creating Snap Clones from an In-Sync Test Master

You can create snap clones using a standby database that is designated as the test master database. The test master database is always current and in sync with the production database. To create snap clones using this approach, follow these steps:

Table 22-3 Creating Snap Clone (Continuous Flow)

| Step | Task | Role |
|---|---|---|
| 1 | Follow the steps in the Getting Started section to enable DBaaS. See Getting Started. | |
| 2 | Register storage servers. | |
| 3 | Create one or more resource providers. | |
| 4 | Configure the request settings. | |
| 5 | Define quotas for each self service user. See Defining Quotas. | |
| 6 | Add a standby database and designate it as the test master. See Using a Physical Standby Database as a Test Master. Note: This standby database must be present on a registered storage server (such as NetApp, Sun ZFS, or EMC) that allows creation of snap clones. | |
| 7 | Enable the test master for snap clone. | |
| 8 | Create snapshot profiles from the test master. | |
| 9 | Create a service template. | |
| 10 | (Optional) Configure the Chargeback Service. | |
| 11 | While deploying a database, select the service template you have created. | |

22.5.3.2 Registering Storage Servers

To register a storage server, see the section for your storage type:

- NetApp and Sun ZFS Storage Server: See Registering a NetApp or a Sun ZFS Storage Server.

- Solaris File System (ZFS): See Registering a Solaris File System (ZFS) Storage Server.

- EMC Storage Server: See Registering an EMC Storage Server.

22.5.3.3 Creating Resource Providers

You must create one or more resource providers, which include:

- PaaS Infrastructure Zones: See Creating a PaaS Infrastructure Zone.

- Database Pool: See Creating a Database Pool for Database as a Service.

22.5.3.4 Configuring Request Settings

You can configure the request settings by specifying when a request can be made, its duration, and so on. See Configuring Request Settings for details.

22.5.3.5 Defining Quotas

After configuring the request settings, you must define quotas for each self service user. See Setting Up Quotas for details.

22.5.3.6 Configuring Chargeback

Optionally, you can configure the chargeback service. See Chargeback Administration.

22.5.3.7 Requesting a Database

The self service user can now select the service template based on the database template profile and create a database. See Requesting a Database.

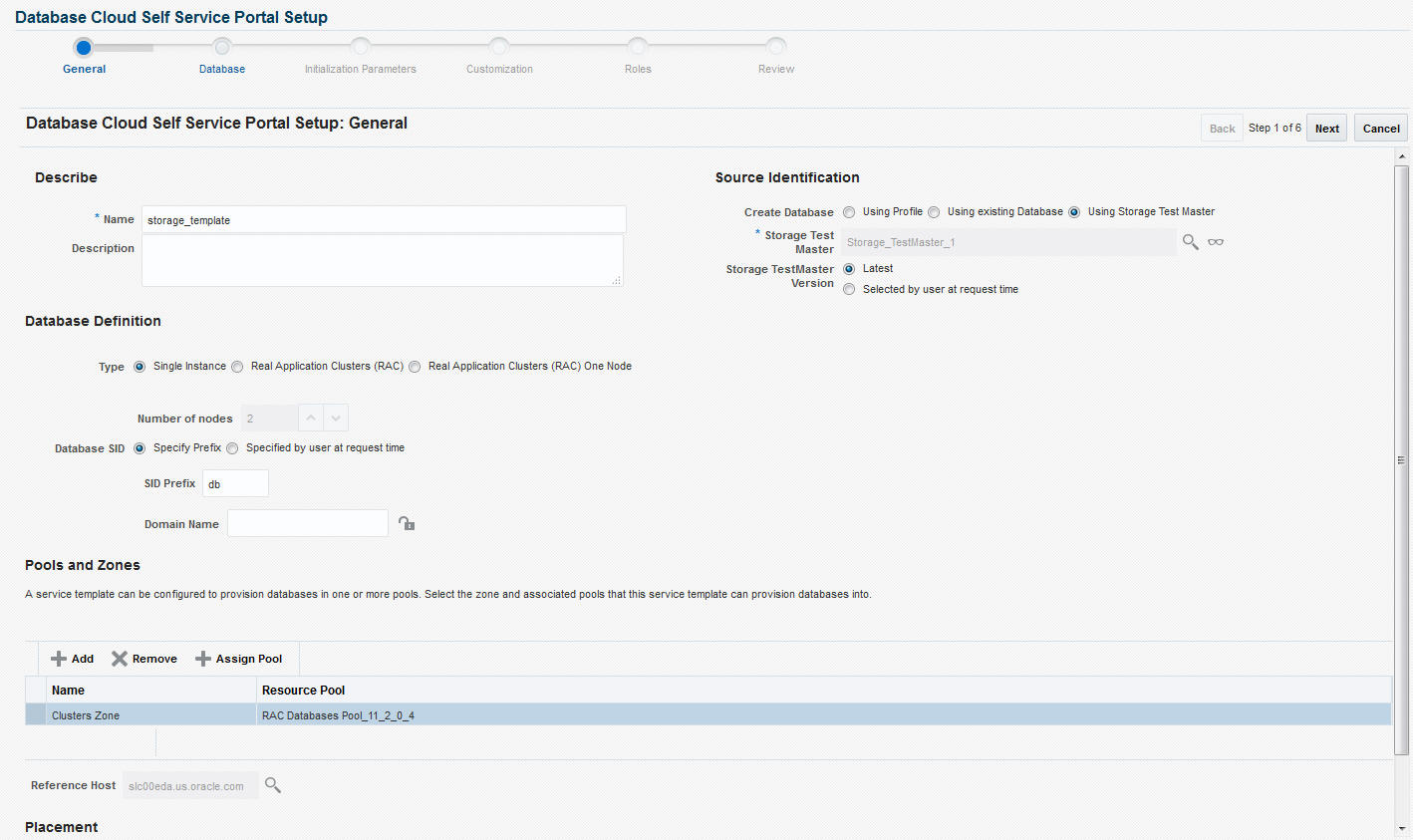

22.5.4 Creating Snap Clones from a Storage Test Master

You can use storage snapshots of the test master to create snap clones. This table lists the steps involved in creating a snap clone using a storage test master.

Table 22-4 Creating Snap Clone - Discrete Flow

| Step | Task | Role |
|---|---|---|
| 1 | Follow the steps in the Getting Started section to enable DBaaS. See Getting Started. | |
| 2 | Register storage servers. | |
| 3 | Create one or more resource providers. | |
| 4 | Configure the request settings. | |
| 5 | Define quotas for each self service user. See Defining Quotas. | |
| 6 | Create a storage test master. | |
| 7 | Create a service template based on the storage test master. | |
| 8 | (Optional) Configure the Chargeback Service. | |
| 9 | Select the service template you have created and request a database. | |
| 10 | Refresh the storage test master and the database instance. See: | |

22.5.4.6 Configuring Chargeback

Optionally, you can configure the chargeback service. See Chargeback Administration.

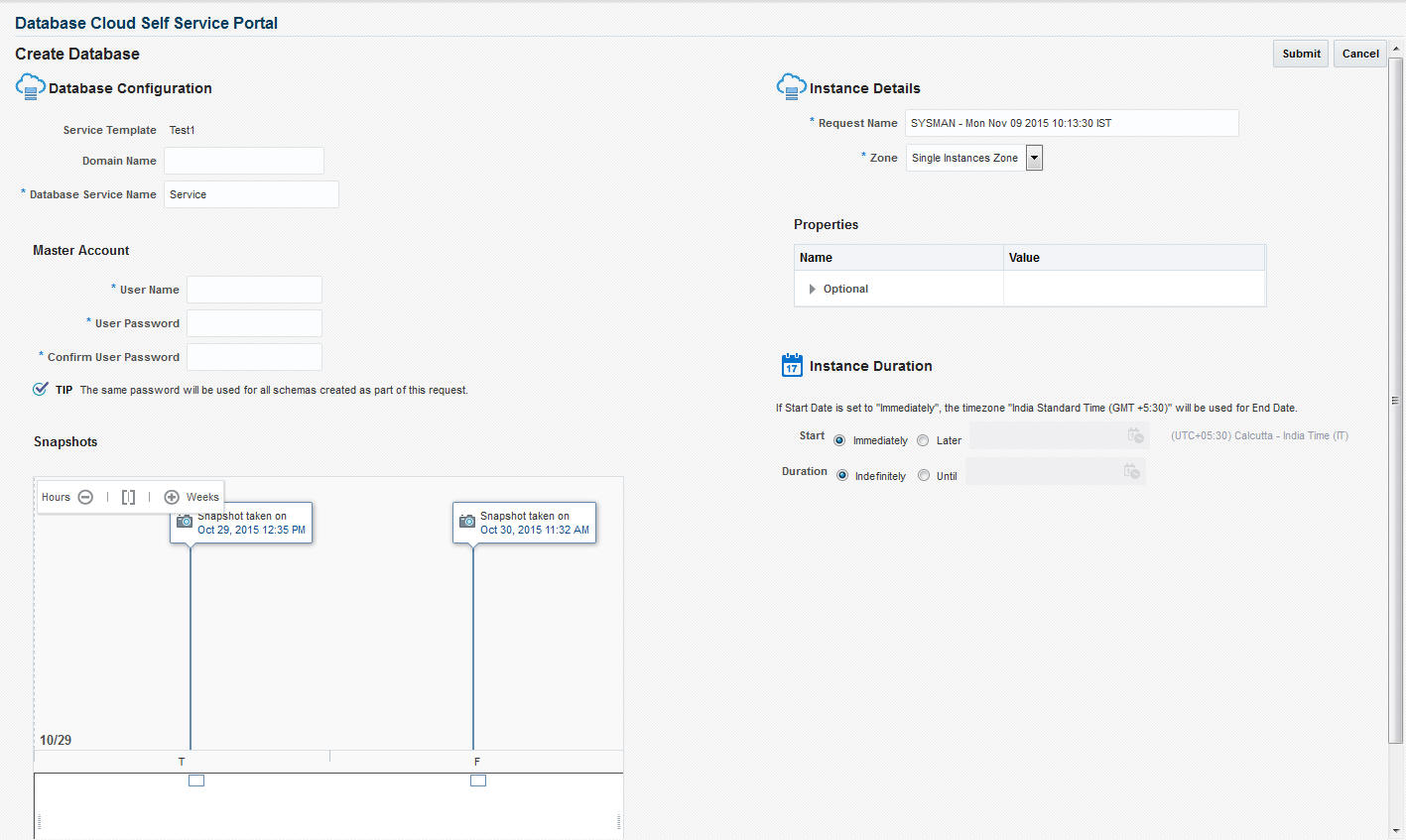

22.5.4.7 Requesting a Database

The self service user can now select the service template based on the database template profile and create a database. See Requesting a Database.

22.5.5 Creating a CloneDB Database

The CloneDB feature allows you to clone a database multiple times without copying the data into different locations. Instead, Oracle Database creates the files in the CloneDB database using copy-on-write technology, so that only the blocks that are modified in the CloneDB database require additional storage on disk. CloneDB reduces the amount of storage required for testing purposes and enables rapid creation of multiple database clones. CloneDB is supported for Oracle Database 11.2.0.3 and later.
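To illustrate why only modified blocks need additional storage, the following minimal Python sketch models copy-on-write at the block level. It is a conceptual model under simplified assumptions (blocks held in an in-memory dictionary; the class CopyOnWriteClone and its methods are hypothetical), not how Oracle Database implements CloneDB internally.

```python
class CopyOnWriteClone:
    """Conceptual copy-on-write clone: reads fall through to a shared,
    read-only base image; writes go to a private delta owned by the clone."""

    def __init__(self, base: dict[int, bytes]) -> None:
        self._base = base                    # shared backup blocks, never modified
        self._delta: dict[int, bytes] = {}   # only the blocks this clone changed

    def read(self, block_no: int) -> bytes:
        # A changed block is served from the delta; anything else from the base.
        if block_no in self._delta:
            return self._delta[block_no]
        return self._base[block_no]

    def write(self, block_no: int, data: bytes) -> None:
        # The base is untouched; the modified block is stored for this clone only.
        self._delta[block_no] = data

    def extra_blocks(self) -> int:
        # Additional storage consumed by this clone, in blocks.
        return len(self._delta)


# One read-only backup of 1,000 blocks shared by two clones.
backup = {n: b"prod" for n in range(1000)}
dev = CopyOnWriteClone(backup)
qa = CopyOnWriteClone(backup)

dev.write(7, b"dev-change")
dev.write(8, b"dev-change")
print(dev.extra_blocks(), qa.extra_blocks())  # 2 0
print(dev.read(7), qa.read(7))                # b'dev-change' b'prod'
```

Both clones share the same 1,000 base blocks; the development clone pays for two blocks and the QA clone for none, which is the space saving the paragraph describes.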

To create CloneDB databases using a discretely synchronized test master, follow these steps:

Table 22-5 Creating Snap Clone - Discrete Flow

| Step | Task | Role |
|---|---|---|
| 1 | Follow the steps in the Getting Started section to enable DBaaS. See Getting Started. | |
| 2 | Create one or more resource providers. | |
| 3 | Configure the request settings. | |
| 4 | Define quotas for each self service user. See Defining Quotas. | |
| 5 | Create a database provisioning profile using snapshots from an RMAN Image Backup. See Creating a Database Provisioning Profile Using RMAN Database Image. | |
| 6 | Create a service template based on the profile you have created. | |
| 7 | (Optional) Configure the Chargeback Service. | |
| 8 | Select the service template you have created and request a database. | |

22.6 Creating a Database Provisioning Profile for Snap Clone

This section covers the following:

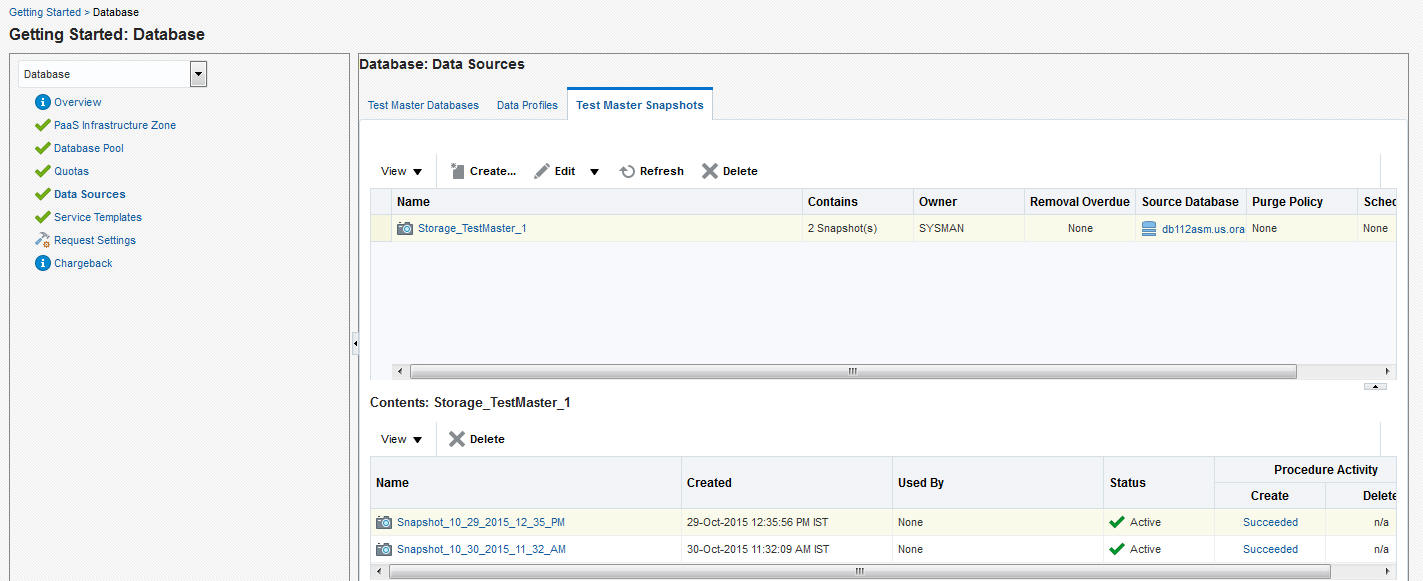

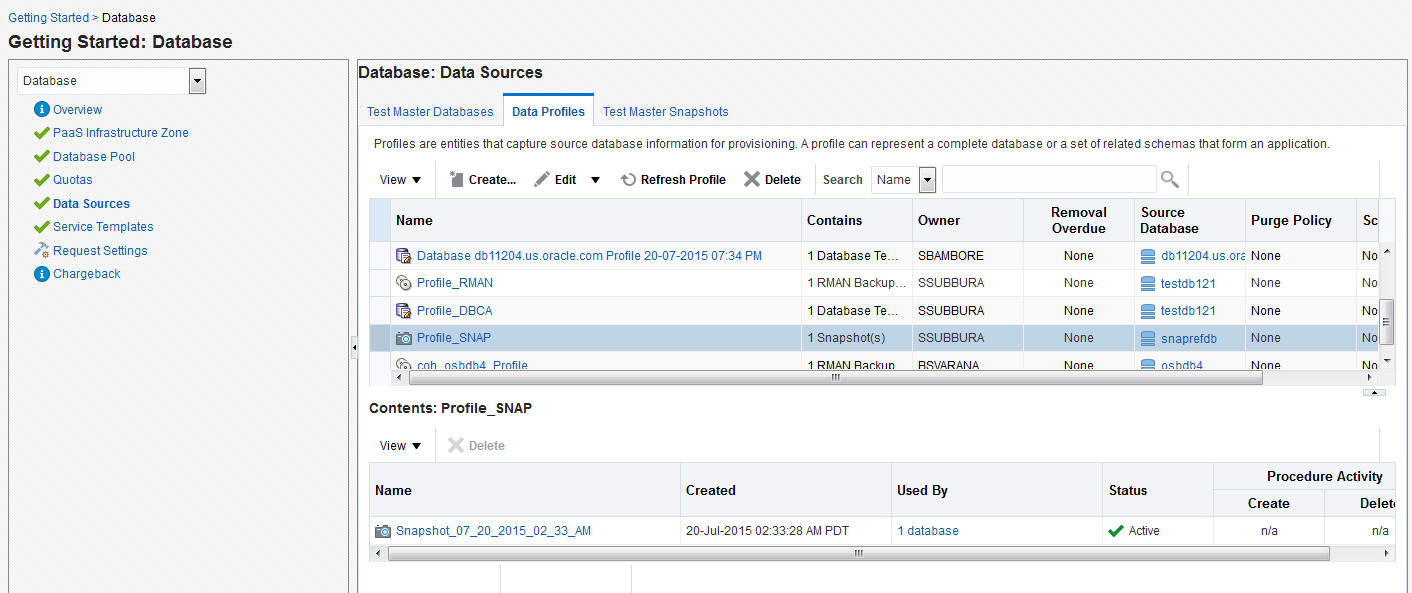

22.6.1 Creating a Database Provisioning Profile Using Snapshots

Prerequisites for Creating a Database Provisioning Profile Using Snapshots

Before you create a database provisioning profile, complete these prerequisites:

- Ensure that the storage server you want to register is available on the network. To register a storage server, refer to Registering and Managing Storage Servers.

Note:

NetApp, Sun ZFS, Solaris ZFS, and EMC storage servers are supported in Enterprise Manager Cloud Control 12c.

- Ensure that, for communication, the storage server is connected to a Management Agent that is installed and monitored in Enterprise Manager Cloud Control.

- Ensure that the storage server is registered and that at least one database enabled for Snap Clone is present.

- To create the profile, you must have the EM_STORAGE_OPERATOR or EM_STORAGE_ADMINISTRATOR privileges.

Creating a Database Provisioning Profile Using Snapshots

To create a database provisioning profile, follow these steps:

Note:

A snapshot that is in use by a database cannot be deleted. If you remove a snapshot that is in use, it becomes obsolete instead.

This means that you cannot request any new databases from the obsolete snapshot. A red pushpin against the database indicates that the snapshot is pinned and cannot be used.

When the database using that snapshot is deleted, the snapshot is automatically purged in the next run.
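The snapshot rules in this note can be expressed as a small state model. The following Python sketch is hypothetical: the Snapshot class, the purge_run function, and its single-pass behavior are assumptions chosen to mirror the note, not Enterprise Manager internals.

```python
from dataclasses import dataclass, field


@dataclass
class Snapshot:
    """Hypothetical model of the snapshot rules described in the note."""
    snapshot_id: str
    databases: set[str] = field(default_factory=set)  # databases using this snapshot
    obsolete: bool = False                             # set when the snapshot is removed

    def request_database(self, db_name: str) -> None:
        # New databases cannot be requested from an obsolete snapshot.
        if self.obsolete:
            raise ValueError(f"{self.snapshot_id} is obsolete; new requests are blocked")
        self.databases.add(db_name)

    def remove(self) -> None:
        # Removing a snapshot only marks it obsolete; nothing is deleted yet.
        self.obsolete = True

    def delete_database(self, db_name: str) -> None:
        self.databases.discard(db_name)

    @property
    def pinned(self) -> bool:
        # Obsolete but still in use: the "red pushpin" state.
        return self.obsolete and bool(self.databases)


def purge_run(snapshots: list[Snapshot]) -> list[Snapshot]:
    """One pass of a hypothetical purge job: obsolete snapshots that no
    database uses any more are dropped; all others are kept."""
    return [s for s in snapshots if not (s.obsolete and not s.databases)]


snap = Snapshot("snap1")
snap.request_database("db1")
snap.remove()
print(snap.pinned)             # True
print(len(purge_run([snap])))  # 1 (pinned snapshot survives the run)
snap.delete_database("db1")
print(len(purge_run([snap])))  # 0 (purged in the next run)
```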

22.7 Creating Service Templates for Snap Clone

This section contains the following topics:

22.7.3 Creating a Service Template Using RMAN Image Profile

To create a service template using RMAN Image Profile, follow these steps:

22.7.4 Creating a Service Template for EMC Snap Clone

Note:

This option can be used only for snapshots created on EMC storage.

Prerequisites

Before you can create snap clones on EMC storage, you must ensure that the prerequisites described in Configuring EMC Storage Servers are met.

To create a service template using an existing database, follow these steps:

22.8 Snap Clone Data Creation

This section contains the following topics:

22.8.1 Requesting a Database

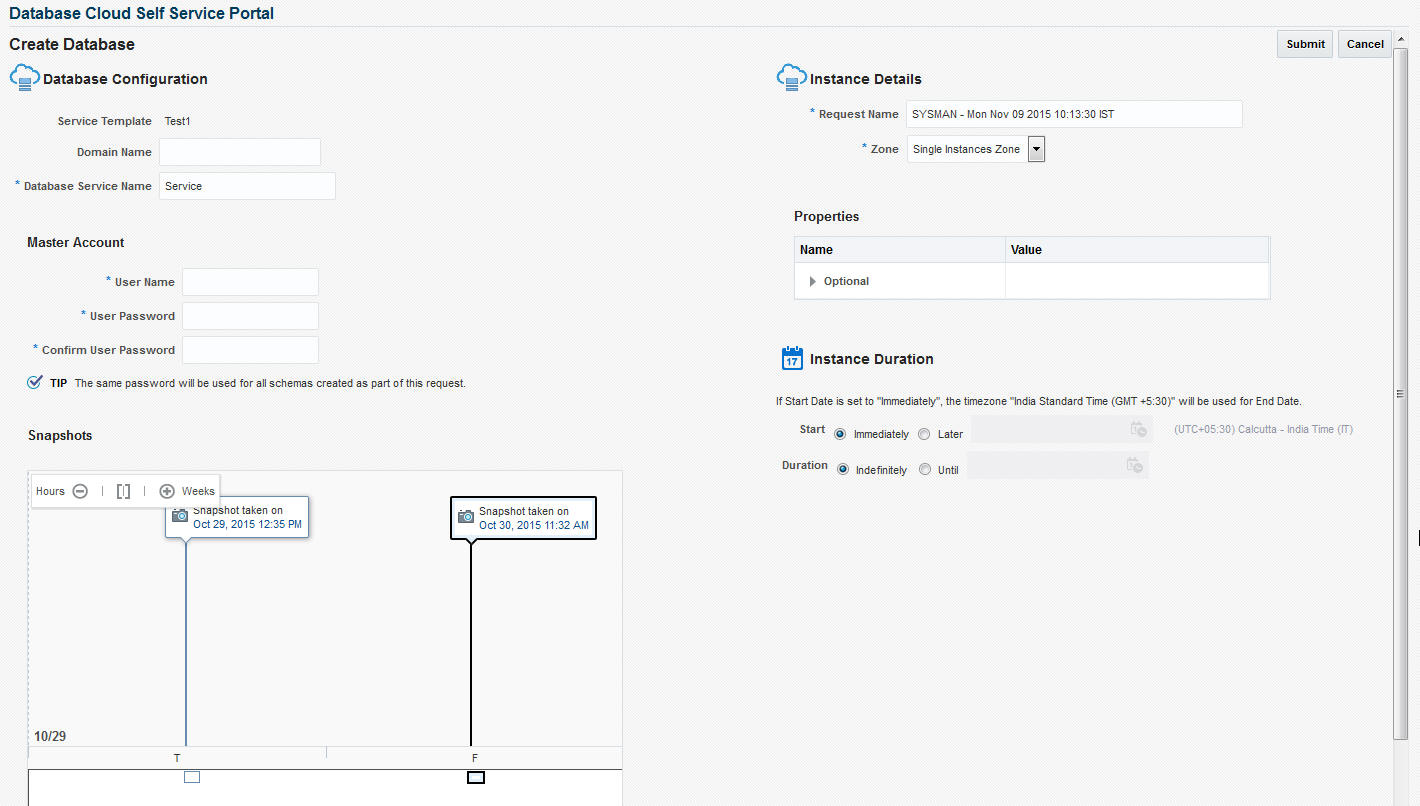

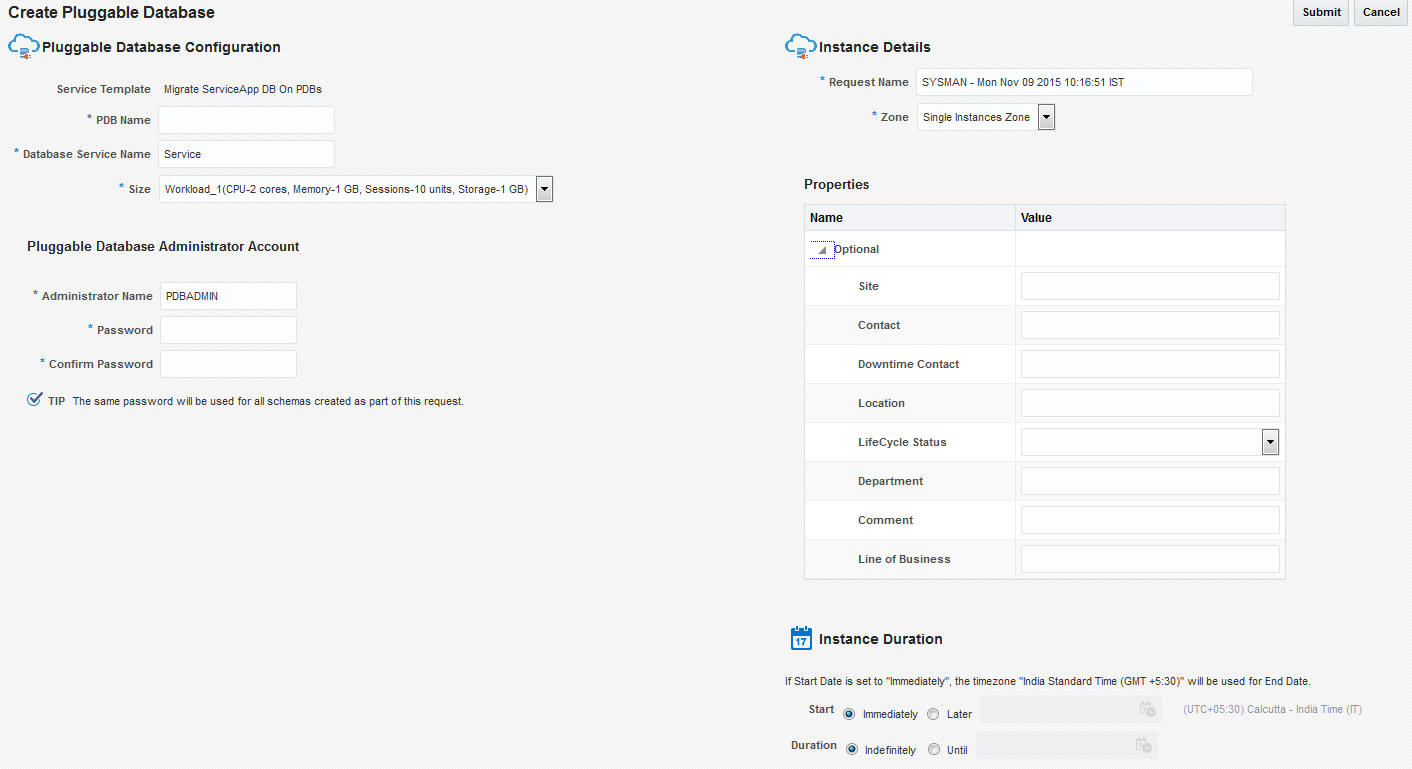

To request a new database, follow these steps:

22.8.1.1 Requesting a Schema

You can create a database service with one or more schemas and populate the schema with the required data.

Requesting an Export Profile Based Schema

You can create a schema based on a service template with a schema export profile or an empty service template. To create a schema based on a schema export profile, follow these steps:

1. Log in to Enterprise Manager as a user with the EM_SSA_USER role or any role that includes the EM_SSA_USER role.

2. The Database Cloud Self Service Portal page appears. Select Databases from the Manage drop-down list to navigate to the Database Cloud Self Service Portal.

3. Click Request New Service in the Services region.

4. Choose a Schema Service Template with a schema export profile from the list and click Select. The Create Schema page appears, displaying the name and description of the service template you selected. Enter the following details:

   - Request Name: Enter a name for the schema service request.

   - Zone: Select the zone in which the schema is to be created.

   - Database Service Name: Enter a unique name for the database service.

   - Workload Size: Specify the workload size for the service request.

   - Schema Prefix: Enter a prefix for the schema. For clustered databases, the service is registered with Grid Infrastructure credentials.

5. Click Rename Schema to enter a new name for the schema. If you wish to retain the source schema name, ensure that the Schema Prefix field is blank.

6. Specify the password for the schema. Select the Same Password for all Schemas checkbox to use the same password for all the schemas.

7. The Master Account for the schema is displayed. The schema with Master Account privileges has access to all other schemas created as part of the service request.

8. In the Tablespace Details region, the names of all the tablespaces in the schema are displayed. To rename a tablespace, specify a new name in the New Tablespace Name box.

9. Specify the schedule for the request and click Submit to create the schema.

Requesting an Empty Schema

To create a schema with an empty schema template, follow these steps:

- Follow steps 1 to 3 listed above. Then, in the Select Service Template window, select an empty schema template from the list.

- Specify the details of the schema as listed in steps 4 to 7.

- In the Tablespace Details region, you can specify a separate tablespace for each schema or use the same tablespace for all the schemas.

- Specify the schedule for the schema request and click Submit to create the schema.

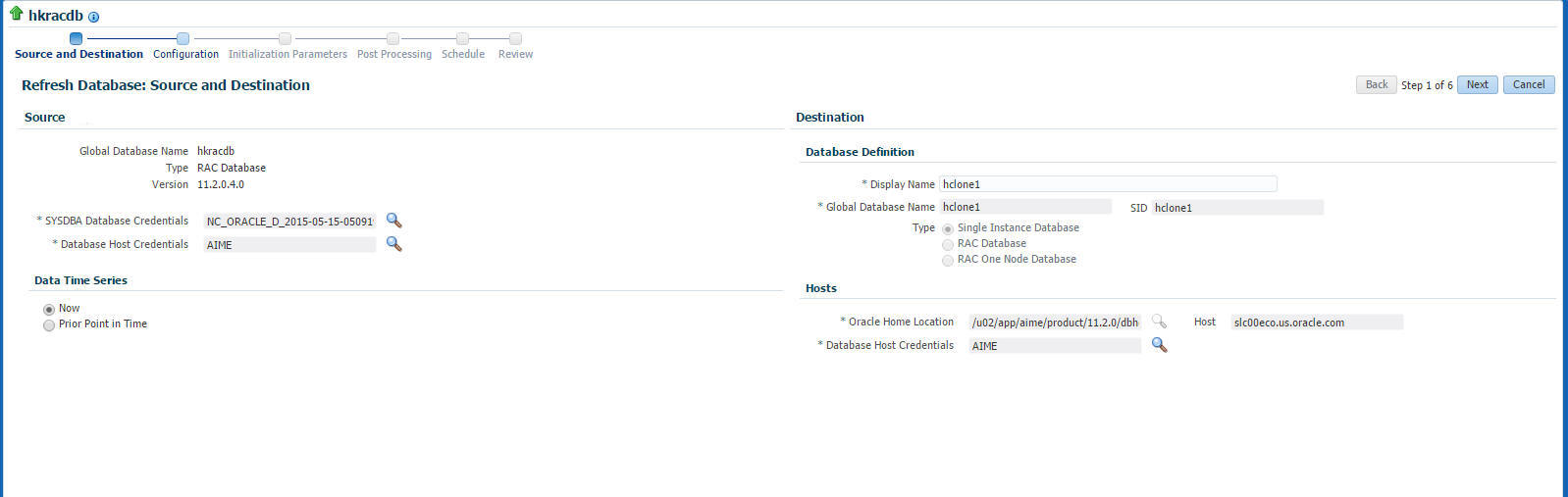

22.8.2 Refreshing the Test Master Database

The test master database is created from an RMAN backup profile of the production database, taken at a particular point in time. Because the production database is constantly updated, the test master must be refreshed at periodic intervals so that it contains the latest production data.

When the test master is refreshed, you can create a new profile based on the updated test master. The self service user can choose to refresh the database instances to the latest profile. The storage space that was used by the older version of the test master will be reclaimed by the updated (refreshed) test master.