6 Upgrades in TimesTen Classic

This chapter describes the process for upgrading to a new release of TimesTen Classic. For information on the upgrade process for TimesTen Scaleout, see "Upgrading a grid" and "Migrating, Backing Up and Restoring Data" in the Oracle TimesTen In-Memory Database Scaleout User's Guide.

Ensure you review the installation process in the preceding chapters before completing the upgrade procedures described in this chapter.

Topics include:

- Offline upgrade: Moving to a different patch or patch set: ttInstanceModify
- Offline upgrade: Moving to a different patch or patch set: ttBackup
- Performing an offline TimesTen upgrade when using Oracle Clusterware
- Performing an online TimesTen upgrade when using Oracle Clusterware

Overview of release numbers

TimesTen releases follow a five-part numbering scheme, which determines the upgrade methods available to you. For a given release a.b.c.d.e:

- a indicates the first part of the major release.
- b indicates the second part of the major release.
- c indicates the patch set.
- d indicates the patch level within the patch set.
- e is reserved.

Important considerations:

- Releases within the same major release (a.b) are binary compatible. If a release is binary compatible, you do not have to recreate the database for the upgrade (or downgrade).
- Releases with a different major release are not binary compatible. In this case, you must recreate the database. See "Migrating a database" for details.

As an example, for the 18.1.4.1.0 release:

- The first two numbers of the five-place release number (18.1) indicate the major release.
- The third number of the five-place release number (4) indicates the patch set. For example, 18.1.4.1.0 is binary compatible with 18.1.3.5.0 because the first two numbers (18 and 1) are the same.
- The fourth number of the five-place release number (1) indicates the patch level within the patch set. 18.1.4.1.0 is the first patch level within patch set four.
- The fifth number of the five-place release number (0) is reserved.
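The numbering rules above can be checked mechanically. The following is a minimal sketch in POSIX shell, not a TimesTen utility: the function names major_release and binary_compatible are hypothetical. It also accounts for the 11.2.x exception described in the note that follows, where the first three numbers identify the major release.

```shell
#!/bin/sh
# Hypothetical helpers (not part of TimesTen): derive the major release
# from a five-part release number a.b.c.d.e and compare two releases.

# Print the major release for a release number such as 18.1.4.1.0.
major_release() {
    a=$(echo "$1" | cut -d. -f1)
    b=$(echo "$1" | cut -d. -f2)
    c=$(echo "$1" | cut -d. -f3)
    if [ "$a" = "11" ] && [ "$b" = "2" ]; then
        # 11.2.1.w.x and 11.2.2.y.z: the first three numbers form the major release.
        echo "$a.$b.$c"
    else
        echo "$a.$b"
    fi
}

# Succeed (exit status 0) when both releases share a major release,
# meaning the database does not have to be recreated.
binary_compatible() {
    [ "$(major_release "$1")" = "$(major_release "$2")" ]
}

if binary_compatible 18.1.4.1.0 18.1.3.5.0; then
    echo "same major release: the database does not have to be recreated"
fi
```

A check like this could sit at the top of an upgrade script to decide between the patch-level procedures and a full migration.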

Note:

In releases 11.2.1.w.x and 11.2.2.y.z, the first three numbers signified the major release. Thus, 11.2.1 is considered a major release, as is 11.2.2.

Types of upgrades

TimesTen Classic supports two types of upgrades:

- An offline upgrade requires all applications to disconnect from TimesTen and requires all databases to be unloaded from memory. The procedure depends on your requirement:

  - To apply a patch set or a patch level within a patch set:

    - Run the ttInstanceModify utility with the -install option to upgrade the instance. This is the preferred method for upgrading patches and patch sets. See "Offline upgrade: Moving to a different patch or patch set: ttInstanceModify" for details.
    - You can also run the ttBackup and the ttRestore utilities to upgrade patches and patch sets, although this is not the preferred method. See "Offline upgrade: Moving to a different patch or patch set: ttBackup" for information.

  - To move to a different major release: You must run the ttMigrate utility to export a database to a flat file and then use ttMigrate again to import the data into the new database. This is the only method to perform an offline upgrade that involves moving between major releases. See "Offline upgrade: Moving to a different major release" for details.

- An online upgrade involves using a pair of databases that are replicated and then performing an offline upgrade of each database in turn. See "Online upgrade: Using TimesTen replication" for details.

Offline upgrade: Moving to a different patch or patch set: ttInstanceModify

The preferred offline upgrade method to move to a different patch set or patch level involves creating a new installation in a new location and then using the ttInstanceModify utility with the -install option to cause the instance to point to the new installation. This offline upgrade requires the instance administrator to close all databases to user connections, to disconnect all applications from all databases, and to unload all databases from memory.

To perform the upgrade, follow these steps:

1. Create a new installation in a new location. For example, create the fullinstall_new installation directory. Then unzip the new patch release zip file into that directory. (For example, unzip timesten181410.server.linux8664.zip into the fullinstall_new directory.)

       % mkdir fullinstall_new
       % cd fullinstall_new
       % unzip /swdir/TimesTen/ttinstallers/timesten181410.server.linux8664.zip
       [...UNZIP OUTPUT...]

   See "TimesTen installations" for detailed information.

2. Unload all databases. See "Unloading a database from memory" for details.

3. Stop the TimesTen daemon.

       % ttDaemonAdmin -stop
       TimesTen Daemon (PID: 24224, port: 6324) stopped.

4. Modify the instance to point to the new installation. In this example, point the instance to the installation in /swdir/TimesTen/ttinstallations/ttinstalllatest/tt18.1.4.1.0.

       % $TIMESTEN_HOME/bin/ttInstanceModify -install /swdir/TimesTen/ttinstallations/ttinstalllatest/tt18.1.4.1.0

       Instance Info (UPDATED)
       -----------------------

       Name:           ttuserinstance
       Version:        18.1.4.1.0
       Location:       /swdir/TimesTen/ttinstances/ttuserinstance
       Installation:   /swdir/TimesTen/ttinstallations/ttinstalllatest/tt18.1.4.1.0
       Daemon Port:    6324
       Server Port:    6325

       **********************************************
       NOTE: The ttclasses source code may have changed since the last release.
       Make sure to rebuild the ttclasses library in
       /swdir/TimesTen/ttinstances/ttuserinstance/ttclasses.

       The instance ttuserinstance now points to the installation in
       /swdir/TimesTen/ttinstallations/ttinstalllatest/tt18.1.4.1.0

5. Restart the daemon.

       % ttDaemonAdmin -start
       TimesTen Daemon (PID: 31202, port: 6324) startup OK.

6. Load the databases. See "Reloading a database into memory" for details.

7. Optional: Ensure you can connect to the database.

       % ttIsql database1

       Copyright (c) 1996, 2020, Oracle and/or its affiliates. All rights reserved.
       Type ? or "help" for help, type "exit" to quit ttIsql.
       ...
       Command> SELECT * FROM dual;
       < X >
       1 row found.

8. Optional: Delete the previous patch release installation.

       % chmod -R 750 installation_dir/tt18.1.3.5.0
       % rm -rf installation_dir/tt18.1.3.5.0
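The command sequence in the steps above can be collected into one script so that it is reviewed before anything is stopped. This is a sketch under stated assumptions, not an Oracle-supplied procedure: the helper names run and upgrade_instance are hypothetical, applications are assumed to have disconnected already, every database is assumed to use a RAM policy of manual, and the release is assumed to be 18.1.3.1.0 or later so that ttAdmin -close and -open apply. Only the utilities and options shown in this section are used.

```shell
#!/bin/sh
# Sketch only: print (DRY_RUN=1, the default) or run the offline patch
# upgrade sequence. Nothing here is a TimesTen-provided function.

DRY_RUN=${DRY_RUN:-1}

# Print the command; execute it only when DRY_RUN is 0.
run() {
    echo "+ $*"
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    fi
}

# upgrade_instance NEW_INSTALLATION_DIR DATABASE...
# Stops at the first command that fails.
upgrade_instance() {
    new_install=$1
    shift
    for db in "$@"; do
        run ttAdmin -close "$db" || return 1       # close to user connections
        run ttAdmin -ramUnload "$db" || return 1   # unload from memory
    done
    run ttDaemonAdmin -stop || return 1
    run "$TIMESTEN_HOME/bin/ttInstanceModify" -install "$new_install" || return 1
    run ttDaemonAdmin -start || return 1
    for db in "$@"; do
        run ttAdmin -ramLoad "$db" || return 1     # reload into memory
        run ttAdmin -open "$db" || return 1        # reopen for user connections
    done
}

# Example call (placeholder path and database name taken from this section):
# upgrade_instance /swdir/TimesTen/ttinstallations/ttinstalllatest/tt18.1.4.1.0 database1
```

With DRY_RUN left at 1 the function only prints the plan, which gives the instance administrator a chance to confirm the order before setting DRY_RUN=0.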

Unloading a database from memory

Perform the following steps to unload a database from memory.

1. In release 18.1.3.1.0 and later, close the database. This prevents any future connections to the database. In releases prior to 18.1.3.1.0, ignore this step.

       % ttAdmin -close database1
       RAM Residence Policy            : manual
       Manually Loaded In RAM          : True
       Replication Agent Policy        : manual
       Replication Manually Started    : False
       Cache Agent Policy              : manual
       Cache Agent Manually Started    : False
       Database State                  : Closed

   See "Opening and closing the database for user connections" in the Oracle TimesTen In-Memory Database Operations Guide.

2. If there are connections to the database, disconnect all applications from the database. You can do this manually, or you can instruct TimesTen to perform the disconnects for you. For the latter case, see "Disconnecting from a database" in the Oracle TimesTen In-Memory Database Operations Guide and "ForceDisconnectEnabled" in the Oracle TimesTen In-Memory Database Reference for detailed information.

3. Ensure the RAM policy is set to either manual or inUse. Then unload the database from memory. See "Specifying a RAM policy" in the Oracle TimesTen In-Memory Database Operations Guide for information on specifying a RAM policy.

   - If the RAM policy is set to always, change it to manual, then unload the database from memory.

         % ttAdmin -ramPolicy manual -ramUnload database1
         RAM Residence Policy            : manual
         Manually Loaded In RAM          : False
         Replication Agent Policy        : manual
         Replication Manually Started    : False
         Cache Agent Policy              : manual
         Cache Agent Manually Started    : False
         Database state                  : closed

   - If the RAM policy is set to manual, unload the database from memory.

         % ttAdmin -ramUnload database1
         RAM Residence Policy            : manual
         Manually Loaded In RAM          : False
         Replication Agent Policy        : manual
         Replication Manually Started    : False
         Cache Agent Policy              : manual
         Cache Agent Manually Started    : False
         Database state                  : closed

   - If the RAM policy is set to inUse and a grace period is set, set the grace period to 0 or wait for the grace period to elapse. TimesTen unloads a database with an inUse RAM policy from memory once all active connections are closed.

         % ttAdmin -ramGrace 0 database1
         RAM Residence Policy            : inUse
         Replication Agent Policy        : manual
         Replication Manually Started    : False
         Cache Agent Policy              : manual
         Cache Agent Manually Started    : False
         Database state                  : closed

4. Run the ttStatus utility to verify that the database has been unloaded from memory and, for release 18.1.3.1.0 and later, the database is closed. See "ttStatus" in Oracle TimesTen In-Memory Database Reference for details.

       % ttStatus
       TimesTen status report as of Mon Jun 29 14:11:19 2020

       Daemon pid 24224 port 6324 instance ttuserinstance
       TimesTen server pid 22019 started on port 6325
       ------------------------------------------------------------------------
       Data store /scratch/databases/database1
       Daemon pid 24224 port 6324 instance ttuserinstance
       TimesTen server pid 22019 started on port 6325
       There are no connections to the data store
       Closed to user connections
       RAM residence policy: Manual
       Data store is manually unloaded from RAM
       Replication policy  : Manual
       Cache Agent policy  : Manual
       PL/SQL enabled.
       ------------------------------------------------------------------------
       Accessible by group g900
       End of report
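The three RAM policy cases in step 3 map to three different ttAdmin invocations. The sketch below prints the matching command for a given policy and runs nothing. The helper name unload_command is hypothetical, and the current policy is assumed to be known already (for example, from earlier ttAdmin output).

```shell
#!/bin/sh
# Sketch only: print the ttAdmin command that unloads a database for a
# given RAM policy. unload_command is a hypothetical helper name.

# unload_command RAM_POLICY DATABASE
unload_command() {
    case $1 in
        manual)
            echo "ttAdmin -ramUnload $2" ;;
        always)
            # Switch the policy to manual first, then unload.
            echo "ttAdmin -ramPolicy manual -ramUnload $2" ;;
        inUse)
            # With a grace period of 0, TimesTen unloads the database
            # once all active connections are closed.
            echo "ttAdmin -ramGrace 0 $2" ;;
        *)
            echo "unsupported RAM policy: $1" >&2
            return 1 ;;
    esac
}

unload_command always database1
```

Printing the command instead of running it keeps the decision visible; the output can be reviewed and then pasted at the prompt.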

Reloading a database into memory

Follow these steps to load a database into memory.

1. Load the database into memory. This example sets the RAM policy to manual and then loads the database1 database into memory.

   Set the RAM policy to manual.

       % ttAdmin -ramPolicy manual database1
       RAM Residence Policy            : manual
       Manually Loaded In RAM          : False
       Replication Agent Policy        : manual
       Replication Manually Started    : False
       Cache Agent Policy              : manual
       Cache Agent Manually Started    : False
       Database state                  : closed

   Load the database1 database into memory.

       % ttAdmin -ramLoad database1
       RAM Residence Policy            : manual
       Manually Loaded In RAM          : True
       Replication Agent Policy        : manual
       Replication Manually Started    : False
       Cache Agent Policy              : manual
       Cache Agent Manually Started    : False
       Database state                  : closed

   See "Specifying a RAM policy" in the Oracle TimesTen In-Memory Database Operations Guide for information on the RAM policy.

2. In release 18.1.3.1.0 and later, open the database for user connections. In releases prior to 18.1.3.1.0, ignore this step.

       % ttAdmin -open database1
       RAM Residence Policy            : manual
       Manually Loaded In RAM          : True
       Replication Agent Policy        : manual
       Replication Manually Started    : False
       Cache Agent Policy              : manual
       Cache Agent Manually Started    : False
       Database State                  : Open

   See "Opening and closing the database for user connections" in the Oracle TimesTen In-Memory Database Operations Guide.

Offline upgrade: Moving to a different patch or patch set: ttBackup

You can run the ttBackup and ttRestore utilities to move to a new patch release of TimesTen Classic, although this is not the preferred method.

Perform these steps for each database.

On the old release:

1. Disconnect all applications from the database. You can do this manually or you can instruct TimesTen to perform the disconnects for you. For the latter case, see "Disconnecting from a database" in the Oracle TimesTen In-Memory Database Operations Guide and "ForceDisconnectEnabled" in the Oracle TimesTen In-Memory Database Reference for detailed information.

2. Back up the database. In this example, back up the database1_1813 database for release 18.1.3.5.0.

       % ttBackup -dir /tmp/dump/backup_181350 -fname database1_1813 database1_1813
       Backup started ...
       Backup complete

3. Unload the database from memory. This example assumes a RAM policy of manual. See "Specifying a RAM policy" in the Oracle TimesTen In-Memory Database Operations Guide for information on the RAM policy.

       % ttAdmin -ramUnload database1_1813
       RAM Residence Policy            : manual
       Manually Loaded In RAM          : False
       Replication Agent Policy        : manual
       Replication Manually Started    : False
       Cache Agent Policy              : manual
       Cache Agent Manually Started    : False

4. Stop the TimesTen daemon.

       % ttDaemonAdmin -stop
       TimesTen Daemon (PID: 2749, port: 6666) stopped.

For the new release:

1. Create a new installation in a new location. For example, create the fullinstall_new installation directory. Then unzip the patch release zip file into that directory. (For example, unzip timesten181410.server.linux8664.zip into the fullinstall_new directory.) See "TimesTen installations" and "Creating an installation on Linux/UNIX" for detailed information.

       % mkdir fullinstall_new
       % cd fullinstall_new
       % unzip /swdir/TimesTen/ttinstallers/timesten181410.server.linux8664.zip
       [...UNZIP OUTPUT...]

2. Run the ttInstanceCreate utility to create the instance. This example runs the ttInstanceCreate utility interactively. See "ttInstanceCreate" in the Oracle TimesTen In-Memory Database Reference and "Creating an instance on Linux/UNIX: Basics" in this book for details.

   User input appears after the bracketed default at each prompt. Where nothing follows the brackets, the default was accepted.

       % installation_dir/tt18.1.4.1.0/bin/ttInstanceCreate

       NOTE: Each TimesTen instance is identified by a unique name.
             The instance name must be a non-null alphanumeric string, not longer
             than 255 characters.

       Please choose an instance name for this installation? [ tt181 ] inst1814_new
       Instance name will be 'inst1814_new'.
       Is this correct? [ yes ]
       Where would you like to install the inst1814_new instance of TimesTen? [ /home/ttuser ] /scratch/ttuser
       Creating instance in /scratch/ttuser/inst1814_new ...
       INFO: Mapping files from the installation to /scratch/ttuser/inst1814_new/install
       TCP port 6624 is in use!

       NOTE: If you are configuring TimesTen for use with Oracle Clusterware, the
             daemon port number must be the same across all TimesTen installations
             managed within the same Oracle Clusterware cluster.

       ** The default daemon port (6624) is already in use or within a range of 8
          ports of an existing TimesTen instance. You must assign a unique daemon
          port number for this instance. This installer will not allow you to
          assign another instance a port number within a range of 8 ports of the
          port you assign below.

       NOTE: All installations that replicate to each other must use the same daemon
             port number that is set at installation time. The daemon port number can
             be verified by running 'ttVersion'.

       Please enter a unique port number for the TimesTen daemon (<CR>=list)? [ ] 6324

       In order to use the 'TimesTen Application-Tier Database Cache' feature in any
       databases created within this installation, you must set a value for the
       TNS_ADMIN environment variable. It can be left blank, and a value can be
       supplied later using <install_dir>/bin/ttInstanceModify.

       Please enter a value for TNS_ADMIN (s=skip)? [ ] s
       What is the TCP/IP port number that you want the TimesTen Server to listen on? [ 6325 ]

       Would you like to use TimesTen Replication with Oracle Clusterware? [ no ]

       NOTE: The TimesTen daemon startup/shutdown scripts have not been installed.

       The startup script is located here :
               '/scratch/ttuser/inst1814_new/startup/tt_inst1814_new'

       Run the 'setuproot' script :
               /scratch/ttuser/inst1814_new/bin/setuproot -install
       This will move the TimesTen startup script into its appropriate location.

       The 18.1 Release Notes are located here :
         '/scratch/ttuser/181410/tt18.1.4.1.0/README.html'

       Starting the daemon ...
       TimesTen Daemon (PID: 3253, port: 6324) startup OK.

3. Restore the database. Ensure you source the environment variables, make all necessary changes to your connection attributes in the sys.odbc.ini (or the odbc.ini) file, and start the daemon (if not already started) prior to restoring the database.

       % ttRestore -dir /tmp/dump/backup_181350 -fname database1_1813 database1_1814
       Restore started ...
       Restore complete

Once your databases are correctly configured and fully operational, you can optionally remove the backup file (in this example, /tmp/dump/backup_181350/database1_1813).
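The restore only finds the backup if ttRestore is given the same -dir and -fname values that ttBackup used. The following sketch prints both command lines from one set of arguments so the two cannot drift apart. The helper name backup_restore_plan is hypothetical, and the function prints the commands without running them.

```shell
#!/bin/sh
# Sketch only: print matching ttBackup and ttRestore command lines.
# backup_restore_plan is a hypothetical helper; it runs nothing.

# backup_restore_plan BACKUP_DIR FILE_PREFIX OLD_DSN NEW_DSN
backup_restore_plan() {
    # Run on the old release, before the daemon is stopped.
    echo "old release: ttBackup -dir $1 -fname $2 $3"
    # Run on the new release, after the new instance's daemon is started.
    echo "new release: ttRestore -dir $1 -fname $2 $4"
}

backup_restore_plan /tmp/dump/backup_181350 database1_1813 database1_1813 database1_1814
```

The values in the call are the ones used in the example above; substitute your own directory, file prefix, and DSNs.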

Offline upgrade: Moving to a different major release

You can have multiple major releases installed on a host at the same time. However, databases created by one major release cannot be accessed directly by applications of a different major release. To migrate data between major releases, for example from TimesTen 11.2.2 to 18.1, you must export the data using the ttMigrate utility from the old release and import it using the ttMigrate utility to the new release.

Before migrating a database from one major release to another, ensure you back up the database in the old release. See "ttBackup" and "ttRestore" in Oracle TimesTen In-Memory Database Reference and "Backing up and restoring a database" in this book for details.

Follow these steps to perform the upgrade:

For the old release:

1. Disconnect all applications from your database. You can do this manually or you can instruct TimesTen to perform the disconnects for you. For the latter case, see "Disconnecting from a database" in the Oracle TimesTen In-Memory Database Operations Guide and "ForceDisconnectEnabled" in the Oracle TimesTen In-Memory Database Reference for detailed information.

2. Save a copy of your database with the ttMigrate utility. In this example, there are several database objects saved for database1.

       % ttMigrate -c database1 /tmp/database1.data
       Saving user PUBLIC
       User successfully saved.

       Saving table TTUSER.COUNTRIES
         Saving foreign key constraint COUNTR_REG_FK
         Saving rows...
         25/25 rows saved.
       Table successfully saved.

       Saving table TTUSER.DEPARTMENTS
         Saving foreign key constraint DEPT_LOC_FK
         Saving rows...
         27/27 rows saved.
       Table successfully saved.

       Saving table TTUSER.EMPLOYEES
         Saving index TTUSER.TTUNIQUE_0
         Saving foreign key constraint EMP_DEPT_FK
         Saving foreign key constraint EMP_JOB_FK
         Saving rows...
         107/107 rows saved.
       Table successfully saved.

       Saving table TTUSER.JOBS
         Saving rows...
         19/19 rows saved.
       Table successfully saved.

       Saving table TTUSER.JOB_HISTORY
         Saving foreign key constraint JHIST_DEPT_FK
         Saving foreign key constraint JHIST_EMP_FK
         Saving foreign key constraint JHIST_JOB_FK
         Saving rows...
         10/10 rows saved.
       Table successfully saved.

       Saving table TTUSER.LOCATIONS
         Saving foreign key constraint LOC_C_ID_FK
         Saving rows...
         23/23 rows saved.
       Table successfully saved.

       Saving table TTUSER.REGIONS
         Saving rows...
         4/4 rows saved.
       Table successfully saved.

       Saving view TTUSER.EMP_DETAILS_VIEW
       View successfully saved.

       Saving sequence TTUSER.DEPARTMENTS_SEQ
       Sequence successfully saved.

       Saving sequence TTUSER.EMPLOYEES_SEQ
       Sequence successfully saved.

       Saving sequence TTUSER.LOCATIONS_SEQ
       Sequence successfully saved.

   For more information about the ttMigrate utility, see "ttMigrate" in the Oracle TimesTen In-Memory Database Reference.

3. Unload the database from memory. See "Unloading a database from memory" for details.

4. Stop the TimesTen daemon.

       % ttDaemonAdmin -stop
       TimesTen Daemon (PID: 30841, port: 54496) stopped.

5. Copy the migrate object files to a file system that is accessible by the instance in the new release.

For the new release:

1. Create a new installation in a new location. For example, create the fullinstall_new installation directory. Then unzip the release zip file into that directory. (For example, unzip timesten181410.server.linux8664.zip into the fullinstall_new directory.) See "TimesTen installations" and "Creating an installation on Linux/UNIX" for detailed information.

       % mkdir fullinstall_new
       % cd fullinstall_new
       % unzip /swdir/TimesTen/ttinstallers/timesten181410.server.linux8664.zip
       [...UNZIP OUTPUT...]

2. Run the ttInstanceCreate utility to create the instance. This example runs the ttInstanceCreate utility interactively. See "ttInstanceCreate" in the Oracle TimesTen In-Memory Database Reference and "Creating an instance on Linux/UNIX: Basics" in this book for details.

   User input appears after the bracketed default at each prompt. Where nothing follows the brackets, the default was accepted.

       % installation_dir/tt18.1.4.1.0/bin/ttInstanceCreate

       NOTE: Each TimesTen instance is identified by a unique name.
             The instance name must be a non-null alphanumeric string, not longer
             than 255 characters.

       Please choose an instance name for this installation? [ tt181 ] inst1814_new
       Instance name will be 'inst1814_new'.
       Is this correct? [ yes ]
       Where would you like to install the inst1814_new instance of TimesTen? [ /home/ttuser ] /scratch/ttuser
       Creating instance in /scratch/ttuser/inst1814_new ...
       INFO: Mapping files from the installation to /scratch/ttuser/inst1814_new/install
       TCP port 6624 is in use!

       NOTE: If you are configuring TimesTen for use with Oracle Clusterware, the
             daemon port number must be the same across all TimesTen installations
             managed within the same Oracle Clusterware cluster.

       ** The default daemon port (6624) is already in use or within a range of 8
          ports of an existing TimesTen instance. You must assign a unique daemon
          port number for this instance. This installer will not allow you to
          assign another instance a port number within a range of 8 ports of the
          port you assign below.

       NOTE: All installations that replicate to each other must use the same daemon
             port number that is set at installation time. The daemon port number can
             be verified by running 'ttVersion'.

       Please enter a unique port number for the TimesTen daemon (<CR>=list)? [ ] 6324

       In order to use the 'TimesTen Application-Tier Database Cache' feature in any
       databases created within this installation, you must set a value for the
       TNS_ADMIN environment variable. It can be left blank, and a value can be
       supplied later using <install_dir>/bin/ttInstanceModify.

       Please enter a value for TNS_ADMIN (s=skip)? [ ] s
       What is the TCP/IP port number that you want the TimesTen Server to listen on? [ 6325 ]

       Would you like to use TimesTen Replication with Oracle Clusterware? [ no ]

       NOTE: The TimesTen daemon startup/shutdown scripts have not been installed.

       The startup script is located here :
               '/scratch/ttuser/inst1814_new/startup/tt_inst1814_new'

       Run the 'setuproot' script :
               /scratch/ttuser/inst1814_new/bin/setuproot -install
       This will move the TimesTen startup script into its appropriate location.

       The 18.1 Release Notes are located here :
         '/scratch/ttuser/181410/tt18.1.4.1.0/README.html'

       Starting the daemon ...
       TimesTen Daemon (PID: 3253, port: 6324) startup OK.

3. From the instance of the new release, create a database. Ensure you have sourced the environment variables, made all necessary changes to your connection attributes in the sys.odbc.ini (or the odbc.ini) file, and started the daemon (if not already started).

   To create the database:

       % ttIsql -connstr "DSN=new_database1;AutoCreate=1" -e "quit"

       Copyright (c) 1996, 2020, Oracle and/or its affiliates. All rights reserved.
       Type ? or "help" for help, type "exit" to quit ttIsql.

       connect "DSN=new_database1;AutoCreate=1";
       Connection successful: DSN=new_database1;
       UID=instadmin;DataStore=/scratch/databases/new_database1;
       DatabaseCharacterSet=AL32UTF8;ConnectionCharacterSet=AL32UTF8;
       PermSize=128;
       (Default setting AutoCommit=1)

       quit;
       Disconnecting...
       Done.

   The database will be empty at this point.

4. From the instance of the new release, run the ttMigrate utility with the -r and -relaxedUpgrade options to restore the backed up database to the new release. For example:

       % ttMigrate -r -relaxedUpgrade new_database1 /tmp/database1.data

       Restoring table TTUSER.JOBS
         Restoring rows...
         19/19 rows restored.
       Table successfully restored.

       Restoring table TTUSER.REGIONS
         Restoring rows...
         4/4 rows restored.
       Table successfully restored.

       Restoring table TTUSER.COUNTRIES
         Restoring rows...
         25/25 rows restored.
         Restoring foreign key dependency COUNTR_REG_FK on TTUSER.REGIONS
       Table successfully restored.

       Restoring table TTUSER.LOCATIONS
         Restoring rows...
         23/23 rows restored.
         Restoring foreign key dependency LOC_C_ID_FK on TTUSER.COUNTRIES
       Table successfully restored.

       Restoring table TTUSER.DEPARTMENTS
         Restoring rows...
         27/27 rows restored.
         Restoring foreign key dependency DEPT_LOC_FK on TTUSER.LOCATIONS
       Table successfully restored.

       Restoring table TTUSER.EMPLOYEES
         Restoring rows...
         107/107 rows restored.
         Restoring foreign key dependency EMP_DEPT_FK on TTUSER.DEPARTMENTS
         Restoring foreign key dependency EMP_JOB_FK on TTUSER.JOBS
       Table successfully restored.

       Restoring table TTUSER.JOB_HISTORY
         Restoring rows...
         10/10 rows restored.
         Restoring foreign key dependency JHIST_DEPT_FK on TTUSER.DEPARTMENTS
         Restoring foreign key dependency JHIST_EMP_FK on TTUSER.EMPLOYEES
         Restoring foreign key dependency JHIST_JOB_FK on TTUSER.JOBS
       Table successfully restored.

       Restoring view TTUSER.EMP_DETAILS_VIEW
       View successfully restored.

       Restoring sequence TTUSER.DEPARTMENTS_SEQ
       Sequence successfully restored.

       Restoring sequence TTUSER.EMPLOYEES_SEQ
       Sequence successfully restored.

       Restoring sequence TTUSER.LOCATIONS_SEQ
       Sequence successfully restored.

Once the database is operational in the new release, create a backup of this database to have a valid restoration point for your database. Once you have created a backup of your database, you may delete the ttMigrate copy of your database (in this example, /tmp/database1.data). Optionally, for the old release, you can remove the instance and delete the installation.
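Before deleting the ttMigrate file, it can help to confirm that every table reported matching row counts on both the save and the restore. The sketch below scans captured ttMigrate output for the "N/M rows saved." and "N/M rows restored." lines shown in the examples above and flags any table where N differs from M. The helper name check_row_counts is hypothetical, and the sketch assumes the output was captured to a file or pipe in the format shown in this section.

```shell
#!/bin/sh
# Sketch only: verify the row counts in captured ttMigrate output.
# check_row_counts is a hypothetical helper name.

# Reads ttMigrate output on standard input.
# Exit status 0: every count matched. Exit status 1: a mismatch was found.
check_row_counts() {
    awk '
        # Remember the table currently being saved or restored.
        ($1 == "Saving" || $1 == "Restoring") && $2 == "table" { table = $3 }

        /rows (saved|restored)\./ {
            # Locate the N/M token wherever it appears on the line.
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^[0-9]+\/[0-9]+$/) {
                    split($i, n, "/")
                    if (n[1] + 0 != n[2] + 0) {
                        print "row count mismatch for " table ": " $i
                        bad = 1
                    }
                }
            }
        }

        END { exit bad + 0 }
    '
}

# Example (migrate_restore.log is a placeholder for your captured output):
# check_row_counts < migrate_restore.log
```

This checks only what ttMigrate itself reported; it does not compare the contents of the old and new databases.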

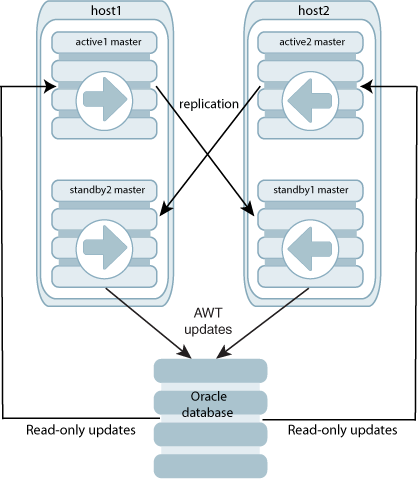

Online upgrade: Using TimesTen replication

When upgrading to a new release of TimesTen Classic, you may have a mission-critical database that must remain continuously available to your applications. You can use TimesTen replication to keep two copies of a database synchronized, even when the databases are from different releases of TimesTen, allowing your applications to stay connected to one copy of the database while the instance for the other database is being upgraded. When the upgrade is finished, any updates that have been made on the active database are transmitted immediately to the database in the upgraded instance, and your applications can then be switched with no data loss and no downtime. See "Performing an online upgrade with classic replication" for information.

The online upgrade process supports only updates to user tables during the upgrade. The tables to be replicated must have a PRIMARY KEY or a unique index on non-nullable columns. Data definition changes such as CREATE TABLE or CREATE INDEX are not replicated except in the case for an active standby pair with DDLReplicationLevel set to 2. In the latter case, CREATE TABLE and CREATE INDEX are replicated.

Because two copies of the database (or two copies of each database, if there is more than one) are required during the upgrade, you must have twice the memory and disk space normally required if you perform the upgrade on a single host.

Notes:

- Online major upgrades for active standby pairs with cache groups are only supported for read-only cache groups.
- Online major upgrades for active standby pairs that are managed by Oracle Clusterware are not supported.

Performing an online upgrade with classic replication

This section describes how to use the TimesTen replication feature to perform online upgrades for applications that require continuous data availability.

This procedure is for classic replication in a unidirectional, bidirectional, or multidirectional scenario.

Typically, applications that require high availability of their data use TimesTen replication to keep at least one extra copy of their databases up to date. An online upgrade works by keeping one of these two copies available to the application while the other is being upgraded. The procedures described in this section assume that you have a bidirectional replication scheme configured and running for two databases, as described in "Unidirectional or bidirectional replication" in the Oracle TimesTen In-Memory Database Replication Guide.

Note the following:

- For active standby pairs, see "Online upgrades for an active standby pair with no cache groups" and "Online upgrades for an active standby pair with cache groups" for details. Online major upgrades for active standby pairs with cache groups are only supported for read-only cache groups. For other cache group types, see "Offline upgrades for an active standby pair with cache groups" instead.
- For the use of Oracle Clusterware, see "Performing an online TimesTen upgrade when using Oracle Clusterware" for information. Online major upgrades are not supported for active standby pairs managed by Oracle Clusterware.

The following sections describe how to perform an online upgrade with replication.

Requirements

To perform online upgrades with replication, replication must be configured to use static ports. See "Port assignments" in Oracle TimesTen In-Memory Database Replication Guide for information.

Additional disk space must be allocated to hold a backup copy of the database made by the ttMigrate utility. The size of the backup copy is typically about the same as the in-use size of the database. This size may be determined by querying the v$monitor view, using ttIsql:

Command> SELECT perm_in_use_size FROM v$monitor;

Upgrade steps

The following steps illustrate how to perform an online upgrade while replication is running. The upgrade host is the host on which the database upgrade is being performed, and the active host is the host containing the database to which the application remains connected.

Note:

The following steps are for a standard upgrade. Upgrading from a database in TimesTen 11.2.1 that has the connection attribute ReplicationApplyOrdering set to 0, or from a database in TimesTen 11.2.1 or higher that has ReplicationParallelism set to less than 2, requires that you re-create the database, even if the releases are from the same major release.

| Step | Upgrade host | Active host |
|---|---|---|
| 1. | Configure replication to replicate to the active host using static ports. | Configure replication to replicate to the upgrade host using static ports. |
| 2. | n/a | Connect all applications to the active database, if they are not connected. |
| 3. | Disconnect all applications from the database that will be upgraded. | n/a |
| 4. | n/a | Set replication to the upgrade host to the PAUSE state. |
| 5. | Wait for updates to propagate to the active host. | n/a |
| 6. | Stop replication. | n/a |
| 7. | Back up the database with ttMigrate -c and run ttDestroy to destroy the database. | n/a |
| 8. | Stop the TimesTen daemon for the old release. | n/a |
| 9. | Create a new installation and a new instance for the new release. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information. | n/a |
| 10. | Create a DSN for the post-upgrade database for the new release. Adjust parallelism options for the DSN. | n/a |
| 11. | Restore the database from the backup with ttMigrate -r. | n/a |
| 12. | Clear the replication bookmark and logs using ttRepAdmin -receiver -reset and by setting replication to the active host to the stop and then the start state. | n/a |
| 13. | Start replication. | n/a |
| 14. | n/a | Set replication to the upgrade host to the start state, ensuring that the accumulated updates propagate once replication is restarted. |
| 15. | n/a | Start replication. |
| 16. | n/a | Wait for all of the updates to propagate to the upgrade host. |
| 17. | Reconnect all applications to the post-upgrade database. | n/a |

After the above procedures are completed on the upgrade host, the active host can be upgraded using the same steps.

Online upgrade example

This section describes how to perform an online upgrade in a scenario with two bidirectionally replicated databases.

In the following discussion, the two hosts are referred to as the upgrade host, on which the instance (with its databases) is being upgraded, and the active host, which remains operational and connected to the application for the duration of the upgrade. After the procedure is completed, the same steps can be followed to upgrade the active host. However, you may prefer to delay conversion of the active host to first test the upgraded instance.

The upgrade host in this example consists of the database upgrade on the server upgradehost. The active host consists of the database active on the server activehost.

Follow these steps in the order they are presented:

| Step | Upgrade host | Active host |
|---|---|---|
| 1. | Use ttIsql to alter the replication scheme repscheme, setting static replication port numbers so that the databases can communicate across releases. | Use ttIsql to alter the replication scheme repscheme, setting static replication port numbers so that the databases can communicate across releases. |
| 2. | Disconnect all production applications connected to the database. Any workload being run on the upgrade host must start running on the active host instead. | Use the ttRepAdmin utility to pause replication from the database active to the database upgrade (ttRepAdmin -receiver -name upgrade -state pause active). This command temporarily stops those updates from being replicated. See "Set the replication state of subscribers" in Oracle TimesTen In-Memory Database Replication Guide for details. |
| 3. | Wait for all replication updates to be sent to the database active. You can verify that all updates have been sent by applying a recognizable update to a table reserved for that purpose on the database upgrade. When the update appears in the database active, you know that all previous updates have been sent. For example, call the ttRepSubscriberWait built-in procedure: Command> call ttRepSubscriberWait (,,,,60); < 00 > 1 row found. See "ttRepSubscriberWait" in the Oracle TimesTen In-Memory Database Reference for information. | n/a |
| 4. | Stop the replication agent with ttAdmin (ttAdmin -repStop upgrade). From this point on, no updates are sent to the other database. | Stop the replication agent with ttAdmin (ttAdmin -repStop active). From this point on, no updates are sent to the other database. See "Starting and stopping the replication agents" in Oracle TimesTen In-Memory Database Replication Guide for details. |
| 5. | Use ttMigrate to back up the database upgrade. If the database is very large, this step could take a significant amount of time. If sufficient disk space is free on the /backup file system, use the following ttMigrate command: ttMigrate -c upgrade /backup/upgrade.dat | n/a |
| 6. | If the ttMigrate command is successful, destroy the database upgrade (ttDestroy upgrade). | Restart the replication agent on the database active (ttAdmin -repStart active). |
| 7. | Create a new installation and a new instance for the new release. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information. | Resume replication from active to upgrade by setting the replication state to start (ttRepAdmin -receiver -name upgrade -state start active). |
| 8. | Use ttMigrate to load the backup created in step 5 into the database upgrade for the new release. Run, in order: ttMigrate -r upgrade /backup/upgrade.dat; ttAdmin -ramLoad upgrade. Note: In this step, you must use the | n/a |
| 9. | Use ttRepAdmin to clear the replication bookmark and logs by resetting the receiver state for the database active and then setting replication to the stop state and then the start state. Run, in order: ttRepAdmin -receiver -name active -reset upgrade; ttRepAdmin -receiver -name active -state stop upgrade; sleep 10; ttRepAdmin -receiver -name active -state start upgrade; sleep 10. Note: The | n/a |
| 10. | Use ttAdmin to start the replication agent on the new database upgrade and to begin sending updates to the database active (ttAdmin -repStart upgrade). | n/a |
| 11. | Verify that the database upgrade is receiving updates from the database active. You can verify that updates are sent by applying a recognizable update to a table reserved for that purpose in the database active. When the update appears in upgrade, you know that replication is operational. | If the applications are still running on the database active, let them continue until the database upgrade has been successfully migrated and you have verified that the updates are being replicated correctly from active to upgrade. |
| 12. | n/a | Once you are sure that updates are replicated correctly, you can disconnect all of the applications from the database active and reconnect them to the database upgrade. After verifying that the last of the updates from active are replicated to upgrade, the instance with active is ready to be upgraded. Note: You may choose to delay upgrading the instance with |
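The bookmark reset in step 9 is a fixed sequence of commands, so it lends itself to scripting. The following is a minimal sketch, not part of the documented procedure, that only builds the command sequence shown in the table as argument lists and does not run anything. The function name bookmark_reset_plan and its parameters are hypothetical; the ttRepAdmin options and the 10-second pauses are taken from the table.

```python
from typing import List


def bookmark_reset_plan(receiver: str, store: str, settle_seconds: int = 10) -> List[List[str]]:
    """Return the step 9 commands as argv lists; nothing is executed here.

    receiver: name passed to -name (the database "active" in the example).
    store:    the local database the command runs against ("upgrade" in the example).
    """
    base = ["ttRepAdmin", "-receiver", "-name", receiver]
    return [
        base + ["-reset", store],                 # clear the bookmark and logs
        base + ["-state", "stop", store],         # set replication to the stop state
        ["sleep", str(settle_seconds)],           # pause, as in the table
        base + ["-state", "start", store],        # set replication to the start state
        ["sleep", str(settle_seconds)],
    ]


if __name__ == "__main__":
    # Dry run: print the commands so they can be reviewed before use.
    for argv in bookmark_reset_plan("active", "upgrade"):
        print(" ".join(argv))
```

Printing the plan first makes it easy to compare against the table before any command is run on the upgrade host.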

Performing an upgrade with active standby pair replication

Active standby pair replication provides high availability of your data to your applications. With active standby pairs, you can perform an online upgrade to maintain continuous availability of your data, unless you are upgrading to a new major release in a configuration that also uses asynchronous writethrough cache groups. This section describes the following procedures:

-

Online upgrades for an active standby pair with no cache groups

-

Online upgrades for an active standby pair with cache groups

-

Offline upgrades for an active standby pair with cache groups

Note:

Only asynchronous writethrough or read-only cache groups are supported with active standby pairs.

Online upgrades for an active standby pair with no cache groups

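This section includes the following topics for online upgrades in a scenario with active standby pairs and no cache groups:

-

Online patch upgrade for standby master and subscriber

-

Online patch upgrade for active master

-

Online major upgrade for active standby pair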

Also see "Performing an online upgrade with classic replication" for an overview, limitations, and requirements.

Online patch upgrade for standby master and subscriber

To perform an online upgrade to a new patch release for the standby master database and subscriber databases, complete the following tasks on each database. For this procedure, assume there are no cache groups.

-

Stop the replication agent on the database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the master2 standby database:

ttAdmin -repStop master2

-

Create a new installation and a new instance for the new release. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Restart the replication agent using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master2

Online patch upgrade for active master

To perform an online upgrade to a new patch release for the active master database, you must first reverse the roles of the active and standby master databases, then perform the upgrade. For this procedure, assume there are no cache groups.

-

Pause any applications that are generating updates on the active master database.

-

Run the ttRepSubscriberWait built-in procedure on the active master database, using the DSN and host of the standby master database. (The result of the call should be 00. If the value is 01, call ttRepSubscriberWait again until the value 00 is returned.) For example, to ensure that all transactions are replicated to the master2 standby master on the master2host host:

call ttRepSubscriberWait( null, null, 'master2', 'master2host', 120 );

-

Stop the replication agent on the current active master database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the master1 active master database:

ttAdmin -repStop master1

-

Execute the ttRepDeactivate built-in procedure on the current active master database. This puts the database in the IDLE state:

call ttRepDeactivate;

-

On the standby master database, set the database to the ACTIVE state using the ttRepStateSet built-in procedure. This database becomes the active master in the active standby pair:

call ttRepStateSet( 'ACTIVE' );

-

Resume any applications that were paused in step 1, connecting them to the database that is now acting as the active master (for example, master2).

Note:

At this point, replication will not yet occur from the new active database to subscriber databases. Replication will resume after the host for the new standby database has been upgraded and the replication agent of the new standby database is running.

-

Upgrade the instance of the former active master database, which is now the standby master database. See "Offline upgrade: Moving to a different patch or patch set: ttInstanceModify" for details.

-

Restart replication on the database in the upgraded instance (master1, the former active master), using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master1

-

To make the database in the newly upgraded instance the active master database again, see "Reversing the roles of the active and standby databases" in the Oracle TimesTen In-Memory Database Replication Guide.
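Step 2 above asks you to repeat ttRepSubscriberWait until it returns 00. The retry logic can be sketched as follows. This is an illustration under stated assumptions, not a TimesTen API: call_wait is a hypothetical hook that you supply (for example, a wrapper that runs the call through ttIsql or a database driver) and that returns the result of one ttRepSubscriberWait call as the string "00" or "01".

```python
from typing import Callable


def wait_until_replicated(call_wait: Callable[[], str], max_attempts: int = 5) -> int:
    """Invoke call_wait until it returns "00"; return how many calls were needed.

    call_wait is a hypothetical hook (an assumption of this sketch) that performs
    one ttRepSubscriberWait call and returns its result, "00" or "01".
    Raises TimeoutError if "00" is not seen within max_attempts calls.
    """
    for attempt in range(1, max_attempts + 1):
        if call_wait() == "00":
            return attempt
    raise TimeoutError(f"standby not caught up after {max_attempts} attempts")


if __name__ == "__main__":
    # Simulated results standing in for real calls: two timeouts, then success.
    simulated = iter(["01", "01", "00"])
    print(wait_until_replicated(lambda: next(simulated)))
```

Bounding the number of attempts keeps a stalled standby from blocking the role reversal indefinitely; choose the limit to suit your own maintenance window.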

Online major upgrade for active standby pair

When you perform an online upgrade for an active standby pair to a new major release of TimesTen, you must explicitly specify the TCP/IP port for each database. If your active standby pair replication scheme is not configured with a PORT attribute for each database, you must use the following steps to prepare for the upgrade. For this procedure, assume there are no cache groups. (Online major upgrades for active standby pairs with cache groups are only supported for read-only cache groups.)

-

Stop the replication agent on every database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent on the master1 database:

ttAdmin -repStop master1

-

On the active master database, use the ALTER ACTIVE STANDBY PAIR statement to specify a PORT attribute for every database in the active standby pair. For example, to set a PORT attribute for the master1 database on the master1host host, the master2 database on the master2host host, and the subscriber1 database on the subscriber1host host:

ALTER ACTIVE STANDBY PAIR
  ALTER STORE master1 ON "master1host" SET PORT 30000
  ALTER STORE master2 ON "master2host" SET PORT 30001
  ALTER STORE subscriber1 ON "subscriber1host" SET PORT 30002;

-

Destroy the standby master database and all of the subscribers using the ttDestroy utility. For example, to destroy the subscriber1 database:

ttDestroy subscriber1

-

Follow the normal procedure to start an active standby pair and duplicate the standby and subscriber databases from the active master. See "Setting up an active standby pair with no cache groups" in the Oracle TimesTen In-Memory Database Replication Guide for details.
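When a scheme has many subscribers, writing one ALTER STORE clause per database by hand is error-prone. The sketch below generates the statement shown in step 2 from a list of (store, host, port) entries. The function name alter_pair_set_ports and the validation rules (port range, no port reused on the same host) are assumptions of this example, not requirements stated in this guide.

```python
from typing import Iterable, Tuple


def alter_pair_set_ports(stores: Iterable[Tuple[str, str, int]]) -> str:
    """Build an ALTER ACTIVE STANDBY PAIR statement with one SET PORT clause per store."""
    clauses = []
    used = set()
    for store, host, port in stores:
        if not 1 <= port <= 65535:
            raise ValueError(f"port out of range for {store}: {port}")
        if (host, port) in used:
            # Sanity check chosen for this sketch: one port per database on a host.
            raise ValueError(f"port {port} used twice on {host}")
        used.add((host, port))
        clauses.append(f'  ALTER STORE {store} ON "{host}" SET PORT {port}')
    if not clauses:
        raise ValueError("at least one store is required")
    return "ALTER ACTIVE STANDBY PAIR\n" + "\n".join(clauses) + ";"


if __name__ == "__main__":
    print(alter_pair_set_ports([
        ("master1", "master1host", 30000),
        ("master2", "master2host", 30001),
        ("subscriber1", "subscriber1host", 30002),
    ]))
```

Run with the three entries above, the sketch reproduces the statement from step 2; review the generated text before executing it on the active master database.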

To upgrade the instances of the active standby pair, first upgrade the instance of the standby master. While this node is being upgraded, there is no standby master database, so updates on the active master database are propagated directly to the subscriber databases. Following the upgrade of the standby node, the active and standby roles are switched and the new standby node is created from the new active node. Finally, the subscriber nodes are upgraded.

-

Instruct the active master database to stop replicating updates to the standby master by executing the ttRepStateSave built-in procedure on the active master database. For example, to stop replication to the master2 standby master database on the master2host host:

call ttRepStateSave( 'FAILED', 'master2', 'master2host' );

-

Stop the replication agent on the standby master database using the ttRepStop built-in procedure or the ttAdmin utility. The following example stops the replication agent for the master2 standby master database:

ttAdmin -repStop master2

-

Use the ttMigrate utility to back up the standby master database to a binary file:

ttMigrate -c master2 master2.bak

See "ttMigrate" in the Oracle TimesTen In-Memory Database Reference for details.

-

Destroy the standby master database using the ttDestroy utility:

ttDestroy master2

-

Create a new installation and a new instance on the master2host standby master host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

In the new instance on master2host, use ttMigrate to restore the standby master database from the binary file created earlier. (This example performs a checkpoint operation after every 20 megabytes of data has been restored.)

ttMigrate -r -C 20 master2 master2.bak

-

Start the replication agent on the standby master database using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master2

When the standby master database in the upgraded instance has become synchronized with the active master database, this standby master database moves from the RECOVERING state to the STANDBY state. The standby master database also starts sending updates to the subscribers. You can determine when the standby master database is in the STANDBY state by calling the ttRepStateGet built-in procedure:

call ttRepStateGet;

-

Pause any applications that are generating updates on the active master database.

-

Execute the ttRepSubscriberWait built-in procedure on the active master database, using the DSN and host of the standby master database. (The result of the call should be 00. If the value is 01, call ttRepSubscriberWait again until the value 00 is returned.) For example, to ensure that all transactions are replicated to the master2 standby master on the master2host host:

call ttRepSubscriberWait( null, null, 'master2', 'master2host', 120 );

-

Stop the replication agent on the active master database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the master1 active master database:

ttAdmin -repStop master1

-

On the standby master database, set the database to the ACTIVE state using the ttRepStateSet built-in procedure. This database becomes the active master in the active standby pair:

call ttRepStateSet( 'ACTIVE' );

-

Instruct the new active master database (master2, in our example) to stop replicating updates to what is now the standby master (master1) by executing the ttRepStateSave built-in procedure on the active master database. For example, to stop replication to the master1 standby master database on the master1host host:

call ttRepStateSave( 'FAILED', 'master1', 'master1host' );

-

Destroy the former active master database using the ttDestroy utility:

ttDestroy master1

-

Create the new installation and the instance for the new release on master1host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Create a new standby master database by duplicating the new active master database, using the ttRepAdmin utility. For example, to duplicate the master2 database on the master2host host to the master1 database, use the following on the host containing the master1 database:

ttRepAdmin -duplicate -from master2 -host master2host -uid pat -pwd patpwd -setMasterRepStart master1

-

Start the replication agent on the new standby master database using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master1

-

Stop the replication agent on the first subscriber database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the subscriber1 subscriber database:

ttAdmin -repStop subscriber1

-

Destroy the subscriber database using the ttDestroy utility:

ttDestroy subscriber1

-

Create a new installation and a new instance for the new release on the subscriber host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Create the subscriber database by duplicating the new standby master database, using the ttRepAdmin utility, as follows:

ttRepAdmin -duplicate -from master1 -host master1host -uid pat -pwd patpwd -setMasterRepStart subscriber1

-

Start the replication agent for the duplicated subscriber database using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart subscriber1

-

Repeat step 17 through step 21 for each other subscriber database.
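Because steps 17 through 21 repeat unchanged for every subscriber, the command list can be generated per subscriber. The sketch below only produces the text of the commands shown above; it runs nothing. The function name subscriber_upgrade_commands is hypothetical, as is the second subscriber name subscriber2 used in the demonstration. The user pat and password patpwd come from the example in step 20. Creating the new installation and instance (step 19) remains a manual step and appears as a comment line.

```python
from typing import List


def subscriber_upgrade_commands(subscriber: str, standby: str, standby_host: str,
                                uid: str, pwd: str) -> List[str]:
    """Return the commands for steps 17 through 21 for one subscriber database."""
    return [
        f"ttAdmin -repStop {subscriber}",                                  # step 17
        f"ttDestroy {subscriber}",                                         # step 18
        "# create the new installation and instance on the subscriber host",  # step 19
        f"ttRepAdmin -duplicate -from {standby} -host {standby_host} "
        f"-uid {uid} -pwd {pwd} -setMasterRepStart {subscriber}",          # step 20
        f"ttAdmin -repStart {subscriber}",                                 # step 21
    ]


if __name__ == "__main__":
    # subscriber2 is a made-up name used only to show the repetition.
    for name in ("subscriber1", "subscriber2"):
        for line in subscriber_upgrade_commands(name, "master1", "master1host", "pat", "patpwd"):
            print(line)
        print()
```

Upgrading subscribers one at a time, as the procedure describes, keeps the remaining subscribers receiving updates while each one is rebuilt.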

Online upgrades for an active standby pair with cache groups

This section includes the following topics for online upgrades in a scenario with active standby pairs and cache groups:

-

Online patch upgrade for standby master and subscriber (cache groups)

-

Online major upgrade for active standby pair (read-only cache groups)

Also see "Performing an online upgrade with classic replication" for an overview, limitations, and requirements.

Online patch upgrade for standby master and subscriber (cache groups)

To perform an online upgrade to a new patch release for the standby master database and subscriber databases, in a configuration with cache groups, complete the following tasks on each database (with exceptions noted).

-

Stop the replication agent on the database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the master2 standby database:

ttAdmin -repStop master2

-

Stop the cache agent on the standby database using the ttCacheStop built-in procedure or the ttAdmin utility:

ttAdmin -cacheStop master2

-

Create a new installation and a new instance for the new release. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Restart the cache agent on the standby database using the ttCacheStart built-in procedure or the ttAdmin utility:

ttAdmin -cacheStart master2

-

Restart the replication agent using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master2

Note:

Steps 2 and 4, stopping and restarting the cache agent, are not applicable for subscriber databases.

Online patch upgrade for active master (cache groups)

To perform an online upgrade to a new patch release for the active master database, in a configuration with cache groups, perform the following steps. You must first reverse the roles of the active and standby master databases, then perform the upgrade.

-

Pause any applications that are generating updates on the active master database.

-

Stop the cache agent on the current active master database using the ttCacheStop built-in procedure or the ttAdmin utility:

ttAdmin -cacheStop master1

-

Execute the ttRepSubscriberWait built-in procedure on the active master database, using the DSN and host of the standby master database. For example, to ensure that all transactions are replicated to the master2 standby master on the master2host host:

call ttRepSubscriberWait( null, null, 'master2', 'master2host', 120 );

-

Stop the replication agent on the current active master database using the ttRepStop built-in procedure or the ttAdmin utility. For example, to stop the replication agent for the master1 active master database:

ttAdmin -repStop master1

-

Execute the ttRepDeactivate built-in procedure on the current active master database. This puts the database in the IDLE state:

call ttRepDeactivate;

-

On the standby master database, set the database to the ACTIVE state using the ttRepStateSet built-in procedure. This database becomes the active master in the active standby pair:

call ttRepStateSet( 'ACTIVE' );

-

Resume any applications that were paused in step 1, connecting them to the database that is now acting as the active master (in this example, the master2 database).

-

Upgrade the instance for the former active master database, which is now the standby master database. See "Offline upgrade: Moving to a different patch or patch set: ttInstanceModify" for details.

-

Restart the cache agent on the post-upgrade database using the ttCacheStart built-in procedure or the ttAdmin utility:

ttAdmin -cacheStart master1

-

Restart replication on the post-upgrade database using the ttRepStart built-in procedure or the ttAdmin utility:

ttAdmin -repStart master1

-

To make the post-upgrade database the active master database again, see "Reversing the roles of the active and standby databases" in the Oracle TimesTen In-Memory Database Replication Guide.

Online major upgrade for active standby pair (read-only cache groups)

Complete the following steps to perform a major upgrade from an 11.2.2 release to an 18.1 release in a scenario with an active standby pair with read-only cache groups.

These steps assume that master1 is the active master database on the master1host host and master2 is the standby master database on the master2host host.

Note:

For more information on the built-in procedures and utilities discussed here, see "Built-In Procedures" and "Utilities" in the Oracle TimesTen In-Memory Database Reference.

-

On the active master host, run the ttAdmin utility to stop the replication agent for the active master database.

ttAdmin -repStop master1

-

On the active master database, use the DROP ACTIVE STANDBY PAIR statement to drop the active standby pair. For example, from the ttIsql utility:

Command> DROP ACTIVE STANDBY PAIR;

-

On the active master database, use the CREATE ACTIVE STANDBY PAIR statement to create a new active standby pair with the cache groups excluded. Ensure that you explicitly specify the TCP/IP port for each database.

Command> CREATE ACTIVE STANDBY PAIR master1 ON "master1host", master2 ON "master2host"
           STORE master1 ON "master1host" PORT 20000
           STORE master2 ON "master2host" PORT 20010
           EXCLUDE CACHE GROUP cacheuser.readcache;

Note:

You can use the cachegroups command within the ttIsql utility to identify all the cache groups defined in the database. In this example, readcache is a read-only cache group owned by the cacheuser user.

-

On the active master database, call the ttRepStateSet built-in procedure to set the replication state for the active master database to ACTIVE.

Command> call ttRepStateSet('ACTIVE');

To verify that the replication state for the active master database is set to ACTIVE, call the ttRepStateGet built-in procedure.

Command> call ttRepStateGet();
< ACTIVE >
1 row found.

-

On the active master database, call the ttRepStart built-in procedure to start the replication agent.

Command> call ttRepStart();

-

On the standby master host, run the ttAdmin utility to stop the replication agent for the standby master database.

ttAdmin -repStop master2

-

On the standby master host, run the ttAdmin utility to stop the cache agent for the standby master database.

ttAdmin -cacheStop master2

-

On the standby master host, run the ttDestroy utility to destroy the standby master database. You must either add the -force option or first drop all cache groups.

ttDestroy -force master2

-

Create a new standby master database by duplicating the active master database with the ttRepAdmin utility. For example, to duplicate the master1 database on the master1host host to the master2 database, run the following on the host containing the master2 database:

ttRepAdmin -duplicate -from master1 -host master1host -UID pat -PWD patpwd -keepCG -cacheUid cacheuser -cachePwd cachepwd master2

Note:

You need a user with ADMIN privileges defined in the active master database for it to be duplicated. In this example, the pat user identified by the patpwd password has ADMIN privileges.

To keep the cache group tables, you must supply a cache administration user when you add the -keepCG option. In this example, the cacheuser user identified by the cachepwd password is a cache administration user.

-

On the new standby master database, use the DROP CACHE GROUP statement to drop all the cache groups.

Command> DROP CACHE GROUP cacheuser.readcache;

-

On the standby master host, run the ttMigrate utility to back up the standby master database to a binary file.

ttMigrate -c master2 master2.bak

-

On the standby master host, run the ttDestroy utility to destroy the standby master database.

ttDestroy master2

-

Create a new installation and a new instance for the new release on the standby master host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

In the new instance on the standby master host, run the ttMigrate utility to restore the standby master database from the binary file created earlier.

ttMigrate -r -C 20 master2 master2.bak

Note:

This example performs a checkpoint operation after every 20 MB of data has been restored.

-

On the standby master database, use the CREATE USER statement to create a new cache administration user.

Command> CREATE USER cacheuser2 IDENTIFIED BY cachepwd;
Command> GRANT CREATE SESSION, CACHE_MANAGER, CREATE ANY TABLE, DROP ANY TABLE TO cacheuser2;

Note:

You must create the new cache administration user in the Oracle database and grant the user the minimum set of privileges required to perform cache group operations. See "Create users in the Oracle database" in the Oracle TimesTen Application-Tier Database Cache User's Guide for information.

-

Connect to the standby master database as the cache administration user, and call the ttCacheUidPwdSet built-in procedure to set the new cache administration user name and password. Ensure you specify the cache administration user password for the Oracle database in the OraclePWD connection attribute within the connection string.

ttIsql "DSN=master2;UID=cacheuser2;PWD=cachepwd;OraclePWD=oracle"
Command> call ttCacheUidPwdSet('cacheuser2','oracle');

-

On the standby master database, call the ttCacheStart built-in procedure to start the cache agent.

Command> call ttCacheStart();

-

On the standby master database, call the ttRepStart built-in procedure to start the replication agent.

Command> call ttRepStart();

The replication state will automatically be set to STANDBY. You can call the ttRepStateGet built-in procedure to confirm this. (This occurs asynchronously and may take a little time.)

Command> call ttRepStateGet();
< STANDBY >
1 row found.

-

On the standby master database, use the CREATE READONLY CACHE GROUP statement to create all the read-only cache groups.

Command> CREATE READONLY CACHE GROUP cacheuser2.readcache
           AUTOREFRESH INTERVAL 10 SECONDS
           FROM oratt.readtbl
             (keyval NUMBER NOT NULL PRIMARY KEY, str VARCHAR(32));

Note:

Ensure that the cache administration user has SELECT privileges on the cache group tables in the Oracle database. In this example, the cacheuser2 user has SELECT privileges on the readtbl table owned by the oratt user in the Oracle database. For more information, see "Create the Oracle Database tables to be cached" in the Oracle TimesTen Application-Tier Database Cache User's Guide.

-

On the standby master database, use the LOAD CACHE GROUP statement to load the data from the Oracle database tables into the TimesTen cache groups.

Command> LOAD CACHE GROUP cacheuser2.readcache COMMIT EVERY 200 ROWS;

-

Pause any applications that are generating updates on the active master database.

-

On the active master database, call the ttRepSubscriberWait built-in procedure using the DSN and host of the standby master database. For example, to ensure that all transactions are replicated to the master2 database on the master2host host:

Command> call ttRepSubscriberWait(NULL,NULL,'master2','master2host',120);

-

On the active master database, call the ttRepStop built-in procedure to stop the replication agent.

Command> call ttRepStop();

-

On the active master database, call the ttRepDeactivate built-in procedure to set the replication state for the active master database to IDLE.

Command> call ttRepDeactivate();

-

On the standby master database, call the ttRepStateSet built-in procedure to set the replication state for the standby master database to ACTIVE. This database and its host become the active master in the active standby pair replication scheme.

Command> call ttRepStateSet('ACTIVE');

Note:

In this example, the master2 database on the master2host host just became the active master in the active standby pair replication scheme. Likewise, the master1 database on the master1host host is henceforth considered the standby master in the active standby pair replication scheme.

-

On the new active master database, call the ttRepStop built-in procedure to stop the replication agent.

Command> call ttRepStop();

-

On the active master database, use the ALTER CACHE GROUP statement to set the AUTOREFRESH state of all cache groups to PAUSED.

Command> ALTER CACHE GROUP cacheuser2.readcache SET AUTOREFRESH STATE PAUSED;

-

On the active master database, use the DROP ACTIVE STANDBY PAIR statement to drop the active standby pair.

Command> DROP ACTIVE STANDBY PAIR;

-

On the active master database, use the CREATE ACTIVE STANDBY PAIR statement to create a new active standby pair with the cache groups included. Ensure you explicitly specify the TCP/IP port for each database.

Command> CREATE ACTIVE STANDBY PAIR master1 ON "master1host", master2 ON "master2host"
           STORE master1 ON "master1host" PORT 20000
           STORE master2 ON "master2host" PORT 20010;

-

On the active master database, call the ttRepStateSet built-in procedure to set the replication state for the active master database to ACTIVE.

Command> call ttRepStateSet('ACTIVE');

-

On the active master database, call the ttRepStart built-in procedure to start the replication agent.

Command> call ttRepStart();

-

Resume any applications that were paused in step 21, connecting them to the new active master database.

-

On the new standby master host, run the ttDestroy utility to destroy the new standby master database.

ttDestroy master1

-

Create a new installation and a new instance for the new release on the standby master host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Create a new standby master database by duplicating the active master database with the ttRepAdmin utility. For example, to duplicate the master2 database on the master2host host to the master1 database, run the following on the host containing the master1 database:

ttRepAdmin -duplicate -from master2 -host master2host -UID pat -PWD patpwd -keepCG -cacheUid cacheuser2 -cachePwd cachepwd master1

-

On the standby master host, run the ttAdmin utility to start the cache agent for the standby master database.

ttAdmin -cacheStart master1

-

On the standby master host, run the ttAdmin utility to start the replication agent for the standby master database.

ttAdmin -repStart master1
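The procedure above creates the active standby pair twice: once with the cache groups excluded (step 3) and once with them included (step 29). The two statements differ only in the EXCLUDE CACHE GROUP clause, which the following sketch makes explicit. The function name create_pair_statement is hypothetical. Joining several cache group names with commas in one clause is an assumption of this sketch; the example in this section shows only a single name, so check the SQL reference before excluding more than one.

```python
from typing import Sequence, Tuple

Store = Tuple[str, str, int]  # (database name, host name, port)


def create_pair_statement(first: Store, second: Store,
                          exclude_cache_groups: Sequence[str] = ()) -> str:
    """Build a CREATE ACTIVE STANDBY PAIR statement with explicit ports."""
    lines = [
        f'CREATE ACTIVE STANDBY PAIR {first[0]} ON "{first[1]}", '
        f'{second[0]} ON "{second[1]}"',
    ]
    for name, host, port in (first, second):
        lines.append(f'  STORE {name} ON "{host}" PORT {port}')
    if exclude_cache_groups:
        # Assumption: multiple names are comma-separated within a single clause.
        lines.append("  EXCLUDE CACHE GROUP " + ", ".join(exclude_cache_groups))
    return "\n".join(lines) + ";"


if __name__ == "__main__":
    m1 = ("master1", "master1host", 20000)
    m2 = ("master2", "master2host", 20010)
    print(create_pair_statement(m1, m2, ["cacheuser.readcache"]))  # as in step 3
    print(create_pair_statement(m1, m2))                           # as in step 29
```

Generating both statements from the same store definitions helps keep the port numbers identical across the two CREATE ACTIVE STANDBY PAIR steps.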

Offline upgrades for an active standby pair with cache groups

Performing a major upgrade in a scenario with an active standby pair with asynchronous writethrough cache groups requires an offline upgrade. This is discussed in the subsection that follows.

Offline major upgrade for active standby pair (cache groups)

Complete the following steps to perform a major upgrade from an 11.2.2 release to an 18.1 release in a scenario with an active standby pair with cache groups. You must perform this upgrade offline.

These steps assume master1 is an active master database on the master1host host and master2 is a standby master database on the master2host host. (For information about the built-in procedures and utilities discussed, refer to "Built-In Procedures" and "Utilities" in Oracle TimesTen In-Memory Database Reference.)

-

Stop any updates to the active database before you upgrade.

-

From master1, call the ttRepSubscriberWait built-in procedure to ensure that all data updates have been applied to the standby database, where numsec is the desired wait time.

call ttRepSubscriberWait(null, null, 'master2', 'master2host', numsec);

-

From master2, call ttRepSubscriberWait to ensure that all data updates have been applied to the Oracle database.

call ttRepSubscriberWait(null, null, '_ORACLE', null, numsec);

-

On master1host, use the ttAdmin utility to stop the replication agent for the active database.

ttAdmin -repStop master1

-

On master2host, use ttAdmin to stop the replication agent for the standby database.

ttAdmin -repStop master2

-

On master1host, call the ttCacheStop built-in procedure or use ttAdmin to stop the cache agent for the active database.

ttAdmin -cacheStop master1

-

On master2host, call ttCacheStop or use ttAdmin to stop the cache agent for the standby database.

ttAdmin -cacheStop master2

-

On master1host, use the ttMigrate utility to back up the active database to a binary file.

ttMigrate -c master1 master1.bak

-

On master1host, use the ttDestroy utility to destroy the active database. You must either use the -force option or first drop all cache groups. If you use -force, run the script cacheCleanup.sql afterward.

ttDestroy -force /data_store_path/master1

The cacheCleanup.sql script is a SQL*Plus script, located in the installation_dir/oraclescripts directory (and accessible through timesten_home/install/oraclescripts), that you run after connecting to the Oracle database as the cache user. It takes as parameters the host name and the database name (with full path). For information, refer to "Dropping Oracle Database objects used by autorefresh cache groups" in the Oracle TimesTen Application-Tier Database Cache User's Guide.

-

Create a new installation and a new instance for the new major release on master1host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

Create a new database in 18.1.w.x using ttIsql with DSN connection attribute setting AutoCreate=1. In this new database, create a cache user. The following example is a sequence of commands to execute in ttIsql to create this cache user and give it appropriate access privileges.

The cache user requires ADMIN privilege to execute the next step, ttMigrate -r. Once migration is complete, you can revoke the ADMIN privilege from this user if desired.

Command> CREATE USER cacheuser IDENTIFIED BY cachepassword;
Command> GRANT CREATE SESSION, CACHE_MANAGER, CREATE ANY TABLE, DROP ANY TABLE TO cacheuser;
Command> GRANT ADMIN TO cacheuser;

-

In the new instance on master1host, use the ttMigrate utility as the cache user to restore master1 from the binary file created earlier. (This example performs a checkpoint operation after every 20 megabytes of data has been restored, and assumes the password is the same in the Oracle database as in TimesTen.)

ttMigrate -r -cacheuid cacheuser -cachepwd cachepassword -C 20 -connstr "DSN=master1;uid=cacheuser;pwd=cachepassword;oraclepwd=cachepassword" master1.bak

-

On master1host, use ttAdmin to start the replication agent.

ttAdmin -repStart master1

Note:

This step also sets the database to the active state. You can then call the ttRepStateGet built-in procedure (which takes no parameters) to confirm the state.

-

On master1host, call the ttCacheStart built-in procedure or use ttAdmin to start the cache agent.

ttAdmin -cacheStart master1

Then you can use the ttStatus utility to confirm the replication and cache agents have started.

-

Put each automatic refresh cache group into the AUTOREFRESH PAUSED state. This example uses ttIsql:

Command> ALTER CACHE GROUP mycachegroup SET AUTOREFRESH STATE paused;

-

From master1, reload each cache group, specifying the name of the cache group and how often to commit during the operation. This example uses ttIsql:

Command> LOAD CACHE GROUP cachegroupname COMMIT EVERY n ROWS;

You can optionally specify parallel loading as well. See the "LOAD CACHE GROUP" SQL statement in the Oracle TimesTen In-Memory Database SQL Reference for details.

-

On master2host, use ttDestroy to destroy the standby database. You must either use the -force option or first drop all cache groups. If you use -force, run the script cacheCleanup.sql afterward (as discussed earlier).

ttDestroy -force /data_store_path/master2

-

Create the new installation and the new instance for the new major release on master2host. See "Creating an installation on Linux/UNIX" and "Creating an instance on Linux/UNIX: Basics" for information.

-

In the new instance on master2host, use the ttRepAdmin utility with the -duplicate option to create a duplicate of active database master1 to use as standby database master2. Specify the appropriate administrative user on master1 and the cache manager user and password, and use -keepCG to keep the cache groups.

ttRepAdmin -duplicate -from master1 -host master1host -uid pat -pwd patpwd -cacheUid orcluser -cachePwd orclpwd -keepCG master2

-

On master2host, use ttAdmin to start the replication agent. (You could optionally have used the ttRepAdmin option -setMasterRepStart in the previous step instead.)

ttAdmin -repStart master2

-

On master2, the replication state will automatically be set to STANDBY. You can call the ttRepStateGet built-in procedure to confirm this. (This occurs asynchronously and may take a little time.)

call ttRepStateGet();

-

On master2host, call the ttCacheStart built-in procedure or use ttAdmin to start the cache agent.

ttAdmin -cacheStart master2

After this, you can use the ttStatus utility to confirm the replication and cache agents have started.

If you want to create read-only subscriber databases, on each subscriber host you can create the subscriber by using the ttRepAdmin utility -duplicate option to duplicate the standby database. The following example creates subscriber1, using the same ADMIN user as above and the -nokeepCG option to convert the cache tables to normal TimesTen tables, as appropriate for a read-only subscriber.

ttRepAdmin -duplicate -from master2 -host master2host -nokeepCG -uid pat -pwd patpwd subscriber1

For related information, refer to "Rolling out a disaster recovery subscriber" in the Oracle TimesTen In-Memory Database Replication Guide.
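The shutdown phase of the procedure above can be sketched as a dry run. This is only an illustration: print_quiesce_plan is a hypothetical helper, it prints the commands instead of running them, and the database and file names are the ones used in the examples. In a real upgrade each command runs on the host of the database it names, after the ttRepSubscriberWait calls have returned.

```shell
#!/bin/sh
# Dry-run sketch (hypothetical helper, not a TimesTen utility).
# Prints, in the order given in the procedure, the commands that stop the
# agents on both masters and then save the active database.
# Arguments: active database, standby database, backup file name.
print_quiesce_plan() {
    active=${1:-master1}
    standby=${2:-master2}
    backup=${3:-master1.bak}

    # Replication agents are stopped first, then cache agents;
    # within each pair the active database comes before the standby.
    for action in -repStop -cacheStop; do
        for db in "$active" "$standby"; do
            echo "ttAdmin $action $db"
        done
    done

    # Back up the active database to a binary file before destroying it.
    echo "ttMigrate -c $active $backup"
}

print_quiesce_plan master1 master2 master1.bak
```

Printing the plan first makes it easier to review the order against the numbered steps before running anything on a live pair.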

Performing an offline TimesTen upgrade when using Oracle Clusterware

This section discusses the steps for an offline upgrade of TimesTen when using TimesTen with Oracle Clusterware. You have the option of also upgrading Oracle Clusterware, independently, while upgrading TimesTen. (See "Performing an online TimesTen upgrade when using Oracle Clusterware" for details on online upgrade.)

Notes:

-

These instructions apply for either a TimesTen patch upgrade (18.1.w.x to 18.1.y.z) or a TimesTen major upgrade (11.2.2 to 18.1).

-

Refer to Oracle TimesTen In-Memory Database Release Notes for information about versions of Oracle Clusterware that are supported by TimesTen.

For this procedure, except where noted, you can execute the ttCWAdmin commands from any host in the cluster. Each command affects all hosts.

-

Stop the replication agents on the databases in the active standby pair:

ttCWAdmin -stop -dsn advancedDSN

-

Drop the active standby pair:

ttCWAdmin -drop -dsn advancedDSN

-

Stop the TimesTen cluster agent. This removes the hosts from the cluster and stops the TimesTen daemon:

ttCWAdmin -shutdown

-

Upgrade TimesTen on the desired hosts.

-

To perform a TimesTen patch upgrade, each node in the cluster must have TimesTen from the same major release.

-

To perform a TimesTen major upgrade, you must use ttMigrate. See "Offline upgrade: Moving to a different major release" for details.

-

Upgrade Oracle Clusterware if desired. See the Oracle Clusterware Administration and Deployment Guide in the Oracle Database documentation for information.

-

If you have upgraded Oracle Clusterware, use the ttInstanceModify utility to configure TimesTen with Oracle Clusterware. On each host, run:

ttInstanceModify -crs

For Linux or UNIX hosts, see "Change the Oracle Clusterware configuration for an instance" for details.

-

Start the TimesTen cluster agent. This includes the hosts defined in the cluster as specified in ttcrsagent.options. This also starts the TimesTen daemon.

ttCWAdmin -init

-

Create the active standby pair replication scheme:

ttCWAdmin -create -dsn advancedDSN

Important: The host from which you run this command must have access to the cluster.oracle.ini file. (See "Configuring Oracle Clusterware management with the cluster.oracle.ini file" in the Oracle TimesTen In-Memory Database Replication Guide for information about this file.)

-

Start the active standby pair replication scheme:

ttCWAdmin -start -dsn advancedDSN
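As a rough summary, the ttCWAdmin commands in this procedure form a symmetric sequence around the upgrade itself: stop, drop, and shut down, then initialize, create, and start. The sketch below only prints that sequence. print_cw_offline_plan is a hypothetical helper, and the DSN defaults to the advancedDSN used in the examples.

```shell
#!/bin/sh
# Dry-run sketch (hypothetical helper): prints the ttCWAdmin command order
# for the offline upgrade described above. Nothing is executed.
# Argument: the DSN of the active standby pair.
print_cw_offline_plan() {
    dsn=${1:-advancedDSN}

    # Tear down: stop replication agents, drop the pair, stop the cluster agent.
    echo "ttCWAdmin -stop -dsn $dsn"
    echo "ttCWAdmin -drop -dsn $dsn"
    echo "ttCWAdmin -shutdown"

    # Manual work happens here; it is not automated by this sketch.
    echo "# upgrade TimesTen (and, if desired, Oracle Clusterware) on the hosts"

    # Bring up: start the cluster agent, recreate and start the pair.
    echo "ttCWAdmin -init"
    echo "ttCWAdmin -create -dsn $dsn"
    echo "ttCWAdmin -start -dsn $dsn"
}

print_cw_offline_plan advancedDSN
```

The ttInstanceModify -crs step is left out because it applies only when Oracle Clusterware itself was upgraded, and it runs on each host rather than once for the cluster.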

Performing an online TimesTen upgrade when using Oracle Clusterware

This section discusses how to perform an online rolling upgrade (patch) for TimesTen, from TimesTen 18.1.w.x to 18.1.y.z, in a configuration where Oracle Clusterware manages active standby pairs. (See "Performing an offline TimesTen upgrade when using Oracle Clusterware" for an offline upgrade.)

The following topics are covered:

-

Upgrades for multiple active standby pairs on many pairs of hosts

-

Upgrades for multiple active standby pairs on a pair of hosts

Notes:

-

Refer to Oracle TimesTen In-Memory Database Release Notes for supported versions of Oracle Clusterware.

Supported configurations

The following basic configurations are supported for online rolling upgrades for TimesTen. In all cases, Oracle Clusterware manages the hosts.

-

One active standby pair on two hosts.

-

Multiple active standby pairs with one database on each host.

-

Multiple active standby pairs with one or more databases on each host.

(Other scenarios, such as with additional spare hosts, are effectively equivalent to one of these scenarios.)

Restrictions and assumptions

Note the following assumptions for upgrading TimesTen when using Oracle Clusterware:

-

The existing active standby pairs are configured and operating properly.

-

Oracle Clusterware commands are used correctly to stop and start the standby database.

-

The upgrade does not change the TimesTen environment for the active and standby databases.

-

These instructions are for TimesTen patch upgrades only. Online major upgrades are not supported in configurations where Oracle Clusterware manages active standby pairs.

-

There are at least two hosts managed by Oracle Clusterware.

Multiple active or standby databases managed by Oracle Clusterware can exist on a host only if there are at least two hosts in the cluster.
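Because the online procedure applies to patch upgrades only, it can help to check the two release numbers before starting. The following is a minimal sketch, assuming both releases follow the a.b.c.d.e numbering described in the overview, where a.b identifies the major release. upgrade_kind is a hypothetical helper, not a TimesTen utility.

```shell
#!/bin/sh
# Sketch (hypothetical helper): classify a move between two release numbers.
# Assumes the a.b.c.d.e scheme, where the first two fields are the major release.
upgrade_kind() {
    from_major=$(echo "$1" | cut -d. -f1,2)
    to_major=$(echo "$2" | cut -d. -f1,2)
    if [ "$from_major" = "$to_major" ]; then
        echo patch   # same major release: binary compatible
    else
        echo major   # different major release: database must be recreated
    fi
}

upgrade_kind 18.1.3.5.0 18.1.4.1.0   # prints "patch"
upgrade_kind 11.2.2.8.0 18.1.4.1.0   # prints "major"
```

A result of "major" means the online rolling upgrade in this section does not apply and the offline procedure should be used instead.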

Important:

Upgrade Oracle Clusterware if desired, but not concurrently with an online TimesTen upgrade. When performing an online TimesTen patch upgrade in configurations where Oracle Clusterware manages active standby pairs, you must perform the Clusterware upgrade independently and separately, either before or after the TimesTen upgrade.

Note:

For information about Oracle Clusterware, see the Oracle Clusterware Administration and Deployment Guide in the Oracle Database documentation.

Upgrade tasks for one active standby pair

This section describes the following tasks:

Note:

In examples in the following subsections, the host name is host2, the DSN is myDSN, the instance name is upgrade2, and the instance administrator is terry.

Verify that the active standby pair is operating properly

Complete these steps to confirm that the active standby pair is operating properly.

-

Verify the following.

-

The active and the standby databases run a TimesTen 18.1.w.x release.

-

The active and standby databases are on separate hosts managed by Oracle Clusterware.

-

Replication is working.

-

If the active standby pair replication scheme includes cache groups, the following are true:

-

AWT and SWT writes are working from the standby database in TimesTen to the Oracle database.

-

Refreshes are working from the Oracle database to the active database in TimesTen.

-

Run the ttCWAdmin -status -dsn yourDSN command to verify the following.

-

The active database is on a different host than the standby database.

-

The state of the active database is 'ACTIVE' and the status is 'AVAILABLE'.

-

The state of the standby database is 'STANDBY' and the status is 'AVAILABLE'.

-

Run the ttStatus command on the active database to verify the following.

-

The ttCRSactiveservice and ttCRSmaster processes are running.

-

The subdaemon and the replication agents are running.

-

If the active standby pair replication scheme includes cache groups, the cache agent is running.

-

Run the ttStatus command on the standby database to verify the following.

-

The ttCRSsubservice and ttCRSmaster processes are running.

-

The subdaemon and the replication agents are running.

-

If the active standby pair replication scheme includes cache groups, the cache agent is running.
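The process checks above can be partly scripted. The sketch below searches captured ttStatus output for the process names listed in these steps. check_crs_procs is a hypothetical helper, and it assumes only that the names appear somewhere in the output; it does not rely on any particular ttStatus layout. The sample text in the last line is a placeholder, not real ttStatus output.

```shell
#!/bin/sh
# Sketch (hypothetical helper): confirm that text captured from ttStatus
# mentions the Clusterware processes expected for a database role.
# Arguments: role ("active" or "standby"), captured ttStatus text.
check_crs_procs() {
    role=$1
    status_text=$2

    # The service process name differs by role; ttCRSmaster is common to both.
    case $role in
        active)  service=ttCRSactiveservice ;;
        standby) service=ttCRSsubservice ;;
        *) echo "unknown role: $role" >&2; return 2 ;;
    esac

    for name in "$service" ttCRSmaster; do
        if ! printf '%s\n' "$status_text" | grep -q "$name"; then
            echo "missing: $name"
            return 1
        fi
    done
    echo "ok"
}

# Placeholder input for illustration only.
check_crs_procs active "ttCRSactiveservice ttCRSmaster"
```

On a real host the second argument would be the captured output, for example check_crs_procs standby "$(ttStatus)". The replication agent, subdaemon, and cache agent checks still need to be read from the ttStatus output directly.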

Shut down the standby database

Complete these steps to shut down the standby database.

-

Run an Oracle Clusterware command similar to the following to obtain the names of the Oracle Clusterware Master, Daemon, and Agent processes on the host of the standby database. It is suggested to filter the output by using the