Configuring Gateway Node Domains

Configure the domains for each of your gateway nodes, including configuring authentication providers, SSL certificates for passing requests to HTTPS endpoints, and locking down your nodes.

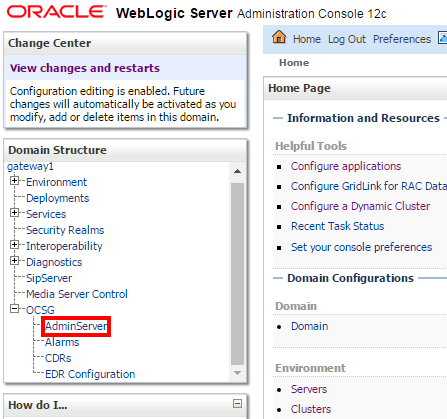

Signing in to the WebLogic Administration Console for a Gateway Node Domain

Supported WebLogic Authentication Providers

Oracle API Platform Cloud Service - Classic supports WebLogic authentication providers in the gateway node domain for authenticating users in identity management systems.

| Name | Description |
|---|---|
| WebLogic Authentication provider | Accesses user and group information in WebLogic Server's embedded LDAP server. |
| Oracle Internet Directory Authentication provider | Accesses users and groups in Oracle Internet Directory, an LDAP version 3 directory. |
| Oracle Virtual Directory Authentication provider | Accesses users and groups in Oracle Virtual Directory, an LDAP version 3 enabled service. |
| LDAP Authentication providers | Access external LDAP stores. You can use an LDAP Authentication provider to access any LDAP server. WebLogic Server provides LDAP Authentication providers already configured for Open LDAP, Sun iPlanet, Microsoft Active Directory, and Novell NDS LDAP servers. |
| RDBMS Authentication providers | Access external relational databases. WebLogic Server provides three RDBMS Authentication providers: SQL Authenticator, Read-only SQL Authenticator, and Custom RDBMS Authenticator. |
| WebLogic Identity Assertion provider | Validates X.509 and IIOP-CSIv2 tokens and optionally can use a user name mapper to map that token to a user in a WebLogic Server security realm. |
| SAML Authentication provider | Authenticates users based on Security Assertion Markup Language 1.1 (SAML) assertions. |
| Negotiate Identity Assertion provider | Uses Simple and Protected Negotiate (SPNEGO) tokens to obtain Kerberos tokens, validates the Kerberos tokens, and maps Kerberos tokens to WebLogic users. |
| SAML Identity Assertion provider | Acts as a consumer of SAML security assertions. This enables WebLogic Server to act as a SAML destination site and supports using SAML for single sign-on. |

See also About Configuring the Authentication Providers in WebLogic Server in Administering Security for Oracle WebLogic Server.

Configure WebLogic Authentication Providers

Configure SSL Certificates to Pass Requests to Services Over HTTPS

To pass requests to backend service endpoints using the HTTPS protocol, you must first import the required SSL certificates into the WebLogic trust stores for your gateway node domains.
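
As a minimal sketch of the import step, the following Python helper builds a `keytool -importcert` command for adding a backend certificate to a trust store. The certificate file name, alias, keystore path, and password shown here are hypothetical placeholders; the actual trust store location and credentials depend on how your gateway node domain is configured.

```python
import shlex


def build_import_command(cert_file, alias, keystore, storepass):
    """Return the keytool argument list that imports cert_file as a trusted certificate."""
    return [
        "keytool", "-importcert",
        "-trustcacerts",
        "-alias", alias,          # name under which the certificate is stored
        "-file", cert_file,       # certificate exported from the backend service
        "-keystore", keystore,    # trust store used by the gateway node domain
        "-storepass", storepass,
        "-noprompt",              # do not ask for interactive confirmation
    ]


if __name__ == "__main__":
    # All values below are placeholders, not real paths or credentials.
    cmd = build_import_command(
        "backend.pem", "backend-service", "/path/to/trust.jks", "changeit"
    )
    print(shlex.join(cmd))
```

The helper only assembles the command so that you can review it before running it; passing the store password on the command line exposes it to other local users, so an interactive prompt may be preferable in practice.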

Gateway Node Lockdown

A gateway node exposes REST endpoints for the APIs deployed on it. In addition to the deployed APIs, each node has internal REST endpoints that must be secured. Lockdown restricts access to these internal endpoints to the local servers in the domain.

Endpoints on a Gateway Node

Learn about the endpoints exposed by gateway nodes.

Gateway nodes expose the following types of REST endpoints:

- Gateway controller endpoints

- Internal endpoints

- WebLogic Administration Console endpoint

- Deployed API endpoints

Gateway controller endpoints provide management control over the gateway node, letting you control how the node polls and what state it is in. These endpoints use the /apiplatform context root and are deployed on the managed servers. See REST API for the Gateway Controller in Oracle API Platform Cloud Service.

The gateway node exposes internal REST endpoints that the gateway controller invokes to deploy APIs and applications. These internal endpoints use the /prm_pm_rest context root and are deployed on the node domain’s managed servers.

The WebLogic Administration Console endpoint is deployed on the Administration Server of the gateway node domain. This endpoint uses the /console context root and exists only on the Administration Server.

All deployed Oracle API Platform Cloud Service - Classic APIs will have endpoints on the managed servers. The context roots will be decided by the API Manager. API Managers can’t use /prm_pm_rest and /apiplatform as API endpoints.
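
The context roots described above can be summarized in a short Python sketch that classifies a request path and checks whether a proposed API endpoint collides with a reserved root. The function names and category labels are hypothetical, and treating every path under a reserved root as reserved is an assumption; the sketch also ignores which server (Administration Server or managed server) hosts each endpoint.

```python
# Context roots that API Managers cannot use for deployed APIs.
RESERVED_CONTEXT_ROOTS = ("/prm_pm_rest", "/apiplatform")


def _under(path, root):
    """True if path is the context root itself or a resource below it."""
    return path == root or path.startswith(root + "/")


def classify_endpoint(path):
    """Map a request path to one of the four endpoint types on a gateway node."""
    if _under(path, "/apiplatform"):
        return "gateway-controller"
    if _under(path, "/prm_pm_rest"):
        return "internal"
    if _under(path, "/console"):
        return "admin-console"
    return "deployed-api"


def is_valid_api_endpoint(path):
    """Assumption: an API endpoint is rejected if it falls under a reserved root."""
    return path.startswith("/") and not any(
        _under(path, root) for root in RESERVED_CONTEXT_ROOTS
    )
```

For example, `classify_endpoint("/apiplatform/gatewaynode/v1/registration")` yields `"gateway-controller"`, while `is_valid_api_endpoint("/prm_pm_rest/orders")` is `False` under the prefix assumption stated above.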

Lock Down a Gateway Node

Use the lockdown gateway node installer action to lock down a node.

/prm_pm_rest and /apiplatform contexts) from any host other than the machine you specified are rejected with the following HTTP 403 Forbidden error: IP address is not allowed.

listenIpAddress with * (an asterisk) and run the lockdown action again.
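
The effect of lockdown on incoming requests can be modeled with a small Python sketch. This is an illustrative model only, not the gateway's implementation: the function name is hypothetical, and treating a listenIpAddress of * as "no restriction" is an assumption inferred from the text above.

```python
from http import HTTPStatus

# Context roots protected by lockdown, as named in this section.
LOCKED_CONTEXT_ROOTS = ("/prm_pm_rest", "/apiplatform")


def check_request(path, remote_ip, listen_ip_address):
    """Return (status, message) for a request under the modeled lockdown rule."""
    locked = any(
        path == root or path.startswith(root + "/")
        for root in LOCKED_CONTEXT_ROOTS
    )
    # Assumption: "*" means the node is not locked down to a single machine.
    if not locked or listen_ip_address == "*" or remote_ip == listen_ip_address:
        return HTTPStatus.OK, ""
    return HTTPStatus.FORBIDDEN, "IP address is not allowed."
```

In this model, a request to /prm_pm_rest from a host other than the configured machine returns 403 with the message quoted above, while requests to deployed APIs are unaffected.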

Additional Gateway Node Lockdown Scenarios

Learn about additional gateway node lockdown scenarios.

Locking Down the Administration Server

The WebLogic Administration Console is accessible only with the administrative user credentials for the gateway node domain. This user is created when you install a gateway node domain. As this user, you can shut down the Administration Server to further secure the gateway node. The gateway node then operates in a limited capacity. After you shut down the Administration Server, the following gateway controller endpoints are unavailable:

- /apiplatform/gatewaynode/v1/security/credentials

- /apiplatform/gatewaynode/v1/security/profile

- /apiplatform/gatewaynode/v1/registration

See REST API for the Gateway Controller in Oracle API Platform Cloud Service.

Multiple Ethernet Interfaces Exist

If the machine you install a node on has multiple Ethernet interfaces, the lockdown action uses the ones specified in the listenIpAddress property in the gateway-props.json file. The lockdown action does not support loopback IPs or localhost.

Note:

Loopback IPs or localhost invocations to the gateway do not work and are not supported, even before lockdown.
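
A quick pre-check of the value you plan to use for listenIpAddress can catch unsupported addresses before you run the lockdown action. The following Python sketch uses the standard `ipaddress` module; the function name is hypothetical, and the check covers only the loopback and localhost restriction described above (it does not handle the * value or verify that the address belongs to an interface on the machine).

```python
import ipaddress


def validate_listen_ip(value):
    """Return the parsed address, or raise ValueError for values lockdown does not support."""
    candidate = value.strip()
    if candidate.lower() == "localhost":
        raise ValueError("localhost is not supported by the lockdown action")
    # ip_address() itself raises ValueError for anything that is not an IP literal.
    address = ipaddress.ip_address(candidate)
    if address.is_loopback:
        raise ValueError(f"loopback address {address} is not supported")
    return address


if __name__ == "__main__":
    for value in ("10.0.0.5", "127.0.0.1", "localhost"):
        try:
            print(value, "->", validate_listen_ip(value))
        except ValueError as err:
            print(value, "-> rejected:", err)
```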

Configure Gateway Node Firewall Properties in the WebLogic Administration Console

application/json and application/xml types to APIs deployed on the gateway. These limitations are not applied to the management endpoints that the gateway controller exposes.

You can also configure firewall properties for all nodes registered to a gateway in the Management Portal.

Additional Firewall Properties

Your network implementation can be vulnerable to denial-of-service (DoS) attacks, which generally try to interfere with legitimate communication inside the Gateway in Oracle API Platform Cloud Service - Classic.

To prevent these messages from reaching your network, Gateways in Oracle API Platform Cloud Service - Classic offer configurable RESTful message filtering. You configure this filtering behavior by using the ApiFirewall configuration MBean. ApiFirewall determines how Gateways in Oracle API Platform Cloud Service - Classic filter messages attempting to enter Oracle API Platform Cloud Service.

| Attack Strategy | Protection Strategy | Default Result |
|---|---|---|
| Malicious Content Attack, including RESTful message attacks | The ApiFirewall MBean settings (application tier) limit the acceptance of oversize message entities. | Rejects the message and returns the error message specified with the ErrorStatus attribute of ApiFirewallMBean. |
| Continuous wrong password attack | The default WebLogic Security Provider setting (application tier) locks a subscriber out for 30 minutes after 5 wrong password attempts. This behavior is configurable. See the section on Protecting User Accounts in Administering Security for Oracle WebLogic Server for more information. | Rejects the message and returns a 500 Internal Server Error message. |
| External Entity Reference | The ApiFirewall in Gateways in Oracle API Platform Cloud Service (application tier) prohibits all references to external entities. It is possible to remove this protection. | Rejects the message and returns a 500 Internal Server Error message. |
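
To illustrate the kind of check behind the external entity protection, the following Python sketch rejects XML payloads that declare external entities, using the standard library's expat bindings. It is a generic illustration of the technique under stated assumptions, not a description of how ApiFirewall is implemented; the exception and function names are hypothetical.

```python
import xml.parsers.expat


class ExternalEntityError(ValueError):
    """Raised when a payload declares an entity that points outside the document."""


def reject_external_entities(xml_text):
    """Parse xml_text and raise ExternalEntityError on any external entity declaration."""
    parser = xml.parsers.expat.ParserCreate()

    def on_entity_decl(name, is_parameter, value, base, system_id, public_id, notation):
        # External entities carry a system or public identifier instead of a value.
        if system_id is not None or public_id is not None:
            raise ExternalEntityError(f"external entity '{name}' is not allowed")

    parser.EntityDeclHandler = on_entity_decl
    # Malformed XML raises xml.parsers.expat.ExpatError here.
    parser.Parse(xml_text, True)


if __name__ == "__main__":
    reject_external_entities("<order><id>1</id></order>")  # accepted
    payload = '<!DOCTYPE o [<!ENTITY x SYSTEM "file:///etc/passwd">]><o>&x;</o>'
    try:
        reject_external_entities(payload)
    except ExternalEntityError as err:
        print("rejected:", err)
```

A filter like this would typically map the raised error to an HTTP error response, in the same way the table above describes a 500 Internal Server Error as the default result.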

Configure Analytics Properties

Oracle API Platform Cloud Service - Classic gateway nodes use Logstash to collect analytics. Logstash aggregates data on each node before sending it to the management tier. Configure properties on each gateway node to determine how analytics are collected and how data is sent to the management tier.

Ensure that you perform this task as the user who installed the gateway. That user owns the analyticsagent.properties file you edit.

About Logstash Retry Logs

Oracle API Platform Cloud Service - Classic uses retry logs with Logstash to improve performance and reliability. If the network is under high load, has poor connectivity, or goes up and down frequently, the retry logs prevent Logstash from repeatedly stopping and starting, which would cause performance issues on the gateway.

When a processing failure occurs, in which a CSV cannot be sent or an EDR cannot be processed, events are written to separate log files, which then feed back into Logstash. Using multiple files prevents concurrency issues, since only one file can be written at a time. Each type of failure has its own log file:

- When EDRs are consumed before Logstash has been configured, the individual EDR log lines that failed are written to a Logs/RETRY_EDR_YYYY-MM-dd-HH-mm.%{edr_log_basename}.log file. Since tenant credentials can be updated dynamically, the config json file can be sent at any time while Logstash is running. One effect of this dynamic update is that EDRs can be read before the configuration file is sent. In these cases, these early EDRs are sent back into the RETRY_EDR log, and picked up by a separate Logstash file input, with a slower poll interval. These EDRs are aggregated by log_file matching the RETRY_EDR name.

- When the management tier does not send an HTTP 200 to the plugin because of bad credentials, HTTP timeouts, or other server errors, the retry events are written to a Logs/RETRY_HTTP_YYYY-MM-dd-HH-mm.log file. The CSV in these RETRY_HTTP events is still aggregated by the original log file name.

- When there is an HTTP failure because the tenant credentials are missing, the retry events are written to a Logs/RETRY_CRED_YYYY-MM-dd-HH-mm.log file. The processing behavior is the same as for the RETRY_HTTP logs. They go into a separate log to avoid concurrency issues. This type of retry should not happen in single tenant mode. In multi-tenant mode, it can happen between the time of tenant onboarding and the time Logstash gets the updated configuration file containing the new credentials.
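
The naming scheme for the three retry logs can be sketched in Python as follows. The failure keys ("edr", "http", "cred") and the function name are hypothetical, and the sketch assumes that YYYY-MM-dd-HH-mm means year, month, day, 24-hour hour, and minute.

```python
from datetime import datetime


def retry_log_path(failure, when, edr_log_basename=None):
    """Build the retry log path for a failure type at the given time."""
    # Assumed reading of the YYYY-MM-dd-HH-mm pattern.
    stamp = when.strftime("%Y-%m-%d-%H-%M")
    if failure == "edr":
        if not edr_log_basename:
            raise ValueError("EDR retries need the basename of the original EDR log")
        return f"Logs/RETRY_EDR_{stamp}.{edr_log_basename}.log"
    if failure == "http":
        return f"Logs/RETRY_HTTP_{stamp}.log"
    if failure == "cred":
        return f"Logs/RETRY_CRED_{stamp}.log"
    raise ValueError(f"unknown failure type: {failure}")


if __name__ == "__main__":
    now = datetime.now()
    for kind in ("http", "cred"):
        print(retry_log_path(kind, now))
    print(retry_log_path("edr", now, edr_log_basename="edr1"))
```

Because the timestamp has minute resolution, retries of the same type within the same minute map to the same file name in this sketch.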

In rare cases, EDR totals in the database might not match the totals in the log files. This can happen when a Logstash failure overlaps with a management tier failure. The mismatch should be relatively small.

Every retry creates a new line in one of the RETRY log files. If Logstash is restarted, it may try to reprocess the RETRY log files. Since the logic uses ?increaseOnly=true, no data is lost or overwritten.

Retry log files should be purged by file age and sincedb status. Purging is done by the Analytics agent.
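
The age criterion can be illustrated with a short Python sketch that lists retry logs older than a given threshold. The Analytics agent performs the actual purging; this sketch only reports candidates, does not delete anything, and does not consult sincedb status. The function name, the RETRY_*.log glob pattern, and the threshold are assumptions.

```python
import os
import time
from pathlib import Path


def stale_retry_logs(log_dir, max_age_seconds, now=None):
    """Return retry log files in log_dir whose last modification is older than the threshold."""
    now = time.time() if now is None else now
    return sorted(
        path
        for path in Path(log_dir).glob("RETRY_*.log")
        if path.is_file() and now - path.stat().st_mtime > max_age_seconds
    )


if __name__ == "__main__":
    # "Logs" is a placeholder directory; one day is an arbitrary example threshold.
    if os.path.isdir("Logs"):
        for path in stale_retry_logs("Logs", max_age_seconds=24 * 60 * 60):
            print(path)
```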