1 Get Started with Stream Analytics

Stream Analytics allows for the creation of custom operational dashboards that provide real-time monitoring and analyses of event streams in an Apache Spark based system. Stream Analytics enables customers to identify events of interest in their Apache Spark based system, execute queries against those event streams in real time and drive operational dashboards or raise alerts based on that analysis. Stream Analytics runs as a set of native Spark pipelines.

About Stream Analytics

Stream Analytics is an in-memory technology for real-time analytic computations on streaming data. The streaming data can originate from IoT sensors, web pipelines, log files, point-of-sale devices, ATM machines, social media, or from any other data source. Stream Analytics is available as a managed service in Oracle Cloud and as an on premises installation.

Stream Analytics is used to identify business threats and opportunities by filtering, aggregating, correlating, and analyzing high volume of data in real time.

Once a situation or alert is detected, appropriate action can be taken by triggering a business workflow using Oracle Integration or by sending results to a presentation service such as Oracle Analytics Cloud.

More precisely, Stream Analytics can be used in the following scenarios:

-

Build complex event processing pipelines by blending and transforming data from disparate transactional and non-transactional sources.

-

Perform temporal analytics based on time and event windows.

-

Perform location-based analytics using built-in spatial patterns.

-

Detect patterns in time-series data and execute real-time actions.

-

Build operational dashboards by visualizing processed data streams.

-

Use Machine Learning to score current event and predict next event.

-

Run ad-hoc queries on results of processed data streams.

Some industry specific examples include:

-

Detecting real-time fraud based on incoming transaction data.

-

Tracking transaction losses and margins in real-time to renegotiate with vendors and suppliers.

-

Improving asset maintenance by tracking healthy operating parameters and pro-actively scheduling maintenance.

-

Improving margins by continuously tracking demand and optimizing markdowns instead of randomly lowering prices.

-

Readjusting prices by continuously tracking demand, inventory levels, and product sentiment on social media etc.

-

Marketing and making real-time offers based on customer location and loyalty.

-

Instantly identifying shopping cart defections and improving conversion rates.

-

Upselling products and services by instantly identifying customer’s presence on company website.

-

Improving asset utilization by tracking average time it takes to load and unload merchandise.

-

Improving turnaround time by preparing dock and staff based on estimated arrival time of fleet.

-

Revising schedule estimates based on actual time to enter and exit loading zones, and so on.

Why Stream Analytics?

Various reasons and advantages encourage you to use Stream Analytics instead of similar products available in the industry.

Oracle Managed

There is no infrastructure to worry and you can get started in minutes with Stream Analytics. Scale out when your data volume and velocity increases without worrying about virtual machines, cluster management, and so on.

Simplicity

Author powerful data processing pipeliness using self-service web-based tool in Stream Analytics. The tool automatically generates a Spark pipeline along with instant visual validation of pipeline logic.Built on Apache Spark

Stream Analytics can attach to any version-compliant Yarn cluster running Spark and is first in the industry to bring event-by-event processing to Spark Streaming.Enterprise Grade

Stream Analytics is built on Apache Spark to provide full horizontal scale out and 24x7 availability of mission-critical workloads. Automated check-pointing ensures exact-once processing and zero data loss. Built-in governance provides full accountability of who did what and when to the system. As part of management and monitoring, Stream Analytics provides a visual representation of pipeline topology/relationships along with dataflow metrics to indicate number of events ingested, events dropped, and throughput of each pipeline.How Does Stream Analytics Work?

Stream Analytics starts with ingesting data from Kafka with first-class support for GoldenGate change data capture. Examining and analyzing the stream is performed by creating data pipelines.

A data pipeline can query data using time windows, look for patterns, and apply conditional logic while the data is still in motion. The query language used in Stream Analytics is called Continuous Query Language (CQL) and is similar to SQL. But CQL includes additional constructs for pattern matching and recognition. Though CQL is declarative, there is no need to write any code in Stream Analytics. The web-based tool automatically generates queries and the Spark Streaming pipeline. Once data is analyzed and situation is detected, the pipeline can terminate to trigger BPM workflows in Oracle Integration or save results into a Data Lake for deeper insights and intelligence using Oracle Analytics Cloud.

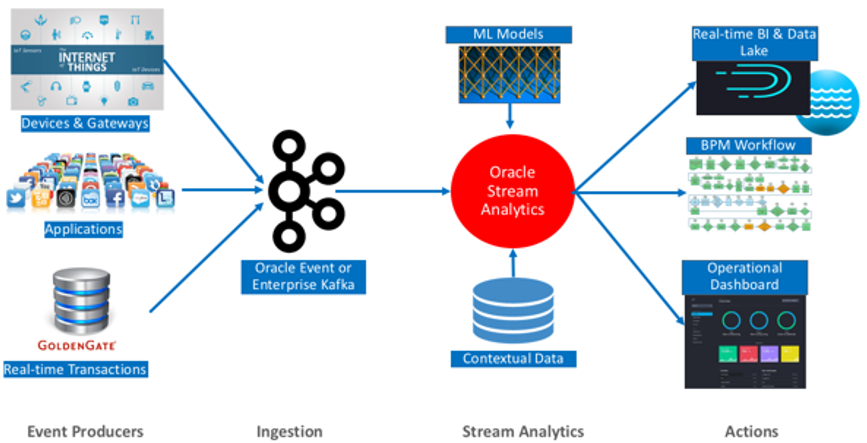

The following diagram illustrates the architecture of Stream Analytics:

Description of the illustration osacs_architecture.png

The analyzed data is used to build operational dashboards, trigger workflows, and it is saved to Data Lakes for business intelligence and ad-hoc queries.

Before You Begin with Stream Analytics

In Stream Analytics you typically start your work in the Catalog. The Catalog is a repository of entities that can be created by the user. There are different types of entities and in this section we are going to introduce each type one by one.

Every entity in the Catalog has a common subset of properties: a name, a description and several (zero, one or more) tags assigned to it. The tags assigned to an entity can later be used to filter the list of entities in the Catalog. In other words, tags can be used to arrange the entities into logical groups, such as projects by assigning the project name to the entity.

Entities may have one or more additional properties based on the entity’s type and purpose. The main and most important entity type is the pipeline, which represents a stream processing pipeline. However, other entities, such as Connections, Streams, References and Targets are required to create and/or make effective use of the pipeline.

Connection

A connection is a very basic artifact and the first entity that you need to create in the Catalog. It is a collection of metadata (such as URLs, credential and the like) required to connect to an external system. A connection is the basis for creation of sources (Streams, References or Geo Fences) and Targets.

It is possible to reuse the same connection to create multiple sources and/or targets. In other words, it can is reused to access different resources in the same system: for example different Kafka topics in the same Kafka cluster, or different database tables in the same Oracle database.

In this release, the only supported connection types are Kafka and Database.

Kafka Connection

A Kafka connection has just a single parameter, the Zookeeper server URL above all the standard properties (name, description, tags) of catalog objects.

The Zookeper URL is of the format host:port. If the port is not provided by the user, the system will assume the default Zookeeper port, i.e. 2181. Authentication to Kafka is not supported in this release.

Sources

There are two kinds of sources in Stream Analytics: streams and references. Though serve as an input to a pipeline, they are quite different. A stream is a representation of streaming data while a reference is that of static data. Streaming data is flowing into the system and is to be processed, whereas static data is used to enrich streaming data by pulling the static data from a static data source.

The initial or primary source of a pipeline must always be a stream. However additional sources can be both streams and/or references.

Stream

A Stream is a source of dynamic data. The data is flowing, it is not static or frozen. For example, stock prices of a particular company can be considered as a stream as the data arrives in every second or even more frequently. Another example of streaming data is the position (geographical location) of vehicles (e.g. trucks) which again can change continuously as each vehicle is moving. Each vehicle reports its own position to a central system periodically, e.g. every second, and the central system receives the position messages as a stream.

Streams can be transmitted using different network protocols, messaging systems as well as using many different message formats. In this release, the supported stream types are: Kafka and GoldenGate.

To create a Kafka stream, you must create a Kafka connection first, and then select that connection in the stream creation wizard. In addition to the connection, the user needs to specify the Kafka topic that represents the stream of data.

Shape

A Shape is the format of the data. In Stream Analytics, each message (or event, in stream processing terminology) in a Stream or Target must have the same format and this format must be specified when creating the Stream or Target. You can think of the shape as the streaming analogy of the database table structure for static data. Each shape consists of a number of fields and each field has a name and a data type. In the Stream creation wizard, it is possible to assign an alias to a field, so that the field can later be referenced by this user-given alias.

Assume that the stream contains data about orders. In this case, the shape may contain the following fields: an order id of type string, a customer id of type integer, product id of type integer, a quantity of type integer and a unit price of type Number.

Reference

A reference is a source of static data that provides contextual information about the event data. The metadata and contextual information of event data is stored in a reference. In this release, the only supported reference type is an Oracle Database table. A reference is basically a link/connection to a specific database table (rather than just to a database).

References are used to enrich data that arrives from a Stream. Referring back to the previous example, the order stream contains order events and each event contains a product Id and a customer Id. Assume that there are two database tables, each containing information about the products and the customers, respectively. After creating two references, one for the products table and one for the customer table, Stream Analytics can use these references to enrich the incoming streams with information from these tables, such as product name, manufacturer, customer name, address, etc.

While references take their data from a database table, a caching mechanism can be applied. By turning on caching (a configuration option of the reference), it is possible to add a caching layer in between the pipeline and the database table. This improves the performance of accessing static data, at the price of higher memory consumption by the pipeline. Once the data is loaded into cache, the reference fetches data from the cache only. Any update on the reference table does not take effect.

Geo Fence

A geo fence is a virtual boundary in a real world geographical area. This virtual boundary can be used to find object position with respect to the geo fence.

For example, the object position can be:

-

Near to geo fence

-

Exit geo fence

-

Based on Stay Duration in geo fence

-

Enters geo fence

-

Present inside geo fence

Target

A target represents an external system where the results of the stream processing is being directed to. Just like streams, targets are the links to the outside world. Streams are the input to a pipeline, whereas targets are the output. While a pipeline can consume and process multiple streams, as of this release, a pipeline can have maximum one target.

It can have no target, but that configuration does not really make sense, as the purpose of creating a pipeline is to process streaming data and direct the output to an external system, i.e a target.

Pipeline

A pipeline defines the pipeline logic and is a sequence of data processing stages. A stage can be one of the following types – Query, Pattern, Rule, Query Group.

A pipeline always starts with a stream and can optionally end with a target. The output stream of one stage is used as an input to another stage and a pipeline can be of arbitrary length with any combination of above stage types.

You can edit/update configuration on any stage, not limited to last stage (the stage before the target) in a draft pipeline.

Query Stage

A query stage is used to configure a SQL-like query on the data stream and comprises additional sources for joins, filters, summaries, group by, time windows, and so on.

For example, the query below calculates hourly total sales where transaction amount is greater than a dollar and outputs the result every 1 second.

Select sum (TransactionAmount) As HourlySales

From SalesStream [Range 1 Hour Slide 1 Second]

Where TransactionAmount > 1

Queries like above or more complex queries can all be configured in the query stage with zero coding and with no intimate knowledge of Continuous Query Language or CQL. The CQL language is similar to SQL but with additional constructs for temporal analytics and pattern matching.

A query stage has the following sub sections:

-

Filter

-

Correlation

-

Summary/Group By

-

Range

-

Evaluation Frequency

Filter

The filter section in a query stage or query group stage allows events in the data stream to be filtered out.

Only events which satisfy the filter condition are passed to the downstream stage. For example, in a data stream containing SensorId and Temperature, you can filter events where Temperature is lower than or equal to 70 degrees by setting the filter condition to Temperature > 70.

Correlation

A correlation is used to enrich the incoming event in the data stream with static data in a database table or with data from other streams.

For example, if the event in the data stream only includes SensorId and Sensor Temperature, the event could be enriched with data from a table to obtain SensorMake, SensorLocation, SensorThreshold, and many more.

Correlating an event with other sources requires the join condition to be based on a common key. In the above example, the SensorId from the stream cand be used to correlate with SensorKey in the database table. The following query illustrates the above data enrichment scenario producing sensor details for all sensors whose temperature exceeds their pre-defined threshold.

Select T.SensorId, T.Temperature, D.SensorName, D.SensorLocation

From TemperatureStream[Now] T, SensorDetailsTable D

Where T.SensorId = D.SensorKey And T.Temperature > D.SensorThreshold

Queries like above and more complex queries can be automatically generated by configuring sources and filter sections of the query stage.

Summary

A data stream is a continuous sequence of events but we can summarize the data over any time range including an unbounded range.

For example, you can continuously compute the maximum temperature for each sensor from the beginning of time by configuring a query like the one below in a Query stage.

Select SesnsorId, max(Temperature)

From TemperatureStream

Group By SensorId

Group By

A group by collects the data of all the rows with an identical column value. Group by is used in conjunction with Summaries (aggregate functions) to provide information about each group.

Here is an example configuration that generates a query for computing the average temperature of each sensor at the end of the hour and using readings from last one hour.

Select SesnsorId, avg(Temperature)

From TemperatureStream [Range 1 Hour Slide 1 Hour]

Group By SensorId

Example

If you add multiple group bys, the data is grouped on multiple columns. For example, you have a stream that gives you sales numbers for geographical locations. You have the following columns BEFORE group by:

COUNTRY CITY REVENUE

US SF 500

US NY 1000

INDIA BOMBAY 800

INDIA BOMBAY 1500

INDIA BOMBAY 700

.........Calculate sum of revenue (summary) by country (groupby) to get:

COUNTRY SUM_OF_REVENUE

US 1500

INDIA 3000Add CITY as another group by, to get your aggregations grouped by city in addition to country:

COUNTRY CITY SUM_OF_REVENUE

US NY 1000

US SF 500

INDIA BOMBAY 1500

INDIA BANGALORE 1500Range

A range is a window applied on the data stream. Since data stream is an unbounded sequence of events it is often necessary to apply a window when computing aggregates.

Examples of ranges include – Last 1 Hour of events, Last 5 Minutes of events, Last 10 Events, and many more. Applying a range retains data in memory so be cautious with use of window ranges. For example, if data is arriving at the rate of 2000 events per second and if each event is 1KB then we have 2MB of data per second. Applying a 1-hour window on this data stream consumes 2MB times 3600 or 7.2GB of memory.

The supported time units in a range are:

-

now

-

nanoseconds

-

microseconds

-

milliseconds

-

seconds

-

minutes

-

hours

-

events

Evaluation Frequency

Evaluation Frequency or a Window Slide (commonly referred to) determines the frequency at which results are desired.

For example, the configured query below outputs total sales every 1 second but using transactions from last 1 hour.

Select sum (TransactionAmount) As HourlySales

From SalesStream [Range 1 Hour Slide 1 Second]

In other words, Evaluation Frequency determines how often you want to see the results. In the above query, if result is only desired at the end of the hour then we set the Evaluation Frequency to 1 hour.

Rule Stage

A rule stage is a stage in the pipeline where you apply conditional (IF - THEN) logic to the events in the stream. You can check for specific conditions and assign values to fields based on the results of your checks.

You can add multiple rules to the stage and they will get applied to pipeline in the sequence they are added.

Rule

A rule is a set of conditions applied to the incoming stream and a set of actions performed on the stream when conditions are true. Each event is analyzed independently of other events.

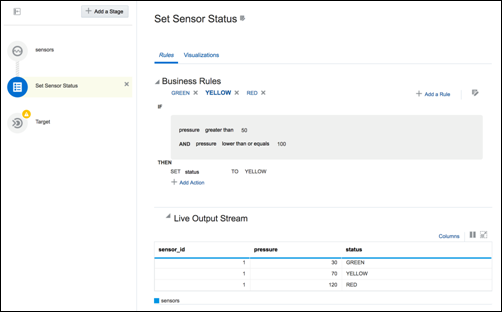

For example, assume that your stream is a stream from pressure sensors and has the following fields:

-

sensor_id -

pressure -

status

If you want to assign a status value based on the pressure, you can define the following rules:

-

if the pressure is less than or equal to 50, the status must be set to GREEN

-

if the pressure is between 50 and 100, the status must be set to YELLOW

-

if the pressure is greater than 100, the status must be set to RED.

To achieve this, you need to create these rules in a rule stage. The YELLOW rule for example, looks as shown below:

Description of the illustration yellow_rule.png

The rules get applied to the events sequentially and actions are triggered if the conditions are met. If you look at the data in the previous screen, the pressure value is 120 in the last row and hence the RED rule conditions resolve to true.

You must be careful while defining the rules. Logical loops or contradictory rules lead to the application never returning any outgoing events. For example, the following rules force the application into running forever without any outgoing events:

-

Rule 1: if n > 0, set n to -1

-

Rule 2: if n <=0, set n to 1

Pattern Stage

Patterns are a stage within a pipeline. When working from a pattern, you need to specify a few key fields to discover an interesting result. You can create pattern stages within the pipeline. Patterns are not stand-alone artifacts. They need to be embedded within a pipeline.

Query Group

A query group stage lets you do aggregations on multiple group bys and multiple windows. It is a collection of groups, where each of the group has its own window, filters that affect the data processing only within that group.

A query group has two types of stages:

-

Stream

-

Table

Query Group Stage: Stream

A query group stage of the type stream is where you can apply aggregate functions with different group-bys and window ranges to your streaming data. You can have multiple query groups in one stage.

Query Group Stage: Table

A query group stage of the type table is where you can apply aggregate functions with different group bys and window ranges to a database table data recreated in memory. Use this stage on a change data capture stream, such as GoldenGate. You can have multiple query groups in one stage.

Live Output Table

The Live Output table is the main feedback mechanism from the pipelines that you build. The Live Output table will display events that go out of your pipeline, after your processing logic has been applied on the incoming stream or streams.

The Live Output table will be displayed for each stage of your pipeline and will include output of that particular stage. On the source stage the Live Output table will display events as they arrive in the stream. On the target stage, the Live Output stage will display events as they will flow to the target.

The Live Output table is also a powerful tool for event shape manipulation. With the Live Output table you can:

-

Add new fields to the event using an extensive library of functions in the expression builder, and remove new fields

-

Change the order of the event fields

-

Rename event fields

-

Remove existing fields from the output of the stage

-

Add a timestamp field to each event

-

Hide fields from view (but retain them in the output)

-

Pause and restart event display in the browser (not affecting downstream stages or targets)

The interaction with the table should be intuitively clear to anyone who has worked with popular spreadsheet pipelines.

Expression Builder

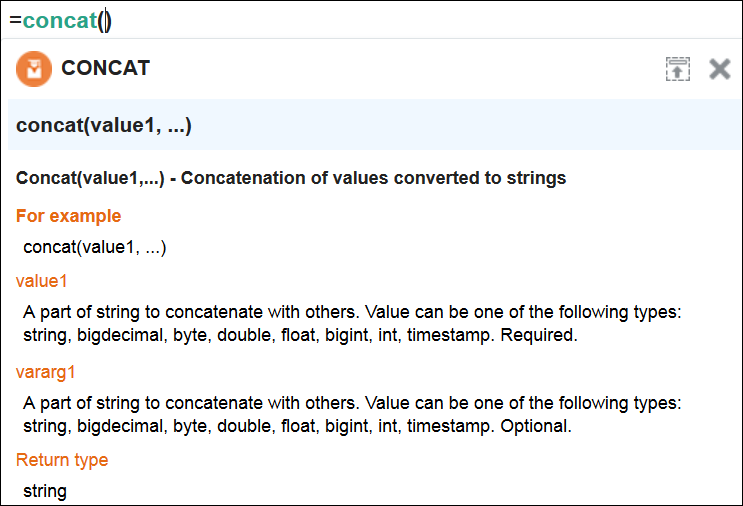

The expression builder provides functionality to add new fields to your output based on existing fields. You can use a rich library of functions to manipulate your event data. A simple example is string concatenation; you can construct a full name from first and last names:

Description of the illustration string_concat.png

Note:

The event shape manipulation functionality is available on the table in the query stage.The expression builder has syntax highlighting and code completion. You can also see the function signature, input parameters and the return value in the Expression Editor.

Description of the illustration concat_details.png

Visualizations

Visualization is mapping of the data (information) to a graphical or tabular format which can be used to answer a specific analytical question.

It translates data, its properties and relationships into an easy to interpretable visual object consisting of points, lines, shapes and colors. It effectively represents the results of the meaningful multi-dimensional questions. It also enables to discover the influential patterns out of the represented data (information) using the visual analysis.

Visualizations

Visualizations are divided into two categories:

-

Axis based

Axis based visualizations display series and groups of data. Series and groups are analogous to the rows and columns of a grid of data. Typically, the rows in the grid appear as a series in visualization, and the columns in the grid appear as groups.

Axis based visualizations enables users to visualize the data along two graph axis x and y like sum of sales over regions or sum of sales over time period. X axis values can be categorical in nature like regions or can be based on time series values whereas Y axis represents the measured value like sum(sales). These charts are useful for visualizing trends in a set of values over time and comparing these values across series.

-

Spatial

Spatial visualizations are used when geography is especially important in analyzing an event. It represents business data superimposed on a single geo fence.

Types of Visualizations

Visualizations can be further classified into the following categories:

-

Bar

Bar visualization is one of the widely used visualization types which represents data as a series of vertical bars. It is best suited for comparison of the values represented along y axis where different categories are spread across x axis. In a Bar visualization vertical columns represent metrics (measured values). The horizontal axis displays multiple or non-consecutive categories.

In Horizontal Bar, the axis positions are switched. The vertical axis displays multiple or non-consecutive categories. The horizontal columns represents metrics (measured values). It is preferable when the category names are long text values and requires more space in order to be displayed.

-

Line

Line visualization represents data as a line, as a series of data points, or as data points that are connected by a line. Line visualization require data for at least two points for each member in a group. The X-axis is a single consecutive dimension, such as a date-time field, and the data lines are likely to cross. X axis can also have non date-time categories. Y axis represents the metrics (measured value). It is preferred to use line visualization when data set is continuous in nature. It is best suited for trend-based plotting of data over a period of time. In Line visualization, emphasis is on the continuation or the flow of the values (a trend) but individual value comparison can also be carried out. Multiple series can also be compared with the line visualizations.

It can have a horizontal orientation where axis are switched i.e. y axis holds categories whereas x axis shows metrics.

-

Area

Area visualization represents data as a filled-in area. Area visualization requires at least two groups of data along an axis. The X-axis is a single consecutive dimension, such as a date-time field, and the data lines are unlikely to cross. Y axis represents the metrics (measured value). X axis can also have non date-time categories. This visualization is mainly suitable for presenting accumulative value changes over time.

It can have a horizontal orientation where axis are switched i.e. y axis holds categories whereas x axis shows metrics.

-

Stacked Bar

A Stacked visualization displays sets of values stacked in a single segmented column instead of side-by-side in separate columns. It is used to show a composition. Bars for each set of data are appended to previous sets of data. The size of the stack represents a cumulative data total.

-

Spatial

Geo Spatial visualization allows displaying location of an object on a geo fence and takes user to the area where events are occurring. User can configure visualization to specify latitude, longitude, identifier etc. Customization of visualization by specifying different pins like arrows with different colors based on certain condition is also allowed.

Draft and Published Pipelines

Stream Analytics supports two lifecycle states, namely draft and published.

Draft Pipelines

Pipelines in the draft state possess the following characteristics:

-

Are visible only to the owner

-

Can be edited

-

Work only when the pipeline editor is open. When you exit catalog or close your browser, the draft pipeline will be removed from the Spark cluster.

-

Do not send events to a downstream target even if a target is configured

A newly created pipeline is in draft state. This is where you can explore your streams and implement the business logic. You do not have to do the implementation all at once; the pipeline will not run between your editing sessions.

Published pipelines

Pipelines in the published state possess the following characteristics:

-

Are visible to any user

-

Cannot be edited

-

Will continue to run in the Spark cluster even after you exit the pipeline

-

Send events to a downstream target

After you are done with the implementation and satisfied, you can add a target and publish your pipeline. The published pipeline runs continually on the Spark Cluster.

If you want to edit a published pipeline, you must unpublish it first.

Oracle GoldenGate Integration

Oracle GoldenGate is a comprehensive software package for real-time data integration and replication in heterogeneous IT environments. The product set enables high availability solutions, real-time data integration, transactional change data capture, data replication, transformations, and verification between operational and analytical enterprise systems.

Use Case

When to use Oracle GoldenGate integration in Stream Analytics? You use this integration when you want to analyze your transaction data without stressing your production database and without waiting for offline reports to be built. Stream Analytics listens to the transaction event stream pushed by Oracle GoldenGate to Kafka and provide you with operational data in real time when transactions occur.

There exists an Orders table in a database. The table is continually affected by transactions (insert/update/delete) and Oracle GoldenGate is replicating the transactions to Kafka. The schema for the Orders table is {OrderId, OrderStatus, ProductSKU, QuantitySold, Revenue}.

The stream from Oracle GoldenGate includes two sets of the original table columns, one to record the state before transaction (for update and delete transactions), and another to record the state after transaction (for insert and update transactions). It also includes the op_type and op_ts columns. The op_type column carries transactional semantics (I (Insert) or U (update) or D (Delete)). The op_ts column is the transaction timestamp.

An order can be canceled either by deleting the row or by updating the order status to Canceled. The default order status is Booked. Orders can also be revised up or down by changing quantity and therefore the revenue. Revenue goes down when quantity is lowered and goes up when quantity is increased.

Solution

A solution is as follows:

-

Create a GoldenGate stream pointing to the Kafka topic GoldenGate replicates the transactions to.

-

Create a pipeline using the GoldenGate stream and add a Query Group Stage of the type Table. In this stage, use the transactional semantics in the

op_tscolumn to rebuild theOrderstable in memory, so that you can see a snapshot of the latest transactions in your table for as long as you specify (remember that the longer the time window, the more the memory consumed).

You can then run any filters, group bys and aggregations on this snapshot. For example:

-

Total Revenue by for the past 24 hours

-

Total Revenue by

ProductSKUfor the past 24 hours -

Average

QuantitySoldbyProductSKUfor the past one hour.

Tip:

To enhance the data, before adding the Query Group Stage, you can add a regular Query Stage where you can join the transaction event stream with a reference database table to obtain more dimensions for your reports, such asProduct Category or Vendor.

Access Stream Analytics

Stream Analytics is a feature within Oracle Integration.

After you provision Stream Analytics, you can access it from Oracle Integration.

-

If you have already registered Stream Analytics, click Streams in the left pane to launch and navigate to Stream Analytics.

-

If you are accessing Stream Analytics for the first time, click Register and provide the deployment details to register an instance of Stream Analytics. Click Streams in the left pane to launch and navigate to Stream Analytics.

About Stream Analytics Roles and Users

Stream Analytics delivers multiple experiences targeting the specific skillsets of every persona associated with complex business process lifecycles.

The roles and users are:

-

Developer — has full access to the design time user interface with privileges to create, edit, and delete pipelines. The privileges do not include the Publish privilege.

-

Viewer — has full access to the design time user interface with privileges to view pipelines and published dashboards. This role has only read access and no Publish privilege.

-

Administrator — has administration or super user access. This is the only role allowed to modify pipeline role permission grants and memberships.

-

Architect — has full access to the design time user interface with the privileges to create, edit, and delete all artifacts: pipelines, streams, maps, connections, references, targets, and patterns. The privileges do not include the Publish privilege.

-

Operator — has the privileges to deploy and undeploy pipelines and to monitor the infrastructure such as the deployed pipelines, Spark Cluster, WebLogic Servers, etc.

Note:

A user with this role permission can publish/unpublish the pipeline only if the user also has the Developer role.

For additional information about the privileges, see Privileges Available to Roles in Stream Analytics.