2 About System Architecture

Learn about the system architecture for machine learning (ML) workflows and models that you create using the artificial intelligence (AI) microservices provided in Oracle Monetization Suite.

System Architecture Overview

The system architecture uses a modular approach, with distinct stages for data storage, data fetching, model training, model deployment and management, prediction services, and integration with client applications or AI agents. Each stage consists of independently deployable microservices, which operate as pods in a Kubernetes environment.

The key components of the architecture include:

- Client applications or AI agents: End-user applications or automated agents that consume AI-driven services.
- Prediction services: Inference services that use deployed ML models.
- ML Ops – model management: A repository for managing, versioning, and monitoring trained models.
- Training services: A framework that supports model training using curated data.
- Data layer: The source layer for preparing, fragmenting, and supplying data through a configurable data service mesh.
- Persistence layer: The storage repository for both raw and processed data.

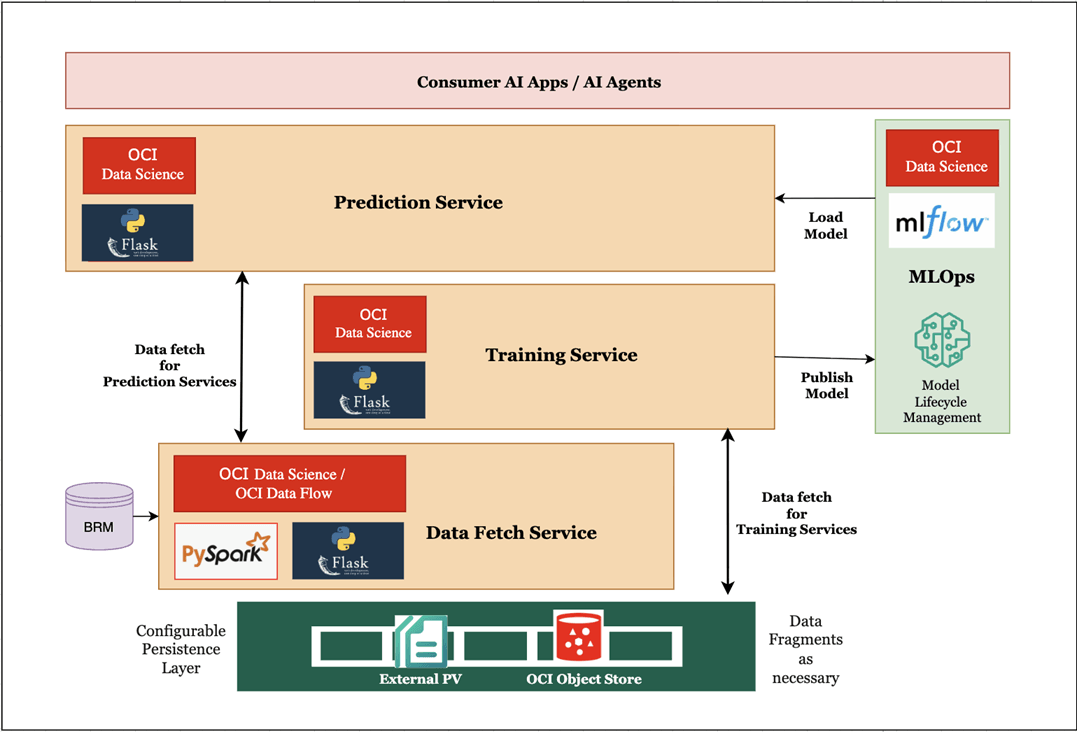

Figure 2-1 shows the high-level system architecture and its major components.

In Figure 2-1, the data layer ingests raw data from source systems, transforms and enriches it, and caches it as domain-specific data fragments. This data is then stored in the persistence layer, which securely stores both raw and processed datasets for downstream use. When you develop a model, training services access high-volume, engineered data from the persistence layer for model training.

After training, the model is published to the ML Ops (model management) layer for versioning, tracking, and lifecycle governance. The prediction services load the published model, fetch the relevant cached input data from the data layer, and use both to respond to real-time prediction requests. Client applications or AI agents then consume these predictions, for example to obtain insights that support decision making.

About Client Applications or AI Agents

Client applications or AI agents serve as the interface between end users and AI-ML models. These may include Oracle Monetization Suite applications, such as Billing Care, or AI-driven agents that invoke prediction services for real-time or batch inference.

Note:

The client must implement batch processing itself, because the prediction services do not provide a dedicated batch API.
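As an illustration of the note above, a client could emulate batch inference by calling the real-time prediction interface once per record and collecting the results. This is a minimal sketch, not the product's API: `predict_one` is a hypothetical callable standing in for whatever call the client uses to reach a prediction service, and the record and result shapes are assumptions.

```python
from typing import Any, Callable, Dict, Iterable, List


def predict_batch(
    records: Iterable[Dict[str, Any]],
    predict_one: Callable[[Dict[str, Any]], Any],
) -> List[Dict[str, Any]]:
    """Run a client-side batch by issuing one prediction call per record.

    `predict_one` is a hypothetical stand-in for the client's real-time
    prediction call. A failure on one record is captured in the result
    instead of aborting the rest of the batch.
    """
    results: List[Dict[str, Any]] = []
    for record in records:
        try:
            prediction = predict_one(record)
            results.append({"input": record, "prediction": prediction, "error": None})
        except Exception as exc:  # keep going; report the failure per record
            results.append({"input": record, "prediction": None, "error": str(exc)})
    return results
```

Recording errors per record lets the client retry only the failed inputs rather than resubmitting the whole batch.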

About Prediction Services

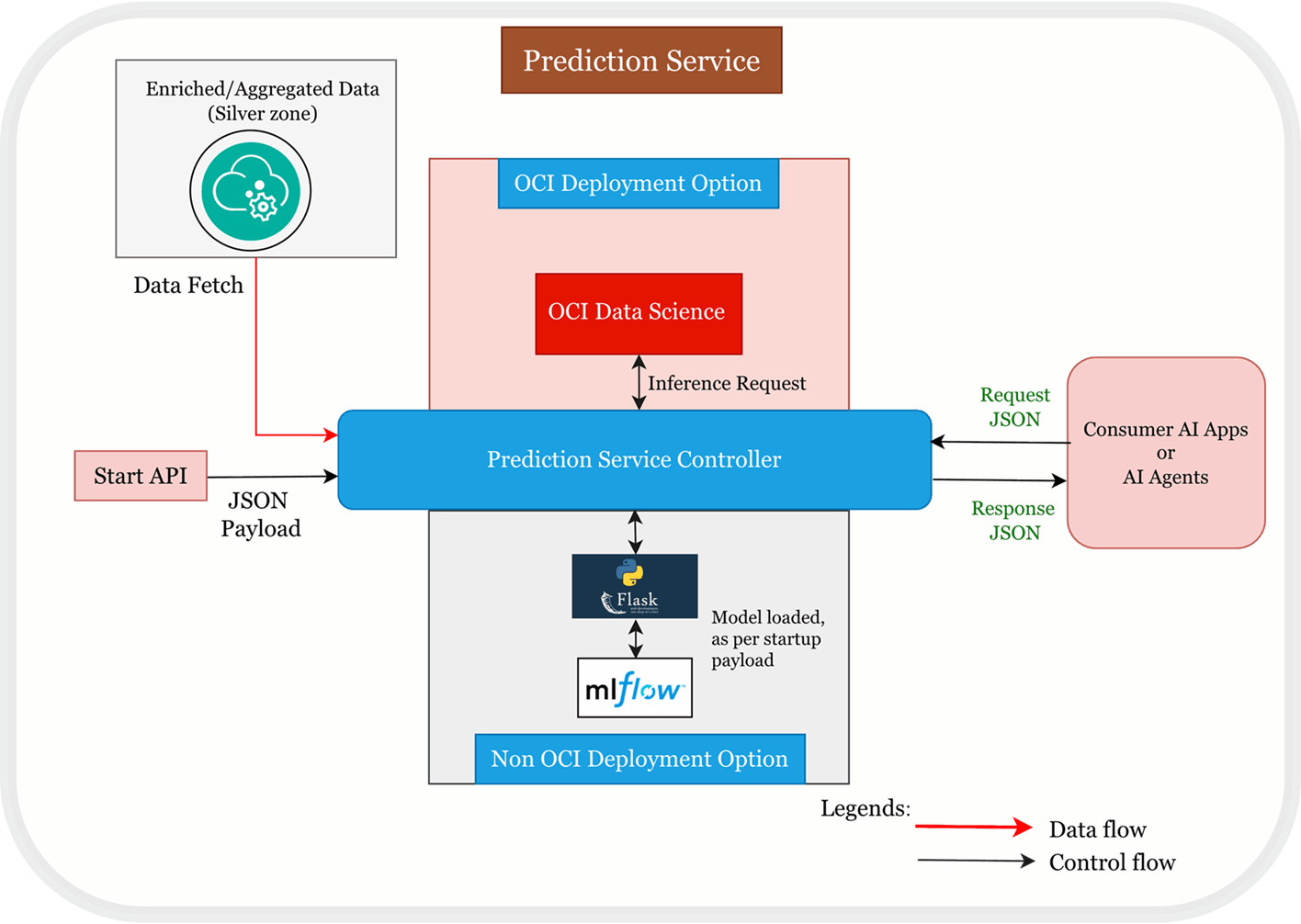

Prediction services are specialized microservices that perform inference operations within the architecture. The microservices retrieve the required input data from the data mesh in the data service layer. The data mesh is a collection of services responsible for owning and caching specific data fragments for immediate use. This design ensures low latency and high throughput, optimizing overall performance.

When a prediction request is received, the prediction services dynamically load the appropriate trained model from the model management layer and use it, with the relevant input data, to generate predictions. Client applications or AI agents then consume and act upon these predictions.
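The request flow described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the shipped implementation: `load_model` and `fetch_fragment` are hypothetical callables representing the model management layer and the data mesh, and keeping a loaded model in memory for later requests is a design choice of this sketch.

```python
from typing import Any, Callable, Dict


class PredictionService:
    """Illustrative request flow: load a model, fetch cached input, predict."""

    def __init__(
        self,
        load_model: Callable[[str], Callable[[Dict[str, Any]], Any]],
        fetch_fragment: Callable[[str], Dict[str, Any]],
    ) -> None:
        # Hypothetical: returns a callable model for a given model name.
        self._load_model = load_model
        # Hypothetical: returns cached input features for a given entity ID.
        self._fetch_fragment = fetch_fragment
        # Assumption of this sketch: reuse a model once it has been loaded.
        self._loaded: Dict[str, Callable[[Dict[str, Any]], Any]] = {}

    def predict(self, model_name: str, entity_id: str) -> Any:
        # Load the model on first use only.
        if model_name not in self._loaded:
            self._loaded[model_name] = self._load_model(model_name)
        # Read input data from the cache rather than from raw sources.
        features = self._fetch_fragment(entity_id)
        return self._loaded[model_name](features)
```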

You can use the prediction services by connecting them to a model on OCI Data Science, or you can implement them using the Python Flask sample frameworks.

Figure 2-2 shows the high-level architecture of the Prediction Service layer.

Figure 2-2 Prediction Service Layer Architecture

About ML Ops – Model Management

The ML Ops (model management) layer functions as the central repository and governance platform for trained models. Once a model is trained by the training services, it is published here for versioning, tracking, and lifecycle management. The model management system stores metadata for each model, including version history and associated metrics.
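To make the versioning and metadata responsibilities concrete, the following in-memory sketch shows one way a registry could assign version numbers and keep metrics with each published model. The class and method names (`ModelRegistry`, `publish`, `latest`, `history`) are hypothetical and chosen for illustration only; they are not the OCI Data Science or MLflow APIs.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class ModelVersion:
    """One published model together with its metadata."""
    name: str
    version: int
    artifact: Any
    metrics: Dict[str, float] = field(default_factory=dict)


class ModelRegistry:
    """Hypothetical in-memory registry that keeps every published version."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[ModelVersion]] = {}

    def publish(
        self, name: str, artifact: Any, metrics: Optional[Dict[str, float]] = None
    ) -> ModelVersion:
        # Versions are numbered from 1 in publication order.
        history = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(history) + 1, artifact, dict(metrics or {}))
        history.append(entry)
        return entry

    def latest(self, name: str) -> ModelVersion:
        # Raises KeyError if no model was published under this name.
        return self._versions[name][-1]

    def history(self, name: str) -> List[ModelVersion]:
        # Return a copy so callers cannot alter the stored history.
        return list(self._versions[name])
```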

This layer is certified with the ML Ops capabilities of OCI Data Science and with the open-source MLflow platform.

About Training Services

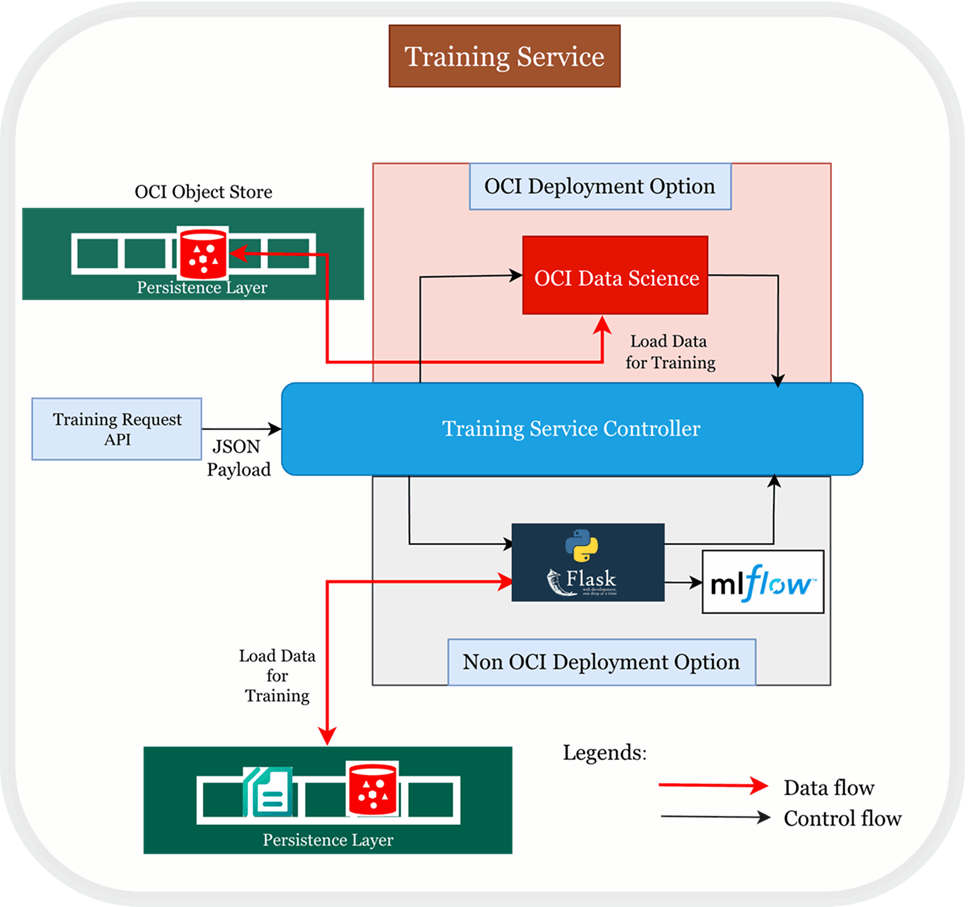

Training services are primarily responsible for orchestrating machine learning model development. The microservices first fetch the input data directly from the persistence layer, because high-volume training workloads require the data in bulk. Reading from the persistence layer also reduces the data fetch time for these workloads.

The microservices then run the training jobs to train the model based on the input data. When the training is complete, the model is pushed to the model management layer along with the relevant metadata or metrics.
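The fetch, train, and publish sequence can be sketched as a single job function. This is a minimal sketch under stated assumptions: `load_rows` and `publish` are hypothetical callables standing in for the persistence layer and the model management layer, each row is assumed to carry a numeric `target` field, and the "model" is a trivial mean baseline used only to keep the example self-contained.

```python
from statistics import mean
from typing import Any, Callable, Dict, Iterable, Tuple


def run_training_job(
    load_rows: Callable[[], Iterable[Dict[str, float]]],
    publish: Callable[[str, Any, Dict[str, float]], Any],
    model_name: str,
) -> Tuple[Dict[str, Any], Dict[str, float]]:
    """Fetch training data in bulk, fit a model, then publish it with metrics."""
    # Step 1: bulk read from the persistence layer (hypothetical callable).
    rows = list(load_rows())
    if not rows:
        raise ValueError("no training data available")

    # Step 2: "train". A mean baseline stands in for a real algorithm.
    baseline = mean(row["target"] for row in rows)
    model = {"type": "mean_baseline", "value": baseline}

    # Step 3: compute metrics to publish alongside the model.
    mae = mean(abs(row["target"] - baseline) for row in rows)
    metrics = {"rows": float(len(rows)), "mae": mae}

    # Step 4: push the model and its metrics to model management.
    publish(model_name, model, metrics)
    return model, metrics
```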

You can deploy training services on an OCI environment using OCI Data Science or on a non-OCI environment like Kubernetes.

Figure 2-3 shows the high-level architecture of the Training Service layer.

Figure 2-3 Training Service Layer Architecture

About the Data Layer

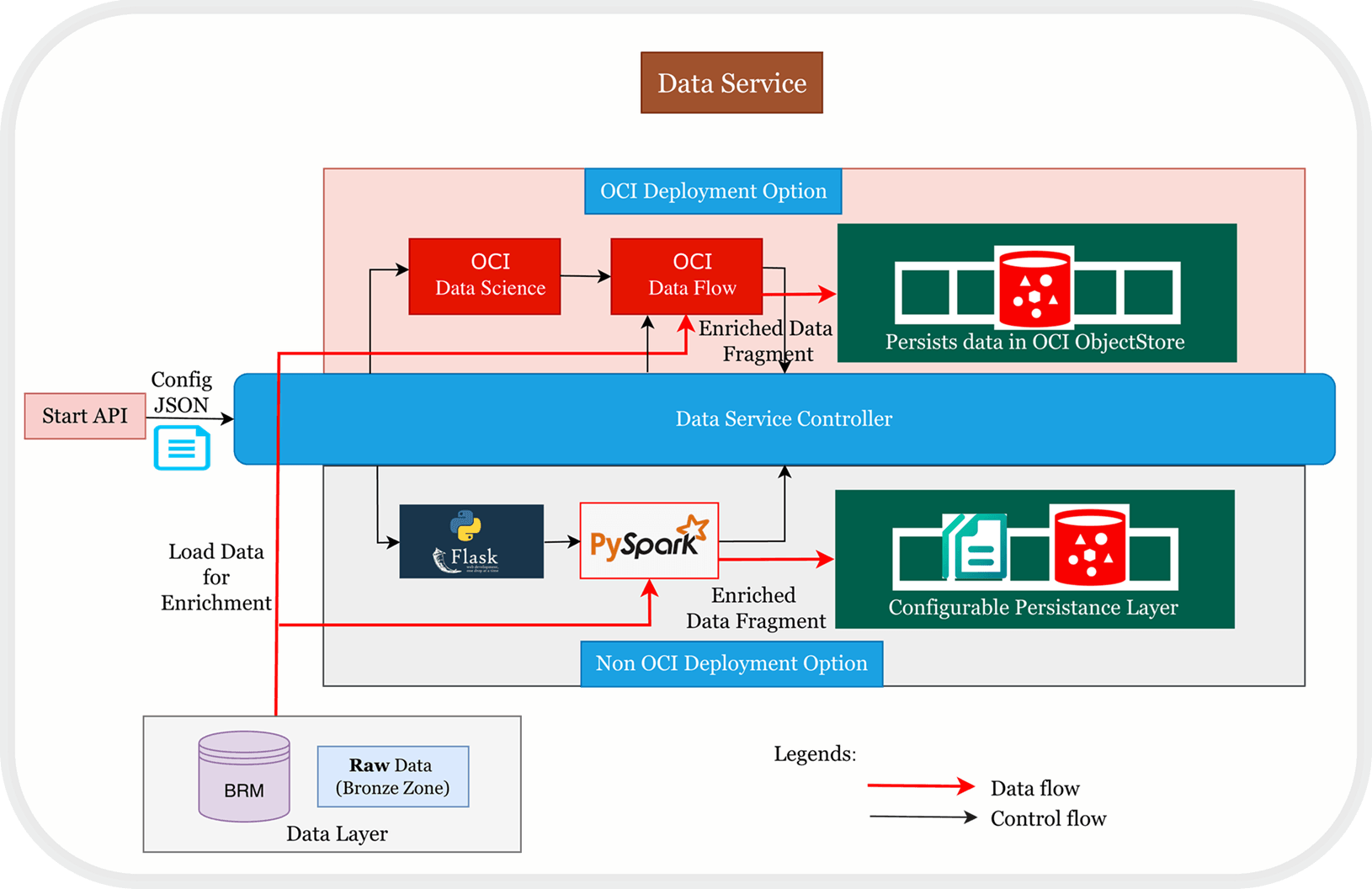

The data layer is the primary source that prepares, fragments, and supplies the required data for downstream ML tasks. Similar to the Medallion architecture, this layer includes two data zones:

- Bronze zone: Contains raw, unprocessed data ingested from primary sources.
- Silver zone: Holds cleaned, enriched, and aggregated data optimized for modeling.

The silver zone implements a data mesh, in which each service owns and caches a specific data fragment (for example, a service for subscriber usage data in Billing and Revenue Management).

Data fragments in the silver zone are cached and validated for quick access. Each service includes metadata describing its data. You configure data services in the silver zone to regularly fetch updates from the bronze zone and update cached fragments and the persistence layer as needed.
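A single silver-zone data service could be sketched as follows. The sketch is illustrative only: the `DataService` class, its callables (`read_bronze`, `clean`, `persist`), and the metadata fields are hypothetical, and scheduling is left to the caller, which would invoke `refresh` at a regular interval.

```python
from typing import Any, Callable, Dict, Iterable, List, Optional

Record = Dict[str, Any]


class DataService:
    """Hypothetical silver-zone service that owns and caches one data fragment."""

    def __init__(
        self,
        name: str,
        read_bronze: Callable[[], Iterable[Record]],
        clean: Callable[[Record], Optional[Record]],
        persist: Callable[[List[Record]], None],
    ) -> None:
        # Metadata describing the fragment this service owns.
        self.metadata: Dict[str, Any] = {
            "name": name,
            "last_refreshed": None,
            "record_count": 0,
        }
        self._read_bronze = read_bronze
        self._clean = clean
        self._persist = persist
        self._cache: Dict[str, Record] = {}

    def refresh(self, now: str) -> None:
        """Pull raw rows from the bronze zone, validate, cache, and persist."""
        for raw in self._read_bronze():
            record = self._clean(raw)
            if record is None:  # failed validation; keep it out of the cache
                continue
            self._cache[record["id"]] = record
        self._persist(list(self._cache.values()))
        self.metadata["last_refreshed"] = now
        self.metadata["record_count"] = len(self._cache)

    def get(self, record_id: str) -> Optional[Record]:
        """Serve a cached record for low-latency reads."""
        return self._cache.get(record_id)
```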

Data services can be deployed in OCI or non-OCI environments. For OCI deployments, data engineering either uses OCI Data Flow directly or calls OCI Data Flow through OCI Data Science, and the data is stored in OCI Object Storage. For non-OCI environments, data engineering is handled by PySpark, and the engineered data is persisted to either a PVC or OCI Object Storage, depending on the configuration.

Figure 2-4 shows the high-level architecture of the Data Service layer.

Figure 2-4 Data Service Layer Architecture

About the Persistence Layer

The persistence layer acts as the centralized, long-term storage repository for all data. After data services process raw data from the bronze zone and cache it for immediate use, they persist this data for durability and downstream analytics, training, and prediction.

Data services maintain and secure the persistent data, ensuring it is current and available. They also use the persistence layer to identify and fetch new or changed data from the raw data source, enabling efficient incremental updates.
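One common way to identify new or changed data is a high-water mark: take the latest modification time already persisted and fetch only source rows newer than it. The sketch below illustrates that general technique and is not a description of the product's actual mechanism; the `updated_at` and `id` fields and the function name are assumptions.

```python
from typing import Any, Dict, Iterable, List

Row = Dict[str, Any]


def incremental_update(persisted: Dict[str, Row], source_rows: Iterable[Row]) -> List[Row]:
    """Apply only rows that are newer than anything already persisted.

    `persisted` maps a row ID to its stored row. Every row is assumed to
    carry a comparable `updated_at` value (for example, an epoch timestamp).
    """
    # High-water mark: the newest modification time already stored.
    watermark = max((row["updated_at"] for row in persisted.values()), default=None)

    # Keep rows strictly newer than the watermark. On the first run
    # (nothing persisted yet) every source row qualifies.
    changed = [
        row for row in source_rows
        if watermark is None or row["updated_at"] > watermark
    ]

    # Upsert the changed rows into the persisted set.
    for row in changed:
        persisted[row["id"]] = row
    return changed
```

A strict comparison skips rows that share the watermark's exact timestamp, so a real implementation would need a tie-breaking rule or an overlapping window with deduplication.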

The data can be persisted in one of the following locations:

- Persistent Volume Claims (PVC) for containerized environments
- OCI Object Storage for scalable, object-based storage