4 Factors Affecting Garbage Collection Performance

The two most important factors affecting garbage collection performance are total available memory and proportion of the heap dedicated to the young generation.

Total Heap

The most important factor affecting garbage collection performance is total available memory. Because collections occur when generations fill up, the frequency of collections is roughly inversely proportional to the amount of memory available, so a larger heap generally improves throughput.

Note:

The following discussion regarding growing and shrinking of the heap, the heap layout, and default values uses the serial collector as an example. While the other collectors use similar mechanisms, the details presented here may not apply to other collectors. Refer to the respective topics for similar information for the other collectors.

Heap Options Affecting Generation Size

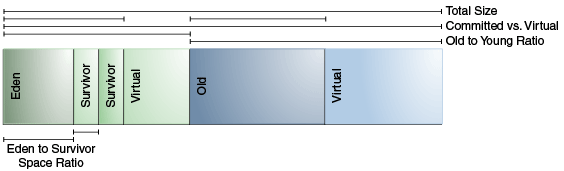

A number of options affect generation size. Figure 4-1 illustrates the difference between committed space and virtual space in the heap. At initialization of the virtual machine, the entire space for the heap is reserved. The size of the reserved space can be specified with the -Xmx option. If the value of the -Xms parameter is smaller than the value of the -Xmx parameter, then not all of the reserved space is immediately committed to the virtual machine. The uncommitted space is labeled "virtual" in this figure. The different parts of the heap, that is, the old generation and young generation, can grow to the limit of the virtual space as needed.
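The distinction between reserved, committed, and virtual space can be observed from inside a running program. The following is a minimal sketch using the standard `java.lang.Runtime` methods; the class name `HeapSpaceReport` and the helper `virtualBytes` are hypothetical names chosen for illustration, and `maxMemory()` only approximates the value given with -Xmx.

```java
public class HeapSpaceReport {
    /** Bytes reserved but not yet committed; 0 if the JVM reports no maximum. */
    static long virtualBytes(long reservedMax, long committed) {
        if (reservedMax == Long.MAX_VALUE) {
            return 0L; // Runtime.maxMemory() returns Long.MAX_VALUE when there is no inherent limit
        }
        return Math.max(0L, reservedMax - committed);
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        long reserved = rt.maxMemory();    // upper bound the JVM will attempt to use (roughly -Xmx)
        long committed = rt.totalMemory(); // memory currently committed to the heap
        long free = rt.freeMemory();       // unused portion of the committed memory

        System.out.println("reserved (max): " + reserved);
        System.out.println("committed:      " + committed);
        System.out.println("free:           " + free);
        System.out.println("virtual:        " + virtualBytes(reserved, committed));
    }
}
```

Running this with different -Xms and -Xmx values should show the committed figure starting near -Xms and the virtual figure shrinking as the heap grows.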

Some of the parameters are ratios of one part of the heap to another. For example, the parameter -XX:NewRatio denotes the relative size of the old generation to the young generation.

Default Option Values for Heap Size

By default, the virtual machine grows or shrinks the heap at each collection to try to keep the proportion of free space to live objects within a specific range.

This target range is set as a percentage by the options -XX:MinHeapFreeRatio=<minimum> and -XX:MaxHeapFreeRatio=<maximum>, and the total size is bounded below by -Xms<min> and above by -Xmx<max>.

With the default values of these options (40% and 70%), if the percentage of free space in a generation falls below 40%, then the generation expands to maintain 40% free space, up to the maximum allowed size of the generation. Similarly, if the free space exceeds 70%, then the generation contracts so that only 70% of the space is free, subject to the minimum size of the generation.
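The expand and contract rule can be written out as arithmetic. The sketch below is an illustration of the rule as described here, not the virtual machine's actual resizing code; the class name, method name, and parameters are all hypothetical, and sizes are plain numbers in any consistent unit.

```java
public class HeapFreeRatioSketch {
    /**
     * Returns the capacity a generation would be resized to so that the free
     * percentage stays between minFreePct and maxFreePct, clamped to
     * [minCapacity, maxCapacity]. Assumes both percentages are below 100.
     */
    static long targetCapacity(long liveBytes, long capacity,
                               int minFreePct, int maxFreePct,
                               long minCapacity, long maxCapacity) {
        long free = capacity - liveBytes;
        long target = capacity;
        if (free * 100 < (long) minFreePct * capacity) {
            // Too little free space: grow until free space is minFreePct of capacity.
            target = liveBytes * 100 / (100 - minFreePct);
        } else if (free * 100 > (long) maxFreePct * capacity) {
            // Too much free space: shrink until free space is maxFreePct of capacity.
            target = liveBytes * 100 / (100 - maxFreePct);
        }
        return Math.max(minCapacity, Math.min(maxCapacity, target));
    }

    public static void main(String[] args) {
        // 60 units live in an 80-unit generation is 25% free, below the 40% minimum.
        System.out.println(targetCapacity(60, 80, 40, 70, 16, 512));
        // 30 units live in a 200-unit generation is 85% free, above the 70% maximum.
        System.out.println(targetCapacity(30, 200, 40, 70, 16, 512));
    }
}
```

In both sample calls the target works out to 100 units: 60 live units leave 40% free in the first case, and 30 live units leave 70% free in the second.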

The calculations used in Java SE for the parallel collector are now used for all the garbage collectors. Part of the calculation is an upper limit on the maximum heap size for 64-bit platforms. See Parallel Collector Default Heap Size. There's a similar calculation for the client JVM, which results in smaller maximum heap sizes than for the server JVM.

The following are general guidelines regarding heap sizes for server applications:

- Unless you have problems with pauses, try granting as much memory as possible to the virtual machine. The default size is often too small.
- Setting -Xms and -Xmx to the same value increases predictability by removing the most important sizing decision from the virtual machine. However, the virtual machine is then unable to compensate if you make a poor choice.
- In general, increase the memory as you increase the number of processors, because allocation can be made parallel.

Conserving Dynamic Footprint by Minimizing Java Heap Size

If you need to minimize the dynamic memory footprint (the maximum RAM consumed during execution) for your application, then you can do this by minimizing the Java heap size. Java SE Embedded applications may require this.

Minimize the Java heap size by lowering the values of the command-line options -XX:MaxHeapFreeRatio (default value is 70%) and -XX:MinHeapFreeRatio (default value is 40%). Lowering -XX:MaxHeapFreeRatio to as low as 10%, together with a lower -XX:MinHeapFreeRatio, has been shown to reduce the heap size without too much performance degradation; however, results may vary greatly depending on your application. Try progressively lower values for these parameters until they're as low as possible while performance remains acceptable.

In Serial GC, you can specify -XX:-ShrinkHeapInSteps, which immediately reduces the Java heap to the target size (specified by the parameter -XX:MaxHeapFreeRatio). You may encounter performance degradation with this setting. Otherwise, the Java runtime incrementally reduces the Java heap to the target size; this process requires multiple garbage collection cycles.
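While experimenting with these ratios, it can help to confirm which values the running virtual machine actually picked up. The following is a minimal sketch that assumes a HotSpot JVM, where the `com.sun.management.HotSpotDiagnosticMXBean` interface is available; the class name `HeapFreeRatioFlags` and the helper `readFlag` are hypothetical.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.lang.management.ManagementFactory;

public class HeapFreeRatioFlags {
    /** Returns the current value of a HotSpot VM option as a string. */
    static String readFlag(String name) {
        HotSpotDiagnosticMXBean bean =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        // getVMOption throws IllegalArgumentException if the option does not exist.
        return bean.getVMOption(name).getValue();
    }

    public static void main(String[] args) {
        for (String flag : new String[] {"MinHeapFreeRatio", "MaxHeapFreeRatio"}) {
            System.out.println(flag + " = " + readFlag(flag));
        }
    }
}
```

Printing both values at startup makes it easier to correlate a measured footprint with the settings that produced it.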

The Young Generation

After total available memory, the second most influential factor affecting garbage collection performance is the proportion of the heap dedicated to the young generation.

The bigger the young generation, the less often minor collections occur. However, for a bounded heap size, a larger young generation implies a smaller old generation, which will increase the frequency of major collections. The optimal choice depends on the lifetime distribution of the objects allocated by the application.

Young Generation Size Options

By default, the young generation size is controlled by the option -XX:NewRatio.

For example, setting -XX:NewRatio=3 means that the ratio between the young and old generation is 1:3. In other words, the combined size of the eden and survivor spaces will be one-fourth of the total heap size.
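The arithmetic behind this ratio is short enough to write down. This sketch ignores the alignment and rounding a real virtual machine applies to generation sizes, and the class and method names are hypothetical.

```java
public class NewRatioSketch {
    /** Young generation size when old:young is newRatio:1, ignoring alignment. */
    static long youngGenBytes(long heapBytes, int newRatio) {
        return heapBytes / (newRatio + 1);
    }

    public static void main(String[] args) {
        long heapMb = 1024;
        long youngMb = youngGenBytes(heapMb, 3);
        // With NewRatio=3 the young generation is one-fourth of the heap.
        System.out.println("young = " + youngMb + " MB, old = " + (heapMb - youngMb) + " MB");
    }
}
```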

The options -XX:NewSize and -XX:MaxNewSize bound the young generation size from below and above. Setting these to the same value fixes the young generation, just as setting -Xms and -Xmx to the same value fixes the total heap size. This is useful for tuning the young generation at a finer granularity than the integral multiples allowed by -XX:NewRatio.

Survivor Space Sizing

You can use the option -XX:SurvivorRatio to tune the size of the survivor spaces, but often this isn't important for performance.

For example, -XX:SurvivorRatio=6 sets the ratio between a survivor space and eden to 1:6. In other words, each survivor space will be one-sixth of the size of eden, and thus one-eighth of the size of the young generation (not one-seventh, because there are two survivor spaces).
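The one-eighth figure follows from dividing the young generation into eden plus two survivor spaces. A minimal sketch of that calculation, with hypothetical class and method names and no allowance for the alignment a real virtual machine applies:

```java
public class SurvivorRatioSketch {
    /** Size of one survivor space: young = eden + 2 * survivor, eden = ratio * survivor. */
    static long survivorBytes(long youngBytes, int survivorRatio) {
        return youngBytes / (survivorRatio + 2);
    }

    /** Size of eden, taken as whatever the two survivor spaces leave over. */
    static long edenBytes(long youngBytes, int survivorRatio) {
        return youngBytes - 2 * survivorBytes(youngBytes, survivorRatio);
    }

    public static void main(String[] args) {
        long youngMb = 256;
        System.out.println("survivor = " + survivorBytes(youngMb, 6) + " MB each");
        System.out.println("eden     = " + edenBytes(youngMb, 6) + " MB");
    }
}
```

For a 256 MB young generation and a ratio of 6, each survivor space comes to 32 MB (one-eighth) and eden to 192 MB.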

If survivor spaces are too small, then the copying collection overflows directly into the old generation. If survivor spaces are too large, then they are uselessly empty. At each garbage collection, the virtual machine chooses a threshold number, which is the number of times an object can be copied before it's promoted to the old generation. This threshold is chosen to keep the survivors half full. You can use the log configuration -Xlog:gc,age to show this threshold and the ages of objects in the new generation. It's also useful for observing the lifetime distribution of an application.
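One way to picture how such a threshold could be chosen is to add up the surviving bytes by age until the running total exceeds the desired occupancy of a survivor space. The sketch below is an assumption-laden illustration of that idea, not the virtual machine's implementation; the class name, the method, and every parameter name are hypothetical.

```java
public class TenuringThresholdSketch {
    /**
     * bytesByAge[i] holds the bytes of surviving objects that have been copied
     * i times (index 0 is unused). Returns the smallest age at which the
     * cumulative size exceeds the desired survivor occupancy, capped at maxThreshold.
     */
    static int chooseThreshold(long[] bytesByAge, long survivorCapacity,
                               int targetSurvivorPct, int maxThreshold) {
        long desired = survivorCapacity * targetSurvivorPct / 100;
        long total = 0;
        int age = 1;
        while (age < bytesByAge.length) {
            total += bytesByAge[age];
            if (total > desired) {
                break; // objects of this age and older no longer fit the target
            }
            age++;
        }
        return Math.min(age, maxThreshold);
    }

    public static void main(String[] args) {
        long[] bytesByAge = {0, 10, 10, 10, 10};
        // Target occupancy is 50% of a 50-unit survivor space, that is, 25 units.
        System.out.println(chooseThreshold(bytesByAge, 50, 50, 15));
    }
}
```

With a large population of young survivors the threshold drops, so objects are promoted sooner; with few survivors it rises toward the cap.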

Table 4-1 provides the default values for survivor space sizing.

Table 4-1 Default Option Values for Survivor Space Sizing

| Option | Default Value |
|---|---|
| -XX:NewRatio | 2 |
| -XX:NewSize | 1310 MB |
| -XX:MaxNewSize | not limited |
| -XX:SurvivorRatio | 8 |

The maximum size of the young generation is calculated from the maximum size of the total heap and the value of the -XX:NewRatio parameter. The "not limited" default value for the -XX:MaxNewSize parameter means that the calculated value isn't limited by -XX:MaxNewSize unless a value for -XX:MaxNewSize is specified on the command line.

The following are general guidelines for server applications:

- First decide on the maximum heap size that you can afford to give the virtual machine. Then, plot your performance metric against the young generation sizes to find the best setting.
  - Note that the maximum heap size should always be smaller than the amount of memory installed on the machine to avoid excessive page faults and thrashing.
- If the total heap size is fixed, then increasing the young generation size requires reducing the old generation size. Keep the old generation large enough to hold all the live data used by the application at any given time, plus some amount of slack space (10 to 20% or more).
- Subject to the previously stated constraint on the old generation:
  - Grant plenty of memory to the young generation.
  - Increase the young generation size as you increase the number of processors because allocation can be parallelized.
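The constraint on the old generation in these guidelines translates into an upper bound on the young generation. The following sketch works through that budget under the assumption that the live data size has been measured separately; the class name, method name, and sample figures are hypothetical.

```java
public class GenerationBudgetSketch {
    /**
     * Largest young generation that still leaves the old generation room for
     * the live data plus slackPct percent of slack. Returns 0 if nothing is left.
     */
    static long maxYoungBytes(long heapBytes, long liveBytes, int slackPct) {
        long oldNeeded = liveBytes + liveBytes * slackPct / 100;
        return Math.max(0L, heapBytes - oldNeeded);
    }

    public static void main(String[] args) {
        // A 4096 MB heap with 1000 MB of live data and 20% slack.
        System.out.println(maxYoungBytes(4096, 1000, 20) + " MB available for the young generation");
    }
}
```

In the sample call the old generation needs 1200 MB, leaving at most 2896 MB for the young generation; the best value below that bound still has to be found by measurement.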